Abstract

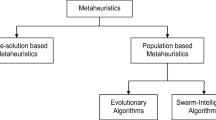

Metaheuristics (MHs) are techniques widely used for solving complex optimization problems. In recent years, interest in combining MHs and machine learning (ML) has grown. This integration can occur mainly in two ways: ML-in-MH and MH-in-ML. In the present work, we combine the techniques in both ways (ML-in-MH-in-ML), providing an approach in which ML improves the performance of an evolutionary algorithm (EA) whose solutions encode the parameters of an ML model, an artificial neural network (ANN). Our approach, called TS\(_{in}\)EA\(_{in}\)ANN, employs a reinforcement learning neighborhood (RLN) mutation based on Thompson sampling (TS). TS is a parameterless reinforcement learning method, used here to boost the EA performance. In the experiments, every candidate ANN solves a regression problem known as protein structure prediction deviation. We consider two protein datasets, one with 16,382 and the other with 45,730 samples. The results show that TS\(_{in}\)EA\(_{in}\)ANN performs significantly better than a canonical genetic algorithm (GA\(_{in}\)ANN) and the evolutionary algorithm without reinforcement learning (EA\(_{in}\)ANN). Analyses of the parameters’ frequency are also performed to compare the approaches. Finally, comparisons with the literature show that, except for one particular case in the larger dataset, TS\(_{in}\)EA\(_{in}\)ANN outperforms other approaches considered the state of the art for the addressed datasets.
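To make the role of Thompson sampling more concrete, the following is a minimal Python sketch of a Beta-Bernoulli Thompson sampler that chooses among candidate values (arms) for a single ANN parameter. It is an illustration under stated assumptions, not the RLN mutation of the paper: the class name `ThompsonSampler`, the binary reward, the candidate values 8, 16 and 32, and the hidden success probabilities in `run_demo` are all hypothetical.

```python
import random


class ThompsonSampler:
    """Beta-Bernoulli Thompson sampling over a fixed set of arms (hypothetical helper)."""

    def __init__(self, arms, seed=None):
        self.arms = list(arms)
        # Beta(1, 1) prior, i.e. uniform, on each arm's success probability.
        self.successes = {arm: 1 for arm in self.arms}
        self.failures = {arm: 1 for arm in self.arms}
        self.rng = random.Random(seed)

    def select(self):
        # Draw one sample per arm from its Beta posterior and pick the largest.
        samples = {
            arm: self.rng.betavariate(self.successes[arm], self.failures[arm])
            for arm in self.arms
        }
        return max(samples, key=samples.get)

    def update(self, arm, reward):
        # Assumed binary reward: 1 if the mutated child improved, 0 otherwise.
        if reward:
            self.successes[arm] += 1
        else:
            self.failures[arm] += 1


def run_demo(seed=0, rounds=2000):
    """Simulate a mutation loop with made-up improvement probabilities per arm."""
    # Hypothetical candidate values for one parameter (e.g. hidden-layer size)
    # and the assumed chance that choosing each value improves the offspring.
    hidden_probability = {8: 0.2, 16: 0.5, 32: 0.8}
    sampler = ThompsonSampler(hidden_probability, seed=seed)
    env = random.Random(seed + 1)
    pulls = {arm: 0 for arm in hidden_probability}
    for _ in range(rounds):
        arm = sampler.select()
        reward = 1 if env.random() < hidden_probability[arm] else 0
        sampler.update(arm, reward)
        pulls[arm] += 1
    return pulls


if __name__ == "__main__":
    print(run_demo())
```

In this sketch the sampler needs no tunable parameters beyond the prior, which is consistent with the description of TS as parameterless; how rewards are defined and how the choice enters the mutation operator in TS\(_{in}\)EA\(_{in}\)ANN is not specified here and would differ from this toy loop.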

Notes

Arms or actions will be referred to as alleles from now on in the text.

We cannot call them optimal choices. We only know that the approach provided better results using them.

Acknowledgements

This work has been partially supported by Grant 314699/2020-1 of the National Council for Scientific and Technological Development (CNPq).

Ethics declarations

Conflict of interest

The authors have no relevant financial or non-financial interests to disclose.

About this article

Cite this article

Venske, S.M.S., de Almeida, C.P. & Delgado, M.R. Metaheuristics and machine learning: an approach with reinforcement learning assisting neural architecture search. J Heuristics (2024). https://doi.org/10.1007/s10732-024-09526-1

DOI: https://doi.org/10.1007/s10732-024-09526-1