Abstract

Proactive analysis of patient pathways helps healthcare providers anticipate treatment-related risks, identify outcomes, and allocate resources. Machine learning (ML) can leverage a patient’s complete health history to make informed decisions about future events. However, previous work has mostly relied on so-called black-box models, which are unintelligible to humans, making it difficult for clinicians to apply such models. Our work introduces PatWay-Net, an ML framework designed for interpretable predictions of admission to the intensive care unit (ICU) for patients with symptoms of sepsis. We propose a novel type of recurrent neural network and combine it with multi-layer perceptrons to process the patient pathways and produce predictive yet interpretable results. We demonstrate its utility through a comprehensive dashboard that visualizes patient health trajectories, predictive outcomes, and associated risks. Our evaluation includes both predictive performance – where PatWay-Net outperforms standard models such as decision trees, random forests, and gradient-boosted decision trees – and clinical utility, validated through structured interviews with clinicians. By providing improved predictive accuracy along with interpretable and actionable insights, PatWay-Net serves as a valuable tool for healthcare decision support in the critical case of patients with symptoms of sepsis.

Similar content being viewed by others

Explore related subjects

Find the latest articles, discoveries, and news in related topics.Avoid common mistakes on your manuscript.

1 Highlights

-

This article proposes PatWay-Net, a novel machine learning framework for predicting critical pathways of patients with sepsis symptoms. Our framework retains patient pathway data in its natural form by combining non-linear multi-layer perceptrons (MLPs) for each static feature (i.e., static module) and an interpretable LSTM (iLSTM) cell for sequential features (i.e., sequential module).

-

Our results reveal that our approach outperforms commonly used interpretable machine learning models in our case, such as decision tree and logistic regression by 10.4% and 7.3% in terms of the area under receiver operating characteristic curve, respectively, and non-interpretable models, such as random forest and XGBoost by 4.4% and 1.2%, respectively.

-

PatWay-Net provides decision support to clinicians and hospital management in predicting the pathway of a patient accurately while remaining interpretable and can, therefore, help to improve hospital resource management.

-

To enhance the model’s interpretability and utility for clinical decision-makers, we have developed a comprehensive dashboard that visualizes patient health trajectories, predictive outcomes, and associated risks, facilitating informed clinical and resource allocation decisions.

-

The clinical utility of our framework is supported by structured interviews with independent clinicians, confirming its interpretability and actionable insights for healthcare decision support.

2 Introduction

As healthcare organizations face increasing demands and limited resources, the efficiency and compliance of healthcare processes are becoming increasingly important [1]. The pandemic has served as a stress test for these processes, revealing several weaknesses, such as gaps in resource allocation, inefficiencies in patient triage, and limitations in data-driven decision-making [2, 3]. As a remedy, advanced decision support systems based on modern machine learning (ML) models can be employed to improve the performance of healthcare processes and provide proactive insights for clinical decision-makers [3,4,5]. By using large amounts of data that are ubiquitously generated in today’s healthcare information systems, such models can learn non-trivial patterns from historical patient trajectories.

A rich source of historical patient data is represented by so-called patient pathways, a timeline of each patient that describes the different departments, measurements, treatments, and transitions that a patient has gone through during a clinical stay [6]. This information can be used to make accurate predictions about future health outcomes, informing the allocation of resources or the focus of medical professionals on specific patients [e.g., 6, 7,8,9]. In this way, healthcare institutions can derive recommendations for managing and controlling patient pathways early and identify risks and issues before they emerge.

Such recommendations are especially crucial in the context of sepsis symptoms, a complex and time-sensitive condition that demands rapid identification and intervention to improve patient outcomes [10]. By leveraging patient pathway data, healthcare institutions can not only derive timely recommendations to manage and control disease progression but also identify risks and issues, such as early signs of sepsis, before they escalate [11]. Consequently, early detection and treatment of sepsis, facilitated by the analysis of patient pathways, can significantly reduce a patient’s deterioration.

ML models represent a promising choice for predicting patient pathways as they can rapidly process large amounts of patient data and find latent patterns that help make informed decisions about patient outcomes. ML models come in various forms and facets. For critical applications, clinical decision-makers typically favor interpretableFootnote 1 ML models like decision trees, linear and logistic regression, and generalized additive models (GAMs) [e.g., 12, 13, 14, 15, 16, 17]. They have the advantage of providing a clear understanding of how predictions are derived, which is crucial for making informed and accountable decisions. At the same time, however, such interpretable models have the limitation that they cannot handle sequential data structures in their natural form, limiting their prediction capabilities for time-varying patient data.

In contrast, there is an increasing interest in using more advanced and flexible models, such as bagged and boosted decision trees [e.g., 6, 8, 9, 18] or deep neural networks (DNNs) [e.g., 19, 20, 21]. DNNs are of particular interest for predicting patient pathways because of their ability to automatically discover and learn complex patterns in high-dimensional data [22]. This ability also allows them to capture hidden patterns in sequential data structures that are difficult to identify with traditional ML models. However, DNNs generally have the limitation that they lack model interpretability because their internal decision logic is not directly comprehensible by humans [4, 23]. This renders them black boxes for model developers and decision-makers, which is why they are unsuitable for critical healthcare applications.

To address the limitations of both research streams above, we propose PatWay-Net, an innovative ML framework that is designed for both high predictive accuracy and intrinsic interpretability in modeling pathways from patients with symptoms of sepsis. With this framework, we leverage the principle of interpretable ML models while harnessing the flexibility of a DNN architecture. More specifically, our contributions are as follows:

-

PatWay-Net is designed to constrain feature interactions, ensuring full model interpretability across the entire DNN architecture.

-

The architecture blends non-linear multi-layer perceptrons (MLPs) for static features with an interpretable LSTM (iLSTM) cell for sequential features, preserving the natural data structure of patient pathways.

-

A comprehensive dashboard supports PatWay-Net’s applicability by enabling clinical decision-makers to interpret PatWay-Net’s predictive outcomes and associated risks easily.

-

Structured interviews with independent medical experts rigorously validate PatWay-Net’s utility and interpretability, attesting to its real-world healthcare applicability.

We evaluate our proposed model using a real-life data set from an emergency department of a Dutch hospital, containing health records of patients with sepsis symptoms [11]. During their stay, patients go through different activities (e.g., changing departments, receiving medications) and develop different trajectories of severity, resulting in individual patient pathways. The data set contains a rich set of static and sequential features, such as socio-demographic data, blood measurements, medical treatments, and diagnoses, which provide a valuable basis for predicting the future behavior of individual pathways. Specifically, we use this information to predict whether a patient will be admitted to the intensive care unit (ICU), which constitutes a highly relevant prediction task for clinical professionals and administrative staff to support proactive resource allocation [9, 12, 24]. By comparing different types of ML models, we show that PatWay-Net outperforms commonly used interpretable models, such as decision trees or logistic regression, and even non-interpretable models, such as random forest and XGBoost, in terms of area under the receiver operating characteristic curve (\(AUC_{ROC}\)) and F1-score. We then use PatWay-Net for interpreting both static and sequential features of the real-world setting to demonstrate its applicability for healthcare decision support.

Our paper is organized as follows: Section 2 motivates the task of predicting critical patient pathways from a clinical point of view. Section 3 presents relevant background and related work. Section 4 introduces our proposed ML framework for interpretable patient pathway prediction, PatWay-Net. Section 5 outlines the evaluation and application results based on the real-life use case for predicting ICU admission for patients with symptoms of sepsis. Section 6 summarizes our work by drawing implications for research and practice, reflecting on limitations, and providing an outlook for future work.

3 Clinical relevance

3.1 Patient pathways and clinical decision support

Healthcare processes are generally concerned with all activities related to diagnosing, treating, and preventing diseases to improve well-being [25]. This includes patient-related activities organized in patient pathways and administrative activities that support clinical tasks [26]. Patient pathways are directly linked to a patient’s diagnostic–therapeutic cycle and, therefore, do not constitute strictly standardized processes. However, accurate prediction of patient pathways is crucial for optimizing resource allocation, improving patient outcomes, and facilitating timely clinical interventions, thus making it an essential tool for enhancing healthcare efficiency and effectiveness.

Figure 1 illustrates a patient’s hospital stay at multiple departments. In each department, various tasks must be performed to ensure a safe and well-organized patient transition. In this example, the patient was transferred from the emergency room to the coronary care unit. Depending on the patient’s condition, the patient may be transferred to the ICU or the normal care unit (NCU). Therefore, both departments must be prepared for patients. By using a decision support system that accurately predicts the next station, resources for one of the departments can be saved.

Technically, a patient visiting the hospital produces a patient pathway. A set of multiple patient pathways is then stored as an event log. Table 1 presents an example representation of an event log. Here, one visit of a patient is represented by a patient pathway (ID = 1). In the beginning, the patient registered at the emergency room at 2024-02-20 12:11:01. Also, the gender of the patient is registered as male (M). In the next patient activity, the blood pressure is measured at 180. Later, medication is administered before the blood pressure is measured for a second time at 195. The next activity then describes the patient being transferred to the ICU.

As shown in Table 1, the patient information in an event log is not structured to be easily processed by prediction models. Therefore, careful processing of static and sequential patient information is necessary to predict following patient activities accurately. In addition, experts generally have to ensure that the model learns meaningful patterns from the data, which constrains the model to be intrinsically interpretable. Both points are addressed in this work.

In the development of decision support for healthcare applications, the involvement of medical experts is inevitable [27]. Their insights can ensure that the proposed approach aligns with the complexities and problems of clinical practice. In this work, a comprehensive dashboard serves as a translational interface, bridging the gap between high-level computational outputs and real-world clinical decisions. It provides a demonstration of a model’s potential for real-world applicability, ensuring that its capabilities are both understandable and useful to healthcare practitioners.

3.2 The case of sepsis

Sepsis results from the body’s overwhelming response to an infection and can be life-threatening [28]. Therefore, sepsis is a time-sensitive issue that needs clinicians’ attention as early as possible to enable the best possible outcome for each patient [10]. Based on this, it is critical to predict this outcome during an ongoing patient pathway to provide timely recommendations for controlling the disease’s progression [11, 29]. However, the importance of sepsis lies not only in the urgency of its treatment but also in its complex and variable nature that can be detected in the resulting patient pathways [30]. While its symptoms and, thus, underlying medical indicators, can progress or change rapidly, treatment needs to be adapted dynamically which influences the patient pathway [29]. Ultimately, in the context of developing interpretable ML models for predicting patient pathways, the focus on patients with sepsis symptoms is crucial, given the imperative to enhance clinical decision-making, resource allocation, and ultimately, patient outcomes in this high-stakes domain.

4 Methodological background and related work

ML models are increasingly being integrated into clinical applications to assist healthcare professionals in diagnosing diseases, predicting patient outcomes, and making treatment decisions [7,8,9]. While the predictive power of these models is often decisive, it is also essential that they provide comprehensible outputs due to the critical nature of healthcare decisions. Comprehensible outputs promote transparency, reduce the risk of unintended biases, and ensure the reliability of the model results, ultimately contributing to safer and more effective patient care [31,32,33].

From a methodological point of view, there are generally two distinct streams of research dealing with comprehending ML models. Table 2 provides an overview of both streams with exemplary approaches, which can be further classified according to the type of input features they support.

4.1 Explainable machine learning

The first stream of research refers to the concept of explainable ML. It promotes the use of flexible ML models with high predictive power, which subsequently require post-hoc explanation methods to convert their complex mathematical functions into easier-to-understand explanations [23, 32]. Common representatives of flexible ML models for static features are bagged and boosted decision trees such as random forest [42] and XGBoost [43]. Such models excel at handling static tabular data because they can capture complex interactions between features, allowing them to achieve high predictive performance [6, 8, 9, 18]. In this work, we include both models as strong baseline approaches in our evaluation section. However, the construction of high-level interactions creates a lack of transparency because the individual feature effects are no longer understandable by humans and therefore require additional explanation methods.

For sequential features, the field has increasingly focused on DNNs in recent years [22]. Their multi-layered network architecture allows them to automatically discover and learn complex patterns in high-dimensional data structures that are relevant for the prediction task [4, 5]. Of particular interest are recurrent neural networks and long short-term memory (LSTM) networks because they can capture temporal patterns and therefore offer superior predictive performance compared to traditional approaches in dynamic and complex healthcare process environments [e.g., 19, 20]. Furthermore, such network architectures have the advantage that they can be modified to capture static and sequential features simultaneously [e.g., 21, 41]. Nevertheless, the nested, multi-layered structure of DNNs also creates a lack of transparency, because it is not directly observable what information in the input data drives the models to generate their prediction, rendering them black boxes for model users. In our work, we adopt the overall idea of an LSTM network [44] but propose a modification to ensure full model transparency.

To turn the internal decision logic of black-box models into comprehensible results, the field of explainable ML has proposed a variety of post-hoc explanation methods [6, 45]. Some of these methods are model-specific. That is, they are designed for specific types of models and derive explanations by examining internal model structures and parameters (e.g., layer-wise relevance propagation for DNNs [6, 36]). Other methods are model-agnostic and, therefore, broadly applicable to different ML models. One of the most widely used model-agnostic methods is Shapley additive explanations (SHAP) [46]. SHAP uses a game-theoretic approach to explain the output of any ML model. It has been applied, for example, to mortality prediction in ICUs [31] and to process prediction models based on general event logs [35]. An overview of existing post-hoc explanation methods is given by Loh et al. [32]. Overall, post-hoc explanation methods have the advantage of providing a high degree of flexibility while encouraging the use of models with high predictive performance. Furthermore, they can lead to valuable insights, especially for exploratory analysis purposes [47].

However, post-hoc explanation methods must also be viewed with caution. They generally attempt to reconstruct the cause of a generated prediction by approximation. As a result, they can never fully explain the entire black-box model without losing information, which may lead to unreliable results. Similarly, explanations are provided only after a model’s prediction, making it impossible to fully validate the functioning of the model for all inputs before model deployment. This issue becomes particularly critical when the distribution of input data changes over time, and the model may need to handle input feature ranges that were not encountered during its training phase. Overall, such deficiencies can lead to misleading conclusions and potentially harmful results [33, 48]. For this reason, we refrain from pursuing this general research stream in this paper.

4.2 Interpretable machine learning

The second stream of research refers to the field of interpretable ML, which promotes the development of intrinsically interpretable models [23, 49]. In this research stream, the structure of an ML model is constrained, such that the resulting model allows for a better understanding of how predictions are generated. Traditional representatives are linear models and decision trees, which are easy to comprehend and therefore often remain the preferred choice in critical healthcare applications [e.g., 12, 14, 15, 17]. At the same time, however, they are generally too restricted to capture more complex relationships.

A more advanced class of intrinsically interpretable ML models are GAMs [23, 49]. In GAMs, input features are modeled independently in a non-linear way to generate univariate shape functions that can capture arbitrary patterns but remain fully interpretable. The resulting shape functions for each feature are summed up afterward to produce the final model output. Thus, GAMs include additive model constraints yet drop the linearity constraint of a simple logistic/ linear regression model. This structure is simply interpretable as it allows users to verify the importance of each feature. That is, the fitted shape functions directly reveal how each feature affects the predicted output without the need for additional explanation.

In recent years, a wide variety of GAM variants have been proposed that can learn specific types of shape functions depending on the underlying learning procedure, for example, based on splines [50], decision trees [51, 52], or even neural networks [53,54,55]. However, all of these approaches have in common that they primarily focus on processing static features and, therefore, cannot handle sequential data structures in their natural form [49]. As a consequence, their application in the healthcare domain is usually limited to preprocessed features in a static and aggregated form [e.g., 13, 16]. In this work, we adopt the general idea of GAMs to capture non-linear effects of individual features and propagate this idea not only to static features but also to sequential features to obtain a powerful yet fully interpretable model.

Apart from that, there are also interpretable ML models that are specifically designed to capture sequential patterns. Traditional approaches include probabilistic finite automatons [37] or hidden Markov models [38]. Such models have the drawback that they require explicit knowledge about the form of an underlying process model [56], which is challenging to discover or reconstruct from complex event data in dynamic healthcare environments [11, 57]. Therefore, recent approaches increasingly pursue the idea of constraining the structure of DNN architectures to obtain models that can process sequential features in their natural form while remaining intrinsically interpretable. To date, however, little work exists in this area and current approaches often do not distinguish between sequential and static features [e.g., 39, 40].

In summary, only a limited amount of approaches deal with the development of intrinsically interpretable models for transparent patient pathway prediction. In particular, it lacks an innovative approach that can capture non-linear relationships in the form of flexible shape functions for static as well as sequential patient features while providing comprehensible model outputs that visualize the different feature effects for transparent decision support. Likewise, to the best of our knowledge, none of the existing approaches can automatically detect and integrate (sequential) feature interactions to control the model’s flexibility for improved predictive performance. As a remedy, we propose PatWay-Net, a novel ML framework that combines all these aspects within a single approach.

5 PatWay-Net

This section describes PatWay-Net, an interpretable ML framework building on a DNN model with an architecture that transfers the ideas of GAMs into a novel, intrinsically interpretable LSTM module for sequential features, and intrinsically interpretable MLPs, for static features.Footnote 2 We apply this proposed DNN architecture of PatWay-Net to the problem of patient pathway prediction but want to emphasize that our proposed architecture is universal and can be applied to a variety of problem sets that combine sequential and static data (see also Appendix D for evaluations on other use cases).

In the following, we first describe the underlying problem of patient pathway prediction (Section 4.1), before mathematically describing the architecture (Section 4.2) and the training process (Section 4.3) of PatWay-Net’s DNN model. Subsequently, we describe the different interpretation plots that can be derived from the intrinsically interpretable architectural design of PatWay-Net’s DNN model (Section 4.4).

5.1 Problem statement

An ML model \(f \in \mathcal {F}\) should map patient pathways to a target of interest, with \(\mathcal {F}\) denoting the so-called hypothesis space. Patient pathways comprise two sets of information, one set describes static information about the patient, and one set describes dynamic or sequential information about the patient.

Definition 1

(Patient Pathways) Mathematically, the information that describes a set of patients can be expressed as a tuple

where \(\textbf{X}_{static} \in \mathbb {R}^{s\times q}\) is the static patient data, and \(\textsf{X}_{seq} \in \mathbb {R}^{s\times T \times p}\) is the sequential patient data. The dimension s denotes the number of patient pathways, q indicates the number of static variables that describe a patient (e.g., one-time diagnoses or gender), and T and p describe the number of time steps that we recorded for the sequential information and the number of features tracked in each time step, respectively. A single patient’s patient pathway i is denoted by the static information \(\textbf{X}_{static}^{(i)}\) and the sequential data \(\textsf{X}_{seq}^{(i)}\).

The objective of this work is to find a prediction model \(f \in \mathcal {F}\) that maps the static information \(\textbf{X}_{static}\) and sequential information \(\textsf{X}_{seq}\) about patients to target outcomes \(\textbf{y} = (y_{1}, \dots , y_{s})\), that is

The target outcomes \(\textbf{y}\) can thereby represent various patient activities in the future, such as ICU admission.

A timely prediction of the future occurrence of an activity is crucial, as it can prevent the worsening of the patient’s condition and initiate successful treatment by medical experts. Therefore, a prediction model should not only make predictions once the full patient pathway is present but should make predictions already at earlier stages, that is, with less information included in the patient pathways. Thus, we define the patient pathway prefix in the following.

Definition 2

(Patient Pathway Prefix) Given patient pathway i with static information \(\textbf{X}_{static}^{(i)} \in \mathbb {R}^{q}\) and sequential information \(\textsf{X}_{seq}^{(i)} \in \mathbb {R}^{T \times p}\), the patient pathway prefix of length \(t^*\) is defined as a tuple

where \(\textsf{X}_{seq}^{(i)}[:t^*] \in \mathbb {R}^{t^* \times p}\) denotes the first \(t^*\) time steps of the patient’s sequential information.

5.2 Architecture of the DNN model

The proposed interpretable architecture of PatWay-Net is shown in Fig. 2. It contains a static, a sequential, and a connection module. While the first two modules naturally model the event log data, the connection module maps the outputs of these modules onto predictions of patient activities (in our case ICU admission).

5.2.1 Static module

The static module resembles a GAM [50], yet combines the underlying idea with the power of DNNs [54]. By making this architectural choice, we allow our proposed model to remain fully transparent. That is, the effect of each input feature on the model output can be fully assessed after training the model. This is achieved by mapping the input features separately to output values (i.e., there are no interactions between input features). This separation naturally constrains this DNN but, on the other hand, allows the visual inspection of the effect each static feature has on the network’s output. Consequently, although our proposed model is derived from the field of DNNs, we make careful choices about our architecture to allow for a fully transparent white-box model (in contrast to the black-box behavior of general DNNs).

Mathematically, for q static input features \(\textbf{X}_{static}[1], \ldots , \textbf{X}_{static}[q]\), the static module maps the input features to outputs \(o^{1}, \ldots , o^{q}\) through

where each \(f_{MLP}^l\) denotes a neural network and \(o^{l}\in \mathbb {R}\) indicates a single scalar. The neural networks of the architecture are trained in individual sub-modules so that the weights of the different neural networks are trained independently from each other (cf. the boxes around the neural networks of the static module in Fig. 2). With this architecture, we can later compute the outputs \(o^l\) for various input values for each neural network \(f_{MLP}^l\) and, thereby, visually inspect the effect that the input has on the output.

5.2.2 Sequential module

The sequential module extends the previous idea of our static module to a sequential setting. For this, we propose a novel interpretable LSTM (iLSTM) layer to encode the values of each sequential feature \(\textsf{X}_{seq}[j]\) into a vector \(\textbf{h}_{t}^{j} \in \mathbb {R}^{m}\), with \(j \in \{1,2, \dots , p\}\), where m denotes the hidden size for a single sequential feature in the iLSTM cell. To ensure intrinsic interpretability of the iLSTM, each sequential feature has its corridor throughout the gates and state vectors of the original LSTM [44], without the possibility to interact with any other feature (similar to the previous static module, in which each static feature went through a separate neural network). Such feature corridors in the iLSTM layer have a specific size, defined by the internal element size of the corresponding sequential feature m, defining how much vector space is reserved for the sequential feature value computation, from the gates to the hidden state.

Similar to a vanilla LSTM [44], the iLSTM uses a forget gate, an input gate, and an output gate, as well as a candidate state, resulting in the vectors \(\textbf{f}_t\), \(\textbf{i}_t\), \(\textbf{o}_t\), and \({\tilde{\textbf{c}}}_t\), respectively. The information of the sequence is then stored in a cell state \(\textbf{c}_{t}\), and a hidden state \(\textbf{h}_{t}\). Technically, this restriction, to not allow uncontrolled interactions, is realized by multiplying weight matrices with masking matrices. A masking matrix includes only 0 or 1 values. If an element of a weight matrix should be considered, the corresponding element in the masking matrix is set to 1, else it has the value 0. Mathematically, the iLSTM can be formalized as

Here, \(\sigma \) denotes the sigmoid activation, \(\otimes \) is the matrix multiplication, and \(*\) denotes the element-wise multiplication. \(\textbf{U}_m\) and \(\textbf{V}_m\) are masking matrices that ensure that the individual features are computed independently using values from their corridor and, therefore, omitting interactions between sequential features. By contrast, a traditional, non-interpretable LSTM [44] does not use such masking matrices and, therefore, allows any interaction between features for which values are to be computed. As output, the iLSTM layer returns for each sequential feature \(\textsf{X}_{seq}[j]\) the vector \({\textbf {h}}^{j} \in \mathbb {R}^{m}\), that is, the last hidden state of the iLSTM for the sequential feature j. Let \(f_{iLSTM}^j \in \mathbb {R} \rightarrow \mathbb {R}^m\) denote this function, which maps the j-th sequential feature onto the corresponding hidden state, and let \(f_{iLSTM} \in \mathbb {R}^p \rightarrow \mathbb {R}^{p*m}\) denote the function that maps all sequential features to the complete hidden state vector.

Beyond single sequential features, the iLSTM layer can encode values of a pairwise sequential feature interaction (j, k) in \((1,\ldots ,p) \times (1,\ldots ,p)\) into a vector \(\textbf{h}^{j,k} \in \mathbb {R}^{m}\). Mathematically, the iLSTM computes such interactions as an additional sequential feature that does not interact with other features. The interactions to be used in PatWay-Net can be chosen manually or can be detected automatically using heuristics. We describe such a heuristic in Appendix A.

5.2.3 Connection module

In the connection module, the information from the static module and the sequential module are then combined to compute the estimations \({\hat{\textbf{y}}}\) for the target outcomes \(\textbf{y}\). Mathematically, we use the hidden outcome values \(o^1,\ldots ,o^q\) for static features, the hidden state values \(\textbf{h}^{1},\ldots ,\textbf{h}^{p}\) for sequential features, and potentially \(\textbf{h}^{j,k}\) for interacting sequential features \(j,k\in (1,\ldots ,p) \times (1,\ldots ,p)\). These values are then concatenated and mapped onto the output neuron to provide the estimations \({\hat{\textbf{y}}}\). The mapping is performed using a single feed-forward layer with sigmoid activation, as the prediction of patient activities (in our case ICU admission) is defined as a binary classification task.

5.3 Parameter optimization of the DNN model

All parameters from the three modules are combined into one DNN model in which these are optimized simultaneously. Let \(f_{\text {PatWay-Net}}\) denote this DNN model with parameters \(\beta \). Depending on the task, the fit of \(f_{\text {PatWay-Net}}\) to the target outcomes \(\textbf{y}\) is then measured by a loss function \(\mathcal {L}\). In our real-world data application, we use binary cross-entropy, as ICU admission represents a binary decision. Overall, we minimize the empirical risk, that is

where we iterate over the patient pathways s and over the prefixes for each patient pathway T.

We address this optimization problem using an adaptive moment estimation (Adam) optimizer [58] with default hyperparameters. For every epoch, we perform a mini-batch gradient descent to optimize the internal parameters batch-wise efficiently.

5.4 Interpretations of the DNN model

Based on the architectural design of PatWay-Net’s DNN model, different interpretation plots can be created, allowing an interpretation of how the model input affects the model output. The interpretation plots are part of a comprehensive dashboard, that serves as a decision support tool for clinical decision-makers (cf. Section 5.4). Table 3 provides an overview of the four interpretation plots that we propose in this paper, including plot names, the underlying equations, and short descriptions of the plots’ purposes.

In the real-life data application that follows, we prefer the designation (medical) indicator over feature because it is more comprehensible for decision-makers in the medical domain. Accordingly, we name our four interpretation plots medical indicator importance, medical indicator shape, medical indicator transition, and medical indicator development (see Table 3). The importance, shape, and transition plots are based on so-called shape functions [52]. Traditionally, shape functions are only computed for static features [e.g., 13, 16, 51, 52, 53, 54, 55]. However, one of our paper’s contributions is that we also extend their idea to sequential features to obtain interpretable model results for sequential features.

In general, shape functions describe the effect on the model output for various values of a single indicator. Thus, these plots answer the question, “How does the model output change for various values of a medical indicator?”. For a static indicator l, the shape function represents the function described by \(f_{MLP}^l\) and the corresponding parameters in the connection module, that is, it shows values \(\textbf{X}_{static}[j]\) for the l-th static indicator on the x-axis and

weighted by the parameters in the connection module, on the y-axis. For sequential data j, the iLSTM layer can be illustrated similarly, with the x-axis showing the values \(\textsf{X}^{(i)}_{seq}[j, t]\) of a sequential indicator j for an individual pathway and a single time step t, and the y-axis, showing the corresponding effects on the model output via

weighted by the parameters in the connection module. This plot can also be extended to interactions of two sequential indicators with a three-dimensional plot, in which the color denotes the interaction effect on the model output, as exemplarily shown in Appendix A. We call this plot the sequential medical indicator interaction plot. Note that the \(f_{iLSTM}\) layer preserves the history up to time step t. In our case, we are showing the effect of sequential data, depending on the history of a patient’s pathway.

An indicator importance can be derived by computing the area under the shape functions, that is, under the plots that are described in Eqs. 12 and 13. Thereby, PatWay-Net allows computing the overall importance of static and sequential indicators, answering the question, “Which medical indicators are the most important?”.

A medical indicator transition illustrates the change of effect on the model output for sequential data from time step \(t-1\) to t. This answers the question, “How does the model output change from the previous to the current time step?”. To answer this question, for a given sequential indicator j with the sequence \(\textsf{X}^{(i)}_{seq}[j]\), we calculate the difference in the effect in the sequential module between the time steps t and \(t-1\), that is, we calculate

weighted by the parameters in the connection module. To illustrate all combinations of changes in the value from the last to the current time step, along with the change of effect on the model output, we use a three-dimensional plot, in which the z-axis (the color) describes an increase or decrease in the change of the effect.

Lastly, a medical indicator development describes the trajectory of an indicator over time. Due to the design of PatWay-Net’s DNN model, we can illustrate the sequential effect over time. That is, the model output can be tracked for each time step of the patient pathway’s sequential information and plotted afterward. This plot is specifically useful to answer the question, “What effect did a sequential medical indicator of a given patient pathway have on the model output over time?”. As such, we adopt the general idea of Weinzierl et al. [36] to provide transparency at the local instance level. However, instead of using a post-hoc explanation method, we can directly plot the interpretable results from our sequential and connection module. Mathematically, this can be derived for a sequence \(\textsf{X}^{(i)}_{seq}[:\,t], \, t=1,\ldots ,T\) of indicator j through a plot, showing the time steps \(1,\ldots ,T\) on the x-axis, and, on the y-axis,

weighted by a scalar value from the connection module.

6 Evaluation and application of PatWay-Net

We evaluate PatWay-Net and demonstrate its applicability using a real-world use case from a Dutch hospital. After introducing the use case (Section 5.1) and describing the baseline models (Section 5.2), we perform a three-step evaluation procedure. First, we evaluate the predictive performance of PatWay-Net’s intrinsically interpretable DNN model through a benchmark study (Section 5.3). Second, we evaluate the meaningfulness of PatWay-Net’s interpretation plots through a demonstration as part of a comprehensive dashboard for clinical decision-makers and a discussion including clinical evidence of the visualized interpretation aspects (Section 5.4). Finally, we validate the utility of PatWay-Net for decision-makers through structured interviews with clinicians from different hospitals and different domains. The results of those interviews are also presented in Section 5.4, while additional information can be found in Appendix C.Footnote 3

6.1 Use case description

Our real-life publicly-available data set comprises pathways of patients with sepsis symptoms from a Dutch hospital with approximately 50,000 patients per year [11].Footnote 4 The hospital uses an enterprise resource planning (ERP) system to track all performed patient events. The process consists of logistical activities, including the patient’s stations through the hospital, and medical activities, such as blood value measurements and medical treatments. Although the aforementioned process can be described in a fairly structured manner based on the information provided by the use case provider [11], this structure is only reflected to a limited extent in the underlying event log. In addition, patients can run through different activities in highly individual pathways, making it difficult to detect patterns to estimate an individual pathway’s outcome manually.

Based on these patient pathways, we predict whether a patient will be admitted to the ICU. This prediction is highly relevant for both healthcare providers and insurance companies. First, capacity and staff planning in ICUs are crucial and influence the patient’s probability of recovery [59]. Second, admissions to the ICU for septic patients are among the highest costs compared to other diseases [24].

The patient events of the event log can be differentiated into 16 activities with different purposes, for example, release type, type of measurement, or stating whether the patient was admitted to normal care. They all represent sequential medical indicators. In addition to the control-flow information, the event log contains another 27 indicators. Three are sequential and numerical and represent the measured values of C-reactive protein (CRP), Leukocytes, and LacticAcid. Furthermore, patient Age is a numerical and static indicator. The summary statistics of the numerical indicators are presented in Table 4.

Besides Age, there are 22 categorical static indicators (e.g., type of medical staff executing the activity), or binary values (e.g., stating whether or not the patient received an infusion) [11]. To avoid data leakage, we remove the medical indicator diagnosis as the large majority of the patient pathways with certain diagnoses describe patients who are later admitted to the ICU. That is, it can be assumed that the hospital guidelines require all patients with a certain diagnosis to be admitted to the ICU. A detailed description of the further data preprocessing steps, as well as evidence that the size of the used event log is appropriate for PatWay-Net’s DNN model to achieve accurate and timely predictions, can be found in Appendix A.

6.2 Baseline models

We benchmark PatWay-NetFootnote 5 against three groups of ML approaches. The first group includes decision tree, K-nearest neighbor, naïve Bayes, and logistic regression. This group allows us to assess how well PatWay-Net performs compared to traditional shallow ML models that are intrinsically interpretable. These models are limited to processing static patient information and cannot handle sequential data. The second group includes random forest and XGBoost. This group allows us to assess how well PatWay-Net performs compared to commonly used black-box ML approaches. Like the first group, these models are limited to processing static patient information and cannot handle sequential data. Third, we include a state-of-the-art LSTM model that uses the static module of PatWay-Net and combines it with an unrestricted LSTM cell [44] to process the sequential patient information. This model represents the model with the highest flexibility and modeling capacity. Yet, it does not allow for a transparent interpretation of how the predictions are derived. Thus, its applicability in high-stakes decisions is generally limited.

These baseline models are then compared to our proposed PatWay-Net. Here, we compare two versions. First, PatWay-Net without any interactions between the sequential medical indicators. Second, PatWay-Net with pairwise interaction between a set of sequential medical indicators.Footnote 6 In doing so, we tune models by applying a grid search, evaluate models by performing a five-fold stratified cross-validation, and measure the predictive performance of models by calculating \(AUC_{ROC}\) and F1-score. More details about our model tuning, model evaluation, and model selection can be found in Appendix A.

6.3 Results on predictive performance

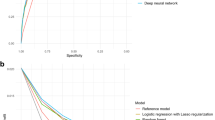

The predictive performance of PatWay-Net and the baselines for the use case are summarized in Table 5. Among the interpretable shallow ML models, logistic regression and naïve Bayes models outperform the decision tree and K-nearest neighbor models with an improvement of 1.9 to 8.9 percentage points in terms of \(AUC_{ROC}\) performance on the test sets.

PatWay-Net outperforms all shallow ML baseline models across all metrics. We observe that PatWay-Net, without any interactions, pushes the predictive performance by 5.1% in comparison to shallow ML models. By incorporating an interaction term in our sequential module, we achieve an \(AUC_{ROC}\) performance on the test sets of 0.734, which is an improvement of 7.3% compared to the logistic regression, and an improvement of 10.4% compared to the decision tree. PatWay-Net with the interaction in the sequential module even outperforms the non-interpretable models XGBoost and random forest by 4.4% and 1.2%, respectively. We conduct a Friedman test and a Wilcoxon signed-rank test with Holm p-value adjustment [60], which shows that the difference is statistically significant with \(\alpha = 1\%\) for the decision tree, logistic regression, and K-nearest neighbor models, and with \(\alpha = 10\%\) for the naïve Bayes model. Further information on the statistical tests can be found in Appendix A.

As an upper bound, the state-of-the-art LSTM network leads to an \(AUC_{ROC}\) performance on the test sets of 0.757, which is only slightly higher than our proposed PatWay-Net. However, it does not allow any intrinsic model interpretation.

6.4 Results on interpretation

PatWay-Net’s interpretation plots are presented to medical decision-makers via a comprehensive dashboard (see Fig. 3), which is structured into four parts, a) to d).

Part a) provides general (static) information on a patient, such as age, height, or weight as well as their history during their current stay. For example, it shows when a patient has been admitted or when certain measurements have been taken. Part b) provides a short textual description of the urgency of the ICU admission depending on the model’s prediction. Parts c) and d) comprise PatWay-Net’s interpretation plots. In particular, part c) shows an overview of the most impactful medical indicators on the model prediction, and d) provides further interpretation details on selected static or sequential medical indicators. In what follows, we focus on parts c) and d) of the dashboard and demonstrate PatWay-Net’s interpretation plots for the medical indicator importance as well as static and sequential medical indicators.

The interviews conducted with medical experts show that the dashboard is helpful as a support for decision-making. Moreover, all medical experts confirm the usefulness of the interpretation plots to understand at a glance what caused the prediction. The interviewees also positively assessed the visual plots and thought that such plots are the language that is spoken medically. All interviewees stated that they prefer simple plots because they usually have to act relatively quickly. Another outcome of the interviews is that interpretations in the form of PatWay-Net’s dashboard would positively influence their trust in the predictions. Therefore, they think that it increases the acceptance of such predictions. Additional information on the interviews can be found in Appendix C.

6.4.1 Importance of medical indicators

Figure 4 shows the medical indicator importance plot, highlighting the 20 most impactful indicators in our model. These indicators have the greatest effects on the model output in forecasting the potential need for ICU admission.

The medical indicators Oligurie, Hypotensie (hypotension), and Leukocytes emerge as the top contributors with the most substantial impact on the model prediction. The static indicator Oligurie, representing decreased urine output, is an essential medical indicator in our model for predicting ICU admission as it is often associated with severe sepsis due to its connection with reduced kidney perfusion [e.g., 61]. Likewise, Hypotensie, or low blood pressure, is a critical static medical indicator in our model as it can represent a possible consequence of significant blood vessel dilation caused by systemic inflammation [e.g., 62]. The sequential indicator Leukocytes, that is white blood cell count, also holds significant importance in our model for the prediction of ICU admission. Variations in leukocyte counts often signal the body’s immune response to infections such as sepsis [e.g., 63]. As our proposed ML framework’s unique capability is to include this sequential medical indicator in the analysis, it enables us to compare the importance of sequential indicators with the importance of the static indicators directly.

6.4.2 Static medical indicators

Figure 5 shows the interpretation plot for the static medical indicator Age in the dashboard. Specifically, it shows PatWay-Net’s shape function for this specific indicator, revealing a non-linear effect of Age on ICU admission prediction. This demonstrates the flexibility of PatWay-Net’s DNN model in capturing arbitrary relationships between individual medical indicators and the prediction target, which, inspired by traditional GAMs [e.g., 54, 55, 51, 53, 52], is more suitable for detecting and learning complex patterns in data than simple linear models.

Interestingly, the effect for the medical indicator Age decreases as the value (i.e., the patient’s age) increases. At first glance, this trend may appear counter-intuitive, considering that higher age is typically associated with more severe sepsis cases and a higher risk of adverse outcomes [e.g., 64]. However, the patient population in our dataset is comparatively old, which might be a reason for this observed medical indicator shape. Additionally, there could be specific hospital protocols or clinical guidelines that apply to patients above a certain age, which could influence the patient’s pathway and the eventual outcome.

6.4.3 Sequential medical indicators

Figure 6 shows the interpretation plots for the sequential medical indicator Leukocytes in the dashboard, depending on a given patient’s previous pathway. The plots reveal the model’s transparent decision logic regarding the development, shape, and transition of the medical indicator.

The medical indicator shape plot (lower left in Fig. 6) shows the shape function of the sequential medical indicator Leukocytes. Again, we can see the flexibility of PatWay-Net’s DNN model in capturing non-linear relationships between the medical indicator and the prediction target. This time, however, the indicator represents a sequential feature captured in its natural form, which constitutes an innovative advancement over traditional interpretable models, such as GAMs and decision trees.

Leukocytes play a pivotal role in the body’s immune response, and a considerable alteration in the leukocyte count is a common physiological response to sepsis [e.g., 63]. In Fig. 6, while the Leukocytes value decreases, the effect on the prediction for ICU admission increases considerably. Thus, we can see a substantial alteration in the leukocyte count. Moreover, an elevated leukocyte count can be a typical indicator of an ongoing systemic inflammatory response to an infection, like sepsis [e.g., 65]. However, a decrease in Leukocytes can also occur in severe cases where the immune system is overwhelmed, indicating a worsening of the patient’s condition [e.g., 66]. In such an acute case, there exists a potential necessity for the patient to receive intensive care.

The medical indicator transition plot (lower right in Fig. 6) shows how the prediction changes from the previous to the current Leukocytes value measurement. The figure illustrates that a decrease in the Leukocytes value (from a previous value of 0.0 to a current value of 1.0) corresponds to an increased probability of the patient requiring ICU admission. This is consistent with clinical understanding, as a decrease in leukocytes often denotes a heightened vulnerability to developing an infection like sepsis, suggesting a more severe disease course that may require intensive care. Conversely, if there was a low Leukocytes value at the previous time step that subsequently increases by the current time step to a normal value, prediction indicates a lower likelihood of the patient being transferred to the ICU. This could suggest that the patient’s immune response is stabilizing, or the infection is being effectively controlled, thus reducing the necessity for intensive care.

The medical indicator development plot (upper plot in Fig. 6) shows what effect the sequential medical indicator Leukocytes has on the model prediction over time. Up to time step three (2014-09-18 13:46 - 2014-09-18 13:56), the effect of Leukocytes is high since no measurement has been taken yet. From time step three to four (2014-09-18 13:56 - 2014-09-18 14:11), the effect on the prediction decreases, as a medium-high Leukocytes value of 0.51 has been measured in this time period.

7 Discussion and future work

7.1 Implications for healthcare management and practice

Our research has multiple implications for healthcare management and practice. First, PatWay-Net supports a straightforward analysis of patient pathways using patients’ historical event data. In this way, subjectivity is avoided, and manual effort can be reduced to a minimum in decision-making. Likewise, our model provides high predictive performance in the context of patients with symptoms of sepsis without relying on explicit process knowledge. This allows flexibility for decision support applications in highly complex and dynamic healthcare environments. In our case, experiments have shown that the predictive performance is superior to traditional approaches by combining patients’ static features with sequential features in a DNN architecture that remains fully interpretable. This is a great advantage because prediction tasks in the healthcare sector are usually dominated by linear and logistic regression models with underlying static features to ensure a high degree of transparency [e.g., 12, 13, 14, 15].

At the same time, PatWay-Net can improve decision-making in both patient-specific and administrative decision contexts. For example, in a patient-specific decision context, a model interpretation for admission to ICU prediction may indicate an increase in a patient’s probability of being transferred to the ICU after being treated with a certain medication. Based on this insight, medical experts have the chance to intervene and apply corrective treatments to prevent worse consequences. In an administrative context, model interpretation could reveal shortcomings in the hospital’s IT system. For instance, conflicting predictions between PatWay-Net and clinicians can be traced down to potentially missing patient information within an ERP system, allowing for optimization of hospital operations.

Finally, PatWay-Net provides timely decision support. From a technical point of view, PatWay-Net’s inference time is similar to one of the shallow interpretable models as the underlying model of PatWay-Net represents a function mapping the data input to the prediction output. Compared to the inference time, the training time of PatWay-Net is considerably higher than the training time of the shallow interpretable models as PatWay-Net is a DNN with a recurrent iLSTM cell. Further, the training time increases with each sequential feature as each sequential feature is passed through a single corridor in the iLSTM cell. However, for our purpose, the inference time is far more important than the training time as the models are created and trained before they are applied in an online mode where the models are used for providing effective decision support. On the other hand, PatWay-Net’s interpretations can be immediately retrieved from the model itself. In doing so, it is considerably faster than applying a post-hoc explanation method such as SHAP for reconstructing explanations for non-interpretable DNN models.

7.2 Implications for research

PatWay-Net combines two crucial streams of research. The first stream follows the idea that more complex models, such as DNNs, can naturally model specific structures of the underlying data and, thereby, increase predictive performance. Thus, PatWay-Net employs an iLSTM in its sequential module to model temporal structures of sequential data, and several MLPs in its static module to model non-linear structures of static data. The second stream follows the idea that explanations of complex models can never provide the same understanding as that of intrinsically interpretable models [33, 49]. Consequently, approximated explanations of complex models should be avoided or used carefully. As a remedy, PatWay-Net remains fully interpretable and prevents uncontrolled interactions of static and sequential features by incorporating the main principle of GAMs into its entire DNN architecture. As such, the model also provides an extension to traditional GAMs, which are unable to capture sequential data structures in their natural form [e.g., 54, 55, 51, 53, 52].

Within the realm of medical research, this work is aligned with emerging trends advocating for a shift from static, tabular data to multimodal data representation [67]. Traditional approaches often simplify complex health data such as images and vital signs into aggregated statistics or explicit features, thereby losing important information and only capturing a snapshot of the patient’s health. Our framework addresses this gap by accurately modeling health trajectories through both, sequential and static data. The architecture is not limited to merely processing patient pathway data but it can also be adapted to other temporal sequences commonly encountered in healthcare, such as data from wearable and ambient biosensors [68]. By facilitating a more rigorous representation of human data, we improve not only the predictive performance but also the clinical utility of ML models in healthcare settings.

7.3 Limitations and outlook

As with any research, our work is not free of limitations. First, we focused in this paper on a use case of patients with symptoms of sepsis to demonstrate the benefits of PatWay-Net in cases where trust in the ML system is crucial to allow for practical applications. However, the application of PatWay-Net is not limited to this use case but can also be used to predict process-related outcomes in other tasks or domains involving static and sequential features, as shown by the results of the additional use cases in Appendix D. Here, we find mixed results, highlighting that full generalizability in other contexts requires further work.

Second, the event log sample from the real-life data application was relatively small, and using this sample for training PatWay-Net showed a performance decrease from validation to test scores. This difference could be an indicator of model selection criterion overfitting [69], which might affect PatWay-Net’s generalizability to unseen data. However, to mitigate the effect of this overfitting type, we followed the suggestion from Cawley and Talbot [69] and adopted solutions for the problem of overfitting to the training criterion. In particular, we tested model regularization, hyperparameter minimization, and early stopping [69,70,71]. Among these solutions, performing early stopping achieved the best predictive performance for our use case of patients with symptoms of sepsis. In addition, results that we obtained from a further use case on loan applications (see Appendix D) confirm that this type of overfitting is likely to be less present when the event log size is larger. However, despite these overfitting concerns, PatWay-Net achieved relatively high predictive performance, and being a neural network, it can be expected that its predictive performance will further improve when trained with more data [22].

Third, PatWay-Net’s sequential medical indicator plots provide interpretations that are tied to a patient’s individual pathway. This limitation is necessary because the predictive effect of a sequential medical indicator within our iLSTM cell is determined by the patient-specific trajectory over previous time steps. As a result, varying historical trajectories can lead to different outcomes, which may also affect the results of the interpretation plots. However, at this point, it is not practical for clinical decision support to include global interpretation plots for all conceivable trajectory variants across all patients in a single dashboard. Therefore, we decided to focus on developing a patient-specific dashboard with all relevant information to support clinicians in an easily accessible way. Nonetheless, future research should address this limitation to identify new ways of how feature effects of multiple sequences over several time steps can be visualized in a comprehensive, yet fully understandable manner. This may require new visualization techniques (e.g., interactive filter mechanisms) or additional abstraction layers (e.g., clustering of patient trajectories leading to similar outcomes and interpretation plots), which offer promising directions for future work.

Fourth, PatWay-Net’s mechanism to automatically detect and integrate interactions covers pairwise interactions among sequential features. For the use case addressed in this paper, we can show that the predictive performance of PatWay-Net with this mechanism is close to the predictive performance of an unrestricted LSTM cell (see Appendix A). Nevertheless, we assume that other types of interactions (e.g., more complex interactions between sequential features or interactions between sequential and static features) are more present in other use cases. The results obtained for a further use case on hospital billings (see Appendix D) give the first indication for this assumption and therefore provide an entry point for future research.

Fifth, the proposed version of PatWay-Net does not currently consider a mechanism for selecting relevant features. This may become relevant when dealing with a large collection of features in other real-world applications, where the full set of features may lead to impractically large computational costs and a higher risk of overfitting. Future research could follow up on this point to investigate which feature selection methods are appropriate for combining static and sequential features. Nevertheless, PatWay-Net already provides some guidance for selecting the most important features (or medical indicators) through its medical indicator importance plot, thus facilitating clinicians or hospital management when dealing with a large collection of medical indicators.

Sixth, as with any ML model, PatWay-Net’s results are only as good as the data it consumes. That is, PatWay-Net is not only a reflection of possibly biased decisions made in the past but also of any data quality issues embedded in the data set. For example, in our use case of patients with symptoms of sepsis, some interpretation plots showed counter-intuitive relationships between medical indicators and the prediction target that may not be reflected in the medical literature. These findings underscore the need for rigorous data management in hospital operations to enable analytics tools like PatWay-Net to enhance decision-making substantially. Similarly, we want to emphasize that the learned feature effects should not be interpreted causally, as they are still based on correlations. Thus, it is not possible to say with certainty why some of the effects shown in the interpretation plots are present. This could be due to correlations with other (unmeasured) features, or other underlying phenomena. However, despite these limitations, PatWay-Net still offers a fully transparent model that can be used to allow clinicians to compare the model results with their domain knowledge to iteratively debug and improve the model, identify underlying data quality issues, or initiate further investigations for the identification of causal relationships.

Finally, our current approach pertains to the creation of a clinical dashboard that relies solely on the provided interpretation plots generated from the shape functions of PatWay-Net. While these plots offer exact insights into the model’s decision logic, they may still lack the level of context and intuitiveness required for effective clinical application. In the next steps, we intend to address this limitation by harnessing the capabilities of large language models [72, 73]. By incorporating a large language model in an adaptive dialogue system, we aim to provide more intuitive and contextually relevant explanations for clinical professionals when presenting the interpretation plots. This enhancement will not only make the model’s outputs more accessible but also foster improved communication between the model and the healthcare practitioners, thereby enhancing the model’s utility in real-world clinical settings.

Data Availability

Only a public data set was used, as cited in the text.

Code availability

The code is available under the link provided in the text.

Notes

We make a strict distinction between the terms “interpretation” and “explanation”. Interpretation is derived from models designed to be intrinsically interpretable, whereas an explanation can be created by applying a post-hoc analytical explainable-artificial-intelligence approach to a black-box model (cf. Section 3).

For reproducibility, all developed and used material can be found here: https://github.com/fau-is/patway-net

We further verify and demonstrate the validity of PatWay-Net’s created interpretation plots by comparing these with post-hoc-generated explanations for black-box models, and verify the end-to-end training capability of PatWay-Net’s DNN model (Appendix A). We also perform a simulation study (Appendix B) and verify the prediction capability of PatWay-Net’s DNN model with two additional data sets from other domains (Appendix D).

For simplicity, we will sometimes refer to PatWay-Net’s DNN model as PatWay-Net in the following.

Information on further experiments with multiple pairwise interactions can be found in Appendix A.

For the purpose of reproducibility, additional material can be found in the repository.

For better interpretability, we applied post-processing to the effect values by adjusting the y-axis to 0.

We select these time steps because they are the last time steps at which a Heart Rate and Blood Pressure activity occur. However, the remaining figures can be found in the repository.

The interview guideline can be found in the online repository.

References

Rojas E, Munoz-Gama J, Sepúlveda M, Capurro D (2016) Process mining in healthcare: A literature review. J Biomed Inform 61:224–236. https://doi.org/10.1016/j.jbi.2016.04.007

Morton A, Bish E, Megiddo I, Zhuang W, Aringhieri R, Brailsford S, Deo S, Geng N, Higle J, Hutton D et al (2021) Introduction to the special issue: Management science in the fight against Covid-19. Health Care Manage Sci 24(2):251–252. https://doi.org/10.1007/s10729-021-09569-x

Bertsimas D, Boussioux L, Cory-Wright R, Delarue A, Digalakis V, Jacquillat A, Kitane DL, Lukin G, Li M, Mingardi L et al (2021) From predictions to prescriptions: A data-driven response to COVID-19. Health Care Manage Sci 24:253–272. https://doi.org/10.1007/s10729-020-09542-0

Janiesch C, Zschech P, Heinrich K (2021) Machine learning and deep learning. Electron Mark 31(3):685–695. https://doi.org/10.1007/s12525-021-00475-2

Kraus M, Feuerriegel S, Oztekin A (2020) Deep learning in business analytics and operations research: Models, applications and managerial implications. Eur J Oper Res 281(3):628–641. https://doi.org/10.1016/j.ejor.2019.09.018

Barrera Ferro D, Brailsford S, Bravo C, Smith H (2020) Improving healthcare access management by predicting patient no-show behaviour. Decis Support Syst 138:113398. https://doi.org/10.1016/j.dss.2020.113398

Yang C-S, Wei C-P, Yuan C-C, Schoung J-Y (2010) Predicting the length of hospital stay of burn patients: Comparisons of prediction accuracy among different clinical stages. Decis Support Syst 50(1):325–335. https://doi.org/10.1016/j.dss.2010.09.001

Elitzur R, Krass D, Zimlichman E (2023) Machine learning for optimal test admission in the presence of resource constraints. Health Care Manage Sci 1–22. https://doi.org/10.1007/s10729-022-09624-1

Krämer J, Schreyögg J, Busse R (2019) Classification of hospital admissions into emergency and elective care: A machine learning approach. Health Care Manag Sci 22:85–105. https://doi.org/10.1007/s10729-017-9423-5

Komorowski M, Celi LA, Badawi O, Gordon AC, Faisal AA (2018) The artificial intelligence clinician learns optimal treatment strategies for sepsis in intensive care. Nat Med 24(11):716–1720. https://doi.org/10.1038/s41591-018-0213-5

Mannhardt F, Blinde D (2017) Analyzing the trajectories of patients with sepsis using process mining. In: Proceedings of the 18th international working conference on business process modeling, pp 72–80

Lee S-Y, Chinnam RB, Dalkiran E, Krupp S, Nauss M (2020) Prediction of emergency department patient disposition decision for proactive resource allocation for admission. Health Care Manage Sci 23(3):339–359. https://doi.org/10.1007/s10729-019-09496-y

Caruana R, Lou Y, Gehrke J, Koch P, Sturm M, Elhadad N (2015) Intelligible models for health care: Predicting pneumonia risk and hospital 30-day readmission. In: Proceedings of the 21th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, pp 1721–1730

Shipe ME, Deppen SA, Farjah F, Grogan EL (2019) Developing prediction models for clinical use using logistic regression: An overview. J Thorac Dis 11(S4):574–584. https://doi.org/10.21037/jtd.2019.01.25

Bertoncelli CM, Altamura P, Vieira ER, Iyengar SS, Solla F, Bertoncelli D (2020) Predictmed: A logistic regression–based model to predict health conditions in cerebral palsy. Health Inform J 26(3):2105–2118. https://doi.org/10.1177/14604582198985

Magunia H, Lederer S, Verbuecheln R, Gilot BJ, Koeppen M, Haeberle HA, Mirakaj V, Hofmann P, Marx G, Bickenbach J et al (2021) Machine learning identifies ICU outcome predictors in a multicenter COVID-19 cohort. Critical Care 25(1):295. https://doi.org/10.1186/s13054-021-03720-4

Bertsimas D, Dunn J, Gibson E, Orfanoudaki A (2022) Optimal survival trees. Mach Learn 111(8):2951–3023. https://doi.org/10.1007/s10994-021-06117-0

Liu M, Guo C, Guo S (2023) An explainable knowledge distillation method with XGBoost for ICU mortality prediction. Comput Biol Med 152:106466. https://doi.org/10.1016/j.compbiomed.2022.106466

Reddy BK, Delen D (2018) Predicting hospital readmission for lupus patients: An RNN-LSTM-based deep-learning methodology. Comput Biol Med 101:199–209. https://doi.org/10.1016/j.compbiomed.2018.08.029

Ye X, Zeng QT, Facelli JC, Brixner DI, Conway M, Bray BE (2020) Predicting optimal hypertension treatment pathways using recurrent neural networks. Int J Med Inform 139:104122. https://doi.org/10.1016/j.ijmedinf.2020.104122

Zilker S, Weinzierl S, Zschech P, Kraus M, Matzner M (2023) Best of both worlds: Combining predictive power with interpretable and explainable results for patient pathway prediction. In: Proceedings of the European Conference on Information Systems

LeCun Y, Bengio Y, Hinton G (2015) Deep learning. Nature 521(7553):436–444. https://doi.org/10.1038/nature14539

Barredo Arrieta A, Díaz-Rodríguez N, Del Ser J, Bennetot A, Tabik S, Barbado A, Garcia S, Gil-Lopez S, Molina D, Benjamins R, Chatila R, Herrera F (2020) Explainable artificial intelligence (XAI): Concepts, taxonomies, opportunities and challenges toward responsible AI. Inf Fus 58:82–115. https://doi.org/10.1016/j.inffus.2019.12.012

Moerer O, Plock E, Mgbor U, Schmid A, Schneider H, Wischnewsky MB, Burchardi H (2007) A german national prevalence study on the cost of intensive care: An evaluation from 51 intensive care units. Critical Care 11(3):1–10. https://doi.org/10.1186/cc5952

Mans RS, van der Aalst WMP, Vanwersch RJB (2015) Process mining in healthcare: Evaluating and exploiting operational healthcare processes. Springer

Rebuge Á, Ferreira DR (2012) Business process analysis in healthcare environments: A methodology based on process mining. Inf Syst 37(2):99–116. https://doi.org/10.1016/j.is.2011.01.003

Wiens J, Saria S, Sendak M, Ghassemi M, Liu VX, Doshi-Velez F, Jung K, Heller K, Kale D, Saeed M et al (2019) Do no harm: A roadmap for responsible machine learning for health care. Nat Med 25(9):1337–1340. https://doi.org/10.1038/s41591-019-0548-6

Rhodes A, Evans LE, Alhazzani W, Levy MM, Antonelli M, Ferrer R, Kumar A, Sevransky JE, Sprung CL, Nunnally ME et al (2017) Surviving sepsis campaign: International guidelines for management of sepsis and septic shock: 2016. Intensive Care Med 43:304–377. https://doi.org/10.1007/s00134-017-4683-6

Rello J, Valenzuela-Sánchez F, Ruiz-Rodriguez M, Moyano S (2017) Sepsis: A review of advances in management. Adv Therapy 34:2393–2411. https://doi.org/10.1007/s12325-017-0622-8

Schuurman AR, Sloot PM, Wiersinga WJ, van der Poll T (2023) Embracing complexity in sepsis. Critical Care 27(1):102. https://doi.org/10.1186/s13054-023-04374-0

Thorsen-Meyer H-C, Nielsen AB, Nielsen AP, Kaas-Hansen BS, Toft P, Schierbeck J, Strøm T, Chmura PJ, Heimann M, Dybdahl L et al (2020) Dynamic and explainable machine learning prediction of mortality in patients in the intensive care unit: A retrospective study of high-frequency data in electronic patient records. Lancet Digit Health 2(4):179–191. https://doi.org/10.1016/S2589-7500(20)30018-2

Loh HW, Ooi CP, Seoni S, Barua PD, Molinari F, Acharya UR (2022) Application of explainable artificial intelligence for healthcare: A systematic review of the last decade (2011–2022). Comput Methods Prog Biomed 226:107161. https://doi.org/10.1016/j.cmpb.2022.107161

Rudin C (2019) Stop explaining black box machine learning models for high stakes decisions and use interpretable models instead. Nat Mach Intell 1(5):206–215. https://doi.org/10.1038/s42256-019-0048-x

Moreno RP, Metnitz PGH, Almeida E, Jordan B, Bauer P, Campos RA, Iapichino G, Edbrooke D, Capuzzo M, Le Gall J-R, SAPS 3 Investigators (2005) SAPS 3–From evaluation of the patient to evaluation of the intensive care unit. Part 2: Development of a prognostic model for hospital mortality at ICU admission. Intensive Care Med 31(10): 1345–1355. https://doi.org/10.1007/s00134-005-2763-5

Galanti R, Coma-Puig B, d Leoni M, Carmona J, Navarin N (2020) Explainable predictive process monitoring. In: Proceedings of the 2nd international conference on process mining, pp 1–8

Weinzierl S, Zilker S, Brunk J, Revoredo K, Matzner M, Becker J (2020) XNAP: Making LSTM-based next activity predictions explainable by using LRP. In: Proceedings of the BPM 2020 international workshop, pp 129–141

Breuker D, Matzner M, Delfmann P, Becker J (2016) Comprehensible predictive models for business processes. MIS Quarterly 40(4):1009–1034

Lakshmanan GT, Shamsi D, Doganata YN, Unuvar M, Khalaf R (2015) A Markov prediction model for data-driven semi-structured business processes. Knowl Inf Syst 42(1):97–126. https://doi.org/10.1007/s10115-013-0697-8

Kaji DA, Zech JR, Kim JS, Cho SK, Dangayach NS, Costa AB, Oermann EK (2019) An attention based deep learning model of clinical events in the intensive care unit. PloS one 14(2):0211057. https://doi.org/10.1371/journal.pone.0211057

Zhang D, Yin C, Hunold KM, Jiang X, Caterino JM, Zhang P (2021) An interpretable deep-learning model for early prediction of sepsis in the emergency department. Patterns 2(2):100196. https://doi.org/10.1016/j.patter.2020.100196

Esteban C, Staeck O, Baier S, Yang Y, Tresp V (2016) Predicting clinical events by combining static and dynamic information using recurrent neural networks. In: Proceedings of the 2016 IEEE international conference on healthcare informatics, pp 93–101

Breiman L (2001) Random forests. Mach Learn 45(1):5–32. https://doi.org/10.1023/A:1010933404324

Chen T, Guestrin C (2016) XGBoost: A scalable tree boosting system. In: Proceedings of the 22nd international conference on knowledge discovery and data mining, pp 785–794

Hochreiter S, Schmidhuber J (1997) Long short-term memory. Neural Comput 9(8):1735–1780. https://doi.org/10.1162/neco.1997.9.8.1735

Rai A (2020) Explainable AI: From black box to glass box. J Acad Market Sci 48(1):137–141. https://doi.org/10.1007/s11747-019-00710-5

Lundberg SM, Lee S-I (2017) A unified approach to interpreting model predictions. In: Proceedings of the 30th conference on advances in neural information processing systems, pp 4765–4774

Senoner J, Netland T, Feuerriegel S (2022) Using explainable artificial intelligence to improve process quality: Evidence from semiconductor manufacturing. Manage Sci 68(8):5704–5723. https://doi.org/10.1287/mnsc.2021.4190

Babic B, Gerke S, Evgeniou T, Cohen IG (2021) Beware explanations from AI in health care. Science 373(6552):284–286. https://doi.org/10.1126/science.abg1834

Zschech P, Weinzierl S, Hambauer N, Zilker S, Kraus M (2022) GAM(e) changer or not? An evaluation of interpretable machine learning models based on additive model constraints. In: Proceedings of the 30th European Conference on Information Systems, pp 1–18

Hastie T, Tibshirani R (1986) Generalized additive models. Stat Sci 1(3):297–310. https://doi.org/10.1214/ss/1177013604

Lou Y, Caruana R, Gehrke J, Hooker G (2013) Accurate intelligible models with pairwise interactions. In: Proceedings of the 19th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, pp 623–631

Lou Y, Caruana R, Gehrke J (2012) Intelligible models for classification and regression. In: Proceedings of the 18th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, pp 150–158

Yang Z, Zhang A, Sudjianto A (2021) Gami-net: An explainable neural network based on generalized additive models with structured interactions. Pattern Recogn 120:108192. https://doi.org/10.1016/j.patcog.2021.108192

Agarwal R, Melnick L, Frosst N, Zhang X, Lengerich B, Caruana R, Hinton GE (2021) Neural additive models: Interpretable machine learning with neural nets. In: Proceedings of the 34th Conference on Advances in Neural Information Processing Systems, pp 4699–4711

Kraus M, Tschernutter D, Weinzierl S, Zschech P (2023) Interpretable generalized additive neural networks. Eur J Oper Res. https://doi.org/10.1016/j.ejor.2023.06.032

Marquez-Chamorro AE, Resinas M, Ruiz-Cortes A (2018) Predictive monitoring of business processes: A survey. IEEE Trans Serv Comput 11(6):962–977. https://doi.org/10.1109/TSC.2017.2772256

Huang Z, Lu X, Duan H, Fan W (2013) Summarizing clinical pathways from event logs. J Biomed Inform 46(1):111–127. https://doi.org/10.1016/j.jbi.2012.10.001