Abstract

Digital platforms facilitate coordination, match-making, and value creation for large groups of individuals. In consumer-to-consumer (C2C) online sharing platforms specifically, trust between these individuals is a central concept in determining which individuals will eventually engage in a transaction. The majority of today’s online platforms draw on various types of cues for group coordination and trust building among users. Current research widely accepts the capacity of such cues but largely ignores their changing effectiveness over the course of a user’s lifetime on the platform. To address this gap, we conduct a laboratory experiment, studying the interplay of cognitive and affective trust cues over the course of a multi-period trust experiment for the coordination of groups. We find that the trust-building capacity of affective trust cues is time-dependent and follows an inverted U-shape, suggesting a dynamic complementarity of cognitive and affective trust cues.


1 Introduction

Digital market platforms critically depend on mechanisms of group coordination, that is, matching the different sides–typically supply and demand (Bui et al. 2006; Dann et al. 2020; Lusch and Nambisan 2015; Ströbel and Stolze 2002). As “posting services [on digital market platforms] does not […] necessarily lead to market transactions” (Bui et al. 2006, p. 469), platforms facilitate coordination and the ensuing value creation for large groups of individuals. Trust between individuals is a central concept for whether or not group coordination, collaboration, and transactions will eventually take place (Cheng et al. 2021; Cheng and Macaulay 2014; Engelmann et al. 2022; Lai and Turban 2008; Teubner and Camacho 2023). Consumer-to-consumer (C2C) platforms represent a very successful and fast-growing business model (Gawer 2014; Mittendorf et al. 2019; Saadatmand et al. 2019; Sundararajan 2016; Zimmermann et al. 2018). For instance, the accommodation sharing platform Airbnb was only founded in 2007 but has, as of now, more than 4 million active users and facilitated over 1.4 billion stays (Airbnb 2023). For such platforms, creating and maintaining trust among users is one of the most crucial endeavors for the coordination and realization of transactions (Dann et al. 2019; Jensen et al. 2015; Möhlmann 2021; Teubner et al. 2021; Teubner and Camacho 2023). Platforms can create trust between users by implementing different types of mechanisms (Nicolaou and McKnight 2006). Beyond cognitive trust cues such as (numerical) ratings, affective trust cues engender trust through emotions (Komiak and Benbasat 2006; Stewart and Gosain 2006); probably the most widely used example is the profile photo (Ert et al. 2016; Riedl et al. 2014).

Previous research builds on the implicit assumption that antecedents of trust have relatively stable effects (McKnight et al. 1998, 2002a), while little is known about how the effectiveness of trust cues holds up over time, that is, when they are applied again and again (Möhlmann 2021). New users in particular face the dilemma of limited credibility (i.e., an empty track record), which impedes their ability to transact (and hence to build a track record). Surprisingly, most previous research on trust cues in online platforms takes a snap-shot perspective and has not yet addressed dynamic trust perceptions over the course of time (Cabral and Hortaçsu 2010; McKnight et al. 1998, 2002a). We hence ask:

How do cognitive and affective trust cues affect trusting behavior over time—and how do they complement each other?

To address this research question, we build on insights from the Elaboration Likelihood Model (ELM) on information processing (Petty and Cacioppo 1986; Petty et al. 1983) and apply it in the context of trust research. We conduct a controlled laboratory experiment, investigating trusting behavior across several interactions with varying counterparts. Previous research into rating systems (Abrahao et al. 2017; Banerjee et al. 2017; Cheng et al. 2019) and profile photos (Ert et al. 2016; Fagerstrøm et al. 2017) has commonly conceptualized trust through self-reported scales on (hypothetical) intentions. While such approaches have undoubtedly informed our understanding of trust within online market places, they have not considered the emergence of trusting behavior across several interactions. To capture trust behavior over time, we hence conduct an experiment in which participants are incentivized by monetary outcomes and interact within a controlled peer-to-peer platform environment.

In line with a large body of experimental research (Blue et al. 2020; Ewing et al. 2019; Gefen et al. 2008), we operationalize trust as the exhibited behavior in an adapted version of the seminal trust game by Berg et al. (1995). Thereby, we extend the original experiment with multiple periods and endogenous match-making (i.e., participants themselves decide with whom to interact), where participants take the role of consumers or providers. Specifically, we employ a 2 (reputation system: provided/not provided) × 2 (profile photos: provided/not provided) between-subjects design. Further, our experimental design reflects that (1) peer-to-peer matches occur endogenously as the result of a market-based request-and-response process and (2) exchanges create (economic) exposure for both sides (e.g., risk of fraud, theft, verbal/physical violence, privacy invasions, etc.). Although the risk of worst-case scenarios is commonly considered very low, the list of reported incidents is long (AirbnbHell 2023).

We contribute to trust research in multiple ways (Gawer 2014; Lucas et al. 2021; Möhlmann 2021). Our starting point is previous trust research that builds on the implicit assumption that the effects of trust cues are time-invariant (McKnight et al. 1998, 2002a) and increase with quality and quantity (Cabral and Hortaçsu 2010). In contrast to this, we challenge existing stability assumptions, putting forward that trust cues may be less stable and hence have temporary effects (Bhattacherjee and Sanford 2006; Petty and Cacioppo 1986; Petty et al. 1983). Indeed, our findings indicate that the trust-building capacity of cognitive trust cues is very limited initially but increases steadily, while affective trust cues start out from a higher level and follow an inverted u-shape form over time. We argue that affective trust cues may hence serve as a powerful complement in early stages of platform evolution and may thus help to overcome the inherent “cold start problem” of platforms in general and the users thereon in particular (Wessel et al. 2017).

2 Theoretical Background

2.1 Elaboration Likelihood Model (ELM)

When applied in the context of trust research, the Elaboration Likelihood Model (ELM) on information processing (Petty and Cacioppo 1986; Petty et al. 1983) challenges the assumption that in-the-moment snap-shots are adequate to sufficiently capture the dynamics of trust and how they may play out over time. Petty and colleagues distinguish two different routes of information processing–the central and the peripheral route. The central route refers to changes in attitudes resulting from an individual’s cognitive considerations of the information’s actual quality, such as a careful calculation of costs and benefits (e.g., Bhattacherjee and Sanford 2006). Second, the peripheral route to attitude change is not based on extensive contemplation about the issue at hand, but the mode of evaluation relies on affective conclusions drawn from intuitive impulses and impressions (Chang et al. 2020; Cyr et al. 2018). ELM researchers theorize that changes of attitudes associated with the peripheral route of information processing are (more) temporary and less predictable (Petty and Cacioppo 1986; Petty et al. 1983), as they are less stable over time (Bhattacherjee and Sanford 2006).

Surprisingly, previous trust research has not yet addressed the potentially temporary and unpredictable perceptions of trust cues that are processed via the peripheral route over the course of time. Such perceptions seem to challenge the well-established assumptions in previous research that the effects of trust cues on trust are rather stable and predictable (McKnight et al. 1998, 2002b) or steadily increasing (Cabral and Hortaçsu 2010).

2.2 Star Ratings as Cognitive Trust Cues

Cognitive trust cues instigate a process of calculative reasoning and reputation systems are a prime example of such cues (Chen et al. 2015; Mishra et al. 1998). On peer-to-peer online platforms, users typically interact with transaction partners that they have never met or interacted with before (Teubner 2018). Thus, users cannot build a history of personal interaction or gain first-hand experience of others’ trustworthiness. Reputation systems help to overcome this gap by enabling access to another user’s past behaviors (Bolton et al. 2013; Mazzella et al. 2016; Mohan 2019; Möhlmann 2021). This track record, in turn, sets expectations and reduces uncertainty about future behaviors, for instance, regarding whether a product or service will be delivered as promised, or about an individual’s amicability, mindfulness, or integrity.

Based on the work by McKnight et al. (1998, p. 476), we associate star ratings with the central route of information processing as discussed in the ELM (Petty and Cacioppo 1986; Petty et al. 1983). In their model on the initial formation of trust, McKnight et al. (1998) theorize knowledge about reputation to be a cognitive process. Star ratings are arguably the most widely-used type of trust cue and are employed in some form by most consumer platforms (Dann et al. 2020; Hesse et al. 2020; Mohan 2019; Schoenmüller et al. 2018). Star rating scores evolve over time as they represent the aggregation of feedback from continuous transactions with ever-varying partners (Ba and Pavlou 2002; Dellarocas 2006; Rice 2012). To avoid the risk of collusion or retaliation, these systems commonly follow a simultaneous evaluation process, in which ratings are only revealed after both parties have submitted their evaluations (Fradkin et al. 2018). Consequently, a user’s average rating score serves as a quantified proxy of their trustworthiness based on their overall (past) behavior on the platform. Indeed, positive ratings are a driver for demand (Ert et al. 2016) and allow users to enforce higher prices (Gan and Wang 2017; Gibbs et al. 2017). Rice (2012) showed that, while the mere existence of a numerical rating system encourages participants to engage in the market at all, the specific information conveyed by the ratings facilitates transactions among them.

One well-established assumption made in prior research addressing reputation, which we associate with the central route of information processing as introduced in the ELM (Petty and Cacioppo 1986; Petty et al. 1983), is that trust is rather stable (McKnight et al. 1998), and steadily increasing over time (Cabral and Hortaçsu 2010). Rating systems are used to build and maintain trust in various contexts (Dellarocas 2003; Mohan 2019). We argue that in the context of peer-to-peer platforms, star ratings can be considered as persuasive messages of high personal relevance. In absence of strong distractions, deliberately processing a message’s content is likely and will lead to behavioral change—that is, trusting behavior (Petty and Cacioppo 1986) where the cue’s strength directly impacts persuasion outcomes (Kim and Benbasat 2009). Updating a star rating periodically (through additional transactions) improves it continuously in terms of reliability by reducing the potential impact of fraudulent, shill, or erroneous reviews (Rice 2012; Tadelis 2016). Numerical rating systems are hence likely to become more reliable and functional for increasing numbers of completed transactions. Thus, we expect that the influence of continuously updated star ratings will result in an increasing effect on trusting behavior over time.

H1:

The effect of star ratings on trusting behavior in peer-to-peer sharing transactions increases over time.

2.3 Profile Photos as Affective Trust Cues

Profile photos are one of the most common affective trust cues in online settings, and the human brain processes faces intuitively and subliminally (Kanwisher et al. 1997). Research identified the so-called fusiform face area as being “selectively involved in the perception of faces” (Kanwisher et al. 1997, p. 4302). This human tendency to process faces is genetically encoded (Anzellotti and Caramazza 2014). Even infants react to faces within the first minutes after birth (Goren et al. 1975)–the process is hence not socially or culturally learned. Also, detecting facial expressions happens unconsciously and fast (i.e., on the order of milliseconds) (Willis and Todorov 2006). Affective trust cues such as photos are hence processed without deliberate consideration. For this reason, we associate profile photos with the peripheral route of information processing (Petty and Cacioppo 1986; Petty et al. 1983). The effects associated with this route are considered to be less stable over time (Bhattacherjee and Sanford 2006).

Notably, trust-building is not solely a calculative process but also involves emotions (Komiak and Benbasat 2006). At the same time, human behavior can be emotional, spontaneous, and impulsive, rendering affective trust cues pivotal for trust formation. Profile photos in online environments showing human faces are hence bound to trigger emotion (Komiak and Benbasat 2006).

The underlying basic effect of photographs on trust can be explained through various theoretical frameworks. For instance, Social Presence Theory (Cyr et al. 2007; Gefen and Straub 2004; Hess et al. 2009; Lowry et al. 2010) suggests that the extent to which a person’s online presence resembles their real-world presence affects how others perceive and trust them. When a profile includes an actual face, it adds a human element to the online interaction, making the person seem more real and relatable. This perceived “social presence” can enhance trust because it feels like you are interacting with a genuine individual rather than an anonymous entity. Moreover, Social Identity Theory (Güth et al. 2008; Tanis and Postmes 2005) suggests that people tend to trust others who they perceive as part of their in-group or sharing similar characteristics. When a profile photo includes an actual face, it humanizes the individual and allows viewers to associate them with a real person. This can lead to a stronger sense of connection and trust, as the person appears to be more relatable and potentially part of the same social or cultural group. Also, the Mere Exposure Effect (Bornstein and D’Agostino 1992) suggests that people tend to develop a preference for things they are exposed to repeatedly. When you see someone’s actual face in their profile photo, you become more familiar with them over time, even in the online context. This increased familiarity can lead to a greater sense of trust, as you feel like you “know” the person better. Naturally, the notion of anonymity reduction may also play a role here. By disclosing a personal profile photo, users may provide hints regarding their sex, ethnicity, approximate age, and lifestyle–that is to say, their personal identity. Online environments usually come with a considerable degree of anonymity, which can lead to distrust due to the potential for deceit or misrepresentation. 
However, when a person includes their actual face in their profile photo, it reduces anonymity to some extent. This can signal a willingness to be more transparent and accountable for one’s actions, which can–in turn–foster trust (Cyr et al. 2009; Gefen and Straub 2003, 2004; Hassanein and Head 2007; Ou et al. 2014), especially in computer-mediated communication such as in electronic commerce or online social networks (Qiu and Benbasat 2010; Steinbrück et al. 2002). Last, a genuine profile photo, especially when it appears unaltered or not overly staged, can serve as a cue of authenticity. As people are likely to trust others who they perceive as being truthful and honest, when a profile photo shows a real face, it can signal that the person is not hiding behind a mask or using a fake identity and hence engender trust.

The trust-promoting effects of human images and profile photos have been confirmed in various settings and on various platforms (Cyr et al. 2009; Ert et al. 2016; Teubner 2022; Teubner et al. 2022). It is hence not surprising that most user-centered online services and platforms offer customizable profiles, and the majority of (if not all) users make use of this feature (Ert et al. 2016; Fagerstrøm et al. 2017; Hesse et al. 2020). In fact, many platform operators actively encourage their users to upload a profile photo when setting up an account. The ride sharing platform BlaBlaCar even provides a search option allowing users to filter rides based on the condition that the driver has uploaded a photo and claims that on average, users with a photo are contacted three times more often than those without a photo (BlaBlaCar 2022).

As the context of peer-to-peer sharing puts a particular focus on the perception of profile photos, we argue that the processing of these affective cues will lead to an effect on trusting behavior. We expect that profile photos will be processed as affective trust cues through the peripheral route. However, since profile photos convey no persuasive argument per se, they will trigger “relatively primitive affective states” (Petty and Cacioppo 1986) such as the perception of social presence. This state is associated with a positive effect on trusting behavior but–as we hypothesize here–the effect decreases over time. There are two main reasons for this. First, while some measures of attitude can be remarkably stable (e.g., toward a political party), trusting attitudes such as those considered here may be prone to decay (Bhattacherjee and Sanford 2006; Petty et al. 2009). Since–in contrast to star ratings–the informational value of profile photos does not change (and hence does not increase) over time, their impact can be expected to “wear off” simply due to habituation and the assumption of profile photos as a given (i.e., familiarity and desensitization). Second, while ratings represent a (presumably) objective measure of past behavior (i.e., a strong signaling device and hence a hard currency for trust building), the interpretation of photos (i.e., much softer cues) is more susceptible to counterarguments (Petty and Cacioppo 1986). For instance, over time, people are likely to have subpar experiences such as low quality or exploitative behavior. Because photos are weak signaling devices, such experiences are bound to occur regardless of the photo shown. While, in general, negative experiences are quite rare on most platforms (Zervas et al. 2015), the likelihood of exposure increases with the overall number of transactions. This will, over time, make users realize that there is no reliable correlation between photos and behavior. We hence expect that the positive trusting effect based on profile photos will decrease over time.

H2:

The effect of profile photos on trusting behavior in peer-to-peer sharing transactions decreases over time.

2.4 Experimental Studies

Despite the evident practical relevance of cognitive and affective trust cues, only a few studies have experimentally assessed their effects on trusting behavior (Bente et al. 2014a, b; Qiu et al. 2018). Furthermore, the literature review reveals certain systematic limitations of previous research. First, most of these studies capture either affective or cognitive cues, but rarely both, let alone their interplay. Second, previous studies only consider one side of the trust game without allowing for actual two-way interactions. Third, these studies comprise only one single period of transactions and neglect the dynamic context of most actual trust-building scenarios. Fourth, previous work usually draws on exogenous match-making between transaction partners, which is, of course, highly unrealistic for most peer-to-peer transaction scenarios. Table 1 provides an overview of the most relevant related studies.

3 Method

To test the outlined hypotheses, we conduct a controlled laboratory experiment. Behavioral experiments for investigating platform-related questions have experienced increasing popularity in various fields. Most importantly, the use of experiments enables causal inferences, augmenting the inferential power of correlative models (Friedman and Cassar 2004). In our experiment, participants engaged in a series of peer-to-peer transactions in a proprietary web interface reflecting typical features of “Airbnb-like” platforms.

3.1 Treatment Structure

The experiment employed a 2 (star ratings: yes/no) × 2 (profile photos: yes/no) full-factorial between-subjects design. Moreover, each participant took either the consumer or the provider role, and kept this role for the entire experiment. Further, to capture the dynamics of cognitive and affective trust cues over time, each experimental session included a total of 6 periods. To avoid end-game effects, some vagueness was introduced in that participants only knew that the experiment would have between 5 and 8 periods (Bolton et al. 2013; Rice 2012).

Illustrating this treatment design, Fig. 1 shows examples of how the user profiles appear in the four treatment conditions. Each session (i.e., cohort) included 12 participants, who were randomly allocated the roles of consumers and providers (6 each) within one and the same treatment condition. Hence, depending on the treatment condition, either all 12 participants in this cohort were able to see and provide star ratings, or none of the participants were. Similarly, either all 12 participants were able to see profile photos, or none of them were. In total, we conducted three sessions for each of the four treatment conditions, resulting in a total sample size of 144 participants (= 4 conditions × 3 sessions/condition × 12 participants/session). This sample size is sufficient to detect main treatment effect sizes of 0.20 with a power of 0.95 (see Appendix C).

Fig. 1 Examples for the display of user profiles in the four different treatment conditions. Note: The examples are from the provider perspective. The corresponding screens for the consumer perspective are shown in Appendix A. Profile photos are pixelated to preserve participants’ privacy.

Star Ratings—In the star ratings conditions, participants saw the other market side’s average rating scores (rounded to the half unit) along with the number of ratings received. In addition, each participant also saw their own average rating score. Participants evaluated each other on a scale from 1 to 5 stars after having completed the transaction. To avoid retaliation or tit-for-tat strategies (or the anticipation thereof), ratings were submitted simultaneously (i.e., without knowing the rating one receives from one’s transaction partner). This is the most common mechanism design on most contemporary peer-to-peer platforms. In contrast, in the conditions without star ratings, participants could neither see any other participants’ ratings nor did they rate each other after transactions.
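The rating display described above (average score rounded to the half unit, shown with the number of ratings received) can be illustrated with a minimal Python sketch. The function name, the round-half-up tie-breaking, and the `None` placeholder for users without ratings are assumptions made for illustration; the text does not specify them.

```python
import math


def displayed_rating(ratings):
    """Return (average rounded to the nearest half star, number of ratings).

    `ratings` is a list of integer scores from 1 to 5.
    Assumption: a user without any ratings is shown as (None, 0).
    """
    if not ratings:
        return None, 0
    mean = sum(ratings) / len(ratings)
    # Round to the nearest 0.5; ties are rounded up (an assumption).
    rounded = math.floor(mean * 2 + 0.5) / 2
    return rounded, len(ratings)


print(displayed_rating([5, 4, 4]))
```

Under these assumptions, a provider with ratings of 5, 4, and 4 stars would be displayed with 4.5 stars based on 3 ratings.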

Profile Photos—In the profile photos conditions, participants’ profiles included a photo as provided by the participants themselves. A few days prior to the experiment, we reached out to the signed-in participants via email, notifying them that in the experiment, they would engage with others through a platform-like interface. In this email, participants in the profile photo conditions were informed that they may represent themselves to other participants by means of a profile photo, which they were able to provide via email before the experiment. They were advised that the photo should ideally have a height-width ratio of roughly 4:3 with sufficient resolution. No other instructions were provided with regard to the photo’s content or style. While the provision of a photo was voluntary, all 72 participants in the photo conditions in fact provided a photo. Within these photos, the participant’s face was clearly visible in 60 cases, partly visible in 5 cases, and not visible in 7 cases. In the conditions without profile photos, participants were not able to provide a photo but were represented by a uniform default image (see Fig. 1; right-hand side).

4 Experimental Task

To operationalize and evaluate trusting behavior between peers, we build on Berg et al. (1995)’s trust game. The trust game has become one of the most commonly applied experimental tasks for modeling a large variety of real-world transactions (Riegelsberger et al. 2005). It has been applied to study a variety of artifacts such as avatars (Riedl et al. 2014), ratings (Bolton et al. 2004; Rice 2012), photos (Ewing et al. 2015), and many more.

In the original trust game, two subjects (the trustor and the trustee) engage in a two-stage game. In the first stage, the trustor decides on how much of an initial endowment (e.g., $10) to transfer to the trustee. The transferred amount y is multiplied by a factor greater than one (e.g., by 3). In the second stage, the trustee then decides on how much of the received (multiplied) amount to return to the trustor (z). These transferred amounts are generally considered as manifestations of trusting behavior (y) and trustworthiness (z). Building on the transactions on actual peer-to-peer platforms, we refer to the trust game’s players as providers (i.e., the trustors) and consumers (i.e., the trustees). The basic interaction of the trust game is thus a simplified analogy to the interactions on peer-to-peer platforms, where providers entrust a private resource (e.g., their apartment) to consumers, who will use and return it either in a trustworthy (e.g., clean and intact) or in an untrustworthy (e.g., dirty and/or marred) manner. Further, to model peer-to-peer transactions, we extend the original experiment in two important ways:

1. A matching phase, in which participants are able to form dyads themselves, and

2. A booking fee, which creates some degree of exposure also for consumers when entering a transaction.
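For reference, the payoffs of the original two-stage trust game (before the two extensions) can be written compactly. The symbols E (the trustor's initial endowment) and m (the multiplication factor) are introduced here for illustration only. Any endowment held by the trustee is left out because the description above does not specify one.

```latex
\begin{aligned}
\pi_{\text{trustor}} &= E - y + z, \\
\pi_{\text{trustee}} &= m\,y - z, \\
&\text{with } 0 \le y \le E, \quad 0 \le z \le m\,y, \quad m > 1 .
\end{aligned}
```

With the example values E = 10 and m = 3, full trust (y = 10) followed by an equal split (z = 15) leaves both players with 15, whereas no transfer (y = 0) leaves the trustor with 10 and the trustee with 0.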

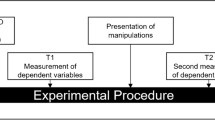

These two extensions refer to the actual booking process on Airbnb-like platforms, where selecting and booking a resource in advance (only based on the available information revealed through the platform) exposes consumers to the risk of paying for a resource that could potentially fail to meet their expectations. Taken together, the experimental task comprised three phases: (I) matching, (II) transaction, and (III) rating, as summarized in Fig. 2. These three phases resemble the basic mechanics of sharing platforms on which consumers first request a resource from a provider and wait for confirmation. Second, after the provider has accepted the request, consumer and provider enter the transaction, where the provider grants access to their resource in exchange for a payment. Third, provider and consumer mutually rate each other based on their transaction experience.

(I) Matching Phase—To capture the notion that peer-to-peer transactions are commonly initiated by consumers and confirmed by providers, we include a matching phase in which participants form dyads themselves (Bolton et al. 2004). Note that any consumer-provider dyad usually only occurs very few times within peer-to-peer sharing (or even only once; Teubner 2018). To account for this fact, consumer requests are restricted to providers with whom they had not engaged in the preceding two periods. Fig. 3 shows an example of this request mechanism.

The matching phase works as follows: Using the online interface, consumers could send one request to a provider at a time (i.e., asking to enter into a transaction with that provider). If the provider declined the request, the consumer was able to submit a request to the remaining providers. Importantly, in each period, consumers could also abstain from sending requests at all and instead click “skip period”. Providers, on the other side, could receive multiple requests from different consumers, but only accept one request per period. Once a request was sent, the provider saw the requesting consumer’s profile along with buttons to either accept or decline the request (see Fig. 1). If the provider did not respond to a request within 30 s, the request was automatically rejected. Once a provider accepted a request, all pending requests from other consumers were automatically rejected. Similar to consumers, providers were able to skip the current period and decline (or wait out) all incoming requests.

The matching phase ended when (1) all requests had either been accepted or declined, and (2) no further requests were possible (e.g., because consumers/providers without matches decided to skip the period). Hence, the matching phase represented a two-sided “trade fair,” mediated by the online interface in which participants negotiated the formation of matches. Participants’ photos and/or star ratings (i.e., the main treatment variables) served as cues as to which provider to contact or which consumer’s request to accept or reject.
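The request rules of the matching phase can be sketched in a few lines of Python. This is a simplified illustration, not the software used in the experiment: the function names, the data layout, and the reduction of timing (e.g., the 30 s timeout) to a simple "not accepted" outcome are all assumptions.

```python
def eligible_providers(consumer, providers, history, period, lockout=2):
    """Providers the consumer may contact in `period`.

    `history` holds (period, consumer, provider) tuples of past transactions.
    Providers matched with this consumer in the preceding `lockout`
    periods are excluded.
    """
    recent = {
        p for (t, c, p) in history
        if c == consumer and period - lockout <= t < period
    }
    return [p for p in providers if p not in recent]


def resolve_requests(pending, accepted):
    """Resolve open requests for one round of the matching phase.

    `pending` is a list of (consumer, provider) requests.
    `accepted` maps a provider to the single consumer they accept;
    providers that decline or let a request time out are absent.
    Returns (matches, rejected): accepting one request automatically
    rejects all other pending requests to that provider.
    """
    matches, rejected = {}, []
    for consumer, provider in pending:
        if accepted.get(provider) == consumer and provider not in matches:
            matches[provider] = consumer
        else:
            rejected.append((consumer, provider))
    return matches, rejected


history = [(1, "c1", "p1"), (2, "c1", "p2"), (3, "c1", "p3")]
print(eligible_providers("c1", ["p1", "p2", "p3", "p4"], history, period=4))
print(resolve_requests([("c1", "p1"), ("c2", "p1"), ("c3", "p2")], {"p1": "c2"}))
```

In this sketch, a rejected consumer would call `eligible_providers` again (minus the provider who just declined) to send the next request, or skip the period.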

(II) Transaction Phase—Once a provider confirmed a consumer’s request, the corresponding consumer-provider dyad entered the transaction phase. This phase includes three steps. In the first step, the consumer pays a booking fee of 5 monetary units (MU) to the provider. This reflects the fact that the consumer faces some exposure in that the provider may not “deliver,” for instance, by providing an apartment in bad condition. In the experiment, this may occur when the provider decides not to transfer any MUs, which would leave the consumer with a loss compared to not engaging in a transaction at all. In the second step, the provider decides on how much of their endowment to transfer to the consumer (y), where 0 ≤ y ≤ 10 MU. Hence, the provider’s endowment of 10 MU represents the private asset (e.g., apartment) that they bring into the transaction. The transferred amount y (trusting behavior) is tripled and credited to the consumer. Contextualized to the setting of peer-to-peer platforms, this transfer captures the service delivery from the provider to the consumer. In the third step, the consumer decides on how much to return to the provider, where this value z is an ex-post proxy of the consumer’s trustworthiness (0 ≤ z ≤ 3y MU). It reflects the consumer’s behavior or the way the provider’s asset is treated (e.g., tidy or devastated apartment). For any transfer y > 0, the provider hence faces exposure. The second and third steps of the transaction phase are identical to the original trust game (Berg et al. 1995). A summary screen completes the transaction phase.
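The three steps of the transaction phase imply simple payoff arithmetic, sketched below in Python. The function reports each side's net change relative to the start of the transaction rather than final payoffs, because the text does not state a consumer endowment; the function and constant names are hypothetical.

```python
BOOKING_FEE = 5   # MU paid by the consumer to the provider (step 1)
ENDOWMENT = 10    # MU the provider can transfer (step 2)
MULTIPLIER = 3    # the transfer y is tripled for the consumer


def transaction_net_changes(y, z):
    """Return (provider_net, consumer_net) in MU for one transaction.

    y: amount the provider transfers (trusting behavior), 0 <= y <= 10.
    z: amount the consumer returns (trustworthiness), 0 <= z <= 3y.
    """
    if not 0 <= y <= ENDOWMENT:
        raise ValueError("y must lie between 0 and the endowment")
    if not 0 <= z <= MULTIPLIER * y:
        raise ValueError("z must lie between 0 and 3y")
    provider_net = BOOKING_FEE - y + z
    consumer_net = -BOOKING_FEE + MULTIPLIER * y - z
    return provider_net, consumer_net


print(transaction_net_changes(0, 0))    # provider does not "deliver"
print(transaction_net_changes(10, 15))  # full transfer, half returned
print(transaction_net_changes(10, 0))   # full transfer, nothing returned
```

The first call shows the consumer's exposure (a loss of the booking fee when y = 0), and the last call shows the provider's exposure (a net loss whenever the consumer returns less than y minus the fee).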

(III) Rating Phase—After completing the transaction phase, each consumer-provider dyad enters a rating phase in which they evaluate each other using a star rating score from 1 to 5 stars. Naturally, this phase exists only in the star rating treatment conditions.

4.1 Overview of Variables

Table 2 provides an overview of the independent variables (treatment structure) and dependent variables (outcome measures).

4.2 Procedure, Sample, and Randomization Check

The experiment was conducted at the experimental lab of a large European university. We recruited 144 participants (56 female, 88 male, average age = 22.2 years, age range = 18 to 36 years) from a student subject pool using the hroot system (Bock et al. 2014). Informed consent was obtained from all participants, explicitly including permission to use the provided profile photos for scientific purposes. The experiment was implemented through a proprietary online environment based on standard web development languages (HTML, PHP, CSS). Written instructions were handed out to all participants and were read aloud at the beginning of each session. Participants answered 6 quiz questions to ensure comprehension. All instruction materials are provided in Appendix A. Sessions took about 50 min on average. All monetary units earned within the experiment were converted into EUR (€) at a rate of 4 MU = €1.00. At the end of each session, 3 out of the 6 periods were selected for each subject at random and paid out in cash (average payoff: €11.17).
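The payout rule (3 of 6 periods drawn at random per subject, converted at 4 MU = €1.00) can be illustrated with a short Python sketch. The function name and the example earnings are hypothetical, and the sketch assumes there are no additional payments such as a show-up fee, which the text does not mention.

```python
import random

MU_PER_EUR = 4  # conversion rate: 4 MU = EUR 1.00


def session_payout_eur(period_earnings_mu, n_paid=3, rng=None):
    """Draw `n_paid` periods without replacement and convert their sum to EUR.

    `period_earnings_mu` lists one subject's earnings (in MU) per period.
    Returns (payout in EUR, sorted indices of the paid periods).
    """
    if rng is None:
        rng = random.Random()
    paid = rng.sample(range(len(period_earnings_mu)), n_paid)
    total_mu = sum(period_earnings_mu[i] for i in paid)
    return total_mu / MU_PER_EUR, sorted(paid)


# Hypothetical earnings of one subject over the 6 periods (in MU).
earnings = [15, 10, 20, 5, 15, 12]
payout, paid_periods = session_payout_eur(earnings, rng=random.Random(42))
print(f"Paid periods (0-based): {paid_periods}, payout: EUR {payout:.2f}")
```

Paying out only a random subset of periods keeps every period equally relevant for participants, since they cannot know in advance which decisions will count.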

Table 3 provides sample demographics for each treatment. A set of regression analyses confirms that none of these variables (age, gender, and experience with peer-to-peer platforms–either as a host or as a guest) exhibits significant differences across treatments (Appendix D).

5 Results

Our experimental design yields a multi-level data structure, where periods are nested within participants (6 periods each) and participants are nested within sessions. As we focus on trusting behavior here, only 6 of the 12 participants per session are relevant (i.e., the hosts). This yields a theoretical maximum of 12 sessions × 6 participants/session × 6 periods/participant × 1 observation/period = 432 observations. As not all participants actually ended up in a transaction in all periods, the de facto number of observations is somewhat lower (n = 394). To analyze the data, we hence use mixed effects regression analysis (lmer in R), estimating fixed effects for our main treatment variables (i.e., photo, rating) as well as period, and random intercepts for subjects and sessions (where subjects are nested within sessions).
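For orientation, the basic model described above can be written out as follows. The notation is illustrative and not taken from the original analysis: y denotes the amount transferred by provider i in session s and period t, the treatment dummies are indexed by session on the assumption that treatments were assigned at the session level, and demographic controls are omitted for brevity.

```latex
y_{ist} = \beta_0
        + \beta_1\,\mathrm{Photo}_{s}
        + \beta_2\,\mathrm{Rating}_{s}
        + \beta_3\,\mathrm{Period}_{t}
        + u_{s} + v_{i(s)} + \varepsilon_{ist},
\qquad
u_{s} \sim \mathcal{N}(0, \sigma_u^2),\quad
v_{i(s)} \sim \mathcal{N}(0, \sigma_v^2),\quad
\varepsilon_{ist} \sim \mathcal{N}(0, \sigma_\varepsilon^2)
```

Here u and v are the random intercepts for sessions and for subjects nested within sessions. In lme4 syntax, a model of this form would typically be specified along the lines of `transfer ~ photo + rating + period + (1 | session/subject)`, where the column names are hypothetical.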

Figures 4 and 5 illustrate the overall treatment effects (Fig. 4) as well as how they develop over time (Fig. 5). As suggested there, both star ratings and profile photos have positive effects on trusting behavior, while there does not appear to be any strong interaction between them. In all treatment conditions, we observe increases in trusting behavior over time; the profile photo conditions, however, exhibit a markedly different (i.e., curvilinear) pattern in which trusting behavior decreases after a distinct initial increase.

To corroborate this visual assessment statistically, we consider a set of mixed effects panel regressions (Table 4). In the first two models (I and II), we model linear (and independent) period effects and control for demographic variables (i.e., age, gender, experience). This shows significant general treatment effects both of star ratings (β = 1.338, p = 0.011) and profile photos (β = 1.529, p = 0.006), as well as a positive overall period effect (β = 0.190, p < 0.001). Beyond that, Model II shows that the period effect is predominantly driven by the star rating conditions, which appear to “build up” their effect over time (supporting H1: β = 0.226, p = 0.012) but have no significant effect in the first period yet (β = 0.827, p = 0.158). Conversely, profile photos have an immediate effect right from the start (β = 1.520, p = 0.011), which then, however, is time-invariant. Hence, H2 is not supported (β = 0.002, p = 0.984)—at least when assuming a linear trend. Additionally (not shown in the table here), we did not find any significant interaction between the main treatment variables (photos and ratings; β = −0.956, p = 0.375).

As suggested by Fig. 5, the assumption of linearity, however, does not hold as there appears to exist a curvilinear progression when profile photos are present. In Models III a-d, we hence introduce quadratic period effects. To avoid uninterpretable triple interactions (Photos × Ratings × (Period + Period²)), we estimate a separate model for each treatment condition. These analyses show that both conditions with profile photos exhibit a curvilinear structure with positive and significant linear estimates (β = 1.739, p < 0.001; resp. β = 0.850, p < 0.001) and negative and significant second-order estimates (β = −0.322, p < 0.001; resp. β = −0.19, p < 0.01). When only star ratings are present, there is a “simple” linear and positive time-trend (β = 0.582, p < 0.001). In the setting with neither profile photos nor star ratings, no significant period effect occurs, albeit the direction is slightly positive (β = 0.411, p > 0.05).
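A positive linear and a negative quadratic period estimate imply a turning point at −β₁/(2β₂). The following sketch computes it for the two reported pairs of estimates. The function name `turning_point` is hypothetical, and the result is expressed in units of the period variable as coded in the regression, which is not stated here, so mapping it to a specific experimental period remains an assumption.

```python
def turning_point(beta_linear, beta_quadratic):
    """Vertex of b1 * t + b2 * t**2, i.e. where the fitted curve peaks if b2 < 0."""
    if beta_quadratic == 0:
        raise ValueError("a turning point requires a non-zero quadratic term")
    return -beta_linear / (2 * beta_quadratic)


# Estimates as reported for the two profile photo conditions (Models III).
reported_estimates = {
    "photo condition, first reported estimates": (1.739, -0.322),
    "photo condition, second reported estimates": (0.850, -0.19),
}

for label, (b1, b2) in reported_estimates.items():
    print(f"{label}: turning point at period value {turning_point(b1, b2):.2f}")
# Prints approximately 2.70 and 2.24, respectively.
```

Both turning points fall well inside the range of six periods, which is what makes the inverted u-shape observable within the experiment.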

None of the control variables (gender, age, experience) exerts significant effects on trusting behavior in any of the models.

5.1 Effect Decomposition of Star Ratings

In line with previous research, we have established the link between the presence of a star rating system and trusting behavior and seen an intricate dynamic pattern. Note, however, that there may be different factors at play since star ratings play a multi-layered role. First, the presence of a star rating allows for an improved assessment of one’s counterpart (i.e., the consumer in this case) as some historic information about their behavior is displayed. Second, it may allow for higher degrees of provider’s trusting behavior since malicious exploitation of this trust could be penalized by means of the rating system (ex post). Note that there exists a third aspect. Since the rating system works in a mutual way, also the provider will have to take into account that he or she will be rated after the transaction by the consumer. The anticipation thereof may, additionally, increase the exhibited trusting behavior (ex ante).

Hence, it is important to delineate these effect components in order to assess which fractions of the observed trusting behavior are actually due to the displayed rating scores (i.e., their net effect). As a next step, we hence examine in more detail how trusting behavior evolves over the course of the six periods. Note that providers exhibit substantial trusting behavior even in the treatment condition in which no trust cues whatsoever are displayed (“baseline” condition). In fact, in this condition, providers transfer about half of their endowment (51.3%) to consumers on average. Moreover, there exists a slightly increasing trend. We hence consider these the General Trust Baseline and the General Time Effect (see Table 5 and Fig. 6). Also, note that in the very first period, participants in the star rating conditions were not able to draw on specific rating scores because no participant had had the chance to collect ratings at that point. Nevertheless, we still observe higher first-period trusting behavior as compared to participants in the non-star-rating conditions. This shadow-of-the-future effect indicates that the mere existence of the star rating system (even without the display of actual rating scores) facilitates trusting behavior due to the anticipation of rating and being rated as outlined above. Making use of this temporal distinction, we can further subtract the shadow-of-the-future effect in all subsequent periods (t ≥ 2), yielding a residual (red lines in Fig. 6). This residual can be considered as the Rating Score Net Effect. We observe that the net effect increases only slowly within the first four periods and then jumps to a level of about 0.15, comparable in size to the shadow-of-the-future effect. This observation suggests that the impact of time (and/or the number of underlying ratings) on the net effect of an aggregated star rating score is more complex than a simple linear trend, potentially involving discontinuities.
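The logic of this decomposition can be sketched in a few lines. This is a simplified reading of the procedure described above, not the computation underlying Table 5: the function name `decompose_rating_effect` is hypothetical, the baseline is assumed to be the first-period level in the conditions without star ratings, and the numbers in the usage example are made up for illustration.

```python
def decompose_rating_effect(no_rating, rating):
    """Split per-period mean transfer shares into effect components.

    no_rating, rating: mean transfer shares (0..1) per period, period 1 first,
    for the conditions without and with star ratings.
    """
    if len(no_rating) != len(rating) or not no_rating:
        raise ValueError("need two non-empty series of equal length")
    baseline = no_rating[0]            # assumption: period-1 level without ratings
    shadow = rating[0] - no_rating[0]  # period-1 gap, before any scores exist
    rows = []
    for t, (nr, r) in enumerate(zip(no_rating, rating), start=1):
        rows.append({
            "period": t,
            "time_effect": nr - baseline,
            "shadow_of_future": shadow,
            # Residual gap once the shadow-of-the-future effect is subtracted.
            "net_rating_effect": 0.0 if t == 1 else (r - nr) - shadow,
        })
    return rows


# Made-up shares for illustration only, not data from the experiment.
for row in decompose_rating_effect([0.50, 0.52, 0.53], [0.60, 0.65, 0.70]):
    print(row)
```

The key design choice is that the first period identifies the shadow-of-the-future effect, because no rating scores can be displayed before any transaction has taken place.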

5.2 Complementary Analyses

Star Ratings and Trusting Behavior (Appendix B1): Overall, our results’ rating distributions are consistent with what is typically observed on platforms. Moreover, the analysis reveals that the ratings providers and consumers receive depend on their respective behavior (i.e., the amount they transfer or transfer back). Importantly, also consumers’ chances of being accepted as well as providers’ trusting behavior depend on the consumer’s aggregated star rating score. Hence, behavior is reflected in star ratings and, vice versa, star ratings affect behavior.

Visual Photo Properties and Trusting Behavior (Appendix B2): Similar to the analysis of specific star rating scores, we consider how specific visual properties of the profile photos, such as face visibility, attractiveness, and visual trustworthiness, affected trusting behavior. However, we did not find any evidence for significant effects with regard to these attributes.

Value Decomposition (Appendix B3): Combining the findings of trusting behavior (providers’ behavior) and ex post trustworthiness (consumers’ behavior), we can decompose the overall value providers receive along these (factorial) partial effects. This analysis grants further insight into how specifically the trust cues “generate” value. For instance, we find that while overall, trusting behavior is similar when either one or the other cue type is present, the presence of star ratings yields higher trustworthiness. This treatment difference can hence be attributed to the ratings’ effect on consumers rather than provider behavior.

Matches and Requests (Appendix B4): Across both treatments and periods, we observe no significant differences with regard to the number of transactions. The matching rate exceeds 90% throughout the experiment, so that basically every participant is matched in almost every period. However, both star ratings and profile photos have positive effects on the share of participants who sent at least one request. As there are no significant effects on the fraction of participants who received at least one request, the additional requests cannot be distributed evenly but concentrate on those who already receive requests from other participants. Consequently, this does not result in differences in the number of matches. Period affected neither the number of matches nor request behavior.

6 Discussion

The number of peer-to-peer sharing businesses is growing and already shapes a substantial part of the e-commerce landscape (Gawer 2014; Mittendorf et al. 2019; Möhlmann 2021). At the same time, creating trust among users is of the utmost importance for these platforms, particularly for new users (Hesse et al. 2022).

6.1 Cognitive Trust Cues Over Time: Effect of Star Ratings

In the very first period of our experiment, participants in the star rating conditions were not yet able to draw on any insights communicated by rating scores (as they were still in the process of building their reputation capital). Still, in these conditions, we observe more pronounced trusting behavior (i.e., higher transfers) as compared to the non-star-rating conditions. This finding reflects previous research such as by Rice (2012), who distinguishes between the trust-building effect of the mere existence of a rating system and that of specific scores. Our findings indicate that the existence of a rating system per se already affects trusting behavior. We offer a potential explanation for this observation based on participants’ anticipation of being rated: the shadow-of-the-future effect. In a sense, the prospect of leaving a rating and being rated seems to represent a mutually impending threat, causing participants to exhibit trusting as well as trustworthy behavior. Next, the effect of star ratings on trusting behavior becomes stronger over time. The fact that star ratings seem to represent a reliable cue, and that their effect increases steadily with the number of underlying ratings, is consistent with previous research (Burtch et al. 2014; Cabral and Hortaçsu 2010).

6.2 Affective Trust Cues Over Time: Effect of Profile Photos

Interestingly, we find that the effectiveness of profile photos for engendering trust follows an inverted u-shape over time. Profile photos start out functioning as a relevant trust cue, and this effectiveness then increases even further. However, their trust-promoting capability collapses back to approximately its original level later on. The fact that this pattern can be observed in both photo conditions (i.e., with and without star ratings) is not only an indicator for the reliability of this result but also highlights the importance of viewing it from a dynamic (rather than from a static) perspective. We suggest that the eventual decrease of trusting behavior is driven by the drop in returns, which occurs in the middle of the experiment at the peak of the trusting behavior curve (see Fig. 29, Appendix B1). This drop precedes the downward slope in the inverted u-shape curve and can be interpreted from two perspectives: First, it implies emerging exploitation of providers’ trusting behavior by consumers. This exploitation can be interpreted as a counterargument that burdens the positive effect of the affective trust cue. Second, Petty and Cacioppo (1986) describe an “elaboration continuum,” which states that the mode of information evaluation is not subject to a strictly binary classification but rather a continuous scale. As such, the mode of processing the affective cue may shift across transactions.

Conceivably, overall trusting behavior may be subject to two partial effects: (1) the accumulation of experience or confidence within the transactional environment, and (2) the demonstrated effectiveness of cues. Initially, participants have limited or no experience/confidence within the transactional environment, including familiarity with the cues or the overall experimental setup. This initial lack of experience may lead them to adopt a rather cautious approach, resulting in limited trusting behavior, reflected in low transfers. Providers who are unsure whether the trust they put in their respective transaction partner will ultimately be rewarded or exploited may therefore behave rather cautiously in their first transactions. Simultaneously, participants may initially have rather high expectations concerning the cues’ effectiveness or their informational value. These expectations should be high at first, as providers see potential transaction partners showing their colors, that is, revealing their identity and thus personally vouching for their trustworthiness. However, over time, these expectations may undergo certain transformations. As participants engage in multiple transactions over time, they increasingly realize that profile photos may not live up to those initial (high) expectations. For instance, hosts are likely to–eventually–experience disappointing results, such as by receiving low or zero returns. The more often such exploitative behavior is experienced, the lower the expectation towards the photo cue should become.

It can be argued that, for profile photos to function as a trust-building device, users need to (1) be experienced with the decision environment in which they encounter the photos and (2) have faith in the photos’ effectiveness. Hence, trusting behavior emerges as the interaction of both (see Fig. 7). Given that one factor (experience) increases over time and the other factor (faith in effectiveness) decreases (e.g., towards some level close to zero), the result is a curvilinear progression of trusting behavior (inverted u-shape).

Of course, this is merely a speculative explanation at this point, but it offers a rationale for the observed behavior. Future research will have to examine the particular levels and courses of experience and faith in effectiveness as well as their interaction and effects on trusting behavior.
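The multiplicative intuition behind this explanation can be illustrated with a toy calculation. It is purely illustrative and not estimated from the data: the function name `toy_trust_curve` is hypothetical, and the linear functional forms for experience and faith in effectiveness are assumptions chosen only for simplicity.

```python
def toy_trust_curve(periods=6):
    """Product of a rising and a falling factor over the given number of periods."""
    values = []
    for t in range(1, periods + 1):
        experience = t / periods       # assumed to rise linearly to 1
        faith = 1 - (t - 1) / periods  # assumed to fall linearly towards zero
        values.append(experience * faith)
    return values


for period, value in enumerate(toy_trust_curve(), start=1):
    # A simple text plot: the bar length follows the product of both factors.
    print(f"period {period}: {'#' * round(value * 30)}")
```

Under these assumed forms, the product rises in the early periods, peaks in the middle, and declines afterwards, which mirrors the qualitative inverted u-shape described above without making any claim about its actual magnitude.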

Overall, the finding of the inverted u-shape suggests that profile photos convey varying effects on trust, depending on the specific phase of transactions. Thus, our results extend previous research, which has often abstracted from such potential time-dependencies of interpersonal trust by taking a snapshot perspective rather than presenting sound empirical findings on how trusting behavior may change over time (Bapna et al. 2017; Gefen 2000; Gefen et al. 2003; Pavlou and Gefen 2004).

6.3 Dynamic Complementarity of Cognitive and Affective Trust Cues

To some extent, our findings reveal that cognitive and affective trust cues complement each other over time. In contrast to star ratings, profile photos allow a “kick-starting” of trust in early phases in which star ratings are less accurate and reliable, helping to overcome this cue’s inherent “cold-start problem” (Hesse et al. 2022; Wessel et al. 2017). However, the presence of both trust cues leads to higher trusting behavior than when only one is available. Interestingly, the cues do not significantly interact and have an additive effect. This can be interpreted as support for the assumption that the cues are processed through different mental paths. In fact, Petty and Cacioppo (1986) already pictured this additivity when combining centrally and peripherally processed information for one-time exposure–a presumption that seems to hold and extend to exposure throughout multiple periods when applied in the context of trust behavior across repeated rounds of interaction.

6.4 Theoretical Contributions

Our study makes several contributions to trust research in the context of online sharing platforms (Gawer 2014; Lucas et al. 2021; Möhlmann 2021). Previous research widely agrees that the effects of trust cues on trusting behavior are relatively stable across different phases of their “lifecycle” as their effects are time-invariant. To this end, McKnight and colleagues have theorized that certain trust cues may indicate stability through structural assurances and situational normality (McKnight et al. 1998, 2002a). Only recently, the issue of longitudinal examination of trust cues has begun to receive increased attention (van der Werff and Buckley 2017). Yet, previous research does not sufficiently capture how the trust-building capacity of cognitive and affective trust cues may be subject to dynamic changes across multiple periods and/or transactions. We extend previous research addressing cognitive and affective trust cues by taking a “dynamic” perspective. We do so by drawing on assumptions about the central and peripheral route of information processing as introduced in the ELM (Petty and Cacioppo 1986; Petty et al. 1983). In line with our theoretical reasoning, assumptions about stable or increasing effects of trust cues apply to star ratings (cognitive trust cues), associated with the central route of information processing but not to profile photos (affective trust cues), associated with the peripheral route. Rather than assessing their trust-building potential in isolation (Komiak and Benbasat 2006; Stewart and Gosain 2006), we analyze the combination and interplay of two specific types of cues. Thereby, we follow the calls for more research on “the roles of [information] repetition and [information] variation” and that “researchers and practitioners would benefit from a better understanding of the degree to which the attitudes created or changed by their efforts persist over time, resist change, or predict behavior” (Schumann et al. 2012, p. 62). To investigate trust-building through the respective trust cues as a dynamic process, we conducted an experimental study with multiple transactions. Showing that cognitive and affective trust cues exhibit time-dynamic complementarity, our findings indicate that previous research may have underestimated the role of affective trust cues so far as they play an important role in complementing cognitive cues–in particular in the earlier stages of the usage process.

6.5 Methodological Contributions

Our study offers a distinct methodological contribution. Specifically, we extend the trust game (Berg et al. 1995) to the context of online sharing platforms, by providing a controlled experimental setting in which the emergence of trust behavior can be investigated over the course of multiple periods. Complementary to the existing approaches drawing on surveys (Dann et al. 2020; Ert et al. 2016; Teubner et al. 2022), experiments (Teubner 2022; Teubner and Camacho 2023), or field data (Edelman et al. 2017; Fradkin et al. 2018), this experimental setup provides a proxy for understanding user behavior on peer-to-peer sharing platforms, particularly when considering how trusting behavior evolves dynamically over time. Our experimental design complements previous research by allowing for a more natural investigation of transactional behavior. In contrast to prior studies, we use a “natural” endogenous process of matchmaking with requests and responses, similar to what is observed on many (if not most) actual peer-to-peer online platforms.

6.6 Managerial Implications

Our findings have important implications for consumers, providers, and managers of online sharing platforms. Specifically, they show that these stakeholders should be aware of the different phases and how they may affect trusting behavior (Lucas et al. 2021; Möhlmann 2021). On the one hand, platform managers should actively and early on encourage consumers and providers to upload profile photos as a means to kick-start the formation of trust–particularly during the initial and early stages of platform evolution. On the other hand, it is important for platform managers to understand that the beneficial effect of profile photos decays over time. Hence, a rating-based system should be used and users should be prompted to make active use of it. Our findings also emphasize the dual role of human information processing via central and peripheral routes, both of which should be reflected in platform design (Cyr et al. 2018). While we focused on a scenario with an open, market-based request-and-response process (endogenous matching) and highly transactional exchanges, there is reason to believe that our results may provide insights for a broader range of online platforms. While on platforms such as Airbnb, most users upload profile photos and evaluate each other after most transactions, there exist other platforms such as Craigslist or Gumtree (some of the most popular peer-to-peer platforms in the US, the UK, and Australia) that do not enable and/or encourage their users to do so (Hesse et al. 2020). Our findings suggest that such platforms should reconsider their practices. Furthermore, even on some platforms that allow users to upload personal photos, this option is far from being used by everyone (Hesse et al. 2020). Uber seems to have even experimented with “forcing” users to leave a rating, for instance, by requiring them to provide feedback about a driver’s performance before allowing them to engage in another transaction.

6.7 Limitations and Future Work

Like any research, this study exhibits several limitations, some of which, however, provide viable starting points for future work.

Behavior as a Proxy of Trust: The operationalization of trusting behavior as the amount providers are willing to transfer represents a limitation–as are any behavioral proxies for trust (such as the trust game). While this approach certainly captures one aspect of trust, it is an incomplete reflection as behavior is a multifaceted concept influenced not only by trust, but also by other factors such as risk aversion, prior experience, or necessity.

Congruency of Period and Ratings: Since virtually all participants engaged in a transaction in almost every period (the overall fraction of realized transactions is 91% and varies only negligibly between treatments), some caution is required concerning the process of trust-building, which may be rooted in time, in the number of ratings, or in both. While there is some rationale for time- or period-contingent trusting behavior (e.g., gaining experience and hence confidence in the processes and other users overall), the underlying number of star ratings also represents a very plausible explanation for trust (i.e., cue accuracy and reliability). Artificially preventing participants from conducting a transaction each period (e.g., by limiting supply or demand) could help to disentangle these factors.

External Validity: While our study is based on actual and incentivized user behavior and hence provides valuable insights into the formation of trust, it is still conducted within an artificial laboratory environment and without framing to a particular application context. In contrast to actual real-world transactions, there occurs no physical interaction down the line, such as, for instance, a stay in someone’s apartment, renting their car, or sharing a ride. The interpretation of our findings hence requires some caution with regard to external validity, and thus, the transferability to actual transactions for platforms out in the wild.

Dynamic Effects of Other Trust Cues: Our study provides a sound understanding of the effects of star ratings and profile photos, common examples of cognitive and affective trust cues, over time. It is important to note that we addressed the most prototypical trust cues capturing cognitive and affective characteristics, while other trust cues may in theory comprise elements of both. Thus, future research should consider investigating the effects of other trust cues (e.g., labels, badges, certificates, text elements, videos). From the perspective of an online platform provider, it is essential to leverage a portfolio of trust cues, which add up to an overall trust-enhancing effect that is effective over the whole platform evolution.

6.8 Concluding Note

Both cognitive (e.g., star ratings) and affective (e.g., profile photos) trust cues represent effective means for trust-building. While we find no evidence for an interaction of these cues, they complement each other over time. Our findings inform both platform operators and users attempting to support and sustain trust in such environments. Furthermore, our experimental design may serve as a basis for scholars seeking to further investigate trusting behavior within the emerging platform economy landscape.

Notes

Pioneered by eBay in the 1990s, reputation systems are primary trust formation tools in digital environments (e.g., Gefen and Pavlou 2012; Rice 2012) and have been widely adopted on peer-to-peer sharing platforms (Hesse et al. 2020). On peer-to-peer platforms, users can commonly only submit a rating and/or a review after a completed transaction.

Complementary analysis showed that the degree of face visibility within the profile photos did not yield significant differences in behavior (see Appendix B2).

A great majority, 69%, of all transactions were first-time encounters. Overall, there occurred 272 distinct dyads and 394 transactions. Hence, each dyad met 394/272 = 1.45 times on average, and meeting only once was, in fact, the most likely outcome. Specifically, 161 dyads matched only once (59%), 100 dyads matched twice (37%), and 11 dyads matched three times (4%). Hence, 161·1 = 161 of all 394 transactions were one-time encounters (41%), 100·2 = 200 were part of a two-time encounter (51%), and 11·3 = 33 were part of a three-time encounter (8%).
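The figures in this note can be reproduced from the three dyad counts alone. The sketch below is a plain arithmetic check; the variable names are hypothetical. It also makes explicit why 69% of transactions are first-time encounters: every distinct dyad has exactly one first encounter, so the number of first-time encounters equals the number of dyads.

```python
# Number of distinct dyads by how often they were matched (from the note above).
dyads_by_meetings = {1: 161, 2: 100, 3: 11}

n_dyads = sum(dyads_by_meetings.values())
n_transactions = sum(k * n for k, n in dyads_by_meetings.items())

print(n_dyads, n_transactions)                                      # 272 394
print(f"mean meetings per dyad: {n_transactions / n_dyads:.2f}")   # 1.45
print(f"first-time encounters: {n_dyads / n_transactions:.0%}")    # 69%

for k, n in dyads_by_meetings.items():
    dyad_share = n / n_dyads
    transaction_share = k * n / n_transactions
    print(f"{k} meeting(s): {dyad_share:.0%} of dyads, "
          f"{transaction_share:.0%} of transactions")
```

The distinction between the two percentages matters: 59% of dyads met only once, but these account for only 41% of transactions, because dyads that met repeatedly contribute several transactions each.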

Due to a technical issue, the restriction on sending requests ruled out only one (rather than two) periods in four of the twelve experimental sessions. This led to the few instances with three-fold transactions. Note that the four affected sessions included all four treatment conditions equally so that no systematic confound was created.

References

Abrahao B, Parigi P, Gupta A, Cook KS (2017) Reputation offsets trust judgments based on social biases among Airbnb users. Proc Natl Acad Sci 114(37):1–6

Airbnb (2023) “About us,” (available at https://news.airbnb.com/about-us/; retrieved May 2, 2023).

AirbnbHell (2023) “AirbnbHell: Uncensored Airbnb stories from hosts and guests,” (available at https://www.airbnbhell.com/; retrieved August 22, 2023).

Ananthakrishnan UM, Li B, Smith MD (2015) A tangled web: evaluating the impact of displaying fraudulent reviews. In: ICIS 2015 proceedings, pp 1–19

Anzellotti S, Caramazza A (2014) The neural mechanisms for the recognition of face identity in humans. Front Psychol 5(672):1–6

Ba S, Pavlou PA (2002) Evidence of the effect of trust building technology in electronic markets: price premiums and buyer behavior. MIS Q 26(3):243–268

Banerjee S, Bhattacharyya S, Bose I (2017) Whose online reviews to trust? Understanding reviewer trustworthiness and its impact on business. Decis Support Syst 96:17–26

Bapna R, Qiu L, Rice S (2017) Repeated interactions vs. social ties: quantifying the economic value of trust, forgiveness, and reputation using a field experiment. MIS Q 41(3):841–866

Barbosa NM, Sun E, Antin J, Parigi P (2020) Designing for trust: a behavioral framework for sharing economy platforms. Proceed World Wide Web Conf 2020:2133–2143

Ben-Ner A, Putterman L (2009) Trust, communication and contracts: an experiment. J Econ Behav Organ 55(4):106–121

Bente G, Baptist O, Leuschner H (2012) To buy or not to buy: influence of seller photos and reputation on buyer trust and purchase behavior. Int J Hum Comput Stud 70(1):1–13

Bente G, Dratsch T, Kaspar K, Häßler T, Bungard O, Al-Issa A (2014a) Cultures of trust: effects of avatar faces and reputation scores on German and Arab players in an online trust-game. PLoS ONE 9(6):1–7

Bente G, Dratsch T, Rehbach S, Reyl M, Lushaj B (2014) Do you trust my avatar? Effects of photo-realistic seller avatars and reputation scores on trust in online transactions. In: international conference on HCI in business, pp 461–470

Berg J, Dickhaut J, McCabe K (1995) Trust, reciprocity, and social history. Games Econom Behav 10(1):122–142

Bhattacherjee A, Sanford C (2006) Influence processes for information technology acceptance: an elaboration likelihood model. MIS Q 30(4):805–825

BlaBlaCar (2022) “Uploading your profile photo,” (available at https://www.blablacar.co.uk/faq/question/why-do-i-need-to-add-a-photo; retrieved May 2, 2023).

Blue PR, Hu J, Peng L, Yu H, Liu H, Zhou X (2020) Whose promises are worth more? How social status affects trust in promises. Eur J Soc Psychol 50(1):189–206

Bock O, Baetge I, Nicklisch A (2014) Hroot: hamburg registration and organization online tool. Eur Econ Rev 71:117–120

Bolton G, Katok E, Ockenfels A (2004) How effective are electronic reputation mechanisms? An experimental investigation. Manag Sci 50(11):1587–1602

Bolton G, Loebbecke C, Ockenfels A (2008) Does competition promote trust and trustworthiness in online trading? An experimental study. J Manag Inf Syst 25(2):145–170

Bolton G, Greiner B, Ockenfels A (2013) Engineering trust: reciprocity in the production of reputation information. Manag Sci 59(2):265–285

Bornstein RF, D’Agostino PR (1992) Stimulus recognition and the mere exposure effect. J Pers Soc Psychol 63(4):545–552

Bui T, Gachet A, Sebastian HJ (2006) Web services for negotiation and bargaining in electronic markets: design requirements, proof-of-concepts, and potential applications to e-procurement. Group Decis Negot 15:469–490

Burtch G, Ghose A, Wattal S (2014) Cultural differences and geography as determinants of online pro-social lending. MIS Q 38(3):773–794

Cabral LL, Hortaçsu A (2010) The dynamics of seller reputation: evidence from eBay. J Indust Econom 58(1):54–78

Chang HH, Lu YY, Lin SC (2020) An elaboration likelihood model of consumer respond action to facebook second-hand marketplace: impulsiveness as a moderator. Inform Manag 57(2):103–171

Charness G, Dufwenberg M (2006) Promises and partnership. Econometrica 74(6):1579–1601

Chen X, Huang Q, Davison RM, Hua Z (2015) What drives trust transfer? The moderating roles of seller-specific and general institutional mechanisms. Int J Electron Commer 20(2):261–289

Cheng X, Macaulay L (2014) Exploring individual trust factors in computer mediated group collaboration: a case study approach. Group Decis Negot 23(3):533–560

Cheng X, Fu S, Sun J, Bilgihan A, Okumus F (2019) An investigation on online reviews in sharing economy driven hospitality platforms: a viewpoint of trust. Tour Manage 71:366–377

Cheng X, Bao Y, Yu X, Shen Y (2021) Trust and group efficiency in multinational virtual team collaboration: a longitudinal study. Group Decis Negot 30(3):529–551

Cyr D, Hassanein K, Head M, Ivanov A (2007) The role of social presence in establishing loyalty in e-service environments. Interact Comput 19(1):43–56

Cyr D, Head M, Larios H, Pan B (2009) Exploring human images in website design: a multi-method approach. MIS Q 33(3):539–566

Cyr D, Head M, Lim E, Stibe A (2018) Using the elaboration likelihood model to examine online persuasion through website design. Inform Manag 55(7):807–821

Dai YN, Viken G, Joo E, Bente G (2018) Risk assessment in e-commerce: how sellers’ photos, reputation scores, and the stake of a transaction influence buyers’ purchase behavior and information processing. Comput Human Behav 84:342–351

Dann D, Teubner T, Weinhardt C (2019) Poster child and guinea pig – insights from a structured literature review on Airbnb. Int J Contemp Hosp Manag 31(1):427–473

Dann D, Teubner T, Adam MTP, Weinhardt C (2020) Where the host is part of the deal: social and economic value in the platform economy. Electron Commer Res Appl 40:100923

Dellarocas C (2003) The digitization of word of mouth: promise and challenges of online feedback mechanisms. Manag Sci 49(10):1407–1424

Dellarocas C (2006) How often should reputation mechanisms update a trader’s reputation profile? Inf Syst Res 17(3):271–285

Edelman BG, Luca M, Svirsky D (2017) Racial discrimination in the sharing economy: evidence from a field experiment. Am Econ J Appl Econ 9(2):1–22

Engelmann A, Bauer I, Dolata M, Nadig M, Schwabe G (2022) Promoting less complex and more honest price negotiations in the online used car market with authenticated data. Group Decis Negot 31(2):419–451

Ert E, Fleischer A, Magen N (2016) Trust and reputation in the sharing economy: the role of personal photos in Airbnb. Tour Manage 55(1):62–73

Ewing L, Caulfield F, Read A, Rhodes G (2015) Perceived trustworthiness of faces drives trust behaviour in children. Dev Sci 18(2):327–334

Ewing L, Sutherland CAM, Willis ML (2019) Children show adult-like facial appearance biases when trusting others. Dev Psychol 55(8):1694–1701

Fagerstrøm A, Pawar S, Sigurdsson V, Foxall GR, Yani-de-Soriano M (2017) That personal profile image might jeopardize your rental opportunity! On the relative impact of the seller’s facial expressions upon buying behavior on Airbnb™. Comput Hum Behav 72:123–131

Fradkin A, Grewal E, Holtz D (2018) The determinants of online review informativeness: evidence from field experiments on Airbnb. Work Paper. 41:12

Friedman D, Cassar A (2004) Economics lab: an intensive course in experimental economics. Routledge, Vasa

Gan C, Wang W (2017) The influence of perceived value on purchase intention in social commerce context. Int Res 27(4):772–785

Gawer A (2014) Bridging differing perspectives on technological platforms: toward an integrative framework. Res Policy 43(7):1239–1249

Gefen D (2000) E-commerce: the role of familiarity and trust. Omega 28(6):725–737

Gefen D, Pavlou PA (2012) The boundaries of trust and risk: the quadratic moderating role of institutional structures. Inf Syst Res 23(3):940–959

Gefen D, Straub DW (2003) Managing user trust in B2C e-services. E-Serv J 2(2):7–24

Gefen D, Straub DW (2004) Consumer trust in B2C e-commerce and the importance of social presence: experiments in e-products and e-services. Omega 32(6):407–424

Gefen D, Karahanna E, Straub DW (2003) Trust and TAM in online shopping: an integrated model. MIS Q 27(1):51–90

Gefen D, Benbasat I, Pavlou P (2008) A research agenda for trust in online environments. J Manag Inf Syst 24(4):275–286

Gibbs C, Guttentag D, Gretzel U, Morton J, Goodwill A (2017) Pricing in the sharing economy: a hedonic pricing model applied to Airbnb listings. J Travel Tour Market 35:46–56

Goren CC, Sarty M, Wu PYK (1975) Visual following and pattern discrimination of face-like stimuli by newborn infants. Pediatrics 56(4):544–549

Güth W, Levati MV, Ploner M (2008) Social identity and trust—an experimental investigation. J Soc Econom 37(4):1293–1308

Hassanein K, Head M (2007) Manipulating perceived social presence through the web interface and its impact on attitude towards online shopping. Int J Hum Comput Stud 65(8):689–708

Hawlitschek F, Jansen LE, Lux E, Teubner T, Weinhardt C (2016) Colors and trust: the influence of user interface design on trust and reciprocity. In: HICSS 2016 proceedings, pp 590–599

Hess T, Fuller M, Campbell D (2009) Designing interfaces with social presence: using vividness and extraversion to create social recommendation agents. J Assoc Inf Syst 10(12):889–919

Hesse M, Teubner T, Adam MTP (2022) In stars we trust–a note on reputation portability between digital platforms. Bus Inf Syst Eng 64(3):349–358

Hesse M, Dann D, Braesemann F, Teubner T (2020) Understanding the platform economy: signals, trust, and social interaction. In: HICSS 2020 Proceedings, pp 5139–5148

Ho B (2012) Apologies as signals: with evidence from a trust game. Manag Sci 58(1):141–158

Ignat CL, Dang QV, Shalin VL (2019) The influence of trust score on cooperative behavior. ACM Trans Internet Technol 19(4):1–22

Jensen PH, Palangkaraya A, Webster E (2015) Trust and the market for technology. Res Policy 44(2):340–356

Kanwisher N, McDermott J, Chun MM (1997) The fusiform face area: a module in human extrastriate cortex specialized for face perception. J Neurosci 17(11):4302–4311

Kas J, Corten R, van de Rijt A (2020) Reputations in mixed-role markets: a theory and an experimental test. Soc Sci Res 85:102366

Keser C, Späth M (2020) The value of bad ratings: an experiment on the impact of distortions in reputation systems. Working Paper 3571119:1–33

Kim D, Benbasat I (2009) Trust-assuring arguments in B2C e-commerce: impact of content, source, and price on trust. J Manag Inf Syst 26(3):175–206

Komiak SYX, Benbasat I (2006) The effects of personalization and familiarity on trust and adoption of recommendation agents. MIS Q 30(4):941–960

Lai LSL, Turban E (2008) Groups formation and operations in the web 2.0 environment and social networks. Group Decis Negot 17(5):387–402

Lowry PB, Zhang D, Zhou L, Fu X (2010) Effects of culture, social presence, and group composition on trust in technology-supported decision-making groups. Inf Syst J 20(3):297–315

Lucas B, Francu RE, Goulding J, Harvey J, Nica-Avram G, Perrat B (2021) A note on data-driven actor-differentiation and SDGs 2 and 12: insights from a food-sharing app. Res Policy 50(6):104266

Lusch RF, Nambisan S (2015) Service innovation. MIS Q 39(1):155–176

Mazzella F, Sundararajan A, Butt d’Espous V, Möhlmann M (2016) How digital trust powers the sharing economy: the digitization of trust. IESE Insight 30(3):24–31

McKnight DH, Cummings LL, Chervany NL (1998) Initial trust formation in new organizational relationships. Acad Manag Rev 23(3):473–490

McKnight DH, Choudhury V, Kacmar C (2002a) Developing and validating trust measures for e-commerce: an integrative typology. Inf Syst Res 13(3):334–359

McKnight DH, Choudhury V, Kacmar C (2002b) The impact of initial consumer trust on intentions to transact with a web site: a trust building model. J Strat Inf Syst 11:297–323

Mishra DP, Heide JB, Cort SG (1998) Information asymmetry and levels of agency relationships. J Mark Res 35(3):277–295

Mittendorf C, Berente N, Holten R (2019) Trust in sharing encounters among millennials. Inf Syst J 29(5):1083–1119

Mohan V (2019) On the use of blockchain-based mechanisms to tackle academic misconduct. Res Policy 48(9):103805

Möhlmann M (2021) Unjustified trust beliefs: trust conflation on sharing economy platforms. Res Policy 50:104173

Nicolaou AI, McKnight DH (2006) Perceived information quality in data exchanges: effects on risk, trust, and intention to use. Inf Syst Res 17(4):332–351

Ou CX, Pavlou PA, Davison RM (2014) Swift Guanxi in online marketplaces: the role of computer-mediated communication technologies. MIS Q 38(1):209–230

Pavlou PA, Gefen D (2004) Building effective online marketplaces with institution-based trust. Inf Syst Res 15(1):37–59

Petty R, Cacioppo J (1986) The elaboration likelihood model of persuasion. Adv Exp Soc Psychol 19(1):123–205

Petty RE, Cacioppo JT, Schumann D (1983) Central and peripheral routes to advertising effectiveness: the moderating role of involvement. J Consum Res 10(2):135–146

Petty RE, Barden J, Wheeler SC (2009) The elaboration likelihood model of persuasion: developing health promotions for sustained behavioral change. Emerg Theor Health Promot Pract Res Jossey-Bass 2:185–214

Qiu L, Benbasat I (2010) A study of demographic embodiments of product recommendation agents in electronic commerce. Int J Hum Comput Stud 68(10):669–688

Qiu W, Parigi P, Abrahao B (2018) More stars or more reviews? Differential effects of reputation on trust in the sharing economy. In: CHI 2018 Proceedings, pp 1–11

Rezlescu C, Duchaine B, Olivola CY, Chater N (2012) Unfakeable facial configurations affect strategic choices in trust games with or without information about past behavior. PLoS ONE 7(3):1–6

Rice SC (2012) Reputation and uncertainty in online markets: an experimental study. Inf Syst Res 23(2):436–452

Riedl R, Mohr PNC, Kenning PH, Davis FD, Heekeren HR (2014) Trusting humans and avatars: a brain imaging study based on evolution theory. J Manag Inf Syst 30(4):83–114

Riegelsberger J, Sasse MA, McCarthy JD (2005) The mechanics of trust: a framework for research and design. Int J Hum Comput Stud 62(3):381–422

Saadatmand F, Lindgren R, Schultze U (2019) Configurations of platform organizations: implications for complementor engagement. Res Policy 48(8):103770

Schoenmüller V, Netzer O, Stahl F (2018) The extreme distribution of online reviews: Prevalence, drivers and implications. Working Paper

Schumann DW, Kotowski MR, Ahn H-YA, Haugtvedt CP (2012) The elaboration likelihood model: a 30-year review. In: Advertising theory. Routledge, pp 51–68

Steinbrück U, Schaumburg H, Duda S, Krüger T (2002) A picture says more than a thousand words: photographs as trust builders in e-commerce websites. In: CHI 2002 Proceedings, pp 748–749

Stewart KJ, Gosain S (2006) The impact of ideology on effectiveness in open source software development teams. MIS Q 30(2):291–314

Ströbel M, Stolze M (2002) A matchmaking component for the discovery of agreement and negotiation spaces in electronic markets. Group Decis Negot 11:165–181

Sundararajan A (2016) The sharing economy: the end of employment and the rise of crowd-based capitalism. MIT Press, Cambridge, MA

Tadelis S (2016) Reputation and feedback systems in online platform markets. Ann Rev Econom 8(1):321–340

Tanis M, Postmes T (2005) A social identity approach to trust: interpersonal perception, group membership and trusting behaviour. Eur J Soc Psychol 35(3):413–424

Teubner T (2018) The web of host-guest connections on Airbnb: a network perspective. J Syst Inf Technol 20(3):262–277

Teubner T (2022) More than words can say: a randomized field experiment on the effects of consumer self-disclosure in the sharing economy. Electron Commer Res Appl 54:101175

Teubner T, Camacho S (2023) Facing reciprocity: how photos and avatars promote interaction in micro-communities. Group Decis Negot 32(2):435–467

Teubner T, Adam MTP, Hawlitschek F (2021) On the potency of online user representation: Insights from the sharing economy. In: Gimpel H, Krämer J, Neumann D, Pfeiffer J, Seifert S, Teubner T, Veit DJ, Weidlich A (eds) Market engineering: insights from two decades of research on markets and information. Springer, London, pp 167–181

Teubner T, Adam MTP, Camacho S, Hassanein K (2022) What you see is what you g(u)e(s)t: How profile photos and profile information drive providers’ expectations of social reward in co-usage sharing. Inf Syst Manag 39(1):64–81

Teubner T, Adam MTP, Camacho S, Hassanein K (2014) Understanding resource sharing in C2C platforms: the role of picture humanization. In: ACIS 2014 Proceedings, pp 1–10

Van der Werff L, Buckley F (2017) Getting to know you: a longitudinal examination of trust cues and trust development during socialization. J Manag 43(3):742–770

Wessel M, Thies F, Benlian A (2017) Competitive positioning of complementors on digital platforms: evidence from the sharing economy. In: ICIS 2017 Proceedings, pp 1–18

Willis J, Todorov A (2006) First impressions: making up your mind after a 100-ms exposure to a face. Psychol Sci 17(7):592–598

Zervas G, Proserpio D, Byers J (2015) A first look at online reputation on Airbnb, where every stay is above average. Working Paper

Zimmermann S, Angerer P, Provin D, Nault BR (2018) Pricing in C2C online sharing platforms. J Assoc Inf Syst 19(8):672–688

Funding

Open Access funding enabled and organized by Projekt DEAL.

Ethics declarations

Conflict of interest

The authors declare that there are no conflicts of interest.

Appendices

Appendix A: Experiment Instructions

This appendix includes the material provided to the participants in the experiments. Depending on the specific treatment condition, the material slightly differed in terms of whether (1) star ratings and (2) profile photos were available. This relates back to our 2 (star ratings: yes/no) × 2 (profile photos: yes/no) full factorial between-subjects treatment design (see Treatment Structure). The material shown in this appendix was specifically for the treatment condition where both star ratings and profile photos were available. All participants saw the welcome screen. Then, depending on the particular role assigned, the participant either saw the material for a consumer or a provider.

Welcome: You are participating in an experiment from which you can earn money. During the whole experiment you will operate with monetary units (MU), which will be converted into Euros and paid out afterwards. A conversion factor of 4 MU = 1.00 € applies. The amount of your payoff depends on your behavior and the behavior of the other participants. The results at the end of each period you will play are decisive. The role you take in the experiment was randomly determined. You either take the role of a provider or a consumer. You will retain this role for the entire experiment (Figs. 8–27).

The experiment randomly comprises between five and eight periods. Each period comprises two phases in which you can undertake different actions. At the end of each period, a summary and your payoff for this period is depicted. After the experiment, three of your periods are randomly selected and you get the payoffs from those periods paid out.
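
As an illustration of the payment rule described above (not part of the material shown to participants), the following Python sketch computes a final payout. It assumes that the payoffs of the three selected periods are summed before conversion and that the number of periods is drawn uniformly from five to eight; the instructions state neither detail explicitly. The function names and the example payoffs are hypothetical.

```python
import random

MU_PER_EURO = 4   # conversion factor from the instructions: 4 MU = 1.00 EUR
PAID_PERIODS = 3  # three periods are randomly selected for payment


def draw_number_of_periods(rng):
    """Draw the session length (5 to 8 periods); the uniform draw is an assumption."""
    return rng.randint(5, 8)


def final_payoff_eur(period_payoffs_mu, rng):
    """Sum the MU payoffs of three randomly chosen periods and convert to euros.

    Summing the selected periods is an assumption about the payment rule.
    """
    paid = rng.sample(period_payoffs_mu, PAID_PERIODS)
    return sum(paid) / MU_PER_EURO


if __name__ == "__main__":
    rng = random.Random(42)  # fixed seed only to make the illustration repeatable
    n_periods = draw_number_of_periods(rng)
    # Made-up per-period payoffs in MU, purely for illustration.
    payoffs_mu = [rng.randint(0, 40) for _ in range(n_periods)]
    print(n_periods, payoffs_mu, final_payoff_eur(payoffs_mu, rng))
```

Because only three periods are paid out and participants do not know in advance which ones, every period carries the same expected weight for the final payment.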