Abstract

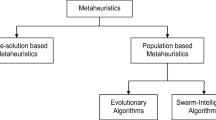

Cartesian genetic programming, a well-established method of genetic programming, is approximately 20 years old. It represents solutions to computational problems as graphs. Its genetic encoding includes explicitly redundant genes which are well-known to assist in effective evolutionary search. In this article, we review and compare many of the important aspects of the method and findings discussed since its inception. In the process, we make many suggestions for further work which could improve the efficiency of the CGP for solving computational problems.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

The term “Cartesian genetic programming” (CGP)Footnote 1 first appeared in 1999 [65]. Although, it was a generalisation of a method of encoding and evolving electronic circuits that was first described in 1997 [71]. In 2000, it became established as a new form of genetic programming [70]. Since that time CGP has been adopted by many researchers, adapted by others and applied to many applications areas. This article provides an extensive review of the different variants of CGP, and an analysis of many important aspects of CGP. It discusses numerous open issues and questions. The article contains many suggestions for further work which could lead to improvements in the efficiency of the CGP for solving computational problems.

In contrast to tree-based genetic programming [53, 80], CGP encodes computational structures as directed graphs. Its invention was heavily influenced by earlier work on creating and evolving genetic representations of circuits. These are most naturally encoded as graphs and can be described using structures called netlists. CGP uses a netlist-inspired address-based genotype consisting of integers pointers to an either an array of primitive functions or data locations. Encoding graphs, rather than trees has advantages in that nodes can be multiply used and graphs can have multiple outputs. It is a very adaptable representation as it can easily represent many types of computational structures, such as systems of equations, state-machines, neural networks, algorithms and electronic circuits. Another defining characteristic of CGP is that the genotype can include non-coding genes. The genotype is recursively decoded from program outputs to inputs and in so doing nodes can be ignored, these are non-coding. As we shall the presence of non-coding genes has strongly influenced the choice of search algorithm that is most suitable for CGP (see Sect. 2).

In the article, we discuss many variants of CGP that have been proposed. We begin with a graph-based form of GP called parallel distributed GP (PDGP) which was developed by Poli prior to CGP [77, 78]. This has many similarities with CGP but also uses various graph-based crossover and mutation operators and restricted graph connectivity. Modular CGP (MCGP) is based on standard CGP but has additional mutation operators which allow CGP-encoded sub-functions (called modules) to be captured, re-used or modified. This was motivated by Koza’s work on automatically defined functions in tree-based GP [54]. In real-valued CGP (RVCGP) all genes are real-valued and a decoding step translates these into standard CGP genes. This is attractive as it allows many methods for evolving genotypes used in evolutionary algorithms work to be used to evolve programs (e.g. “flat” crossover see Sect. 5).

Implicit-context CGP (ICCGP) removed the positional dependence of genes in CGP by representing components by evolved entities called enzymes which are self-assembled to form CGP-like phenotypes. Self-modifying CGP (SMCGP) extended CGP by introducing new kinds of primitive functions (self-modifying) which carried out transformations of the phenotype, this allowed sequences of phenotypes to be evolved from a single genotype. It also required genes in CGP to use relative addressing (also used in PDGP). Mixed-type CGP (MTCGP) borrowed some features from SMCGP and also allowed CGP to handle different data types (i.e. scalar and vector). Recurrent CGP (RCGP) is a simple extension to standard CGP which allowed connections between primitive functions to be both feed-forward or feedback (i.e. breaking acyclicity). Iterative CGP (ICGP) introduced conditional loop-genes into CGP which allowed CGP to encode algorithms.

Differentiable CGP (DCGP) is a form of CGP in which phenotypes are differentiable. Primitive functions are truncated Taylor’s series and graph connections are weighted. This allows gradient descent to be used to arrive at highly-tuned graphs. We also discuss a recently developed form of graph-based GP called evolving graphs by graph programming (EGGP) in which phenotypes are encoded using a graph description language. This allows graphs to be manipulated more freely than is possible in the standard form of CGP. We also discuss the recently proposed positional CGP (PCGP). This a real-valued form of CGP form in which graph nodes have evolvable positions, and connections are made to the nearest node.

We also discuss in depth various promising forms of crossover, mutation and search algorithms that have been suggested in the literature of CGP. It should be noted that very recently, another review of CGP has appeared. It discusses CGP and its variants and focuses in detail on their representational differences and evolutionary operators [62].

The plan of the article is as follows. First we review CGP-encoded representations of programs that have been suggested in the literature (Sect. 3). We follow this with a discussion of work done in mutation (Sect. 4) and crossover (Sect. 5). Section 6 discusses various search algorithms that have been investigated for CGP. In Sect. 7 we examine ways in which CGP can be made faster by using hardware. Section 8 discusses major applications of CGP including CGP encoded artificial neural networks. In Sect. 9 we discuss the relatively high comprehensibility of CGP programs. We give comparisons of the efficiency and human-competitiveness of CGP to other machine learning techniques in Sect. 10. The growing amount of publicly available software for CGP is reviewed in Sect. 11. We close with sections on open questions and suggestions for further investigation (Sect. 12) and conclusions. For reference and completeness we begin with a description of the standard form of CGP.

2 Aspects of standard CGP

2.1 Representation

In its standard form CGP programs are representations of directed acyclic graphs. These graphs are represented using a two-dimensional grid of computational nodes. Hence the term “Cartesian”.

Each node in the directed graph represents a particular function and is encoded by a number of integer genes. Each node has a function gene which is the address of the computational function of the node in a user-defined look-up table of functions. The remaining node genes are connection genes and say where the node gets its data from. These genes represent addresses in a data structure (typically an array). Nodes take their inputs from either the output of a node (in acyclic CGP, from a previous column) or from a program input. The number of connection genes a node has is chosen to be the maximum number of inputs that any function in the function look-up table has. If any node function requires less inputs, the remaining connection genes are ignored. The program data inputs are given the absolute data addresses 0 to \(n_i-1\) where \(n_i\) is the number of program inputs. The data outputs of nodes in the genotype are given addresses sequentially, column by column, starting from \(n_i\) to \(n_i+n_n-1\), where \(n_n\) is the user-determined upper bound on the number of nodes. A schematic is shown in Fig. 1.

If the problem requires \(n_o\) program outputs, the genotype is augmented with a number of output genes (\(O_i=n_o\)). Each of these is an address of a node where the program output data is taken from. Nodes in columns cannot be connected to each other. In its standard form the graph in CGP is directed and feed-forward; this means that a node may only have its inputs connected to either input data or the output of a node in a previous column. However, as we will see in Sects. 3.7 and 3.8, this restriction can be relaxed to allow recurrent or cyclic graphs. A schematic of the genotype is shown in Fig. 1.

Standard form of CGP representation. It is a grid of nodes whose functions are chosen from a set of primitive functions. The grid has \(n_c\) columns and \(n_r\) rows. The number of program inputs \(n=n_i\) and the number of program outputs \(m=n_o\). Each node is assumed to take as many inputs as the maximum function arity a. Every data input and node output is labeled consecutively (starting at 0), which gives it a unique data address which specifies where the input data or node output value can be accessed (shown in the figure on the outputs of inputs and nodes)

CGP has three user-selectable graph parameters that define the dimensionality and connectivity of the encoded graphs. These are the number of columns, the number of rows and levels-back. These are denoted by \(n_c\), \(n_r\) and l, respectively. The product of the first two parameters determine the maximum number of computational nodes allowed: \(n_n = n_c n_r\). The parameter l, called levels-back, controls the connectivity of the graph encoded. Levels-back constrains which columns a node can get its inputs from. If \(l=1\), a node can get its inputs only from a node in the column on its immediate left or from a primary input. If \(l=2\), a node can have its inputs connected to the outputs of any nodes in the immediate left two columns of nodes or a primary input. If one wishes to allow nodes to connect to any nodes on their left, then \(l=n_c\). Varying these parameters can result in various kinds of graph topology. An important special case of these three parameters occurs when the number of rows is chosen to be one and levels-back is set to be the number of columns (one dimensional topology). In this case the genotype can represent any bounded directed graph where the upper bound is determined by the number of columns. The length of a CGP genotype is given by \(L_{cgp} = n_n (a+1) + n_o\).

2.2 Search algorithm

Standard CGP uses a search algorithm (Algorithm 1) that is inspired by the \(1+\lambda\) evolutionary strategy [83] in which a single parent genotype is mutated to create \(\lambda\) offspring. An offspring that has a fitness better or equal to the parent becomes the new parent for the next generation. This is still the most widely used search algorithm for CGP. We discuss work suggesting other search algorithms in Sect. 6.

2.3 Non-coding genes

The reason that such a simple search strategy operating on such a small population is effective is strongly connected with the presence of non-coding genes in the genotype. Non-coding genes arise in CGP when the outputs of nodes are not referenced in the flow of data from program inputs to program outputs. It is this which leads to both variable length phenotypes and evolutionary neutral drift in the genotype. It should be noted that the genotype-phenotype mapping process in CGP incurs very little computational overhead as it is only carried out once per genotype. The \(1+\lambda\)-inspired algorithm promotes neutral drift since often mutational offspring differ only in inactive genes and so have equal fitness to their parent [69, 110, 119, 128, 129]. Such identical offspring can be detected before fitness evaluation so incurring no fitness calculation. Thus, even when it appears that the algorithm is stuck on a local optimum fitness value the search algorithm is exploring many possible different solutions by mutating different genotypes. The maximum allowed size of the genotype is highly influential here and Turner et al. investigated the relationship between it and performance on a suite of hard benchmarks [110]. They found that the relationship was approximately quadratic with a clear optimum value for \(n_n\). Often the optimum number of nodes was quite large (hundreds or thousands of nodes).

2.4 Absence of bloat

CGP does not suffer from a phenomenon called bloat [105], in which over evolutionary time, programs become larger [91]. Bloat has been described as “program growth without (significant) return in terms of fitness” [80]. It was thought that the lack of bloat in CGP was caused by either neutral genetic drift (NGD) [66] or length bias (LB) [18]. The former argued that since NGD is beneficial to search, it is beneficial to maintain a large number of inactive genes. This provides a pressure to limit the number of active genes. LB argues that the lack of bloat is a consequence of the genotype representation which has the property that nodes closer to the program inputs are much more likely to be active since they can be connected to by many nodes on their right. This causes a strong bias towards small program sizes. Interestingly, both these hypotheses for the lack of bloat in CGP have been disproved [105] where it was shown that when NGD is disallowed in CGP bloat still does not occur. LB cannot be the reason why there is no bloat since Recurrent CGP (see Sect. 3.7) has no length bias and yet it too does not exhibit bloat. Thus the cause of lack of bloat in CGP is still an open question.

3 Alternative genotype representations

3.1 Parallel distributed genetic programming

For completeness, and because of later discussion, we include a brief discussion of a form of graph-based genetic programming that has a representation that is similar to CGP. It is called parallel distributed GP. Poli proposed this in 1996 [77, 79]. Like CGP, PDGP represented graphs using a Cartesian grid of nodes. Connection to nodes were restricted to the previous layer (i.e. in CGP terms, with levels-back equal to 1) and the connection genes were relative (as in SMCGP see Sect. 3.5) and counted back from a node position to the source of node input. A pass-through (or wire) function was allowed so that nodes could be connected to deeper layers. Poli devised various crossover operators. They are all based around the idea of swapping sub-graphs defined by selecting random crossover points in the parents to create offspring. One can choose the first crossover point at random and the other must respect the limitation that the graphs have a maximum depth (in CGP terms, a maximum number of columns). By choosing crossover points either in either active or inactive nodes or both, he described a number of types of crossover. He also discussed two forms of mutation, one he called global in which a random sub-graph is generated and replaces an existing sub-graph. Actually, he states that global mutation was implemented by crossing over an individual with a randomly generated new individual [79]. The second mutation operator he called link which is the same as point mutation in CGP. Using an evolutionary algorithm with population sizes of around 1000 and tournament selection with crossover and both types of mutation Poli showed that PDGP was markedly more efficient at solving problems than standard tree-based GP (even with ADFs) and often the evolutionary algorithm was most efficient with small grid sizes. PDGP was also used to evolve artificial neural networks [81].

3.2 Modular

Koza demonstrated the usefulness of automatically-defined functions (ADFs) in tree-based GP [54]. Walker and Miller introduced an equivalent to ADFs for CGP called modules [122, 123]. In modular CGP sub-functions or modules can be captured or destroyed by mutation operators. Capture or acquisition happens via two random positions in the genotype. The genotype is extracted and placed in a module list and the removal site replaced by a module containing the re-labelled sub-genotype so that the meaning of the genotype is identical to the original genotype before module acquisition. Empirical comparisons of results with and without ADFs were made on a number of benchmarks and appeared to show that CGP with module acquisition and evolution obtained solutions in much fewer numbers of evaluations that standard CGP, particularly on hard problems. However, it should be noted that the comparisons with CGP were unfair in the sense that the total size of allowed genotype in nodes with modules was much greater than the number allowed in standard CGP. Thus, it still remains an open question as to whether module acquisition is beneficial.

Noting that in modular CGP modules were acquired or destroyed randomly (i.e. via mutation), Kaufmann and Platzner introduced some new techniques for creating modules: age-based and cone-based [38]. The age-based module creation operator identifies primitives nodes that have remained unchanged for a number of generations and places these into modules (only primitive nodes can reside in a module). The module is given an age that is the average of the ages of the primitive nodes within it. Two candidates are generated using this operator and the one that is older is chosen. In contrast to standard or age-based module creation, cone-based module acquisition (MA) aggregates only primitive nodes that are within a structure called cone (see [38] for details). Cones are a widely-used concept in circuit synthesis. They compare the computational effort (as defined in [53]) of the original modular CGP with versions that allow either age-based or cone-based MA on circuit synthesis and classification problems. They found that in almost all cases the age-based technique was superior to the original MA. However, cone-based MA largely proved superior only on circuit problems.

3.3 Real-valued

Clegg et al. devised a genotype representation for CGP in which all genes are floating point numbers in the interval [0.0, 1.0] [10]. We refer to this as a real-valued CGP representation (RVCGP). The motivation for devising this representation was that crossover in CGP might be more effective using a real-valued representation. We will discuss this in more detail in Sect. 5. Real-valued CGP has an additional decoding step in which a standard CGP genotype is obtained from the real-valued genotype. The real-valued genotype still has genes grouped by nodes consisting of a function gene and a number of connection genes.

Assume that the node function look-up table has \(n_f\) functions. Standard CGP node function integer genes, \(ig_f\) are obtained from the floating point gene, \(rg_f\), by examining which of \(n_f\) segments of the interval [0.0, 1.0], \(rg_f\) lies within. This is accomplished with Eq. 2.

For instance assume there are four node functions. Then if \(rg_f\) is less than 0.25 then \(ig_f = 0\) while if \(rg_f\) is greater than 0.25 and less than 0.5, \(ig_f=1\), and so on.

Real-valued connection genes, \(rg_c\) corresponding to node address, \(n_p\) are decoded to standard integer connection genes, \(ig_c\) by dividing the unit interval into \(n_p\) segments. The equation that accomplishes this given in Eq. 3.

For instance, suppose that \(n_p=6\), and there are two program inputs and four nodes. Then the unit interval is divided into as many segments as the node address (i.e. 6). If \(rg_c\) is between 0 and 1/6 then \(ig_c=0\), so that the first input to node 6 is the first program input (whose address is 0), if \(rg_c\) is between 5/6 and 1 then \(ig_c=5\) and the first input of node 6 is connected to the output of node 5 (the previous node). Output genes are decoded in the same way as the connection genes corresponding to node address of one more than the highest node address.

Using floating point values as genes for CGP has the potential to make the genotype more evolvable since when small changes are made to the genes the gene can move gradually to a value which will result in genotype change (assuming Gaussian mutation), whereas in standard CGP random mutations either abruptly moves a node input connection to an entirely different node, or changes the function of the node. Also, another motivation was to transform the discrete nature of CGP genotypes to smooth functions of n variables [10]. Finally, it allowed the prospect that much of the research into the optimization of real-valued vectors using evolutionary algorithms could be applied to CGP and hence the evolution of programs or other computational structures. Indeed, Clegg (see Sect. 5) used a form of crossover showing it appeared to be beneficial for symbolic regression problems. However, in his master’s thesis, Turner [101] examined RVCGP on three additional classes of computational problems, digital circuit synthesis, function optimisation and agent-based wall avoidance. On these problems, it was found that RVCGP together with the crossover operation performed worse than standard CGP. However, he found that implementing the RVCGP representation but with the same selection and mutation methods as CGP gave equivalent performance to CGP.

Meier et al. interpreted the RVCGP genes as mean values in a multivariate Gaussian distribution [63]. From these distributions new genotypes can be sampled. They defined an operator called forking which decides whether a genotype should be interpreted either as a point or as a distribution in genotype space. The decision to fork uses population statistics based on an analysis of phenotypes (called fingerprints). Early in evolution an individual’s phenotype is likely to be rare in the population, in which case the forking operator is more likely to interpret the individual as a point. As evolution progresses, individuals focus on fewer regions so that the current individual’s phenotype may be shared by other individuals. In this case, its phenotype fingerprint frequency increases and the forking operator will be more likely to interpret the current individual as a distribution, so that sampling happens more frequently. They evaluated their approach on four symbolic regression problems and found the forking operator reduced the number of generations to converge to high fitness and the computation time per run, as compared with standard RVCGP.

Walker et al. modified the RVCGP representation to encode transistor circuits [121]. Six floating point genes were used to specify each CGP node. Four genes defined the transistor characteristics while two specify the node inputs. A three-stage genotype to phenotype mapping was employed to obtain a valid circuit simulator netlists that could be simulated using the SPICE simulator.

Wilson et al. used a recursive variant of the real-valued representation to evolve highly effective Atari game playing agents in [125]. In addition, each node also had a parameter gene which used used by some node functions and was also used to weight the node output (as used in [52]).

3.4 Implicit context

In the Sect. 2 we saw that in CGP all nodes and inputs have an address which expresses where they are positioned in the genotype. Lones created a form of GP called enzyme GP in which the structure of a program is not given explicitly (as in most forms of GP) but is derived from connection choices made by each component of the program in a bottom-up fashion. He argued that in biological genomes the location of genes is relatively unimportant and criticized GP systems for being positionally dependent [60]. However, this point of view is at best debatable since some biological studies indicate that genes are located in positional neighbourhoods [12]. Furthermore, it has been discovered that a gene’s location in a chromosome does play a significant role in shaping how an organism’s traits vary and evolve [84].

Smith et al. [95] adopted many aspects of Enzyme GP and proposed a new representation of CGP called implicit context CGP (ICCGP). In enzyme GP and ICCGP program nodes are called enzymes. Each enzyme has a type (referred as ‘activity’), a number of binding vectors (\(\underline{b_1}\), \(\underline{b_2}\)), an output vector called a shape, \(\underline{s}\) and a function gene (see Fig. 2). The elements of the vectors are integers in the range 0 to 255 (though in some implementations real numbers between 0 and 1 are used). Type merely indicates whether the enzyme is a program input, computational node or a program output. The number of elements that a binding or shape vector have is given by the sum of the number of external program inputs, \(n_i\) and the number of possible computational functions in the function look-up table, \(n_f\).

In ICCGP nodes are called enzymes. An enzyme has two binding vectors and a shape vector. Each binding or shape vector has a number of elements equal to the sum of the number of program inputs and the number of possible node functions. The shape vector is a function of the binding vectors and a vector representing the enzyme function (Eq. 4). The binding and shape vectors determine how enzymes will bind to other enzymes, and hence, assemble the CGP graph. The computational inputs are X and Y and the enzyme has one computational output. In this example, the enzyme function is a simple if statement

As with CGP, the number of bindings (inputs) an enzyme has, is defined by the the arity of the enzyme function. Enzyme’s of type 0 have only a shape as these represent external inputs and enzyme’s of type 2 only have a single binding vector as these represent external outputs. An enzyme’s shape is defined as:

where \(\underline{f}\) is a vector of the same length as the binding vectors, but whose only non-zero element is 255 at position \(n_i+f\) where f is the enzyme’s function gene. The multiplying constants ensure that the elements of the shape vector are in the range 0 to 255. The idea behind shape is that it represents the affinity that a component has to form connections with other components.

Genes are initialised randomly. An assembly process creates the phenotype. It starts at the type 2 enzymes (i.e. outputs). These bind to other enzymes (types 0 or 1). Binding is how connections between computational nodes occur. The binding vectors define whether and how strongly an enzyme can bind to another enzyme’s shape. This is determined by the degree of matching between the binding of one enzyme with the shape of another. Input enzymes have a shape, outputs a single input binding. Connections between enzymes, inputs and outputs are determined by which bindings form the strongest match with shapes. The smallest difference between the elements of the binding vector and shape indicates the strongest match. Construction of the phenotype is similar to standard CGP. It begins with outputs binding to component or input shapes. Then these components bind and so on, until all enzyme’s are bound. At this point a CGP-like phenotype can be obtained. The genotype has \(L_{iccgp}=(n_i+n_n+n_o)(n_i+n_f)\) elements. Genotypes are mutated via point mutation and bindings and enzyme functions can be mutated at different rates.

The length of the genotype representation in ICCGP is much greater than in CGP as each enzyme has \((n_i+n_f)\) elements compared with \(n_a+1\) in CGP. For instance, suppose we choose 100 nodes or enzymes (a modest figure in CGP). Assume also that the problems we are trying to solve have many inputs (e.g. Boolean circuits with say 50 inputs and five outputs) and there are many possible node functions (say 16) then the genotype length would be \((50+100+5)(50+16) = 10{,}230\) genes compared with 305 in standard GP! As a consequence, ICCGP has only been applied with a very modest numbers of enzymes.

To date, as far as the author is aware, there has been only a single comparison of the computational efficiency of ICCGP with CGP and this was in the very limited context of the three input parity function over a range of rectangular topology parameters [8]. Thus, it remains unclear whether ICCGP offers any computational benefits. Despite this ICCGP has been extensively and successfully applied to various problems in medical diagnostics [56, 58, 59, 93, 94, 96,97,98].

3.5 Self-modifying

Self-modifying CGP introduced another type of node, a self-modifying (SM) node, one that refers to the code itself [26, 28, 29]. Such nodes modify the phenotype. For instance, a node may be a deletion operation, which deletes CGP nodes between two positions in the phenotype. Such operations imply that the phenotype is iterated in something akin to a developmental process. The process is as follows. The genotype is first duplicated to create the first phenotype. So here phenotypes mean genotype-like strings of numbers in the same format as CGP. The active self-modification nodes in the phenotype are applied one after the other to produce a new phenotype. This process defines one iteration. This is repeated until either there are no SM nodes in a phenotype or until a user-defined limit on the number of iterations is reached.

The presence of SM nodes makes it more appropriate to replace absolute node addressing as in standard CGP with relative addressing. The latter is where connection genes count back from the node position. In addition, functions were introduced that provided external inputs (via a circular register) or wrote calculations to external outputs. This was done so that SM operations could change the number of inputs and outputs. SM nodes also require parameters which dictate where they operate in the phenotype (i.e. copy nodes between n and m and insert them at position p).

SMCGP can produce a sequence of phenotypes, each of which could solve a different computational problem (e.g. produce a series of parity functions of increasing number of inputs). This allows SMCGP to be applied to classes of problems that non-developmental encodings can not solve. Indeed, it has been shown that SMCGP could find provably general solutions to certain classes of problems: parity, binary addition [26], computation of \(\pi\) and e [27]

It is interesting to note that even without the presence of SM nodes, SMCGP introduced a possible modification of the standard CGP genotype representation (relative addressing and input/output functions). This was actually used in place of CGP in a number of papers [23, 24, 55]. However, to date, there has been no quantitative comparative studies of the two CGP representations and their efficacy.

3.6 Mixed-type

Harding et al. [23] introduced a form of CGP called mixed-type CGP (MTCGP) in which the data which flowed through the CGP graphs could have different types. In MTCGP node functions inspect the types of the input values being passed to it, and determine the most suitable operation to perform. This in turn determines the output type of the function. Node functions return a default value in cases where there is no suitable operation for the types being passed to the function. In MTCGP inputs can be variable length vectors of reals or individual values. Since MTCGP can handle multiple and mixed data types it allows a much wider range of node functions to be used. They used relative addressing and input gathering and output producing functions (as in SMCGP).

MTCGP was applied to a number of classification tasks in the UCI Machine learning repository: Wisconsin breast cancer, phoneme, diabetes, and heart datasets. For each of the classification tasks, the inputs presented to the program were both the entire attribute vector as well as the individual attribute values of the vector. They used a large set of primitive functions ranging over list processing, mathematical and statistical and compared results with a suite of well-known classification methods producing highly respectable results. Often also, the evolved CGP programs were very small and readable.

Wilson et al. in their work on evolving human-readable Atari game playing programs, extended multiple data types handling node functions to include also a matrix type [125].

3.7 Recurrent

CGP is usually described as a representation of acyclic graphs. However, it is straightforward to extend it to recurrent or cyclic graphs [108, 109]. To do this, the restriction that connection genes of a node must have values less than its position is lifted, so that it can connect to itself and any other node. The genotype is decoded in a very similar way to standard CGP to produce a list of active nodes. First the active nodes need to be determined. This can be done recursively from output to inputs in the usual way for CGP except that one only adds new nodes to the active list, not ones already present (this breaks cycles). After this the following steps are carried out

- 1.

set all active nodes to output zero

- 2.

apply the next set of program inputs

- 3.

update all active nodes once from program inputs to program outputs

- 4.

read the program outputs

- 5.

repeat from 2 until all program input sets have been applied.

Of course, in step 3 one could choose to update active nodes more than once and use some function of the output values (i.e. the average). However, there would always be the issue of when to stop.

To control the numbers of recurrent versus non-recurrent connections, Turner et al. introduced a additional parameter called recurrent connection probability which controlled the likelihood of recurrent and non-recurrent connections. The performance of RCGP was compared with CGP on a number of benchmarks [108], the Artificial Ant, Sunspot prediction and a number of integer sequences [109]. In all cases RCGP outperformed CGP significantly. In addition RCGP outperformed various published methods for the Fibonacci sequence. RCGP should be regarded as “standard” CGP in that when the recurrent connection probability is zero it becomes the original form of CGP.

3.8 Iterative

In iterative CGP (ICGP) conditional cyclic loops can be represented [86]. To accomplish this a linear CGP geometry is assumed (i.e. one row and multiple columns) with levels-back set to be the number of columns. All nodes have four genes. The first gene is a node function gene, the next two are connection genes and the last gene represents a Boolean condition which determines whether a loop should be continued or terminated. The first connection of a node always refers to a node previous to the node (i.e. a standard feed-forward CGP connection). The second connection gene is either ignored or represents a cycle. The gene is ignored if it refers to a previous node or input. However, it may refer to a subsequent node (or itself) in which case it defines a loop. The nodes between the position of the calling node and the forward connection are then in a cycle. The condition gene is an address in a look-up table of possible loop exit conditions. If it exits then the next instruction is decided by the node immediately after the end of the loop, if it does not exit it executes the instruction in the next node on the right. There is a single output gene (\(O_A\)) which points to the last executed node (10). An example genotype is shown in Fig. 3.

An example of an iterative CGP genotype. There are ten nodes each with four genes. The node function is shown underlined. The second gene represents a feed-forward connection. The third gene can either refer to a previous node (in which case it is ignored) or refers to a subsequent node in which case it represents a cycle or loop. The fourth node gene (in italics) is the address of a Boolean loop exit condition. It is shown on the cycles. In this example there are two nodes that are not referred to so are ignored (shown dashed)

In the example, the nodes are executed in the following order: 1, 2, 3, 6, 7, 2, 8, 1, 9, 10. We have assumed that on the second call of node 2, condition 1 is met, so execution passes to the next node after the loop (node 8) and thence to 1. We also have assumed in this example that loop condition of node 1 (2) is also met thus causing program execution to move to the next node (9) after the loop terminates at node (8). There are some rules required to enforce valid loops:

- 1.

For any nodes inside an existing loop, branching genes can only connect to either any previous node or input (acyclic) or a node with a higher index that is inside the current loop (cyclic). For instance, in Fig. 3, the branching gene of nodes 3–6 can be valid if its value is lower than 7. However, any branching genes greater than the node label would create loops within the already existing loop starting at node 2 and ending at node 7.

- 2.

For any nodes outside an existing loop, their branching genes can only connect to a node that is outside any existing loops. For instance, because the nodes 2–7 are already in a loop, the branching gene of node 1 can only point to either the input or nodes 8, 9, or 10.

Ryser-Welch applied iterative CGP to three classes of problems: travelling salesman, mimicry and nurse-rostering [85, 86]. For these problems, the node operations were very sophisticated and applied existing heuristic algorithms rather than merely a mathematical function of input data. In this way it was shown that new human-readable, standalone problem-solving algorithms could be produced.

3.9 Differentiable CGP

Genetic programming is normally considered as a derivative-free method, however in an impressive paper Izzo et al. [33] show how it is possible to obtain a complete representation of the differential properties of a program encoded by a genetic programming expression. They applied this to CGP creating a new form of it called called differentiable CGP (dCGP). In dCGP all connections have weights (like artificial neural networks) and node functions are represented as truncated Taylor expansions of a given order. They show that this allows arithmetic operators +, −, *, / to be extended to operate on truncated Taylor expansions. For instance, using this idea they show that a CGP encoded function \(O_0 =sigmoid(yz+1)/x\) can be written also as second order Taylor expansion of differentials,

The dCGP approach means that not only can the output function of a CGP program be computed at a given point but also all its derivatives up to a given order. Their approach makes it possible to apply concepts such a back propagation to CGP encoded programs.

They evaluate the dCGP technique on a suite of varied symbolic regression problems in which \(\pi\) and e appear. Newton’s method was used to back-propagate the errors on ephemeral constants in evolved expressions to determine the constants in the Taylor expansions. The fitness is the final error. They showed that they could evolve the target formulae exactly (with all weights set to 1). However, they note a drawback to this method: the number of ephemeral constants used as additional terminals needs to be pre-determined. However, by associating weights to every edge of the graph, the differential properties of the error with respect to weights can be determined. This allows symbolic regression to be carried out with no extra terminal inputs but the values for all the weights has to be determined. Since there can be many weights, the dCGP method selects random weights and then iteratively back-propagates the errors. They used a \(1+4\) evolutionary strategy to evolve the dCGP expression and interestingly, each offspring, \(i = 1\) to 4 received i mutations. They also showed that dCGP could be applied to solve partial, ordinary differential equations and to search for expressions that are prime integrals of sets of differential equations

3.10 Graph programming

Recently, an interesting new method of evolving graphs has been proposed. It is called evolving graphs by graph programming (EGGP) [7]. This method evolves graphs directly rather than using linear or grid-based genotypes. Using a probabilistic extension to the graph programming language, GP2, Atkinson et al. are able to evolve graphs. They show firstly that any CGP individual can be represented as an EGGP individual, whereas the converse may not always hold when the number of rows in a CGP individual is greater than one. Secondly they note that some feed-forward preserving mutations in EGGP are not possible in the standard form of CGP. Figure 4 shows how an allowable mutation in EGGP is not allowed in CGP. The EGGP approach is shown to significantly out-perform CGP on a collection of circuit benchmarks (particularly the harder benchmarks).

However, it should be noted that since recurrent CGP allows both feedforward and feedback connections the mutations which are illegal in standard CGP but possible in EGGP are also legal in recurrent GP. It may also be possible to introduce a new mutation operator to make these currently illegal mutations possible in standard CGP.

Taken from [7] (Color figure online)

Example of illegal CGP mutation but allowable graph transformation of a Boolean function. An EGGP mutation which changes a connection (red) from node 2 to node 1 is replaced with a connection (blue) directed to node 3. This mutation produces a valid circuit but is impossible in standard CGP as mutations have values less than the node position (to guarantee having a feedforward) property

3.11 Positional CGP

Positional CGP [126] is an interesting new real-valued representation of CGP in which inputs, and nodes have evolvable positions. All node genes are floating point numbers in range [0, 1], which correspond to the connections of each node n, \(x_n\) and \(y_n\), the node function \(f_n\), and a parameter gene \(c_n\) which can be used to weight the output of nodes or act as a parameter of a function. In addition, every node has a position gene \(p_n\) which determines the position of the node. Connections are formed by converting the connection genes \(x_n\) and \(y_n\) to coordinates by multiplying the genes by the node position, \(p_n\) and then “snapping” these connections to the nearest node. Inputs also have evolvable position genes, \(i_n\) but are constrained to the interval \([-1, 0]\). Output genes do not have positions and take values in the interval [0, 1]. A small example of the PCGP representation is shown in Fig. 5.

Taken from [126]

Example of PCGP genotype. A PCGP genome (a), including input \(i_n\) and positional \(p_n\) genes. These are translated to input and node positions (b) and connection positions “snap” to the nearest node. As in CGP, a resultant graph (c) and output program (d) are then extracted.

Since node positions are evolved, it is highly unlikely that two will occupy the same position, even between different genotypes. Furthermore, over evolution, nodes which are connected can have positional genes and connection genes which are highly related. Finally, a node’s connection positions depend only on its position, which is in its genes, rather than the node’s placement in the genotype. This allows node genes to be exportable; the same genes in a different individual will form connections in the same place. If multiple genes are exported together, entire sections of the graph can be migrated between individuals. In PCGP, nodes can be added or removed from a genome without disturbing the existing connection scheme, unlike in CGP, where a node addition and deletion causes a shift in all downstream node positions. Experiments were carried out comparing PCGP with CGP on nine benchmarks (three each of classification, symbolic regression and reinforcement learning). Results showed PCGP to be superior to RVCGP on classification and regression benchmarks but inferior on reinforcement problems.

3.12 Genotype-phenotype complexity

It is important to realise that translating a CGP genotype to a phenotype is done once per genotype. Evaluating the fitness of a genotype is expected to far outweigh this decoding time. However, on problems where fitness evaluation is very fast, the decoding time for different CGP variants may be significant.

The decoding of genotype to phenotype in standard and PDGP is straightforward. One begins at the output nodes and recursively activates nodes that are required until inputs are encountered. Along the way, a list of active nodes and their connections are stored for later use (i.e. the phenotype). This decoding step only needs to be carried out once per genotype. Data is only presented and fitness calculated using the stored phenotype. In modular CGP, the decoding is more complex as the phenotype is a collection of CGP programs consisting of the main program and the CGP code of all the modules. When modules are captured, the captured code is re-written so that module code refers to its inputs in the same way as standard CGP refers to program inputs. Also when modules are destroyed, the modular code has to be translated back into standard CGP format. This “book-keeping” increases the time complexity of the decoding step. A large number of modules would increase the time taken for the decoding step. However, it is expected that for problems where modules are beneficial the evolution time would be shortened. The decoding step of RVCGP is little different from standard CGP, one merely requires an initial pass to convert the real-valued representation into standard CGP. In positional CGP one also needs to identify which nodes are located closest to the positions of the inputs to the nodes, this is an extra step so will increase genotype-phenotype decoding time. Self-modifying CGP requires self-modifying operations to be applied to the old phenotype to generate the new one. This obviously adds to the genotype-phenotype decoding time. However, by using a limited list of self-modifying operations one can control this complexity to acceptable limits. The decoding step in recurrent and mixed-type CGP is little different to standard CGP unless in the former case one recurses over the genotype multiple times (which is not done in practice, see Sect. 3.7). The complexity of the genotype-phenotype mapping in iterative CGP is slightly more complex due to branching conditions. Differentiable CGP has a genotype phenotype decoding that is little different from CGP, however gradient descent of weights would certainly increase the time required for fitness evaluation. Implicit context CGP as we have seen requires much larger genotype sizes and also undergoes matching operations to find out which components bind to each other, so genotype-phenotype mapping time would be longer than standard CGP.

4 Mutation

In standard CGP mutation either point or probabilistic mutation can be used. In the former, the user decides the percentage of the total number of genes of a parent genotype to be mutated to create an offspring. In probabilistic mutation every gene is considered for mutation according to a user-defined probability. Point mutation is easier to implement and more efficient than using a probabilistic mutation as one does not need to linearly walk through the genes to decide which ones to mutate. However, choosing a discrete number of genes to mutate means that only certain mutation rates can be chosen. Probabilistic mutation is continuous and very low mutation probabilities can be chosen and investigated. It is also sometimes useful to use different mutation rates for different classes of genes (connection, function, output). However, the utility of this has not been investigated in detail.

One of the very interesting aspects of the CGP representation is that a large variety of phenotypes can be found by applying mutation. Since many genes in CGP are redundant, often mutations occur only in the redundant regions, which means that the mutated genotype has the same phenotype as its parent. In such cases one does not need to carry out a fitness evaluation (see below). However, other mutations might change an output gene. In this case the program output comes from a formerly redundant node, which in turn may connect to previously redundant genes. This can cause large changes in the phenotype.

Goldman and Punch compared several mutation strategies on a range of circuit benchmarks [19]: Normal, Skip, Accumulate and Single. Normal is just standard mutation (probabilistic) with no check for offspring having identical genotypes to their parent. Skip checks offspring to see if the phenotype is identical to the parent (by comparing active genes) and if so returns the fitness of the parent. Accumulate continues to mutate an offspring until some of its active genes are different from the parent. Single mutates the offspring until one active gene is changed. They found that the performance of Skip and Accumulate were fairly insensitive to mutation rate whereas Normal’s performance was very sensitive to mutation probability. Overall, the performance of Single which has no mutation parameter was close to the best performance of the other strategies.

There is a considerable length bias in standard CGP in favour of small phenotypes [18]. In addition there is a strong positional bias in CGP in that it is much more likely that nodes on the left side of the genotype (i.e. close to the inputs) will be active. This is simply because the inputs of any node on the right of a given node are allowed to connect to it. For instance the first node can be connected to by the input of any node on its right. While the penultimate node on the right can only be connected to by either the last node or an external output. These biases mean that the location of inactive nodes in the genotype are not distributed evenly and nodes toward the right (towards the outputs) are likely to have many inactive nodes between them.

In a comprehensive and detailed study of CGP, Goldman and Punch investigated two strategies for compensating for positional bias [20]: Reorder and DAG. In the former, prior to mutation of a parent, the position of nodes in the parent are shuffled without changing the semantics of the phenotype. Reorder is used once each generation to shuffle the nodes of the parent’s genome. As the shuffling does not semantically change the parent, it does not require re-evaluation. Reorder causes active nodes to be, on average, more likely to be located halfway between the input and output ends of the genotype. The DAG strategy allows connections to be feedforward or feedback (as long as no cycle is created) and removes the positional bias in CGP. In an extensive series of experiments they concluded that Reorder had the best performance overall. Of course, this should also be combined with Skip. Further, when Reorder (or DAG) was not used, the number of nodes never used during evolution was between 43% or higher (depending on benchmark problem)! They also found that large numbers of nodes compute a constant value irrespective of the program input. They suggested also that the advantage of large amounts of inactive nodes is purely that they provide mutation with random nodes for exploratory purposes (i.e. useful previously found sub-structures are not stored in inactive code). This accords with the author’s unpublished finding that randomising inactive genes prior to mutation appears not to cause any performance impact. It would be interesting to reduce the rate at which inactive nodes were randomised by mutation to see if allowing memory of past useful structures in inactive code gives some advantages.

It would also be interesting to investigate how mutation could be defined so that the chance of any node being connected would be equal for all nodes. This would require mutation to be dependent on position so that the connection gene is much more likely to be changed to connect to nodes that are nearer to it. A simple way to achieve this would be to record the number of mutations that have occurred so far at each node location during an entire evolutionary run. A new mutation could be restricted to choosing to mutate only the least mutated node. This would ensure that any node was equally likely to be connected to.

Kalkreuth [34] introduced an interesting new pair of mutation operators into standard CGP: inactive node activation and active node deactivation. He referred to these as “insertion” and “deletion” respectively. An insertion mutation chooses an inactive node and changes one or more connection genes in the genotype to make it active. Conversely, deletion alters connections to an active node so that the node becomes inactive. He examined the impact of the new mutation operators (operating together with the standard point mutation) on three Boolean benchmarks and a suite of symbolic regression problems. On all problems, the two operators gave improved performance. More detailed studies are required to confirm and strengthen these findings.

Vašíček and Sekanina made a breakthrough in the optimisation of digital circuits by showing how circuits with many inputs could be optimised using CGP by starting with a state-of-the-art logically correct reference circuit and employing a SAT solver algorithm to decide if an evolved circuit was logically correct. If it is incorrect it receives a fitness of zero, if correct the fitness is the difference between the genotype size in nodes and the number of gates utilised. This technique has allowed the optimisation of industrial-sized digital circuits [120].

In this technique, Vašíček [120] discovered the surprising result that there is no need to utilise inactive nodes in CGP when optimising fully functional digital circuits! He defined a “zero neutrality” search process by ensuring that mutation respects the following conditions: (a) inactive gates are unchanged (b) active gates (or primary output) can not connect to an inactive gates and finally, (c) the second input connection of single-input gates are not mutated. He compared the performance of this mutation against standard CGP on a suite of 100 industrial-sized circuits and found that the performance of the two were statistically indistinguishable. This needs more investigation on a wider set of problems.

The genotypes in the initial population were exactly the size of the circuits synthesised by the ABC algorithm.Footnote 2 In other words there was no redundancy in the initial circuits and redundancy could only arise by some gates not being required (i.e. by successful optimisation). It would be interesting to see what the results would have if the initial genotype had been larger than that produced by ABC by adding inactive gates. Vassilev et al. [118] found that smaller three-bit multipliers were found by encoding a working multiplier with a small amount of redundancy. This is also supported in the work of Gajda and Sekanina [16] who discovered while optimising digital circuits that in long evolutionary runs strictly selecting circuits which had fewer gates did not produce as small circuits as merely choosing circuits that were functionally correct (that is with lower fitness). They showed that the frequency of occurrence of correct circuits was greater using the weaker fitness criterion. This highlights the importance of neutral drift for exploration and the importance of maintaining redundancy.

5 Crossover

In standard CGP crossover is not used. In his original paper on CGP [65], Miller found that crossover appeared to have little effect on the efficiency of CGP and for the most part, subsequent work ignored crossover.

In real-valued CGP Clegg et al. [10] used a simple method of real-valued genotype crossover called “flat” crossover [82] which generated two offspring, \(o_i\) from two parent genotypes, \(p_i\) using Eq. 6, where \(0<r_i<1\) is randomly generated and \(i=0,1\)

Kalkreuth et al. [36] investigated RVCGP using adaptive crossover, mutation and selection. Adaptation of these operators is based on maximising the diversity of phenotypes in the population. They compared their technique with Clegg’s RVCGP on a number of symbolic regression problems showing that the new approach improved performance.

Walker et al. devised a multi-chromosome representation which could be applied to special classes of problems [124]. They looked at seven multiple output digital circuit problems and instead of allocating as many output genes as circuit outputs they divided the genotype into as many chromosomes as the number of outputs. For the problems chosen (all digital circuits) the fitness of each chromosomes could be assessed independently. The parent genotype in the \(1+\lambda\) EA was constructed by choosing each of the fittest chromosomes. For all benchmarks this produced spectacularly better results than the one chromosome version. Indeed for one problem it was possible to evolve a solution approximately 392 faster than using a single chromosome with the same total number of nodes.

However, most computational problems do not have the property that multiple outputs can be evaluated for fitness independently. It would be interesting to investigate a multi-chromosome form of CGP in such cases. One way this could be done would be to allow multiple chromosomes each providing a single output and also having an additional “coordinator”chromosome which uses the outputs of the other chromosomes as inputs. Fitness would be determined from the coordinator chromosome only. This would allow parent genotypes to produce offspring by crossover of non-coordinator chromosomes. Indeed, the coordinator chromosome would be free to utilise any or all of the non-coordinator chromosomes.

Slaný and Sekanina examined how various crossover operators and standard mutation affected the smoothness and ruggedness of the series of fitness values of the best population member in each generation. They examined this in the domain of CGP applied to image filter design. They found that either point mutation (with \(\lambda = 7\)) or single-point crossover where only one offspring of the crossover operation is mutated and included into the new population, generated the smoothest fitness landscape.

In their work on new techniques for acquiring modules in modular CGP (see Sect. 3.2), Kaufmann and Platzner also investigated a cone-based crossover operator to be used with a genetic algorithm (rather than \(1+\lambda\)-ES). This generates a recombined chromosome by transplanting a cone of a donor chromosome into a clone of a recipient chromosome. They investigated the utility of the crossover operator using various population sizes using a genetic algorithm. They found smaller population sizes worked best in the new approach but it only performed better than the original modular CGP technique on two of the six benchmark problems (two and three-bit multipliers).

Kalkreuth et al. have recently investigated sub-graph crossover in CGP [35]. Sub-graph crossover is like single-point crossover except that the active nodes both sides of the crossover point are preserved. The crossover point is always chosen so that it lies between nodes. This idea of sub-graph crossover is that it should reduce the disruption caused by single-point crossover in standard CGP and truly recombine meaningful sub-graphs. If after single-point crossover active genes would change then the connection genes on the right of the crossover point are randomly re-generated to preserve the active genes on the left of the crossover point. They used a standard genetic algorithm with population size 50 and tournament selection. They evaluated the utility of the new crossover operator for a range of crossover rates on a suite of benchmark problems in circuit design, symbolic regression and image filter design. Often high crossover rates were beneficial and in all cases a nonzero crossover rate performed better than without crossover. However, they did not compare their results with standard CGP so it still remains unclear whether crossover has utility over purely mutational CGP.

Kalkreuth and Husa have recently proposed block crossover [32]. This is defined using the one-dimensional representation of CGP. First before carrying out block crossover blocks must be identified in two parent genotypes. Blocks are groups of nodes that meet the following criteria: (1) The block contains a desired number of nodes, (2) All nodes in the block are directly linked through their inputs or outputs, (3) All nodes in the block are part of the genotype’s active path. Block crossover then randomly selects one block from each genotype and swaps them. The position of the nodes transferred as part of the block may change inside the new genotype. However, their mutual links are preserved and the function performed by the block stays the same. After block crossover, point mutation is applied. They conducted parameter sweeps with standard CGP (\(1+\lambda\)) versus genetic algorithms using block crossover on a suite of Boolean functions and symbolic regression problems. They found that there was no single set of crossover parameters that worked best over the problems. They also found that the best value of \(\lambda\) varied with problem. Although it is possible for crossover operators to outperform the standard \(1+\lambda\) strategy, if both methods have their parameters fine-tuned, the \(1+\lambda\) strategy usually remains as the overall best strategy.

6 Search algorithms

6.1 Evolutionary strategy and hybrid algorithms

Although the most usual search algorithm used in CGP is \(\mu +\lambda\) evolutionary strategy with \(\mu =1\) and \(\lambda =4\), sometimes larger values of \(\lambda\) have been chosen. For instance \(\lambda =14\) [16] was used in digital circuit optimisation and \(\lambda =8\) in the evolution of image filters [88].

Recently Milano et al. [64] examined the robustness to mutation and the evolvability of CGP genotypes encoding digital circuits. Mutational robustness is capability of a system to preserve its functionality after mutations. Evolvability is the likelihood of producing adaptive heritable phenotypic variations as a result of mutation (we are not considering crossover). Their experiments used a \(20\times 20\) array of two input logic gates (AND, OR, NAND, NOR) and the aim was to find a functionally correct even-5 parity function ( a moderately difficult task).

They studied two \(\mu +\lambda\) evolutionary strategies. The first uses \(\mu +1\) and the second is \(1+\lambda\). In \(\mu +1\) each of \(\mu\) parent are mutated to produce a single offspring, then the best \(\mu\) individuals from the \(2\mu\) genotypes are chosen as the new population. Having \(\mu > 1\) allows the population to have a greater genetic diversity, since multiple mutational searches take place around the multiple parents. However, they observe that in this method the offspring of individuals that are more robust to mutations have more chance to be selected than the offspring of individuals that are less robust. They show that this drives evolution to produce small phenotypes and it has markedly worse performance than \(1+\lambda\).

They proposed a new algorithm which they call a Parallel Stochastic Hill Climber (PSHC) is a combination of \(\mu +1\) and \(1+1\). The aim is to preserve population diversity while maintaining strong selection pressure. In this algorithm each of the \(\mu\) parents, \(p_i\) is adapted using a \(1+1\) evolutionary strategy for a certain number of steps. The best candidate solution obtained after this phase, \(\acute{p_i}\) is then used to replace \(p_i\) and, also with a certain low probability, the worst individual of the population. Additionally, they allow a certain degree of stochasticity in the \(1+1\) phase by adding a random variation to the fitness, however at the end of this phase the decision whether or not to replace \(p_i\) by \(\acute{p_i}\) is on the basis of the true fitness.

They found that performance of the PSHC algorithm is statistically significantly better than the \(1+\lambda\) algorithm.

Hybrid search algorithms have also received attention in the work of Kaufmann and Platzner [39]. They investigated in particular hybrids of \(1+\lambda\) (as a form of local search) with either of the multi-objective algorithms, NSGAII [13] and SPEA [131]. They found that for some multi-objective benchmarks and for all digital circuit benchmarks the hybrid algorithms outperform NSGAII and SPEA.

6.2 Simulated annealing and unusual \(\mu +\lambda\) evolutionary strategies

Kaufmann et al. recently conducted a large and detailed empirical study of the performance of CGP various \(\mu +\lambda\) with a wide range of values of \(\mu\) and \(\lambda\) and also looked at simulated annealing (SA) [37]. They also used Iterated Race for Automatic Algorithm Configuration (iRace) [61] to optimise CGP parameters, \(n_c\), \(n_r\), \(\mu\), \(\lambda\) and the mutation rate. They found that for harder digital circuit problems (e.g. 3-bit multiplier, and even-parity with higher than six inputs) SA was the best performing search algorithm. SA worked best with unusual topologies (e.g. 300 columns and 10 rows, or 150 columns and 8 rows). They also examined the performance of various \(\mu +\lambda\) strategies on a suite of symbolic regression problems where they found that large values of \(\mu\) and \(\lambda\) performed very well (e.g. \(\mu =22\), \(\lambda =4096\)). However, unfortunately, they did not choose a fixed maximum number of nodes (\(n_n=k\), where k is a constant). \(n_n\) is an extremely important parameter for CGP. It is easy to see why. Choosing \(n_n\) to be small constrains neutral drift markedly and it is well understood that neutral drift in CGP is an extremely important search mechanism (Sect. 2.3).

6.3 Balanced CGP

Yazdani and Shanbehzadeh [127] pointed out that CGP does not have any possibility of sharing information among solutions. To achieve this they suggested incorporating features from biogeography-based optimization (BBO) [92] and opposition-based learning (OBL) [100]. They applied BBO’s migration operator which allows information sharing between individuals. They also introduced a new mutation operator inspired by OBL. They evaluated their approach on five symbolic regression problems. The found that the proposed BCGP method outperforms traditional CGP in terms of accuracy and the convergence speed.

6.4 Coevolution

Šikulová et al. [14, 90] developed a combination of fitness prediction with coevolution in CGP to reduce the number of expensive full fitness evaluations. The method replaces some of the objective fitness evaluations with an alternative fitness calculated using a fitness predictor. The fitness predictors are coevolved in a second population in order to provide accurate fitness predictions. The population of solutions is evaluated with the current best fitness predictor while the population of fitness predictors evolves to minimize the difference between the true fitness and the predicted fitness when measured using the current population of solutions.

Fitness predictors are represented as an adapted variable size array of pointers to elements of a subset of the training data. The population of candidate programs are evolved with the usual \(1+\lambda\) evolutionary strategy and the population of fitness predictors are evolved with a simple genetic algorithm. They also use two archives one of fitness trainers and one containing the best evolved fitness predictors. The archive of fitness trainers is used by the predictor population for the evaluation of evolved fitness predictors. It contains copies of selected candidate programs obtained during the evolution. The fitness predictor from the other archive is used to evaluate candidate programs. The overall methodology is depicted in Fig. 6.

Coevolution of populations of CGP programs (a) and fitness predictors (b) [14]. Some of the objective fitness evaluations are replaced with an alternative fitness calculated using a fitness predictor. The population of solutions is evaluated with the current best fitness predictor. The population of fitness predictors evolves to minimize the difference between the true fitness and the predicted fitness. An archive of fitness trainers (c) is used by the predictor population to evaluate evolved fitness predictors. Fitness predictors are represented using variable size array of pointers to elements of a subset of the training data (d)

Drahosova et al. [14] evaluated their method on suites of varied symbolic regression problems and image filter design problems. They showed that the coevolutionary approach outperforms standard CGP in terms of CPU time required to converge.

7 Acceleration of CGP

Vašíček and Slaný developed an efficient acceleration technique designed to speedup the evaluation of candidate solutions in CGP. The method translates a CGP phenotype to machine code that is consequently executed. An attractive feature of the method is that the translation mechanism requires only a marginal knowledge of target CPU instruction set. They exemplified the technique by applying it to a symbolic regression problem. It was shown that for a cost of small changes in a common CGP implementation, a significant speedup can be obtained even on a common desktop CPU.

Vašíček and Sekanina have also accelerated CGP for circuits, symbolic regression [113] and image filter design [114]. In the former, they designed and built an application-specific virtual reconfigurable circuit (VRC) and fitness unit, obtaining speedups of 40 times optimized software implementations. In image filter design CGP was implemented on a single FPGA and gave a significant speedup (170) in comparison with a software implementation.

Coevolutionary CGP (Sect. 6.4) has also been implemented in dedicated hardware (FPGA) achieving 58 times speedups over highly optimized software implementations [31] .

Cartesian genetic programming (CGP) was one of the first genetic programming representations to take advantage of the general purpose computing capabilities of modern graphics processing units (GPUs) [22]. The implementation of CGP on GPUs was benchmarked on regression and Boolean problems and when compared to a naive, CPU-based C# implementation, was able to execute evolved programs hundreds of times faster. Harding et al. also implemented CGP on clusters of GPUs. This made it possible to evaluate the population in parallel, which in turn increased the speed at which the population could be evaluated [25].

8 Applications

Here we highlight some of the major application areas of CGP which are likely to continue to grow in the future.

Vašíček gives a thoughtful and insightful analysis concerning some of the problems that remain in moving CGP digital synthesis to industry [112]. He notes that when using CGP to optimise logically correct circuits a large number of new candidate solutions need to be generated and evaluated before a new candidate is found with the same or better fitness (about 180 on average). He attributes this to the fact that random point mutation is very inefficient and that some form of mutation which is invested with domain knowledge could be much more efficient. Perhaps one can define mutation operations that replace small sub-structures in a phenotype with alternative but logically equivalent sub-structures?

In the synthesis of approximate circuits and programs Vašíček and Sekanina opened up an area of research which is rich with research opportunities [89, 116]. In many application areas (e.g. digital filters, artificial neural network) one can trade-off accuracy with power consumption, speed, or other properties of digital circuits. However, to evolve large-scale approximate circuits with CGP requires an efficient method to determine the approximation error. They solved this using an equivalence checking algorithm operating over binary decision diagrams (BDD) [117]. Recent work has used CGP successfully to synthesize approximate circuits with formal guarantees of approximation error [9]. Impressively, they have been able to synthesise aproximate 32-bit multipliers and 128-bit adders.

CGP has been successfully used in the design of various kinds of image filters [24, 74, 88]. Sekanina pioneered the use of CGP for image filter design [87] and showed that image filters could be automatically designed that were competitive with conventionally designed filters both in image quality and hardware cost. Harding demonstrated the advantages of employing extensive domain knowledge in CGP. He used a large function set (over 50) which included many Open Computer Vision operations.Footnote 3 Using an island-based parallel implementation of CGP he examined its performance on various image related problems: noise reduction, recognising cell mitosisFootnote 4 and object recognition for the iCub Humanoid Robot. He obtained excellent results on all three problems and in particular the evolved iCub robot image filters were often extremely small and could track moving objects in live video stream under a range of lighting conditions. CGP using OpenCV is now being used commercially to detect faults in machined metal.Footnote 5

Artificial neural networks can be easily encoded in CGP by allowing all connections to have weights [46, 48, 49]. These are referred to as CGPANNs. Evolving ANNs with CGP allows the benefits of the CGP representation and techniques to be carried over to the evolution of neural networks (e.g. heterogeneous neural activation functions [107]). Also, it has been shown that evolving ANNs with CGP produced networks whose performance is much less sensitive to topology choices than fixed topology evolved ANNs [104]. Comparisons of CGPANNs with other neuroevolutionary methods on reinforcement learning tasks consistently show them to be competitive with many other neuroevolutionary methods [103, 111]. Recurrent CGPANNs also show promise in time-series prediction [111]. It should be noted that, in his PhD thesis, Turner [102] used R package implementationsFootnote 6 of many machine learning techniques and compared their performance with CGPANNs on classification problems. This appeared to show that although CGPANNs performed reasonably well they were not as good as many other methods. There is an oddity here, as on one particular dataset (breast cancer) CGPANNs were compared with published results for 31 other machine learning papers [103] and CGPANNs proved to give better results than 21 other techniques. It is not clear why CGPANNs appear not to perform so well on classification problems. Clearly, this issue needs further investigation.

Various other types of ANNs have been evolved using CGP including convolutional ANNs [99] and wavelet ANNs [47, 50]. There is now a growing literature on CGPANNs and they have been applied to many other applications including financial [130],medical [1,2,3,4], client prediction [5], load forecasting [41, 42, 45], internet traffic estimation [44] and signal reconstruction [51].

Other promising areas of application of CGP are in multi-step forecasting [15], and cryptography [75, 76]. Also further discussion of CGP applications is presented in [62].

9 Comprehensibility of CGP solutions

CGP phenotypes are often very small and interpretable, this is partly connected to the bias that CGP has towards small programs (see discussion in Sect. 4). This often means that CGP programs can be understood and offer insights and understanding into problem features and aspects that can be interesting and useful at the application level. This was seen in the small size of the object recognition filters (see Sect. 8), data classifiers [23], the understandable successful Atari game-playing strategies found by Wilson et al. [125] and the usefulness of CGP interpretable results to medical practitioners using the results of ICCGP [56, 58, 59]. CGP has also been used to create small readable data filters for drug discovery [17] and understandable intrusion detection programs [6]. This white-box property of CGP can be highly valuable.

10 Comparisons of CGP methods with other techniques

CGP has been applied to many application areas and benchmark problems. In Table 1, we summarize the comparative results published for CGP variants with other methods. The fourth column gives evidence for the high performance of CGP techniques. This is either by the ratio of minimum computational effort of tree-based GP (TGP) to CGP (above 1 means CGP is superior), or performance ranking compared with other machine learning techniques, or by type of medal received at the annual human competitive workshop at the GECCO conference.Footnote 7 CGP clearly excels in many domains but particularly in optimised circuits, image processing, classification, reinforcement-learning, time-series prediction and sequence induction.

11 Software

CGP implementations are available in a number of languages: C, C++, Java, Matlab, Python, Julia.Footnote 8 The three most extensive packages are described below.

A cross-platform, open source, extensive and extensible software libraryFootnote 9 has been written in C by Andrew Turner [106]. The package includes standard and recurrent CGP including Artificial Neural Networks. It has been designed to be simple to use and adapt. It defines a well documented CGP Application Programming Interface (API). This means that the user does not need to understand or edit the underlying implementation in order to use the CGP library. Also users can benefit from backwards compatible updates to the library. A compiled library has the advantage that it can be used natively by the C and C++ programming languages but also imported into other languages including Python. The CGP library can also be compiled for a wide range of operating systems as it only depend upon standard C libraries.

Differentiable CGP [33] is available in C++ and Python.Footnote 10 It includes examples and tutorials on a number of problem types.

Recently, a freely downloadable flexible toolbox called CGP4MatlabFootnote 11 has been developed [73] that allows CGP to be run within MATLAB. The toolbox is particularly suited to signal and image processing. As a demonstration Miragaia et al. used the toolbox for pitch estimation and obtained results comparable with the state-of-the-art.

12 Open questions and issues

We discuss a number of ideas, questions and open issues that are worthy of further investigation. It is very important when new algorithms and methods are proposed that they are evaluated on suites of different computational problems using benchmarks recommended by the wider research community. At present although the many operators and algorithmic variants look promising and interesting, further investigation is required to establish whether they have general advantages. Conducting experiments on the basis of both a fixed number of fitness evaluations and a fixed budget of active nodes processed appears offer promise for fair comparisons with different parameters or methods. Indeed, this could be adopted generally in the GP community.

12.1 Modularity