Abstract

Context

Model driven development envisages the use of model transformations to evolve models. Model transformation languages, developed for this task, are touted as having many benefits over general purpose programming languages. However, a large number of these claims have not yet been substantiated. They are also made without the context necessary to critically assess their merit or build meaningful empirical studies around them.

Objective

The objective of our work is to elicit the reasoning, influences and background knowledge that lead people to assume benefits or drawbacks of model transformation languages.

Method

We conducted a large-scale interview study involving 56 participants from research and industry. Interviewees were presented with claims about model transformation languages and were asked to provide reasons for their assessment thereof. We qualitatively analysed the responses to find factors that influence the properties of model transformation languages as well as explanations as to how exactly they do so.

Results

Our interviews show that general purpose expressiveness of GPLs, domain specific capabilities of MTLs as well as tooling all have strong influences on how people view properties of model transformation languages. Moreover, the Choice of MTL, the Use Case for which a transformation should be developed as well as the Skills of involved stakeholders have a moderating effect on the influences, by changing the context to consider.

Conclusion

There is a broad body of experience that suggests positive and negative influences for properties of MTLs. Our data suggests that much needs to be done in order to convey the viability of model transformation languages. Efforts to provide more empirical substance need to be undertaken, and lacklustre language capabilities and tooling need to be improved upon. We suggest several approaches for this that can be based on the results of the presented study.

1 Introduction

Model transformations are at the heart of model-driven engineering (MDE) (Sendall and Kozaczynski 2003; Metzger 2005). They provide a way to consistently and automatically derive a multitude of artefacts such as source code, simulation inputs or different views from system models (Schmidt 2006). Model transformations also make it possible to analyse system aspects on the basis of models (Schmidt 2006) and can provide interoperability between different modelling languages, e.g. architecture description languages like those described by Malavolta et al. (2010). Since the emergence of the MDE paradigm at the beginning of the century, numerous dedicated model transformation languages (MTLs) have been developed to support engineers in their endeavours (Jouault et al. 2006; Arendt et al. 2010; OMG 2016b). Their appeal is driven by the promise of many advantages such as increased productivity, comprehensibility and domain specificity associated with using domain specific languages (Hermans et al. 2009; Johannes et al. 2009).

A recent literature study of ours revealed that, while a large number of such advantages and also disadvantages are claimed in literature, there exist only a few studies investigating to what extent these claims actually hold (Götz et al. 2021). The study presents 15 properties of MTLs for which literature claims advantages or disadvantages. In this context, a claimed positive effect on one of the properties means an advantage whereas a negative influence means a disadvantage. The properties identified in the study are: Analysability, Comprehensibility, Conciseness, Debugging, Ease of Writing (a transformation), Expressiveness, Extendability, (being) Just Better, Learnability, Performance, Productivity, Reuse & Maintainability, Tool Support, Semantics & Verification and Versatility.

Our study also revealed that most claims in literature are made broadly and without much explanation as to where the claim originates from (Götz et al. 2021). Claims such as “Model transformation languages make it easy to work with models.” (Liepiņš 2012), “Declarative MTLs increase programmer productivity” (Lawley and Raymond 2007) or “Model transformation languages are more concise” (Hinkel and Goldschmidt 2019) illustrate this. We assume that authors make such claims while having certain context and background in mind, but choose to omit it for unspecified reasons. Likely reasons for omitting the context are that authors believe it not to be worth mentioning or want to save space, which is often scarce in publications.

Regardless of the concrete reasons, a result of this practice is a lack of cause and effect relations in the context of model transformation languages that explain both why and when certain advantages or disadvantages hold. Claims are thus easily dismissed based on anecdotal evidence. Furthermore, setting up proper evaluation is also difficult because the claims do not provide the necessary background to do so.

To close this gap, we executed a large-scale empirical study using semi-structured interviews. It involved a total of 56 researchers and practitioners in the field of model transformations. The goal of our study was to compile a comprehensive list of influences on properties of model transformation languages guided by the following research questions:

RQ 1: What are the factors that influence properties of model transformation languages?

RQ 2: How do the identified factors influence MTL properties?

To concentrate our efforts and best utilize all available resources, we decided to focus on 6 of the 15 properties of model transformation languages that we identified in the preceding structured literature review (SLR) (Götz et al. 2021). The 6 properties investigated in this study are: Comprehensibility, Ease of Writing, (practical) Expressiveness, Productivity, Reuse & Maintainability and Tool Support. We have chosen these six because they all play a major role in providing reasons for the adoption of model transformation languages.

Interviewees were presented with a number of claims about MTLs from literature and asked to reveal their views on the matter, as well as assumptions and reasons that lead them to agree or disagree with the presented claims. We qualitatively analysed the interviews to understand the participants' perceived influence factors and reasons for the advantages or disadvantages stated in the claims. The extracted data was then analysed to find commonalities between interviewees. This was done for single claims as well as for overarching factors and reasons that influence a variety of aspects of MTLs.

We present a comprehensive explanation of factors that, according to experts, play an essential role in the discussion of advantages and disadvantages of model transformation languages for the investigated properties. This is accompanied by a detailed exposition of how factors are relevant for the properties given above. Lastly, we discuss the most salient factors and derive actionable results for the community and further research.

As the first study of this type, we make the following contributions:

1. A comprehensive categorisation and listing of factors resulting in advantages or disadvantages of MTLs in the 6 properties studied.

2. A detailed description of why and how each identified factor exerts an influence on different properties.

3. Suggestions for how the presented information can be utilised to empirically investigate MTL properties.

4. Procedural proposals for improving current model transformation languages based on the presented data.

The results of our study show that there is a large number of factors that influence properties of model transformation languages. There is also a number of factors on which this influence depends, i.e. factors that have a moderating effect on the influence of other factors. These factors provide a solid basis that allows further studies to be conducted with more focus. They also enable precise decisions on where improvements and adjustments in or for model transformation languages can be made.

The remainder of this paper is structured as follows: Section 2 introduces model-driven engineering and model transformation languages, the context in which our study is situated. Section 3 details our methodology for preparing and conducting the interviews and the procedures used to analyse the data accumulated through the interviews. Afterwards, Section 4 gives an overview of the demographic data of our interview participants, while Section 5 presents our code system and details the findings for each code based on the interviews and analysis thereof. In Section 6 we present overarching findings, and in Section 7 we discuss actionable results that can be drawn from our study and that indicate avenues to focus on for the research community. Section 8 contains a detailed discussion of the validity threats of this research, and in Section 9 related efforts are presented. Lastly, Section 10 draws a conclusion for our research and proposes future work.

2 Background

This section provides the necessary background for the context in which our study is situated.

2.1 Model-Driven Engineering

The Model-Driven Architecture (MDA) paradigm was first introduced by the Object Management Group in 2001 (OMG 2001). It forms the basis for an approach commonly referred to as Model-driven development (MDD) (Brown et al. 2005), introduced as a means to cope with the ever growing complexity associated with software development. At the core of it lies the notion of using models as the central artefact for development. In essence, this means that models are used both to describe and reason about the problem domain as well as to develop solutions (Brown et al. 2005). An advantage ascribed to this use of models is that they can be expressed with concepts closer to the related domain than when using regular programming languages (Selic 2003).

When fully utilized, MDD envisions automatic generation of executable solutions specialized from abstract models (Selic 2003; Schmidt 2006). To be able to achieve this, the structure of models needs to be known. This is achieved through so called meta-models which define the structure of models. The structure of meta-models themselves is then defined through meta-models of their own. For this setup, the OMG developed a modelling standard called Meta-object Facility (MOF) (OMG 2016a) on the basis of which a number of modelling frameworks such as the Eclipse Modelling Framework (EMF) (Steinberg et al. 2008) and the .NET Modelling Framework (Hinkel 2016) have been developed.

2.2 Domain-Specific Languages

Domain-specific languages (DSLs) are languages designed with a notation that is tailored for a specific domain by focusing on relevant features of the domain (Van Deursen and Klint 2002). In doing so, DSLs aim to provide domain specific language constructs that let developers feel as if they were working directly with domain concepts, thus increasing speed and ease of development (Sprinkle et al. 2009). Because of these potential advantages, a well defined DSL can provide a promising alternative to using general purpose tools for solving problems in a specific domain. Examples of this include languages such as shell scripts in Unix operating systems (Kernighan and Pike 1984), HTML (Raggett et al. 1999) for designing web pages or AADL, an architecture design language (SAEMobilus 2004).

2.3 Model Transformation Languages

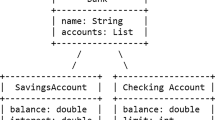

The process of (automatically) transforming one model into another model of the same or different meta-model is called model transformation (MT). Model transformations are regarded as being at the heart of Model Driven Software Development (Sendall and Kozaczynski 2003; Metzger 2005), thus making the process of developing them an integral part of MDD. Since the introduction of MDE at the beginning of the century, a plethora of domain specific languages for developing model transformations, so-called model transformation languages (MTLs), have been developed (Arendt et al. 2010; Balogh and Varró 2006; Jouault et al. 2006; Kolovos et al. 2008; Horn 2013; George et al. 2012; Hinkel and Burger 2019). Model transformation languages are DSLs designed to support developers in writing model transformations. For this purpose, they provide explicit language constructs for tasks involved in model transformations such as model matching. There are various features, such as directionality or rule organization (Czarnecki and Helsen 2006), by which model transformation languages can be distinguished. For the purpose of this paper, we only explain those features that are relevant to our study and discussion, in Sections 2.3.1 to 2.3.7. Table 1 provides an overview of the presented features.

Please refer to Czarnecki and Helsen (2006), Kahani et al. (2019), and Mens and Gorp (2006) for complete classifications.

2.3.1 External and Internal Transformation Languages

Domain specific languages, and MTLs by extension, can be distinguished by whether they are embedded into another language, the so-called host language, or whether they are fully independent languages that come with their own compiler or virtual machine.

Languages embedded in a host language are called internal languages. Prominent representatives among model transformation languages are FunnyQT (Horn 2013), a language embedded in Clojure, NMF Synchronizations and the .NET transformation language (Hinkel and Burger 2019), embedded in C#, and RubyTL (Cuadrado et al. 2006), embedded in Ruby.

Fully independent languages are called external languages. Examples of external model transformation languages include the Atlas transformation language (ATL) (Jouault et al. 2006), one of the most widely known languages, the graphical transformation language Henshin (Arendt et al. 2010), and the complete model transformation framework VIATRA (Balogh and Varró 2006).

2.3.2 Transformation Rules

Czarnecki and Helsen (2006) describe rules as being “understood as a broad term that describes the smallest units of [a] transformation [definition]”. Examples of transformation rules are the rules that make up transformation modules in ATL, but also functions, methods or procedures that implement a transformation from input elements to output elements.

The fundamental difference between model transformation languages and general-purpose languages that originates in this definition lies in dedicated constructs that represent rules. The difference between a transformation rule and any other function, method or procedure is not clear-cut when looking at GPLs. The distinction can only be made based on the contents of the function, method or procedure. An example of this can be seen in Listing 1, which contains exemplary Java methods. Without detailed inspection of the two methods it is not apparent which method does some form of transformation and which does not.
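
To make this point concrete, the following is a minimal Java sketch of our own and not the Listing 1 referred to above. The classes `Member` and `Male` and both method names are hypothetical and chosen only for illustration. Both methods share the same shape; only their bodies reveal that the first one maps a source element to a new target element while the second merely formats a string.

```java
// Hypothetical source and target model classes, assumed for illustration only.
class Member {
    final String firstName;
    final boolean female;

    Member(String firstName, boolean female) {
        this.firstName = firstName;
        this.female = female;
    }
}

class Male {
    final String fullName;

    Male(String fullName) {
        this.fullName = fullName;
    }
}

class FamilyUtil {
    // Acts as a transformation rule: creates a target element from a source element.
    static Male convert(Member member, String lastName) {
        return new Male(member.firstName + " " + lastName);
    }

    // Not a transformation: only derives a display label from a source element.
    static String describe(Member member, String lastName) {
        return member.firstName + " " + lastName;
    }
}
```

Nothing in the signatures or in the language itself marks `convert` as a rule, which is the ambiguity described above.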

In an MTL, on the other hand, transformation rules tend to be dedicated constructs within the language that allow a definition of a mapping between input and output (elements). The example rules written in the model transformation language ATL in Listing 2 make this apparent. They define mappings between model elements of type Member and model elements of type Male as well as between Member and Female using rules, a dedicated language construct for defining transformation mappings. The transformation is a modified version of the well known Families2Persons transformation case (Anjorin et al. 2017).

2.3.3 Rule Application Control: Location Determination

Location determination describes the strategy that is applied for determining the elements within a model onto which a transformation rule should be applied (Czarnecki and Helsen 2006). Most model transformation languages such as ATL, Henshin, VIATRA or QVT (OMG 2016b), rely on some form of automatic traversal strategy to determine where to apply rules.

We differentiate two forms of location determination, based on the kind of matching that takes place during traversal. There is the basic automatic traversal in languages such as ATL or QVT, where single elements are matched and transformation rules are applied to them. The other form of location determination, used in languages like Henshin, is based on pattern matching, meaning that a model or graph pattern is matched and rules are applied to the matches. This allows developers to define sub-graphs consisting of several model elements and references between them, which are then manipulated by a rule.

The automatic traversal of ATL applied to the example from Listing 2 will result in the transformation engine automatically executing the Member2Male rule on all model elements of type Member where the function isFemale() returns false and the Member2Female rule on all other model elements of type Member.
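
For contrast, the following minimal Java sketch shows what a developer would have to write by hand to obtain the same rule selection without automatic traversal. It is an illustration under our own assumptions: the classes `Member`, `Person`, `Male` and `Female`, the field `female` (standing in for isFemale()) and the class `ManualTraversal` are hypothetical and do not reproduce how the ATL engine works internally.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical model classes, assumed for illustration only.
class Member {
    final String firstName;
    final boolean female;

    Member(String firstName, boolean female) {
        this.firstName = firstName;
        this.female = female;
    }
}

class Person {
    final String name;

    Person(String name) {
        this.name = name;
    }
}

class Male extends Person {
    Male(String name) { super(name); }
}

class Female extends Person {
    Female(String name) { super(name); }
}

class ManualTraversal {
    static Male member2Male(Member m) { return new Male(m.firstName); }

    static Female member2Female(Member m) { return new Female(m.firstName); }

    // The loop and the guard are written explicitly; in an MTL with automatic
    // traversal both would be handled by the transformation engine.
    static List<Person> transform(List<Member> members) {
        List<Person> result = new ArrayList<>();
        for (Member m : members) {
            if (!m.female) {
                result.add(member2Male(m));
            } else {
                result.add(member2Female(m));
            }
        }
        return result;
    }
}
```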

The pattern matching of Henshin can be demonstrated using Fig. 1, a modified version of the transformation examples by Krause et al. (2014). It describes a transformation that creates a couple connection between two actors that play in two films together. When the transformation is executed, the transformation engine will try to find instances of the defined graph pattern and apply the changes to the found matches.

This highlights the main difference between automatic traversal and pattern matching as the engine will search for a sub graph within the model instead of applying a rule to single elements within the model.
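
As a rough illustration of what such a sub-graph search involves, the following Java sketch hand-codes the couple example. It is a sketch under our own assumptions and not Henshin's matching algorithm: the classes `Film`, `Actor`, `Couple` and `CoupleMatcher` are hypothetical, and "two films together" is interpreted as at least two shared films.

```java
import java.util.ArrayList;
import java.util.HashSet;
import java.util.List;
import java.util.Set;

// Hypothetical model classes, assumed for illustration only.
class Film {
    final String title;

    Film(String title) {
        this.title = title;
    }
}

class Actor {
    final String name;
    final Set<Film> films = new HashSet<>();

    Actor(String name) {
        this.name = name;
    }
}

class Couple {
    final Actor first;
    final Actor second;

    Couple(Actor first, Actor second) {
        this.first = first;
        this.second = second;
    }
}

class CoupleMatcher {
    // Searches for the pattern "two distinct actors sharing at least two films"
    // and creates a Couple element for every match.
    static List<Couple> createCouples(List<Actor> actors) {
        List<Couple> couples = new ArrayList<>();
        for (int i = 0; i < actors.size(); i++) {
            for (int j = i + 1; j < actors.size(); j++) {
                Set<Film> shared = new HashSet<>(actors.get(i).films);
                shared.retainAll(actors.get(j).films);
                if (shared.size() >= 2) {
                    couples.add(new Couple(actors.get(i), actors.get(j)));
                }
            }
        }
        return couples;
    }
}
```

In a pattern-based MTL the nested loops and the intersection logic would be replaced by a declarative description of the pattern itself.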

2.3.4 Directionality

The directionality of a model transformation describes whether it can be executed in one direction, called a unidirectional transformation or in multiple directions, called a multidirectional transformation (Czarnecki and Helsen 2006).

For the purpose of our study, the distinction between unidirectional and bidirectional transformations is relevant. Some languages provide dedicated support for executing a transformation both ways based on only one transformation definition, while others require users to define transformation rules for both directions. General-purpose languages cannot provide bidirectional support and also require both directions to be implemented explicitly.
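
The following minimal Java sketch illustrates the last point. The classes `Member` and `Male` and the two mapping methods are hypothetical; the sketch only shows that each direction needs its own implementation and that keeping the two consistent is left to the developer.

```java
// Hypothetical model classes, assumed for illustration only.
class Member {
    final String firstName;

    Member(String firstName) {
        this.firstName = firstName;
    }
}

class Male {
    final String name;

    Male(String name) {
        this.name = name;
    }
}

class ExplicitDirections {
    // Forward direction: Member to Male.
    static Male memberToMale(Member member) {
        return new Male(member.firstName);
    }

    // Backward direction: has to be written separately and kept
    // consistent with the forward direction by hand.
    static Member maleToMember(Male male) {
        return new Member(male.name);
    }
}
```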

The ATL transformation from Listing 2 defines a unidirectional transformation. Input and output are defined and the transformation can only be executed in that direction.

The QVT-R relation defined in Listing 3 is an example of a bidirectional transformation definition (for simplicity, the transformation omits the condition that males are only created from members that are not female). Instead of a declaration of input and output, it defines how two elements from different domains relate to one another. As a result, given a Member element its corresponding Male element can be inferred, and vice versa.

2.3.5 Incrementality

Incrementality of a transformation describes whether existing models can be updated based on changes in the source models without rerunning the complete transformation (Czarnecki and Helsen 2006). This feature is sometimes also called model synchronisation.

Providing incrementality for transformations requires active monitoring of input and/or output models as well as information about which rules affect which parts of the models. When a change is detected, the corresponding rules can then be executed. It can also require additional management tasks to be executed to keep models valid and consistent.
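
A minimal Java sketch of this idea, under our own assumptions, is shown below. The classes `Member`, `Person` and `IncrementalSync` as well as the listener mechanism are hypothetical; real incremental engines are considerably more involved. The sketch monitors the source element, remembers which target element it affects and re-executes only the affected mapping when a change is reported.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.function.Consumer;

// Hypothetical observable source element, assumed for illustration only.
class Member {
    private String firstName;
    private final List<Consumer<Member>> listeners = new ArrayList<>();

    Member(String firstName) {
        this.firstName = firstName;
    }

    String getFirstName() {
        return firstName;
    }

    void onChange(Consumer<Member> listener) {
        listeners.add(listener);
    }

    // Every change is reported to all registered listeners.
    void setFirstName(String firstName) {
        this.firstName = firstName;
        for (Consumer<Member> listener : listeners) {
            listener.accept(this);
        }
    }
}

// Hypothetical target element.
class Person {
    String name;
}

class IncrementalSync {
    // Records which target element was created from which source element.
    private final Map<Member, Person> targets = new HashMap<>();

    // Initial transformation of one element plus registration for later updates.
    Person add(Member member) {
        Person person = new Person();
        person.name = member.getFirstName();
        targets.put(member, person);
        member.onChange(changed -> targets.get(changed).name = changed.getFirstName());
        return person;
    }
}
```

Only the target element that corresponds to the changed source element is touched; the rest of the target model is left as it is.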

2.3.6 Tracing

According to Czarnecki and Helsen (2006) tracing “is concerned with the mechanisms for recording different aspects of transformation execution, such as creating and maintaining trace links between source and target model elements”.

Several model transformation languages, such as ATL and QVT, have automated mechanisms for trace management. This means that traces are automatically created during runtime. Some of the trace information can be accessed through special syntax constructs while some of it is automatically resolved to provide seamless access to the target elements based on their sources.

An example of tracing in action can be seen in line 16 of Listing 2. Here the partner attribute of a Female element that is being created is assigned to s.companion. The s.companion reference points towards an element of type Member within the input model. When creating a Female or Male element from a Member element, the ATL engine will resolve this reference into the corresponding element that was created from the referred Member element via either the Member2Male or Member2Female rule. ATL achieves this by automatically tracing which target model elements are created from which source model elements.
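
To illustrate what this automation saves, the following Java sketch manages such trace links by hand. It is a sketch under our own assumptions and does not describe the internals of the ATL engine: the classes `Member`, `Person` and `TracedTransformation` are hypothetical, and the distinction between Male and Female is left out for brevity.

```java
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical source element with a reference to another source element.
class Member {
    final String firstName;
    Member companion;

    Member(String firstName) {
        this.firstName = firstName;
    }
}

// Hypothetical target element with the corresponding target reference.
class Person {
    final String name;
    Person partner;

    Person(String name) {
        this.name = name;
    }
}

class TracedTransformation {
    static Map<Member, Person> run(List<Member> members) {
        // Trace links: which target element was created from which source element.
        Map<Member, Person> trace = new HashMap<>();

        // First pass: create all target elements and record the trace links.
        for (Member m : members) {
            trace.put(m, new Person(m.firstName));
        }

        // Second pass: resolve source references to target elements via the trace.
        for (Member m : members) {
            if (m.companion != null) {
                trace.get(m).partner = trace.get(m.companion);
            }
        }
        return trace;
    }
}
```

The explicit map and the two passes correspond to the bookkeeping that languages with automated trace management hide from the developer.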

2.3.7 Dedicated Model Navigation Syntax

Languages or syntax constructs for navigating models are not part of any feature classification for model transformation languages. However, they were often discussed in our interviews and thus require an explanation as to what interviewees refer to.

Languages such as OCL (OMG 2014), which is used in transformation languages like ATL, provide dedicated syntax for querying and navigating models. As such they provide syntactical constructs that aid users in navigation tasks. Different model transformation languages provide different syntax for this purpose. The aim is to provide specific syntax so users do not have to manually implement queries using loops or other general purpose constructs. OCL provides a functional approach for accumulating and querying data based on collections while Henshin uses graph patterns for expressing the relationship of sought-after model elements.
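
The difference between a manually implemented query and a collection-based, functional one can be sketched in Java as follows. The class `Member` and both query methods are hypothetical, and the Java stream API is used here only as a rough general-purpose analogue of OCL-style collection operations, not as a rendering of OCL itself.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.stream.Collectors;

// Hypothetical model class, assumed for illustration only.
class Member {
    final String firstName;
    final boolean female;

    Member(String firstName, boolean female) {
        this.firstName = firstName;
        this.female = female;
    }
}

class Navigation {
    // Query implemented manually with a loop and an accumulator.
    static List<String> femaleNamesLoop(List<Member> members) {
        List<String> names = new ArrayList<>();
        for (Member m : members) {
            if (m.female) {
                names.add(m.firstName);
            }
        }
        return names;
    }

    // The same query in a functional, collection-based style.
    static List<String> femaleNamesStream(List<Member> members) {
        return members.stream()
                .filter(m -> m.female)
                .map(m -> m.firstName)
                .collect(Collectors.toList());
    }
}
```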

3 Methodology

To collect data for our research questions, we decided on using semi-structured interviews and a subsequent qualitative content analysis that follows the guidelines detailed by Kuckartz (2014). Semi-structured interviews were chosen as a data collection method because they present a versatile approach to eliciting information from experts. They provide a framework that makes it possible to gain insights into opinions, thoughts and knowledge of experts (Meyer and Booker 1990; Hove and Anda 2005; Kallio et al. 2016). The qualitative content analysis guidelines by Kuckartz (2014) were chosen because of their detailed descriptions for all steps of the analysis process. As such they provide a more detailed and modern framework compared to the procedures introduced by Mayring (1994), which have long been a gold standard in qualitative content analysis.

An overview of our complete study design can be found in Fig. 2. It shows the order of activities that were planned and executed as well as the artefacts produced and used throughout the study. Each activity is annotated with the section number in which we detail the activity. We split our approach into three main phases: Preparation (detailed in Section 3.1), Operation (detailed in Section 3.2) and Coding & Analysis (detailed in Section 3.3).

For the preparation phase, we used a subset of the claimed properties of model transformation languages identified by us (Götz et al. 2021) to develop an interview guide. The guide centres on asking participants whether they agree with a claim from one of the properties and then envisages the usage of why questions to gain a deeper understanding of their opinions on the matter. After identifying and contacting participants based on the publications considered during our previous literature review (Götz et al. 2021), we conducted 54 interviews with 55 interviewees (at the request of two participants, one interview was conducted with both of them together) and collected one additional written response. During the Coding & Analysis phase, we coded and analysed all 54 transcripts, as well as the written response, guided by the framework detailed by Kuckartz. In doing so, we focused first on factors and reasons for the individual properties and then on common factors and reasons between them.

The remainder of this section will describe in detail how each of the three phases of our study was conducted.

3.1 Interview Preparation

Our interview preparation phase consists of the creation of an interview guide plus selecting and contacting appropriate interview participants. We use the guidelines by Kallio et al. (2016) for the creation of our interview guide and expand the steps detailed there with steps for selecting and contacting participants. In addition, we use the guidance from Newcomer et al. (2015) to construct our study in the best possible way.

According to Kallio et al. (2016) the creation of an interview guide consists of five consecutive steps. First, researchers are urged to evaluate how appropriate semi-structured interviews are as a data collection method for the posed research questions. Then, existing knowledge about the topic should be retrieved by means of a literature review. Based on the knowledge from the knowledge retrieval phase, a preliminary interview guide can then be formulated and in another step be pilot tested. Lastly the complete interview guide can then be presented and used. As previously stated, we enhance these steps with two additional steps for selecting and contacting potential interview participants.

In the following, we detail how the presented steps were executed and the results thereof.

3.1.1 Identifying the Appropriateness of Semi-Structured Interviews

The goal of our study, outlined in our research questions, is to collect and analyse reasons and background information of why people believe claims about model transformation languages to be true. Data such as this is qualitative by nature and hence requires a research method capable of producing qualitative data. According to Hove and Anda (2005) and Meyer and Booker (1990), expert interviews are one of the most widely used research methodologies in the technical field for this purpose. They make it possible to ascertain qualitative data such as opinions and estimates. Interviews also enable qualitative interpretation of already available data (Meyer and Booker 1990), which aligns with our goal. Moreover, the opportunity to ask further questions about specific statements made by the participants (Newcomer et al. 2015) fits the open ended nature of our research questions. For these reasons, we believe semi-structured interviews to be well suited to answering our research questions.

3.1.2 Retrieving Previous Knowledge

In our previous publication (Götz et al. 2021), we detailed the preparation, execution and results of an extensive structured literature review on the topic of claims about model transformation languages. The literature review resulted in a categorization of 127 claims into 15 different categories (i.e. properties of MTLs) namely Analysability, Comprehensibility, Conciseness, Debugging, Ease of Writing a transformation, Expressiveness, Extendability, Just better, Learnability, Performance, Productivity, Reuse & Maintainability, Tool Support, Semantics and Verification and lastly Versatility. These properties and the claims about them serve as the basis for the design of our interview study presented here.

3.1.3 Interview Guide

The interview guide involves presenting each interview participant with several claims on model transformation languages. We use claims from literature instead of formulating our own statements, to make them more accessible. This also prevents any bias from the authors from being introduced at this step. Participants are first asked to assess their agreement with a claim before transitioning, via an open-ended question, into a discussion of the reasons for their decision. This style of using close-ended questions as a prerequisite for open-ended or probe questions has been suggested by multiple guides (Newcomer et al. 2015; Hove and Anda 2005).

We focus on a subset of six properties. This is due to the aim of keeping the length of interviews within an acceptable range for participants. According to Newcomer et al. (2015), semi-structured interviews should not exceed a maximum length of one hour. As a result, only a limited number of properties can be discussed per interview. In order to still talk with enough participants about each property, the number of properties examined must be reduced. The properties we discuss in the interviews and the reasons why they are relevant are as follows:

- Comprehensibility: Is an important property when transformations are being developed as part of a team effort or evolve over time.

- Ease of Writing: Is a decisive property that influences whether developers want to use a language to write transformations in.

- Expressiveness: Is one of the most cited properties in literature (Götz et al. 2021) and a main selling point of domain specific languages in general.

- Productivity: Is a property that is highly relevant for industrial adoption.

- Reuse & Maintainability: Is another property that enables wider adoption of model transformation languages in project settings.

- Tools: High-quality tools can provide huge improvements to the development.

The list consists of the 5 most claimed properties from the previous literature review (Götz et al. 2021) and is supplemented with Productivity, because we believe this attribute to be the most relevant for industry adoption.

To maximize the response rate of contacted persons, we aim for an interview length of 30 minutes. This decision is based on experiences from previous interview studies conducted at our research group (Groner et al. 2020; Juhnke et al. 2020) and fits within the maximum interview length suggested by Newcomer et al. (2015).

To best utilize the limited time per interview, the six properties are split into three sets of two properties each. In each interview one of the three sets is discussed.

For each property, one non-specific, one specific and one negative claim are used to structure all interviews involving this property. A complete overview of all selected claims can be found in Table 2.

We consider non-specific claims to be those that do not provide any rationale as to why the claimed property holds, e.g. “Model transformation languages ease the writing of model transformations.”. The non-specific claims chosen simply reflect the property itself. They serve the purpose of getting participants to state their assumptions and beliefs for the property without any influence exerted by the discussed claim.

We consider claims to be specific if they provide a rationale or reason for why the claimed property holds, e.g. “Model transformation languages, being DSLs, improve the productivity.”. We consider negative claims to be those that state a negative property of model transformation languages, e.g. “Model transformation languages lack sophisticated reuse mechanisms.”. Generally, we use claims for which we believe the discussions about the reasons will provide useful insights.

There exist several reasons why we believe this setup of using the same three non-specific, specific and negative claims for each property to be appropriate. First, the non-specific claim allows participants to provide any and all factors or reasons that they believe influence a claimed property. The specific claim then allows us to introduce a reason that participants might not have thought about. It also prompts a discussion about a particular reason or factor that is shared between all participants. This ensures at least one area for cross comparison between answers. The negative claim forces participants to also deliberate negative aspects, providing a counterbalance that counteracts bias. Furthermore, the non-specific claim provides an easy introduction into the discussion about a specific MTL property that can present the interviewer with an overview of the participant's thoughts on the matter. It also allows participants to provide other influence factors not specifically covered through the discussed claims or even new factors and reasons not present in the collection of claims from our literature review (Götz et al. 2021).

The complete interview guide resulting from the aforementioned considerations can be seen in Fig. 3. After introductory pleasantries we start all interviews off with demographic questions. Although some sources discourage asking demographic questions early in the interview due to their sensitive nature (Newcomer et al. 2015), we use them to break the ice between the interviewer and interviewee because our demographic questions do not probe any sensitive information.

After this initial get-to-know-each-other phase, the interviewer proceeds to explain the research intentions, goals and the procedure of the remaining interview. Depending on the property-set selected for the interview, participants are then presented with a claim about a property. They are asked to rate their agreement with the claim on a 5-point Likert scale (5: completely agree, 4: agree, 3: neither agree nor disagree, 2: disagree, 1: completely disagree). The Likert scale is used to allow the interviewer to better assess the participant’s tendency compared to a simple yes or no question. This part of the interview is intended solely to get a first impression of the view of the participant and not for a quantitative analysis. It also creates a casual point of entry for the interviewee to think about the topic under consideration. We communicate this to all participants to reduce any pressure they might feel to answer the question correctly. Afterwards, an open-ended question inquiring about the reasons for the interviewee’s assessment is asked.

Some terms used within the discussed claims have ambiguous definitions. We tried to ask participants to explain their understanding of such terms, to prevent errors in analysis due to interviewees having different interpretations thereof. The terms we have deemed to be ambiguous are: ‘succinct syntax’, ‘mapping’, ‘specific skills’, ‘high-level abstractions’, ‘convenient facilities’, ‘sufficient tool support’, ‘powerful tool support’, ‘sophisticated reuse mechanisms’ and ‘expressiveness’. For the term expressiveness, we provide a definition ourselves, because we are only interested in a specific type of expressiveness, i.e. how concisely and readily developers can express something. We are not interested in expressiveness in a theoretical sense, i.e. the closeness to Turing completeness.

This process of presenting a claim and querying the participant’s agreement before discussing their reasons for the assessment is repeated for all 3 claims about both properties. After discussing all claims, it is explained to the participants that the formal part of the interview is finished and that they are allowed to make final remarks about all discussed topics or other properties they want to address. After this phase of the interview, the interviewer expresses their thanks before saying goodbye. The complete question catalogue for the interviews can be found in Appendix A.
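The resulting structure of a single interview can be summarised in a few lines. The following Python sketch is only an illustration under stated assumptions: the names `Agreement`, `CLAIM_KINDS` and `interview_agenda` are ours, the example property pair is hypothetical, and the order of the specific and negative claim is assumed (the text only indicates that the non-specific claim serves as the introduction).

```python
from enum import IntEnum


class Agreement(IntEnum):
    """5-point Likert scale as described in the interview guide."""
    COMPLETELY_DISAGREE = 1
    DISAGREE = 2
    NEITHER = 3
    AGREE = 4
    COMPLETELY_AGREE = 5


# Assumed order: the non-specific claim opens the discussion of a property.
CLAIM_KINDS = ("non-specific", "specific", "negative")


def interview_agenda(property_set):
    """List every (property, claim kind) pair discussed in one interview."""
    return [(prop, kind) for prop in property_set for kind in CLAIM_KINDS]


# Hypothetical property set; the actual pairing of properties is not given here.
agenda = interview_agenda(("Comprehensibility", "Expressiveness"))
for prop, kind in agenda:
    print(f"{prop}: present {kind} claim, record agreement, ask for reasons")
```

With two properties and three claims per property, each interview walks through six claim discussions.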

The interview guide was tested in a pilot study by the main author with one co-author who was not involved in its creation. After pilot testing, we changed the question about agreement with a claim from a yes-no question to one that uses a Likert scale. We also extended the question sets with non-specific claims that do not contain any reasoning. Before adding the non-specific claim, discussions focused too much on the narrow view within the presented claims.

3.1.4 Selecting & Contacting Participants

The target population for our study consists of all users of model transformation languages. To select potential participants for our study we rely on data from our previous literature review (Götz et al. 2021). The literature review produced a list of publications that address the topic of model transformations and model transformation languages. Because search terms such as ‘model to text’ and the like were not used in the study, using this list limits our results to model to model transformation languages. We discuss this limitation more thoroughly in Section 8.2.

All authors of the resulting publications are deemed to be potential interview participants. We assume that people using MTLs in industry do have some research background and thus have published work in the field. There is also no other systematic way to find industry users. We also assume that people who are still active in the field have published within the last 5 years. This limits outreach but makes the set of potential participants more manageable. For this reason, the list was shortened to publications more recent than 2015 before the list of authors of all publications was compiled. This resulted in a total of 645 potential participants.

After selection, the authors were contacted via mail. First, everyone was contacted once and then, after a week, everyone who had not responded by then was contacted again. The texts we use for both mails can be found in Appendix B. Ten people from the list of potential participants were not contacted through this channel but via personalised emails, as they are personal contacts of the authors.

Within the contact mails, potential participants are asked to select a suitable date for the interview and fill out a data consent form allowing us to record and transcribe the interviews.

Overall, of the 645 contacted authors, 55 agreed to participate in our interview study, resulting in a response rate of 8.53% (see Footnote 1).
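The reported response rate follows directly from the two counts given above. As a quick check, a minimal Python sketch (the variable names are ours, not from the study):

```python
# Counts taken from the text above.
contacted_authors = 645
agreed_participants = 55

# Response rate as a percentage, rounded to two decimals.
response_rate = round(agreed_participants / contacted_authors * 100, 2)
print(f"{response_rate}%")
```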

3.2 Interview Conduction and Transcription

All but one of the interviews were conducted by the first author using the online conferencing tool WebEx and lasted between 20 and 80 minutes. Due to scheduling issues, one interview had to be conducted by the second author, who had a preparatory mock interview with the main interviewer. Additionally, at the request of two participants, one interview was conducted with both of them together. Since our main focus for all interviews is on discussions, we do not believe this to have any effect on the results. WebEx is the chosen conferencing tool due to its availability to the authors and its integrated recording tool, which is used to record all interviews. For data privacy reasons and for easier in-depth analysis later on, all recordings are transcribed by two authors. To increase the readability of heavily fragmented sentences, they are shortened to only contain the actual content without interruptions. In case of audibility issues the transcribing authors consulted with each other to try and resolve the issue. Altogether the interviews produced just over 32 hours of audio and about 162,100 words of transcriptions.

Each day, the main author decided on which question sets to use for all participants that had agreed to partake in the interviews. The question sets had to be chosen daily, as many participants only responded to the invitation after interviews had already taken place.

The goal of the decision process was to ensure an even spread of participants over the question sets based on relevant demographic backgrounds, namely research, industry, MTL developer and MTL user. We consider those relevant because each group has a different viewpoint on model transformation languages and their usage for writing transformations. It is therefore important to have answers from each group for each set of questions, to reduce the risk of missing relevant opinions.

We were able to ensure that at least one representative for each demographic group provided answers for each question set. A complete uniform distribution was not possible due to overlaps in the demographic groups.
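The study describes a manual, daily decision by the main author, not an algorithm. Purely as an illustration of the balancing goal, the following Python sketch shows one hypothetical way such a spread could be approximated greedily. The function name, the tie-breaking rule, the number of question sets and the example participants are all assumptions of ours.

```python
from collections import defaultdict


def assign_question_sets(participants, question_sets):
    """Greedily assign each participant to the question set in which
    their demographic groups are least represented so far."""
    coverage = {qs: defaultdict(int) for qs in question_sets}
    sizes = {qs: 0 for qs in question_sets}
    assignment = {}
    for pid, groups in participants:
        def load(qs):
            # Ties on group coverage are broken by the overall set size.
            return (sum(coverage[qs][g] for g in groups), sizes[qs])

        chosen = min(question_sets, key=load)
        assignment[pid] = chosen
        sizes[chosen] += 1
        for g in groups:
            coverage[chosen][g] += 1
    return assignment, coverage


# Hypothetical participants; a person can belong to several groups,
# which is why a perfectly uniform distribution is not always possible.
participants = [
    ("A", ("research", "MTL user")),
    ("B", ("industry", "MTL user")),
    ("C", ("research", "MTL developer")),
    ("D", ("industry", "MTL developer")),
]
assignment, coverage = assign_question_sets(participants, ["set 1", "set 2"])
print(assignment)
```

In this small example every demographic group ends up represented in every question set, which mirrors the minimum guarantee stated above.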

3.3 Coding & Analysis

Coding and analysing the interview transcripts is done in accordance with the guidelines for content structuring content analysis suggested by Kuckartz (2014). The guideline recommends a seven step process (depicted in Fig. 4) for coding and analysing qualitative data. All steps are carried out with tool support provided by the MAXQDA software (see Footnote 2). In the following, we explain how each process step is conducted in detail. We will use the following statement as a running example to show how codes and sub-codes are assigned and how the coding of text segments evolved throughout the process: “Of course some MTLs use explicit traceability for instance. But even then you have a mechanism to access it. And if you have a MTL with implicit traceability where the trace links are created automatically then of course you gain a lot of expressivity because you don’t have to write something that you would otherwise have to write for almost every rule.” (P30)

Fig. 4 Process of a content structuring content analysis as presented by Kuckartz (2014)

3.3.1 Initial Text Work

The initial text work step initiates our qualitative analysis. Kuckartz (2014) suggests reading through all the material and highlighting important segments as well as writing notes for the transcripts using memos. Following these suggestions, we apply initial coding from constructivist grounded theory (Charmaz 2014; Vollstedt and Rezat 2019; Stol et al. 2016) to mark and summarize all text segments where interviewees reason about their beliefs on influence factors about the discussed properties. To do so, the two authors who conducted and transcribed the interviews read through all transcripts and mark all relevant text segments with codes that preferably represent the segment word for word. The codes allow for easier reference in later steps and, due to tooling, we are still able to quickly read the underlying text segment if necessary.

During this step, the example statement was labelled with the code automatic tracing increases expressiveness because no manual overhead.

3.3.2 Developing Thematic Main Codes

For developing the thematic main codes for our study we follow the common practice of inferring them from our research questions as suggested by Kuckartz (2014). Since the goal of our research is to investigate implicit assumptions and factors that influence the assessment of experts about properties of model transformation languages, three main codes arise:

- Properties: Denoting which property is being discussed (e.g. Comprehensibility).

- Factors: Denoting what influences a discussed property according to an interviewee (e.g. Bidirectional functionality of an MTL).

- Factor assessment: Denoting an evaluation of how a factor influences a property (e.g. positive or negative or mixed depending on other factors).

The sub-codes for the property code can be directly defined based on the six properties from our previous literature review (Götz et al. 2021). As such they are deductive (a-priori) codes that are intended to mark text segments based on the properties that are being discussed in them.

3.3.3 Coding of All the Material with Main Codes

In order to code all the material with the main codes, one author analyses all interview transcripts. While doing so, the conversations about a discussed claim are marked with the code that is based on the property stated in the claim. To exemplify this, all discussions on the claim “The use of MTLs increases the comprehensibility of model transformations.” are coded with the main code comprehensibility.

This realisation of the process step breaks with Kuckartz’s specifications in multiple ways. First, we do not code the material with the main codes Factors and Factor assessment, because all factors and factor assessments are already coded with the summarising initial codes. These will be refined into actual sub-codes of Factors and Factor assessment in a later step. Second, we directly code segments with the sub-codes for the Property main code, because the differentiation comes naturally with the structure of the interviews and nothing would be gained by delaying this refinement. Third, this way of coding makes it possible that unimportant segments are also coded, something that Kuckartz advises against. However, we actively decided in favour of this, because it accelerates the coding process enormously. Furthermore, only overlaps of the property codes with the other codes are considered in later steps, thus automatically excluding unimportant text segments from consideration.

During this step, the coding for the example text segment was extended with the code Expressiveness. While this does not look like much of an enhancement on the surface, it is paramount to allow for systematic analysis in later steps.

After this step the example segment had its initial code, summarising the essence of the statement, and the explicit property sub-code Expressiveness, providing the first systematic categorisation of the segment.

3.3.4 Compilation of All Text Passages Coded with the Same Main Code

This step forms the basis for the subsequent iterative process of inductively developing sub-codes for each main code. Due to the use of the MAXQDA tool, this step is purely technical and does not require any special procedure outside of the selection of the main code that is being considered in the tool.

3.3.5 Inductive Development of Sub-Codes

The inductive development of sub-codes forms the most important coding step in our study. Inductive development here means that the sub-codes are developed based on the contents of the transcripts.

Kuckartz suggests reading through all segments coded with a main code to iteratively refine the code into several sub-codes that define the main category more precisely (Kuckartz 2014). We optimize this step by analysing all the initial codes from the Initial Text Work step, to construct concise and comprehensive codes for similar initial codes that could be used as sub-codes for the Factor or Factor assessment main codes. In doing so we follow the focused coding procedure of constructivist grounded theory to refine the initial code system.

All sub-codes of the Factor main code, that are refined using this process, are thematic codes, meaning they denote a specific topic or argument made within the transcripts. As a result, the sub-codes represent factors explicitly named by interviewees that influence the different properties. In contrast, all sub-codes of the Factor assessment main code, that are refined using this process, are evaluative codes, meaning they represent an evaluation, made by the authors, about an effect. More specifically, the codes represent an evaluation of how participants believe factors influence various properties.

Because of the importance of this coding step, the sub-code refinement is created in a joint effort by three of the authors. First, over a period of three meetings, the authors develop comprehensive codes based on the initial codes of 18 interviews through discussions. Then the main author complements the resulting code system by analysing the remaining set of interview transcripts, while the two other authors each analyse half of them. In a final meeting any new sub-code devised by one of the authors is discussed and a consensus for the complete code system is established.

During this step no code segment is extended with additional codes. Instead new codes derived from the initial codes are saved for usage in the following steps.

From the example code segment and its initial code, a sub-code automatic tracing for the Factors code was derived. The finalised sub-code Traceability was decided upon based on the combination with other derived codes of similar meaning, like traces.

3.3.6 Coding of All the Material with Complete Code System

After the final code system is established, the main author processes all transcripts to replace the initial codes with codes from the final code system. For this, each coded statement is re-coded with codes indicating the influence factors expressed by the interviewees as well as a factor assessment, if possible. This final coding step is done by the main author while all three co-authors each check 10 coded transcripts to validate the correct and consistent use of all codes and to make sure all relevant statements are considered. The results from the reviews are discussed in pairwise meetings between the main author and the reviewing co-author before being incorporated in a final coding approved by all authors.

During this step, the initial code for the example segment was dropped and replaced by the codes MTL advantage and Traceability.

The final codes assigned to the example text segment thus were: Expressiveness, Traceability and MTL advantage. The reasoning given within the statement as to why automatic tracing provides an expressiveness advantage is manually extracted during analysis using tooling provided by MAXQDA.
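The evolution of the running example across the coding steps can be traced in a small Python sketch. It is only an illustrative model of the bookkeeping, not of MAXQDA itself; the class `CodedSegment` and its fields are hypothetical names of ours, while the codes and the excerpt are taken from the example above.

```python
from dataclasses import dataclass, field


@dataclass
class CodedSegment:
    participant: str
    excerpt: str
    codes: set = field(default_factory=set)
    history: list = field(default_factory=list)

    def snapshot(self, step):
        # Record a sorted copy of the codes after a process step.
        self.history.append((step, sorted(self.codes)))


initial_code = "automatic tracing increases expressiveness because no manual overhead"
segment = CodedSegment("P30", "then of course you gain a lot of expressivity")

# 3.3.1: the segment receives a summarising initial code.
segment.codes.add(initial_code)
segment.snapshot("3.3.1 Initial Text Work")

# 3.3.3: the property sub-code is added.
segment.codes.add("Expressiveness")
segment.snapshot("3.3.3 Coding with Main Codes")

# 3.3.6: the initial code is replaced by codes from the final code system.
segment.codes.discard(initial_code)
segment.codes |= {"Traceability", "MTL advantage"}
segment.snapshot("3.3.6 Coding with Complete Code System")

for step, codes in segment.history:
    print(step, codes)
```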

3.3.7 Simple and Complex Analysis and Visualisation

The resulting coding and the coded text segments are then used as the basis for our analysis which, in accordance with our research question, focuses on identifying and evaluating factors that influence the properties of MTLs. As recommended by Kuckartz (2014), this is first done for each Property individually before analysis across all properties is conducted (as shown in Fig. 5).

Fig. 5 Analysis forms in a content structuring content analysis as presented by Kuckartz (2014)

For analysing the influence factors of an individual property, we use the MAXQDA tooling to find segments coded with both a factor and the considered property. Using this approach we first compile a list of all factors relevant for a property, before then doing an in-depth analysis of all the gathered statements for each factor. Here the goal is to elicit commonalities and contradictions between the opinions of our interviewees that can be used to establish a theory on how each factor influences each property individually.

In terms of our example text segment, the segment and all other segments coded with Expressiveness and Traceability were read and analysed. The goal was to see if reduced overhead from implicit trace capabilities played a role in the argumentation of other participants and to gather all the other mentioned reasons.
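The retrieval described here amounts to a co-occurrence query over coded segments. The sketch below is a plain-Python analogy and does not use or represent any MAXQDA interface; apart from the P30 example, the segments, the participant IDs `P_x` and `P_y`, and the helper names are hypothetical.

```python
# Hypothetical excerpt of a coded corpus; only the P30 entry is from the text.
segments = [
    {"participant": "P30", "codes": {"Expressiveness", "Traceability", "MTL advantage"}},
    {"participant": "P_x", "codes": {"Comprehensibility", "Traceability"}},
    {"participant": "P_y", "codes": {"Expressiveness", "Tooling"}},
]

# Assumed subset of factor codes, used to tell factors apart from other codes.
FACTOR_CODES = {"Traceability", "Tooling"}


def coded_with_all(segments, *required):
    """Return all segments that carry every one of the required codes."""
    required = set(required)
    return [s for s in segments if required <= s["codes"]]


def factors_for_property(segments, prop):
    """Compile the list of factors that co-occur with a property code."""
    found = set()
    for s in coded_with_all(segments, prop):
        found |= s["codes"] & FACTOR_CODES
    return sorted(found)


print(factors_for_property(segments, "Expressiveness"))
print([s["participant"] for s in coded_with_all(segments, "Expressiveness", "Traceability")])
```

The first call corresponds to compiling the list of factors relevant for a property, the second to gathering all statements for one factor and property pair before reading them in depth.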

For the analysis over all properties combined we apply the theoretical coding process of constructivist grounded theory (Charmaz 2014; Stol et al. 2016) to develop a model of influences. To do so, the Factor assessments are used to examine how the factors influence the respective properties, what the commonalities between properties are and where the differences lie. The goal here is to develop a cohesive theory which explains the influences of factors on the individual properties but also on the properties as a whole and potential influences between the factors themselves.

In terms of our example text segment, the results from analysing Expressiveness and Traceability segments were compared to results from analysing segments coded with other property codes and Traceability. The goal was to find commonalities and differences between the analysed groups.

3.3.8 Privacy and Ethical Concerns

All interview participants were informed of the data collection procedure, the handling of the data and their rights surrounding the process, prior to the interview.

During selection of potential participants the following data was collected and processed.

- First & last name.

- E-Mail address.

For participants who agreed to partake in the interview study, the following additional data was collected and processed during the course of the study.

- Non-anonymised audio recording of the interview.

- Transcripts of the audio recordings.

None of the data collected during the study was shared with any person outside of the group of authors. Audio recordings were handled only by the first and second author.

The complete information and consent form can be found in Appendix D. All participants have consented to having their interview recorded, transcribed and analysed based on this information. All interview recordings were stored on a single device with hardware encryption and deleted as soon as transcriptions were finalised. The interview transcripts were processed to prevent identification of participants. For this, identifying statements and names were removed.

Apart from the voice recordings and names, no sensitive information about the interviewees was collected.

The study design was not presented to an ethics board. The basis for this decision is the set of rules of the German Research Foundation (DFG) on when to involve an ethics board in humanities and social sciences (see Footnote 3). We refer to these guidelines because there are none specifically for software engineering research and humanities and social sciences are the closest related branch of science for our research.

4 Demographics

Through our interviews and one comprehensive written response, we gathered input from a total of 56 experts from 16 different countries with varied backgrounds and experience levels. Table 4 in Appendix C presents an overview of the demographic data about all interview participants. Experts and their statements are distinguished via an anonymous ID (P1 to P56).

4.1 Background

As evident from Fig. 6, participants with a research background constitute the largest portion of our interviewees. Overall, there is an even split between participants solely from research and those that have at least some degree of industrial contact (either through industry projects or by working in industry). Only 3 participants stated that they had used model transformations solely in an industrial context. This is in part offset by the fact that 25 of the interviewees have executed research projects in cooperation with industry or have worked both in research and industry (22 and 3 respectively). While there is a definite lack of industry practitioners in our study, a large portion of interviewees are still able to provide insights into model transformations and model transformation languages from an industry viewpoint.

Lastly, 10 of our participants are, in some capacity, involved in the development of model transformation languages. They can provide a different angle on advantages or disadvantages of MTLs compared to the 46 participants that use them solely for transformation purposes.
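The figures in this section are mutually consistent, which a short Python sketch can confirm. The variable names are ours, and the number of research-only participants is derived here from the reported counts rather than stated explicitly in the text.

```python
total_participants = 56

# Reported counts from the text above.
industry_only = 3
research_with_industry_projects = 22
research_and_industry = 3

with_industry_contact = (
    industry_only + research_with_industry_projects + research_and_industry
)
# Derived, not reported: participants with a pure research background.
research_only = total_participants - with_industry_contact

mtl_developers = 10
mtl_users_only = 46

print(with_industry_contact, research_only)
print(mtl_developers + mtl_users_only)
```

Both halves of the "even split" come out at 28, and the developer and user groups add up to the full set of 56 participants.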

4.2 Experience

50 interviewees stated that they have 5 or more years of experience in using model transformations. Moreover, 24 of the participants have over 10 years of experience in the field. Lastly, there was a single participant who had only used model transformations for a brief amount of time during their master’s thesis.

4.3 Used Languages for Transformation Development

To better assess our participants and to qualify their answers with respect to their background, we asked all interviewees to list languages they used to develop model transformations. Figure 7 summarises the answers given by participants while sorting languages into one of three categories, namely dedicated MTL, internal MTL and GPL. This differentiation is based on the classifications from Czarnecki and Helsen (2006) and Kahani et al. (2019).

The distinction between GPL and dedicated/internal MTL is made to gain an overview of how large the portion of users of general purpose languages for transformation development is, compared to the users of model transformation languages. Furthermore, it also allows for comprehending the viewpoint participants will take when answering questions throughout the interview, i.e. do they compare general purpose languages with model transformation languages based on their experience with both or do they give specific insights into their experiences with one of the two approaches. Internal MTL is separated from dedicated MTL because one claim within the interview protocol specifically explores the topic of internal model transformation languages.

52 participants have used dedicated model transformation languages such as ATL, Henshin or Viatra for transforming models. Only about half as many (27) stated that they have used general purpose languages for this goal. Lastly, only 5 indicated the use of internal MTLs.

When looking at the specific dedicated MTLs used, ATL is by far the most prominent one among interviewees. A total of 37 participants mention having used ATL. This is more than double the number for the second most used language, Henshin, which is only mentioned by 17 interviewees. The QVT family then follows in third place, with QVT-R having been used by 13 participants and QVT-O by 11. A complete overview of all dedicated model transformation languages used by our interviewees can be found in Fig. 8. Note that several interviewees mentioned using more than one language, making the total number of data points in this figure larger than 52.
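Because each interviewee could name several languages, mentions are counted per language rather than per participant. A minimal Python sketch using only the four counts reported above (the remaining languages from Fig. 8 are omitted, and the variable names are ours) shows why the data points exceed the number of participants:

```python
participants_using_dedicated_mtls = 52

# Mentions reported in the text; Fig. 8 contains further languages.
mentions = {"ATL": 37, "Henshin": 17, "QVT-R": 13, "QVT-O": 11}

total_mentions = sum(mentions.values())
print(total_mentions, total_mentions > participants_using_dedicated_mtls)

# ATL is mentioned more than twice as often as Henshin.
print(mentions["ATL"] > 2 * mentions["Henshin"])
```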

In the group of GPLs used for model transformation (summarised in Fig. 9), Java is the most used language with 14 participants stating so. Note that several interviewees mentioned using more than one language, making the total number of data points in this figure larger than 27. Java is closely followed by Xtend, which is mentioned by 12 interviewees. Then follows a steep drop-off in popularity, with Java Emitter Templates having been used by only four participants.

Lastly, only four internal model transformation languages, namely RubyTL, NTL, NMF Synchronizations and FunnyQT, are mentioned. This shows a lack of prominence thereof. Moreover none of the languages is used by more than two interviewees.

5 Findings

Based on the responses of our interviewees and our analysis, we developed a framework to classify influence factors. It allows us to categorize how factors influence properties of MTLs and each other according to our interviewees. Note that we split the property Reuse & Maintainability into two properties for the purpose of reporting. This is done because interviewees chose to consider them separately. Thus reporting on them separately allows for presenting more nuanced results.

The factors themselves are split into six top-level factors, namely GPL Capabilities, MTL Capabilities, Tooling, Choice of MTL, Skills and Use Case. The first factor, GPL Capabilities, encompasses sub-factors related to writing model transformations in general purpose languages. MTL Capabilities encompasses sub-factors that originate from transformation specific features of model transformation languages. Tooling contains factors surrounding tool support for MTLs. Choice of MTL details how the choice of language exerts its influence. The factor Skills encompasses sub-factors associated with the skills of involved stakeholders. Lastly, the Use Case factor contains sub-factors that relate to the involved use case and its influences.

Within the framework we differentiate between two kinds of factors. The first kind are factors that have a positive or negative impact on properties of MTLs. These include the factors GPL Capabilities, MTL Capabilities and Tooling as well as their sub-factors. The second kind are factors that, depending on their characteristic, moderate how other factors influence properties, e.g. depending on the language, its syntax might have a positive or negative influence on the comprehensibility of written code. We call such factors moderating factors. These include the factors Choice of MTL, Skills and Use Case and their sub-factors.
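The two kinds of factors can be captured in a simple lookup structure. The following Python sketch is merely one possible representation; the label `DIRECT` for the first kind and the names `FactorKind` and `TOP_LEVEL_FACTORS` are our own, while the factor names and their classification come from the text.

```python
from enum import Enum


class FactorKind(Enum):
    DIRECT = "direct"          # positive or negative impact on properties
    MODERATING = "moderating"  # changes how other factors influence properties


TOP_LEVEL_FACTORS = {
    "GPL Capabilities": FactorKind.DIRECT,
    "MTL Capabilities": FactorKind.DIRECT,
    "Tooling": FactorKind.DIRECT,
    "Choice of MTL": FactorKind.MODERATING,
    "Skills": FactorKind.MODERATING,
    "Use Case": FactorKind.MODERATING,
}


def factors_of_kind(kind):
    """Return the top-level factors of the given kind, in reporting order."""
    return [name for name, k in TOP_LEVEL_FACTORS.items() if k is kind]


print(factors_of_kind(FactorKind.MODERATING))
```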

Table 3 provides an overview of the answers given by our interviewees. The table shows factors in its rows and MTL properties in its columns. A + in a cell denotes that interviewees mentioned the factor to have a positive effect on their view of the MTL property. A - means interviewees saw a negative influence and +/- describes that there have been mentions of both positive and negative influences. Lastly, an M in a cell denotes that the factor represents a moderating factor for the MTL property, according to some interviewees. The detailed extent of the influence of each factor is described throughout Sections 5 and 6.
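The cell notation of Table 3 can be read as a small mapping from the kinds of mentions to a symbol. The Python sketch below illustrates that reading under two assumptions of ours: a moderating factor is shown as M regardless of other mentions, and a factor without any mention yields an empty cell. The function name `cell_symbol` is hypothetical.

```python
def cell_symbol(mentions):
    """Map the kinds of mentions for one factor/property pair to a cell symbol.

    `mentions` is a set drawn from {"positive", "negative", "moderating"}.
    """
    if "moderating" in mentions:
        return "M"  # assumption: moderating takes precedence
    positive = "positive" in mentions
    negative = "negative" in mentions
    if positive and negative:
        return "+/-"
    if positive:
        return "+"
    if negative:
        return "-"
    return ""  # assumption: no mentions means an empty cell


for example in ({"positive"}, {"negative"}, {"positive", "negative"}, {"moderating"}):
    print(sorted(example), "->", cell_symbol(example))
```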

In the following we present all top-level factors and their sub-factors and describe their place within our framework. For each factor we detail its influence on properties of model transformation languages or on other factors, based on the statements made by our interviewees. All statements referred to in this section can be found verbatim in Table 5 in Appendix E.

5.1 GPL Capabilities

Using general purpose languages for developing model transformations, as an alternative to using dedicated languages, was extensively discussed in our interviews. Interviewees mentioned both advantages and disadvantages that GPLs have compared to MTLs that made them view MTLs more or less favourably.

The disadvantages of GPLs compared to MTLs stem from additional features and abstractions that MTLs bring with them and will be discussed later in Section 5.2. The advantages of GPLs, on the other hand, cannot be placed within the MTL Capabilities factors. These will instead be presented separately in this section.

According to our interviewees, advantages of GPLs are a relevant factor for all properties of MTLs.

General purpose languages are better suited for writing transformations that require lots of computations. This is because they were streamlined for these kinds of activities and designed for this task, with language features like streams, generics and lambdas. As a result, general purpose languages are far more advanced for such situations compared to model transformation languages, which sacrifice this for more domain expressiveness [Qgpl1].

Much like the language design for GPLs, their tools and ecosystems are mature and designed to integrate well with each other. Moreover, according to several interviewees, their tools are of high quality making developers feel more Productive [Qgpl2].

Lastly, multiple participants noted that there are many more GPL developers readily available for companies to hire, thus making GPLs more attractive for them. This helps the Maintainability of existing code as such experts are more likely to Comprehend GPL code [Qgpl3]. Whether this aspect also improves the overall Productivity of transformation development in a GPL was disagreed upon, because it might be that developers trained in an MTL could produce similar results with fewer resources.

It was also mentioned that many more training resources are available for GPL development, making it easier to start learning and using a new GPL compared to an MTL.

5.2 MTL Capabilities

The capabilities that model transformation languages provide and that are not present in GPLs are important factors that influence properties of the languages. This view is shared by our interviewees, who raised many different aspects and abstractions present in model transformation languages.

The influence of capabilities specifically introduced in MTLs is diverse and depends on the concrete implementation in a specific language, the skills of the developers using the MTL and the use case in which the MTL is to be applied. We will discuss all the implications raised by our interviewees regarding the transformation specific capabilities of MTLs for the properties attributed to MTLs in detail, in this section.

5.2.1 Domain Focus

Domain Focus describes the fact that model transformation languages provide transformation specific constructs, abstractions or workflows. Interviewees noted that the domain focus provided by MTLs influences Comprehensibility, Ease of Writing, Expressiveness, Maintainability, Productivity and Tool Support. However, the effects can differ depending on the specific MTL in question.

There exists a consensus that MTLs can provide better domain specificity than GPLs by introducing domain specific language constructs and abstractions. This increases Expressiveness by lifting the problem onto the same level as the involved models allowing developers to express more while writing less. MTLs allow developers to treat the transformation problem on the same abstraction level as the involved modelling languages [Qdf1]. This also improves the ease of development.

Several interviewees argued that when moving towards domain specific concepts the Comprehensibility of written code is greatly increased. The reason for this is that, because transformation logic is written in terms of domain elements, unnecessary parts are omitted (compared to GPLs) and one can focus solely on the transformation aspect [Qdf2].

Having domain specific constructs was also raised as facilitating better Maintainability. Co-evolving transformations written in MTLs together with hardware-, technology-, platform- or model changes is said to be easier than in GPLs because “Once you have things like rules and helpers and things like left hand side and right hand side and all these patterns then [it is] easier to create things like meta-rules to take rules from one version to another version [...]” (P23).

Domain focus also enforces a stricter code structure on model transformations. This reduces the amount of variety in which they can be expressed in MTLs. As a result, developing Tool Support for analysing transformation scripts gets easier. Achieving similarly powerful tool support for general purpose languages, and even for internal MTLs, can be a lot harder or even impossible because much less is known solely based on the structure of the code. Analysis of GPL transformations has to deal with the complete array of functionality of general purpose language constructs [Qdf4]. While MTLs can be Turing complete too, they tend to limit this capability to specific sections of the transformation code. They also make more information about the transformation explicit compared to GPLs. This allows for easier analysis of properties of the transformation scripts which reduces the amount of work required to develop analysis tooling.

The influence of domain abstractions on Productivity was heavily discussed in our interviews. Interviewees agreed that, depending on the used language, Productivity gains are likely, due to their domain focus. However, one interviewee explained that precisely because of Productivity concerns companies in the industry might use general purpose languages. The reason for this boils down to the Use Case and project context. Infrastructure for general purpose languages might already be set up and developers do not need to be trained in other languages [Qdf5]. Moreover, different tasks might require different model transformation languages to fully utilise their benefits, which, from an organisational standpoint, does not make sense for a company. So instead one GPL is used for all tasks.

5.2.2 Bidirectionality

According to our interviewees, bidirectional functionality in a model transformation language influences its Comprehensibility, Ease of Writing, Expressiveness, Maintainability and Productivity. Its effects on these properties depend on the concrete implementation of the functionality in an MTL. They also depend on the Skills of the developers and the concrete Use Case.

Our interviewees mentioned that the problem of bidirectional transformations is inherently difficult and that high level formalisms are required to concisely define all aspects of such transformations. Many believe that, because of this, solutions using general purpose languages can never be sufficient. Statements in the vein of “in a general purpose programming language you would have to add a bit of clutter, a bit of distraction, from the real heart of the matter” (P42) were made several times. This, combined with a less optimal querying syntax, shifts focus away from the actual transformation and decreases both the Comprehensibility and Maintainability of the written code.

Maintainability is also hampered because GPL solutions scatter the implementation throughout two or more rules (or methods or files) that have to be adapted in case of changes [Qbx2]. Expressive and high level syntax in MTLs helps alleviate these problems and increases the ease with which developers can write bidirectional model transformations.

Interviewees also commented on the fact that, thanks to bidirectional functionalities, consistency definitions and synchronisations between both sides of the transformation can be achieved more easily. This improves the Maintainability of modelling projects as a whole and allows for more Productive workflows. Manual attempts to do so have been stated to be error-prone and labour-intensive.

It was also pointed out that the inherent complexity of bidirectionality leads to several problems that have to be considered. MTLs that offer syntax for defining bidirectional transformations are mentioned to be more complex to use than their unidirectional counterparts. They should thus only be used in cases where bidirectionality is a requirement. Moreover, one interviewee mentioned that developers are not generally used to thinking in a bidirectional way [Qbx3].

Lastly, the models involved in bidirectional transformations also play a role, regardless of the language used to define the transformation. Often the models are not equally powerful, making it hard to actually achieve bidirectionality between them because of information loss from one side to the other [Qbx4].
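To illustrate two of the points above, the following is a minimal Python sketch under stated assumptions. It is not taken from the interviews or from any particular MTL. The attribute names, the `CORRESPONDENCE` table and the functions `forward` and `backward` are hypothetical. The sketch derives both directions from a single correspondence definition, rather than scattering them over independent methods, and shows how a source attribute without a counterpart on the target side is lost in a round trip.

```python
# Hypothetical correspondence between source and target attribute names.
# Both directions are derived from this single definition.
CORRESPONDENCE = [
    ("firstName", "given_name"),
    ("lastName", "family_name"),
]


def forward(source):
    """Source -> target: only attributes listed in CORRESPONDENCE are carried over."""
    return {t: source[s] for s, t in CORRESPONDENCE if s in source}


def backward(target):
    """Target -> source: the same definition, read in the opposite direction."""
    return {s: target[t] for s, t in CORRESPONDENCE if t in target}


source = {"firstName": "Ada", "lastName": "Lovelace", "age": 36}
target = forward(source)
round_trip = backward(target)

# "age" has no counterpart in the target model, so the round trip cannot restore it.
lost = set(source) - set(round_trip)
```

In a hand-written GPL solution without such a shared definition, `forward` and `backward` would typically be two separately maintained methods, and every change to the correspondence would have to be repeated in both.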

5.2.3 Incrementality

Dedicated functionality in MTLs for executing incremental transformations has been discussed as influencing Comprehensibility, Ease of Writing and Expressiveness. Similar to bidirectionality, its influence is again heavily dependent on the Use Case in which incremental languages are applied as well as the Skills of the involved developers.

Declarative languages have been mentioned to facilitate incrementality because the execution semantics are already hidden and thus completely up to the execution engine. This increases the Expressiveness of language constructs. It can, however, hamper the Comprehensibility of transformation scripts for developers inexperienced with the language because there is no direct way of knowing in which order transformation steps are executed [Qinc1].

On the other hand, interviewees also explained that writing incremental transformations in a GPL is infeasible. Manual implementations are error-prone because too many kinds of changes have to be considered and chances are high that developers miss some specific kind. Due to the high level of complexity that the problem of incrementality inherently possesses, interviewees argued that writing such transformations in MTLs is much easier [Qinc2].
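The concern about missed change kinds can be sketched as follows. This is a deliberately small, hypothetical Python example and not an implementation discussed by the interviewees. The class `IncrementalSync`, its change kinds and the upper-casing "transformation" are assumptions chosen for illustration. Each kind of source change needs its own hand-written branch, and any kind the developer did not anticipate is simply not propagated.

```python
class IncrementalSync:
    """Hand-written incremental propagation of source changes to a target model.

    The target maps element ids to upper-cased names (a stand-in for a real
    transformation result).
    """

    def __init__(self):
        self.target = {}

    def on_change(self, kind, element_id, name=None):
        # Every change kind must be enumerated and handled explicitly.
        if kind == "add":
            self.target[element_id] = name.upper()
        elif kind == "rename":
            self.target[element_id] = name.upper()
        elif kind == "remove":
            self.target.pop(element_id, None)
        else:
            # A change kind nobody thought of (e.g. "move") ends up here.
            raise ValueError(f"unhandled change kind: {kind}")


sync = IncrementalSync()
sync.on_change("add", 1, "person")
sync.on_change("add", 2, "order")
sync.on_change("rename", 1, "customer")
sync.on_change("remove", 2)
```

Even in this toy setting, the update logic is larger than the transformation itself. Realistic models add further change kinds such as moved elements or changed references, each of which would need another branch.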

The same argumentation also applies to the Comprehensibility of transformations. All the additional code required to introduce incrementality to GPL transformations is argued to clutter the code so much that developers “[will be] in way over their head[s]” (P13).

As with bidirectionality, interviewees agreed that the Use Case needs to be carefully considered when arguing over incremental functionality. Only when ad-hoc incrementality is really needed should developers consider using incremental languages. In cases where transformations are executed in batches, maybe even overnight, no actual incrementality is necessary and then “general purpose programming languages are very much contenders for implementing model transformations” (P42). It was also explained that using general purpose languages for simple transformations is common practice in industry as they are “very good in expressing [the required] control flow” (P42) and because none of the aforementioned problems for GPLs have a strong impact in these cases.

5.2.4 Mappings

The ability of an MTL to define mappings influences that language's Comprehensibility, Ease of Writing, Expressiveness, Maintainability and Reuse of model transformations. Developer Skills, the used Language and the concrete Use Case also play an important role in the kind of influence.

Interviewees agreed that the Expressiveness of transformation languages utilising syntax for mapping is increased due to them hiding low level operations [Qmap1]. However, as remarked by one participant, the semantic complexity of transformations cannot be hidden by mappings, only the computational complexity.

According to our interviewees, mappings form a natural way of how people think about transformations. They impose a strict structure on how transformations need to be defined, making it easy for developers to start writing transformations. The structure also aids general development, because all elements of a transformation have a predetermined place within a mapping. Being this restrictive has the advantage of directing one's thoughts and focus solely on the elements that should be transformed [Qmap2]. To transform an element, developers only need to write down the element and what it should be mapped to.
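The idea of writing down only an element and what it is mapped to can be approximated in a GPL as follows. This is a rough Python sketch under stated assumptions, not the syntax of any existing MTL. The types `Attribute` and `Column`, the table `ATTRIBUTE_TO_COLUMN` and the helper `apply_mapping` are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass


@dataclass
class Attribute:  # hypothetical source metamodel element
    name: str
    type: str


@dataclass
class Column:  # hypothetical target metamodel element
    name: str
    sql_type: str


# The mapping itself: one entry per target feature, each stating which
# source value it is derived from. Nothing else appears here.
ATTRIBUTE_TO_COLUMN = {
    "name": lambda a: a.name,
    "sql_type": lambda a: {"String": "VARCHAR", "Integer": "INT"}[a.type],
}


def apply_mapping(mapping, target_type, source):
    """Generic engine code, written once and independent of any concrete mapping."""
    return target_type(**{feature: rule(source) for feature, rule in mapping.items()})


column = apply_mapping(ATTRIBUTE_TO_COLUMN, Column, Attribute("age", "Integer"))
```

The mapping table has a fixed place for every target feature, which mirrors the predetermined structure described above. Traversal and object creation live in `apply_mapping`, a part that an MTL would provide as its execution engine.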

The simple structure expressed by mappings also benefits the Comprehensibility of transformations. It allows readers to easily grasp which elements are involved in a transformation, even those who are not experienced in the used language. Trying to understand the same transformation in GPLs would be much harder because “[one] would not recognize [the involved elements] in Java code any more” (P32). Instead, they are hidden in between all the other instructions necessary to perform transformations in the language. Interviewees also mentioned that, due to the natural fit of mappings for transformations, it is much easier to find entry points from where to start and understand a transformation and to reconstruct relationships between input and output. This is aided by the fact that the order of mappings within a transformation does not need to conform with its execution sequence and thus enables developers to order them in a comprehensible way [Qmap3].

One interviewee explained that, from their experience, mappings lead to less code being written, which makes the transformations both easier to comprehend and to maintain. However, they conceded that the competence of the involved developers is a crucial factor as well. According to them, language features alone do not make code maintainable. Developers need to have language engineering skills and intricate domain knowledge to be able to design well maintainable transformations [Qmap4]. Both are skills that too few developers possess.

Moreover, several interviewees raised the concern that complex Use Cases can hamper the Comprehensibility of transformations. It can be hard to grasp which elements are being mapped if several auxiliary functions are used for selecting the elements. Here one interviewee suggested that a standardized way of annotating such selections could help alleviate the problem.

It was also mentioned that, while mappings and other MTL features increase the Expressiveness of the language, they might make it harder for developers to start learning the languages. Because a lot of semantics are hidden behind keywords, developers need to first understand the hidden concepts to be able to utilise them correctly [Qmap5].

Other features that highlight how much Expressiveness is gained from mappings have also been mentioned. Mappings hide how relations between input and output are defined. This creates a formal and predictable correspondence between them and thus enables Tracing. Moreover, the correspondence between elements allows languages to provide functionality such as Bidirectionality and Incrementality [Qmap6].

Because many languages that utilise mappings can forgo definitions of explicit control flow, mappings allow transformation engines to do execution optimisations. However, one interviewee explained that they encountered Use Cases where developers want to influence the execution order, forcing them to introduce imperative elements into their code, effectively hampering this advantage. It has also been mentioned that in complex cases the code within mappings can get complicated to the point where non-experts are again unable to comprehend the transformation. This problem exists for writing transformations as well. According to one interviewee, mappings are great for linear transformations and are thus very dependent on the involved (meta-)models. Also, in cases where complex interactions need to be defined, mappings do not present any advantage over GPL syntax and sometimes it can even be easier to define such logic in GPLs [Qmap7].

Lastly, mappings enable more modular code to be written. This in turn facilitates reuse, because reusing and changing code results in local changes instead of several changes throughout different parts of GPL code [Qmap8].

5.2.5 Traceability

The ability in model transformation languages to automatically create and handle trace information about the transformation has been discussed by our interviewees to influence Comprehensibility, Ease of Writing, Expressiveness and Productivity. However, the concrete effect depends on the MTL and the skill of users.

All interviewees talking about automatic tracing agreed that it increases the Expressiveness of the language utilising it. In GPLs this functionality would need to be manually implemented using structures like hash maps. Code to set up traces would then also need to be added to all transformation rules [Qtrc1].
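As an illustration of the manual effort described here, the following minimal Python sketch keeps trace links in a dictionary (Python's hash map). It assumes a hypothetical class-to-table transformation. The types `Class` and `Table` and the function `transform` are illustrative names and are not taken from the study or from any specific MTL.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Class:  # hypothetical source element; frozen so it can be used as a dict key
    name: str
    superclass: "Class | None" = None


@dataclass
class Table:  # hypothetical target element
    name: str
    parent: "Table | None" = None


def transform(classes):
    # Manual trace: source element -> target element created for it.
    trace = {}

    # Pass 1: every rule that creates a target must also record a trace link.
    for cls in classes:
        trace[cls] = Table(name=cls.name.lower())

    # Pass 2: cross-references are resolved by looking up the trace,
    # which only works once all targets exist.
    for cls in classes:
        if cls.superclass is not None:
            trace[cls].parent = trace[cls.superclass]

    return [trace[cls] for cls in classes], trace


base = Class("Person")
sub = Class("Student", superclass=base)
tables, trace = transform([sub, base])
```

The developer has to decide when links are created and when they may be looked up, which here forces a split into two passes. An MTL with automatic tracing would handle this bookkeeping inside its engine.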

However, interviewees disagreed on how much this actually impacts the overall transformation development. Most interviewees felt that automatic trace handling Eases Writing transformations and even increases Productivity since no manual workarounds need to be implemented. This is because manual implementation requires developers to think about when and in which cases traces need to be created and how to access them correctly. Automatic trace handling also enables languages that allow developers to define rules independent from the execution sequence. One interviewee, however, felt that this was not as effort-intensive as commonly claimed, and thus automatic trace handling is, to them, more of a nice-to-have feature than a requirement for writing transformations effectively. Moreover, for complex Use Cases of tracing such as QVT's late resolve, the Users are required to understand the principle of tracing [Qtrc2]. And according to another interviewee, teaching how tracing and trace models work is hard.

Comprehending written transformations can also be aided by automatic trace management. Manual implementations introduce unnecessary clutter into transformation code that needs to be understood to be able to understand a whole transformation. This is especially true if effort has been put into making tracing work efficiently, according to one interviewee. Understanding a transformation is much more straightforward when only the established relationships between the input and output domains need to be considered, without any additional code to set up and use traces [Qtrc3].