Abstract

Background

Developers spend more time fixing bugs and refactoring the code to increase its maintainability than developing new features. Researchers have investigated the impact of code quality on fault-proneness, focusing on code smells and code metrics.

Objective

We aim at advancing fault-inducing commit prediction using different variables, such as SonarQube rules and product and process metrics, and adopting different techniques.

Method

We designed and conducted an empirical study among 29 Java projects analyzed with SonarQube and the SZZ algorithm to identify fault-inducing and fault-fixing commits, computing different product and process metrics. Moreover, we investigated fault-proneness using different Machine Learning and Deep Learning models.

Results

We analyzed 58,125 commits containing 33,865 faults and affected by more than 174 SonarQube rules violated 1.8M times, on which 48 software product and process metrics were calculated. Results clearly identified a set of features that provided a highly accurate fault prediction (more than 95% AUC). Regarding the performance of the classifiers, Deep Learning provided a higher accuracy compared with Machine Learning models.

Conclusion

Future works might investigate whether other static analysis tools, such as FindBugs or Checkstyle, can provide similar or different results. Moreover, researchers might consider the adoption of time series analysis and anomaly detection techniques.

1 Introduction

Software teams spend a significant amount of time trying to locate defects and fix bugs (Zeller 2009). Fixing a bug involves isolating the part of the code that causes unexpected behavior of the program and changing it to correct the error (Beller et al. 2018). Bug fixing is a challenging task, and developers often spend more time fixing bugs and making the code more maintainable than developing new features (Murphy-Hill et al. 2015; Pan et al. 2009).

Different works addressed this problem (D’Ambros et al. 2010; Osman et al. 2017), relying on different information, such as process metrics (Nagappan and Ball 2005; Moser et al. 2008; Hassan 2009a) (number of changes, recent activity), code metrics (Subramanyam and Krishnan 2003; Gyimothy et al. 2005; Nagappan et al. 2006) (lines of code, complexity) or previous faults (Ostrand et al. 2005; Hassan and Holt 2005; Kim et al. 2007). The research community also considered the impact of different code quality issues on fault-proneness, with a special focus on Fowler’s code smells (Palomba et al. 2018; Gatrell and Counsell 2015; D’Ambros et al. 2010; Saboury et al. 2017; Lenarduzzi et al. 2020b).

In our previous works, we investigated the fault-proneness of SonarQube rules, first with machine learning techniques (Lenarduzzi et al. 2020e), and second with classical statistical techniques (Lenarduzzi et al. 2020b). However, the approaches adopted in our previous work did not allow us to identify the correlation of each individual SonarQube rule with fault-proneness. As a result, developers commonly struggle to understand which metrics or SonarQube rules they should consider to decrease the fault-proneness of their code (Vassallo et al. 2018), mainly because the ruleset includes more than 500 rules per development language.

In this paper, we aim at advancing the state of the art on fault-inducing commit prediction based on an in-depth investigation among several features, a large number of projects and commits, and multiple Machine Learning and Deep Learning classifiers.

Starting from the results obtained in our previous work (Lenarduzzi et al. 2020b), we designed and conducted an empirical study among 29 of the 33 Java projects of the Technical Debt dataset (Lenarduzzi et al. 2019b), analyzed with SonarQube version 7.5, in which SonarQube rules were violated more than 1.8M times, and where the faults were determined by applying the SZZ algorithm (Śliwerski et al. 2005). We compared the fault prediction power of different features (SonarQube rules and product and process metrics) using the three most accurate Machine Learning models identified in our previous work (Lenarduzzi et al. 2020b) and two Deep Learning models. Moreover, to increase the validity of our results, we preprocessed the data more carefully to avoid multicollinearity and to account for the unbalanced dataset; we also adopted a more accurate data validation strategy.

The results of our study reveal a number of significant findings. Considering feature selection, SonarQube rules can be used as fault predictors only under specific conditions, such as the classifier and the preprocessing of the variables. Using historical data (Deep Learning) allows for better results (AUC 90% on average) than adopting Machine Learning models. Grouping the SonarQube rules by type improves the accuracy only when using Machine Learning models. Also, grouping by rule type reduces the number of features (predictors), which saves time and simplifies the process.

However, even if the results regarding SonarQube rules and Machine Learning contrast with those obtained in the previous work (Lenarduzzi et al. 2019b), they are more reliable and realistic because of the new preprocessing approach and the more accurate validation strategy.

Looking at the selected product and process metrics, the results clearly identified a set of metrics that provided a more accurate fault prediction. Specifically, the metrics of Rahman and Devanbu (2013) (92.45% on average) and Kamei et al. (2012) (96.53% on average) provide good results with both Machine Learning and Deep Learning models. Considering the metrics calculated by SonarQube, only Deep Learning models provide a good level of accuracy (79% on average). Moreover, when the SonarQube rules are included in the metrics combinations, the results are consistently high. We reach the best performance (AUC of more than 97% on average) when Deep Learning is adopted as the model category.

Regarding the best model selection, our results highlighted the higher accuracy performance achieved by Deep Learning models. Compared with Machine Learning models, Deep Learning increases the AUC, enables the correct fault identification, and decreases the probability of incorrect identification.

The contribution of this paper is three-fold:

- A comparison of the prediction power of the fault-proneness of SonarQube rules and product and process metrics

- A comparison of the effectiveness and accuracy of Machine Learning and Deep Learning models for the identification of fault-inducing SonarQube rules and product and process metrics

- A set of important features (SonarQube rules, product and process metrics) and models to achieve an accurate fault prediction.

The remainder of this paper is structured as follows. Section 2 presents the background of this work, introducing the original study, SonarQube violations, and the different Machine Learning and Deep Learning models. Section 3 describes the case study design, while Section 4 presents the obtained results. Section 5 discusses the results, and Section 6 identifies threats to validity. Section 7 describes the related works, while Section 8 draws the conclusions and highlights future works.

2 Background

In this Section, we illustrate the background of this work, introducing our previous study (called “previous”), SonarQube static analysis tool, and the Machine and Deep Learning models adopted in this study.

2.1 The Previous Study

In this Section, we illustrate the previous study (Lenarduzzi et al. 2020e) and the obtained results. Moreover, we explain the reasons why we conducted this study, and we compare it with the previous one. We followed the guidelines proposed by Carver for reporting replications (Carver 2010).

For this study, we decided to consider only the paper (Lenarduzzi et al. 2020e) since, as far as we know, it is the only one that provides a ranking of importance of SonarQube issues that could induce bugs in the source code. Moreover, two of the authors of this paper are also authors of the previous study.

The previous study investigated the fault-proneness of SonarQube rules in order to understand if rules classified as “Bug” are more fault-prone than security and maintainability rules (“vulnerability” and “code smell”). Moreover, the previous study evaluated the accuracy of the SonarQube quality model for bug prediction. As context, the previous study analyzed 21 randomly selected mature Java projects from the Apache Software Foundation. All the commits of the projects were analyzed with SonarQube (version 6.4), and the commits that induced a fault were determined by applying the SZZ algorithm (Śliwerski et al. 2005). The fault proneness of the SonarQube rules was investigated with seven Machine Learning algorithms (Decision Trees (Breiman et al. 1984), Random Forest (Breiman 2001), Bagging (Breiman 1996), Extra Trees (Geurts et al. 2006), Ada Boost (Freund and Schapire 1997), Gradient Boost (Friedman 2001), XG Boost (Chen and Guestrin 2016)). Results show that only a limited number of SonarQube rules can really be considered fault-prone.

Differently from the previous study (Table 1), we considered the 29 Java projects of the Technical Debt dataset (Lenarduzzi et al. 2019b), analyzed with SonarQube version 7.5, which include more than 1.8M SonarQube rule violations, on which 24 software metrics were calculated, and where the faults are determined by applying the SZZ algorithm (Śliwerski et al. 2005). Moreover, we considered process and product metrics proposed by Rahman and Devanbu (2013) and Kamei et al. (2012) to corroborate the software metrics included in the SonarQube suite. We adopted Deep Learning models, and we compared the detection accuracy of Deep Learning and Machine Learning models in order to identify which ones better predict a fault. We adopted the three Machine Learning models that exhibited the best accuracy performance (AUC = 80%) in the previous study.

In order to improve the previous results, we adopted a data pre-processing step to check for multicollinearity between the variables. This was done using the Variance Inflation Factor (VIF) (O’Brien 2007). Moreover, the authors reported that the commits labelled as fault inducing account for less than 5% of the total number of commits considered. This causes a highly unbalanced dataset, where the positive class (fault-inducing commit) accounts for less than 5% of the total number of samples. This type of data negatively impacts the performance of normal classifiers (both Machine Learning and Deep Learning). For this reason, we adopted an oversampling technique to rebalance the dataset. For this step we used the Synthetic Minority Oversampling Technique (SMOTE) (Chawla et al. 2002).

2.2 SonarQube

SonarQube is one of the most common open-source static code analysis tools adopted both in academia (Lenarduzzi et al. 2017, 2020c) and in industry (Vassallo et al. 2019a). SonarQube is provided as a service from the sonarcloud.io platform, or it can be downloaded and executed on a private server.

SonarQube calculates several metrics such as the number of lines of code and the code complexity, and verifies the code’s compliance against a specific set of “coding rules” defined for most common development languages. In case the analyzed source code violates a coding rule, or if a rule is outside a predefined threshold, SonarQube generates an “issue”. SonarQube includes Reliability, Maintainability, and Security rules.

Reliability rules, also named “bugs”, create issues (code violations) that “represent something wrong in the code” and that will soon be reflected in a bug. “Code smells” are considered “maintainability-related issues” in the code that decrease code readability and code modifiability. It is important to note that the term “code smells” adopted in SonarQube does not refer to the commonly known code smells defined by Fowler and Beck (1999) but to a different set of rules. Fowler and Beck (1999) consider code smells as “surface indication that usually corresponds to a deeper problem in the system”, which can be indicators of different problems (e.g., bugs, maintenance effort, and code readability), while rules classified by SonarQube as “Code Smells” refer only to maintenance issues. Moreover, only four of the 22 smells proposed by Fowler et al. are included in the rules classified as “Code Smells” by SonarQube (Duplicated Code, Long Method, Large Class, and Long Parameter List).

SonarQube also classifies the rules into five severity levels (Footnote 1): Blocker, Critical, Major, Minor, and Info.

In this work, we focus on the SonarQube violations, which are reliability rules classified as “bugs” by SonarQube, as we are interested in understanding whether they are related to faults. Moreover, we consider the 32 software metrics calculated by SonarQube. In the replication package (Section 3.5) we report all the violations present in our dataset. In the remainder of this paper, column “squid” represents the original rule-ID (SonarQube ID) defined by SonarQube. We did not rename it, to ease the replicability of this work. In the remainder of this work, we will refer to the different SonarQube violations with their ID (squid). The complete list of violations can be found in the file “SonarQube-rules.xsls” in the online raw data.

2.3 Machine Learning models

We selected the three machine learning models that turned out to be the most accurate in fault prediction in our previous study (Lenarduzzi et al. 2020e): Random Forest (Breiman 2001), Gradient Boost (Friedman 2001), and XGBoost (Chen and Guestrin 2016). As in Lenarduzzi et al. (2020e), Gradient Boosting and Random Forest are implemented using the Scikit-Learn library (Footnote 2) with their default parameters. The XGBoost model is implemented using the XGBoost library (Footnote 3). All the classifiers are fitted using 100 estimators.

Random Forest

Random Forest (Breiman 2001) is an ensemble technique based on decision trees. The term ensemble indicates that it uses a set of “weak” classifiers that help solve the assigned task. In this specific case, the weak classifiers are multiple decision trees.

Using a randomly chosen subset of the original dataset, an arbitrary number of decision trees is generated (Breiman 1996). In the case of random forest, the subset is created with replacement, meaning that a sample can appear multiple times. Moreover, a subset of the features of the original dataset is also chosen, without replacement (each feature appears only once). This helps reduce the correlation between the individual decision trees. With this setup, each tree is trained on a specific subset of the data, and it can make predictions on unseen data. The final classification given by the Random Forest is decided based on the majority vote of the individual decision trees.

The process of averaging the predictions of multiple decision trees allows the random forest classifier to better generalize the data and overcome the overfitting problem to which decision trees are prone. Also, using a randomly selected subset of the original dataset, the individual trees are not correlated with one another. This is particularly important in our case, as in this study we are using a high number of features, and therefore the probability of the features being correlated with one another increases.
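The three ingredients described above (row sampling with replacement, feature sampling without replacement, and majority voting) can be illustrated with a minimal standard-library sketch. It is not the Scikit-Learn implementation used in the study; the helper names are hypothetical, and Scikit-Learn, for instance, draws the feature subset at each split rather than once per tree.

```python
import random
from collections import Counter

def bootstrap_indices(n_samples, rng):
    """Draw n_samples row indices WITH replacement (a row may appear several times)."""
    return [rng.randrange(n_samples) for _ in range(n_samples)]

def feature_subset(n_features, k, rng):
    """Draw k distinct feature indices, i.e. WITHOUT replacement."""
    return sorted(rng.sample(range(n_features), k))

def majority_vote(tree_predictions):
    """Return the label predicted by most of the individual trees."""
    return Counter(tree_predictions).most_common(1)[0][0]

rng = random.Random(0)              # fixed seed so the sketch is repeatable
rows = bootstrap_indices(10, rng)   # training rows for one tree
cols = feature_subset(6, 2, rng)    # features visible to that tree
label = majority_vote([1, 0, 1])    # three hypothetical tree outputs
```

Each tree would be trained on `rows` restricted to `cols`; the forest output for a commit is the `majority_vote` over all trees.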

Gradient Boosting

Gradient Boosting (Friedman 2001) is another ensemble model which, compared to the random forest, generates the individual weak classifiers sequentially during the training process. In this case, we are also using a series of decision trees as weak classifiers. The gradient boosting model creates and trains only one decision tree at first. After each iteration, another tree is grown to improve the accuracy of the model and minimize the loss function. This process continues until a predefined number of decision trees has been created, or the loss function no longer improves.
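The sequential nature of boosting can be sketched as follows. This is a simplified illustration under stated assumptions, not the classifier used in the study: it uses one-dimensional decision stumps and a squared-error loss (the study performs classification with Scikit-Learn defaults), and all function names are hypothetical.

```python
def fit_stump(x, residuals):
    """Fit a one-split regression stump on a 1-D feature by minimising squared error."""
    mean = sum(residuals) / len(residuals)
    best = (float("inf"), x[0], mean, mean)  # fallback: constant prediction
    for t in sorted(set(x)):
        left = [r for xi, r in zip(x, residuals) if xi <= t]
        right = [r for xi, r in zip(x, residuals) if xi > t]
        if not left or not right:
            continue
        lm, rm = sum(left) / len(left), sum(right) / len(right)
        err = sum((r - lm) ** 2 for r in left) + sum((r - rm) ** 2 for r in right)
        if err < best[0]:
            best = (err, t, lm, rm)
    return best[1:]  # (threshold, left_value, right_value)

def predict_stump(stump, xi):
    threshold, left_value, right_value = stump
    return left_value if xi <= threshold else right_value

def gradient_boost(x, y, n_estimators=100, learning_rate=0.1):
    """Grow stumps one after another, each fitted to the current residuals."""
    base = sum(y) / len(y)
    preds = [base] * len(y)
    stumps = []
    for _ in range(n_estimators):
        # For squared error, the negative gradient is the residual y - prediction.
        residuals = [yi - pi for yi, pi in zip(y, preds)]
        stump = fit_stump(x, residuals)
        stumps.append(stump)
        preds = [p + learning_rate * predict_stump(stump, xi) for p, xi in zip(preds, x)]
    return base, stumps, learning_rate

def predict(model, xi):
    base, stumps, learning_rate = model
    return base + learning_rate * sum(predict_stump(s, xi) for s in stumps)

def mse(model, x, y):
    return sum((predict(model, xi) - yi) ** 2 for xi, yi in zip(x, y)) / len(y)

x = [1, 2, 3, 4, 5, 6]
y = [0, 0, 0, 1, 1, 1]
```

On this toy data, the training error shrinks as more stumps are added, which mirrors the stopping criterion described above (a fixed number of trees, or no further improvement of the loss).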

XGBoost

The last classical model used is XGBoost (Chen and Guestrin 2016), a better-performing implementation of the Gradient Boosting algorithm. It allows for faster computation and parallelization compared to gradient boosting. It can therefore result in better computational and overall performance compared to the latter, and can be more easily scaled for use with high-dimensional data, such as the data used in this study.

2.4 Deep Learning Models

Deep learning is a subset of Machine Learning (ML) based on the use of artificial neural networks. The term deep indicates the use of multiple layers in the neural network architecture: the classical artificial neural network is the multilayer perceptron (MLP), which comprises an input layer, an output layer, and a hidden layer in between. This structure limits the quantity of information that the network can learn and use for its task. Adding more layers increases the amount of information that the network can extract from the raw input, improving its performance.

While machine learning models become progressively better at whatever their function is, they still need some guidance, especially in how the features are provided as input. In most cases, it is necessary to perform some basic to advanced feature engineering before feeding them to the model for training. Deep learning models, on the other hand, thanks to their ability to progressively extract higher-level features from the input in the multiple layers of their architecture, require little to no previous feature engineering. This is particularly helpful when dealing with high-dimensional data.

Also, as seen in Section 2.3, most of the classical machine learning models suffer from performance degradation when dealing with large datasets and high-dimensional data. Deep learning models, on the other hand, can be more scalable and flexible thanks to their different types of architectures.

In this Section, we briefly introduce the Deep Learning-based techniques we adopted in this work: Fully Convolutional Network (FCN) (Wang et al. 2017) and Residual Network (ResNet) (Wang et al. 2017).

These two approaches are adopted from Fawaz et al. (2019), where it was shown that their performance is superior to multiple other methods tested. In particular, Fawaz et al. showed in their work that the FCN and the ResNet were the best performing classifiers in the context of the multivariate time series classification. This conclusion was obtained by testing 9 different deep learning classifiers on 12 multivariate time series datasets.

Residual Network

The first deep learning model used is a residual network (ResNet) (Wang et al. 2017). Among the many different types of ResNet developed, the one we used is composed of 11 layers, of which 9 are convolutional. Between the convolutional layers, it has shortcut connections, which allow the network to learn the residual (He et al. 2016). In this way, the network can be trained more efficiently, as there is a direct flow of the gradient through the connections. Also, the connections help in reducing the vanishing gradient effect, which prevents deeper neural networks from training correctly. In this work, we used the ResNet shown in Fawaz et al. (2019). It consists of 3 residual blocks, each composed of three 1-dimensional convolutional layers alternated with pooling layers, and their output is added to the input of the residual block. The last residual block is followed by a global average pooling (GAP) layer (Lin et al. 2013) instead of the more traditional fully connected layer. The GAP layer allows the feature maps of the convolutional layers to be recognised as a category confidence map. Moreover, it reduces the number of parameters to train in the network, making it more lightweight and reducing the risk of overfitting when compared to the fully connected layer.
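To make the shortcut connection and the GAP layer concrete, the following is a deliberately small sketch in plain Python. It assumes a single input channel, fixed (untrained) kernels, and no batch normalization or pooling, so it illustrates the idea only and is not the architecture of Fawaz et al. (2019); all function names are hypothetical.

```python
def conv1d_same(series, kernel):
    """Slide an odd-length kernel over a zero-padded series; output keeps the input length."""
    pad = len(kernel) // 2
    padded = [0.0] * pad + list(series) + [0.0] * pad
    return [
        sum(w * padded[i + j] for j, w in enumerate(kernel))
        for i in range(len(series))
    ]

def relu(series):
    """Rectified linear unit applied element-wise."""
    return [max(0.0, v) for v in series]

def residual_block(series, kernels):
    """Apply the convolutions in sequence, then add the block input back (shortcut)."""
    out = series
    for kernel in kernels:
        out = relu(conv1d_same(out, kernel))
    return [o + x for o, x in zip(out, series)]

def global_average_pooling(feature_maps):
    """Collapse each feature map (one list per channel) to its mean over time."""
    return [sum(fm) / len(fm) for fm in feature_maps]

signal = [1.0, 2.0, 3.0]
unchanged = residual_block(signal, [[0.0, 0.0, 0.0]] * 3)  # convolutions contribute nothing
pooled = global_average_pooling([[1.0, 2.0, 3.0], [4.0, 4.0, 4.0]])
```

With all-zero kernels, the block returns its input unchanged: the shortcut gives the signal (and, during training, the gradient) a direct path around the convolutions. GAP then reduces every feature map to a single number, so the classifier that follows needs only one weight per channel and class.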

Fully Convolutional Neural Network

The second method used is a fully convolutional neural network (FCN) (Wang et al. 2017). Compared to the ResNet, this network does not present any pooling layer, which keeps the dimension of the time series unchanged throughout the convolutions. As for the ResNet, after the convolutions, the features are passed to a global average pooling (GAP) layer. The FCN architecture was originally proposed for semantic segmentation (Long et al. 2015). Its name derives from the fact that the last layer of this network is another convolutional layer instead of a classical fully connected layer. In this work, we used the architecture proposed by Wang et al. (2017), which uses the original FCN as a feature extractor, and a softmax layer to predict the labels. More specifically, the FCN used in this work is adopted from Fawaz et al. (2019). This implementation consists of 3 convolutional blocks, each composed of a 1-dimensional convolutional layer and a batch normalization layer (Ioffe and Szegedy 2015). It uses a rectified linear unit (ReLU) (Nair and Hinton 2010) activation function. The output of the last convolutional block is fed to the GAP layer, which is fully connected to a traditional softmax for the time series classification. This model has proven to be on par with the state-of-the-art models in previous works on time series classification (Wang et al. 2017). Moreover, it is smaller than the ResNet, which makes the FCN model more computationally efficient.

3 Empirical Study Design

We designed our empirical study based on the guidelines defined by Runeson and Höst (2009). In this Section, we describe the empirical study, including the goal and the research questions, the study context, the data collection, and the data analysis.

3.1 Goal and Research Questions

The goal of this paper is to conduct an in-depth investigation among several features, a large number of projects and commits, and multiple Machine Learning and Deep Learning classifiers to predict the fault proneness of commits. This study allows us to: 1) corroborate our assumption that the fault proneness of SonarQube rules is low, extending our previous works (Lenarduzzi et al. 2020b, 2020e), and 2) build models to predict whether a commit is fault-prone with the highest possible accuracy. As features, we selected the SonarQube rules and different product and process metrics (Section 3.4).

The perspective is of both practitioners and researchers since they are interested in understanding which variables.

Based on the aforementioned goal, we derived the following Research Questions (RQs).

- RQ1 What is the fault proneness of the SonarQube rules?

- RQ2 What is the fault proneness of product and process metrics?

- RQ3 To what extent can SonarQube rules impact the performance of fault prediction models that leverage process and product metrics?

- RQ4 Which is the best combination of features and the best model for the fault prediction?

More specifically, in RQ1 we aim at investigating the impact of all the SonarQube rules on fault-proneness. The goal is to understand how accurate the prediction can be for fault-proneness if developers do not violate all the SonarQube rules. To provide a complete evaluation, we considered all the SonarQube rules first, and then grouped them by type (Bug, Code Smell, and Vulnerability). We selected SonarQube since it is one of the most popular tools, and its popularity has increased in recent years, considering discussions on platforms such as Stack Overflow, LinkedIn, and Google groups (Vassallo et al. 2018; Lenarduzzi et al. 2021a, 2020d; Avgeriou et al. 2020). However, as reported by Vassallo et al. (2018), developers commonly get confused by the large number of rules, especially because the severity assigned by SonarQube is not actually correlated with fault proneness (Lenarduzzi et al. 2020b, 2020e).

Software metrics have been considered good predictors for fault-proneness for several decades (D’Ambros et al. 2010; Pascarella et al. 2019). Therefore, in RQ2 we are interested in investigating the fault proneness of different software metrics combined, including the ones proposed by Rahman and Devanbu (2013), Kamei et al. (2012), and SonarQube suites. In order to have a baseline for the next RQ, in this RQ we aim at investigating the impact of each product and process metrics set on fault proneness.

In RQ3, we assess the actual prediction capability using the relevant features coming from the previous research questions (RQ1 and RQ2) when predicting the presence of a fault in the source code.

Finally, in RQ4, based on the results achieved for each feature and model, we identify the best combination of predictors and models that allows developers to reach the highest accuracy when predicting a fault in the source code.

3.2 Study Context

As context, we considered the projects included in the Technical Debt Dataset (Lenarduzzi et al. 2019b). The data set contains 33 Java projects from the Apache Software Foundation (ASF) repository (Footnote 4). The projects in the data set were selected based on “criterion sampling” (Patton 2002), and fulfill all of the following criteria: developed in Java, older than three years, more than 500 commits and 100 classes, and usage of an issue tracking system with at least 100 issues reported. The projects were also selected to maximize their diversity and representation by considering a comparable number of projects with respect to project age, size, and domain. Moreover, the 33 projects can be considered mature, due to the strict review and inclusion process required by the ASF. The included projects also regularly review their code and follow a strict quality process (Footnote 5). More details on the data set can be found in Lenarduzzi et al. (2019b).

For each project, Table 2 reports the number of commits analyzed, the number of faults detected, and the number of occurrences of SonarQube rules violated.

3.3 Data Collection

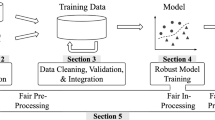

The Technical Debt Dataset (Lenarduzzi et al. 2019b) contains the information of the analysis of the commits of the 33 Open Source Java projects. In this work, we considered the following information, as depicted in Fig. 1:

- SonarQube Rules Violations. We considered the data from the Table “SONAR_ISSUES” that includes data on each rule violated in the analyzed commits. The complete list of rules is available online (Footnote 6) but can also be found in the file “sonar_rules.csv” of the Technical Debt Dataset, while the diffuseness of each rule is reported in Saarimäki et al. (2019). As reported in Table 2, the analyzed projects violated 174 SonarQube rules 1,914,508 times. Since in our previous work (Lenarduzzi et al. 2020b) we found incongruities in the rule type and severity assigned by SonarQube, we decided to consider all the detected rules. Table 3 shows the SonarQube rules violated, grouped by type and severity.

- Product and Process Metrics. We considered the 24 software metrics measured by SonarQube (Table “SONAR_MEASURES” of the Technical Debt data set) as listed in Table 4, related to:

  - Size (11 types)

  - Complexity (5 types)

  - Test coverage (4 types)

  - Duplication (4 types)

- Fault-inducing and Fault-fixing commits identification. In the dataset, the fault-inducing and fault-fixing commits are determined using the SZZ algorithm (Śliwerski et al. 2005; Lenarduzzi et al. 2020a) and reported in the Table “SZZ_FAULT_INDUCING_COMMITS”. The SZZ algorithm identifies the fault-introducing commits from a set of fault-fixing commits. The fault-fixing commits are extracted from a bug tracking system such as Jira or by looking at commits that state that they are fixing an issue. A complete description of the steps adopted in the SZZ algorithm is available in Śliwerski et al. (2005).
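The two phases of SZZ mentioned in the last item can be sketched as follows. This is a strongly simplified illustration rather than the implementation behind the dataset: the Jira-style issue-key pattern, the function names, and the in-memory `blame` dictionary (standing in for a `git blame` on the parent of each fix) are all assumptions, and refinements of the original algorithm, such as discarding candidate commits made after the bug was reported, are omitted.

```python
import re

# Assumed Jira-style issue key such as "FOO-123"; real projects may use other formats.
ISSUE_KEY = re.compile(r"\b[A-Z][A-Z0-9]+-\d+\b")

def find_fixing_commits(commits, bug_issue_keys):
    """Return hashes of commits whose message cites an issue known to be a bug.

    commits: iterable of (commit_hash, message) pairs.
    """
    return [h for h, msg in commits if set(ISSUE_KEY.findall(msg)) & bug_issue_keys]

def find_inducing_commits(fixing_commits, lines_touched_by_fix, blame):
    """Map each fix to the commits that last changed the lines it modified.

    lines_touched_by_fix: {fix_hash: [(file, line_no), ...]}
    blame: {(fix_hash, file, line_no): hash of the last commit that changed
            that line before the fix}
    """
    inducing = set()
    for fix in fixing_commits:
        for file, line_no in lines_touched_by_fix.get(fix, []):
            origin = blame.get((fix, file, line_no))
            if origin is not None:
                inducing.add(origin)
    return inducing

# Hypothetical toy history: c3 fixes bug FOO-7 by changing a line last edited in c2.
commits = [
    ("c3", "FOO-7: fix null check in parser"),
    ("c2", "Add parser"),
    ("c1", "Initial import"),
]
fixing = find_fixing_commits(commits, bug_issue_keys={"FOO-7"})
inducing = find_inducing_commits(
    fixing,
    {"c3": [("Parser.java", 42)]},
    {("c3", "Parser.java", 42): "c2"},
)
```

In this toy history, `c3` is labelled fault-fixing and `c2` fault-inducing.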

Fig. 1 Technical Debt Dataset Tables (Lenarduzzi et al. 2019b) considered in this study

Moreover, to enrich the data regarding the product and process metrics contained in the dataset, we considered the product and process metrics proposed by Rahman and Devanbu (2013) and Kamei et al. (2012), implemented by Pascarella et al. (2019). These metrics were previously validated in the context of fine-grained just-in-time defect prediction, and they cover various aspects of the development process (Table 5):

- Developers’ expertise (e.g., the contribution frequency of a developer; Kamei et al. 2012)

- The structure of changes (e.g., the number of changed lines in a commit; Rahman and Devanbu 2013)

- The evolution of the changes (e.g., the frequency of changes; Rahman and Devanbu 2013)

- The dimensional footprint of a committed change (e.g., the relation between uncorrelated changes in a commit; Tan et al. 2015).

3.4 Data Analysis

In this Section, we report the data analysis protocol adopted in this study including data preprocessing, data analysis, and accuracy comparison metrics.

3.4.1 Data Preprocessing

In order to investigate our RQs, we need to preprocess the data available in the Technical Debt Dataset. Moreover, since we are planning to adopt Machine Learning and Deep Learning techniques, we need to preprocess the data according to the models we aim to adopt.

The preprocessing was composed of four steps:

- Data extraction from the Technical Debt Dataset

- Data pre-processing

- Data preparation for the Machine Learning Analysis

- Data preparation for the Deep Learning Analysis

Data extraction from the Technical Debt Dataset

The data in the tables SZZ_FAULT_INDUCING_COMMITS and SONAR_MEASURES of the Technical Debt Dataset already list the information per commit. However, the table SONAR_ISSUES contains one row for each file where a rule has been violated. Therefore, we extracted a new table by means of an SQL query (see the replication package for details, Lomio et al. 2022). The result is the new table SONAR_ISSUE_PER_COMMIT. Then, we joined the newly created table SONAR_ISSUE_PER_COMMIT with the tables SZZ_FAULT_INDUCING_COMMITS and SONAR_MEASURES using the commit hash as key. This last step resulted in the final dataset that we used for our analysis (Table FullTable.csv in the replication package, Lomio et al. 2022), which contains the following information: the commit hash, the project to which the commit refers, the boolean label Inducing, which indicates if the commit is fault inducing or not, and the set of sonar measures and sonar issues introduced in the commit.
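The aggregation and join can be sketched with an in-memory SQLite database. The table names come from the text above, but the column names (`commit_hash`, `file`, `rule`, `project`, `ncloc`), the rule identifiers, and the sample rows are hypothetical; the actual query is the one in the replication package.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
-- Hypothetical, reduced schemas: one row per file-level violation in SONAR_ISSUES.
CREATE TABLE SONAR_ISSUES (commit_hash TEXT, file TEXT, rule TEXT);
CREATE TABLE SZZ_FAULT_INDUCING_COMMITS (commit_hash TEXT);
CREATE TABLE SONAR_MEASURES (commit_hash TEXT, project TEXT, ncloc INTEGER);

INSERT INTO SONAR_ISSUES VALUES
  ('c1', 'A.java', 'S1'), ('c1', 'B.java', 'S1'),
  ('c1', 'A.java', 'S2'), ('c2', 'A.java', 'S1');
INSERT INTO SZZ_FAULT_INDUCING_COMMITS VALUES ('c1');
INSERT INTO SONAR_MEASURES VALUES
  ('c1', 'proj', 100), ('c2', 'proj', 120), ('c3', 'proj', 90);

-- Collapse file-level rows into one row per (commit, rule).
CREATE TABLE SONAR_ISSUE_PER_COMMIT AS
  SELECT commit_hash, rule, COUNT(*) AS n_violations
  FROM SONAR_ISSUES
  GROUP BY commit_hash, rule;
""")

# Join on the commit hash and derive the boolean label "inducing".
rows = conn.execute("""
SELECT m.commit_hash, m.project,
       EXISTS (SELECT 1 FROM SZZ_FAULT_INDUCING_COMMITS s
               WHERE s.commit_hash = m.commit_hash) AS inducing,
       m.ncloc, i.rule, i.n_violations
FROM SONAR_MEASURES m
LEFT JOIN SONAR_ISSUE_PER_COMMIT i ON i.commit_hash = m.commit_hash
ORDER BY m.commit_hash, i.rule
""").fetchall()
```

The `LEFT JOIN` keeps commits without any violation (here `c3`), which would otherwise disappear from the final table. Turning the per-rule rows into one column per rule is a further pivot step not shown here.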

Moreover, we calculated the software metrics proposed by Rahman and Devanbu (2013) and Kamei et al. (2012) following the procedure of Pascarella et al. (2019), who provided a publicly accessible replication package with all the scripts used to compute the metrics. The tool (1) collected the new metrics as soon as a new file Fi was added to a repository, (2) updated the metrics of Fi whenever a commit modified it, (3) kept track of possible file renaming by relying on the Git internal rename heuristic and subsequently updating the name of Fi, and (4) removed Fi in the case it was permanently deleted.

Due to the characteristics of the projects, we were able to calculate the metrics proposed by Rahman and Kamei only on 29 of the 33 projects, leaving out the following projects: Batik, Beam, Cocoon, and Santuario. In order to be able to compare the results obtained using the different metrics as features, we excluded these projects also for the analysis with the SonarQube rules.

We combined the metrics by a step-wise method: we grouped the metrics based on Rahman and Devanbu (2013) + Kamei et al. (2012), Rahman and Devanbu (2013) + SonarQube metrics, and Kamei et al. (2012) + SonarQube metrics. Finally, we also considered all the metrics together. Based on this grouping, we designed seven different metrics combinations. We also extended this grouping in order to combine each of the metrics also with SonarQube rules and SonarQube rules type, hence resulting in 14 additional combinations. The full list of combinations can be seen in Table 6.
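The counts stated above (seven metric combinations plus 14 extended ones) follow from enumerating the non-empty subsets of the three metric sets. A short sketch, with hypothetical labels for the sets:

```python
from itertools import combinations

# Hypothetical labels for the three metric sets described in the text.
METRIC_SETS = ["Rahman", "Kamei", "SonarQube metrics"]

# Every non-empty subset: 3 single sets + 3 pairs + 1 triple = 7 combinations.
base_combinations = [
    combo for size in (1, 2, 3) for combo in combinations(METRIC_SETS, size)
]

# Each combination is additionally paired with the rules and with the rule types.
extended_combinations = [
    combo + (extra,)
    for extra in ("SonarQube rules", "SonarQube rule types")
    for combo in base_combinations
]
```

This yields 7 base and 2 × 7 = 14 extended combinations.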

The complete process is depicted in Fig. 2.

Data Pre-processing

As recommended in literature, we applied a set of pre-processing steps to avoid bias in the interpretation of the results (Tantithamthavorn and Hassan 2018).

Firstly, each SonarQube violation has been normalized for each project, so that the impact of the specific violation becomes more evident.

We applied a feature selection method to remove correlated variables that provide the classifiers with the same (or similar) information, and that might prevent them from deriving the correct explanatory meaning of the features. This step avoids multi-collinearity (O’Brien 2007). We exploited the Variance Inflation Factor (VIF) method (O’Brien 2007): for each independent variable, the VIF function measures how much the variance of the model increases because of collinearity. The features having a VIF coefficient higher than 5 were removed; the process was repeated iteratively until all the remaining features had a VIF coefficient lower than the defined threshold.
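The iterative VIF filtering can be sketched as follows. This is an illustration under stated assumptions, not the script from the replication package: it assumes NumPy is available, computes the VIF of each feature as the corresponding diagonal entry of the inverse correlation matrix (equivalent to 1 / (1 − R²) from regressing that feature on the others), and removes one feature per iteration (the one with the highest VIF). The function names and the synthetic data are hypothetical.

```python
import numpy as np

def vif_scores(X):
    """VIF of each column: diagonal of the inverse correlation matrix."""
    corr = np.corrcoef(X, rowvar=False)
    return np.diag(np.linalg.inv(corr))

def drop_collinear(X, names, threshold=5.0):
    """Drop the highest-VIF feature until every VIF is at most `threshold`."""
    X = np.asarray(X, dtype=float)
    names = list(names)
    while X.shape[1] > 1:
        scores = vif_scores(X)
        worst = int(np.argmax(scores))
        if scores[worst] <= threshold:
            break
        X = np.delete(X, worst, axis=1)
        names.pop(worst)
    return X, names

# Synthetic example: feature "c" is almost a copy of feature "a".
rng = np.random.default_rng(0)
a = rng.normal(size=200)
b = rng.normal(size=200)
c = a + 0.01 * rng.normal(size=200)
X_kept, kept = drop_collinear(np.column_stack([a, b, c]), ["a", "b", "c"])
print(kept)
```

Either "a" or "c" is discarded, because each is almost perfectly explained by the other, while the independent feature "b" is retained.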

Since we have an imbalanced dataset, with the commits labelled as fault inducing accounting for less than 5% of the total number of commits considered, we included an oversampling step to improve the performance of the classifiers used. We applied the Synthetic Minority Oversampling Technique (SMOTE) (Chawla et al. 2002): for each project, this technique generates artificial samples of the minority class (i.e., faulty commits in our case) in order to rebalance the classes. Unfortunately, we found that the technique could not be applied on all the considered projects. Particularly, SMOTE requires the presence of at least two samples of the minority class to be able to replicate them and properly oversample the dataset. The total number of samples considered for the analysis after the SMOTE was applied, along with the number of features selected through the VIF method for each subset considered, can be found in Table 6.
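The reason SMOTE needs at least two minority samples becomes clear from a minimal sketch of its interpolation step: each synthetic sample lies on the segment between a minority sample and one of its nearest minority neighbours. The code below is a simplified pure-Python illustration (the function name, parameters, and toy data are hypothetical), not the implementation used in the study.

```python
import random

def smote(minority, n_new, k=5, seed=0):
    """Generate n_new synthetic samples by interpolating minority samples."""
    if len(minority) < 2:
        # With a single sample there is no neighbour to interpolate towards.
        raise ValueError("SMOTE needs at least two minority samples")
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        # k nearest minority neighbours of `base` (squared Euclidean distance).
        others = [m for m in minority if m is not base]
        others.sort(key=lambda m: sum((x - y) ** 2 for x, y in zip(base, m)))
        neighbour = rng.choice(others[:k])
        gap = rng.random()  # position along the segment base -> neighbour
        synthetic.append([x + gap * (y - x) for x, y in zip(base, neighbour)])
    return synthetic

faulty = [[0.0, 0.0], [1.0, 1.0], [1.0, 0.0]]  # toy minority-class samples
new_samples = smote(faulty, n_new=4)
print(new_samples)
```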

Moreover, since our commit data is dependent on the time, we also included Deep Learning models, in order to include the effect of past commits in determining the faultiness of the current ones. Compared to Machine Learning models, it is, in fact, possible to include also past data as input, instead of only the current data point.

Data Preparation for the Machine Learning Analysis

In order to predict whether a commit is fault-inducing or not, based on the violation of a SonarQube rule or on the change of a metric, we identified the fault-inducing (Boolean) variable as the target (dependent) variable.

The machine learning models described in Section 2.3 only allow a two-dimensional input (N,M), where N is the number of samples and M is the number of features. This means that we can classify a commit as fault inducing or not only based on the information related to that commit itself: we cannot include the commit’s history. For this reason, to prepare the data for answering the RQs, for each commit, we selected the target variable, which is the boolean label Inducing. As input features, we prepared multiple sets, including SonarQube rules and SonarQube rules type (RQ1), product and process metrics (RQ2), and their combinations (RQ3).

It is important to notice that at this point, we are interested in classifying a snapshot of the commit as fault inducing or not; therefore, the time dependency information is not taken into account.

Data Preparation for the Deep Learning Analysis

The deep learning models described in Section 2.4 allow the use of a three-dimensional input (N,h,M), where N and M are the numbers of samples and features, as for the machine learning models, while h indicates the number of commits in each sample. This means that we are able to include the features related to the past commits in the classification of another commit (Fig. 3): we can include the history of the commit and are not limited to using only its current status.

For this reason, we had to reshape the data in order to include the past status of the commits. We used the previous 10 commits as input variables for our models and the label of the following commit as the target variable. Going more in detail, as we have multiple projects in our dataset, we first divided the data into subsets, including only one project. This helps us include only commits from the same project in each sample. After doing this, we reshaped the data using a rolling window of length 10 and step 1, selecting 10 commits and storing the following commit label as target variable. We did this iteratively for all the commits for each project. Similarly to what was done for the machine learning case, we prepared multiple sets of inputs, including SonarQube rules and SonarQube rules type (RQ1), product and process metrics (RQ2), and their combinations (RQ3).
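The reshaping step can be sketched for a single project as follows. The function name and the toy data are hypothetical; the sketch only illustrates the rolling window of length 10 and step 1 described above, assuming the commits of the project are already in chronological order.

```python
def make_windows(features, labels, history=10):
    """Build (window, target) pairs for one project.

    features: list of per-commit feature vectors, in chronological order
    labels:   list of per-commit Inducing labels (0 or 1)
    Each window holds `history` consecutive commits; its target is the
    label of the commit that immediately follows the window.
    """
    windows, targets = [], []
    for start in range(len(features) - history):
        windows.append(features[start:start + history])
        targets.append(labels[start + history])
    return windows, targets

# Toy project with 13 commits and a single feature per commit.
toy_features = [[float(i)] for i in range(13)]
toy_labels = [0] * 12 + [1]
windows, targets = make_windows(toy_features, toy_labels)
print(len(windows), len(windows[0]), targets)
```

With 13 commits and a history of 10, three samples are produced, so the resulting input has shape (3, 10, 1). Applying the function to each project separately guarantees that no window mixes commits from different projects.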

Once the new samples are obtained, they are shuffled and divided into train and test sets. Contrary to the machine learning case, here we take into account the time dependency between commits. Still, it is indeed important to notice that this is done in each individual sample. Therefore it is not necessary to consider any temporal order in the train-test split.

3.4.2 Data Analysis

We first analyzed the fault-proneness of SonarQube rules (RQ1) and of software metrics (RQ2.1) with the three Machine Learning models that performed best on this task in our previous work (Lenarduzzi et al. 2020e). Then, we applied Deep Learning models on the same data to gain better insights into the data with more advanced analysis techniques. Finally, we compared the results obtained and applied statistical tests to assess them.

Machine Learning Analysis

The three machine learning models presented in Section 2.3 were all implemented using the Scikit-learn library, except for the XGBoost model, which was implemented using its own library. All the classifiers were trained using 100 decision trees. The models were trained using a LOGO (Leave One Group Out) validation strategy. All three ML models were run on an Intel Xeon W-2145 with 16 cores and 64GB of RAM.

Deep Learning Analysis

The deep learning models described in Section 2.4 were implemented in TensorFlow (Abadi et al. 2015) and Keras (Chollet et al. 2015), using a similar approach as Fawaz et al. (2019). Both models were trained for 50 epochs, with a mini-batch size of 64, using the Adadelta algorithm (Zeiler 2012) as optimizer, which allows the model to adapt the learning rate. In order to better compare the results with the ones obtained using classical machine learning methods, the deep learning models were also trained using a LOGO validation strategy. Both models were trained on a computational cluster with a total of 32 NVIDIA Tesla P100 GPUs and 160 CPU cores dedicated to training deep learning models. Each of our models had available 1 NVIDIA Tesla P100 with 16GB of VRAM, 1 CPU core, and 40GB of RAM.

Accuracy Comparison

As validation technique we adopted the Leave One Group Out (LOGO) validation. This technique divides the dataset into train and test sets using a group as discriminant (in our case, the project). All the groups but one are used to train the model, and the remaining one is used for testing. This is done for each group in the dataset, which means that n models are trained, with n the number of projects in our data. For each fold, n − 1 groups are used for training, and 1 for testing. For our analysis, the training set was therefore composed of 28 projects, and the remaining project was used to validate the model. This process was repeated 29 times, so that every project in the dataset was in the test set exactly once. It is important to highlight that the commits of a project cannot be split between train and test set. This constraint avoids the possible bias due to the time-sensitive nature of code commits: in other words, the model never sees a commit of the test project during training.
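The splitting logic of LOGO can be sketched in a few lines of plain Python. The function name and the toy project labels are hypothetical; libraries such as Scikit-learn offer an equivalent splitter, but the sketch below avoids any dependency to make the mechanism explicit.

```python
def leave_one_group_out(groups):
    """Yield (held_out_group, train_indices, test_indices) for each group.

    groups: list with the project name of every sample (commit).
    All samples of the held-out project go to the test set, so a project
    is never split between train and test.
    """
    for held_out in sorted(set(groups)):
        train = [i for i, g in enumerate(groups) if g != held_out]
        test = [i for i, g in enumerate(groups) if g == held_out]
        yield held_out, train, test

# Toy dataset: six commits belonging to three projects.
projects = ["P1", "P1", "P2", "P3", "P3", "P3"]
folds = list(leave_one_group_out(projects))
for held_out, train, test in folds:
    print(held_out, train, test)
```

With three projects, three folds are produced, and every commit appears in the test set exactly once.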

The selection of the LOGO validation technique was based on the need for a validation strategy that would minimize the possible bias given the nature of the data that we had for our analysis. More specifically, a normal k-fold cross-validation would not be suitable, as commits from the projects in the test set would also appear in the train set, resulting in a biased classification. Also, a time-based validation would not work with our data, as there would be many folds without any fault-inducing commit (as they represent less than 5% of the data), hence the classifiers used would not work. This problem would also arise with a within-project validation, especially for those projects that had very few fault-inducing commits. This validation could be used without any problem with larger projects (e.g., Ambari, Bcel), but it would leave out many of the smaller projects, which are necessary to strengthen and better generalize our results. A time-based validation that mixes all commits from all projects would likewise create a bias, as for the k-fold cross-validation. Also, it is important to notice that, for both the machine learning and the deep learning classifiers, the temporal nature of the data is handled within each sample: the models consider the samples statically, without having memory of any time-based dependency between samples. For this reason, we could avoid using a strict time-based validation.

The alternative we were left with was therefore to use a validation strategy that would eliminate as many biases as possible while ensuring enough samples of both classes in all the folds of the validation strategy.

As for accuracy metrics, we first calculated precision and recall. However, as suggested by Powers (2011), these two measures present some biases as they are mainly focused on positive examples and predictions, and they do not capture any information about the rates and kind of errors made.

The contingency matrix (also named confusion matrix) and the related F-measure help to overcome this issue. Moreover, as recommended by Powers (2011), the Matthews Correlation Coefficient (MCC) should also be considered to understand the possible disagreement between actual values and predictions, as it involves all the four quadrants of the contingency matrix. From the contingency matrix, we retrieved the true negative rate (TNR), which measures the percentage of negative samples correctly categorized as negative, the false positive rate (FPR), which measures the percentage of negative samples misclassified as positive, and the false negative rate (FNR), which measures the percentage of positive samples misclassified as negative. The true positive rate is left out as it is equivalent to the recall. The way these measures were calculated can be found in Table 7.
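As an illustration, the measures above can be derived from the four cells of the contingency matrix using their standard definitions. The function name and the example counts are hypothetical; Table 7 remains the reference for how the measures were computed in the study.

```python
import math

def contingency_metrics(tp, fp, tn, fn):
    """Standard accuracy measures from the four contingency-matrix cells."""
    def ratio(num, den):
        # Return 0.0 instead of failing when a denominator is zero.
        return num / den if den else 0.0

    precision = ratio(tp, tp + fp)
    recall = ratio(tp, tp + fn)  # equivalent to the true positive rate
    mcc_den = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return {
        "precision": precision,
        "recall": recall,
        "f_measure": ratio(2 * precision * recall, precision + recall),
        "tnr": ratio(tn, tn + fp),
        "fpr": ratio(fp, fp + tn),
        "fnr": ratio(fn, fn + tp),
        "mcc": ratio(tp * tn - fp * fn, mcc_den),
    }

# Hypothetical fold: 10 fault-inducing and 90 non-inducing commits.
metrics = contingency_metrics(tp=8, fp=5, tn=85, fn=2)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

Note that TNR and FPR always sum to 1, as do recall and FNR, which is why the true positive rate does not need to be reported separately.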

Finally, to graphically compare the true positive and the false positive rates, we calculated the Receiver Operating Characteristics (ROC), and the related Area Under the Receiver Operating Characteristic Curve (AUC). This gives us the probability that a classifier will rank a randomly chosen positive instance higher than a randomly chosen negative one.
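The probabilistic reading of the AUC can be made concrete with a small sketch that compares every positive instance with every negative one, counting ties as half a win. The function name and the toy scores are hypothetical, and this quadratic-time formulation is meant only as an illustration of the definition.

```python
def auc_by_ranking(scores, labels):
    """AUC as the fraction of (positive, negative) pairs ranked correctly."""
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0   # positive ranked above negative
            elif p == n:
                wins += 0.5   # ties count as half
    return wins / (len(positives) * len(negatives))

# Toy predictions: two fault-inducing (1) and two non-inducing (0) commits.
toy_scores = [0.9, 0.8, 0.3, 0.1]
toy_labels = [1, 0, 1, 0]
toy_auc = auc_by_ranking(toy_scores, toy_labels)
print(toy_auc)
```

Here three of the four positive-negative pairs are ordered correctly, giving an AUC of 0.75; a classifier that assigns the same score to every commit would obtain 0.5.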

In our dataset, the proportion of the two types of commits is not even: a large majority (approx. 99%) of the commits were non-fault-inducing, and a plain accuracy would reach high values simply by always predicting the majority class. On the other hand, the ROC curve (as well as the precision and recall scores) is informative even in seriously unbalanced situations.

Statistical Analysis

To assess our results, we also compared the distributions of the software metrics groups and SonarQube rules using statistical tests. We needed to compare more than two groups with non-normally distributed data (we tested the normality by applying the Wilkinson test) and dependent samples (two or more samples are called dependent if the members chosen for one sample automatically determine which members are to be included in the second sample). To identify a set of important features and models for fault prediction, we needed to verify whether the differences in the performance achieved by the various experimented models were statistically significant. We had two possible options: the ScottKnott test (Tantithamthavorn et al. 2017, 2018) or the Nemenyi post-hoc test (Nemenyi 1962). The selection depends on the data distribution: if normality holds, we opt for ScottKnott; otherwise, we select Nemenyi. Based on the results of the test, we identified the best models, rebuilt them using only the most important features, and compared them with the ones using all the features. For each RQ, we identified which data groups differ after a statistical test of multiple comparisons (the null hypothesis is that the groups are similar), making a pair-wise comparison.

3.5 Replicability

In order to allow the replication of our study, we published the complete raw data, including all the scripts adopted to perform the analysis and all the results in the replication package (Lomio et al. 2022).

4 Results

In this Section, we first report a summary of the data analyzed, and then we answer our RQs.

4.1 RQ1. What is the Fault Proneness of the SonarQube Rules?

We considered 59,912 commits in 29 Java projects that violated 174 different rules a total of 1,823,118 times. Out of the 174 rules detected in our projects, only 161 are categorized with a SonarQube ID, and these are the ones that we used as input for our analysis, as described in Section 3.4. The 455 commits labelled by SZZ as fault-inducing violated 149 SonarQube rules 397,595 times, as reported in Table 8.

In the remainder of this Section, we refer to the SonarQube Violations only with their SonarQube ID number (e.g. S108). The complete list of rules, together with their description is reported in the online replication package (Lomio et al. 2022).

It is important to remember that, according to the SonarQube model, a Bug “represents something wrong in the code and will soon be reflected in a fault”. Moreover, they also claim that zero false positives are expected from bugs. Therefore, we should expect that Bugs represent the vast majority of the rules detected in the fault-inducing commits. However, all the three types present a similar distribution: 19.85% of Bug, 20.09% of Code Smells, and 33.04% of Security Vulnerabilities. In Table 9 we report the occurrences of the top-10 violated SonarQube rules in the fault-inducing commits. Considering the average of each rule per commit, the distribution shows that the top-10 recurrent SonarQube rules are detected in almost all the cases in the fault-inducing commits. Only a small portion (less than 3%) is also detected in the non-inducing commits (Fig. 4).

We analyzed our projects with the three selected Machine Learning models (Gradient Boost, Random Forest, and XG Boost) and with two Deep Learning models (FCN and ResNet) to predict a fault based on SonarQube rules.

We considered both the rules individually and grouped by types (Bug, Code Smell, and Vulnerability). We aimed to understand if the presence of a Sonar issue of different types has a higher probability of introducing a fault in the source code.

Figures 5 and 6 depict the box plots reporting the distribution of AUC and F-measure values obtained during the LOGO validation of the three Machine Learning and the two Deep Learning models on the considered dataset. Instead, Figs. 7 and 8 refer to FNR and FPR values. In both figures, each color indicates the model produced considering the rules individually (Blue) and grouped by types (Orange).

Considering the SonarQube rules individually, the three Machine Learning validation results reported an average AUC of 50% (as also shown in Table 10 and Fig. 5). In our previous work (Lenarduzzi et al. 2020e), the AUC obtained an average value of 80%. We believe that avoiding multi-collinearity (O’Brien 2007) (VIF) and adopting a more accurate and realistic validation approach (LOGO) provided a more reliable prediction accuracy.

Deep Learning models, instead, predicted faults better (Table 14 and Fig. 5). We can see that, in terms of AUC, both Deep Learning models outperformed the machine learning models, with an average AUC of 90%. For the other accuracy metrics, the results are also good (better than with the machine learning models).

Moreover, the FNR is higher in the case of the Machine Learning models, as they more often incorrectly identified faulty commits as normal (Fig. 7). It must be said that even if Deep Learning models look better for FNR, they incorrectly identify some normal commits as faulty (FPR - Fig. 8).

Grouping the SonarQube rules by types increases the prediction accuracy (Table 10) in terms of AUC (Fig. 5) and F-measure (Fig. 6) when we applied the Machine Learning models. Instead, Deep Learning models seem to not be affected by the grouping. The same trend can be observed looking at FNR (Fig. 7) and FPR (Fig. 8).

These differences in results and performance improvement can be explained by the curse of dimensionality. The data we are using can be considered high-dimensional when considering all the SQ rules individually. This type of data has been shown to limit the performance of machine learning models, while (in this case, at least) affecting the performance of deep learning models to a lesser extent. Machine learning models instead slightly improve their overall performance when dealing with fewer features (i.e., SQ rule types).

Based on the overall results, Deep Learning models are good fault predictors considering all the accuracy metrics.

Moreover, adopting the LOGO validation strategy increases the overall performance of both Deep and Machine Learning models, as we can see in Table 15 and Figs. 23 and 24 reported in Appendix A.

4.2 RQ2. What is the Fault Proneness of Software Metrics?

In this Section, we investigated the fault proneness of product and process metrics considering the ones proposed by Rahman and Devanbu (2013), Kamei et al. (2012), and SonarQube suites (Table 11).

As for RQ1, Figs. 9 and 10 depict the box plots reporting the distribution of AUC and F-measure values obtained during the LOGO validation of the three Machine Learning and the two Deep Learning models on the considered dataset. Instead, Figs. 13 and 14 refer to FNR and TNR values. In both figures, each color indicates the model produced considering different features.

Similarly to RQ1, we used the three selected Machine Learning models (Gradient Boost, Random Forest, and XG Boost) and the two Deep Learning models (FCN and ResNet) to predict a fault based on software metrics. Table 12 reports all the accuracy metrics for the machine learning and the deep learning models.

Considering the results obtained with Machine Learning models, the Kamei et al. (2012) metrics and the Rahman and Devanbu (2013) metrics work better individually (91% and 90% on average, respectively), while SonarQube metrics present the lowest accuracy (60% on average). Combining different metrics provides a benefit only for SonarQube metrics (Table 12, Figs. 9 and 10).

On the contrary, Machine Learning models correctly identified the non-faulty classes (TNR - Fig. 14), while for Deep Learning models it depends on which software metrics are used as predictors.

As happened for RQ1, adopting the LOGO validation strategy increases the overall performance of both Deep and Machine Learning models (Table 16 and Figs. 25 and 26 reported in Appendix A).

To assess whether the accuracy metric distributions were statistically different when considering different metrics combinations, we first checked the normality of the data and, since it was not satisfied, we ran the post-hoc Nemenyi rank test (Nemenyi 1962) on all the Machine and Deep Learning models. Due to space limitations, we only report the results for the most accurate Machine and Deep Learning models for all the considered software metrics: XGBoost and ResNet. We report the statistical results for the AUC (Figs. 11a and 12a) and the F-measure (Figs. 11b and 12b) of the models trained using the Rahman and Devanbu (2013) and Kamei et al. (2012) metrics suites. Statistically significant differences are depicted in dark violet. The complete results are reported in our online appendix (Lomio et al. 2022).

Considering XGBoost, the AUC values (Fig. 11a) show statistically significant differences between the models built with SonarQube metrics (SQ) and those built with the Rahman and Devanbu (2013) and Kamei et al. (2012) metrics. Moreover, there is a statistically significant difference when considering the other metrics combined together. The same trend is observable for the values of F-measure (Fig. 11b). Looking at the ResNet model, statistically significant differences in AUC (Fig. 12a) are observed between Kamei et al. (2012) and SonarQube metrics, while there is no substantial difference between the Rahman and Devanbu (2013) and Kamei et al. (2012) ones (Table 13).

4.3 RQ3. To What Extent Can SonarQube Rules Impact the Performance of Fault Prediction Models that Leverage Process and Product Metrics?

In this Section, we considered the metrics combinations used in RQ2, also including the SonarQube rules. Table 10 reports the accuracy metrics obtained with the SonarQube rules individually and with the SonarQube rule types using the Machine Learning models, while Table 14 presents the results obtained with the Deep Learning models (Figs. 13 and 14). Figures 15 and 16 depict the box plots reporting the distribution of AUC and F-measure values obtained during the LOGO validation of the three Machine Learning and the two Deep Learning models on the considered dataset, considering the SonarQube rules individually. Instead, Figs. 17 and 18 refer to the SonarQube rules grouped by types. In both figures, each color indicates the model produced considering different models.

As for the other RQs, to assess whether the accuracy metric distributions were statistically different when considering in the first case SonarQube rules and in the second case the rule types, we ran the post-hoc Nemenyi rank test (Nemenyi 1962). We considered all the metric combinations and all the models (Figs. 27a, 28, 29, 30, 31, 32, and 33b in Appendix A).

SonarQube Rules

Evaluating the effect of including SonarQube rules with each metric combination, the observed change in terms of AUC and F-measure is not substantial (Table 10) when adopting Machine Learning models. Unexpectedly, the change is negative in all the combinations except for the pair SQ rules + SQ metrics with Gradient Boost as model and for SQ rules + Rahman and Devanbu (2013) + Kamei et al. (2012) metrics with Random Forest as model, where the change is significant. Instead, the results obtained with Deep Learning models turned out to be the best in terms of AUC. All the combinations significantly benefit from the inclusion of SonarQube rules. Considering the other accuracy metrics, we can observe the same trend as for AUC and F-measure. The FNR is consistently below 20%, the TNR reaches up to 97%, and the FPR is below 3%. These results confirm the better accuracy of Deep Learning compared with Machine Learning models. Deep Learning models are able to correctly identify a faulty commit, with a low probability of incorrect identification.

SonarQube rule types

The scenario is entirely different when including SonarQube rule types, since we obtained different results from the ones seen with Machine Learning models. For all the combinations of SonarQube rules and metrics, we observed a significant discrepancy of results for AUC and F-measure in both models. SQ metrics and Rahman and Devanbu (2013) + Kamei et al. (2012) metrics benefit from the inclusion of the SonarQube rules, while Kamei et al. (2012) metrics and Rahman and Devanbu (2013) metrics are not affected. The other combinations see a decrease in the AUC. Instead, the effect observed with Deep Learning models is negligible. Considering the other accuracy metrics, we can observe the same trend as for the results obtained with the individual rules.

4.4 RQ4. Which is the Best Combination of Metrics and the Best Model for the Fault Prediction?

As for the previous RQ, to assess whether the performance distributions of the different software metrics and SonarQube rules were statistically different when considering different combinations of Machine Learning and Deep Learning models, we ran the post-hoc Nemenyi rank test (Nemenyi 1962). Due to space limitations, we only report the results for the most accurate feature combinations (SonarQube rules, product and process metrics) and for the most accurate models for all the considered features. For consistency, we show the p-values of the Nemenyi rank test computed on the distribution of AUC and F-measure values by means of heatmaps (Figs. 19a, b, 21a and b), where statistically significant differences are depicted in dark violet. The complete results are reported in our online appendix (Lomio et al. 2022).

Nemenyi test p-values obtained for comparing the models trained on SQ rule types, SQ metrics and Rahman and Devanbu (2013) using the different models (RQ4)

Looking at the results obtained in the previous RQs, and considering the values of the accuracy metrics obtained, we identified the Deep Learning models as more accurate than the Machine learning ones. Notably, the ResNet was shown to outperform all the other models, including the FCN.

The two feature sets in which the ResNet achieves the best results are:

- SonarQube rule types + SonarQube + Rahman and Devanbu (2013) metrics (Fig. 19a and b)

- SonarQube rule types + SonarQube + Rahman and Devanbu (2013) + Kamei et al. (2012) metrics (Fig. 20a and b)

Figures 19a and 20a show statistically significant differences (depicted in dark violet) in AUC values between Machine Learning and Deep Learning models. These results confirm the large positive effect that Deep Learning models provide to the two identified feature sets. On a similar note, Figs. 19b and 20b show, in terms of F-measure, the presence of statistically significant differences in the same feature sets as for AUC. This further supports the contribution provided by the Deep Learning models. It can be further seen in Fig. 21a and b and Fig. 22a and b that the SonarQube rule types + SonarQube + Rahman and Devanbu (2013) metrics and SonarQube rule types + SonarQube + Rahman and Devanbu (2013) + Kamei et al. (2012) metrics yield significantly better results when used as feature set for the ResNet model.

5 Discussion

In this Section, we discuss the results obtained according to the RQs. The results achieved revealed a number of insights that may lead to concrete implications for the software engineering research community.

Only SonarQube Rules are Not Enough

One of the main outcomes of our study revealed the ability of SonarQube rules alone to predict faults only under certain conditions. In order to achieve the best performance, the analysis should be run considering Deep Learning models as classifiers. Unfortunately, Machine Learning models led to poorly accurate results and did not provide comparable values. The obtained values are lower, making the prediction similar to a “random guess”. Adopting historical data instead of a single snapshot (as for Machine Learning models) can be better when the commit data is time-dependent. Even if these results with Machine Learning models are contrasting with the previous ones (Lenarduzzi et al. 2019b), they are more reliable and realistic because of the new preprocessing approach and the more accurate validation strategy.

However, in the latter case, when we considered the SonarQube rule types as predictors, we observed unexpected results. We observed that Machine Learning models have benefited from the grouping, while Deep Learning models seem unaffected by it. We should notice that the benefit achieved with Machine Learning models is small but significant.

In light of these facts, our suggestion is to nonetheless include the rule types as predictors, mainly because the analysis is simpler to monitor: the number of variables is smaller than when considering all the rules without grouping.

Our results, therefore, represent a call for further investigation regarding the role of static analysis tools for fault prediction. Different static analysis tools can classify and group similar rules differently or provide different classifications. It would be interesting to evaluate whether the trend observed with SonarQube can also be found with other static analysis tools, such as FindBugs or Checkstyle. In particular, the focus should be on the case where the rule types are considered as predictors, to confirm or refute the results obtained with SonarQube. It would be important to determine whether the negligible effect of the rule types observed with Deep Learning is intrinsic to the adopted tool or can be generalized. In particular, it is important to determine whether the trend is attributable to the classifier and not to the static analysis tool.

Product and Process Metrics. Which Ones?

The performances reached adopting process and product metrics as fault predictors are higher in terms of AUC and F-measure. This is particularly evident when considering Rahman and Devanbu (2013) and Kamei et al. (2012) metrics individually, confirming the previous study results (Kamei et al. 2012; Pascarella et al. 2019). However, when these two metrics sets are combined the performance decreases. This phenomenon deserves further and deeper investigation. Considering the third metric set provided by SonarQube, the performances are inferior; however, combined with Rahman and Devanbu (2013) or with Kamei et al. (2012) set the prediction accuracy increases, especially when combined with Kamei et al. (2012) metrics. Considering all the three metric sets together does not provide an evident improvement.

SonarQube Rules, Product and Process Metrics. All Together?

Even if we achieved a higher accuracy when considering Rahman and Devanbu (2013) and Kamei et al. (2012) metrics, including SonarQube rules still improves the prediction. The accuracy metrics reached very high values (more than 95%), better than expected. These results deserve further focus and a deep investigation in order to determine whether this is an isolated case attributable only to SonarQube or can be generalized to other static analysis tools. As for the previous case, when we considered only the SonarQube rules as features, we suggest investigating in depth the role of the other static analysis tools in combination with the different software metrics.

Machine Learning or Deep Learning?

We observed that the classifiers’ choice between single snapshot (Machine Learning) and historical data (Deep Learning) and inside the single classifier categories has a significant impact on the resulting capabilities.

Considering the three Machine Learning models, we notice that, as expected, boosting methods achieved better fault detection accuracy compared with traditional ensemble models such as Random Forest. We believe that the reason lies in the characteristics of boosting models, which iteratively train a weak classifier on subsequent training data, assigning a weight to each instance of the training set and modifying it at each iteration, increasing the weight of the misclassified samples. Consequently, the boosting methods focus more on misclassified samples, which results in better performance.

The results distinguished the performance of Machine Learning and Deep Learning models in terms of accuracy. Deep Learning models work better than Machine Learning ones, and the difference between the two Deep Learning models is negligible. The performance of the ResNet was expected, as similar results were also found in other time series classification tasks (Lomio et al. 2019). The better performance of the deep learning models can also be attributed to the fact that they can take into account the time dependency of the commits, which can bring additional useful information that should be considered (Saarimäki et al. 2022). Compared with Machine Learning models, Deep Learning increases the AUC, enables correct fault identification, and decreases the probability of an incorrect identification.

Regarding the preprocessing approach, the dataset is imbalanced: the commits labeled as fault-inducing represent a very small portion of the total number of commits. We found that, independently of the classifier category, the inclusion of an oversampling step (e.g., SMOTE) improves the performance of the classifiers. Therefore, we recommend that researchers consider oversampling techniques in similar contexts.
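
As a rough illustration of the idea behind SMOTE, the sketch below creates synthetic minority samples by interpolating between a minority sample and one of its nearest minority neighbors. It is a simplified, dependency-free approximation, not the SMOTE implementation used in this study; the function name, parameters, and toy data are assumptions.

```python
import random


def smote_like(minority, n_new, k=2, seed=0):
    """Generate n_new synthetic samples from a list of minority feature vectors.

    Requires at least two minority samples. Each synthetic sample lies on the
    segment between a randomly chosen minority sample and one of its k nearest
    minority neighbors (squared Euclidean distance).
    """
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        neighbours = sorted(
            (m for m in minority if m is not base),
            key=lambda m: sum((a - b) ** 2 for a, b in zip(base, m)),
        )[:k]
        other = rng.choice(neighbours)
        gap = rng.random()  # position along the segment, in [0, 1)
        synthetic.append([a + gap * (b - a) for a, b in zip(base, other)])
    return synthetic


# Hypothetical feature vectors of three fault-inducing commits.
minority = [[0.0, 0.0], [1.0, 1.0], [2.0, 0.0]]
new_samples = smote_like(minority, n_new=5)
```

In practice, oversampling is usually applied to the training portion only, so that synthetic samples do not leak into the data used for evaluation.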

6 Threats to Validity

In this section, we discuss the threats to validity, including internal, external, construct validity, and reliability. We also explain the tactics adopted to mitigate them (Yin 2009).

Construct Validity

This threat concerns the relationship between theory and observation due to possible measurement errors. SonarQube is one of the static analysis tools most adopted by developers (Vassallo et al. 2019a; Avgeriou et al. 2021). Nevertheless, we cannot exclude the presence of false positives or false negatives in the detected warnings; further analyses on these aspects are part of our future research agenda. As for code smells, we employed a manually validated oracle, hence avoiding possible issues due to the presence of false positives and negatives. We relied on the ASF practice of tagging commits with the issue ID. However, in some cases, developers could have tagged a commit differently. Moreover, the results could also be biased by detection errors of SonarQube. We are aware that static analysis tools suffer from false positives. In this work, we aimed to understand the fault-proneness of the rules adopted by the tools without modifying them, in order to reflect the real impact that developers would experience while using the tools. In future work, we plan to replicate this study by manually validating a statistically significant sample of violations, to assess the impact of false positives on the achieved findings.

In addition, it is worth mentioning that, while SonarQube is a very well-known and widely used static analysis tool, there are many others from which it differs in the number and type of metrics. This could lead to very different prediction results in terms of fault-proneness. For this reason, in the future we plan to extend the analysis by including and comparing static analysis tools beyond SonarQube.

As for the analysis time frame, we analyzed commits until the end of 2015, considering all the faults raised until the end of March 2018. We expect that the vast majority of the faults have been fixed. However, it is possible that some of these faults had not yet been identified and fixed.

Internal Validity

This threat concerns internal factors related to the study that might have affected the results. As for the identification of the fault-inducing commits, we relied on the SZZ algorithm (Śliwerski et al. 2005). We are aware that, in some cases, the SZZ algorithm might not have identified fault-inducing commits correctly because of the limitations of the line-based diff provided by git, and also because bugs can sometimes be fixed by modifying code in locations other than the lines that induced them. Moreover, we are aware that the imbalanced data could have influenced the results (more than 90% of the commits were non-fault-inducing). However, the application of solid machine learning techniques commonly applied to imbalanced data could help to reduce this threat.

External Validity

Our study considered 29 Java open-source software projects with different scope and characteristics included in the Technical Debt dataset. All 29 projects are members of the Apache Software Foundation, which incubates only systems that follow specific and strict quality rules. Our empirical study was not based on a single application domain. We avoided this because we aimed to find general mathematical models for the prediction of the number of bugs in a system. Choosing only one or a very small number of application domains could have limited the generality of our study, as only prediction models from the selected application domain would have been considered. The selected projects stem from a very large set of application domains, ranging from external libraries, frameworks, and web utilities to large computational infrastructures. We analyzed commits until the end of 2015, considering all the faults raised until the end of March 2018. We are aware that more recent data could provide different results.

We are also aware that different programming languages and projects at different maturity levels could provide different results.

Conclusion Validity

This threat concerns the relationship between the treatment and the outcome. We adopted different machine learning and deep learning models to reduce the bias of the low prediction power that a single classifier could have. We also addressed possible issues due to multicollinearity, missing hyper-parameter configuration, and data imbalance. We recognize, however, that other statistical or machine learning techniques might have yielded similar or even better accuracy than the techniques we used. We also cannot exclude that the results might differ slightly when considering a within-project validation. Unfortunately, because fewer than 5% of the samples belong to the positive class, the only way to have enough samples of both classes is to consider all projects together, that is, to use a cross-project validation setting. We tried a within-project validation, but it “broke” the algorithms used, since many data “splits” contain no fault-inducing commits. For this reason, we chose a cross-project validation.
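
The problem with within-project splits can be illustrated with a small sketch that counts how many folds of a single project contain no fault-inducing commit. The contiguous fold layout, the function name, and the toy labels are assumptions for illustration; they are not the exact splitting procedure used in this study.

```python
def folds_without_positives(labels, n_folds):
    """Return the indices of contiguous folds that contain no positive label.

    labels:  list of 0/1 labels (1 = fault-inducing) for one project.
    n_folds: number of folds, assumed to be at most len(labels).
    """
    fold_size = len(labels) // n_folds
    empty = []
    for i in range(n_folds):
        start = i * fold_size
        # The last fold absorbs any remainder.
        end = len(labels) if i == n_folds - 1 else start + fold_size
        if sum(labels[start:end]) == 0:
            empty.append(i)
    return empty


# Hypothetical project: 20 commits, only one of them fault-inducing (5%).
project_labels = [0] * 20
project_labels[7] = 1
empty_folds = folds_without_positives(project_labels, n_folds=5)
```

With so few positives in a single project, most folds contain only the negative class, so a classifier cannot be trained or evaluated on them; pooling all projects makes it far more likely that both classes appear in every split.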

7 Related Work

Software defect prediction is one of the most active research areas in software engineering. Fault prediction has been investigated in depth in recent years, with research focusing mainly on improving the granularity of the predictions (Pascarella et al. 2019), such as method or file level (Menzies et al. 2010; Kim et al. 2011; Bettenburg et al. 2012; Prechelt and Pepper 2014), adding features, e.g., code review (McIntosh and Kamei 2018) or change context (Kondo et al. 2019), or applying machine and deep learning models (Hoang et al. 2019; Lenarduzzi et al. 2020e).

As factors to predict bug-inducing changes, some authors adopted change-based metrics (McIntosh and Kamei 2018), including size (Kamei et al. 2013), the history of a change as well as developer experience (Kamei et al. 2013), or churn metrics (Tan et al. 2015). Another study included code review metrics in the predictive models (McIntosh and Kamei 2018). Another aspect investigated was the reduction of the effort required to diagnose a defect (Pascarella et al. 2019). Researchers included several other software properties, such as structural (Basili et al. 1996; Chidamber and Kemerer 1994), historical (D’Ambros et al. 2012; Graves et al. 2000), and alternative (Bird et al. 2011; Pascarella et al. 2020; Palomba et al. 2017) metrics. The results achieved when considering software properties together with product and process metrics are the most promising ones (Pascarella et al. 2020).

In recent years, researchers have mainly investigated shorter-term defect analysis, since this better fits developers’ needs (Pascarella et al. 2018b). Moreover, by adopting shorter-term approaches, developers can immediately identify defects in their code (Yang et al. 2016).

Two studies included static analysis warnings as factors (Querel and Rigby 2018; Trautsch et al. 2020) for building just-in-time defect prediction models. According to their results, static analysis warnings can improve the accuracy of the predictive models (Querel and Rigby 2018). Moreover, both code metrics and static analysis warnings are correlated with bugs and can improve the prediction (Trautsch et al. 2020).

The most widely adopted approaches are based on supervised (Graves et al. 2000; Hall et al. 2012; Jing et al. 2014) and unsupervised models (Fu and Menzies 2017; Li et al. 2020). These models consider features such as product features (e.g., CK metrics; Chidamber and Kemerer 1994) or process features (e.g., entropy of the development process; Hassan 2009b).

Significant milestones for just-in-time defect prediction are represented by the work of Kamei et al. (2012, 2016). They proposed a just-in-time prediction model to predict whether or not a change will lead to a defect, with the aim of reducing developers’ and reviewers’ effort. In particular, they applied logistic regression considering different change measures such as diffusion, size, and purpose, obtaining an average accuracy of 68% and an average recall of 64%. More recently, Pascarella et al. (2019) complemented their results by considering the attributes necessary to filter only those files that are defect-prone. The reduced granularity is justified by the fact that 42% of defective commits are partially defective, i.e., composed of both files that are changed without introducing defects and files that are changed introducing defects. Furthermore, in almost 43% of the changed files a defect is introduced, while the remaining files are defect-free.

Fault prediction was investigated by adopting Machine Learning models, focusing on the role of features that can represent a code change, such as change size or change history, and using them as predictors (Kamei et al. 2013; Pascarella et al. 2018a, 2019).

Machine learning techniques were also widely applied to the detection of technical issues in the code, such as code smells (Arcelli Fontana et al. 2016; Di Nucci et al. 2018; Pecorelli et al. 2020b; Lujan et al. 2020). While machine learning has been mainly applied to detect different code smell types (Khomh 2009; Khomh et al. 2011), only a few studies applied machine learning techniques to investigate the rules of static analysis tools, such as SonarQube (Falessi et al. 2017; Tollin et al. 2017; Lenarduzzi et al. 2020e) or PMD (Lenarduzzi et al. 2021c).

Considering defect prediction, Yang et al. (2017) proposed a novel approach, TLEL, based on a two-layer ensemble learning technique. In the inner layer, they adopted bagging based on decision trees to build a Random Forest model. In the outer layer, they ensembled many different Random Forest models.

Machine learning techniques were applied to detect multiple code smell types (Arcelli Fontana et al. 2016), estimate their harmfulness (Arcelli Fontana et al. 2016), determine their intensity (Arcelli Fontana and Zanoni 2017), and classify code smells according to their perceived criticality (Pecorelli et al. 2020b). The selection of the training data can influence the performance of machine learning-based code smell detection approaches (Di Nucci et al. 2018), since the code smells detected in the code generally have few occurrences (Pecorelli et al. 2020a).

Moreover, machine learning algorithms were successfully applied to derive code smells from different software metrics (Maneerat and Muenchaisri 2011).

Considering the detection of static analysis tool rules, SonarQube was the most investigated tool, with studies focusing on the effect of the presence of its rules on fault-proneness (Falessi et al. 2017; Lenarduzzi et al. 2020e) or change-proneness (Tollin et al. 2017).

Machine learning approaches were successfully applied: results showed that 20% of faults could have been avoided if the SonarQube-related issues had been removed (Falessi et al. 2017); however, the harmfulness of the SonarQube rules is very low (Lenarduzzi et al. 2020e). Positive results were also obtained when considering class change-proneness (Tollin et al. 2017).

Machine learning approaches were also used to determine whether the SonarQube technical debt could be predicted based on software metrics (Lenarduzzi et al. 2019a). The results indicated that an accurate prediction was not achievable. Another aspect that has benefited from machine learning is the evaluation of the remediation effort calculated by SonarQube (Saarimaki et al. 2019; Baldassarre et al. 2020). The results highlighted that the model overestimates the time needed to fix the Technical Debt-related issues.

Thanks to the rapid increase in computing performance in recent years, Deep Learning is becoming popular in many domains (Hinton and Salakhutdinov 2006), such as image classification (Krizhevsky et al. 2017) or natural language processing (Sarikaya et al. 2014). There are also many existing studies that leverage deep learning techniques to address other problems in software engineering (White et al. 2015; Lam et al. 2015; Gu et al. 2016, 2018; Guo et al. 2017). Given these promising results, Deep Learning could also be a valid approach to adopt in bug prediction in order to improve the performance of just-in-time defect prediction.

Deep learning can help overcome the weaknesses of logistic regression when features need to be combined to generate new ones. This approach was successfully applied by Yang et al. (2015), who considered 14 traditional change-level features in order to predict bugs.

The benefit of using Deep Learning instead of Machine Learning to improve the performance of just-in-time defect prediction is still under investigation (Yang et al. 2015; Abozeed et al. 2020; Ferenc et al. 2020; Wang et al. 2020). The results achieved so far demonstrate a promising improvement in bug prediction accuracy compared with other approaches (32.22% more bugs detected) (Yang et al. 2015), especially for small datasets and in feature selection (Abozeed et al. 2020), and in predicting the presence of bugs in classes from static source code metrics (Ferenc et al. 2020).