Abstract

Context

Software engineering is a social and collaborative activity. Communicating and sharing knowledge between software developers requires much effort. Hence, the quality of communication plays an important role in influencing project success. To better understand the effect of communication on project success, more in-depth empirical studies investigating this phenomenon are needed.

Objective

We investigate the effect of using a graphical versus textual design description on co-located software design communication.

Method

We conducted a family of experiments involving a mix of 240 software engineering students from four universities. We examined how different design representations (i.e., graphical vs. textual) affect the ability to Explain, Understand, Recall, and Actively Communicate knowledge.

Results

We found that the graphical design description is better than the textual one in promoting Active Discussion between developers and improving the Recall of design details. Furthermore, compared to its unaltered version, a well-organized and motivated textual design description, used for the same amount of time, enhances the recall of design details and increases the amount of active discussion at the cost of reducing the perceived quality of explaining.

1 Introduction

Software engineering is a social activity and requires intensive communication and collaboration between developers. In large companies, developers work in different development teams and collaboratively communicate with many stakeholders. In such a setting, the quality of communication between the stakeholders plays an important role in reducing the overall development effort of teams, and thus of projects. In a multiple-case study on challenges and efforts of model-based software engineering approaches, Jolak et al. (2018) analyzed the distribution of efforts over different development activities in two software engineering projects. Interestingly, they found that communicating and sharing knowledge dominates the effort spent by developers. The effort spent on communication, as Jolak et al. found, exceeds the effort that developers spent on any of the other observed development activities, such as requirements analysis, design, coding, testing, integration, and deployment.

Furthermore, poorly defined software applications (due to miscommunication between stakeholders) can affect the final structure and/or behavior of these applications. This is in line with Jarboe (1996) and Kortum et al. (2017) who consider that the quality of communication does influence developers’ activity experience and achievement, and therefore customer’s satisfaction.

The aforementioned studies underline the importance of communication in Software Engineering (SE). They also highlight the need to study communication in depth to determine elements or criteria of its efficiency and effectiveness. The study we present in this article is in line with this concern: we investigate how different software architecture design representations affect the communication of design knowledge. In particular, we compare textual vs. graphical representations. In contrast to a textual representation, a graphical representation provides a two-dimensional visuospatial description of information reflecting the actual spatial configurations of the parts of a process or system (Tversky 2018). With respect to knowledge communication, we look into the following communication aspects:

1. Explaining: or knowledge donating, communicating the personal intellectual capital from one person to others (De Vries et al. 2006).

2. Understanding: or knowledge collecting, receiving others’ intellectual capital (De Vries et al. 2006).

3. Recall: or memory recall, recognizing or recalling knowledge from memory to produce or retrieve previously learned information (Anderson L.W. et al. 2001).

4. Collaborative Interpersonal Communication (Soller 2001), which includes:

(a) Active Discussion: questioning, informing, and motivating others.

(b) Creative Conflict: arguing and reasoning about others’ discussions.

(c) Conversation Management: coordinating and acknowledging communicated information.

1.1 Rationale

Kauffeld and Lehmann-Willenbrock (2012) suggested that effective team communication and information flow are prerequisites for the success of software development projects. In a study on requirements practices in start-ups, Gralha et al. (2018) identified knowledge management and communication as increasingly important strategies for risk mitigation and prevention. As a consequence, research into the factors that influence how, and how much, people communicate and share their knowledge is relevant for maximizing the aforementioned advantages.

Graphical descriptions encode and present knowledge differently from textual descriptions. In particular, they provide a visuospatial representation of information, and can recraft this information into a multitude of forms by using fundamental graphical elements, such as dots and lines, nodes and links (Tversky 2018). Moreover, graphical descriptions encourage spatial inferences (e.g., inferences about the behavior, causality, and function of a system) to substitute for and support abstract inferences (Bobek and Tversky 2016).

This is in line with Moody (2010), who states that graphical and textual knowledge representations are processed differently by the human mind. Empirical evidence on how graphical descriptions affect developers’ achievement and development productivity is still underwhelming, as reported by Hutchinson et al. (2011). Moreover, Meliá et al. (2016) report that the software engineering field lacks a body of empirical knowledge on how different representations (graphical vs. textual) could provide support for improving software quality and development productivity.

In this study, we focus on design knowledge communication/transfer between two software developers, where, by using a graphical vs. textual software design description, one developer takes the role of design Explainer (i.e., design knowledge owner), and one developer takes the role of design Receiver (i.e., design knowledge receiver). Rus et al. (2002) reported that the greatest challenge for companies is to retain knowledge: mainly tacit knowledge, but also explicit knowledge (e.g., models).

Companies such as Ericsson Software Technology (Footnote 1) and sd&m (Footnote 2) started initiatives (Ericsson’s initiative is called “Experience Engine”) to exchange knowledge between developers by connecting two individuals, a problem owner and an experience communicator. The problem owner is the employee who requires information or support to solve a specific problem, and the experience communicator is the employee who has in-depth knowledge of the problem domain. Once connected, the experience communicator has to educate the problem owner on how to solve the problem. The aforementioned initiatives illustrate that our study has practical relevance.

1.2 Objective and Contribution

We planned and conducted a family of experiments with the goal of understanding and comparing the effect of using a Graphical Software Design description (GSD) versus a Textual Software Design description (TSD) on software design communication.

Through this, we contribute to the body of empirical knowledge on the practical use of graphical versus textual software design descriptions. Such knowledge might lead to achieving more effective software design communication, which in turn would help in reducing the total effort of software development activities.

Consequently, we address the research objective by answering the following question:

R.Q.1 How does the representation of software design (graphical vs. textual) influence [Communication Aspect]?

Where the investigated [Communication Aspect]s are the following: (1) Design Explaining, (2) Design Understanding, (3) Design Recall, (4) Active Discussion, (5) Creative Conflict, and (6) Conversation Management. We first examine how each software design representation (i.e., graphical or textual) affects these six aspects of communication. Then, we compare the effect of using the graphical vs. textual software design description on the considered communication aspects. To address certain threats to external validity, we also compare the effect of using a cohesive (Footnote 3) and motivated (Footnote 4) TSD versus a less cohesive and unmotivated TSD on software design communication. In particular, we address the following research question:

R.Q.2 Does using a cohesive and motivated TSD influence [Communication Aspect]?

Where the investigated [Communication Aspect]s are the following: (1) Design Explaining, (2) Design Understanding, (3) Design Recall, (4) Active Discussion, (5) Creative Conflict, and (6) Conversation Management.

The remainder of this paper is organized as follows: We discuss the related work in Section 2. We describe the family of experiments in Section 3. We present the results in Section 4. We discuss the results and threats to validity in Section 5. Finally, we conclude and describe the future work in Section 6.

2 Related Work

Effective communication depends on various factors, such as personality (Cruz et al. 2015), distance (Jolak et al. 2018), or knowledge representation (graphical vs. textual) (Heijstek et al. 2011; Meliá et al. 2016; Sharafi et al. 2013).

In a recent study on design activities of co-located and distributed collaborative software design (Jolak et al. 2018), the authors investigate whether advanced technologies for distributed communication can replace personal meetings. The main result is that co-located face-to-face meetings remain relevant as facial reactions and body language are often not transmitted by current communication software. This is partly due to technical challenges, such as unstable or slow Internet connection, that affect communication results.

In contrast to that study, our family of experiments does not investigate distributed communication and is conducted without the use of communication tool-support to mitigate the effects of technical challenges.

Meliá et al. (2016) describe an experiment in which students perform maintenance tasks on a graphical model and on a textual model. The authors investigate whether a model’s syntax affects subjective and objective performance and whether the notation influences developer satisfaction. Objective performance is measured by the number of correct answers in the task, whereas subjective performance is the performance as perceived by the developer. In the experiment, participants were divided into two groups: one group worked with a model for selling tickets, and the other group worked with a model for organizing online courses. Participants received the models in a textual and in a graphical notation and were asked to find 5 errors in each notation. They also received 5 tasks in which they had to extend and modify each model. Participants using the textual notation performed significantly better in finding errors in the domain model and also took less time to finish the task. Nonetheless, participants preferred to work with the graphical notation. The authors believe this originates from the fact that students learn graphical modeling languages such as UML as stereotypes for domain models, whereas less attention is given to textual modeling languages.

In another study, the authors measure how well participants extract the required information (such as architectural design decisions) from different media (Heijstek et al. 2011). The researchers collected participant-specific information in two questionnaires, filmed participants during tasks, and asked them to think out loud. The experiment comprised four architectures, each of which consisted of a graphical and a textual description. Participants (students and professional developers) were asked three questions per architecture. The authors observed that no notation was clearly superior in communicating architecture design decisions. Nonetheless, participants tended to first look at the graphical notation before reading the text. The authors attribute this to the clarity of the graphical representation, which enables participants to grasp the structure of the model more quickly.

In a case study on comparing graphical versus textual representations, the researchers measure the accuracy and time spent to solve three requirement comprehension tasks (Sharafi et al. 2013). The study does not indicate results concerning accuracy (both notations yield correct results), but participants spent less time when working with textual requirements. Participants preferred to work with the graphical representation nonetheless. Also, when working with a combination of graphical and textual representations, the study measured the best results concerning time and accuracy.

Other research investigated a combined usage of textual and graphical representations (Liskin 2015). The researcher interviewed 21 practitioners to find out how developers work with different requirements artifacts of various granularity and notation and how they handle scattered information. The researcher found out which artifacts the practitioners used and which problems they encountered. A share of 70% of the interviewees reported issues when working with multiple artifacts. The main shortcomings were inconsistencies and the additional effort for documenting.

In contrast to our research, the studies described in (Heijstek et al. 2011; Liskin 2015; Meliá et al. 2016; Sharafi et al. 2013) do not observe communication based on using graphical and textual representations, but how well both notations are suited to share information. Therefore, more research concerning both notations as a base for explaining and discussing software architectures is required.

Other related research aims to find out if drawing improves the recall ability, compared to making textual notes (Meade et al. 2018). The participants of this research were divided into two groups, younger and older adults, to measure whether the notation influences both groups in the same way. Participants were told 30 nouns, one after the other, and asked to either draw or to note the items textually. Afterwards, they were asked to recall and list all items. For the drawn nouns both groups performed equally well, but for the textual words, young adults performed better. This indicates that a graphical notation can compensate for the age-related deficit.

To summarize the related work section, Table 1 provides a brief comparison between the research objectives of our study and related work.

3 Experimental Design

This section describes the protocol that is used to perform the experiments and analyze the results. In particular, we report the experiment according to the guidelines suggested by Jedlitschka et al. (2008).

3.1 Family of Experiments

Easterbrook et al. (2008) highlighted that controlled experiments help to investigate testable hypotheses where one or more independent variables are manipulated to measure their effect on one or more dependent variables. A family of experiments facilitates building knowledge and extracting significant conclusions through the execution of multiple experiments pursuing the same goal. Basili et al. (1999) reported that families of experiments can help to (i) generalize findings across studies and (ii) contribute to important and relevant hypotheses that may not be suggested by individual experiments.

We planned and conducted a family of experiments based on the methodology of Wohlin et al. (2012). All experiments in the family use a between-subject design to minimize learning effects and transfer across conditions. The family of experiments consists of one original experiment and three external replications involving 240 participants in total (see Table 2).

The original experiment (OExp) was conducted at the University of Gothenburg involving a mix of 50 B.Sc. and M.Sc. Software Engineering students. In OExp, we study the effect of using a graphical software design description (GSD) vs. a textual software design description (TSD) on software design communication. The first replication (REP1) was conducted at RWTH Aachen University with 36 M.Sc. and Ph.D. SE students. The second replication (REP2) was conducted at the University of Lille involving 94 M.Sc. SE students. REP1 and REP2 replicated the original experiment as accurately as possible (strict replications (Basili et al. 1999)). The third replication (REP3) was conducted at the Slovak University of Technology with 60 B.Sc. and M.Sc. SE students. REP3 varied the manner in which the original experiment was conducted, so that certain threats to external validity were addressed. More specifically, REP3 is a replication that varies a variable intrinsic to the object of study (Basili et al. 1999): we study the effect of using a graphical (GSD) vs. an altered textual software design description (A-TSD) on software design communication. More details regarding this variation are provided in Section 3.5.

The experimental material and communication language in OExp, REP1, and REP3 were in English. In contrast, the experimental material and communication language in REP2 (which was conducted at the University of Lille, France) were in French. The gender distribution in each experiment is also shown in Table 2. The majority of the participants are male (79%).

3.2 Scope

Developers intensively communicate ideas, decisions, progress, and updates throughout the software development life-cycle. In this study, we focus on investigating co-located, face-to-face software design communication.

Design communication plays a fundamental role in transferring software design decisions (i.e., instructions for software construction) from architects/analysts to programmers or other stakeholders. Also, the quality of these communications might play an important role in shaping the overall structure and behaviour of software products.

In co-located teams, developers usually communicate face-to-face. In distributed teams, developers use other communication channels, such as video conferencing systems. Jolak et al. (2018) found that co-located software design discussions are more effective than distributed design discussions. Moreover, Storey et al. (2017) stated that face-to-face communication is one of the most important and preferred communication channels for collaborative software development. Indeed, with face-to-face communication, developers can receive feedback quickly, which facilitates discussing complex issues, such as design decisions. Thus, we investigate co-located face-to-face communication, as this type of communication is widely preferred and would therefore contribute to the generalizability of our results.

Modeling languages can be (i) general-purpose and applicable to any domain, such as the Unified Modeling Language (UML), or (ii) domain-specific and designed for a specific domain or context, as is the case for Domain-Specific Languages (DSLs). Brambilla et al. (2012) stated that UML is widely known and adopted, and comprises a set of different diagrams for describing a system from different perspectives. Dobing and Parsons (2006) found that the use of UML class diagrams substantially exceeds the use of any other UML diagram (use case, sequence, activity, etc.). Thus, in order to increase the generalizability of the results of this study, we chose to represent the graphical software design description by a UML class diagram.

3.3 Participants

The population for this study was intended to match two prerequisites: (i) having a basic knowledge of UML (especially UML class models), and (ii) being able to understand and communicate in the experiment language. The target group in this case is the entire group of people who meet the aforementioned criteria: students who took an academic course in UML modeling, professional software developers, architects, etc. However, the portion of the population to which we had reasonable access is a subset of the target population. In particular, the accessible population for this family of experiments was the group of B.Sc. and M.Sc. Software Engineering (SE) students at the universities where the authors teach SE courses. The sampling approach was convenience sampling. On the one hand, this sampling approach is easy and readily available. On the other hand, the sample produced by convenience sampling might not represent the entire population (i.e., a threat to external validity or generalizability of the results). To increase the external validity of the results, we recruited a mix of 240 B.Sc. and M.Sc. SE students from four universities to take part in a family of experiments. Section 3.1 (Table 2) provides details on the participants in this family of experiments.

3.4 Experimental Treatments

The participants of each experiment were randomly assigned to two treatments or groups:

- Group G: participants in this group had to discuss a software design as represented by a graphical description (UML class diagram).

- Group T: participants in this group had to discuss the same software design, but as represented by a textual description.

Furthermore, the participants of each group were randomly assigned one specific role:

- Explainer: this role consisted in: (i) understanding the design representation, and (ii) explaining it to a Receiver.

- Receiver: this role consisted in understanding the software design based on the discussion with an Explainer.

With the roles assigned, we randomly formed 120 Explainer-Receiver pairs. These pairs were involved in discussing a design case, which we detail in Section 3.5.
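The randomization described above can be illustrated with a minimal sketch. It is not the procedure the experimenters used, which the text does not detail; it assumes that participants are shuffled, taken two at a time as Explainer and Receiver, and that the resulting pairs alternate between groups G and T. The function name `assign_pairs` and the integer participant identifiers are hypothetical. Only the site sizes come from Section 3.1.

```python
import random


def assign_pairs(participant_ids, seed=None):
    """Shuffle participants and form Explainer-Receiver pairs, alternating groups.

    Assumption: pairs alternate between group G and group T. The paper only
    states that groups, roles, and pairs were assigned randomly.
    """
    rng = random.Random(seed)
    ids = list(participant_ids)
    if len(ids) % 2 != 0:
        raise ValueError("an even number of participants is needed to form pairs")
    rng.shuffle(ids)
    pairs = []
    for i in range(0, len(ids), 2):
        group = "G" if (i // 2) % 2 == 0 else "T"
        pairs.append({"group": group, "explainer": ids[i], "receiver": ids[i + 1]})
    return pairs


# Site sizes reported in Section 3.1.
site_sizes = {"OExp": 50, "REP1": 36, "REP2": 94, "REP3": 60}
all_pairs = {site: assign_pairs(range(n), seed=0) for site, n in site_sizes.items()}
total_pairs = sum(len(pairs) for pairs in all_pairs.values())
```

Under these assumptions, the four sites yield 25, 18, 47, and 30 pairs, which adds up to the 120 pairs reported above. Both members of a pair belong to the same group, matching the pairing rule described in Section 3.6.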

3.5 Design Case and Graphical vs. Textual Descriptions

We created a design case for our family of experiments. The design case describes a structural view of a mobile application of a fitness center, the Fitness Paradise.

This fitness center gives its clients the opportunity to book facilities and activities. The featured application enables clients to consult the schedule of activities, manage bookings, keep track of payments, and visualize performance data when available. We believe that the selected design case relies on a familiar domain, Sport and Gym, from everyday life which is quite popular and easy to understand without prior knowledge.

To introduce the Explainers to the design case, we created a design case specification (Footnote 5) document which describes the fitness center and lists the features of the mobile application in natural language.

3.5.1 Design Descriptions

We played the role of the designer/architect and created the two design representations (i.e., GSD and TSD). The GSD and TSD provide the same information and describe one structural design of the Fitness Paradise app. The two design descriptions only differ in the way they represent the design (i.e., graphical vs. textual).

- GSD. We created the UML class diagram (Footnote 6) of the design case. The diagram includes 28 classes (21 model entities, 3 controllers, and 4 views) and 30 relationships. We chose to use the Model View Controller (MVC) design pattern for structuring the design, as this pattern is well known by the participants of the experiments. The entities of each part of the MVC were given a specific color. The model entities have a yellow color, the controllers are in blue, and the views are in green.

The colors were added to the entities in the GSD in order to mimic the characteristic of a structured textual document (which we describe next) in facilitating a visual distinction between different sections (i.e., the three parts of the MVC).

- TSD. For OExp, REP1, and REP2, each element of the GSD was systematically used to create exactly one corresponding element (e.g., one paragraph or sentence) in the textual description, to maintain a one-to-one correspondence between GSD and TSD. In other words, we took care that the graphical and textual designs present exactly the same amount of information or design knowledge, in order to control potential bias due to a different amount of information.

The textual description (Footnote 7) was arranged into two main structured sections. In the first section, we described the entities of each module of the MVC in order: first the entities of the model part, then the entities of the controller part, and last the entities of the view part. In the second section, we described the relationships between the entities, following the same order of appearance as the entities.

- Altered TSD. In REP3, we used an altered TSD (Footnote 8) to determine whether a motivated and cohesive TSD could affect design communication differently from the original TSD.

(i) Motivated: We added an introduction to the original textual description, including a rationale of the chosen design pattern (i.e., MVC).

(ii) Cohesive: The textual design description was organized differently. In particular, the description of the relationships of each entity was moved and placed right after the description of the entity. In this way, information regarding each entity and its relationships is kept close together, instead of being distant (i.e., located on different pages), as in the original textual description.
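The difference between the original and the cohesive organization can be sketched as a reordering of the same content. The snippet below is only an illustration under stated assumptions: the entity names and descriptions are hypothetical and not taken from the actual TSD, and each relationship is attached to its source entity, a choice the text does not specify.

```python
def original_layout(entities, relationships):
    """Original TSD: one section with all entities, then one with all relationships."""
    lines = [f"Entity {name}: {text}" for name, text in entities]
    lines += [f"Relationship {src}-{dst}: {text}" for src, dst, text in relationships]
    return lines


def cohesive_layout(entities, relationships):
    """Altered TSD: each entity is followed directly by its own relationships."""
    lines = []
    for name, text in entities:
        lines.append(f"Entity {name}: {text}")
        for src, dst, rel_text in relationships:
            if src == name:  # assumption: a relationship belongs to its source entity
                lines.append(f"Relationship {src}-{dst}: {rel_text}")
    return lines


# Hypothetical excerpt; names are illustrative, not taken from the actual TSD.
entities = [
    ("Client", "a registered customer"),
    ("Booking", "a reserved slot"),
    ("Activity", "a scheduled class"),
]
relationships = [
    ("Client", "Booking", "a client makes bookings"),
    ("Booking", "Activity", "a booking refers to an activity"),
]

original = original_layout(entities, relationships)
cohesive = cohesive_layout(entities, relationships)
```

Both layouts contain the same lines, so the amount of information is unchanged; only the distance between an entity and its relationships differs.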

3.6 Tasks

The main task of this family of experiments was inspired by the Chinese Whispers (or The Telephone) game. In this game, players form a line, and the first player comes up with a message and whispers it into the ear of the second person in the line. The second player repeats the message to the third player, and so on. When the last player is reached, they announce the message they heard to the entire group.

In contrast, we created a message (i.e., a software design representation), and asked the first player (i.e., the Explainer) to first understand the message then explain it to the second player (i.e., the Receiver). After that, the players (i.e., Explainers and Receivers) have to announce the message (i.e., via answering a post-task questionnaire). Finally, the original message (i.e., the software design representations) is compared with the final version (i.e., knowledge of Explainers and Receivers).

The main task of the experiments reflects common scenarios in the software engineering industry where developers collaborate, communicate, and exchange knowledge in order to create software. For example, the main task reflects a common knowledge-transfer scenario between a software architect (i.e., Explainer) who owns knowledge on the structure and behavior of the software system and a software developer (i.e., Receiver) who needs to receive and understand the knowledge of the architect in order to start coding. Moreover, knowledge communication is especially important when new employees enter a company and struggle to learn the existing tacit knowledge. In this direction, our task reflects the scenario of experienced developers onboarding novice developers (e.g., Ericsson’s “Experience Engine” initiative (cf. Section 1)).

In addition to the main task, we added two secondary tasks to collect complementary data, such as participants’ design experience and communication skills, that are also needed for the data analysis and the discussion of results.

For the study, the participants had to perform the following three tasks:

1. Answer the pre-task questionnaire. All participants have to answer the pre-task questionnaire based on the group they are assigned to. No time-limit is imposed for this task. We noted the required time for this step during the experiments and found that it takes 15 minutes on average.

The pre-task questionnaire is developed to collect participants’ design experiences and communication skills based on self-evaluations. The questions in the pre-task questionnaire vary according to the role of the participant (Explainer vs. Receiver) and his/her group (G vs. T).

- G-Explainer: participants belonging to this subset have to answer questions on (i) familiarity with software design and UML modeling, and (ii) how good they are at understanding and sense-making (Footnote 9) of UML models and of an English/French conversation, and at explaining their knowledge to others.

- G-Receiver: participants belonging to this subset have to answer questions on (i) familiarity with software design and UML modeling, and (ii) how good they are at understanding and sense-making of UML models and of an English/French conversation, and at building knowledge from conversing with others.

- T-Explainer: participants belonging to this subset have to answer questions on (i) familiarity with software design, and (ii) how good they are at reading, understanding, and sense-making of an English/French text, at understanding and sense-making of an English/French conversation, and at explaining their knowledge to others.

- T-Receiver: participants belonging to this subset have to answer questions on (i) familiarity with software design, and (ii) how good they are at understanding and sense-making of an English/French conversation, and at building knowledge from conversing with others.

2. Discuss the Design (i.e., transfer design knowledge). Each Explainer receives a design case specification plus either a GSD or TSD, based on the Explainer’s group (G or T). The Explainer has to read and understand the received artifacts, as well as he/she can, in 20 minutes (defined based on the pilot studies, see Section 3.7.1). The Explainers are allowed to individually ask the experiment supervisors questions to clarify issues related to the design, if required.

After 20 minutes, the Explainers give the design case specification back to the supervisors, but keep the design description (GSD or TSD). Each Explainer is randomly paired with a Receiver from the same group. Then, each Explainer-Receiver pair is given 12 minutes (defined based on the pilot studies, see Section 3.7.1) to discuss the design, where the Explainer has to explain the design and the Receiver has to understand the design. The Receivers can ask questions freely. Moreover, to help the understanding process, Receivers are allowed to take notes during the discussion, but all notes are collected by the supervisors before the next task. This is because two of the communication aspects that we measure, Understanding and Recall, require the participants to, respectively, apply and remember the design knowledge without using the design descriptions or the notes that they took during the discussions.

3. Answer the post-task questionnaire. All participants have to answer the post-task questionnaire based on their groups. No time-limit is imposed for this task. We also noted the required time for this step and found that it takes 15 minutes on average.

The first part of the post-task questionnaire is developed to collect participants’ self-evaluations of their experiences just after the design discussion. The questions vary according to the role of the participant (Explainer vs. Receiver) and his/her group (G vs. T).

- G-Explainer: participants belonging to this subset have to answer questions on (i) how good they are at remembering UML models, (ii) how well they understood and explained the design, and (iii) how much diagrams helped them in understanding and explaining the design.

- G-Receiver: participants belonging to this subset have to answer questions on (i) how good they are at remembering UML diagrams, (ii) how well they understood the design from the discussion with the Explainer, and (iii) how much diagrams helped them in understanding the design.

- T-Explainer: participants belonging to this subset have to answer questions on (i) how good they are at remembering an English/French text, (ii) how well they understood and explained the design, and (iii) in case they could have used them, how much diagrams would have helped in understanding and explaining the design.

- T-Receiver: participants belonging to this subset have to answer questions on (i) how good they are at remembering an English/French text, (ii) how well they understood the design from the discussion with the Explainer, and (iii) in case they could have used them, how much diagrams would have helped in understanding the design.

The second part of the post-task questionnaire evaluates participants’ understanding and recall abilities.

To measure Recall, we formulated ten questions (Footnote 10) challenging participants’ recall abilities. Two of these questions are open questions requiring free-text answers, six are multiple-choice questions that require the participants to choose exactly one option, and two are check-box questions that require selecting one or more of the available answers.

To measure Understanding, we formulated three questions (Footnote 11) focusing on MVC design maintenance (using maintenance questions to measure understanding is motivated in Section 4.3). In each question, we introduce a design maintenance (i.e., evolution) scenario and suggest four ways to address it. The three questions are multiple-choice questions that require the participants to choose exactly one of the four provided options.

To evaluate the answers of the participants on recall and understanding questions, we defined grading rules that can be consulted online (Footnote 12).

-

Remark.

In REP2 (University of Lille), the pre- and post-task questionnaires, design case specification, GSD, and TSD were translated to French, as the SE course that the participants are attending is taught in French. During the translation process, each word was carefully chosen to match the semantics of the original English textual description as closely as possible. To maintain a strict replication, after the translation process we thoroughly reviewed the aforementioned artifacts and ensured that the amount of information/knowledge they convey is the same as provided by the artifacts used in the original experiment (OExp).

3.7 Variables and Hypotheses

The goal of this study is to compare the effects of using GSD versus TSD on software design communication.

The only independent variable and manipulated factor is the design description. This variable is nominal and corresponds to two treatments/groups: G group using GSD and T group using TSD.

In this study, we consider six dependent variables (See Table 3). These variables correspond to the six communication aspects which we described in the introduction.

The original experiment and replications were conducted under the same environment conditions and by following a well-defined protocol to ensure that the impact of any other variable on the results is relatively negligible.

For OExp, REP1, and REP2, we formulate and study the following null (H\(_{0}\)) and alternative (H\(^{a}\)) hypotheses:

-

\(H_{EXP_{0}^{a}}\): The design description [has no impact]\(_{0}\)/[has impact]\(_{a}\) on EXP.

-

\(H_{UND_{0}^{a}}\): The design description [has no impact]\(_{0}\)/[has impact]\(_{a}\) on UND.

-

\(H_{REC_{0}^{a}}\): The design description [has no impact]\(_{0}\)/[has impact]\(_{a}\) on REC.

-

\(H_{AD_{0}^{a}}\): The design description [has no impact]\(_{0}\)/[has impact]\(_{a}\) on AD.

-

\(H_{CC_{0}^{a}}\): The design description [has no impact]\(_{0}\)/[has impact]\(_{a}\) on CC.

-

\(H_{CM_{0}^{a}}\): The design description [has no impact]\(_{0}\)/[has impact]\(_{a}\) on CM.

REP3 varies one variable intrinsic to the object of study (i.e., the independent variable TSD). Accordingly, we study the following null (H\(_{0}\)) and alternative (H\(^{a}\)) hypotheses:

-

\(H_{TSD_{0}^{a}}\): A motivated and cohesive TSD [has no impact]\(_{0}\)/[has impact]\(_{a}\) on the communication aspects.

3.7.1 Experiment Procedure

Before presenting the experiment procedure, we would like to highlight that we conducted several pilot studies: 2 at the University of Gothenburg, 1 at Aachen University, 1 at Lille University, and 1 at the Slovak university. To cover the treatments of our study, each pilot study involved 2 Explainer-Receiver pairs (B.Sc., M.Sc., or PhD students in SE). One pair was assigned to the G group using a graphical design description, and the second pair was assigned to the T group using a textual design description. These pilot studies helped us in:

-

Designing a research protocol and assessing whether or not it is realistic and workable, especially in estimating the time that is required by: (i) the Explainer to understand the design (20 minutes), and (ii) the Explainer and Receiver to discuss the design (12 minutes).

-

Identifying logistical problems and determining what resources (e.g., supervisors, software, and rooms) are needed for the actual experiments.

-

Training the supervisors of the experiments.

The experiment procedure was created to define the process of the experiment and to ensure strict replications of the original experiment.

Figure 1 presents the four main steps of the experimentation procedure:

-

Step 1: To anonymize their identity and thus their answers, all participants were randomly assigned an identification number (ID). We asked the participants to bring their PCs to be able to answer the online pre- and post-task questionnaires. Eduroam Internet connection was available in the rooms where the experiments were running. Also, we asked the participants to bring a device to record the discussions (either by downloading audio-recording software on their PCs or by using a smart-phone with a recording application). We booked large university lecture-rooms which can host all Explainer-Receiver pairs with a sufficient distance between each pair. This helps to reduce voice interference to a minimum and produce better-quality audio recordings. We randomly assigned the participants to two groups (G and T). Furthermore, we randomly assigned each participant one role, Explainer or Receiver. After that, we asked the participants to answer the pre-task questionnaire.

-

Step 2: Once all participants had filled in the pre-task questionnaire, the Explainers were taken to a second room where they received the design case specification and the design description (GSD or TSD). The Explainers were asked to understand the design that they received as well as they could in 20 minutes. During this time, the Receivers ensured that their recording software/devices were working as expected.

-

Step 3: After 20 minutes, we took the design case specification from the Explainers, but let them keep the assigned design description (GSD or TSD). The Explainers and Receivers were randomly grouped in pairs in one or two rooms according to the number of participants and room’s capacity.

The pairs were then informed that, using the design descriptions (GSD or TSD), Explainers should explain the design to the Receivers in 12 minutes. We also informed the pairs that Receivers can ask clarification questions to the Explainers. When all participants were prepared, we asked the participants to start the audio recorder software and begin the discussions by introducing themselves (by mentioning the name/ID and role). This allowed us later to match the discussion records of the participants with their corresponding answers to the questionnaires.

-

Step 4: After 12 minutes, the participants were informed that they should stop the audio recording. All documents, including Receivers’ draft notes, were collected. Then, we asked all the participants to answer the post-task questionnaire individually. Lastly, we asked the participants to rename the audio recordings with their ID numbers and put the recordings in a USB flash drive that we provided.
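The random assignment described in Steps 1 and 3 can be sketched as follows. This is a minimal illustration and not the script used in the experiments: the function name `assign_participants`, the fixed seed, and the handling of leftover participants are assumptions.

```python
import random


def assign_participants(participant_ids, seed=None):
    """Randomly assign participants to groups (G/T), roles, and pairs.

    Hypothetical helper: participants who cannot be paired within their
    group are simply left out of the returned list.
    """
    rng = random.Random(seed)
    ids = list(participant_ids)
    rng.shuffle(ids)  # a random order yields a random group and role

    half = len(ids) // 2
    groups = {"G": ids[:half], "T": ids[half:]}

    pairs = []
    for group, members in groups.items():
        n_pairs = len(members) // 2
        explainers = members[:n_pairs]
        receivers = members[n_pairs:2 * n_pairs]
        rng.shuffle(receivers)  # random Explainer-Receiver pairing within a group
        for explainer, receiver in zip(explainers, receivers):
            pairs.append(
                {"group": group, "explainer": explainer, "receiver": receiver}
            )
    return pairs


# Example with 20 anonymized IDs (illustrative, not the study's sample).
pairs = assign_participants(range(1, 21), seed=42)
for pair in pairs:
    print(pair)
```

Pairing only within a group mirrors the rule that each Explainer is paired with a Receiver who was assigned the same design description.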

3.8 Data Analysis

The data of this study was collected via questionnaires and by audio-recording discussions between Explainers and Receivers. In this section, we describe the four types of analysis procedures that we used:

-

Data Set Preparation: To check and organize data collected from different sources and prepare it for analysis.

-

Descriptive Statistics: To describe the basic features of the data by summarizing and showing measures in a meaningful way such that patterns might emerge from the data.

-

Hypothesis Testing: To make statistical decisions by evaluating two mutually exclusive statements about a population and determining which statement is best supported by the sample data.

-

Meta-Analysis: To obtain a global effect of a factor on a dependent variable by combining the effect size of different experiments, as well as assessing the consistency of the effect across the individual experiments (Borenstein et al. 2011).

3.8.1 Data Set Preparation

Data from 14 participants (7 pairs) were eliminated: 1 Explainer-Receiver pair from each of OExp and REP1, and 5 pairs from REP2. In particular, 5 pairs discussed the design assignment for too short a time (less than 2 minutes) and decided to discuss other topics of their interest for the rest of the time. Moreover, the audio quality of the recorded discussions of 2 pairs was poor, and the corresponding data from these pairs was eliminated. The final number of participants in each experiment is provided in Table 4.

The discussions between Explainers and Receivers were recorded by using either mobile phones or Audacity, an easy-to-use audio editor and recorder that works on multiple operating systems (Footnote 13). We transcribed approximately 23 hours of audio recordings and performed a manual coding of more than 2000 discussion records between Explainers and Receivers. For coding the discussions, we used the collaborative interpersonal problem-solving skill taxonomy of McManus and Aiken (1995), as presented in Figure 2. This taxonomy captures the collaborative interpersonal communication aspects Active Discussion, Creative Conflict, and Conversation Management, which we described previously in Section 1. For instance, the transcribed sentence “Can you explain why/how?” is a Request for Clarification, which contributes to Active Discussion. As another example, “If... then” refers to Suppose, one of the categories of Argue, which contributes to Creative Conflict. More examples are provided online (Footnote 14).

Fig. 2 Collaborative interpersonal problem-solving conversation skills (McManus and Aiken 1995)

NVivo (Footnote 15) was used for coding the transcriptions. Prior to coding, we assessed the reliability of the coding/rating by performing two-way mixed Intraclass Correlation Coefficient (I-C-C) tests with a 95% confidence interval on 9% of the data. In particular, three coders/raters were involved in measuring the I-C-C of the G group and T group of OExp, REP1, and REP2 (I-C-C value is 0,97 for group G and 0,96 for group T), whereas two coders/raters were involved in measuring the I-C-C of the G group and T group of REP3 (I-C-C value is 0,83 for group G and 0,92 for group T). The coding/rating reliability is therefore good to excellent: according to Koo and Li (2016), I-C-C is good when it is > 0,75 and ≤ 0,90 and excellent when it is > 0,90. Based on this result, the raters collaboratively continued to code the rest of the data, i.e., 91% of the data.
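To illustrate how such a reliability coefficient can be obtained, the following sketch computes a two-way mixed I-C-C from a table of ratings. The text does not state which I-C-C form was used, so the consistency, single-rater form, often written ICC(3,1), is an assumption here, as are the function names and the example ratings.

```python
def icc_two_way_mixed(ratings):
    """ICC(3,1): two-way mixed effects, consistency, single rater.

    ratings: one row per coded item, one column per rater.
    The choice of this ICC form is an assumption of the sketch.
    """
    n = len(ratings)       # number of rated items
    k = len(ratings[0])    # number of raters
    grand = sum(sum(row) for row in ratings) / (n * k)
    row_means = [sum(row) / k for row in ratings]
    col_means = [sum(row[j] for row in ratings) / n for j in range(k)]

    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in ratings for x in row)
    ss_error = ss_total - ss_rows - ss_cols

    ms_rows = ss_rows / (n - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))
    return (ms_rows - ms_error) / (ms_rows + (k - 1) * ms_error)


def reliability_label(icc):
    """Labels for the two thresholds quoted from Koo and Li (2016) above."""
    if icc > 0.90:
        return "excellent"
    if icc > 0.75:
        return "good"
    return "below good"


# Hypothetical ratings: rater 2 always scores one point above rater 1,
# which is perfectly consistent under the consistency definition.
example = [[1, 2], [2, 3], [3, 4]]
print(icc_two_way_mixed(example), reliability_label(0.97), reliability_label(0.83))
```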

3.8.2 Descriptive Statistics

Using IBM SPSS (Footnote 16), we generated descriptive statistics, including box plots and Mean +/- 1SD plots, to analyze the data collected via questionnaires and audio recordings. In particular, we measured means, medians, standard deviations, and ranges. These descriptive statistics help to analyze central tendencies and dispersion.
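For readers who want to reproduce these summary measures without SPSS, a minimal sketch using only the Python standard library is given below. The function name `describe` and the scores are hypothetical and are not data from the study.

```python
import statistics


def describe(values):
    """Return the mean, median, standard deviation, and range of a sample."""
    return {
        "mean": statistics.mean(values),
        "median": statistics.median(values),
        "sd": statistics.stdev(values),  # sample standard deviation (n - 1)
        "range": max(values) - min(values),
    }


# Hypothetical scores for one group.
scores = [2.5, 3.0, 3.5, 4.0, 2.0]
summary = describe(scores)
print(summary)
```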

3.8.3 Hypotheses Testing

In the family of experiments, we wanted to compare two treatments/groups (G and T). So, we assigned our participants to these two groups by following the between-subjects design. In this setting, different people test each condition to reduce learning- and transfer-across-conditions effects. The collected data during the experiments include both interval and ordinal measures. Moreover, they are not normally distributed. Thus, we used non-parametric tests.

In particular, the hypotheses that we formulated in Section 3.7 seek to determine whether two independent samples have the same distribution. Therefore, these hypotheses were tested by performing the non-parametric independent-samples Mann-Whitney test.
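The test can be sketched in a few lines. The version below is an illustration rather than the SPSS procedure used in the study: it uses the normal approximation with a tie correction and no continuity correction, and the sample values are hypothetical.

```python
import math


def mann_whitney_u(x, y):
    """Two-sided Mann-Whitney U test (normal approximation, tie-corrected)."""
    n1, n2 = len(x), len(y)
    pooled = sorted([(v, 0) for v in x] + [(v, 1) for v in y])

    # Assign midranks to tied values and accumulate the tie-correction term.
    ranks = [0.0] * len(pooled)
    tie_term = 0
    i = 0
    while i < len(pooled):
        j = i
        while j < len(pooled) and pooled[j][0] == pooled[i][0]:
            j += 1
        midrank = (i + 1 + j) / 2  # average of ranks i+1 .. j
        for idx in range(i, j):
            ranks[idx] = midrank
        t = j - i
        tie_term += t ** 3 - t
        i = j

    rank_sum_x = sum(r for r, (_, g) in zip(ranks, pooled) if g == 0)
    u_x = rank_sum_x - n1 * (n1 + 1) / 2
    u = min(u_x, n1 * n2 - u_x)

    n = n1 + n2
    mean_u = n1 * n2 / 2
    var_u = n1 * n2 / 12 * ((n + 1) - tie_term / (n * (n - 1)))
    z = (u - mean_u) / math.sqrt(var_u)
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided p-value
    return u, p


# Hypothetical scores for group G and group T.
u_stat, p_value = mann_whitney_u([1, 2, 3], [4, 5, 6])
print(u_stat, p_value)
```

For small samples, statistical packages typically report an exact p-value instead of this approximation, so the numbers may differ slightly.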

3.8.4 Meta-Analysis

We perform a fixed-effect meta-analysis, as all factors that could influence the effect size are the same in all the experiments (Borenstein et al. 2011). We use different scales to measure the communication aspects. Thus, for each experiment (i), we compute the effect size (\(G_{i}\)) by calculating the Hedges’ g metric (Hedges 1981). The assigned weight to each experiment is:

\[W_{i} = \frac{1}{V_{G_{i}}}\]

where \(V_{G_{i}}\) is the within-experiment variance for the i th experiment.

We obtain the global effect size by calculating the weighted mean M:

\[M = \frac{\sum_{i} W_{i} G_{i}}{\sum_{i} W_{i}}\]

According to Hedges (1981), the effect size is small when g ≥ 0,2; medium when g ≥ 0,5; and large when g ≥ 0,8. We report the result of the meta-analysis by using forest plots (Borenstein et al. 2011).
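The computation described in this section can be sketched as follows, assuming the standard fixed-effect formulas with inverse-variance weights as presented by Borenstein et al. (2011). The function names are illustrative, and the group sizes in the example are placeholders because per-group sizes are not restated here.

```python
import math


def hedges_g(mean1, sd1, n1, mean2, sd2, n2):
    """Return Hedges' g and its variance for two independent groups."""
    df = n1 + n2 - 2
    s_within = math.sqrt(((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / df)
    d = (mean1 - mean2) / s_within
    var_d = (n1 + n2) / (n1 * n2) + d ** 2 / (2 * (n1 + n2))
    j = 1 - 3 / (4 * df - 1)  # small-sample correction factor
    return j * d, j ** 2 * var_d


def fixed_effect(effects, variances):
    """Return the weighted mean effect M and its variance."""
    weights = [1 / v for v in variances]
    m = sum(w * g for w, g in zip(weights, effects)) / sum(weights)
    return m, 1 / sum(weights)


def magnitude(g):
    """Thresholds as quoted in the text: 0.2 small, 0.5 medium, 0.8 large."""
    size = abs(g)
    if size >= 0.8:
        return "large"
    if size >= 0.5:
        return "medium"
    if size >= 0.2:
        return "small"
    return "below small"


# Means and standard deviations are those reported in Section 5 for the
# relationship recall question; n = 100 per group is a placeholder.
g, var_g = hedges_g(0.506, 0.331, 100, 0.423, 0.347, 100)
print(round(g, 3), magnitude(g))

# Hypothetical effect sizes and variances for two experiments.
pooled, pooled_var = fixed_effect([0.2, 0.4], [0.1, 0.1])
print(pooled, pooled_var)
```

Because each weight is the inverse of the within-experiment variance, larger and more precise experiments contribute more to the global effect size.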

4 Results

In the first part of this section, we report the participants’ perceptions of their design experiences and communication skills (the results of the pre-task questionnaire). After that, we present the results of the individual experiments and the performed meta-analysis. Finally, we report the participants’ perceptions of their experience in working with different design representations (the results of the post-task questionnaire).

4.1 Perceived Design Experience and Communication Skills

The perceived (based on self-evaluations) design experience and communication skills are detailed online (Footnote 17). In summary, we find that:

-

the participants are somewhat familiar with software design.

-

the participants are familiar with software modeling and good in understanding and sense-making of UML models.

-

the participants are very good in reading, understanding, and sense-making of textual documentation.

-

the Explainers in the group G are neither poor nor good in explaining their knowledge, while the Explainers in the group T are good in explaining their knowledge.

-

the Receivers of the two groups (G and T) are good in building knowledge from conversing with others.

There are no statistically significant differences in the perceived design experience and communication skills between groups G and T in the different experiments. Accordingly, we assume that the design experience and communication skills of participants are not influencing the results of this study.

4.2 Individual Experiments

Table 5 shows the descriptive statistics of the studied communication aspects sorted by two subgroups of studies:

-

Subgroup A: including OExp, REP1, and REP2.

-

Subgroup B: including REP3.

Considering Subgroup A, we observe that the unbiased estimate of the effect size, based on the standardized mean difference between group G and T (Hedges’ g; Hedges 1981), is positive for Explaining, Recall, Active Discussion, and Creative Conflict. This means that there is a clear tendency in favor of using GSD over TSD. Regarding Understanding, the participants achieved better results when using TSD in OExp (negative g value). In contrast, the participants of REP1 and REP2 achieved better results when using GSD. Regarding Conversation Management, the results show that the participants of all the experiments spent more effort on conversation management when using TSD.

Considering Subgroup B, we observe that the unbiased estimate of the effect size (i.e., Hedges’ g) is positive for Explaining, Understanding, and Creative Conflict. This means that there is a clear tendency in favor of using GSD over Altered-TSD. Regarding Recall and Active Discussion, the participants achieved better results when using the Altered-TSD. Moreover, the participants spent more effort on conversation management when using Altered-TSD.

We tested whether or not the distribution of the communication aspects (i.e., dependent variables) is the same across the two groups (G and T) by running the Independent-Samples Mann-Whitney U Test.

Table 6 shows the results of the test. The p-value is the probability of obtaining results at least as extreme as those observed, assuming that the null hypothesis is correct. We set the probability of a type I error (i.e., α, the probability of finding a significant effect where there is none) to 0,05. The statistical power is the probability that a test will reject a null hypothesis when it is in fact false. The β-value is the probability of a type II error, i.e., of failing to reject the null hypothesis when it is false. As the power increases, the β-value decreases. A power value of 0,80 is considered a standard for adequacy (Ellis 2010).

Considering Subgroup A, we observe in REP1 that there is a statistically significant difference in Recall between the two groups G and T (p-value = 0,037 < 0,05, statistical power is 0,554). In REP2, we observe that there is a statistically significant difference in Active Discussion and in Conversation Management between the two studied groups (p-values = 0,010 and 0,011 < 0,05, statistical powers are 0,694 and 0,705, respectively).

Considering Subgroup B, we observe a statistically significant difference in Explaining and Creative Conflict between the two studied groups (p-values = 0,000 and 0,017 < 0,05, statistical powers are 0,991 and 0,800, respectively).
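As a worked illustration of the relation between statistical power and the β-value (power = 1 − β), consider the power reported above for Recall in REP1:

\[\beta = 1 - \text{power} = 1 - 0{,}554 = 0{,}446\]

By the same relation, the adequacy standard of 0,80 corresponds to β = 0,20.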

4.3 Meta-Analysis

In this section, we report and discuss the meta-analysis by means of forest plots. The squares in each forest plot indicate the effect size of each experiment. The size of the squares represents the relative weight (squares are proportional in size to experiments’ relative weight). The horizontal lines on the sides of each square represent the 95% confidence interval. The diamond shows the global effect size (the location of the diamond represents the effect size), while its width reflects the precision of the estimate (i.e., 95% confidence interval). The plot also shows the values of the effect size, weight, and p-value relative to each experiment and to the global measure.

Positive values of the effect size indicate that the use of GSD increases/improves the effort/quality of the communication aspect, while negative values indicate that using TSD/Altered-TSD is the increasing/improving condition.
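The quantities shown for each row of a forest plot can be derived from an effect size and its variance. The sketch below assumes a normal approximation for the 95% confidence interval and the two-tailed p-value; the function name `forest_row` and the input values are hypothetical.

```python
import math


def forest_row(g, variance):
    """Return the 95% CI, two-tailed p-value, and favoured condition."""
    se = math.sqrt(variance)
    lower, upper = g - 1.96 * se, g + 1.96 * se
    z = g / se
    p = math.erfc(abs(z) / math.sqrt(2))
    if g > 0:
        favours = "GSD"
    elif g < 0:
        favours = "TSD/Altered-TSD"
    else:
        favours = "neither"
    return {"lower": lower, "upper": upper, "p": p, "favours": favours}


# Hypothetical experiment: g = 0.5 with variance 0.0625 (standard error 0.25).
row = forest_row(0.5, 0.0625)
print(row)
```

An interval that does not cross zero corresponds to a statistically significant effect at the 0,05 level under this approximation.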

4.3.1 Explaining

Figure 3 shows the forest plot for perceived quality of Explaining in the two subgroups of studies, A and B. We observe that the effect size values are positive in all the experiments. This implies that using a GSD has a positive effect on perceived Explaining quality. In other words, the participants’ level of perceived explaining is better when using the GSD. Despite this tendency, the global effect size of the studies within Subgroup A is not statistically significant (p-value is 0,128 > 0,05). In contrast, the global effect size of the study in Subgroup B is statistically significant (p-value is 0,000 < 0,05).

4.3.2 Understanding and Recall

In a revised Bloom’s taxonomy, Anderson et al. (2001) outline a hierarchy of cognitive-learning levels ranging from remembering a specific topic, through understanding and application of such knowledge, to the advanced levels of analysis, evaluation, and creation. Figure 4 shows the hierarchy of the six cognitive learning levels. According to Anderson et al., remember is the recalling of a previously learned topic. Understand is the ability to grasp the meaning of the topic by interpreting the knowledge and predicting future trends. Apply, in turn, comes on top of understand. It is the ability to use the acquired and comprehended knowledge in a new and concrete context or situation. In order to measure the quality of understanding of our experiments’ participants, we formulated three questions on design maintenance (these questions are provided in Section 3.6), which required the participants to use/apply their acquired knowledge in a new context (i.e., apply in Anderson’s revised taxonomy).

The participants in the two groups (G and T) answered ten recall questions. We formulated the recall questions (see Section 3.6) to evaluate how well the participants do remember the design details after the discussions.

Figure 5 shows the forest plot for quality of (a) Understanding and (b) Recall ability of design details. Regarding the quality of Understanding, the effect size value is negative for OExp, which means that TSD is the improving condition. For the other experiments in subgroups A and B, the values of the effect size are positive. This implies that using a GSD in these experiments has a positive effect on the understanding quality. Despite these tendencies, the global effect size of subgroups A and B is not statistically significant (p-values are 0,329 and 0,399, respectively). Considering the Recall ability, we observe that the effect size values in subgroup A are positive. This implies that using a GSD has a positive effect on the Recall ability. This effect is statistically significant and has a medium effect size for REP1. Furthermore, the global effect size is statistically significant (p-value = 0,048 < 0,05). In contrast, the effect size value in subgroup B is negative. This implies that using an Altered-TSD is the improving condition. However, the effect is not statistically significant (p-value = 0,191 > 0,05).

4.3.3 Interpersonal Communication

Figure 6 shows the forest plot for the collaborative interpersonal communication dimensions: Active Discussion (AD), Creative Conflict (CC), and Conversation Management (CM).

Considering AD, we observe that the effect size values of the Subgroup A studies are positive. This implies that using a GSD has a positive effect on the amount of ADs. The global effect size for AD is statistically significant (p-value = 0,005 < 0,05). The effect size value of the Subgroup B study is negative. This implies that using an Altered-TSD is the improving condition. However, the effect size of the study in Subgroup B is not statistically significant (p-value = 0,131 > 0,05).

Considering CC, we observe that the effect size values of all the studies are positive. This implies that using a GSD has a positive effect on the amount of CCs. The global effect size of Subgroup A is not statistically significant (p-value = 0,162 > 0,05). In contrast, the effect size of Subgroup B study is statistically significant (p-value = 0,004 < 0,05).

Considering CM, we observe that the effect size values of all the studies are negative. This implies that the effort on CM is bigger when using TSD/Altered-TSD. The global effect size of Subgroup A is medium and statistically significant (p-value = 0,001 < 0,05). The effect size of the study in Subgroup B is not statistically significant (p-value = 0,615 > 0,05).

4.4 Motivated and Cohesive TSD

Falessi et al. (2013) suggested that documentation of software design rationale could support many software development activities, including analysis and re-design. Tang et al. (2006) conducted a survey of practitioners to probe their perception of the value of software design rationale and how they use and document it. They found that practitioners recognize the importance of documenting design rationale for reasoning about their design choices and supporting the subsequent implementation and maintenance of systems.

The goal of running REP3 is to know how a motivated and cohesive TSD (as described previously in Section 3.5.1 – Altered TSD) could influence design communications. To this end, we used a different TSD in REP3, which includes a rationale that motivates why the MVC paradigm is selected for structuring the design. Moreover, we organized the information/knowledge in the TSD and made it cohesive. In particular, the relationships of each entity are reported right after describing it, instead of being reported with all the other relationships in the ‘relationship section’ at the end of the TSD.

To achieve the goal of REP3, we use a fixed-effect subgroup analysis (Borenstein et al. 2011) to determine whether the Altered-TSD variant is more effective than the TSD. In particular, we compare the mean effect for two subgroups of studies:

-

Subgroup A: the experiments that use a TSD (OExp, REP1, and REP2).

-

Subgroup B: the experiment that uses an Altered-TSD (REP3).

For each subgroup of studies, we report in Table 7 the mean effect size and variance of the studied communication aspects. We observe that the effect sizes of REC and AD are lower in Subgroup B. This indicates that the Altered-TSD is better than the TSD in promoting Recall ability and Active Discussion. We also observe that the effect size of CM is higher in Subgroup B. This indicates that the users of the Altered-TSD spent less effort on Conversation Management.

To statistically analyze the difference between TSD and Altered-TSD, we use the Z-test method (Borenstein et al. 2011). The results of the test are presented in Table 8. We observe three significant differences at the α level of 0,05 concerning Explaining (EXP), Recall (REC), and Active Discussion (AD).

For EXP, we find that the two-tailed p-value corresponding to Z = 2,290 is 0,003. This tells us that the TSD of Subgroup A’s studies is better for Explaining than the Altered-TSD of Subgroup B’s study. In addition to reporting the test of significance, we report the clinical significance. The difference in effect size between the two subgroups of studies is Diff = 0,935, and the 95% confidence interval ranges from 0,190 to 0,715.

For REC and AD, we find that the two-tailed p-values corresponding to Z = 2,136 and 2,740 are 0,033 and 0,006, respectively. This tells us that the Altered-TSD of Subgroup B’s study is better than the TSD of Subgroup A’s studies for increasing Active Discussion and enhancing the Recall of design details. The differences in REC and AD effect size between the two subgroups of studies are Diff = 0,639 and 1,158, and the 95% confidence intervals range from −0,124 to 0,393 for REC and from −0,062 to 0,670 for AD.
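The subgroup comparison can be illustrated with a short sketch of the Z-test for two independent subgroup means under the fixed-effect model. The sign convention (Subgroup A minus Subgroup B), the function names, and the subgroup inputs are assumptions; only the Z values for REC and AD are taken from the text.

```python
import math


def two_tailed_p(z):
    """Two-tailed p-value of a standard normal Z statistic."""
    return math.erfc(abs(z) / math.sqrt(2))


def subgroup_z_test(mean_a, var_a, mean_b, var_b):
    """Compare two subgroup mean effects; returns Diff, Z, p, and a 95% CI."""
    diff = mean_a - mean_b  # assumed sign convention
    se = math.sqrt(var_a + var_b)
    z = diff / se
    ci = (diff - 1.96 * se, diff + 1.96 * se)
    return diff, z, two_tailed_p(z), ci


# Z values reported above for REC and AD.
print(round(two_tailed_p(2.136), 3))
print(round(two_tailed_p(2.740), 3))

# Hypothetical subgroup means and variances.
diff, z, p, ci = subgroup_z_test(0.5, 0.02, 0.1, 0.02)
print(diff, z, p, ci)
```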

4.5 Perceived Experience in Working with Different Design Representations

The perceived experience (based on self-evaluations) in working with different design representations is detailed online (Footnote 18). In summary, we find that:

-

the participants (both Explainers and Receivers) perceive that the design of the system is very clear.

-

the participants perceive that they are good in recalling a conversation.

-

the participants in group G perceive that they are good in recalling UML design models.

-

the participants in group T perceive that they are good in recalling a textual documentation.

-

the Explainers in group G perceive that models are helpful in understanding the design.

-

the Explainers in group T perceive that having diagrams would have helped in understanding the design.

-

the Explainers in group G perceive that models are very helpful in explaining the design.

-

the Explainers in group T perceive that having diagrams would have helped in explaining the design.

-

the Receivers in group G perceive that models are helpful in understanding the design.

-

the Receivers in group T perceive that having diagrams would have helped in understanding the design.

5 Discussion

Our experiments investigate whether design communication between software engineers can become more effective when using GSD instead of TSD to exchange design information. To this end, we investigate whether using a GSD affects six considered communication aspects (Understanding, Explaining, Recall, Active Discussion, Creative Conflict, and Conversation Management) differently from using a TSD (R.Q.1). Moreover, we study whether a cohesive and motivated TSD (i.e., Altered-TSD) improves design communication (R.Q.2).

Considering Subgroup A, the global effect size of the perceived explaining quality is positive. This means that using a GSD has a positive effect on the perceived explaining quality. Similarly, the global effect size of the understanding (i.e., maintenance task) score is positive, which means that the score of the GSD users is better than the score of TSD users. Nevertheless, by considering distributions of the scores we neither find a statistically significant difference in the quality of explaining (Observation 1) nor in the quality of understanding (Observation 2) between the two groups: G and T.

While analyzing the recorded, and subsequently transcribed, discussions between the Explainers and Receivers, we observed a difference in the explaining approach between the Explainers of the two groups. Figure 7 provides an illustration of the observed explaining approaches in the two groups. On the one hand, the Explainers of a TSD tended to explain the three modules of the MVC sequentially: first the model entities, then the controllers, and lastly the views, as these modules are presented in this order in the textual document. We think that this trend is intrinsically imposed by the nature of textual descriptions, where the knowledge is presented sequentially on a number of consecutive ordered pages. On the other hand, the Explainers of the GSD had more freedom in explaining the design. Indeed, according to their explaining preferences, the Explainers of the GSD tended to jump back and forth between the three MVC modules when explaining the design. Based on this, we suggest that a GSD has an advantage over the TSD in unleashing Explainers’ expressiveness when explaining the design, as well as in helping navigation and getting a better overview of the design. However, developers might not have this advantage when explaining many GSDs (e.g., many UML diagrams) spread over different pages within a software design documentation.

We found that using a GSD is better than a TSD for recalling the details of the discussed design (Observation 3). This is in line with Meade et al. (2018), who suggest that drawing graphical notations brings more recall benefits than writing textual words in younger and older adults.

Graphical representations are considered better than the textual in representing information which deals with relationships between entities (Völter 2011). One of the recall questions that we used to measure the recall ability of the participants is concerned with the relationships between the entities of the software architecture design. We compared the score (interval variable; min is 0 and max is 1 point) of the two groups on this question. On average, the users of the graphical representation were slightly better in recalling the relationships between the entities (G: Mean= 0,506; Std. Dev.= 0,331) vs. (T: Mean= 0,423; Std. Dev.= 0,347). However, this difference is not statistically significant (Sig.= 0,128 > 0,05; Hedges’ g = 0,244; Power= 0,338).

The Chinese Whispers game is often invoked as a metaphor for miscommunication. In this game, the first player often fails to recall all the information of the initial message that she/he receives. Likewise, the second player often fails to recall all the information of the message that she/he receives from the first player, and so on for the rest of the players. In the same manner, the Explainers in our experiments failed to recall all the design details that we asked for in the post-task questionnaire (Mean Score= 3,319; Std. Dev.= 0,855). The Receivers were, as expected, worse than the Explainers in recalling the design details (Mean Score= 2,492; Std. Dev.= 0,885). Moreover, we found that the difference in recall ability between Explainers and Receivers is statistically significant (Sig.= 0,000 < 0,05; Hedges’ d = 0,946, Power= 0,999).

Based on empirical results, we find that a GSD fosters more Active Discussion (AD) than TSD (Observation 4), while reducing Conversation Management (CM) at the same time (Observation 6). In the skill taxonomy of McManus and Aiken (1995), the communication activities in the AD category generally aim at helping an active exploration of the discussed argument by encouraging information requesting, clarification, or elaboration. In contrast, the branch of CM comprises communication activities that generally contribute less to active information requesting or clarification, such as acknowledging or coordinating group tasks. Consequently, we suggest that using a GSD as a basis for software design communication promotes an active exploration of the communicated designs, which in turn helps to improve the effectiveness of software design communication.

There is no significant difference in Creative Conflict (CC) discussions between group G using GSD and group T using TSD (Observation 5). We suggest that the type of design description does not influence design argumentation and reasoning. Alternatively, we think that the context, complexity of the design, available knowledge, or the application of reasoning techniques might affect the quality of design argumentation and reasoning discussions, as suggested by Tang et al. (2018).

It is widely assumed that model-based techniques support communicating software (Hutchinson et al. 2014). Our findings support this assumption and indicate that using a GSD improves the recall of the discussed design details, fosters Active Discussion, and at the same time reduces less useful conversation about managing activities.

We conducted REP3 to better calibrate our findings on the differences between GSD and TSD. We found that a motivated (i.e., augmented with rationale) and cohesive TSD helps to enhance the recall of the design details and increases the amount of active discussion at the cost of reducing the perceived quality of explaining (Observation 7). This finding is in line with Tang et al. (2010), who stated that discussing the reasons for making software design choices (i.e., design rationale) positively contributes to the effectiveness of software design discussions by facilitating communication and design knowledge transfer. However, we found that adding more details (e.g., rationale) to the TSD adversely influences the perceived quality of explaining. One explanation for this effect is that the Explainers did not have enough time to explain the details of the Altered-TSD. For the same reason, the Receivers might have perceived that the Explainers did not go through the entire textual description when explaining the software design.

5.1 Threats to Validity

Our family of experiments is subject to threats to its construct validity, internal validity (causality), external validity (generalizability), and conclusion validity. We highlight these issues and discuss related study design decisions.

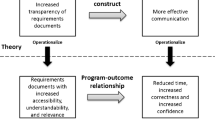

5.1.1 Construct Validity

Construct validity refers to how well operational measures represent what researchers intended them to represent in the study in question. In this study, we used a single method for measuring the impact of the different design representations on each communication aspect. To mitigate this issue, we did not rely on questionnaires alone, but also recorded, transcribed, and later evaluated the communication observed during the experiments. Nonetheless, leveraging additional methods to probe the explaining, understanding, recall, and interpersonal communication skills of the participants might help to better investigate the effects of different design representations. Such methods might, for instance, comprise conducting actual software design or software engineering tasks after receiving the explanation. However, this would introduce a multitude of other variables (e.g., the programming language or IDE used) that either can hardly be controlled or demand drastic simplification, thus reducing our experiments’ generalizability.

Another threat to construct validity could arise from discretizing the measurement of continuous properties, such as the participants’ familiarity with software design or their expertise with UML. This challenge has been investigated for balanced Likert scales and identified as not compromising generalizability (Ray 1982).

5.1.2 Internal Validity

The questionnaires used to evaluate the participants’ performance raise threats to internal validity themselves. For instance, the participants might have interpreted the Likert scales differently, might have avoided extreme responses (central tendency bias), and, because they evaluated their communication skills themselves, might have been biased towards overestimating or underestimating their skills. To support comprehension and reproduction of results, we use established surveys where possible and provide all materials on the experiments’ companion website. Nonetheless, completely mitigating the potential effects of these general deficiencies of surveys requires the development of novel methods to test familiarity with and understanding of UML designs and textual designs, as well as communication skills. While specifically tailored exercises might be feasible for evaluating communication skills, conducting these (a) requires unbiased instruments as well and (b) might affect our experiments. A specific challenge of our family of experiments regarding the questionnaires arises from conducting the REP2 survey in French, whereas the other experiments used English documents. While this could generally affect the results, the experimenters of REP2 had the task documents and questionnaires professionally translated and reviewed to maintain the consistency of the communicated information.

To mitigate the effect of limited preparation and explanation time – the Explainers had 20 minutes to understand the design and 12 to discuss it with the Receivers – we conducted multiple pilot studies at all sites prior to the actual experiments to understand how much time is required. After running the pilot studies, we increased the initially considered 10 minutes of discussion to 12 based on the feedback of the participants of the pilot studies. Afterwards, we conducted another pilot study that confirmed that both times are considered suitable for the tasks.

Other challenges to internal validity stem from the selection of our experiments’ participants. One potential confounding factor is that, as a result of randomly assigning the participants to the G or T group, certain personality types might be prevalent in one of the groups, which could affect the results. By measuring the Big Five factors of personality (Donnellan et al. 2006), we checked that this is not the case: the distribution of the five personality factors (Extraversion, Agreeableness, Conscientiousness, Neuroticism, and Openness) is the same across the two groups.

Similarly, our findings could have been affected if the members of one of the two groups had significantly more experience with software design than the members of the other group. The pre-task questionnaire establishes that this is not a problem in our study. Other issues could have arisen from our participants being unfamiliar with UML designs or textual designs, but the pre-task questionnaire shows that this is not the case. We assume that this is due to the participants’ educational backgrounds (in which processing textual designs for exercises or exams is common).

The textual representations used in this research are structured by indentation, indexing, and grouping of information, which are helpful for information retrieval (Conversy 2014). These might have positively affected the quality of TSD communication. Similarly, the MVC entities in the graphical representation were highlighted by colors, which is also helpful for information retrieval (Conversy 2014). This might have also positively affected the quality of GSD communication. If the descriptions of the entities in the TSD were tangled and if the entities of the GSD were not colored, then the quality of communication of these two representations might have been different and less efficient. As we use different enhancement techniques for GSD and TSD, it is possible that this affected the results of the comparison. Indeed, the augmentations to the textual representation might yield other (stronger or weaker) effects than the class diagram coloring. As both coloring in graphical models and structuring of textual design documents are common in industrial practice, we do not consider this a significant threat compared to using unstructured text and uncolored diagrams.

Some Receivers of the text group drew (informal) class diagrams while listening to the explanation. Hence, there might be an interaction of both treatments, but with only six (2.5%) of the Receivers being affected, the effect of this combination of both representations is negligible.

Another threat might arise from using textual survey questions as the method to investigate the benefits of textual and graphical designs. Textual design representations may have yielded better answers to the questions because they are syntactically closer to the textual answers than graphical designs are. This threat could be mitigated by leveraging graphical questions and answers in the surveys. While this would be feasible for the answers, formulating the questions as graphical class diagrams would entail a new syntax, which might yield further threats.

5.1.3 External Validity

Threats to external validity concern the extent to which the results of our study can be generalized. Because we work at software engineering research and education institutes, we selected students with strong software engineering backgrounds from our universities. While this prevents generalizing results to software developers with different backgrounds (e.g., developers in computer vision, artificial intelligence, or robotics), software design is primarily a concern of software architecture, for which we expect strong software engineering backgrounds.

Also, we conducted our studies with students instead of software design practitioners. Hence, the participants involved in our experiments may not represent the general professional population of software engineering practitioners. While this limits us from generalizing our findings to other subjects (i.e., domain experts, professional software architects, industrial practitioners in the field), the differences between students and professional software developers in performing small tasks are generally very small (Höst et al. 2000). We, therefore, consider our findings as a basis to extend our study to a larger community of software engineering practitioners.

Another threat is that the population of professional software developers spans a larger age range than that of students. With recall abilities changing over time (Craik 2019), this limits generalization of our results to professional software developers of the same age range (between 20 and 30 years) as software engineering students and PhD students (as proposed in (Falessi et al. 2018)).

Moreover, the studies were conducted in educational contexts, i.e., contexts in which the students usually are evaluated and graded. This generally might have improved their performance (Hawthorne effect). However, as this applies to both groups, this does not affect our results.

Because our experiments were designed as single one-hour sessions, and because their context (sports) is popular and easily relatable, we can exclude threats regarding history or maturation. Nor could the participants have been affected by previous events of the experiment, as there were none.

Moreover, as we used the same two textual/graphical notations in all experiments, this limits the generalizability of our results to other textual or graphical representations, i.e., differently structured text or differently highlighted class diagrams. This, however, is a threat independent of the specific choice of representation and calls for studies deploying multiple (popular) representations, which in turn requires correctly identifying industrially relevant forms of representation and yields further threats to generalizability.

The use of a single case specification is a threat to the generalizability of the results. The size, topic, and complexity of the design case specification might affect the communication quality and the results of the comparison. This threat can be addressed by conducting replication studies with different design case specifications.

Another challenge to generalizability might arise from the constructs investigated, i.e., whether structured textual design documents and colored UML class diagrams actually are relevant to communicating design decisions in industry. While the use of UML in software design and engineering is well established in various domains (cf. (Liebel et al. 2014; Wortmann et al. 2019)), so is the use of textual documents to describe software designs (Casamayor et al. 2012; Wagner and Fernández 2015; Palomba et al. 2016). However, using a specific form of structured text for communicating design decisions limits generalizability to this form of text. For instance, in requirements engineering, there are different tools that support capturing textual requirements and design decisions using different textual representations (Cant et al. 2006), and using these might entail different effects.