Abstract

Software engineering (SE) research should be relevant to industrial practice. There have been regular discussions in the SE community on this issue since the 1980’s, led by pioneers such as Robert Glass. As we recently passed the milestone of “50 years of software engineering”, some recent positive efforts have been made in this direction, e.g., establishing “industrial” tracks in several SE conferences. However, many researchers and practitioners believe that we, as a community, are still struggling with research relevance and utility. The goal of this paper is to synthesize the evidence and experience-based opinions shared on this topic so far in the SE community, and to encourage the community to further reflect and act on research relevance. For this purpose, we have conducted a Multi-vocal Literature Review (MLR) of 54 systematically-selected sources (papers and non-peer-reviewed articles). Instead of relying on the individual opinions on research relevance mentioned in each source, the MLR aims to synthesize and provide a “holistic” view on the topic. The highlights of our MLR findings are as follows. The top three root causes of low relevance, discussed in the community, are: (1) Researchers having simplistic views (or wrong assumptions) about SE in practice; (2) Lack of connection with industry; and (3) Wrong identification of research problems. The top three suggestions for improving research relevance are: (1) Using appropriate research approaches such as action-research; (2) Choosing relevant (practical) research problems; and (3) Collaborating with industry. By synthesizing all the discussions on this important topic so far, this paper aims to encourage further discussions and actions in the community to increase our collective efforts to improve research relevance.
Furthermore, we raise the need for empirically-grounded and rigorous studies on the relevance problem in SE research, as carried out in other fields such as management science.

1 Introduction

Concerns about the state of the practical relevance of research are shared across all areas of science, and Software Engineering (SE) is no exception. A paper in the field of management sciences (Kieser et al. 2015) reported that: “How and to what extent practitioners use the scientific results of management studies is of great concern to scholars and has given rise to a considerable body of literature”. The topic of research relevance in science in general, also referred to as the “relevance problem”, is at least 50 years old, as there are papers dating back to the 1960’s, e.g., a paper having the following title: “The social sciences and management practices: Why have the social sciences contributed so little to the practice of management?” (Haire 1964).

David Parnas was one of the first to publish experience-based opinions about questionable relevance of SE research as early as in the 1980’s. In his 1985 paper (Parnas 1985), Parnas argued that: “Very little of [SE research] leads to results that are useful. Many useful results go unnoticed because the good work is buried in the rest”. In a 1993 IEEE Software paper (Potts 1993), Colin Potts wrote that: “as we celebrate 25 years of SE, it is healthy to ask why most of the research done so far is failing to influence industrial practice and the quality of the resulting software”.

Around the 25th anniversary of SE, Robert Glass published an IEEE Software paper (Glass 1994) entitled “The software-research crisis”. The paper argued that most research activities at the time were not (directly) relevant to practice. Glass also posed the following question: “What happens in computing and software research and practice in the year 2020?”. Furthermore, he expressed the following hope for the year 2020: “Both researchers and practitioners, working together, can see a future in which the wisdom of each group is understood and appreciated by the other” (Glass 1994).

In a 1998 paper (Parnas 1998), David Parnas said: “I have some concerns about the direction being taken by many researchers in the software community and would like to offer them my (possibly unwelcome) advice”. One of those pieces of advice was: “Keep aware of what is actually happening [in the industry] by reading industrial programs [resources]”. He also made the point that: “most software developers [in industry] ignore the bulk of our research”.

More recently, some critics have said that: “SE research suffers from irrelevance. Research outputs have little relevance to software practice” (Tan and Tang 2019), and that “practitioners rarely look to academic literature for new and better ways to develop software” (Beecham et al. 2014). SE education has also been criticized, e.g., “… SE research is divorced from real-world problems (an impression that is reinforced by how irrelevant most popular SE textbooks seem to the undergraduates who are forced to wade through them)” (Wilson 2019). Another team of researchers and practitioners wrote a joint blog post about SE research relevance in which they argued that (Aranda 2019): “Some [practitioners] think our field [SE] is dated, and biased toward large organizations and huge projects”.

We have now celebrated 50 years of SE (Ebert 2018), climaxing with the ICSE 2018 conference in Gothenburg, Sweden. Thus, it is a good time to reflect and ask to what extent the old critique of SE research relevance is still valid. Furthermore, we are close to 2020, i.e., the year by which Glass’ vision that “Software practice and research [would] work together” (Glass 1994) (for higher industrial relevance of SE research) was to be realized. But has this really happened on a large scale?

Glass had hoped that things would change (improve): “the gradual accumulation of enough researchers expressing the same view [i.e., the software-research crisis,] began to swing the field toward less arrogant and narrow, more realistic approaches” (Glass 1994). However, as this MLR study will reveal, it could be argued that perhaps we as a community have made only a small improvement in terms of research relevance. According to the panelists of an industry-academic panel at ICSE 2011, there is a “near-complete disconnect between software research and practice” (Wilson and Aranda 2019).

The argument about practical (ir)relevance of research is not specific to SE, and the issue has been discussed widely in other circles of science, e.g., (Heleta 2019; Rohrer et al. 2000; Slawson et al. 2001; Andriessen 2004; Desouza et al. 2005; Flynn 2008). An online article (Heleta 2019) reported that most of the work of academics is not shaping industry and the public sector. Instead, “their [academics] work is largely sitting in academic journals that are read almost exclusively by their peers”. Some of the paper titles on this subject, in other fields of science, look interesting and even bold, e.g., “Rigor at the expense of relevance equals rigidity” (Rohrer et al. 2000), “Which should come first: Rigor or relevance?” (Slawson et al. 2001), “Reconciling the rigor-relevance dilemma” (Andriessen 2004), “Information systems research that really matters: Beyond the IS rigor versus relevance debate” (Desouza et al. 2005), and “Having it all: Rigor versus relevance in supply chain management research” (Flynn 2008).

In summary, since as early as 1985, many wake-up calls have been published in the community to reflect on the relevance of SE research (Floyd 1985). While there have been some positive changes on the issue in recent years, e.g., establishing “industrial” tracks in several SE conferences, many believe we are still far from the ideal situation with respect to research relevance.

The goal of this paper is to synthesize the discussions and arguments in the SE community on the industrial relevance of SE research. To achieve that goal, we report a Multi-vocal Literature Review (MLR) on a set of 54 sources, 36 of which are papers from the peer-reviewed literature and 18 of which are sources from the gray literature (GL), e.g., blog posts and white papers. An MLR (Garousi et al. 2019) is an extended form of a Systematic Literature Review (SLR) which includes the GL in addition to the published (formal) literature (e.g., journal and conference papers). MLRs have recently increased in popularity in SE, as many such studies have recently been published (Garousi et al. 2019; Garousi and Mäntylä 2016a; Mäntylä and Smolander 2016; Lwakatare et al. 2016; Franca et al. 2016; Garousi et al. 2017b; Myrbakken and Colomo-Palacios 2017; Garousi et al. 2016a).

The contributions of this study are novel and useful since, instead of relying on the experience-based opinions mentioned in each of the papers in this area, our MLR collects and synthesizes the opinions from all the sources on this issue and thus provides a more “holistic” view on the subject. Similar SLRs have been published, each synthesizing the issue in a certain research discipline outside SE, e.g., (Tkachenko et al. 2017) reported an SLR of the issue in management and human-resource development, (Carton and Mouricou 2017) reported a systematic analysis of the issue in management science, and (Moeini et al. 2019) reported a review of the issue in the Information Systems (IS) community.

It is worthwhile to clarify the focus of this MLR paper. We focus in this work on research “relevance”, and not research “impact”, nor “technology transfer” of SE research. As we discuss in Section 2.3, while these concepts (terms) are closely related, they are not the same. Research relevance does not necessarily mean (result in) research impact or technology transfer. A paper / research idea, with high research relevance, has a higher potential for usage (utility) in industry, and could lead to a higher potential (chance) of research impact. The last two concepts (research utility and research impact) are parts of technology transfer phases (Brings et al. 2018).

The remainder of this paper is structured as follows. Background and a review of the related work are presented in Section 2. We present the design and setup of the MLR in Section 3. The results of the study are presented by answering two review questions (RQs) in Sections 4 and 5. In Section 6, we summarize the findings and discuss the recommendations. Finally, we conclude the paper in Section 7 and discuss directions for future work.

Before going in-depth in the paper, given the controversial nature of the topic of research relevance, the authors should clarify that they are not biased towards finding evidence that some or most of SE research is irrelevant. Instead, our objective in this paper is to synthesize the SE community’s discussions on this topic over the years. The synthesized evidence may or may not support the hypothesis that some or most of SE research is relevant or irrelevant. Testing such a hypothesis is not the focus of our study.

2 Background and related work

To set the stage for the rest of the paper, we first use the literature to provide definitions of the following important terms: research relevance, utility, applicability, impact, and rigor, and we then characterize the relationships among them. Then, in terms of related work, we review the literature on research relevance in other disciplines. Finally, we provide a review on the current state of affairs between SE practice and research (industry versus academia), the understanding of which is important to discuss research relevance in the rest of the paper.

Note: To ensure a solid foundation for our paper, we provide a comprehensive background and related work section. If the reader thinks s/he is familiar with precise definitions of research relevance and its related important concepts (research utility, applicability, impact, and rigor), s/he can bypass Section 2 and go directly to Section 3 or 4, to read the design aspects or results of our MLR study.

2.1 Understanding the concepts related to research relevance: Research utility, applicability, impact, and rigor

To ensure precision in our discussions in this paper, it is important to clearly define the terminology used in the context of this work, which includes research “relevance” and its related terms, e.g., research “utility”, “impact” and “rigor”. We first review the definitions of these terms in the literature. We then synthesize a definition for research relevance in SE.

2.1.1 Research utility and usefulness

Research relevance closely relates to “utility” of research. According to the Merriam-Webster Dictionary, “utility” is defined as “fitness for some purpose or worth to some end” and “something useful or designed for use”.

When research is useful and could provide utility to practitioners, it is generally considered relevant (Hemlin 1998). There have been studies assessing utility of research. For example, (Hemlin 1998) put forward several propositions for utility evaluation of academic research, including: (1) utility is dependent not only on academic research supply of knowledge and technology, but equally importantly on demand from industry; and (2) the framework for evaluating research utility must take into consideration differences between research areas.

A 1975 paper in medical sciences entitled “Notes on improving research utility” (Averch 1975) argued that: “Researchers are fond of saying that all information is relevant and that more information leads to better outcomes”. But if practitioners’ resources (such as time) are limited, practitioners are really interested in the information that provides the most utility to them. When practitioners find most research papers of low utility, they stop paying attention to research papers in general.

2.1.2 Research impact

Andreas Zeller, an active SE researcher, defined “impact” as: “How do your actions [research] change the world?” (Zeller 2019). In more general terms, research impact often has two aspects: academic impact and industrial impact. Academic impact is the impact of a given paper on other future papers and activities of other researchers. It is often measured by citations and is studied in bibliometric studies, e.g., (Eric et al. 2011; Garousi and Mäntylä 2016b). The higher the number of citations to a given paper, the higher its academic impact.

Industrial impact is, however, harder to measure since it is not easy to clearly determine how many times and to what extent a given paper has been read and its ideas have been adopted by practitioners. The “Impact” project (Osterweil et al. 2008), launched by ACM SIGSOFT, aimed to demonstrate the (indirect) impact of SE research on industrial SE practices through a number of articles by research leaders, e.g., (Emmerich et al. 2007; Rombach et al. 2008). Industrial impact of a research paper (irrespective of how difficult it is to assess) could be an indicator of its utility and relevance.

2.1.3 Research rigor

Rigor in research refers to “the precision or exactness of the research method used” (Wohlin et al. 2012a). Rigor can also mean: “the correct use of any method for its intended purpose” (Wieringa and Heerkens 2006). In the literature, relevance is often discussed together with research “rigor”, e.g., (Ivarsson and Gorschek 2011; Rohrer et al. 2000; Slawson et al. 2001). For example, there is a paper with this title: “Reconciling the rigor-relevance dilemma” (Andriessen 2004). A few researchers have made bold statements in this context, e.g., “Until relevance is established, rigor is irrelevant. When relevance is clear, rigor enhances it” (Keen 1991), denoting that there is little value in highly-rigorous, but less-relevant research.

In “Making research more relevant while not diminishing its rigor”, Robert Glass mentioned that: “Many believe that the two goals [rigor and relevance] are almost mutually incompatible. For example, rigor tends to demand small, tightly controlled studies, whereas relevance tends to demand larger, more realistic studies” (Glass 2009).

To assess different combinations of research rigor and relevance, a paper in psychology (Anderson et al. 2010) presented a matrix model, as shown in Table 1. Where methodological rigor is high, but practical relevance is low, the so-called “pedantic” science is generated. It is the belief of the authors and many other SE researchers (e.g., see the pool of papers in Section 3.7) that most SE papers fall in this category. These are studies that are rigorous in their design and analytical sophistication, yet fail to address the important issue of relevance. Such research usually derives its questions from theory or from existing published studies, “the sole criterion of its worth being the evaluation of a small minority of other researchers who specialize in a narrow field of inquiry” (Anderson et al. 2010).

The quadrant where both practical relevance and methodological rigor are high is termed pragmatic science. Such work addresses questions of applied relevance and does so in a methodologically robust manner. We believe that this particular form of research is the form that should dominate our discipline, an opinion which is also stated in other fields, e.g., psychology (Anderson et al. 2010).

Research representing popular science is highly relevant but lacks methodological rigor. (Anderson et al. 2010) elaborated that popular science “is typically executed where fast-emerging business trends or management initiatives have spawned ill-conceived or ill-conducted studies, rushed to publication in order to provide a degree of legitimacy and marketing support”. Papers in trade magazines in SE and computing often fall in this category. We also believe that most non-peer-reviewed gray literature (GL) materials, such as blog posts and white papers written by SE practitioners, often fall under popular science. Given the popularity of online materials and sources among SE practitioners, we can observe that they often find such materials useful for their problems and information needs.
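The combinations of rigor and relevance described above can be written down as a small lookup. The following is a minimal sketch, assuming that rigor and relevance have each already been judged as simply high or low (how to make that judgment is not addressed here). The function name `classify_science` is hypothetical, and the label returned for the low-rigor, low-relevance cell is a placeholder, since that cell is not named in the text above.

```python
def classify_science(rigor_high: bool, relevance_high: bool) -> str:
    """Map a (rigor, relevance) judgment to the category named in the text.

    Illustrative only: the boolean inputs are an assumption, and the label
    for the low/low cell is a placeholder, not a term from the source.
    """
    if rigor_high and relevance_high:
        return "pragmatic science"   # high rigor, high relevance
    if rigor_high:
        return "pedantic science"    # high rigor, low relevance
    if relevance_high:
        return "popular science"     # low rigor, high relevance
    return "low rigor, low relevance (not named above)"


if __name__ == "__main__":
    # A rigorous study that does not address practical relevance.
    print(classify_science(rigor_high=True, relevance_high=False))
```

Treating each axis as binary mirrors the matrix layout; a graded scale on either axis would need a different representation.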

2.2 Research relevance

2.2.1 Two aspects of research relevance: Academic (scientific) relevance and industrial (practical) relevance

According to the Merriam-Webster Dictionary, something is relevant if it has “significant and demonstrable bearing on the matter at hand”.

Similar to research “impact” (as discussed above), research relevance has two aspects in general: academic (scientific) relevance (Coplien 2019) and practical (industrial) relevance. A paper in the field of Management Accounting focused on this very distinction (Rautiainen et al. 2017). Academic relevance of a research paper or a research project is the degree of its relevance and the value provided by it for the specific academic field (Rautiainen et al. 2017). For example, SE papers which propose interesting insights or frameworks, or which formalize certain SE topics, in ways that are useful to other researchers in future studies are academically relevant and will have academic impact, even if the subject of those studies is not directly related to SE practice. For example, meta-papers such as the work reported in the current paper (an MLR study), studies in the scope of SE “education research”, and various guideline papers in empirical SE such as (Runeson and Höst 2009; Kitchenham and Charters 2007a; Petersen et al. 2015) are research undertakings which are academically relevant, but are not expected to have industrial (practical) relevance.

On the other hand, practical relevance of a research paper or a research project is the degree of its relevance and potential value for SE organizations and industry. Since this paper focuses on practical relevance, in the rest of this paper, when we mention “relevance”, we refer to industrial (practical) relevance. A good definition for practical relevance was offered in a management science paper (Kieser et al. 2015): “Broadly speaking, research results can be said to be practically relevant if they influence management practice; that is, if they lead to the change, modification, or confirmation of how managers think, talk, or act”.

Similar to the matrix model of Table 1 (Anderson et al. 2010), which showed the combinations of research rigor and relevance, we design a simple matrix model illustrating academic relevance versus practical relevance, as shown in Table 2.

In quarter Q1, we find the majority of academic papers in which the ideas are rigorously developed to work in lab settings and practical considerations (such as scalability and cost-effectiveness of a software testing approach) are not considered. If an industrial practitioner or company gets interested in the approaches or ideas presented in such papers, it would be very hard or impossible to apply the proposed approaches. For example, the study in (Arcuri 2017) argued that many model-based testing papers in the literature incur more costs than benefits, when one attempts to apply them, and it went further to state that: “it is important to always state where the models [to be used for model-based testing] come from: are they artificial or did they already exist before the experiments?”

Quarter Q2 in Table 2 is the case of the majority of technical reports in industry, which report and reflect on approaches that work in practice, but are often shallow in terms of theory behind the SE approaches. In quarter Q3, we have papers and research projects which are low on both academic and practical relevance, and thus have little value from either aspect.

In quarter Q4, we find papers and research projects which are high on both academic and practical relevance, and are conducted using rigorous (and often empirical) SE approaches. We consider such undertakings highly valuable, and they should be the ideal goal for most SE research activities. Examples of such research programs and papers are empirical studies of TDD in practice, e.g., (Fucci et al. 2015; Bhat and Nagappan 2006), and many other papers which are published in top-quality SE venues, such as ICSE, IEEE TSE and Springer’s Empirical Software Engineering journal, e.g., (Zimmermann et al. 2005). Many of the top-quality papers published by researchers working in corporate research centers also fall in this category, such as a large number of papers from Microsoft Research, e.g., (Johnson et al. 2019; Kalliamvakou et al. 2019), and successful applications of search-based SE in Facebook Research (Alshahwan et al. 2018). Other examples are patents filed on practical SE topics, e.g., a patent on combined code searching and automatic code navigation filed by researchers working in ABB Research (Shepherd and Robinson 2017).

2.2.2 Value for both aspects of research relevance: Academic and practical relevance

There is obviously value for both aspects of research relevance: academic and practical relevance. Papers that have high academic relevance are valuable since the materials presented in them (e.g., approaches, insights and frameworks) will benefit other researchers in future studies, e.g., various guideline papers in empirical SE such as (Runeson and Höst 2009; Kitchenham and Charters 2007a; Petersen et al. 2015) have been cited many times and have helped many SE researchers to better design and conduct empirical SE research.

Papers that have high practical relevance can also be valuable since the materials presented in them have high potential to be useful for and be applied by practitioners. Furthermore, such papers can help other SE researchers better understand industrial practice, thus stimulating additional relevant papers in the future.

2.2.3 Dimensions of practical relevance of research

A paper in the Information Systems (IS) domain (Benbasat and Zmud 1999) stated that “research in applied fields has to be responsive to the needs of business and industry to make it useful and practicable for them”. The study presented four dimensions of relevance in research which deal with the content and style of research papers, as shown in Table 3 (Benbasat and Zmud 1999). As we can see, the research topic (problem) is one of the most important aspects. The research topic undertaken by a researcher should address real challenges in industry, especially in an applied field such as SE. It should also be applicable (implementable) and consider current technologies. The study (Benbasat and Zmud 1999) also includes writing style as a dimension of relevance. However, it is the opinion of the authors that, while SE papers should be written in a way that is easily readable and understandable by professionals, writing style should not be included as a core dimension of relevance. On the other hand, we believe that the first three dimensions in Table 3 are important and will include them in our definition of relevance at the end of this sub-section.

In a paper in management science (Toffel 2016), Toffel writes: “I define relevant research papers as those whose research questions address problems found (or potentially found) in practice and whose hypotheses connect independent variables within the control of practitioners to outcomes they care about using logic they view as feasible”. Toffel further mentioned that: “To me, research relevance is reflected in an article’s research question, hypotheses, and implications”. For a researcher embarking on a research project (or a paper) that aims to be relevant to practitioners, Toffel suggested proceeding with the project (paper) only if the researcher can answer “yes” to all three of the following questions: (1) Is the research question novel to academics (academic novelty/relevance)? (2) Is the research question relevant to practice? and (3) Can the research question be answered rigorously? Finally, Toffel believes that relevant research should articulate implications that encourage practitioners to act based on the findings. Researchers should therefore state clearly how their results should influence practitioners’ decisions, using specific examples when possible and describing the context under which the findings are likely to apply.

“Applicability” is another term that might be considered related to or even a synonym of “relevance”. Merriam-Webster defines applicability as “the state of being pertinent”, with “relevance” listed as a synonym. “Pertinent” is defined as “having to do with the matter at hand”, which is essentially the same as the definition of “relevance”. While “relevance” and “applicability” are synonyms in terms of linguistics (terminology meanings), applicability is one dimension of research relevance (Benbasat and Zmud 1999), as shown in Table 3. A paper could address real challenges, but it may miss considering realistic assumptions in terms of SE approaches (Parnin and Orso 2011), or applying it may incur more costs than benefits (“cure worse than the disease”), e.g., (Arcuri 2017) argued that many model-based testing papers do not consider this important issue by stating that: “it is important to always state where the models come from: are they artificial or did they already exist before the experiments?”

The work by Ivarsson and Gorschek (Ivarsson and Gorschek 2011) is perhaps the only analytical work in SE which proposed a scoring rubric for evaluating relevance. For the industrial relevance of a study, they argued that the realism of the environment in which the results are obtained influences the relevance of the evaluation. Four aspects were considered in evaluating the realism of evaluations: (1) Subjects (used in the empirical study); (2) Context (industrial or lab setting); (3) Scale (industrial scale, or toy example); and (4) Research method. A given SE study (paper) would be scored as either contributing to relevance (score = 1) or not contributing to relevance (score = 0) w.r.t. each of those factors, and the sum of the values would be calculated using the rubric. However, in the opinion of the current paper authors, a major limitation (weakness) of that rubric (Ivarsson and Gorschek 2011) is that it does not include addressing real challenges and applicability, which are two important dimensions of relevance.
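The scoring scheme described above (a 0 or 1 for each of the four aspects, summed) can be sketched as follows. This is an illustrative sketch only: the class and function names are hypothetical, and the criteria for deciding whether an aspect contributes to relevance belong to the original rubric and are not reproduced here, so each judgment is simply passed in as a boolean by the assessor.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RealismAssessment:
    """Assessor's judgment per aspect: True if it contributes to relevance.

    Hypothetical container; field names follow the four aspects listed above.
    """
    subjects: bool
    context: bool
    scale: bool
    research_method: bool


def relevance_score(assessment: RealismAssessment) -> int:
    """Sum the four binary aspect scores (1 = contributes, 0 = does not)."""
    aspects = (
        assessment.subjects,
        assessment.context,
        assessment.scale,
        assessment.research_method,
    )
    return sum(int(contributes) for contributes in aspects)


if __name__ == "__main__":
    # Example: only the research method is judged to contribute to relevance.
    example = RealismAssessment(
        subjects=False, context=False, scale=False, research_method=True
    )
    print(relevance_score(example))  # 1
```

The resulting score ranges from 0 to 4. As noted above, it reflects only the realism of the evaluation and says nothing about whether the study addresses real challenges or is applicable.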

2.2.4 Our definition of practical relevance for SE research

We use the above discussions from the literature to synthesize and propose dimensions of relevance in SE research, as shown in Table 4. We found two particular sources in the literature (Benbasat and Zmud 1999; Toffel 2016) valuable, as they provided solid foundations for dimensions of relevance, and we have based our suggested dimensions on them: (1) Focusing on real-world problems; (2) Applicable (implementable); and (3) Actionable implications.

We should clarify the phrase “(presently or in future)” in Table 4, as follows. If the research topic or research question(s) of a study aims at improving SE practices and/or addressing problems, “presently” found or seen in practice, we would consider that study to have practical relevance w.r.t. “present” industrial practices. However, it is widely accepted that, just like any research field, many SE researchers conduct “discovery” (ground-breaking) type of research activities, i.e., research which is not motivated by the “current” challenges in the software industry, but rather presenting ground-breaking new approaches to SE which could or would possibly improve SE practices in future, e.g., research on automated program repair which took many years to be gradually used by industry (Naitou et al. 2018). In such cases, the research work and the paper would be considered of practical relevance, with the notion of “future” focus, i.e., research with potential practical relevance in future. In such cases, research may take several years to reach the actual practice, and then it would become practically relevant. Note that research targeting plausible problems of the future might still benefit from extrapolating needs from current SE practice.

In essence, we can say that there is a spectrum for practical relevance instead of a binary (0 or 1) view of it, i.e., it is not that a given SE paper/project simply has zero practical relevance. Instead, we can argue that a given paper/project may have potential practical relevance, or may even only have the potential to become practically relevant, as in the case of discovery-type SE research activities that “may” lead to ground-breaking findings. A classic view on the role of research (including SE research) is to explore directions that industry would not pursue, because these directions would be too speculative, too risky, and quite far away from monetization. This notion of research entails that a large ratio of research activities would have little to no impact in the sense that they will not lead to a breakthrough. However, we argue that by ensuring at least partial relevance, SE researchers would ensure that in case they discover a ground-breaking (novel) SE idea, it would have higher chances of impact on practice and society in general. Our analysis in the next sub-section (Fig. 1) will show that higher potential relevance would have higher chances of impact.

Also related to the above category of research emphasis is the case of SE research projects which start with full or partial industrial funding, whose research objective is to explore new approaches to “do things”, e.g., developing novel / ground-breaking software testing approaches for AI software. When industrial partners are involved in a research project, the research direction and papers coming out of such projects would, in most cases, be of practical relevance. But one can find certain “outlier” cases in which certain papers from such projects have diverged from the project’s initial focus and have explored and presented topics/approaches which are of low practical relevance.

Inspired by a quote from (Toffel 2016), as shown in Table 4, we divide the applicability dimension (second item in Table 4) into four sub-dimensions: (1) considering realistic inputs/constraints for the proposed SE technique; (2) controllable independent variables; (3) outcomes which practitioners care about; and (4) feasible and cost-effective approaches (w.r.t. cost-benefit analysis).

We believe that, in SE, relevant research usually satisfies the three dimensions in Table 4 and takes either of the following two forms: (1) picking a concrete industry problem and solving it; or (2) working on a topic that meets the dimensions of relevance in Table 4 using systems, data or artifacts which are similar to industry grade, e.g., working on open-source software systems.

2.2.5 Spectrum of research relevance according to types and needs of software industry

It is clear that there are different types (application domains) in the software industry, e.g., from firms / teams developing software for safety-critical control systems such as airplanes, to companies developing enterprise applications such as Customer Relationship Management (CRM) systems, to mobile applications and games software. The nature and needs of SE activities and practices in each of these domains are quite diverse, and one can think of this as a “spectrum” of SE needs and practices which require a diverse set of SE approaches, e.g., testing airplane control software would be very different from testing an entertainment mobile app. Thus, when assessing the practical relevance of a given SE research idea or paper, one should consider the type of domain and the contextual factors (Briand et al. 2017a; Petersen and Wohlin 2009a) under which the SE research idea would be applied. For example, a heavy-weight requirements engineering approach may be too much for developing an entertainment mobile app, and thus not very relevant (or cost effective), but it may be useful and relevant for developing airplane control software.

This exact issue has been implicitly or explicitly discussed in most of the studies included in our MLR study (Section 3). For example, our pool of studies included a 1992 paper, with the following interesting title: “Formal methods: Use and relevance for the development of safety-critical systems” (Barroca and McDermid 1992). The first sentence of its abstract reads: “We are now starting to see the first applications of formal methods to the development of safety-critical computer-based systems”. In the abstract of the same paper (Barroca and McDermid 1992), the authors present the critique that: “Others [other researchers] claim that formal methods are of little or no use - or at least that their utility is severely limited by the cost of applying the techniques”.

Taking a step back and looking at the bigger picture of practical relevance of SE research, by a careful review of the sources included in our pool of studies (Section 3), we observed that while the authors of some of the studies, e.g., (Barroca and McDermid 1992; Malavolta et al. 2013), have raised the above issue of considering the type of software industry when assessing research relevance, the other authors have looked at the research relevance issue, from a “generic” point of view, i.e., providing their critical opinions and assessment of SE research relevance w.r.t. an “overall picture” of the software industry. Since the current work is our first effort to synthesize the community’s voice on this topic, based on publications and gray literature from 1985 and onwards, we have synthesized all the voices on the SE research relevance. It would be worthwhile to conduct future syntheses on the issue of research relevance in the pool of studies w.r.t. the types and domains of software systems.

2.3 Relationships among relevance and the above related terms

By reviewing the literature (above) and our own analysis, we characterize the inter-relationships among these related terms using a causal-loop (causation) diagram (Anderson and Johnson 1997) as shown in Fig. 1. Let us note that understanding such inter-relationships is important when discussing research relevance in the rest of this paper.

Higher relevance of a research endeavor would often increase its applicability, usability, and its chances of usage (utility) in industry, and those in turn will increase its chances for (industrial) usefulness and impact. As shown in Fig. 1, the main reward metric of academia has been academic impact (citations) which, as we will see in Section 3, is one main reason why SE research, like almost all other research disciplines (as reviewed in Section 2.2.1), unfortunately suffers from low relevance.

A researcher may decide to work on industrially-relevant or -important topics and/or on “academically-hot” (“academically-challenging”) topics. It should be noted that the above options are not mutually exclusive, i.e., one can indeed choose a research topic which is industrially-relevant and important and academically-challenging.

In our experience, and also according to the literature (Section 2.4), aligning these two (selecting a topic that is both industrially-relevant and academically-challenging) is unfortunately often hard (Glass 2009; Andriessen 2004; Garousi et al. 2016b). In a book entitled Administrative Behavior (Simon 1976), Herbert Simon used the metaphor of “pendulum swings” to describe the trade-off between the two aspects and compared the attempt to combine research rigor and relevance to an attempt to mix oil with water.

Thus, it seems that relevance and rigor often negatively impact each other, i.e., the more practically relevant a research effort is, the less rigorous it may become. However, this is not a hard rule and researchers can indeed conduct research that is both relevant and rigorous. For example, one of the authors collaborated with an industrial partner to develop and deploy a multi-objective regression test selection approach in practice (Garousi et al. 2018). A search-based approach based on genetic algorithms was “rigorously” developed. The approach was also clearly practically-relevant since it was developed using the action-research approach, based on concrete industrial needs. Furthermore, the approach had high industrial “impact” since it was successfully deployed and utilized in practice.

As shown in Fig. 1, our focus in this MLR paper is on research “relevance”, and not research “impact”, nor “technology transfer” of SE research. As it is depicted in Fig. 1, while these concepts (terms) are closely related, they are not the same. Research relevance does not necessarily mean (result in) research impact or technology transfer. A paper / research idea, with high research relevance, has a higher potential for usage (utility) in industry, and that could lead to a higher potential (chance) of research impact. The last two concepts (research utility and research impact) are parts of technology transfer phases (Brings et al. 2018).

When a researcher chooses to work on an “academically-hot” topic, it is possible to do it with high rigor. This is often because many “simplifications” must be made to formulate (model) the problem from its original industrial form into an academic form (Briand et al. 2017b). However, when a researcher chooses to work on an industrially-relevant topic, especially when in the form of an Industry-Academia Collaboration (IAC) (Garousi et al. 2016b; Wohlin et al. 2012b; Garousi et al. 2017c), simplifications cannot be (easily) made to formulate the problem, and thus often times, it becomes challenging to conduct the study with high rigor (Briand et al. 2017b). When seeing a paper which is both relevant and rigorous, it is important to analyze the “traits” in the study (research project) from inception to dissemination which contribute to its relevance and rigor, and how, as discussed next.

In the opinion of the authors, one main factor which could lead to high research relevance is active IAC (Garousi et al. 2016b; Wohlin et al. 2012b; Garousi et al. 2017c). One of the most important aspects in this context is the collaboration mode (style), or degree of closeness between industry and academia, which could have important influence on relevance. One of the best models in this context is the one proposed by Wohlin (Wohlin 2013b), as shown in Fig. 2. There are five levels in this model, which can also be seen as a “maturity” level: (1): not in touch, (2): hearsay, (3): sales pitch, (4): offline, and (5): one team. In levels 1–3, there is really no IAC, since researchers working in those levels only identify a general challenge of the industry, develop a solution (often too simplistic and not considering the context (Briand et al. 2017b)), and then (if operating in level 3) approach the industry to try to convince them to try/adopt the solution. However, since such techniques are often developed with a lot of simplifications and assumptions which are often not valid in industry (Briand et al. 2017b; Yamashita 2015), they often result in low applicability in practice and thus low relevance in industry.

Fig. 2 Five (maturity) levels of closeness between industry and academia (source: (Wohlin 2013b))

The Need for Speed (N4S) project, which was a large industry-academia consortium in Finland (n4s.dimecc.com/en) and ran between 2014 and 2017, is an example of level 5 collaboration. An approach for continuous and collaborative technology transfer in SE was developed in this four-year project. The approach supported “real-time” industry impact, conducted continuously and collaboratively with industry and academia working as one team (Mikkonen et al. 2018).

Referring back to the “traits” in the study (research project) from inception to dissemination which contribute to its relevance, we have seen first-hand that if a paper comes out of the collaboration mode in level 5 (“one team”), it has good potential to be highly relevant. Of course, when conducting highly relevant research, one should not overlook “rigor”, since there is a risk of conducting highly relevant research that lacks methodological rigor (Anderson et al. 2010) (referred to as “popular science”). To ensure research rigor, SE researchers should follow established research methods in empirical SE (Runeson and Höst 2009; Easterbrook et al. 2008; Runeson et al. 2012).

2.4 Related work

We review next the literature on research relevance in other disciplines, and then the existing systematic review studies on the topic of relevance in SE research.

2.4.1 An overview of the “relevance” issue in science in general

To find publications on research relevance in other disciplines, we conducted database searches in the Scopus search engine (www.scopus.com) using the following keywords: “research relevance”, “relevance of research”, and “rigor and relevance”. We observed that the topic of research relevance has indeed been widely discussed in other disciplines, as our searches returned several hundred papers. We discuss below a selection of the most relevant papers identified by our literature review.

The Information Systems (IS) community seems to be particularly engaged in the relevance issue, as we saw many papers in that field. A paper published in the IS community (Srivastava and Teo 2009) defined a quantitative metric called “relevance coefficient”, which was based on the four dimensions of relevance as we discussed in Section 2.1.4 (defined in (Benbasat and Zmud 1999)). The paper then assessed a set of papers in three top IS journals using that metric.

A “relevancy manifesto” for IS research was published (Westfall 1999) in 1999 in the Communications of the AIS (Association for Information Systems), one of the top venues in IS. The author argued that: “Many practitioners believe academic IS research is not relevant. I argue that our research, and the underlying rewards system that drives it, needs to respond to these concerns”. The author then proposed “three different scenarios of where the IS field could be 10 years from now [1999]”:

Scenario 1: Minimal adaptation. The IS field is shrinking, largely due to competition from newly established schools of information technology.

Scenario 2: Moderate adaptation.

Scenario 3: High adaptation. The IS field is larger than before, growing in proportion to the demand for graduates with IT skills.

The author said that scenario 1 is the “do nothing” alternative. Scenarios 2 and 3 represent substantial improvements, but they would not occur unless the community acts vigorously to improve the position. We are not sure if any recent study has looked at those three different scenarios to assess which one has been the case in recent years.

A study (Hamet and Michel 2018) in management science argued that: “The relevance literature often moans that the publications of top-ranked academic journals are hardly relevant to managers, while actionable research struggles to get published”. A 1999 paper in the IS community (Lee 1999) took a philosophical view on the issue and argued that: “It is not enough for senior IS researchers to call for relevance in IS research. We must also call for an empirically grounded and rigorous understanding of relevance in the first place”.

Another paper in the IS community (Moody 2000) mentioned that, as an applied discipline, IS will not achieve legitimacy by the rigor of its methods or by its theoretical base, but by being practically useful. “Its success will be measured by its contribution to the IS profession, and ultimately to society” (Moody 2000). It further talked about “the dangers of excessive rigor”, since excessive rigor acts as a barrier to communication with the intended recipients of research results, i.e., practitioners, and thus leads to low relevance. The author believed that laboratory experiments are in fact a poor substitute for testing ideas in organizational contexts using real practitioners. “However, it is much more difficult to do ‘rigorous’ research in a practical setting” (Moody 2000). The author also believed that most researchers focus on problems that can be researched (using “rigorous” methods) rather than those problems that should be researched. In the SE community, Wohlin has referred to this phenomenon as “research under the lamppost” (Wohlin 2013a).

In other fields, there are even books on relevance of academic conferences, e.g., a book entitled “Impacts of mega-conferences on the water sector” (Biswas and Tortajada 2009), which reported that: “…except for the UN Water Conference, held in Argentina in 1977, the impacts of the subsequent mega-conferences have been at best marginal in terms of knowledge generation and application, poverty alleviation, environmental conservation and /or increasing availability of investments funds for the water sector”.

In the literature on research relevance, we also found a few papers from sub-areas of Computer Science other than SE, e.g., in the fields of data-mining (Pechenizkiy et al. 2008), Human-Computer Interaction (HCI) (Norman 2010), and decision-support systems (Vizecky and El-Gayar 2011). Entitled “Towards more relevance-oriented data mining research”, (Pechenizkiy et al. 2008) argued that the data-mining community has achieved “fine rigor research results” and that “the time when DM [data-mining] research has to answer also the practical expectations is fast approaching”.

Published in ACM Interactions magazine, one of the top venues for HCI, (Norman 2010) started with this phrase: “Oh, research is research, and practice is practice, and never the twain shall meet”. The paper argued that: “Some researchers proudly state they are unconcerned with the dirty, messy, unsavory details of commercialization while also complaining that practitioners ignore them. Practitioners deride research results as coming from a pristine ivory tower—interesting perhaps, but irrelevant for anything practical”. Thus, it seems that the issues of research relevance discussed in the SE community also exist in the HCI community. (Norman 2010) proposed an interesting idea: “Between research and practice a third discipline must be inserted, one that can translate between the abstractions of research and the practicalities of practice. We need a discipline of translational development. Medicine, biology, and the health sciences have been the first to recognize the need for this intermediary step through the funding and development of centers for translational science”.

Overall, the extensive discussion on the relevance issue (even referred to as the relevance “problem” (Kieser et al. 2015)) in science in general has been ongoing for more than 50 years, i.e., there are papers published back in the 1960’s, e.g., a paper with the following title: “The social sciences and management practices: Why have the social sciences contributed so little to the practice of management?” (Haire 1964). The papers on the issue have mostly been based on opinion, experience, or empirical data (survey studies). Some studies, such as (Fox and Groesser 2016), have also used related theories such as Relevance Theory (Sperber and Wilson 2004), Cognitive Dissonance Theory, and Cognitive Fit Theory in their discussions on this topic. Such studies have aimed at broadening the framing of the issue and providing greater specificity in the discussion of factors affecting relevance.

Many studies have also proposed recommendations (suggestions) for improving research relevance (Hamet and Michel 2018; Lee 1999; Vizecky and El-Gayar 2011), e.g., changes in the academic system (e.g., hiring and tenure committees assigning values for efforts beyond just papers), changes in the research community (e.g., journals appreciating empirical/industrial studies), and changes to the funding system (e.g., funding agencies encouraging further IAC).

Furthermore, it seems that some members of each scientific community are at the forefront as “activists” on the issue, while other researchers still tend to put more emphasis on rigor and do not consider relevance a major issue (Kieser et al. 2015). There are even reports indicating that the arguments on the issue have sometimes become “heated”, e.g., “… some feelings of unease are to be expected on the part of those scholars whose work is conducted without direct ties to practice” (Hodgkinson and Rousseau 2009). Some researchers (e.g., (Kieser and Leiner 2009)) even think that the “rigor–relevance gap” is “unbridgeable”. For example, Kieser and Leiner (Kieser and Leiner 2009) said: “From a system theory perspective, social systems are self-referential or autopoietic, which means that communication elements of one system, such as science, cannot be authentically integrated into communication of other systems, such as the system of a business organization”. Such studies have sometimes led to follow-up papers which have criticized such views, e.g., (Hodgkinson and Rousseau 2009).

2.4.2 Existing review studies on the issue of research relevance in other fields

In addition to the “primary” studies on this issue, many review studies (e.g., systematic reviews) have also been published in the context of other fields. We did not intend to conduct a systematic review of those review studies, i.e., a tertiary study. Instead, we summarize a few selected review studies. Table 5 lists the selected studies, and we briefly discuss each of them below.

A 1999 systematic mapping paper mapped the research utilization field in nursing (Estabrooks 1999). It was motivated by the fact that: “the past few years [before 1999] have seen a surge of interest in the field of research utilization”. The paper offered critical advice on the issue in that field, e.g., “Much of it [the literature in this area] is opinion and anecdotal literature, and it has a number of characteristics that suggest the profession has not yet been able to realize sustained initiatives that build and test theory in this area”.

A review paper in management science (Kieser et al. 2015) focused on “turning the debate on relevance into a rigorous scientific research program”. It argued that “in order to advance research on the practical relevance of management studies, it is necessary to move away from the partly ideological and often uncritical and unscientific debate on immediate solutions that the programmatic literature puts forward and toward a more rigorous and systematic research program to investigate how the results of scientific research are utilized in management practice”. The paper (Kieser et al. 2015) reviewed 287 papers focusing on the relevance issue in management science, and synthesized the reasons for the lack of relevance and the solutions suggested in the literature to improve research relevance. The current MLR is a similar study in the context of SE. The paper (Kieser et al. 2015) organized the literature of the so-called “relevance problem” in management science into several “streams of thought”, outlining the causes of the relevance problem that each stream identifies and the solutions it suggests, and summarizing the criticism it has drawn. Those “streams of thought” include: popularization view, institutional view, action-research, Mode-2 research, design science, and evidence-based management. The popularization view is considered the most traditional approach in the programmatic relevance issue. Proponents of this view are concerned with how existing academic knowledge can be transferred to practitioners. They regard the inaccessibility of research and the use of academic jargon as the most important barriers to relevance.

An SLR of the rigor-relevance debate in top-tier journals in management research was reported in (Carton and Mouricou 2017). Quite similar to (Kieser et al. 2015), it identified four typical positions on rigor and relevance in management research: (1) gatekeepers’ orthodoxy, (2) collaboration with practitioners, (3) paradigmatic shift, and (4) refocusing on common good. It argued that, although contradictory, these positions coexist within the debate and are constantly being repeated in the field. The paper linked the findings to the literature on scientific controversies and discussed their implications for the rigor-relevance debate (Carton and Mouricou 2017).

A literature review of the research–practice gap in the fields of management, applied psychology, and human-resource development was reported in (Tkachenko et al. 2017). The paper synthesized the community’s discussions on the topic across the above three fields into several themes, e.g., the researching practitioner and the practicing researcher, and engaged scholarship.

A 2019 paper (Moeini et al. 2019) reported a descriptive review of the practical relevance of research in “IS strategy”, which is a sub-field of IS. This sub-field deals with the use of IS to support business strategy (Moeini et al. 2019). The review paper presented a framework of practical relevance with the following dimensions: (1) potential practical relevance, (2) relevance in topic selection, (3) relevance in knowledge creation, (4) relevance in knowledge translation, and (5) relevance in knowledge dissemination.

2.4.3 Existing review studies on the topic of research relevance in SE

There has been “meta-literature”, i.e., review (secondary) studies, on the research relevance in sub-topics of SE, e.g., (Dybå and Dingsøyr 2008; Paternoster et al. 2014; Munir et al. 2014; Doğan et al. 2014). The work reported in (Dybå and Dingsøyr 2008) was an SLR on empirical studies of Agile SE. One of the studied aspects was how useful the reported empirical findings are to the software industry and the research community, i.e., it studied both academic and industrial relevance.

SLR studies such as (Paternoster et al. 2014; Munir et al. 2014; Doğan et al. 2014) used a previously-reported method for evaluating rigor and industrial relevance in SE (Ivarsson and Gorschek 2011), as discussed in Section 2.1, which includes the following four aspects for assessing relevance: subjects, context, scale, and research method. As discussed in Section 2.1.4, one major limitation (weakness) of that rubric (Ivarsson and Gorschek 2011) is that it does not include: addressing real challenges and applicability, which are two important dimensions of relevance, in our opinion.

For example, the SLR reported in (Doğan et al. 2014) reviewed a pool of 58 empirical studies in the area of web application testing, using the industrial relevance and rigor rubrics, as proposed in (Ivarsson and Gorschek 2011). The pair-wise comparison of rigor and relevance for the analyzed studies is shown as a bubble-chart in Fig. 3. The SLR (Doğan et al. 2014) reported that one can observe quite a reasonable level of rigor and low to medium degree of relevance, in this figure.

Fig. 3 Rigor versus relevance of the empirical studies as analyzed in the SLR on web application testing (Doğan et al. 2014)

By searching the literature, we found no review studies on the topic of research relevance in the “entirety” of the SE research field.

Another related work is an SLR on approaches, success factors, and barriers for technology transfer in SE (Brings et al. 2018). As discussed in Section 2.3, research relevance and technology transfer of SE research are closely-related concepts. Among the findings of the SLR was that empirical evidence, maturity, and adaptability of the technology (developed in research settings) seem to be important preconditions for successful transfer, while social and organizational factors seem to be important barriers to successful technology transfer. We could contrast the above factors with the dimensions of research relevance, in which one important factor is focusing on real-world SE problems (Table 4).

2.5 Current state of affairs: SE practice versus research (industry versus academia)

To better understand research relevance, we also need to have a high-level view of the current state of affairs between SE practice versus research (industry versus academia) (Garousi et al. 2016b; Garousi et al. 2017c; Garousi and Felderer 2017). It is without a doubt that the current level of IAC in SE is relatively small compared to the level of activities and collaborations within each of the two communities, i.e., industry-to-industry collaborations and academia-to-academia collaborations. A paper (Moody 2000) in the IS community put this succinctly as follows: “While they deal with the same subject matter, practitioners and researchers mix in their own circles, with very little communication between them”.

While it is not easy to get quantitative data on IAC, we can look at the estimated population of the two communities. According to a report by Evans Data Corporation (Evans Data Corporation 2019), there were about 23 million software developers worldwide in 2018, and that number is estimated to reach 27.7 million by 2023. According to a 2012 IEEE Software paper (Briand 2012), “4000 individuals” are “actively publishing in major [SE] journals”, which can be used as the estimated size (lower bound) of the SE research community. If we divide the two numbers, we can see that, on average, there is roughly one SE academic for every 5750 practicing software engineers, denoting that the size of the SE research community is very small compared to the size of the SE workforce. To better put things in perspective, we visualize the two communities and the current state of collaborations in Fig. 4.
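The back-of-the-envelope ratio above can be reproduced with a few lines of Python. This is only an illustrative sketch: the constant and function names are our own, the inputs are the two estimates quoted in the text (which come from different years), and the second ratio assumes, purely for illustration, that the number of active researchers stays at the 2012 lower-bound figure.

```python
# Estimates quoted in the text; both are rough and stem from different years.
DEVELOPERS_2018 = 23_000_000     # Evans Data Corporation (2019), estimate for 2018
DEVELOPERS_2023 = 27_700_000     # Evans Data Corporation (2019), projection for 2023
ACTIVE_SE_RESEARCHERS = 4_000    # Briand (2012), used as a lower bound


def practitioners_per_researcher(practitioners: int, researchers: int) -> float:
    """Return how many practitioners there are for each researcher."""
    return practitioners / researchers


ratio_2018 = practitioners_per_researcher(DEVELOPERS_2018, ACTIVE_SE_RESEARCHERS)

# Hypothetical scenario (our assumption, not a claim from the sources):
# the researcher count is held constant at the 2012 lower bound.
ratio_2023 = practitioners_per_researcher(DEVELOPERS_2023, ACTIVE_SE_RESEARCHERS)

print(f"2018: about {ratio_2018:.0f} practitioners per SE researcher")
print(f"2023 (researcher count held constant): about {ratio_2023:.0f}")
```

Since 4000 is a lower bound on the size of the research community, the computed ratio is best read as an approximate upper bound on the number of practitioners per researcher, not a precise measurement.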

Inside each of the communities in Fig. 4, there are groups of software engineers working in each company, and SE researchers in each academic institution (university). The bi-directional arrows (edges) in Fig. 4 denote the collaboration and knowledge sharing among practitioners and researchers, inside their companies and universities, and with members of the other community. At the bottom of Fig. 4, we show the knowledge flow in both directions between the two communities. From industry to academia, knowledge flows could occur in various forms, e.g., interviews, opinion surveys, project documents, ethnography, reading GL sources (such as blog posts), and joint collaborative projects. In the other direction, from academia to industry, knowledge flows occur in the form of practitioners reading academic papers, talks by researchers, and joint collaborative projects (“technology transfer”), etc.

While we can see extensive collaborations within each of the two communities (software industry and academia), many believe that collaborations between members of the two communities are much less frequent (Moody 2000). There are few quantitative data sources on the issue of interaction and information flow between the two communities. For example, in a survey of software testing practices in Canada (Garousi and Zhi 2013), a question asked practitioners to rate their frequency of interaction (collaboration) with academics. Based on the data gathered from 246 practitioners, the majority of respondents (56%) mentioned never interacting with researchers in academia, and 32% of the respondents mentioned seldom interacting with them. Those who interacted with researchers once a year or more made up only a small portion of all respondents (12%). Thus, we see that, in general, there is limited interaction, knowledge exchange and information flow between the two communities in SE. Nevertheless, we should clarify that there are multiple communities within academic SE and industry SE, respectively. In such a landscape, some communities might collaborate more than others, and some industry sectors are probably closer to research than others.

The weak connection between industry and academia is also visible from “the difficulties we have to attract industrial participants to our conferences, and the scarcity of papers reporting industrial case studies” (Briand 2011). Two SE researchers who both moved to industry wrote in a blog post (Wilson and Aranda 2019): “While researchers and practitioners may mix and mingle in other specialties, every SE conference seemed to be strongly biased to one side or the other” and “…only a handful of grad students and one or two adventurous faculty attend big industrial conferences like the annual Agile get-together”. On a similar topic, there are insightful stories about moving between industry and academia by people who have made the move, e.g., (King 2019).

Of course, things are not all bad, and there have been many positive efforts to bring software industry and academia closer, e.g., many specific events such as panels have been organized on the topic, such as the following example list:

A panel in ICSE 2000 conference with this title: “Why don’t we get more (self?) respect: the positive impact of SE research upon practice” (Boehm et al. 2000).

A panel in ICSE 2011 conference with this title: “What industry wants from research” (ICSE 2011).

A panel in FSE 2016 conference with this title: “The state of SE research” (FSE 2018).

A panel in ICST 2018 conference with this title: “When are software testing research contributions, real contributions?” (ICST 2018).

While the above panels are good examples of efforts to bring industry and academia closer, they are not examples of successes in terms of evenly-mixed attendance by both practitioners and researchers, since most of their attendees were researchers. While some researchers clearly see the need to pursue discussions on this topic, most practitioners in general do not seem to care about this issue. This opinion was nicely summarized in a recent book chapter by Beecham et al. (Beecham et al. 2018): “While it is clear that industry pays little attention to software engineering research, it is not clear whether this [relevance of SE research] matters to practitioners.”

The “Impact” project (Osterweil et al. 2008), launched by ACM SIGSOFT, aimed to demonstrate the (indirect) impact of SE research through a number of articles by research leaders, e.g., (Emmerich et al. 2007; Rombach et al. 2008). Although some impact can certainly be credited to research, we are not aware of any other engineering discipline trying to demonstrate its impact through such an initiative. This, in itself, is a symptom of a lack of impact as the benefits of engineering research should be self-evident.

In a classic book entitled “Software creativity 2.0” (Glass 2006), Robert Glass dedicated two chapters to “theory versus practice” and “industry versus academe” and presented several examples (which he believes are “disturbing”) on the mismatch of theory and practice. One section of the book focused especially on “Rigor vs. relevance [of research]” (Section 8.8). Another book by Robert Glass was on “Software conflict 2.0: the art and science of software engineering” (Glass and Hunt 2006) in which he also talked about theory versus practice and how far (and disconnected) they are.

Before leaving this section, we want to clarify that Fig. 4 shows a simplified picture, as academic research is not limited to universities. Public and private research institutes as well as corporate research centers publish numerous SE papers. Research institutes focusing on applied science, which may or may not be connected to universities, can help bridge gaps between academia and industry, thus supporting relevant research. The industry connections are often stressed in the corresponding mission statements, e.g., “partner with companies to transform original ideas into innovations that benefit society and strengthen both the German and the European economy” (from the mission statement of the Fraunhofer family of research institutes in Germany), “work for sustainable growth in Sweden by strengthening the competitiveness and capacity for renewal of Swedish industry, as well as promoting the innovative development of society as a whole” (from the mission statement of the Research Institutes of Sweden, RISE), and “connect people and knowledge to create innovations that boost the competitive strength of industry and the well-being of society in a sustainable way” (TNO, the Netherlands). Finally, corporate research centers typically must demonstrate the practical relevance of their research to motivate their existence. Corporate research centers that frequently publish in SE venues include Microsoft Research, ABB Corporate Research, and IBM Research.

3 Research method and setup of the MLR

We discuss in the following the different aspects of the research method used for conducting the MLR.

3.1 Goal and review questions (RQs)

The goal of our MLR is to synthesize the existing literature and debates in the SE community about research relevance. Based on this goal, we raised two review questions (RQs):

RQ 1: What root causes have been reported in the SE community for the relevance problem (lack of research relevance)?

RQ 2: What ideas have been suggested for improving research relevance?

3.2 Deciding between an SLR and an MLR

During the planning phase of our review study, we had to decide whether to conduct an SLR (by only considering peer-reviewed sources) or to also include the gray literature (GL), e.g., blog posts and white papers, and conduct an MLR (Garousi et al. 2019). In our initial literature searches, we found several well-argued GL sources about research relevance in SE, e.g., (Riehle 2019; Murphy 2019; Zeller 2019; Tan and Tang 2019), and it was evident that those sources would be valuable for us when answering the study’s RQs. We therefore decided to include GL in our review study and to conduct an MLR instead of an SLR.

3.3 Overview of the MLR process

MLRs have recently started to appear in SE. According to a literature search (Garousi et al. 2019), the earliest MLR in SE seems to have been published in 2013, on the topic of technical debt (Tom et al. 2013). Since then, further MLRs have been published, e.g., on smells in software test code (Garousi and Küçük 2018), on serious games for software process education (Calderón et al. 2018), and on characterizing DevOps (Franca et al. 2016).

A guideline for conducting MLRs in SE has recently been published (Garousi et al. 2019). It is based on the SLR guidelines proposed by Kitchenham and Charters (Kitchenham and Charters 2007b) and on MLR guidelines in other disciplines, e.g., in medicine (Hopewell et al. 2007) and education sciences (Ogawa and Malen 1991). As noted in (Garousi et al. 2019), certain phases of MLRs are quite different from those of regular SLRs, e.g., searching for and synthesizing gray literature.

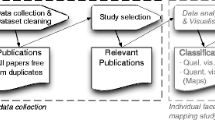

To conduct the current MLR, we used the guideline mentioned above (Garousi et al. 2019) and our recent experience in conducting several MLRs, e.g., (Garousi and Mäntylä 2016a; Garousi et al. 2017b; Garousi and Küçük 2018). We first developed the MLR process as shown in Fig. 5. The authors conducted all the steps as a team.

We present the subsequent phases of the process in the following sub-sections: (phase 2) search process and source selection; (phase 3) development of the classification scheme (map); (phase 4) data extraction and systematic mapping; and finally (phase 5) data synthesis. As we can see, this process has a lot of similarity to the typical SLR processes (Kitchenham and Charters 2007b) and also SM processes (Petersen et al. 2015; Petersen et al. 2008), the major difference being only in the handling of the gray literature, i.e., searching for those sources, applying inclusion/exclusion criteria to them and synthesizing them.

3.4 Source selection and search keywords

As suggested by the MLR guidelines (Garousi et al. 2019), and also as done in several recent MLRs, e.g., (Garousi and Mäntylä 2016a; Garousi et al. 2017b; Garousi and Küçük 2018), we performed the searches for the formal literature (peer-reviewed papers) using the Google Scholar and the Scopus search engines. To search for the related gray literature, we used the regular Google search engine. Our search strings were: (a) Relevance software research; (b) Relevant software research; and (c) utility software research.

Details of our approach to source selection and search keywords were as follows. The authors searched independently with the search strings and, during this search, already applied an inclusion/exclusion criterion: only those results that explicitly addressed the “relevance” of SE research were included.

Typically in SM and SLR studies, a team of researchers includes all the search results in the initial pool and then performs the inclusion/exclusion as a separate step. This results in huge volumes of irrelevant papers. For example, in an SLR (Rafi et al. 2012), the team of researchers started with an initial pool of 24,706 articles, but only 25 of those (about 0.1% of the initial pool) were finally found relevant. This means the researchers had to spend a lot of (unnecessary) effort due to the very relaxed selection and filtering in the first phase. In line with our recent work (Banerjee et al. 2013; Garousi et al. 2015), we performed rigorous initial filtering to guard against including too many irrelevant papers. At the same time, we made sure to include both clearly-related and potentially-related papers in the candidate pool to guard against missing potentially-relevant papers.
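The screening yield quoted above can be checked with a few lines of arithmetic. The following is a minimal sketch; the function name `screening_yield` is our own illustrative choice and does not come from the cited studies.

```python
def screening_yield(initial_pool: int, finally_relevant: int) -> float:
    """Return the share of the initial pool that survived screening, in percent."""
    if initial_pool <= 0:
        raise ValueError("initial_pool must be positive")
    return 100.0 * finally_relevant / initial_pool


# Figures quoted in the text for the SLR by Rafi et al. (2012).
rafi_yield = screening_yield(initial_pool=24_706, finally_relevant=25)
print(f"{rafi_yield:.2f}% of the initial pool was finally found relevant")
```

A yield this low is what motivates filtering for relevance already during the search, rather than in a separate step afterwards.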

To find the relevant gray literature, we utilized the “relevance ranking” of the Google search engine (i.e., the so-called PageRank algorithm) to restrict the search space. For example, applying search string (a) above (“Relevance software research”) to the Google search engine returned 293,000,000 results as of this writing (February 2019), but in our observation, relevant results usually appear only in the first few pages. Thus, similar to what was done in several recent MLRs, e.g., (Garousi and Mäntylä 2016a; Garousi et al. 2017b; Garousi and Küçük 2018), we checked the first 10 pages (i.e., until a search “saturation” effect set in) and continued further only if needed, e.g., when the results on the 10th page still looked relevant.
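The stopping rule described above can be sketched as a small loop. This is an illustration under stated assumptions, not the tooling used in the study: the callback `page_has_relevant_hit` stands in for the authors' manual judgement of a result page, and the safety cap `max_pages` is a hypothetical parameter that the text does not mention.

```python
from typing import Callable


def pages_to_screen(
    page_has_relevant_hit: Callable[[int], bool],
    min_pages: int = 10,
    max_pages: int = 50,  # hypothetical safety cap, not from the paper
) -> int:
    """Return how many result pages end up being screened.

    The first `min_pages` pages are always screened. Screening then
    continues one page at a time for as long as the most recently
    screened page still contained a relevant result.
    """
    screened = min_pages
    while screened < max_pages and page_has_relevant_hit(screened):
        screened += 1
    return screened


# Hypothetical search in which relevant hits dry up after page 3:
# the first 10 pages are screened and the search stops there.
print(pages_to_screen(lambda page: page <= 3))

# Hypothetical search in which page 10 still looks relevant:
# screening continues until a page without relevant hits is reached.
print(pages_to_screen(lambda page: page <= 12))
```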

As a result of the initial search phase, we ended up with an initial pool of 62 sources. To include as many of the relevant sources as possible, we also conducted forward and backward snowballing (Wohlin 2014) for the sources in the formal literature, as recommended by systematic review guidelines, on the set of papers already in the pool. Snowballing, in this context, refers to using the reference list of a paper (backward snowballing) or the citations to the paper (forward snowballing) to identify additional papers (Wohlin 2014). By snowballing, we found and added to the candidate pool 10 additional sources, bringing the pool size to 72 sources. For example, source (Mahaux and Mavin 2013) was found by backward snowballing of (Franch et al. 2017), and source (Pfleeger 1999) was found by backward snowballing of (Beecham et al. 2013).
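One round of backward and forward snowballing can be sketched over a toy citation map. The two backward edges below are the examples given in the text; the entries prefixed with `hypothetical-` and the `is_relevant` predicate are assumptions added purely for illustration, and in the study the relevance judgement was made manually.

```python
from typing import Callable, Dict, List, Set


def snowball_once(
    pool: Set[str],
    cites: Dict[str, List[str]],
    is_relevant: Callable[[str], bool],
) -> Set[str]:
    """Return the new relevant sources found by one snowballing round.

    `cites` maps each paper to the papers in its reference list.
    """
    # Backward snowballing: follow the reference lists of pooled papers.
    backward = {ref for paper in pool for ref in cites.get(paper, [])}
    # Forward snowballing: find papers that cite a pooled paper.
    forward = {
        citing
        for citing, refs in cites.items()
        if any(ref in pool for ref in refs)
    }
    candidates = (backward | forward) - pool
    return {paper for paper in candidates if is_relevant(paper)}


cites = {
    "Franch et al. 2017": ["Mahaux and Mavin 2013"],
    "Beecham et al. 2013": ["Pfleeger 1999"],
    "hypothetical-later-paper": ["Beecham et al. 2013"],
    "hypothetical-off-topic-paper": ["Franch et al. 2017"],
}
pool = {"Franch et al. 2017", "Beecham et al. 2013"}

found = snowball_once(pool, cites, is_relevant=lambda p: "off-topic" not in p)
print(sorted(found))
```

The same bookkeeping gives the pool sizes reported above: 62 initial sources plus 10 found by snowballing yields 72 candidates.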

3.5 Quality assessment of the candidate sources

An important phase of study selection for an SLR (Kitchenham and Charters 2007b) or an MLR study (Garousi et al. 2019) is quality assessment of the candidate sources. Because the topic under study (the relevance of SE research) differs from the topics of “technical” SE papers (e.g., papers in SE topics such as testing), and because we intended to include both peer-reviewed literature and gray literature (GL), we had to develop appropriate quality assessment criteria.

After reviewing the SLR guidelines (Kitchenham and Charters 2007b) and MLR guidelines (Garousi et al. 2019), we established the following quality assessment criteria:

Determine the information each candidate source used as the basis for its argumentation. After reviewing several candidate sources, we found that the argumentation was based on either: (1) the experience or opinions of the authors (opinions were often based on years of experience), or (2) empirical data (e.g., a survey of a pool of programmers about the relevance of SE research, or an analysis of a set of SE papers).

If the source was from the peer-reviewed literature, we assessed the venue in which the paper was published and the research profile of the authors. We used the authors’ citation counts as a proxy for their research strength / expertise.

If the candidate source was from the gray literature (GL), we used a widely-used (Garousi et al. 2019) quality-assessment checklist, named the AACODS (Authority, Accuracy, Coverage, Objectivity, Date, Significance) checklist (Tyndall 2019) for appraising the source.

A given source was ranked higher in quality assessment (as a basis for discussing the relevance of SE research) if it was based on empirical data rather than just experience or opinions. However, when the author(s) of an experience-based or opinion-based source had a high research profile (many citations), we also ranked it high in quality assessment. Also, we found that the authors of almost all the experience/opinion papers in the pool, e.g., (Glass 1996), had substantial experience working in (or with) industry (more details in Section 3.8.2). Thus, they had the qualifications / credibility to offer their experience-based opinions in their papers.
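The ranking rule just described can be written down as a simple predicate. This is only a sketch: the text does not state a numeric cut-off for a “high research profile”, so `HIGH_PROFILE_CITATIONS` is a hypothetical threshold, and the candidate records below are invented for illustration.

```python
from dataclasses import dataclass

# Hypothetical cut-off for a "high research profile"; the paper gives no number.
HIGH_PROFILE_CITATIONS = 1000


@dataclass(frozen=True)
class Candidate:
    name: str
    basis: str  # either "empirical" or "experience/opinion"
    author_citations: int


def ranked_high(candidate: Candidate, threshold: int = HIGH_PROFILE_CITATIONS) -> bool:
    """Rank a source high if it is empirical or its authors are highly cited."""
    return candidate.basis == "empirical" or candidate.author_citations >= threshold


# Invented candidates, for illustration only.
survey = Candidate("hypothetical survey paper", "empirical", 150)
essay = Candidate("hypothetical opinion essay", "experience/opinion", 5000)
note = Candidate("hypothetical short note", "experience/opinion", 40)

for c in (survey, essay, note):
    print(c.name, "->", "high" if ranked_high(c) else "not high")
```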

Also, the venues of the published papers provided indications of their quality. In the candidate pool of 62 sources, we found that four papers were published in IEEE Computer, nine in IEEE Software, two in Communications of the ACM, and two in the Empirical Software Engineering journal, all considered to be among the top-quality SE venues.

As examples, we show in Table 6 the log of data that we derived to support quality assessment of eight example candidate sources (seven papers and one GL source). For the GL source example (a blog post), we determined that all six criteria of the AACODS checklist (authority, accuracy, coverage, objectivity, date, significance) had a value of 1 (true). This determination was justified as follows. The blog-post author is a highly-cited SE researcher (4084 citations as of Feb. 2019, according to Google Scholar). He wrote the post motivated by the following: “I just attended FSE 2016, a leading academic conference on software engineering research”. According to his biography (https://dirkriehle.com/about/half-page-bio), this researcher has also been involved in industrial projects and companies on several occasions.
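The AACODS appraisal of a gray-literature source can be recorded as six true/false values, one per criterion. The sketch below is an assumed representation for illustration; the class and method names are ours and are not part of the AACODS checklist or of the authors' data log.

```python
from dataclasses import astuple, dataclass


@dataclass(frozen=True)
class AacodsAppraisal:
    """One true/false judgement per AACODS criterion."""

    authority: bool
    accuracy: bool
    coverage: bool
    objectivity: bool
    date: bool
    significance: bool

    def score(self) -> int:
        """Count how many of the six criteria are satisfied."""
        return sum(astuple(self))

    def satisfies_all(self) -> bool:
        return self.score() == 6


# The blog-post example in the text: all six criteria had a value of 1 (true).
blog_post = AacodsAppraisal(
    authority=True,
    accuracy=True,
    coverage=True,
    objectivity=True,
    date=True,
    significance=True,
)
print(blog_post.score(), blog_post.satisfies_all())
```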

Three of the candidate papers in Table 6 used empirical data as the basis (data source) for their argumentation. For example, (Ko 2017) used the “participant observation” research method (Easterbrook et al. 2008) to report a three-year experience of software evolution in a software start-up and its implication for SE research relevance. (Parnin and Orso 2011) reported an empirical study with programmers to assess whether automated debugging techniques are actually helping programmers, and then discussed the implication for SE research relevance.

For these eight example sources, we can see that they are all considered to be of high quality, i.e., deserve to be included in the pool of the MLR.

3.6 Inclusion / exclusion criteria

The next stage was devising a list of inclusion/exclusion criteria and applying them via a voting phase. We set the inclusion criterion as follows: The source should clearly focus on and comment about SE research relevance, and should possess satisfactory quality in terms of evidence or experience (as discussed in the previous section).

To assess candidate sources for inclusion/exclusion when their titles (main focus) were not on “relevance” but on one of the related terms (e.g., impact), we used the definition of research relevance from Section 2.1. Any source that did not clearly focus on “relevance” was excluded. During the inclusion/exclusion process, the authors judged the candidate sources on a case-by-case basis. For example, we excluded several papers published out of the ACM SIGSOFT “Impact” project (Osterweil et al. 2008; Emmerich et al. 2007; Rombach et al. 2008), since they had only focused on research impact and had not discussed any substantial material about relevance. As another example, a potentially related source was a blog post in the IEEE Software official blog, entitled “The value of applied research in software engineering” (blog.ieeesoftware.org/2016/09/the-value-of-applied-research-in.html). The post was interesting, but it focused on the value of applied research and impact, and not on “relevance”. Including such sources would not have contributed any data towards answering our two RQs (see Section 3.1), since they had not contributed any direct substance about “relevance”.