Abstract

This study investigated the extent to which self-reported and digital-trace measures of students’ self-regulated learning in blended course designs align with each other, drawing on data from 145 first-year computer science students in a blended “computer systems” course. The self-report Motivated Strategies for Learning Questionnaire was used to measure students’ self-efficacy, intrinsic motivation, test anxiety, and use of self-regulated learning strategies. Frequencies of interactions with six different online learning activities served as digital-trace measures of students’ online learning interactions. Students’ course marks were used to represent their academic performance. SPSS 28 was used to analyse the data. A hierarchical cluster analysis using self-reported measures categorized students as better or poorer self-regulated learners, whereas a hierarchical cluster analysis using digital-trace measures clustered students as more active or less active online learners. One-way ANOVAs showed that (1) better self-regulated learners had higher frequencies of interactions with three of the six online learning activities than poorer self-regulated learners, and (2) more active online learners reported higher self-efficacy, higher intrinsic motivation, and more frequent use of positive self-regulated learning strategies than less active online learners. Furthermore, a cross-tabulation showed a significant (p < .01) but weak association between the student clusters identified by self-reported and digital-trace measures, demonstrating that self-reported and digital-trace descriptions of students’ self-regulated learning experiences were consistent only to a limited extent. To help poorer self-regulated learners improve their learning experiences in blended course designs, teachers may invite better self-regulated learners to share in class how they approach learning.


1 Introduction

The coronavirus pandemic (COVID-19) emergency has required higher education learning and teaching around the world to respond rapidly, in particular by moving even more learning and teaching activities to virtual learning spaces to promote physical distancing. As a result, vast numbers of face-to-face courses have been delivered either as blended courses or as purely online courses (Tang et al., 2021). Learning in online and blended contexts requires students to take a high level of control over, and to regulate, their own learning (Vanslambrouck et al., 2019; Wong et al., 2019). Consequently, an increasing number of studies have examined students’ self-regulated learning in online and blended deliveries (Broadbent & Fuller-Tyszkiewicz, 2018; Sun et al., 2018). These studies have focused on how different aspects of self-regulated learning, such as motivation and emotion, may affect students’ learning processes and learning outcomes (Li et al., 2020). However, they have predominantly employed self-reported measures to examine what and how students learn in online and blended contexts (Han, 2022). With the development of modern technology and learning analytics, online learning management systems (LMSs) are able to record students’ online learning and generate digital-trace data that describe what and how students learn online (Ainley & Patrick, 2006). It is therefore important to examine the extent to which self-reported and digital-trace measures are consistent with each other in describing students’ self-regulated learning, which is the focus of this study.

2 Literature review

2.1 Social-cognitive view of self-regulated learning

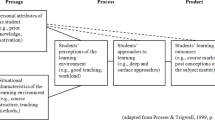

Self-regulated learning describes learners’ cognitively and metacognitively oriented thoughts, feelings, and actions towards the attainment of certain learning goals (Zimmerman & Schunk, 2001). It recognizes the active role played by students and acknowledges the self-directed nature of the learning process, as students are thought to be able to set goals for their learning and to monitor, regulate, and modify their cognition and motivation in order to achieve those goals (Zimmerman, 2000). During the constant monitoring, regulating, and modifying processes, learning takes place through students’ active construction of meaning, which arises from an interaction between their prior knowledge, their own characteristics, and their learning contexts and environments (Pintrich, 2000). Various models have been proposed to describe and conceptualize self-regulated learning (Moos & Stewart, 2013). Some models conceive self-regulated learning as an event-based phenomenon in specific contexts (Azevedo, 2009; Azevedo et al., 2010; Greene & Azevedo, 2010; Järvelä & Hadwin, 2013), whereas others describe the overall process of self-regulated learning (Zimmerman, 2000). Models of self-regulated learning also have different theoretical bases. For instance, Winne and Hadwin’s (1998) model adopts an information processing perspective; the model proposed by McCaslin and Hickey (2001) departs from a sociocultural point of view; and Pintrich’s (2000) model is based on social-cognitive theory. Researchers have also attached importance to different aspects of the self-regulated learning process. Some researchers emphasize metacognitive monitoring and control (Winne, 2001; Winne & Perry, 2000), some focus on goal setting (Boekaerts, 2011), and others pay attention to the cognitive aspect of self-regulated learning (Winne, 1996).

Amongst the different conceptual models of self-regulated learning, Pintrich’s (2000) social-cognitive model comprehensively describes the main process of self-regulated learning and is one of the most widely adopted theoretical frameworks for measuring self-regulated learning (Broadbent & Poon, 2015). This model proposes a triadic reciprocal interaction amongst motivation, the environment, and behaviour, in which self-regulated learning is perceived as a “dynamic and contextually bound” phenomenon rather than a static trait of students (Duncan & McKeachie, 2005, p. 117). It proposes that learners’ motivation, cognition, and self-regulated learning strategies may vary depending on the contextual features of the learning environment and the characteristics of learning tasks (e.g., the nature of the course, its structure, and the learning activities), along with learners’ internal state of mind (e.g., students’ interest and their abilities) (Pintrich & Zusho, 2007). Therefore, the external environment, such as the course design (including the design of the online course site), can either facilitate or hamper self-regulated learning processes (Zimmerman & Schunk, 2011) and may in turn affect academic performance (Broadbent & Poon, 2015). From this perspective, able self-regulated learners are often described as having higher self-efficacy to manage and effectively organize study with a minimum of distractions, feeling less anxious, having appropriate learning goals, and adopting appropriate self-regulated learning strategies (Pajares, 2002). Based on this model, Pintrich and colleagues developed the Motivated Strategies for Learning Questionnaire (MSLQ; Pintrich et al., 1991), which is one of the most frequently adopted instruments for measuring self-regulated learning (Azevedo et al., 2012).

2.2 Research on self-regulated learning using self-reported measures

According to Winne and Perry (2000), self-regulated learning has properties of both an aptitude and an event. The aptitude aspect concerns relatively stable attributes of a person which influence his/her behaviors, whereas the event aspect describes self-regulated learning as occurrences marked by transitions from one state to another along a timeline. Investigations into the aptitude aspect of self-regulated learning have predominantly relied on collecting self-reported data through questionnaires, structured interviews, teacher judgements, or think-aloud methods (Schellings & Van Hout-Wolters, 2011; Weinstein et al., 1987; Winne, 2010). Of these methods, self-reported questionnaires are the most frequently used because they are easy to administer and score (Winne & Perry, 2000). A considerable number of aptitude variables in self-regulated learning have been researched, such as motivation, anxiety, concentration, time management, and strategies (Panadero, 2017).

However, self-reported data have been criticized for lacking objectivity (Matcha et al., 2020; Zhou & Winne, 2012), for being vulnerable to students’ careless answering and item nonresponse (Hitt et al., 2016; Zamarro et al., 2018), and for being unable to represent the complexity and dynamics of students’ self-regulated strategy use in real learning contexts (Zhou & Winne, 2012). To improve insights into students’ self-regulated learning, researchers have suggested using multiple measures and types of data, such as digital-trace data (Vermunt & Donche, 2017), as this type of data may provide “both researchers and practitioners with the opportunity to monitor students’ strategic decisions in online environments in minute detail and in real time” (Richardson, 2017, p. 359).

2.3 Research on self-regulated learning using digital-trace measures

The rich and detailed digital traces of students’ interactions with a variety of online learning resources and activities have the advantage of describing students’ online learning behaviors and strategies more objectively than self-reported measures (Baker & Siemens, 2014; Sclater et al., 2016; Siemens, 2013).

For instance, Jovanović et al. (2017) used 13 weeks of digital-trace data extracted from the LMS to investigate 290 computer science students’ self-regulated online learning strategies. The hierarchical sequence analysis identified five distinct groups of students who differed in their self-regulated learning strategies, namely the intensive students (diverse online learning strategies); the strategic students (focusing on summative and formative assessments); the highly strategic students (focusing on summative assessments and reading materials); the selective group (emphasizing summative assessments without much involvement in reading the course materials); and the highly selective group (predominantly performing summative assessments). The students with different self-regulated learning strategies also differed in their academic performance: the first three groups performed significantly better on both mid-term and final examinations than the other two groups. Despite presenting informative findings on students’ use of self-regulated learning strategies in online learning, this study did not reveal information on learners’ internal state of mind, such as their motivation, learning goals, and anxiety, which are important aspects in social-cognitive perspectives on self-regulated learning.

2.4 Research on self-regulated learning by combining self-reported and digital-trace measures

Recognising the limitations of using either self-reported or digital-trace measures alone to research self-regulated learning, researchers have proposed combining the two, which would enable investigations of students’ self-regulated learning to be more holistic and comprehensive (Gašević et al., 2015; Lockyer et al., 2013; Rienties & Toetenel, 2016). The majority of the existing studies combining self-reported and digital-trace measures examined how combining the two types of measures would improve the power of self-regulated learning measures to explain academic performance (Reimann et al., 2014). For instance, using multiple regression analyses, Pardo et al. (2017) found that students’ reported anxiety in learning and their reported use of self-regulated learning strategies explained only 7% of the variance in students’ academic performance, whereas adding the frequency of students’ interactions with the online learning activities, measured by digital traces, to the regression model raised the explained variance to 32%, an increase of 25 percentage points. Little research, however, has investigated the extent to which the two types of measures are consistent in describing students’ self-regulated learning, which is the aim of the current study.

The current study sought to answer three research questions:

1. Do digital-trace measures of students’ interactions with the online learning activities and academic performance differ by their self-reported self-regulated learning?

2. Do self-regulated learning reported by students and academic performance differ by digital-trace measures of students’ interactions with the online learning activities?

3. Do profiles of students’ self-regulated learning by self-reports and digital traces align with each other?

3 Method

3.1 Participants and the context of research

The participants were 145 computer science undergraduates, of whom 108 were male (74.5%) and 37 were female (25.5%). They were enrolled in a first-year compulsory course, “computer systems”, at an Australian metropolitan university. The course ran for 13 weeks and had four learning aims: 1) to understand the concepts of computer systems; 2) to gain insights into the fundamentals of information technology hardware and software; 3) to distinguish different types of business systems and to conduct basic systems analysis; and 4) to study software development and create simple Java programs. In addition to building content knowledge and skills, the course also aimed to hone students’ generic attributes, such as independent inquiry skills, communication and negotiation abilities, and information and digital literacy. The course was designed as a blended course, which required students not only to attend face-to-face and online learning and teaching but also to study online learning materials and complete activities in a custom-designed LMS (see the Instruments section for the online learning activities).

3.2 Instruments

Three types of data were collected, namely self-reported data, digital-trace data, and academic performance data.

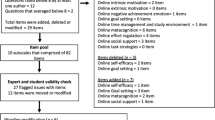

3.2.1 Self-reported data collected by the motivated strategies for learning questionnaire (MSLQ)

The self-reported data were collected using five scales in the MSLQ (Pintrich et al., 1991), namely self-efficacy (8 items), anxiety (4 items), intrinsic motivation (5 items), positive self-regulated learning strategies (4 items), and negative self-regulated learning strategies (3 items). The MSLQ was developed and validated by Pintrich and colleagues (Pintrich et al., 1991). The questionnaire asked students to rate each item on a 7-point scale, with 1 representing “not at all true of me” and 7 indicating “very true of me”. In our study, the Cronbach’s alpha reliability values of all five scales (self-efficacy: α = .89, anxiety: α = .84, intrinsic motivation: α = .85, positive self-regulated learning strategies: α = .68, and negative self-regulated learning strategies: α = .68) were above .65, which indicated acceptable reliability (Griethuijsen et al., 2014).
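For readers unfamiliar with the reliability coefficient reported above, the following is a minimal sketch of the standard Cronbach’s alpha formula, α = k/(k − 1) × (1 − Σ item variances / variance of the total score). It is not the procedure used in the study, which relied on SPSS; the function name and the response matrix are hypothetical and serve only as an illustration.

```python
from statistics import variance

def cronbach_alpha(responses):
    """responses: one row per student, each row a list of k item ratings."""
    k = len(responses[0])
    # Sample variance of each item across students
    item_vars = [variance([row[j] for row in responses]) for j in range(k)]
    # Sample variance of each student's summed scale score
    total_var = variance([sum(row) for row in responses])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)

# Invented 7-point ratings from five students on a 4-item scale (not study data)
toy_responses = [
    [6, 5, 6, 7],
    [3, 4, 3, 3],
    [5, 5, 4, 6],
    [2, 3, 2, 2],
    [7, 6, 6, 7],
]
alpha = cronbach_alpha(toy_responses)
print(f"alpha = {alpha:.2f}")
```

Alpha rises when items covary strongly relative to their individual variances, which is why a short scale of three or four items can fall below the values seen for longer scales.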

3.2.2 Digital-trace data of students’ interactions with the online learning activities

The digital-trace data were collected via the learning analytics function built into a custom-designed LMS, which recorded the frequency of students’ interactions with the online learning activities. There were six types of online learning activities:

- dashboard-view: showed a student’s performance relative to other students in the course.

- collapse-and-expand: involved collapsing and expanding an HTML page.

- resource-view: involved viewing the supplementary course materials.

- video: required learners to watch a video file.

- multiple-choice-question: required learners to complete a multiple-choice question.

- exercise: required students to answer questions in a sequence.

3.2.3 Academic performance

Students’ course marks were used to represent their academic performance. The course mark was an aggregated score of the six assessment tasks, with the highest possible mark being 100. The six assessment tasks were: 1) weekly online exercises (10%); 2) tutorial preparation and participation (10%); 3) written laboratory reports, which included laboratory experiment logs, and problems and solutions of laboratory activities and work (5%); 4) major project (15%); 5) midterm examination (20%); and 6) final examination (40%).
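The aggregation described above amounts to a weighted sum. The sketch below illustrates it under the assumption that each task is scored as a percentage from 0 to 100 before weighting, which the text does not state; the task keys and the example student are hypothetical.

```python
# Weights of the six assessment tasks as listed above (percent of the course mark)
WEIGHTS = {
    "weekly_online_exercises": 10,
    "tutorial_preparation_participation": 10,
    "laboratory_reports": 5,
    "major_project": 15,
    "midterm_examination": 20,
    "final_examination": 40,
}

def course_mark(scores):
    """scores: task name -> percentage score on that task (assumed 0-100 scale)."""
    return sum(WEIGHTS[task] * scores[task] for task in WEIGHTS) / 100

# Invented scores for one student (not study data)
example_scores = {
    "weekly_online_exercises": 80,
    "tutorial_preparation_participation": 90,
    "laboratory_reports": 70,
    "major_project": 60,
    "midterm_examination": 75,
    "final_examination": 65,
}
print(course_mark(example_scores))
```

Because the two examinations carry 60% of the weight, the course mark is driven mainly by examination performance rather than by the weekly online exercises.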

3.3 Data collection procedure

Data collection strictly followed the ethical procedures stipulated by the Human Ethics Committee of the researcher’s university. Before the data collection, we explained to the participants that participation in the study was completely voluntary, and we obtained their written consent to complete the questionnaire and to allow access to the digital traces they left in the LMS and to their course marks. We explained that the data would be used only for research purposes and that their identities would remain anonymous throughout the research. We administered the questionnaire towards the end of the course, which allowed students to reflect upon a relatively full experience of the course.

3.4 Data analysis

Methods for data analyses are presented in Table 1.

4 Results

4.1 Descriptive statistics

The descriptive statistics of self-reported measures, digital-trace measures, and academic performance are presented in Table 2.

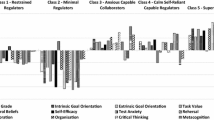

4.2 Results for research question 1 – Differences of digital-trace measures of students’ online learning and academic performance based on self-reported measures

In the hierarchical cluster analysis using self-reported measures, the increasing value of the squared Euclidean distance between the clusters suggested a two-cluster solution: cluster 1 had 62 students whereas cluster 2 had 83 students (Table 3). One-way ANOVAs showed significant differences between the clusters on all the self-reported measures: self-efficacy, anxiety, intrinsic motivation, positive self-regulated learning strategies, and negative self-regulated learning strategies. Specifically, cluster 1 students reported higher self-efficacy, intrinsic motivation, and use of positive self-regulated learning strategies, but lower anxiety and use of negative self-regulated learning strategies, than cluster 2 students. According to these patterns, cluster 1 students were labelled better self-regulated learners and cluster 2 students poorer self-regulated learners.
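The logic of choosing a cluster solution from the growth in squared Euclidean distance can be sketched as follows. This is an illustration only: the analysis in the study was run in SPSS, the linkage rule is not stated in this section, so a Ward-style merge cost is assumed here, and the data points and function names are invented.

```python
from itertools import combinations

def centroid(points):
    return [sum(col) / len(points) for col in zip(*points)]

def sq_euclidean(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def ward_cost(a, b):
    """Increase in within-cluster sum of squares if clusters a and b merge (assumed linkage)."""
    na, nb = len(a), len(b)
    return na * nb / (na + nb) * sq_euclidean(centroid(a), centroid(b))

def agglomerate(points):
    """Merge clusters bottom-up; return a list of (merge cost, clusters after the merge)."""
    clusters = [[p] for p in points]
    history = []
    while len(clusters) > 1:
        # Find the pair of clusters that is cheapest to merge
        i, j = min(combinations(range(len(clusters)), 2),
                   key=lambda ij: ward_cost(clusters[ij[0]], clusters[ij[1]]))
        cost = ward_cost(clusters[i], clusters[j])
        merged = clusters[i] + clusters[j]
        clusters = [c for k, c in enumerate(clusters) if k not in (i, j)] + [merged]
        history.append((cost, [list(c) for c in clusters]))
    return history

def pick_solution(history):
    """Return the clustering that existed just before the largest jump in merge cost."""
    costs = [cost for cost, _ in history]
    jumps = [costs[k] - costs[k - 1] for k in range(1, len(costs))]
    biggest = jumps.index(max(jumps)) + 1  # index of the merge with the largest jump
    return history[biggest - 1][1]

# Invented (self-efficacy, anxiety) scores for six students (not study data)
toy_points = [(6.0, 2.0), (6.5, 2.5), (5.8, 1.8), (3.0, 5.5), (2.5, 6.0), (3.2, 5.0)]
solution = pick_solution(agglomerate(toy_points))
print(len(solution), [len(c) for c in solution])
```

In this toy example the last merge is far more costly than the earlier ones, so the procedure stops at two clusters, mirroring the reasoning behind the two-cluster solution reported above.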

Furthermore, one-way ANOVAs also showed that better and poorer self-regulated learners differed significantly in the frequencies of their interactions with three of the six online learning activities, namely dashboard-view, multiple-choice-question, and exercise. Better self-regulated learners interacted with each of these three activities significantly more frequently than their peers in the poorer self-regulated cluster. Moreover, better self-regulated learners also achieved significantly better academic performance than poorer self-regulated learners.
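The group comparisons above rest on the one-way ANOVA F statistic, the ratio of between-group to within-group mean squares. A minimal sketch of that computation follows; the function name and the interaction counts are invented for illustration, and the study itself used SPSS.

```python
def one_way_anova(*groups):
    """Return (F, df_between, df_within, eta_squared) for independent groups."""
    all_values = [x for g in groups for x in g]
    grand_mean = sum(all_values) / len(all_values)
    # Variation of group means around the grand mean, weighted by group size
    ss_between = sum(len(g) * (sum(g) / len(g) - grand_mean) ** 2 for g in groups)
    # Variation of individual values around their own group mean
    ss_within = sum((x - sum(g) / len(g)) ** 2 for g in groups for x in g)
    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    eta_squared = ss_between / (ss_between + ss_within)
    return f_stat, df_between, df_within, eta_squared

# Invented interaction frequencies for two clusters (not study data)
better = [12, 15, 14, 16, 13]
poorer = [8, 10, 9, 11, 7]
f_stat, df_b, df_w, eta_sq = one_way_anova(better, poorer)
print(f"F({df_b}, {df_w}) = {f_stat:.2f}, eta squared = {eta_sq:.2f}")
```

The p-value is then read from the F distribution with the two degrees of freedom, which a statistics package supplies. With only two groups, as here, the test is equivalent to an independent-samples t-test (F equals t squared).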

4.3 Results for research question 2 – Differences of self-reported measures of students’ self-regulated learning and academic performance based on digital-trace measures

Based on the increasing value of the squared Euclidean distance between the clusters, the hierarchical cluster analysis using digital-trace measures also produced a two-cluster solution: clusters 1 and 2 had 88 and 57 students respectively (Table 4). One-way ANOVAs found significant differences between the two clusters on all digital-trace measures: dashboard-view, collapse-and-expand, resource-view, video, multiple-choice-question, and exercise. Specifically, cluster 1 students had higher frequencies of interactions with all the online learning activities than their peers in cluster 2. Hence, cluster 1 and cluster 2 were referred to as more active and less active online learners respectively.

The one-way ANOVAs further revealed that more active and less active online learners also differed significantly on three of the five self-reported scales: self-efficacy, intrinsic motivation, and positive self-regulated learning strategies. More active online learners reported higher self-efficacy, higher intrinsic motivation, and greater use of positive self-regulated learning strategies than less active online learners. At the same time, the two clusters of students also differed significantly in their academic performance: more active online learners obtained higher course marks than less active online learners.

4.4 Results for research question 3 – Alignment of student profiles by self-reported and digital-trace measures

The results of the cross-tabulation analysis revealed a significant but weak association between the clusters by self-reported and digital-trace measures: χ²(1) = 8.28, p < .01, φ = .24. Table 5 shows that amongst students who self-reported as better self-regulated learners, a significantly higher proportion were more active online learners (74.2%) than less active online learners (25.8%). However, amongst students who self-reported as poorer self-regulated learners, the proportions of more active (50.6%) and less active (49.4%) online learners did not differ.
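The reported statistics can be checked with a short calculation. The cell counts below are reconstructed from the percentages and cluster sizes given in the text (for example, 74.2% of 62 is 46), not copied from Table 5, and the function name is hypothetical. The sketch uses the Pearson chi-square without continuity correction, which is an assumption that happens to reproduce the reported values.

```python
from math import erfc, sqrt

# Rows: better / poorer self-regulated learners (n = 62 and 83)
# Columns: more active / less active online learners (n = 88 and 57)
# Counts reconstructed from the reported percentages (assumption, see lead-in)
observed = [[46, 16],
            [42, 41]]

def chi_square_2x2(table):
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    chi2 = 0.0
    for i, row in enumerate(table):
        for j, obs in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            chi2 += (obs - expected) ** 2 / expected
    p_value = erfc(sqrt(chi2 / 2))  # chi-square survival function, valid for df = 1 only
    phi = sqrt(chi2 / n)            # effect size for a 2 x 2 table
    return chi2, p_value, phi

chi2, p_value, phi = chi_square_2x2(observed)
print(f"chi2(1) = {chi2:.2f}, p = {p_value:.3f}, phi = {phi:.2f}")
```

A φ of .24 corresponds to the two classifications sharing under 6% of their variance (φ² ≈ .057), which is why the association is described as weak despite being statistically significant.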

5 Discussion

5.1 Clustering students based on self-reported or digital-trace measures

We found a certain level of consistency regardless of whether self-reported or digital-trace measures were used to cluster students. The cluster analyses identified groups of students who showed similar self-regulated learning experiences in terms of how they reported their efficacy, anxious feelings, intrinsic goals, and self-regulated learning strategies on the one hand, and how they were observed to engage online and how well they achieved in the course on the other. Within each group, there was coherence between students’ self-reported learning experience and their actual online engagement in the compulsory online part of the course. Students who self-reported having higher efficacy, setting higher intrinsic goals, feeling less anxious, and using more appropriate self-regulated learning strategies in the course were also more likely to be active learners in the online part of the course: they tended to use the dashboard functions to evaluate their learning by comparing it with that of other students, and they interacted with the multiple-choice questions and exercises significantly more frequently than those who reported lower efficacy, lower intrinsic goals, more anxiety, and less desirable self-regulated learning strategies. At the same time, better self-regulated learners as measured by self-reports also scored significantly higher on course performance than poorer self-regulated learners did. Our results are consistent with previous studies, which reported that self-regulated learners also tended to achieve better academic performance (Kizilcec et al., 2013, 2017).

5.2 Weak association between students’ profiles by self-reported and digital-trace measures

Furthermore, although we found a significant association (p < .01) between students’ profiles by self-reported measures and digital-trace measures, the association was rather weak (φ = .24). Of the students who were classified as poorer self-regulated learners on the basis of their reported self-efficacy, intrinsic goals, anxiety, and use of regulatory strategies in learning, the proportions of more active and less active online learners by digital-trace measures were almost the same (50.6% vs. 49.4%). One possible explanation of this finding is that poorer self-regulated learners were less accurate in their self-reporting. A similarly weak alignment was reported by Ye and Pennisi (2022), who found that a substantial proportion of their participants (from 30.8% to 53.9%, depending on whether there were three or two types of self-regulated learning strategies) demonstrated inconsistency between their self-regulated learning strategies as measured by self-reported questionnaires and by digital-trace data. Ye and Pennisi also found that, amongst their participants, better self-regulated learners tended to report their self-regulated learning experience more accurately than poorer self-regulated learners. Indeed, in the interviews with the participants, Ye and Pennisi found that better self-regulated learners were able to identify their weaknesses through self-reflection, an important self-regulatory ability.

Another possible explanation of the weak alignment between students’ profiles by self-reported and digital-trace measures is that the two types of measures captured different aspects of self-regulated learning and could offer complementary information. Past research has demonstrated that including both self-reported measures of students’ self-regulated learning experience (e.g., use of self-regulated learning strategies) and digital-trace measures (e.g., frequency of students’ online learning interactions) explained a significantly larger percentage of variance in academic achievement than using either type of measure alone. For example, Pardo et al. (2017) found that students’ reporting of self-regulated learning predicted only 7% of the variance in their academic achievement, and that adding digital traces of online interactions explained an additional 25% of the variance. Similarly, Li et al. (2020) found that adding digital-trace measures of students’ time management (regulatory learning strategies) to students’ self-reports of how they managed time during the course improved the prediction from 1% to 30% for course grade, and from 1% to 28% for final examination score. In the same study, Li et al. also showed that adding digital traces of students’ effort regulation to the regression analyses increased the predictive power obtained from students’ self-reported effort regulation alone from 2% to 28% for course grade, and from 2% to 24% for final examination score. Viewed together, these past findings and ours suggest that combining self-reported and digital-trace measures is likely to offer a more comprehensive picture of students’ self-regulated learning experience in blended course designs than using either measure alone.

5.3 Implications of the study

The results of the study may benefit not only teachers and students but also institutional learning designers. Identifying the better self-regulated learners early in the course may allow teachers to invite those learners to share their experience, such as how they direct their actions and efforts to achieve their learning goals, so that poorer self-regulated learners can make adjustments to emulate their peers. To encourage students to participate actively in online learning, teachers may consider adopting strategies which aim to orient students to online learning and to the online learning site. For example, teachers may explicitly explain the purposes of different online activities, resources, and materials, and how these are linked with the learning and teaching in the face-to-face lectures. Teachers may also guide students in navigating the online course site and exploring various functions and sections in the LMS.

As our study also showed that digital-trace measures extracted from LMS were able to reflect how students approach online learning activities and tasks differently, it will benefit students if learning designers can improve functions of LMS to embed learning analytic tools which allow students to monitor their own online learning, as currently most learning analytic tools are designed solely for the teaching staff (Viberg et al., 2018). For instance, tools can be built to alert students if their online participation is below the class average. This will remind students with low online participation to catch up.

5.4 Limitations and directions for future research

Some limitations of the study should be taken into consideration when interpreting the results and designing further research in this line of inquiry. First, the major limitation of the study was the use of frequency of interactions with the online learning activities alone to represent students’ online learning interaction. Frequency of online participation is only one of the possible indicators of students’ online learning. Apart from frequency, duration of time spent on different online learning activities and patterns of time-stamped sequences of online learning activities are also possible indicators of students’ online learning (Bannert et al., 2014; Hadwin et al., 2007; Han et al., 2022; Winne et al., 2017). Therefore, future studies should use different types of digital-trace data to assess students’ online learning (Fincham et al., 2019; Jovanović et al., 2017).

Second, digital-trace measures of students’ interactions with the online learning activities reflected students’ learning experience only in the online part of the course, whereas self-reported measures of students’ self-efficacy, intrinsic goals, anxiety, and self-regulated learning strategies were concerned with learning in both the face-to-face and online components of the course. Future research should add more objective measures (other than self-reported measures) of students’ learning experiences in the face-to-face part of blended course designs.

Data availability

The dataset used and analysed during the current study is available from the corresponding author on reasonable request.

References

Ainley, M., & Patrick, L. (2006). Measuring self-regulated learning processes through tracking patterns of student interaction with achievement activities. Educational Psychology Review, 18(3), 267–286. https://doi.org/10.1007/s10648-006-9018-z

Azevedo, R. (2009). Theoretical, methodological, and analytical challenges in the research on metacognition and self-regulation: A commentary. Metacognition and Learning, 4(1), 87–95. https://doi.org/10.1007/s11409-009-9035-7

Azevedo, R., Moos, D. C., Witherspoon, A. M., & Chauncey, A. D. (2010). Measuring cognitive and metacognitive regulatory processes used during hypermedia learning: Theoretical, conceptual, and methodological issues. Educational Psychologist, 45(4), 1–14. https://doi.org/10.1080/00461520.2010.515934

Azevedo, R., Behnagh, R., Duffy, M., Harley, J., & Trevors, G. (2012). Metacognition and self-regulated learning in student-centered learning environments. In S. Land & D. Jonassen (Eds.), Theoretical foundations of student-centered learning environments (pp. 171–197).

Baker, R., & Siemens, G. (2014). Educational data mining and learning analytics. In R. Sawyer (Ed.), The Cambridge handbook of the learning sciences (2nd ed., pp. 253–272). Cambridge University Press.

Bannert, M., Reimann, P., & Sonnenberg, C. (2014). Process mining techniques for analysing patterns and strategies in students’ self-regulated learning. Metacognition and Learning, 9(2), 161–185. https://doi.org/10.1007/s11409-013-9107-6

Boekaerts, M. (2011). Emotions, emotion regulation, and self-regulation of learning. In H. Schunk & B. Zimmerman (Eds.), Handbook of self-regulation of learning and performance (pp. 422–439). NY: Routledge.

Broadbent, J., & Fuller-Tyszkiewicz, M. (2018). Profiles in self-regulated learning and their correlates for online and blended learning students. Educational Technology Research and Development, 66(6), 1435–1455. https://doi.org/10.1007/s11423-018-9595-9

Broadbent, J., & Poon, W. L. (2015). Self-regulated learning strategies and academic performance in online higher education learning environments: A systematic review. The Internet & Higher Education, 27, 1–13. https://doi.org/10.1016/j.iheduc.2015.04.007

Duncan, T. G., & McKeachie, W. J. (2005). The making of the motivated strategies for learning questionnaire. Educational Psychologist, 40(2), 117–128. https://doi.org/10.1207/s15326985ep4002_6

Fincham, E., Gašević, D., Jovanović, J., & Pardo, A. (2019). From study tactics to learning strategies: An analytical method for extracting interpretable representations. IEEE Transactions on Learning Technologies, 12(1), 59–72. https://doi.org/10.1109/TLT.2018.2823317

Gašević, D., Dawson, S., & Siemens, G. (2015). Let’s not forget: Learning analytics are about learning. TechTrends, 59(1), 64–75. https://doi.org/10.1007/s11528-014-0822-x

Greene, J. A., & Azevedo, R. (2010). The measurement of learners’ self-regulated cognitive and metacognitive processes while using computer-based learning environments. Educational Psychologist, 45(4), 203–209. https://doi.org/10.1080/00461520.2010.515935

Griethuijsen, R. A. L. F., Eijck, M. W., Haste, H., Brok, P. J., Skinner, N. C., Mansour, N., et al. (2014). Global patterns in students’ views of science and interest in science. Research in Science Education, 45(4), 581–603. https://doi.org/10.1007/s11165-014-9438-6

Hadwin, A. F., Nesbit, J. C., Jamieson-Noel, D., Code, J., & Winne, P. H. (2007). Examining trace data to explore self-regulated learning. Metacognition and Learning, 2(2–3), 107–124. https://doi.org/10.1007/s11409-007-9016-7

Han, F. (2022). Recent development in university student learning research in blended course designs: Combining theory-driven and data-driven approaches. Frontiers in Psychology. https://doi.org/10.3389/fpsyg.2022.905592

Han, F., Ellis, R. A., & Pardo, A. (2022). The descriptive features and quantitative aspects of students’ observed online learning: How are they related to self-reported perceptions and learning outcomes? IEEE Transactions on Learning Technologies. https://doi.org/10.1109/TLT.2022.3153001

Hitt, C., Trivitt, J., & Cheng, A. (2016). When you say nothing at all: The predictive power of student effort on surveys. Economics of Education Review, 52, 105–119. https://doi.org/10.1016/j.econedurev.2016.02.001

Järvelä, S., & Hadwin, A. F. (2013). New frontiers: Regulating learning in CSCL. Educational Psychologist, 48(1), 25–39. https://doi.org/10.1080/00461520.2012.748006

Jovanović, J., Gašević, D., Pardo, A., Dawson, S., & Mirriahi, N. (2017). Learning analytics to unveil learning strategies in a flipped classroom. The Internet and Higher Education, 33, 74–85. https://doi.org/10.1016/j.iheduc.2017.02.001

Kizilcec, R. F., Piech, C., & Schneider, E. (2013). Deconstructing disengagement: Analyzing learner subpopulations in massive open online courses. In D. Suthers, K. Verbert, E. Duval, & X. Ochoa (Eds.), Proceedings of the third international conference on learning analytics and knowledge, LAK ’13 (pp. 170–179). ACM Press.

Kizilcec, R. F., Pérez-Sanagustín, M., & Maldonado, J. J. (2017). Self-regulated learning strategies predict learner behavior and goal attainment in massive open online courses. Computers & Education, 104, 18–33. https://doi.org/10.1016/j.compedu.2016.10.001

Li, Q., Baker, R., & Warschauer, M. (2020). Using clickstream data to measure, understand, and support self-regulated learning in online courses. The Internet and Higher Education, 45, 100727. https://doi.org/10.1016/j.iheduc.2020.100727

Lockyer, L., Heathcote, E., & Dawson, S. (2013). Informing pedagogical action: Aligning learning analytics with learning design. American Behavioral Scientist, 57(10), 1439–1459. https://doi.org/10.1177/0002764213479367

Matcha, W., Gašević, D., Uzir, N. A. A., Jovanović, J., Pardo, A., Lim, L., ... & Tsai, Y. S. (2020). Analytics of learning strategies: Role of course design and delivery modality. Journal of Learning Analytics, 7(2), 45–71. https://doi.org/10.18608/jla.2020.72.3

McCaslin, M., & Hickey, D. T. (2001). Educational psychology, social constructivism, and educational practice: A case of emergent identity. Educational Psychologist, 36(2), 133–140. https://doi.org/10.1207/S15326985EP3602_8

Moos, D. C., & Stewart, C. A. (2013). Self-regulated learning with hypermedia: Bringing motivation into the conversation. In R. Azevedo & V. Aleven (Eds.), International handbook of metacognition and learning technologies (pp. 683–695). New York, NY: Springer.

Pajares, F. (2002). Gender and perceived self-efficacy in self-regulated learning. Theory Into Practice, 41, 116–125. https://doi.org/10.1207/s15430421tip4102_8

Panadero, E. (2017). A review of self-regulated learning: Six models and four directions for research. Frontiers in Psychology. https://doi.org/10.3389/fpsyg.2017.00422

Pardo, A. P., Han, F., & Ellis, R. A. (2017). Combining university student self-regulated learning indicators and interactions with online learning activities to predict academic performance. IEEE Transactions on Learning Technologies, 10(1), 82–92. https://doi.org/10.1109/TLT.2016.2639508

Pintrich, P. R. (2000). Role of goal orientation in self-regulated learning. In M. Boekaerts, P. Pintrich, & M. Zeidner (Eds.), Handbook of self-regulation (pp. 452–494). Academic Press.

Pintrich, P. R., & Zusho, A. (2007). Student motivation and self-regulated learning in the college classroom. In R. P. Perry & J. C. Smart (Eds.), The scholarship of teaching and learning in higher education: An evidence-based perspective (pp. 731–810). Springer.

Pintrich, P. R., Smith, D. A. F., García, T., & McKeachie, W. J. (1991). A manual for the use of the motivated strategies for learning questionnaire (MSLQ). National Center for Research to Improve Postsecondary Teaching and Learning, University of Michigan.

Reimann, P., Markauskaite, L., & Bannert, M. (2014). e-Research and learning theory: What do sequence and process mining methods contribute? British Journal of Educational Technology, 45(3), 528–540. https://doi.org/10.1111/bjet.12146

Richardson, J. T. (2017). Student learning in higher education: A commentary. Educational Psychology Review, 29(2), 353–362. https://doi.org/10.1007/s10648-017-9410-x

Rienties, B., & Toetenel, L. (2016). The impact of learning design on student behaviour, satisfaction and performance: A cross-institutional comparison across 151 modules. Computers in Human Behavior, 60, 333–341. https://doi.org/10.1016/j.chb.2016.02.074

Schellings, G., & Van Hout-Wolters, B. (2011). Measuring strategy use with self-report instruments: Theoretical and empirical considerations. Metacognition and Learning, 6(2), 83–90. https://doi.org/10.1007/s11409-011-9081-9

Sclater, N., Peasgood, A., & Mullan, J. (2016). Learning analytics in higher education: A review of UK and international practice. London: Joint Information Systems Committee.

Siemens, G. (2013). Learning analytics: The emergence of a discipline. American Behavioral Scientist, 57(10), 1380–1400. https://doi.org/10.1177/000276421349885

Sun, Z., Xie, K., & Anderman, L. H. (2018). The role of self-regulated learning in students' success in flipped undergraduate math courses. The Internet and Higher Education, 36, 41–53. https://doi.org/10.1016/j.iheduc.2017.09.003

Tang, Y. M., Chen, P. C., Law, K. M., Wu, C. H., Lau, Y. Y., Guan, J., et al. (2021). Comparative analysis of Student's live online learning readiness during the coronavirus (COVID-19) pandemic in the higher education sector. Computers & Education, 168, 104211. https://doi.org/10.1016/j.compedu.2021.104211

Vanslambrouck, S., Zhu, C., Pynoo, B., Lombaerts, K., Tondeur, J., & Scherer, R. (2019). A latent profile analysis of adult students’ online self-regulation in blended learning environments. Computers in Human Behavior, 99, 126–136. https://doi.org/10.1016/j.chb.2019.05.021

Vermunt, J. D., & Donche, V. (2017). A learning patterns perspective on student learning in higher education: State of the art and moving forward. Educational Psychology Review, 29, 269–299. https://doi.org/10.1007/s10648-017-9414-6

Viberg, O., Hatakka, M., Bälter, O., & Mavroudi, A. (2018). The current landscape of learning analytics in higher education. Computers in Human Behavior, 89, 98–110. https://doi.org/10.1016/j.chb.2018.07.027

Weinstein, C. E., Palmer, D. R., & Schulte, A. C. (1987). LASSI: Learning and study strategies inventory. H & H Publishing Company.

Winne, P. H. (1996). A metacognitive view of individual differences in self-regulated learning. Learning and Individual Differences, 8(4), 327–353. https://doi.org/10.1016/S1041-6080(96)90022-9

Winne, P. H. (2001). Self-regulated learning viewed from models of information processing. In G. Schraw & J. Impara (Eds.), Self-regulated learning and academic achievement: Theoretical perspectives (2nd ed., pp. 43–97). Buros Institute of Mental Measurements.

Winne, P. H. (2010). Improving measurements of self-regulated learning. Educational Psychologist, 45(4), 267–276. https://doi.org/10.1080/00461520.2010.517150

Winne, P. H., & Hadwin, A. F. (1998). Studying as self-regulated engagement in learning. In D. Hacker, J. Dunlosky, & A. Graesser (Eds.), Metacognition in educational theory and practice (pp. 277–304). Lawrence Erlbaum.

Winne, P. H., Nesbit, J. C., & Popowich, F. (2017). nStudy: A system for researching information problem solving. Technology, Knowledge and Learning, 22, 369–376. https://doi.org/10.1007/s10758-017-9327-y

Winne, P. H., & Perry, N. E. (2000). Measuring self-regulated learning. In M. Boekaerts, P. R. Pintrich, & M. Zeidner (Eds.), Handbook of self-regulation (pp. 531–566). Academic Press.

Wong, J., Baars, M., Davis, D., Van Der Zee, T., Houben, G.-J., & Paas, F. (2019). Supporting self-regulated learning in online learning environments and MOOCs: A systematic review. International Journal of Human-Computer Interaction, 35(4–5), 356–373. https://doi.org/10.1080/10447318.2018.1543084

Ye, D., & Pennisi, S. (2022). Using trace data to enhance students’ self-regulation: A learning analytics perspective. The Internet and Higher Education, 54, 100855. https://doi.org/10.1016/j.iheduc.2022.100855

Zamarro, G., Cheng, A., Shakeel, M. D., & Hitt, C. (2018). Comparing and validating measures of non-cognitive traits: Performance task measures and self-reports from a nationally representative internet panel. Journal of Behavioral and Experimental Economics, 72, 51–60. https://doi.org/10.1016/j.socec.2017.11.005

Zhou, M., & Winne, P. H. (2012). Modeling academic achievement by self-reported versus traced goal orientation. Learning and Instruction, 22, 413–419. https://doi.org/10.1016/j.learninstruc.2012.03.004

Zimmerman, B. J. (2000). Attaining self-regulation: A social cognitive perspective. In M. Boekaerts, P. Pintrich, & M. Zeidner (Eds.), Handbook of self-regulation (pp. 13–39). Academic Press.

Zimmerman, B. J., & Schunk, D. H. (Eds.). (2001). Self-regulated learning and academic performance: Theoretical perspectives. Lawrence Erlbaum Associates.

Zimmerman, B. J., & Schunk, D. H. (Eds.). (2011). Handbook of self-regulation of learning and performance. Routledge.

Acknowledgements

A portion of the study results has been presented at a conference.

Funding

Open Access funding enabled and organized by CAUL and its Member Institutions. This work was supported by the Australian Research Council [grant number DP150104163].

Author information

Contributions

Feifei Han and Robert Ellis have made substantial contributions to the conception and design of the work and to the acquisition, analysis, and interpretation of data; they have drafted the work and substantively revised it.

Ethics declarations

Ethics approval

The questionnaire and methodology for this study were approved by the Human Research Ethics Committee of the researchers’ university.

Consent to participate

Informed consent was obtained from all individual participants included in the study.

Competing interests

The authors declare that they have no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Han, F., Ellis, R.A. Self-reported and digital-trace measures of computer science students’ self-regulated learning in blended course designs. Educ Inf Technol 28, 13253–13268 (2023). https://doi.org/10.1007/s10639-023-11698-5

DOI: https://doi.org/10.1007/s10639-023-11698-5