Abstract

Interpretable machine learning has become a very active area of research due to the rising popularity of machine learning algorithms and their inherently challenging interpretability. Most work in this area has been focused on the interpretation of single features in a model. However, for researchers and practitioners, it is often equally important to quantify the importance or visualize the effect of feature groups. To address this research gap, we provide a comprehensive overview of how existing model-agnostic techniques can be defined for feature groups to assess the grouped feature importance, focusing on permutation-based, refitting, and Shapley-based methods. We also introduce an importance-based sequential procedure that identifies a stable and well-performing combination of features in the grouped feature space. Furthermore, we introduce the combined features effect plot, which is a technique to visualize the effect of a group of features based on a sparse, interpretable linear combination of features. We used simulation studies and real data examples to analyze, compare, and discuss these methods.

Similar content being viewed by others

Explore related subjects

Discover the latest articles, news and stories from top researchers in related subjects.Avoid common mistakes on your manuscript.

1 Introduction

Machine learing (ML) algorithms are nowadays used in many diverse fields e.g. in medicine (Shipp et al. 2002), criminology (Berk et al. 2009), and increasingly in the social sciences (Stachl et al. 2020b; Yarkoni and Westfall 2017). Interpretable models are paramount in many high-stakes settings, such as medical and juridical applications (Lipton 2018). However, well-performing ML models often bear a lack of interpretability. In the context of interpretable ML (IML) research, several model-agnostic methods to produce explanations for single features have been developed (Molnar 2019). Examples include the permutation feature importance (PFI; Fisher et al. 2019), leave-one-covariate out (LOCO) importance (Lei et al. 2018), SHAP values (Lundberg and Lee 2017), or partial dependence plots (PDP; Friedman 2001).

In many applications, it can be more informative to produce explanations for the importance or effect of a group of features (which we refer to as grouped interpretations) rather than for single features. It is important to note that the meaning of grouped interpretations, in general, differs from single feature interpretations, and resulting interpretations are usually not directly comparable (e.g., as Gregorutti et al. (2015) shows for the permutation feature importance). Hence, our aim is not to challenge single feature interpretations as both single and grouped feature interpretation methods measure different things and are useful on their own.

Grouped interpretations might be especially interesting for high-dimensional settings with hundreds or thousands of features. In particular, when analyzing the influence of these features visually (e.g., by plotting the marginal effect of a feature on the target) on a single feature level, this might result in an information overload which might not provide a comprehensive understanding of the learned effects (Molnar et al. 2020b). Furthermore, the runtime of some interpretation methods—such as Shapley values—does not scale linearly in the number of features. Hence, calculating them on a single feature level might not be computationally feasible for high-dimensional settings, making grouped computations a feasible remedy (Lundberg and Lee 2017; Covert et al. 2020; Molnar et al. 2020b).

From a use case perspective, the concept of grouped interpretations is particularly useful when the feature grouping is available a priori based on the application context. In that sense, features that either belong to the same semantic area (e.g., behaviors in psychology or biomarkers in medicine) or are generated by the same mechanism or device (e.g., fMRI, EEG, smartphones) can be grouped together to assess their joint effect or importance. For example, in our application in Sect. 7, we use a real-world use case from psychology that studies how the human behavior on smartphone app usage is associated to different personality traits (Stachl et al. 2020a). Features were extracted from longitudinal data collected from smartphones of 624 participants, and can be grouped into different behavioral classes (i.e., communication and social activity, app-usage, music consumption, overall phone activity, mobility). Another example is applications with sensor data (Chakraborty and Pal 2008), where multiple features measured by a single sensor naturally belong together, and hence grouped interpretations on sensor-level might be more informative.

There are also situations where the interpretation of single features might be misleading and where grouped interpretations can provide a remedy. Examples include datasets with time-lagged or categorical features (e.g., dummy or one-hot encoded categories) and the presence of feature interactions (Gregorutti et al. 2015). A concrete example for dummy encoded categorical features is shown in Appendix A.

Even in situations where feature groups are not naturally given in advance, it still might be beneficial to define groups in a data-driven manner and apply interpretation methods on groups of features (for examples, see Sect. 1.2).

Hence, compared to single feature interpretation methods, the grouping structure must be defined beforehand. A possible process—from group membership definition to modeling up to post-hoc interpretations—is illustrated in Fig. 1. Since defining the underlying group structure is a relevant step in this process, we discuss some applied techniques on how to find groups of features in Sect. 1.2. However, in this paper, we focus on the interpretation component once the groups are known (the green part in Fig. 1).

A possible process from group definition to grouped interpretations. First, the feature groups must be defined. A model is then fitted, typically on the feature space where the information of the pre-defined grouping might be used (e.g., if the fitting process is combined with a feature selection procedure) or ignored. When the best model is found, model-agnostic grouped interpretation methods are applied on the previously defined feature groups. A commonly used approach is to first obtain an overview of which groups are most important for achieving a good model performance (grouped feature importance) to subsequently analyze how the most important feature groups influence the model’s prediction (grouped feature effect) (Color figure online)

Although the grouped feature perspective is relevant in many applications, most IML research has focused on methods that attempt to provide explanations on a single-feature level. Model-agnostic methods for feature groups are rare and not well-studied.

1.1 Real data use cases with grouped features

In the following we summarize further exemplary predictive tasks with pre-specified feature groupings. These tasks will also be used in Sect. 3.4 for further empirical analysis. For more details on features and associated groups see Table 1.

Heat value of fossil fuels In this small scale regression task (\(n = 129\)), the objective is to predict the heat value of fossil fuels from spectral data (Fuchs et al. 2015). In addition to one scalar feature (humidity), the dataset contains two groups of curve data, the first from the ultraviolet-visible spectrum (UVVIS) and the second from the near infrared spectrum (NIR).

Birthweight The birthweight dataset has data on 189 births at the Baystate Medical Centre in Massachusetts during 1986 (Venables and Ripley 2002). The objective is to predict the birth weight in kilograms from a set of 16 features, some of which are grouped (e.g., dummy encoded categorical features).

Colon cancer The colon dataset contains gene expression data of 20 genes (5 basis B-Splines each) for 62 samples from microarray experiments of colon tissue (Alon et al. 1999). The task is to predict cancerous tissue from the resulting 100 predictors.

1.2 Grouping procedures

Following the definitions of He and Yu (2010), we provide a brief overview of different procedures to define feature groups in a knowledge-driven and data-driven manner. In data-driven grouping, an algorithmic approach such as clustering or density estimation is used to define groups of features. Knowledge-driven grouping, on the other hand, uses domain knowledge to define the grouping structure of features. Throughout our paper, we mainly assume a user-defined grouping structure. However, all methods introduced in this paper should also be compatible with an appropriate data-driven method if the defined groups have a meaningful interpretation.

Data-driven grouping

One method to group features in a data-driven manner is to use clustering approaches such as hierarchical clustering (Park et al. 2006; Toloşi and Lengauer 2011; Rapaport et al. 2008) or fuzzy clustering (Jaeger et al. 2003). These approaches often work well in highly correlated feature spaces, such as in genomics or medicine, where correlated features are grouped together so that no relevant information is discarded (Toloşi and Lengauer 2011). For instance, Jaeger et al. (2003) tackles a feature selection problem for a high-dimensional and intercorrelated feature space when working with microarray data. To simultaneously select informative and distinct genes, they first apply fuzzy clustering to obtain groups of similar genes from microarray data. Next, the informative representatives of each group are selected based on a suitable test statistic. The disadvantage of data-driven grouping is that groups depend only on the statistical similarity between features, which might not coincide with domain-specific interpretations (Chakraborty and Pal 2008).

Knowledge-driven grouping

Knowledge-driven group formation has the advantage that the dimensionality reduction might lead to better interpretability than the data-driven path. Gregorutti et al. (2015) apply a knowledge-driven approach in the context of multiple functional data analysis, where they then select groups for subsequent modeling based on their group importance values. Chakraborty and Pal (2008) also select groups of features, where data from one sensor (e.g., to capture satellite images in different spectral bands) represents a group. Hence, features are grouped based on their topical character (e.g., measurement device) rather than their shared statistical properties. Another use case of knowledge-driven grouping is described in Lozano et al. (2009), who group time-lagged features of the same time series for gene expression data. They use the given grouping structure in a group feature selection procedure and apply group LASSO as well as a boosting method.

1.3 Related work

A well-known model that handles groups of features is the group LASSO (Yuan and Lin 2006), which extends the LASSO (Tibshirani 1996) for feature selection based on groups. Moreover, other extensions—e.g., to obtain sparse groups of features (Friedman et al. 2010), to support classification tasks (Meier et al. 2008) or non-linear effects (Gregorova et al. 2018)—also exist. However, group LASSO is a modeling technique that focuses on selecting groups in the feature space rather than quantifying their importance.

A large body of research already exists regarding the importance of individual features (see, e.g., Fisher et al. 2019; Hooker and Mentch 2019; Scholbeck et al. 2020). Hooker and Mentch (2019) distinguish between two loss-based feature importance approaches, namely permutation methods and refitting methods. Permutation methods measure the increase in expected loss (or error) after permuting a feature while the model remains untouched. Refitting methods measure the increase in expected loss after leaving out the feature of interest completely and hence require refitting the model (Lei et al. 2018). Since the model remains untouched in the former approach, interpretations refer to a specific fitted model, while interpretations for refitting methods refer to the underlying ML algorithm. Gregorutti et al. (2015) introduced a model-specific, grouped PFI score for random forests and applied this approach to functional data analysis. Valentin et al. (2020) introduced a model-agnostic grouped version of the model reliance score (Fisher et al. 2019). However, they focus more on the application and omit a detailed theoretical foundation. Recently, a general refitting framework to measure the importance of (groups of) features was introduced by Williamson et al. (2020). In their approach, the feature importance measurement is detached from the model level and defined by an algorithm-agnostic version to measure the intrinsic importance of features. The importance score is defined as the difference between the performance of the full model and the performance based on all features except the group of interest.

Permutation methods can be computed much faster than refitting methods. However, the PFI, for example, has issues when features are correlated and interact in the model due to extrapolation in regions without any or just a few observations (Hooker and Mentch 2019). Hence, interpretations in these regions might be misleading. To avoid this problem, alternatives based on conditional distributions or refitting have been suggested (e.g., Strobl et al. 2008; Nicodemus et al. 2010; Hooker and Mentch 2019; Watson and Wright 2019; Molnar et al. 2020a). Although the so-called conditional PFI provides a solution to this problem, its interpretation is different and “must be interpreted as the additional, unique contribution of a feature given all remaining features we condition on were known” (Molnar et al. 2020a). This property complicates the comparison with non-conditional interpretation methods. Therefore, we do not consider any conditional variants in this paper.

A third class of importance measures is based on Shapley values (Shapley 1953), a theoretical concept of game theory. The SHAP (Lundberg and Lee 2017) approach quantifies the contribution of each feature to the predicted outcome and is a permutation-based method. It has the advantage that contributions of interactions are distributed fairly between features. Besides being computationally more expensive, SHAP itself is based on the model’s predicted outcome rather than the model’s performance (e.g., measured by the model’s expected loss). Casalicchio et al. (2019) extended the concept of Shapley values to fairly distribute the model’s performance among features and called it Shapley Feature IMPortance (SFIMP). A similar approach called SAGE has also been proposed by Covert et al. (2020), who showed the benefits of the method on various simulation studies. One approach that uses Shapley values to explain grouped features was introduced by de Mijolla et al. (2020). However, instead of directly computing Shapley importance on the original feature space, they first apply a semantically-meaningful latent representation (e.g. by projecting the original feature space into a lower dimensional latent variable space using disentangled representations) and compute the Shapley importance on the resulting latent variables. Williamson and Feng (2020) mention that their feature importance method based on Shapley values can also be extended to groups of features. Additionally, Amoukou et al. (2021) investigated grouping approaches for Shapley values in the case of encoded categorical features and subset selection of important features for tree-based methods. The calculation of Shapley values on groups of features based on performance values has only been applied with regard to feature subset selection methods and not for interpretation purposes (Cohen et al. 2005; Tripathi et al. 2020).Footnote 1

After identifying which groups of features are important, the user is often interested in how they (especially the important groups) influence the model’s prediction. Several techniques to visualize single-feature effects exist. These include partial dependence plots (PDP) (Friedman 2001), individual conditional expectation (ICE) curves (Goldstein et al. 2013), SHAP dependence plots (Lundberg et al. 2018), and accumulated local effects (ALE) plots (Apley and Zhu 2019). However, in the case of high-dimensional feature spaces, it is often not feasible to compute, visualize, and interpret single-feature plots for all (important) features. If features are grouped, visualization techniques become computationally more complex, and it may become even harder to visualize the results in an easily interpretable way. In the case of low-dimensional feature spaces, this might still be feasible, for example by using two-feature PDPs or ALE plots. Recently, effect plots that visualize the combined effect of multiple features have been introduced by Seedorff and Brown (2021) and Brenning (2021). They use principal component analysis (PCA) to reduce the dimension of the feature space and calculate marginal effect curves for the principal components. However, the employed dimension reduction method does not include information about the target variable and lacks sparsity (and hence, interpretability).

1.4 Contribution

Our contributions can be summarized as follows: We extend the permutation-based and refitting-based grouped feature importance methods introduced by Valentin et al. (2020) and Williamson et al. (2020) by comparing these methods to not only the full model (i.e., taking into account all features), but also to a null model (i.e., ignoring all features). Hence, we can quantify to what extent a group itself contributes to the prediction of a model without the presence of other groups. Furthermore, we introduce Shapley importance for feature groups and describe how these scores can be decomposed into single-feature importance scores of the respective groups. Our main contributions are: (1) We define a new algorithm to sequentially add groups of features depending on their importance, thereby enabling identification of well-performing combinations of groups. (2) We compare all grouped feature importance methods with respect to the main challenges that arise when applying these methods by creating small simulation examples. Subsequently, we provide recommendations for using and interpreting the respective methods correctly. (3) We introduce a model-agnostic method to visualize the joint effect of a group of features. To that end, we use a suitable dimension reduction technique and the conceptual idea of PDPs to calculate and plot the mean prediction of a sparse group of features with regard to their linear combination. This novel method finally enables the user to visualize effects for groups of features. Finally, we showcase the usefulness of all these methods in real data examples.

The structure of this paper is as follows: First, we provide some general notation and definitions in Sect. 2. We formally define the grouped feature importance methods and introduce the sequential grouped feature importance procedure in Sects. 3 and 4, respectively. We compare these methods for different scenarios in Sect. 5. In Sect. 6, we introduce the combined features effect plot (CFEP) to visualize the effects of feature groups based on a supervised dimension reduction technique. Moreover, we also show the suitability of this technique compared to its unsupervised counterpart in a simulation study. Finally, in Sect. 7, all methods are applied to a real data example before summarizing and offering an outlook for future research in Sect. 8.

2 Background and notation

Analogous to Casalicchio et al. (2019), we use the term feature importance to refer to the influence of features on a model’s predictive performance, which we measure by the expected loss when we perturb these features in a permutation approach or remove these features in a refitting approach.

2.1 General notation

Consider a p-dimensional feature space \({\mathcal {X}} = ({\mathcal {X}}_1 \times \cdots \times {\mathcal {X}}_p)\) and a one-dimensional target space \({\mathcal {Y}}\). The corresponding random variables that are generated from these spaces are denoted by \( X = ( X_1, \ldots , X_p)\) and Y. We denote a ML prediction function that maps the p-dimensional feature space to a one-dimensional target space by \({\hat{f}} : {\mathcal {X}} \rightarrow {\mathbb {R}}\) for regression tasks.Footnote 2 ML algorithms try to learn this functional relationship using \(n \in {\mathbb {N}}\) i.i.d. observations drawn from the joint space \({\mathcal {X}} \times {\mathcal {Y}}\) with unknown probability distribution \({\mathcal {P}}\). The resulting dataset is denoted by \({\mathcal {D}} = \{({\textbf{x}}^{(i)}, y^{(i)})\}_{i = 1}^n\), where the vector \({\textbf{x}}^{(i)} = (x_1^{(i)}, \ldots , x_p^{(i)})^\intercal \in {\mathcal {X}}\) is the i-th observation associated with the target variable \(y^{(i)} \in {\mathcal {Y}}\). The j-th feature is denoted by \({\textbf{x}}_j = (x_j^{(1)}, \ldots , x_j^{(n)})^\intercal \), for \(j = 1, \ldots , p\). The dataset \({\mathcal {D}}\) can also be written in matrix form:

The general error measure \(\rho ({\hat{f}}, {\mathcal {P}}) = {\mathbb {E}}(L({\hat{f}} (X), Y))\) of a learned model \({\hat{f}}\) is measured by a loss function L on test data drawn independently from \({\mathcal {P}}\) and can be estimated using unseen test data \({\mathcal {D}}_{\text {test}}\) by

The application of an ML algorithm (or learner) \({\mathcal {I}}\) to a given dataset \({\mathcal {D}}\) results in a fitted model \({\mathcal {I}}({\mathcal {D}}) = {\hat{f}}_{{\mathcal {D}}}\). The expected generalization error of a learner \({\mathcal {I}}\) takes into account the variability introduced by sampling different datasets \({\mathcal {D}}\) of equal size n from \({\mathcal {P}}\) and is defined by

In practice, resampling techniques such as cross-validation or bootstrapping on the available dataset \({\mathcal {D}}\) are used to estimate Eq. (3). Resampling techniques usually split the dataset \({\mathcal {D}}\) into \(k \in {\mathbb {N}}\) training datasets \({\mathcal {D}}_{\text {train}}^i\), \(i = 1,\ldots ,k\), of roughly the same size \(n_{\text {train}} < n\). Eq. (3) can be estimated by

In the following, we often associate the set of numbers \(\{1, \ldots , p\}\) in a one-to-one manner with the features \({\textbf{x}}_1, \ldots , {\textbf{x}}_p\) by referring a number \(j \in \{1, \ldots ,p\}\) as feature \(x_j\). We call \(G \subset \{1, \ldots , p\}\) a group of features.

2.2 Permutation feature importance (PFI)

Fisher et al. (2019) proposed a model-agnostic version of the PFI measure used in random forests (Breiman 2001). The PFI score of the \(j-\)th feature of a fitted model \({\hat{f}}\) is defined as the increase in expected loss after permuting feature \(X_{j}\):

Here, \(X_{[j]} = (X_1, \ldots , X_{j-1}, {\tilde{X}}_j, X_{j + 1}, \ldots , X_p)\) is the p-dimensional random variable vector of features, where \({\tilde{X}}_j\) is an independent replication of \(X_j\) following the same distribution. The idea behind this method is to break the association between the \(j-\)th feature and the target variable by permuting its feature values. If a feature is not useful for predicting an outcome, changing its values by permutation will not increase the expected loss.Footnote 3 For an accurate estimation of Eq. (5), we would need to calculate all possible permutation vectors over the index set \(\{1, \ldots , n\}\) (see Casalicchio et al. (2019) for an in-depth discussion on this topic). However, Eq. (5) can be approximated on a dataset \({\mathcal {D}}\) with n observations by Monte Carlo integration using m random permutations:

where \(\tau _k\) is a random permutation vector of the index set \(\{1, \ldots , n\}\) for \(k = 1,\ldots ,m\) permutations.Footnote 4

Equation (6) could also be embedded into a resampling technique, where the permutation is always applied on the held-out test set of each resampling iteration (Fisher et al. 2019). However, this leads to refits and is computationally more expensive. The resulting resampling-based PFI of a learner \({\mathcal {I}}\) is estimated by

where the permutation strategy is applied on the test sets \({\mathcal {D}}_{\text {test}}^i\).

3 Feature importance for groups

In our first minor contribution, we provide a general notation and formal definitions for grouped permutation and refitting methods and explain them by answering the following questions:

-

(a)

To what extent does a group of features contribute to the model’s performance in the presence of other groups?

-

(b)

To what extent does a group itself increase the expected loss if it is added to a null model like the mean prediction of the target for refitting methods?

-

(c)

How can we fairly distribute the expected loss among all groups and all features within a group?

The definitions of all grouped feature importance scores are based on loss functions. They are defined in such a way that important groups will yield positive grouped feature importance scores. The question of how to interpret the differing results of these methods is addressed in Sect. 5.

3.1 Permutation methods

Here, we extend the existing definition of PFI to groups of features and introduce the GPFI (Grouped Permutation Feature Importance) and GOPFI (Group Only Permutation Feature Importance) scores. For ease of notation, we will only define these scores for a fitted model \(\hat{f}\) (see Eq. 5).

3.1.1 Grouped permutation feature importance (GPFI)

For the definition of GPFI—which is based on the definitions of Gregorutti et al. (2015) and Valentin et al. (2020)—let \(G \subset \{1, \ldots , p\}\) be a group of features. Let \({\tilde{X}}_{G} = ({\tilde{X}}_j)_{j \in G}\) be a \(\vert G\vert \)-dimensional random vector of features, which is an independent replication of \(X_{G} = (X_j)_{j \in G}\) following the same joint distribution. This random vector is independent of both the target variable and the random vector of the remaining features, which we define by \(X_{-G} := (X_j)_{j \in \{1, \ldots , p\} \backslash G}\). With slight abuse of notation to index the feature groups included in G, we define the grouped permutation feature importance of G as

Equation (8) extends Eq. (5) to groups of features so that the interpretation of GPFI scores always refers to the importance when the feature values of the group defined by G are permuted jointly (i.e., without destroying the dependencies of the features within the group). Similar to Eq. (7), the grouped permutation feature importance can be estimated by Monte Carlo integration:

The GPFI measures the contribution of one group to the model’s performance if all other groups are present in the model (see (a) from Sect. 3).

3.1.2 Group only permutation feature importance (GOPFI)

To evaluate the extent to which a group itself contributes to a model’s performance (see (b) from Sect. 3), one can also use a slightly different measure. As an alternative to Eq. 9, we can compare the expected loss after permuting all features jointly with the expected loss after permuting all features except the considered group. We define this GOPFI for a group \(G \subset \{1, ..., p\}\) as

which can be approximated by

While the relevance of GOPFI as an importance measure might be limited, it is technically useful for the grouped Shapley importance (see Eq. 14).

3.2 Refitting methods

Here, we introduce two refitting-based methods for groups of features. The first definition is similar to the one introduced in Williamson et al. (2020).

3.2.1 Leave-one-group-out importance (LOGO)

For a subset \(G \subset \{1, \ldots , p\}\), we define the reduced dataset \(\tilde{{\mathcal {D}}} := \{({\textbf{x}}_{-G}^{(i)}, y^{(i)})\}_{i = 1}^n\). Given a learner \({\mathcal {I}}\), which generates models \({\mathcal {I}}({\mathcal {D}}) = {\hat{f}}_{{\mathcal {D}}}\) and \({\mathcal {I}}(\tilde{{\mathcal {D}}}) = {\hat{f}}_{\tilde{{\mathcal {D}}}}\), we define the Leave-One-Group-Out Importance (LOGO) as

The LOGO can be estimated by using a learner \({\mathcal {I}}\) on \(\tilde{{\mathcal {D}}}\) and should be embedded in a resampling technique:

Consequently, we compare the increase in expected loss compared to the full model’s expected loss when leaving out a group of features and performing a refit (see (a) from Sect. 3).

While GPFI can be calculated with a resampling-based strategy by using refits to receive the algorithm-based instead of model-based GPFI, the meaning still varies from LOGO. For the algorithm-based GPFI, we calculate for each fitted model the importance score by permuting the regarded group and predicting with the same model. Then we average over all models from our resampling strategy and receive an importance score, which tells us how important a group of features is for some learner \({\mathcal {I}}\) when we break the association between this group and all other groups and the target. LOGO, on the other hand, leaves the group out and then performs the refit to calculate the importance of the group, and hence, it addresses the question: Can we remove this group from our dataset without reducing our model’s performance? This is not answered by permutation-based methods.

3.2.2 Leave-one-group-in importance (LOGI)

While it may be too limiting to estimate the performance of a model based on one feature only, it can be informative to determine the extent to which a group of features (e.g., all measurements from a specific medical device) can reduce the expected loss in contrast to a null model (see (b) from Sect. 3). The Leave-One-Group-In (LOGI) method could be particularly helpful in settings where information on additional groups of measures will induce significant costs (e.g., adding functional imaging data for a diagnosis) and/or limited resources are available (e.g., in order to be cost-covering, only one group of measures can be acquired). The LOGI method can also be useful for theory development in the natural and social sciences (e.g., which group of behaviors is most predictive by itself).

Let \({\mathcal {I}}_{\text {null}}\) be a null algorithm, which results in a null model \({\hat{f}}_{\text {null}}\) that only guesses the mean (or majority class for classification) of the target variable for any dataset. We additionally define a learner \({\mathcal {I}}\), which generates a model \({\mathcal {I}}(\mathring{{\mathcal {D}}}) = {\hat{f}}_{\mathring{{\mathcal {D}}}}\) for a dataset \(\mathring{{\mathcal {D}}} := \{({\textbf{x}}_{G}^{(i)}, y^{(i)})\}_{i = 1}^n\), which only contains features defined by \(G \subset \{1, \ldots , p\}\). We define the LOGI of a group G as

The LOGI can be estimated by using a learner \({\mathcal {I}}\) on \(\mathring{{\mathcal {D}}} = \{({\textbf{x}}_{G}^{(i)}, {\textbf{y}}^{(i)})\}_{i = 1}^n\) and should be embedded in a resampling technique:

3.3 Grouped Shapley importance (GSI)

The importance measures defined above either exclude (or permute) individual groups of features from the total set of features or consider only the importance of groups by omitting (or permuting) all other features. The grouped importance scores are usually not affected if interactions within the groups are present. However, they can be affected if features from different groups interact, since permuting a group of features jointly destroys any interactions with other features outside the considered group. Therefore, we define the grouped Shapley importance (GSI) based on Shapley values (Shapley 1953). GSI scores account for feature interactions, as they measure the average contribution of a given group to all possible combinations of groups and fairly distribute the importance value caused by interactions among all groups (see (c) from Sect. 3).

We assume a set of distinct groups \({\mathcal {G}} = \{G_1, \ldots , G_l\}\), with \(G_i \subset \{1, \ldots , p\}\), for \(i = 1,\ldots ,l\). In our grouped feature context, the value function \(v:{\mathcal {P}}({\mathcal {G}}) \longrightarrow {\mathbb {R}}\) assigns a “payout” to each possible group or combination of groups included in \({\mathcal {G}}\). With slight abuse of notation, we define the value function for a subset \(S \subset {\mathcal {G}}\) as

We define the value function for a group \(G \in {\mathcal {G}}\) calculated by a refitting or a permutation method by

respectively. The marginal contribution of a group \(G \in {\mathcal {G}}\), with \(S \subset {\mathcal {G}}\) is

The GSI of the feature group G is then defined as

which is a weighted average of marginal contributions to all possible combinations of groups.

The GSI cannot always be calculated in a time-efficient way, because the number of coalitions \(S \subset {\mathcal {G}} \backslash G\) can become large very quickly. In practice, the Shapley value is often approximated (Casalicchio et al. 2019; Covert et al. 2020) by drawing \(M \le \vert {\mathcal {G}}\vert !\) different coalitions \(S \subset {\mathcal {G}} \backslash G\) and averaging the marginal, weighted contributions:

with \(S_m \subset {\mathcal {G}} \backslash G\), for all \(m= 1, \ldots , M\).

The GSI can in general not be exactly decomposed into the sum of the Shapley importances for single features of the regarded group. In Appendix B, we show that the remainder term \(R = \phi (G) - \sum _{i \in G} \phi (x_i)\) depends only on higher-order interaction effects between features of the regarded group and features of other groups. Hence, if one is interested in which features contributed most within a group, the Shapley importances for single features can be calculated, which provide a fair distribution of feature interactions within the group but not necessarily of feature interactions across groups. However, the remainder term can be used as a quantification of learned higher-order interaction effects between features of different groups.

While the GSI can be calculated with permutation- as well as refitting-based approaches, we will only apply the permutation-based approach in the upcoming simulation studies and the real-world example.

3.4 Real world use cases

For each dataset from Sect. 1.1, we fitted a random forest and summarized the three most important groups according to different grouped feature importance methods. For the importance scores of LOGI and LOGO, we used a 10-fold cross-validation (Table 2).

For the birthweight task, the feature lwt (mother’s weight) was the most important group to predict the birthweight for all grouped feature importance methods except for LOGI. While all methods except LOGI also agree on the second most important group ui (presence of uterine irritability), feature groups differ for the third rank. However, this may also be due to statistical variability, as the importance values become very small. It is interesting that lwt, despite being the most important group for all other scores, is not very important in terms of LOGI. Thus, lwt is less important as a stand-alone group, but appears important if the other feature groups are included in the model.

In the colon task, the feature group gene14 is by far the most important group to predict cancerous tissue for all grouped feature important methods. However, there are variations in the second and third most important groups.

For the fuelsubset task, the permutation-based grouped importance methods (GPFI, GOPFI and GSI) show the same importance ranking for the three most important feature groups. However, for the refitting-based grouped importance methods (LOGI and LOGO), we can observe interesting differences. The features from the UVVIS group are important as a stand-alone group as can be seen by their positive LOGI score. However, the negative LOGO score of the UVVIS group indicates that the algorithm seems to perform better with only the NIR and H2O groups.

GPFI, GOPFI and GSI provide importance scores for feature groups of a given trained model without the necessity to refit the model. In contrast, LOGI and LOGO provide grouped importance scores based on the underlying algorithm and should always be considered together.

4 Sequential grouped feature importance

In general, feature groups do not necessarily have to be distinct or independent of each other. When groups partly contain the same or highly correlated features, we may obtain high grouped feature importance scores for similar groups. This can lead to misleading conclusions regarding the importance of groups. Quantifying the importance of different combinations of groups is especially relevant in applications where extra costs are associated with using additional features from other data sources. In this case, one might be interested in the sparest, yet most important combination of groups or in understanding the interplay of different combinations of groups. Hence, in practical settings, it is often important to decide which additional group of features to make available (e.g., buy or implement) for modeling and how groups should be prioritized under economic considerations.

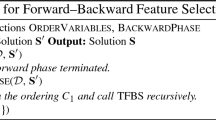

Gregorutti et al. (2015) introduced a method called grouped variable selection, which is an adaptation of the recursive feature elimination algorithm from Guyon et al. (2002) and uses permutation-based grouped feature importance scores for the selection of feature groups. In Algorithm 1, we introduce a sequential procedure that is based on the idea of stability selection (Meinshausen and Bühlmann 2010). The procedure primarily aims at understanding the interplay of different combinations of groups by analyzing how the importance scores change after including other groups in a sequential manner. The feature groups must be pre-specified by the user. We prefer a refitting-based over a permutation-based grouped feature importance score when the secondary goal is to find well-performing combinations of groups. Here, the fundamental idea is to start with an empty set of features and to sequentially add the next best group in terms of LOGI until no further substantial improvement can be achieved. Our sequential procedure is based on a greedy forward search and creates an implicit ranking by showing the order in which feature groups are added to the model. To account for the variability introduced by the model, we propose to use repeated subsampling or bootstrap with sufficient repetitions (e.g., 100 repetitions).

To better understand Algorithm 1, we will demonstrate it with a small example with four groups \({\mathcal {G}} = \{G_1, G_2, G_3, G_4\}\) here. As a reminder, each group is a subset of \(\{1, \ldots , p\}\), and we want to find a subset \(B \subset \{1, \ldots , p\}\), which consists of the union of groups in \({\mathcal {G}}\). The subset B is found by our sequential grouped feature importance procedure. To account for variability, the whole dataset is split into two sets (training and test set) repeatedly so that the train-test splits are different in each repetition of the resampling strategy (bootstrap or subsampling). For each training set, Algorithm 1 starts with an empty set \(B= \emptyset \) (line 2, Algorithm 1). In line 5 of Algorithm 1, the candidate set \({\mathcal {B}} \subset {\mathcal {P}}({\mathcal {G}})\) is defined as all subsets of the power set with cardinality 1. These are all individual groups \({\mathcal {B}} = \{\{G_1\}, \{G_2\}, \{G_3\}, \{G_4\}\}\). The LOGI score of each single group is then calculated. In our example, let \(G_1\) have the highest LOGI score, which also exceeds the threshold \(\delta \). The desired combination B is preliminarily defined as \(G_1\) (line 8), and for the comparison in the next step, the LOGI score of \(G_1\) is defined as \(L_{0}\) (line 9). Then, a new candidate set \({\mathcal {B}}\) is defined (line 11), which consists of all subsets of the power set of \({\mathcal {G}}\) of size i (at this step, we have \(i = 2\)), where \(B = G_1\) is also a subset of \({\mathcal {B}}\). Hence, \({\mathcal {B}} := \left\{ \{G_1, G_2\}, \{G_1, G_3\}, \{ G_1, G_4\} \right\} \). The LOGI score of elements of \({\mathcal {B}}\) is calculated as the LOGI score of the union of all subsets. Now, let \(\widehat{LOGI}(G_1 \cup G3)\) have the highest score. This score is compared to the LOGI score of the previous iteration \(L_{0}\) (line 13). Let the difference exceed the threshold \(\delta \) for our example. In line 14 and 15, the desired combination B is now defined as \(G_1 \cup G_3\) and the LOGI score is again defined as \(L_1\). Algorithm 1 now jumps to line 10 again with \(i = 3\). The candidate set is now \({\mathcal {B}} = \left\{ \{G_1, G_3, G_2\}, \{G_1, G_3, G_4\}\right\} \) (line 11). The LOGI scores are now calculated again for each element of \({\mathcal {B}}\). Let no LOGI score exceed \(L_0\) by the threshold \(\delta \) (line 13). Algorithm 1 now ends for this dataset split and returns \(B = G_1 \cup G_3\) as the best combination. This procedure is repeated for each train-test split in each repetition.

Since the order in which feature groups are added is also known, alluvial charts (Allaire et al. 2017) can be created for visualization purposes (see Figs. 2 and 10). In these charts, we included the number of times feature groups were added as well as the performance on the test datasets. These charts show how frequently a group was selected given that another group was already included and thereby highlight robust combinations of groups.

5 Comparison of grouped feature importance methods

After introducing the methodological background of the different loss-based grouped feature importance measures in Sect. 3, we will now compare them in different simulation settings. We analyze the impact on all methods for settings where (1) groups are dependent, (2) correlations within groups vary, and (3) group sizes differ.

5.1 Dependencies between groups and sparsity

In this section, we compare refitting- and permutation-based grouped feature importance methods and show how different dependencies between groups can influence the importance scores. We demonstrate the benefits of the sequential grouped feature importance procedure and conclude with a recommendation of when to use refitting or permutation-based methods depending on the use-case.

We simulate a data matrix \({\textbf{X}}\) with \(n = 1000\) instances and 3 groups \(G_1, G_2, G_3\), with each of them containing 10 normally distributed features. Features are simulated in such a way that features within each group are highly correlated. However, features in \(G_3\) are independent of features in \(G_1\) and \(G_2\), while features in \(G_1\) and \(G_2\) are also highly correlated with each other. To generate normally distributed features with such correlation patterns, we follow the approach of Toloşi and Lengauer (2011) and use prototype vectors in the following way: (1) We draw n instances of the prototype vector \({\textbf{U}} \sim {\mathcal {N}} (0,1)\). (2) We generate features in \(G_1\) by adding a normally distributed error term \(\epsilon \sim {\mathcal {N}}(0, 0.5)\) to 10% of the instances of the prototype vector \({\textbf{U}}\). (3) Features in \(G_2\) are generated by copying features of \(G_1\) and adding a small normally distributed error term \(\epsilon \sim {\mathcal {N}}(0, 0.01)\) to the copied features. It follows that features within \(G_1\) and \(G_2\) as well as features between the two groups are highly correlated. (4) We generate a new prototype vector \({\textbf{V}}\), which is independent of \({\textbf{U}}\). (5) We generate features for \(G_3\) in the same way as done for \(G_1\) in step (2) but with the prototype vector \({\textbf{V}}\).

The target vector \({\textbf{Y}}\) is generated by \({\textbf{Y}} = 2{\textbf{U}} + 1{\textbf{V}} + \epsilon \), with \(\epsilon \sim {\mathcal {N}}(0, 0.1)\). We fitted a support vector machine with a radial basis function kernelFootnote 5, as an example of a black-box algorithm.

The results in Table 3 show that there can be major differences depending on how the grouped feature importance is calculated. Permutation methods (GOPFI & GPFI & GSI) reflect the importance of the groups based on a model trained on a fixed dataset. In contrast, refitting methods (LOGI & LOGO) retrain the model on a reduced dataset and can therefore learn new relationships. Looking at the results from the permutation methods, we can see that the groups \(G_1\) and \(G_2\) are approximately equally important while both being more important than \(G_3\). However, the results from the refitting methods can reveal some interesting relationships between the groups. The refitting methods highlight that \(G_1\) and \(G_2\) are more or less interchangeable if we only consider a performance-based interpretation (which might not coincide with a domain-specific perspective)Footnote 6. Hence, the two groups do not complement each other. This is reflected by the near-zero LOGO scores, which indicate that leaving each group out of the full model does not considerably change the model’s expected loss.

Sequential grouped feature importance for the simulation in Sect. 5.1. 100 times repeated subsampling. Improvement threshold \(\delta = 0.001\). Vertical bars show one step of the sequential procedure (left to right). Height of the vertical bars represent the number of subsampling iterations that a combination of groups was chosen. MSE scores show predictive performance. Streams represent the addition of a group

Figure 2 illustrates the results of the sequential procedure introduced in Algorithm 1. We see that across 100 subsampling iterations, \(G_1\) was chosen 46 times as the most important first group, and \(G_2\) was chosen 54 times with similar predictive performance for both groups, while \(G_3\) was never chosen as the first most important group. Hence, similar to LOGI, we can see that if only one group can be chosen, it would either be \(G_1\) or \(G_2\) with approximately the same probability. In the second step, the group \(G_3\) was added in all cases to either \(G_1\) or \(G_2\) (depending on which group had been chosen in the first step). This step resulted in an on-average drop in the MSE score from 1.2 to 0.2. In only a few cases (15 out of 100), the final addition of either \(G_1\) or \(G_2\) to a full model in step 3 exceeded the very low chosen threshold of \(\delta = 0.001\). This rather unlikely improvement is represented by the proportionally narrower band that connects the second and the third step (dark gray bars) in the chart in Fig. 2. This reveals that these two groups are—from a performance or loss perspective—rather interchangeable and do not benefit from one another.

The choice between using permutation-based or refitting-based grouped feature importance methods might depend on the number of groups and correlation strength between the different groups. If feature groups are distinct and features between the groups are almost uncorrelated, we might prefer permutation over refitting methods due to lower computation time. In cases where groups are correlated with each other (e.g., because some features belong to multiple groups), refitting methods might be preferable, as they are not misleading in correlated settings. Since the number of groups is usually smaller than the number of features in a dataset, refitting methods for groups of features could become a viable choice. Furthermore, with the sequential grouped feature importance procedure, it is possible to find sparse and well performing combinations of groups in an interpretable manner. Thus, this approach helps to better understand which groups of features were important (e.g., as they were more frequently selected) given that certain groups were already selected.

5.2 Varying correlations within groups

In many use cases, it is quite common to group similar (and therefore, often correlated) features together, while groups of features may be almost independent of each other. However, compared to Sect. 5.1, correlations of features within groups might differ. We created a data matrix \({\textbf{X}}\) with \(n = 1000\) instances and 4 groups \(G_1\), \(G_2\), \(G_3\), and \(G_4\), with each of these groups containing 10 normally distributed features. Using fivefold cross-validation, we fitted a random forest with 2000 trees and a support vector regression with a radial basis function kernel.Footnote 7 The univariate target vector \({\textbf{Y}}\) is defined as follows:

with

and \(\epsilon {\mathop {\sim }\limits ^{iid}} N(0,1)\). The \(i-\)th feature of the j-th group is denoted by \({\textbf{X}}_{G_j, i}\). We repeated the simulation 500 times.

It follows that \(G_1\), \(G_2\), and \(G_3\) have the same influence on the target variable, while \(G_4\) has no influence on \({\textbf{Y}}\). We generate the feature space \({\textbf{X}}\)—similar to the approach in Sect. 5.1—as follows: (1) For each feature group j, we generate a prototype vector \({\textbf{U}}_j \sim {\mathcal {N}} (0,1)\) with n instances. (2) We generate the features of a group \(G_j\) by altering a proportion \(\alpha \) with \(0 \le \alpha \le 1\) of the n instances of \({\textbf{U}}_j\). We alter these instances by taking a weighted average between the respective values of \({\textbf{U}}_j\) (20%) and a standard normally distributed random variable \({\textbf{W}}_i\) (80%). For the results shown in Fig. 3, we set \(\alpha \) to 0.1 for all features within the same group. Hence, correlations within groups are the same (around \(90\%\)) for all groups, while groups themselves are independent of each other. The plots show that all methods correctly attribute the same importance to the first three groups, while the fourth group is not important for predicting \({\textbf{Y}}\). The lower plots in Fig. 3, on the other hand, correlations within groups vary across groups. The altering proportion parameter \(\alpha \) is set to 0.1 for features of \(G_1\) and \(G_4\), to 0.3 for features of \(G_2\), and to 0.6 for features of \(G_3\). Hence, features in \(G_1\) and \(G_4\) are highly correlated within the respective group, while features within \(G_2\) and \(G_3\) show a medium and small correlation, respectively. While \(G_4\) is still recognized to be unimportant, the relative importance of groups 1 to 3 drops with decreasing within-group correlation. This artifact seems—at least, in this simulation setting—to be even more severe for the random forest compared to the support vector machine. For example, \(G_3\) is on average less than half as important as \(G_1\) for permutation-based methods. Thus, none of the methods reflect the true importance of the different groups of the underlying data generating process. A possible reason for this artifact is that the regarded model learned effects different from those given by the underlying true relationship. Especially for the random forest, this has already been studied extensively in the presence of different correlation patterns in the feature space (Strobl et al. 2008; Nicodemus et al. 2010). Additionally, Hooker and Mentch (2019) showed that permutation-based methods are more sensitive in this case than refitting methods, which is also visible for both models in Fig. 3. Since the model is learned on the original feature space and group structures are not considered in the modelling process, we can also observe this effect when applying grouped feature importance methods. This is due to the fact that we can only quantify which groups are important for the model or algorithm performance but not for the underlying data generating process, which is usually unknown. Another approach to quantify feature importance when using random forests is to extract the information on how often a feature has been used as a splitting variable for the different trees. The feature chosen for the first split has the most influence within each tree. Hence, we calculated for each repetition the percentage of how often a feature is chosen as the first splitting feature. The distribution over all repetitions is displayed in Fig. 4. Each of the features of \(G_1\) is on average chosen more often as the first splitting feature than all features of the other groups, no matter if it has an influence on the target or not. The influential features of \(G_3\) (which has the lowest within-group correlation) are rarely chosen as the first splitting feature. This observation confirms the results of the grouped importance methods in Fig. 3, since all of them rank \(G_3\) as least important from the influential feature groups.

Note that while GPFI and LOGO are calculated with reference to the full model’s performance—which on average leads to higher absolute values than the two counter-methods based on the null model’s performance—GOPFI and LOGI might lead to less robust results, as the newly learned effects as well as the approximation of the permutation effect underlie a higher uncertainty. This effect might increase when relative values instead of absolute values are considered due to smaller absolute importance scores of GOPFI and LOGI. However, the methods are only comparable on a relative scale. This effect is also visible in the boxplots of Fig. 3. Furthermore, LOGI can also take negative values in the case of \(G_4\), as the feature group does not affect the target in the underlying data generating process, and hence it might be counterproductive to only include \(G_4\) compared to the null model.

Upper (lower) plots: Grouped relative importance scores in the case of equally sized (varying sizes of) within-group correlations for random forest (left) and SVM (right). Relative importance is calculated by dividing each of the absolute group importance scores by the importance score of \(G_2\). Hence, the relative importance of \(G_1\) is 1. Boxplots illustrate the variation between different repetitions

5.3 Varying sizes of groups

Another factor to consider when calculating grouped rather than individual feature importance scores is that differing group sizes might influence the ranking of the scores. Groups with more features might often have higher grouped importance scores and might contain more noise features than smaller groups. Therefore, Gregorutti et al. (2015) argue that in case one must decide between two groups that have an equal importance score, one would prefer the group with fewer features. Following from that, they normalize the grouped feature importance scores regarding the group size with the factor \(\vert G\vert ^{-1}\). This is also used in the default definition of the grouped model reliance score in Valentin et al. (2020). However, the usefulness of normalization highly depends on the question the user would like to answer. This is illustrated in a simulation example in Fig. 5. We created a data matrix \({\textbf{X}}\) with \(n = 2000\) instances and 2 groups, with \(G_1\) containing \(\{x_1, \dots x_6\}\) and \(G_2\) containing \(\{x_7, x_8\}\) i.i.d. uniformly distributed features on the interval [0, 1]. The univariate target variable \({\textbf{Y}}\) is defined as follows:

We used 1000 observations for fitting a random forest with 2000 trees and 1000 observations for prediction and calculating the GSI as defined in Sect. 3.3 with a permutation-based value function. This was repeated 500 times. Figure 5 shows that \(G_1\) is about twice as important as \(G_2\). As shown in Sect. 3.3 and Appendix B, we can compare the GSI with the Shapley importance on feature level. In case there are no higher-order interaction terms between groups modeled by the random forest, the single feature importance scores will approximately sum up to the grouped importance score, as shown in this example. This provides a more detailed view of how many and which features are important within each group. In this case, there are two equally important features in \(G_1\) and one equally important feature in \(G_2\). If we use the normalization constant in this example, we would divide the grouped importance score of \(G_1\) (which is on average approximately 1.1) by 6 and the one of \(G_2\) (which is on average approximately 0.55) by 2. Consequently, \(G_2\) with a normalized score of approximately 0.27 would be regarded as more important than \(G_1\) with a normalized score of approximately 0.18. It follows that if we must decide between two groups, we would choose \(G_2\) when we follow the approach of Gregorutti et al. (2015). However, since \(G_1\) contains two features with the same importance as the one important feature of \(G_2\), and hence \(G_1\) contains more information from a statistical perspective, the user might prefer \(G_1\). Furthermore, breaking down the GSI to the single-feature Shapley importance scores puts the user in the position of defining sparser groups by excluding non-influential features.

Finally, Table 4 presents a summary of the key takeaways regarding all discussed grouped feature importance methods.

6 Feature effects for groups

Feature effect methods quantify or visualize the influence of features on the model’s prediction. For a linear regression model, we can easily summarize the feature effect in one number, thus making interpretation very simple: If we change feature \(x_1\) by one unit, our prediction will change by the corresponding coefficient estimate \({\hat{\beta }}_1\) (positively or negatively depending on the sign of the coefficient). For more complex non-linear models like generalized additive models, such a simplified summary of the feature effect is not adequate, as the magnitude and sign of the effect might change over the feature’s value range. Hence, it is more common to visualize the marginal effect of the feature of interest on the predicted outcome. Since ML models are often complex non-linear models, different visualization techniques for the feature effect have been introduced in recent years. Common methods are PDP, ICE curves or ALE (Friedman 2001; Goldstein et al. 2013; Apley and Zhu 2019), which show how changes in the feature values affect the predictions of the model. However, these are usually only defined for a maximum of two features. For larger groups of features, this becomes more challenging, since it is difficult to visualize the influence of several features simultaneously. The approach described in this section aims to create effect plots for a predefined group of features that have an interpretation similar to that of the single-feature PDP. To achieve this, we transform the high-dimensional space of the feature group into a low-dimensional space by using a supervised dimension reduction method, which is discussed in Sect. 6.1. We want to find a few underlying factors that are attributed to a sparse and interpretable combination of features that explain the effect of the regarded group on the model’s expected loss. We provide a detailed description of this method in Sect. 6.3 and introduce the resulting combined features effect plot (CFEP). In Sect. 6.4, we illustrate the advantages of applying a supervised rather than an unsupervised dimension reduction method and compare our method to the main competitor, which is the totalvis effect plot introduced in Seedorff and Brown (2021).

6.1 Choice of dimension reduction method

The most prominent dimension reduction technique is arguably PCA (Jolliffe 1986). PCA is restricted to explaining most of the variance of the feature space, and the identified projections are not related to the target variable (for more details see Appendix C.1). Because we want to visualize the mean prediction of combined features as a result of the dimension reduction process, we prefer supervised procedures that maximize dependencies between the projected data \({\textbf{X}}{\textbf{V}}\)—with \({\textbf{V}}\) being a projection \({\textbf{V}} \in {\mathbb {R}}^{p \times p}\)—and the target vector \({\textbf{Y}}\) (as we show in Sect. 6.4). Many methods for supervised PCA have been established. For example, see Bair et al. (2006), who used a subset of features that were selected based on their linear correlation with the target variable. Another very popular method that maximizes the covariance between features and the target variable is partial least squares (PLS) (Wold et al. 1984). The main difference between these methods and the supervised PCA (SPCA) introduced by Barshan et al. (2011) is that the SPCA is based on a more general measure of dependence, called the Hilbert-Schmidt Independence Criterion (HSIC). This independence measure is constructed to be zero, if and only if any bounded continuous function between the feature and target space is uncorrelated. In practice, an empirical version of the HSIC criterion is calculated with kernel matrices. It follows that while this SPCA technique can cover a variety of linear and non-linear dependencies between \({\textbf{X}}\) and \({\textbf{Y}}\) by choosing an appropriate kernel, the other suggested methods are only able to model linear dependencies between the features and the target variable. The approach that is probably best suited for our application of finding interpretable sets of features in a high-dimensional dataset is the method called sparse SPCA, described in Sharifzadeh et al. (2017). Similar to the SPCA method from Barshan et al. (2011), sparse SPCA not only uses the HSIC criterion to maximize the dependency between projected data \({\textbf{X}}{\textbf{V}}\) and the target \({\textbf{Y}}\), but also incorporates an \(L_1\) penalty of the projection \({\textbf{V}}\) for sparsity. The sparse SPCA problem can be solved with a penalized matrix decomposition (Witten et al. 2009). More theoretical details on the sparse SPCA, including the HSIC criterion and how it can be calculated empirically, and the choice of kernels and hyperparameters can be found in Appendix C.

6.2 Totalvis effect plot

Seedorff and Brown (2021) recently introduced a method that aims to plot the combined effect of multiple features by using PCA. Their approach can be described as follows: First, they apply PCA on the regarded feature space to receive the principal components matrix after rotation. For the principal component of interest, they create an equidistant grid. Second, for each grid value, they replace all values of the selected principal component with this grid value and transform the matrix back to the original feature space. Third, The ML model is applied on these feature values and a mean prediction for the grid point of the regarded principal component is calculated. Steps 2 and 3 are repeated for all grid points.

Hence, with this method, combined effect plots for up to p principal components can be created. Thus, Seedorff and Brown (2021) do not focus on explaining groups of features explicitly. Furthermore, they use PCA for unsupervised dimension reduction, and thus, projections might not be related to the target. Due to using PCA and not sparse PCA, the results might be difficult to interpret, as many or all features might have an influence on the principal component. Lastly, with the back-transformation from the principal component matrix to the original feature space, all feature values change and might not be meaningful anymore. For example, in the case of integer features, the back-transformation might lead to real feature values. We illustrate the drawbacks of the method compared to the CFEP in Sect. 6.4.

6.3 Combined features effect plot (CFEP)

The CFEP picks up the idea of PDPs (Friedman 2001) and extends it to groups of features. The partial dependence function is defined as

with \(S \subset \{1,\ldots ,p\}\) and \(C = \{1,\ldots , p\} \backslash S\). Since the joint distribution of \(X_C\) is usually unknown, the Monte Carlo method is used to estimate \(f_S ^{PD}({\textbf{x}}_S)\):

Hence, we marginalize over all features in C and with that we obtain the average marginal effect for the feature subset in S. The PDP usually visualizes this average marginal effect for \(\vert S\vert \le 2\) by plotting \(\left( {\textbf{x}}_S^{(k)},{\hat{f}}_S^{PD}({\textbf{x}}^{(k)}_S)\right) \) for some pre-specified grid points \(k = \{1,\ldots ,m\}\).Footnote 8 However, this is usually only possible for \(\vert S\vert \le 2\) and thus not directly applicable to visualize the combined effect of feature groups. To obtain a visualization in the case of \(\vert S\vert >2\), we need to reduce the dimensions and therefore define the CFEP of a certain group of features G as follows:

-

(1)

We first apply a suitable (preferably supervised) dimension reduction method (e.g., here we use the sparse SPCA, however, the CFEP follows a modular approach and hence the dimension reduction method is exchangeable) on features in \(G \subset \{1,\ldots ,p\}\) to obtain a low dimensional representation of the feature group G. We denote these principle component functions—which are ordered according to relevanceFootnote 9 and which possibly depend on a reduced set of featuresFootnote 10\(S_j \subseteq G\) with \(j \in \{1,\ldots ,\vert G\vert \}\)—by \(g_j: {\mathcal {X}}_{S_j} \longrightarrow {\mathbb {R}}\).

-

(2)

For visualization purposes, we choose from all possible \(g_j\) with \(j \in \{1,\ldots ,\vert G\vert \}\) a principle component function

$$\begin{aligned} g: {\mathcal {X}}_{S} \longrightarrow {\mathbb {R}} \end{aligned}$$(19)(with S being its reduced set of features) which serves as a proxy for the feature group G. We usually only consider the first few principle components.

-

(3)

We calculate the average marginal effect \({\hat{f}}_S^{PD}({\textbf{x}}_S)\) of the feature set S exactly as in Eq. (18).

-

(4)

We visualize the CFEP by plotting \(\left( g({\textbf{x}}^{(i)}_S),\hat{f}_S^{PD}({\textbf{x}}^{(i)}_S)\right) \) for each observation in the dataset.

Hence, the CFEP visualizes the average marginal effect of features in S against the combinations of features received by the dimension reduction method (e.g., a linear combination of a principal component in the case of sparse SPCA) and thus shows how different values of \(g({\textbf{x}}_S)\) affect the predictions of a given model. For a feature group, several principle components \(g_j\) and hence several CFEPs may be of interest.

The CFEP is defined in Algorithm 2, but we will demonstrate the procedure of constructing a CFEP with the illustrative example in Fig. 6. In this example, we have two predefined groups of features, where the first group contains \(x_1\), \(x_2\), and \(x_3\), and the second group contains features \(x_4\) and \(x_5\). The sparse SPCA on the first group yields a first principal component (\(g_1\)) with the loadings 0.3 for \(x_1\), 0.6 for \(x_2\) and 0.5 for \(x_3\) (step 1 to 3 of Algorithm 2). It follows that \(S = \{1,2,3\}\) and that the low dimensional representation of interest is \(g_1\). For the construction of a CFEP for \(g_1\), mean predictions for the principal component are calculated for each observation. To calculate the mean prediction of the first observation (shown in red), we replace the values of features with non-zero loadings of \(g_1\) of each instance in the dataset by the feature values of the first observation (step 6 in Algorithm 2). A prediction vector \(\hat{{\textbf{y}}}^{(1)}_{rep}\) is then calculated with the previously trained model (step 7 in Algorithm 2). The value on the y-axis for the red point in the graph below corresponds to the mean over all predictions for the first observation: \(\bar{\hat{y}}^{(1)}_{rep}\) = \((0.8 + 0.2 + 0.7 + 0.6 + 0.4 + 0.3) / 6 = 0.5\). The value on the x-axis is the linear projection of the first observation for the regarded principal component (step 8 and 9 in Algorithm 2). Hence, it is calculated by the weighted sum of feature values \(x_1^{(i)}\) to \(x_3^{(i)}\), where the weights are defined by the loadings of the respective principal component that we receive with sparse SPCA.

Explanation of estimating and visualizing CFEP; the x-coordinate reflects the linear combination of features with non-zero loadings for \(g_1\), and the y-coordinate reflects the mean predictions \(\bar{\hat{y}}^{(i)}_{rep}\) for each observation i. The substitution of values for each observation is only done for features with non-zero loadings

In contrast to PDP or totalvis effect plots, CFEP produces a point cloud instead of a curve. The CFEP is, mathematically speaking, not a function, since points on the x-axis correspond to linear projections of features within a group. A point z on the x-axis can have multiple combinations of features, which lead to z and have different mean predictions on the y-axis. However, we now have the possibility to interpret the shape of the point cloud and can draw conclusions about the behavior of the mean prediction of the model regarding a linear combination of features of interest.

6.4 Experiments on supervised versus unsupervised dimension reduction

As discussed in Sect. 6.1, PCA might be the most popular dimension reduction method. However, since PCA is unsupervised, it does not account for the dependency between the feature space and the target variable. To evaluate the degree to which this drawback influences CFEP, we examine two regression problems on simulated data. The first is defined by a single underlying factor depending on a sparse set of features, which can be represented by a single principal component. The linear combination of this feature set is also linearly correlated with the target variable. The second regression problem contains two underlying factors that depend on two sparse sets of features. While the linear combination of the first feature set is also linearly correlated with the target, the second factor has a quadratic effect on \({\textbf{Y}}\). In both cases, we compare the usage of sparse supervised and unsupervised PCA (sparse SPCA and sparse PCA) as dimension reduction methods within CFEP and compare them to the totalvis effect plot. Here, we investigate if the respective dimension reduction method does correctly identify the sparse set of features for each group. Additionally, we determine how accurately we can predict the true underlying relationship between the linear combination of these features and the target variable. Since we simulated the data, we know the number of underlying factors (principal components).

6.4.1 One factor

In this example, we created a data matrix \({\textbf{X}}\) with 500 instances of 50 standard normally distributed features with decreasing correlations. Therefore, all features are generated as done in Sect. 5.2. The altering proportion \(\alpha \) is set to 0.2 for the first 10 features, to 0.4 for the next 10 features, and to 1 for the last 10 features. Thus, while the first 10 features are highly correlated with each other, the last 10 features are approximately uncorrelated with each other. The sparse subgroup defined by the variable \({\textbf{Z}}\) is a linear combination of 5 features from \({\textbf{X}}\) and has itself a linear effect on the target variable \({\textbf{Y}}\):

Hence, according to our notation, \(G_{{\textbf{Z}}}\) is defined by \(G_{{\textbf{Z}}} = \{5,8,25,47,49\}\), and thus, \(X_{G_Z}\) is the related subset of all features. We drew 100 samples and fitted a random forest with 2000 trees with each sample drawing. We used the 10-fold cross-validated results to perform sparse SPCA. For each dimension reduction method, we estimate \(\hat{{\textbf{Z}}}\) by summing up the (sparse) loading vector (estimated by the dimension reduction method) multiplied by the feature matrix \({\textbf{X}}\). Therefore, \({\textbf{X}}_{G_{\hat{{\textbf{Z}}}}}\) is defined by the received sparse feature set. The mean prediction \(\bar{\hat{{\textbf{Y}}}}_{rep}\) for the CFEP is calculated as described in Sect. 6.3.

The impact of choosing a supervised over an unsupervised sparse PCA approach is shown in Fig. 7, which also shows the average linear trend and \(95\%\) confidence bands of CFEP for the simulation results. To evaluate how well the estimated mean prediction \(\bar{\hat{{\textbf{Y}}}}_{rep}\) approximates the underlying trend, we assume that we know that \({\textbf{Z}}\) has a linear influence on the target. Thus, we fit a linear model on each simulation result. To compare the received regression lines, we evaluate each of them on a predefined grid and average over all 100 samples (represented by the red line). The confidence bands are then calculated by taking the standard deviation over all estimated regression lines on grid level and calculating the \(2.5\%\) and \(97.5\%\) quantiles using the standard normal approximation. The associated calculation steps for each of the 100 samples can be summarized as follows:

-

(1)

Estimate a linear model \(\hat{f}(\mathbf {\textbf{X}}_{G_{\hat{{\textbf{Z}}}}}) \sim {\textbf{Z}}\).

-

(2)

Define an equidistant grid of length 50 within the range of \({\textbf{Z}}\).

-

(3)

Apply the linear model estimated in 1) on the grid defined in 2).

-

(4)

Repeat steps 1 to 3 for \(\hat{f}({\textbf{X}}_{G_{\textbf{Z}}})\) by using the true underlying features of \({\textbf{Z}}\) to calculate the combined features dependencies that we call the ground truth.

The left plot in Fig. 7 shows a similar linear trend of the estimated CFEP compared to the average ground truth (represented by the blue line), while the red line in the right plot varies around 0. By using sparse SPCA, the underlying feature set \({\textbf{X}}_{G_{\hat{{\textbf{Z}}}}}\) is better approximated than with sparse PCA, which is reflected in the MSE between \({\textbf{Z}}\) and \(\hat{{\textbf{Z}}}\) of 0.7 for sparse SPCA and 1.9 for sparse PCA. Figure 8 provides an explanation for those differences. While sparse SPCA (on average) more strongly weights features that have a large influence on the target, impactful loading weights for sparse PCA are solely distributed over highly correlated features in \({\textbf{X}}\) that explain the most variance in the feature space. Thus, including the relationship between the target and \({\textbf{X}}\) in the dimension reduction method may have a huge influence on correctly approximating the underlying factor and, hence, also on the CFEP.

Similar to using sparse PCA as a dimension reduction method within CFEP, on average, the totalvis effect curves based on PCA do not show a clear positive linear trend (see Fig. 7). For almost half of the samples, we even receive a negative instead of a positive trend for the underlying factor. The interpretation is opposite to the actual effect and, hence, is misleading.

6.4.2 Two factors

In real-world data settings are often more complex by containing non-linear relationships and the target variable is described by more than one underlying factor. Hence, we now examine a more complex simulation setting to assess if we can observe the same behavior that we observed for the simple case. To that end, we simulated a data matrix \({\textbf{X}}\) with 500 instances for two feature sets, each containing 20 standard normally distributed features. The data for each feature set is generated as described in Sect. 5.2 but with an altering proportion of 0.15 and 0.35 for the features in the first set and 0.55 and 0.85 in the second set. Hence, within each set, the first ten features show a higher correlation among each other than the last ten features. Additionally, all features of the first set are on average more highly correlated than all features of the second set. Features between the two sets are uncorrelated. The first factor \({\textbf{Z}}_1\) is a linear combination of four features from the first set and \({\textbf{Z}}_2\) of two features from the second set. \({\textbf{Z}}_1\) has a linear and \({\textbf{Z}}_2\) a quadratic effect on \({\textbf{Y}}\).

Again, we drew 100 samples and fitted a random forest with 2000 trees with each sample drawing. The approach is almost the same as described for one factor, with the difference being that we use the first two principal components (as we want to find two sparse feature sets instead of one).

In Fig. 9, the average linear and quadratic trend of the underlying CFEPs of \({\textbf{Z}}_1\) and \({\textbf{Z}}_2\) are depicted for both dimension reduction methods. While the average linear regression line of sparse SPCA matches the average ground truth almost perfectly for \({\textbf{Z}}_1\), the associated line of sparse PCA shows only a slightly positive trend and differs substantially from the ground truth. Regarding \({\textbf{Z}}_2\), a similar propensity can be observed for the quadratic shape. Again, this behavior results from sparse SPCA (on average) more strongly weighting features that have a large effect on the target, while the unsupervised version focuses on features that explain the most variance in \({\textbf{X}}\).

Top (\({\textbf{Z}}_1\)): Average linear trend and confidence bands of CFEP over all samples using sparse SPCA (left) and sparse PCA (middle) compared to estimated totalvis effect curves over all 100 samples for first principal component (black) and the average linear trend (red) (right). Bottom (\({\textbf{Z}}_2\)): Same structure as for \({\textbf{Z}}_1\), but showing the quadratic trend of \({\textbf{Z}}_2\) (Color figure online)

The estimated linear trend of the totalvis effect curves for the first principal component is negative instead of positive. Thus, for most of the samples and on average, these results are completely misleading (see Fig. 9). The quadratic shape of the second component is (on average and for almost all samples) steeper than the average ground truth. Additionally, the deviation is higher here than for CFEP with sparse SPCA.

7 Real data example: smartphone sensor data

Smartphones and other consumer electronics have increasingly been used to collect data for research (Miller 2012; Raento et al. 2009). The emerging popularity of these devices for data collection is grounded in their connectivity, the number of built-in sensors, and their widespread use. Moreover, smartphones enable users to perform a wide variety of activities (e.g., communication, shopping, dating, banking, navigation, listening to music) and thus provide an ideal means to study human behavior in naturalistic contexts, over extended periods of time, and at fine granularity (Harari et al. 2015, 2016, 2017). In this regard, smartphone data has been used to investigate individual differences in personality traits (Stachl et al. 2017; Harari et al. 2019), in human emotion and well-being (Servia-Rodríguez et al. 2017; Rachuri et al. 2010; Saeb et al. 2016; Thomée 2018; Onnela and Rauch 2016; Kolenik and Gams 2021), and in daytime and nighttime activity patterns (Schoedel et al. 2020).