Abstract

Early time series classification (EarlyTSC) involves the prediction of a class label based on partial observation of a given time series. Most EarlyTSC algorithms consider the trade-off between accuracy and earliness as two competing objectives, using a single dedicated hyperparameter. To obtain insights into this trade-off requires finding a set of non-dominated (Pareto efficient) classifiers. So far, this has been approached through manual hyperparameter tuning. Since the trade-off hyperparameters only provide indirect control over the earliness-accuracy trade-off, manual tuning is tedious and tends to result in many sub-optimal hyperparameter settings. This complicates the search for optimal hyperparameter settings and forms a hurdle for the application of EarlyTSC to real-world problems. To address these issues, we propose an automated approach to hyperparameter tuning and algorithm selection for EarlyTSC, building on developments in the fast-moving research area known as automated machine learning (AutoML). To deal with the challenging task of optimising two conflicting objectives in early time series classification, we propose MultiETSC, a system for multi-objective algorithm selection and hyperparameter optimisation (MO-CASH) for EarlyTSC. MultiETSC can potentially leverage any existing or future EarlyTSC algorithm and produces a set of Pareto optimal algorithm configurations from which a user can choose a posteriori. As an additional benefit, our proposed framework can incorporate and leverage time-series classification algorithms not originally designed for EarlyTSC for improving performance on EarlyTSC; we demonstrate this property using a newly defined, “naïve” fixed-time algorithm. In an extensive empirical evaluation of our new approach on a benchmark of 115 data sets, we show that MultiETSC performs substantially better than baseline methods, ranking highest (avg. rank 1.98) compared to conceptually simpler single-algorithm (2.98) and single-objective alternatives (4.36).

Similar content being viewed by others

Explore related subjects

Discover the latest articles, news and stories from top researchers in related subjects.Avoid common mistakes on your manuscript.

1 Introduction

The goal of time series classification (TSC) is to assign a class label to a given time series, i.e., to a sequence of observations that have been sampled over time. Practical applications of time series classification include the diagnosis of heart conditions from ECGs, identification of patterns in financial markets, and detection of anomalies in seismic activity. Many such time-critical applications can benefit from classification results being available as early as possible, preferably even before the full time series has been observed. As an example, cardiac surgical patients in postoperative care are monitored for postoperative complications during an extended period of time. For some of these complications, indications of increased risk can be made far in advance of the actual onset (Abdelghani et al. 2016). Being able to automatically detect these signals as soon as they occur, through a timely classification of the monitored time series, can mean the difference between life and death.

Early time series classification (EarlyTSC) addresses the problem of classifying time series based on partial observations while maintaining a reasonable level of accuracy. The problem has been first described by Rodríguez Diez and Alonso González (2002) and has received an increasing amount of attention since. EarlyTSC introduces a second criterion to the classification problem: classifications do not only need to be accurate but also early. This results in a natural trade-off between accuracy and earliness (Mori et al. 2019).

There are currently many algorithms available for EaryTSC with different performance on different datasets. To obtain the best performance on a given dataset, it is typically necessary to carry out algorithm selection as well as hyperparameter tuning. However, when solving an EarlyTSC problem, we want to optimise both earliness and accuracy simultaneously. If configuration A (i.e., algorithm choice and its hyperparameter settings) is better with respect to at least one of these objectives and just as good w.r.t. the other compared to configuration B, we say that A dominates B. However, if A is earlier than B but less accurate or vice versa, A and B do not dominate each other, but rather represent different points in the earliness-accuracy trade-off. In that case, we call them both non-dominated or Pareto-efficient with respect to each other. For any EarlyTSC problem, there are many (potentially infinite) possible non-dominated configurations that we want to identify to get an idea of the trade-off between earliness and accuracy. However, these configurations can be difficult to identify, since only a very small portion of all possible configurations is non-dominated, which makes automatic selection and configuration of EarlyTSC algorithms a challenging task.

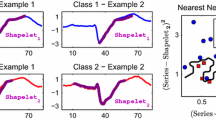

We illustrate the status-quo in EarlyTSC and our proposed approach in Fig. 1, which shows configurations obtained using different approaches and their performance on the GunPoint dataset from the UCR benchmark collection (see Sect. 6.2). Most EarlyTSC algorithms proposed so far provide control over the trade-off between earliness and accuracy by means of one dedicated hyperparameter. Each hyperparameter setting results in a classifier whose performance is represented by a single point in the earliness-accuracy space. Figure 1a shows the earliness and accuracy of a set of manually configured algorithms, for each of which five distinct values of the trade-off hyperparameter have been considered, evenly spread over the full range of the hyperparameter (we chose to consider five settings since that number corresponds closely to what is reported in literature (e.g., Mori et al. 2018; Schäfer and Leser 2020; Mori et al. 2019) and it would be a realistic number of configurations to evaluate manually). Based on this plot we can make several observations.

First, we observe that the dedicated hyperparameters do not strictly trade off accuracy for earliness, meaning that in some cases, earliness and accuracy increase or decrease simultaneously. This can be observed for EDSC (in blue) and TEASER (in grey): some configurations are dominating other configurations of the same algorithm (i.e., are better w.r.t. both earliness and accuracy). The downside of not having a strict trade-off is that considering, for example, ten configurations might only lead to two or three non-dominated ones. This renders the process of finding desirable configurations more challenging, as there is no clear logic for predicting how tuning the hyperparameter can help find a desirable trade-off.

A second observation from Fig. 1a is that, even when covering the full range of the trade-off hyperparameters, in many cases, a single algorithm can be limited to only a narrow part of the earliness-accuracy space or leave large gaps between reachable points. This stems from the fact that these trade-off hyperparameters only provide indirect control over the earliness-accuracy trade-off. As a result, even after extensive hyperparameter tuning, the user might be left with a very limited set of trade-off points, or a choice between configurations that all perform very similarly. This renders finding a good solution to a specific EarlyTSC problem extremely difficult. Note that considering multiple algorithms simultaneously can mitigate some of the problems mentioned above. This is illustrated in Fig. 1b. However, in this example, this involves evaluating 40 configurations, of which only six turn out to be non-dominated, and none of these has an error-rate between 0.33 and 0.02.

In summary, several challenges arise when manually optimising the performance of EarlyTSC algorithms:

-

Exploring the earliness-accuracy trade-off requires manual tuning of at least one dedicated hyperparameter. This is tedious, time-consuming and error-prone.

-

The earliness-accuracy hyperparameter does not always effectively trade off earliness against accuracy. As a result, considering many configurations might only result in a few non-dominated ones.

-

The earliness-accuracy hyperparameter provides only indirect and limited control over the earliness and accuracy, possibly resulting in multiple configurations with very similar earliness and accuracy limiting the freedom of trade-off choice.

In this work, we address these challenges by automating the process of hyperparameter tuning, while considering multiple algorithms and multiple objectives simultaneously. This is done using an algorithm configurator, i.e., a fully automated procedure that repeatedly trains and evaluates algorithm configurations and optimises for earliness and accuracy. Our proposed automated approach falls into the area of automated machine learning (AutoML). Recent developments in this field have made collections of advanced machine learning tools easily accessible for non-experts (e.g., Thornton et al. 2013; Feurer et al. 2015; Koch et al. 2018), such that users do not have to deal with difficult design choices and performance optimisation tasks (e.g., the choice of algorithm, or hyperparameter settings). Particularly, when optimising a single objective function, the problem of combined algorithm selection and hyperparameter optimisation (CASH) has been addressed by Thornton et al. (2013) through the well-known SMAC (Sequential Model-based Algorithm Configuration) procedure (Hutter et al. 2011). However, so far, no such approach has been proposed for EarlyTSC. This is mainly due to the complex, multi-objective nature of this specific machine learning task. A way around this challenge would be to combine both objectives into a single objective. Within the context of EarlyTSC, using the harmonic mean of earliness and accuracy as an objective function has been proposed (Schäfer and Leser 2020). However, this approach fails to capture the complex trade-off between earliness and accuracy.

In this paper, we address this issue by considering a multi-objective configurator, previously designed for hyperparameter optimisation, and expanding its use to the multi-objective CASH (MO-CASH). In particular, we introduce a novel EarlyTSC framework addressing the MO-CASH problem for EarlyTSC that achieves the following:

-

Automating the process of acquiring a set of non-dominated solutions without the need for manual tuning.

-

Enabling the user to make informed decisions through having fine-grained control over the earliness and accuracy trade-off, as provided by a set of non-dominated solutions.

-

Substantially expanding the number of non-dominated solutions found for a given EarlyTSC problem by (i) optimising all hyperparameters and (ii) considering a wide range of EarlyTSC methods, simultaneously.

In Fig. 1c, we illustrate the improvements that can be achieved by an automated search over an expanded space of multiple algorithms and all their hyperparameters. The result is a large set of non-dominated configurations from which a user can choose, with high resolution, the one that is best suited to the problem at hand. Using performance metrics developed for multi-objective optimisation, we can quantify the performance of sets of non-dominated configurations as a whole. Specifically, we can calculate the dominated hypervolume (HV, defined in Sect. 6.4), which takes values between 0 (worst) and 1 (best). Comparing Fig. 1c against Fig. 1b, it can be seen that automated configuration achieves higher HV and produces a larger, denser set of non-dominated configurations.

A strong advantage of our proposed approach is that it effectively leverages the complementary strengths of EarlyTSC algorithms. This means that any algorithm can potentially help in partially improving its overall performance. For example, an algorithm that achieves relatively good accuracy early on, but does not improve with more data, would not be considered a good EarlyTSC algorithm. Nevertheless, by considering multiple algorithms simultaneously within our multi-objective approach, the strength of this particular algorithm would be maximally exploited, while its weaknesses are compensated by other algorithms. This means that our approach can, additionally, open the door to exploring weaker EarlyTSC algorithms that would never be considered in isolation. These could be relatively simple algorithms, where the optimisation of earliness-accuracy trade-off is left to the algorithm configurator (as opposed to be done internally within the algorithm).

An example of such an algorithm is a naïve fixed-time early classifier (i.e., one that always classifies at a fixed timestep). The performance of such a fixed-time classifier heavily depends on the fixed point in time at which classification occurs (controlled by a hyperparameter). Ideally, one would want to consider a high number of possible classification times to obtain a detailed view of the earliness-accuracy trade-off. However, each setting requires retraining a model, which renders the process impractical, unless an automated configuration is used. By using automated configuration, however, favourable trade-offs between earliness and accuracy are easily identified—especially, because the effect of the hyperparameter on the performance of this simple algorithm is very direct. We will build upon this idea, by using the Fixed algorithm which is introduced in Sect. 5.1. The benefits of this step are illustrated in Fig. 1d, where we added the Fixed algorithm to obtain better overall performance in terms of higher hypervolume and a larger number of non-dominated solutions.

Illustration of how an automated framework can improve the quality of EarlyTSC algorithms, in terms of the earliness-accuracy trade-off achieved on the GunPoint data set. Higher HV and a higher number of solutions show better performance. Each point represents an algorithm configuration in the earliness-accuracy space (coloured by the algorithm). a Current approach: manual tuning of only the dedicated hyperparameter controlling the earliness-accuracy trade-off, separately for each algorithm. The Pareto set of configurations of a single algorithm provides a limited choice of solutions. The best Pareto front belongs to ECEC with \(HV=0.84\) and only 2 non-dominated solutions. b By considering multiple manually configured algorithms, improved performance is achieved, but the choice of configurations remains limited, and manual configuration requires extensive effort. c Combined automated algorithm selection and tuning of all hyper-parameters results in a more densely populated Pareto front of solutions. d Adding the simple Fixed algorithm provides additional non-dominated configurations and improves the overall performance in terms of the dominated hypervolume

Overall, our work presented here makes the following contributions to solving EarlyTSC:

-

For the first time, we address the problem of EarlyTSC by systematically considering the trade-off between earliness and accuracy based on all relevant hyperparameters, using a multi-objective automated algorithm configuration approach.

-

We propose MultiETSC, a framework for automatic algorithm selection and hyperparameter optimisation leveraging a wide range of existing EarlyTSC algorithms.

-

Within our MultiETSC framework, we make use of an extension to an existing general-purpose algorithm configurator, exploiting prior knowledge of the structure of the MultiETSC search space.

-

We demonstrate how MultiETSC framework can leverage regular time series classification algorithms to improve its performance on EarlyTSC.

-

We perform an extensive empirical evaluation of MultiETSC on a benchmark of 115 data sets showing that MultiETSC can perform substantially better than baselines, ranking highest (avg. rank 1.98) compared to conceptually simpler single-algorithm (2.98) and single-objective alternatives (4.36).

The remainder of this article is structured as follows: after covering some preliminaries (Sect. 2), we formally describe the problem of MO-CASH for early time series classification in Sect. 3. Section 4 covers related work on both EarlyTSC and AutoML. In Sect. 5, we describe MultiETSC and cover its implementation details. Extensive experiments are described in Sect. 6, and their results discussed in Sect. 7. Finally, in Sect. 8, we draw some general conclusions and discuss directions for future work.

2 Preliminaries

A time series is a series of discrete observations over time. Time series can have varying sampling rates, but for the sake of simplicity, we consider only real-valued time series with a constant sampling rate. Note that for practical applications, the data could be transformed to fit this assumption. We denote a time series of l observations as \(\mathbf {x} = [x_1,\dots ,x_l] \in \mathbb {R}^l\).

Time Series Classification (TSC) is the problem of determining a function \(f: \mathbb {R}^l \rightarrow \mathcal {C}\) that maps a given time series \(\mathbf {x} \in \mathbb {R}^l\) to a class label \(f(\mathbf {x}) = y \in \mathcal {C}\), where \(\mathcal {C}\) is a finite set of labels. Function f is obtained from a learning algorithm A based on a set of training examples \(\{d_1,...,d_n\}\), where each example is a pair of a time series and a class label \(d_i = (\mathbf {x}_i, y_i) \in \mathbb {R}^l \times \mathcal {C}\). Note that we assume time series of uniform lengths. Additionally, most learning algorithms expose a set of hyperparameters \(\varvec{\lambda }\) that control some aspects of their inner workings; settings for these need to be chosen from a space \(\varvec{\Lambda }\) and often have a substantial impact on classification accuracy.

Early Time Series Classification (EarlyTSC) has been first mentioned in the literature by Rodríguez Diez and Alonso González (2002). The main difference to ordinary TSC occurs when performing the actual classification task on a given time series. While ordinary TSC assumes receiving the time series \(\mathbf {x}\) as a single object, EarlyTSC considers multiple prefixes \(\mathbf {x}_p = [x_1,\dots ,x_p] \in \mathbb {R}^p\) of \(\mathbf {x}\) of increasing lengths (\(p \le l\)). At each prefix length considered, the classifier either classifies or postpones classification to await more data. When the full time series has been observed, the classifier must produce a class output.

Hyperparameter optimisation (HPO) is the problem of finding the set of hyperparameter settings \(\varvec{\lambda }^* \in \varvec{\Lambda }\) with optimal generalisation performance for a given algorithm \(A_{\varvec{\lambda }}\) with hyperparameters \(\lambda \) and a set of training data \(\mathcal {D}\). Generalisation performance can be estimated by repeatedly splitting \(\mathcal {D}\) into non-overlapping training and validation subsets of \(\mathcal {D}\), \(\mathcal {D}_{train}\) and \(\mathcal {D}_{valid}\) respectively, training on \(\mathcal {D}_{train}\) and evaluating performance of the resulting classifier on \(\mathcal {D}_{valid}\). Formally, hyperparameter optimisation involves determining

where \(\mathcal {L}(A_{\varvec{\lambda }}, \mathcal {D}_{train}, \mathcal {D}_{valid})\) is the loss of algorithm A with hyperparameters \(\varvec{\lambda }\) when trained on \(\mathcal {D}_{train}\) and evaluated on \(\mathcal {D}_{valid}\). For a classification problem such as TSC, this loss usually is a measure of prediction accuracy, such as misclassification rate, but any performance metric could be chosen as an optimisation target. It is important to note that the combined hyperparameter space is a subset of the product of the permissible set of values for each individual hyperparameter \(\varvec{\Lambda } \subset \Lambda _1 \times \dots \times \Lambda _m\). In most cases, this subset is strict, since some hyperparameters depend on the value of others (Hutter et al. 2014b). For example, for an algorithm with optional regularisation, there might be a hyperparameter \(\lambda _{reg}\) controlling whether regularisation is applied. The hyperparameter \(\lambda _{weight}\) controlling the regularisation weight is only active if the value of \(\lambda _{reg}\) is set to TRUE.

More formally, we say hyperparameter \(\lambda _{i}\) is conditional on hyperparameter \(\lambda _{j}\) if \(\lambda _{i}\) is only active when the value of \(\lambda _{j}\) is in a given set \(V_{i}(j) \subsetneq \Lambda _j\). In this case we call \(\lambda _{j}\) a parent of \(\lambda _{i}\). Conditional hyperparameters can themselves also be parents, resulting in a tree-structured search space (Bergstra et al. 2011).

When there are multiple learning algorithms to choose from, we might want to not only optimise hyperparameter settings but simultaneously select the best learning algorithm for a given data set. This problem is called combined algorithm selection and hyperparameter optimisation or CASH (Thornton et al. 2013). Given a set of algorithms \(\mathcal {A}\), for each algorithm \(A^{(j)} \in \mathcal {A}\), and a hyperparameter space \(\varvec{\Lambda }^{(j)}\), the goal is to optimise generalisation performance, i.e., to determine

In their description of the CASH problem, Thornton et al. (2013) note that the choice of algorithm can be considered a top-level hyperparameter \(\lambda _r\) that selects an algorithm from \(A^{(1)},...,A^{(k)}\). Thereby, the CASH problem can be reformulated as an HPO problem over the combined hyperparameter space \(\varvec{\Lambda } = \varvec{\Lambda }^{(1)} \cup \dots \cup \varvec{\Lambda }^{(k)} \cup \{\lambda _r\}\), where each algorithm \(A^{(i)}\) has its own subspace \(\varvec{\Lambda }^{(i)}\) that is conditional on \(\lambda _r\) being set to \(A^{(i)}\). This places \(\lambda _r\) at the root of the tree-structured search space.

3 Problem definition

So far, we have presented the single-objective CASH problem. To formulate the CASH problem for EarlyTSC, both accuracy and earliness objectives need to be considered. To accommodate that, we will introduce MO-CASH, the multi-objective extension to the CASH problem, and formulate this problem for the specific case of EarlyTSC. In Eqs. 1 and 2, we assumed a one-dimensional loss function defining a total order on the configuration space. When generalising to multi-objective optimisation, we can no longer speak of a total order. Let \(\mathbf {y}^{(1)}\) and \(\mathbf {y}^{(2)}\) be vectors in objective space \(\mathbb {R}^m\) with m objectives. We say \(\mathbf {y}^{(1)}\) is dominated by \(\mathbf {y}^{(2)}\) if the following two conditions are met: 1) \( y^{(2)}_i \le y^{(1)}_i\) for all \(i \in {1,\dots ,m}\) and 2) \(y^{(2)}_i < y^{(1)}_i\) for at least one \(i \in {1,\dots ,m}\). We denote this relation with \(\mathbf {y}^{(2)} \prec \mathbf {y}^{(1)}\). The domination relation is a partial order, meaning that some pairs of configurations are incomparable. In MO-CASH, we are interested in the efficient set of configurations, i.e., a set that consists solely of non-dominated, or Pareto-optimal, configurations. Since CASH can be formalised as a special case of HPO (as discussed in Sect. 2), we will be using the simpler notation of HPO Let \(\mathcal {L}\) be a vector-valued loss function on \(\mathbb {R}^m\); then the efficient set \(\varvec{\Lambda }^*\) and the Pareto-front \(\mathcal {P}\) can be formalised as follows:

Note that the single-objective formulation from Eq. 1 can be considered as a special case of a more general multi-objective problem. In the single-objective case, the efficient set is guaranteed to contain only a single configuration, since the ordering in a 1-dimensional solution space is guaranteed to be a total order. In the multi-objective case, on the other hand, there can be an arbitrarily large number of non-dominated configurations, and an optimiser needs to find improvements over a range of trade-off points in the objective space.

The problem we address in this work is the MO-CASH problem for the specific case of EarlyTSC, which can be defined as follows. Given the set \(\mathcal {D} = \{d_1,...,d_n\}\) of pairs of time series and class label \(d_i = ( \mathbf {x}_i, y_i ) \in \mathbb {R}^l \times \mathcal {C}\), and given the combined space of EarlyTSC algorithms and their hyperparameter settings, in the form of a configuration space \(\varvec{\Lambda }\), find the best set of non-dominated configurations \(\varvec{\Lambda }^*\) in terms of earliness and accuracy.

4 Related work

In this section, we discuss work from the literature that has been fundamental to the algorithms we used as the basis of MultiETSC. Both the foundations of early time series classification as well as AutoML are covered.

4.1 EarlyTSC methods

Many methods for solving the EarlyTSC problem have been proposed over the past two decades. Generally, these are adaptions of classification methods for full time series (for an overview of TSC methods we refer to Bagnall et al. 2016)). Most methods split the problem into two parts: one that addresses the classification of the partial data, aiming to maximise the classification accuracy; and a separate part that manages the trade-off between earliness and accuracy, by deciding whether enough data has been evaluated to base a reliable classification on. We will call this decision triggering and the function that controls it the trigger function. Table 1 shows a concise overview of the algorithms included in MultiETSC, their underlying classification approach and triggering mechanism as well as strengths and weaknesses. Next, we briefly discuss the existing literature on EarlyTSC algorithms.

Rodríguez Diez and Alonso González (2002) were the first to address the classification of time series based on partially observed data. They used a boosted set of simple interval-based binary features using ADABoost (Freund and Schapire 1999). To do early classification, the features that are not yet fully observed are simply ignored. Although this can be seen as the first attempt to solve EarlyTSC, this method does not make an explicit decision when to classify and consequently does not solve the EarlyTSC problem as we have defined it. Zz et al. (2011b) introduced ECTS, a method based on 1NN ED classification using the observed prefix of the time series. In the training phase, the minimum prediction length (MPL) of each time series is learned—the length at which the prediction based on the time series prefix is likely to be equal to the prediction on the full time series. The classification triggers as soon as the MPL of the closest match is equal to the observed prefix length.

EDSC, introduced by Xing et al. (2011a), is a shapelet-based EarlyTSC method. The shapelets are selected on a combination of their distinctiveness and the earliness of appearance in most time series. Classification is done as soon as a matching shapelet is found. RelClass (Parrish et al. 2013) explicitly estimates classification reliability, i.e., the probability of the early class prediction being equal to the classification of the complete time series. The trigger mechanism is simply a minimum reliability threshold that needs to be met.

Hatami and Chira (2013) proposed a method that based the triggering on the “agreement” among different classifiers in an ensemble. When the individual classifiers do not agree, the classification is rejected, and the method waits for more data. Antonucci et al. (2015) proposed a method based on “imprecise hidden Markov models”, where a Markov model is fitted to the incoming data with some uncertainty. The classification is done if only a single model of a time series in the training set remains within the uncertainty bounds. Dachraoui et al. (2015) suggest a meta-algorithm that considers both the cost of classification quality and the cost of delaying the classification decision. Additionally, this method predicts in advance how much data will be needed to make a decision, and only triggers when the observed amount reaches or exceeds the required amount.

Mori et al. (2016) introduced the idea of prefix classifiers. Their algorithm, ECDIRE, trains a set of fully-fledged time series classifiers on increasing time series prefix lengths. The prefix classifiers are typically probabilistic classifiers to provide a confidence estimate. This confidence is then used in the triggering mechanism. SR-CF (Mori et al. 2018), TEASER (Schäfer and Leser 2020) and ECEC (Lv et al. 2019) are all extensions of this idea using slightly different triggering mechanisms and prefix-classifiers.

EARLIEST (Hartvigsen et al. 2019) is based on a recurrent neural network (RNN) with LSTM cells. The base RNN produces a vector representation at each time step. This vector representation is used by two classifiers, one for classification and one binary classifier for triggering. The system is trained as a whole, minimising a loss function combining earliness and accuracy.

All methods discussed above employ sophisticated techniques to address the problem of EarlyTSC. The downside they all have in common is that they only produce a single classifier. Producing classifiers with different levels of accuracy and earliness requires changing one or more hyperparameters and retraining. These methods therefore do not fully address the multi-objective nature of the EarlyTSC problem.

Mori et al. (2019) proposed the first, and thus far the only, truly multi-objective approach to EarlyTSC. They proposed an adaptation of the SR-CF, to which we will refer to as MO-SR, where the internal parameters are optimised for both earliness and accuracy simultaneously. MO-SR produces a set of non-dominated classifiers in a single training phase. The approach we propose in this paper applies multi-objective optimisation at a higher level (hyperparameters instead of parameters) resulting in a more complex, tree-structured search space, and it uses a sophisticated optimiser that can search this space. Following this approach, we developed a more general method that can incorporate any existing or future EarlyTSC methods. While it would be interesting to compare MO-SR with our proposed MultiETSC, no implementation has been made available by the original authors.

As is apparent from this overview, a diverse set of EarlyTSC algorithms can be found in the literature, each with its strengths and weaknesses. To the best of our knowledge, we are the first to attempt to combine the strengths of (a subset of) these algorithms into a single, integrated system for early time series classification. Additionally, while all EarlyTSC algorithms manage the trade-off between earliness and accuracy in some way, most do not provide insight into this trade-off. Our automated approach, described in the following, can produce this insight, without the need to understand the details of the underlying EarlyTSC algorithms, making EarlyTSC more accessible for non-experts.

4.2 Automated machine learning

The application of machine learning to a specific problem often encompasses decisions about data pre-processing, choice of algorithm and hyperparameter settings. Automated machine learning attempts to automate these decisions. Hutter et al. (2009) addressed the hyperparameter optimisation problem using sequential model-based optimisation (SMBO). Hutter et al. (2011) used SMBO as the basis for a general-purpose algorithm configuration procedure, SMAC (Hutter et al. 2011), which enables the efficient search of large and complex configuration spaces with categorical and numerical parameters.

Bergstra et al. (2011) applied two forms of SMBO, Gaussian process (GP) regression and the so-called Tree-structured Parzen Estimator (TPE), to HPO in deep belief networks. For this 32-dimensional configuration space, they achieved better results within 24 h of computing time than had been achieved by manual configuration in earlier work. Snoek et al. (2012) built further on SMBO for AutoML by proposing Spearmint, an algorithm that takes the variable cost, in terms of training time, into account. Thornton et al. (2013) introduced Auto-WEKA, a software package based on SMAC that makes AutoML available for end-users familiar with the WEKA interface, being the first AutoML system addressing the full CASH problem. Feurer et al. (2015) introduced AUTO-SKLEARN, a SMAC-based AutoML system for Python. Additionally, AUTO-SKLEARN supports a meta-learning step before the SMBO phase and an ensemble building phase after optimisation, improving the efficiency of the configuration process and the quality of the results thus obtained.

Olson et al. (2016) introduced a Tree-based Pipeline Optimisation Tool, or TPOT, which optimises classification pipelines using decision trees and random forests. TPOT uses a tree representation for classification pipelines including feature selection, transformation and construction operators, as well as model selection and parameter optimisation elements. These pipelines are optimised using a Genetic Algorithm (GA). The authors introduce an extension of TPOT, called TPOT-Pareto, which not only considers classification accuracy but also pipeline complexity (i.e., number of pipeline operators). During optimisation, not just the best k performing pipelines are kept as the population for the GA, but a Pareto-front of non-dominated pipelines (in terms of accuracy and complexity) is used. However, the selection of the final pipeline is still based solely on accuracy and does therefore not address the more general MO-CASH problem.

Koch et al. (2018) developed the Autotune framework for the proprietary statistical software package SAS. Autotune uses a hybrid search strategy consisting of random search, Latin Hypercube Sampling (LHS), global and local search, GA and Bayesian optimisation using a GP surrogate. Autotune is implemented to maximally exploit parallel computation. The authors show competitive performance compared to only Bayesian optimisation and the Spearmint package. Gardner et al. (2019) extended the work on Autotune to address multi-objective optimisation. They reduced their hybrid search to only employ LHS, GA and Generating Set Search, a local search strategy. In their study, the authors address common conflicting objectives for binary classification, e.g., false-negative rate vs misclassification rate.

Jin et al. (2019) addressed the problem of Neural Architecture Search (NAS) by developing the open-source Keras-based system: Auto-Keras. NAS can be seen as a particularly interesting special case of AutoML since the search space of possible architectures is complex and highly hierarchical. Auto-Keras employs a custom GP kernel for SMBO, based on the edit-distance of the neural network architecture. The downside of Auto-Keras is that it only takes into account a single loss metric, without penalizing architecture complexity.

Some recent efforts have focused on the problem of AutoML for streaming applications, where the optimal algorithm and hyperparameters might change over time (e.g., Veloso et al. 2018; Carnein et al. 2020; Celik and Vanschoren 2020). Although this might be interesting for EarlyTSC, in this paper we consider only static problems.

All the AutoML systems above are built upon existing machine learning packages and are aimed to provide end-users with easier access to advanced machine learning pipelines and algorithms and increased overall performance. For EarlyTSC, there does not yet exist a software package that allows for such a direct extension. This introduced the additional challenge of integrating all algorithms into a common framework with all required hyperparameters exposed. An important difference between these existing AutoML implementations and our AutoML system for EarlyTSC is that our system is not only meant to benefit possible end-users but should also help in the development of new EarlyTSC algorithms since it combines the strengths of multiple algorithms allowing the exploration of weaker EarlyTSC algorithms that would never be considered in isolation.

5 MultiETSC

In this section, we describe the approach developed for automatically configuring EarlyTSC algorithms and the system that implements our approach. At the core of our approach, we make use of a general-purpose automated algorithm configurator. It is the task of the configurator to efficiently search for the best performing configurations by searching a vast space of EarlyTSC algorithms and their hyperparameters. The inner loop of the search process consists of three steps: (1) selecting a candidate configuration (i.e., a combination of algorithm and its hyperparameters); (2) training the configuration on training data; (3) evaluating the configuration on validation data. The evaluation is fed back into the configurator enabling informed decisions for selecting new configurations. The final output thus obtained is a set of configurations that are mutually non-dominated, based on evaluation on the given validation data. The overall framework of our proposed approach is illustrated in Fig. 2. In the remainder of this section, we will first describe in detail the space of EarlyTSC algorithms and hyperparameter settings that we search over. Second, we describe how configurations are evaluated. Third, a description of the algorithm configurator that we use to carry out the search.

5.1 Configuration space

As discussed in Sect. 3, the configuration space is a tree-structured space defined by the choices of algorithm and hyperparameter settings. MultiETSC includes 9 EarlyTSC algorithms: ECTS, EDSC, RelClass, ECDIRE, SR-CF, TEASER, ECEC, EARLIEST (all described in Sect. 4.1) and a naïve fixed-time Euclidean 1NN algorithm, which we will refer to as ‘Fixed’ and is described in more detail below. A more detailed description of the search space, including hyperparameter descriptions and the size of the search space, is provided in Tables 5, 6 and 7 in Appendix 1.

These algorithms were chosen based on the fact that their implementations were made available by the original authors (except for Fixed, which we implemented), which helped us to ensure correctness and efficiency. There were several algorithms proposed in literature that we would have liked to include, but for which we were unable to acquire implementations (e.g., Hatami and Chira 2013; Dachraoui et al. 2015; Wang et al. 2016; Martinez et al. 2018; He et al. 2019; Rußwurm et al. 2019). Note, however, that new algorithms can be added to MultiETSC with relative ease. No algorithms were excluded based on low expected performance. All EarlyTSC algorithms from the literature employ various techniques to find the best time to trigger classification. To put the merit of these techniques into perspective, we extended the algorithm portfolio with the naïve Fixed method that simply classifies at a fixed point in time that is determined by a hyperparameter. Without HPO, such a method would be too naïve to consider. However, the responsibility of picking a favourable classification time is now shifted to the configurator, which is given more direct control. This will potentially lead to a better exploration of the full range of possible trade-off points.

All algorithms were modified and wrapped to provide a common command-line interface, where test data, training data, hyperparameters and, in the case of a stochastic algorithm, a random seed can be passed to the algorithm for reproducibility. To achieve this, the original implementations required a varying degree of modifications. In some cases, the needed modifications were quite considerable.

-

ECTS (Zz et al. 2011b) (C++): is one of the oldest implementations of an EarlyTSC algorithm and still often used as a baseline in experimental evaluations of new algorithms.

-

EDSC (Xing et al. 2011a) (C++): is the only explicitly shapelet-based EarlyTSC method.

-

RelClass (Parrish et al. 2013) (MATLAB modified for GNU Octave): is the only EarlyTSC algorithm that is not based on any time series specific method and is still a very competitive EarlyTSC algorithm.

-

ECDIRE (Mori et al. 2016) (R): uses a set of Gaussian process classifiers. This makes it theoretically better suited for situations where little training data is available.

-

SR-CF (Mori et al. 2018) (R): is the first method introduced with an explicitly learned trigger function.

-

TEASER (Schäfer and Leser 2020) (Java): is designed based on the advanced WEASEL classifier (Schäfer and Leser 2017) using a relatively simple triggering mechanism.

-

ECEC (Lv et al. 2019) (Java): is designed based on the advanced WEASEL classifier (Schäfer and Leser 2017) using a triggering mechanism based on reliability estimation.

-

EARLIEST (Hartvigsen et al. 2019) (Python): is the only neural network based EarlyTSC method included. It is implemented in Python using the PyTorch module (Paszke et al. 2019).

-

Fixed (authors)(Python): is a naïve Euclidean 1NN classifier on a fixed length prefix. A single hyperparameter controls the prefix length at which classification is done as a proportion of the full time series length. We theorise that any EarlyTSC algorithm with more control over “when to classify” should perform at least as well as this naïve algorithm, either by being more accurate with the same average earliness or by being earlier with the same level of accuracy or both. This algorithm is implemented by the authors for the purpose of this paper.

5.2 Algorithm performance

As described earlier, the EarlyTSC algorithms are evaluated on both earliness and accuracy. For our setup, we need to define two metrics that represent the loss in both of these objectives. For the loss relating to accuracy, we used the error rate \(C_a\) defined as follows:

where \(l_{\mathbf {x}}^{*}\) is the length at which the classification is triggered for time series \(\mathbf {x}\), \(f(\mathbf {x}_{l_{\mathbf {x}}^{*}})\) is the early class prediction and \(Class(\mathbf {x})\) is the true class of \(\mathbf {x}\).

The earliness \(C_e\) is quantified by the proportion of the time series needed to produce a classification averaged over the number of samples classified. It can be written as follows:

where \(l_{\mathbf {x}}\) is the length of time series \(\mathbf {x}\).

5.3 Algorithm configurator

Sequential Model-Based Optimisation (SMBO) has shown to be a promising approach to the single-objective CASH problem (Thornton et al. 2013). However, the problem of optimising for multiple objectives is substantially more complex than the single-objective case. While there have been methods proposed for model-based optimisation for multi-objective problems (e.g., Emmerich et al. 2015), these methods are not able to handle the tree-structured search space that is typical for CASH problems.

We make use of the general purpose algorithm configurator MO-ParamILS (Blot et al. 2016) and customise it, in order to achieve increased efficiency in the context of the EarlyTSC problem. MO-ParamILS was originally designed for general-purpose multi-objective algorithm configuration. Here, we leverage its power to search tree-structured search spaces to extend this to MO-CASH (combined algorithm selection and hyper-parameter optimisation) by using the choice of algorithm as a top-level hyperparameter. MO-ParamILS uses iterated local search (ILS), a stochastic local search method, to find promising configurations. MO-ParamILS maintains a set of non-dominated configurations referred to as the archive. The one-exchange neighbourhood of a configuration \(\lambda \) is the set of configurations that is obtainable by changing a single parameter. This one-exchange neighbourhood of the configurations in the archive is used for local search steps. The local search strategy is complemented with random search steps to increase exploration of the search space, enabling to escape local optima.

MO-ParamILS handles the tree-structured search space by always keeping a value for each hyperparameter, whether it is active or not. Changing a higher-level hyperparameter (e.g., the algorithm choice) results in its dependent hyperparameters becoming active, retaining the values assigned to them earlier in the search. This means that the first time a specific algorithm is considered, its hyperparameters will be initialised at random.

It is known from earlier work on hyperparameter optimisation that, even in high-dimensional cases, most performance variation can be attributed to just a few hyperparameters (e.g., Hutter et al. 2014a; Bergstra and Bengio 2012). However, the original version of MO-ParamILS treats each hyperparameter equally. Here, we implemented a variant of the MO-ParamILS method that is able to leverage prior knowledge about individual hyperparameters. As can be seen in Table 6 in Appendix 1, all algorithms in our framework have a specific hyperparameter dedicated to controlling the earliness-accuracy trade-off. We will call these trade-off hyperparameters. In order to maximise coverage of the possible trade-offs (one of the objectives optimised by MO-ParamILS), these trade-off hyperparameters should be considered first when exploring the design space of EarlyTSC algorithms underlying our framework.

The original version of MO-ParamILS keeps track of a set of non-dominated candidates called the archive. In each local search step, the one-exchange neighbourhood of each configuration in the archive is searched uniformly at random, until either a neighbour is found that dominates at least one configuration in the archive, or all neighbours have been searched. In our customised version, we first consider neighbouring configurations obtained by changing a trade-off hyperparameter—a subset of the one-exchange neighbourhood—before considering the remaining neighbours. The neighbours resulting from changing a trade-off hyperparameter are expected to be less likely to dominate the current configuration but are at the same time more likely to be non-dominated. Thus, using this technique, we expect to obtain sets of configurations that are more diverse in terms of their earliness-accuracy trade-off. In Sect. 7.4, we show empirical support for the efficacy of this modification within the context of MultiETSC. We note that, in principle, this approach could be extended to other multi-objective problems, for which a limited number of (hyper)parameters controls the trade-off between different optimisation objectives.

One caveat of using MO-ParamILS is the requirement of a discrete search space requiring discretisation of continuous variables. This means that the true Pareto-set (the multi-objective optimum) might lie in between the chosen values in the discretisation. On the other hand, discretisation reduces the size of the search space facilitating optimisation.

In the context of reproducible research and to share our efforts with the community, we have made the source code for MultiETSC publicly available.Footnote 1

6 Experimental evaluation

We have designed our experiments to answer the following four questions:

-

What improvement can be achieved by solving the MO-CASH problem for EarlyTSC compared to multi-objective HPO of any single competitive EarlyTSC algorithm? (Sect. 7.1)

-

What improvement can be achieved by solving the MO-CASH problem compared to solving the single-objective CASH problem optimising for the harmonic mean of accuracy and earliness? (Sect. 7.1)

-

What would be the impact of adding other EarlyTSC algorithms to the search space (e.g., the naïve fixed method)? (Sect. 7.3)

-

What improvement can be achieved by modifying the MO-ParamILS algorithm configurator to explore the search space of MultiETSC by prioritising the trade-off hyperparameters? (Sect. 7.4)

In the rest of this section, we will introduce our baselines, data sources, and evaluation protocol. Next, we will present the experimental results that will answer these questions.

6.1 Baselines

To answer the first question we compared algorithm selection, using MO-ParamILS on the previously defined search space, with hyperparameter optimisation of each individual algorithm in the search space by fixing the algorithm choice. This results in 9 baseline methods, one for each included algorithm: ECTS, EDSC, RelClass, ECDIRE, SR-CF, TEASER, ECEC, EARLIEST and Fixed.

To address the second question, we compared our multi-objective approach with a method that optimises a single objective (we refer to this baseline as SO-all). For this objective, we chose the harmonic mean of earliness and accuracy as suggested by Schäfer and Leser (2020):

Resulting in a value on the closed interval [0, 1] which is to be minimised. This metric has the property that it will be low when both \(C_e\) and \(C_a\) are low and high when either is high. We will refer to this as the HM metric.

Although MO-ParamILS is capable of single-objective algorithm configuration, more advanced systems are available for this task. To make a fair comparison, we chose the state-of-the-art SMAC (Lindauer et al. 2017) algorithm configurator for its ability to efficiently search tree-structured search spaces. We will refer to the baseline method searching the full algorithm space using SMAC optimising the HM metric as SO-All. MO-ParamILS and SMAC differ in the optimisation method they are based on. In addition, for the single-objective case, the HM needs to be computed for each considered configuration. This means there are differences in the computational demands of these two methods. However, in this case, the computational costs of training and evaluating configurations heavily outweigh the costs of selecting the next configuration to evaluate. Furthermore, as we will discuss in Sect. 6.3, both configurators will be given the same overall time budget, to allow fair comparisons.

6.2 Data

As the main source of data, we will use the University of California, Riverside (UCR) Time Series Archive (Chen et al. 2015; Dau et al. 2018), last updated in 2018. As of 2018, the archive consists of 128 time series data sets for time series classification. Since its introduction in 2015, it has become the de facto standard for the evaluation of time series classification methods. The composers of the UCR archive recommend evaluating on all 128 data sets, and to clearly motivate excluding any. In our experiments we used 115 of these datasets in this paper due to the reason explained in Sect. 6.3. The data sets in the UCR archive contain real-world data and simulated data originating from various sources with varying degrees of complexity. Sources include ECG, EEG, spectrographs, image outlines, three-axis accelerometers and gyroscopes, and audio samples. In addition to real-world data, the archive contains 9 synthetic data sets that are specifically designed to evaluate time series classification methods. For data set details and descriptions, we refer to the Time Series Classification Website (Bagnall and Lines 2020).

All datasets in the UCR archive consist only of univariate time series. Time series lengths vary between a few dozen samples to several thousand samples. For most data sets, all time series within one set are of equal length. 15 of the 128 data sets contain time series of varying lengths or with missing values. For these data sets the archive also includes same-length versions that are imputed using linear interpolation and padded up to the length of the longest time series with low amplitude noise. For this paper, we only used the same-length versions of these data sets.

6.3 Evaluation protocol

6.3.1 Train and validation splits

The UCR data sets have pre-defined train-test splits. The UCR defined train set is split again into five stratified train-validation splits. An algorithm is trained on \(80\%\) of the data and evaluated on \(20\%\) of the data. This is illustrated in Fig. 3. For each repeated configurator run, a different set of cross-validation folds is generated. However, each experimental condition is run with the same set of cross-validation folds to keep the comparison fair. Due to the five-fold split requirement, we had to exclude 8 data sets that did not contain a sufficient amount of training examples per class for this split to be made. These were FiftyWords, Fungi, Phoneme, PigAirwayPressure, PigArtPressure, PigCVP, and Symbols. We evaluated the remaining 120 datasets.

6.3.2 Configurator runs

Configurator runs because a single configurator run is dependent on the random train/validation splits, and random initialisation, we performed multiple runs to get stable results. For each data set, 25 configurator runs were performed. For practical reasons, we set limits to run times of different stages of the experiment (presented in Table 2). The test evaluation is provided with a budget that is significantly bigger than that of the validation. The reason is that the test set can contain much more examples than the validation set. Additionally, we had to set a maximum amount of memory that can be used at any point in time by an algorithm implementation, which we set to 10GB. This made it impossible to process 5 more data sets: Crop, ElectricDevices, FordB, FordA, InsectWingbeatSound.

6.3.3 Bootstrapping

Each configurator run will result in a Pareto-set of configurations based on the validation performance. Note that in the case of the SO configurator, this set will, by definition, contain only a single configuration. To create a distribution of the results, we took a bootstrapping approach by randomly sampling 10 runs from the 25 runs performed. For each subsample, the set of Pareto-sets (one for each run) is then combined into a single set of non-dominated configurations (based on validation performance). All configurations ending up in one or more subsample Pareto-sets are evaluated using test data resulting in a final Pareto-set for each subsample. Evaluation is based on these final Pareto-sets. By repeating this approach 1000 times we created a bootstrap distribution. This protocol was designed based on similar experiments presented in literature (e.g., Hutter et al. 2011; Thornton et al. 2013). Note the fact that the single-objective baseline is limited to producing only a single configuration per run. This means that a subsample of SO-configurator runs can, in the best case, result in a number of non-dominated configurations equal to the number of runs at most. To make the single-objective baseline slightly more competitive, we increased the number of runs from 4 in the original design to 10 runs per subsample for all methods. This way the SO baseline is less constrained by this upper limit. For our analysis, we will be using robust, non-parametric tests, which means we can keep the amount of bootstrap samples at a relatively low number of 1000.

6.4 Evaluation

We want to evaluate Pareto-sets of configurations in the earliness-accuracy space. Solutions that are both accurate and early are desirable, but we also prefer a “cheap” trade-off, getting much earlier predictions for only a small reduction in accuracy. To quantify the performance of a particular Pareto-set, we will turn to the theory of multi-objective optimisation. There is a multitude of Pareto-set performance metrics defined each capturing one or more desirable aspects (Audet 2018). For our purpose, we will be using the dominated hypervolume, the \(\Delta \)-spread and the HM-metric which we describe here in detail.

-

Hypervolume (HV) also called the S-metric, proposed by Zitzler, Deb and Thiele (Zitzler et al. 2000), HV is the hypervolume in the objective space that is dominated by the Pareto efficient solutions bounded by a reference point \(\mathbf {y}_{ref} \in \mathbb {R}^m\) that is dominated by all feasible solutions. Figure 4a shows a Pareto-front and its dominated hypervolume S in \(\mathbb {R}^2\). A commonly mentioned downside of the hypervolume is the need for a reference point that is larger than any point in the Pareto-set on all objectives but not too large since that would reduce the precision. In our case, however, it is clear that both error rate and earliness will never be larger than 100%, so we can safely use (1, 1) as the reference point. The hypervolume metric has several properties that make it well suited for our purpose. First of all, it is intuitive. It is clear that dominating a large part of the objective space is desirable. As denoted in (Audet 2018), the hypervolume metric is the only known unary Pareto-front performance indicator to be strictly monotonic. This means that if set A dominates set B (i.e., all points in B are dominated by at least one point in A) then the hypervolume metric of A is strictly larger than that of B. This is desirable since set domination is a strong indication that one set is preferable over the other and we want the metric to represent this property as well. Furthermore, the hypervolume metric is widely used in the performance evaluation of various multi-objective optimisation algorithms.

-

\(\Delta \)-spread a desirable property that is not very well represented in the hypervolume is the distribution of configurations along the Pareto-front. To have control over the earliness-accuracy trade-off, these configurations should be evenly distributed along with a wide range of values. There can be two cases where the hypervolume metric is equal but in one case all hypervolume is dominated by a single configuration and in the other case there are 1000 unique configurations. In that case, it would be preferable to have a choice out of multiple options. We will be using the \(\Delta \)-spread metric (Zitzler et al. 2000) to quantify the distribution of solutions in the objective space. This metric is based on the Euclidean distances between neighbouring solutions \(d_i\) and is formalised as follows:

$$\begin{aligned} \Delta = \frac{d_f+d_l + \sum _{i=1}^{N-1} |d_i-\bar{d}| }{d_f+d_l+(N-1)\cdot \bar{d}} \end{aligned}$$(8)where \(d_f\) and \(d_l\) are distances between the extreme solutions and the boundary solutions in the Pareto-front, and \(\bar{d} = \frac{\sum _{i=1}^{N-1} d_i}{N-1}\). See Fig. 4b for an illustration of the distances. An ideal distribution would make \(d_f = d_l = 0\) and all distances \(d_i\) equal to \(\bar{d}\), resulting in \(\Delta = 0\). More clustering will result in higher values of \(\Delta \).

-

HM metric in addition to the hypervolume metric and \(\Delta \)-spread, we will also look at the HM scalarisation as defined in Equation 7, to see how this metric compares to our proposed evaluation methods. Since each point in the Pareto-set will have its own HM value, we will look at the minimum value in each set.

7 Results

In this section, we cover the results of our extensive experiments. First, we focus on the relative performance of EarlyTSC and our baselines based on ranked performance. Next, we cover an illustrative example of one of the data sets in the UCR archive and show the performance of the produced classifiers. Finally, we analyse the contribution of the naïve Fixed algorithm to MutliETSC.

7.1 EarlyTSC versus baselines

To compare relative performance between all methods, based on their performance across 115 data sets, we computed the average ranks based on the three selected metrics. Average ranks provide a robust method of comparison without making additional assumptions about normality and symmetry—assumptions we cannot safely make in general. This approach is similar to the empirical analysis done by Bagnall et al. (2016). The ranks are averaged over all subsamples and all datasets. With 1000 subsamples for each of the 115 data sets, these are the averages over \(115\,000\) ranks. We first checked for significant differences between rank means using the Friedman test and subsequently applied the Nemenyi post-hoc test (Nemenyi 1963) which checks for significant pairwise differences in average ranks. We visualised the outcome of this test using critical difference diagrams (Demšar 2006), which are commonly used for the comparison of TSC methods to show the result of a statistical comparison of ranking results. These are shown in Figs. 5, 6 and 7. Due to our subsampling method, we achieved high statistical power resulting in small CD intervals, which means that most observed differences are statistically significant.

According to the comparison of methods based on all metrics (shown in Figs. 5, 6, 7), MultiETSC performs significantly better than any of the algorithms we compared against, finding configurations that together dominate a larger portion of the objective space and distributed more evenly across the trade-off according to the \(\Delta \)-spread. This answers our first question mentioned earlier in Sect. 6. Similarly, MultiETSC performs significantly better than the single-objective CASH method SO-All, which answers our second question. We also observed that SO-All performs relatively well compared to our individual baseline algorithms. However, interestingly, SO-All performs worse than MultiETSC on the HM metric, even though this is the exact metric that the SO configurator optimises, while MultiETSC does not explicitly consider this metric during configuration. Overall, methods consistently rank according to different metrics.

Table 3 shows the performance of the compared methods split out per problem (dataset) type. This table would show any method that is particularly suited or unsuited for a specific problem type. From these results, we observe that MultiETSC is consistently performing well across a broad range of problem types. The results for individual data sets are provided in Appendix 2 (Tables 5–7).

We found a significant number of cases with ties for the best method, most often between Fixed and MultiETSC. In these cases, the set of possible configurations using the Fixed algorithm dominates all other configurations. This set is found by both the Fixed method and MultiETSC, which results in equal hypervolume scores. This results in scenarios for which some rows in Table 3 add up to more than 100% (e.g., TRAFFIC).

As expected, the performance rank of each single algorithm method seems to correspond closely to the order in which these algorithms were originally introduced. However, there are some exceptions to this pattern. EARLIEST, the only neural-network-based algorithm, performs not as well as other methods proposed around the same time. In the original study, EARLIEST (Hartvigsen et al. 2019), is evaluated on data sets containing considerably more training examples than are available in typical UCR data sets and in many real-world applications. Additionally, the running time limits (3 minutes for configuration and 15 minutes for testing) might pose a challenge for the configurator to find viable parameter settings. This, however, is a challenge for all methods, not only EARLIEST.

7.2 Application of earlyTSC

To provide some intuition on the produced Pareto-sets and their metrics we will discuss an illustrative example. A single subsample is constructed by combining ten different validation splits. Each method is run on each split and the resulting Pareto-sets are combined into one Pareto-set per method. Figure 8 shows these Pareto-sets for an arbitrary subsample of the BME data set. The BME data set is a synthetic data set created specifically for TSC research and is part of the UCR archive. It is a three-class problem with 10 training examples per class. The test set is balanced containing 150 items.

Looking at Fig. 8, it is seen that generally, in Pareto-fronts, \(66\%\) seems to be the upper bound of the error rate at zero earliness (maximally early), which corresponds to the performance of a random or constant output classifier. With increasing earliness (later classification) most methods can produce classifiers that are increasingly accurate with clearly diminishing returns. Most methods level out at one-third of the time series observed, while the better-performing methods reduce the error rate up to \(90\%\) of the data observed achieving close to \(5\%\) error rate.

The ability of our approach to provide this visual representation of the trade-off between earliness and accuracy is a major advantage. Using any EarlyTSC algorithm requires the user to select a hyperparameter that only indirectly influences the trade-off. Using our approach, a user trying to solve a specific EarlyTSC problem can make an informed choice, making EarlyTSC more accessible for non-experts.

The metrics evaluating these Pareto-sets are shown in Table 4. In this particular example, the configurations found by MultiETSC dominate the largest hypervolume (0.733). Although the competing methods are close, it is clear that MultiETSC corresponds closely to the best of each individual baseline.

7.3 The contribution of fixed

In this section, we study the impact of adding other algorithms to the search space (e.g., the naïve fixed method). As seen in Fig. 5, the naïve fixed-time method we introduced is highly competitive, consistently outperforming six out of the eight EarlyTSC algorithms proposed in the literature, according to all three metrics. More support for the merit of the Fixed method can be found by looking at how often each algorithm was selected by the configurator. Figure 9 shows the share of each algorithm in the final set of configurations. We observe that more than half of all the selected configurations are based on the Fixed algorithm. This supports our claim that giving more control to the configurator, in terms of optimising algorithmic choices, enables a better exploration of the earliness-accuracy trade-off. In turn, this exploration increases the resolution of choices over the trade-off, which gives the user more freedom and control in picking the best solution for the problem at hand.

So far we showed that Fixed contributes to the Pareto sets with a large number of configurations. However, this does not necessarily mean that Fixed provided benefits to the overall performance of MultiETSC. In other words, the inclusion of Fixed could even have a negative impact on performance by increasing the size of the search space. To show that adding Fixed provides tangible benefits to MultiETSC, we ran a series of experiments (using the same protocol as described earlier) comparing the performance of MultiETSC with and without Fixed on seven UCR data sets. Figure 10 shows the critical difference diagram comparing MultiETSC to both MultiETSC without Fixed (MultiETSC w/o Fixed) and MultiETSC using the original version of MO-ParamILS (MultiETSC-o, which will be discussed in Sect. 7.4), showing their average ranks on all 115 data sets. Although MultiETSC with and without Fixed are both close to rank 2, the difference in rank is statistically significant.

These results provide evidence that MultiETSC is able to efficiently ignore ill-performing configurations and that performance tends to be improved by including Fixed.

7.4 The contribution of our new variant of MO-ParamILS

In Sect. 5.3, we theorised that modifying MO-ParamILS to be more explorative in the direction of the so-called trade-off hyperparameters could potentially result in improved overall performance. Figure 10 compares MultiETSC (using our new variant of MO-ParamILS) to MultiETSC-o, which uses the original version of MO-ParamILS. As seen in the figure, there is a significant difference in the average rank in favour of our new variant. The results shown in Table 7 in Appendix 1 further demonstrate that our modification also positively affects the average numbers of configurations searched and non-dominated configurations found compared to the original version.

8 Conclusions and future work

In this work, we have introduced MultiETSC, a systematic approach to automated early time series classification (EarlyTSC). MultiETSC performs automatic algorithm selection and hyperparameter optimisation to explore the full range of trade-offs between accuracy and earliness afforded by a broad set of EarlyTSC algorithms.

Our approach builds upon recently developed techniques in the area of automated algorithm configuration and uses them in combination with a broad range of well-known EarlyTSC algorithms. We have modified the general-purpose algorithm configurator MO-ParmaILS to exploit prior knowledge about the structure of the EarlyETSC problem, resulting in improved overall performance. MultiETSC enables users to explore and exploit trade-offs between earliness and accuracy, by producing a large and diverse set of non-dominated configurations from which a user can choose the one that is best suited to the problem at hand.

We performed an extensive empirical evaluation of our proposed method, using 115 data sets from the UCR Time Series Archive. We have shown that by leveraging the potential of many existing EarlyTSC algorithms, our approach can outperform any single algorithm, even when their hyperparameters are optimised. Our results also demonstrate that considering both earliness and accuracy separately and in a multi-objective fashion produces better results than combining the two into a single objective.

It should be mentioned that the benefits of automated algorithm configuration for EarlyTSC do come at a significant computational cost, which is primarily caused by the number of configurations considered and evaluated. This can be illustrated by looking back at Fig. 1 in the introduction. The evaluation of the solutions represented in Fig. 1b (manual configuration) costs less than 1 h of CPU time. In contrast, 50 h of CPU time were required to produce Fig. 1c, d (automated configuration). However, during this time, hundreds of other possible configurations are evaluated.

Therefore, MultiETSC essentially trades off the time spent by human experts on manual algorithm selection and configuration against computational effort, while also producing a more diverse set of qualitatively better results. Furthermore, in our experiments, we limited the running time of individual algorithms, in order to prevent spending too much time on a small set of configurations, and we limited the time budget of the automated configurator itself to 2 h per run, in order to keep the computational cost of our experiments manageable. In practice, it would likely be best to adjust these limits to account for factors such as the size of the data set and the amount of CPU time the user can afford to spend on configuration.

We see numerous opportunities for future research. We have shown that our proposed approach can be extended and benefit from additional EarlyTSC algorithms (in particular, the naïve fixed-time classifier). Further research could focus on the development of additional EarlyTSC algorithms that improve the performance of MultiETSC. On the other hand, an algorithm might negatively impact the performance of MultiETSC by increasing the size of the search space without providing any beneficial configurations. Although MultiETSC can reasonably effectively ignore these ill-performing algorithms, the overall performance could possibly be further improved by excluding these algorithms altogether. This could be achieved by considering the marginal contribution or Shapley values of these algorithms in order to assess their contribution to overall performance (Fréchette et al. 2016).

This might make it possible to even more effectively construct a set of complementary algorithms that jointly represent the state-of-the-art in EarlyTSC.

For the area of automated machine learning, our work clearly demonstrates the potential of using multi-objective algorithm configurators within an integrated system leveraging many state-of-the-art techniques—an idea that can be extended to many other problems in machine learning in which multiple conflicting performance objectives arise. Our approach could also be extended to a larger number of objectives, which would enable the exploration of additional trade-offs, for instance, the resources required for processing given data. There are many situations where machine learning solutions need to be applied with limited computing resources. For such cases, it could be very valuable to know how much performance an ML solution is losing by constraining its access to resources.

Finally, we hope that this work will inspire further exploration in other directions, and ultimately lead to significant improvements in the state of the art in solving a broad set of machine learning problems that involve multiple competing performance objectives, as is the case in early time series classification.

References

Abdelghani SA, Rosenthal TM, Morin DP (2016) Surface electrocardiogram predictors of sudden cardiac arrest. Ochsner J 16(3):280–289

Antonucci A, Scanagatta M, Mauá DD, de Campos CP (2015) Early classification of time series by hidden markov models with set-valued parameters. In: Proceedings of the NIPS time series workshop, pp 1–5. https://sites.google.com/site/nipsts2015/home

Audet C (2018) Performance indicators in multiobjective optimization. Les Cahiers du GERAD, GERAD HEC Montréal. https://books.google.nl/books?id=uKepzQEACAAJ

Bagnall A, Lines J (2020) The UEA TSC website. http://www.timeseriesclassification.com/

Bagnall A, Lines J, Bostrom A, Large J, Keogh E (2016) The great time series classification bake off: a review and experimental evaluation of recent algorithmic advances. Data mining and knowledge discovery, vol 31. https://doi.org/10.1007/s10618-016-0483-9

Bergstra J, Bengio Y (2012) Random search for hyper-parameter optimization. J Mach Learn Res 13(1):281–305

Bergstra J, Bardenet R, Bengio Y, Kégl B (2011) Algorithms for hyper-parameter optimization. In: Proceedings of the 24th international conference on neural information processing systems, Curran Associates Inc., USA, NIPS’11, pp 2546–2554. http://dl.acm.org/citation.cfm?id=2986459.2986743

Blot A, Hoos HH, Jourdan L, Kessaci-Marmion M, Trautmann H (2016) MO-ParamILS: a multi-objective automatic algorithm configuration framework. In: Proceedings of the 10th international conference on learning and intelligent optimization (LION 10), Springer, Lecture notes in computer science, vol 10079, pp 32–47. https://doi.org/10.1007/978-3-319-50349-3_3

Carnein M, Trautmann H, Bifet A, Pfahringer B (2020) confstream: automated algorithm selection and configuration of stream clustering algorithms. In: 14th Learning and intelligent optimization conference (LION 2020), pp 80–95. https://doi.org/10.1007/978-3-030-53552-0_10

Celik B, Vanschoren J (2020) Adaptation strategies for automated machine learning on evolving data. arXiv preprint arXiv:2006.06480

Chen Y, Keogh E, Hu B, Begum N, Bagnall A, Mueen A, Batista G (2015) The UCR time series classification archive. www.cs.ucr.edu/~eamonn/time_series_data/

Dachraoui A, Bondu A, Cornuéjols A (2015) Early classification of time series as a non myopic sequential decision making problem. In: Appice A, Rodrigues PP, Santos Costa V, Soares C, Gama J, Jorge A (eds) Machine learning and knowledge discovery in databases, Springer International Publishing, Cham, pp 433–447. https://doi.org/10.1007/978-3-319-23528-8_27

Dau HA, Keogh E, Kamgar K, Yeh CCM, Zhu Y, Gharghabi S, Ratanamahatana CA, Yanping, Hu B, Begum N, Bagnall A, Mueen A, Batista G, Hexagon-ML (2018) The UCR time series classification archive. https://www.cs.ucr.edu/~eamonn/time_series_data_2018/

Demšar J (2006) Statistical comparisons of classifiers over multiple data sets. J Mach Learn Res 7(1):1–30

Emmerich M, Yang K, Deutz A, Wang H, Fonseca C (2015) A multicriteria generalization of Bayesian global optimization, vol 107, Springer International Publishing, pp 229–242. https://doi.org/10.1007/978-3-319-29975-4_12

Feurer M, Klein A, Eggensperger K, Springenberg J, Blum M, Hutter F (2015) Efficient and robust automated machine learning. In: Cortes C, Lawrence N, Lee D, Sugiyama M, Garnett R (eds) Advances in neural information processing systems, Curran Associates, Inc., vol 28, pp 2962–2970. https://proceedings.neurips.cc/paper/2015/file/11d0e6287202fced83f79975ec59a3a6-Paper.pdf

Fréchette A, Kotthoff L, Michalak TP, Rahwan T, Hoos HH, Leyton-Brown K (2016) Using the shapley value to analyze algorithm portfolios. In: Proceedings of the 30th AAAI conference on artificial intelligence (AAAI-16), AAAI Press, pp 3397–3403. http://www.aaai.org/ocs/index.php/AAAI/AAAI16/paper/view/12495

Freund Y, Schapire RE (1999) A short introduction to boosting. In: In Proceedings of the sixteenth international joint conference on artificial intelligence, Morgan Kaufmann, pp 1401–1406. https://doi.org/10.1051/matecconf/201713900222

Gardner S, Golovidov O, Griffin J, Koch P, Thompson W, Wujek B, Xu Y (2019) Constrained multi-objective optimization for automated machine learning. In: 2019 IEEE International conference on data science and advanced analytics (DSAA), pp 364–373. https://doi.org/10.1109/DSAA.2019.00051

Hartvigsen T, Sen C, Kong X, Rundensteiner E (2019) Adaptive-halting policy network for early classification. In: Proceedings of the 25th ACM SIGKDD international conference on knowledge discovery and data mining, pp 101–110. https://doi.org/10.1145/3292500.3330974

Hatami N, Chira C (2013) Classifiers with a reject option for early time-series classification. 2013 IEEE symposium on computational intelligence and ensemble learning (CIEL), pp 9–16. https://doi.org/10.1109/CIEL.2013.6613134

He G, Zhao W, Xia X (2019) Confidence-based early classification of multivariate time series with multiple interpretable rules. Pattern Anal Appl 23:567–580. https://doi.org/10.1007/s10044-019-00782-7

Hutter F, Hoos HH, Leyton-Brown K, Murphy K (2009) An experimental investigation of model-based parameter optimisation: SPO and beyond. In: Proceedings of the 11th annual conference on genetic and evolutionary computation (GECCO 2009), pp 271–278. https://doi.org/10.1145/1569901.1569940

Hutter F, Hoos HH, Leyton-Brown K (2011) Sequential model-based optimization for general algorithm configuration. In: Proceedings of the 5th international conference on learning and intelligent optimization (LION 5), pp 507–523. https://doi.org/10.1007/978-3-642-25566-3_40

Hutter F, Hoos H, Leyton-Brown K (2014a) An efficient approach for assessing hyperparameter importance. In: Proceedings of the 31th international conference on machine learning, ICML 2014, Beijing, China, 21–26 June 2014, JMLR.org, JMLR workshop and conference proceedings, vol 32, pp 754–762. http://jmlr.org/proceedings/papers/v32/hutter14.html

Hutter F, Stützle T, Leyton-Brown K, Hoos HH (2014b) Paramils: an automatic algorithm configuration framework