Abstract

By applying robust control the decision maker wants to make good decisions when his model is only a good approximation of the true one. Such decisions are said to be robust to model misspecification. In this paper it is shown that the application of the usual robust control framework in discrete time problems is associated with some interesting, if not unexpected, results, which have far-reaching consequences when robust control is applied sequentially, say every year in fiscal policy or every quarter (or month) in monetary policy. This is true when unstructured uncertainty à la Hansen and Sargent is used, both in the case of a “probabilistically sophisticated” and a non-“probabilistically sophisticated” decision maker, or when uncertainty is related to unknown structural parameters of the model.

1 Introduction

Robust control has been a very popular area of research in Macroeconomics in the last three decades and shows no sign of fatigue.Footnote 1 In recent years growing attention has been devoted to its continuous time version (see, e.g., Hansen & Sargent, 2011, 2016). A characteristic “feature of most robust control theory”, observes Bernhard (2002, p. 19), “is that the a priori information on the unknown model errors (or signals) is nonprobabilistic in nature, but rather is in terms of sets of possible realizations. Typically, though not always, the errors are bounded in some way. … As a consequence, robust control aims at synthesizing control mechanisms that control in a satisfactory fashion (e.g., stabilize, or bound, an output) a family of models.” Then “standard control theory tells a decision maker how to make optimal decisions when his model is correct (whereas) robust control theory tells him how to make good decisions when his model approximates a correct one” (Hansen & Sargent, 2007a, p. 25). In other words, by applying robust control the decision maker makes good decisions when it is statistically difficult to distinguish between his approximating model and the correct one using a time series of moderate size. “Such decisions are said to be robust to misspecification of the approximating model” (Hansen & Sargent, 2007a, p. 27).

Concerns about the “robustness” of the standard formulation of robust control have been floating around for some time. Sims (2001, p. 52) observes that “once one understands the appropriate role for this tool (i.e. robust control or maxmin expected utility), it should be apparent that, whenever possible, its results should be compared to more direct approaches to assessing prior beliefs.” Then he continues, “the results may imply prior beliefs that obviously make no sense … (or) they may … focus the minimaxing on a narrow, convenient, uncontroversial range of deviations from a central model.” In the latter case “the danger is that one will be misled by the rhetoric of robustness to devoting less attention than one should to technically inconvenient, controversial deviations from the central model.”Footnote 2

Tucci (2006, p. 538) argues, “the true model in Hansen and Sargent (2007a) … is observationally equivalent to a model with a time-varying intercept.” He then shows that, when the same “malevolent” shock is used in both procedures, the robust control for a linear system with an objective functional having desired paths for the states and controls set to zero applied by a “probabilistically sophisticated” decision maker is identical to the optimal control for a linear system with an intercept following a “Return to Normality” model and the same objective functional only when the transition matrix in the law of motion of the parameters is zero.Footnote 3 He concludes that this robust control is valid only when “today’s malevolent shock is linearly uncorrelated with tomorrow’s malevolent shock” (p. 553). These results are shown to be valid, in a more general setting with arbitrary desired paths, both for a “probabilistically sophisticated” and a non-“probabilistically sophisticated” decision maker and in the presence of a structural model with uncertain parameters in Tucci (2021).

Indeed, it may be pointed out that “the fact that the transition matrix does not appear in the relevant expression in Tucci (2006, 2021) does not mean that the decision maker does not contemplate very persistent model misspecification shocks.” For instance, the robust control in the worst case may not depend upon the transition matrix simply because the persistence of the misspecification shock does not affect the worst case. Again, the robust decision maker accounts for the possible persistence of the misspecification shocks, and that persistence may affect the evolution of the control variables in other equilibria, but it happens that the transition matrix does not play a role in the worst-case equilibrium. Moreover, as commonly understood, “the robust control choice accounts for all possible kinds of persistence of malevolent shocks, which again may take a much more general form than the VAR(1) assumed in Tucci (2006, 2021). It just happens that in the worst-case misspecification shocks are not persistent. While for many possible ‘models’, these misspecification shocks may be very persistent, such models happen to result in lower welfare losses than the worst-case model.” To shed some light on these issues, this paper will further investigate the characteristics of the most common specification of robust control in discrete time in Economics by explicitly considering the welfare loss associated with the various controls.

The remainder of the paper is organized as follows. Section 2 reviews the standard robust control problem with unstructured uncertainty à la Hansen and Sargent, i.e. a nonparametric set of additive mean-distorting model perturbations. In Sect. 3 the linear quadratic tracking control problem where the system equations have a time-varying intercept following a ‘Return to Normality’ model is introduced and the solution compared with that in Sect. 2. Section 4 reports some numerical results obtained using a ‘robustized’ version of the MacRae (1972) problem. Then the permanent income model, a popular model in the robust control literature (see, e.g., Hansen & Sargent, 2001, 2003, 2007a; Hansen et al., 1999, 2002), is considered in the same section. The main conclusions are summarized in Sect. 5.

2 Robust Control à la Hansen and Sargent in Discrete Time

Hansen and Sargent (2007a, p. 140) consider a decision maker “who has a unique explicitly specified approximating model but concedes that the data might actually be generated by an unknown member of a set of models that surround the approximating model.”Footnote 4 Then the linear system

with \({\mathbf{y}}_{t}\) the n × 1 vector of state variables at time t, \({\mathbf{u}}_{t}\) the m × 1 vector of control variables and \(\mathbf{\varepsilon }_{t + 1}\) an l × 1 identically and independently distributed (iid) Gaussian vector process with mean zero and an identity contemporaneous covariance matrix, is viewed as an approximation to the true unknown model

The matrices of coefficients A, B and C are assumed known and \({\mathbf{y}}_{0}\) given.Footnote 5
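As a sketch in the notation just introduced, and taking the standard formulation in Hansen and Sargent (2007a, Ch. 2) as an assumption, the approximating model (1) and the distorted model (2) can be written as

\[ {\mathbf{y}}_{t + 1} = {\mathbf{Ay}}_{t} + {\mathbf{Bu}}_{t} + {\mathbf{C}}\mathbf{\varepsilon }_{t + 1} \qquad (1) \]

\[ {\mathbf{y}}_{t + 1} = {\mathbf{Ay}}_{t} + {\mathbf{Bu}}_{t} + {\mathbf{C}}(\mathbf{\varepsilon }_{t + 1} + \mathbf{\omega }_{t + 1} ) \qquad (2) \]

so that the two models differ only by the mean distortion \(\mathbf{\omega }_{t + 1}\), which enters the system through the same matrix C as the shock \(\mathbf{\varepsilon }_{t + 1}\).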

In Eq. (2) the vector \(\mathbf{\omega }_{t + 1}\) denotes an “unknown” l × 1 “process that can feed back in a possibly nonlinear way on the history of y, (i.e.) \(\mathbf{\omega }_{t + 1} = {\mathbf{g}}_{t} ({\mathbf{y}}_{t} ,{\mathbf{y}}_{t - 1} , \, ...)\) where \(\{ {\mathbf{g}}_{t} \}\) is a sequence of measurable functions” (Hansen & Sargent, 2007a, pp. 26–27). It is introduced because the “iid random process …\({(}\mathbf{\varepsilon }_{t + 1} {)}\) can represent only a very limited class of approximation errors and in particular cannot depict such examples of misspecified dynamics as are represented in models with nonlinear and time-dependent feedback of \({\mathbf{y}}_{t + 1}\) on past states” (p. 26).Footnote 6 To express the idea that (1) is a good approximation of (2) the ω’s are restrained by

where \(E_{0}\) denotes mathematical expectation evaluated with respect to model (2) and conditioned on \({\mathbf{y}}_{0}\) and \(\eta_{0}\) measures the set of models surrounding the approximating model.Footnote 7
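Under these definitions, and assuming the discounting convention of Hansen and Sargent (2007a, Ch. 2), restriction (3) can be sketched as a bound on the discounted sum of squared distortions:

\[ E_{0} \sum\limits_{t = 0}^{\infty } {\beta^{t + 1} \mathbf{\omega^{\prime}}_{t + 1} \mathbf{\omega }_{t + 1} } \le \eta_{0} \qquad (3) \]

A larger \(\eta_{0}\) admits a larger set of models around the approximating one, and \(\eta_{0} = 0\) forces \(\mathbf{\omega }_{t + 1} = {\mathbf{0}}\), i.e. full trust in model (1).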

“The decision maker’s distrust of his model … (1) makes him want good decisions over a set of models … (2) satisfying … (3)” write Hansen and Sargent (2007a, p. 27). The solution can be found solving the multiplier robust control problem formalized asFootnote 8

where \(r({\mathbf{y}}_{t} ,{\mathbf{u}}_{t} )\) is the one-period loss functional, subject to (2) with θ, \(0 < \theta^{*} < \theta \le\)∞, a penalty parameter restraining the minimizing choice of the \(\{ \mathbf{\omega }_{t + 1} \}\) sequence and \(\theta^{*}\) “a lower bound on \(\theta\) that is required to keep the objective … (4) convex in … (\(\mathbf{\omega }_{t + 1}\)) and concave in \({\mathbf{u}}_{t}\)” (p. 161).Footnote 9 This problem can be reinterpreted as a two-player zero-sum game where one player is the decision maker maximizing the objective functional by choosing the sequence for u and the other player is a malevolent nature choosing a feedback rule for a model-misspecification process ω to minimize the same criterion functional.Footnote 10 For this reason, the multiplier robust control problem is also referred to as the multiplier game.Footnote 11
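A sketch of the multiplier problem (4) that is consistent with the description above, and with the constraint problem stated in the Notes, is the following. The exact layout is an assumption; the symbols are those already defined.

\[ \mathop {\max }\limits_{{\{ {\mathbf{u}}_{t} \} }} \, \mathop {\min }\limits_{{\{ \mathbf{\omega }_{t + 1} \} }} \; - E_{0} \sum\limits_{t = 0}^{\infty } {\beta^{t} \left[ {r({\mathbf{y}}_{t} ,{\mathbf{u}}_{t} ) - \theta \beta \mathbf{\omega^{\prime}}_{t + 1} \mathbf{\omega }_{t + 1} } \right]} \qquad (4) \]

The term \(\theta \beta \mathbf{\omega^{\prime}}_{t + 1} \mathbf{\omega }_{t + 1}\) raises the criterion that nature is minimizing, so a larger θ makes distortions more costly for nature. In the limit \(\theta = \infty\) nature sets \(\mathbf{\omega }_{t + 1} = {\mathbf{0}}\) and the problem reduces to standard optimal control of model (1).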

The Riccati equation for problem (4) “is the Riccati equation associated with an ordinary optimal linear regulator problem (also known as the linear quadratic control problem) with controls \(({\mathbf{u^{\prime}}}_{t} \, \mathbf{\omega^{\prime}}_{t + 1} )^{\prime}\) and penalty matrix on those controls appearing in the criterion functional of diag(\({\mathbf{R}},\) \(- \beta \theta {\mathbf{I}}_{l}\))” (Hansen & Sargent, 2007a, p. 170).Footnote 12 Then, when the one-period loss functional is

with Q a positive semi-definite matrix, R a positive definite matrix, W an n × m array, \({\mathbf{y}}_{t}^{d}\) and \({\mathbf{u}}_{t}^{d}\) the desired state and control vectors, respectively, the robust control rule is derived by extremizing, i.e. maximizing with respect to \({\mathbf{u}}_{t}\) and minimizing with respect to ω, the objective functional

with

subject to

where

and \({\tilde{\mathbf{W}}} = [\begin{array}{*{20}c} {\mathbf{W}} & {\mathbf{O}} \\ \end{array} ]\) with O and 0 null arrays of appropriate dimension.
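For orientation, a quadratic tracking loss consistent with the definitions of Q, R, W and the desired paths given above can be sketched as follows. The exact layout of Eq. (5) and the stacked notation are assumptions made here for illustration.

\[ r({\mathbf{y}}_{t} ,{\mathbf{u}}_{t} ) = ({\mathbf{y}}_{t} - {\mathbf{y}}_{t}^{d} )^{\prime} {\mathbf{Q}}({\mathbf{y}}_{t} - {\mathbf{y}}_{t}^{d} ) + 2({\mathbf{y}}_{t} - {\mathbf{y}}_{t}^{d} )^{\prime} {\mathbf{W}}({\mathbf{u}}_{t} - {\mathbf{u}}_{t}^{d} ) + ({\mathbf{u}}_{t} - {\mathbf{u}}_{t}^{d} )^{\prime} {\mathbf{R}}({\mathbf{u}}_{t} - {\mathbf{u}}_{t}^{d} ) \]

Expanding this expression, the terms linear in the state and in the control are \(2{\mathbf{y^{\prime}}}_{t} [ - ({\mathbf{Qy}}_{t}^{d} + {\mathbf{Wu}}_{t}^{d} )]\) and \(2{\mathbf{u^{\prime}}}_{t} [ - ({\mathbf{Ru}}_{t}^{d} + {\mathbf{W^{\prime}y}}_{t}^{d} )]\), which is where linear-term vectors of the form \({\mathbf{q}}_{t}\) and \({\mathbf{r}}_{t}\) come from. In the same spirit, the stacked control and the associated arrays can be taken to be

\[ {\tilde{\mathbf{u}}}_{t} = [\begin{array}{*{20}c} {{\mathbf{u^{\prime}}}_{t} } & {\mathbf{\omega^{\prime}}_{t + 1} } \\ \end{array} ]^{\prime} ,\quad {\tilde{\mathbf{B}}} = [\begin{array}{*{20}c} {\mathbf{B}} & {\mathbf{C}} \\ \end{array} ],\quad {\tilde{\mathbf{R}}} = diag({\mathbf{R}}, - \beta \theta {\mathbf{I}}_{l} ) \]

so that the game has the form of an ordinary linear regulator problem in \({\tilde{\mathbf{u}}}_{t}\) with an indefinite control penalty.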

Setting \(\mathbf{\varepsilon }_{t + 1} = {\mathbf{0}}\) and writing the optimal value of (6) as \(- {\mathbf{y^{\prime}}}_{t} {\mathbf{P}}_{t} {\mathbf{y}}_{t} - 2{\mathbf{y^{\prime}}}_{t} {\mathbf{p}}_{t}\),Footnote 13 the Bellman equation looks likeFootnote 14

with \({\mathbf{P}}_{t + 1} = \beta {\mathbf{P}}_{t}\), \({\mathbf{Q}}_{t} = \beta^{t} {\mathbf{Q}}\), \({\mathbf{W}}_{t} = \beta^{t} {\mathbf{W}}\), \({\mathbf{R}}_{t} = \beta^{t} {\mathbf{R}}\), \({\mathbf{q}}_{t} = - ({\mathbf{Q}}_{t} {\mathbf{y}}_{t}^{d} + {\mathbf{W}}_{t} {\mathbf{u}}_{t}^{d} )\) and \({\mathbf{r}}_{t} = - ({\mathbf{R}}_{t} {\mathbf{u}}_{t}^{d} + {\mathbf{W^{\prime}}}_{t} {\mathbf{y}}_{t}^{d} )\). Then expressing the right-hand side of (10) only in terms of \({\mathbf{y}}_{t}\) and \({\tilde{\mathbf{u}}}_{t}\) and extremizing it yields the optimal control for the decision maker

and the optimal control for the malevolent nature

It follows that the θ-constrained worst-case controls areFootnote 15

andFootnote 16

withFootnote 17

The “robust” Riccati arrays \({\mathbf{P}}_{t + 1}^{*}\) and \({\mathbf{p}}_{t + 1}^{*}\) are always greater than, or equal to, \({\mathbf{P}}_{t + 1}\) and \({\mathbf{p}}_{t + 1}\), respectively, because it is assumed that, in the “admissible” region, the parameter θ is large enough to make \({(}\beta \theta {\mathbf{I}}_{l} - \, {\mathbf{C}}^{\prime } {\mathbf{P}}_{t + 1} {\mathbf{C}}{)}\) positive definite.Footnote 18 They are equal when \(\theta = \infty\).Footnote 19

These results are slightly different from those presented in Hansen and Sargent (2007a, Ch. 2 and 7) where a simpler one-period loss functional with desired state and control vectors and W matrix set equal to zero, see e.g. Hansen and Sargent (2007a, Sect. 2.2.1 on page 28), is considered. Then the quantities \({\mathbf{q}}_{t}\), \({\mathbf{r}}_{t}\), \({\mathbf{p}}_{t}\) and \({\mathbf{p}}_{t}^{*}\) vanish in their case when the ‘modified certainty equivalence principle’, see Hansen and Sargent (2007a, Sect. 2.4.1), is applied.
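The stacked-control idea can be illustrated in the simpler case just mentioned (zero desired paths, W = 0) with one state and one control. The sketch below is not the paper's code: it assumes the current-value convention in which the regulator minimizes \(\Sigma \beta^{t} (Qy_{t}^{2} + Ru_{t}^{2} - \beta \theta \omega_{t + 1}^{2} )\) while nature maximizes it, sets \(\varepsilon_{t + 1} = 0\), and uses the terminal condition \(P_{T} = Q\). The function names and the parameter values in the usage note are hypothetical.

```python
def stacked_step(P_next, A, B, C, Q, R, beta, theta):
    """One backward step of the scalar multiplier game with zero desired paths.

    The pair (u, omega) is treated as one stacked control with penalty
    diag(R, -beta*theta). Returns (Fu, Fw, P) where u = -Fu*y, omega = -Fw*y
    and P is the updated Riccati coefficient.
    """
    # Second-order terms of the stacked problem
    h11 = R + beta * B * P_next * B
    h12 = beta * B * P_next * C
    h22 = -beta * theta + beta * C * P_next * C
    if h11 <= 0.0 or h22 >= 0.0:
        raise ValueError("saddle-point conditions fail; theta is too small")
    # Cross terms between the stacked control and the state
    g1 = beta * B * P_next * A
    g2 = beta * C * P_next * A
    # Solve the 2x2 first-order conditions by Cramer's rule
    det = h11 * h22 - h12 * h12
    Fu = (h22 * g1 - h12 * g2) / det
    Fw = (h11 * g2 - h12 * g1) / det
    P = Q + beta * A * P_next * A - (g1 * Fu + g2 * Fw)
    return Fu, Fw, P


def two_step_gain(P_next, A, B, C, R, beta, theta):
    """Regulator gain obtained by first distorting P, then solving for u."""
    D = P_next + P_next * C * C * P_next / (theta - C * P_next * C)
    return beta * B * D * A / (R + beta * B * D * B)


def solve_game(A, B, C, Q, R, beta, theta, horizon):
    """Backward recursion; returns the rules ordered from t = 0 and P at t = 0."""
    P = Q  # terminal condition (an assumption of this sketch)
    rules = []
    for _ in range(horizon):
        Fu, Fw, P = stacked_step(P, A, B, C, Q, R, beta, theta)
        rules.append((Fu, Fw))
    rules.reverse()
    return rules, P
```

For instance, `solve_game(0.7, -0.5, 1.0, 1.0, 1.0, 0.99, 100.0, 2)` returns the two feedback rules of a two-period problem with illustrative parameters. The gain from `stacked_step` agrees with `two_step_gain`, which distorts the Riccati coefficient first, and for very large `theta` the rule for nature shrinks toward zero so that the ordinary regulator is recovered.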

3 Optimal Control of a Linear System with Time-Varying Parameters

Tucci (2006, pp. 538–539) argues that the model used by a “probabilistically sophisticated” decision maker to represent dynamic misspecification, i.e. Eq. (2), is observationally equivalent to a model with a time-varying intercept. When this intercept is restricted to follow a ‘Return to Normality’ or ‘mean reverting’ model,Footnote 20 and the symbols are as in Sect. 2, the latter takes the form

with

where a is the unconditional mean of \(\mathbf{\alpha }_{t + 1}\), \(\mathbf{\Phi }\) the l × l stationary transition matrix and \(\mathbf{\varepsilon }_{t + 1}\) a Gaussian iid vector process with mean zero and an identity covariance matrix. Matrix \({\mathbf{A}}_{1}\) is such that \({\mathbf{A}}_{1} {\mathbf{y}}_{t} + {\mathbf{Ca}}\) in (16) is equal to \({\mathbf{Ay}}_{t}\) in (2).Footnote 21 In this case, a decision maker insensitive to robustness but wanting to control a system with a time-varying intercept can find the set of controls \({\mathbf{u}}_{t}\) which maximizesFootnote 22

subject to (16)–(18) using the approach discussed in Kendrick (1981) and Tucci (2004). When the same objective functional used by the robust regulator is optimized, \(L_{t}\) is simply the one-period loss functional in (5) times \(\beta^{t}\).
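A sketch of the system (16)–(18) that is consistent with the verbal description above, and with the substitution \(\mathbf{\nu }_{t + 1} = \mathbf{\Phi \nu }_{t} + \mathbf{\varepsilon }_{t + 1}\) used later in this section, is given below. The exact layout of the original displays is an assumption.

\[ {\mathbf{y}}_{t + 1} = {\mathbf{A}}_{1} {\mathbf{y}}_{t} + {\mathbf{Bu}}_{t} + {\mathbf{C}}\mathbf{\alpha }_{t + 1} \qquad (16) \]

\[ \mathbf{\alpha }_{t + 1} = {\mathbf{a}} + \mathbf{\nu }_{t + 1} \qquad (17) \]

\[ \mathbf{\nu }_{t + 1} = \mathbf{\Phi \nu }_{t} + \mathbf{\varepsilon }_{t + 1} \qquad (18) \]

Substituting (17) and (18) into (16) and using \({\mathbf{A}}_{1} {\mathbf{y}}_{t} + {\mathbf{Ca}} = {\mathbf{Ay}}_{t}\) gives \({\mathbf{y}}_{t + 1} = {\mathbf{Ay}}_{t} + {\mathbf{Bu}}_{t} + {\mathbf{C}}(\mathbf{\Phi \nu }_{t} + \mathbf{\varepsilon }_{t + 1} )\), which has the same form as (2) with \(\mathbf{\Phi \nu }_{t}\) playing the role of \(\mathbf{\omega }_{t + 1}\).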

This control problem can be solved treating the stochastic parameters as additional state variables. When the hyper-structural parameters a and Φ are known, the original problem is restated in terms of an augmented state vector \({\mathbf{z}}_{t}\) as: find the controls \({\mathbf{u}}_{t}\) maximizingFootnote 23

subject toFootnote 24

withFootnote 25

and the arrays z and having dimension n + l, i.e. the number of original states plus the number of stochastic parameters. For this ‘augmented’ control problem the L’s in Eq. (20) are defined as

with \({\mathbf{Q}}_{t}^{*} = \beta^{t} {\mathbf{Q}}^{*}\), \({\mathbf{Q}}^{*} = diag\)(\({\mathbf{Q}}, \, - \beta \theta {\mathbf{I}}_{l}\)), \({\mathbf{W}}_{t}^{*} = \beta^{t} [\begin{array}{*{20}c} {{\mathbf{W^{\prime}}}} & {{\mathbf{O^{\prime}}}} \\ \end{array} ]^{\prime}\) and \({\mathbf{R}}_{t}^{{}} = \beta^{t} {\mathbf{R}}\).

By replacing \({\mathbf{A}}_{1} {\mathbf{y}}_{t} + {\mathbf{C}}\mathbf{\alpha }_{t + 1}\) with \({\mathbf{Ay}}_{t} + {\mathbf{C}}\mathbf{\nu }_{t + 1}\) in (22), defining \(\mathbf{\nu }_{t + 1} = \mathbf{\omega }_{t + 1}^{c} + \mathbf{\varepsilon }_{t + 1}\) with \(\mathbf{\omega }_{t + 1}^{c} \equiv \mathbf{\Phi \nu }_{t}\) and using the deterministic counterpart to (20)–(23),Footnote 26 namely

with

the optimal value of (20) can be written as \(- {\mathbf{z^{\prime}}}_{t} {\mathbf{K}}_{t} {\mathbf{z}}_{t} - 2{\mathbf{z^{\prime}}}_{t} {\mathbf{k}}_{t}\) and it satisfies the Bellman equationFootnote 27

with \({\mathbf{K}}_{t + 1} = \beta {\mathbf{K}}_{t}\). Expressing the right-hand side of (26) only in terms of \({\mathbf{z}}_{t}\) and \({\mathbf{u}}_{t}\) and maximizing it yields the optimal control in the presence of time-varying intercept (or tvp-control), i.e.Footnote 28

with \({\mathbf{K}}_{11}\) and \({\mathbf{K}}_{12}\) denoting the n × n North-West block and the n × l North-East block, respectively, of the Riccati matrixFootnote 29

and \({\mathbf{k}}_{1,t}\), containing the first n elements of \({\mathbf{k}}_{t}\), defined as

Then the optimal control (28) is independent of the parameter θ which enters only the l × l South-East block of K, namely \({\mathbf{K}}_{22,t}\).

When \(\mathbf{\omega }_{t + 1}^{c} \equiv \mathbf{\omega }_{t + 1}\), i.e. the same shock is used to determine both robust control and tvp-control, the latter is

with

The quantity \({\mathbf{K}}_{11,t + 1}^{ + }\) collapses to the ‘robust’ Riccati matrix \({\mathbf{P}}_{t + 1}^{*}\) only when \({\mathbf{P}}_{t + 1} = {\mathbf{K}}_{11,t + 1}\) and Φ is a null matrix, because the array \({\mathbf{K}}_{12,t + 1}\) is generally different from zero. This means that the control applied by the decision maker who wants to be “robust to misspecifications of the approximating model” implicitly assumes that the ω’s in (2) are serially uncorrelated. Alternatively put, given arbitrary desired paths for the states and controls, robust control is “robust” only when today’s malevolent shock is linearly uncorrelated with tomorrow’s malevolent shock.

Before leaving this section it is worth emphasizing two things. First of all, the results in (30)–(31) do not imply that robust control is implicitly based on a very specialized type of time-varying parameter model or that one of the two approaches is better than the other. Robust control and tvp-control represent two alternative ways of dealing with the problem of not knowing the true model ‘we’ want to control and are generally characterized by different solutions. In general, when the same objective functional and terminal conditions are used, the main difference is due to the fact that the former is determined assuming for \(\mathbf{\omega }_{t + 1}\) the worst-case value, whereas the latter is computed using the expected conditional mean of \(\mathbf{\nu }_{t + 1}\) and taking into account its relationship with next period’s conditional mean. As a side effect, even the Riccati matrices common to the two procedures, named P and p in the robust control case and \({\mathbf{K}}_{11}\) and \({\mathbf{k}}_{11}\) in the tvp-case, are different. Second, the use of identical Riccati matrices and of an identical shock in the two alternative approaches in this section, i.e. setting \({\mathbf{K}}_{11,t + 1} \equiv {\mathbf{P}}_{t + 1}\), \({\mathbf{k}}_{11,t + 1} \equiv {\mathbf{p}}_{t + 1}\) and \(\mathbf{\omega }_{t + 1}^{c} \equiv \mathbf{\omega }_{t + 1}\) or \(\mathbf{\omega }_{t + 1}^{c} \equiv [\begin{array}{*{20}c} {\mathbf{\omega^{\prime}}_{1,t + 1} } & {\mathbf{\omega^{\prime}}_{2,t + 1} } \\ \end{array} ]^{\prime}\), has the sole purpose of investigating some of the implicit assumptions of these procedures.

4 Some Numerical Results

In this section some numerical results are presented. The classical MacRae (1972) problem with one state, one control and two periods, extensively used in the control literature, has been ‘robustized’ in Tucci (2006) to compare robust control with tvp-control. The robust version of this problem may be restated as: extremize, i.e. maximize with respect to \(u_{t}\) and minimize with respect to \(\omega_{t + 1}\), the objective functional

subject to

with \(y_{t}\) and \(u_{t}\) the state and control variable, respectively, \(q_{t}\) and \(r_{t}\) the penalty weight on the state and control variable and their desired path, respectively, \(w_{t}\) the cross penalty, θ the robustness parameter, β the discount factor, \(\varepsilon_{t + 1}\) an iid random variable with mean zero and variance 1, \(\omega_{t + 1}\) the misspecification process and a, b and c the system parameters. The system parameters are assumed perfectly known and in addition \(\varepsilon_{t + 1} = 0\), \(q_{t} = \beta^{t} q_{0}\), \(r_{t} = \beta^{t} r_{0}\), \(w_{t} = \beta^{t} w_{0}\), \(\beta = 0.99\) and \(\theta = 100\).

When the parameters areFootnote 30

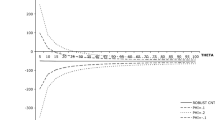

the control selected by the controller interpreting \({\text{c}}\omega_{t + 1}\) as a time-varying intercept following a ‘return to normality’ or ‘mean reverting’ model coincides with robust control, \(u_{0} = 0.10839\), when the transition parameter \(\phi\) in (18) is equal to 0. Otherwise the former is higher (lower) than the latter when the malevolent shocks are assumed positively (negatively) correlated. For instance, for \(\phi = 0.1\) tvp-control increases to 0.11245. This is shown in Fig. 1 where the solid line refers to tvp-control and the dashed line to robust control. The intuition behind this result is that, by knowing (or fearing) that today’s malevolent shock is going to make tomorrow’s shock worse, the controller acts proactively to offset some of the negative effects that will materialize tomorrow. Therefore, as an anonymous referee put it, “a positive transition parameter produces a more aggressive interest rate response from the monetary authority and a less aggressive response when it is negative”.

The expected costs associated with these controls, given the malevolent shocks determined by standard robust control theory (i.e. those corresponding to robust controls), are shown in Fig. 2. In this case nature ‘doesn’t care’ about the actual control applied by the regulator in the sense that the malevolent shocks are insensitive to the \(\phi\) used by the regulator assuming a time-varying intercept.Footnote 31 It is apparent that the tvp-control derived assuming \(\phi = 0\), i.e. standard robust control, is associated with the maximum of the objective functional.

When the usual malevolent shock generated by the standard robust control framework is contrasted with a hypothetically correlated malevolent control defined asFootnote 32

with \(\left| \rho \right| < 1\) and the shocks \(\mathbf{\omega }_{t}\) and \(\mathbf{\omega }_{t + 1}\) as in (12), some interesting results emerge. As reported in Fig. 3 the objective functional associated with the hypothetically correlated malevolent control reaches its minimum for \(\rho = 0\) at a cost of −0.9065.
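The construction of the hypothetically correlated malevolent control can be sketched as follows, using the recursion stated in the Notes for longer horizons, \(\omega_{t + 1}^{H} = \rho \omega_{t}^{H} + \omega_{t + 1}\) with \(\omega_{1}^{H} \equiv \omega_{1}\). The function name is hypothetical, and the sketch only builds the shock sequence; it does not evaluate the objective functional.

```python
def correlated_shocks(omega, rho):
    """Build omega^H from the robust-control shocks omega for a given rho.

    omega : list of worst-case shocks (omega_1, omega_2, ...)
    rho   : correlation parameter, required to satisfy |rho| < 1
    """
    if abs(rho) >= 1.0:
        raise ValueError("rho must satisfy |rho| < 1")
    result = []
    previous = 0.0
    for index, shock in enumerate(omega):
        # The first element is the usual first-period robust shock
        current = shock if index == 0 else rho * previous + shock
        result.append(current)
        previous = current
    return result
```

With the two shocks reported in the Notes for the ‘robustized’ MacRae problem, `correlated_shocks([0.02974, 0.01279], 0.0)` returns the original sequence, so that \(\rho = 0\) corresponds to the standard robust control case, while a nonzero `rho` shifts the second-period shock by `rho` times the first.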

Therefore both players optimize their objective functional by treating today’s shock (either malevolent or not) as linearly uncorrelated to tomorrow’s shock. This means that, by construction, the most common robust control framework implies that the game at time t is linearly uncorrelated with the game at time t + 1.Footnote 33

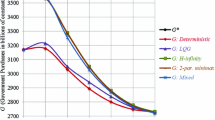

These results are not confined to the simple ‘robustized’ version of the classical MacRae (1972) problem. Indeed, exploiting Tucci’s (2021) results, they apply when unstructured uncertainty à la Hansen and Sargent is used, both in the case of a “probabilistically sophisticated” and a non-“probabilistically sophisticated” decision maker, or when uncertainty is related to unknown structural parameters of the model. For instance, when the permanent income model is used, with the parameter estimates in Hansen et al. (2002),Footnote 34 both tvp-control and robust control are equal to −51.1 when \(\phi = 0\) but tvp-control is −57.8 when \(\phi = -0.1\), −64.5 when \(\phi = -0.2\) and so on, as reported in Fig. 4.Footnote 35

Again, the value of the objective functional associated with tvp-control is exactly the same as that for robust control, i.e. 11,434, when the former uses the transition parameter \(\phi = 0\) in (18) and decreases to 10,874 for \(\phi = - .1\), 9193 for \(\phi = - .2\) and so on (Fig. 5).

As in the ‘robustized’ MacRae problem, the hypothetically correlated malevolent control attains its minimum when \(\rho = 0\) at a cost of 11,434 (Fig. 6).

Even in this problem, widely used in robust control literature, both players (the controller and malevolent nature) optimize their objective functional by treating today’s shock (either malevolent or not) as linearly uncorrelated to tomorrow’s shock.

5 Conclusion

Tucci (2006) argues that, unless some prior information is available, the true model in a robust control setting à la Hansen and Sargent is observationally equivalent to a model with a time-varying intercept. Then he shows that, when the same “malevolent shock” is used in both procedures, the robust control for a linear system with an objective functional having desired paths for the states and controls set to zero applied by a “probabilistically sophisticated” decision maker is identical to the optimal control for a linear system with an intercept following a ‘Return to Normality’ model and the same objective functional only when the transition matrix in the law of motion of the parameters is zero.

By explicitly taking into account the welfare loss associated with the various controls, this paper shows for the first time, as far as the author is aware, that the application of the usual robust control framework in discrete time problems implies that both players (the controller and malevolent nature) optimize their objective functional by treating today’s shock (either malevolent or not) as linearly uncorrelated to tomorrow’s shock. Therefore, by construction, the most common robust control framework implies that the game at time t is linearly uncorrelated with the game at time t + 1. The framework is then of no use in handling situations characterized by correlated malevolent shocks. Were the Fukushima tsunami and the following nuclear disaster uncorrelated? What about the Great Recession of 2008 and the European sovereign debt crisis? Is the worst case shock for 2023 linearly independent of the malevolent shock in 2022? The conclusion of this paper holds not only when unstructured uncertainty à la Hansen and Sargent is used in the case of a “probabilistically sophisticated” decision maker but also, as shown in Tucci (2021), when the decision maker is non-“probabilistically sophisticated” or uncertainty is related to unknown structural parameters of the model as in Giannoni (2002, 2007).

Notes

See, e.g., Giannoni (2002, 2007), Hansen and Sargent (2001, 2003, 2007a, 2007b, 2010, 2016), Hansen et al., (1999, 2002), Onatski and Stock (2002), Rustem (1992, 1994, 1998), Rustem and Howe (2002) and Tetlow and von zur Muehlen (2001a, 2001b). However the use of the minimax approach in control theory goes back to the 60’s as pointed out in Basar and Bernhard (1991, pp. 1–4).

See also Hansen and Sargent (2007a, pp. 14–17).

By probabilistic sophistication is meant that “in comparing utility processes, all that matters are the induced distortions under the approximating model” (Hansen and Sargent, 2007a, p. 406).

See Hansen and Sargent (2007a, Ch. 2 and 7) for the complete discussion of robust control in the time domain.

Matrix C is sometimes called the “volatility matrix” because, given the assumptions on the ε’s, it “determines the covariance matrix \({\mathbf{CC^{\prime}}}\) of random shocks impinging on the system” (Hansen and Sargent, 2007a, p. 29). It is furthermore assumed, see e.g. page 140 in the same reference, that the pair \((\beta^{1/2} {\mathbf{A}},{\mathbf{B}})\) is stabilizable.

When Eq. (2) “generates the data it is as though the errors in … (1) were conditionally distributed as \({\varvec{N}}(\mathbf{\omega }_{t + 1} ,{\mathbf{I}}_{l} )\) rather than as \({\varvec{N}}({\mathbf{0}},{\mathbf{I}}_{l} )\) … (so) we capture the idea that the approximating model is misspecified by allowing the conditional mean of the shock vector in the model that actually generates the data to feedback arbitrarily on the history of the state” (Hansen and Sargent, 2007a, pp. 27).

See Hansen and Sargent (2007a, p. 11).

Alternatively the constraint robust control problem, defined as extremize \(- E_{0} {[}\Sigma_{t = 0}^{\infty } \beta^{t} r({\mathbf{y}}_{t} ,{\mathbf{u}}_{t} ){]}\) subject to (2), (3), can be solved. When \(\eta_{0}\) and \(\theta\) are appropriately related the two “games have equivalent outcomes.” See p. 32 and Chapters 6–8 in Hansen and Sargent (2007a) for details.

As noted in Hansen and Sargent (2007a, p. 40) “this lower bound is associated with the largest set of alternative models, as measured by entropy, against which it is feasible to seek a robust rule … This cutoff value of \(\theta\)… is affiliated with a rule that is robust to the biggest allowable set of misspecifications.” See also Ch. 7 in the same reference and Hansen and Sargent (2001).

See Hansen and Sargent (2007a, p. 35).

Analogously, the constraint robust control problem is sometimes referred to as the constraint game.

This is due to the fact that the “Riccati equation for the optimal linear regulator emerges from first-order conditions alone, and that the first order conditions for (the max–min problem (4) subject to (2)) match those for an ordinary, i.e. non-robust, optimal linear regulator problem with joint control process {u, ω}” (Hansen and Sargent, 2007a, p. 43).

The constant terms appearing on the right-hand side and on the left-hand side of the equation have been dropped because they do not affect the solution of the optimization problem. See, e.g., Eq. (2.5.3) in Hansen and Sargent (2007a, Ch. 2).

See, e.g., Eqs. (7.C.18)–(7.C.19) in Hansen and Sargent (2007a, p. 169).

See, e.g., Eq. (7.C.9) in Hansen and Sargent (2007a, p. 168).

See, e.g., Eqs. (2.5.6) on p. 35 and (7.C.10) on p. 168 in Hansen and Sargent (2007a) where the quantity \(\beta^{ - 1} {\mathbf{P}}_{t + 1}^{*}\) is denoted by D(P).

See, e.g., Theorem 7.6.1 (assumption v) in Hansen and Sargent (2007a, p. 150). The parameter \(\theta\) is closely related to the risk-sensitivity parameter, say \(\sigma\), appearing in intertemporal preferences obtained recursively. Namely, it can be interpreted as minus the inverse of σ. See, e.g., Hansen and Sargent (2007a, pp. 40–41, 45 and 225), Hansen et al. (1999) and the references therein cited.

The first order conditions for problem (10) subject to (8) imply the Riccati equations.

\({\mathbf{P}}_{t} = {\mathbf{Q}}_{t} + {\mathbf{A^{\prime}P}}_{t + 1}^{*} {\mathbf{A}} - \left( {{\mathbf{A^{\prime}P}}_{t + 1}^{*} {\mathbf{B}} + {\mathbf{W}}_{t} } \right)\left( {{\mathbf{R}}_{t} + {\mathbf{B}}^{\prime} {\mathbf{P}}_{t + 1}^{*} {\mathbf{B}}} \right)^{ - 1} \left( {{\mathbf{A^{\prime}P}}_{t + 1}^{*} {\mathbf{B}} + {\mathbf{W}}_{t} } \right)^{\prime }\)

\({\mathbf{p}}_{t} = {\mathbf{q}}_{t} + {\mathbf{A^{\prime}p}}_{t + 1}^{*} - \left( {{\mathbf{A^{\prime}P}}_{t + 1}^{*} {\mathbf{B}} + {\mathbf{W}}_{t} } \right)\left( {{\mathbf{B^{\prime}P}}_{t + 1}^{*} {\mathbf{B}} + {\mathbf{R}}_{t} } \right)^{ - 1} \left( {{\mathbf{B^{\prime}p}}_{t + 1}^{*} + {\mathbf{r}}_{t} } \right)\)

(Hansen and Sargent, 2007a, Sect. 2.7).

See, e.g., Harvey (1981).

When a is a null vector, \({\mathbf{A}}_{1}\) ≡ A. If a is not zero, \({\mathbf{A}}_{1}\) is identical to A except for a column of 0’s associated with the intercept and Ca is identical to the column of A associated with the intercept. It is apparent that, when \(\mathbf{\omega }_{t + 1} \equiv \mathbf{\Phi \nu }_{t}\), the robust control formulation and model (16)–(18) coincide.

When the error term is assumed iid it is equivalent to write the system equations as in (3.5) or as in Tucci (2004, Ch. 2).

As in the previous sections, the constant terms appearing on the right-hand side and on the left-hand side of the Bellman equation have been dropped because they do not affect the solution of the optimization problem.

See Tucci (2004, pp. 26–27). It should be stressed that \({\mathbf{K}}_{12,t} = ({\mathbf{A}}^{\prime } {\mathbf{K}}_{11,t + 1} {\mathbf{C}} + {\mathbf{A}}^{\prime } {\mathbf{K}}_{12,t + 1} \mathbf{\Phi }) - ({\mathbf{B}}^{\prime } {\mathbf{K}}_{11,t + 1} {\mathbf{A}}\) \(+ {\mathbf{W^{\prime}}}_{t} )^{\prime} ({\mathbf{R}}_{t} + {\mathbf{B}}^{\prime} {\mathbf{K}}_{11,t + 1} {\mathbf{B}})^{ - 1} {\mathbf{B}}^{\prime} ({\mathbf{K}}_{11,t + 1} {\mathbf{C}} + {\mathbf{K}}_{12,t + 1} \mathbf{\Phi }),\) then even when the terminal condition for \({\mathbf{K}}_{12}\) is a null matrix, this array will not vanish as long as \({\mathbf{K}}_{11}\) is non-zero. Only the last control, i.e. that applied at the ‘final period minus 1’ of the planning horizon, will be independent of the transition matrix characterizing the time-varying intercept.

This is the parameter set originally used in MacRae (1972).

These are the malevolent shocks associated with the robust control, i.e. \(\omega_{1} = 0.02974\) and \(\omega_{2} = 0.01279\).

In a problem with a longer time horizon this malevolent control may be defined as \(\mathbf{\omega }_{t + 1}^{H} = \rho \mathbf{\omega }_{t}^{H} + \mathbf{\omega }_{t + 1}^{{}}\) with \(\mathbf{\omega }_{t + 1}^{{}}\) as in (2.12) and \(\mathbf{\omega }_{1}^{H} \equiv \mathbf{\omega }_{1}^{{}} ,\) i.e. the usual first period robust control.

It is understood that t does not necessarily stand for a calendar year. It may indicate a U.S. administration or a central banker's term.

See Tucci (2021) for details.

References

Basar, T., & Bernhard, P. (1991). H∞-optimal control and related minimax design problems: A dynamic game approach. Birkhäuser.

Bernhard, P. (2002). Survey of linear quadratic robust control. Macroeconomic Dynamics, 6(1), 19–39.

Giannoni, M. P. (2002). Does model uncertainty justify caution? Robust optimal monetary policy in a forward-looking model. Macroeconomic Dynamics, 6(1), 111–144.

Giannoni, M. P. (2007). Robust optimal policy in a forward-looking model with parameter and shock uncertainty. Journal of Applied Econometrics, 22(1), 179–213.

Hansen, L. P., & Sargent, T. J. (2016). Sets of models and prices of uncertainty. NBER Working Papers 22000. National Bureau of Economic Research, Inc.

Hansen, L. P., & Sargent, T. J. (2001). Acknowledging misspecification in macroeconomic theory. Review of Economic Dynamics, 4(3), 519–535.

Hansen, L. P., & Sargent, T. J. (2010). Wanting robustness in macroeconomics. In B. M. Friedman & M. Woodford (Eds.), Handbook of monetary economics (Vol. 3B, Ch. 20, pp. 1097–1157). North-Holland.

Hansen, L. P., & Sargent, T. J. (2003). Robust control of forward-looking models. Journal of Monetary Economics, 50(3), 581–604.

Hansen, L. P., & Sargent, T. J. (2007a). Robustness. Princeton University Press.

Hansen, L. P., & Sargent, T. J. (2007b). Recursive robust estimation and control without commitment. Journal of Economic Theory, 136(1), 1–27.

Hansen, L. P., & Sargent, T. J. (2011). Robustness and ambiguity in continuous time. Journal of Economic Theory, 146(1), 1195–1223.

Hansen, L. P., Sargent, T. J., & Tallarini, T. D. (1999). Robust permanent income and pricing. Review of Economic Studies, 66(4), 873–907.

Hansen, L. P., Sargent, T. J., & Wang, N. E. (2002). Robust permanent income and pricing with filtering. Macroeconomic Dynamics, 6(1), 40–84.

Harvey, A. C. (1981). Time series models. Philip Allan.

Kendrick, D. A. (1981). Stochastic control for economic models. McGraw-Hill.

MacRae, E. C. (1972). Linear decision with experimentation. Annals of Economic and Social Measurement, 1(4), 437–447.

Onatski, A., & Stock, J. H. (2002). Robust monetary policy under model uncertainty in a small model of the U.S. economy. Macroeconomic Dynamics, 6(1), 85–110.

Rustem, B. (1992). A constrained min-max algorithm for rival models of the same economic system. Mathematical Programming, 53(1–3), 279–295.

Rustem, B. (1994). Robust min-max decisions for rival models. In D. A. Belsley (Ed.), Computational techniques for econometrics and economic analysis (pp. 109–136). Kluwer Academic Publishers.

Rustem, B. (1998). Algorithms for nonlinear programming and multiple objective decisions. Wiley.

Rustem, B., & Howe, M. A. (2002). Algorithms for worst-case design with applications to risk management. Princeton University Press.

Sims, C. A. (2001). Pitfalls of a Minimax approach to model uncertainty. American Economic Review, 91(2), 51–54.

Tetlow, R., & von zur Muehlen, P. (2001). Simplicity versus optimality: The choice of monetary policy rules when agents must learn. Journal of Economic Dynamics and Control, 25(1–2), 245–279.

Tetlow, R., & von zur Muehlen, P. (2001). Robust monetary policy with misspecified models: Does model uncertainty always call for attenuated policy? Journal of Economic Dynamics and Control, 25(6–7), 911–949.

Tucci, M. P. (2004). The rational expectation hypothesis, time-varying parameters and adaptive control: A promising combination? Springer.

Tucci, M. P. (2006). Understanding the difference between robust control and optimal control in a linear discrete-time system with time-varying parameters. Computational Economics, 27(4), 533–558.

Tucci, M. P. (2021). How robust is robust control in discrete time? Computational Economics, 58(2), 279–309.

Acknowledgements

The author would like to thank two anonymous referees for carefully reading and commenting on an earlier draft of this paper.

Funding

Open access funding provided by Università degli Studi di Siena within the CRUI-CARE Agreement. The author declares that no funds, grants, or other support were received during the preparation of this manuscript.

Ethics declarations

Conflict of interest

The author has no relevant financial or non-financial interests to disclose.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Tucci, M.P. A Critical Introduction to the Usual Robust Control Framework in Macroeconomics. Comput Econ (2023). https://doi.org/10.1007/s10614-023-10454-8
