Abstract

By applying robust control, the decision maker wants to make good decisions when his model is only a good approximation of the true one. Such decisions are said to be robust to model misspecification. This paper shows that, in many situations relevant in economics, a decision maker applying robust control implicitly assumes that today’s worst-case adverse shock is serially uncorrelated with tomorrow’s worst-case adverse shock. Further investigation is therefore needed to determine how strong the ‘immunization against uncertainty’ provided by these popular frameworks is.

1 Introduction

A characteristic “feature of most robust control theory”, observes Bernhard (2002, p. 19), “is that the a priori information on the unknown model errors (or signals) is nonprobabilistic in nature, but rather is in terms of sets of possible realizations. Typically, though not always, the errors are bounded in some way…. As a consequence, robust control aims at synthesizing control mechanisms that control in a satisfactory fashion (e.g., stabilize, or bound, an output) a family of models”.Footnote 1 Then “standard control theory tells a decision maker how to make optimal decisions when his model is correct (whereas) robust control theory tells him how to make good decisions when his model approximates a correct one” (Hansen and Sargent 2007a, p. 25). In other words, by applying robust control the decision maker makes good decisions when it is statistically difficult to distinguish between his approximating model and the correct one using a time series of moderate size. “Such decisions are said to be robust to misspecification of the approximating model” (Hansen and Sargent 2007a, p. 27).Footnote 2

Tucci (2006, p. 538) argues that “the true model in Hansen and Sargent (2007a) … is observationally equivalent to a model with a time-varying intercept,” in the sense that, unless some prior information is available, it is impossible to distinguish between the two models by simply observing the output. He then shows that, when the same worst-case adverse shock and objective functional are used in both procedures, robust control is identical to the optimal control associated with time-varying parameters, or TVP-control, only when the transition matrix in the law of motion of the parameters is zero. He concludes that this decision maker implicitly assumes that today’s worst-case adverse shock is serially uncorrelated with tomorrow’s worst-case adverse shock.

This is a relevant conclusion because it applies to a robust control setup widely used in economics. Moreover, as commonly understood, the robust control choice accounts for all possible kinds of persistence of worst-case shocks, which may take a very general form. It is therefore not immediately obvious why these shocks look linearly independent when the decision maker cares only about the induced distributions under the approximating model and is indifferent between utility processes with identical induced distributions, namely, when He/She is assumed to have preferences defined by a single constraint, or penalty, on the adverse shocks.

At this stage, however, it is unclear whether this result holds when a more general framework is considered, for instance, when the decision maker has a different constraint for each type of adverse shock. This may be the case when He/She is looking for decisions robust to perturbations in a situation where parts of the state vector are unobservable. Two types of statistical perturbations are then considered: one that distorts the adopted model conditional on knowledge of the hidden state, and one that distorts the distribution of the hidden state. This decision maker is sometimes referred to as the non-“probabilistically sophisticated” decision maker and is contrasted with the “probabilistically sophisticated” decision maker described above, who considers only one kind of perturbation.Footnote 3 Alternatively, robust control may be applied to situations where the decision maker wants to be “immunized against uncertainty” related to unknown structural parameters, as in Giannoni (2002, 2007).Footnote 4

The goal of this paper is to extend the comparison between TVP-control and robust control to a much larger class of discrete-time models.Footnote 5 Two cases are considered. The first is that of a non-“probabilistically sophisticated” decision maker who wants to make decisions robust with respect to unstructured uncertainty à la Hansen and Sargent, i.e. a nonparametric set of additive mean-distorting model perturbations. The second is the class of models where uncertainty is related to unknown structural parameters. This is a necessary step to determine whether Tucci’s (2006) result holds only in the simplest case or is valid for a much larger class of models. In the former case it may be treated as an interesting special case of limited, or no, practical relevance, because it does not affect the most commonly used robust control frameworks. In the latter, it is a clear indication that further investigation, outside the scope of the present work, is needed to see how large the uncertainty set associated with these frameworks is; in other words, to assess how strong the ‘immunization against uncertainty’ provided by the discrete-time linear-quadratic robust control setup widely used in economics really is.Footnote 6

The remainder of the paper is organized as follows. Section 2 reviews the simplest robust control problem with unstructured uncertainty à la Hansen and Sargent. An example of a non-“probabilistically sophisticated” decision maker is discussed in Sect. 3. In Sect. 4 both problems are reformulated as linear quadratic tracking control problems where the system equations have a time-varying intercept following a mean reverting, or ‘Return to Normality’, model, and the associated TVP-controls are derived. The optimizing model for monetary policy used in Giannoni (2002, 2007) is then presented in Sect. 5. Section 6 reports some numerical results and Sect. 7 summarizes the main conclusions. For the reader’s convenience, the major result of each section is stated as a proposition and its proof is confined to the “Appendix”.

2 Robust Control à la Hansen and Sargent: The Standard Case

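Hansen and Sargent (2007a, p. 140) consider a decision maker “who has a unique explicitly specified approximating model but concedes that the data might actually be generated by an unknown member of a set of models that surround the approximating model”.Footnote 7 Then the linear system

\[ {\mathbf{y}}_{t + 1} = {\mathbf{A}}{\mathbf{y}}_{t} + {\mathbf{B}}{\mathbf{u}}_{t} + {\mathbf{C}}{\varvec{\upvarepsilon }}_{t + 1} \qquad (1) \]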

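with yt the n × 1 vector of state variables at time t, ut the m × 1 vector of control variables and εt+1 an l × 1 independent and identically distributed (iid) Gaussian vector process with mean zero and an identity contemporaneous covariance matrix, is viewed as an approximation to the true unknown model

\[ {\mathbf{y}}_{t + 1} = {\mathbf{A}}{\mathbf{y}}_{t} + {\mathbf{B}}{\mathbf{u}}_{t} + {\mathbf{C}}({\varvec{\upvarepsilon }}_{t + 1} + {\varvec{\upomega }}_{t + 1} ) \qquad (2) \]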

The matrices of coefficients A, B and C are assumed known and y0 given.Footnote 8

In Eq. (2) the vector ωt+1 denotes an unknown l × 1 process, which can feed back in a possibly nonlinear way on the history of y, and is introduced because the iid random process εt+1 can represent only a very limited class of approximation errors. In particular, it cannot depict the kind of misspecified dynamics characterizing models with nonlinear and time-dependent feedback of yt+1 on past states.Footnote 9 To express the idea that (1) is a good approximation of (2), the ω’s are restricted by

\[ E_{0} \left[ {\sum\nolimits_{t = 0}^{\infty } {\beta^{t + 1} {\varvec{\upomega}}^{\prime}_{t + 1} {\varvec{\upomega }}_{t + 1} } } \right] \le \eta_{0} \qquad (3) \]

where E0 denotes mathematical expectation evaluated with respect to model (2) and conditioned on y0, and η0 measures the set of models surrounding the approximating model.Footnote 10

A decision maker looking for good decisions over the set of models (2) satisfying (3) is in effect solving either a constraint problem or a multiplier problem. The constraint robust control problem is defined asFootnote 11

with r(yt, ut) the one-period loss function, subject to (2)–(3) where η* > η0 and η* measures the largest feasible set of perturbations. The multiplier robust control problem is formalized as

subject to (2) with θ, 0 < θ* < θ ≤ ∞, a penalty parameter restraining the minimizing choice of the {ωt+1} sequence. The “breakdown point” θ* represents a lower bound on θ needed to keep the objective of the two-person zero-sum game convex in ωt+1 and concave in ut.Footnote 12 Both problems can be reinterpreted as two-player zero-sum games in which one player is the decision maker, who maximizes the objective functional by choosing the sequence for u, and the other player is a malevolent nature, which chooses a feedback rule for a model-misspecification process ω to minimize the same criterion function.Footnote 13 For this reason, the constraint and the multiplier robust control problems are also referred to as the constraint and multiplier games, respectively.

Hansen and Sargent (2007a, p. 139) notice that, if the parameters η0 and θ are appropriately related, the two “games have equivalent outcomes”, in the sense that if there exists a solution u*, ω* to the multiplier robust control problem, that u* also solves the constraint robust control problem with \(\eta_{0} = \eta_{0}^{*} =E_{0} [\sum\nolimits_{t = 0}^{\infty } {\beta^{t + 1} {\varvec{\upomega }}_{t + 1}^{{*{\prime }}} {\varvec{\upomega }}_{t + 1}^{*} } ]\).Footnote 14 In Appendix C of Ch. 7 they then provide two sets of formulae for computing the robust decision rule and point out that the Riccati equation for the robust control problem (5) looks like the Riccati equation for an ordinary optimal linear regulator problem (also known as the linear quadratic control problem) with controls \(({\mathbf{u^{\prime}}}_{t} \, {\varvec{\upomega}}^{\prime}_{t + 1} )^{\prime}\) and penalty matrix defined as \(diag({\mathbf{R}}, - \beta \theta {\mathbf{I}}_{l} )\).Footnote 15
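The equivalence between the robust Riccati step and an ordinary regulator step with stacked controls can be checked numerically. What follows is a minimal sketch under simplifying assumptions that are ours, not the paper's: no tracking targets, W = O, and a continuation matrix P that already includes discounting. The loss convention is that u minimizes and ω maximizes \(y'Qy + u'Ru - \beta\theta\omega'\omega + y'_{t+1}Py_{t+1}\), which is equivalent to u maximizing and ω minimizing its negative. The sketch computes one backward step with controls \((u', \omega')'\) and penalty \(diag(R, -\beta\theta I_l)\), and compares it with the two-stage step in which nature's worst-case ω is chosen first. The function names and numbers are hypothetical.

```python
import numpy as np

def augmented_regulator_step(P, Q, R, A, B, C, beta, theta):
    """One Riccati step of an ordinary regulator with controls (u', omega')'
    and penalty matrix diag(R, -beta*theta*I_l)."""
    m, l = B.shape[1], C.shape[1]
    B_aug = np.hstack([B, C])                       # input matrix for the stacked controls
    R_aug = np.block([[R, np.zeros((m, l))],
                      [np.zeros((l, m)), -beta * theta * np.eye(l)]])
    M = R_aug + B_aug.T @ P @ B_aug                 # Hessian in the stacked controls
    N = B_aug.T @ P @ A
    return Q + A.T @ P @ A - N.T @ np.linalg.solve(M, N)

def two_stage_step(P, Q, R, A, B, C, beta, theta):
    """Same step solved sequentially: nature maximizes over omega first,
    then the decision maker minimizes over u."""
    l = C.shape[1]
    S = beta * theta * np.eye(l) - C.T @ P @ C      # assumed positive definite (admissible theta)
    P_star = P + P @ C @ np.linalg.solve(S, C.T @ P)
    N = B.T @ P_star @ A
    return Q + A.T @ P_star @ A - N.T @ np.linalg.solve(R + B.T @ P_star @ B, N)

# Illustrative random system (arbitrary values, not taken from the paper)
rng = np.random.default_rng(0)
n, m, l = 3, 1, 2
A = 0.5 * rng.standard_normal((n, n))
B = rng.standard_normal((n, m))
C = 0.3 * rng.standard_normal((n, l))
Q, R, P = np.eye(n), np.eye(m), np.eye(n)
beta, theta = 0.95, 5.0

P_aug = augmented_regulator_step(P, Q, R, A, B, C, beta, theta)
P_two = two_stage_step(P, Q, R, A, B, C, beta, theta)
print(np.allclose(P_aug, P_two))
```

The two matrices agree because the joint stationary point of a quadratic that is convex in u and concave in ω coincides with the nested min-max solution. This requires \(\beta\theta I_l - C'PC\) to be positive definite.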

Therefore the robust rules for ut and the worst-case shock ωt+1 can be directly computed from the associated ordinary linear regulator problem. In particular, when the one-period loss function r(yt, ut) is specified asFootnote 16

with Q a positive semi-definite matrix, R a positive definite matrix, W an n × m array, \({\mathbf{y}}_{t}^{d}\) and \({\mathbf{u}}_{t}^{d}\) the desired values of the states and controls, respectively, for period t, the robust control rule is derived by extremizing, i.e. maximizing with respect to ut and minimizing with respect to ωt+1, the objective functionFootnote 17

with

subject to

whereFootnote 18

and \({\tilde{\mathbf{W}}} = [\begin{array}{*{20}c} {\mathbf{W}} & {\mathbf{O}} \\ \end{array} ]\) with O and 0 null arrays of appropriate dimension.

At this point the following result can be stated:

Proposition 1

Extremizing the objective function (7) subject to (9), with definitions as in (8) and (10), yields the θ-constrained worst-case control for the decision makerFootnote 19

and for the malevolent natureFootnote 20

withFootnote 21

where the “robust” Riccati arrays \({\mathbf{P}}_{t + 1}^{*}\) and \({\mathbf{p}}_{t + 1}^{*}\) are always greater than or equal to Pt+1 and pt+1, respectively, because it is assumed that, in the “admissible” region, the parameter θ is large enough to make \((\beta \theta {\mathbf{I}}_{l} - \, {\mathbf{C}}^{\prime} {\mathbf{P}}_{t + 1} {\mathbf{C}} )\) positive definite.Footnote 22 They are equal when θ = ∞.Footnote 23
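The ordering \(P^{*}_{t+1} \ge P_{t+1}\) can be illustrated with a short sketch. It assumes that the robust array has the form \(P^{*} = P + PC(\beta\theta I_l - C'PC)^{-1}C'P\). This is analogous to Hansen and Sargent's D operator, with βθ written in place of θ to match the admissibility condition above. The exact expression in Proposition 1 may differ in its discounting conventions. Under this assumption, \(P^{*} - P\) is positive semidefinite whenever \(\beta\theta I_l - C'PC\) is positive definite, grows as θ falls, and tends to P as θ → ∞. For a fixed P, admissibility holds exactly when θ exceeds \(\lambda_{max}(C'PC)/\beta\). This one-step bound is only a stand-in for the breakdown point θ*, which in the full problem depends on the whole Riccati recursion. Function names and numbers are hypothetical.

```python
import numpy as np

def robust_riccati_update(P, C, beta, theta):
    """Return P* = P + P C (beta*theta*I - C'PC)^{-1} C'P for an admissible theta."""
    l = C.shape[1]
    S = beta * theta * np.eye(l) - C.T @ P @ C
    if np.min(np.linalg.eigvalsh(S)) <= 0:
        raise ValueError("beta*theta*I - C'PC is not positive definite")
    return P + P @ C @ np.linalg.solve(S, C.T @ P)

def one_step_theta_bound(P, C, beta):
    """Infimum of the thetas for which beta*theta*I - C'PC is positive definite."""
    return np.max(np.linalg.eigvalsh(C.T @ P @ C)) / beta

P = np.array([[2.0, 0.5],
              [0.5, 1.0]])
C = np.array([[1.0],
              [0.5]])
beta = 0.95

P_star_10 = robust_riccati_update(P, C, beta, theta=10.0)
P_star_5 = robust_riccati_update(P, C, beta, theta=5.0)
P_star_big = robust_riccati_update(P, C, beta, theta=1e12)

print(one_step_theta_bound(P, C, beta))                          # here C'PC = 2.75
print(np.linalg.eigvalsh(P_star_10 - P).min() >= -1e-12)         # P* >= P
print(np.linalg.eigvalsh(P_star_5 - P_star_10).min() >= -1e-12)  # smaller theta, larger P*
print(np.allclose(P_star_big, P))                                # theta -> infinity
```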

3 Robust Filtering Without Commitment

The previous section considered the case where the decision maker is “probabilistically sophisticated”, in the sense that He/She is indifferent between utility processes with identical induced distributions. However, robust control can also be applied to situations with multiple penalty functions (i.e. more than one θ), in other words, to cases where the decision maker is not “probabilistically sophisticated.” This is the case when the decision maker does not observe parts of the state that are useful for forecasting relevant variables. The approximating model then includes an ordinary (i.e. non-robust) Kalman filter estimator of this hidden portion of the state. To obtain “decision rules that are robust with respect to perturbations of the conditional distributions associated with the approximating model, the decision maker imagines a malevolent agent who perturbs the distribution of future states conditional on the entire state as well as the distribution of the hidden state conditional on the history of signals” (Hansen and Sargent 2007a, p. 383). This is sometimes referred to as the “robust filtering without commitment” problem.

The law of motion for the states in the approximating model is

with

where now the state vector is partitioned into two parts with y1 containing the n1 observed variables and y2 the n2 hidden state variables, with n1 + n2 = n, and ut and εt+1 are as in the previous section.Footnote 24 The decision maker ranks sequences of states and controls according to

with the one-period utility function U defined as

with the matrices Q, R and W as in the previous section and y1,0, the observed portion of the state vector at time 0, given.

Assuming that the decision maker believes that the distribution of the initial value of the unobserved part of the state is \({\mathbf{y}}_{2,0} \sim \varvec{N}({\mathbf{\overset{\lower0.5em\hbox{$\smash{\scriptscriptstyle\smile}$}}{y} }}_{2,0} ,{\varvec{\Delta }}_{0} )\) and taking into account that y1 is observed, the ordinary Kalman filter gives the projected value of y1,t+1 conditional on all the available information at time t, i.e. \(E\left( {{\mathbf{y}}_{1,t + 1} |I_{t} } \right)\), and the updated value of y2,t+1 conditional on all the available information at time t + 1, namely \({\mathbf{\overset{\lower0.5em\hbox{$\smash{\scriptscriptstyle\smile}$}}{y} }}_{2,t + 1} \equiv E\left( {{\mathbf{y}}_{2,t + 1} |I_{t + 1} } \right)\).Footnote 25 Under the approximating model, y2,t is distributed as \(\varvec{N}({\mathbf{\overset{\lower0.5em\hbox{$\smash{\scriptscriptstyle\smile}$}}{y} }}_{2,t} ,{\varvec{\Delta }}_{t} )\), with \({\varvec{\Delta }}_{t} = E[({\mathbf{y}}_{2,t} - {\mathbf{\overset{\lower0.5em\hbox{$\smash{\scriptscriptstyle\smile}$}}{y} }}_{2,t} )({\mathbf{y}}_{2,t} - {\mathbf{\overset{\lower0.5em\hbox{$\smash{\scriptscriptstyle\smile}$}}{y} }}_{2,t} )^{\prime}]\), and the mean and variance of the state represent sufficient statistics for the distribution of the unobserved part of the state at time t.Footnote 26 Equation (11) is then rewritten with the system equations for y2 replaced by the associated ordinary Kalman filter updating equation and the law of motion for the observed subvector expressed in terms of the updated estimate of the hidden state and the discrepancy between this value and the true one, i.e.Footnote 27

withFootnote 28

where \({\varvec{\Xi }} ({\varvec{\Delta }}_{t} )= ({\mathbf{A}}_{22} {\varvec{\Delta }}_{t} {\mathbf{A^{\prime}}}_{12} + {\mathbf{C}}_{2} {\mathbf{C^{\prime}}}_{1} )({\mathbf{A}}_{12} {\varvec{\Delta }}_{t} {\mathbf{A^{\prime}}}_{12} + {\mathbf{C}}_{1} {\mathbf{C^{\prime}}}_{1} )^{ - 1} ,{\varvec{\upvarepsilon }}_{t + 1} \sim {\varvec{N}}({\mathbf{0}},{\mathbf{I}}_{l} )\) and \({\mathbf{y}}_{2,t} \sim \varvec{N}({\mathbf{\overset{\lower0.5em\hbox{$\smash{\scriptscriptstyle\smile}$}}{y} }}_{2,t} ,{\varvec{\Delta }}_{t} )\).
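The gain \(\Xi(\Delta_t)\) can be computed directly from its definition. The sketch below also iterates the error-covariance recursion \(\Delta_{t+1} = A_{22}\Delta_tA'_{22} + C_2C'_2 - \Xi(\Delta_t)(A_{12}\Delta_tA'_{12} + C_1C'_1)\Xi(\Delta_t)'\). This is the standard Kalman filter update for this partition of the state, and we assume it here because the paper's own covariance equation is not reproduced in this section. The scalar example (one observed and one hidden variable, two independent shocks) and the function names are hypothetical.

```python
import numpy as np

def kalman_gain(Delta, A12, A22, C1, C2):
    """Xi(Delta) = (A22 Delta A12' + C2 C1')(A12 Delta A12' + C1 C1')^{-1}."""
    S = A12 @ Delta @ A12.T + C1 @ C1.T   # covariance of the surprise in y1
    G = A22 @ Delta @ A12.T + C2 @ C1.T   # covariance of the next hidden state with that surprise
    return np.linalg.solve(S, G.T).T      # G S^{-1}, using the symmetry of S

def next_Delta(Delta, A12, A22, C1, C2):
    """Assumed standard recursion for the hidden-state estimation error covariance."""
    Xi = kalman_gain(Delta, A12, A22, C1, C2)
    S = A12 @ Delta @ A12.T + C1 @ C1.T
    return A22 @ Delta @ A22.T + C2 @ C2.T - Xi @ S @ Xi.T

A12 = np.array([[1.0]])
A22 = np.array([[0.9]])
C1 = np.array([[1.0, 0.0]])   # the observed variable loads on the first shock only
C2 = np.array([[0.0, 0.5]])   # the hidden variable loads on the second shock only

Delta = np.array([[1.0]])     # Delta_0
for _ in range(200):
    Delta = next_Delta(Delta, A12, A22, C1, C2)
print(Delta)
```

With these inputs the recursion reduces to \(\Delta_{t+1} = 0.81\Delta_t/(\Delta_t + 1) + 0.25\). It settles at the positive root of \(\Delta^2 - 0.06\Delta - 0.25 = 0\), about 0.531.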

Two random vectors appear in this approximating model: εt+1 and \({\mathbf{y}}_{2,t} - {\mathbf{\overset{\lower0.5em\hbox{$\smash{\scriptscriptstyle\smile}$}}{y} }}_{2,t}\). Let ω1,t and ω2,t represent the perturbations to the distribution of εt+1 and to the distribution of the hidden state conditional on (\({\mathbf{y}}_{1,t} ,{\mathbf{\overset{\lower0.5em\hbox{$\smash{\scriptscriptstyle\smile}$}}{y} }}_{2,t}\)), respectively.Footnote 29 Then the misspecified model is written asFootnote 30

and the associated return function is

where θ1 and θ2 penalize distortions ω1,t and ω2,t, respectively.Footnote 31

When \({\mathbf{y}}_{t}^{d}\) and \({\mathbf{u}}_{t}^{d}\) denote the vectors of desired values of the states and controls, respectively, for period t, Eq. (16) can be rewritten asFootnote 32

whereFootnote 33

\({\tilde{\mathbf{W}}} = \left( {{\mathbf{W}}\quad {\mathbf{M}}^{*} } \right)\) with \({\mathbf{M}}^{*} = [{\mathbf{O}}\quad {\mathbf{Q}}_{2} ]\), O being a null matrix of dimension n × l and Q2 the matrix of dimension n × n2 obtained by deleting the first n1 columns of matrix Q in (13), \({\tilde{\mathbf{u}}}_{t}^{d} = \left( {{\mathbf{u}}_{t}^{d\prime } \, {\mathbf{0}}^{\prime } } \right)^{{\prime }}\) and 0 a null (l + n2)-dimensional vector. As stressed in Hansen and Sargent (2007a, p. 389), “assigning different values to θ… lets the decision maker to focus more or less on misspecifications of one or the other of the two distributions being perturbed.”

For the linear quadratic problem at hand, \(({\mathbf{y}}_{1} ,{\mathbf{\overset{\lower0.5em\hbox{$\smash{\scriptscriptstyle\smile}$}}{y} }}_{2} )\)-contingent distortions ω1,t and ω2,t and the associated robust rule for u can be computed by solving the deterministic, certainty equivalent, problemFootnote 34

subject to

where

and the Gaussian random vectors with mean zero have been dropped as in the previous section.Footnote 35

This result is stated as:

Proposition 2

Extremizing the objective function (18) subject to (19), with definitions as in (17), yields the (θ1, θ2)-constrained worst-case control for the decision makerFootnote 36

In this general case, where the arrays \({\mathbf{E}}_{t}^{*}\) and \({\mathbf{M}}_{t}^{*}\) are not necessarily null matrices, Proposition 2 cannot be written as Proposition 1 with

In any case the following relations

always hold because it is assumed that θ1 and θ2 are large enough to make Θt positive definite.Footnote 37 The equality signs prevail when θ1 = θ2 = ∞.

4 Optimal Control of a Linear System with Time-Varying Parameters

Tucci (2006) argues that the model used by a “probabilistically sophisticated” decision maker to represent dynamic misspecification, i.e. Eq. (2), is observationally equivalent to a model with a time-varying intercept: unless some prior information is available, it is impossible to distinguish between the two models by simply observing the output. When this intercept is restricted to follow a mean reverting, or ‘Return to Normality’,Footnote 38 model and the symbols are as in Sect. 2, Eq. (2) can be rewritten as

with

where a is the unconditional mean vector of \({\varvec{\upalpha }}_{t + 1} ,\, {\varvec{\Phi }}\) the l × l transition matrix with eigenvalues strictly less than one in absolute value to guarantee stationarity and \({\varvec{\upzeta }}_{t + 1}\) is a Gaussian iid vector process with mean zero and an identity covariance matrix. The matrix A1 is such that \({\mathbf{A}}_{1} {\mathbf{y}}_{t} + {\mathbf{Ca}}\) in (21) is equal to Ayt in (2).Footnote 39 Obviously, the robust control formulation is more general than model (21)–(22) because in (2) the vector ωt+1 can represent a very general, and possibly complicated, process.
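The role of Φ can be seen in a small simulation. The sketch assumes that (22) can be written in the standard ‘Return to Normality’ form \(\alpha_{t+1} - a = \Phi(\alpha_t - a) + \zeta_{t+1}\), with \(\zeta_{t+1} \sim N(0, I_l)\). The parameter values and function names are hypothetical. With Φ = O the deviations \(\alpha_{t+1} - a\) are iid, hence serially uncorrelated. This is the case in which, according to Tucci (2006), robust control and TVP-control coincide. With Φ ≠ O the deviations are persistent.

```python
import numpy as np

def simulate_return_to_normality(a, Phi, T, rng):
    """Simulate alpha_{t+1} = a + Phi (alpha_t - a) + zeta_{t+1}, zeta ~ N(0, I)."""
    if np.max(np.abs(np.linalg.eigvals(Phi))) >= 1.0:
        raise ValueError("Phi must have all eigenvalues strictly inside the unit circle")
    l = a.shape[0]
    alpha = np.empty((T, l))
    alpha[0] = a                      # start at the unconditional mean
    for t in range(T - 1):
        alpha[t + 1] = a + Phi @ (alpha[t] - a) + rng.standard_normal(l)
    return alpha

def lag1_autocorrelation(x):
    """Sample first-order autocorrelation of a one-dimensional series."""
    x = x - x.mean()
    return float(np.dot(x[1:], x[:-1]) / np.dot(x, x))

rng = np.random.default_rng(123)
a = np.array([2.0])
path_iid = simulate_return_to_normality(a, np.array([[0.0]]), 50_000, rng)
path_ar = simulate_return_to_normality(a, np.array([[0.8]]), 50_000, rng)

print(lag1_autocorrelation(path_iid[:, 0]))  # close to 0
print(lag1_autocorrelation(path_ar[:, 0]))   # close to 0.8
print(path_ar[:, 0].mean())                  # close to a = 2.0
```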

Then the approach discussed in Kendrick (1981) and Tucci (2004) can be used to find the set of controls ut which maximizesFootnote 40

where

subject to (21)–(22). This control problem can be solved by treating the stochastic parameters as additional state variables. If the same objective functional used in the robust control problem is optimized, the expression in square brackets is identical to the one-period loss function defined in (6).

When the hyper-structural parameters a and Φ are known, the original problem is restated in terms of an augmented state vector zt as: find the controls ut maximizingFootnote 41

subject toFootnote 42

withFootnote 43

and the arrays zt and \({{{\mathbf{f}}({\mathbf{z}}_{t} ,{\mathbf{u}}_{t} )}}\) having dimension n + l, i.e. the number of original states plus the number of stochastic parameters. For this ‘augmented’ control problem the L’s in Eq. (25) are defined as

with \({\mathbf{Q}}_{t}^{*} = \beta^{t} {\mathbf{Q}}^{*} ,{\mathbf{Q}}^{*} = diag\left( {{\mathbf{Q}}, \, - \beta \theta {\mathbf{I}}_{l} } \right),{\mathbf{W}}_{t}^{*} = \beta^{t} [\begin{array}{*{20}c} {{\mathbf{W^{\prime}}}} & {{\mathbf{O^{\prime}}}} \\ \end{array} ]^{\prime}\) and \({\mathbf{R}}_{t} = \beta^{t} {\mathbf{R}}\), and the following proposition can be stated:

Proposition 3

Maximizing the objective function (25) subject to (26), with definitions as in (27) and (28), yields a TVP-control equal to

with

when \({\varvec{\upnu }}_{t + 1} \equiv {\varvec{\upomega }}_{t + 1}\). Namely, the same shock is used to determine both robust control and TVP-control.

The quantity \({\mathbf{K}}_{11,t + 1}^{ + }\) in Proposition 3 collapses to the ‘robust’ Riccati matrix \({\mathbf{P}}_{t + 1}^{*}\) in Proposition 1 when \({\mathbf{P}}_{t + 1} = {\mathbf{K}}_{11,t + 1}\) and \({\mathbf{K}}_{12,t + 1}\) is a null matrix. This means that robust control is insensitive to the true value of Φ appearing in the law of motion for the stochastic parameters. This is because, when the same objective functional is optimized in both the robust and the TVP-control problems, the only difference between the associated Bellman equations is that the former implicitly sets \({\mathbf{P}}_{t} = {\mathbf{K}}_{11,t} ,{\mathbf{p}}_{t} = {\mathbf{k}}_{1,t}\) and K12,t, K21,t, K22,t and k2,t equal to null arrays. Therefore, by construction, the control applied by the decision maker who wants to be “robust to misspecifications of the approximating model” implicitly assumes that the ω’s in (2) are serially uncorrelated.

The framework laid out in this section can be used also to study the case of robust control without commitment discussed in Sect. 3. Then, the following result holds:

Proposition 4

Maximizing the objective function (25) subject to (26), with definitions as in (27) when

and the arrays zt and \({{{\mathbf{f}}({\mathbf{z}}_{t} ,{\mathbf{u}}_{t} )}}\) have dimension \(n + (l + n_{2} )\) and as in Eq. (28) with \({\mathbf{Q}}_{t}^{*} = \beta^{t} {\mathbf{Q}}^{*} ,{\mathbf{R}}_{t} = \beta^{t} {\mathbf{R}}\) and \({\mathbf{W}}_{t}^{*} = \beta^{t} {\mathbf{W}}_{{}}^{*}\) where

yields a TVP-control equal to

when the worst-case adverse shock determined in Sect. 3 is used.

The quantities

in Proposition 4 are identical to the corresponding quantities in Proposition 2 when \({\mathbf{K}}_{11,t + 1} \equiv {\mathbf{P}}_{t + 1}\) and \({\mathbf{k}}_{11,t + 1} \equiv {\mathbf{p}}_{t + 1}\) and \({\mathbf{K}}_{12,t + 1}\) is a null matrix.Footnote 44 Therefore even this non “probabilistically sophisticated” decision maker implicitly assumes that ω1,t and ω2,t, i.e. the perturbation to the distribution of εt+1 and of the hidden state conditional on (\({\mathbf{y}}_{1,t} ,{\mathbf{\overset{\lower0.5em\hbox{$\smash{\scriptscriptstyle\smile}$}}{y} }}_{2,t}\)), respectively, in (15) are both independent and serially uncorrelated.

Before leaving this section, two points are worth emphasizing. First, the results in Propositions 3 and 4 do not imply that robust control is implicitly based on a very specialized type of time-varying parameter model, or that one of the two approaches is better than the other. Robust control and TVP-control represent two alternative ways of dealing with the problem of not knowing the true model ‘we’ want to control, and they are generally characterized by different solutions. In general, when the same objective functional and terminal conditions are used, the main difference is due to the fact that the former is determined assuming the worst-case value for ωt+1, whereas the latter is computed using the conditional mean of \({\varvec{\upnu }}_{t + 1}\) and taking into account its relationship with next period’s conditional mean. As a side effect, even the Riccati matrices common to the two procedures, named P and p in the robust control case and K11 and k11 in the TVP-case, are different. The use of identical Riccati matrices and of an identical shock in the two alternative approaches, i.e. setting \({\mathbf{K}}_{11,t + 1} \equiv {\mathbf{P}}_{t + 1} ,{\mathbf{k}}_{11,t + 1} \equiv {\mathbf{p}}_{t + 1}\) and \({\varvec{\upnu }}_{t + 1} \equiv {\varvec{\upomega }}_{t + 1}\) or \({\varvec{\upnu }}_{t + 1} \equiv [\begin{array}{*{20}c} {\varvec{\upomega}}^{\prime}_{1,t} & {\varvec{\upomega}}^{\prime}_{2,t} \\ \end{array} ]^{\prime}\), serves only to investigate some of the implicit assumptions of these procedures.

Second, the results of this section do not claim that the worst-case adverse shocks are serially uncorrelated, or that the perturbations to the distribution of εt+1 and of the hidden state conditional on (\({\mathbf{y}}_{1,t} ,{\mathbf{\overset{\lower0.5em\hbox{$\smash{\scriptscriptstyle\smile}$}}{y} }}_{2,t}\)) in (15) are independent and serially uncorrelated. They simply show that, whether the agent is assumed to behave in a “probabilistically sophisticated” or in a probabilistically ‘unsophisticated’ manner, robust control implicitly assumes that these shocks are serially uncorrelated. This follows from the Bellman equation associated with this type of problem.

5 Robust Control in the Presence of Uncertain Parameters in the Structural Model

The robust control problems discussed in the previous sections deal with unstructured uncertainty à la Hansen and Sargent. However, sometimes robust control is applied to situations where uncertainty is related to unknown structural parameters. Giannoni (2002, 2007) considers an optimizing model for monetary policy. This is a structural forward-looking model where the constant structural parameters are unknown to the policymaker but are known to agents in the private sector. It “is composed of a monetary policy rules and two structural equations—an intertemporal IS equation and an aggregate supply equation—that are based on explicit microeconomic foundations… (namely, they) can be derived as log-linear approximations to equilibrium conditions of an underlying general equilibrium model with sticky prices” (Giannoni 2002, pp. 112–114).Footnote 45

In Giannoni (2007), the demand side of the economy is written asFootnote 46

where Et denotes the expectation formed at time t, xt the output gap, πt the rate of inflation, \(\hat{\iota }_{t}\) the percentage deviation of the nominal interest rate from its constant steady state value, δt a demand shock and ηt an “adverse efficient supply shock”. The output gap is defined as the percentage deviation of actual output from its constant steady state value minus the corresponding percentage deviation of the efficient rate of output.Footnote 47 The aggregate supply curve takes the formFootnote 48

with μt the percent deviation of the desired markup from steady state,Footnote 49 κ a parameter greater than zero and β the discount factor.Footnote 50 As pointed out in Giannoni (2007, p. 187), the parameters σ and ϖ represent “the inverse of the intertemporal elasticity of substitution in private expenditure (and) … the elasticity of each firm’s real marginal cost with respect to its own supply”, respectively.Footnote 51 Finally, it is assumed that the exogenous shocks δt, ηt and μt have zero (unconditional) mean, are independent of the parameters σ, κ and ϖ, and follow an AR(1) process,Footnote 52 i.e.

In this model “monetary policy has real effects … because prices do not respond immediately to perturbations … only a fraction … of suppliers may change their prices at the end of each period” (Giannoni 2007, p. 187). The controller determines the optimal monetary policy by optimizing the following penalty functionFootnote 53

where λx and λι, both positive, “are weights placed on the stabilization of the output gap and the nominal interest rate and where x* ≥ 0 represents some optimal level of output gap” (Giannoni 2007, p. 189).

Assuming rational expectations, the system (30)–(32) can be rewritten as (1) when \({\mathbf{y}}_{t} = \left( {x_{t} \, \pi_{t} \, \delta_{t} \, \eta_{t} \, \mu_{t} } \right)^{{\prime }}\) and \({\mathbf{u}}_{t} = \hat{\iota }_{t}\). The matrix A then takes the form

where

T is the 3 × 3 diagonal matrix on the right-hand side of Eq. (32) and O is a null 3 × 2 array, and B is defined as \({\mathbf{B}} = (\begin{array}{*{20}c} {\sigma^{ - 1} } & 0 & 0 & 0 & 0 \\ \end{array} )^{\prime}\). The vector of disturbances \({\varvec{\upvarepsilon }}_{t} = \left( {\varepsilon_{x} \, \varepsilon_{\pi } \, \varepsilon_{\delta } \, \varepsilon_{\eta } \, \varepsilon_{\mu } } \right)^{{\prime }}\) has mean zero and identity covariance matrix, and C is defined such that \({\mathbf{CC}}^{\prime} = E({\varvec{\upxi }}_{t} {\varvec{\upxi}}^{\prime}_{t} )\), where \({\varvec{\upxi}}^{\prime}_{t} = \left( {\xi_{x} \, \xi_{\pi } \, \xi_{\delta } \, \xi_{\eta } \, \xi_{\mu } } \right)\), with ξx and ξπ the errors associated with the output gap and inflation, respectively, and \(E({\varvec{\upxi }}_{t} ) = {\mathbf{0}},E({\varvec{\upxi }}_{t} {\varvec{\upxi}}^{\prime}_{t} ) =\Sigma\). Similarly, the one-period loss function implicit in (33) can be put in the format (6) when W is a null matrix, \({\mathbf{Q}} = (1 - \beta )diag(\begin{array}{*{20}c} {\lambda_{x} } & 1 & 0 & 0 & 0 \\ \end{array} )\) and \({\mathbf{R}} = (1 - \beta )\lambda_{\iota }\).
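The mapping of the penalty function into the format (6) can be checked numerically. The sketch rests on three assumptions. First, (6) takes the common quadratic tracking form \((y_t - y^d_t)'Q(y_t - y^d_t) + 2(y_t - y^d_t)'W(u_t - u^d_t) + (u_t - u^d_t)'R(u_t - u^d_t)\). Second, the period loss in (33) is \((1 - \beta)[\pi_t^2 + \lambda_x(x_t - x^*)^2 + \lambda_\iota \hat{\iota}_t^2]\). Third, the desired values are \(y^d = (x^*\ 0\ 0\ 0\ 0)'\) and \(u^d = 0\). These forms, the helper names and all numbers are illustrative assumptions, not Giannoni's calibration.

```python
import numpy as np

def tracking_loss(y, u, y_d, u_d, Q, W, R):
    """(y-y_d)'Q(y-y_d) + 2(y-y_d)'W(u-u_d) + (u-u_d)'R(u-u_d)."""
    dy, du = y - y_d, u - u_d
    return float(dy @ Q @ dy + 2 * dy @ W @ du + du @ R @ du)

def giannoni_loss_matrices(beta, lam_x, lam_i):
    """Q, W, R for the state y = (x, pi, delta, eta, mu)' and the control u = i_hat."""
    Q = (1 - beta) * np.diag([lam_x, 1.0, 0.0, 0.0, 0.0])
    W = np.zeros((5, 1))                      # no cross term between states and control
    R = np.array([[(1 - beta) * lam_i]])
    return Q, W, R

beta, lam_x, lam_i, x_star = 0.99, 0.25, 0.5, 0.1   # hypothetical values
Q, W, R = giannoni_loss_matrices(beta, lam_x, lam_i)

y = np.array([0.5, 0.02, 0.1, -0.1, 0.05])   # (x, pi, delta, eta, mu)
u = np.array([0.03])                         # i_hat
y_d = np.array([x_star, 0.0, 0.0, 0.0, 0.0])
u_d = np.zeros(1)

direct = (1 - beta) * (y[1] ** 2 + lam_x * (y[0] - x_star) ** 2 + lam_i * u[0] ** 2)
print(np.isclose(tracking_loss(y, u, y_d, u_d, Q, W, R), direct))
```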

In the presence of uncertain parameters, the worst-case parameter vector results in worst-case matrices which can be viewed as the algebraic sum of the ‘baseline case matrices’, A and B, and the ‘worst-case discrepancies’, Aω and Bω.Footnote 54 It follows that the model in the worst case scenario can be written asFootnote 55

where the term in parentheses on the right-hand side of the second equality sign plays the role of Cωt+1 in Eq. (2). More precisely, the quantity \({\mathbf{A}}_{{\varvec{\upomega }}} {\mathbf{y}}_{t} + {\mathbf{B}}_{{\varvec{\upomega }}} {\mathbf{u}}_{t}\) replaces the worst-case adverse shock used to derive the result in Proposition 1, premultiplied by the volatility matrix C, in a robust control model where uncertainty is à la Hansen and Sargent. Robust control for Giannoni’s model then takes the form

where \({\mathbf{A}}_{{\mathbf{w}}} = {\mathbf{A}} + {\mathbf{A}}_{{\varvec{\upomega }}} {\text{ and }}{\mathbf{B}}_{{\mathbf{w}}} = {\mathbf{B}} + {\mathbf{B}}_{{\varvec{\upomega }}}\). The same worst-case adverse shock can be used to compute the associated TVP-control.

6 Some Numerical Results

The permanent income model is a popular model in the robust control literature (see, e.g., Hansen and Sargent 2001, 2003, 2007b; Hansen et al. 1999, 2002). It is a linear quadratic stochastic growth model with a habit where a “probabilistically sophisticated” planner values a scalar process s of consumption services according toFootnote 56

with μb a preference parameter governing the curvature of the utility function.Footnote 57 The service s is produced by the scalar consumption process ct via the household technology

where \(\lambda \ge 0,0 < \delta_{h} < 1\) and ht is a stock of household habits given by a geometrically weighted average of present and past consumption. A linear technology then converts an exogenous (scalar) stochastic endowment dt into consumption or capital, i.e.

where kt and it represent the capital stock and gross investment, respectively, at time t, γ the constant marginal product of capital and δk the depreciation factor for capital. The endowment is specified as the sum of two orthogonal AR(2) components, namely

where d1,t+1 and d2,t+1 are the permanent and transitory component, respectively, and

with \(\varepsilon_{1,t + 1}\) and \(\varepsilon_{2,t + 1}\) as in Sect. 2.Footnote 58

Rewriting (40b) in terms of ct and substituting it into (39a) yields

Then the one-period loss function \((s_{t} - \mu_{b} )^{2}\) in (38) can be expressed as in (6) when \({\mathbf{u}}_{t} = i_{t} ,{\mathbf{u}}_{t}^{d} = 0, \, {\mathbf{y}}_{t} = (\begin{array}{*{20}c} {h_{t - 1} } & {k_{t - 1} } & {d_{t - 1} } & 1 & {d_{t} } & {d_{1,t} } & {d_{1,t - 1} } \\ \end{array} )^{\prime}, \, {\mathbf{y}}_{t}^{d} = { (}\begin{array}{*{20}c} { - {{\mu_{b} } \mathord{\left/ {\vphantom {{\mu_{b} } \lambda }} \right. \kern-0pt} \lambda }} & 0 & 0 & 0 & 0 & 0 & 0 \\ \end{array} )^{\prime}\)Footnote 59, Q = diag(Q†,O2) where

and only the lower triangle is reported because the matrix is symmetric, \(\mathbf{O}_2\) is a 2 × 2 null matrix, \(\mathbf{R} = (1 + \lambda)^{2}\) and \(\mathbf{W} = (w_1\;\; w_2\;\; 0\;\; 0\;\; w_5\;\; 0\;\; 0)'\) with \(w_1 = (1 + \lambda)\lambda\), \(w_2 = -(1 + \lambda)^2\gamma\) and \(w_5 = -(1 + \lambda)^2\).
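The mapping from \((s_t - \mu_b)^2\) to the tracking form in (6) can be checked numerically. The minimal sketch below assumes, following Hansen et al. (2002), that the household technology is \(s_t = (1+\lambda)c_t - \lambda h_{t-1}\) and that (40b) solved for consumption reads \(c_t = \gamma k_{t-1} + d_t - i_t\); these are the forms that reproduce the \(\mathbf{R}\) and \(\mathbf{W}\) given above. It builds \(\mathbf{Q}\) as the outer product of the loadings of \(s_t\) on \(\mathbf{y}_t\), so that its nonzero entries lie in the upper-left 5 × 5 block \(\mathbf{Q}^{\dagger}\), and backs out γ from \(\beta = (\delta_k + \gamma)^{-1}\) with the values listed in Footnote 61. The control value −51 is an arbitrary test number.

```python
# Minimal sketch: check that (s_t - mu_b)^2 equals the tracking form
#   (y - y_d)'Q(y - y_d) + R u^2 + 2 (y - y_d)'W u,  with u = i_t and u_d = 0.
# ASSUMED (following Hansen et al. 2002): s_t = (1 + lam) c_t - lam h_{t-1}
# and c_t = gamma k_{t-1} + d_t - i_t.

beta, lam, delta_k, mu_b = 0.9971, 2.4433, 0.975, 32.0
gamma = 1.0 / beta - delta_k              # from beta = (delta_k + gamma)^(-1)

# Loadings of s_t on y_t = (h_{t-1}, k_{t-1}, d_{t-1}, 1, d_t, d_{1,t}, d_{1,t-1})
a = [-lam, (1 + lam) * gamma, 0.0, 0.0, 1 + lam, 0.0, 0.0]
b = -(1 + lam)                            # loading of s_t on the control i_t

Q = [[ai * aj for aj in a] for ai in a]   # a a'; nonzero entries only in the upper-left 5x5 block
R = b * b                                 # (1 + lam)^2
W = [ai * b for ai in a]                  # (w1, w2, 0, 0, w5, 0, 0)
y_d = [-mu_b / lam, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0]


def direct_loss(y, u):
    """(s_t - mu_b)^2 computed from the household and resource equations."""
    h, k, d = y[0], y[1], y[4]
    c = gamma * k + d - u                 # consumption
    s = (1 + lam) * c - lam * h           # consumption services
    return (s - mu_b) ** 2


def tracking_loss(y, u):
    """Same loss written with Q, R, W and the desired path y_d."""
    e = [yi - ydi for yi, ydi in zip(y, y_d)]
    quad = sum(e[i] * Q[i][j] * e[j] for i in range(7) for j in range(7))
    cross = 2.0 * sum(e[i] * W[i] for i in range(7)) * u
    return quad + R * u * u + cross


y0 = [100.0, 100.0, 13.7099, 1.0, 13.7099, 0.0, 0.0]   # initial condition used in the text
print(direct_loss(y0, -51.0), tracking_loss(y0, -51.0))
```

The two printed values coincide up to rounding, since \(\mathbf{a}'\mathbf{y}_t^d = \mu_b\) absorbs the bliss point into the desired path.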

When model misspecification is not ruled out, the equations for the permanent and transitory components of the endowment process are rewritten by adding the quantities \(c_{1} \omega_{1,t + 1}\) and \(c_{2} \omega_{2,t + 1}\), respectively. Problem (5) is then solved subject to Eq. (2), with the initial condition \(\mathbf{y}_0\) given and the matrices of coefficients defined as

and \({\mathbf{B}} = \left( {b_{1} \quad 1\quad 0\quad 0\quad 0\quad 0\quad 0} \right)^{{\prime }}\), where \(\mu_{d}^{*} = \mu_{d} \left( {1 - a_{1} - a_{2} } \right)\) and \(b_{1} = - \left( {1 - \delta_{h} } \right)\).Footnote 60

Using the parameter estimates in Hansen et al. (2002), robust control for the permanent income model is computed for \(\mu_b = 32\) and different values of θ.Footnote 61 The initial condition is set at \(\mathbf{y}_0 = (100\;\; 100\;\; 13.7099\;\; 1.0\;\; 13.7099\;\; 0\;\; 0)'\), and a time horizon of 2 periods is assumed.Footnote 62 As observed in Hansen and Sargent (2007a, p. 47), a preference for robustness “leads the consumer to engage in a form of precautionary savings that … tilts his consumption profile toward the future relative to what it would be without a concern about misspecification of (the endowment) process.” This is confirmed by the results reported in Table 1, where gross investment, the control variable, increases as θ gets smaller.Footnote 63

When the observationally equivalent model of Sect. 4 is used, the endowment process and its permanent component have time-varying intercepts following a mean reverting model, namely

with \(\mu_{d,t + 1}^{*} = \mu_{d}^{*} + c_{1} \nu_{1,t + 1} + c_{2} \nu_{2,t + 1}\) and \(\mu_{d1,t + 1}^{*} = c_{1} \nu_{1,t + 1}\) with \(\nu_{i,t + 1} \equiv \varepsilon_{i,t + 1} + \omega_{i,t + 1}\) for i = 1, 2. Then the time-invariant portion of the intercepts can be interpreted asFootnote 64

and the stochastic component takes the form

In this case, setting \(\varepsilon_{i,t + 1} = 0\), so that \(\nu_{i,t + 1} \equiv \omega_{i,t + 1}\) for i = 1, 2, the results reported in Sect. 4 hold.

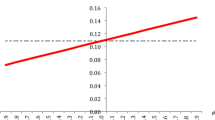

The relationship between the value of Φ and the optimal control at various θ’s is shown in Fig. 1, where Φ = ϕI and several values of ϕ are used. As shown in Sect. 4, the TVP-control derived assuming that the intercept follows a ‘Return to Normality’ model with ϕ = 0 is identical to robust control when the same malevolent shocks are used. On the other hand, knowing that tomorrow’s shocks are negatively correlated with today’s would lead the household, facing a negative ‘malevolent nature’ shock, to save less for a given θ. For instance, when θ = 100, savings decrease from −51.1894 at ϕ = 0 to −57.7563 at ϕ = −.1. Hence the controls at the various θ’s associated with negative ϕ’s are always below the corresponding robust controls, and they move farther from them as the absolute value of ϕ increases. The opposite occurs for positive values of ϕ. Again, the farther a line lies from the ‘robust control line’, the higher the absolute value of the associated ϕ.
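The role of ϕ can be illustrated by simulating a scalar ‘Return to Normality’ intercept. The sketch below assumes the form \(\mu_{t+1} - \bar{\mu} = \phi(\mu_t - \bar{\mu}) + \nu_{t+1}\), in the spirit of Harvey (1981), with Gaussian shocks; the sample size, shock variance and seed are illustrative choices, not values from the paper. It estimates the first-order autocorrelation of the intercept: with ϕ = 0 deviations in consecutive periods are uncorrelated, the case in which TVP-control coincides with robust control, while ϕ ≠ 0 builds in the positive or negative serial correlation discussed above.

```python
import random


def simulate_intercept(phi, mu_bar=0.0, sigma=1.0, n=100_000, seed=0):
    """Simulate mu_{t+1} = mu_bar + phi * (mu_t - mu_bar) + nu_{t+1}, nu ~ N(0, sigma^2)."""
    rng = random.Random(seed)
    mu, path = mu_bar, []
    for _ in range(n):
        mu = mu_bar + phi * (mu - mu_bar) + rng.gauss(0.0, sigma)
        path.append(mu)
    return path


def lag1_autocorr(x):
    """Sample first-order autocorrelation of a sequence."""
    m = sum(x) / len(x)
    dev = [v - m for v in x]
    num = sum(dev[t] * dev[t - 1] for t in range(1, len(dev)))
    return num / sum(v * v for v in dev)


for phi in (-0.5, 0.0, 0.5):
    print(phi, round(lag1_autocorr(simulate_intercept(phi)), 3))
```

The estimated autocorrelations should lie close to the chosen ϕ.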

A relevant example of robust control applied to situations where uncertainty concerns unknown structural parameters of the model was discussed in Sect. 5. When the parameter values are as in Giannoni (2007, pp. 189–191 and 200), both for the baseline case and for the worst case, the matrices in (36) becomeFootnote 65

and

withFootnote 66

Setting the initial condition \(\mathbf{y}_0 = (.03\;\; .05\;\; 1.0\;\; 0\;\; .01)'\),Footnote 67 the desired path for the output gap equal to .01 and the parameters in the penalty matrices equal to \(\lambda_x = .0483\), \(\lambda_i = .2364\) and β = .99 yields the results in Table 2 for a time horizon of 2 periods.Footnote 68 In this example, robust control is more active than the control associated with the familiar linear regulator (or linear-quadratic) problem, and it is identical to the TVP-control when the transition matrix Φ is the null matrix. For this problem specification, the TVP-control is higher than robust control when Φ is positive and lower when Φ is negative. As already noted, the difference between the two controls grows as Φ moves farther from the null matrix.

7 Conclusion

This paper has introduced a robust control problem with unstructured uncertainty à la Hansen and Sargent, i.e. uncertainty that takes the form of a nonparametric set of additive mean-distorting model perturbations, and a decision maker who cares only about the induced distributions under the approximating model. The more complicated case of a decision maker with a different constraint for each type of adverse shock has then been discussed. Both problems have been reformulated as linear quadratic tracking control problems where the system equations have a time-varying intercept following a mean reverting, or ‘Return to Normality’, model. By comparing the robust control solution with the associated TVP-control, when the same worst-case adverse shock and objective functional are used in both procedures, it has been shown that in all these cases a decision maker who wants to be robust against misspecification of the approximating model implicitly assumes that today’s worst-case adverse shock is serially uncorrelated with tomorrow’s worst-case adverse shock. Moreover, the same result holds when uncertainty is not unstructured but is related to unknown structural parameters of the model.

This is a relevant conclusion because it applies to a large set of popular robust control frameworks in economics. As commonly understood, the robust control choice accounts for all possible kinds of persistence of malevolent shocks, which may take a much more general form than the VAR(1) assumed in these pages. It is therefore not immediately obvious why these shocks appear linearly independent over time in this set of models. The fact that the transition matrix does not appear in the relevant expression does not necessarily mean that the decision maker does not contemplate very persistent model misspecification shocks. For instance, the robust control in the worst case may not depend on the transition matrix simply because the persistence of the misspecification shock does not affect the worst case. In other words, the robust decision maker accounts for the possible persistence of the misspecification shocks, and that persistence may affect the evolution of the control variables in other equilibria, but the transition matrix happens to play no role in the worst-case equilibrium. Under many possible “models” these misspecification shocks may be very persistent, but such models happen to yield lower welfare losses than the worst-case model. These are some of the aspects that need further investigation in order to assess more precisely the strength of the ‘immunization against uncertainty’ provided by the discrete-time linear-quadratic robust control frameworks widely used in economics.

Notes

Robust control has been a very popular area of research in economics over the last two decades and shows no sign of fatigue. See, e.g., Giannoni (2002, 2007), Hansen and Sargent (2001, 2003, 2007a, 2007b), Hansen et al. (1999, 2002), Onatski and Stock (2002), Rustem (1992, 1994, 1998), Rustem and Howe (2002) and Tetlow and von zur Muehlen (2001a, b). However, the use of the minimax approach in control theory goes back to the 1960s, as pointed out in Basar and Bernhard (1991, pp. 1–4).

For a general discussion of the robust control methodology see Ben-Tal et al. (2009).

See, e.g., Hansen and Sargent (2007a, section 19.3).

The reasons for preferring this formulation are discussed in Giannoni (2007, p. 182).

See de Klerk et al. (2019) for a discussion of distributionally robust optimization techniques.

See Hansen and Sargent (2007a, Ch. 2 and 7) for the complete discussion of robust control in the time domain.

It is assumed, see e.g. page 140 in Hansen and Sargent (2007a), that the pair \((\sqrt{\beta}\,\mathbf{A}, \mathbf{B})\) is stabilizable, i.e. that there exists a ‘feedback’ matrix \(\mathbf{F}_t\) (with \(\mathbf{u}_t = -\mathbf{F}_t\mathbf{y}_t\)) such that the eigenvalues of \(\mathbf{A} - \mathbf{B}\mathbf{F}_t\) have absolute values strictly less than \(1/\sqrt{\beta}\), where β is a discount factor between 0 and 1. The matrix C is sometimes called the “volatility matrix” because, given the assumptions on the ε’s, it “determines the covariance matrix C′C of random shocks impinging on the system” (p. 29).
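The eigenvalue condition can be checked directly for a candidate feedback matrix. The minimal sketch below does so for a hypothetical 2 × 2 system with a scalar control, using the closed-form eigenvalues of a 2 × 2 matrix; A, B, F and β are illustrative numbers, not taken from the paper. Since \(|\lambda| < 1/\sqrt{\beta}\) is equivalent to \(|\sqrt{\beta}\,\lambda| < 1\), the test amounts to asking whether \(\sqrt{\beta}(\mathbf{A} - \mathbf{B}\mathbf{F})\) is a stable matrix.

```python
import cmath
import math


def eig2(m):
    """Eigenvalues of a 2x2 matrix [[a, b], [c, d]] from its characteristic polynomial."""
    (a, b), (c, d) = m
    tr, det = a + d, a * d - b * c
    disc = cmath.sqrt(tr * tr - 4 * det)
    return (tr + disc) / 2, (tr - disc) / 2


def closed_loop(A, B, F):
    """A - B F for a 2x2 A, a 2x1 B and a 1x2 F (B and F stored as plain lists)."""
    return [[A[i][j] - B[i] * F[j] for j in range(2)] for i in range(2)]


def satisfies_bound(A, B, F, beta):
    """True if every eigenvalue of A - B F has modulus strictly below 1/sqrt(beta)."""
    bound = 1.0 / math.sqrt(beta)
    return all(abs(ev) < bound for ev in eig2(closed_loop(A, B, F)))


# Hypothetical example: an explosive open-loop system stabilised by feedback.
A = [[1.2, 0.3], [0.0, 0.9]]
B = [1.0, 0.5]
beta = 0.95
print(satisfies_bound(A, B, [0.0, 0.0], beta))   # False: open-loop eigenvalues are 1.2 and 0.9
print(satisfies_bound(A, B, [0.6, 0.2], beta))   # True
```

Stabilizability only requires that some such F exists; the function checks one given F.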

See Hansen and Sargent (2007a, p. 26) for details. When Eq. (2) “generates the data it is as though the errors in… (1) were conditionally distributed as \(\varvec{N}({\varvec{\upomega }}_{t + 1} ,{\mathbf{I}}_{l} )\) rather than as \(\varvec{N}({\mathbf{0}},{\mathbf{I}}_{l} )\) … (so) we capture the idea that the approximating model is misspecified by allowing the conditional mean of the shock vector in the model that actually generates the data to feedback arbitrarily on the history of the state” (Hansen and Sargent 2007a, p. 27).

See Hansen and Sargent (2007a, p. 11).

See pp. 27–32 and Chapters 6–8 in Hansen and Sargent (2007a) for details.

Hansen and Sargent (2007a, pp. 12–14) argue that entropy is the most appropriate way to measure model misspecification. Then the “lower bound is associated with the largest set of alternative models, as measured by entropy, against which it is feasible to seek a robust rule… This cutoff value of θ… is affiliated with a rule that is robust to the biggest allowable set of misspecifications” (Hansen and Sargent 2007a, p. 40). See also Ch. 7 in the same reference and Hansen and Sargent (2001) for a further discussion of the restrictions on the robustness parameter θ.

See Hansen and Sargent (2007a, p. 35).

See Hansen and Sargent (2007a, pp. 159–160).

This is due to the fact that the “Riccati equation for the optimal linear regulator emerges from first-order conditions alone, and that the first order conditions for [the max–min problem (5) subject to (2)] match those for an ordinary, i.e. non-robust, optimal linear regulator problem with joint control process {ut, ωt+1}” (Hansen and Sargent 2007a, p. 43).
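The joint-control argument can be made concrete in a scalar, finite-horizon setting. The minimal sketch below is not the paper's algorithm: it assumes the loss \(\sum_t \beta^t (q y_t^2 + r u_t^2 - \beta\theta\,\omega_{t+1}^2)\), the law of motion \(y_{t+1} = a y_t + b u_t + c\,\omega_{t+1}\) (the ε term dropped by certainty equivalence), zero desired paths and a zero terminal value, with hypothetical numbers throughout. Stacking \((u_t, \omega_{t+1})\) into one control with penalty \(\operatorname{diag}(r, -\beta\theta)\) yields an ordinary Riccati step, valid only while \(\theta > p_{t+1}c^2\), so that nature's problem is concave.

```python
def robust_riccati_step(p_next, a, b, c, q, r, beta, theta):
    """One backward step of a scalar robust LQ problem with joint control v = (u, w).

    Returns (p, f_u, f_w): value p * y^2 and feedback rules u = -f_u * y, w = -f_w * y.
    """
    if theta <= p_next * c * c:
        raise ValueError("theta at or below the admissibility bound")
    # First-order conditions: M v = -g y, with M = diag(r, -beta*theta) + beta*p*B B', B = (b, c)'
    m11 = r + beta * p_next * b * b
    m12 = beta * p_next * b * c
    m22 = -beta * theta + beta * p_next * c * c
    g1, g2 = beta * p_next * a * b, beta * p_next * a * c
    det = m11 * m22 - m12 * m12
    f_u = (m22 * g1 - m12 * g2) / det     # Cramer's rule for the 2x2 system
    f_w = (m11 * g2 - m12 * g1) / det
    p = q + beta * p_next * a * a - (g1 * f_u + g2 * f_w)
    return p, f_u, f_w


def first_period_rule(T, a, b, c, q, r, beta, theta):
    """Iterate backward T steps from a zero terminal value; return (p_0, f_u at t = 0)."""
    p, f_u = 0.0, 0.0
    for _ in range(T):
        p, f_u, _ = robust_riccati_step(p, a, b, c, q, r, beta, theta)
    return p, f_u


params = dict(a=0.95, b=1.0, c=0.5, q=1.0, r=0.5, beta=0.99)   # hypothetical values
for theta in (1e12, 50.0, 10.0):
    print(theta, first_period_rule(2, theta=theta, **params))
```

With a very large θ the rule collapses to the ordinary regulator; with these numbers the first-period feedback for θ = 10 is slightly larger, i.e. the robust rule is more active.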

This is a minor generalization of the case discussed in Hansen and Sargent (2007a, Ch. 2 and 7) where the desired values for the states and controls are 0 and there are no cross products between states and controls in the objective function. See their Ch. 4 and pages 167–168 for a transformation of the control problem that eliminates cross products between states and controls in the objective function.

The penalty matrix \({\tilde{\mathbf{R}}}\) implies that each component of the vector process ωt+1 is penalized in the same way.

See, e.g., Equations (7.C.18)–(7.C.19) in Hansen and Sargent (2007a, p. 169). As suggested on page 35 of the same reference, Eq. (2.11) “can be represented as”

\(- {\mathbf{y^{\prime}}}_{t} {\mathbf{P}}_{t} {\mathbf{y}}_{t} - 2{\mathbf{y^{\prime}}}_{t} {\mathbf{p}}_{t} = \mathop {\hbox{max} }\limits_{{\mathbf{u}}} - \left[ {{\mathbf{y^{\prime}}}_{t} {\mathbf{Q}}_{t} {\mathbf{y}}_{t} } \right. + {\mathbf{u^{\prime}}}_{t} {\mathbf{R}}_{t} {\mathbf{u}}_{t} + 2{\mathbf{y^{\prime}}}_{t} {\mathbf{W}}_{t} {\mathbf{u}}_{t} + 2{\mathbf{y^{\prime}}}_{t} {\mathbf{q}}_{t} + \left. {2{\mathbf{u^{\prime}}}_{t} {\mathbf{r}}_{t} + {\mathbf{y^{\prime}}}_{t + 1} {\mathbf{P}}_{t + 1}^{*} {\mathbf{y}}_{t + 1} + 2{\mathbf{y^{\prime}}}_{t + 1} {\mathbf{p}}_{t + 1}^{*} } \right]\)

subject to the approximating model (1) instead of the distorted model (2).

See, e.g., Equation (7.C.9) in Hansen and Sargent (2007a, p. 168). As pointed out on page 139 of the same work, these two-player zero-sum dynamic games have identical outcomes both when the u-player chooses before the ω-player, at time 0 or in each period t ≥ 0, and vice versa.

See, e.g., Equations (2.5.6) on p. 35 and (7.C.10) on p. 168 in Hansen and Sargent (2007a) where the quantity \(\beta^{ - 1} {\mathbf{P}}_{t + 1}^{*}\) is denoted by D(P).

See, e.g., Theorem 7.6.1 (assumption v) in Hansen and Sargent (2007a, p. 150).

The parameter θ is closely related to the risk-sensitivity parameter, say σ, appearing in intertemporal preferences obtained recursively. Namely, it can be interpreted as minus the inverse of σ. See, e.g., Hansen and Sargent (2007a, pp. 40–41, 45 and 225), Hansen et al. (1999) and the references therein cited.

In Ch. 18, Hansen and Sargent (2007a) consider the general case where at time t + 1 the decision maker observes a vector s that includes y1 and possibly other signals about the hidden subset of the state. The law of motion relating these signals with the states and controls is written as \({\mathbf{s}}_{t + 1} = {\mathbf{D}}_{1} {\mathbf{y}}_{1,t} + {\mathbf{D}}_{2} {\mathbf{y}}_{2,t} + {\mathbf{Hu}}_{t} + {\mathbf{G}}{\varvec{\upvarepsilon }}_{t + 1}\) and the relationship between y1 and s is \({\mathbf{y}}_{1,t + 1} = {\varvec{\Pi }}_{{\mathbf{s}}} {\mathbf{s}}_{t + 1} + {\varvec{\Pi }}_{{{\mathbf{y}}_{1} }} {\mathbf{y}}_{1,t} + {\varvec{\Pi }}_{{\mathbf{u}}} {\mathbf{u}}_{t}\). Then the arrays in (11) are defined as \({\mathbf{A}}_{11} = {\varvec{\Pi }}_{{\mathbf{s}}} {\mathbf{D}}_{1} + {\varvec{\Pi }}_{{{\mathbf{y}}_{1} }} ,{\mathbf{A}}_{12} = {\varvec{\Pi }}_{{\mathbf{s}}} {\mathbf{D}}_{2} ,{\mathbf{B}}_{1} = {\varvec{\Pi }}_{{\mathbf{s}}} {\mathbf{H}} + {\varvec{\Pi }}_{{\mathbf{u}}}\) and \({\mathbf{C}}_{1} = {\varvec{\Pi }}_{{\mathbf{s}}} {\mathbf{G}}\). When the vector s is simply y1, the matrices \({\varvec{\Pi }}_{{{\mathbf{y}}_{1} }}\) and \({\varvec{\Pi }}_{{\mathbf{u}}}\) are null, \({\varvec{\Pi }}_{{\mathbf{s}}} = {\mathbf{I}}\) and \({\mathbf{A}}_{11} \equiv {\mathbf{D}}_{1} ,\,{\mathbf{A}}_{12} \equiv {\mathbf{D}}_{2} ,\,{\mathbf{B}}_{1} \equiv {\mathbf{H}}\) and \({\mathbf{C}}_{1} \equiv {\mathbf{G}}\).

See Hansen and Sargent (2007a, p. 386). As is well known (see, e.g., Hamilton, Eq. 13.2.13), the updated value of \(\mathbf{y}_{2,t+1}\) can be written as

\(\begin{aligned} E({\mathbf{y}}_{2,t + 1} |I_{t + 1} ) & = E({\mathbf{y}}_{2,t + 1} |I_{t} ) + \{ E[({\mathbf{y}}_{2,t + 1} - E({\mathbf{y}}_{2,t + 1} |I_{t} ))({\mathbf{s}}_{t + 1} - E({\mathbf{s}}_{t + 1} |I_{t} ))^{\prime}]\} \\ & \quad \times \{ E[({\mathbf{s}}_{t + 1} - E({\mathbf{s}}_{t + 1} |I_{t} ))({\mathbf{s}}_{t + 1} - E({\mathbf{s}}_{t + 1} |I_{t} ))^{\prime}]\}^{ - 1} ({\mathbf{s}}_{t + 1} - E({\mathbf{s}}_{t + 1} |I_{t} )) \\ \end{aligned}\)

when s is the vector of observed signals.
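For a scalar hidden state and a scalar signal, the projection formula above reduces to the familiar Kalman gain. The minimal sketch below assumes a hypothetical signal \(s_{t+1} = y_{2,t+1} + g\,e_{t+1}\), with \(e_{t+1}\) standard normal and independent of \(y_{2,t+1}\); this signal equation and the numbers are illustrative only and show how the covariance and variance terms enter the update.

```python
def projection_update(prior_mean, prior_var, g, s_obs):
    """E(y | I_{t+1}) = E(y | I_t) + Cov(y, s) Var(s)^(-1) (s - E(s | I_t)) for s = y + g*e."""
    cov_ys = prior_var                 # Cov(y, s | I_t) = Var(y | I_t) since e is independent of y
    var_s = prior_var + g * g          # Var(s | I_t)
    gain = cov_ys / var_s
    post_mean = prior_mean + gain * (s_obs - prior_mean)   # E(s | I_t) = E(y | I_t)
    post_var = prior_var - gain * cov_ys
    return post_mean, post_var


print(projection_update(prior_mean=0.0, prior_var=1.0, g=1.0, s_obs=2.0))  # (1.0, 0.5)
```

With a noiseless signal (g = 0) the gain equals one and the update returns the observed signal.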

In this case, the equation for updating the covariance estimate is \({\varvec{\Delta }}_{t + 1} = {\mathbf{A}}_{22} {\varvec{\Delta }}_{t} {\mathbf{A^{\prime}}}_{22} + {\mathbf{C}}_{2} {\mathbf{C^{\prime}}}_{2} - ({\mathbf{A}}_{22} {\varvec{\Delta }}_{t} {\mathbf{A^{\prime}}}_{12} + {\mathbf{C}}_{2} {\mathbf{C^{\prime}}}_{1} )({\mathbf{A}}_{12} {\varvec{\Delta }}_{t} {\mathbf{A^{\prime}}}_{12} + {\mathbf{C}}_{1} {\mathbf{C^{\prime}}}_{1} )^{ - 1} ({\mathbf{A}}_{22} {\varvec{\Delta }}_{t} {\mathbf{A^{\prime}}}_{12} + {\mathbf{C}}_{2} {\mathbf{C^{\prime}}}_{1} )^{\prime}\). As pointed out in Hansen and Sargent (2007a, p. 387), “Δt evolves exogenously with respect to… \(({\mathbf{y}}_{1}, \breve{\mathbf{y}}_{2})\) so that given an initial condition Δ0 a path \(\{ {\varvec{\Delta }}_{t} \}_{t = 0}^{\infty }\) can be computed before observing anything else.”
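Because \(\Delta_t\) evolves exogenously, its path can be computed in advance by iterating the recursion above. The minimal sketch below does this with scalar \(A_{22}\) and \(A_{12}\) and with \(C_1\), \(C_2\) given as 1 × l rows; the values are hypothetical, with one shock hitting the signal and an independent one hitting the hidden state. It stops when \(\Delta_{t+1} = \Delta_t\) up to a tolerance, i.e. the converged case in which Δ can be treated as constant over time.

```python
def delta_next(delta, a22, a12, c1, c2):
    """One step of the covariance recursion with scalar A22, A12 and 1 x l rows C1, C2."""
    def dot(x, y):
        return sum(xi * yi for xi, yi in zip(x, y))
    cross = a22 * delta * a12 + dot(c2, c1)           # A22 D A12' + C2 C1'
    innov = a12 * delta * a12 + dot(c1, c1)           # A12 D A12' + C1 C1'
    return a22 * delta * a22 + dot(c2, c2) - cross * cross / innov


def delta_path(delta0, a22, a12, c1, c2, tol=1e-12, max_iter=10_000):
    """Iterate from delta0 until two successive values differ by less than tol."""
    path = [delta0]
    for _ in range(max_iter):
        path.append(delta_next(path[-1], a22, a12, c1, c2))
        if abs(path[-1] - path[-2]) < tol:
            break
    return path


# Hypothetical values: two independent shocks, one in the signal, one in the hidden state.
path = delta_path(delta0=1.0, a22=0.9, a12=1.0, c1=(0.5, 0.0), c2=(0.0, 0.3))
print(len(path), path[-1])
```

For these numbers the fixed point solves \(\Delta^2 - 0.0425\,\Delta - 0.0225 = 0\), so the limit can also be verified in closed form.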

This equation corresponds to the first two rows of Eq. (18.2.7) in Hansen and Sargent (2007a, p. 387). As pointed out in footnote 5 on page 386 of the same reference, in the case of robust filtering with commitment the “approximate model… (does) not include the law of motion for an estimate of the hidden state induced by applying the ordinary Kalman Filter”.

In the general case discussed in footnote 24, the second block in \(\breve{\mathbf{C}}_{1}({\varvec{\Delta }}_{t})\) and \(\breve{\mathbf{C}}_{2}({\varvec{\Delta }}_{t})\) is \({\varvec{\Xi }}({\varvec{\Delta }}_{t} ){\mathbf{G}}\) and \({\varvec{\Xi }}({\varvec{\Delta }}_{t} ){\mathbf{D}}_{2}\), respectively, with \({\varvec{\Xi }}({\varvec{\Delta }}_{t} ) = ({\mathbf{A}}_{22} {\varvec{\Delta }}_{t} {\mathbf{D^{\prime}}}_{2} + {\mathbf{C}}_{2} {\mathbf{G^{\prime}}})({\mathbf{D}}_{2} {\varvec{\Delta }}_{t} {\mathbf{D^{\prime}}}_{2} + {\mathbf{GG^{\prime}}})^{ - 1}\) and \({\varvec{\Delta }}_{t + 1} = {\mathbf{A}}_{22} {\varvec{\Delta }}_{t} {\mathbf{A^{\prime}}}_{22} + {\mathbf{C}}_{2} {\mathbf{C^{\prime}}}_{2} - {\varvec{\Xi }}({\varvec{\Delta }}_{t} )({\mathbf{A}}_{22} {\varvec{\Delta }}_{t} {\mathbf{D^{\prime}}}_{2} + {\mathbf{C}}_{2} {\mathbf{G^{\prime}}})\).

This is as though \({\varvec{\upvarepsilon }}_{t + 1}\) and \({\mathbf{y}}_{2,t} - \breve{\mathbf{y}}_{2,t}\), conditionally on \(({\mathbf{y}}_{1,t}, \breve{\mathbf{y}}_{2,t})\), are distributed as \(\varvec{N}({\varvec{\upomega }}_{1,t} ,{\mathbf{I}}_{l} )\) and \(\varvec{N}({\varvec{\upomega }}_{2,t} ,{\varvec{\Delta }}_{t} )\), respectively, rather than as \(\varvec{N}({\mathbf{0}},{\mathbf{I}}_{l} )\) and \(\varvec{N}({\mathbf{0}},{\varvec{\Delta }}_{t} )\), respectively, as in the approximating model. See footnote 9 above.

See Hansen and Sargent (2007a, p. 390).

As underlined in Hansen and Sargent (2007a, p. 387), in the case of robust filtering with commitment the “benchmark model… (is repeatedly modified because) past distortions alter the current period reference model.” On the other hand, when applying robust filtering without commitment “each period the decision maker retains the same original benchmark model. By itself this diminishes the impact of robust filtering.” They suggest allowing “θ2 to be smaller than θ1 thereby giving the current period minimizing agent more flexibility to distort the distribution of the current hidden state.”

The penalty matrix Q corresponds to Π22 in Hansen and Sargent’s (2007a, Ch. 18) notation, \({\tilde{\mathbf{R}}}\) to Π11 with the blocks of the second row and column and those of the third row and column interchanged, and \({\tilde{\mathbf{W}}}\) to Π21 with the second and third columns interchanged.

“In the special case that the decision maker conditions on an infinite history of signals and in which Δt has converged we can set \({\varvec{\Delta }}_{t + 1} = {\varvec{\Delta }}_{t}\)” (Hansen and Sargent 2007a, p. 301). Then the matrices \({\tilde{\mathbf{R}}}({\varvec{\Delta }}_{t} )\) and \({\varvec{\Delta }}_{{\varvec{\upomega }}} ({\varvec{\Delta }}_{t} )\) can be simply denoted by \({\tilde{\mathbf{R}}}\) and \({\varvec{\Delta }}_{{\varvec{\upomega }}}\), respectively, and are constant over time.

The modified certainty equivalence principle discussed in Hansen and Sargent (2007a, Ch. 2) guarantees that omitting these terms does not affect the computation of ω1,t and ω2,t. See also footnote 8 in Ch. 18 of the same reference. Finally, it should be stressed that problem (18)–(19) allows one to compute the decision rule u and the distortion ω2,t that solve the general ‘misspecification problem’ stated in Eq. (18.2.12) of the same chapter, but it does not provide the distortion ω1,t conditional on \(({\mathbf{y}}_{1}, {\mathbf{y}}_{2}, \breve{\mathbf{y}}_{2})\). This computation requires additional steps that go beyond the scope of the present work (Hansen and Sargent 2007a, Sect. 18.2.9).

See, e.g., Equations (7.C.18)–(7.C.19) in Hansen and Sargent (2007a, p. 169). As suggested on page 35 of the same reference, Eq. (2.11) “can be represented as”

\(- {\mathbf{y^{\prime}}}_{t} {\mathbf{P}}_{t} {\mathbf{y}}_{t} - 2{\mathbf{y^{\prime}}}_{t} {\mathbf{p}}_{t} = \mathop {\hbox{max} }\limits_{{\mathbf{u}}} - \left[ {{\mathbf{y^{\prime}}}_{t} {\mathbf{Q}}_{t} {\mathbf{y}}_{t} } \right. + {\mathbf{u^{\prime}}}_{t} {\mathbf{R}}_{t} {\mathbf{u}}_{t} + 2{\mathbf{y^{\prime}}}_{t} {\mathbf{W}}_{t} {\mathbf{u}}_{t} + 2{\mathbf{y^{\prime}}}_{t} {\mathbf{q}}_{t} + \left. {2{\mathbf{u^{\prime}}}_{t} {\mathbf{r}}_{t} + {\mathbf{y^{\prime}}}_{t + 1} {\mathbf{P}}_{t + 1}^{*} {\mathbf{y}}_{t + 1} + 2{\mathbf{y^{\prime}}}_{t + 1} {\mathbf{p}}_{t + 1}^{*} } \right]\)

subject to the approximating model (1) instead of the distorted model (2).

See Eq. 18.2.19 in Hansen and Sargent (2007a, p. 391).

See, e.g., Harvey (1981).

When a is a null vector, A1 ≡ A. If a is not zero, A1 is identical to A except for a column of 0’s associated with the intercept and Ca is identical to the column of A associated with the intercept.

See Giannoni (2002, pp. 113–115) for an intuitive description of a simplified version of this model.

Giannoni (2007, p. 187) defines the efficient rate of output as “the equilibrium rate of output that would obtain in the absence of price rigidities and market power”.

Giannoni (2007, p. 188) calls \(\mu_t\) “the inefficient supply shock … since it represents a perturbation to the natural rate of output that is not efficient.”

As noted in Giannoni (2002, p. 114), “κ, which is the slope of the short run aggregate supply curve, can be interpreted as a measure of the speed of price adjustment. Finally β… (is) the discount factor of the price setters … (and) is supposed to be the same as the discount factor of the representative household.”

In this model the negative of σ is the slope of the intertemporal IS curve.

See also footnote 10 in Giannoni (2007, p. 187).

This corresponds to Eq. (18) in Giannoni (2007, p. 189). As explained on page 199 of the same reference, it is assumed “that the preference parameters of the policymaker … [in Eq. (33)] are known to the policymaker and are kept fixed regardless of the values of the structural parameters”. For a discussion of the relationship between the parameters in the objective functional and those in the underlying structural model see also footnote 27 on that page.

It follows from (17) that the worst case in Giannoni’s framework corresponds to the worst case in Hansen and Sargent’s approach when nature ignores the desired paths and \({\mathbf{A}}_{{\varvec{\upomega }}} = {\mathbf{C}}(\beta \theta {\mathbf{I}}_{l} - {\mathbf{C}}^{\prime} {\mathbf{P}}_{t + 1} {\mathbf{C}})^{ - 1} {\mathbf{C}}^{\prime} {\mathbf{P}}_{t + 1} {\mathbf{A}}\), \({\mathbf{B}}_{{\varvec{\upomega }}} = {\mathbf{C}}(\beta \theta {\mathbf{I}}_{l} - {\mathbf{C}}^{\prime} {\mathbf{P}}_{t + 1} {\mathbf{C}})^{ - 1} {\mathbf{C}}^{\prime} {\mathbf{P}}_{t + 1} {\mathbf{B}}\). The relationship between the robustness parameter θ and the size of the confidence interval underlying \({\mathbf{A}}_{{\varvec{\upomega }}}\) and \({\mathbf{B}}_{{\varvec{\upomega }}}\) is then clear.
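The distortion formula can be evaluated directly to see how θ governs the size of the perturbation. The minimal sketch below assumes a single shock (l = 1), so that \(\beta\theta\mathbf{I}_l - \mathbf{C}'\mathbf{P}_{t+1}\mathbf{C}\) is a scalar and no matrix inversion is needed; the 2 × 2 matrices are hypothetical. As θ grows, the distortion shrinks towards the null matrix, which corresponds to a narrower interval of parameter perturbations.

```python
def matmul(X, Y):
    """Product of two matrices stored as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]


def transpose(X):
    return [list(row) for row in zip(*X)]


def distortion(M, C, P, beta, theta):
    """C (beta*theta - C'PC)^(-1) C'P M for an n x 1 matrix C (one shock, l = 1).

    With M = A this gives A_omega; with M = B it gives B_omega.
    """
    CtP = matmul(transpose(C), P)                      # 1 x n
    scale = beta * theta - matmul(CtP, C)[0][0]
    if scale <= 0:
        raise ValueError("beta*theta - C'PC must be positive")
    CtPM = matmul(CtP, M)                              # 1 x k
    return [[C[i][0] * CtPM[0][j] / scale for j in range(len(CtPM[0]))]
            for i in range(len(C))]


A = [[0.9, 0.1], [0.0, 0.8]]          # hypothetical 2 x 2 system
C = [[0.5], [0.2]]
P = [[2.0, 0.3], [0.3, 1.0]]
for theta in (5.0, 50.0, 500.0):
    print(theta, distortion(A, C, P, beta=0.99, theta=theta))
```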

This discussion draws heavily on Hansen et al. (2002, Sect. 4). The notation used in the presentation of this model is kept as close as possible to that used in the cited reference.

See also pp. 47–53, 320–321 and Ch. 10 in Hansen and Sargent (2007a) for a clear discussion of the main features of this model. Hall (1978), Campbell (1987), Heaton (1993) and Hansen et al. (1991) have applied versions of this model to aggregate U.S. time series data on consumption and investment. Aiyagari et al. (2002) discuss the connection between the permanent income consumer and Barro’s (1979) model of tax smoothing.

Solving (40a) for \(i_t\) and substituting it into (40b) yields \(c_{t} + k_{t} = Rk_{t - 1} + d_{t}\) with \(R \equiv \delta_{k} + \gamma\) the “physical gross return on capital, taking into account that capital depreciates over time” (Hansen and Sargent 2007a, p. 226). This quantity coincides with the gross return on a one-period risk-free asset in the Hansen et al. (1999) model, as noticed in fn. 9 of the cited reference.

As pointed out in Hansen et al. (2002, Sect. 4), although \(d_{2,t + 1}\) is not explicitly included in the state vector it can be readily derived from the knowledge of dt+1 and d1,t+1.

As reported in Hansen et al. (2002, Table 1), β = .9971, λ = 2.4433, δh = .6817, μd = 13.7099, α1 = .8131, α2 = .1888, ϕ1 = .9978, ϕ2 = .7044, c1 = .1084 and c2 = .1551. In addition, the condition \(\beta = \left( {\delta_{k} + \gamma } \right)^{ - 1}\) is imposed for stability reasons, with δk equal to .975, as in Hansen and Sargent (2007a, p. 247). It should be emphasized that the derivations reported in Tucci (2006) are not general enough to handle this case. For this reason, μb is set to 0 in the numerical example carried out in Sect. 7 of that work.

The goal is to compare the first-period control in the various cases. Given the recursive nature of this ‘control game’, considering a 3-, 4- or 100-period time horizon does not change the qualitative results.

In this example, the admissible region for θ is approximately between .7 and infinity. When θ = .7, robust control is above 81 and nature’s controls are around −1935 and −1648. For θ = 10000, robust control is −51.269, with nature’s controls equal to −.006 and −.01.

The implicit values of a1 and a2 are 0 and \(\mu_d^{*}/c_2\), respectively.

The vector of uncertain parameters \({\mathbf{p}} = \left[ {\sigma \quad \kappa \quad \varpi \quad \rho_{\delta} \quad \rho_{\eta} \quad \rho_{\mu} \quad \nu } \right]^{{\prime }}\) is p = [.1571, .0238, .4729, .35, .35, .35, .5]′ in the baseline case and \(\mathbf{p}_w\) = [.0915, .0308, .2837, .8, -, .8, 1]′ in the worst case, when it is assumed that “uncertainty about the critical structural parameters is given by the approximate 95% intervals” (Giannoni 2007, p. 190). As explained on page 200 of the same reference, in the worst case \(\rho_\eta\) “may take any value in the allowed interval [0, 1] since the loss is maximized when … there are no efficient supply shocks.”

It is assumed that the matrix Σ is block diagonal. The 2 × 2 North-West block has been constructed using the variances of output and inflation reported in Table 2 of Rotemberg and Woodford (1998) on the main diagonal and their covariance, reflecting a correlation of .004 as in Fig. 2 of the cited work, as the off-diagonal element. The 3 × 3 South-East block is identical to that in Giannoni (2007, Tab. 1 on p. 192) for the case ν = 1. The relationship between the models presented in Giannoni and Rotemberg and Woodford is discussed in footnote 13 on pages 189–190 of the same reference.
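The construction of Σ described above can be sketched as follows. The variances and the 3 × 3 block below are placeholders, not the values from Rotemberg and Woodford (1998) or Giannoni (2007); only the structure follows the text: a covariance equal to the correlation (.004) times the product of the two standard deviations, and zero off-diagonal blocks.

```python
def two_by_two_cov(var_x, var_pi, corr):
    """2x2 covariance block with the given variances and correlation."""
    cov = corr * (var_x * var_pi) ** 0.5
    return [[var_x, cov], [cov, var_pi]]


def block_diag(*blocks):
    """Assemble a block-diagonal matrix (lists of rows) from square blocks."""
    n = sum(len(b) for b in blocks)
    out = [[0.0] * n for _ in range(n)]
    offset = 0
    for b in blocks:
        for i, row in enumerate(b):
            for j, v in enumerate(row):
                out[offset + i][offset + j] = v
        offset += len(b)
    return out


# Placeholder numbers (hypothetical): output and inflation variances with correlation .004,
# and an arbitrary 3x3 block standing in for Giannoni's (2007) Table 1.
upper = two_by_two_cov(var_x=2.0, var_pi=0.5, corr=0.004)
lower = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
Sigma = block_diag(upper, lower)
for row in Sigma:
    print(row)
```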

The initial values for the unexpected demand shock and inefficient supply shock are similar to those used in Giannoni (2007, pp. 194–195).

When the demand and supply shocks are set to 0, i.e. at their unconditional means, robust control is .04975 and TVP-control is lower for positive values of Φ: it is equal to .04972 when Φ = diag(.5, .5) and .04977 for Φ = diag(−.5, −.5).

See, e.g., Hansen and Sargent (2007a, p. 33).

When the desired paths for the states and controls are set to 0, \({\mathbf{p}}_{t} = {\mathbf{q}}_{t} = {\mathbf{r}}_{t} = 0\).

This recursion is not necessary when the desired paths are set to 0 as in Hansen and Sargent (2007a, Ch. 2, 7).

See, e.g., Kendrick (1981, Ch. 2).

Again, this recursion is not necessary when the desired paths are set to 0.

See Tucci (2004, pp. 26–27).

References

Aiyagari, S. R., Marcet, A., Sargent, T. J., & Seppälä, J. (2002). Optimal taxation without state-contingent debt. Journal of Political Economy, 110(6), 1220–1254.

Barro, R. J. (1979). On the determination of public debt. Journal of Political Economy, 87(5), 940–971.

Basar, T., & Bernhard, P. (1991). H∞-optimal control and related minimax design problems: A dynamic game approach (2nd ed.). Boston, MA: Birkhäuser.

Ben-Tal, A., El Ghaoui, L., & Nemirovski, A. (2009). Robust optimization. Princeton, NJ: Princeton University Press.

Bernhard, P. (2002). Survey of linear quadratic robust control. Macroeconomic Dynamics, 6(1), 19–39.

Campbell, J. Y. (1987). Does saving anticipate declining labor income? An alternative test of the permanent income hypothesis. Econometrica, 55(6), 1249–1273.

Clarida, R., Galí, J., & Gertler, M. (1999). The science of monetary policy: A new Keynesian perspective. Journal of Economic Literature, 37(4), 1661–1707.

de Klerk, E., Kuhn, D., & Postek, K. (2019). Distributionally robust optimization with polynomial densities: Theory, models and algorithms. Mathematical Programming, Series B. https://doi.org/10.1007/s10107-019-01429-5.

Giannoni, M. P. (2002). Does model uncertainty justify caution? Robust optimal monetary policy in a forward-looking model. Macroeconomic Dynamics, 6(1), 111–144.

Giannoni, M. P. (2007). Robust optimal policy in a forward-looking model with parameter and shock uncertainty. Journal of Applied Econometrics, 22(1), 179–213.

Hall, R. E. (1978). Stochastic implications of the life cycle-permanent income hypothesis: Theory and evidence. Journal of Political Economy, 86(6), 971–987.

Hansen, L. P., Heaton, J., & Sargent, T. J. (1991). Faster methods for solving continuous time recursive linear models of dynamic economies. In L. P. Hansen & T. J. Sargent (Eds.), Rational expectations econometrics (pp. 177–208). Boulder, CO: Westview Press.

Hansen, L. P., & Sargent, T. J. (2001). Acknowledging misspecification in macroeconomic theory. Review of Economic Dynamics, 4(3), 519–535.

Hansen, L. P., & Sargent, T. J. (2003). Robust control of forward-looking models. Journal of Monetary Economics, 50(3), 581–604.

Hansen, L. P., & Sargent, T. J. (2007a). Robustness. Princeton, NJ: Princeton University Press.

Hansen, L. P., & Sargent, T. J. (2007b). Recursive robust estimation and control without commitment. Journal of Economic Theory, 136(1), 1–27.

Hansen, L. P., & Sargent, T. J. (2010). Fragile beliefs and the price of uncertainty. Quantitative Economics, 1(1), 129–162.

Hansen, L. P., & Sargent, T. J. (2016). Sets of models and prices of uncertainty. NBER, Working Paper 22000. http://www.nber.org/papers/w22000.

Hansen, L. P., Sargent, T. J., & Tallarini, T. D. (1999). Robust permanent income and pricing. Review of Economic Studies, 66(4), 873–907.

Hansen, L. P., Sargent, T. J., & Wang, N. E. (2002). Robust permanent income and pricing with filtering. Macroeconomic Dynamics, 6(1), 40–84.

Harvey, A. C. (1981). Time series models. Oxford: Philip Allan.

Heaton, J. (1993). The interaction between time-nonseparable preferences and time aggregation. Econometrica, 61(2), 353–385.

Intriligator, M. D. (1971). Mathematical optimization and economic theory. Englewood Cliffs, NJ: Prentice Hall.

Kendrick, D. A. (1981). Stochastic control for economic models. New York, NY: McGraw-Hill. Second Edition (2002) available at the Author’s web site www.eco.utexas.edu/faculty/Kendrick.

Onatski, A., & Stock, J. H. (2002). Robust monetary policy under model uncertainty in a small model of the U.S. economy. Macroeconomic Dynamics, 6(1), 85–110.

Rotemberg, J. J., & Woodford, M. (1998). An optimization-based econometric framework for the evaluation of monetary policy: Expanded version. National Bureau of Economic Research Technical Working Paper 233.

Rustem, B. (1992). A constrained min–max algorithm for rival models of the same economic system. Mathematical Programming, 53(1–3), 279–295.

Rustem, B. (1994). Robust min–max decisions for rival models. In D. A. Belsley (Ed.), Computational techniques for econometrics and economic analysis (pp. 109–136). Dordrecht: Kluwer.

Rustem, B. (1998). Algorithms for nonlinear programming and multiple objective decisions. New York, NY: Wiley.

Rustem, B., & Howe, M. A. (2002). Algorithms for worst-case design with applications to risk management. Princeton, NJ: Princeton University Press.

Savin, I., & Blueschke, D. (2016). Lost in translation: Explicitly solving nonlinear stochastic optimal control problems using the median objective value. Computational Economics, 48(2), 317–338.

Tetlow, R., & von zur Muehlen, P. (2001a). Simplicity versus optimality: The choice of monetary policy rules when agents must learn. Journal of Economic Dynamics and Control, 25(1–2), 245–279.

Tetlow, R., & von zur Muehlen, P. (2001b). Robust monetary policy with misspecified models: does model uncertainty always call for attenuated policy? Journal of Economic Dynamics and Control, 25(6–7), 911–949.

Tucci, M. P. (2004). The rational expectation hypothesis, time-varying parameters and adaptive control: A promising combination? Dordrecht: Springer.

Tucci, M. P. (2006). Understanding the difference between robust control and optimal control in a linear discrete-time system with time-varying parameters. Computational Economics, 27(4), 533–558.

Woodford, M. (2003). Interest and prices: Foundations of a theory of monetary policy. Princeton, NJ: Princeton University Press.

Acknowledgements

Open access funding provided by Università degli Studi di Siena within the CRUI-CARE Agreement. I thank the Editor of the Journal and two anonymous referees for useful comments and observations that helped me greatly improve the quality of the present work.

Appendices

Appendix 1

Proof of Proposition 1

Setting \(\boldsymbol{\varepsilon}_{t+1} = \mathbf{0}\) and writing the optimal value of (7) as \(-\mathbf{y}_t'\mathbf{P}_t\mathbf{y}_t - 2\mathbf{y}_t'\mathbf{p}_t\),Footnote 69 the Bellman equation looks likeFootnote 70

with \(\mathbf{P}_{t+1} = \beta\mathbf{P}_t\), \(\mathbf{Q}_t = \beta^t\mathbf{Q}\), \(\mathbf{W}_t = \beta^t\mathbf{W}\), \(\mathbf{R}_t = \beta^t\mathbf{R}\), \(\mathbf{q}_t = -(\mathbf{Q}_t\mathbf{y}_t^d + \mathbf{W}_t\mathbf{u}_t^d)\) and \(\mathbf{r}_t = -(\mathbf{R}_t\mathbf{u}_t^d + \mathbf{W}_t'\mathbf{y}_t^d)\).Footnote 71 Expressing the right-hand side of (47) only in terms of \(\mathbf{y}_t\) and \(\tilde{\mathbf{u}}_t\) and extremizing it then yields the optimal control for the decision maker

and the optimal control for the malevolent nature

Substituting (49) into (48), and (48) into (49), gives the θ-constrained worst-case controls in Proposition 1.
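The cross-substitution of the two first-order conditions can be checked numerically on a toy problem. The sketch below is a hypothetical illustration, not the paper's code: it assumes a single undiscounted step of a pure regulator problem (no tracking terms, no cross-product matrix), written as a cost that the decision maker minimizes and nature maximizes, \(\mathbf{u}'\mathbf{R}\mathbf{u} - \theta\boldsymbol{\omega}'\boldsymbol{\omega} + \mathbf{y}_{+}'\mathbf{P}\mathbf{y}_{+}\) with \(\mathbf{y}_{+} = \mathbf{A}\mathbf{y} + \mathbf{B}\mathbf{u} + \mathbf{C}\boldsymbol{\omega}\), and it assumes the stacked penalty \(\tilde{\mathbf{R}} = \mathrm{diag}(\mathbf{R}, -\theta\mathbf{I})\). It solves the stacked condition once and then verifies that each control is the other player's best response.

```python
import numpy as np


def stacked_controls(A, B, C, R, P, theta, y):
    """Extremize u'Ru - theta*w'w + y1'P y1 jointly over u_tilde = (u', w')'.

    y1 = A y + B u + C w; u minimizes, w (nature) maximizes.
    Solves (R_tilde + B_tilde' P B_tilde) u_tilde = -B_tilde' P A y.
    """
    k, l = B.shape[1], C.shape[1]
    B_tilde = np.hstack([B, C])                                   # [B  C]
    R_tilde = np.block([[R, np.zeros((k, l))],
                        [np.zeros((l, k)), -theta * np.eye(l)]])  # assumed form
    u_tilde = -np.linalg.solve(R_tilde + B_tilde.T @ P @ B_tilde,
                               B_tilde.T @ P @ A @ y)
    return u_tilde[:k], u_tilde[k:]


def dm_best_response(A, B, C, R, P, y, w):
    """Decision maker's FOC for a given shock: (R + B'PB) u = -B'P(Ay + Cw)."""
    return -np.linalg.solve(R + B.T @ P @ B, B.T @ P @ (A @ y + C @ w))


def nature_best_response(A, B, C, P, theta, y, u):
    """Nature's FOC for a given control: (theta*I - C'PC) w = C'P(Ay + Bu).

    Requires theta*I - C'PC to be positive definite (no 'breakdown').
    """
    l = C.shape[1]
    return np.linalg.solve(theta * np.eye(l) - C.T @ P @ C,
                           C.T @ P @ (A @ y + B @ u))


# Scalar example: A = B = R = P = 1, C = 0.5, theta = 2, y = 1.
A, B, C = np.array([[1.0]]), np.array([[1.0]]), np.array([[0.5]])
R, P, y = np.array([[1.0]]), np.array([[1.0]]), np.array([1.0])
u, w = stacked_controls(A, B, C, R, P, theta=2.0, y=y)
print(u, w)  # u ≈ -8/15, w ≈ 2/15
# Each control is the best response to the other one.
print(np.allclose(dm_best_response(A, B, C, R, P, y, w), u))        # True
print(np.allclose(nature_best_response(A, B, C, P, 2.0, y, u), w))  # True
```

Letting θ grow large drives the worst-case shock toward zero and the control toward the standard linear-quadratic value, here \(-1/2\); the robust control \(-8/15\) responds more aggressively to the state.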

As noted in Hansen and Sargent (2007a, p. 11), the first-order conditions for problem (47) subject to (9) imply the matrix Riccati equation

where \(\tilde{\mathbf{R}}_t = \beta^t\tilde{\mathbf{R}}\) and \(\tilde{\mathbf{W}}_t = \beta^t\tilde{\mathbf{W}}\), and the Riccati equation for the \(\mathbf{p}\) vectorFootnote 72

where \(\tilde{\mathbf{R}}_t = \beta^t\tilde{\mathbf{R}}\), \(\tilde{\mathbf{W}}_t = \beta^t\tilde{\mathbf{W}}\) and \(\tilde{\mathbf{r}}_t = (\mathbf{r}_t' \;\; \mathbf{0}_l')'\). It is straightforward to show that the right-hand sides of Eqs. (50) and (51) can be rewritten as

and

respectively. Equations (50) and (51) reduce to the usual Riccati equations of the linear-quadratic tracking control problem when \(\boldsymbol{\omega}_{t+1} = \mathbf{0}\).Footnote 73
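The reduction to the usual tracking Riccati equations can be illustrated with a generic one-step recursion for the stacked control \(\tilde{\mathbf{u}}_t = (\mathbf{u}_t' \;\; \boldsymbol{\omega}_{t+1}')'\). This is a minimal sketch under assumptions not taken from the paper: no discounting (\(\beta = 1\)), law of motion \(\mathbf{y}_{+} = \mathbf{A}\mathbf{y} + \tilde{\mathbf{B}}\tilde{\mathbf{u}}\) with \(\tilde{\mathbf{B}} = [\mathbf{B} \;\; \mathbf{C}]\), and the hypothetical stacking \(\tilde{\mathbf{R}} = \mathrm{diag}(\mathbf{R}, -\theta\mathbf{I})\), \(\tilde{\mathbf{W}} = [\mathbf{W} \;\; \mathbf{0}]\), \(\tilde{\mathbf{r}} = (\mathbf{r}' \;\; \mathbf{0}_l')'\). The cost-to-go \(\mathbf{y}'\mathbf{P}\mathbf{y} + 2\mathbf{y}'\mathbf{p}\) is the negative of the value \(-\mathbf{y}'\mathbf{P}\mathbf{y} - 2\mathbf{y}'\mathbf{p}\), so \(\mathbf{P}\) and \(\mathbf{p}\) are the same objects. Setting \(\mathbf{C} = \mathbf{0}\) keeps the shock out of the model, and the stacked step then coincides with the standard one.

```python
import numpy as np


def tracking_riccati_step(A, B_tilde, Q, R_tilde, W_tilde, q, r_tilde, P, p):
    """One backward step of the LQ tracking Riccati recursion (beta = 1 assumed).

    Stage cost: y'Qy + 2y'q + u'Ru + 2u'r + 2y'Wu (tilde versions when stacked).
    Cost-to-go: y'Py + 2y'p.  Returns (P_new, p_new, G, g), u_tilde = G y + g.
    """
    H = R_tilde + B_tilde.T @ P @ B_tilde
    L = B_tilde.T @ P @ A + W_tilde.T        # coefficient on y in the FOC
    m = B_tilde.T @ p + r_tilde              # constant term in the FOC
    G = -np.linalg.solve(H, L)
    g = -np.linalg.solve(H, m)
    P_new = Q + A.T @ P @ A + L.T @ G        # = Q + A'PA - L'H^{-1}L
    p_new = q + A.T @ p + L.T @ g            # = q + A'p - L'H^{-1}m
    return P_new, p_new, G, g


def stack_robust(B, C, R, W, r, theta):
    """Hypothetical stacking for u_tilde = (u', w')':
    B~ = [B C], R~ = diag(R, -theta I), W~ = [W 0], r~ = (r', 0')'."""
    n, k = B.shape
    l = C.shape[1]
    B_tilde = np.hstack([B, C])
    R_tilde = np.block([[R, np.zeros((k, l))],
                        [np.zeros((l, k)), -theta * np.eye(l)]])
    W_tilde = np.hstack([W, np.zeros((n, l))])
    r_tilde = np.concatenate([r, np.zeros(l)])
    return B_tilde, R_tilde, W_tilde, r_tilde


rng = np.random.default_rng(0)
n, k, l = 2, 1, 1
A, B = rng.normal(size=(n, n)), rng.normal(size=(n, k))
Q, R, W = np.eye(n), np.eye(k), 0.1 * rng.normal(size=(n, k))
y_d, u_d = np.ones(n), np.zeros(k)                    # illustrative targets
q, r = -(Q @ y_d + W @ u_d), -(R @ u_d + W.T @ y_d)  # as for q_t and r_t
P, p = np.eye(n), np.zeros(n)

# Standard tracking step, without any shock ...
P_std, p_std, G_std, g_std = tracking_riccati_step(A, B, Q, R, W, q, r, P, p)
# ... and the stacked step with C = 0, so the shock never enters the model.
B_tl, R_tl, W_tl, r_tl = stack_robust(B, np.zeros((n, l)), R, W, r, theta=5.0)
P_rob, p_rob, G_rob, g_rob = tracking_riccati_step(A, B_tl, Q, R_tl, W_tl, q, r_tl, P, p)
print(np.allclose(P_rob, P_std), np.allclose(p_rob, p_std))  # True True
```

With \(\mathbf{C} = \mathbf{0}\) the matrix \(\tilde{\mathbf{R}} + \tilde{\mathbf{B}}'\mathbf{P}\tilde{\mathbf{B}}\) is block diagonal, so the shock rows of the feedback vanish and the decision maker's rows equal the standard tracking control.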

Appendix 2

Proof of Proposition 2

As in Appendix 1, writing the optimal value of (18) as \(-\breve{\mathbf{y}}_t'\mathbf{P}_t\breve{\mathbf{y}}_t - 2\breve{\mathbf{y}}_t'\mathbf{p}_t\), the Bellman equation looks like

with \(\mathbf{P}_{t+1} = \beta\mathbf{P}_t\), \(\mathbf{Q}_t = \beta^t\mathbf{Q}\), \(\mathbf{R}_t = \beta^t\mathbf{R}\), \(\mathbf{E}_t^* = \beta^t\mathbf{E}^*\), \(\boldsymbol{\Delta}_{\boldsymbol{\omega},t}(\boldsymbol{\Delta}_t) = \beta^t\boldsymbol{\Delta}_{\boldsymbol{\omega}}(\boldsymbol{\Delta}_t)\), \(\mathbf{W}_t = \beta^t\mathbf{W}\), \(\mathbf{M}_t^* = \beta^t\mathbf{M}^*\), \(\mathbf{q}_t = -(\mathbf{Q}_t\breve{\mathbf{y}}_t^d + \mathbf{W}_t\mathbf{u}_t^d)\) and \(\mathbf{r}_t = -(\mathbf{R}_t\mathbf{u}_t^d + \mathbf{W}_t'\breve{\mathbf{y}}_t^d)\). Expressing the right-hand side of (52) only in terms of \(\breve{\mathbf{y}}_t\) and \(\tilde{\mathbf{u}}_t\) and extremizing it then yields the optimal control for the decision maker, i.e.

and the optimal control for the malevolent nature

with \(\boldsymbol{\Theta}_t = -[\boldsymbol{\Delta}_{\boldsymbol{\omega},t}(\boldsymbol{\Delta}_t) + \breve{\mathbf{C}}(\boldsymbol{\Delta}_t)'\mathbf{P}_{t+1}\breve{\mathbf{C}}(\boldsymbol{\Delta}_t)]\). Substituting (54) into (53) yields the result in Proposition 2.

As in the proof of Proposition 1, the first-order conditions for problem (18) subject to (19) imply the matrix Riccati equation

where \(\tilde{\mathbf{R}}_t(\boldsymbol{\Delta}_t) = \beta^t\tilde{\mathbf{R}}(\boldsymbol{\Delta}_t)\) and \(\tilde{\mathbf{W}}_t = \beta^t\tilde{\mathbf{W}}\). The Riccati equation for the \(\mathbf{p}\) vector looks likeFootnote 74

Equations (55) and (56) both reduce to the usual Riccati equations of the linear-quadratic tracking control problem when \(\boldsymbol{\omega}_t = \mathbf{0}\).

Appendix 3

Proof of Proposition 3

By replacing \(\mathbf{A}_1\mathbf{y}_t + \mathbf{C}\boldsymbol{\alpha}_{t+1}\) with \(\mathbf{A}\mathbf{y}_t + \mathbf{C}\boldsymbol{\nu}_{t+1}\) in (27), treating the vector of stochastic components \(\boldsymbol{\nu}_{t+1}\) as additional state variables, setting \(\boldsymbol{\varepsilon}_{t+1}^* = \mathbf{0}\) and using the deterministic counterpart to (25)–(28), namely

with

the optimal value of (25) can be written as \(- {\mathbf{z^{\prime}}}_{t} {\mathbf{K}}_{t} {\mathbf{z}}_{t} - 2{\mathbf{z^{\prime}}}_{t} {\mathbf{k}}_{t}\) and it satisfies the Bellman equation

with \(\mathbf{K}_{t+1} = \beta\mathbf{K}_t\). Again, expressing the right-hand side of (59) only in terms of \(\mathbf{z}_t\) and \(\mathbf{u}_t\) and maximizing it yields the optimal control in the presence of time-varying parameters (or TVP-control), i.e.Footnote 75

The matrices \(\mathbf{K}_{11}\) and \(\mathbf{K}_{12}\) in (60) denote the \(n \times n\) North-West block and the \(n \times l\) North-East block, respectively, of the Riccati matrix

Consequently, they are defined asFootnote 76

and

It is apparent from (62b) that, even when \(\mathbf{K}_{12,t+1}\) is zero, it suffices that \(\mathbf{K}_{11,t+1}\) be nonzero, a condition typically met, for \(\mathbf{K}_{12,t}\) to be nonzero. Hence, even when the terminal condition for \(\mathbf{K}_{12}\) is a null matrix, this block does not vanish in any period of the planning horizon except the last one. Consequently, only the last control, namely the control applied at the ‘final period minus 1’ of the planning horizon, is independent of the transition matrix characterizing the time-varying parameters.
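This mechanism can be reproduced on a toy problem. The sketch below does not reproduce the paper's equations (57)–(62); it assumes a hypothetical undiscounted regulator (no tracking terms) in which \(\mathbf{y}_{t+1} = \mathbf{A}\mathbf{y}_t + \mathbf{B}\mathbf{u}_t + \mathbf{C}\boldsymbol{\nu}_{t+1}\) and, in the deterministic counterpart, \(\boldsymbol{\nu}_{t+2} = \boldsymbol{\Phi}\boldsymbol{\nu}_{t+1}\), with \(\boldsymbol{\Phi}\) standing in for the transition matrix. Stacking \(\mathbf{z}_t = (\mathbf{y}_t' \;\; \boldsymbol{\nu}_{t+1}')'\) gives a Riccati matrix with the North-West and North-East blocks discussed above, and a terminal condition with \(\mathbf{K}_{12} = \mathbf{0}\).

```python
import numpy as np


def tvp_feedback_gains(A, B, C, Phi, Q, R, T):
    """Finite-horizon LQ gains for the augmented state z_t = (y_t', nu_{t+1}')'.

    Assumed (hypothetical) deterministic law of motion:
        y_{t+1} = A y_t + B u_t + C nu_{t+1},   nu_{t+2} = Phi nu_{t+1},
    i.e. z_{t+1} = F z_t + G u_t with F = [[A, C], [0, Phi]], G = [[B], [0]].
    Stage cost y'Qy + u'Ru; terminal K_T = diag(Q, 0), so K12 = 0 at T.
    Returns [L_0, ..., L_{T-1}] with u_t = -L_t z_t.
    """
    n, k = B.shape
    l = C.shape[1]
    F = np.block([[A, C], [np.zeros((l, n)), Phi]])
    G = np.vstack([B, np.zeros((l, k))])
    Qbar = np.zeros((n + l, n + l))
    Qbar[:n, :n] = Q                                  # only y is penalized
    K = Qbar.copy()                                   # terminal matrix K_T
    gains = []
    for _ in range(T):                                # t = T-1, T-2, ..., 0
        gain = np.linalg.solve(R + G.T @ K @ G, G.T @ K @ F)
        gains.append(gain)
        K = Qbar + F.T @ K @ F - F.T @ K @ G @ gain   # K_t from K_{t+1}
    return gains[::-1]                                # gains[t] is L_t


A, B, C = np.array([[0.9]]), np.array([[1.0]]), np.array([[1.0]])
Q, R = np.array([[1.0]]), np.array([[1.0]])
g_a = tvp_feedback_gains(A, B, C, np.array([[0.5]]), Q, R, T=5)
g_b = tvp_feedback_gains(A, B, C, np.array([[0.9]]), Q, R, T=5)
print(g_a[-1])                         # ≈ [[0.45, 0.5]] for either Phi
print(np.allclose(g_a[-1], g_b[-1]))   # True: last control ignores Phi
print(np.allclose(g_a[-2], g_b[-2]))   # False: K12 is nonzero one step earlier
```

In this toy setup the feedback on \(\boldsymbol{\nu}\) is \((\mathbf{R} + \mathbf{B}'\mathbf{K}_{11}\mathbf{B})^{-1}\mathbf{B}'(\mathbf{K}_{11}\mathbf{C} + \mathbf{K}_{12}\boldsymbol{\Phi})\), so \(\boldsymbol{\Phi}\) matters only through \(\mathbf{K}_{12,t+1}\), which is zero only at the terminal date.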

The optimal control (60) is independent of the parameter \(\theta\), which enters the \(l \times l\) South-East block of \(\mathbf{K}\), namely

However, it depends on the vector \(\mathbf{k}_t\), which can be partitioned as \(\mathbf{k}_t = (\mathbf{k}_{1,t}' \;\; \mathbf{k}_{2,t}')'\) with

When \(\boldsymbol{\nu}_{t+1} \equiv \boldsymbol{\omega}_{t+1}\), i.e. the same shock is used to determine both robust control and TVP-control, the latter is as defined in Proposition 3.

Appendix 4

Proof of Proposition 4

Proceeding as in the proof of Proposition 3 it follows that for this problem the TVP-control looks like

Setting \(\boldsymbol{\nu}_{t+1} \equiv (\boldsymbol{\omega}_{1,t}' \;\; \boldsymbol{\omega}_{2,t}')'\), with \(\boldsymbol{\omega}_{1,t}\) and \(\boldsymbol{\omega}_{2,t}\) as in Sect. 3, then yields Proposition 4.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Tucci, M.P. How Robust is Robust Control in Discrete Time?. Comput Econ 58, 279–309 (2021). https://doi.org/10.1007/s10614-020-10027-z
