Abstract

This paper provides a theoretical and numerical investigation of a penalty decomposition scheme for the solution of optimization problems with geometric constraints. In particular, we consider some situations where parts of the constraints are nonconvex and complicated, like cardinality constraints, disjunctive programs, or matrix problems involving rank constraints. By a variable duplication and decomposition strategy, the method presented here explicitly handles these difficult constraints, thus generating iterates which are feasible with respect to them, while the remaining (standard and supposedly simple) constraints are tackled by sequential penalization. Inexact optimization steps are proven sufficient for the resulting algorithm to work, so that it is employable even with difficult objective functions. The current work is therefore a significant generalization of existing papers on penalty decomposition methods. On the other hand, it is related to some recent publications which use an augmented Lagrangian idea to solve optimization problems with geometric constraints. Compared to these methods, the decomposition idea is shown to be numerically superior since it allows much more freedom in the choice of the subproblem solver, and since the number of certain (possibly expensive) projection steps is significantly smaller. Extensive numerical results on several highly complicated classes of optimization problems in vector and matrix spaces indicate that the current method is indeed very efficient at solving these problems.

1 Introduction

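We consider the program

$$\begin{aligned} \min _{x} \ f(x) \quad \text {s.t.} \quad G(x) \in C, \ x \in D, \end{aligned} \tag{1.1}$$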

where \( f: {\mathbb {X}} \rightarrow {\mathbb {R}} \) and \( G: {\mathbb {X}} \rightarrow {\mathbb {Y}} \) are continuously differentiable mappings, \( {\mathbb {X}} \) and \( {\mathbb {Y}} \) are Euclidean spaces, i.e., real and finite-dimensional Hilbert spaces, \( C \subseteq {\mathbb {Y}} \) is nonempty, closed, and convex, whereas \( D \subseteq {\mathbb {X}} \) is only assumed to be nonempty and closed (not necessarily convex), representing a possibly complicated set, for which, however, a projection operation is accessible.

This very general setting (analyzed, e.g., in [1]) covers standard nonlinear programming problems with convex constraints, but also difficult disjunctive programming problems [2,3,4,5], e.g., complementarity [6], vanishing [7], switching [8] and cardinality constrained [9, 10] problems. Matrix optimization problems such as low-rank approximation [11, 12] are also captured by our setting.

In recent years, many studies have dealt with problems of this structure, where the feasible set is the intersection of a collection of analytical constraints and a complicated, irregular set that is manageable, for example, by easy projections. In particular, approaches based on decomposition and sequential penalty or augmented Lagrangian methods have been proposed for the convex case [13], the cardinality constrained case [10, 14] and the low-rank approximation case [15]. The recurrent idea in all these works is to apply the variable splitting technique [16, 17], then to define a penalty function associated with the differentiable constraints and the additional equality constraint linking the two blocks of variables, and finally to solve the problem by a sequential penalty method. The optimization of the penalty function is carried out by a two-block alternating minimization scheme [18], which can be run in an exact [14, 15] or inexact [10, 13] fashion.

The aim of this work is to extend the inexact Penalty Decomposition approach to the general setting (1.1) in such a way that it can deal with arbitrary abstract constraints D (at least theoretically; in practice, D needs to be such that projections onto this set are easy to compute) and that it allows additional (seemingly simple) constraints given by \( G(x) \in C \). This setting is related to some recent work on (safeguarded) augmented Lagrangian methods, see, in particular, [1], where the resulting subproblems are solved by a projected gradient-type method, which might be inefficient especially for ill-conditioned problems. The decomposition idea used here allows a much wider choice of subproblem solvers, usually resulting in a more efficient solver of the given optimization problem (1.1).

The paper is organized as follows: Sect. 2 summarizes some preliminary concepts and results. In particular, we recall the definitions of an M-stationary point (the counterpart of a KKT point for the general setting from (1.1)), of an AM-stationary point (as a sequential version of M-stationarity) and of an AM-regular point (this being a suitable and relatively weak constraint qualification). Section 3 then presents the Penalty Decomposition (PD) method together with a global convergence theory, assuming that the resulting subproblems can be solved inexactly up to a certain degree. In Sect. 4, we then present a class of inexact alternating minimization methods [18, 19] which, under certain assumptions, are guaranteed to find the desired approximate solution of the subproblems arising in the outer penalty scheme. The remaining part of the paper is then devoted to the implementation of the overall method and corresponding numerical results. To this end, Sect. 5 first discusses several instances of the general setting (1.1) with difficult constraints D to which our method can be applied quite efficiently since projections onto D are simple and/or known analytically (though the latter does not necessarily imply that these projections are easy to compute numerically). In Sect. 6, we then present the results of extensive numerical testing, where we also compare our method, using different realizations, with the augmented Lagrangian method from [1]. We conclude with some final remarks in Sect. 7.

2 Preliminaries

The Euclidean projection \( P_C: {\mathbb {Y}} \rightarrow {\mathbb {Y}} \) onto the nonempty, closed, and convex set C is defined by

$$\begin{aligned} P_C(y) := \text {argmin}_{z \in C} \Vert z - y \Vert . \end{aligned}$$

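The corresponding distance function \( d_C: {\mathbb {Y}} \rightarrow {\mathbb {R}} \) can then be written as

$$\begin{aligned} d_C(y) := \min _{z \in C} \Vert z - y \Vert = \Vert y - P_C(y) \Vert . \end{aligned}$$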

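Note that the distance function is nonsmooth (in general), but the squared distance function

$$\begin{aligned} y \mapsto \frac{1}{2} d_C^2(y) \end{aligned}$$

is continuously differentiable everywhere with derivative given by

$$\begin{aligned} \nabla \Big ( \frac{1}{2} d_C^2 \Big ) (y) = y - P_C(y), \end{aligned} \tag{2.1}$$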

see [20, Cor. 12.30]. Moreover, projections onto the nonempty and closed set D also exist, but are not necessarily unique. Therefore, we define the (usually set-valued) projection operator \( \Pi _D: {\mathbb {X}} \rightrightarrows {\mathbb {X}} \) by

$$\begin{aligned} \Pi _D(x) := \text {argmin}_{z \in D} \Vert z - x \Vert . \end{aligned}$$

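The corresponding distance function \( \text {dist}_D ( \cdot ) \) is, of course, single-valued again. Furthermore, given a set-valued mapping \( S: {\mathbb {X}} \rightrightarrows {\mathbb {X}} \) on an arbitrary Euclidean space \( {\mathbb {X}} \), we define the outer limit of S at a point \( {\bar{x}} \) by

$$\begin{aligned} \limsup \limits _{x \rightarrow {\bar{x}}} S(x) := \big \{ y \in {\mathbb {X}} \mid \exists \{ x^k \} \rightarrow {\bar{x}}, \ \exists \{ y^k \} \rightarrow y: \ y^k \in S(x^k) \ \forall k \in {\mathbb {N}} \big \}. \end{aligned}$$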

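This allows us to define the limiting normal cone at a point \( x \in D \) by

$$\begin{aligned} \mathcal {N}_D^{\lim } (x) := \limsup \limits _{v \rightarrow x} \, \text {cone} \big ( v - \Pi _D(v) \big ), \end{aligned}$$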

see [21, Sect. 1.1] for further details. Writing

$$\begin{aligned} x^k \rightarrow _D {\bar{x}} \end{aligned}$$

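for sequences converging to an element \( {\bar{x}} \in D \) such that the whole sequence belongs to D, the limiting normal cone has the important robustness property

$$\begin{aligned} \limsup \limits _{x \rightarrow _D {\bar{x}}} \mathcal {N}_D^{\lim } (x) = \mathcal {N}_D^{\lim } ({\bar{x}}) \end{aligned} \tag{2.2}$$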

that will be exploited heavily in our subsequent analysis, see [21, Prop. 1.3].

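Note that, for D being convex, this limiting normal cone reduces to the standard normal cone from convex analysis, i.e., we have

$$\begin{aligned} \mathcal {N}_D^{\lim } ({\bar{x}}) = \mathcal {N}_D ({\bar{x}}) := \big \{ \lambda \in {\mathbb {X}} \mid \langle \lambda , x - {\bar{x}} \rangle \le 0 \ \ \forall x \in D \big \} \end{aligned}$$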

for any given \( {\bar{x}} \in D \). For points \( {\bar{x}} \not \in D \), we set \( \mathcal {N}_D^{\lim } ( {\bar{x}} ) := \mathcal {N}_D ( {\bar{x}} ) := \emptyset \). For the convex set C, the standard normal cone and the projection operator are related by

$$\begin{aligned} p = P_C(y) \quad \Longleftrightarrow \quad y - p \in \mathcal {N}_C (p), \end{aligned} \tag{2.3}$$

see [20, Prop. 6.46].
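The derivative formula for the squared distance function, \( \nabla ( \frac{1}{2} d_C^2 ) (y) = y - P_C(y) \), can be checked numerically. The following is a minimal sketch under the assumption that C is the box \( [0,1]^n \) (chosen only because its projection is a componentwise clip); all function names are illustrative and not taken from the paper.

```python
def project_box(y, lo, hi):
    """Euclidean projection onto the box C = [lo, hi]^n (closed and convex)."""
    return [min(max(yi, lo), hi) for yi in y]

def half_sq_dist(y, lo, hi):
    """Value of (1/2) * d_C(y)^2 for the box C."""
    p = project_box(y, lo, hi)
    return 0.5 * sum((yi - pi) ** 2 for yi, pi in zip(y, p))

def grad_half_sq_dist(y, lo, hi):
    """Closed-form gradient y - P_C(y) of the squared distance function."""
    p = project_box(y, lo, hi)
    return [yi - pi for yi, pi in zip(y, p)]

def finite_difference_grad(fun, y, h=1e-6):
    """Central finite differences, used only as a sanity check."""
    g = []
    for i in range(len(y)):
        y_plus = list(y)
        y_plus[i] += h
        y_minus = list(y)
        y_minus[i] -= h
        g.append((fun(y_plus) - fun(y_minus)) / (2.0 * h))
    return g

y = [2.0, -0.5, 0.3]
analytic = grad_half_sq_dist(y, 0.0, 1.0)
numeric = finite_difference_grad(lambda v: half_sq_dist(v, 0.0, 1.0), y)
print(analytic)
print(numeric)
```

The two gradients agree up to finite-difference error, including at the third component, where the point lies inside C and the gradient vanishes.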

We next introduce a stationarity condition which generalizes the concept of a KKT point to constrained optimization problems with possibly difficult constraints as given by the set D in our setting (1.1), see [22] for a general discussion and, e.g., [1] for a realization of the specific setting considered here.

Definition 2.1

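A feasible point \( {\bar{x}} \in {\mathbb {X}} \) of the optimization problem (1.1) is called an M-stationary point (Mordukhovich-stationary point) of (1.1) if there exists a multiplier \( \lambda \in {\mathbb {Y}} \) such that

$$\begin{aligned} 0 \in \nabla f({\bar{x}}) + G'({\bar{x}})^* \lambda + \mathcal {N}_D^{\lim } ({\bar{x}}), \qquad \lambda \in \mathcal {N}_C \big ( G({\bar{x}}) \big ). \end{aligned}$$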

Note that this definition coincides with the one of a KKT point if D is convex. The following is a sequential version of M-stationarity.

Definition 2.2

A feasible point \( {\bar{x}} \in \mathbb {X} \) of the optimization problem (1.1) is called an AM-stationary point (asymptotically M-stationary point) of (1.1) if there exist sequences \( \{ x^k \}, \{ \varepsilon ^k \} \subseteq {\mathbb {X}} \) and \( \{ \lambda ^k \}, \{ z^k \} \subseteq {\mathbb {Y}} \) such that \( x^k \rightarrow {\bar{x}} \), \( \varepsilon ^k \rightarrow 0 \), \( z^k \rightarrow 0 \), as well as

$$\begin{aligned} \varepsilon ^k \in \nabla f(x^k) + G'(x^k)^* \lambda ^k + \mathcal {N}_D^{\lim } (x^k), \qquad \lambda ^k \in \mathcal {N}_C \big ( G(x^k) - z^k \big ) \end{aligned}$$

for all \( k \in {\mathbb {N}} \).

We stress that this definition implicitly includes that the sequences \( \{ x^k \} \) and \( \{ G(x^k) - z^k \} \) belong to the sets D and C, respectively, since otherwise the corresponding normal cones would be empty. We also note that the previous definition generalizes the related concept of AKKT points introduced for standard nonlinear programs in [23] to our setting with the more difficult constraints. In a similar way, the subsequent regularity conditions are also motivated by related ones from [24], where they were presented for standard nonlinear programs. Their generalizations to our setting can be found in [1, 22], for example.

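Every M-stationary point is obviously also AM-stationary, whereas the opposite implication will be guaranteed to hold by a regularity condition that will now be introduced. To this end, let us write

$$\begin{aligned} \mathcal {M} (x,z) := G'(x)^* \mathcal {N}_C \big ( G(x) - z \big ) + \mathcal {N}_D^{\lim } (x). \end{aligned}$$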

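Recall that \( \mathcal {N}_D^{\lim } (x) \) is nonempty if and only if \( x \in D \), which is therefore an implicit requirement for the set \( \mathcal {M} (x,z) \) to be nonempty. Moreover, we consider the set

$$\begin{aligned} \limsup \limits _{x \rightarrow {\bar{x}}, z \rightarrow 0} \mathcal {M} (x,z) = \big \{ w \in {\mathbb {X}} \mid \exists \{ (x^k, z^k, w^k) \}: \ x^k \rightarrow {\bar{x}}, \ z^k \rightarrow 0, \ w^k \rightarrow w, \ w^k \in \mathcal {M} (x^k, z^k) \ \forall k \in {\mathbb {N}} \big \}. \end{aligned}$$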

Note that the auxiliary sequence \( \{ z^k \} \) needs to be introduced since the elements \( G(x^k) \) do not necessarily belong to C, whereas \( x^k \) is supposed to be an element of D.

Definition 2.3

Let \( {\bar{x}} \) be feasible for (1.1). Then \( {\bar{x}} \) is called AM-regular for (1.1) if \( \limsup \limits _{x \rightarrow {\bar{x}}, z \rightarrow 0} \mathcal {M} (x,z) \subseteq \mathcal {M} ({\bar{x}}, 0). \)

Using this terminology, the following statements hold, cf. [22] for further details.

Theorem 2.4

The following statements hold:

(a) Every local minimum of (1.1) is an AM-stationary point.

(b) If \( {\bar{x}} \) is an AM-stationary point satisfying AM-regularity, then \( {\bar{x}} \) is an M-stationary point of (1.1).

(c) Conversely, if for every continuously differentiable function f, the implication

$$\begin{aligned} {\bar{x}} \, \hbox {is an AM-stationary point} \, \Longrightarrow {\bar{x}} \,\hbox { is an M-stationary point} \end{aligned}$$

holds for the corresponding optimization problem (1.1), then \( {\bar{x}} \) is AM-regular.

Statement (a) shows that every local minimum of (1.1) is an AM-stationary point even in the absence of any constraint qualification (CQ for short). Hence AM-stationarity is a (sequential) first-order optimality condition. In order to guarantee that an AM-stationary point is already an M-stationary point (hence a KKT point in the standard setting of a nonlinear program, say), we require a CQ, namely the AM-regularity condition, cf. Theorem 2.4 (b). The final statement (c) of that result shows that, in a certain sense, AM-regularity is the weakest CQ which implies AM-stationary points to be M-stationary. In fact, this AM-regularity condition turns out to be a fairly weak condition. For example, for standard nonlinear programs, AM-regularity is stronger than the Abadie CQ, but weaker than most of the other well-known CQs like MFCQ (Mangasarian-Fromovitz CQ), CRCQ (constant rank CQ), CPLD (constant positive linear dependence), and RCPLD (relaxed CPLD), to mention at least some of the more prominent ones. We refer the interested reader to [22, 25] and references therein for further details.

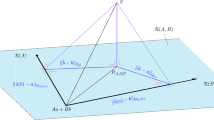

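The algorithm, to be described in the following section, is based on the reformulation

$$\begin{aligned} \min _{x,y} \ f(x) \quad \text {s.t.} \quad G(x) \in C, \ x - y = 0, \ y \in D \end{aligned} \tag{2.4}$$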

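of the given optimization problem (1.1). The previous notions of M- and AM-stationarity and AM-regularity can be directly translated to this program by observing that (2.4) can be written in the format of (1.1) as

$$\begin{aligned} \min _{{\tilde{x}}} \ {\tilde{f}} ({\tilde{x}}) \quad \text {s.t.} \quad {\tilde{G}} ({\tilde{x}}) \in {\tilde{C}}, \ {\tilde{x}} \in {\tilde{D}} \qquad \text {with} \quad {\tilde{x}} := (x,y), \ {\tilde{f}} ({\tilde{x}}) := f(x), \ {\tilde{G}} ({\tilde{x}}) := \big ( G(x), x - y \big ), \ {\tilde{C}} := C \times \{ 0 \}, \ {\tilde{D}} := {\mathbb {X}} \times D. \end{aligned}$$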

The counterpart of Theorem 2.4 then also holds for the corresponding (asymptotic) M-stationarity and regularity concepts defined for the formulation (2.4). Note that regularity conditions and constraint qualifications depend on the explicit formulation of a constraint system, hence the corresponding concepts are not necessarily equivalent for the two formulations of our program. We stress, however, that the important notion of an M-stationary point for (2.4) is equivalent to the notion of an M-stationary point for (1.1).

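For the sake of completeness, and since this condition will be used explicitly in our convergence analysis, let us state the resulting AM-stationarity condition for the reformulated program (2.4): with the above identifications, a feasible point \( {\tilde{x}}^* \) of (2.4) is AM-stationary if there exist sequences \( \{ {\tilde{x}}^k \} \), \( \{ {\tilde{\varepsilon }}^k \} \), \( \{ {\tilde{\lambda }}^k \} \), and \( \{ {\tilde{z}}^k \} \) such that \( {\tilde{x}}^k \rightarrow {\tilde{x}}^* \), \( {\tilde{z}}^k \rightarrow 0 \), \( {\tilde{\varepsilon }}^k \rightarrow 0 \) as well as

$$\begin{aligned} {\tilde{\varepsilon }}^k \in \nabla {\tilde{f}} ({\tilde{x}}^k) + {\tilde{G}}' ({\tilde{x}}^k)^* {\tilde{\lambda }}^k + \mathcal {N}_{{\tilde{D}}}^{\lim } ({\tilde{x}}^k), \qquad {\tilde{\lambda }}^k \in \mathcal {N}_{{\tilde{C}}} \big ( {\tilde{G}} ({\tilde{x}}^k) - {\tilde{z}}^k \big ) \end{aligned} \tag{2.5}$$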

for all k. Using the definitions of \( {\tilde{f}}, {\tilde{G}} \) etc., exploiting standard properties of the limiting and standard normal cones (in particular, the Cartesian product rule, cf. [26, Prop. 6.41]), and writing \( {\tilde{x}}^k =: (x^k,y^k), {\tilde{\lambda }}^k =: ( \lambda ^k, \mu ^k) \) as well as \( z^k \) for the first block of \( {\tilde{z}}^k \) (the second block component of \( {\tilde{z}}^k \) turns out to be irrelevant), we see that the two conditions from (2.5) can be rewritten as

3 Algorithm and convergence

The algorithm to be presented here is based on the reformulation (2.4) of the given program (1.1). The idea is to take advantage of the fact that the constraints \( G(x) \in C \) and \( y \in D \) occur in a decomposed way. This formulation allows us to develop an alternating direction-type penalty scheme for the solution of the original problem (1.1). To this end, let \( \tau > 0 \) be a penalty parameter and define the partial penalty function

$$\begin{aligned} q_{\tau } (x,y) := f(x) + \frac{\tau }{2} \text {dist}_C^2 \big ( G(x) \big ) + \frac{\tau }{2} \Vert x - y \Vert ^2. \end{aligned} \tag{3.1}$$

Note that \( q_{\tau } \) does not include the potentially difficult constraint \( x \in D \), which we therefore have to deal with explicitly. The general algorithmic scheme that we will investigate here is summarized in Algorithm 1.

Note that Algorithm 1 is a very general scheme for the solution of the reformulated problem (2.4). The main computational burden is in step (S.2). We will see how this step can be realized by an alternating minimization-type iteration in Sect. 4. Here we only note that the computation of the exact minimizer \( y^{k+1} = \text {argmin}_{y \in D} \ q_{\tau _k} (x^{k+1},y) \) can be carried out very easily if projections onto the set D can be computed efficiently (we refer the reader to Sects. 5, 6 for some examples). This follows immediately from the definition of \( q_{\tau _k} \), which implies that \( y^{k+1} \) is characterized by

$$\begin{aligned} y^{k+1} \in \Pi _D ( x^{k+1} ). \end{aligned} \tag{3.4}$$
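The reduction of the y-update to a projection can be illustrated on a small instance. The sketch below assumes that the partial penalty function has the quadratic form \( q_{\tau }(x,y) = f(x) + \frac{\tau }{2} \text {dist}_C^2 ( G(x) ) + \frac{\tau }{2} \Vert x - y \Vert ^2 \) (consistent with the way \( q_{\tau } \) is used in the proof of Lemma 4.2). The toy data (a linear f, G the identity, C the nonnegative orthant, D the set of vectors with at most one nonzero entry) and all function names are hypothetical choices made only for illustration.

```python
def sq_norm(v):
    return sum(vi * vi for vi in v)

def penalty_value(f, G, project_C, x, y, tau):
    """Assumed form: f(x) + tau/2 * dist_C(G(x))^2 + tau/2 * ||x - y||^2."""
    g = G(x)
    residual = [gi - pi for gi, pi in zip(g, project_C(g))]
    gap = [xi - yi for xi, yi in zip(x, y)]
    return f(x) + 0.5 * tau * sq_norm(residual) + 0.5 * tau * sq_norm(gap)

def project_at_most_one_nonzero(x):
    """A projection onto D = {y : at most one nonzero entry} (first index on ties)."""
    j = max(range(len(x)), key=lambda i: abs(x[i]))
    return [xi if i == j else 0.0 for i, xi in enumerate(x)]

# Toy data (assumptions for illustration only).
f = lambda x: sum(x)
G = lambda x: list(x)
project_C = lambda z: [max(zi, 0.0) for zi in z]

x = [1.0, -2.0, 0.5]
tau = 10.0

# Only the term tau/2 * ||x - y||^2 depends on y, so minimizing over D is a projection of x.
y_best = project_at_most_one_nonzero(x)
y_other = [1.0, 0.0, 0.0]  # another point of D, for comparison

q_best = penalty_value(f, G, project_C, x, y_best, tau)
q_other = penalty_value(f, G, project_C, x, y_other, tau)
print(y_best, q_best, q_other)
```

Note that the minimizer over D does not depend on the value of \( \tau \), only on x, which is why the y-update costs a single projection.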

The remaining part of this section is devoted to the global convergence properties of the general scheme from Algorithm 1. The proof technique follows the one used in [1] for an augmented Lagrangian method.

We begin with a feasibility-type result. To this end, recall that all penalty-type methods suffer from the fact that accumulation points may not be feasible for the given optimization problem. The following result shows that such an accumulation point still has a very nice property.

Proposition 3.1

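Let \( \{ (x^k,y^k) \} \) be a sequence generated by Algorithm 1 with \( \{ \delta _k \} \) being bounded and \( \{ \tau _k \} \rightarrow \infty \). Then every accumulation point \( ({\bar{x}}, {\bar{y}}) \) of the sequence \( \{ (x^k,y^k) \} \) is an M-stationary point of the feasibility problem

$$\begin{aligned} \min _{x,y} \ \frac{1}{2} \text {dist}_C^2 \big ( G(x) \big ) + \frac{1}{2} \Vert x - y \Vert ^2 \quad \text {s.t.} \quad y \in D. \end{aligned} \tag{3.5}$$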

Proof

Let \( \{ (x^{k+1}, y^{k+1}) \}_K \) be a subsequence converging to \( ({\bar{x}}, {\bar{y}}) \). Using the derivative formula of the distance function from (2.1) together with the chain rule, we have, by construction,

and

for all \( k \in {\mathbb {N}} \). Dividing the first equation by \( \tau _k \) and exploiting the cone property in the second inclusion yields

and

for all \( k \in \mathbb {N} \), cf. [26, Thm. 6.12]. Taking the limit \( k \rightarrow _K \infty \), using the continuity of \( \nabla f, G, G', P_C \), and the robustness property (2.2) of the limiting normal cone, we obtain

This shows that \( ( {\bar{x}}, {\bar{y}} ) \) is an M-stationary point of (3.5). \(\square \)

Recall that Algorithm 1 automatically generates iterates \( y^k \) which belong to the set D. The objective function in (3.5) therefore only measures the violation of the constraints \( G(x) \in C \) and \( x - y = 0 \), which is included in the penalty term of \( q_{\tau } \), i.e., (3.5) is a feasibility problem of the decomposed problem (2.4). If \( {\bar{x}} = {\bar{y}} \), then \( {\bar{x}} \) turns out to be an M-stationary point of

$$\begin{aligned} \min _{x} \ \frac{1}{2} \text {dist}_C^2 \big ( G(x) \big ) \quad \text {s.t.} \quad x \in D, \end{aligned}$$

which is the feasibility problem of the original problem (1.1). Though Proposition 3.1 obviously does not guarantee that an accumulation point is feasible (either for the original or the decomposed formulation), it guarantees at least a stationarity property, which is the best one can expect in general. Moreover, if the feasible set of (1.1) is nonempty and the function \( \frac{1}{2} \text {dist}_C^2 \big ( G(x) \big ) \) of (3.5) is convex, then every M-stationary point is a global minimum and, hence, a feasible point of (1.1) or (2.4). Note that the square of the above distance function is automatically convex if the constraint \( G(x) \in C \) satisfies standard conditions which imply that this set is convex by itself.

Moreover, one can also define an extended Robinson-type constraint qualification (which boils down to the extended MFCQ condition for standard nonlinear programs) which automatically implies that accumulation points of a sequence generated by Algorithm 1 are feasible, cf. [27, 28] for further details.

Hence, under reasonable assumptions, we can guarantee that accumulation points are automatically feasible for (1.1) or (2.4), whereas, in general, they are at least M-stationary points for the feasibility problem. The following global convergence result therefore assumes that we have a feasible accumulation point and shows that this one is automatically AM-stationary for problem (2.4).

Theorem 3.2

Let \( \{ (x^k,y^k) \} \) be a sequence generated by Algorithm 1 with \( \{ \delta _k \} \rightarrow 0 \) and \( \{ \tau _k \} \rightarrow \infty \), and let \( ({\bar{x}}, {\bar{y}}) \) be an accumulation point of this sequence that is feasible for (2.4). Then \( ( {\bar{x}}, {\bar{y}}) \) is an AM-stationary point of the optimization problem (2.4).

Proof

Let \( \{ (x^{k+1}, y^{k+1}) \}_K \) be a subsequence converging to \( ({\bar{x}}, {\bar{y}}) \). Recall that \( ( {\bar{x}}, {\bar{y}} ) \) is feasible for (2.4), hence \( G( {\bar{x}} ) \in C \) and \( {\bar{x}} = {\bar{y}} \in D \). We further define the sequences

Then setting

we claim that the corresponding four (sub-) sequences \( \{ {\tilde{x}}^{k+1} \}_K = \{ (x^{k+1}, y^{k+1}) \}_K \), \( \{ {\tilde{z}}^{k+1} \}_K = \{ ( z^{k+1}, 0 ) \}_K \), \( \{ {\tilde{\varepsilon }}^{k+1} \}_K = \{ ( \varepsilon ^{k+1}, 0 ) \}_K \), and \( \{ {\tilde{\lambda }}^{k+1} \}_K = \{ ( \lambda ^{k+1}, \mu ^{k+1} ) \}_K \) satisfy the properties of an AM-stationary point for problem (2.4) as stated at the end of Sect. 2, cf. (2.5) and (2.6). First of all, \( ({\bar{x}}, {\bar{y}}) \) is feasible and \( \{ ( x^{k+1}, y^{k+1} ) \}_K \rightarrow ( {\bar{x}}, {\bar{y}} ) \) by assumption. Furthermore, by definition of \( \varepsilon ^{k+1} \) and the construction of Algorithm 1, we also have

This obviously implies \( \Vert {\tilde{\varepsilon }}^{k+1} \Vert \rightarrow 0 \). Furthermore, the definitions of \( \lambda ^{k+1} \) and \( \mu ^{k+1} \) together with \( 0 \in \tau _k \big ( y^{k+1} - x^{k+1} \big ) + \mathcal {N}_D^{\lim } (y^{k+1}) \) yield

hence the first inclusion in (2.6) holds. To verify the second inclusion, we only have to take a closer look at the first block. By definition of \( \lambda ^{k+1} \) and the relation (2.3) between the projection and the normal cone, we get

where the last identity comes from the definition of \( z^{k+1} \). Finally, we also have \( {\tilde{z}}^{k+1} \rightarrow _K 0 \) since \( z^{k+1} \) satisfies

by the continuity of G and the projection operator \( P_C \) as well as the feasibility of \( {\bar{x}} \). Altogether, this shows that \( ({\bar{x}}, {\bar{y}}) \) is an AM-stationary point of the program (2.4). \(\square \)

Using Theorem 3.2 together with the counterpart of Theorem 2.4 for (2.4) and the fact that the M-stationarity conditions for the two problems (1.1) and (2.4) are equivalent, we directly obtain the following result.

Theorem 3.3

Let \( \{ (x^k,y^k) \} \) be a sequence generated by Algorithm 1 with \( \{ \delta _k \} \rightarrow 0 \) and \( \{ \tau _k \} \rightarrow \infty \), and let \( ({\bar{x}}, {\bar{y}}) \) be an accumulation point of this sequence that is feasible and satisfies AM-regularity for (2.4). Then \( ( {\bar{x}}, {\bar{y}}) \) is an M-stationary point of the optimization problem (2.4), and \( {\bar{x}} \) itself is an M-stationary point of the original problem (1.1).

4 Solution of subproblems by inexact alternating minimization

The Penalty Decomposition approach basically consists in approximately solving the sequence of penalty subproblems at step (S.2) by a two-block decomposition method. The alternating minimization loop can be stopped, at each iteration, as soon as an approximate stationary point of the penalty function w.r.t. the first block of variables x is attained. The instructions of the (inexact) Alternating Minimization loop at a fixed iteration k of the Penalty Decomposition method are detailed in Algorithm 2.

As already pointed out, if we assume that projections onto the set D are easily computable, the update of the second block of variables can be carried out exactly by (3.4).

On the other hand, an exact x-update step (i.e., finding a global minimizer of \(q_{\tau _k}(u,v^\ell )\)) may be prohibitive in most applications. For this reason, the x-variable is only updated by a descent step along a descent direction, with a step size selected by an Armijo-type line search.

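Note that the direction \(d_\ell = -H_\ell (\nabla _x q_{\tau _k}(u^\ell ,v^\ell ))\) is certainly a descent direction, since \(H_\ell \) is positive definite, \(\nabla _xq_{\tau _k}(u^\ell ,v^\ell )\ne 0\) and, thus,

$$\begin{aligned} \langle \nabla _x q_{\tau _k}(u^\ell ,v^\ell ), d_\ell \rangle = - \langle \nabla _x q_{\tau _k}(u^\ell ,v^\ell ), H_\ell \nabla _x q_{\tau _k}(u^\ell ,v^\ell ) \rangle < 0. \end{aligned}$$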

The Armijo line search provides a sufficient decrease granting, under suitable assumptions on the sequence of maps \(H_\ell \), the convergence of the entire alternating minimization scheme.

Note that by properly choosing \(H_\ell \) we can retrieve the descent directions employed in most widely employed nonlinear optimization solvers. This point, which we will emphasize again later on, is particularly relevant from the computational point of view.
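The interplay of the Armijo descent step in the first block and the exact projection step in the second block can be illustrated on a toy instance. The following is only a sketch under stated assumptions, not a reproduction of Algorithms 1 and 2: it assumes \( f(x) = \frac{1}{2} \Vert x - a \Vert ^2 \), no constraint \( G(x) \in C \), the sparse set \( D = \{ x \mid \Vert x \Vert _0 \le s \} \), and \( H_\ell = I \). The parameter values (\( \beta = 0.5 \), \( \gamma = 10^{-4} \), growth factor 10 for \( \tau \), reduction factor 0.2 for \( \delta \)), the iteration caps, and all function names are hypothetical choices made for illustration.

```python
import math

def project_sparse(x, s):
    """A Euclidean projection onto D = {x : ||x||_0 <= s} (keep s largest entries)."""
    order = sorted(range(len(x)), key=lambda i: (-abs(x[i]), i))
    keep = set(order[:s])
    return [xi if i in keep else 0.0 for i, xi in enumerate(x)]

def make_penalty(a, tau):
    """Toy penalty 0.5*||x - a||^2 + 0.5*tau*||x - y||^2 and its gradient in x."""
    def q(x, y):
        return (0.5 * sum((xi - ai) ** 2 for xi, ai in zip(x, a))
                + 0.5 * tau * sum((xi - yi) ** 2 for xi, yi in zip(x, y)))
    def grad_x(x, y):
        return [(xi - ai) + tau * (xi - yi) for xi, ai, yi in zip(x, a, y)]
    return q, grad_x

def inexact_alternating_minimization(q, grad_x, project_D, u, delta,
                                     beta=0.5, gamma=1e-4, max_iter=100000):
    """Inner loop: Armijo descent step in u, then exact projection step in v."""
    v = project_D(u)
    for _ in range(max_iter):
        g = grad_x(u, v)
        if math.sqrt(sum(gi * gi for gi in g)) <= delta:
            break                                  # approximate stationarity in u
        d = [-gi for gi in g]                      # H = identity (assumed choice)
        slope = sum(gi * di for gi, di in zip(g, d))
        alpha, q_old = 1.0, q(u, v)
        for _ in range(60):                        # backtracking: alpha = beta^j
            trial = [ui + alpha * di for ui, di in zip(u, d)]
            if q(trial, v) <= q_old + gamma * alpha * slope:
                break
            alpha *= beta
        u = trial
        v = project_D(u)                           # exact update of the second block
    return u, v

def penalty_decomposition(a, s, tau=1.0, delta=1.0, outer_iters=4):
    """Outer loop: increase tau, tighten delta, warm-start the inner loop."""
    x = [0.0] * len(a)
    y = project_sparse(x, s)
    for _ in range(outer_iters):
        q, grad_x = make_penalty(a, tau)
        x, y = inexact_alternating_minimization(
            q, grad_x, lambda z: project_sparse(z, s), x, delta)
        tau *= 10.0
        delta *= 0.2
    return x, y

x_final, y_final = penalty_decomposition([3.0, -0.2, 1.5, 0.1], s=2)
print(x_final)
print(y_final)
```

In this run, y_final stays in D throughout and is supported on the two largest entries of a, while the gap between x_final and y_final shrinks as \( \tau \) grows. Replacing the line that sets d by a scaled or quasi-Newton direction changes only the x-update, not the projection step.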

Throughout this section, we make the following assumption.

Assumption 4.1

f(x) has bounded level sets upon \(\mathbb {X}\), i.e., \(\mathcal {L}_f(\eta ) = \{x\in \mathbb {X}\mid f(x)\le \eta \}\) is bounded for any \(\eta \in \mathbb {R}\).

We then begin by proving that, under Assumption 4.1, the penalty function has bounded level sets for any positive value of the penalty parameter \(\tau \).

Lemma 4.2

The penalty function \(q_{\tau }(x,y)\) has bounded level sets for any \(\tau > 0\).

Proof

Consider any \(\eta \in \mathbb {R}\). From Assumption 4.1, the level set \(\mathcal {L}_f(\eta )\) is bounded. Let us consider \(\mathcal {L}_{q_\tau }(\eta )\) for any \(\tau > 0\).

Assume by contradiction that \(\mathcal {L}_{q_\tau }(\eta )\) is not bounded, i.e., there exists \(\{(x^t,y^t)\}\) such that \((x^t,y^t)\in \mathcal {L}_{q_\tau }(\eta )\) for all t and \(\Vert (x^t,y^t)\Vert \rightarrow \infty \). Then, either \(\Vert x^t\Vert \rightarrow \infty \) or \(\Vert y^t\Vert \rightarrow \infty \).

If \(\Vert x^t\Vert \rightarrow \infty \), we have \(f(x^t)>\eta \) for t sufficiently large, being \(\mathcal {L}_f(\eta )\) bounded. But then, from the definition of \(q_{\tau }(x,y)\), we have for t sufficiently large \(q_{\tau }(x^{t},y^t)\ge f(x^t)>\eta \), which contradicts \(\{(x^t,y^t)\}\subseteq \mathcal {L}_{q_\tau }(\eta )\).

Thus, \(\Vert y^t\Vert \rightarrow \infty \) while \(\Vert x^t\Vert \) stays bounded. However,

for t sufficiently large, as \(\Vert x^t-y^t\Vert ^2\rightarrow \infty \), \( \text {dist}_C^2 \big ( G(x^t) \big ) \ge 0 \) and f is bounded from below (being continuous with compact level sets). This again is a contradiction, which completes the proof. \(\square \)

It can be seen that step (S.2) of Algorithm 2 is well-defined, i.e., there exists a finite integer j such that \(\beta ^j\) satisfies the acceptability condition (4.1). Moreover the following result can be readily obtained by standard results in nonlinear optimization [29].

Lemma 4.3

Let \(\{ ( u^\ell ,v^\ell ) \}\) be the sequence generated by Algorithm 2. Let \(T\subseteq \{0,1,2,\ldots \}\) be an infinite subset such that

Let \(\{d^\ell \}\) be a sequence of directions such that \(\langle \nabla _x q_{\tau _k}(u^\ell ,v^\ell ),d^\ell \rangle <0\) and assume that \(\Vert d^\ell \Vert \le M\) for some \(M>0\) and for all \(\ell \in T\). If, for any fixed (outer iteration) k, the following equation holds

then we have

Proof

Since, for any \(\ell \), \(\alpha _\ell \) is chosen according to (4.1), we have

Taking the limits for \(\ell \in T\), \(\ell \rightarrow \infty \), we get

where the last inequality comes from the fact that \(\gamma >0\), \(\alpha _\ell \ge 0\) and \(\langle \nabla _x q_{\tau _k}(u^\ell ,v^\ell ),d^\ell \rangle <0\) by assumption. From the hypotheses, we also have that the leftmost limit goes to 0, hence we obtain

Assume, by contradiction, that \(\langle \nabla _x q_{\tau _k}(u^\ell ,v^\ell ),d^\ell \rangle _T \) does not converge to zero. Passing to a subsequence, if necessary, we may assume that \( \lim _{\ell \rightarrow _T \infty } \langle \nabla _x q_{\tau _k}(u^\ell ,v^\ell ),d^\ell \rangle = - \nu \) for some number \( \nu > 0 \). On the other hand, we have \( (u^\ell ,v^\ell )\rightarrow _T(\bar{u},\bar{v})\) by assumption, and \( \{ d^{\ell } \} \) is bounded, so we may also assume that \( \{ d^{\ell } \}_T \rightarrow {\bar{d}} \) for some limit point \( {\bar{d}} \). Altogether, we then have

Exploiting (4.3), we see that \(\alpha _\ell \rightarrow _{T} 0\) holds. Consequently, for all \(\ell \in T\) sufficiently large, we have \(\alpha _\ell <\beta ^0=1\) and thus

By the mean-value theorem, we can write

for some \(z^\ell =u^\ell +\theta _\ell \frac{\alpha _\ell }{\beta } d^\ell \), \(\theta _\ell \in (0,1)\). Subtracting the last two relations and dividing by \(\alpha _\ell /\beta \), we get

On the other hand,

since \(\alpha _\ell \rightarrow _{T}0\) and \(d^\ell \rightarrow \bar{d}\). Taking the limits in the previous inequality, we finally get

which is absurd since \(\gamma \in (0,1)\) and \(\langle \nabla _x q_{\tau _k}(\bar{u},\bar{v}), \bar{d} \rangle =-\nu <0\). \(\square \)

In order to ensure that the sequence generated by the Alternating Minimization scheme properly converges, we need the sequence of directions \(\{d_\ell \}\) to satisfy suitable properties. Here, in particular, we assume that the entire sequence of linear mappings \(\{H_\ell \}\) satisfies the bounded eigenvalues condition [29, Sec. 1.2]:

We are finally able to show that the inexact alternating minimization loop stops in a finite number of iterations providing a point \((x^{k+1},y^{k+1})\) satisfying conditions (3.2)-(3.3).

Proposition 4.4

Assume the sequence of linear maps \(\{H_\ell \}\) in Algorithm 2 satisfies the bounded eigenvalues condition (4.4). Then the algorithm cannot cycle infinitely and determines in a finite number of iterations a point \((x^{k+1},y^{k+1})\) such that

and

Proof

Suppose, by contradiction that, for some values of \(\tau _k\) and \(\delta _k\), the sequence \(\{ ( u^\ell , v^\ell ) \}\) is infinite. From the instructions of the algorithm, it is possible to see that we have

cf. (4.5). Hence, for all \(\ell \ge 0\), the point \( ( u^\ell , v^\ell ) \) belongs to the level set

Lemma 4.2 implies that this is a bounded set. Therefore, the sequence \(\{ ( u^\ell , v^\ell ) \}\) admits accumulation points. Let \(K \subseteq \mathbb {N} \) be an infinite subset such that

Recalling the continuity of the gradient, we have

We now show that \( \nabla _x q_{\tau _k}(\bar{u},\bar{v})=0. \) Taking into account the instructions of the algorithm, we have

By (4.4), it is possible to see that

Since \(\nabla _x q_{\tau _k}(u^{\ell }, v^{\ell }) \rightarrow _K \nabla _x q_{\tau _k}(\bar{u},\bar{v})\), we see that there exists a constant \( M > 0 \) such that \(\Vert d^\ell \Vert \le M\) for all \(\ell \in K\).

Since the entire sequence \(\{q_{\tau _k}(u^{\ell }, v^{\ell })\}\) is monotonically decreasing by (4.5), and the subsequence \(\{ q_{\tau _k}(u^{\ell }, v^{\ell }) \}_K\) converges to \( q_{\tau _k}(\bar{u}, \bar{v}) \), it follows that the whole sequence of function values converges to this limit, i.e., we have

Hence (4.5) yields \(\lim \limits _{\ell \rightarrow \infty } q_{\tau _k}(u^{\ell }, v^{\ell }) - q_{\tau _k}(u^{\ell } + \alpha _\ell d^\ell , v^\ell ) = 0.\) Thus, the hypotheses of Lemma 4.3 are satisfied. Moreover, from (4.2) and (4.4), we have

Using Lemma 4.3, we therefore obtain

which implies that, for \(\ell \!\in \!K\) sufficiently large, we have \( \Vert \nabla _x q_{\tau _k}(u^\ell ,v^\ell ) \Vert \!\le \! \delta _k, \) i.e., that the stopping criterion of step (S.1) is satisfied in a finite number of iterations, and this contradicts the fact that \(\{( u^\ell ,v^\ell ) \}\) is an infinite sequence. Condition (3.2) is then satisfied by the stopping criterion, whereas condition (3.3) follows by construction. \(\square \)

In order for the theoretical analysis to hold, we only need to ensure that \(H_\ell \) satisfies condition (4.4). This assumption can be guaranteed a priori by different ways of defining \(H_\ell \). Among these valid choices, we can find classical setups leading back to iterations of standard algorithmic schemes such as the gradient method (\(H_\ell =I\)), Newton's method (\(H_\ell =[\nabla _{xx}^2 q_{\tau _k}(u^\ell ,v^\ell )]^{-1}\), provided f is uniformly convex), quasi-Newton methods and limited-memory BFGS type methods.

This aspect is crucial in practice: we are allowed to employ the most efficient solvers for nonlinear optimization to carry out step (S.3) of the Alternating Minimization algorithm and thus speed up the computation of step (S.2) of Algorithm 1, which is the most burdensome one. As a comparison, the Augmented Lagrangian algorithm from [1] has to resort to a gradient-based method to solve the (constrained) sequential subproblems, possibly resulting in an inefficient method especially for ill-conditioned problems. We will indeed observe this difference in Sect. 6. Another difference is pointed out in the following comment.

Remark 4.5

The Augmented Lagrangian algorithm from [1] has to compute projections onto the set D within the computation of the stepsizes, i.e., it may require many projections for a single (inner) iteration. This is a notable difference to our Algorithm 2, which requires only a single projection after the computation of the new iterate \( u^{\ell + 1} \). In fact, it would also be possible to apply several iterations of an unconstrained optimization solver to the subproblem of minimizing the penalty function \( q_{\tau _k} ( \cdot , v^{\ell }) \) before updating the v-component, i.e., before using a single projection step.

5 Particular instances

The idea of this section, similar to [1], is to present some difficult optimization problems where projections onto the complicated set D can be carried out easily. This section does not contain any proofs since the corresponding results are known from the literature. However, since these particular instances will be used in Sect. 6, they have to be discussed in some detail.

5.1 The case of sparsity constraints

A particular case of problem (1.1) is that of sparsity constrained optimization problems, i.e., optimization problems of the form

\[ \min _{x\in \mathbb {R}^n} f(x) \quad \text {s.t.} \quad G(x)\in C,\quad \Vert x\Vert _0\le s, \]

where \(s<n\) and \(\Vert x\Vert _0\) denotes the zero pseudo-norm of x, i.e., the number of nonzero components of x. The Penalty Decomposition approach was originally proposed in [14] for this class of problems, and the inexact version was then proposed for the case \(\{x\mid G(x)\in C\}=\mathbb {R}^n\) [10].

In fact, from the analysis in Sect. 3, we can deduce that the convergence results continue to hold for the inexact version of the algorithm even in the presence of additional constraints.

The Penalty Decomposition method is particularly appealing, from a computational perspective, for this class of problems since the Euclidean projection onto the sparse set D is easily obtainable in closed form, as outlined e.g. in [10, 14]. Let us denote the index set of the largest s variables at \(\bar{x}\) in absolute value by \(\mathcal {G}_s(\bar{x})\); for simplicity, we furthermore assume that ties are handled unambiguously. Then, the projection of \(\bar{x}\) onto D is given by

\[ \left( \Pi _D(\bar{x})\right) _i = \begin{cases} \bar{x}_i & \text {if } i\in \mathcal {G}_s(\bar{x}),\\ 0 & \text {otherwise.} \end{cases} \]

In other words, the projection can be simply computed by setting to zero the \(n-s\) smallest components of \(\bar{x}\) in absolute value.
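As an illustration of the rule just described, the following is a minimal NumPy sketch of the projection onto the sparse set. The function name `project_sparse` is hypothetical (it is not taken from the paper's repository), and ties are broken by a stable sort, which is only one possible way of handling them unambiguously.

```python
import numpy as np

def project_sparse(x_bar, s):
    """Keep the s largest entries of x_bar in absolute value, zero out the rest."""
    x_bar = np.asarray(x_bar, dtype=float)
    x_hat = np.zeros_like(x_bar)
    if s <= 0:
        return x_hat
    # Indices sorted by decreasing magnitude; the stable sort makes
    # tie-breaking deterministic (lowest index wins).
    keep = np.argsort(-np.abs(x_bar), kind="stable")[:s]
    x_hat[keep] = x_bar[keep]
    return x_hat
```

For instance, `project_sparse([3.0, -5.0, 1.0, 0.5], 2)` keeps the entries 3 and -5 and sets the other two to zero.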

Note that M-stationarity, as defined in Definition 2.1, coincides with Lu-Zhang stationarity [9], which is the property guaranteed to hold for cluster points obtained by the original Penalty Decomposition method [14]. Hence, we can conclude, from the results shown in Sect. 3, that the inexact Penalty Decomposition method has the same convergence properties as its exact counterpart, and that the M-stationarity concept includes a corresponding stationarity condition particularly designed for cardinality constrained problems. We note, however, that there exist further stationarity concepts in this setting, see the corresponding discussions in, e.g., [9, 14, 30, 31].

5.2 Low-rank approximation problems

Here we consider the space \(\mathbb {X}=\mathbb {R}^{m\times n}\) with given \(n,m\in \mathbb {N}\), \(n,m\ge 2\); equipped with the standard Frobenius inner product, this is a Euclidean space.

In applications like computer vision, machine learning, computer algebra or signal processing, there is a strong interest in low-rank matrix optimization problems, see, e.g., [12, 32,33,34,35]. Specifically, letting \(q=\min (m,n)\) and given \(\kappa \le q-1\), we are interested in problems of the form

The set D has been thoroughly analyzed from a geometrical point of view, see e.g. [36] for a formula for \(\mathcal {N}_D^\text {lim}(X)\). Interestingly, elements of \(\Pi _D(X)\) can be easily constructed exploiting the singular value decomposition of X [12, 15].

Proposition 5.1

Let \(X\in \mathbb {X}=\mathbb {R}^{m\times n}\) and let \(X=U\Sigma V^T\) be its singular value decomposition, with orthogonal matrices \(U\in \mathbb {R}^{m\times m}\), \(V\in \mathbb {R}^{n\times n}\) and \(\Sigma \in \mathbb {R}^{m\times n}\) diagonal with entries in non-increasing order, i.e., \(\Sigma _{ii}=\sigma _i\) for \(i=1,\ldots ,q\) with \(\sigma _1\ge \ldots \ge \sigma _q\ge 0\),

where \(\sigma _1,\ldots ,\sigma _q\) are the singular values of X. Moreover, let \(\hat{\Sigma }\) be the matrix obtained by setting to zero the last \(q-\kappa \) diagonal elements of \(\Sigma \), i.e., \(\hat{\Sigma }_{ii}=\sigma _i\) for \(i\le \kappa \) and \(\hat{\Sigma }_{ii}=0\) for \(i>\kappa \).

Then, \(\hat{X} = U\hat{\Sigma }V^T\in \Pi _D(X)\).

Of course, the computation of the SVD for a matrix X is not a costless operation, so obtaining an element of \(\Pi _D(X)\), even though conceptually simple, requires a non-negligible amount of computing resources.
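The construction of Proposition 5.1 can be sketched in a few lines of NumPy. This is only an illustration under the assumption that a full (dense) SVD is affordable; the name `project_rank` is hypothetical and not taken from the paper's code.

```python
import numpy as np

def project_rank(X, kappa):
    """Return one element of the projection of X onto {rank <= kappa}."""
    X = np.asarray(X, dtype=float)
    # Thin SVD; singular values are returned in non-increasing order.
    U, sigma, Vt = np.linalg.svd(X, full_matrices=False)
    sigma_hat = sigma.copy()
    sigma_hat[kappa:] = 0.0          # drop the q - kappa smallest singular values
    return (U * sigma_hat) @ Vt      # U @ diag(sigma_hat) @ Vt
```

The cost is dominated by the SVD itself, which is the non-negligible expense mentioned above.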

If we restrict the discussion to the case of symmetric positive semi-definite matrices, i.e., \(D=\{X\in \mathbb {R}^{n\times n}\mid X\succeq 0,\; \text {rank}(X)\le \kappa \}\), we can resort to the eigenvalue decomposition instead of the SVD [1, 15].

Proposition 5.2

Let \(X\in \mathbb {R}^{n\times n}\) be a symmetric matrix. Let us denote by \(X=\sum _{i=1}^{n}\lambda _iv_iv_i^T\) its eigenvalue decomposition, where \(\lambda _1\ge \ldots \ge \lambda _n\) are the non-increasingly ordered eigenvalues with corresponding eigenvectors \(v_1,\ldots ,v_n\). Then, we have \(\hat{X} = \sum _{i=1}^{\kappa }\max \{0,\lambda _i\}v_iv_i^T\in \Pi _D(X)\).

We can thus observe that, in this particular case, in order to compute the projection onto the set D we only need to find the \(\kappa \) largest eigenvalues with the corresponding eigenvectors; this can be done efficiently, especially when \(\kappa \) is small, as in most applications.
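A minimal sketch of the rule in Proposition 5.2 follows, assuming \(1\le \kappa \le n\) and a dense symmetric input. The function name `project_psd_rank` is hypothetical. For simplicity the sketch computes the full eigenvalue decomposition; when \(\kappa \) is small, a routine that returns only the largest eigenpairs (for example `scipy.sparse.linalg.eigsh` with `which="LA"`) would likely be the more economical choice.

```python
import numpy as np

def project_psd_rank(X, kappa):
    """One projection of symmetric X onto {X >= 0, rank(X) <= kappa}, 1 <= kappa <= n."""
    X = np.asarray(X, dtype=float)
    X = 0.5 * (X + X.T)                       # guard against round-off asymmetry
    lam, V = np.linalg.eigh(X)                # eigenvalues in ascending order
    lam_top = np.maximum(lam[-kappa:], 0.0)   # kappa largest, clipped at zero
    V_top = V[:, -kappa:]
    return (V_top * lam_top) @ V_top.T        # sum of max(0, lam_i) v_i v_i^T
```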

An (exact) Penalty Decomposition scheme was developed in [15] to tackle low-rank optimization problems, exploiting the above closed form rules for projection onto D both in the general and the positive semi-definite cases. The analysis in Sect. 3 shows that the algorithmic framework maintains the same convergence properties even when the X-update step is carried out in an inexact fashion.

5.3 Box-switching constrained problems

A wide class of relevant optimization problems with difficult geometric constraints is given by the so-called box-switching constrained problems [1], which can be formalized as follows:

where, for simplicity, we assume that \(l_x\le 0 \le u_x\) and \(l_y\le 0 \le u_y\).

This setting covers various disjunctive programming problems such as problems with

- switching constraints [8]: \(l_x=l_y=-\infty \), \(u_x=u_y=\infty \),
- complementarity constraints [6]: \(l_x=l_y=0\), \(u_x=u_y=\infty \),
- relaxed sparsity constraints [37]: \(l_x=-\infty \), \(u_x=\infty \), \(l_y=0\), \(u_y=1\).

It is easy to see that projection onto the set D in this case is simple. Indeed, let us first consider the projection onto classical bound constraints [l, u] of a vector w. Since the constraints are separable, we can immediately obtain the projection by computing, for each component i, the value

\[ \left( \Pi _{[l,u]}(w)\right) _i = \max \{l_i,\min \{u_i,w_i\}\}. \]

With this in mind, noting that the set D is also (pairwise) separable, we can obtain an element \((\hat{x},\hat{y})\in \Pi _D[(\bar{x},\bar{y})]\) by first computing

and then setting

Computing the projection onto D thus amounts to computing 2n projections onto real intervals, which can be done with low computational effort. For this reason, a Penalty Decomposition type scheme again appears particularly appealing for this class of problems.
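The following sketch illustrates one way to realize this pairwise projection. It assumes that D couples the variables through \(x_iy_i=0\) together with the bounds, and that \(l_x\le 0\le u_x\), \(l_y\le 0\le u_y\) as stated above, so that each pair must lie either on the x-axis segment or on the y-axis segment. The formulas are derived from this description rather than copied from the paper, and the name `project_box_switching` is hypothetical.

```python
import numpy as np

def project_box_switching(x_bar, y_bar, l_x, u_x, l_y, u_y):
    """One projection of (x_bar, y_bar) onto {x_i * y_i = 0, l_x <= x <= u_x, l_y <= y <= u_y}."""
    x_bar = np.asarray(x_bar, dtype=float)
    y_bar = np.asarray(y_bar, dtype=float)
    # 2n interval projections (bounds may be scalars, arrays, or +/- infinity).
    px = np.clip(x_bar, l_x, u_x)
    py = np.clip(y_bar, l_y, u_y)
    # Squared distance to the candidate (px_i, 0) and to the candidate (0, py_i).
    cost_keep_x = (x_bar - px) ** 2 + y_bar ** 2
    cost_keep_y = x_bar ** 2 + (y_bar - py) ** 2
    keep_x = cost_keep_x <= cost_keep_y       # ties resolved in favor of x
    x_hat = np.where(keep_x, px, 0.0)
    y_hat = np.where(keep_x, 0.0, py)
    return x_hat, y_hat
```

With complementarity bounds (`l_x = l_y = 0`, `u_x = u_y = np.inf`), the pair (2, 1) is mapped to (2, 0) and the pair (-1, 3) to (0, 3).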

5.4 General disjunctive programs

A broad class of optimization problems with geometric constraints is represented by programs where variables are required to satisfy at least one among several sets of constraints:

where \(D_i\), \(i=1,\ldots ,N\) are closed convex sets. The resulting overall feasible set of these disjunctive programming problems [4] typically takes the structure of a nonconvex, disconnected set. Projections onto D in this case can be computed by finding the closest among the N projections onto \(D_1,\ldots ,D_N\):

Since, in general, the projection onto a convex set is already an expensive operation, the projection onto D is consequently a costly task. We observe that the settings analyzed in the previous subsections are, in fact, particular instances of this setting where the peculiar structure of the sets \(D_i\) allows the projection to be computed efficiently.
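The "closest among the N projections" rule can be sketched generically as follows. The sketch assumes that a projection routine is available for each convex set \(D_i\) and is passed in as a Python callable; the name `project_union` and this interface are hypothetical.

```python
import numpy as np

def project_union(x_bar, projectors):
    """Project x_bar onto the union of sets D_i, given one projector per D_i."""
    x_bar = np.asarray(x_bar, dtype=float)
    best, best_dist = None, np.inf
    for proj in projectors:
        candidate = np.asarray(proj(x_bar), dtype=float)   # projection onto one D_i
        dist = np.linalg.norm(candidate - x_bar)
        if dist < best_dist:                                # keep the closest candidate
            best, best_dist = candidate, dist
    return best
```

The loop makes the cost explicit: one call to `project_union` requires N projections onto convex sets, which is why the number of projection steps matters so much in this setting.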

The Penalty Decomposition approach might be appealing for problems of this form when the constraints \(G(x)\in C\) are numerous and/or nontrivial and N is also large. In these cases, the brute force strategy of solving N problems with convex constraints may become computationally unsustainable and PD might represent an appealing alternative.

6 Computational experiments

In this section, we report the results of an extensive experimentation aimed at demonstrating the potential and the benefits of using the Penalty Decomposition algorithm on various classes of problems. The experiments have two main goals:

- Analyze the behavior of penalty decomposition in different settings and understand how to make it as efficient as possible;
- Compare the penalty decomposition approach with the augmented Lagrangian method proposed in [1], which is, to the best of our knowledge, the only available algorithm from the literature designed to handle the general setting (1.1).

The code for the experiments has entirely been implemented in Python 3.9 and is publicly available at https://github.com/MatteoLapucci/GeoIPD. All the experiments have been run on a machine with the following specifications: Intel Xeon Processor E5-2430 v2, 6 physical cores (12 threads), 2.50 GHz, 16 GB RAM. We considered benchmarks of problems from the classes discussed in Sect. 5, i.e., cardinality constrained problems, low-rank approximation problems and disjunctive programming problems.

For the Penalty Decomposition approach (Algorithm 1), we set an upper bound to the value of \(\tau _k\) equal to \(10^8\). We employed for the inner loop (Algorithm 2) the stopping criterion

whereas for the outer loop we employ

Both the above stopping conditions are the ones suggested in [14] and we set \(\epsilon _\text {in} = 10^{-5}\) and \(\epsilon _\text {out} = 10^{-5}\).

As unconstrained optimization solvers for the x-update step, we implemented the gradient descent algorithm with Armijo line search. We also ran experiments using the implementations of the conjugate gradient (CG, [29]), BFGS [29] and L-BFGS [38] methods available in the scipy library. For all of these algorithms, we used the stopping criterion \(\Vert \nabla _x q_{\tau _k}(u^{\ell +1},v^\ell )\Vert \le \epsilon _\text {solv}\), with \(\epsilon _\text {solv}=10^{-5}\) if not specified otherwise.
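To make the role of the inner solver concrete, here is a minimal sketch of an inexact x-update step with SciPy's L-BFGS implementation. It assumes, for illustration only, that there are no additional constraints and that the penalty function has the form \(f(u)+\frac{\tau }{2}\Vert u-v\Vert ^2\); the paper's penalty function may be scaled differently and includes the term for \(G(x)\in C\) in general. The name `x_update` and its arguments are hypothetical. Note also that SciPy's `gtol` option for `L-BFGS-B` bounds the largest absolute component of the (projected) gradient, which is not identical to the Euclidean-norm criterion stated above.

```python
import numpy as np
from scipy.optimize import minimize

def x_update(f, grad_f, v, tau, u_start, eps_solv=1e-5):
    """Approximately minimize q(u) = f(u) + tau/2 * ||u - v||^2 starting from u_start."""
    v = np.asarray(v, dtype=float)

    def q(u):
        diff = u - v
        return f(u) + 0.5 * tau * (diff @ diff)

    def grad_q(u):
        return grad_f(u) + tau * (u - v)

    res = minimize(q, np.asarray(u_start, dtype=float), jac=grad_q,
                   method="L-BFGS-B",
                   # tiny ftol so that the gradient test is the active criterion
                   options={"gtol": eps_solv, "ftol": 1e-14})
    return res.x
```

In an alternating scheme, the returned point would then be projected onto D to obtain the new v-component.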

As for the augmented Lagrangian method (ALM) from [1], it employs the spectral gradient method (SGM) proposed in the same work as inner solver for the subproblems. With reference to [1, Algorithm 3.1], we set \(\sigma =10^{-5}\), \(\gamma _0=1\), \(\gamma _\text {max}=10^{12}\), \(m=10\), \(\tau =2\) (note that here it does not denote the penalty parameter). As for the ALM ([1, Algorithm 4.1]), we set \(\eta =0.8\). We employed the multipliers safeguarding technique, projecting the values obtained using the standard Hestenes-Powell-Rockafellar updates onto the box \([-10^{8}, 10^8]\). For the spectral gradient loop, we used the stopping condition

where here we have used the notation of the present paper. We used the same stopping condition (6.1) as the PD method with \(\epsilon _\text {in}=10^{-5}\) for the inner loop of the ALM, whereas for the outer loop we require \(\text {dist}_C(G(x^{k+1}))\le \epsilon _\text {out}\), with \(\epsilon _\text {out}=10^{-5}\). The stopping conditions have been chosen as similar as possible for the two algorithms, in order to have a fair comparison.

We also did experiments with a variant of our proposed approach, employing safeguarded Lagrange multipliers in an augmented Lagrangian fashion, i.e., we take the ALM approach from [1] and combine it with the decomposition idea to solve the resulting subproblems. In other terms, the penalty function in this case becomes:

The setting of multipliers and penalty parameter updates is the same as the one we employed for the ALM itself. In the following, we will show that this modification (denoted PDLM), which does not have any major impact in the convergence analysis, leads to significant benefits in practice. It is interesting to note that this finding is in contrast with the remarks that can be found in the conclusions of [14].

Finally, we point out that we did not report the values for the initial penalty parameter \(\tau _0\) and its growth rate \(\alpha _\tau \) (we always set \(\tau _{k+1} = \alpha _\tau \tau _k\)). Indeed, these parameters are quite crucial for the overall performance of both PD and ALM algorithms and have been suitably selected for each class of problems. Thus, we will report each time the specific values of these two parameters.

6.1 Sparsity constrained optimization problems

We begin our numerical analysis with sparsity constrained problems. The reason we start with this class of problems is twofold: a) the original PD approach was designed for these problems and b) results are more easily and intuitively interpretable.

We considered various experimental settings with problems of this class. Firstly, we begin with the simplest possible problems, i.e., convex quadratic problems with only sparsity constraints:

We randomly generated instances of problem (6.2), according to the following procedure:

where \(n_\text {cond}\) denotes the desired condition number of the matrix Q. We generated three instances with \(n_\text {cond}=10\), \(n\in \{10,25,50\}\), \(s=3\), \(\nu =5\) to evaluate the impact of different solvers for the x-update step on the alternating minimization scheme and, in turn, on the overall PD approach.

We report in Table 1 the results obtained by running PD equipped with different inner solvers starting from the origin. We also ran the variant with Lagrange multipliers of our approach only with L-BFGS as inner solver; here we set \(\tau _0=1\) and \(\alpha _\tau =1.1\). Moreover, we considered the spectral gradient method for comparison. Note that, since there are no additional constraints, there is no need to resort to the ALM.

As expected, the use of quasi-Newton type solvers is highly beneficial: BFGS leads to much faster convergence than the simple gradient method, and L-BFGS provides an additional, substantial speed-up. The presence of Lagrange multipliers also seems to be beneficial, both in terms of efficiency and of quality of the obtained solution. Based on these results, in the following we will always use L-BFGS for the x-update step in Algorithm 2.

Note that the spectral gradient method clearly outperforms the PD approach in this case. This is not surprising: since there are no additional constraints, the SGM does not need to adopt a sequential penalty strategy, which is costly.

Next, we turn to a simple verification of the convergence properties of the PD approach. In particular, we consider the artificial example [30, Example 2.2], which is an instance of (6.2) with \(Q= E+I\), where E is the matrix of all ones, \(c = -(3,2,3,12,5)^T\), \(\nu =1\) and \(s=2\). We ran both PD and PDLM, with \(\alpha _\tau =1.1\), from 1000 different starting points randomly generated in the hyper-box \([-10,10]^5\). We observed that the result strongly depends on the choice of \(\tau _0\), as we report in Table 2. Interestingly, both algorithms always converged to the global minimum \(f(x^\star )=-41.33\) when we set \(\tau _0=0.1\); in fact, we observed the same result for smaller values of \(\tau _0\). On the other hand, as \(\tau _0\) grows, worse local minimizers become increasingly probable; the presence of Lagrange multipliers seems to alleviate, but not to eliminate, this drawback. We argue that large values of \(\tau _0\) make PD schemes more dependent on the starting point: since usually \(x^0=y^0\), the penalty term is at the first iteration equal to \(\tau _0\Vert x-x^0\Vert ^2\), which keeps the variable x close to the starting point.

At this point, we have devised a setting that apparently makes the PD approach efficient and effective. We therefore expect the algorithm to be a good choice when a) additional constraints are present and/or b) the projection operator is costly. In the former case, SGM needs to be employed within another sequential scheme, namely the ALM, which is the only alternative to PD available in the literature. In the latter case, the advantage of PD over the ALM may not be immediately evident, since the two algorithms share a similar structure: both sequentially solve penalized subproblems by repeatedly carrying out unconstrained continuous optimization steps and projections onto D. However, in PD many descent steps can be carried out before turning to the projection step, whereas in the ALM a projection is needed after every gradient step (in fact, several times per iteration of the SGM, to satisfy the acceptance criterion), cf. the discussion in Remark 4.5.

We now turn to sparsity constrained problems with additional constraints. In particular, we keep considering convex quadratic problems, but with simplex constraints, i.e., problems of the form

where \(e\in \mathbb {R}^n\) denotes the vector of all ones. This is a classical sparse portfolio optimization problem [39], where Q and c denote the covariance matrix and the mean of n possible assets.

Portfolio optimization problems are particularly useful to test the proposed algorithm since we can easily obtain the global optimum to be used as a reference. Indeed, we can do so exploiting the mixed-integer reformulation of the problem with binary indicator variables \(z_i\in \{0,1\},\;i=1,\ldots ,n,\) and linear constraints \(0\le x_i\le z_i\), \(e^Tz\le s\), and employing efficient software solvers such as Gurobi [40].

We first consider synthetic problems. Using (6.3), (6.4), we generated 10 problems for each combination of \(n\in \{20,40,60\}\) and \(n_\text {cond}\in \{10,100, 500\}\), for a total of 90 problems. We set \(s=4\) when \(n=20\), \(s=7\) for \(n=40\) and \(s=9\) for \(n=60\). We set \(\nu =1\) and use as starting point of the experiments \(\tilde{x} = (1/n,\ldots ,1/n)^T\); we ran PD, PDLM and ALM, all with \(\tau _0=1\) and \(\alpha _\tau =1.1\). We also ran Gurobi on all instances to obtain the global optimizer to be used as reference; note that Gurobi indeed finds the certified global minimum in tens of seconds. The overall results of the experiments are reported in Fig. 1. The results concerning efficiency (runtime) are presented in the form of performance profiles [41] in Fig. 1a. We can observe that Penalty Decomposition with Lagrange multipliers is generally faster than the other two considered algorithms. As for the quality of the retrieved solutions, we plot in Fig. 1b the cumulative distribution of the relative gap between the solution found by a solver and the certified global optimum found with Gurobi. The result of PDLM is remarkable, as it almost always reached a value very close, and often equal, to the global optimum; on the contrary, both PD and the ALM end up with substantially suboptimal solutions in almost half of the cases.
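For readers unfamiliar with performance profiles [41], the following sketch shows how such curves are commonly computed from a table of runtimes: for each solver, the profile at a threshold \(t\) is the fraction of problems on which the solver's runtime is within a factor \(t\) of the best runtime on that problem. The function name `performance_profile` and the array layout are hypothetical, and the sketch assumes that at least one solver succeeds on every problem (failures can be encoded as `np.inf`).

```python
import numpy as np

def performance_profile(times, thresholds):
    """times: (n_problems, n_solvers) runtimes; returns (n_thresholds, n_solvers) fractions."""
    times = np.asarray(times, dtype=float)
    thresholds = np.asarray(thresholds, dtype=float)
    best = times.min(axis=1, keepdims=True)       # best runtime on each problem
    ratios = times / best                         # performance ratios, all >= 1
    # Fraction of problems whose ratio is within each threshold, per solver.
    within = ratios[None, :, :] <= thresholds[:, None, None]
    return within.mean(axis=1)
```

Plotting each column of the result against the thresholds gives one curve per solver; higher curves indicate a solver that is more often close to the fastest one.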

We conclude the analysis on sparsity constrained problems by looking at the results on six instances of real-world portfolio selection problems. In particular, part of the data used in the experiments consists of daily data for securities from the FTSE 100 index, from 01/2003 to 12/2007. The three resulting datasets are referred to as DTS1, DTS2, and DTS3, and are formed by \(n=12\), 24, and 48 securities, respectively. We also included three datasets from the Fama/French benchmark collection (FF10, FF17, and FF48, with n equal to 10, 17, and 48), using the monthly returns from 07/1971 to 06/2011. The datasets are generated as in [42]. For each dataset, we define an instance of problem (6.1): the values of s and \(\nu \) are set as reported in Table 3, and are such that the cardinality constraint is active at the optimal solution. We used again \(\tilde{x} = (1/n,\ldots ,1/n)^T\) as starting point. As for the penalty parameter, we set \(\tau _0=0.01\) for the Penalty Decomposition methods, whereas we found that a larger value \(\tau _0=1\) was beneficial for the ALM. The parameter \(\alpha _\tau \) was set to 1.01 for all methods. The results are reported in Table 3 and we can observe that the trends outlined by the previous experiments are substantially confirmed.

6.2 Low-rank optimization problems

In this section, we study problems as discussed in Sect. 5.2, where \(\mathbb {X}=\mathbb {R}^{m\times n}\) and D is a set of low-rank matrices.

To begin with, we consider the class of nearest low-rank correlation matrix problems, which was already used as a benchmark in [15]. In detail, the problem can be formulated as

where A is a given symmetric correlation matrix. The test problems we consider are the same as in [15], and their corresponding matrix A is defined as follows:

- (P1) \(A_{ij} = 0.5+0.5\exp (-0.05|i-j|)\) for all i, j;
- (P2) \(A_{ij} = \exp (-|i-j|)\) for all i, j;
- (P3) \(A_{ij} = 0.6+0.4\exp (-0.1|i-j|)\) for all i, j.

For each of the above problems, we considered the instances with \(n\in \{200,500\}\) and \(\kappa \in \{5,10,20\}\).
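The three test matrices are fully specified by the formulas above and can be generated as in the following sketch. The function name `correlation_test_matrix` and the string labels are hypothetical conveniences.

```python
import numpy as np

def correlation_test_matrix(problem, n):
    """Build the n x n matrix A of test problem 'P1', 'P2' or 'P3'."""
    idx = np.arange(n)
    gap = np.abs(idx[:, None] - idx[None, :])     # |i - j| for all pairs
    if problem == "P1":
        return 0.5 + 0.5 * np.exp(-0.05 * gap)
    if problem == "P2":
        return np.exp(-1.0 * gap)
    if problem == "P3":
        return 0.6 + 0.4 * np.exp(-0.1 * gap)
    raise ValueError(f"unknown problem label: {problem!r}")
```

Each matrix is symmetric with unit diagonal, as expected of a correlation matrix; for example, `correlation_test_matrix("P1", 200)` gives the data of the smaller P1 instance.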

We experimentally compared the ALM and some implementations of the Penalty Decomposition approach; for all these algorithms, we set \(\tau _0=1\) and \(\alpha _\tau = 1.2\). We also needed for these experiments to set the upper bound on the value of \(\tau _k\) to \(10^{12}\).

Note that solving the X-update subproblem with an iterative solver has a significant cost, as we are considering problems with up to \(n\times n =250000\) variables; moreover, we are dealing with ill-conditioned quadratic problems, thus we found it convenient to switch from L-BFGS to the CG method. We tested two settings for the X-update step with CG: a strongly inexact setting (PD-cg-inaccurate), where the CG method is stopped after at most 5 steps or when the norm of the gradient is smaller than \(\epsilon _\text {solv}=0.1\), and a more accurate setting (PD-cg-accurate), where up to 20 CG steps are carried out and the tolerance for the gradient norm stopping condition is set to 0.001.

In addition, we note that, in fact, the X-update subproblem

can be solved to global optimality in closed form; we thus also carried out experiments with this option (PD-exact); moreover, we also consider the strategy adopted in [15], where the constraint \(\text {diag}(X)=e\) is kept as a lower-level constraint and the X-update subproblem is still solved in closed form (PD-exact-lower-level).

We finally report that we found the introduction of Lagrange multipliers associated with constraints \(G(x)\in C\) useful. We instead noticed that multipliers associated with the constraint \(X=Y\) are not helpful. This observation is in line with the work in [15], where only the constraint \(X=Y\) was in practice handled by the penalty approach and multipliers were reported not to be beneficial. In the experiments described in the following, only multipliers associated with the original problem constraints have been employed. The results of the experiment are reported in Tables 4, 5 and 6.

We can observe that the exact versions of the PD approach are the best performing ones from all perspectives, with the PD-exact-lower-level originally used in [15] standing out. This is in fact not surprising: in this case the exact method solves subproblems not only with higher accuracy, but also employing much less time than using an iterative solver.

Interestingly, however, we observe that the “inaccurate” version of the inexact PD attains runtimes that are comparable with the exact approaches, with only small drops in the quality of the retrieved solution. On the other hand, with a slightly more accurate inexact minimization we are always able to retrieve the same best solution as the exact methods, with a computational effort generally comparable to that of the ALM.

We can thus deduce that a suitable configuration exists for the inexact PD approach that provides a good trade-off between solution quality and runtime.

These results are encouraging for all those settings where the exact version of the Penalty Decomposition approach is not employable by construction.

We then turn to a new class of problems, where matrices are not symmetric positive semi-definite and the X-update step requires a solver to be carried out. Specifically, we consider the low-rank based multi-task training [43] of logistic models [44]. Given a collection of somewhat related binary classification tasks \(\mathcal {T}_1,\ldots ,\mathcal {T}_m\), \(\mathcal {T}_i=\{(X_i,Y_i)\mid X_i\in \mathbb {R}^{N_i\times n},\;Y_i\in \{0,1\}^{N_i}\}\), where \(X_i\) represents the data matrix for each task and \(Y_i\) the corresponding labels, we can formalize the multitask logistic regression training problem as

where the t-th row \(W_t\) of W denotes the weights of the logistic model for the t-th task; each model is defined as the sum of a component independently characterizing the particular task, which is regularized, and a second component that lies in a linear subspace shared by all tasks. The larger the regularization parameter \(\eta \), the higher the similarity of the obtained models. By \(\mathcal {L}(W_t;X_t,Y_t)\) we denote the binary cross entropy loss function of the logistic model for task t, which is a convex function that, however, cannot be minimized in closed form.

We can observe that the problem can be solved by Penalty Decomposition, duplicating variable V. The constraint \(W=U+V\) can also be tackled by the penalty approach. The update step of the original variables W, U, V cannot be carried out in closed form, thus we need to resort to the inexact version of the PD method.

For the experiments, we used the landmine dataset [45], consisting of 9-dimensional data points representing radar images from 29 landmine fields/tasks. Each task aims to classify points as landmine or clutter. There are 14,820 data points in total. Tasks can approximately be clustered into two classes of ground surface conditions, so we expect \(r=2\) to be a reasonable bound for the low-rank component of the solution. We defined four instances of problem (6.5), corresponding to values of \(\eta \) in \(\{0.01,0.1, 0.5,2\}\). We examined the behavior of the inexact PD and ALM methods under different parameters configurations. In particular, we considered the following settings:

- Penalty decomposition
  - Lagrange multipliers associated with all constraints;
  - \(\tau _0=10^{-3}\), \(\alpha _\tau =1.3\);
  - conjugate gradient (CG) for x-update steps;
  - three options for CG termination criteria:
    - \(\epsilon _\text {solv}=0.1\), \(\text {max\_iters}_{\text {CG}}=5\) (pd_inaccurate);
    - \(\epsilon _\text {solv}=0.05\), \(\text {max\_iters}_{\text {CG}}=8\) (pd_mid);
    - \(\epsilon _\text {solv}=0.001\), \(\text {max\_iters}_{\text {CG}}=20\) (pd_accurate);
- ALM
  - \(\tau _0=1\), \(\alpha _\tau =1.3\);
  - two options for spectral gradient parameters:
    - \(\epsilon _\text {in} = 10^{-1}\), \(m=1\), \(\gamma _\text {max}=10^6\), \(\sigma =0.05\) (alm_fast);
    - \(\epsilon _\text {in} = 10^{-3}\), \(m=4\), \(\gamma _\text {max}=10^9\), \(\sigma =5\cdot 10^{-4}\) (alm_accurate).

We also report the results obtained by optimizing each task independently. For all PD and ALM configurations, we used as starting solution the one retrieved by single task optimization. Note that both configurations for ALM have lower precision than the default one reported at the beginning of Sect. 6; with this particular problem, we found the default configuration to be remarkably inefficient. However, we should underline that, in other test cases considered in this paper, these alternative configurations led to convergence issues due to numerical errors. The results of the experiment are reported in Fig. 2. Note that here we are interested in the optimization process metrics, not in the out-of-sample prediction performance of the obtained models.

We can observe that different setups for the algorithms yield different trade-offs between speed and solution quality. In particular, for the PD method we see that the trend observed with the correlation matrix problems is confirmed: solving the x-update subproblem to lower accuracy saves computing time, but at the cost of a small yet not negligible sacrifice in solution quality. A similar and even stronger trend can be observed for the ALM. Finally, we can observe that PD appears to be superior to the ALM both in terms of efficiency and effectiveness.

6.3 Disjunctive programming problems

In this section, we computationally analyze the performance of the Penalty Decomposition approach on problems of the form (5.5). The main goal of this section is to compare the performance of inexact PD with that of the ALM algorithm in a setting where the projection operation is costly and is in fact responsible for the largest part of the computational burden: as highlighted in Sect. 5.4, it amounts to computing the projection onto each of the convex sets \(D_i\).

For the experiments, we defined the following test problem:

where \(\mathcal {L}\) denotes the (convex) logistic loss on a randomly generated dataset of 200 examples. We assume \(A_q\in \mathbb {R}^{s\times n}\) and \(b_q\in \mathbb {R}^s\) have the same dimensions for \(q=1,\ldots ,N\) and their coefficients are uniformly drawn from \([-1,1]\); the coefficients c are randomly picked from [0, 1], whereas values for p are from \([-0.5,0.5]\). We set \(t_j=0.1\) for all j.

First, we consider the problem with \(n=10\), \(s=12\) and \(m=1\), for values of N varying in \(\{2,5,10,20,50,100\}\). In Table 7 we report the results obtained running PD (parameters: \(\tau _0=0.1\), \(\alpha _\tau =1.2\), \(\epsilon _\text {in}=0.01\), Lagrange multipliers employed) and the ALM (parameters: \(\tau _0=1\), \(\alpha _\tau =1.2\), \(m=4\), \(\sigma =0.01\), \(\epsilon _\text {in}=0.01\)), together with reference values obtained with the enumeration approach (subproblems are solved using the SLSQP method available in scipy), which retrieves the certified global optimizer. For the projection steps onto the sets \(D_i\) we used the Gurobi solver.

We can observe that PD was always much faster than the ALM; moreover, it always ended up finding the actual global optimizer; this does not hold true for the ALM. We remark that we verified that, as expected, the computing time is indeed entirely dominated by projection steps. Indeed, as can be observed from Table 7, runtime and number of projection steps appear to be somewhat correlated.

Then, we turn to the experiments on an instance where the nonlinear constraints shared by all components of the feasible set are numerous and dominate the complexity of solving each subproblem in the enumeration approach. In particular, we consider the previous problem with \(N=50\), \(n=5\), \(m=80\), \(s=7\). Here we set \(\tau _0=0.1\), \(\alpha _\tau =1.5\), \(\epsilon _\text {in}=0.02\) for PD and \(\tau _0=1\), \(\alpha _\tau =1.5\), \(m=4\), \(\sigma =0.05\), \(\epsilon _\text {in}=0.1\) for the ALM. The experiment is repeated 20 times for different random seeds. The results are reported in the form of performance profiles in Fig. 3.

We deduce that Penalty Decomposition can indeed be a good choice in particularly complicated settings: the global optimizer was always reached, and this result was obtained in a consistently more efficient way than with the brute force approach. The ALM does not have a comparable appeal in this context, arguably because it resorts to the projection operation much more frequently.

7 Conclusions

The current paper considers a penalty decomposition scheme for optimization problems with geometric constraints. It generalizes existing penalty decomposition schemes both by taking advantage of a general abstract (and usually complicated) constraint (as opposed to having only particular instances like cardinality constraints) and by including further (though supposedly simple) constraints. The idea and the convergence theory of this method are also related to recent augmented Lagrangian techniques, but the decomposition idea turns out to be numerically superior by allowing more efficient subproblem solvers and using far fewer projection steps.

In principle, it should be possible to extend the decomposition idea to the class of (safeguarded) augmented Lagrangian methods. Another, and related, question is whether one can exploit additional properties of augmented Lagrangian methods in order to improve the existing convergence theory. For example, augmented Lagrangian techniques have very strong convergence properties in the convex case. The particular classes of problems discussed in this paper are nonconvex, but the nonconvexity mainly arises from the fact that the abstract set D is nonconvex. Since we deal with the complicated set D explicitly, so that all iterates are feasible with respect to this set, a natural question is therefore whether one can prove stronger convergence properties in those situations where the remaining functions and constraints are convex. This will be part of our future research.

Data availability

Data sharing is not applicable to this article as no new data were created or analyzed in this study.

Code availability

All the code developed for the experimental part of this paper is publicly available at https://github.com/MatteoLapucci/GeoIPD.

References

Jia, X., Kanzow, C., Mehlitz, P., Wachsmuth, G.: An augmented Lagrangian method for optimization problems with structured geometric constraints. Math. Progr. (2022). https://doi.org/10.1007/s10107-022-01870-z

Benko, M., Červinka, M., Hoheisel, T.: Sufficient conditions for metric subregularity of constraint systems with applications to disjunctive and ortho-disjunctive programs. Set-Val. Variat. Anal. 30(1), 143–177 (2022)

Benko, M., Gfrerer, H.: New verifiable stationarity concepts for a class of mathematical programs with disjunctive constraints. Optimization 67(1), 1–23 (2018)

Flegel, M.L., Kanzow, C., Outrata, J.V.: Optimality conditions for disjunctive programs with application to mathematical programs with equilibrium constraints. Set-Val. Anal. 15(2), 139–162 (2007)

Mehlitz, P.: On the linear independence constraint qualification in disjunctive programming. Optimization 69(10), 2241–2277 (2020)

Ye, J.: Optimality conditions for optimization problems with complementarity constraints. SIAM J. Optimiz. 9(2), 374–387 (1999)

Achtziger, W., Kanzow, C.: Mathematical programs with vanishing constraints: optimality conditions and constraint qualifications. Math. Progr. 114(1), 69–99 (2008)

Mehlitz, P.: Stationarity conditions and constraint qualifications for mathematical programs with switching constraints. Math. Progr. 181(1), 149–186 (2020)

Lapucci, M.: Theory and algorithms for sparsity constrained optimization problems. PhD thesis, University of Florence, Italy (2022)

Lapucci, M., Levato, T., Sciandrone, M.: Convergent inexact penalty decomposition methods for cardinality-constrained problems. J. Optimiz. Theory Appl. 188(2), 473–496 (2021)

Kishore Kumar, N., Schneider, J.: Literature survey on low rank approximation of matrices. Linear Multilin. Algebra 65(11), 2212–2244 (2017)

Markovsky, I.: Low rank approximation: algorithms, implementation, applications, 2nd edn. Springer, London, UK (2012)

Galvan, G., Lapucci, M., Levato, T., Sciandrone, M.: An alternating augmented Lagrangian method for constrained nonconvex optimization. Optimiz. Method. Softw. 35(3), 502–520 (2020)

Lu, Z., Zhang, Y.: Sparse approximation via penalty decomposition methods. SIAM J. Optimiz. 23(4), 2448–2478 (2013)

Zhang, Y., Lu, Z.: Penalty decomposition methods for rank minimization. Adv. Neural Inf. Process. Sys. 24 (2011)

Guignard, M., Kim, S.: Lagrangean decomposition: a model yielding stronger Lagrangean bounds. Math. Progr. 39(2), 215–228 (1987)

Jörnsten, K.O., Näsberg, M., Smeds, P.A.: Variable splitting: a new Lagrangean relaxation approach to some mathematical programming models. Universitetet i Linköping/Tekniska Högskolan i Linköping, Department of Mathematics (1985)

Grippo, L., Sciandrone, M.: Globally convergent block-coordinate techniques for unconstrained optimization. Optimiz. Meth. Softw. 10(4), 587–637 (1999)

Bonettini, S.: Inexact block coordinate descent methods with application to non-negative matrix factorization. IMA J. Numer. Anal. 31(4), 1431–1452 (2011)

Bauschke, H.H., Combettes, P.L.: Convex analysis and monotone operator theory in Hilbert spaces, 1st edn. Springer, New York (2011). https://doi.org/10.1007/978-1-4419-9467-7

Mordukhovich, B.S.: Variational analysis and applications, 1st edn. Springer, Cham (2018). https://doi.org/10.1007/978-3-319-92775-6

Mehlitz, P.: Asymptotic stationarity and regularity for nonsmooth optimization problems. J. Nonsmooth Anal. Optimiz. 1 (2020)

Andreani, R., Haeser, G., Martínez, J.M.: On sequential optimality conditions for smooth constrained optimization. Optimization 60(5), 627–641 (2011)

Andreani, R., Martínez, J.M., Ramos, A., Silva, P.J.: A cone-continuity constraint qualification and algorithmic consequences. SIAM J. Optimiz. 26(1), 96–110 (2016)

Andreani, R., Martínez, J.M., Ramos, A., Silva, P.J.: Strict constraint qualifications and sequential optimality conditions for constrained optimization. Math. Operat. Res. 43(3), 693–717 (2018)

Rockafellar, R.T., Wets, R.J.-B.: Variational analysis, 1st edn. Springer, Heidelberg (2009). https://doi.org/10.1007/978-3-642-02431-3

Börgens, E., Kanzow, C., Steck, D.: Local and global analysis of multiplier methods for constrained optimization in Banach spaces. SIAM J. Contr. Optimiz. 57(6), 3694–3722 (2019)

Kanzow, C., Steck, D.: An example comparing the standard and safeguarded augmented Lagrangian methods. Operat. Res. Lett. 45(6), 598–603 (2017)

Bertsekas, D.: Nonlinear programming, vol. 4, 2nd edn. Athena Scientific, Belmont (2016)

Beck, A., Eldar, Y.C.: Sparsity constrained nonlinear optimization: optimality conditions and algorithms. SIAM J. Optimiz. 23(3), 1480–1509 (2013). https://doi.org/10.1137/120869778

Lämmel, S., Shikhman, V.: On nondegenerate M-stationary points for sparsity constrained nonlinear optimization. J. Global Optimiz. 82(2), 219–242 (2022)

Ben-Tal, A., Nemirovski, A.: Lectures on modern convex optimization: analysis, algorithms, and engineering applications, 1st edn. SIAM, Philadelphia (2001)

Burer, S., Monteiro, R.D., Zhang, Y.: Maximum stable set formulations and heuristics based on continuous optimization. Math. Progr. 94(1), 137–166 (2002)

Candès, E.J., Recht, B.: Exact matrix completion via convex optimization. Foundat. Comp. Math. 9(6), 717–772 (2009)

Recht, B., Fazel, M., Parrilo, P.A.: Guaranteed minimum-rank solutions of linear matrix equations via nuclear norm minimization. SIAM Review 52(3), 471–501 (2010)

Hosseini, S., Luke, D.R., Uschmajew, A.: Tangent and normal cones for low-rank matrices. In: Nonsmooth optimization and its applications, pp. 45–53. Springer, Birkhäuser, Cham (2019)

Burdakov, O.P., Kanzow, C., Schwartz, A.: Mathematical programs with cardinality constraints: reformulation by complementarity-type conditions and a regularization method. SIAM J. Optimiz. 26(1), 397–425 (2016)

Liu, D.C., Nocedal, J.: On the limited memory BFGS method for large scale optimization. Math. Progr. 45(1), 503–528 (1989)

Bertsimas, D., Cory-Wright, R.: A scalable algorithm for sparse portfolio selection. Informs J. Comput. 34(3), 1489–1511 (2022)

Gurobi Optimization, LLC: Gurobi optimizer reference manual (2022). https://www.gurobi.com

Dolan, E.D., Moré, J.J.: Benchmarking optimization software with performance profiles. Math. Progr. 91(2), 201–213 (2002)

Cocchi, G., Levato, T., Liuzzi, G., Sciandrone, M.: A concave optimization-based approach for sparse multiobjective programming. Optimiz. Lett. 14(3), 535–556 (2020)

Zhang, Y., Yang, Q.: A survey on multi-task learning. IEEE Trans. Knowl. Data Eng. 34(12), 5586–5609 (2021)

Hastie, T., Tibshirani, R., Friedman, J.: The elements of statistical learning: data mining, inference, and prediction, 2nd edn. Springer, New York (2009)

Xue, Y., Liao, X., Carin, L., Krishnapuram, B.: Multi-task learning for classification with Dirichlet process priors. J. Mach. Learn. Res. 8(1), 35–63 (2007)

Funding

Open access funding provided by Università degli Studi di Firenze within the CRUI-CARE Agreement. No funding was received for conducting this study.

Ethics declarations

Conflict of interest

The authors have no competing interests to declare that are relevant to the content of this article.


Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Kanzow, C., Lapucci, M. Inexact penalty decomposition methods for optimization problems with geometric constraints. Comput Optim Appl 85, 937–971 (2023). https://doi.org/10.1007/s10589-023-00475-2


Keywords

- Penalty decomposition

- Augmented Lagrangian

- Mordukhovich-stationarity

- Asymptotic regularity

- Asymptotic stationarity

- Cardinality constraints

- Low-rank optimization

- Disjunctive programming