Abstract

Recent advances in neural-network architecture allow for seamless integration of convex optimization problems as differentiable layers in an end-to-end trainable neural network. Integrating medium- and large-scale quadratic programs into a deep neural network architecture, however, is challenging because solving quadratic programs exactly by interior-point methods has worst-case cubic complexity in the number of variables. In this paper, we present an alternative network layer architecture based on the alternating direction method of multipliers (ADMM) that scales to moderate-sized problems with 100–1000 decision variables and thousands of training examples. Backward differentiation is performed by implicit differentiation of a customized fixed-point iteration. Simulation results demonstrate the computational advantage of the ADMM layer, which for medium-scale problems is approximately an order of magnitude faster than state-of-the-art layers. Furthermore, our novel backward-pass routine is computationally efficient in comparison to standard approaches based on unrolled differentiation or implicit differentiation of the KKT optimality conditions. We conclude with examples from portfolio optimization in the integrated prediction and optimization paradigm.

Data availability

The U.S. stock data used for computational experiments 3 and 4 were obtained from Quandl (https://data.nasdaq.com). The Fama-French factor data were obtained from the Kenneth R. French data library (https://mba.tuck.dartmouth.edu/pages/faculty/ken.french/data_library.html).

Ethics declarations

Conflict of interest

The authors have no competing interests to declare that are relevant to the content of this article.

A Appendix

A.1 Proof of Proposition 1

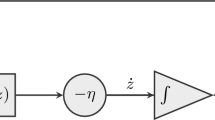

We define \(\mathbf{v}^k = \mathbf{x}^{k+1} + \boldsymbol{\mu}^k\). We can therefore express Equation (14b) as:

and Equation (14c) as:

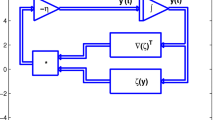

Substituting Equations (42) and (43) into Equation (14a) gives the desired fixed-point iteration:
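As a numerical illustration of this change of variables, the following is a minimal NumPy sketch. It assumes a standard scaled-form ADMM splitting for a box-constrained QP (minimize \(\tfrac{1}{2}\mathbf{x}^T\mathbf{Q}\mathbf{x} + \mathbf{p}^T\mathbf{x}\) subject to \(\mathbf{A}\mathbf{x} = \mathbf{b}\), \(\mathbf{x} = \mathbf{z}\), \(\mathbf{l} \le \mathbf{z} \le \mathbf{u}\)); these updates are an assumption and may differ in detail from Equations (14a) to (14c). The function names are hypothetical. Under this assumption the projection step becomes a clip of \(\mathbf{v}^k\), the scaled dual becomes \(\mathbf{v}^k\) minus its clip, and the three-step loop collapses to a single recursion in \(\mathbf{v}\).

```python
import numpy as np

def kkt_solver(Q, A, rho):
    """Return a function that solves the equality-constrained x-update."""
    n, m = Q.shape[0], A.shape[0]
    K = np.block([[Q + rho * np.eye(n), A.T], [A, np.zeros((m, m))]])

    def solve(rhs, b):
        # First n entries of the KKT solution are the primal variable x.
        return np.linalg.solve(K, np.concatenate([rhs, b]))[:n]

    return solve

def admm_three_step(Q, p, A, b, lb, ub, rho=1.0, iters=500):
    """Assumed scaled-form ADMM with separate x, z and mu updates."""
    solve = kkt_solver(Q, A, rho)
    z = np.zeros(Q.shape[0])
    mu = np.zeros(Q.shape[0])
    for _ in range(iters):
        x = solve(-p + rho * (z - mu), b)   # x-update (equality constraints)
        z = np.clip(x + mu, lb, ub)         # z-update (projection onto the box)
        mu = mu + x - z                     # scaled dual update
    return z

def admm_fixed_point(Q, p, A, b, lb, ub, rho=1.0, iters=500):
    """Same iterates written as one recursion in v = x + mu."""
    solve = kkt_solver(Q, A, rho)
    v = solve(-p, b)                        # v^0 = x^1 + mu^0 with z^0 = mu^0 = 0
    for _ in range(iters - 1):
        proj = np.clip(v, lb, ub)           # z^{k+1}; mu^{k+1} = v - proj
        v = solve(-p + rho * (2.0 * proj - v), b) + v - proj
    return np.clip(v, lb, ub)
```

Started from \(\mathbf{z}^0 = \boldsymbol{\mu}^0 = \mathbf{0}\), both functions produce the same sequence of \(\mathbf{z}\) iterates, so either can serve as the forward pass. The single-variable form is convenient because the backward pass only has to differentiate one map.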

A.2 Proof of Proposition 2

We define \(F : \mathbb{R}^{d_v} \times \mathbb{R}^{d_\eta} \rightarrow \mathbb{R}^{d_v} \times \mathbb{R}^{d_\eta}\) as:

and let

Therefore we have

Taking the partial differentials of Equation (49) with respect to the relevant problem variables therefore gives:

From Equation (49) we have that the differential \(\partial F(\mathbf{v}, \boldsymbol{\eta})\) is given by:

Substituting the gradient action of Equation (51) into Equation (26) and taking the left matrix-vector product of the transposed Jacobian with the previous backward-pass gradient, \(\frac{\partial \ell}{\partial \mathbf{z}^*}\), gives the desired result.
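To make the idea of differentiating a fixed point concrete, the following is a minimal NumPy sketch of generic implicit differentiation. It is not the expression of Proposition 2. It assumes a fixed-point map of the form \(T(\mathbf{v}, \mathbf{p}) = \mathbf{H}\left(-\mathbf{p} + \rho(2\Pi(\mathbf{v}) - \mathbf{v})\right) + \mathbf{G}\mathbf{b} + \mathbf{v} - \Pi(\mathbf{v})\) for a box-constrained QP, where \(\Pi\) clips to the box and \(\mathbf{H}\), \(\mathbf{G}\) are blocks of the inverse KKT matrix of the \(\mathbf{x}\)-update. The map, the names `forward` and `backward`, and the restriction to gradients with respect to \(\mathbf{p}\) are all assumptions made for illustration. At a fixed point \(\mathbf{v}^* = T(\mathbf{v}^*, \mathbf{p})\) with \(\mathbf{z}^* = \Pi(\mathbf{v}^*)\), the implicit function theorem gives \(\frac{\partial \ell}{\partial \mathbf{p}} = \left(\frac{\partial T}{\partial \mathbf{p}}\right)^T \left(\mathbf{I} - \frac{\partial T}{\partial \mathbf{v}}\right)^{-T} \mathbf{D}\, \frac{\partial \ell}{\partial \mathbf{z}^*}\), where \(\mathbf{D}\) is the diagonal derivative of the clip.

```python
import numpy as np

def forward(Q, p, A, b, lb, ub, rho=1.0, iters=2000):
    """Iterate the assumed fixed-point map; return v* and the KKT block H."""
    n, m = Q.shape[0], A.shape[0]
    K = np.block([[Q + rho * np.eye(n), A.T], [A, np.zeros((m, m))]])
    K_inv = np.linalg.inv(K)              # acceptable for a small demo
    H, G = K_inv[:n, :n], K_inv[:n, n:]
    v = H @ (-p) + G @ b
    for _ in range(iters):
        proj = np.clip(v, lb, ub)
        v = H @ (-p + rho * (2.0 * proj - v)) + G @ b + v - proj
    return v, H

def backward(v, H, lb, ub, rho, grad_z):
    """Gradient of the loss w.r.t. p by implicit differentiation at v*."""
    n = v.size
    # Derivative of the clip: 1 where the bound is inactive, 0 where active.
    D = np.diag(((v > lb) & (v < ub)).astype(float))
    # Jacobian of the assumed map T with respect to v.
    J = rho * H @ (2.0 * D - np.eye(n)) + np.eye(n) - D
    # Solve the transposed linear system instead of unrolling the iterations.
    w = np.linalg.solve((np.eye(n) - J).T, D @ grad_z)
    # dT/dp = -H, so the gradient is -H^T w.
    return -H.T @ w
```

For example, with \(\mathbf{Q} = \mathrm{diag}(1, 2, 3)\), \(\mathbf{p} = (-1, -0.5, 1)\), the constraint \(\sum_i z_i = 1\) and bounds \(0 \le \mathbf{z} \le 1\), solving the KKT conditions by hand gives \(\mathbf{z}^* = (5/6, 1/6, 0)\). With \(\frac{\partial \ell}{\partial \mathbf{z}^*} = (1, 0, 0)\), the gradient with respect to \(\mathbf{p}\) is \((-1/3, 1/3, 0)\), which the sketch reproduces. The backward pass costs one linear solve regardless of how many forward iterations were run, which is the main appeal over unrolled differentiation.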

From Equation (24) we have:

Simplifying Equation (52) with Equation (53) yields the final expression:

A.3 Proof of Proposition 3

From the KKT system of equations (20) we have:

From Equation (21) it follows that:

and therefore Equation (55) uniquely determines the relevant non-zero gradients. Let \(\tilde{\boldsymbol{\mu}}^*\) be as defined by Equation (29). It then follows that:

Substituting \(\hat{\mathbf{d}}_{\boldsymbol{\lambda}}\) into Equation (21) gives the desired gradients.

A.4 Data Summary

See Table 3.

A.5 Experiment 1: relative performance

See Table 4.

About this article

Cite this article

Butler, A., Kwon, R.H. Efficient differentiable quadratic programming layers: an ADMM approach. Comput Optim Appl 84, 449–476 (2023). https://doi.org/10.1007/s10589-022-00422-7

DOI: https://doi.org/10.1007/s10589-022-00422-7