Abstract

Ethane is the most abundant non-methane hydrocarbon in the Earth’s atmosphere and an important precursor of tropospheric ozone through various chemical pathways. Ethane is also an indirect greenhouse gas (global warming potential), influencing the atmospheric lifetime of methane through the consumption of the hydroxyl radical (OH). Understanding the development of trends and identifying trend reversals in atmospheric ethane is therefore crucial. Our dataset consists of four series of daily ethane columns. As with many other decadal time series, our data are characterized by autocorrelation, heteroskedasticity, and seasonal effects. Additionally, missing observations due to instrument failure or unfavorable measurement conditions are common in such series. The goal of this paper is therefore to analyze trends in atmospheric ethane with statistical tools that correctly address these data features. We present selected methods designed for the analysis of time trends and trend reversals. We consider bootstrap inference on broken linear trends and smoothly varying nonlinear trends. In particular, for the broken trend model, we propose a bootstrap method for inference on the break location and the corresponding changes in slope. For the smooth trend model, we construct simultaneous confidence bands around the nonparametrically estimated trend. Our autoregressive wild bootstrap approach, combined with a seasonal filter, is able to handle all issues mentioned above (we provide R code for all proposed methods on https://www.stephansmeekes.nl/code.).

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

There are several important reasons to study ethane time series. First, ethane is an indirect greenhouse gas influencing the atmospheric lifetime of methane. It degrades by reacting with the same oxidizer, the hydroxyl radical (OH; Aikin et al. (1982) and Rudolph (1995)), which is needed for the degradation of other major greenhouse gases such as methane. The OH radicals which are occupied by ethane are not available for the destruction of other pollutants (Collins et al. 2002). Second, ethane is an important precursor of tropospheric ozone (Fischer et al. 2014, see, e.g.,Franco et al. 2016). It contributes to the formation of ground level ozone (O3) which is—unlike stratospheric ozone—a major pollutant affecting air quality. While ozone in higher levels of the atmosphere protects us from the sun’s harmful ultraviolet rays, ground-level ozone damages ecosystems and has adverse effects on the human body. Third, ethane emissions can be used as a measure of methane emissions (e.g., Schaefer 2019). Both gases share some of their anthropogenic sources, while ethane does not have natural sources; methane is released in the atmosphere by both natural and anthropogenic activities. This makes it hard to measure the fraction of methane released by the oil and gas sector. An estimate of this fraction can be provided with the help of ethane measurements. Its monitoring is therefore crucial for the characterization of air quality and the transport of tropospheric pollution. The main sources of ethane are located in the Northern Hemisphere, and the dominating emissions are associated to production and transport of natural gas (Xiao et al. 2008).

Understanding recent and past developments in such emission data builds on the analysis of time trends. Trend estimation has received much attention in econometrics and statistics and many tools are available for this purpose. Trend estimation, however, is not enough; it is crucial to indicate the corresponding uncertainty around the estimate. This is commonly achieved by constructing confidence intervals which enable us to judge the significance of our results.

As many other climatological time series, measurements of atmospheric ethane display characteristics which complicate the analysis. In particular, calculation of uncertainty measures becomes increasingly difficult. These characteristics include strong seasonality, different degrees of variability (e.g., significant interannual changes), and missing observations due to instrument failures or unfavorable measurement conditions. Atmospheric ethane, when measured with the Fourier Transform InfraRed (FTIR) remote-sensing technique, is a prominent example in which all three problematic characteristics arise. It displays strong seasonality, a time-varying variance, and, since measurements can only be taken under clear sky conditions, many missing data points. Therefore, it is important to use methods which provide reliable results under these circumstances.

Bootstrap methods can address some of these problems, as in Gardiner et al. (2008). The authors propose a method for (linear) trend analysis of greenhouse gases. Gardiner et al. (2008) stress that the residuals of the model are serially correlated and not normally distributed. They propose an i.i.d. (independently and identically distributed) bootstrap method to construct confidence intervals around the slope parameter. This approach suffers from two major drawbacks. First, the approach does not provide confidence intervals for the break location. Second, in the presence of autocorrelation, the i.i.d. bootstrap method cannot correctly mimic the dependence structure of the residuals. Alternative bootstrap methods, such as the block or sieve bootstrap, are available to solve this problem. In terms of implementation, both require only minor modifications compared with the i.i.d. bootstrap.

Similar methods as in Gardiner et al. (2008) have been applied to various data series. De Smedt et al. (2010) investigate trends in satellite observations of formaldehyde columns in the troposphere, Noguchi et al. (2011) study linear trends in ice phenology data, and Mahieu et al. (2014) look at stratospheric hydrogen chloride increases in the Northern Hemisphere. More recently, Hausmann et al. (2016) use a bootstrap method to study trends in atmospheric methane and ethane emissions measured at Zugspitze and Lauder. The latter two papers split the sample into two periods and compare the changes in trends. It is, however, not always obvious where the sample should be split, and user-selected break points are thus somewhat arbitrary. This issue can be resolved using data-driven methods to select the break point. While trend estimates, such as slopes for linear approaches, usually come with confidence intervals, the break location is often stated without any measure of uncertainty. Obtaining confidence intervals for break locations gives valuable additional insights.

This paper aims to analyze trends in atmospheric ethane with an alternative set of statistical tools. Our dataset consists of four series of daily ethane columns (i.e., the number of molecules integrated between the ground and top of the atmosphere in a column of a given area, e.g., a square centimeter) obtained from ground-based FTIR measurements. We present selected methods designed for the analysis of time trends and trend reversals and apply them to our dataset. We focus on two different, but complimentary, approaches which are particularly suited in this context. First, we present a linear trend model which allows for a break at an unknown time point for which we also obtain confidence intervals. It provides researchers with a tool to test for the presence of a break and, if so, it additionally gives an estimate of its location together with a reliable confidence interval. With this method, it is not necessary to split the sample. If there is a break present, it automatically determines two different estimates of the trend parameters.

In the second part, we move to a more flexible specification by considering a smoothly varying nonparametric trend model. It does not impose any assumptions regarding the form of the trend. However, the trend function will no longer be characterized by merely two values—like the intercept and slope for a linear trend. The nonparametric approach results in a collection of estimates, one for every time point, which together define the trend. We are nevertheless concerned with investigating certain properties of the resulting trend. This is why we propose three additional methods to take a closer look at the trend shape.

In both parts, we suggest the use of bootstrap methods to construct confidence intervals and obtain critical values for our statistical tests. We advocate the use of a specific bootstrap method—the autoregressive wild bootstrap—which is applicable to correlated and heteroskedastic data. Its second advantage over many other bootstrap methods is that it can easily be applied to data series with missing observations.

The structure of the paper is as follows. The data description and general modeling approach are introduced in Section 2. Section 3 presents the linear trend approach and corresponding ethane results. Section 4 continues with the nonparametric trend model. The first part gives the model specifications and discusses the results. In the second part, we present how to conduct inference on the shape of the nonparametric trends and apply these methods to the data. Section 5 concludes. In the Supplementary Appendix, we give additional technical details in part A and provide a Monte Carlo simulation study in part B.

2 Trends in atmospheric ethane

2.1 The data

We study four series of atmospheric ethane measurements. The measurement stations are located at Jungfraujoch (Swiss Alps), Lauder (New Zealand), Thule (Greenland), and Toronto (Canada). Jungfraujoch, Thule, and Toronto lie in the Northern Hemisphere while Lauder is located in the Southern Hemisphere.

The Jungfraujoch measurement station is located on the saddle between the Jungfrau and the Mönch, at 46.55∘ N, 7.98∘ E, 3580 m altitude (Zander et al. 2008). The time series consists of daily ethane columns recorded under clear-sky conditions between 1986 and 2019 with a total of 2935 data points. Part of the series, from 1994 to 2014, has been analyzed in Franco et al. (2015) and Friedrich et al. (2020). It is an interesting series to study, since the measurement conditions are very favorable at this location due to high dryness and low local pollution. This is the longest FTIR time series of ethane, with more than three decades of continuous measurements available. Further details on the ground-based station at Jungfraujoch and on how measurements are obtained can be found in Franco et al. (2015).

The Lauder time series starts in 1992 and ends in 2014 and has 2550 observations. The station is located at 45∘ S, 170∘ E, 370 m altitude. A part of the series (until 2009) was investigated in Zeng et al. (2012). The measurement station in Thule is located at 76.52∘ N, 68.77∘ W, 225 m altitude (Hannigan et al. 2009). The series consists of 814 data points taken between 1999 and 2014. Finally, the Toronto station is located at 43.66∘ N, 79.40∘ W, 174 m altitude, and the series ranges from 2002 to 2014 with 1399 observations (Wiacek et al. 2007). The series obtained at Thule and Toronto have been studied in Franco et al. (2016). Whenever multiple measurements are taken on one day, a daily mean is considered.

The Jungfraujoch series contains an average of 89.9 data points per year, corresponding to data availability of about 25% on a yearly basis, Lauder has on average 115.9 data points per year (32%), Thule 54.4 (15%), and Toronto 112.1 (31%). These percentages clearly indicate that missing data is a severe and non-negligible problem in this type of analysis. In particular, simple imputation is likely to be imprecise and it may introduce strong biases into the outcomes. We therefore do not impute the data but use statistical methods that directly allow for missing values.

These data are also characterized by a strong seasonal pattern, as ethane degrades faster under warm weather conditions than in cold temperature; and therefore, the measurements display local peaks every winter period. Finally, it is worthwhile to note that the measurements display strong autocorrelation, which has to be accommodated as well.

2.2 A general trend model

Let yt denote ethane measurements at time t, where t ranges from 1 to T. Then, we formulate the trend model:

where dt is the long-run trend—our object of interest, st is the (deterministic) intra-annual seasonal pattern, and ut is a stochastic error term that captures short-run fluctuations. We assume that ut is of the form σtvt where σt is a deterministic sequence and vt is a linear process with absolutely summable coefficients. Thus, {ut} can exhibit heteroskedasticity and serial dependency, as also observed in our ethane measurement series. While this structure implies that dependence dies out over time, this can be quite slow and therefore fairly strong autocorrelation is allowed for. Moreover, it is well known that the autoregressive wild bootstrap is valid for many problems with this error specification.

Seasonal effects are modeled through st; we focus on a deterministic specification given the fixed nature of seasonal effects in this context, though stochastic effects can be allowed for as well. We model and estimate the seasonal effects with the help of Fourier terms:

This specification of the seasonal variability is widely used when estimating trends in atmospheric gases; see, e.g., Gardiner et al. (2008), Franco et al. (2015), and Franco et al. (2016). These papers show that the variability is well captured by the inclusion of S = 3 sine and cosine terms. Our own investigations confirm that three terms capture the seasonal variation wellFootnote 1; and therefore, we follow the same approach and consider (2.2) with S = 3 in the remainder of the paper.

The specification of dt depends on our trend specifications (see Sections 3 and 4). Before going into detail, we first address the missing data issue. Define the binary variable Mt as:

In order to derive theoretical properties of our methods, e.g., (Friedrich et al. 2020), one typically assumes that the missing pattern, characterized by {Mt}, is independent of the observations. Strictly speaking, in the present case, we cannot exclude a mild dependence between ethane and the missing pattern as ethane’s primary sink is oxidation by the hydroxyl radical, which is dependent on solar insolation.Footnote 2 However, given the atmospheric lifetime of ethane in relation to our sampling frequency, we argue that any dependence is negligible in comparison with other fluctuations since our purpose is analyzing long-term trends. Ethane’s lifetime is of the order of 2 months, while FTIR measurements are taken on average every 3 to 4 days. As such, the high frequency of measurements means that most variation in ethane that we capture is due to other sources.

We allow the probability of observing measurements on a given day to vary over time, which can accommodate for instance seasonal variation and long-term climatic trends. In addition, we assume that the probability of observing a measurement on a given day may be serially dependent, but we need this dependence to decay over time; for the precise meaning we have in mind, please see Friedrich et al. (2020), Assumption 4. This ensures that we, over a large enough time span, always have sufficient data available to estimate the trend. It is reasonable to assume that the pattern of the missing data points in the case of FTIR measurements—generally caused by adverse weather conditions—satisfies these assumptions.

A second source of missing data—resulting in prolonged periods without observations—might be instrument failure and/or maintenance, as well as polar nights for stations close to the poles. While instrument failure, if it is not expected to last indefinitely, can be captured by an assumption like Assumption 4 in Friedrich et al. (2020) and polar nights can be modeled by varying the probability of missing data, we stress that for prolonged periods without observations, one cannot draw meaningful conclusions. Practically, one needs data around the point of interest to estimate the trend and conduct inference. While for the linear approach, such periods are less of an issue as long as the break in trend is not thought to be located in such a period, the nonparametric approach in Section 4, which requires to construct local averages around the date, becomes completely uninformative. Such periods should therefore be treated with caution, and would have to be excluded from the analysis in order to draw meaningful conclusions. The reader is referred to Friedrich et al. (2020) for a more precise statement and detailed discussion of these assumptions.

3 Modeling trends linearly

3.1 A broken trend model

We now return to the trend model of Eq. 2.1 and specify dt as follows:

where

Equations 3.1 and 3.2 describe a broken linear trend model with a singleFootnote 3 and unknown break at date T1. The intercept and slope parameter before the break are α and β, respectively. For t > T1, the dummy variable \(D_{t,T_{1}}\) induces a change in the slope coefficient from β to \(\left (\beta +\delta \right )\) while altering the intercept in such a way as to enforce continuity at the break date. This prevents the modeled ethane concentration from exhibiting a sudden unrealistic jump at T1.

The parameters of interest are (α,β,δ), the parameters in the Fourier specification (2.2), and the unknown breakdate T1. For future reference, we denote the fitted seasonal effects by \(\hat {s}_{t}\). The inherent simplicity and small number of parameters make Eq. 3.1 easy to estimate and interpret. Both aspects make linear trend models a popular tool for trend analysis (see, e.g., Bloomfield1992; Fomby and Vogelsang 2002; Mckitrick and Volgesang 2014). However, one should realize that piecewise linearity is most likely nothing but an approximation of reality. As such, we view the broken trend model as a description of the most prominent trend features and designate any remaining nonlinearities to the error term.

3.2 Testing for a break

Following Bai and Perron (1998), we propose a formal test to determine whether a model with one break is preferred over a simple linear trend model. Let Λ denote the set of possible break dates. For some 0 < λ < 1/2, we specify this set as \({\Lambda }=\left [\lambda T,(1-\lambda )T\right ]\), that is, we require the break date to be bounded away from the boundaries of the sample. This assumption is standard in the structural breaks literature. Without this assumption, the test statistic will diverge as \(T\to \infty \) and the method will not have any asymptotic validity (see Section 5.2 of Andrews (1993) for details). In practice, λ has to be specified by the user. Its choice should ensure that sufficient data points are available on both sides of each candidate break to allow for the estimation of the unknown parameters. We set λ = 0.1. Changes in λ have little effect on the results as long as the estimated break point does not occur too close to the boundaries of Λ.Footnote 4 Empirical evidence for this claim can be found in Table 1. As visible in this table, changes in λ lead to qualitatively similar confidence intervals.

Having specified Λ, we define our test statistic as:

where we compare the sum of squared residuals of a model without break to the lowest sum of squared residuals of a model including one break. It is a formal test of the pair of hypothesis H0 : δ = 0 versus H1 : δ≠ 0, for every possible break point Tc ∈Λ. Low (high) values of FT indicate little (substantial) evidence in favor of the model with a structural break. Given a significance level of the test, the critical value of the test determines the cutoff point. The exact procedure is summarized below.

Since the test is rejected for large values of the test statistic FT, we use the (1 − α) quantile of the ordered bootstrap statistics as critical value for the break test. In Step 2 of the above algorithm, the autoregressive coefficient γ has to be chosen. The choice reflects a trade-off: a larger value captures more of the dependence whereas a smaller value allows for more variation in the bootstrap samples. We suggest to follow Friedrich et al. (2020) and use γ = 𝜃1/l with l = 1.75T1/3 and 𝜃 = 0.1.

In Step 2, we generate \(\left \{\xi _{t}^{\ast }\right \}\) for all t = 1,…,T, although in Step 3 we construct bootstrap errors and subsequently, bootstrap observations only when there exists an actual data point. This is what the multiplication by Mt in Step 3 ensures. The bootstrap sample thus correctly reflects the missing pattern present in the data.

The autoregressive wild bootstrap (AWB) can also be used to obtain confidence intervals for the unknown break date T1 and all parameter estimates. We refer the reader to Appendix A of the Supplementary Material for further details.

3.3 Empirical findings for ethane series

Panel (A) of Table 2 summarizes the results of the break test for the four ethane time series. As an example, for the Jungfraujoch, the test statistic of the F-test is 1.40 × 1033, while the bootstrapped critical value lies at 5.54 × 1031. The resulting p value of 0 indicates that the null hypothesis of no break should be rejected. The conclusions are similar for Lauder, Thule, and Toronto. We thus thus include a break point in each trend specification.

The estimated break location for the Jungfraujoch series is 2006.38 (19.05.2006) and the AWB method provides a confidence interval ranging from 2005.66 to 2007.04 (August 26, 2005, to January 14, 2007). The graphical summary in Fig. 1a plots the following: the ethane time series (gray circles), the seasonal fit of three Fourier terms (blue), the estimated broken trend (black), and the confidence interval of the break date (dotted vertical lines). We observe a significant decrease in ethane concentration of about − 1.54 × 1014 mol cm− 2 yr− 1 before the break, followed by an increase of 1.83 × 1014 mol cm− 2 year− 1 after the break. Figure 1b–d and panel (B) of Table 2 provide information on the other series.

Our results are qualitatively similar to those in Franco et al. (2015).Footnote 5 As mentioned there, the initial downward trend can be explained by general emission reductions since the mid-1980s of the fossil fuel sources in the Northern Hemisphere. This has also been reported by other studies. The upward trend seems to be a more recent phenomenon. Some studies attribute it to the recent growth in exploitation of shale gas and tight oil reservoirs, taking place in North America; see, e.g., Vinciguerra et al. (2015), Franco et al. (2016), and Helmig et al. (2016). The significant negative coefficients before and after the break in panel (B) of Table 2 indicate that Lauder is not yet impacted by the recent increase of ethane in the Northern Hemisphere. Lauder is the only site in the data set which is located in the Southern Hemisphere. Indeed, C2H6 has a mean atmospheric lifetime of 2 months, significantly shorter than the time needed to mix air between both hemispheres (Simpson et al. 2012).

4 Modeling trends as smooth nonparametric functions

The piecewise linear model provides a transparent overview of the long-term behavior of the ethane concentration. That is, fitted trends (as seen in Fig. 1) provide a clear visualization of periods of decreasing/increasing ethane concentration. However, all short-lived deviations from this linear trend are not discernible. We will now introduce a more flexible model which does not require functional form, comment on our empirical findings, and propose some tests that allow for a more detailed analysis of the data.

4.1 The nonparametric trend model

Instead of a linear specification of the trend dt in Eq. 2.1, we now specify:

where g(⋅) denotes a smooth (i.e., twice-differentiable) function defined on the interval [0,1]. As is standard with this approach (see, e.g., Robinson 1989; Wu and Zhao 2007), we map all time points into the interval \(\left [0,1\right ]\) by the division by T, with the idea that when the sample size T increases we observe points on a denser grid of [0,1]. This is mainly done for theoretical purposes, and does not affect estimation in practice.

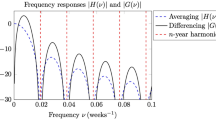

The main goal is to estimate the function g(⋅) and determine the uncertainty around this estimate. We use the nonparametric kernel estimator suggested by Nadaraya (1964) and Watson (1964) in a two-stage procedure where we first eliminate seasonal variability and next estimate the trend function nonparametrically. The estimator uses a smoothing parameter called the bandwidth. Essentially, the bandwidth determines how many data points around the point of interest are used to estimate the trend by constructing a local (weighted) average around that point. Large bandwidths produce very smooth estimates, while for small bandwidth, estimated trends fluctuate more. Bandwidth selection is important for this type of estimation (Fan 1992, e.g.). If the bandwidth is too small, approaching zero, the trend estimate almost coincides with the data points, which would be overfitting. If, in contrast, the bandwidth is very large, the trend estimate will be close to a linear trend. An appropriate bandwidth lies in between to avoid over- or underfitting and ultimately has to be selected by the user. The choice depends on the context of the study. Data-based procedures exist which can help with bandwidth selection. However, it is not uncommon to encounter problems with these methods in practice. We elaborate on this in the next section.

This model was studied by Friedrich et al. (2020), who develop bootstrap methods to construct confidence bands around the trend and establish the method’s theoretical properties. Inference on the trend is conducted using the autoregressive wild bootstrap to construct pointwise intervals in a similar fashion as above. Subsequently, we apply a three-step procedure to find simultaneous confidence bands based on the pointwise intervals. Many interesting research questions, like whether a coefficient remains zero over the whole period or whether there was an upward trend over a certain period of time, cannot be answered with pointwise confidence intervals. Therefore, we use simultaneous confidence bands as discussed in Härdle and Marron (1991), Bühlmann (1998), and Neumann and Polzehl (1998). For technical details on the estimation and bootstrap methods, and how to obtain simultaneous confidence bands, we refer to Appendix A of the Supplementary Material.

4.1.1 Smooth trends in ethane

To estimate the trend function, we first obtain residuals from a regression of the ethane data on three Fourier terms. From these residuals, we estimate the trend function nonparametrically using a local constant kernel estimator with an Epanechnikov kernel.Footnote 6 We illustrate a data-driven bandwidth selection using the Modified Cross Validation (MCV) approach of Chu and Marron (1991) which is discussed in the nonparametric trend setting in Friedrich et al. (2020). Technical details can again be found in Appendix A.

The MCV procedure has to be applied with care. The range of possible bandwidths over which we minimize the criterion can have a major effect on the resulting optimal bandwidth. The MCV criterion function can have multiple local minima or, in some cases, the function can be monotonically increasing such that it always selects the smallest possible bandwidth. The latter can occur if the values contained in the range of possible bandwidths are too small, but it can also happen using a reasonable grid. To illustrate, in our analysis, we allow for values between 0.01 and 0.25 in steps of 0.005. This yields a total of 50 possible bandwidths. We plot the criterion as function of the bandwidth in Fig. 2. For all series except the Jungfraujoch, we can observe at least two local minima which we collect in the caption. The bandwidth choice depends on the context of the study and has to be made by the user. In our case, we prefer a bandwidth that is small enough to allow us to see developments in the trend curve that are missed by the linear trend approach but which produces a reasonably smooth estimate. For Lauder, we therefore select the first bandwidth and for Thule and Toronto the second. In the Jungfraujoch case, the criterion is monotonically increasing. There is a kink at 0.03; the resulting trend estimate with this bandwidth still contains a lot of variation. Since we are interested in longer term movements, we select a slightly larger value of 0.05.

Figure 3 plots the seasonally adjusted data points and the nonparametric trend with the 95% simultaneous confidence bands in blue. If we follow the movements of the Jungfraujoch trend curve in panel (a), we see local peaks around the year of 1998 and 2002–2003, which were not visible in the previous analysis. Capturing these two events is possible thanks to the flexibility of the nonparametric approach. A similar peak in 1998 is also visible in the Lauder series (panel (b)) and in 2002–2003 in the Thule series (panel (c)). The peaks can be attributed to boreal forest fires which were taking place mainly in Russia during both periods. Geophysical studies have investigated these events in association with anomalies in carbon monoxide emissions (Yurganov et al. 2004; Yurganov et al. 2005). In such fires, carbon monoxide is co-emitted with ethane, such that these events are likely explanations for the peaks we observe.

In addition, we observe a significant upward trend toward the end of the sample period after a minimum has been reached in 2006 for Jungfraujoch and Thule and around 2009 for Toronto (panel (d)). This is in line with the parametric analysis and cannot be observed at Lauder. Looking at the confidence bands for the three upward trending series after their minimum has been reached, it is impossible to completely embed a horizontal line into the bands, signaling strong evidence of a nonzero upward trend. A more recent development is the slow down of the upward trend resulting in a peak around 2015. This is a novel finding due to the longer range of our sample. A potential explanation could be the drastic drop in oil prices which occurred in late 2014. Lower oil prices will likely have an impact on the oil and gas industry making it less profitable to exploit shale gas wells.

4.2 Inference on trend shapes

Based on the trend estimates from previous sections, we are interested in particular features of the trend curve. Having in mind the shape of the trends that we discovered, one important feature for our analysis is the local minimum in 2006 of the trend in the Jungfraujoch ethane column series. All other ethane series from the Northern Hemisphere also display a (local) minimum. In the Thule trend estimate, it is located in 2005 and in Toronto in 2008. Therefore, we are interested in the uncertainty around the location of such minima. In order to investigate this issue, we again rely on the autoregressive wild bootstrap method presented above. This is discussed in the first part of this section. The analysis can equally be applied to a local maximum of the trend curve, it is not restricted to the analysis of local minima.

Another interesting feature is the resulting post-minimum upward trend. We have a closer look at the specific form of this trend in the second part. Specifically, we suggest two formal tests; one will compare the nonparametric trend to a linear trend and the other one tests for monotonic behavior in the nonparametric trend. All approaches are applied to investigate the trend in the Jungfraujoch, Thule, and Toronto time series. As Lauder does not show the same trend pattern, we drop it for the remainder of the paper.

4.2.1 Analyzing the locations of extrema

We are interested in the minimum of the trend estimate, which we denote by \(\hat {g}_{min}\), and its location by tmin. Our goal is to construct a confidence interval for tmin. For this, we use the autoregressive wild bootstrap to construct bootstrap observations in a similar vein as presented in Algorithm 1. To the bootstrap observations, we then apply the nonparametric estimator and determine the location of the local minimum for each bootstrap trend closest to tmin—the original minimum—and denote it by \(t_{min}^{\ast }\). We give the bootstrap algorithm in Appendix A of the Supplementary Material.

The proposed analysis can be used to obtain further evidence on the location of a potential trend reversal and the results can be compared with the break location found in the linear trend analysis discussed in Section 3. This new approach is less robust in a sense that it is sensitive to the choice of bandwidth that was used to generate the nonparametric trend estimate. It is, however, much more flexible and less restrictive than the break point detection, as we do not force the trend before and after the minimum to be linear.

The minimum of the estimated Jungfraujoch trend is located at 2006.86 (November 10, 2006). When applying the adapted autoregressive wild bootstrap to obtain 95% confidence intervals around that location, we find 2006.52 to 2007.38 (July 08, 2006, to May 16, 2007), which lies completely within the confidence interval obtained for the break location in Section 3 (2005.66 to 2007.04). It is a good sign that we obtain a qualitatively similar result from these two different approaches. The nonparametric approach with this choice of the bandwidth parameter results in a smooth trend, while the parametric specification includes an abrupt break through which the minimum is defined. Similar results are obtained for the Toronto ethane series with a minimum in 2008.84 (October 30, 2008) and the 95% confidence interval ranging from 2007.81 to 2009.53 (October 23, 2007 to July 12, 2009), and for Thule with a minimum in 2005.50 (June 30, 2005) and confidence intervals ranging from 2005.17 to 2007.40 (March 02, 2005, to May 23, 2007).

4.2.2 A bootstrap-based specification test

When comparing both approaches, the (piecewise) linear and the nonparametric one permitting any smooth nonlinear shape, an obvious question arises as to whether we can say more about the appropriateness of the two trend shapes. While the linear trend has some desirable properties—e.g., we get an estimate of the average annual decrease or increase in the data—it might be too restrictive to model the underlying true trend. With the nonparametric approach, we get a better understanding of the true trend shape. Due to its flexibility, however, we do not obtain parameter estimates to measure and compare trends. It can nevertheless be seen as a tool to investigate the plausibility of a linear trend in the different series or subsamples.

Kapetanios (2008) designs a bootstrap-based test which can be used to test for parameter constancy under the null hypothesis against smoothly occurring structural change. Based on this work, we propose a modification of the test which is able to provide evidence if a certain parametric shape is appropriate to describe the trend in the data at hand.

We first introduce the test in a general framework. The more specific case of linearity will be discussed later. For the general framework, consider the following null hypothesis:

where g0(𝜃,⋅) belongs to a parametric family \(\mathbf {G}=\{g(\boldsymbol {\theta },\cdot ); \boldsymbol {\theta } \in {\Theta } \subset \mathbb {R}^{d}\}\) with d being the number of parameters in 𝜃. Furthermore, the set \(\mathcal {G}_{m}=\left \{t_{1},t_{2},...,t_{m}\right \}\) contains the time points for which the hypothesis should be tested. Under the alternative, the trend does not follow the parametric shape given by g0(𝜃,⋅), but can be expressed as in model (4.1). As a special case of the test, which is of particular interest in our application, we can consider the linear trend function g0(𝜃,⋅) = α + βt such that \(\boldsymbol {\theta }=\left (\alpha ,\beta \right )\) and d = 2. A similar framework is considered in Wang and Van Keilegoom (2007) and Zhang and Wu (2011) as well as in Lyubchich et al. (2013). The proposed tests are, however, designed for equally spaced observations and, therefore, not easily applicable to series with missing data.

We use an adaption of the test statistic in Kapetanios (2008):

where \(\hat {g}(t/T)\) denotes the nonparametric kernel estimator, as before, and \(\widehat {\boldsymbol {\theta }}\) denotes the parameter estimates under the null hypothesis. The type of estimator we choose under the null hypothesis depends on the specific case and the form of the parametric function. In the linear trend case, we can use OLS to obtain estimates \(\hat {\alpha }\) and \(\hat {\beta }\). As the subscript t shows, this test statistic is pointwise. Since we are interested in the trend over time, we follow Kapetanios (2008) and use the two summary statistics for the set \(\mathcal {G}_{m}=\left \{t_{1},t_{2},...,t_{m}\right \}\):

To obtain critical values for the test statistics, we rely again on the autoregressive wild bootstrap method.Footnote 7

Our test can loosely be interpreted as a functional extension of a traditional specification test in the spirit of Hausman (1978), where we have one estimator that is consistent under both the null and alternative hypotheses—here the nonparametric one—and one that is more efficient under the null hypothesis—the correctly specified parametric model—but inconsistent under the alternative. Therefore, we expect the two estimators to be close to each other under the null, for all considered t, and hence Qt will be close to zero. Under the alternative, the estimators will differ, leading Qt to diverge at some t. We then detect these divergences by aggregating the Qt statistics over all \(t \in \mathcal {G}_{m}\) in one of the two ways described above.

The exact specification of the set \(\mathcal {G}_{m}\) depends on the application at hand. In practice, often a set of several consecutive points is needed to be able to estimate the parameters under the null hypothesis. This is the case, for example, with the linear trend application that we focus on in the remainder of the section.

We choose the set \(\mathcal {G}_{m}\) in such a way that it covers the period of increase in the ethane trends. Specifically, we select the starting point of Gm as the minimum of the nonparametrically estimated trend, which we have determined in the previous section. The end point coincides with the end of the sample. While one could clearly take any starting point, testing for linearity appears counterintuitive if we start before the minimum. This would be equivalent to asking whether a line with a kink could be described as linear. Since this null hypothesis would surely be rejected, we consider a more interesting part of the sample.Footnote 8 We connect the nonparametrically estimated part of the trend to the linear part imposed by the null hypothesis at the point \(\hat {g}_{min}\), thereby testing whether the trend after this point is linear. To connect the two subsamples, the intercept is determined by the value of the trend before \(\hat {g}_{min}\), and only the slope parameter is estimated by OLS. For the calculation of the test statistic (4.2) we use as \(g_{0}(\widehat {\boldsymbol {\theta }},t)\) the best fitting linear trend line that goes through the minimum for all t in \(\mathcal {G}_{m}\).

The results are summarized in panel (A) of Table 3. We report the values of the two different versions of the test as well as the bootstrap critical values and the resulting p values. The test leads to a rejection of the null hypothesis of a linear trend for the Jungfraujoch series at a 1% significance level, while it does not reject linearity for Thule and Toronto at any reasonable level.

4.2.3 Two tests for monotonicity

In the previous section, we proposed a bootstrap-based test to investigate if the trend can be best described by a specific parametric shape—in this case linearity—or by the unrestricted nonparametric alternative. In some applications, however, the question whether the trend has been monotonically increasing or decreasing over a certain period can already be enough evidence. In the case of the ethane series, we are mainly interested in establishing an upward trend in the post-minimum period of the sample. Therefore, we propose to additionally use two tests for monotonicity.

The test considers a monotonically increasing trend function under the null hypothesis. The alternative is the same as before, a nonparametric unrestricted trend. Formally, this can be written as:

or, since under the given smoothness assumptions the function g(⋅) is differentiable:

In this case, the set \(\mathcal {I}\) must be a compact interval in the domain of the function g(⋅). The paper by Ghosal et al. (2000) proposes the following test statistic to test the above null hypothesis, for \(t\in \mathcal {I}\):

with

As kernel function, we use \(K(x)=0.75\left (1-x^{2}\right )\) for − 1 < x < 1 and 0 otherwise, as Ghosal et al. (2000) suggest. We also follow their bandwidth recommendation hU = 0.5T− 1/5. The test is based on the idea that for an increasing function, increments will be positive; and thus, the test statistic should satisfy U1,t ≤ 0 for most \(t\in \mathcal {I}\) under the null. This can be easily verified as U1,t sums over weighted differences of observations \(\left (y_{j}-y_{i}\right )\) such that j > i; or more precisely, it sums over the sign thereof. The test statistic U1,t corresponds to one point in the interval of interest, \(\mathcal {I}\), similar to the test statistic Qt in Eq. 4.2. As summary statistic, Ghosal et al. (2000) propose a supremum statistic:

Additionally, we use a second test to support our findings. This second test is proposed in Chetverikov (2019). The difference compared with Eq. 4.5 is the use of the sign function, which is omitted in this version of the test. The full differences and not only their sign will be accounted for. This gives the following test statistic:

which we apply with the same specifications as we use for U1,t. Again, this statistic is negative under the null hypothesis due to the same reason as above. In line with the above procedure, we calculate summary test statistics U2 whose exact definition follows in analogy to U1.

To obtain critical values, we rely once again on the autoregressive wild bootstrap. In this case, we need to make one adjustment to the bootstrap algorithm for the nonparametric trend reported in Appendix A, which is that the trend is set to zero in the construction of bootstrap observations. This makes sure that the null hypothesis is satisfied.

Coming back to the original research question and motivation for this test, we now investigate the post-minimum nonparametric trend of the three ethane series obtained at Jungfraujoch, Thule, and Toronto. After having rejected linearity for the Jungfraujoch location, this test helps us to establish whether there has been a monotonic upward trend in the series since their respective minimum. Thus, the set \(\mathcal {I}\) over which we test for monotonicity coincides with the set Gm we selected for the linearity test above.

The results are summarized in panel (B) of Table 3. We report the values of the two different versions of the test as well as the bootstrap critical values and the resulting p values. The test provides evidence that the Jungfraujoch post-minimum trend is not monotonically increasing. This result is likely driven by the slow down in the estimated trend around 2015. For the other two locations, we cannot reject the null hypothesis and conclude that the post-minimum trend in the ethane burden at Thule and Toronto is monotonically increasing, which is in line with the results from the (post-minimum) linearity test.

5 Conclusion

We analyze trends and trend reversals in a set of four time series of ethane total columns. Three series are obtained from measurement stations located in the Northern Hemisphere: Jungfraujoch in the Swiss Alps, Thule in Greenland, and Toronto in Canada. One series is taken in Lauder which is located in the Southern Hemisphere. The stations record daily observations of ethane abundance in the atmosphere. Depending on the conditions, however, measurements cannot be made during cloudy days resulting in time series with data available on about one day in three. This is a limitation frequently encountered in (climatological) time series, which causes problems when constructing confidence intervals around the trend estimate.

This paper proposes two approaches for trend analysis in such settings. First, a broken linear trend model is estimated with unknown break date. Piecewise linear trends have the advantage of being easy to estimate and interpret. However, imposing linearity may obscure important features that are not well captured by linearity. Second, we move to a nonlinear and nonparametric model. This model allows us to capture much richer features at the expense of more complicated estimation and interpretation. For the construction of confidence intervals in the nonparametric model, we use an autoregressive wild bootstrap method. Additionally, we propose several diagnostic tools to investigate the shape of the resulting trend.

There is a significant upward trend in atmospheric ethane, starting around 2006/2007. This finding is confirmed by both approaches as the break of the linear model and the local minimum of the nonparametric approach is located in 2006. The subsequent results of a formal test for linearity indicate that a linear trend is not appropriate for the post-minimum period of the Jungfraujoch and Thule series. In addition, the nonparametric estimation reveals trend functions which exhibit local maxima around the years of 1998 and 2002–2003 which coincide with boreal forest fires in Russia which were not captured by the linear model.

The two approaches proposed in this paper should be viewed as complimentary rather than competing methods. The simplicity of the broken linear trend model allows us to indicate a numerical value for the slope parameter, summarizing the development of the trend over a particular period. Even if one does not truly believe in linearity of the trend, it may still prove to be a useful approximation given its simplicity. Alternatively, the complexity of the nonlinear approach has the potential of providing us with additional information and capturing features obscured by the linear model. At the same time, it can be used to confirm previous findings and to judge the plausibility and appropriateness of the linear trend model.

A limitation of the piecewise linear trend model presented here is that it can accommodate only one break, putting it at a natural disadvantage to the more flexible nonparametric approach. Indeed, broken linear trend models with multiple breaks at unknown locations can be estimated using, for instance, the methods proposed in Bai and Perron (1998), which also allow one to test for the number of breaks in the trend. However, constructing confidence intervals for the locations of multiple breaks is more complicated in such models, and the bootstrap method for a single break is not easily adapted. The extension of the bootstrap methodology to multiple breaks is left for future research.

Notes

Detailed results are available on request.

We thank an anonymous referee for pointing this out.

Bai and Perron (1998) discuss inference in regression models with multiple unknown breaks. One of their findings is that break locations can be estimated sequentially. An extension of our bootstrap methodology to multiple structural changes is left for future research.

We thank an anonymous referee for pointing out the difficulties that can occur when choosing λ in practice. In general, the practitioner should proceed with care when the estimated break date is in close proximity to the start or end of the sample. Re-estimating the model with a slightly different value of λ should indicate whether results should be treated with caution.

A difference with the results in Franco et al. (2015) is the estimated break data. Based on data until August 2014, they place the break point beginning 2009. Two facts can explain this apparent discrepancy. First, the break point in Franco et al. (2015) was determined by finding the minimum in the running mean of the daily average data instead of selecting the break point that achieves the minimum sum of squared residuals among all candidate broken trend models. Second, Franco et al. (2015) do not report a confidence interval for their break location as appropriate bootstrap methodology was not available at the time. As such, it is hard to judge whether our outcomes are significantly different.

Next to bootstrapping, Kapetanios (2008) also investigates asymptotic tests which show a particularly poor performance. We can therefore expect that a bootstrap-based test is also preferred in our slightly different setup.

Alternatively, one could test whether a piecewise linear model is appropriate for a larger part of the sample, if at least one is comfortable with using the nonparametric estimator for modeling an abrupt change by a smooth approximation. The mechanics of such a test are the same as the test we consider here.

References

Aikin AC, Herman JR, Maier EJ, McQuillan CJ (1982) Atmospheric chemistry of ethane and ethylene. J Geophys Res 87(C4):3105. https://doi.org/10.1029/JC087iC04p03105

Andrews DWK (1993) Tests for parameter instability and structural change with unknown change point. Econometrica 61(4):821–856

Bai J, Perron P (1998) Estimating and testing linear models with multiple structural changes. Econometrica 66(1):47–78

Bloomfield P (1992) Trends in global temperatures. Clim Chang 21:275–287

Bühlmann P (1998) Sieve bootstrap for smoothing in nonstationary time series. Ann Stat 26:48–83

Chetverikov D (2019) Testing regression monotonicity in econometric models. Economet Theor 35 (4):729–776. https://doi.org/10.1017/S0266466618000282

Chu C-K, Marron JS (1991) Comparison of two bandwidths selectors with dependent errors. Annals Stat 19(4):1906–1918

Collins WJ, Derwent RG, Johnson CE, Stevenson DS (2002) The oxidation of organic compounds in the troposphere and their global warming potentials. Clim Chang 52:453. https://doi.org/10.1023/A:1014221225434

De Smedt I, Stavrakou T, Müller J-F, van der ARJ, Van Rosendael M (2010) Trend detection in satellite observations of formaldehyde troposheric columns. Geophys Res Lett 37:L18808

Fan J (1992) Design-adaptive nonparametric regression. J Am Stat Assoc 87:998–1004

Fan J (1993) Local linear regression smoothers and their minimax efficiencies. Ann Stat 21:196–216

Fan J, Gijbels I (1992) Variable bandwidth and local linear regression smoothers. Ann Stat 20:2008–2036

Fischer EV, Jacob DJ, Yantosca RM, Sulprizio MP, Millet DB, Mao J, Paulot F, Singh HB, Roiger A, Ries L, Talbot RW, Dzepina K, Pandey Deolal S (2014) Atmospheric peroxyacetyl nitrate (PAN): a global budget and source attribution. Atmos Chem Phys 14(5):2679–2698. https://doi.org/10.5194/acp-14-2679-2014

Franco B, Bader W, Toon GC, Bray C, Perrin A, Fischer EV, Sudo K, Boone CD, Bovya B, Lejeune B, Servais C, Mahieu E (2015) Retrieval of ethane from ground-based FTIR solar spectra using improved spectroscopy: Recent burden increase above Jungfraujoch. J Quant Spectrosc Radiat Trans 160:36–49

Franco B, Mahieu E, Emmons LK, Tzompa-Sosa ZA, Fischer EV, Sudo K, Bovy B, Conway S, Griffin D, Hannigan JW, Strong K, Walker KA (2016) Evaluating ethane and methane emissions associated with the development of oil and natural gas extraction in North America. Environment Res Lett 11 (4):044010. https://doi.org/10.1088/1748-9326/11/4/044010,2016

Friedrich M, Smeekes S, Urbain J-P (2020) Autoregressive wild bootstrap inference for nonparametric trends. J Econ 214(1):81–109

Fomby T, Vogelsang TJ (2002) The application of size-robust trend statistics to global warming temperature series. J Clim 15:117–123

Gardiner T, Forbes A, de Mazière M, Vigouroux C, Mahieu E, Demoulin P, Velazco V, Notholt J, Blumenstock T, Hase F, Kramer I, Sussmann R, Stremme W, Mellqvist J, Strandberg A, Ellingsen K, Gauss M (2008) Trend analysis of greenhouse gases over Europe measuerd by a network of ground-based remote FTIR instruments. Atmos Chem Phys 8:6719–6727

Ghosal S, Sen A, van der Vaart A (2000) Testing monotonicity of regression. Ann Stat 28:1054–1082

Hannigan JW, Coffey MT, Goldman A (2009) Semiautonomous FTS observation system for remote sensing of stratospheric and tropospheric gases. J Atmos Oceanic Technol 26(9):1814–1828

Härdle W, Marron JS (1991) Bootstrap simultaneous error bars for nonparametric regression. Ann Stat 19:778–796

Hausman JA (1978) Specification tests in econometrics. Econometrica 46:1251–1271

Hausmann P, Sussmann R, Smale D (2016) Contribution of oil and natural gas production to renewed increase in atmospheric methane (2007-2014): top-down estimate from ethane and methane column observations. Atmos Chem Phys 16:3227–3244

Helmig D, Rossabi S, Hueber J, Tans P, Montzka SA, Masarie K, Thoning K, Plass-Duelmer C, Claude A, Carpenter LJ, Lewis AC, Punjabi S, Reimann S, Vollmer MK, Steinbrecher R, Hannigan JW, Emmons LK, Mahieu E, Franco B, Smale D, Pozzer A (2016) Reversal of global atmospheric ethane and propane trends largely due to US oil and natural gas production. Nat Geosci 9:490–495

Kapetanios G (2008) Bootstrap-based tests for deterministic time-varying coefficients in regression models. Comput Stat Data Anal 53:534–545

Lyubchich Y, Gel R, El-Shaarawi A (2013) Detecting Non-Monotonic trends in environmental time series: a fusion of local regression and bootstrap. Environmetrics 24(4):209–226

McKitrick RR, Vogelsang TJ (2014) HAC Robust trend comparisons among climate series with possible level shifts. Environmetrics 25:528–54

Mahieu E, Chipperfield MP, Notholt J, Reddmann T, Anderson J, Bernath PF, Blumenstock T, Coffey MT, Dhomse SS, Feng W, Franco B, Froidevaux L, Griffith DWT, Hanniga JW, Palm M, Paton-Walsh C, russell JM III, Schneider M, Servais C, Smale D, Walker KA (2014) Recent Northern Hemisphere stratospheric HCl increase due to atmospheric circulation changes. Nature 515:104–107

Nadaraya EA (1964) On estimating regression. Theor Probab Its Appl 9:141–142

Neumann MH, Polzehl J (1998) Simultaneous bootstrap confidence bands in nonparametric regression. J Nonparametr Stat 9:307–333

Noguchi K, Gel YR, Duguay CR (2011) Bootstrap-based tests for trends in hydrological time series, with application to ice phenology data. J Hydrol 410:150–161

Robinson PM (1989) Nonparametric estimation of time varying parameters. In: Hackl P (ed) Statistics, analysis and forecasting of economic structural change. Springer, Berlin

Rudolph J (1995) The tropospheric distribution and budget of ethane. J Geophys Res 100(D6):11369. https://doi.org/10.1029/95JD00693

Schaefer H (2019) On the causes and consequences of recent trends in atmospheric methane. Curr Clim Change Rep 5(4):259–274. https://doi.org/10.1007/s40641-019-00140-z

Simpson IJ, Sulbaek Andersen MP, Meinardi S, Bruhwiler L, Blake NJ, Helmig D, Rowland FS, Blake DR (2012) Long-term decline of global atmospheric ethane concentrations and implications for methane. Nature 488(7412):490–494. https://doi.org/10.1038/nature11342

Vinciguerra T, Yao S, Dadzie J, Chittams A, Deskins T, Ehrman S, Dickerson RR (2015) Regional air quality impacts of hydraulic fracturing and shale natural gas activity: evidence from ambient VOC observations. Atmos Environ 110:144–50

Wang L, Van Keilegoom I (2007) Nonparametric test for the form of parametric regression with time series errors. Stat Sin 17:369–386

Watson GS (1964) Smooth regression analysis. Sankhyā Ser A 26:359–372

Wiacek A, Taylor JR, Strong K, Saari R, Kerzenmacher T, Jones NB, Griffith DWT, Wiacek A (2007) Ground-Based Solar Absorption FTIR Spectroscopy: Characterization of Retrievals and First Results from a Novel Optical Design Instrument at a New NDACC Complementary Station. J Atmos Oceanic Technol 24 (3):432–448

Wu WB, Zhao Z (2007) Inference of trends in time series. J R Stat Soc B 69:391–410

Xiao Y, Logan JA, Jacob DJ, Hudman RC, Yantosca R, Blake DR (2008) Global budget of ethane and regional constraints on U.S. sources. J Geophys Res 113(D21) https://doi.org/10.1029/2007JD009415

Yurganov LN, Blumenstock T, Grechko EI, Hase F, Hyer EJ, Kasischke IS, Koike M, Kondo Y, Kramer I, Leung F-Y, Mahieu E, Mellqvist J, Notholt J, Novelli PC, Rinsland CP, Scheel HE, Schulz A, Strandberg A, Sussmann R, Tanimoto H, Velazco V, Zander R, Zhao Y (2004) A quantitative assessment of the 1998 carbon monoxide emission anomaly in the Northern Hemisphere based on total column and surface concentration measurements. J Geophys Res 109:D15305

Yurganov LN, Duchatelet P, Dzhola AV, Edwards DP, Hase F, Kramer I, Mahieu E, Mellqvist J, Notholt J, Novelli PC, Rockmann A, Scheel HE, Schneider M, Schulz A, Strandberg A, Sussmann R, Tanimoto H, Velazco V, Drummond JR, Gille JC (2005) Increased Northern Hemispheric carbon monoxide burden in the troposphere in 2002 and 2003 detected from the ground and from space. Atmos Chem Phys 5(2):563–573

Zander R, Mahieu E, Demoulin P, Duchatelet P, Roland G, Servais C, De Mazière M., Reimann S, Rinsland CP (2008) Our changing atmosphere: Evidence based on long-term infrared solar observations at the Jungfraujoch since 1950. Sci Total Environ 391(2-3):184–195. https://doi.org/10.1016/j.scitotenv.2007.10.018

Zeng G, Wood SW, Morgenstern O, Jones NB, Robinson J, Smale D (2012) Trends and variations in CO, C2H6, and HCN in the Southern Hemisphere point to the declining anthropogenic emissions of CO and C2H6. Atmos Chem Phys 12(16):7543–7555

Zhang T, Wu WB (2011) Testing parametric assumptions of trends of a nonstationary time series. Biometrika 98(3):599–614

Funding

Open Access funding provided by Projekt DEAL.

Author information

Authors and Affiliations

Corresponding author

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Support to the Liège team has been primarily provided by the F.R.S. - FNRS (Brussels) under Grant J.0147.18. Emmanuel Mahieu is a Research Associate with F.R.S. - FNRS. The vital support from the GAW-CH programme of MeteoSwiss is acknowledged. Mission expenses at the Jungfraujoch station were funded by the Fédération Wallonie-Bruxelles. We thank the International Foundation High Altitude Research Stations Jungfraujoch and Gornergrat (HFSJG, Bern) for supporting the facilities needed to perform the observations. W. Bader has received funding from the European Union’s Horizon 2020 research and innovation programme under the Marie Sklodowska-Curie grant agreement no. 704951, and from the University of Toronto through a Faculty of Arts & Science Postdoctoral Fellowship Award. We gratefully acknowledge D. Smale (National Institute of Water and Atmospheric research, NIWA, Lauder, NZ), J.W. Hannigan (National Center for Atmospheric Research, NCAR, Boulder, CO, USA) and K. Strong (University of Toronto, Toronto, ON, Canada) for providing FTIR data for the Lauder, Thule and Toronto stations, respectively. Network for the Detection of Atmospheric Composition Change data are publicly available at http://www.ndacc.org. The NIWA FTIR program is funded through the New Zealand government’s core research grant framework from the Ministry of Business, Innovation and Employment. NCAR is sponsored by the National Science Foundation. The NCAR FTS observation program at Thule is supported under contract by the National Aeronautics and Space Administration (NASA). The Thule work is further supported by the NSF Office of Polar Programs (OPP). Measurements at Toronto were made at the University of Toronto Atmospheric Observatory, funded by CFCAS, ABB Bomem, CFI, CSA, ECCC, NSERC, ORDCF, PREA, and the University of Toronto.

Electronic supplementary material

Below is the link to the electronic supplementary material.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Friedrich, M., Beutner, E., Reuvers, H. et al. A statistical analysis of time trends in atmospheric ethane. Climatic Change 162, 105–125 (2020). https://doi.org/10.1007/s10584-020-02806-2

Received:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s10584-020-02806-2