Abstract

The detection of online cyberbullying has seen an increase in societal importance, popularity in research, and available open data. Nevertheless, while computational power and affordability of resources continue to increase, the access restrictions on high-quality data limit the applicability of state-of-the-art techniques. Consequently, much of the recent research uses small, heterogeneous datasets, without a thorough evaluation of applicability. In this paper, we further illustrate these issues, as we (i) evaluate many publicly available resources for this task and demonstrate difficulties with data collection. These predominantly yield small datasets that fail to capture the required complex social dynamics and impede direct comparison of progress. We (ii) conduct an extensive set of experiments that indicate a general lack of cross-domain generalization of classifiers trained on these sources, and openly provide this framework to replicate and extend our evaluation criteria. Finally, we (iii) present an effective crowdsourcing method: simulating real-life bullying scenarios in a lab setting generates plausible data that can be effectively used to enrich real data. This largely circumvents the restrictions on data that can be collected, and increases classifier performance. We believe these contributions can aid in improving the empirical practices of future research in the field.

1 Introduction

Learning to accurately classify rare phenomena within large feeds of data poses challenges for numerous applications of machine learning. The volume of data required for representative instances to be included is often resource-consuming, and limited access to such instances can severely impact the reliability of predictions. These limitations are particularly prevalent in applications dealing with sensitive social phenomena such as those found in the field of forensics: e.g., predicting acts of terrorism, detecting fraud, or uncovering sexually transgressive behavior. Their events are complex and require rich representations for effective detection. Conversely, online text, images, and meta-data capturing such interactions have commercial value for the platforms they are hosted on and are often off-limits to protect users’ privacy.

One application affected by such limitations, with increasing societal importance and growing research interest over the last decade, is cyberbullying detection. Not only is the task sensitive, but its data is also inherently scarce in terms of public access. Most cyberbullying events are off-limits to the majority of researchers, as they take place in private conversations. Fully capturing the social dynamics and complexity of these events requires much richer data than has been available to the research community up until now. Related to this, various issues with the operationalization of cyberbullying detection research were recently demonstrated by Rosa et al. (2019), who share many of the concerns we discuss in this work. While their work focuses on methodological rigor in prior research, we focus on the core limitations of the domain and the complexity of cyberbullying detection. Through an evaluation of the current advances on the task, we illustrate how the mentioned issues affect current research, particularly cross-domain. Finally, we demonstrate crowdsourcing in an experimental setting to potentially alleviate the task’s data scarcity. First, however, we introduce the theoretical framing of cyberbullying and the task of automatically detecting such events.

1.1 Cyberbullying

Asynchrony and optional anonymity are characteristic of online communication as we know it today; it heavily relies on the ability to communicate with people who are not physically present, and stimulates interaction with people outside of one’s group of close friends through social networks (Madden et al. 2013). The rise of these networks brought various advantages to adolescents: studies show positive relationships between online communication and social connectedness (Bessiere et al. 2008; Valkenburg and Peter 2007), and that self-disclosure on these networks benefits the quality of existing and newly developed relationships (Steijn and Schouten 2013). The popularity of social networks and instant messaging among children has them connecting to the Internet from increasingly younger ages (Ólafsson et al. 2013), with \(95\%\) of teensFootnote 1 ages 12–17 online, of which \(80\%\) are on social media (Lenhart et al. 2011). For them, however, the transition from social interaction predominantly taking place on the playground to being mediated through mobile devices (Livingstone et al. 2011) has also moved negative communication to a platform where indirect and anonymous interaction has a window into homes.

A range of studies conducted by the Pew Research CenterFootnote 2, most notably Lenhart et al. (2011), provides detailed insight into these developments. While \(78\%\) of teens report positive outcomes from their social media interactions, \(41\%\) have experienced at least some adverse outcomes, ranging from arguments and trouble with school and parents to physical fights and ended friendships. Of all teens, \(19\%\) were bullied in the 12 months prior to the study, and \(8\%\) reported that this was some form of cyberbullying. These numbers are comparable to other research: 7% for Grades 6–12 (Robers et al. 2015) and 15% for Grades 9–12 (Kann et al. 2014). Bullying has for some time been regarded as a public health risk by numerous authorities (Xu et al. 2012), with depression, anxiety, low self-esteem, school absence, lower grades, and risk of self-medication as primary concerns.

The act of cyberbullying, other than being conducted online, shares the characteristics of traditional bullying: there is a power imbalance between the bully and victim (Sharp and Smith 2002), and the harm is intentional, repeated over time, and has a negative psychological effect on the victim (DeHue et al. 2008). With the Internet as a communication platform, however, some additional aspects arise: location, time, and physical presence have become irrelevant factors in the act. Accordingly, several categories unique to this form of bullying are defined (Willard 2007; Beran and Li 2008): flaming (sending rude or vulgar messages), outing (posting private information or manipulated personal material of an individual without consent), harassment (repeatedly sending offensive messages to a single person), exclusion (from an online group), cyberstalking (terrorizing through sending explicitly threatening and intimidating messages), denigration (spreading online gossip), and impersonation. Moreover, in addition to optional anonymity hiding the critical figures behind an act of cyberbullying, it could also obfuscate the number of actors (i.e., there might only be one even though it seems there are more). Cyberbullying acts can prove challenging to remove once published; messages or images might persist through sharing and be viewable by many (as is typical for hate pages), or available to a few (in group or direct conversations). Hence, it can be argued that any form of harassment has become more accessible and intrusive. This online nature has an advantage as well: in theory, platforms record these bullying instances. Therefore, an increasing number of researchers are interested in the automatic detection (and prevention) of cyberbullying.

1.2 Detection and task complexity

The task of cyberbullying detection can be broadly defined as the use of machine learning techniques to automatically classify messages by whether their text contains bullying content, or to infer characteristic features based on higher-order information, such as user features or social network attributes. Bullying is most apparent in younger age groups through direct verbal outings (Vaez et al. 2004), and more subtle in older groups, where it mainly manifests in more complex social dynamics such as exclusion, sabotage, and gossip (Privitera and Campbell 2009). Therefore, the majority of work on the topic focuses on younger age groups, be it deliberately or because the primary source of data is social media, where these age groups are likely to be highly represented on some platforms (Duggan 2015). Apart from the well-established challenges that language use poses (e.g., ambiguity, sarcasm, dialects, slang, neologisms), two factors in the event add further linguistic complexity, namely actor role and associated context. In contrast to tasks where adequate information is provided in the text of a single message alone, completely mapping a cyberbullying event and pinpointing bully and victim implies some understanding of the dynamics between the involved actors and the concurrent textual interpretation of the register.

1.3 Register

Firstly, to understand the task of cyberbullying detection as a specific domain of text classification, one should consider the full scope of the register that defines it. The bullying categories discussed in Sect. 1.1 include some initial cues that can be inferred from text alone; flaming being the most obvious through simple curse word usage, slurs, or other profanity. Similarly, threatening or intimidating messages that fall under cyberstalking are clearly denoted by particular word usage. The other categories are more subtle: outing could also be done textually, in the form of a phone number, or pieces of information that are personal or sensitive in nature. Denigration would include words that are not blatantly associated with abusive acts; however, misinformation about sensitive topics might for example be paired with a victim’s name. One could further extend these cues based on the literature (as also captured in Hee et al. (2015)) to include bullying event cues, such as messages that serve to defend the victim, and those in support of the bully. The linguistic task could therefore be framed (partly based on Van Hee et al. (2018)) as identifying an online message context that includes aggressive or hurtful content against a victim. Several additional communicative components in these contexts further change the interpretation of these cues, however.

1.4 Roles

Secondly, there is a commonly made distinction between several actors within a cyberbullying event. A naive role allocation includes a bully B, a victim V and bystander BY, the latter of whom may or may not approve of the act of bullying. More nuanced models such as that of Xu et al. (2012) include additional roles (see Fig. 1 for a role interaction visualization), where different roles can be assigned to one person; for example, being bullied and reporting this. Most importantly, all shown roles can be present in the span of one single thread on social media, as demonstrated in Table 1. While some roles clearly show from frequent interaction with either a positive or negative sentiment (B, V, A), others might not be observable through any form of conversation (R, BY), prove too subtle, or not distinguishable from other roles.

Fig. 1 Role graph of a bullying event. Each vertex represents an actor, labeled by their role in the event: bully (B), victim (V), bystander (BY), reinforcer (AB), assistant (BF), defender (S), reporter (R), accuser (A), and friend (VF). Each edge indicates a stream of communication, labeled by whether this is positive (\(+\)) or negative (\(-\)) in nature, and its strength indicating the frequency of interaction. Dotted edges indicate nonparticipation in the event, and vertices those added by Xu et al. (2012) to account for social-media-specific roles

1.5 Context

Thirdly, the content of the messages has to be interpreted differently between these roles. While curse words can be a good indication of harassment, identification of a bully arguably requires more than these alone. Consider Table 1: both B and A use insults (lines 7–8), the message of V (line 6) might be considered as bullying in isolation, and having already determined B, the last sentence (line 10) can generally be regarded as a threat. In conclusion, the full scope of the task is complex; it could have a temporal-sequential character, would benefit from determining actors and their interactions, and then should have some sense of severity as well (e.g. distinguish bullying from teasing).

1.6 Our contributions

Surprisingly, a significant amount of work on the task does not collect (or use) data that allows for the inference of such features (which we will further elaborate on in Sect. 3). To confirm this, we reproduce part of the previous cyberbullying detection research on different sources. Predictions made by current automatic methods for cyberbullying classification are demonstrated not to reflect the above-described task complexity; we show performance drops across different training domains, and give insights into content feature importance and limitations. Additionally, we report on reproducibility issues in state-of-the-art work when subjected to our evaluation. To facilitate future reproduction, we will provide all code open-source, including dataset readers, experimental code, and qualitative analyses.Footnote 3 Finally, we present a method to collect crowdsourced cyberbullying data in an experimental setting. It grants control over the size and richness of the data, and neither invades privacy nor relies on external parties to facilitate data access. Most importantly, we demonstrate that it successfully increases classifier performance. With this work, we provide suggestions on improving methodological rigor and hope to aid the community in a more realistic evaluation and implementation of this task of societal importance.

2 Related work

Research on detecting cyberbullying content can be roughly divided into three categories. First, research with a focus on binary classification, where it is only relevant whether a message contains bullying or not. Second, more fine-grained approaches where the task is to determine either the role of actors in a bullying scenario or the content type (i.e., different categories of bullying). Both binary and fine-grained approaches predominantly focus on text-based features. Lastly, meta-data approaches that take more than just message content into account; these might include profile, network, or image information. Here, we will discuss efforts relevant to the task of cyberbullying classification within these three topics. We will predominantly focus on work conducted on openly available data, and on work that reports (positive) \(F_1\)-scores, to promote fair comparisons.Footnote 4 For an extensive literature review and a detailed comparison of different studies, see Rosa et al. (2019). Finally, a significant portion of our research pertains to generalizability, and therefore the field of domain adaptation. We will discuss its previous observations related to text classification specifically, and their relevance to (future) research on cyberbullying detection.
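To make the choice of metric concrete, the following is a minimal sketch of an \(F_1\)-score computed for the positive (bullying) class only. The function name `positive_f1` and the toy labels are assumptions made for illustration; they are not taken from any of the cited studies.

```python
def positive_f1(gold, predicted, positive=1):
    """F1-score for the positive (bullying) class only."""
    pairs = list(zip(gold, predicted))
    tp = sum(1 for g, p in pairs if g == positive and p == positive)
    fp = sum(1 for g, p in pairs if g != positive and p == positive)
    fn = sum(1 for g, p in pairs if g == positive and p != positive)
    if tp == 0:
        return 0.0  # without true positives, precision and recall are zero or undefined
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Hypothetical skewed sample: 2 bullying messages among 10.
gold = [1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
always_negative = [0] * 10  # a classifier that never predicts bullying
accuracy = sum(g == p for g, p in zip(gold, always_negative)) / len(gold)
print(accuracy)                            # high, although no bullying is found
print(positive_f1(gold, always_negative))  # zero for the same predictions
```

On skewed data, accuracy rewards always predicting the majority class, whereas the positive-class score does not, which is one reason to prefer it when comparing studies.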

2.1 Binary classification

One of the first traceable suggestions for applying text mining specifically to the task of cyberbullying detection is made by Kontostathis et al. (2010), who note that Yin et al. (2009) previously tried to classify online harassment on the caw 2.0 dataset.Footnote 5 In the latter research, Yin et al. already state that the ratio of documents with harassing content to typical documents is challengingly small. Moreover, they foresee several other critical issues with regard to the task: a lack of positive instances will make detecting characteristic features difficult, and human labeling of such a dataset might have to face issues of ambiguity and sarcasm that are hard to assess when messages are taken out of conversation context. Even with very sparse datasets (with fewer than \(1\%\) positive class instances), the harassment classifier outperforms the random baseline using tf\(\cdot \)idf, pronoun, curse word, and post similarity features.

Following up on Yin et al. (2009), Reynolds et al. (2011) note that the caw 2.0 dataset is generally unfit for cyberbullying classification: in addition to lacking bullying labels (it only provides harassment labels), the conversations are predominantly between adults. Their work, along with Bayzick et al. (2011), is a first effort to create datasets for cyberbullying classification through scraping the question-answering website Formspring.me, as well as Myspace.Footnote 6 In contrast with similar research, they aim to use textual features while deliberately avoiding Bag-of-Words (BoW) features. Through a curse word dictionary and custom severity annotations, they construct several metrics for features related to these “bad” words. In their more recent paper, Kontostathis and Reynolds (2013) repeated the analyses on the kon_frm set, primarily focusing on the contribution of curse words to the classification of bullying messages. By forming queries from curse word dictionaries, they show that no single combination of curse words retrieves all bullying messages. However, by capturing them in an Essential Dimensions of Latent Semantic Indexing query vector averaged over known bullying content, and classifying the top-k (by cosine similarity) as positive, they show a significant Average Precision improvement over their baseline.
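The retrieval idea in the last step can be sketched as follows. This is a simplified illustration, not the cited method: plain word counts stand in for the Essential Dimensions of Latent Semantic Indexing projection, and the function names and toy messages are hypothetical.

```python
import math
from collections import Counter


def bow(text):
    """Lowercased whitespace-token counts (a stand-in for an LSI projection)."""
    return Counter(text.lower().split())


def average(vectors):
    """Element-wise mean of sparse count vectors."""
    total = Counter()
    for vec in vectors:
        total.update(vec)
    return {term: count / len(vectors) for term, count in total.items()}


def cosine(a, b):
    dot = sum(weight * b.get(term, 0.0) for term, weight in a.items())
    norm_a = math.sqrt(sum(w * w for w in a.values()))
    norm_b = math.sqrt(sum(w * w for w in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k_positive(known_bullying, candidates, k):
    """Indices of the k candidates closest to the averaged query vector."""
    query = average([bow(text) for text in known_bullying])
    ranked = sorted(range(len(candidates)),
                    key=lambda i: cosine(query, bow(candidates[i])),
                    reverse=True)
    return set(ranked[:k])


# Hypothetical toy messages, for illustration only.
known = ["you are stupid", "stupid ugly loser"]
candidates = ["nice weather today", "you stupid loser", "see you at lunch"]
print(top_k_positive(known, candidates, k=1))  # index of the closest candidate
```

Because the top-k candidates are labeled positive regardless of their absolute similarity, the choice of k effectively fixes the assumed number of bullying messages in the collection.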

More recent efforts include Bretschneider et al. (2014), who combined word normalization, Named Entity Recognition to detect person-specific references, and multiple curse word dictionaries (Noswearing.com 2016; Broadcasting Standards Authority 2013; Millwood-Hargreave 2000) in a rule-based pattern classifier, scoring well on Twitter data.Footnote 7 Our own work (Hee et al. 2015), where we collected a large dataset with posts from Ask.fm, used standard BoW features as a first test. Later, these were extended in Van Hee et al. (2018) with term lists, subjectivity lexicons, and topic model features. Recently popularized techniques of word embeddings and neural networks have been applied by Zhao et al. (2016) and Zhao and Mao (2016) to xu_trec, nay_msp and sui_twi, both resulting in the highest performance for those sets. Convolutional Neural Networks (CNNs) on phonetic features were applied by Zhang et al. (2016), and Rosa et al. (2018) investigate, among others, the same architecture on textual features in combination with Long Short-Term Memory networks (LSTMs). Both Rosa et al. (2018) and Agrawal and Awekar (2018) investigate the C-LSTM (Zhou et al. 2015); the latter also include the Synthetic Minority Over-sampling Technique (SMOTE). However, as we will show in the current research, both of these works suffer from reproducibility issues. Finally, fuzzified vectors of top-k word lists for each class were used to conduct membership likelihood-based classification by Rosa et al. (2018) on kon_frm, boosting recall over previously used methods.

2.2 Fine-grained classification

The common denominator of the previously discussed research was a focus on detecting single messages with evidence of cyberbullying per instance. As argued in Sect. 1.2, however, there are more textual cues to infer than merely if a message might be interpreted as bullying. The work of Xu et al. (2012) proposed to expand this binary approach with more fine-grained classification tasks by looking at bullying traces; i.e., the responses to a bullying incident. They distinguished two tasks based on keyword-retrieved (bully) Twitter data:Footnote 8 (1) a role labeling task, where semantic role labeling was then used to distinguish person-mention roles, and (2) the incorporation of sentiment to determine teasing, where despite high accuracy, \(48\%\) of the positive instances were misclassified.

In our work, we extended this train of thought and demonstrated the difficulty of fine-grained classification of types of bullying (curse, defamation, defense, encouragement, insult, sexual, threat), and roles (harasser, bystander assistant, bystander defender, victim) with simple BoW and sentiment features, especially in detecting types (Hee et al. 2015; Van Hee et al. 2015). Later, this was further addressed in Van Hee et al. (2018) for both Dutch and English. Evaluated against a profanity (curse word lexicon) and word n-gram baseline, a multi-feature model (as discussed in Sect. 2.1) achieved the lowest error rates over (almost) all labels, for both bullying type and role classification. Lastly, Tomkins et al. (2018) also adapt fine-grained knowledge about bullying events in their socio-linguistic model; in addition to performing bullying classification, they find latent text categories and roles, partly relying on social interactions on Twitter. Their work thereby ties in with the next category: leveraging meta-data from the network the data is collected from.

2.3 Meta-data features

A notable, yet less popular aspect of this task is the utilization of a graph for visualizing potential bullies and their connections. This method was first adopted by Nahar et al. (2013), who use this information in combination with a classifier trained on LDA and weighted tf\(\cdot \)idf features to detect bullies and victims on the caw_* datasets. Work that more concretely implements techniques from graph theory is that of Squicciarini et al. (2015), who used a wide range of features: network features to measure popularity (e.g., degree centrality, closeness centrality), content-based features (length, sentiment, offensive words, second-person pronouns), and age, gender, and number of comments. They achieved the highest performance on kon_frm and bay_msp.
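As an illustration of the two network features named above, the sketch below computes degree and closeness centrality on a small undirected interaction graph. It uses the standard textbook definitions and assumes a connected graph; the graph itself and the function names are hypothetical and do not reproduce the feature extraction of the cited work.

```python
from collections import deque


def degree_centrality(graph):
    """Fraction of the other users each user is directly connected to."""
    n = len(graph)
    return {node: len(neighbours) / (n - 1) for node, neighbours in graph.items()}


def closeness_centrality(graph):
    """Inverse of the mean shortest-path distance to all reachable users."""
    scores = {}
    for source in graph:
        # Breadth-first search yields shortest path lengths in an unweighted graph.
        distance = {source: 0}
        queue = deque([source])
        while queue:
            node = queue.popleft()
            for neighbour in graph[node]:
                if neighbour not in distance:
                    distance[neighbour] = distance[node] + 1
                    queue.append(neighbour)
        total = sum(distance.values())
        scores[source] = (len(distance) - 1) / total if total else 0.0
    return scores


# Hypothetical star-shaped network: user "a" interacts with everyone else.
graph = {"a": {"b", "c", "d"}, "b": {"a"}, "c": {"a"}, "d": {"a"}}
print(degree_centrality(graph)["a"], closeness_centrality(graph)["b"])
```

Both measures require the interaction structure of the platform, which is exactly the kind of information that is unavailable for many of the message-level datasets discussed here.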

Work by Hosseinmardi et al. (2015) focuses on Instagram posts and incorporates platform-specific features retrieved from images and the platform's network. They are the first to adhere to the literature more closely and define cyberaggression (Kowalski et al. 2012) separately from cyberbullying, in that it concerns single negative posts, as opposed to the repeated character of cyberbullying. They also show that certain LIWC (Linguistic Inquiry and Word Count) categories, such as death, appearance, religion, and sexuality, give a good indication of cyberbullying. While BoW features perform best, meta-data features (such as user properties and image content) in combination with textual features from the top 15 comments achieve a similar score. Cyberaggression seems to be slightly easier to classify.

2.4 Domain adaptation

As the majority of the work discussed above focuses on a single corpus, a serious omission seems to be gauging how this influences model generalization. Many applications in natural language processing (NLP) are inherently limited by expensive high-quality annotations, whereas unlabeled data is plentiful. Idiosyncrasies between source and target domains often prove detrimental to the performance of techniques relying on those annotations (McClosky et al. 2006; Chan et al. 2006; Vilain et al. 2007) when applied in the wild. The field of domain adaptation identifies tasks that suffer from such limitations, and aims to overcome them either in a supervised (Daumé III 2009; Finkel et al. 2009) or unsupervised (Blitzer et al. 2007; Jiang and Zhai 2007; Ma et al. 2014; Schnabel and Schütze 2014) way. For text classification, sentiment analysis is arguably closest to the task of cyberbullying classification (Glorot et al. 2011; Chan et al. 2012; Pan et al. 2010), in particular because imbalanced data exacerbates generalization issues (Li et al. 2012). However, while for sentiment analysis these issues are clearly identified and actively worked on, the same cannot be said for cyberbullying detection,Footnote 9 where concrete limitations have yet to be explored. We expect to find issues similar to those in sentiment analysis in the current task, as we will further discuss in the following section.

3 Task evaluation importance and hypotheses

The domain of cyberbullying detection is in its early stages, as can be seen in Table 2. Most datasets are quite small, and only a few have seen repeated experiments. Given the substantial societal importance of improving the methods developed so far, pinpointing shortcomings in the current state of research should assist in creating a robust framework under which to conduct future experiments, particularly concerning the evaluation of (domain) generalization of the classifiers. To our knowledge, none of the current research addresses the latter, which is therefore the main focus of our work. In this section, we define three motivations for assessing this, and pose three respective hypotheses through which we will further investigate current limitations in cyberbullying detection.

3.1 Data scarcity

Considering the complexity of the social dynamics underlying the target of classification, and the costly collection and annotation of training data, the issue of data scarcity can mostly be explained with respect to the aforementioned restrictions on data access: while on a small number of platforms most data is accessible without any internal access (commonly as a result of optional user anonymity), it can be assumed that a significant part of actual bullying takes place ‘behind closed doors’. To uncover this, one would require access to all known information within a social network (such as friends, connections, and private messages, including all meta-data). As this is unrealistic in practice, researchers rely on the small subset of publicly accessible data (predominantly text) streams. Consequently, most of the datasets used for cyberbullying detection are small and exhibit an extreme skew between positive and negative messages (as can be seen in Table 3). It is unlikely that these small sets accurately capture the language use on a given platform, and generalizable linguistic features of the bullying instances even less so. In line with domain adaptation research, we therefore anticipate that the samples are underpowered in terms of accurately representing the substantial language variation between platforms, both in normal language use and bullying-specific language use (Hypothesis 1).
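The kind of evaluation implied by Hypothesis 1 can be sketched as a grid in which a classifier is trained on each source and tested on every source. Everything below is an illustrative assumption: the cue-word "classifier" is a deliberately naive stand-in for a real model, and the two domains (`forum`, `chat`) and their messages are invented toy data, not any of the corpora discussed in this paper.

```python
def fit_cue_words(docs, labels):
    """Toy 'classifier': words seen in positive but never in negative documents."""
    positive, negative = set(), set()
    for doc, label in zip(docs, labels):
        (positive if label == 1 else negative).update(doc.lower().split())
    return positive - negative


def predict(cue_words, docs):
    return [int(any(word in cue_words for word in doc.lower().split())) for doc in docs]


def positive_f1(gold, predicted):
    tp = sum(g == 1 and p == 1 for g, p in zip(gold, predicted))
    fp = sum(g == 0 and p == 1 for g, p in zip(gold, predicted))
    fn = sum(g == 1 and p == 0 for g, p in zip(gold, predicted))
    return 2 * tp / (2 * tp + fp + fn) if tp else 0.0


def cross_domain_grid(corpora):
    """Train on each source's training split, score on every target's test split."""
    grid = {}
    for source, splits in corpora.items():
        model = fit_cue_words(*splits["train"])
        for target, target_splits in corpora.items():
            docs, gold = target_splits["test"]
            grid[(source, target)] = positive_f1(gold, predict(model, docs))
    return grid


# Invented toy domains with different vocabulary for the same behaviour.
corpora = {
    "forum": {"train": (["you are an idiot", "nice picture"], [1, 0]),
              "test": (["such an idiot", "nice day"], [1, 0])},
    "chat": {"train": (["ur a loser lol", "cool pic lol"], [1, 0]),
             "test": (["what a loser", "cool song"], [1, 0])},
}
for (source, target), score in cross_domain_grid(corpora).items():
    print(f"train={source} test={target} F1={score:.2f}")
```

In this contrived setting the in-domain cells score perfectly while the out-of-domain cells score zero, because the learned cue words do not transfer; real corpora would show a less extreme version of the same comparison.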

3.2 Task definition

Furthermore, we argue that this scarcity introduces issues with adherence to the definition of the task of cyberbullying. The chances of capturing the underlying dynamics of cyberbullying (as defined in the literature) are slim with the message-level (i.e., using single documents only) approaches that the majority of work in the field has used up until now. For bullying to appear in the collected sources, users have to be rash enough to bully in the open, and to do so with particular (curse) word usage, which would explain the effectiveness of dictionary and BoW-based approaches in previous research. Hence, we also assume that the positive instances are biased, reflecting only a limited dimension of bullying (Hypothesis 2). A more realistic scenario, where characteristics such as repetitiveness and power imbalance are taken into consideration, would require looking at the interaction between persons, or even profile instances rather than single messages, which, as we argued, is not generally available. The work found in the meta-data category (Sect. 2.3) supports this argument with improved results using this information.

This theory regarding the definition (or operationalization) of this task is shared by Rosa et al., who pose that “the most representative studies on automatic cyberbullying detection, published from 2011 onward, have conducted isolated online aggression classification” (Rosa et al. 2019, p. 341). We mainly focus on the shared notion that this framing is limited to verbal aggression; however, we will empirically assess its overlap with data framed to solely contain online toxicity (i.e., online aggression or cyberaggression) to find concrete evidence.

3.3 Domain influence

Enriching previous work with data such as network structure, interaction statistics, profile information, and time-based analyses might provide fruitful sources for classification and a correct operationalization of the task. However, they are also domain-specific, as not all social media have such a rich interaction structure. Moreover, it is arguably naive to assume that social networks such as Facebook (for which, in an ideal case, all aforementioned information sources are available) will stay a dominant platform of communication. Younger age groups have recently turned towards more direct forms of communication such as WhatsApp and Snapchat, or media-focused forms such as Instagram (Smith and Anderson 2018) and, more recently, TikTok. This move implies more private environments from which less data can be accessed (resulting in even more scarcity), and that further development in the field requires a critical evaluation of the current use of the available features, and ways to improve cross-domain generalization overall. This work, therefore, does not disregard textual features; they would still need to be considered as the primary source of information, while paying particular attention to the issues mentioned here. We further try to contribute towards this goal and argue that crowdsourcing bullying content potentially decreases the influence of domain-specific language use, allows for richer representations, and alleviates data scarcity (Hypothesis 3).

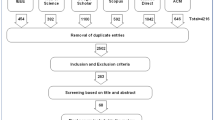

4 Data

For the current research, we distinguish a large variety of datasets. For those provided through the AMiCA (Automatic Monitoring in Cyberspace Applications)Footnote 10 project, the Ask.fm corpus is partially available open-source,Footnote 11 and the Crowdsourced corpus will be made available upon request. All other sources are publicly available datasets gathered from previous researchFootnote 12 as discussed in Sect. 2. Corpus statistics of all data discussed below can be found in Table 3. The sets’ abbreviations, language (en for English, nl for Dutch), and brief collection characteristics can be found below.

4.1 AMiCA

Ask.fm (\(D_{ask}\), \(D_{ask\_nl}\), en, nl) were collected from the eponymous social network by Hee et al. (2015). Ask.fm is a question answering-style network where users interact by (frequently anonymously) asking questions on other profiles, and answering questions on theirs. As such, a third party cannot react to these question-answer pairs directly. The anonymity and restrictive interactions make for a high amount of potential cyberbullying. Profiles were retrieved through a profile seed list, used as a starting point for traversing to other profiles and collecting all existing question-answer pairs for those profiles; these are predominantly Dutch and English. Each message was annotated with fine-grained labels (further details can be found in Van Hee et al. (2015)); however, for the current experiments these were binarized, with any form of bullying being labeled positive.
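The binarization step can be sketched as follows. The label names are the bullying types listed in Sect. 2.2; treating every one of them as a positive cue, and representing each message as a list of label strings, are assumptions of this sketch rather than a description of the actual corpus format.

```python
# Type labels as listed in Sect. 2.2; counting all of them as positive is an assumption.
BULLYING_TYPES = {"curse", "defamation", "defense", "encouragement",
                  "insult", "sexual", "threat"}


def binarize(fine_grained_labels):
    """Return 1 if a message carries any fine-grained bullying label, else 0."""
    return int(any(label in BULLYING_TYPES for label in fine_grained_labels))


# Hypothetical annotations for three messages.
annotations = [["insult", "threat"], [], ["curse"]]
print([binarize(labels) for labels in annotations])
```

Collapsing the labels this way discards the type and role information, so the resulting binary task is comparable to the other corpora but coarser than the original annotation.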

Donated (\(D_{don\_nl}\), nl) contains instances of (Dutch) cyberbullying from a mixture of platforms such as Skype, Facebook, and Ask.fm. The set is quite small; however, it contains several hate pages that are valuable collections of cyberbullying directed towards one person. The data was donated for use in the AMiCA project by previously bullied teens, thus forming a reliable source of gold standard, real-life data.

Crowdsourced (\(D_{sim\_nl}\), nl) originates from a crowdsourcing experiment conducted by Broeck et al. (2014), wherein 200 adolescents aged 14 to 18 partook in a role-playing experiment on an isolated SocialEngineFootnote 13 social network. Here, each respondent was given the account of a fictitious person and put in one of four roles in a group of six: a bully, a victim, two bystander-assistants, and two bystander-defenders. They were asked to read—and identify with—a character description and respond to an artificially generated initial post attributed to one of the group members. All were confronted with two initial posts containing either low- or high-perceived severity of cyberbullying.

4.2 Related work

Formspring (\(D_{frm}\), en) is taken from the research by Reynolds et al. (2011) and is composed of posts from Formspring.me, a question-answering platform similar to Ask.fm. As Formspring is mostly used by teenagers and young adults, and also provides the option to interact anonymously, it is notorious for hosting large amounts of bullying content (Binns 2013). The data was annotated through Mechanical Turk, providing a single label by majority vote for a question-answer pair. For our experiments, the question and answer pairs were merged into one document instance.

Myspace (\(D_{msp}\), en) was collected by Bayzick et al. (2011). As this was set up as an information retrieval task, the posts are labeled in batches of ten posts, and thus a single label applies to the entire batch (i.e., does it include cyberbullying). These were merged per batch as one instance and labeled accordingly. Due to this batching, the average number of tokens per instance is much higher than in any of the other corpora.

Twitter (\(D_{twB}\), en) by Bretschneider et al. (2014) was collected from the stream between 20-10-2012 and 30-12-2012, and was labeled based on a majority vote between three annotators. Excluding re-tweets, the main dataset consists of 220 positive and 5162 negative examples, which adheres to the general expected occurrence rate of 4%. Their comparably-sized test set, consisting of 194 positive and 2699 negative examples, was collected by adding a filter to the stream for messages to contain any of the words school, class, college, and campus. These sets are merged for the current experiments.

Twitter II (\(D_{twX}\), en) from Xu et al. (2012) focussed on bullying traces, and was thus retrieved by keywords (bully, bullying), which if left unmasked generates a strong bias when utilized for classification purposes (both by word usage as well as being a mix of toxicity and victims). It does, however, allow for demonstrating the ability to detect bullying-associated topics, and (indirect) reports of bullying.

4.3 Experiment-specific

Ask.fm Context (\(C_{ask}\), \(C_{ask\_nl}\), en, nl): the Ask.fm corpus was collected on profile level, but prior experiments have focused on single message instances (Van Hee et al. 2018). Here, we aggregate all messages for a single profile, which is then labeled as positive when as few as a single bullying instance occurs on the profile. This aggregation shifts the task of cyberbullying message detection to victim detection on profile level, allowing for more access to context and profile-level severity (such as repeated harassment), and makes for a more balanced set (1,763 positive and 6,245 negative instances).

Formspring Context (\(C_{frm}\), en): similar to the Ask.fm corpus, this set was collected on profile level (Reynolds et al. 2011). However, the set only includes 49 profiles, some of which only include a single message. Grouping on full profile level would result in very few instances; thus, we opted for creating small ‘contexts’ in batches of five messages (from the same profile). Similar to the Ask.fm approach, if one of these messages contains bullying, the batch is labeled positive, balancing the dataset (565 positive and 756 negative instances).
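The two context constructions described above can be sketched as follows. This is a minimal illustration and not the released framework: the `(profile_id, text, label)` tuple layout and the function names `profile_contexts` and `batched_contexts` are assumptions made for the example.

```python
from collections import defaultdict


def _group_by_profile(messages):
    """Group (profile_id, text, label) tuples by profile, keeping order."""
    grouped = defaultdict(list)
    for profile_id, text, label in messages:
        grouped[profile_id].append((text, label))
    return grouped


def _merge(profile_id, items):
    """Join the texts; the context is positive if any message is positive."""
    text = " ".join(t for t, _ in items)
    label = int(any(l for _, l in items))
    return (profile_id, text, label)


def profile_contexts(messages):
    """One instance per profile (the construction described for C_ask)."""
    grouped = _group_by_profile(messages)
    return [_merge(pid, items) for pid, items in grouped.items()]


def batched_contexts(messages, batch_size=5):
    """Batches of `batch_size` messages per profile (as described for C_frm)."""
    contexts = []
    for pid, items in _group_by_profile(messages).items():
        for start in range(0, len(items), batch_size):
            contexts.append(_merge(pid, items[start:start + batch_size]))
    return contexts
```

Note that a single positive message flips the label of the whole context, which is why both aggregated sets end up more balanced than their message-level counterparts.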

Toxicity (\(D_{tox}\), en): based on the Detox set from Wikimedia (Thain et al. 2017; Wulczyn et al. 2017), this set offers over 300k messagesFootnote 14 of Wikipedia Talk comments with Crowdflower-annotated labels for toxicity (including subtypes).Footnote 15 Noteworthy is how disjoint both the task and the platform are from the rest of the corpora used in this research. While toxicity shares many properties with bullying, the focus here is on single instances of insults directed to anonymous people, who are most likely unknown to the harasser. Given Wikipedia as a source, the article and moderation focussed comments make it topically quite different from what one would expect on social media. The fundamental overlap is curse words, which are only one of many dimensions to be captured to detect cyberbullying (as opposed to toxicity).

4.4 Preprocessing

All texts were tokenized using spaCy (Honnibal and Montani 2017).Footnote 16 No preprocessing was conducted for the corpus statistics in Table 3. All models (Sect. 5) applied lowercasing and special character removal only; alternative preprocessing steps proved to decrease performance (see Table 7).
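As an illustration of this simple cleaning step, a minimal sketch is given below. What exactly counts as a special character is an assumption here (everything that is neither a word character nor whitespace), and the function name `simple_clean` is hypothetical.

```python
import re


def simple_clean(text):
    """Lowercase the text and replace special characters by spaces."""
    text = text.lower()
    # Assumption: "special" means neither word character nor whitespace.
    text = re.sub(r"[^\w\s]", " ", text)
    # Collapse the whitespace runs left behind by the replacement.
    return re.sub(r"\s+", " ", text).strip()
```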

4.5 Descriptive analysis

Both Table 3 and Fig. 2 illustrate stark differences, not only across domains but, more importantly, between in-domain training and test sets. Most pairs do not exceed a Jaccard similarity coefficient of 0.20 (Fig. 2), implying that a large part of their vocabularies does not overlap. This contrast is not necessarily problematic for classification; however, it does hamper learning a general representation for the negative class. It also clearly illustrates how even more disjoint \(D_{twX}\) (collected by trace queries) and \(D_{tox}\) are from the rest of the corpora and splits. Finally, the descriptives (Table 3) further show significant differences in size, message length, class balance, and type/token ratios (i.e., writing level). In conclusion, it can be assumed that the language use in both positive and negative instances will vary significantly, and that it will be challenging to model in-domain, and to generalize out-of-domain.
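For reference, the Jaccard similarity between two vocabularies \(A\) and \(B\) is \(|A \cap B| / |A \cup B|\). A minimal sketch of this overlap measure is given below; whitespace tokenization is a simplification assumed for the example (the corpora themselves were tokenized with spaCy), and the function names are hypothetical.

```python
def vocabulary(documents):
    """Set of lowercased tokens over all documents (whitespace split)."""
    return {tok for doc in documents for tok in doc.lower().split()}


def jaccard(a, b):
    """Jaccard similarity |A & B| / |A | B| between two sets."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0
```

A value of 0.20 thus means that only one in five of the word types found in either split occurs in both.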

5 Experimental setup

We attempt to address the following hypotheses posited in Sect. 3:

- Hypothesis 1: As researchers can only rely on scarce data of public bullying, their samples are assumed to be underpowered in terms of accurately representing the substantial language variation between platforms, both in normal language use and bullying-specific language use.

- Hypothesis 2: Given knowledge from the literature, it is unlikely that single messages capture the full complexity of bullying events. Cyberbullying instances in the considered corpora are therefore expected to be largely biased, only reflecting a limited dimension of bullying.

- Hypothesis 3: With control over data generation and structure, crowdsourcing bullying content potentially decreases the influence of domain-specific language use, allows for richer representations, and alleviates data scarcity.

Accordingly, we propose five main experiments. Experiments I (Hypothesis 1) and III (Hypothesis 2) deal with the problem of generalizability, whereas Experiment II (Hypothesis 1) and V (Hypothesis 3) will both propose a solution for restricted data collection. Experiment IV will reproduce a selection of state-of-the-art models for cyberbullying detection and subject them to our cross-domain evaluation, to be compared against our baselines.

5.1 Experiment I: cross-domain evaluation

In this experiment, we introduce the cross-domain evaluation framework, which will be extended in all other experiments. For this, we initially perform a many-to-many evaluation of a given model (baseline or otherwise) trained individually on each available data source, split into train and test portions. In later experiments, we extend this with a one-to-many evaluation. This setup implies that (i) we fit our model on the training portion of a given corpus and evaluate prediction performance on the test portions of all available corpora individually (many-to-many). Furthermore, we (ii) fit on the training portions of all corpora combined, and evaluate on each of their test portions individually (one-to-many). In sum, we report on ‘small’ models trained on each corpus individually, as well as a ‘large’ one trained on them combined, for each test set individually.
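The evaluation loop described above can be sketched as follows. This is an illustrative outline rather than the released framework: the dictionary layout of `corpora`, the pluggable `fit` and `score` callables, and the reserved row name `"all"` are assumptions made for the example.

```python
def cross_domain_scores(corpora, fit, score):
    """Score every training source on every test set.

    corpora: dict name -> {"train": (X, y), "test": (X, y)}, X and y lists
    fit:     callable (X, y) -> model
    score:   callable (model, X, y) -> float
    Returns {train_name: {test_name: score}}; the extra "all" row holds
    the model fitted on all training portions combined.
    """
    results = {}
    # (i) Fit one 'small' model per corpus, test it on every corpus.
    for train_name, parts in corpora.items():
        model = fit(*parts["train"])
        results[train_name] = {
            test_name: score(model, *other["test"])
            for test_name, other in corpora.items()
        }
    # (ii) Fit one 'large' model on all training portions combined.
    X_all = [x for parts in corpora.values() for x in parts["train"][0]]
    y_all = [y for parts in corpora.values() for y in parts["train"][1]]
    big = fit(X_all, y_all)
    results["all"] = {
        name: score(big, *parts["test"]) for name, parts in corpora.items()
    }
    return results
```

The diagonal of the resulting table holds the in-domain scores; all other cells of a row are out-of-domain.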

For every experiment, hyper-parameter tuning was conducted through an exhaustive grid search, using nested cross-validation (with ten inner and three outer folds) on the training set to find the optimal combination of the given parameters. Any model selection steps were based on the evaluation of the outer folds. The best performing model was then refitted on the full training set (90% of the data) and applied to the test set (10%). All splits (also during cross-validation) were made in a stratified fashion, keeping the label distributions across splits similar to the whole set.Footnote 17 Henceforth, all experiments in this section can be assumed to follow this setup.
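A minimal sketch of this tuning setup with Scikit-learn is given below. The synthetic data, the small grid over `C`, and the random seeds are placeholders chosen for the example and do not correspond to the grid or data used in our experiments.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import (GridSearchCV, StratifiedKFold,
                                     cross_val_score, train_test_split)
from sklearn.svm import LinearSVC

# Synthetic stand-in for a vectorized corpus with a minority class.
X, y = make_classification(n_samples=300, n_features=20,
                           weights=[0.8, 0.2], random_state=0)

# Stratified 90/10 split into training and held-out test data.
X_tr, X_te, y_tr, y_te = train_test_split(
    X, y, test_size=0.1, stratify=y, random_state=0)

inner = StratifiedKFold(n_splits=10, shuffle=True, random_state=0)
outer = StratifiedKFold(n_splits=3, shuffle=True, random_state=0)

# Placeholder grid; the inner folds pick the best value of C.
grid = {"C": [0.01, 0.1, 1.0, 10.0]}
search = GridSearchCV(LinearSVC(max_iter=5000), grid,
                      scoring="f1", cv=inner)

# The outer folds evaluate the complete tuning procedure.
outer_scores = cross_val_score(search, X_tr, y_tr, scoring="f1", cv=outer)

# Refit on the full training set and score the test set once.
search.fit(X_tr, y_tr)
test_f1 = search.score(X_te, y_te)
```

Wrapping the grid search itself in `cross_val_score` is what makes the procedure nested: model selection never sees the outer validation fold.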

The many-to-many evaluation framework intends to test Hypothesis 1 (Sect. 3.1), relating to language variation and cross-domain performance of cyberbullying detection. To facilitate this, we employ an initial baseline model: Scikit-learn’s (Pedregosa et al. 2011) Linear Support Vector Machine (SVM) (Cortes and Vapnik 1995; Fan et al. 2008) implementation trained on binary BoW features, tuned using the grid shown in Table 4, based on Van Hee et al. (2018). Given its use in previous research, it should form a strong candidate against which to compare. To ascertain out-of-domain performance compared to this baseline, we report test score averages across all test splits, excluding the set the model was trained on (in-domain).
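The out-of-domain average can be computed from a table of scores as sketched below. The nested-dictionary layout and the function name are assumptions for the example, and the numbers in the usage note are made up.

```python
def out_of_domain_average(scores, train_name):
    """Mean score of one model over all test sets except its own.

    scores: {train_name: {test_name: score}}
    """
    others = [s for test_name, s in scores[train_name].items()
              if test_name != train_name]
    return sum(others) / len(others)
```

For a hypothetical row `{"ask": 0.6, "frm": 0.5, "twB": 0.3}` of a model trained on `"ask"`, the in-domain score 0.6 is left out and the average is taken over 0.5 and 0.3.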

Consequently, we add an evaluation criterion to that of related work: when introducing a novel approach to cyberbullying detection, it should not only perform best in-domain for the majority of available corpora, but should also achieve the highest out-of-domain performance on average to qualify as a robust method. It should be noted that the selected corpora for this work are not all optimally representative of the task. The tests in our experiments should, therefore, be seen as an initial proposal to improve the task evaluation.

5.2 Experiment II: gauging domain influence

In an attempt to overcome domain restrictions on language use, and to further solidify our tests regarding Hypothesis 1, we aim to improve the performance of our baseline models through changing our representations in three distinct ways: (i) merging all available training sets (so as to simulate a large, diverse corpus), (ii) aggregating instances on user level, and (iii) using state-of-the-art language representations over simple BoW features in all settings. We define these experiments as follows:

Volume and Variety Some corpora used for training are relatively small, and can thus be assumed insufficient to represent held-out data (such as the test sets). One could argue that this can be partially mitigated through simply collecting more data or training on multiple domains. To simulate such a scenario, we merge all available cyberbullying-related training splits (creating \(D_{all}\)), which then corresponds to the one-to-many setting of the evaluation framework. The hope is that corpora similar in size or content (the Twitter sets, Ask.fm and Formspring, YouTube and Myspace) would benefit from having more (related) data available. Additionally, training a large model on its entirety facilitates a catch-all setting for assessing the average cross-domain performance of the full task (i.e. across all test sets when trained on all available corpora). This particular evaluation will be used in Experiment IV (replication) for model comparison.

Context change Practically all corpora, save for MySpace and YouTube, have annotations based on short sentences, which is particularly noticeable in Table 3. This one-shot (i.e., based on a single message) method of classifying cyberbullying provides minimal content (and context) to work with. It therefore does not follow the definition of cyberbullying, as previously discussed in Sect. 3.2. As a preliminary simulationFootnote 18 of adding (richer) context, we merge the profiles of \(D_{ask}\) and (batches of) \(D_{frm}\) into single context instances (creating \(C_{ask}\) and \(C_{frm}\), see Sect. 4). This allows us to compare models trained on larger contexts directly to those trained on single messages, and evaluate how context restrictions affect performance on the task in general, as well as cross-domain.

Improving representations Pre-trained word embeddings as language representation have been demonstrated to yield significant performance gains for a multitude of NLP-related tasks (Collobert et al. 2011). Given the general lack of training data (including negative instances for many corpora), word features (and weightings) trained on the available data tend to be a poor reflection of the language use on the platform itself, let alone other social media platforms. Therefore, pre-trained semantic representations provide features that, in theory, should perform better in cross-domain settings. We consider two off-the-shelf embedding models per language that are suitable for the task at hand: for English, averaged 200-dimensional GloVe (Pennington et al. 2014) vectors trained on Twitter,Footnote 19 and DistilBERT (Sanh et al. 2019) sentence embeddings (Devlin et al. 2018).Footnote 20 For Dutch, fastText embeddings (Bojanowski et al. 2017) trained on WikipediaFootnote 21 and word2vec (Mikolov et al. 2013) embeddingsFootnote 22 (Tulkens et al. 2016) trained on the COrpora from the Web (COW) corpus (Schäfer and Bildhauer 2012). The GloVe, fastText, and word2vec embeddings were processed using GensimFootnote 23 (Řehůřek et al. 2010).
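Averaging word vectors into a single document representation can be sketched as below. The plain dictionary standing in for a loaded embedding model, the zero-vector fallback for documents without known tokens, and the function name are assumptions for the example.

```python
import numpy as np


def average_embedding(tokens, vectors, dim):
    """Mean of the vectors of all in-vocabulary tokens.

    vectors: mapping token -> 1-D array of length `dim`.
    Out-of-vocabulary tokens are skipped; a document without any
    known token becomes a zero vector (an assumed convention).
    """
    known = [vectors[t] for t in tokens if t in vectors]
    if not known:
        return np.zeros(dim)
    return np.mean(known, axis=0)
```

The resulting fixed-length vector can be fed directly to a linear classifier, at the cost of discarding word order.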

As an additional baseline for this section, we include the Naive Bayes Support Vector Machine (NBSVM) from Wang et al. (2012), which should offer competitive performance on text classification tasks.Footnote 24 This model also served as a baseline for the Kaggle challenge related to \(D_{tox}\).Footnote 25 NBSVM uses tf\(\cdot \)idf-weighted uni- and bi-gram features as input, with a minimum document frequency of 3, and a maximum corpus prevalence of 90%. The idf values are smoothed and tf is scaled sublinearly (\(1 + \log (\)tf)). These are then weighted by their log-count ratios derived from Multinomial Naive Bayes.
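A minimal sketch of this feature pipeline is given below. It uses the log-count ratio \(r = \log \big( (p / \Vert p \Vert _1) / (q / \Vert q \Vert _1) \big)\) with smoothed class-wise feature sums \(p\) and \(q\); whether this matches the referenced implementation in every detail is an assumption, and the toy documents are made up for illustration.

```python
import numpy as np
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.linear_model import LogisticRegression


def log_count_ratio(X, y, alpha=1.0):
    """Per-feature log ratio of normalized, smoothed class sums."""
    y = np.asarray(y)
    p = alpha + np.asarray(X[np.flatnonzero(y == 1)].sum(axis=0)).ravel()
    q = alpha + np.asarray(X[np.flatnonzero(y == 0)].sum(axis=0)).ravel()
    return np.log((p / p.sum()) / (q / q.sum()))


# Toy corpus: five positive and five negative documents.
docs = ["you are stupid", "you are so stupid", "stupid and ugly",
        "you are ugly", "so ugly",
        "you are nice", "you are so nice", "nice and kind",
        "you are kind", "so kind"]
labels = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0]

# Uni- and bi-grams, min. document frequency 3, max. prevalence 90%,
# smoothed idf and sublinear tf, as described in the text.
vectorizer = TfidfVectorizer(ngram_range=(1, 2), min_df=3, max_df=0.9,
                             sublinear_tf=True, smooth_idf=True)
X = vectorizer.fit_transform(docs)

# Scale every feature column by its log-count ratio.
r = log_count_ratio(X, labels)
X_nb = X.multiply(r).tocsr()

clf = LogisticRegression().fit(X_nb, labels)
```

Features that occur mostly in positive documents receive a positive ratio, so the classifier starts from inputs that already encode the Naive Bayes evidence.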

Tuning of both the embedding and NB representation classifiers is done using the same grid as in Table 4, but replacing C with [1, 2, 3, 4, 5, 10, 25, 50, 100, 200, 500]. Lastly, we opted for Logistic Regression (LR), primarily as this was used in the NBSVM implementation mentioned above, as well as fastText. Moreover, we found SVM using our grid to perform marginally worse using these features, and fine-tuning DistilBERT using a fully connected layer (similar to e.g. Sun et al. (2019)) to yield similar performance. The embeddings were not fine-tuned for the task. While this could potentially increase performance, it complicates direct comparison to our baselines; we leave this for Experiment IV.

5.3 Experiment III: aggression overlap

In previous research using fine-grained labels for cyberbullying classification (e.g., Van Hee et al. (2018)) it was observed that cyberbullying classifiers achieve the lowest error rates on blatant cases of aggression (cursing, sexual talk, and threats), an idea that was further adopted by Rosa et al. (2019). To empirically test Hypothesis 2 (see Sect. 3.2)—related to the bias present in the available positive instances—we adapt the idea of running a profanity baseline from this previous work. However, rather than relying on look-up lists containing profane words, we expand this idea by training a separate classifier on toxicity detection (\(D_{tox}\)) and seeing how well this performs on our bullying corpora (and vice-versa). For the corpora with fine-grained labels, we can further inspect and compare the bullying classes captured by this model.

We argue that high test set performance overlap of a toxicity detection model with models trained on cyberbullying detection gives strong evidence of nuanced aspects of cyberbullying not being captured by such models. Notably, and in line with Rosa et al. (2019), it would indicate that the current operationalization does not significantly differ from the detection of online aggression (or toxicity), and therefore does not capture actual cyberbullying. Given enough evidence, both issues should be considered as crucial points of improvement for the further development of classifiers in this domain.

5.4 Experiment IV: replicating state-of-the-art

For this experiment, we include two architectures that achieved state-of-the-art results on cyberbullying detection. As a reference neural network model for language-based tasks, we used a Bidirectional (Schuster and Paliwal 1997; Baldi et al. 1999) Long Short-Term Memory network (Hochreiter and Schmidhuber 1997; Gers et al. 2002) (BiLSTM), partly reproducing the architecture from Agrawal and Awekar (2018). We then attempt to reproduce the Convolutional Neural Network (CNN) (Kim 2014) used in both Rosa et al. (2018) and Agrawal and Awekar (2018), and the Convolutional LSTM (C-LSTM) (Zhou et al. 2015) used in Rosa et al. (2018). As Rosa et al. (2018) do not report essential implementation details for these models (batch size, learning rate, number of epochs), there is no reliable way to reproduce their work. We will, therefore, take the implementation of Agrawal and Awekar (2018) for the BiLSTM and CNN as the initial setup. Given that this work is available open-source, we run the exact architecture (including SMOTE) in our Experiment I and II evaluations. The architecture-specific details are as follows:

Reproduction We initially adopt the basic implementationFootnote 26 by Agrawal and Awekar (2018): randomly initialized embeddings with a dimension of 50 (as the paper did not find significant effects of changing the dimension, nor initialization), run for 10 epochs with a batch size of 128, dropout probability of 0.25, and a learning rate of 0.01. Further architecture details can be found in our repository.Footnote 27 We also run a variant with SMOTE on, and one from the provided notebooks directly.Footnote 28 This and following neural models were run on an NVIDIA Titan X Pascal, using Keras (Chollet et al. 2015) with Tensorflow (Abadi et al. 2015) as backend.

BiLSTM For our own version of the BiLSTM, we minimally changed the architecture from Agrawal and Awekar (2018), only tuning using a grid on batch size [32, 64, 128, 256], embedding size [50, 100, 200, 300], and learning rate [0.1, 0.01, 0.05, 0.001, 0.005]. Rather than running for ten epochs, we use a validation split (10% of the train set) and initiate early stopping when the validation loss has not gone down for three epochs. Hence, and in contrast to earlier experiments, we do not run the neural models in 10-fold cross-validation, but use a straightforward 2-fold train and test split where the latter is 10% of a given corpus. Again, we are predominantly interested in confirming statements made in earlier work; namely, that for this particular setting tuning of the parameters does not meaningfully affect performance.
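The size of this grid and the early stopping rule can be made concrete with the sketch below. The stopping rule is one plausible reading of the description (stop once the validation loss has failed to improve on its best value for three consecutive epochs), and the function name `stopping_epoch` is hypothetical.

```python
from itertools import product

grid = {
    "batch_size": [32, 64, 128, 256],
    "embedding_size": [50, 100, 200, 300],
    "learning_rate": [0.1, 0.01, 0.05, 0.001, 0.005],
}
# Every combination of the three parameters: 4 * 4 * 5 settings.
configs = [dict(zip(grid, values)) for values in product(*grid.values())]


def stopping_epoch(val_losses, patience=3):
    """1-based epoch at which training stops.

    Training stops at the first epoch where the validation loss has
    not improved on its best value for `patience` consecutive epochs;
    if that never happens, the last epoch is returned.
    """
    best = float("inf")
    since_best = 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best:
            best, since_best = loss, 0
        else:
            since_best += 1
            if since_best >= patience:
                return epoch
    return len(val_losses)
```

With 80 configurations per corpus, early stopping also keeps the cost of the grid manageable compared with a fixed number of epochs.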

CNN We use the same experimental setup as for the BiLSTM. The implementations of Agrawal and Awekar (2018) and Rosa et al. (2018) use filter window sizes of 3, 4, and 5, max pooled at the end. Given that the same grid is used, the word embedding sizes are varied and weights trained (whereas Rosa et al. (2018) use 300-dimensional pre-trained embeddings). Therefore, for direct performance comparisons, the results of Agrawal and Awekar (2018) will be used as a reference. As CNN-based architectures for text classification are often also trained on character level, we include a model variant with this input as well.

C-LSTM For this architecture, we take an open-source text classification survey implementation.Footnote 29 This uses filter windows of [10, 20, 30, 40, 50], 64-dimensional LSTM cells and a final 128-dimensional dense layer. Please refer to our repository for additional implementation details for this and previous architectures.

5.5 Experiment V: crowdsourced data

Following up on the proposed shortcomings of the currently available corpora in Hypotheses 1 and 2, we propose the use of a crowdsourcing approach to data collection. In this experiment, we will repeat Experiment I and II with the best out-of-domain classifier from the above evaluations with three (DutchFootnote 30) datasets: \(D_{ask\_nl}\), the Dutch part of the Ask.fm dataset used before; \(D_{sim\_nl}\), our synthetic, crowdsourced cyberbullying data; and lastly \(D_{don\_nl}\), a small donated cyberbullying test set with messages from various platforms (a full overview and description of these three can be found in Sect. 4). The only notable difference in our setup for this experiment is that we never use \(D_{don\_nl}\) as training data. Therefore, rather than \(D_{all}\), the Ask.fm corpus is merged with the crowdsourced cyberbullying data to make up the \(D_{comb}\) set.

6 Results and discussion

We will now cover results per experiment, and to what extent these provide support for the hypotheses posed in Sect. 3. As most of these required backward evaluation (e.g., Experiment III was tested on sets from Experiment I), the results of Experiments I-III are compressed in Table 5. Table 7 comprises the Improving Representations part of Experiment II (under ‘word2vec’ and ‘DistilBERT’) along with the effect of preprocessing on our baselines. The results of Experiment V can be found in Table 8. For brevity of reporting, the latter two only report on the in-domain scores, and feature the out-of-domain averages for the \(D_{all}\) models for comparison, and \(D_{tox}\) averages in Table 7.

6.1 Experiment I

Looking at Table 5, the upper group of rows under T1 represents the results for Experiment I. We posed in Hypothesis 1 that samples are underpowered regarding their representation of the language variation between platforms, both for bullying and normal language use. The data analysis in Sect. 4.5 showed minimal overlap between domains in vocabulary and notable variances in numerous aspects of the available corpora. Consequently, we raised doubts regarding the ability of models trained on these individual corpora to generalize to other corpora (i.e., domains).

Firstly, we consider how well our baseline performed on the in-domain test sets. For half of the corpora, it achieves the highest performance on these specific sets (i.e., the test set portion of the data the model was trained on). More importantly, this entails that for four of the other sets, models trained on other corpora perform equally well or better. Particularly the effectiveness of \(D_{ask}\) was in some cases surprising; the YouTube corpus by Dadvar et al. (2014) (\(D_{ytb}\)), for example, contains much longer instances (see Table 3).

It must be noted though, that the baseline was selected from work on the Ask.fm corpus (Van Hee et al. 2018). This data is also one of the more diverse datasets (and largest) with exclusively short messages; therefore, one could assume a model trained on this data would work well on both longer and shorter instances. It is however also likely that particularly this baseline (binary word features) trained on this data therefore enforces the importance of more shallow features. This will be further explored in Experiments II and III.

For Experiment I, however, our goal was to assess the out-of-domain performance of these classifiers, not to maximize performance. For this, we turn to the Avg column in Table 5. Within the top portion of the table, the \(D_{ask}\) model performs best across all domains (achieving highest on three, as mentioned above). The second-best model is trained on the Formspring data from Reynolds et al. (2011) (\(D_{frm}\)), akin to Ask.fm as a domain (question-answer style, option to post anonymously). It can be observed that almost all models perform worst on the ‘bullying traces’ Twitter corpus by Xu et al. (2012), which was collected using queries. This result is relatively unsurprising, given the small vocabulary overlaps with its test set shown in Fig. 2. We also confirm in line with Reynolds et al. (2011) that the caw data from Bayzick et al. (2011) is unfit as a bullying corpus; achieving significant positive \(F_1\)-scores with a baseline, generalizing poorly and proving difficult as a test set.

Additionally, we observe that even the best performing models yield between .1 and .2 lower \(F_1\) scores on other domains, or a \(15-30\%\) drop from the original score. To explain this, we look at how well important features generalize across test sets. As our baseline is a Linear SVM, we can directly extract all grams with positive coefficients (i.e., related to bullying). Figure 3 (right) shows the frequency of the top 5000 features with the highest coefficient values. These can be observed to follow a Zipfian-like distribution, where the important features most frequently occur in one test set (\(25.5\%\)) only, which quickly drops off with increasing frequency. Conversely, this implies that over \(75\%\) of the top 5,000 features seen during training do not occur in any test instance, and only \(3\%\) generalize across all sets. This coverage decreases to roughly \(60\%\) and \(4\%\) respectively for the top 10,000, providing further evidence of the strong variation in predominantly bullying-specific language use.

Fig. 3 Left: Top 20 test set words with the highest average coefficient values across all classifiers (minus the model trained on \(D_{tox}\)). Error bars represent standard deviation. Each coefficient value is only counted once per test set. The frequency of the words is listed in the annotation. Right: Test set occurrence frequencies (and percentages) of the top 5000 highest absolute feature coefficient values.

Figure 3 (left) also indicates that the coefficient values are highly unstable across test sets, with most having a standard deviation of roughly 0.4. Note that these coefficient values can also flip to negative for particular sets, so for some of the features, the range goes from associated with the other class to highly associated with bullying. Given the results of Table 5 and Fig. 3, we can conclude that our baseline model does not generalize well out-of-domain. Given the quantitative and qualitative results reported on in this Experiment, this particular setting partly supports Hypothesis 1.

6.2 Experiment II

The results for this experiment can be predominantly found in Table 5 (middle and lower parts, and T2 in particular), and partly in Table 7 (word2vec, DistilBERT). In this experiment, we seek to further test Hypothesis 1 by employing three methods: merging all cyberbullying data to increase volume and variety, aggregating on context level for a context change, and improving representations through pre-trained word embedding features. These are all reasonably straightforward methods that can be employed in an attempt to mitigate data scarcity.

Volume and variety The results for this part are listed under \(D_{all}\) in Table 5. For all of the following experiments, we now focus on the full results table (including that of Experiment I) and see which individual classifiers generalize best across all test sets (highlighted in gray). The Avg column shows that our ‘big’ model trained on all available corporaFootnote 31 achieves second-best performance on half of the test sets and best on the other half. More importantly, it has the highest average out-of-domain performance, without competition on any test set. These observations imply that for the baseline setting, an ensemble model of different smaller classifiers should not be preferred over the big model. Consequently, it can be concluded that collecting more data does seem to aid the task as a whole.

However, a qualitative analysis of the predictions made by this model clearly shows lingering limitations (see Table 6). These three randomly-picked examples give a clear indication of the focus on blatant profanity (such as d*ck, p*ss, and f*ck). Especially combinations of words that in isolation might be associated with bullying content (leave, touch) tend to confuse the model. It also fails to capture more subtle threats (skull drag) and infrequent variations (h*). Both of these structural mistakes could be mitigated by providing more context that potentially includes either more toxicity or more examples of neutral content to decrease the impact of single curse words—hence, the next experiment.

Context change As for access to context scopes, we are restricted to the Ask.fm and Formspring data (\(C_{frm}\) and \(C_{ask}\) in Table 5). Nevertheless, in both cases, we see a noticeable increase in in-domain performance: a positive \(F_1\) score of .579 for context scope versus .561 on Ask.fm, and .758 versus .454 on Formspring respectively. This increase implies that, for both individual sets, context-level detection should be preferred over message-level detection. On the other hand, these longer contexts do perform worse on out-of-domain sets. This can be partly explained by the fact that including more data (therefore moving the data to profile, or conversation level) shifts the task to identifying bullying conversations, or profiles of victims. While variation will be higher, chances also increase that multiple single bullying messages will be captured in one context. This would therefore allow a model to learn the distinction between a profile or conversation with predominantly neutral messages including a single toxic message (which might therefore be harmless) and one where there are multiple toxic messages, increasing the severity.

The change in scope clearly influences which features are deemed important. On manual inspection, averaging feature importances of all baseline models on their in-domain test sets, 63% of the top 500 most important features are profane words. For the models trained on Ask.fm and Formspring specifically (\(D_{ask}\) and \(D_{frm}\)), this is an average of 42%. Strikingly, for the models trained on context scopes (\(C_{frm}\) and \(C_{ask}\)), this percentage is significantly reduced to 11%; many of their important bi-gram features include you, topics such as dating, boys, girls, and girlfriend occur, yet also positive words such as (are) beautiful, the latter of which could indicate messages from friends (defenders). This change is to an extent expected, as by changing the scope, the task shifts to classifying profiles that are bullied, thus showing more diverse bullying characteristics.

These results provide evidence that extending classification to contexts is a worthwhile platform-specific setting to pursue. However, we can conversely draw the same conclusion as in Experiment I: including direct context does not overcome the task's general domain limitations, therefore further supporting Hypothesis 1. A plausible solution to this could be improving upon the BoW features by relying on more general representations of language, as found in word embeddings.

Improving representations The aim of this experiment was to find (out-of-the-box) representations that would improve upon the simple BoW features used in our baseline model (i.e., achieving good in-domain performance as well as out-of-domain generalization). Table 7 lists both of our considered baselines, tested under different preprocessing methods. These are subsequently compared against the two different embedding representations.

For preprocessing, several levels were used: the default for all models being (1) lowercasing only, then either (2) removal of special characters, or (3) lemmatization and more appropriate handling of special characters (e.g., splitting #word to prepend a hashtag token) were added. The corresponding results in Table 7 do not reveal an unequivocal preprocessing method for either the BoW baseline or NBSVM. While the latter achieves highest out-of-domain generalization with thorough preprocessing (‘+preproc’, .566 positive \(F_1\)), the baseline model achieves best in-domain performance on five out of nine corpora, and an on-par out-of-domain average (.566 versus .561) with simple cleaning (‘+clean’).

According to our criterion proposed in Sect. 5.1, a method that performs well both in- and out-of-domain should be preferred. The current consideration of preprocessing methods illustrates how this stricter evaluation criterion used in this experiment potentially yields different overall results in contrast to evaluating in-domain only, or focusing on single corpora. Accordingly, we opted for simple cleaning throughout the rest of our experiments (as mentioned in Sect. 4.4), given its consistent performance for both baselines.

The embeddings chosen for this experiment do not seem to provide representations that yield an overall improvement for the classification performance of our Logistic Regression model. Surprisingly, however, DistilBERT does yield significant gains over our baseline for the conversation-level corpus of Ask.fm (.629 positive \(F_1\) over .579). This might imply that such representations would work well on more (balanced) data. While we did not see a significant effect on performance with shallowly fine-tuning DistilBERT, more elaborate fine-tuning would be a required point of further investigation before drawing strong conclusions.

Moreover, given that we restricted our embeddings to averaged representations on document-level for word2vec, and the sentence representation token for BERT (following common practices), numerous settings remain unexplored. While both potential improvements (i.e., fine-tuning and alternate input representations) would certainly merit further exploration in future work focused on optimization, they are out of scope for the current research. Similarly, embeddings trained on a similar domain would be better suited to represent our noisy data; we settled for strictly off-the-shelf ones that at minimum included web content, and a large vocabulary.
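The document-level averaging used for word2vec can be sketched as follows. This is a minimal illustration with toy two-dimensional vectors; the function name, the zero-vector fallback for documents without known tokens, and the plain-dictionary lookup are assumptions and do not reflect a specific embedding library.

```python
from typing import Dict, List, Sequence


def average_embedding(
    tokens: Sequence[str], vectors: Dict[str, List[float]], dim: int
) -> List[float]:
    """Average the vectors of all in-vocabulary tokens of one document."""
    known = [vectors[token] for token in tokens if token in vectors]
    if not known:
        # Assumption: documents without any known token map to the zero vector.
        return [0.0] * dim
    return [sum(vec[i] for vec in known) / len(known) for i in range(dim)]


if __name__ == "__main__":
    # Toy vectors; real word2vec embeddings typically have hundreds of dimensions.
    toy_vectors = {"you": [1.0, 0.0], "lame": [0.0, 1.0]}
    print(average_embedding(["you", "are", "lame"], toy_vectors, dim=2))
```

Averaging discards word order and weighs every token equally, which is one reason why alternate input representations remain worth exploring.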

Therefore, we conclude that no alternative (out-of-the-box) baselines seem to clearly outperform our BoW baseline. We previously alluded to the effectiveness of binary BoW representations in previous work, and argued that this is a result of capturing blatant profanity. We will further test this in the next experiment.
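To make the notion of binary BoW features concrete, the sketch below marks only the presence of a term and ignores its frequency. Whitespace tokenization and the function names are assumptions for illustration; this is not the feature extraction code of our framework.

```python
from typing import Dict, Iterable, List


def build_vocabulary(documents: Iterable[str]) -> Dict[str, int]:
    """Map every whitespace-separated token to a column index, in order of first occurrence."""
    vocab: Dict[str, int] = {}
    for doc in documents:
        for token in doc.split():
            vocab.setdefault(token, len(vocab))
    return vocab


def binary_bow(document: str, vocab: Dict[str, int]) -> List[int]:
    """Binary bag-of-words: 1 if a vocabulary term occurs at least once, else 0."""
    vector = [0] * len(vocab)
    for token in document.split():
        index = vocab.get(token)
        if index is not None:  # out-of-vocabulary tokens are ignored
            vector[index] = 1
    return vector


if __name__ == "__main__":
    vocab = build_vocabulary(["you are lame", "you are nice"])
    print(binary_bow("lame lame you", vocab))
```

Under such a representation, a single blatant profanity activates the same feature regardless of platform, which is consistent with the argument that these features mostly capture explicit toxicity.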

6.3 Experiment III

Here, we investigate Hypothesis 2: the notion that positive instances across all cyberbullying corpora are biased, and only reflect a limited dimension of bullying. We have already found strong evidence for this in Experiments I and II: Fig. 3, Table 6, and manual analyses of top features all indicated toxic content to be consistently among the top-ranking features. To add more empirical evidence, we trained models on toxicity, or cyber aggression, and tested them on bullying data (and vice versa), providing results on the overlap between the tasks. The results for this experiment can be found in the lower end of Table 5, under \(D_{tox}\) and T3.

It can be noted that there is a substantial gap between the performance of the cyberbullying classifier (using \(D_{all}\) as reference) on the \(D_{tox}\) test set and that of the toxicity model (positive \(F_1\) scores of .587 and .806, respectively). More strikingly, however, the other way around, toxicity classifiers perform second-best on the out-of-domain averages (Avg in Table 5). In the context scopes (\(C_{frm}\) and \(C_{ask}\)) their performance is notably close, and for other sets relatively close, to the in-domain performance.

Cyberbullying detection should include detection of toxic content, yet also perform on more complex social phenomena, likely not found in the Wikipedia comments of the toxicity corpus. It is therefore particularly surprising that the toxicity model achieves higher out-of-domain performance on cyberbullying classification than all individual models using BoW features to capture bullying content. Only when all corpora are combined does the \(D_{all}\) classifier perform better than the toxicity model. This observation, combined with previous results, provides significant evidence that a large part of the available cyberbullying content is not complex, and that current models only generalize to a limited extent using predominantly simple aggressive features, supporting Hypothesis 2.

6.4 Experiment IV

So far, we have attempted to improve a straightforward baseline trained on binary features with several different approaches. While changes in data (representations) seem to have a noticeable effect on performance (increasing the number of messages per instance, merging all corpora), none of the experiments with different feature representations have had an impact. With the current experiment, we had hoped to leverage earlier state-of-the-art architectures by reproducing their methodology and subjecting them to our evaluation framework.

As can be inferred from Table 8, our baselines outperform these neural techniques on almost all in-domain tests, as well as the out-of-domain averages. Having strictly upheld the experimental set-up from Agrawal and Awekar (2018), and followed that of Rosa et al. (2018) as closely as possible, we can conclude that, under stricter evaluation, there is sufficient evidence that these models do not provide state-of-the-art results on the task of cyberbullying detection (see Footnote 32). Tuning these networks (at least in our set-up) does not seem to improve performance, but rather decreases it. This indicates that the validation set on which early stopping is conducted is often not representative of the test set. Parameter tuning on this set is consequently sensitive to overfitting; an arguably unsurprising result given the size of the corpora.

Some further noteworthy observations can be made related to the performance of the CNN architecture, which achieves quite significant leaps on word level (for \(D_{twB}\)) and character level (for \(C_{ask}\)). Particularly the conversation scopes (C, with a comparatively balanced class distribution) see much more competitive performance compared to the baselines. The same effect can be observed when more data is available; both average test scores for \(D_{all}\) and \(D_{tox}\) are comparable to the baseline across almost all architectures. Additionally, the \(D_{tox}\) scores indicate that all architectures show about the same overlap on toxicity detection, although interestingly, less so for the neural models than for the baselines.

It can therefore be concluded that the current neural architectures do not provide a solution to the limitations of the task; rather, they suffer more in performance. Our experiments do, however, once more illustrate that the proposed techniques of improving the representations of the corpora (by providing more data through merging all sources, and balancing by classifying batches of multiple messages, or conversations) allow the neural models to approach the performance of the baselines. As our goal here was replication rather than complete optimization of these architectures, the proposed techniques could still provide avenues for further research. Finally, given its robust performance, we will continue to use the baseline model for the next experiment.

6.5 Experiment V

Due to the nature of its experimental set-up (which generates balanced data with simple language use, as shown in Table 2), the crowdsourced data proves easy to classify. Therefore, we do not report out-of-domain averages, as this set would skew them too optimistically and make them uninformative. Regardless, we are primarily interested in performance when crowdsourced data is added, or used as a replacement for real data. In contrast to the other experiments, the focus will mostly be on the Ask.fm (\(D_{ask\_nl}\)) and donated (\(D_{don\_nl}\)) scores (see Table 8). The scores on the Dutch part of the Ask.fm corpus are quite similar to those on the English corpus (.561 vs .598 positive \(F_1\) score), which is in line with earlier results (Van Hee et al. 2018). Moreover, particularly given the small amount of data, the crowdsourced corpus performs surprisingly well on \(D_{ask\_nl}\) (.516), and significantly better on the donated test data (.667 on \(D_{don\_nl}\)). This implies that a balanced, controlled bullying set, tailored to the task specifically, does not need a significant amount of data to achieve comparable (or even better) performance, which is a promising result.

Furthermore, in the settings that utilize context representations, training on conversation scopes initially does not seem to improve detection performance in any of the configurations (save for a marginal gain on \(D_{simC\_nl}\)). However, it does simplify the task in a meaningful way at test-time; whereas a slight gain is obtained for message-level \(D_{ask\_nl}\) (from .598 \(F_1\)-score to .608), when merging both datasets a significant performance boost can be found when training on \(D_{comb}\) and testing on \(D_{askC\_nl}\) (from .264 and .501 to .801 on the combined). Hence, it can be further concluded that enriching the existing training set with crowdsourced data yields meaningful improvements.
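The two data manipulations discussed here, moving from message-level to conversation-level instances and enriching real data with crowdsourced data, can be sketched as below. The tuple layout, the function names, and in particular the rule that a conversation is positive when any of its messages is positive are assumptions made for illustration; they are not a description of how the corpora were annotated.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

Message = Tuple[str, str, int]  # (conversation id, text, binary label)
Instance = Tuple[str, int]      # (text, binary label)


def to_conversation_scope(messages: Iterable[Message]) -> List[Instance]:
    """Merge message-level instances into one instance per conversation.

    Assumption: a conversation is labeled positive if any message in it is.
    """
    texts: Dict[str, List[str]] = defaultdict(list)
    labels: Dict[str, int] = defaultdict(int)
    for conv_id, text, label in messages:
        texts[conv_id].append(text)
        labels[conv_id] = max(labels[conv_id], label)
    # Dictionaries preserve insertion order, so conversations keep their order.
    return [(" ".join(texts[conv_id]), labels[conv_id]) for conv_id in texts]


def enrich(real: List[Instance], crowdsourced: List[Instance]) -> List[Instance]:
    """Combine real and crowdsourced training instances into one training set."""
    return list(real) + list(crowdsourced)


if __name__ == "__main__":
    real_messages = [("c1", "hi", 0), ("c1", "you are lame", 1), ("c2", "see you", 0)]
    simulated = [("nobody likes you", 1)]
    print(enrich(to_conversation_scope(real_messages), simulated))
```

Because a single positive message makes the whole conversation positive under this rule, conversation scopes tend to have a more balanced class distribution than message-level data.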

Based on these results, we confirm that the Experiment II results hold for Dutch: more diverse, larger datasets and increasing context sizes contribute to better performance on the task. Most importantly, there is enough evidence to support Hypothesis 3: the data generated by the crowdsourcing experiment helps detection rates for our in-the-wild test set, and its combination with externally collected data increases performance with and without additional context. These results underline the potential of this approach to collecting cyberbullying data.

6.6 Suggestions for future work

We hope our experiments have helped to shed light on, and raise further attention to, multiple issues with methodological rigor pertaining to the task of cyberbullying detection. It is our understanding that the disproportionate amount of work on the (oversimplified) classification task, versus the lack of focus on constructing rich, representative corpora reflecting the actual dynamics of bullying, has made critical assessment of the advances in this task difficult. We would therefore particularly want to stress the importance of simple baselines and the out-of-domain tests that we included in the evaluation criterion for this research. They would provide a fairer comparison for proposed novel classifiers, and a more unified method of evaluation. In line with this, the structural inclusion of domain adaptation techniques seems a logical next step to improve cross-domain performance, specifically those tailored to imbalanced data.

Furthermore, this should be paired with a critical view on the extent to which the full scope of the task is fulfilled. Novel research would benefit from explicitly finding evidence to support its assumptions that classifiers labeled ‘cyberbullying detection’ do more than one-shot, message-level toxicity detection. We would argue that the current framing of the majority of work on the task is still too limited to be considered theoretically-defined cyberbullying classification. In our research, we demonstrated several qualitative and quantitative methods that can facilitate such analyses. As the popularity of cyberbullying detection applications grows, this would avoid misrepresenting both the conducted work and future in-the-wild applications.

We can imagine these conclusions to be relevant for more research within the computational forensics domain: detection of online pedophilia (Bogdanova et al. 2014), aggression and intimidation (Escalante et al. 2017), terrorism and extremism (Ebrahimi et al. 2016; Kaati et al. 2015), and systematic deception (Feng et al. 2012), among others. These are all examples of heavily skewed phenomena residing within more complex networks for which simplification could lead to serious misrepresentation of the task. As with cyberbullying research, a critical evaluation of multiple domains could potentially uncover problematic performance gaps.

While we demonstrated a method of collecting plausible cyberbullying data with guaranteed consent, the more valuable sources of real-world data that allow for complex models of social interaction remain restricted. It is our expectation that future modeling will benefit from the construction of much larger (anonymized) corpora, as most fields dealing with language have, and we therefore hope to see future work heading in this direction.

7 Conclusion

In this work, we identified several issues that affect the majority of the current research on cyberbullying detection. As it is difficult to collect accurate cyberbullying data in the wild, the field suffers from data scarcity. In an optimal scenario, rich representations capturing all required meta-data to model the complex social dynamics of what the literature defines as cyberbullying would likely prove fruitful. However, one can assume such access to remain restricted for the time being, and with current social media moving towards private communication, to not be generalizable in the first place. Thus, significant changes need to be made to the empirical practices in this field. To this end, we provided a cross-domain evaluation setup and tested several cyberbullying detection models, under a range of different representations to potentially overcome the limitations of the available data, and provide a fair, rigorous framework to facilitate direct model comparison for this task.

Additionally, we formed three hypotheses we would expect to find evidence for during these evaluations: (1) the corpora are too small and heterogeneous to represent the strong variation in language use for both bullying and neutral content across platforms accurately, (2) the positive instances are biased, predominantly capturing toxicity, and no other dimensions of bullying, and finally (3) crowdsourcing offers a resource to generate plausible cyberbullying events that can help expand the available data and improve the current models.

We found evidence for all three hypotheses: previous cyberbullying models generalize poorly across domains, simple BoW baselines prove difficult to improve upon, there is considerable overlap between toxicity classification and cyberbullying detection, and crowdsourced data yields well-performing cyberbullying detection models. We believe that the results for Hypotheses 1 and 2 in particular point to principal hurdles that need to be tackled to advance this field of research. Furthermore, we showed that both leveraging training data from all openly available corpora, and shifting representations to include context, meaningfully improve performance on the overall task. Therefore, we believe both should be considered as an evaluation point in future work. This holds all the more given that we show that these do not solve the existing limitations of the currently available corpora; they could therefore provide avenues for future research focusing on collecting (richer) data. Lastly, we show that models that previously demonstrated state-of-the-art performance on this task fail to reproduce. We hope that the observations and contributions made in this paper can help improve rigor in future cyberbullying detection work.

Notes

Survey conducted in 2011 among 799 American teens. Black and Latino families were oversampled.

Available at https://github.com/cmry/amica.

Unfortunately, numerous (recent) works on cyberbullying detection do not seem to report such \(F_1\)-scores (in favor of accuracy), are limited to criticized datasets with high baseline scores (such as the caw datasets), or do not show enough methodological rigor; some are therefore not included in this overview.

Data has been made available at http://caw2.barcelonamedia.org.