Abstract

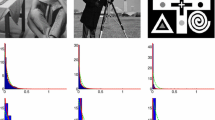

This paper studies a class of variational models for the image restoration inverse problem. The main assumption is that the additive noise and the image gradient magnitudes each follow a generalized normal (GN) distribution, whose very flexible probability density function (pdf) is characterized by two parameters, typically unknown in real-world applications, that determine its shape and scale. The unknown image and parameters, both modeled as random variables in light of the hierarchical Bayesian perspective adopted here, are jointly and automatically estimated within a Maximum A Posteriori (MAP) framework. The hypermodels resulting from the selected prior, likelihood and hyperprior pdfs are minimized by means of an alternating scheme which benefits from a robust initialization based on the noise whiteness property. For the minimization problem with respect to the image, the Alternating Direction Method of Multipliers (ADMM) algorithm is employed, taking advantage of efficient procedures for computing the proximal maps. Computed examples show that the proposed approach holds the potential to automatically detect the noise distribution and is well suited to processing a wide range of images.

Notes

In fact, from definitions in (1.4), we have \(\text {TV}_p(u) \;{:}{=}\; \left\| g(u) \right\| _p^p \;{=}\; \big \Vert \big ( \left\| ({{\mathsf {D}}}u)_1 \right\| _2, \, \ldots \, , \left\| ({{\mathsf {D}}}u)_n \right\| _2 \big )^T \big \Vert _p^p\) \(\;{=}\; \sum _{i=1}^n \big |\,\big \Vert ({{\mathsf {D}}}u)_i\big \Vert _2\big |^p \;{=}\; \sum _{i=1}^n \big \Vert ({{\mathsf {D}}}u)_i\big \Vert _2^p\), which is the standard \(\text {TV}_p\) regularizer definition (see, e.g., [21]).
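As a small illustration of the identity above, the following sketch evaluates \(\sum _{i=1}^n \Vert ({{\mathsf {D}}}u)_i\Vert _2^p\) for a tiny image. The discretization is an assumption made only for this example: \({{\mathsf {D}}}\) is taken to be forward differences with periodic boundary conditions, which may differ from the operator defined in (1.4), and the function name `tv_p` is hypothetical.

```python
import math


def tv_p(u, p):
    """Sum over pixels of the p-th power of the gradient magnitude.

    `u` is a 2-D image given as a list of equally long rows.
    Assumption (not from the paper): D uses forward differences
    with periodic wrap-around at the image borders.
    """
    rows, cols = len(u), len(u[0])
    total = 0.0
    for r in range(rows):
        for c in range(cols):
            dx = u[r][(c + 1) % cols] - u[r][c]  # horizontal difference
            dy = u[(r + 1) % rows][c] - u[r][c]  # vertical difference
            total += math.hypot(dx, dy) ** p     # ||(Du)_i||_2 ** p
    return total


image = [[0.0, 1.0],
         [0.0, 0.0]]
print(tv_p(image, 1.0))  # p = 1: isotropic total variation
print(tv_p(image, 2.0))  # p = 2: squared gradient magnitudes
```

For \(p = 1\) this reduces to the usual isotropic total variation, and for \(p = 2\) to the sum of squared gradient magnitudes.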

References

Abramowitz, M., Stegun, I.A.: Handbook of mathematical functions: with formulas, graphs, and mathematical tables. In: Abramowitz, M., Stegun, I.A. (eds.) Dover Books on Advanced Mathematics. Dover Publications, New York (1965)

Almeida, M.S.C., Figueiredo, M.A.T.: Parameter estimation for blind and non-blind deblurring using residual whiteness measures. IEEE Trans. Image Process. 22, 2751–2763 (2013)

Babacan, S.D., Molina, R., Katsaggelos, A.: Generalized Gaussian Markov random field image restoration using variational distribution approximation. In: 2008 IEEE International Conference on Acoustics, Speech and Signal Processing, pp. 1265–1268 (2008)

Babacan, S.D., Molina, R., Katsaggelos, A.: Parameter estimation in TV image restoration using variational distribution approximation. IEEE Trans. Image Process. 17, 326–339 (2008)

Bauer, F., Lukas, M.A.: Comparing parameter choice methods for regularization of ill-posed problems. Math. Comput. Simul. 81, 1795–1841 (2011)

Calatroni, L., Lanza, A., Pragliola, M., Sgallari, F.: A flexible space-variant anisotropic regularization for image restoration with automated parameter selection. SIAM J. Imaging Sci. 12(2), 1001–1037 (2019)

Calatroni, L., Lanza, A., Pragliola, M., Sgallari, F.: Adaptive parameter selection for weighted-TV image reconstruction problems. J. Phys. Conf. Ser. 1476 (2020)

Calatroni, L., Lanza, A., Pragliola, M., Sgallari, F.: Space-adaptive anisotropic bivariate Laplacian regularization for image restoration. In: Tavares, J., Natal Jorge, R. (eds) VipIMAGE 2019. Lect. Notes Comput. Vis. Biomech. 34 (2019)

Calvetti, D., Hakula, H., Pursiainen, S., Somersalo, E.: Conditionally Gaussian hypermodels for cerebral source localization. SIAM J. Imaging Sci. 2, 879–909 (2009)

Calvetti, D., Hansen, P.C., Reichel, L.: L-curve curvature bounds via Lanczos bidiagonalization. Electron. Trans. Numer. Anal. 14, 20–35 (2002)

Calvetti, D., Pascarella, A., Pitolli, F., Somersalo, E., Vantaggi, B.: A hierarchical Krylov–Bayes iterative inverse solver for MEG with physiological preconditioning. Inverse Probl. 31, 125005 (2015)

Calvetti, D., Pragliola, M., Somersalo, E., Strang, A.: Sparse reconstructions from few noisy data: analysis of hierarchical Bayesian models with generalized gamma hyperpriors. Inverse Probl. 36, 025010 (2020)

Calvetti, D., Somersalo, E.: Introduction to Bayesian Scientific Computing: Ten Lectures on Subjective Computing (Surveys and Tutorials in the Applied Math. Sciences). Springer, Berlin (2007)

Calvetti, D., Somersalo, E., Strang, A.: Hierachical Bayesian models and sparsity: \(\ell _2\) -magic. Inverse Probl. 35, 035003 (2019)

Chan, R.H., Dong, Y., Hintermüller, M.: An efficient two-phase L\(_1\)-TV method for restoring blurred images with impulse noise. IEEE Trans. Image Process. 65(5), 1817–1837 (2005)

Clason, C.: L\(^\infty \) fitting for inverse problems with uniform noise. Inverse Probl. 28(10) (2012)

De Bortoli, V., Durmus, A., Pereyra, M., Vidal, A.F.: Maximum likelihood estimation of regularization parameters in high-dimensional inverse problems: an empirical Bayesian approach part II: theoretical analysis. SIAM J. Imaging Sci. 13, 1990–2028 (2020)

Fenu, C., Reichel, L., Rodriguez, G., Sadok, H.: GCV for Tikhonov regularization by partial SVD. BIT 57, 1019–1039 (2017)

Guo, X., Li, F., Ng, M.K.: A Fast \(\ell _1\)-TV algorithm for image restoration. SIAM J. Sci. Comput. 31(3), 2322–2341 (2009)

Lanza, A., Morigi, S., Pragliola, M., Sgallari, F.: Space-variant generalised Gaussian regularisation for image restoration. Comput. Method. Biomech. 7, 490–503 (2019)

Lanza, A., Morigi, S., Sgallari, F.: Constrained TV\(_p\)-\(\ell _2\) model for image restoration. J. Sci. Comput. 68, 64–91 (2016)

Lanza, A., Pragliola, M., Sgallari, F.: Residual whiteness principle for parameter-free image restoration. Electron. Trans. Numer. Anal. 53, 329–351 (2020)

Molina, R., Katsaggelos, A., Mateos, J.: Bayesian and regularization methods for hyperparameter estimation in image restoration. IEEE Trans. Image Process. 8, 231–246 (1999)

Park, Y., Reichel, L., Rodriguez, G., Yu, X.: Parameter determination for Tikhonov regularization problems in general form. J. Comput. Appl. Math. 343, 12–25 (2018)

Reichel, L., Rodriguez, G.: Old and new parameter choice rules for discrete ill-posed problems. Numer. Algorithms 63, 65–87 (2013)

Robert, C.: The Bayesian Choice: From Decision-theoretic Foundations to Computational Implementation. Springer, Berlin (2007)

Rudin, L.I., Osher, S., Fatemi, E.: Nonlinear total variation based noise removal algorithms. Phys. D 60, 259–268 (1992)

Stuart, A.M.: Inverse problems: a Bayesian perspective. Acta Numer. 19, 451–559 (2010)

Vidal, A.F., De Bortoli, V., Pereyra, M., Durmus, A.: Maximum likelihood estimation of regularization parameters in high-dimensional inverse problems: an empirical Bayesian approach part I: methodology and experiments. SIAM J. Imaging Sci. 13, 1945–1989 (2020)

Wang, Z., Bovik, A.C., Sheikh, H.R., Simoncelli, E.P.: Image quality assessment: From error visibility to structural similarity. IEEE Trans. Image Process. 4, 600–612 (2004)

Wen, Y., Chan, R.H.: Parameter selection for total-variation-based image restoration using discrepancy principle. IEEE Trans. Image Process. 21(4), 1770–1781 (2012)

Wen, Y., Chan, R.H.: Using generalized cross validation to select regularization parameter for total variation regularization problems. Inverse Probl. Imaging 12, 1103–1120 (2018)

Zuo, W., Meng, D., Zhang, L., Feng, X., Zhang, D.: A generalized iterated shrinkage algorithm for non-convex sparse coding. In: Proceedings of the IEEE International Conference on Computer Vision, pp. 217–224 (2013)

Acknowledgements

Research was supported by the “National Group for Scientific Computation (GNCS-INDAM)” and by the ex60 project “Funds for selected research topics” of the University of Bologna.

Additional information

Communicated by Rosemary Anne Renaut.

A Proofs of the results

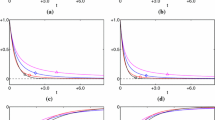

Proposition A.1

Let \(s,\gamma \in {{\mathbb {R}}}_{++}\), \(z \in {\mathbb {N}}\) be given constants, let \(f_s: {{\mathbb {R}}}^z \rightarrow {{\mathbb {R}}}\) be the (parametric, not necessarily convex) function defined by \(f_s(x) := \Vert x\Vert _2^s\) and let \(\text {prox}_{f_s}^{\gamma }: {{\mathbb {R}}}^z \rightrightarrows {{\mathbb {R}}}^z\) be the proximal operator of function \(f_s\) with proximity parameter \(\gamma \), defined as the z-dimensional minimization problem

Then, for any \(z \in {\mathbb {N}}, \, s,\gamma \in {{\mathbb {R}}}_{++}, \, y \in {{\mathbb {R}}}^z\), \(\text {prox}_{f_s}^{\gamma }\) takes the form of a shrinkage operator:

and with function

In particular, for any \(z \in {\mathbb {N}}\), the shrinkage coefficient function \(\xi ^*(s,\rho )\) satisfies:

with functions \({\bar{\rho }}: (0,1] \rightarrow [1,\infty )\), \({\bar{\xi }}: (0,1] \rightarrow [0,1)\), and \(h: (0,1] \rightarrow {{\mathbb {R}}}\) defined by

Finally, the function \(\,\xi ^*\) exhibits the following regularity and monotonicity properties:

Proof

The proof of (A.1)–(A.2) follows easily from Proposition 1 in [21]: (A.1)–(A.2) is proved there for \(s<2\), and the proof of case c) in that proposition extends seamlessly to the case \(s \ge 2\).

To prove (A.3), first we notice from (A.1)–(A.2) that \(\xi ^*(s,\rho ) \,{\in }\, (0,1) \; \forall \, (s,\rho ) \,{\in }\, S\) and introduce the function \(\,f\,{:}\;\, S_f \rightarrow {{\mathbb {R}}}\), \(\,S_f = (0,1) \,{\times }\, S \;{\subset }\; {{\mathbb {R}}}_{++}^3\), defined by \(\,f(\xi ,s,\rho ) \,{:}{=}\, h(\xi ;s,\rho )\) \({=}\; \xi ^{s-1} + \rho \, (\xi -1)\), \(\forall \, (\xi ,s,\rho ) \,{\in }\, S_f\). The function f clearly satisfies

We now demonstrate by contradiction that if \(s < 1\) then \(\xi ^{s-2} \ne \rho /(1-s)\) for any \((\xi ,s,\rho ) \in S_f\). In fact, according to the definition of set \(S_f\) given above, we have that \(\xi > {\bar{\xi }}(s)\) and \(\rho > {\bar{\rho }}(s)\) for \(s<1\), with functions \({\bar{\xi }},{\bar{\rho }}\) defined in (A.2). But we have

Hence, \(\,\partial f / \partial \xi \ne 0 \;\, \forall \, (\xi ,s,\rho ) \in S_f\) and it follows from the implicit function theorem that, for any \((s,\rho ) \in S\), the shrinkage coefficient \(\xi ^* \in (0,1)\) is given by the infinitely differentiable function of \((s,\rho )\), denoted \(\xi ^*(s,\rho )\), that solves the equation

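Taking the partial derivatives of both sides of (A.4) with respect to s and \(\rho \), and recalling that for any function \(\,c(x) \;{=}\; w(x)^{a(x)}\) with \(\,w(x) \;{>}\; 0 \;\, \forall \, x \in {{\mathbb {R}}}\) and w, a differentiable, it holds that

$$\begin{aligned} c'(x) \;{=}\; w(x)^{a(x)} \left[ a'(x) \ln w(x) + a(x)\, \frac{w'(x)}{w(x)} \right] , \end{aligned}$$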

after simple manipulations we have

Since \(\,(s,\rho ) \in S \,\;{\Longrightarrow }\;\, \rho > 0, \, \xi ^*(s,\rho ) \in (0,1)\), then both the numerators in the definitions of \(\partial \xi ^* / \partial s\) and \(\partial \xi ^* / \partial \rho \) in (A.5)–(A.6) are positive quantities. The denominator in (A.5)–(A.6) is also clearly positive for \(s \ge 1\); for \(s<1\) it is positive for

which is always verified since \(\,\xi ^*(s,\rho ) \;{>}\; {\bar{\xi }}(s)\) for \(s<1\). This proves (A.3). \(\square \)
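The displayed formulas (A.4)–(A.6) are not reproduced above, so the following worked derivation is offered only as a sketch of the implicit differentiation step. It assumes that (A.4) is the equation \(h(\xi ^*;s,\rho ) = 0\) with \(h(\xi ;s,\rho ) = \xi ^{s-1} + \rho \,(\xi -1)\), as in the definition of f given in the proof; the resulting expressions should agree with (A.5)–(A.6) up to rearrangement, but they are reconstructed here rather than quoted.

$$\begin{aligned} \frac{\partial }{\partial \rho }:\quad&\left[ (s-1)\,(\xi ^*)^{s-2} + \rho \right] \frac{\partial \xi ^*}{\partial \rho } + (\xi ^* - 1) = 0 \;\;\Longrightarrow \;\; \frac{\partial \xi ^*}{\partial \rho } = \frac{1-\xi ^*}{(s-1)\,(\xi ^*)^{s-2} + \rho }\,, \\ \frac{\partial }{\partial s}:\quad&(\xi ^*)^{s-1} \ln \xi ^* + \left[ (s-1)\,(\xi ^*)^{s-2} + \rho \right] \frac{\partial \xi ^*}{\partial s} = 0 \;\;\Longrightarrow \;\; \frac{\partial \xi ^*}{\partial s} = \frac{-(\xi ^*)^{s-1} \ln \xi ^*}{(s-1)\,(\xi ^*)^{s-2} + \rho }\,. \end{aligned}$$

Under this assumption, both numerators are positive for \(\xi ^* \in (0,1)\), since \(1-\xi ^* > 0\) and \(\ln \xi ^* < 0\). The common denominator is positive for \(s \ge 1\), and for \(s<1\) it is positive exactly when \((\xi ^*)^{s-2} < \rho /(1-s)\), which matches the sign argument used in the proof.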

Corollary A.1

Under the setting of Proposition A.1, let \((s,\rho ) \in S\). Then, the Newton–Raphson iterative scheme applied to the solution of \(h(\xi ;s,\rho ) = 0\), namely

converges to \(\xi ^*(s,\rho )\) if the initial iterate \(\xi _0\) is chosen, dependently on \(s,\rho \), as follows:

Hence, based on (A.3), \(\xi _0\) for computing \(\xi ^*(s,\rho )\) by (A.7) can be chosen among the solutions \(\xi ^*({\tilde{s}},\rho )\) for different s values according to the following strategy:

Proof

According to Proposition A.1, for any given pair \((s,\rho ) \in S\), the shrinkage coefficient \(\xi ^*(s,\rho )\) is given by the unique root of the nonlinear equation \(h(\xi ;s,\rho ) = 0\) in the open interval \(({\bar{\xi }}(s),1)\) for \(s\le 1\), and in \((0,1)\) for \(s>1\). Convergence of the Newton–Raphson method applied to finding such roots depends on the initial guess as well as on the first- and second-order derivatives of the function h, which read

In particular, it is immediate to verify that \(h \;{\in }\, C^{\infty }\left( (0,1]\right) \) for any \((s,\rho ) \in S\) and that, depending on s, the function h and its derivatives \(h',h''\) satisfy

Properties of \(\,h,h',h''\) for \(s\,{<}\,1 \,{\wedge }\, \rho \,{>}\,{\bar{\rho }}(s)\) indicate that in this case the iterative scheme (A.7) is guaranteed to converge to the unique root \(\xi ^*(s,\rho )\) of \(h(\xi ;s,\rho ) = 0\) in the open interval \(({\bar{\xi }}(s),1)\) if \(\xi _0 \in \big [\xi ^*(s,\rho ),1\big ]\). Moreover, it can be proved (we omit the proof for brevity) that, setting \(\xi _0 = {\bar{\xi }}(s)\), the first iteration of (A.7) yields \(\xi _1 \in \big [\xi ^*(s,\rho ),1\big )\); hence (A.7) converges under the milder condition \(\xi _0 \in [{\bar{\xi }}(s),1]\).

Properties of \(\,h,h',h''\) for \(s\,{\in }\,(1,2) \,{\wedge }\, \rho \,{>}\,0\) lead to the convergence condition \(\xi _0 \,{\in }\, \big (0,\xi ^*(s,\rho )\big ]\) for (A.7), with \(\xi _0 \,{=}\, 0\) excluded since \(h'(0^+) \,{=}\; {+}\infty \;{\Longrightarrow }\; \xi _k \,{=}\, 0 \;\, \forall k\). It can be proved that, setting \(\xi _0 = 1\), (A.7) yields \(\xi _1 \in \big (0,\xi ^*(s,\rho )\big ]\) if and only if \(\rho \,{>}\, 2-s\). Hence, for \(\rho \,{>}\, 2-s\) we have the milder convergence condition \(\xi _0 \,{\in }\, (0,1]\).

Properties of \(\,h,h',h''\) for \(s\,{>}\,2 \;{\wedge }\; \rho \,{>}\,0\) indicate that (A.7) is guaranteed to converge to the desired \(\,\xi ^*(s,\rho )\,\) if \(\,\xi _0 \,{\in }\, \big [\xi ^*(s,\rho ),1\big ]\). However, since it is easy to prove that \(\xi _0\,{=}\,0\) in (A.7) yields \(\xi _1\,{=}\,1\), (A.7) converges for any \(\xi _0\,{\in }\,[0,1]\) in this case.

This completes the proof of (A.8), whereas (A.9) follows easily from (A.8) and from the monotonicity properties of the function \(\xi ^*\) given in (A.3). \(\square \)
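The convergence conditions discussed in this proof can be illustrated with a minimal Python sketch. It rests on assumptions: (A.7) is taken to be the standard Newton–Raphson update \(\xi _{k+1} = \xi _k - h(\xi _k)/h'(\xi _k)\), with \(h(\xi ;s,\rho ) = \xi ^{s-1} + \rho \,(\xi -1)\) as in the proof of Proposition A.1 and \(h'\) obtained by differentiating it. The sketch only handles the regimes in which the proof allows the start \(\xi _0 = 1\); the case \(s<1\) is left out because \({\bar{\xi }}\) and \({\bar{\rho }}\) are not reproduced above. The function name, tolerance and iteration cap are hypothetical.

```python
def shrinkage_coefficient(s, rho, tol=1e-12, max_iter=100):
    """Approximate the root in (0, 1) of h(xi) = xi**(s-1) + rho*(xi - 1).

    Newton-Raphson started from xi_0 = 1, used only where the proof
    allows that start: s > 2, or 1 < s < 2 with rho > 2 - s.
    The linear case s = 2 is included as an assumption of this sketch
    (Newton is then exact after one step).
    """
    if rho <= 0:
        raise ValueError("rho must be positive")
    if not (s >= 2 or (1 < s < 2 and rho > 2 - s)):
        raise ValueError("start xi_0 = 1 is not covered for this (s, rho)")
    xi = 1.0
    for _ in range(max_iter):
        h = xi ** (s - 1) + rho * (xi - 1.0)   # h(xi; s, rho)
        dh = (s - 1) * xi ** (s - 2) + rho     # h'(xi; s, rho), positive here
        xi_next = xi - h / dh                  # Newton-Raphson update
        if abs(xi_next - xi) <= tol:
            return xi_next
        xi = xi_next
    return xi


# For s = 3 the equation is quadratic: xi**2 + rho*xi - rho = 0.
print(shrinkage_coefficient(3.0, 2.0))
```

For \(s = 3\), \(\rho = 2\) the root can be checked by hand, since \(\xi ^2 + 2\xi - 2 = 0\) has the positive solution \(\sqrt{3} - 1\). A warm start from a previously computed \(\xi ^*({\tilde{s}},\rho )\), as suggested by (A.9), would replace the fixed start `xi = 1.0`.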

Proof of Proposition 4.2

Based on Proposition 2.1, the function T is infinitely differentiable. Hence, we impose a first order optimality condition on T with respect to \(\sigma \):

Equation (A.10) admits the following closed-form solution:

where the expression of \(\sigma ^{\text {ML}}(s)\) is given in (2.7). Note that (A.11) can be further manipulated so as to give

It is easy to verify that the second derivative of T with respect to \(\sigma \) computed at \(\sigma ^{\text {MAP}}(s)\) is strictly positive, hence the stationary point in (A.10) is a minimum. Finally, plugging (A.12) into the expression of function T in (4.13), after a few simple manipulations, the s estimation problem takes the form:

Based on Proposition 2.1, it is easy to verify that \(f^{\text {MAP}}\in C^{\infty }(B)\) and that the following limits hold:

Depending on \(\nu \), the three following scenarios arise:

(a) \(\nu =1\). Based on the properties of function \(\phi \) and \(\Vert \cdot \Vert _{\infty }\), \(\nu \) tends to 1 from the right. If \(\nu \rightarrow 1^+\) slower than \(s\rightarrow +\infty \), case (a) leads to case (c), otherwise, \(c(s)\sim O(s)\) and \(f_2(s)\) vanish so that \(f^{\text {MAP}}(s)\) tends to a finite value when s goes to \(+\infty \).

(b) \(\nu < 1\). For large s, the first term in \(f_2(s)\) vanishes, while for the logarithmic term we consider the Maclaurin series expansion of \(\sqrt{1+c(s)}\), so that:

$$\begin{aligned} \lim _{s\rightarrow +\infty } f_2(s)&= \lim _{s\rightarrow +\infty } (n\alpha /s)\left[ \ln (\alpha /(2\beta ))+\ln \left( -1+1+(1/2)c(s)+o(c(s))\right) \right] \\&= \lim _{s\rightarrow +\infty } (n\alpha /s) \ln ((1/2)c(s)) \\&= \lim _{s\rightarrow +\infty } (n\alpha /s)\ln (2(\beta /\alpha ^2)(s/n)) + (n\alpha )\ln \nu \\&= n\alpha \ln \nu \in {{\mathbb {R}}}\,. \end{aligned}$$

Therefore, function \(f^{\text {MAP}}(s)\) tends to a finite value when \(s\rightarrow +\infty \).

(c) \(\nu > 1\). For large s, we have \((n\alpha /s)\ln (-1+\sqrt{1+c(s)})\sim (n\alpha /s)\ln \sqrt{c(s)}\rightarrow k\in {{\mathbb {R}}}_{+}\) as \(s\rightarrow + \infty \). Hence:

$$\begin{aligned} \lim _{s\rightarrow +\infty } f_2(s) = \lim _{s\rightarrow +\infty } (n\alpha /s)\sqrt{1+c(s)} + k = + \infty \;\Longrightarrow \; \lim _{s\rightarrow +\infty }f^{\text {MAP}}(s)=+\infty . \end{aligned}$$

We conclude that \(f^{\text {MAP}}\) admits either a finite minimizer, that can be plugged into (A.11) thus returning \(\sigma ^{\text {MAP}}\), or \(s^{\text {MAP}}=+\infty \), i.e. the samples in x are drawn from a uniform distribution with \(\sigma ^{\text {MAP}}=m\nu \). \(\square \)

Cite this article

Lanza, A., Pragliola, M. & Sgallari, F. Automatic fidelity and regularization terms selection in variational image restoration. Bit Numer Math 62, 931–964 (2022). https://doi.org/10.1007/s10543-021-00901-z
