Abstract

We consider a scalar function depending on a numerical solution of an initial value problem, and its second-derivative (Hessian) matrix for the initial value. The need to extract the information of the Hessian or to solve a linear system having the Hessian as a coefficient matrix arises in many research fields such as optimization, Bayesian estimation, and uncertainty quantification. From the perspective of memory efficiency, these tasks often employ a Krylov subspace method that does not need to hold the Hessian matrix explicitly and only requires computing the multiplication of the Hessian and a given vector. One of the ways to obtain an approximation of such Hessian-vector multiplication is to integrate the so-called second-order adjoint system numerically. However, the error in the approximation could be significant even if the numerical integration to the second-order adjoint system is sufficiently accurate. This paper presents a novel algorithm that computes the intended Hessian-vector multiplication exactly and efficiently. For this aim, we give a new concise derivation of the second-order adjoint system and show that the intended multiplication can be computed exactly by applying a particular numerical method to the second-order adjoint system. In the discussion, symplectic partitioned Runge–Kutta methods play an essential role.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

We consider an initial value problem of a d-dimensional time-dependent vector x driven by an ordinary differential equation (ODE) of the form

where t is time, the function \(f:{\mathbb {R}}^d\rightarrow {\mathbb {R}}^d\) is assumed to be sufficiently differentiable, and \(\theta \) is an initial value of x. Such an ODE is often solved numerically. Let \(x_n (\theta )\) be the numerical solution that approximates the analytic solution at \(t=t_{n}\), i.e., \(x_n (\theta )\approx x(t_n;\theta )=x(nh;\theta )\), where n is an integer and h is a time step size. This study is interested in numerically computing derivatives of a twice differentiable scalar function \(C:{\mathbb {R}}^d \rightarrow {\mathbb {R}}\), which depends on the numerical solution at a certain time \(t_{N}\), e.g., a gradient vector \(\nabla _\theta C(x_N(\theta ))\) and a second-derivative (Hessian) matrix \({\mathsf {H}}_\theta C(x_N(\theta ))\) of the function C with respect to \(\theta \).

Calculating the gradient \(\nabla _\theta C(x_N(\theta ))\) is often required to solve an optimization problem

One simple way of obtaining an approximation to the gradient is to integrate the system (1.1) numerically multiple times for perturbed \(\theta \). For example

where \(\varDelta \) is a small scalar constant and \(e_i\) is the i-th column of the d-dimensional identity matrix, can be seen as an approximation to the i-th component of the gradient. However, when the dimensionality d or the number of time steps N is large, this approach becomes computationally expensive, which makes it difficult to obtain a sufficiently accurate approximation. Instead, in various fields such as optimal design in aerodynamics [5], variational data assimilation in meteorology and oceanography [3], inversion problems in seismology [4], and neural network [2], the adjoint method has been used to approximate the gradient: the gradient is approximated by integrating the so-called adjoint system numerically. This approach is more efficient than the simple approach in most cases, but the accuracy of the approximation is still limited when there are highly collected discretization errors. More recently, Sanz-Serna [15] showed that, if \(x_N(\theta )\) is the solution of a Runge–Kutta method, the gradient \(\nabla _\theta C(x_N(\theta ))\) can be calculated exactly by solving the adjoint system with a particular choice of Runge–Kutta method: the computed gradient coincides with the exact gradient up to round-off in floating point arithmetic. Given a system of ODEs and Runge–Kutta method applied to the system, the recipe presented in [15] finds the intended Runge–Kutta method for the adjoint system. It is worth noting that, though the computation based on the recipe can be viewed as that using automatic differentiation with backward accumulation, the recipe provides new insights in the context of adjoint methods and is quite useful for practitioners.

Hessian matrices also arise in several contexts. For example, if we apply the Newton method to the problem (1.2), a linear system whose coefficient matrix is the Hessian with respect to \(\theta \) needs to be solved. Further, the information of the inverse of the Hessian is used to quantify the uncertainty for the estimation in the Bayesian context [9, 19]. This case also requires solving a linear system whose coefficient matrix is the Hessian to calculate the inverse.

There are, however, several difficulties in solving such a linear system numerically. As is the case with the gradient, the simplest way of obtaining all elements of the Hessian is to integrate the system (1.1) multiple times for perturbed initial value. However, this approach is noticeably expensive, and further may suffer from the discretization error. Therefore, calculating all elements of the Hessian by this simple approach is often computationally prohibitive. If we apply a Krylov subspace method such as the conjugate gradient method or conjugate residual method [14], there is no need to have full entries of the Hessian, and instead, all we need to do is to compute a Hessian-vector multiplication, i.e., \(({\mathsf {H}}_\theta C(x_N(\theta )) )\gamma \) for a given vector \(\gamma \in {\mathbb {R}}^d\). It was pointed out in [20, 21] that, if C is a function of the exact solution to (1.1), the Hessian-vector multiplication \(({\mathsf {H}}_\theta C(x(t_N;\theta )) )\gamma \) can be obtained by solving the so-called second-order adjoint system backwardly. This property indicates that solving the second-order adjoint system numerically gives an approximation to the intended Hessian-vector multiplication \(({\mathsf {H}}_\theta C(x_N(\theta )) )\gamma \). However, when the numerical solutions to the original system (1.1) or second-order adjoint system are not sufficiently accurate, the error between the intended Hessian-vector multiplication \(({\mathsf {H}}_\theta C(x_N(\theta )) )\gamma \) and its approximation could be substantial.

In this paper, we extend the aforementioned technique, which was proposed by Sanz-Serna [15] to get the exact gradient, to calculate the exact Hessian-vector multiplication. More precisely, focusing on Runge–Kutta methods and their numerical solutions, we shall propose an algorithm that computes the Hessian-vector multiplication \(({\mathsf {H}}_\theta C(x_N(\theta )) )\gamma \) exactly. For this aim, we give a new concise derivation of the second-order adjoint system, which makes it possible to discuss the second-order adjoint system within the framework of the conventional (first-order) adjoint system and to apply the technique [15] to the second-order adjoint system. We show that the intended Hessian-vector multiplication can be calculated by applying a particular choice of Runge–Kutta method to the second-order adjoint system.

In Sect. 2, we give a brief review of adjoint systems and the paper by Sanz-Serna [15]. The main results are shown in Sect. 3, where we present a new concise derivation of the second-order adjoint system and show how to compute the intended Hessian-vector multiplication exactly. Section 4 is devoted to numerical experiments. Concluding remarks are given in Sect. 5.

2 Preliminaries

In this section, we give a brief review of adjoint systems and the paper by Sanz-Serna [15]. In Sect. 2.1, we focus on the continuous case, where C is a function of the exact solution to (1.1), and explain how the gradient \(\nabla _\theta C(x(t_N; \theta ))\) and the Hessian-vector multiplication \(( {\mathsf {H}}_\theta C(x(t_N;\theta )) )\gamma \) are obtained based on the adjoint system and second-order adjoint system, respectively. In Sect. 2.2, we explain that the gradient \(\nabla _\theta C(x_N( \theta ))\) can be calculated by solving the adjoint system using a particular choice of Runge–Kutta method.

2.1 Adjoint method

Let \({\overline{x}}(t)\) be the solution to (1.1) for the perturbed initial condition \({\overline{x}}(0) = \theta + \varepsilon \). By linearizing the system (1.1) at x(t), we see that as \(\Vert \varepsilon \Vert \rightarrow 0\) it follows that \({\overline{x}}(t) = x(t) + \delta (t) + \mathrm {o}(\Vert \varepsilon \Vert )\), where \(\delta (t)\) solves the variational system

Its solution satisfies \(\delta (t) = (\nabla _\theta x (t;\theta )) \delta (0)\), that is, \(\delta (t)\) depends linearly on \(\delta (0)\). The adjoint system of (2.1), which is usually introduced by using Lagrange multipliers, is given by

For the solutions to (2.1) and (2.2), \(\delta (t)^\top \lambda (t)\) is constant because

Thus, we have

On the other hand, it follows that

for any \(\delta (0)\), because of the chain rule \( \nabla _\theta C(x(t_N;\theta )) = \nabla _\theta x(t_N;\theta )^\top \nabla _x C(x(t_N;\theta )) \) and \(\delta (t_N) = (\nabla _\theta x (t_N;\theta )) \delta (0)\). By comparing (2.4) with (2.3), it is concluded that solving the adjoint system (2.2) backwardly with the final state \(\lambda (t_N) = \nabla _x C(x(t_N;\theta ))\) leads to the intended gradient at \(t=0\), i.e., \(\lambda (0) = \nabla _\theta C(x(t_N;\theta ))\).

The second-order adjoint system reads [20, 21]

where \(\delta (t)\) is the solution to the variational system (2.1) and \(\lambda (t)\) is the solution to the adjoint system (2.2). In [21], the second-order adjoint system is introduced as the variational system of the adjoint system (2.2). Suppose that the initial state for (2.1) is \(\delta (0)=\gamma \) and the final state for (2.2) is \(\lambda (t_N) = \nabla _x C(x(t_N;\theta ))\). Then, solving the second-order adjoint system (2.5) with the final state \(\xi (t_N) = ({\mathsf {H}}_x C(x(t_N;\theta )))\delta (t_N)\) gives the intended Hessian-vector multiplication at \(t=0\), i.e., \(\xi (0) = ({\mathsf {H}}_\theta C(x(t_N;\theta ))) \gamma \). We here skip the original proof of [20] and shall explain this property based on a new derivation of the second-order adjoint system in Sect. 3.

2.2 Exact gradient calculation

We consider the discrete case, where C is a function of the numerical solution to (1.1) obtained by a Runge–Kutta method. Sanz-Serna [15] showed that the gradient \(\nabla _\theta C(x_N(\theta ))\) can be computed exactly by applying a particular choice of Runge–Kutta method for the adjoint system (2.2). We briefly review the procedure.

Assume that the original system (1.1) is discretized by an s-stage Runge–Kutta method

We discretize the adjoint system (2.2) with another s-stage Runge–Kutta method

In the continuous case, the adjoint system gives the gradient \(\nabla _\theta C(x(t_N;\theta ))\) due to the property \(\lambda (t_N)^\top \delta (t_N) = \lambda (0)^\top \delta (0)\). Therefore, in the discrete case, to obtain the exact gradient \(\nabla _\theta C(x_N(\theta ))\), the numerical solution to the adjoint system must satisfy \(\lambda _N^\top \delta _N = \lambda _0^\top \delta _0\). In [15], it is proved that if the Runge–Kutta method for the adjoint system is chosen such that the pair of the Runge–Kutta methods for the original system and adjoint system constitutes a symplectic partitioned Runge–Kutta method, the property \(\lambda _N^\top \delta _N = \lambda _0^\top \delta _0\) is guaranteed and the gradient \(\nabla _\theta C(x_N(\theta ))\) is exactly obtained as shown in Theorem 2.1. We note that the symplecticity is a fundamental concept in numerical analysis for ODEs, and symplectic numerical methods are well known in the context of geometric numerical integration. For more details, we refer the reader to [7, Chapter VI] (for symplectic partitioned Runge–Kutta methods, see also [1, 17]).

Theorem 2.1

[15] Let \(x_1(\theta ),\dots ,x_N(\theta )\) be the approximate solutions (to \(x(h;\theta ),\dots , x(Nh;\theta )\)) obtained by applying the Runge–Kutta method (2.6) characterized by the coefficients \(a_{ij}\) and \(b_i\) to (1.1). Assume that the coefficients \(A_{ij}\) and \(B_i\) of the Runge–Kutta method for the adjoint system (2.2) satisfy the relation

Then, solving the adjoint system (2.2) with \(\lambda _N = \nabla _x C(x_N(\theta ))\) by using the Runge–Kutta method (2.7) characterized by \(A_{ij}\) and \(B_i\) gives the exact gradient at \(n=0\), i.e., \(\lambda _0 = \nabla _\theta C(x_N(\theta ))\).

The combination of Runge–Kutta methods for the original and adjoint systems can be seen as a partitioned Runge–Kutta method for the coupled system.

Remark 2.1

The conditions in Theorem 2.1 indicate that

which makes sense only when every weight \(b_i\) is nonzero. However, for some Runge–Kutta methods such as the Runge–Kutta–Fehlberg method one or more weights \(b_i\) vanish. For such cases, the above conditions cannot be used to find an appropriate Runge–Kutta method for the adjoint system. We refer the reader to Appendix in [15] for a workaround. For clarity, in this paper, we always assume that every weight \(b_i\) is nonzero.

Remark 2.2

As explained in [15], the overall accuracy of the partitioned Runge–Kutta method for the coupled system may be lower than that of the Runge–Kutta method for (1.1). Such an undesirable property is called the order reduction. We need to take into account the reduction especially when we intend to compute \(\nabla _\theta C( x(t_N;\theta ))\) as accurately as possible in the context of, for example, sensitivity analysis (see, for example, [6, 11] for the reduction of order conditions).

We note that the Runge–Kutta method (2.7) for the adjoint system (2.2) is equivalently rewritten as

This expression is convenient when the adjoint system is solved backwardly.

3 Hessian-vector multiplication

Given an arbitrary vector \(\gamma \), we are interested in calculating the Hessian-vector multiplication \(( {\mathsf {H}}_\theta C(x_N(\theta )) )\gamma \) exactly.

In Sect. 3.1, we give a new concise derivation of the second-order adjoint system (2.5). The idea of the derivation plays an important role in Sect. 3.2, where we show how to calculate the exact Hessian-vector multiplication \(( {\mathsf {H}}_\theta C(x_N(\theta )) )\gamma \).

3.1 Concise derivation of the second-order adjoint system

Let us couple the original system (1.1) and the variational system (2.1). This leads to the following system

which can be written as

by introducing an augmented vector \(y=[x^\top , \delta ^\top ]^\top \). The adjoint system for (3.1) is given by

which can also be written as

where \(\phi = [\xi ^\top , \lambda ^\top ]^\top \). Note that the system (3.2) is the combination of the second-order adjoint system (2.5) and the adjoint system (2.2).

As explained in Sect. 2.1, the Hessian-vector multiplication \(({\mathsf {H}}_\theta C(x(t_N;\theta ))) \gamma \) is obtained by solving the second-order adjoint system (2.5) backwardly. This property was proved in Theorem 2 in [20], but we give another proof building on (3.2).

Proposition 3.1

Let x, \(\delta \) and \(\lambda \) be the solutions to the original system (1.1) with the initial state \(x(0) = \theta \), to the variational system (2.1) with the initial state \(\delta (0) = \gamma \) and to the adjoint system (2.2) with the final state \(\lambda (t_N) = \nabla _x C(x(t_N;\theta ))\), respectively. For the solution to the second-order adjoint system (2.5) with the final state \(\xi (t_N) = ({\mathsf {H}}_x C(x(t_N;\theta )))\delta (t_N)\), it follows that \(\xi (0) = ({\mathsf {H}}_\theta C(x(t_N;\theta ))) \gamma \).

Proof

Let \({\tilde{C}}:{\mathbb {R}}^d\times {\mathbb {R}}^d \rightarrow {\mathbb {R}}\) be a real valued function defined by

Because

and

it follows that

for any vector \(\gamma \). We note that the solution to the variational system (2.1) depends on both \(\theta \) and \(\gamma \). Then, building on the discussion in Sect. 2.1 we see that solving the adjoint system (3.2) backwardly with the final states

and

leads to the Hessian-vector multiplication at \(t=0\), i.e.,

as well as the gradient

\(\square \)

The result of the above discussion lets the second-order adjoint system include within the framework of the first-order adjoint system.

3.2 Exact Hessian-vector multiplication

From the discussion in Sects. 2.2 and 3.1, we readily see that the exact Hessian-vector multiplication \(( {\mathsf {H}}_\theta C(x_N(\theta )) )\gamma \) is obtained by solving the coupled adjoint system (3.2) with a particular choice of Runge–Kutta method.

Suppose that the pair of x- and \(\delta \)-systems (3.1) is discretized by a Runge–Kutta method:

where \(y_{n}=[x_{n}^\top , \delta _{n}^\top ]^\top \). We discretize the coupled adjoint system (3.2), i.e., the pair of \(\xi \)- and \(\lambda \)-systems, by another Runge–Kutta method:

where \(\phi _{n}=[\xi _{n}^\top , \lambda _{n}^\top ]^\top \). Then, we have the following theorem.

Theorem 3.1

Let \(y_1(\theta ,\gamma ),\dots ,y_N(\theta ,\gamma )\) be the solutions obtained by applying the Runge–Kutta method (3.4) characterized by the coefficients \(a_{ij}\) and \(b_i\) to (3.1). Assume that the coefficients \(A_{ij}\) and \(B_i\) of the Runge–Kutta method for the coupled adjoint system (3.2) satisfy the relation

Then, solving the coupled adjoint system (3.2) with \(\xi _N = ({\mathsf {H}}_xC(x_N(\theta ))) \gamma \) and \(\lambda _N = \nabla _x C(x_N(\theta ))\) by using the Runge–Kutta method (3.5) characterized by \(A_{ij}\) and \(B_i\) gives the exact Hessian-vector multiplication at \(n=0\), i.e., \(\xi _0 = ({\mathsf {H}}_\theta C(x_N(\theta ))) \gamma \) as well as the exact gradient \(\lambda _0 = \nabla _\theta C(x_N(\theta ))\).

We omit the proof of this theorem because it proceeds as that for Theorem 2.1 by considering (3.1) and (3.2) instead of (1.1) and (2.2), respectively, and considering the function (3.3) instead of C(x).

As is the case with the gradient computation, an expression equivalent to (3.5):

is convenient when the coupled adjoint system is solved backwardly.

3.3 Actual computation procedure

In this subsection, we summarize the actual computational procedure.

In what follows, “RK1” denotes a Runge–Kutta method with coefficients \(a_{ij}\) and \(b_i\), and “RK2” the Runge–Kutta method with coefficients \(A_{ij}\) and \(B_i\) determined by the conditions in Theorem 3.1. The actual computational procedure for computing \(({\mathsf {H}}_\theta C(x_N(\theta ))) \gamma \) is as follows.

-

Step 1

Integrate (3.1) by using RK1 (see (3.4)) to obtain \(x_1(\theta ),\dots , x_N(\theta )\) and \(\delta _1(\theta ,\gamma ),\dots , \delta _N (\theta ,\gamma )\). The computational cost for the \(\delta \)-equation (variational system) is usually cheaper than that for the x-equation. For example, when f is nonlinear and RK1 is implicit, we need to solve a nonlinear system for the x-equation in each time step, but we only have to solve a linear system for the \(\delta \)-equation.

-

Step 2

Integrate (3.2) with \(\xi _N = ({\mathsf {H}}_xC(x_N(\theta ))) \gamma \) and \(\lambda _N = \nabla _x C(x_N(\theta ))\) backwardly by using RK2 (see (3.6)). If RK1 is explicit (resp. implicit), the computation of this step is explicit (implicit). Even in the implicit cases, this step only requires solving linear systems.

In the above procedure, solving the x-equation (original system) is usually the most computationally expensive part.

When we apply a Newton-type method to solve the optimization problem (1.2), we need to compute a linear system having the Hessian \({\mathsf {H}}_xC(x_N(\theta ))\) as a coefficient matrix in every Newton iteration. A Krylov subspace method is one of the choices for solving such a linear system, and it requires computing Hessian-vector multiplications repeatedly for the same Hessian but different vectors. We note that, when repeating the above procedure, we can skip solving the x-equation, which is the most computationally expensive part, and the \(\lambda \)-equation.

As far as the authors know, there has been no consensus for the standard choice of numerical integrators for the adjoint systems. A simple strategy is to solve the adjoint systems as accurately as possible (by interpolating the internal stages of the x- and \(\delta \)-equations if necessary). However, this strategy fails to obtain the exact Hessian-vector multiplication and makes the computation of the adjoint system more expensive. We may conclude that the proposed method is the best choice among others in terms of the exactness of the Hessian-vector multiplication and the computational cost for the adjoint systems.

4 Numerical verification

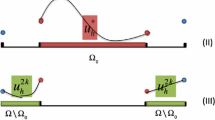

This section validates the proposed method through three numerical experiments using (I) the simple pendulum (Sect. 4.1), (II) the one-dimensional Allen–Cahn equation (Sect. 4.2), and (III) a one-dimensional inhomogeneous wave equation (Sect. 4.3). The Allen–Cahn and wave equations are often employed as testbeds in the research fields of data assimilation and inversion problems. The experiment (I) aims at confirming that the proposed method works well through the small-scale problem that enables us to check all of the elements in the Hessian matrix. The performance of the proposed method in the large-scale problems are checked in the experiments (II) and (III), through an initial value problem (the experiment II) and a parameter-field inversion problem (the experiment III).

In all three experiments, we compare results of the proposed method with those of other numerical integrators. Using the same Runge–Kutta method for (3.1), we compare two Runge–Kutta methods for the coupled adjoint system (3.2). One is the method determined by Theorem 3.1, and the other one is selected such that it has the same number of stages and same order of accuracy as the Runge–Kutta method for (3.1).

4.1 Simple pendulum

We verify the discussion of Sect. 3.2 by a numerical experiment for the simple pendulum problem

which is employed as a toy problem. We discretize this system (4.1) by the explicit Euler method. The function C is defined by \(C(x) = C(Q,P) = Q^2 + QP + P^2 + P^4\).

The step size is set to \(h=0.01\). As a reference, we obtain an analytic Hessian \({\mathsf {H}}_\theta C (x_5(\theta )) |_{\theta = [1,1]^{\top }}\) at \(N=5\) with the help of symbolic computation.Footnote 1 Note that symbolic computation is possible only when N is relatively small. The result is

We compute \(\xi _0\) using the proposed approach: the coupled adjoint system (3.2) is solved by

We employ two vectors \([1,0]^\top \) and \([0,1]^\top \) as \(\gamma \) to obtain the exact Hessian, and the result is

which coincides with (4.2) to 14 digits (the underlines are drawn for the matched digits). For comparison, we solve the coupled adjoint system (3.2), i.e., the pair of the (first-order) adjoint system and second-order adjoint system, by applying the explicit Euler method backwardly (more precisely, the explicit method with the exchanges \(\phi _{n+1} \leftrightarrow \phi _n\), \(y_{n+1} \leftrightarrow y_n\) and \(h\leftrightarrow -h\))

This formula is obviously explicit when the coupled adjoint system (3.2) is solved backwardly in time. We then obtain the approximated Hessian

which differs from (4.2) substantially. We also note that this matrix is no longer symmetric.

4.2 Allen–Cahn equation

We consider an initial value problem of a time-dependent field variable \(\psi (t,z)\) driven by the one-dimensional Allen–Cahn equation

under the Neumann boundary condition: \(\psi _{z}(t,0) = \psi _{z}(t,1) = 0\). In the following numerical experiments, the coefficients and initial value are set to \((\alpha ,\beta ,\kappa ) = (10,0.001,-1)\) and \(\psi (0,z) = \cos (\pi z)\). We discretize the equation in space with d grid points and the grid spacing \(\varDelta z\), i.e., \(\varDelta z = 1/(d-1)\), and apply the second-order central difference to the space second-derivative to obtain a semi-discrete scheme:

where \(\varPsi \in {\mathbb {R}}^{d}\) is the discretized \(\psi \), and we used \(\bullet ^{(m')}\) to describe a quantity \(\bullet \) at \(z=(m'-1)\varDelta z\) to simplify the notation. In the following, we solve the semi-discrete scheme and its variational system by the implicit Euler method, that is, we discretize (3.1) by \(y_{n+1} = y_{n} + h g(y_{n+1})\). A cost function considered here is

where \(\Vert \cdot \Vert _{2}\) denotes the Euclidean norm. The vector \(\theta \in {\mathbb {R}}^{d}\) is an initial condition for the semi-discrete scheme and \({\hat{\theta }}\) is the discretized \(\psi (0,z)\), i.e., \({\hat{\theta }}^{(m)}=\cos (\pi (m-1)\varDelta z)\) for \(m=1,\dots ,d\). The proposed method discretizes the coupled adjoint system (3.2) by

We employ (4.3) for comparison. Let \({\mathsf {H}}\) and \(\tilde{{\mathsf {H}}}\) be the Hessian matrices obtained by applying (4.4) and (4.3), respectively. We note that the procedure (4.3) finds \(\tilde{{\mathsf {H}}}\) uniquely since \(\phi _0\) depends linearly on \(\phi _N\). The numerical experiments conducted here employ \(d=150\), \(h = 0.001\) and \(N=20\), and show the results using \(\theta ^{(m)} = 1.05 \cos (\pi (m-1)\varDelta z)\) for \(m=1,\dots ,d\).

First, we check the extent to which the symmetry is preserved or broken in the Hessian matrices by measuring “degree of asymmetry” defined by

for a given matrix \({\mathsf {M}}\). Here, \(\Vert \cdot \Vert _{\max }\) denotes the maximum norm (\(\Vert {\mathsf {M}}\Vert _{\max }=\max _{i,j} |{\mathsf {M}}_{ij}|\)). In this subsection, we also use the operator norm \(\Vert \cdot \Vert _{\infty }\) induced by the vector maximum norm. We observed that \(\tau (\tilde{{\mathsf {H}}})=2.344 \times 10^{-5}\) for (4.3) and \(\tau ({\mathsf {H}})=1.518 \times 10^{-18}\) for (4.4). This result tells us that the inappropriate discretization for the adjoint systems breaks the Hessian symmetry while the appropriate one based on the proposed method preserves the symmetry high-accurately.

Second, we check the extent to which \({\mathsf {H}}\) is well approximated by \(\tilde{{\mathsf {H}}}\). The difference is \(\Vert {\mathsf {H}} - \tilde{{\mathsf {H}}} \Vert _{\max }= 4.252\times 10^{-5}\) (\(\Vert {\mathsf {H}} - \tilde{{\mathsf {H}}} \Vert _{\infty }= 7.640\times 10^{-5}\), \(\Vert {\mathsf {H}} - \tilde{{\mathsf {H}}} \Vert _\infty / \Vert {\mathsf {H}} \Vert _\infty = 0.01660\), and \(\Vert {\mathsf {H}} - \tilde{{\mathsf {H}}} \Vert _\infty / \Vert \tilde{{\mathsf {H}}} \Vert _\infty = 0.01634\)), which has the same order as \(\tau ({\mathsf {H}})-\tau (\tilde{{\mathsf {H}}})\). This implies that the difference comes from the asymmetry appeared in \(\tilde{{\mathsf {H}}}\).

Third, we check what happens when solving a linear system \({\mathsf {H}} v = r\) with respect to v. We employ the conjugate residual (CR) method.Footnote 2 The tolerance is set to \(1.0\times 10^{-8}\) for the relative residual measured by the maximum norm. The vector r is set to \(r = {\mathsf {H}} v^\text {exact}\), where \(v^\text {exact} = (1,0,\dots ,0)^{\top }\). Figure 1 compares two approaches: “proposed” uses \({\mathsf {H}}\) while “approximation” uses \(\tilde{{\mathsf {H}}}\). It is observed from the top figure that the relative residual monotonically decreases for both approaches, but faster convergence is observed for the proposed approach. Note that CR method still works within double precision despite the symmetry is broken. However, from the bottom figure, which plots the error \(\Vert v - v^\text {exact} \Vert _\infty \), a significant error is observed for \(\tilde{{\mathsf {H}}}\) even after the CR method itself converges, while the proposed approach reaches the exact solution. In the context of uncertainty quantification [9, 19], the information we need is not \(\tilde{{\mathsf {H}}}^{-1}\) but \({\mathsf {H}}^{-1}\). In this viewpoint, the calculation using \(\tilde{{\mathsf {H}}}\) is problematic. In particular, the fact that the CR method itself converges could increase the risk of overconfidence. In contrast, the calculation based on the proposed approach seems of importance.

As a complementary study, let us investigate why the difference between the solution to \(\tilde{{\mathsf {H}}} {\tilde{v}} = r\) computed by the CR method and \(v^\text {exact}\) is so significant despite of the difference between \({\mathsf {H}}\) and \( \tilde{{\mathsf {H}}}\), which is not sufficiently small in double precision but is still much smaller than \(\mathrm {O}(1)\). We compute the condition numberFootnote 3 of \(\tilde{{\mathsf {H}}}\), and the result is \(\mathrm {cond}_\infty (\tilde{{\mathsf {H}}}) = 2.993\times 10^5\) (\(\mathrm {cond}_\infty ({\mathsf {H}}) = 2.696 \times 10^5\)). Because the condition number is moderate, we suspect that the CR method for \(\tilde{{\mathsf {H}}} {\tilde{v}} = r\) actually finds an accurate solution to \(\tilde{{\mathsf {H}}}{\tilde{v}} = r\). In general, for the solutions to \({\mathsf {H}}v=r\) and \(\tilde{{\mathsf {H}}} {\tilde{v}}=r\), it follows that

The right hand side of (4.5) is calculated to be \(4.892\times 10^4\). Since \(\Vert v\Vert _{\infty } = \Vert v^{\text {exact}}\Vert _{\infty } = 1\) in the above problem setting, we see that \(\Vert v - {\tilde{v}}\Vert _{\infty }\) could be as big as \(\mathrm {O}(10^4)\), which explains the undesirable property observed in Fig. 1 (bottom figure). We note that the difference between v and \({\tilde{v}}\) could result in the slow convergence in the Newton method (the slow convergence is discussed in Sect. 4.3).

4.3 Wave equation

We validate the proposed method through a problem to estimate an inner structure from a wave form of displacement field driven by the one-dimensional inhomogeneous wave equation

under a periodic boundary condition, where \(u\left( t,z\right) \) is a displacement field and the spatial-dependent function \(w\left( z\right) \) is a “structure field” to be estimated. In the following numerical experiments, the initial conditions of u and its time derivative \(u_{t}\) are set to \(u\left( 0,z\right) =16z^2\left( L-z\right) ^2/L^4\) and \(u_{t}\left( 0,z\right) =0\), and we assume that \(u\left( 0,z\right) \) and \(u_{t}\left( 0,z\right) \) are known but w is not, that is, the structure field w is a unique control variable that determines the time evolution of the wave form of u. Such a problem to estimate the unobservable inner structure field from the acoustic response of objective materials appears ubiquitously in various scientific fields such as seismology [4] and engineering [8, 13]. We discretize (4.6) in space via a staggered grid with d grid points and grid spacing \(\varDelta z\), i.e., \(\varDelta z = L/d\), and then we obtain a semi-discrete scheme of (4.6) given by

where \(U\in {\mathbb {R}}^{d}\), \(V\in {\mathbb {R}}^{d}\), and \(W\in {\mathbb {R}}^{d}\) are the discretized u, \(u_{t}\), and w, respectively, and \(\bullet ^{(m')}\) indicates a quantity \(\bullet \) at \(z=(m'-1)\varDelta z\). Throughout this subsection, the parameters L and \(\varDelta z\) are fixed to \(L=64\) and \(\varDelta z =1\) (i.e., \(d=64\)), and the semi-discrete scheme (4.7) and its variational system are solved by the following 2-stage second order explicit Runge–Kutta (Heun) method:

The cost function C considered here is a sum of squared residuals between the time evolution of U depending on W to be estimated and that of “observation” of U:

where \(T^{\text {obs}}\) is a set of the observation times, and \(U^{\text {obs}}\) is the observation of U obtained by solving (4.7) assuming a “true” structure field \(w^{\text {true}}(z)=0.5+0.25\sin \left( 4\pi z/L\right) \). Note that we use the Heun method with the common step size when calculating \(U_{n}\left( W\right) \) and \(U_{n}^{\text {obs}}\), meaning that C is exactly zero when W is given by the discretized \(w^{\text {true}}\). The set of observation times is fixed to \(T^{\text {obs}}=\{t^{\text {obs}}\mid t^{\text {obs}}=0.2j,\;0\le j\le 10,j\in {\mathbb {Z}}\}\) in the following experiments.

First, as well as the first experiment in Sect. 4.2, we start from checking the symmetry of the Hessian. The Hessian is evaluated at the point where W is given by the discretized \(w_{\text {true}}\), i.e., at the global minimum point. Let \({\mathsf {H}}\) and \(\tilde{{\mathsf {H}}}\) respectively be the Hessian evaluated by the proposed method and the Hessian obtained by applying the Heun method to the coupled adjoint system (3.2) backwardly (more precisely, the Heun method with the exchanges \(\phi _{n+1} \leftrightarrow \phi _n\), \(y_{n+1} \leftrightarrow y_n\) and \(h\leftrightarrow -h\)):

Although the difference between (4.8) and the scheme of the proposed method

is only on the arguments of \(\nabla _y g\), the small difference causes a significant difference on the symmetry of the Hessian. Figure 2 shows each degree of asymmetry, \(\tau ({\mathsf {H}})\) and \(\tau (\tilde{{\mathsf {H}}})\), as a function of step size h used for the time integrators. Obviously the proposed method can suppress the degree of asymmetry while \(\tilde{{\mathsf {H}}}\) cannot reproduce the symmetry when using large h. The degree of asymmetry \(\tau (\tilde{{\mathsf {H}}})\) is scaled by \(h^2\), which results from the accuracy of the Heun method, meanwhile \(\tau ({\mathsf {H}})\) does not show such a convergence property but a slow increase proportional to \(h^{-1}\) as h gets smaller mainly due to an accumulation of round-off error. This result demonstrates that the proposed method can calculate an “exact” Hessian up to round-off.

Next, we check the speed of convergence in the optimization based on the Newton method. When conducting a Newton-type method to optimize a nonlinear function like C, a linear equation \(\left( {\mathsf {H}}+{\mathsf {D}}\right) \nu =-\nabla C\) needs to be solved to get a descent direction \(\nu \) in each iteration step, where \({\mathsf {D}}\) is a method-dependent diagonal matrix [10, 12]. Whether a correct descent direction is obtained or not largely depends not only on the symmetry of Hessian, as seen in the third experiment of Sect. 4.2, but also on the error involved in the gradient \(\nabla C\), which needs to be calculated by solving an adjoint system. This experiment sets the step size to \(h=0.2\) for all of the time integrators and employs the Levenberg-Marquardt-regularized Newton (LMRN) method [12] to optimize the cost function C with an initial guess set to \(W=0.5\), and then measures the performance by the number of computations of the coupled adjoint system (3.2) needed in the optimization of C (We call this “number of backward evaluations” for simplicity). The LMRN method employs a tolerance set to \(1.0\times 10^{-8}\) for the residual measured by the maximum norm and the CR method to solve the linear equations with the same tolerance as the one used in Sect. 4.2. The result of the experiment shows that, although the optimum solutions in both cases accord with the true structure field, there is a significant difference between their speeds of convergence to get the solutions. The lines “proposed” and “approximation” in Fig. 3 are the convergence behaviors to attain the optimum solutions, in which (4.9) and (4.8) are respectively used, as a function of the number of backward evaluations. The optimization using \({\mathsf {H}}\) based on the proposed method is more than twice faster than that using \(\tilde{{\mathsf {H}}}\). We confirmed that the former is robustly faster than the latter by checking the other cases using different step sizes. This result lets us conclude that the proposed method provides us a great benefit in the viewpoint of the speed of convergence in the nonlinear optimization.

5 Conclusion

In this paper, we have shown a concise derivation of the second-order adjoint system, and a procedure for computing a matrix-vector multiplication exactly, where the matrix is the Hessian of a function of the numerical solution of an initial value problem with respect to the initial value. The fact that the second-order adjoint system can be reformulated to a part of a large adjoint system is the key point to obtain the exact Hessian-vector multiplication based on the Sanz-Serna scheme.

The proposed method can be used either to obtain the exact Hessian or to solve a linear system having the Hessian as the coefficient matrix based on a Krylov subspace method. Particularly in the latter case, the proposed method can contribute a rapid convergence since the accuracy of Hessian-vector multiplication affects the speed of convergence directly. The importance of calculating the exact Hessian was illustrated for the Allen–Cahn and wave equations.

We plan to test the method to quantify the uncertainty for the estimation of more practical problems. We note that in many applications an ODE system often arises from discretizing a time-dependent partial differential equation (PDE) in space. However, discretizing PDEs in space before taking the adjoint may lead to a very strong nonphysical behavior [16] (this issue has been partially addressed in [18]). Since the proposed method does not care about the spatial discretization, we have to take the spatial discretization into account when testing the proposed method to practical problems. Considering with such spatial discretization method may provide an optimal combination of time and spatial discretizations for given problems.

Notes

We used SymPy package in Julia.

The conjugate gradient (CG) method is a method of choice when the coefficient matrix is real and symmetric. Note that the matrix \({\mathsf {H}}\) or \(\tilde{{\mathsf {H}}}\) may have negative eigenvalues. The CG method still works even if the coefficient matrix has negative eigenvalues in theory, but we employ the CR method to reduce the risk of break-down. We show the results of the CR method only, but we note that similar results were obtained for the CG method in this problem setting.

The condition number was calculated by using \(\mathsf {cond}\) function in LinearAlgebra package of julia.

References

Abia, L., Sanz-Serna, J.M.: Partitioned Runge–Kutta methods for separable Hamiltonian problems. Math. Comput. 60(202), 617–634 (1993). https://doi.org/10.2307/2153105

Chen, R.T., Rubanova, Y., Bettencourt, J., Duvenaud, D.: Neural ordinary differential equations. Adv. Neural Inf. Process. Syst. 31, 6571–6583 (2018)

Dimet, F.X.L., Talagrand, O.: Variational algorithms for analysis and assimilation of meteorological observations: theoretical aspects. Tellus A 38A(2), 97–110 (1986). https://doi.org/10.1111/j.1600-0870.1986.tb00459.x

Fichtner, A., Bunge, H.P., Igel, H.: The adjoint method in seismology: I. theory. Phys. Earth Planet. In. 157(1), 86–104 (2006). https://doi.org/10.1016/j.pepi.2006.03.016

Giles, M.B., Pierce, N.A.: An introduction to the adjoint approach to design. Flow Turbul. Combust. 65(3), 393–415 (2000). https://doi.org/10.1023/A:1011430410075

Hairer, E.: Backward analysis of numerical integrators and symplectic methods. Ann. Numer. Math. 1(1–4), 107–132 (1994)

Hairer, E., Lubich, C., Wanner, G.: Geometric Numerical Integration: Structure-Preserving Algorithms for Ordinary Differential quations, 2nd edn. Springer, Berlin (2006)

Hursky, P., Porter, M.B., Cornuelle, B.D., Hodgkiss, W.S., Kuperman, W.A.: Adjoint modeling for acoustic inversion. J. Acoust. Soc. Am. 115(2), 607–619 (2004). https://doi.org/10.1121/1.1636760

Ito, S., Nagao, H., Yamanaka, A., Tsukada, Y., Koyama, T., Kano, M., Inoue, J.: Data assimilation for massive autonomous systems based on a second-order adjoint method. Phys. Rev. E 94(4), 043307 (2016). https://doi.org/10.1103/PhysRevE.94.043307

Li, D.H., Fukushima, M., Qi, L., Yamashita, N.: Regularized newton methods for convex minimization problems with singular solutions. Comput. Optim. Appl. 28(2), 131–147 (2004)

Murua, A.: On order conditions for partitioned symplectic methods. SIAM J. Numer. Anal. 34(6), 2204–2211 (1997). https://doi.org/10.1137/S0036142995285162

Nesterov, Y.: Lectures on Convex Optimization, vol. 137. Springer, Berlin (2018)

Rosenthal, A., Ntziachristos, V., Razansky, D.: Acoustic inversion in optoacoustic tomography: a review. Curr. Med. Imaging Rev. 9(4), 318–336 (2013)

Saad, Y.: Iterative methods for sparse linear systems, second edn. Society for Industrial and Applied Mathematics, Philadelphia, PA (2003). https://doi.org/10.1137/1.9780898718003

Sanz-Serna, J.M.: Symplectic Runge–Kutta schemes for adjoint equations, automatic differentiation, optimal control, and more. SIAM Rev. 58(1), 3–33 (2016). https://doi.org/10.1137/151002769

Sirkes, Z., Tziperman, E.: Finite difference of adjoint or adjoint of finite difference? Mon. Weather Rev. 125(12), 3373–3378 (1997). https://doi.org/10.1175/1520-0493(1997)125<3373:fdoaoa>2.0.co;2

Sun, G.: Symplectic partitioned Runge–Kutta methods. J. Comput. Math. 11(4), 365–372 (1993)

Tanaka, T., Matsuo, T., Ito, S., Nagao, H.: Making adjoint discretizations consistent in partial differential equations. In preparation

Thacker, W.C.: The role of the hessian matrix in fitting models to measurements. J. Geophys. Res. Oceans 94(C5), 6177–6196 (1989). https://doi.org/10.1029/JC094iC05p06177

Wang, Z., Droegemeier, K., White, L.: The adjoint Newton algorithm for large-scale unconstrained optimization in meteorology applications. Comput. Optim. Appl. 10(3), 283–320 (1998). https://doi.org/10.1023/A:1018321307393

Wang, Z., Navon, I.M., Dimet, F.X.L., Zou, X.: The second order adjoint analysis: theory and applications. Meteor. Atmos. Phys. 50(1–3), 3–20 (1992). https://doi.org/10.1007/bf01025501

Acknowledgements

This work was triggered by discussions in the research projects of JST CREST Grant Nos. JPMJCR1761 and JPMJCR1763, JST ACT-I Grant No. JPMJPR18US, JSPS Grants-in-Aid for Scientific Research (B) Grant No. 17H01703, and JSPS Grants-in-Aid for Early-Career Scientists Grant Nos. 16K17550, 19K14671 and 19K20220.

Author information

Authors and Affiliations

Corresponding author

Additional information

Communicated by Antonella Zanna Munthe-Kaas.

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Ito, Si., Matsuda, T. & Miyatake, Y. Adjoint-based exact Hessian computation. Bit Numer Math 61, 503–522 (2021). https://doi.org/10.1007/s10543-020-00833-0

Received:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s10543-020-00833-0