Abstract

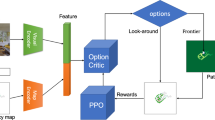

When reinforcement learning with a deep neural network is applied to heuristic search, the search becomes a learning search. A learning search system has two key components: (1) a deep neural network with sufficient expressive power to serve as a heuristic function approximator that estimates the distance from any state to a goal; and (2) a strategy that guides the interaction of an agent with its environment so as to obtain more efficient simulated experience for updating the Q-value or V-value function of reinforcement learning. To date, neither component has been sufficiently discussed. For the first component, this study theoretically analyzes the size of a deep neural network for approximating a product function of p piecewise multivariate linear functions. The existence of such a deep neural network with O(n + p) layers and O(dn + dnp + dp) neurons is proven, where d is the number of variables of the multivariate function being approximated, 𝜖 is the approximation error, and n = O(p + log2(pd/𝜖)). For the second component, this study proposes a general propagational reinforcement-learning-based learning search method that improves the estimate h(.) according to the newly observed distance information about the goals, propagates the improvement bidirectionally in the search tree, and consequently obtains a sequence of more accurate V-values for a sequence of states. Experiments on maze problems show that our method increases the convergence rate of reinforcement learning by a factor of 2.06 and reduces the number of learning episodes to 1/4 of that required by other nonpropagating methods.

Notes

See the related works in Section 3.1.

A learning loop here refers to a series of steps of RL starting with “Select an action and execute it”.

The main cause is “the high time complexity of single problem solving”, i.e., their one-off search algorithms are inefficient.

𝜖 − A∗ takes the meaning of (and is similar to) 𝜖 − Greedy.

That is the “strategy” component mentioned in the abstract.

Here a represents an action.

See the proof of Theorem 2.

The proof is quite lengthy, and due to space limitations, it was moved to Appendix B.

In this paper, O(.) means the upper bound, Ω(.) is the lower bound, and Θ (.) is a tight bound (it is both the upper and lower bound).

This formula means that hDNN(.) approximates h∗(.) to logarithmic precision.

Here, R1 = [0, c1] ×… × [0, cd], R2 = (c1, 1] ×… × (cd, 1], and 0 < cj < 1.

In the caption of Fig. 3, \(\tilde{x}^{(1)}\) is the binary expansion of \(x^{(1)}\), \(\tilde{x}^{(1)} \approx x^{(1)}\), and \(\tilde{h}_{1}(\mathbf{x}) = h_{1}(\tilde{\mathbf{x}}) \approx h_{1}(\mathbf{x})\), where \(h_{1}(\mathbf{x})\) is an approximation of \(h_{1}^{R}(\mathbf{x})\). The definitions are given in the following paragraph.

We define \(\tilde{h}_{l}(x^{(1)}, \ldots, x^{(d)}) \triangleq h_{l}(\tilde{x}^{(1)}, \ldots, \tilde{x}^{(d)})\) and can prove \(\tilde{h}_{l}(x^{(1)}, \ldots, x^{(d)}) \approx h_{l}^{R}(x^{(1)}, \ldots, x^{(d)})\) such that \(|h_{1}^{R}(x^{(1)}, \ldots, x^{(d)}) - \tilde{h}(x^{(1)}, \ldots, x^{(d)})| \leq \varepsilon\); see Theorem 1.

It is a 2-variable linear function.

The functions Hj(x, y) and H∗(⋅) are defined below.

That is a part of the whole shortest distance from (x, y) to the goal. Here \(t_{i} \triangleq (x\_t_{i}, y\_t_{i})\).

\(\max\{0,x\}\) is defined as follows: if x ≥ 0, then \(\max\{0,x\}=x\); otherwise, \(\max\{0,x\}=0\).

Note that each Hi group has four neuron layers (including the input layer (x, y, Constants)), as shown in Fig. 6.

There are 8 Hi groups, each of which has 4 II(⋅) neurons and 4 ReLU neurons.

\(H^{*}_{2}(x\_t_{1},y\_t_{1})\) computes the length of the shortest path from (R1’s) island t1 to (R2’s) island t2 within R2. The “Const.” input to \(H^{*}_{2}\) is t1 = (x_t1, y_t1). Since island t0 does not exist, there is no \(H^{*}_{1}\) group, so there are 7 \(H^{*}_{i}\) groups.

That is: 8 \(\sum \) units in the 8 Hi groups (in the 3rd layer) and 7 \(\sum \) units in the 7 \(H^{*}_{i}\) groups.

That is the 4th layer of the DNN.

There are 8 “Hi+ \(\sum H^{*}_{i}\)” computing units in the 5th layer of the DNN in Fig. 7.

The last layer is used to select the outputs of the previous neuron groups; only one of the “Sgn(Hi)”s is nonzero. For example, Sgn(H8) = sgn(9 − x) ⋅ sgn(9 − y) ⋅ sgn(x − 7) ⋅ sgn(y − 3) represents the boundary constraints of R8.

For the maze problem, it is the H⋆(x, y).

According to the algorithms’ naming scheme in Section 6, the algorithm is called Algorithm 4, ALGO 4 in short.

That is, the one-off search component of the RL algorithm is efficient.

In the simple modified A∗ algorithm, a global variable F is introduced to record the expanded nodes’ maximum f -values. In the OPEN list, for nodes with f -values less than F, those with small g-values are first selected for expansion. In this way, some repeated node expansions can be avoided [3].

V-Learning represents the V-value learning based RL algorithm.

Here, the p-value is less than α = 0.05, the significance level of the Wilcoxon rank-sum test; α = 0.05 corresponds to a confidence level of 95%.

The search tree of the Eight-puzzle problem, whose shortest solution path length is 23, includes more than 6500 nodes, whereas the search tree of the maze problem contains 100 nodes.

When solving a constrained optimization problem, C is usually the radius of the L1-norm ball in which the parameters are constrained to lie [8].

That is the required episode number to learn h(.) of all nodes on a path (x, t).

References

Silver D, Huang A, Maddison C, et al. (2016) Mastering the game of Go with deep neural networks and tree search. Nature 529:484–489

Korf R (1990) Real-time heuristic search. Artificial Intelligence 42:189–211

Martelli A (1977) On the complexity of admissible search algorithms. Artificial Intelligence 8:1–13

Mero L (1984) A heuristic search algorithm with modifiable estimate. Artificial Intelligence 23:13–27

Zhang W, Yu R, He Z (1990) The lower bound on the worst-case complexity of admissible search algorithms. Chinese Journal of Computer 13:450–455

Barto A, Bradtke S, Singh S (1995) Learning to act using real-time dynamic programming. Artificial Intelligence 72:81–138

Pearl J (1983) Knowledge versus search: a quantitative analysis using A∗. Artificial Intelligence 20:1–13

Goodfellow I, Bengio Y, Courville A (2016) Deep learning. MIT Press, Cambridge

Yarotsky D (2017) Error bounds for approximations with deep ReLU networks. Neural Networks 94:103–114

Mnih V, Kavukcuoglu K, Silver D, et al. (2015) Human-level control through deep reinforcement learning. Nature 518:529–533

Schaul T, Horgan D, Gregor K (2015) Universal value function approximators. In: Proceedings of the 32nd international conference on machine learning. Lille, France, pp 1312–1320

Pan Y, Yao H, Farahmand A, et al. (2019) Hill climbing on value estimates for search-control in Dyna. In: Proceedings of the 28th international joint conference on artificial intelligence (IJCAI-2019). Macao, China, pp 3209–3215

Chen J, Sturtevant N (2019) Conditions for avoiding node re-expansions in bounded suboptimal search. In: Proceedings of the 28th international joint conference on artificial intelligence (IJCAI-2019). Macao, China, pp 1220–1226

Pan Y, Zaheer M, White A, et al. (2018) Organizing experience: a deeper look at replay mechanisms for sample-based planning in continuous state domains. In: Proceedings of the 27th international joint conference on artificial intelligence (IJCAI-2018). Stockholm, Sweden, pp 4794–4800

Thirugnanasambandam K, Prakash S, Subramanian V, et al. (2019) Reinforced cuckoo search algorithm-based multimodal optimization. Applied Intelligence 49:2059–2083

Xiong H, Huang L, Yu M, et al. (2020) On the number of linear regions of convolutional neural networks. In: Proceedings of the 37th international conference on machine learning (ICML-2020). Vienna, Austria. arXiv:2006.00978

Corneil D, Gerstner W, Brea J (2018) Efficient model-based deep reinforcement learning with variational state tabulation. In: Proceedings of the 35th international conference on machine learning (ICML-2018). Stockholm, Sweden, pp 1049–1058

Sutton R, Barto A (1998) Reinforcement learning: an introduction. IEEE Transactions on Neural Networks 9:1054–1054

Nilsson N (1998) Artificial intelligence: a new synthesis. Morgan Kaufmann, San Francisco

Montufar G, Pascanu R, Cho K, et al. (2014) On the number of linear regions of deep neural networks. Advances in Neural Information Processing Systems 27:2924–2932

Liang S, Srikant R (2017) Why deep neural networks for function approximation. In: Proceedings of the 5th international conference on learning representations. Toulon, France, pp 24–26

Zhang C, Bengio S, Hardt M, et al. (2017) Understanding deep learning requires rethinking generalization. In: Proceedings of the 5th international conference on learning representations (ICLR-2017). Toulon, France, pp 1–15

Yun C, Sra S, Jadbabaie A (2019) Small ReLU networks are powerful memorizers: a tight analysis of memorization capacity. In: Advances in neural information processing systems (NIPS-2019). Vancouver, Canada, pp 15558–15569. arXiv:1810.07770

Raghu M, Poole B, Kleinberg J, et al. (2017) On the expressive power of deep neural networks. In: Proceedings of the 34th international conference on machine learning (ICML-2017). Sydney, Australia, pp 2847–2854

Andoni A, Panigrahy R, Valiant G, Zhang L (2014) Learning polynomials with neural networks. PMLR (Proceedings of Machine Learning Research) 32:1908–1916

Lao Z, Zhou Z, Huang J (2017) Incremental extreme learning machine via fast random search method. Lecture Notes in Computer Science 10634:82–90

Brookshear J (2002) Computer science: an overview. Addison-Wesley Longman Publishing Co., Boston

Nebel B (2000) On the compilability and expressive power of propositional planning formalisms. Journal of Artificial Intelligence Research 12:271–315

Acknowledgements

This work was supported by Liaoning Provincial Natural Science Foundation of China (Grant No. 20170540311).

Appendices

Appendix A: Proof of Theorem 1

Theorem 1

For the p-order multivariate piecewise function h(x(1),…, x(d)) defined by (4) and an arbitrary error ε > 0, let (x(1),…, x(d)) ∈ \([0, 1]^{d}\), and let wi = (wi1,…, wid) be the coefficient vector of the factor hi(.) of h(x(1),…, x(d)), satisfying ∥wi∥1 ≤ C for a constant C. If h(x(1),…, x(d)) is differentiable, then there exists a DNN with O(n + p) layers and O(dn + dnp + dp) neurons that implements an \(\tilde {h}(x^{(1)}, {\ldots } ,x^{(d)})\) such that

\(\left | h(x^{(1)}, {\ldots } ,x^{(d)}) - \tilde {h}(x^{(1)}, {\ldots } ,x^{(d)}) \right | \leq \varepsilon ,\)

where \(n=(p +1)\log _{2} C +\log _{2} [dp/\varepsilon ]\).

Proof

First, for each component x(k) of the multivariate input vector (x(1),…, x(d)), we can obtain its binary expansion sequence \(\{x_{0}^{(k)}, {\ldots } ,x_{n}^{(k)}\}\) by means of an n-layer DNN with n BSP neurons. Denote the approximate value of x(k) calculated from this sequence as \({\tilde {x}}^{(k)}\), that is,

\({\tilde {x}}^{(k)} = {\sum }_{i = 0}^{n}\frac {x_{i}^{(k)}}{2^{i}}.\)

According to the definition of the n-bit binary expansion of a decimal fractional number, the error between \({\tilde {x}}^{(k)}\) and the original x(k) satisfies \(|x^{(k)} - {\tilde {x}}^{(k)}| \leq 1/2^{n}\). Approximately implementing (i.e., calculating) one x(k) requires n neurons, so implementing all of x(1),…, x(d) in parallel requires nd neurons. Therefore, the first n layers of the DNN in Fig. 3 have nd neurons, which are not displayed due to size limitations.
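The truncation bound above can be checked numerically. The following is a minimal Python sketch under stated assumptions: the helper names `binary_expansion` and `reconstruct` are hypothetical, and the greedy bit extraction is only a stand-in for what the n-layer network computes, not the network itself.

```python
def binary_expansion(x, n):
    """Greedily extract bits x_0..x_n of x in [0, 1] (x_0 is the integer bit)."""
    bits = []
    remainder = x
    for i in range(n + 1):
        weight = 1.0 / (2 ** i)
        bit = 1 if remainder >= weight else 0
        bits.append(bit)
        remainder -= bit * weight
    return bits


def reconstruct(bits):
    """Evaluate the truncated expansion sum_i x_i / 2**i."""
    return sum(bit / (2 ** i) for i, bit in enumerate(bits))


if __name__ == "__main__":
    n = 10
    for x in (0.0, 0.3, 0.7, 1.0):
        x_tilde = reconstruct(binary_expansion(x, n))
        # The truncation error should not exceed 1 / 2**n.
        print(x, x_tilde, 0 <= x - x_tilde <= 1 / 2 ** n)
```

Each additional bit halves the worst-case error, which is why n enters the final error estimate only through the factor \(1/2^{n}\).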

Second, the DNN recursively calculates \(\tilde {h}(x^{(1)},{\ldots } ,x^{(d)})\) through two channels to approximate h(x(1),…, x(d)). If the input vector (x(1),…, x(d)) ∈ [0, c1] ×… × [0, cd], the upper channel is switched on (∏ sgn(⋅) ≠ 0), and the DNN outputs \(\tilde {h}(x^{(1)},\ldots , x^{(d)}) = {\prod }_{i = 1}^{p}(w_{i1}{\tilde {x}}^{(1)} + {\ldots } + w_{id}{\tilde {x}}^{(d)})\) (see (4)); otherwise, the lower channel is turned on, and the result is \(\tilde {h}(x^{(1)},\ldots , x^{(d)}) = {\prod }_{i = 1}^{p}(w_{i1}^{\prime }{\tilde {x}}^{(1)} + {\ldots } + w_{id}^{\prime }{\tilde {x}}^{(d)})\).

Consider the case of (x(1),…, x(d)) ∈ [0, c1] ×… × [0, cd]. According to the previous explanation of Fig. 3, the DNN recursively calculates the following terms from left to right in the figure in accordance with (11):

where l = 0,…, p − 1, and \({\tilde {h}}_{l + 1}(\cdot )\) is an approximation of \(h_{l + 1}^{R}(\cdot )\) that is calculated according to (11) by replacing x with \(\tilde {x}\). Define \(h_{l + 1}^{R}(x^{(1)}, {\ldots } ,x^{(d)}) \approx \tilde {h}_{l+1}(x^{(1)}, {\ldots } ,x^{(d)}) \triangleq h_{l+1}(\tilde {x}^{(1)}, \ldots , \tilde {x}^{(d)})\) and \(\tilde {f}_{l+1}(x^{(1)},\ldots , x^{(d)}) \triangleq f_{l+1}(\tilde {x}^{(1)}, \ldots , \tilde {x}^{(d)})\):

In particular, we define \({\tilde {h}}_{0}\left (x^{(1)}, {\ldots } ,x^{(d)} \right ) = 1.\)

It can be seen from (A-2) that \(\tilde {h}_{l+1}(x^{(1)},\ldots , x^{(d)})\) is calculated according to (11) with \((x^{(1)},\ldots ,x^{(d)})\) replaced by \(({\tilde {x}}^{(1)},\ldots ,{\tilde {x}}^{(d)})\), so \(\tilde {h}_{l+1}(x^{(1)},\ldots , x^{(d)})\) is an approximation of \(h_{l + 1}^{R}(x^{(1)}, {\ldots } ,x^{(d)})\); that is, \(\tilde {h}_{l+1}(x^{(1)},\ldots , x^{(d)}) \approx h_{l + 1}^{R}(x^{(1)},\ldots ,x^{(d)})\). Note that when the input (x(1),…, x(d)) falls in [0, c1] ×… × [0, cd], we have ∏ sgn(⋅) = 1 and [1 − ∏ sgn(⋅)] = 0.

When l + 1 = p, we have \(\tilde {h}_{p}(x^{(1)},\ldots , x^{(d)}) \approx {h_{p}^{R}}(x^{(1)}, {\ldots } ,x^{(d)}) = h(x^{(1)}, {\ldots } ,x^{(d)})\), which is the desired result (see (10)).

In the first hidden layer of the DNN in Fig. 3, for the binary expansion sequence of each input variable (e.g., the sequence \((x_{0}^{(1)}, {\ldots } ,x_{n}^{(1)})\) of x(1)), there are n ReLU neurons directly transmitting the sequence to the next hidden layer, one ReLU neuron implementing the \(w_{11} \cdot \max (0, {\sum }_{i = 0}^{n}\frac {x_{i}^{(1)}}{2^{i}})\) term of (A-2), and one BSU neuron calculating the \(\text {sgn}(c^{(1)}-{\sum }_{i = 0}^{n}\frac {x_{i}^{(1)}}{2^{i}})\) term. For the d variables \(x^{(1)},\ldots ,x^{(d)}\), there are d such groups of (n + 1 + 1) neurons in the first hidden layer of each channel, so the two channels together contain 2d groups and 2d ⋅ (n + 1 + 1) neurons.

In addition, there are six neurons behind the first hidden layer that combine the previous sgn(.), w11 ⋅ max(.), and \(\tilde h_{0}(.)\) (\(\tilde h_{0}(.) = 1\)) according to (11) to form f1(.) and \(\tilde {h}_{1}(.)\). (For convenience, we also count these six neurons as part of the first hidden layer.) These {2d ⋅ (n + 2) + 6} neurons implement \(\tilde {h}_{1}(.)\) and send (x(1),…, x(d)) and \(\tilde {h}_{1}(.)\) to the second hidden layer, which calculates f2(.) and \(\tilde {h}_{2}(.)\) in the same way. By analogy, fp(.) and \(\tilde {h}_{p}(.)\) are finally obtained. The p hidden layers contain [{2d ⋅ (n + 2) + 6} ⋅ p] neurons. Adding the nd units representing the input variables, the whole DNN contains a total of (n + p) layers and [nd + {2d ⋅ (n + 2) + 6} ⋅ p] neurons. In short, it has O(n + p) layers and O(nd + dnp + dp) neurons.
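The counting argument above can be tabulated directly. The following Python sketch simply evaluates the totals stated in the proof; the function name `dnn_size` is hypothetical and introduced only for illustration.

```python
def dnn_size(d, n, p):
    """Layer and neuron counts from the counting argument in the proof.

    d: number of input variables, n: bits per variable, p: number of factors.
    """
    input_units = n * d                      # units computing the binary expansions
    per_hidden_layer = 2 * d * (n + 2) + 6   # two channels plus six combining neurons
    layers = n + p
    neurons = input_units + per_hidden_layer * p
    return layers, neurons


if __name__ == "__main__":
    layers, neurons = dnn_size(d=2, n=10, p=3)
    print(layers, neurons)
```

Expanding the product gives nd + 2dnp + 4dp + 6p, whose dominant terms are the nd, dnp, and dp terms that appear in the O(nd + dnp + dp) bound.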

When the input vector (x(1),…, x(d) )∉[0, c1] ×… × [0, cd], similarly, we can get the same conclusion.

Finally, we estimate the error of the DNN approximation:

Calculate the partial derivative:

Consider the absolute value of the partial derivative:

(C is typically the radius of the norm ball in which the parameters wi1,…, wid are constrained to lie.)

Therefore, following (A-3), the error estimation can be obtained as follows.

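Calculate the L2 norm \(\left \| \left ({\varDelta } x^{(1)}, \ldots , {\varDelta } x^{(d)} \right ) \right \|_{2}\):

\(\left \| \left ({\varDelta } x^{(1)}, \ldots , {\varDelta } x^{(d)} \right ) \right \|_{2} = \sqrt {{\sum }_{i = 1}^{d} \left ({\varDelta } x^{(i)} \right )^{2}}.\)

Since \(|{\varDelta } x^{(i)}| = |x^{(i)} -\tilde {x}^{(i)}| \leq 1/2^{n}\) for i = 1,…, d, we have

\(\left \| \left ({\varDelta } x^{(1)}, \ldots , {\varDelta } x^{(d)} \right ) \right \|_{2} \leq \sqrt {d} \cdot \frac {1}{2^{n}}.\)

Combining this bound on the L2 norm with (A-5), we get:

\(\left | h(x^{(1)}, \ldots , x^{(d)}) - \tilde {h}(x^{(1)}, \ldots , x^{(d)}) \right | \leq pC^{p+1}\cdot d \cdot \left (\frac {1}{2^{n}} \right ).\)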

Let the right side be ε. Then \(\varepsilon = pC^{p+1}\cdot d \cdot \left (\frac {1}{2^{n}} \right )\), and solving for n gives \(n = (p+1) \log _{2} C + \log _{2} \left (\frac {pd}{\varepsilon } \right )\). Therefore, we can choose this value of n to make \(\tilde {h}(x^{(1)}, \ldots , x^{(d)})\) approach h(x(1),…, x(d)), satisfying

\(\left | h(x^{(1)}, \ldots , x^{(d)}) - \tilde {h}(x^{(1)}, \ldots , x^{(d)}) \right | \leq \varepsilon .\)

Similarly, when the input vector (x(1),…, x(d)) ∉ [0, c1] ×… × [0, cd], the lower channel is turned on, and the DNN implements \(\tilde {h}(x^{(1)}, \ldots , x^{(d)}) = {\prod }_{i = 1}^{p}(w_{i1}^{\prime }{\tilde {x}}^{(1)} + {\ldots } + w_{id}^{\prime }{\tilde {x}}^{(d)})\). With the same value of n, \(\tilde {h}(x^{(1)}, \ldots , x^{(d)})\) still achieves an ε-approximation to h(x(1),…, x(d)).

In summary, for the p-order multivariate piecewise function h(x(1),…, x(d)) described in this theorem, there exists a DNN with O(n + p) layers and O(dn + dnp + dp) neurons that implements an \(\tilde {h}(x^{(1)}, {\ldots } ,x^{(d)})\) satisfying

\(\left | h(x^{(1)}, {\ldots } ,x^{(d)}) - \tilde {h}(x^{(1)}, {\ldots } ,x^{(d)}) \right | \leq \varepsilon ,\)

where \(n=(p +1) \log _{2} C + \log _{2} [dp/\varepsilon ]\). □
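To make the choice of n concrete, the following Python sketch evaluates the formula for n stated in the theorem and checks it against the error bound \(pC^{p+1} \cdot d / 2^{n}\) used in the proof. The function names are hypothetical, and rounding n up to an integer is an assumption made here so that n can serve as a bit count.

```python
import math


def required_bits(p, C, d, eps):
    """Smallest non-negative integer n with n >= (p + 1) * log2(C) + log2(p * d / eps)."""
    n = (p + 1) * math.log2(C) + math.log2(p * d / eps)
    return max(0, math.ceil(n))


def error_bound(p, C, d, n):
    """Right-hand side p * C**(p + 1) * d / 2**n of the final error estimate."""
    return p * C ** (p + 1) * d / 2 ** n


if __name__ == "__main__":
    p, C, d, eps = 3, 2.0, 2, 0.01
    n = required_bits(p, C, d, eps)
    # With this n the bound is at most eps; with one bit fewer it is not.
    print(n, error_bound(p, C, d, n) <= eps, error_bound(p, C, d, n - 1) <= eps)
```

The logarithmic dependence on 1/ε means that halving the target error costs only one extra expansion bit per input variable.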

Appendix B: Proof of Theorem 2

Proof

The proof of this theorem comprises two parts.

(1) In each problem-solving episode employing ALGO 4, no nodes are re-expanded.

The formal proof of this part is rather long; for brevity, only the main idea (a proof by contradiction) is given here. Assume that a node n0 is expanded repeatedly when the propagational 𝜖 − A∗ algorithm is applied. Then there must exist at least two paths, path1 and path2, from the initial node s to n0, with length(path1) > length(path2). Node n0 is first expanded through path1, and its child node n1 is placed onto the OPEN list. Subsequently, nodes are expanded along path2 from s to xk, the parent node of n0 on that path. A shorter path (path2) connecting s and n0 is thus discovered, n0 is placed back onto the OPEN list, and its backward pointer is redirected to xk so that n0 can be expanded again.

Because the algorithm explores path1 first, there must exist a node xi on path2 whose value f(xi) exceeds the f-values of all nodes on path1, including n0; that is, f(xi) > f(n0). Because the proposed search algorithm propagates heuristic information bidirectionally (to ensure that all nodes in the search tree satisfy h(parent) ≤ h(child) + c(parent, child)), the next time it reaches n0 through path2 it propagates the larger value f(xi) to xk, n0, and n1 along path2, so that f(xi) = f(xk) = f(n0) = f(n1). At this moment, both n0 and n1 remain on the OPEN list and satisfy f(n0) = f(n1) and g(n0) < g(n1), where g(n0) denotes the known distance from s to n0. Under this condition, the proposed algorithm expands n1 first rather than n0. This contradicts the assumption, so n0 is not expanded again.

Therefore, in the worst case of a problem-solving episode employing the propagation-enabling 𝜖 − A∗ algorithm, every node with an f-value smaller than the length of the optimal solution path f∗(s, t) is expanded only once, and the worst-case time complexity is O(M). In contrast, the worst-case time complexity of the ordinary A* algorithm is \(\mathrm {O}\left (2^{\mathrm {M}}\right )\) [3], whereas that of the simple modified A* algorithm (ALGO B) is \(\mathrm {O}\left (\mathrm {M}^{2}\right )\).
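The condition h(parent) ≤ h(child) + c(parent, child) used in the argument above can be illustrated on a small tree. The Python sketch below is not the propagation rule of ALGO 4, which is specified elsewhere in the paper; it assumes a simple one-directional, pathmax-style pass from parents to children, and the names `enforce_consistency` and `is_consistent` and the example tree are hypothetical.

```python
def enforce_consistency(children, cost, h, root):
    """Top-down pass: raise h(child) until h(parent) <= h(child) + c(parent, child)."""
    stack = [root]
    while stack:
        parent = stack.pop()
        for child in children.get(parent, []):
            lower_bound = h[parent] - cost[(parent, child)]
            if h[child] < lower_bound:
                h[child] = lower_bound  # assumed pathmax-style update
            stack.append(child)
    return h


def is_consistent(children, cost, h):
    """Check the condition on every parent-child edge of the tree."""
    return all(
        h[parent] <= h[child] + cost[(parent, child)]
        for parent, kids in children.items()
        for child in kids
    )


if __name__ == "__main__":
    children = {"s": ["a", "b"], "a": ["n0"]}
    cost = {("s", "a"): 1, ("s", "b"): 1, ("a", "n0"): 1}
    h = {"s": 5, "a": 1, "b": 4, "n0": 0}
    print(is_consistent(children, cost, h))
    enforce_consistency(children, cost, h, "s")
    print(h, is_consistent(children, cost, h))
```

A parent is always processed before its children, so an increase made at one level is already visible when the next level is checked.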

(2) The total time complexities of ALGO 4 and ALGO 3 equal \(O(M^{2})\) and \(O(M^{3} \cdot \sqrt {M})\), respectively.

The time complexity of an RL algorithm is determined by two factors: (1) the time required for each problem-solving episode and (2) the total number of episodes required to achieve convergence. The per-episode time complexity of the different algorithms was discussed above. The remainder of the proof therefore estimates the number of episodes these algorithms require to achieve convergence.

For any RL algorithm, the number of episodes required to achieve convergence (i.e., the episode count) depends on factors such as the structure of the problem space and the accuracy of the initial heuristic function. Following the method used by Korf to study the performance of the LRTA* algorithm on the maze problem with no obstacles [2], we assume that the state space of the maze problem is a matrix of N × N unit squares (denoted M = N × N). The initial state s is placed in the upper-left corner of the matrix, and the target state t is placed in the lower-right corner. We also assume that the initial heuristic function is h(.) = 0. It follows that ordinary V-Learning requires \(\mathrm {O}\left (\mathrm {N}^{3}\right )\) (i.e., \(\mathrm {O}(\mathrm {M} \cdot \sqrt {\mathrm {M}})\)) episodes to achieve convergence. This total episode count can be deduced as follows.

In a given state space, each state has a shortest path to the target. Because there are no obstacles in the matrix, the length of the solution path from the state s in the upper-left corner to the target state t equals 2N − 2, and s is the state furthest from t. In contrast, the length of the solution path from the state in the lower-right corner to the target state is zero, which is the shortest solution path. The average length of the optimal solution paths over all states is N, and there are \(\mathrm {N}^{2}\) states in the state space. It is assumed that the simple modified A* algorithm is applied in each problem-solving episode and that V-Learning-based RL is adopted to learn the heuristic function h(.). When the maze problem is solved using RL for the first time for a state x whose shortest solution path has length N, the heuristic function h(.) is partially learned: \(h\left (x_{N-1}\right )=h^{*}\left (x_{N-1}\right )=1\) is set for the state \(x_{N-1}\) on the solution \(path(x,t)=\left \{\mathrm {x}=\mathrm {x}_{0}, \mathrm {x}_{1}, \ldots , \mathrm {x}_{\mathrm {N}}=\mathrm {t}\right \}\). Similarly, when the problem is solved for the second time, we have \(h\left (x_{N-2}\right )=h^{*}\left (x_{N-2}\right )=2\) for \(x_{N-2}\) on path(x, t). Eventually, when the problem is solved for the Nth time, we have \(h\left (x_{0}\right )=h^{*}\left (x_{0}\right )=N\). That is, to learn h(.) = h∗(.) for all nodes on a given path, the required number of episodes equals the length of the path. (In contrast, the propagation-enabling 𝜖 − A∗ algorithm needs to solve the problem only once to learn the h(.) of a path.) Therefore, when the simple modified A* algorithm is used in each problem-solving episode (ALGO 3), the number of episodes required for convergence is given by

(episode number required per solution path) × (number of states) = \(\mathrm {N} \times \mathrm {N}^{2}=\mathrm {N}^{3}=\mathrm {O}(\mathrm {M} \cdot \sqrt {\mathrm {M}})\).

For the maze problem examined in this study, the state space S is a matrix of N × N (= M) unit squares with barriers (Fig. 4). S is divided into eight regions \(\left (\mathrm {R}_{1}, \ldots , \mathrm {R}_{8}\right )\) by the barriers; thus, S = R1 ∪ R2 ∪… ∪ R8. For any state x ∈ R7 ∪ R8, the solution path is longest (equal to N + N/2) when x is located in the upper-left corner and shortest (zero) when x is located in the lower-right corner. Therefore, the average solution-path length is L = 3N/4. The number of states in R7 ∪ R8 is approximately \(\mathrm {N} \times \mathrm {N} / 2=\mathrm {N}^{2} / 2\). Because the proposed algorithm (ALGO 4) adopts the propagation-enabling 𝜖 − A∗ algorithm during each problem-solving episode, a single problem-solving episode makes h(xi) equal to h∗(xi) for all nodes xi on the solution \(path(x,t)=\left \{\mathrm {x}=\mathrm {x}_{0}, \mathrm {x}_{1}, \ldots , \mathrm {x}_{\mathrm {L}}=\mathrm {t}\right \}\). For a detailed description of the propagation rules, see Step 7 of ALGO 4 and Section 2.2.2. Accordingly, in the sub-spaces R7 ∪ R8, the total number of problem-solving episodes required to achieve convergence approximately equals (episode number required per solution path) × (number of states) = \( 1 \times \left (\mathrm {N}^{2} / 2\right )=\mathrm {N}^{2} / 2\).

A similar analysis for the sub-spaces R1 ∪… ∪ R6 shows that, when x ∈ R1 ∪… ∪ R6, the average length of the solution path(x, t) is ((4N − 3) + (N/2 + N − 3))/2 = 11N/4 − 3. Here, too, the propagation-enabling 𝜖 − A∗ algorithm yields \(\mathrm {h}\left (\mathrm {x}_{\mathrm {i}}\right )=\mathrm {h}^{*}\left (\mathrm {x}_{\mathrm {i}}\right )\) for all nodes xi on path(x, t) = \(\left \{\mathrm {x}=\mathrm {x}_{0}, \mathrm {x}_{1}, \ldots , \mathrm {x}_{\mathrm {L}}=\mathrm {t}\right \}\) through a single problem-solving episode. In the sub-spaces R1 ∪… ∪ R6, the number of states is approximately \(\mathrm {N}^{2} / 2\) (neglecting the states occupied by barriers). Thus, the number of episodes required for the propagation-enabling RL in R1 ∪… ∪ R6 equals (episode number required per solution path) × (number of states) = \(1 \times \mathrm {N}^{2} / 2=\mathrm {N}^{2} / 2\). Hence, for the state space S in the maze problem with barriers, ALGO 4 requires the following total number of episodes to achieve h(.) = h∗(.) for all states:

\(\mathrm {N}^{2} / 2+\mathrm {N}^{2} / 2=\mathrm {N}^{2}=\mathrm {O}(\mathrm {M}).\)

A similar analysis of ALGO 3 in the state space S of the maze problem with barriers shows that the total episode count required equals \(3 \mathrm {N}^{3}-3 \mathrm {N}^{2}=\mathrm {O}\left (\mathrm {N}^{3}\right )=\mathrm {O}(\mathrm {M} \cdot \sqrt {\mathrm {M}})\). The conclusion of the theorem follows by multiplying the number of episodes required to achieve convergence under ALGO 3 and ALGO 4 by the corresponding time complexity of a single problem-solving episode. □
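The per-path episode counts used in this proof (path length many episodes without propagation, one episode with propagation) can be reproduced with a toy simulation. The Python sketch below is a simplification under stated assumptions, not ALGO 3 or ALGO 4: it considers a single fixed path with unit step costs, starts from h(.) = 0, and applies the one-step backup h(x_i) ← 1 + h(x_{i+1}) either in visiting order (no propagation) or in reverse order (improvement propagated back along the path). The function name `episodes_to_converge` is hypothetical.

```python
def episodes_to_converge(path_len, propagate):
    """Count episodes until h equals h* on a path x_0, ..., x_N with unit costs.

    h[i] estimates the distance from x_i to the goal x_N; h[N] stays 0.
    """
    h = [0] * (path_len + 1)
    target = [path_len - i for i in range(path_len + 1)]
    episodes = 0
    while h != target:
        if propagate:
            order = range(path_len - 1, -1, -1)  # back up from the goal side
        else:
            order = range(path_len)              # back up in visiting order
        for i in order:
            h[i] = 1 + h[i + 1]
        episodes += 1
    return episodes


if __name__ == "__main__":
    for length in (5, 12):
        print(length,
              episodes_to_converge(length, propagate=False),
              episodes_to_converge(length, propagate=True))
```

Without propagation, each episode fixes exactly one more state counted from the goal, so the episode count grows linearly with the path length; with propagation, the correct values reach every state on the path within the same episode.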

Cite this article

Zhang, W. A learning search algorithm with propagational reinforcement learning. Appl Intell 51, 7990–8009 (2021). https://doi.org/10.1007/s10489-020-02117-0

DOI: https://doi.org/10.1007/s10489-020-02117-0