Abstract

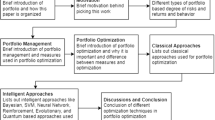

In this paper, we address the problem of long-term investment by exploring optimal strategies for allocating wealth among a finite number of assets over multiple periods. Based on the classical Markowitz mean-variance philosophy, we develop a new portfolio optimization framework that can produce sparse portfolios. The sparsity of the portfolio within each period and across periods is characterized by possibly nonconvex penalties. For the resulting nonconvex and nonsmooth constrained model, we propose a generalized alternating direction method of multipliers whose global convergence to a stationary point is guaranteed theoretically. Moreover, numerical experiments are conducted on several datasets from practical applications to illustrate the effectiveness and advantages of the proposed model and solution method.

1 Introduction

Portfolio optimization is a prominent topic in finance that involves selecting securities from among a large and complex array of candidates to allocate wealth rationally with the goal of maximizing returns and minimizing risks. Although investors often have access to numerous assets, investing in a large number of them can result in high transaction costs and increased complexity in portfolio management. Consequently, most investors are only able to invest in a limited number of assets. In this paper, we address multi-period sparse portfolio selection problems, aimed at developing an optimal strategy for long-term investment through sparse portfolio selection.

Markowitz (1968) proposed the mean-variance (MV) portfolio selection model, which established the foundation of modern portfolio theory. This model defines the mean of returns as the measure of gain and considers the variance of returns as a measure of risk. Mathematically, the classical MV model can be formulated as a quadratic programming problem as follows:

where \(\textbf{x}=[x_1,x_2,\ldots ,x_n]^\top \) denotes the weight vector, H denotes the covariance matrix of returns, and the objective function is to minimize portfolio risk. \(\mu \) is the expected return vector, and \(\rho \) is the minimum end-of-period wealth value. To enhance realism, many researchers have extended the MV model under various settings, such as minimum investment, maximum investment, and cardinality constraints (see, e.g., Jacob 1974; Perold 1984; Chang et al. 2000; Bertsimas and Cory-Wright 2022). We refer to Zhang et al. (2018), Mencarelli and d’Ambrosio (2019), Cui et al. (2022) for surveys on the MV portfolio selection model and its variants.

When the number of assets is large and returns are highly correlated, the MV model produces a weight vector with many nonzero entries that are extremely close to zero, making the model unstable with poor out-of-sample performance (Cui et al., 2018; Huang et al., 2021). In addition, an excessive number of assets increases management difficulty and transaction costs for investors. To address these issues, Gao and Li (2013) developed the cardinality constrained MV model, which selects a small number of assets from a larger pool. This model is formulated as

where \(\lambda \) is a trade-off parameter, and \(\Vert \textbf{x}\Vert _0\) denotes the number of nonzero elements in \(\textbf{x}\). The model (2) can produce sparse portfolios, which are more attainable in real-life situations. However, the constrained MV model (2) is NP-hard (Gao & Li, 2013), and can only be solved by using some heuristic or convex relaxation methods (Chang et al., 2000; Bertsimas & Shioda, 2009; Anis & Kwon, 2022).

To remedy the NP-hardness of the \(\ell _0\)-norm penalty model (2), some convex penalty methods have been developed to produce sparse portfolio selection solutions and improve out-of-sample performance. For instance, DeMiguel et al. (2009) proposed using \(\ell _1\) and squared \(\ell _2\) norm constraints to achieve the minimum variance criterion. It is worth noting that \(\ell _1\)-regularization for MV portfolio selection can be seen as an adaptation of the Least Absolute Shrinkage and Selection Operator (LASSO) (Tibshirani, 1996), which can be solved exactly. Yen and Yen (2014) and Ho et al. (2015) introduced an elastic net penalty in the context of constrained minimum variance portfolio optimization. Fastrich et al. (2015) studied a weighted LASSO approach for minimum variance portfolios and proposed a calibration scheme wherein the weights are selected based on the variability in the volatility of each asset. Various regularization techniques applied in the Markowitz MV framework have been proposed in recent works such as Fastrich et al. (2015), Dai and Wen (2018), Corsaro and De Simone (2019), and Dai and Kang (2021).

The appeal of convex penalties such as \(\ell _1\)-regularization in portfolio optimization is mainly due to the fact that the resulting models can be solved using convex optimization methods (Corsaro & De Simone, 2019; Kremer et al., 2020). However, Fan and Li (2001) showed that the \(\ell _1\)-penalty tends to produce biased estimates and becomes ineffective when there are both portfolio budget constraints and short-selling constraints in portfolio selection. To address this issue, nonconvex penalties that are singular at the origin, and thus promote sparsity while countering bias, have been suggested. In fact, the nonconvex SCAD penalty proposed in Fan and Li (2001) has been used in Fastrich et al. (2015) and Kim et al. (2016) for sparse portfolio selection. Cui et al. (2018) proposed a model for solving sparse portfolio selection problems using nonconvex fraction penalty functions. More recently, Li and Zhang (2022) introduced a nonconvex penalty to promote sparse asset selection for short-term single period portfolio selection problems. Other nonconvex penalties, such as the \(\ell _q\) norm (\(0< q < 1\)), the capped \(\ell _1\) penalty, and the log-function, have also been used to solve the sparse portfolio selection problem (Fastrich et al., 2014; Xu et al., 2016; Benidis et al., 2018). However, there is still a lack of unified frameworks and theoretically guaranteed algorithms for MV portfolio selection models based on these nonconvex penalties.

In the actual decision-making process, investors often have the flexibility to adjust their asset positions multiple times depending on market conditions, making it a multi-period process (Li & Ng, 2000; Cui et al., 2014, 2022). Wealth is reallocated at the beginning of each period with the goal of maximizing returns upon exit from the market. Based on this background, Li and Ng (2000) first developed a multi-period MV model, and Cui et al. (2014) extended the MV model to multiple sub-periods under the assumption that short selling is not allowed. Pun and Wong (2019) developed a linear programming model for selecting sparse high-dimensional multi-period portfolios and introduced a constrained \(\ell _1\) minimization approach to directly estimate parameters in the optimal portfolio solution. Nystrup et al. (2019) proposed a model predictive control method based on a multivariate hidden Markov model with time-varying parameters to dynamically optimize an investment portfolio and control drawdowns. Corsaro et al. (2021a, 2021b) recently proposed a fused LASSO model to solve sparse multi-period portfolio problems and utilized the split Bregman algorithm in the implementation. Li et al. (2022) studied multi-period portfolio optimization problems with MV and risk parity asset allocation frameworks. For more information on the multi-period MV portfolio selection model, see the recent survey by Cui et al. (2022).

In this paper, we further study a possibly nonconvex penalty-based MV framework for solving the sparse multi-period portfolio selection problem. For the developed nonconvex optimization model, we propose a generalized alternating direction method of multipliers (ADMM) with theoretically guaranteed convergence. Previous work often used heuristic algorithms, such as genetic algorithms and particle swarm optimization, to solve the model (Chen & Wei, 2019; Silva et al., 2019). However, such methods converge slowly, and their convergence cannot be guaranteed. As a benchmark method for structured convex optimization problems, ADMM is widely used in many practical fields (Boyd et al., 2011; Maneesha & Swarup, 2021; Han, 2022). ADMM can be seen as an extension of the augmented Lagrangian method that decomposes the problem and updates the primal and dual variables alternately, which makes the subproblems easy to solve and the method applicable to large-scale optimization problems. Indeed, ADMM has been proposed to solve sparse portfolio problems with convex penalties, and global convergence can be guaranteed (Chen et al., 2020). In recent years, ADMM has been extended to solve nonconvex optimization problems, and global convergence can be guaranteed under the Kurdyka-Łojasiewicz framework (Kurdyka, 1998), as shown in Guo et al. (2017), Wu et al. (2017), Themelis and Patrinos (2020), and Boţ and Nguyen (2020). However, due to the special structure of the nonconvex penalty-based multi-period MV model, existing algorithms cannot solve the problem directly with guaranteed convergence.

The contributions of this paper are threefold. First, we propose a possibly nonconvex penalty-based sparse multi-period MV model that includes two possibly nonconvex penalties on the weight vector to produce a sparse portfolio in each single period and to reduce the number of changes between adjacent periods. This model provides a general framework for solving multi-period MV portfolio selection problems. Second, we propose a generalized ADMM to solve the unified model, where each subproblem can be solved efficiently. Third, a rigorous theoretical analysis of the generalized ADMM is conducted based on the Kurdyka-Łojasiewicz property. The computational scalability of the algorithm and the performance of the presented model are demonstrated through out-of-sample empirical tests in numerical experiments.

The rest of this paper is organized as follows. In Sect. 2, we propose a unified sparse multi-period MV model with possibly nonconvex penalties. In Sect. 3, we develop a generalized ADMM to solve the novel model. The global convergence of the proposed algorithm is rigorously analyzed in Sect. 4 based on subdifferential theory and the Kurdyka-Łojasiewicz property. We report some numerical results on several datasets from practical applications in Sect. 5. Finally, we conclude this paper in Sect. 6.

2 Nonconvex sparse multi-period mean-variance model

We first recall the fused LASSO model presented in Corsaro et al. (2021b). More precisely, let m denote the number of sub-periods; the decision taken at time \(j~(j=1,2,\ldots ,m)\) is kept throughout the j-th sub-period \([j, j+1)\) of the investment. Let n denote the number of assets available for investment in each sub-period; then the portfolio selection strategy over the whole period can be denoted by

where \(\textbf{x}_j\in {{\mathbb {R}}}^n\) is the portfolio of holdings at the beginning of sub-period \(j~(j=1,2,\ldots ,m)\) and \(N = mn\). Note that \((\textbf{x}_j)_i\) is the portion of the investor’s total wealth invested in asset i in the j-th sub-period.

Assuming that \(j=1\) is the initial period, we denote by \(\textbf{r}_j\in {\mathbb {R}}^n\) the expected return vector and by \(H_j\in {\mathbb {R}}^{n\times n}\) the covariance matrix, which is assumed to be positive definite. Then, the fused LASSO (FL) model can be formulated as follows:

where \(\textbf{1}_n\) is the column vector with n elements, all equal to one, \(\xi _0\) is the initial wealth, \(\mathbf{x_{\min }}\) is the vector of expected minimum wealth, \(\tau _1\) and \(\tau _2\) are trade-off parameters. Note that in the objective function of (4), the quadratic term represents the portfolio risk, which is the sum of all sub-period variances. The \(\ell _1\)-norm is used to promote sparsity in the solution. In particular, the terms \(\Vert \textbf{x}_j\Vert _1~(j=1,2,\ldots ,m)\) and \(\Vert \textbf{x}_{j+1}-\textbf{x}_j\Vert _1~ (j=1,2,\ldots , m-1)\) characterize the sparsity of the investment in a single period and the rebalance during successive periods, respectively. The constraints are all imposed on wealth, including the initial equilibrium and the constraint on minimal return.
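To make the structure of the objective described above concrete, the following Python sketch evaluates its three terms for a given strategy. It is only an illustration under stated assumptions: the function name `fused_lasso_objective` and the list-based data layout are hypothetical, any constant scaling of the quadratic term in (4) is omitted, and the wealth constraints are not checked.

```python
def fused_lasso_objective(xs, Hs, tau1, tau2):
    """Risk + tau1 * within-period sparsity + tau2 * rebalancing penalty.

    xs: list of m weight vectors (each a list of n floats).
    Hs: list of m covariance matrices (each an n-by-n list of lists).
    Assumption: no extra scaling factor on the quadratic term.
    """
    # Portfolio risk: sum over sub-periods of x_j^T H_j x_j.
    risk = 0.0
    for x, H in zip(xs, Hs):
        n = len(x)
        risk += sum(x[a] * H[a][b] * x[b] for a in range(n) for b in range(n))
    # l1 norm of every sub-period portfolio (sparsity within a period).
    sparsity = sum(abs(v) for x in xs for v in x)
    # l1 norm of the change between consecutive sub-periods (rebalancing).
    rebalance = sum(
        abs(b - a) for x0, x1 in zip(xs, xs[1:]) for a, b in zip(x0, x1)
    )
    return risk + tau1 * sparsity + tau2 * rebalance
```

For example, with two assets, two sub-periods and identity covariance matrices, the strategy `[[0.5, 0.5], [1.0, 0.0]]` has risk 1.5, sparsity term 2.0 and rebalancing term 1.0.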

As presented in Corsaro et al. (2021a, 2021b), the model (4) can be reformulated as the following compact form:

where \(\textbf{b}=(\xi _0,0,0,\ldots ,0)^\top \in {\mathbb {R}}^m\), and the matrices are defined as follows:

and

Recently, nonconvex penalties have received much attention in sparse learning problems, as they have been found to have nearly unbiased properties and can overcome the limitations of the \(\ell _1\)-norm. Moreover, in many cases, \(\ell _1\) regularization has been shown to be suboptimal; for instance, it cannot recover a signal with the least measurements when applied to compressed sensing (Xu et al., 2012). Therefore, in this paper, we propose a general nonconvex penalty mean-variance (GNPMV) model to solve the multi-period sparse portfolio selection problem, as follows:

where \(\Phi \) and \(\Psi \) are possibly nonconvex penalty functions, and the other notations are the same as those in (4). We list several popular choices of \(\Phi \) and \(\Psi \) in Table 1, including \(\ell _{1/2}\) regularization (Xu et al., 2012), smoothly clipped absolute deviation (SCAD) penalty (Fan & Li, 2001), minimax concave penalty (MCP) (Zhang, 2010a), and capped \(\ell _1\) penalty (CAP) (Zhang, 2010b). In Table 1, we also provide the proximal operator of the nonconvex penalties, which will be useful in solving the subproblems in the implementation. The proximal operator of a function g with \(\lambda >0\) is defined by

In addition, we show several common penalty functions with fixed \(c=2\) and \(\kappa =1\) in Fig. 1 to illustrate the difference between convex and nonconvex penalties.
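As an illustration of how such proximal operators are evaluated elementwise, the sketch below implements the well-known soft-thresholding rule for \(\lambda |\cdot |\) and the firm-thresholding rule for MCP. The MCP parameterization used here, \(p(x)=\lambda |x|-x^2/(2\gamma )\) for \(|x|\le \gamma \lambda \) and \(\gamma \lambda ^2/2\) otherwise with \(\gamma >1\), is a common textbook form and an assumption; it may differ from the parameterization with c and \(\kappa \) in Table 1, and the function names are hypothetical.

```python
def soft_threshold(v, lam):
    """Minimizer of lam*|u| + 0.5*(u - v)**2 over u (prox of the l1 penalty)."""
    if v > lam:
        return v - lam
    if v < -lam:
        return v + lam
    return 0.0


def firm_threshold(v, lam, gamma):
    """Minimizer of p(u) + 0.5*(u - v)**2 for the MCP p described above.

    Assumption: gamma > 1, so that the scalar problem has a unique minimizer.
    """
    a = abs(v)
    if a <= lam:
        return 0.0                      # small inputs are set exactly to zero
    if a <= gamma * lam:
        sign = 1.0 if v > 0 else -1.0
        return sign * (a - lam) / (1.0 - 1.0 / gamma)
    return v                            # large inputs are left unshrunk


def prox_elementwise(rule, vec, *params):
    """Apply a scalar thresholding rule to every entry of a vector."""
    return [rule(v, *params) for v in vec]
```

Unlike soft-thresholding, the firm-thresholding rule leaves large entries untouched, which is the mechanism behind the reduced bias of nonconvex penalties.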

3 A generalized ADMM for solving GNPMV model

The GNPMV model (6) is a nonconvex and nonsmooth optimization problem if nonconvex penalties are chosen. For this potentially nonconvex model, previous works have typically relied on existing solvers or heuristic algorithms for solving it. However, the convergence speed of such methods can be slow, and theoretical convergence guarantee is lacking.

We present a generalized alternating direction method of multipliers (ADMM) for efficiently solving the nonconvex GNPMV model (6) with guaranteed convergence. To achieve this, we introduce auxiliary variables \(\textbf{t}\in {\mathbb {R}}^{N}\), \(\textbf{y}\in {\mathbb {R}}^{N-n}\), and \(\textbf{z}\in {\mathbb {R}}^N\), which allows us to reformulate (6) as follows:

where \(\Pi _{{{\mathbb {R}}}_+^N}(\textbf{z})\) is an indicator function that equals 0 if \(\textbf{z} \in {{\mathbb {R}}}_+^N\), and is otherwise infinite.

Let

Then, we can reformulate the model (8) into the following compact form:

where \(\Phi \) and \(\Psi \) are proper, closed, and nonnegative functions that may be nonconvex and nonsmooth.

Define the augmented Lagrangian function of problem (10) as follows:

where \({\gamma }\) is the Lagrangian multiplier corresponding to the equality constraint in (10) and \(\beta >0\) is a penalty parameter. The generalized ADMM framework is presented in Algorithm 1, where the primal and dual variables are updated alternately with respect to the augmented Lagrangian function (11).

Algorithm 1 A generalized ADMM for solving GNPMV model (6)

Let \(\gamma _1\), \(\gamma _2\), and \(\gamma _3\) be the components of \({\gamma }\) corresponding to the Lagrangian multipliers with respect to the constraints \(\textbf{x}=\textbf{t}\), \(F\textbf{x}=\textbf{y}\), and \(G\textbf{x}+\textbf{z}=\textbf{x}_{\min }\) in (8), respectively. We now specify the implementation of subproblems in Algorithm 1:

- The \(\textbf{t}\)-subproblem (12a) amounts to evaluating the proximal operator of \(\Phi \), which reads

$$\begin{aligned} \textbf{t}^{k+1}&= \arg \min _\textbf{t} \left\{ \tau _1\Phi (\textbf{t})+\frac{\beta }{2}\left\| \textbf{x}^k-\textbf{t}+\frac{{\gamma }_1^k}{\beta }\right\| ^2\right\} \\&= \textrm{prox}_{\frac{\tau _1}{\beta }\Phi }\left( \textbf{x}^k+\frac{{\gamma }_1^k}{\beta }\right) . \end{aligned} \tag{14}$$

- Similarly, the \(\textbf{y}\)-subproblem (12b) amounts to evaluating the proximal operator of \(\Psi \) as follows:

$$\begin{aligned} \textbf{y}^{k+1}&= \arg \min _\textbf{y} \left\{ \tau _2\Psi (\textbf{y})+\frac{\beta }{2}\left\| {F\textbf{x}}^k-\textbf{y}+\frac{{\gamma }_2^k}{\beta }\right\| ^2\right\} \\&= \textrm{prox}_{\frac{\tau _2}{\beta }\Psi }\left( {F\textbf{x}}^k+\frac{{\gamma }_2^k}{\beta }\right) . \end{aligned} \tag{15}$$

- The \(\textbf{z}\)-subproblem (12c) amounts to computing a projection onto \({\mathbb {R}}_{+}^{N}\), which is

$$\begin{aligned} \textbf{z}^{k+1}&= \arg \min _\textbf{z} \left\{ \Pi _{{\mathbb {R}}_{+}^{N}}(\textbf{z})+\frac{\beta }{2}\left\| G\textbf{x}^k+\textbf{z}- \mathbf{x_{\min }}+\frac{{\gamma }_3^k}{\beta }\right\| ^2\right\} \\&= \textrm{Proj}_{{\mathbb {R}}_{+}^{N} }\left( \mathbf{x_{\min }}-\frac{{\gamma }_3^k}{\beta }-G \textbf{x}^k\right) . \end{aligned} \tag{16}$$

- The \(\textbf{x}\)-subproblem (12d) is equivalent to solving the following linear system:

$$\begin{aligned} H\textbf{x} +A^\top {\gamma }^k+\beta A^\top (A\textbf{x} +B\textbf{t}^{k+1}+C\textbf{y}^{k+1}+D\textbf{z}^{k+1}-\textbf{q})=0. \end{aligned} \tag{17}$$

We can see that the \(\textbf{z}\)-subproblem has an explicit solution, while the \(\textbf{t}\)- and \(\textbf{y}\)-subproblems depend on the choices of \(\Phi \) and \(\Psi \). If the popular nonconvex penalties presented in Table 1 are chosen, the closed-form solutions of the \(\textbf{t}\)- and \(\textbf{y}\)-subproblems can be obtained. The linear system (17) can be efficiently solved using sparse Cholesky factorization (Corsaro et al., 2021a) or the conjugate gradient method (Wright & Nocedal, 1999).
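The projection in (16) and a conjugate gradient solve for (17) can be sketched in a few lines of Python. Rearranging (17) gives \((H+\beta A^\top A)\textbf{x}=-A^\top {\gamma }^k-\beta A^\top (B\textbf{t}^{k+1}+C\textbf{y}^{k+1}+D\textbf{z}^{k+1}-\textbf{q})\), whose coefficient matrix is symmetric positive definite because H is. In the sketch, `M` and `rhs` stand for this matrix and right-hand side; the function names, the dense list-of-lists storage and the tolerances are assumptions made for illustration, and a practical implementation would exploit sparsity.

```python
def project_nonneg(v):
    """Projection onto the nonnegative orthant, as used in the z-update."""
    return [max(vi, 0.0) for vi in v]


def matvec(M, x):
    """Dense matrix-vector product for a list-of-lists matrix."""
    return [sum(mij * xj for mij, xj in zip(row, x)) for row in M]


def conjugate_gradient(M, rhs, tol=1e-10, max_iter=1000):
    """Solve M x = rhs for a symmetric positive definite matrix M."""
    x = [0.0] * len(rhs)
    r = list(rhs)                       # residual rhs - M x at x = 0
    p = list(r)                         # first search direction
    rs = sum(ri * ri for ri in r)
    for _ in range(max_iter):
        if rs ** 0.5 <= tol:            # stop once the residual norm is small
            break
        Mp = matvec(M, p)
        alpha = rs / sum(pi * mpi for pi, mpi in zip(p, Mp))
        x = [xi + alpha * pi for xi, pi in zip(x, p)]
        r = [ri - alpha * mpi for ri, mpi in zip(r, Mp)]
        rs_new = sum(ri * ri for ri in r)
        p = [ri + (rs_new / rs) * pi for ri, pi in zip(r, p)]
        rs = rs_new
    return x
```

Since the coefficient matrix does not change across iterations when \(\beta \) is fixed, a factorization computed once can be reused, whereas conjugate gradient only needs matrix-vector products and can be warm-started from the previous iterate.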

4 Convergence analysis

Although the theoretical convergence of ADMM has been studied for various nonconvex optimization problems, such as those presented in Guo et al. (2017); Themelis and Patrinos (2020); Wang et al. (2019), the assumptions made in these studies are not always easy to verify or satisfy, especially for concrete applications. Thus, for the sake of self-containedness in this paper, we will analyze the global convergence of ADMM in Algorithm 1 to solve the nonconvex portfolio optimization problem (8).

4.1 Preliminaries

For an extended-real-valued function g, the domain of g is defined as

A function g is closed if it is lower semicontinuous and is proper if \(\textrm{dom}g\ne \emptyset \) and \(g(\textbf{x})>-\infty \) for any \(\textbf{x}\in \textrm{dom} g\). For any point \(\textbf{x}\in {\mathbb {R}}^{n}\) and subset \(S \subseteq {\mathbb {R}}^{n}\), the Euclidean distance from \(\textbf{x}\) to S is defined by

For a proper and closed function \(g:{\mathbb {R}}^{n}\rightarrow {\mathbb {R}}\cup \{\infty \}\), a vector \( \textbf{u}\in \partial g(\textbf{x})\) is a subgradient of g at \(\textbf{x}\in \textrm{dom}g\), where \(\partial g\) denotes the subdifferential of g (Rockafellar & Wets, 2009) defined by

with \(\widehat{\partial }g(\textbf{x})\) being the set of regular subgradients of g at \(\textbf{x}\):

As discussed in Rockafellar and Wets (2009), it holds that \(\widehat{\partial }g(\textbf{x})\subseteq \partial g(\textbf{x})\) and both of them are closed. Note that for a continuously differentiable function f, the subdifferential of f reduces to the gradient of f, denoted by \(\nabla f\). Furthermore, if \(f:{\mathbb {R}}^n\rightarrow {\mathbb {R}}\) is continuously differentiable and \(g:{\mathbb {R}}^n\rightarrow {\mathbb {R}}\cup \{\infty \}\) is proper and lower semicontinuous, it follows from Rockafellar and Wets (2009) that \(\partial (f+g)=\nabla f+\partial g\). A point \(\textbf{x}^*\) is called a (limiting) critical point or stationary point of a cost function F if it satisfies \(0\in \partial F(\textbf{x}^*)\), and the set of critical points of F is denoted by \(\textrm{crit} F\).

Definition 1

We say that \((\textbf{t}^*, \textbf{y}^*, \textbf{z}^*, \textbf{x}^*, {\gamma }^*)\) is a critical point of the augmented Lagrangian function \( {\mathcal {L}}_\beta (\cdot )\) in (11) if it satisfies

It is straightforward to observe that a critical point of the augmented Lagrangian function of (10) corresponds to a KKT point associated with it.

We now introduce the definitions of the Kurdyka-Łojasiewicz (KL) function and the uniform KL property, borrowed from Attouch et al. (2013) and Bolte et al. (2014), respectively. These concepts will aid in establishing global convergence.

Definition 2

Let \(f:{\mathbb {R}}^n\rightarrow (-\infty ,\infty ]\) be a proper and lower semicontinuous function.

(i) The function f is said to have KL property at \(\textbf{x}^*\in \textrm{dom}(\partial f)\) if there exists \(\eta \in (0,+\infty ]\), a neighborhood U of \(\textbf{x}^*\), and a continuous and concave function \(\varphi :[0,\eta )\rightarrow \mathbb {R^+}\) such that

(a) \(\varphi (0)=0\) and \(\varphi \) is continuously differentiable on \((0,\eta )\) with \(\varphi '>0;\)

(b) for all \(\textbf{x}\in U\cap \{\textbf{z}\in {\mathbb {R}}^n|f(\textbf{x}^*)<f(\textbf{z})<f(\textbf{x}^*)+\eta \}\), the following KL inequality holds:

(ii) If f satisfies the KL property at each point of \( \textrm{dom}(\partial f)\), then f is called a KL function.

Throughout this paper, we assume that the objective function of (10) is coercive and there exists at least a KKT point of (10).

4.2 Convergence

In this subsection, we analyze the convergence of Algorithm 1. Recalling the iterative scheme (12)–(13), we first present the first-order optimality conditions of the subproblems in Algorithm 1 as follows:

In the following, we first present several lemmas to characterize the properties of the sequences generated by Algorithm 1. The proofs of these lemmas can be found in Appendix A.

Lemma 1

Let \(\{\textbf{t}^k,\textbf{y}^k,\textbf{z}^k,\textbf{x}^k,\gamma ^k\}\) be the sequence generated by Algorithm 1. Then, for any \(k>0\), we have

where \(\lambda _{\min }\) is the smallest eigenvalue of \(A^\top A\).

Lemma 2

Let \(\{\textbf{t}^k,\textbf{y}^k,\textbf{z}^k,\textbf{x}^k,\gamma ^k\}\) be the sequence generated by Algorithm 1, then the sequence \(\{{\mathcal {L}}_\beta (\textbf{t}^k,\textbf{y}^k,\textbf{z}^k,\textbf{x}^k,\gamma ^k)\}\) is decreasing, i.e.,

where \(b>0\) is a certain positive constant.

Lemma 3

The sequence \(\{\textbf{t}^k,\textbf{y}^k,\textbf{z}^k,\textbf{x}^k,\gamma ^k\}\) generated by Algorithm 1 is bounded.

Lemma 4

Let \(\{\textbf{t}^k,\textbf{y}^k,\textbf{z}^k,\textbf{x}^k,\gamma ^k\}\) be the sequence generated by Algorithm 1, then we have

Remark 1

Note that in practical computation, the value of \({\hat{\beta }}\) might be too large, which will lead to slow convergence. As suggested in Li and Pong (2016), Yang et al. (2017), one could initialize the algorithm with a small \(\beta \) less than \({\hat{\beta }}\), and then increase \(\beta \) by a constant ratio if \(\beta \le {\hat{\beta }}\) and the sequence generated by the algorithm becomes unbounded or the successive change of the sequence does not vanish sufficiently fast. It is obvious that one can get \(\beta >{\hat{\beta }}\) after at most finitely many increases and then the conclusion of Lemma 4 holds. Otherwise, one must have that the sequence is bounded and the successive change goes to zero. Hence the assertions of Lemma 4 hold.

We provide the subsequential convergence result in the following theorem, and the proof can be found in Appendix A.5.

Theorem 5

Let \(\beta >\hat{\beta }\) and \(\{\textbf{t}^k,\textbf{y}^k,\textbf{z}^k,\textbf{x}^k,\gamma ^k\}\) be the sequence generated by Algorithm 1, then any cluster point \((\textbf{t}^*, \textbf{y}^*, \textbf{z}^*, \textbf{x}^*, \gamma ^*)\) of the sequence \(\{\textbf{t}^k,\textbf{y}^k,\textbf{z}^k,\textbf{x}^k,\gamma ^k\}\) is a stationary point of (10).

By utilizing the KL function and KL property, we can establish that the sequence generated by Algorithm 1 is globally convergent. The proof of this theorem can be found in Appendix A.7.

Theorem 6

Let \(\beta >\hat{\beta }\) and \(\{\textbf{t}^k,\textbf{y}^k,\textbf{z}^k,\textbf{x}^k,\gamma ^k\}\) be the sequence generated by Algorithm 1. Suppose \({\mathcal {L}}_{\beta }\) is a KL function, then the sequence \(\{\textbf{t}^k,\textbf{y}^k,\textbf{z}^k, \textbf{x}^k,\gamma ^k\}\) converges globally to a critical point of (10).

5 Numerical experiments

In this section, we apply the generalized ADMM, i.e., Algorithm 1, to solve the proposed GNPMV model (6). All numerical experiments are implemented in MATLAB 2019a and run on a 64-bit Windows 10 laptop with an Intel(R) Core(TM) i5-10210U CPU @ 1.60 GHz (2.11 GHz) and 16 GB of RAM.

To evaluate the performance of the nonconvex penalty MV model (8), we consider a well-diversified investment and compare the results with those obtained by the 1/n strategy. The 1/n strategy, also called the naive portfolio, invests the same amount of money in all available assets. By recursively applying the 1/n allocation rule, we obtain the expected wealth of the naive portfolio as follows:

where \(\xi _0\) denotes the wealth at beginning of the investment, which is assumed to be one unit without loss of generality, and \(\textbf{r}_j\in {\mathbb {R}}^n,~j=1,2,\ldots ,m,\) is the expected return vector. We set the expected wealth of the naive portfolio to be the minimal expected wealth of each period, i.e., \(\textbf{x}_{\min }\) is the vector whose elements are all \({\tilde{\xi }}\) in (4) and (8). Now we introduce some performance measures considering portfolio risk and cost. Firstly, we compute the ratio between the number of non-zero weights and the total number of weights in the result as Density, which is

where amount denotes the number of non-zero weights in the result, and N is the total number of weights. This value is used to measure the sparsity of the portfolio, and reflect the investor’s holding costs.
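A minimal sketch of how Density could be computed from a numerical solution is given below. The threshold `tol` used to decide whether a weight counts as non-zero is an assumption introduced for illustration; the result is returned as a percentage, matching the Dens.(%) columns of the tables.

```python
def density(x, tol=1e-8):
    """Percentage of weights in x whose magnitude exceeds tol.

    x: flat list of all N = m*n weights of the multi-period strategy.
    tol: hypothetical cutoff below which a weight is treated as zero.
    """
    amount = sum(1 for v in x if abs(v) > tol)   # number of non-zero weights
    return 100.0 * amount / len(x)
```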

Secondly, we denote the ratio between the estimated risk of the naive strategy and the estimated risk of the optimal strategy as Ratio, i.e.,

where \(\tilde{\textbf{x}}\) denotes the 1/n portfolio selection and thus the numerator represents the estimated risk of the naive portfolio strategy, \(\textbf{x}_{o}\) denotes the optimal portfolio selection obtained by the tested models and the denominator represents the estimated risk of the optimal one. This value measures the risk reduction factor related to the benchmark. If Ratio \(>1\), it means the model is more efficient than 1/n portfolio strategy.
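The Ratio measure can be sketched as follows, assuming that the estimated risk of a strategy is the sum of the sub-period variances \(\textbf{x}_j^\top H_j\textbf{x}_j\), as in the quadratic term of (4). The function names and the list-based data layout are hypothetical; any constant scaling of the risk cancels in the quotient.

```python
def estimated_risk(xs, Hs):
    """Sum over sub-periods of x_j^T H_j x_j."""
    total = 0.0
    for x, H in zip(xs, Hs):
        n = len(x)
        total += sum(x[a] * H[a][b] * x[b] for a in range(n) for b in range(n))
    return total


def risk_ratio(xs_naive, xs_opt, Hs):
    """Risk of the naive 1/n strategy divided by the risk of the tested strategy."""
    return estimated_risk(xs_naive, Hs) / estimated_risk(xs_opt, Hs)
```

A value above one means that the tested strategy carries less estimated risk than the naive benchmark.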

Thirdly, we count the number of weight changes, which is a measure of transaction costs. We construct a matrix \(Y\in {\mathbb {R}}^{n \times (m-1)}\) to reflect the change in the weights of the same asset during two adjacent investment periods. Each element of Y denotes whether security i was bought or sold during the period j, i.e.,

where \(i=1,2, \ldots , n\) and \( j=1,2, \ldots , m-1\). The naive strategy re-executes the decision to distribute evenly every period, thus the total number of transactions is

The number of transactions associated with the optimal strategy of the tested models can be expressed as

To estimate the percentage of transactions of the optimal strategy, we define

If \(\vartheta <1\), the tested model effectively reduces the percentage of transactions, and thus reduces transaction costs and increases profits.
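The transaction measure can be illustrated with the sketch below. It rests on assumptions that should be checked against the formulas above: an entry of Y is set to one when the weight of asset i changes by more than a small threshold `tol` between periods j and j+1, the naive strategy is taken to trade every asset at every rebalancing, giving \(n(m-1)\) transactions, and \(\vartheta \) is taken to be the quotient of the two counts. The function name is hypothetical.

```python
def transaction_share(xs, tol=1e-8):
    """Fraction of possible trades actually executed by the strategy xs.

    xs: list of m weight vectors, one per sub-period, each of length n.
    tol: hypothetical cutoff below which a weight change is ignored.
    """
    m, n = len(xs), len(xs[0])
    # Y[i][j] = 1 if asset i is bought or sold between periods j and j+1.
    Y = [
        [1 if abs(xs[j + 1][i] - xs[j][i]) > tol else 0 for j in range(m - 1)]
        for i in range(n)
    ]
    trades = sum(sum(row) for row in Y)
    naive_trades = n * (m - 1)          # assumption: naive strategy trades everything
    return trades / naive_trades
```

Multiplying the returned value by 100 gives a percentage comparable to the \(\vartheta (\%)\) columns.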

For the implementation of \(\beta \) in Algorithm 1, we adopt a strategy similar to that in Yang et al. (2017), as discussed in Remark 1. We choose \(\beta \) as follows: we initialize \(n_s=0\) and \(\beta =0.5 \hat{\beta }\). In the k-th iteration, we compute

Then, we increase \(n_s\) by 1 if \(succ\_delta^k>0.99\cdot succ\_delta^{k-1}\). Obviously, \(n_s\) is nondecreasing in this procedure. We then update \(\beta \) as \(1.1 \beta \) whenever \(\beta \le 1.01 \hat{\beta }\) and the sequence satisfies either \(n_s \ge 0.3 k\) or \(obj^k>10^{10}\).
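The update rule for \(\beta \) described above can be written as a small helper that is called once per iteration. The quantities \(succ\_delta^k\) and \(obj^k\) are taken as inputs here, since their computation depends on the iterates; the function name and argument order are hypothetical.

```python
def update_beta(beta, beta_hat, n_s, k, succ_delta, prev_succ_delta, obj):
    """One step of the adaptive penalty heuristic.

    Returns the (possibly increased) beta and the updated counter n_s.
    """
    # Count iterations in which the successive change fails to shrink by 1%.
    if succ_delta > 0.99 * prev_succ_delta:
        n_s += 1
    # Increase beta only while it is still at or below 1.01 * beta_hat and
    # the sequence looks stalled (n_s >= 0.3 k) or unbounded (obj > 1e10).
    if beta <= 1.01 * beta_hat and (n_s >= 0.3 * k or obj > 1e10):
        beta *= 1.1
    return beta, n_s
```

Starting from `beta = 0.5 * beta_hat` and `n_s = 0`, repeated increases by the factor 1.1 push \(\beta \) above \(1.01{\hat{\beta }}\) after finitely many steps, after which it stays fixed.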

5.1 Numerical performance of ADMM

We first test the performance of Algorithm 1, i.e., ADMM, for solving the proposed GNPMV model (6) with different penalties on the FF48 dataset. The FF48 dataset comes from the Fama and French database (see Footnote 1) and contains monthly returns for 48 industry sector portfolios from July 1926 to April 2022. We rebalance the investment at the end of each year, and test the model over periods of 10 and 20 years, i.e., \(m=10,20\). The assets in FF48 are moderately correlated, and the condition number of the covariance matrix is \(cond(C)=O(10^4)\), which indicates good numerical stability.

We test the performance of ADMM for solving the GNPMV model (6) with the \(\ell _1\) norm penalty and the SCAD and MCP penalties in Table 1, i.e., \(\Phi \) and \(\Psi \) are both chosen to be the \(\ell _1\) norm, the SCAD function or the MCP function; the resulting models are denoted by FL, GNPMV\(_\textrm{SCAD}\) and GNPMV\(_\textrm{MCP}\), respectively. We fix \(\tau _1=0.001\) and \(\tau _2=0.01\) for each model, use \(tol:=10^{-4}\) in the stopping criterion, and tune the remaining parameters of the tested algorithm by simulation. As observed in Fan and Li (2001), the parameters c and \(\kappa \) can be chosen empirically with cross-validation or generalized cross-validation techniques. By cross-validation, we fix \(c=9\) and \(\kappa =6\) for the SCAD and MCP penalty functions presented in Table 1. The maximum number of iterations is set to 25000. For each period, we set the expected minimum wealth \((\textbf{x}_{\min })_j\), \(j=1,2,\ldots ,m\), to the expected value produced by the recursive application of the 1/n naive strategy, as presented in Corsaro et al. (2021a).

Since the proposed ADMM is tailored, with theoretical guarantees, to the portfolio optimization problem (6), we compare it with a general-purpose solver, namely CPLEX. In Table 2, we report the obtained objective function value (f\(\_\)value), Density (Dens.(%)) and computing time (Time(s)). The results in Table 2 show that ADMM obtains higher-quality solutions in less computing time than the CPLEX solver.

5.2 Effects of regularization parameters

For the model (6), the regularization parameters \(\tau _{1}\) and \(\tau _{2}\) determine the trade-off between the risk measure and sparsity. Hence, in this subsection, we test the effects of \(\tau _1\) and \(\tau _2\) on the resulting optimal portfolio selection. The parameter \(\tau _{1}\) controls the sparsity within each group and affects the number of non-zero elements in the obtained portfolio selection. The parameter \(\tau _{2}\) characterizes the sparsity of the rebalancing between successive periods, which influences the turnover rate and the transaction cost. In the experiment, we first test the influence of \(\tau _1\) and \(\tau _2\) on GNPMV\(_\textrm{SCAD}\). Specifically, we set \(\Phi \) and \(\Psi \) both to be the SCAD penalty in Table 1, and test GNPMV\(_\textrm{SCAD}\) with \(\tau _{1}, \tau _{2} \in \left\{ 10^{-2}, 10^{-3}, 10^{-4}\right\} \).

We report the numerical performance of GNPMV\(_\textrm{SCAD}\) with different choices of \(\tau _{1}\) and \(\tau _{2}\) for 10 and 20 years of the FF48 dataset in Table 3, including the Density (Dens.(%)), Ratio and the percentage of transactions (\(\vartheta (\%)\)). From the results in the left half of Table 3, we can see that the proportion of non-zero elements (Density) is greatly reduced as \(\tau _{1}\) increases, thus achieving better sparsity and reducing the holding cost. The risk reduction factor (Ratio) is at least 1.46, which indicates that the investment risk of the optimized portfolio is significantly lower than that of the naive portfolio. As \(\tau _{2}\) increases, the percentage of transactions of the optimal strategy \(\vartheta \) generally shows a downward trend and always remains below \(27 \%\), which shows that the regularization parameter \(\tau _{2}\) indeed promotes smoothness between groups, thus reducing transaction costs. The 20-year investment results of the FF48 dataset under different \(\tau _{1}, \tau _{2}\) are also reported in Table 3. In all cases, the optimal portfolio outperforms the naive portfolio in terms of risk and turnover rate. More precisely, for the 10-year investment period the risk reduction factor Ratio is at least 1.46 and the percentage of transactions \(\vartheta \) is at most \(27 \%\); for the 20-year investment period, Ratio is at least 1.03 and the percentage of transactions is no more than \(20 \%\).

We further test the numerical performance of GNPMV\(_\textrm{MCP}\) with different choices of \(\tau _{1}\) and \(\tau _{2}\) and report the results in Table 4. As expected, all indicators perform well for both the 10-year and 20-year periods. By adjusting the parameters, we observe the indicators that measure investment performance, namely the proportion of non-zero elements (Density), the risk reduction factor (Ratio), and the percentage of transactions of the optimal strategy \((\vartheta (\%))\). As listed in Table 4, the risk reduction factor Ratio exceeds 1.00 in all cases, which implies that, even with the enhanced sparsity that reduces transaction costs, the risk is still lower than that of the naive strategy.

Figs. 2 and 3 show the evolution of the optimal portfolio weights over time, which allows a closer look at how GNPMV behaves under different penalties and parameters. In each picture, the number of color blocks at each rebalancing period represents the number of assets allocated, and the height of each color block represents the proportion of wealth allocated to the asset at that time. In all cases, the graphs show a clear smoothing effect of the nonconvex penalty term: the asset weight trajectories of GNPMV with nonconvex penalties are smooth, and the color blocks become simpler as the parameters are adjusted.

5.3 Numerical comparisons between different penalties

In this subsection, we further compare the performance of GNPMV (6) with convex and nonconvex penalty functions. Specifically, we conduct experiments for GNPMV with the \(\ell _1\) penalty, i.e., FL in (4), on several datasets, namely FF48, DJ28, NasdqQ100 and SP500, and compare it with GNPMV\(_{\ell _{1/2}}\), GNPMV\(_\textrm{SCAD}\), GNPMV\(_\textrm{MCP}\) and GNPMV\(_\textrm{CAP}\), which correspond to GNPMV (6) with the penalties presented in Table 1. We run the models to achieve the same predetermined target Ratio and compare the sparsity, turnover, short positions and computing time of these five models. To ensure fair comparisons between different models, we use a 5-fold cross-validation strategy to select the regularization parameters \(\tau _1\) and \(\tau _2\) under the preset target Ratio. Specifically, each dataset is arbitrarily divided into five equally sized portions; in each fold, four portions serve as the training set and the remaining one as the test set, i.e., \(80\%\) of the data is used for training and \(20\%\) for testing. For each dataset, we perform cross-validation to compare sparsity over parameter values taken from \(\{10^{-5},10^{-4},\ldots , 10^{3}\}\). The optimal parameters \(\tau _1\) and \(\tau _2\) are then chosen based on the averaged performance.
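The parameter selection just described can be sketched as a grid search wrapped around a 5-fold split. The code below is only an illustration under stated assumptions: `fit_and_score` is a hypothetical user-supplied callable that would train GNPMV on the training rows and return a score to minimize (for instance, the density attained at the target Ratio), and the random shuffling with a fixed `seed` is one possible reading of "arbitrarily divided".

```python
import itertools
import numpy as np

def five_fold_indices(n_samples, n_folds=5, seed=0):
    """Yield (train, test) index arrays for an n_folds split of shuffled rows."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(n_samples), n_folds)
    for i in range(n_folds):
        train = np.concatenate([folds[j] for j in range(n_folds) if j != i])
        yield train, folds[i]

def select_parameters(returns, fit_and_score, grid):
    """Return the (tau1, tau2) pair with the lowest fold-averaged score."""
    best, best_score = None, np.inf
    for tau1, tau2 in itertools.product(grid, grid):
        scores = [fit_and_score(returns[tr], returns[te], tau1, tau2)
                  for tr, te in five_fold_indices(len(returns))]
        avg = float(np.mean(scores))
        if avg < best_score:
            best, best_score = (tau1, tau2), avg
    return best, best_score

# Candidate values 10^-5, 10^-4, ..., 10^3 for both tau1 and tau2.
grid = [10.0 ** p for p in range(-5, 4)]
```

With nine candidate values per parameter, this search evaluates 81 parameter pairs, each on five folds, so the cost of a single model fit dominates the overall selection time.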

The numerical results, including sparsity (Dens.), turnover rate (\(\vartheta \)), short positions (Shorts) and computing time (Time(s)), are reported in Tables 5 and 6. Table 5 presents the sparsity, turnover, short positions and computing time for \(m=10,~20\) and 30, respectively, on the FF48 dataset when the target Ratio is not less than 2.0 and 2.5. We find that GNPMV with nonconvex penalties performs better in terms of sparsity, turnover rate and short positions when achieving the same target Ratio. Meanwhile, the computing times for solving the various models using ADMM are similar. Taking \(m=20\) as an example, the density of FL is about \(50\%\), and there are many short positions, which makes asset management difficult and costly, while GNPMV\(_{\ell _{1/2}}\), GNPMV\(_\textrm{SCAD}\), GNPMV\(_\textrm{MCP}\) and GNPMV\(_\textrm{CAP}\) achieve a lower density and reduce the short positions to 0. Table 6 presents the numerical comparisons on the DJ28, NasdqQ100 and SP500 datasets, and shows the same advantage: GNPMV with nonconvex penalties outperforms the \(\ell _1\) penalty, i.e., FL, except for GNPMV\(_\textrm{MCP}\) on the SP500 dataset. More importantly, GNPMV with nonconvex penalties can achieve no short positions, which is consistent with the fact that short positions are limited in many financial markets.

6 Conclusions

We introduced a nonconvex penalty-based mean-variance optimization model for solving multi-period sparse portfolio selection problems. Our proposed model provides a comprehensive framework for all regularized portfolio selection models. To address the potential nonconvexity of the model, we developed a new solving method, namely a generalized alternating direction method of multipliers that extends the method for the two-block setting. With the aid of nonconvex optimization theory, we then conducted a rigorous convergence analysis to guarantee the global convergence of the proposed method to a stationary point. We performed numerical experiments on four datasets to illustrate the benefits of the nonconvex penalty model in terms of sparsity within a single period and transactions between consecutive periods. In the future, we plan to extend our work to multi-period portfolio selection under uncertain returns.

Notes

Data available at http://mba.tuck.dartmouth.edu/pages/faculty/ken.french/data_library.html#BookEquity.

References

Anis, H. T., & Kwon, R. H. (2022). Cardinality-constrained risk parity portfolios. European Journal of Operational Research, 302(1), 392–402.

Attouch, H., Bolte, J., & Svaiter, B. F. (2013). Convergence of descent methods for semi-algebraic and tame problems: proximal algorithms, forward-backward splitting, and regularized gauss-seidel methods. Mathematical Programming, 137(1–2), 91–129.

Benidis, K., Feng, Y., & Palomar, D. P. (2018). Optimization methods for financial index tracking: From theory to practice. Foundations and Trends® in Optimization, 3(3), 171–279.

Bertsimas, D., & Cory-Wright, R. (2022). A scalable algorithm for sparse portfolio selection. INFORMS Journal on Computing, 34(3), 1489–1511.

Bertsimas, D., & Shioda, R. (2009). Algorithm for cardinality-constrained quadratic optimization. Computational Optimization and Applications, 43(1), 1–22.

Bolte, J., Sabach, S., & Teboulle, M. (2014). Proximal alternating linearized minimization for nonconvex and nonsmooth problems. Mathematical Programming, 146(1–2), 459–494.

Boţ, R. I., & Nguyen, D.-K. (2020). The proximal alternating direction method of multipliers in the nonconvex setting: convergence analysis and rates. Mathematics of Operations Research, 45(2), 682–712.

Boyd, S., Parikh, N., Chu, E., Peleato, B., & Eckstein, J. (2011). Distributed optimization and statistical learning via the alternating direction method of multipliers. Foundations and Trends® in Machine Learning, 3(1), 1–122.

Chang, T.-J., Meade, N., Beasley, J. E., & Sharaiha, Y. M. (2000). Heuristics for cardinality constrained portfolio optimisation. Computers & Operations Research, 27(13), 1271–1302.

Chen, J., Dai, G., & Zhang, N. (2020). An application of sparse-group lasso regularization to equity portfolio optimization and sector selection. Annals of Operations Research, 284, 243–262.

Chen, C., & Wei, Y. (2019). Robust multiobjective portfolio optimization: a set order relations approach. Journal of Combinatorial Optimization, 38(1), 21–49.

Corsaro, S., & De Simone, V. (2019). Adaptive \( l_1 \)-regularization for short-selling control in portfolio selection. Computational Optimization and Applications, 72(2), 457–478.

Corsaro, S., De Simone, V., & Marino, Z. (2021). Split bregman iteration for multi-period mean variance portfolio optimization. Applied Mathematics and Computation, 392, 125715.

Corsaro, S., Simone, V. D., & Marino, Z. (2021). Fused Lasso approach in portfolio selection. Annals of Operations Research, 299(1), 47–59.

Cui, X., Gao, J., Li, X., & Shi, Y. (2022). Survey on multi-period mean–variance portfolio selection model. Journal of the Operations Research Society of China, 1–24.

Cui, A., Peng, J., Zhang, C., Li, H., & Wen, M. (2018). Sparse portfolio selection via non-convex fraction function. arXiv preprint arXiv:1801.09171

Cui, X., Gao, J., Li, X., & Li, D. (2014). Optimal multi-period mean-variance policy under no-shorting constraint. European Journal of Operational Research, 234(2), 459–468.

Dai, Z., & Kang, J. (2021). Some new efficient mean-variance portfolio selection models. International Journal of Finance and Economics, 1, 1–13.

Dai, Z., & Wen, F. (2018). Some improved sparse and stable portfolio optimization problems. Finance Research Letters, 27, 46–52.

DeMiguel, V., Garlappi, L., Nogales, F. J., & Uppal, R. (2009). A generalized approach to portfolio optimization: Improving performance by constraining portfolio norms. Management Science, 55(5), 798–812.

Fan, J., & Li, R. (2001). Variable selection via nonconcave penalized likelihood and its oracle properties. Journal of the American Statistical Association, 96(456), 1348–1360.

Fastrich, B., Paterlini, S., & Winker, P. (2014). Cardinality versus q-norm constraints for index tracking. Quantitative Finance, 14(11), 2019–2032.

Fastrich, B., Paterlini, S., & Winker, P. (2015). Constructing optimal sparse portfolios using regularization methods. Computational Management Science, 12(3), 417–434.

Gao, J., & Li, D. (2013). Optimal cardinality constrained portfolio selection. Operations Research, 61(3), 745–761.

Guo, K., Han, D., & Wu, T. (2017). Convergence of alternating direction method for minimizing sum of two nonconvex functions with linear constraints. International Journal of Computer Mathematics, 94(8), 1653–1669.

Han, D. (2022). A survey on some recent developments of alternating direction method of multipliers. Journal of the Operations Research Society of China, 10, 1–52.

Ho, M., Sun, Z., & Xin, J. (2015). Weighted elastic net penalized mean-variance portfolio design and computation. SIAM Journal on Financial Mathematics, 6(1), 1220–1244.

Huang, R., Qu, S., Yang, X., Xu, F., Xu, Z., & Zhou, W. (2021). Sparse portfolio selection with uncertain probability distribution. Applied Intelligence, 51(10), 6665–6684.

Jacob, N. L. (1974). A limited-diversification portfolio selection model for the small investor. The Journal of Finance, 29(3), 847–856.

Kim, M. J., Lee, Y., Kim, J. H., & Kim, W. C. (2016). Sparse tangent portfolio selection via semi-definite relaxation. Operations Research Letters, 44(4), 540–543.

Kremer, P. J., Lee, S., Bogdan, M., & Paterlini, S. (2020). Sparse portfolio selection via the sorted \(\ell _1\)-norm. Journal of Banking and Finance, 110, 105687.

Kurdyka, K. (1998). On gradients of functions definable in o-minimal structures. In: Annales de L’institut Fourier, vol. 48, pp. 769–783.

Li, D., & Ng, W.-L. (2000). Optimal dynamic portfolio selection: Multiperiod mean-variance formulation. Mathematical Finance, 10(3), 387–406.

Li, G., & Pong, T. K. (2016). Douglas-Rachford splitting for nonconvex optimization with application to nonconvex feasibility problems. Mathematical Programming, 159(1), 371–401.

Li, X., Uysal, A. S., & Mulvey, J. M. (2022). Multi-period portfolio optimization using model predictive control with mean-variance and risk parity frameworks. European Journal of Operational Research, 299(3), 1158–1176.

Li, Q., & Zhang, W. (2022). Sparse and risk diversification portfolio selection. Optimization Letters. https://doi.org/10.1007/s11590-022-01914-5

Maneesha, A., & Swarup, K. S. (2021). A survey on applications of alternating direction method of multipliers in smart power grids. Renewable and Sustainable Energy Reviews, 152, 111687.

Markowitz, H. M. (1968). Portfolio Selection. Yale University Press.

Mencarelli, L., & d’Ambrosio, C. (2019). Complex portfolio selection via convex mixed-integer quadratic programming: a survey. International Transactions in Operational Research, 26(2), 389–414.

Nystrup, P., Boyd, S., Lindström, E., & Madsen, H. (2019). Multi-period portfolio selection with drawdown control. Annals of Operations Research, 282(1), 245–271.

Perold, A. F. (1984). Large-scale portfolio optimization. Management Science, 30(10), 1143–1160.

Pun, C. S., & Wong, H. Y. (2019). A linear programming model for selection of sparse high-dimensional multiperiod portfolios. European Journal of Operational Research, 273(2), 754–771.

Rockafellar, R. T., & Wets, R. J.-B. (2009). Variational Analysis. Springer.

Silva, Y. L. T., Herthel, A. B., & Subramanian, A. (2019). A multi-objective evolutionary algorithm for a class of mean-variance portfolio selection problems. Expert Systems with Applications, 133, 225–241.

Themelis, A., & Patrinos, P. (2020). Douglas-rachford splitting and ADMM for nonconvex optimization: Tight convergence results. SIAM Journal on Optimization, 30(1), 149–181.

Tibshirani, R. (1996). Regression shrinkage and selection via the lasso. Journal of the Royal Statistical Society: Series B (Methodological), 58(1), 267–288.

Wang, Y., Yin, W., & Zeng, J. (2019). Global convergence of ADMM in nonconvex nonsmooth optimization. Journal of Scientific Computing, 78, 29–63.

Wright, S., Nocedal, J., et al. (1999). Numerical Optimization. Springer.

Wu, Z., Li, M., Wang, D. Z., & Han, D. (2017). A symmetric alternating direction method of multipliers for separable nonconvex minimization problems. Asia-Pacific Journal of Operational Research, 34(06), 1750030.

Xu, Z., Chang, X., Xu, F., & Zhang, H. (2012). \( \ell _{1/2}\) regularization: A thresholding representation theory and a fast solver. IEEE Transactions on Neural Networks and Learning Systems, 23(7), 1013–1027.

Xu, F., Lu, Z., & Xu, Z. (2016). An efficient optimization approach for a cardinality-constrained index tracking problem. Optimization Methods and Software, 31(2), 258–271.

Yang, L., Pong, T. K., & Chen, X. (2017). Alternating direction method of multipliers for a class of nonconvex and nonsmooth problems with applications to background/foreground extraction. SIAM Journal on Imaging Sciences, 10(1), 74–110.

Yen, Y.-M., & Yen, T.-J. (2014). Solving norm constrained portfolio optimization via coordinate-wise descent algorithms. Computational Statistics and Data Analysis, 76, 737–759.

Zhang, C.-H. (2010). Nearly unbiased variable selection under minimax concave penalty. The Annals of Statistics, 38(2), 894–942.

Zhang, T. (2010). Analysis of multi-stage convex relaxation for sparse regularization. Journal of Machine Learning Research, 11(3), 1081–1107.

Zhang, Y., Li, X., & Guo, S. (2018). Portfolio selection problems with Markowitz’s mean-variance framework: A review of literature. Fuzzy Optimization and Decision Making, 17(2), 125–158.

Acknowledgements

This work was partly supported by the National Natural Science Foundation of China (Nos. 12001286, 12001281) and the Project funded by China Postdoctoral Science Foundation (No. 2022M711672) and Qing Lan Project and by the Istituto Nazionale di Alta Matematica - Gruppo Nazionale per il Calcolo Scientifico (INdAM-GNCS).

Funding

Open access funding provided by Università degli Studi della Campania Luigi Vanvitelli within the CRUI-CARE Agreement.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendix A

A.1 Proof of Lemma 1

Proof

From the definitions in (9), we know that A has full column rank and \(\textrm{Im}(B)\subseteq \textrm{Im}(A), \textrm{Im}(C)\subseteq \textrm{Im}(A), \textrm{Im}(D)\subseteq \textrm{Im}(A)\), where \(\textrm{Im}(\cdot )\) denotes the image of a matrix. By the \(\gamma \)-updating rule (13) and these inclusions, for any \(k>0\), we have \(\gamma ^{k+1}-\gamma ^k=\beta (A \textbf{x}^{k+1}+B \textbf{t}^{k+1}+C \textbf{y}^{k+1}+D \textbf{z}^{k+1}-\textbf{q})\in \textrm{Im}(A)\). Because A has full column rank, there exist matrices R and S such that R is invertible, \(SS^\top =I\) and \(A^\top =RS.\) Noticing that \(\textrm{Im}(A)=\textrm{Im}(S^\top )\), we get \(\gamma ^{k+1}-\gamma ^k\in \textrm{Im}(S^\top ).\) Thus, \(\Vert \gamma ^{k+1}-\gamma ^k\Vert ^2=\Vert S(\gamma ^{k+1}-\gamma ^k)\Vert ^2.\) Consequently, we have

\[ \Vert A^\top (\gamma ^{k+1}-\gamma ^k)\Vert ^2=\Vert RS(\gamma ^{k+1}-\gamma ^k)\Vert ^2\ge \lambda _{R^\top R}\Vert S(\gamma ^{k+1}-\gamma ^k)\Vert ^2=\lambda _{R^\top R}\Vert \gamma ^{k+1}-\gamma ^k\Vert ^2, \]

where \(\lambda _{R^\top R}\) denotes the smallest eigenvalue of \(R^\top R.\) By the definitions of R and S, we have \(A^\top A=RSS^\top R^\top =RR^\top \) and hence \(\lambda _{\min }=\lambda _{RR^\top }\). Together with the standard linear-algebra fact that \(R^\top R\) and \(RR^\top \) share the same eigenvalues, i.e., \(\lambda _{R^\top R}=\lambda _{RR^\top }\), we get \(\lambda _{R^\top R}=\lambda _{\min }\), which completes the proof. \(\square \)
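The role of the image condition in this argument can be checked numerically. The sketch below assumes that Lemma 1 asserts \(\Vert A^\top v\Vert ^2\ge \lambda _{\min }\Vert v\Vert ^2\) for \(v\in \textrm{Im}(A)\), with \(\lambda _{\min }\) the smallest eigenvalue of \(A^\top A\); the random matrix and the helper name `lemma1_gap` are purely illustrative and are not taken from the paper.

```python
import numpy as np

rng = np.random.default_rng(0)
A = rng.standard_normal((8, 3))            # tall random matrix, full column rank here
lam_min = np.linalg.eigvalsh(A.T @ A)[0]   # smallest eigenvalue of A^T A

def lemma1_gap(v):
    """||A^T v||^2 - lam_min * ||v||^2; nonnegative when v lies in Im(A)."""
    return np.linalg.norm(A.T @ v) ** 2 - lam_min * np.linalg.norm(v) ** 2

# A vector in Im(A): the bound holds.
v_in = A @ rng.standard_normal(3)

# A unit vector orthogonal to Im(A): A^T v = 0, so the bound fails.
Q, _ = np.linalg.qr(A, mode="complete")
v_out = Q[:, -1]

print(lemma1_gap(v_in) >= 0.0, lemma1_gap(v_out) < 0.0)
```

The second vector shows why the inclusions \(\textrm{Im}(B), \textrm{Im}(C), \textrm{Im}(D)\subseteq \textrm{Im}(A)\) matter: without them, the multiplier increment could have a component that \(A^\top \) annihilates, and no bound of this form would be available.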

A.2 Proof of Lemma 2

Proof

It follows from the definition of \({\mathcal {L}}_\beta (\cdot )\) in (11) and (13) that

Notice that the first-order optimality condition of the \(\textbf{x}\)-subproblem (12d) reads

Hence, we have \(A^\top \gamma ^{k+1}=-H\textbf{x}^{k+1}\). Similarly, \(A^\top \gamma ^{k}=-H\textbf{x}^{k}\). It follows from the positive definiteness of H that

where \(\lambda '_{\max }\) denotes the largest eigenvalue of H. Combining this with Lemma 1, we get

Next, the \(\textbf{x}\)-subproblem (12d) in Algorithm 1 can be simplified to

Since H is positive definite and A has full column rank, the inverse \((H+\beta A^\top A)^{-1}\) exists. That is, the \(\textbf{x}\)-subproblem is strongly convex with modulus at least \(\lambda '_{\min }+\beta \lambda _{\min }\), where \(\lambda '_{\min }\) is the smallest eigenvalue of H. Then, we have

Moreover, because \(\textbf{t}^{k+1}\) is a minimizer of the \(\textbf{t}\)-subproblem (12a) in Algorithm 1, we have

Similarly, we have

Summing (22), (24), (25), (26), and (27), we get

where \(b=\frac{\lambda '_{\min }+\beta \lambda _{\min }}{2}-\frac{\lambda '^2_{\max }}{\beta \lambda _{\min }}\). Since \(b>0\) whenever \(\beta >\frac{-\lambda '_{\min }+\sqrt{\lambda '^2_{\min }+8\lambda '^2_{\max }}}{2\lambda _{\min }}\), as required in Algorithm 1, the sequence \(\{{\mathcal {L}}_\beta (\textbf{t}^k,\textbf{y}^k,\textbf{z}^k,\textbf{x}^k,\gamma ^k)\}\) is decreasing. This completes the proof. \(\square \)
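The threshold on \(\beta \) is the positive root of the quadratic obtained by multiplying \(b>0\) by \(2\beta \lambda _{\min }\), namely \(\lambda _{\min }^2\beta ^2+\lambda _{\min }\lambda '_{\min }\beta -2\lambda '^2_{\max }>0\). As a quick sanity check, the following sketch evaluates b around that root; the function names and the sample eigenvalues are illustrative assumptions, not values from the experiments.

```python
import math

def b_coefficient(beta, lam_min, lam_h_min, lam_h_max):
    """b = (lam'_min + beta*lam_min)/2 - lam'_max^2 / (beta*lam_min)."""
    return (lam_h_min + beta * lam_min) / 2.0 - lam_h_max ** 2 / (beta * lam_min)

def beta_threshold(lam_min, lam_h_min, lam_h_max):
    """Positive root of lam_min^2 b^2 + lam_min*lam'_min b - 2 lam'_max^2 = 0."""
    disc = math.sqrt(lam_h_min ** 2 + 8.0 * lam_h_max ** 2)
    return (-lam_h_min + disc) / (2.0 * lam_min)

# Illustrative eigenvalues: lam_min of A^T A, lam'_min and lam'_max of H.
lam_min, lam_h_min, lam_h_max = 0.5, 1.0, 3.0
beta_star = beta_threshold(lam_min, lam_h_min, lam_h_max)

for beta in (0.9 * beta_star, beta_star, 1.1 * beta_star):
    print(beta, b_coefficient(beta, lam_min, lam_h_min, lam_h_max))
```

Because both terms of b increase with \(\beta \), b changes sign exactly once, at the threshold, so any \(\beta \) above it yields a strictly positive decrease coefficient.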

A.3 Proof of Lemma 3

Proof

From Lemma 2, for \(k\ge 1\), we have

where the last inequality follows from (23).

By the assumption on \(\beta \) in Algorithm 1, we can see that \(\lambda '_{\min }-\frac{\lambda '^2_{\max }}{ \beta \lambda _{\min }}>0\). Again by using the fact that \(\Phi \), \(\Psi \), and \(\Pi _{{\mathbb {R}}_{+}^{N}}\) are nonnegative, we conclude that \(\{\textbf{t}^k\}\), \(\{\textbf{y}^k\}\),\(\{\textbf{z}^k\}\), \(\{\textbf{x}^k\}\), and \(\{\frac{\gamma ^k}{\beta }+A{\textbf{x}^k} +B\textbf{t}^k+C\textbf{y}^k+D\textbf{z}^k-\textbf{q}\}\) are bounded.

In addition, it holds that

which implies that \(\{\gamma ^k\}\) is also bounded. Hence, the sequence \(\{(\textbf{t}^k,\textbf{y}^k,\textbf{z}^k,\textbf{x}^k,\gamma ^k)\}\) is bounded. This completes the proof. \(\square \)

A.4 Proof of Lemma 4

Proof

Suppose that \((\textbf{t}^*,\textbf{y}^*,\textbf{z}^*,\textbf{x}^*,\gamma ^*)\) is a cluster point of a sequence \((\textbf{t}^k,\textbf{y}^k,\textbf{z}^k,\textbf{x}^k,\gamma ^k)\) generated by Algorithm 1, and that the corresponding subsequence \((\textbf{t}^{k_i},\textbf{y}^{k_i},\textbf{z}^{k_i},\textbf{x}^{k_i},\gamma ^{k_i})\) satisfies

Summing (21) from \(k=1\) to \(k=k_i-1\), we have

which implies

Together with \(b>0\), we can derive that \(\textbf{x}^{k+1}-\textbf{x}^k\rightarrow 0.\)

Next, summing both sides of (24) from \(k=1\) to \(k=k_i-1\) and taking limits, we have

from which we can conclude that \(\gamma ^{k+1}-\gamma ^k \rightarrow 0.\) Again, from the following iteration form of \(\gamma ^{k+1}\):

we can get \(\textbf{t}^{k+1}-\textbf{t}^k \rightarrow 0\), \(\textbf{y}^{k+1}-\textbf{y}^k \rightarrow 0\) and \(\textbf{z}^{k+1}-\textbf{z}^k \rightarrow 0\). This completes the proof. \(\square \)

A.5 Proof of Theorem 5

Proof

It follows from Lemma 3 that the sequence \((\textbf{t}^k,\textbf{y}^k,\textbf{z}^k,\textbf{x}^k,\gamma ^k)\) generated by Algorithm 1 is bounded. Let \((\textbf{t}^*, \textbf{y}^*, \textbf{z}^*, \textbf{x}^*, \gamma ^*)\) be a cluster point of the sequence \((\textbf{t}^k,\textbf{y}^k,\textbf{z}^k,\textbf{x}^k,\gamma ^k)\), so that there exists a subsequence \((\textbf{t}^{k_i},\textbf{y}^{k_i},\textbf{z}^{k_i},\textbf{x}^{k_i},\gamma ^{k_i})\) such that

Since \({\mathcal {L}}_\beta \) is lower semi-continuous, we have

From the definition of \(\textbf{x}^{k_i+1}\) in (12d), we have

Taking limits on both sides of the above inequality, we have

Combining (30) and (31), we obtain

which, together with \(\textbf{t}^{k+1}-\textbf{t}^k \rightarrow 0\), \(\textbf{y}^{k+1}-\textbf{y}^k \rightarrow 0\), \(\textbf{z}^{k+1}-\textbf{z}^k \rightarrow 0\) in Lemma 4 and the definition of \({\mathcal {L}}_\beta \) in (11), implies that

Taking limits of (20) and invoking Lemma 4, (32), and (18), we see that (19) holds. That is, \((\textbf{t}^*, \textbf{y}^*, \textbf{z}^*, \textbf{x}^*, \gamma ^*)\) is a stationary point of (10). \(\square \)

A.6 Uniformized KL property (Bolte et al., 2014)

Lemma 7

Let \(\Phi _\eta \) be the set of functions \(\varphi \) that satisfy the conditions in Definition 2(i). Let \(\Omega \) be a compact set and \(f:{\mathbb {R}}^n\rightarrow (-\infty ,\infty ]\) be a proper and lower semicontinuous function. Assume that f is constant on \(\Omega \) and satisfies the KL property at each point of \(\Omega \). Then, there exist \(\zeta , \eta >0\) and \(\varphi \in \Phi _\eta \) such that for all \(\bar{\textbf{x}}\in \Omega \) and all \(\textbf{x}\) in the following intersection

one has

A.7 Proof of Theorem 6

Proof

In view of Theorem 5, we only need to show that the sequence is convergent. From the coercivity of (10) and (28), it is not hard to observe that \(\{{\mathcal {L}}_{\beta }(\textbf{t}^k,\textbf{y}^k,\textbf{z}^k, \textbf{x}^k,\gamma ^k)\}\) is bounded from below. Since this sequence is also decreasing by Lemma 2, it converges, and we denote its limit by \(\theta ^*\), i.e., \(\underset{k\rightarrow \infty }{\lim }{\mathcal {L}}_{\beta }(\textbf{t}^k,\textbf{y}^k,\textbf{z}^k, \textbf{x}^k,\gamma ^k)=\theta ^*\). To make the proof clear, we consider the following two cases. One is \({\mathcal {L}}_{\beta }(\textbf{t}^N,\textbf{y}^N,\textbf{z}^N, \textbf{x}^N,\gamma ^N)=\theta ^*\) for some \(N\ge 1,\) and the other is \({\mathcal {L}}_{\beta }(\textbf{t}^k,\textbf{y}^k,\textbf{z}^k, \textbf{x}^k,\gamma ^k)>\theta ^*\) for all \(k\ge 1.\)

Case (i). Because \(\{{\mathcal {L}}_{\beta }(\textbf{t}^k,\textbf{y}^k,\textbf{z}^k, \textbf{x}^k,\gamma ^k)\}\) is decreasing, we have \({\mathcal {L}}_{\beta }(\textbf{t}^k,\textbf{y}^k,\textbf{z}^k, \textbf{x}^k,\gamma ^k)=\theta ^*\) for all \(k\ge N.\) It follows from (21) that \(\textbf{x}^{N+t}=\textbf{x}^N\) for all \(t\ge 0.\) Then, \(\{\textbf{x}^k\}\) converges finitely. Moreover, from (24), we have

which implies that \(\{\gamma ^k\}\) is convergent.

By using (29), we obtain that \(\{\textbf{t}^k\}\), \(\{\textbf{y}^k\}\), and \(\{\textbf{z}^k\}\) are also convergent. Consequently, we see that \(\{ \textbf{t}^k,\textbf{y}^k,\textbf{z}^k, \textbf{x}^k,\gamma ^k \}\) is convergent in this case.

Case (ii). In this case, we consider \({\mathcal {L}}_{\beta }(\textbf{t}^k,\textbf{y}^k,\textbf{z}^k, \textbf{x}^k,\gamma ^k)>\theta ^*\) for all \(k\ge 1.\) We will divide the proof into three steps. (1) We first prove that \({\mathcal {L}}_{\beta }\) is constant on the set of cluster points of the sequence \(\{ \textbf{t}^k,\textbf{y}^k,\textbf{z}^k, \textbf{x}^k,\gamma ^k \}\) and then apply the uniformized KL property; (2) We bound the distance from 0 to \(\partial {\mathcal {L}}_{\beta }(\textbf{t}^k,\textbf{y}^k,\textbf{z}^k, \textbf{x}^k,\gamma ^k)\); (3) We show that the sequence \(\{ \textbf{t}^k,\textbf{y}^k,\textbf{z}^k, \textbf{x}^k,\gamma ^k \}\) is a Cauchy sequence and hence is convergent. The complete proof can be presented as follows.

Step (1). It follows from Lemma 3 that the sequence \(\{ \textbf{t}^k,\textbf{y}^k,\textbf{z}^k,\textbf{x}^k,\gamma ^k\}\) generated by Algorithm 1 is bounded and hence there exists at least one cluster point. Let \(\Gamma \) denote the set of cluster points of \(\{\textbf{t}^k,\textbf{y}^k,\textbf{z}^k, \textbf{x}^k,\gamma ^k\}\). We will show that \({\mathcal {L}}_{\beta }\) is constant on \(\Gamma \). To this end, take any \((\textbf{t}^*,\textbf{y}^*,\textbf{z}^*,\textbf{x}^*,\gamma ^*)\in \Gamma \) and consider a convergent subsequence \( (\textbf{t}^{k_i},\textbf{y}^{k_i},\textbf{z}^{k_i}, \textbf{x}^{k_i},\gamma ^{k_i}) \) with \(\underset{i\rightarrow \infty }{\lim }(\textbf{t}^{k_i},\textbf{y}^{k_i},\textbf{z}^{k_i}, \textbf{x}^{k_i},\gamma ^{k_i})=(\textbf{t}^*,\textbf{y}^*,\textbf{z}^*, \textbf{x}^*,\gamma ^*).\) Then from the lower semicontinuity of \({\mathcal {L}}_{\beta }\) and the definition of \(\theta ^*\), we have

On the other hand, notice that from the definitions of \(\textbf{t}^{k+1}\), \(\textbf{y}^{k+1}\), and \(\textbf{z}^{k+1}\) in (12a), (12b), and (12c), we have

This together with Lemma 4 and the continuity of \({\mathcal {L}}_{\beta }\) with respect to \(\textbf{x}\), \(\gamma \), and the definition of \(\theta ^*\) implies that

Combining (34) and (35), we derive that \({\mathcal {L}}_{\beta }(\textbf{t}^{*},\textbf{y}^{*},\textbf{z}^{*}, \textbf{x}^{*},\gamma ^{*})=\theta ^*.\) Since \((\textbf{t}^{*},\textbf{y}^{*},\textbf{z}^{*}, \textbf{x}^{*},\gamma ^{*})\in \Gamma \) is arbitrary, we conclude that \({\mathcal {L}}_{\beta }\) is constant on \(\Gamma .\)

Since \({\mathcal {L}}_{\beta }\equiv \theta ^*\) on \(\Gamma \) as discussed above and \({\mathcal {L}}_{\beta }\) is a KL function by assumption, it follows from the uniformized KL property (Lemma 7) that there exist \(\zeta , \eta >0\), and \(\varphi \in \Phi _\eta \) such that

for all \((\textbf{t},\textbf{y},\textbf{z}, \textbf{x},\gamma )\) satisfying \(\textrm{dist}((\textbf{t},\textbf{y},\textbf{z}, \textbf{x},\gamma ),\Gamma )<\zeta \) and \(\theta ^*<{\mathcal {L}}_{\beta }(\textbf{t},\textbf{y},\textbf{z}, \textbf{x},\gamma )<\theta ^*+\eta \). On the other hand, since \(\underset{k\rightarrow \infty }{\lim }\textrm{dist}((\textbf{t}^k,\textbf{y}^k,\textbf{z}^k, \textbf{x}^k,\gamma ^k),\Gamma )=0\) by the definition of \(\Gamma \), and \({\mathcal {L}}_{\beta }(\textbf{t}^k,\textbf{y}^k,\textbf{z}^k, \textbf{x}^k,\gamma ^k)\rightarrow \theta ^*\), for such \(\zeta \) and \(\eta \) there exists \(k_1\ge 0\) such that \(\textrm{dist}((\textbf{t}^k,\textbf{y}^k,\textbf{z}^k, \textbf{x}^k,\gamma ^k),\Gamma )<\zeta \) and \(\theta ^*<{\mathcal {L}}_{\beta }(\textbf{t}^k,\textbf{y}^k,\textbf{z}^k, \textbf{x}^k,\gamma ^k)<\theta ^*+\eta \) for all \(k\ge k_1\). Thus, for all \(k\ge k_1\), we have

Step (2). First, the partial subdifferential with respect to \(\textbf{t}\) is

where the conclusion follows from (12a), and the definitions of B, C, and D. Similarly,

and

Moreover, according to (12d), we have

Finally, it follows from (13) that

Thus, there exists \(a:=\max \{(3+\Vert A^\top \Vert +\frac{1}{\beta }), \beta (\Vert B^\top A\Vert +\Vert C^\top A\Vert +\Vert D^\top A\Vert )\}>0\) such that

Step (3). Define \(\Delta ^k=\varphi ({\mathcal {L}}_\beta (\textbf{t}^k,\textbf{y}^k,\textbf{z}^k, \textbf{x}^k,\gamma ^k)-\theta ^*)- \varphi ({\mathcal {L}}_\beta (\textbf{t}^{k+1},\textbf{y}^{k+1},\textbf{z}^{k+1}, \textbf{x}^{k+1},\gamma ^{k+1})-\theta ^*)\). Since \({\mathcal {L}}_\beta \) is decreasing along the iterates and \(\varphi \) is monotonically increasing, it is easy to see that \(\Delta ^k\ge 0\) for all \(k\ge 1\).

Then, for \(k\ge k_1\), we have

where the first inequality follows from (37), the second inequality follows from the concavity of \(\varphi \), the third inequality follows from (36), and the fourth inequality follows from (21).

By using (33) and \(\sqrt{uv}\le \frac{u+v}{2}\) for \(u,v \ge 0\), we further obtain

where t is an arbitrary positive constant.

Let \(m:=\frac{1}{2t}\left( 1+\sqrt{\frac{{\lambda '^2_{\max }}}{\lambda _{\min }}}\right) \). Adding \(-m\Vert \textbf{x}^{k+1}-\textbf{x}^k\Vert \) to both sides of the above inequality and simplifying the resulting inequality, we have

Taking \(t>\frac{1}{2}\left( 1+\sqrt{\frac{{\lambda '^2_{\max }}}{\lambda _{\min }}}\right) \), we have \(0<m<1\) and hence \(\frac{m}{1-m}>0\). Thus, summing (40) over \(k=k_1, k_1+1, \ldots \), we have

Hence, \(\{\textbf{x}^k\}\) is convergent. From the inequality (33), we immediately obtain that \(\{\gamma ^k\}\) is convergent. From (29), we see that \(\{\textbf{t}^k\}\), \(\{\textbf{y}^k\}\), and \(\{\textbf{z}^k\}\) are also convergent. Consequently, we conclude that \(\{\textbf{t}^k,\textbf{y}^k,\textbf{z}^k, \textbf{x}^k,\gamma ^k\}\) is a convergent sequence. This completes the proof. \(\square \)

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Wu, Z., Xie, G., Ge, Z. et al. Nonconvex multi-period mean-variance portfolio optimization. Ann Oper Res 332, 617–644 (2024). https://doi.org/10.1007/s10479-023-05524-x

DOI: https://doi.org/10.1007/s10479-023-05524-x