Abstract

This article provides a brief biographical synopsis of the life of Cyrus Derman and a comprehensive summary of his research. Professor Cyrus Derman was known among his friends as Cy.

1 Life and career

Derman was born on July 16, 1925 in Philadelphia and died at the age of 85 on April 27, 2011. He is survived by his daughter Hessy Derman.

Cy grew up in Collingdale, Pennsylvania. He was the son of a grocery store owner who came to the United States from Lithuania. As a young boy Cy was often invited to play violin on a Philadelphia radio show for talented children. After high school he served in the US Navy, then pursued his undergraduate education at the University of Pennsylvania where, in 1948, he completed a degree in Liberal Arts, which for him meant music and mathematics. After completing a Master’s degree in Mathematics at the University of Pennsylvania in 1949, he went on to Columbia University for his graduate education in mathematical statistics.

At Columbia he was privileged to work with many of the most important US statisticians and probabilists of the early 1950s. Some of these were: Theodore (“Ted”) W. Anderson (chair), Aryeh (“Arie”) Dvoretzky, Herbert E. Robbins, Kai-Lai Chung, Joe Gani, Emil Julius Gumbel (Derman was his T.A. and helped him edit his book Statistics of Extremes), Theodore (“Ted”) Harris, Howard Levene, Ronald Pyke, Jerome Sacks, Herbert Solomon, Lajos Takacs, and Jacob Wolfowitz. Kai-Lai Chung was his formal thesis advisor. However, Cy wrote what he called a second thesis with Herbert Robbins, who considered Cy his student. In addition, Cy on many occasions expressed his gratitude towards Ted Harris for introducing him to ways of thinking about stochastic processes. An early indication of his success in mathematics came when the well-known authority of the time, William Feller, cited young Derman’s unfinished dissertation work in his book next to the work of another giant of the time, Andrey N. Kolmogorov.

After finishing his Ph.D. in 1954, Derman spent a year as an instructor in the department of Mathematics at Syracuse University. Then he joined the Department of Industrial Engineering at Columbia University in 1954 as an assistant professor in Operations Research. In 1960 Cy Derman met Martha Winn (October 24, 1920–May 17, 2009) of Poughkeepsie NY in Europe at an art gallery opening they both happened to be attending. Cy was traveling with Herbert Robbins who knew Martha and introduced them. They married in 1961, and they spent their first year of married life in Israel where Cy taught at the Technion. They lived in Chappaqua NY and adopted and raised two children Adam and Hessy. In 1965 Cy was promoted to the rank of Professor of Operations Research at Columbia University. He was a prominent figure in Operations Research at Columbia during his 38 years there and in 1977 played a crucial role in the formation of the department of Industrial Engineering and Operations Research. This department soon thereafter became one of the top departments in the field. He stayed at Columbia until his retirement in 1992. Cy was devastated when his son Adam died in October 1989.

Derman held visiting appointments and taught at Syracuse University, Stanford University (1965–1966 and most summers during the period 1971–1989), University of California Berkeley (Spring 1979), University of California Davis (1975–1976), Imperial College (Spring 1970) and the Technion Israel Institute of Technology (1961–1962 as Guest Professor). Derman did not accept several offers to join the Operations Research department at Stanford, because he wanted to be close to, and to help, both his aging father and a needy older brother who lived in Philadelphia. However, he did spend many of his summers at Stanford working with his close friends Gerald (Jerry) J. Lieberman, Sheldon M. Ross and Ingram Olkin, and he was glad to see the Stanford Operations Research department flourish under the leadership of Lieberman and his student Arthur (Pete) F. Veinott Jr. Katehakis was also asked to join him at Stanford during several summers in the 1980s.

Derman had the ability to make difficult ideas easy to explain for students and he was an excellent teacher. He influenced the field of Operations Research through his Ph.D. advisees. Many of them held or are holding important posts in academia and have had their own impact felt. Notable students of his are: Morton Klein (1957), Peter Frank (1959), Arthur Veinott, Jr. (1960), Robert Roeloffs (1962), Norman Agin (1964), Peter Kolesar (1964), Leon White (1964), Israel Brosh (1967), Eric Brodheim (1968), Sheldon M. Ross (1968), Monique Berard (1973), Ou Song Chan (1974), Christian Reynard (1974), Kiran Seth (1975), Michael N. Katehakis (1980), Showlong Koh (1982), Yeong-duk Cho (1987). As of May 2013, Derman had 262 descendants listed at the Mathematics Genealogy Project.

Michael Katehakis remembers Cy’s seemingly endless stylistic corrections to the draft of his Ph.D. thesis. When he complained about this, Cy asked him to go to a museum and pay attention to a painting and be aware that each dot of the painting has a reason for its existence. Many years later Cy smiled about this and said: “I really did not give you as much of a hard time as I did with Pete Veinott”. Veinott’s perfectionism is well-known in the profession. Perhaps it can be traced to this statement of Cy.

Cy was a Fellow of the Institute of Mathematical Statistics and the American Statistical Association. In 2002, he was awarded the John von Neumann Theory Prize from the Institute for Operations Research and the Management Sciences for his contributions to performance analysis and optimization of stochastic systems.

Even while enjoying an illustrious career in applied mathematics, operations research, and statistics, Derman never lost his passion for music. He played violin all of his life and in the early 1990s following his retirement from Columbia he performed with a chamber group.

2 Major accomplishments

Cyrus Derman did fundamental research in Markov decision processes, i.e., sequential decision making under uncertainty. He also made significant contributions in optimal maintenance, stochastic assignment, surveillance, quality control, clinical trials, queueing and inventory depletion management among others.

In a series of important papers culminating in the book Finite State Markovian Decision Processes (Derman 1970b), which he wrote following a suggestion of Richard Bellman, Derman fundamentally advanced the theory of finite-state-and-action Markovian decision processes. He took a leading role in showing that starting from a state, the set of state-action frequencies over all policies is the convex hull of the finite set of state-action frequencies over all stationary deterministic Markov policies. This work plays a foundational role in solving problems that arise in the presence of linear constraints on the state-action frequencies, e.g., reflecting desired limits on the frequency of unfavorable events like failures, rejects, shortages and accidents, and has been widely used in practice. Moreover, it led him to co-develop the first general method for finding minimum-average-cost (typically randomized) policies in the presence of such constraints.

With Jerome Sacks, Derman discovered the celebrated and widely used conditions that assure an optimal stopping policy is a one-stage look-ahead policy, and applied them to give simple solutions of problems of optimal equipment replacement and search for a favorable price to buy an asset (Derman and Sacks 1960). With Gerald Lieberman and Sheldon Ross, he introduced and gave an interesting solution to a sequential stochastic assignment problem that has application to optimally assigning workers with differing skills to randomly arriving jobs of varying types (Derman et al. 1972a). With his student and long-time colleague Morton Klein he originated and did pioneering work characterizing when first in, first out (FIFO) and last in, first out (LIFO) issuing policies are optimal for items whose value or field life depends on their age at issue (Derman and Klein 1958a, 1959). Derman also developed a beautiful solution to the problem of finding a minimax inspection schedule for randomly failing equipment (Derman 1961a). He influenced and contributed to engineering practice by co-developing blood-inventory management policies that were used subsequently in New York hospitals (Brodheim et al. 1975), evaluating the safety of New York bridges, and by co-developing strategies for early termination of tests for the Navy.

3 Books

- Probability and Statistical Inference for Engineers: A First Course (Derman and Klein 1970). As the authors acknowledge in the preface, this book was written following the encouragement of Herbert Robbins to put into book form a set of notes that were prepared by the authors for an undergraduate course in the Department of “Industrial Engineering and Management” at Columbia. Its purpose was: “to present in compact form a one semester course of study in probability theory and statistical inference.” The book provides an elegant elementary introduction to decision theory, estimation, tests of hypothesis and confidence intervals.

- Finite State Markovian Decision Processes (Derman 1970b). This is one of the first books on the topic of the optimal sequential control of Markovian dynamic systems. In this text Derman provides a general framework for formulating and solving certain optimization problems that became known as Markovian decision processes, and he discusses basic computational algorithms. The book was intended for operations researchers, statisticians, mathematicians and engineers.

- A Guide to Probability Theory and Application (Derman et al. 1973). This book has as a unifying theme the role that probability models can play in scientific and practical applications. It includes many examples, with actual data, of real-world uses of models. In addition, there is a detailed coverage of the properties and uses of probability models, including many that do not rely on the normal distribution. This book contains an elegant elementary introduction to Markov Chains.

- Probability Models and Applications (Olkin et al. 1994). The text is a revision of the previous title with emphasis on where and how to apply probability methods to practical problems. It aims to help the reader acquire a strong preparation in the tools necessary for applications. The material on the distributions of sums of independent random variables is presented before the discussion of individual distributions.

- Statistical Aspects of Quality Control (Derman and Ross 1996). The text presents the key ideas and concepts in a compact manner rather than attempting to cover each topic in complete depth. The authors use the concept of Average Run Length (ARL) to compare the different control charts, such as Shewhart, moving average, and cusum. They introduce the Taguchi approach to quality design and they provide a good survey of acceptance sampling. The authors also present both on-line and off-line quality control methods and they cover a wide range of statistical methods of quality control.

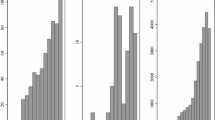

4 Probability, stochastic processes, and statistics

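In Derman (1954a) Cy proved the following strong version of an ergodic property for a Wiener process \(\{X(t)\}_{t\ge0}\). When for some bounded functions f and g, the ratio \(\int_{-\infty}^{+\infty}f(x)dx/\int_{-\infty}^{+\infty}g(x)dx\) is well defined, he showed that

$$P \biggl\{\lim_{T\to\infty}\frac{\int_{0}^{T}f(X(t))\,dt}{\int_{0}^{T}g(X(t))\,dt}=\frac{\int_{-\infty}^{+\infty}f(x)\,dx}{\int_{-\infty}^{+\infty}g(x)\,dx} \biggr\}=1. $$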

The proof relied on a clever decomposition of the interval [0,T] into sub-intervals defined by first passage times.

Derman with one of his main teachers Herbert E. Robbins in Derman and Robbins (1955) presented a version of the strong law of large numbers for a case where E[X] is equal to +∞. Let X 1,X 2,… be independent and identically distributed random variables with distribution F(x). For 0<α<β<1 and C>0, suppose \(F(x)\le1-C/x^{\alpha}\) for large positive x and \(\int_{-\infty}^{0}\mid x\mid^{\beta}dF(x)<+\infty\). It was shown that the sample mean \(\sum_{1}^{n} X_{i}/n\) converges almost surely to +∞ as n tends to +∞.

Cy with his thesis advisor Chung in Chung and Derman (1956) consider a sequence of integral-valued independent and identically distributed random variables {X i }. They suppose that 0<E[X i ]<∞, the random variables are aperiodic (i.e., the greatest common divisor of all values of x for which P{X i =x}>0 is 1), and let A denote a set containing an infinite number of positive integers. They showed that the set A is visited infinitely often with probability 1 by the sequence {S n }, where \(S_{n}=\sum_{1}^{n}X_{i}\). They also identified conditions for similar results to hold when {X i } has a continuous distribution and the set A is a Lebesgue measurable set whose intersection with the positive real numbers has infinite Lebesgue measure. They treated {S n } as a Markov process and tapped into the theory of such processes.

Derman was one of the first to make important contributions to the theory of stochastic approximation. For each x suppose that a noisy observation Y(x) of a regression function M(x) is available, satisfying E[Y(x)]=M(x). Stochastic approximation aims at finding a random sequence {X n } n≥1 based on past observations such that X n converges, in some sense, to the true root x 0 of the equation M(x 0)=α or to the maximizing value x ∗ with M(x ∗)=max x {M(x)}. In 1951, Herbert Robbins and Sutton Monro pioneered the field by identifying such a sequence that would converge, in probability, to the root x 0. In 1952, Jack Kiefer and Jacob Wolfowitz proposed a sequence {X n } for which they provided conditions under which it would almost surely converge to the maximizing value x ∗. Derman (1956b) provided additional conditions under which the Kiefer-Wolfowitz procedure converges in distribution to the normal distribution. In addition, Derman (1956c) gave a comprehensive exposition of all the work directed towards providing a more general theory of stochastic approximation up to that time.
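The root-finding recursion described above can be illustrated with a minimal sketch. It assumes the commonly quoted Robbins-Monro update X n+1 = X n − a n (Y(X n ) − α) with step sizes a n = 1/n; the linear regression function M(x)=2x+1, the Gaussian noise, and the names `robbins_monro` and `noisy_linear` are hypothetical choices made only for this illustration.

```python
import random

def robbins_monro(noisy_response, alpha, x_start, n_steps, rng):
    """Iterate X_{n+1} = X_n - a_n * (Y(X_n) - alpha) with a_n = 1/n."""
    x = x_start
    for n in range(1, n_steps + 1):
        y = noisy_response(x, rng)      # one noisy observation Y(x)
        x -= (1.0 / n) * (y - alpha)    # step toward the root of M(x) = alpha
    return x

def noisy_linear(x, rng):
    # Hypothetical regression function M(x) = 2x + 1 observed with N(0, 1) noise.
    return 2.0 * x + 1.0 + rng.gauss(0.0, 1.0)

rng = random.Random(0)
estimate = robbins_monro(noisy_linear, alpha=0.0, x_start=3.0, n_steps=20000, rng=rng)
print(estimate)  # should be close to the root x_0 = -0.5 of M(x) = 0
```

The decreasing step sizes average out the noise while still summing to infinity, which is what lets the iterate travel to the root from any starting point.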

Derman (1957) developed a non-parametric procedure for estimating the α-quantile (i.e., the root θ α of the equation F(θ α )=α) of an unknown distribution function F(⋅), where at any control x the only observation is a random observation Y(x) with P(Y(x)=1)=F(x). When α∈[1/2,1), his “Up & Down” procedure starting from any present level x works as follows. If the response Y(x)=0 is observed, it moves up to x+1. If the response Y(x)=1 is observed then, using randomization, it moves to x+1 with probability \(1-\frac{1}{2\alpha}\) and to x−1 with probability \(\frac{1}{2\alpha }\). Note that the chances of moving up and down are equal when x=θ α . Derman let the estimate \(\theta^{n}_{\alpha}\) based on n observations be the average of the most frequently encountered x levels. He used properties of Markov chains to prove that limit points of the sequence \(\{\theta^{n}_{\alpha}\}\) are surely within distance 1 of θ α .
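A small simulation may help make the “Up & Down” rule concrete. This is only a sketch under stated assumptions: the logistic response curve `logistic_cdf`, its location and scale, the starting level, and the reading of the estimate as the average of the levels tied for the highest visit count are all choices made for this illustration, not details taken from Derman (1957).

```python
import math
import random
from collections import Counter

def up_and_down(response_prob, alpha, x_start, n_steps, rng):
    """Run the randomized Up & Down walk for alpha in [1/2, 1) and
    return the average of the most frequently visited levels."""
    visits = Counter()
    x = x_start
    for _ in range(n_steps):
        visits[x] += 1
        responded = rng.random() < response_prob(x)   # Y(x) = 1 with probability F(x)
        if not responded:
            x += 1                                    # Y(x) = 0: always move up
        elif rng.random() < 1.0 / (2.0 * alpha):
            x -= 1                                    # Y(x) = 1: down with prob. 1/(2 alpha)
        else:
            x += 1                                    # Y(x) = 1: up otherwise
    top = max(visits.values())
    modes = [level for level, count in visits.items() if count == top]
    return sum(modes) / len(modes)

def logistic_cdf(x):
    # Hypothetical response curve F(x): logistic with location 10 and scale 2.
    return 1.0 / (1.0 + math.exp(-(x - 10.0) / 2.0))

alpha = 0.75
theta = 10.0 + 2.0 * math.log(alpha / (1.0 - alpha))  # true alpha-quantile of F
rng = random.Random(1)
estimate = up_and_down(logistic_cdf, alpha, x_start=0, n_steps=200000, rng=rng)
print(theta, estimate)
```

At level x the walk moves up with total probability 1 − F(x)/(2α), which equals 1/2 exactly when F(x)=α, so the visited levels concentrate around the quantile.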

In 1956, Aryeh Dvoretzky established an important Theorem on the convergence of certain transformations with superimposed random errors. The result could be used to show the convergence of stochastic approximation methods. Derman and Sacks (1959) gave a simpler proof of the probability-one version of the Dvoretzky Theorem. Further, the method of proof of Derman and Sacks permitted a direct extension to the multi-dimensional case.

Derman and Klein (1958b) investigated the feasibility and the limitations related to the use of regression for verifying a declared inventory. They had in mind applications related to inspection and disarmament issues of that time.

Derman (1961b) considers a machine that alternates between the “ON” and “OFF” states: each “ON” duration is an independent draw from a distribution F, and each “OFF” duration is an independent draw from a distribution G. He employed a semi-Markov process Z(t), which equals 1 or 0 according to whether the machine is “ON” or “OFF”, and showed that

The main result of Derman and Sacks (1960), is the celebrated Derman & Sacks Optimal Optional Stopping Lemma: Let \((\varOmega, \mathcal {F}, P) \) be a probability space, \(\{\mathcal{F}_{n}\}_{n\ge1}\) a filtration on it and let {Y n } be a sequence of random variables with Y n measurable with respect to \(\mathcal{F}_{n}\) and such that EY n exists and is finite for all n. Let \(\mathcal{C}\) be the class of all optional stopping rules N such that EN<∞. They stated and proved the following lemma.

If there exists an optional stopping rule N ∗ that satisfies the following three conditions:

- (a) \(EN^{*}<\infty\),

- (b) \(E (Y_{n} \mid\mathcal{F}_{n-1} ) \ge Y_{n-1}\) when n≤N ∗(ω) a.e. (P) and \(E ( Y_{n} \mid\mathcal{F}_{n-1} ) \le Y_{n-1}\) when n≥N ∗(ω) a.e. (P),

- (c) \(E (|Y_{n+1} -Y_{n}| \mid \mathcal{F}_{n}) \le M\), for some finite constant M,

then

$$EY_{N^{*}}=\max_{N\in\mathcal{C}}EY_{N}. $$

In order to understand this lemma, it is helpful to think of Y n as denoting the fortune of a player after the n th game in a sequence of not necessarily independent gambling games. An optimal stopping rule is one that maximizes the expected fortune.

Derman and Sacks applied their lemma to the following replacement problem. Let a sequence X t , t=1,2,… of independent and identically distributed nonnegative random variables with common distribution function F denote the observable equipment deterioration, where X t denotes the amount of deterioration occurring during the interval (t−1,t). Let \(S_{n}=\sum_{t=1}^{n}X_{t}\) for any n and suppose the equipment fails whenever S n >L, for a known constant L. If a replacement occurs before a failure there is a cost c>0. The cost of a replacement after a failure is c+A>c. For any replacement rule R, let C Ri be the cost (i.e., c or c+A) and N Ri be the cycle length associated with the i th replacement cycle under R, i=1,2,…. For rules under which C Ri and N Ri are independent and identically distributed, natural optimization criteria related to the average system cost are: ϕ R =EC R1/EN R1 and \(\phi'_{R}= E ( C_{R1}/ N_{R1} )\).

General replacement rules (policies) that efficiently use all acquired information specify replacement at a stopping time N of the form: N=min{ν,⌊Z⌋+1}, for some stopping time ν of the sequence {X t }, where Z is the service life of the equipment and for any real number a, ⌊a⌋ denotes the greatest integer less than or equal to a.

Using their lemma they showed that the optimal (it minimizes \(\phi'_{R}\)) replacement rule specifies replacement of the equipment when it has been in service for N ∗ time periods, where: N ∗=min{n ∗−1,⌊Z⌋+1} and

This Derman and Sacks lemma also applies in a variety of other situations. For example, in the same paper they obtained a simple derivation of the solution to the following problem, which was previously considered by Gideon Schwarz and also by Herbert Robbins, who had obtained the same solution for N ∗ by different methods. Let X n be a sequence of independent and identically distributed random variables with common distribution F with finite first moment. Let Y n =max{X 1,…,X n }−cn, for some positive constant c. The objective is to find an optional stopping rule N which maximizes EY N . The lemma readily yields that: N ∗=inf{n:max{X 1,…,X n }≥a}, where a is the solution of \(\int_{a}^{\infty}(x-a) dF(x) =c\).
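As a worked illustration of the threshold equation \(\int_{a}^{\infty}(x-a) dF(x) =c\), the sketch below solves it by bisection for an exponential F, where the left-hand side has the closed form \(e^{-\lambda a}/\lambda\) for a≥0. The exponential distribution, the cost c=0.1, and the function names are assumptions made only for this example.

```python
import math

def expected_excess_exponential(a, rate=1.0):
    """E[(X - a)^+] for X ~ Exponential(rate) and a >= 0."""
    return math.exp(-rate * a) / rate

def reservation_level(excess, c, lo, hi, tol=1e-10):
    """Bisection for the root a of excess(a) = c; excess must be decreasing in a."""
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if excess(mid) > c:
            lo = mid      # one more observation still pays off at mid: root is to the right
        else:
            hi = mid
    return 0.5 * (lo + hi)

c = 0.1   # hypothetical cost per observation
a_star = reservation_level(expected_excess_exponential, c, lo=0.0, hi=50.0)
print(a_star, -math.log(c))  # for rate 1 the root is a = -ln(c)
```

The rule then stops the first time the running maximum reaches `a_star`: at that level the expected gain from one more observation no longer exceeds its cost c.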

Derman and Ignall (1969) continued with the above setup and considered two variants of the problem of getting close to but not beyond a boundary. One is about maximizing E[W N ] where W n =S n if S n ≤L and W n =A for A≤L if S n >L, and another is about maximizing E[S N |S N ≤L] subject to P(S N >L)≤β. When A for the first problem or β for the second problem is large enough, the authors showed that an optimal rule could be characterized by stopping as soon as S n >S ∗ for some number S ∗. The closed-form solution for S ∗ was found for the case where the X i ’s are exponentially distributed.

Derman (1956a) investigated the problem of making inferences for the transition probabilities p ij of irreducible, positive recurrent and aperiodic Markov chains, from state observations x 1,…,x n . Let m ij , \(\sigma_{ij}^{2}\) denote respectively the expectation and the variance of the number of transitions it takes the chain to go from state i to state j. For any subset {i 1,…,i r } of states, let N n (i 1,…,i r ) be the number of times the consecutive sequence (i 1,…,i r ) is observed during transitions 1,…,n. In particular N n (i) is the number of times state i is observed (visited) during transitions ν=1,…,n. Cy then established the following:

- (i) Let i 1,…,i r be any finite sequence of distinct states and suppose there exists a state i in the class such that \(\sigma _{ii}^{2} <\infty\). Then there is a covariance matrix C so that

$$\frac{1}{\sqrt{n}} \biggl(N_n(i_{1})-\frac{n}{m_{i_{1}i_{1}}}, \ldots, N_n(i_{r})-\frac{n}{m_{i_{r}i_{r}}} \biggr) $$

converges, as n→∞, to a multivariate Normal distribution with mean \({\bf0}\) and covariance matrix C, which can be determined.

- (ii) Given k subsets of states \(\{i_{11},\ldots,i_{1r_{1}}\}\), …, \(\{i_{k1},\ldots,i_{kr_{k}}\}\), let each P l for l=1,…,k stand for the probability of going from i l1 to \(i_{lr_{l}}\) in succession. Derman showed that the distribution of

$$\frac{1}{\sqrt{n}} \biggl(N_n(i_{11},\ldots,i_{1r_1})- \frac {nP_1}{m_{i_{11}i_{11}}},\ldots,N_n(i_{k1},\ldots,i_{kr_k})- \frac {nP_k}{m_{i_{k1}i_{k1}}} \biggr) $$

converges to a multivariate Normal distribution with mean \({\bf0}\) and a covariance matrix that could be approximately characterized.

These theorems can be used to construct test statistics and chi-square tests suitable for any finite number of state transition probabilities.

5 Markov chains and Markov decision processes

Derman made fundamental contributions to the understanding of Markov chains. His focus was on irreducible stationary chains with denumerable states 0,1,…. Each of these chains is describable by a time-invariant stochastic transition matrix P=(p ij ), in which every p ij stands for the probability of going from state i to state j in one transition. Each pair of states is assumed to communicate with each other; hence, all states belong to a single class.

In Derman (1954b) he gave an important characterization to such Markov chains when they are recurrent. For such a chain, he demonstrated the existence of numbers akin to relative frequencies, regardless of whether the chain is positive or merely null recurrent: i.e., he showed that there exist positive numbers v 0,v 1,… that satisfy

In addition,

where each \(p^{(n)}_{jj}\) is the probability of going from state j in the chain to the same state in n transitions; also, the Markov chain is null recurrent if and only if \(\sum_{0}^{+\infty}v_{k}=+\infty\).

Using analysis based on characteristic functions, Derman (1955) showed that the v k values identified above possess statistical equilibrium interpretations. Suppose at an initial time 0, particles are distributed at the Markov chain’s various states, with the number A k (0) of particles at each state k being independently generated from a Poisson distribution with mean v k , and each individual particle subsequently undergoes the Markov chain state transitions independently. Then, at any future time n, the random numbers A k (n) of particles at the states k have the same joint distribution as at time 0.

While viewing the vector of numbers of particles at all states as a super state, the evolution of the super state as a Markov chain was also studied. In the same work, Derman furthered the understanding on how the ratio \(\sum_{0}^{n} p^{(k)}_{ii}/\sum_{0}^{n} p^{(k)}_{jj}\) between frequencies of visits to different states converges to its limit π ij as the number of transitions n approaches +∞.

Derman also contributed significantly to laying the foundations for Markov decision processes (MDPs). A typical Markov decision process involves discrete time points n=0,1,…, a finite number of states 0,1,…,L, and a finite number of potential decisions 1,…,K. Under decision k, state transition is governed by the stochastic matrix Q(k)=(q ij (k)). When decision k is used at state i, a cost w i (k), irrespective of the time point n, is incurred.

A general policy R could be history-dependent, whereby the probability that decision k is made in a period may depend on all states and decisions experienced from the very beginning until the period. In contrast, a Markovian or memoryless policy R would prescribe potentially randomized decisions according to the current period and state only. A stationary Markovian policy R specifies the n-independent probability D ik of applying decision k at state i in any period n. It is considered stationary deterministic when D ik =0 or 1 for all i and k. Applying a stationary Markovian policy R to the Markov decision process Q=(q ij (k)) results in a Markov chain with transition matrix \(P^{R}=(p^{R}_{ij})\), where each \(p^{R}_{ij}=\sum_{k=1}^{K} D_{ik}q_{ij}(k)\).
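The formula \(p^{R}_{ij}=\sum_{k=1}^{K} D_{ik}q_{ij}(k)\) can be checked on a toy instance. The two-state, two-decision matrices below are hypothetical numbers chosen for illustration, and decisions are indexed from 0 in the code rather than from 1 as in the text.

```python
def induced_transition_matrix(D, Q):
    """P^R[i][j] = sum_k D[i][k] * Q[k][i][j] for a stationary Markovian policy."""
    n_states = len(D)
    n_decisions = len(Q)
    return [
        [sum(D[i][k] * Q[k][i][j] for k in range(n_decisions)) for j in range(n_states)]
        for i in range(n_states)
    ]

# Hypothetical transition matrices, one per decision.
Q = [
    [[0.9, 0.1], [0.4, 0.6]],   # Q(1): transitions under the first decision
    [[0.2, 0.8], [0.5, 0.5]],   # Q(2): transitions under the second decision
]
# Hypothetical stationary policy: D[i][k] is the probability of decision k at state i.
D = [
    [1.0, 0.0],                 # state 0: always use the first decision
    [0.5, 0.5],                 # state 1: randomize evenly between the two
]
P_R = induced_transition_matrix(D, Q)
print(P_R)
```

Each row of `P_R` is a convex combination of the corresponding rows of the Q(k), so it is again a probability distribution; a stationary deterministic policy simply selects one of those rows.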

Derman (1962a) considered two problems, one about minimizing the total cost in the long run and another about minimizing the total cost incurred before hitting an absorbing state. He showed that neither problem need look beyond stationary deterministic policies. His approach first tackled problems where α discounts are involved, and then took advantage of the limiting behavior when α→1. Under irreducibility assumptions, Derman further demonstrated that optimal stationary Markovian policies could be identified through solving linear programming problems.

Derman and Klein (1965) studied a finite N-horizon MDP with time-dependent transition rules q ij (n,k) and costs w i (n,k). By enlarging the state space from (0,1,…,L) to (0,1,…,L)×(0,1,…,N), they demonstrated that results from Derman (1962a) originally intended for stationary systems would still apply; indeed, even when side constraints are present and when termination is decided through a stopping time bounded by N, the problem would remain solvable via linear programming.

In Derman (1963c) he gave strong support to the substitutability of history-dependent policies by their stationary Markovian counterparts under the long-run average criterion. Let G R stand for the set of limit points, as N→+∞, of the (L+1)-component vectors formed from the expected frequencies at which various states are reached over a duration of N periods when the starting state experiences all possibilities and state transitions are governed by policy R. Under the condition that P R would always specify an irreducible Markov chain as long as R is stationary deterministic, Derman illustrated that the union of G R over all stationary Markovian policies is as big as that over all history-dependent policies; moreover, this common set is closed and convex. This means that stationary Markovian policies achieve any long-run average performance attainable by any history-dependent policy.

When an MDP is started at state i and subsequently governed by policy R, let \(\phi^{R}_{Njk}(i)\) represent the expected frequency at which state j and decision k appear together in a given period over periods 0 through N. Then, \(\phi^{R}_{N}(i)=(\phi^{R}_{Njk}(i))\) is an (L+1)×K-component frequency vector. Let H(i), H′(i), and H″(i) stand for the sets of limit points of \(\phi^{R}_{N}(i)\) as N→+∞ as R ranges over, respectively, all history-dependent policies, all stationary Markovian policies, and all stationary deterministic policies.

Another way to express the main result of Derman (1963c) is that, under the aforementioned irreducibility condition, H′(i) would be equal to H(i). Derman (1964a) worked with the general case where irreducibility does not necessarily apply. He showed that the convex hulls of H″(i) and H′(i) are the same, and they both contain H(i). This means that a randomization of stationary deterministic policies would reach targets set for history-dependent policies.

The above was proved by Derman (1964b). Furthermore, under the irreducibility condition, Derman showed that H(i)=H′(i) is independent of the starting state i, and that the convex hull of H″(i) amounts to H(i)=H′(i). He also provided a strong law, stating that with probability one, sample limit points of the state-decision frequency vectors resulting from any history-dependent policy would reside in the convex hull of \(\bigcup_{i=0}^{L} H''(i)\). Relatedly, Derman and Strauch (1966) showed that the expected frequencies achieved by any history-dependent policy could be replicated by a Markovian but not necessarily stationary policy.

Derman (1966) studied a Markov decision process with a denumerable state space I={0,1,…}, under the long-run average criterion, and state transition probabilities q ij (k) that are functions only of the last observed state i and the subsequently made decision k. For such denumerable state space problems an optimal policy need not exist, and even when it does, it need not be stationary deterministic. Cy showed that when the action sets A i are finite for all i∈I, the one-step state-action costs w ik belong to a bounded set of numbers, and there exists a bounded solution {g,v j } j∈I of the functional equation:

$$g+v_{i}=\min_{k\in A_{i}} \biggl\{w_{ik}+\sum_{j=0}^{\infty}q_{ij}(k)v_{j} \biggr\},\quad i\in I, $$

then there exists an optimal deterministic policy (“rule”) R ∗ specified by:

$$w_{iR^{*}(i)}+\sum_{j=0}^{\infty}q_{ij}\bigl(R^{*}(i)\bigr)v_{j}=\min_{k\in A_{i}} \biggl\{w_{ik}+\sum_{j=0}^{\infty}q_{ij}(k)v_{j} \biggr\},\quad i\in I. $$

Further, in this paper Cy provided irreducibility-type conditions under which optimal deterministic policies exist and could be computed with a policy improvement procedure.

Derman and Veinott (1967) gave conditions for the existence, uniqueness and the form of the solutions of the countable system of optimality conditions above. Specifically, they considered a Markov chain {X n } n≥0 with denumerable state space I={0,1,…}, with transition matrix P=(p ij ) and {w i } a sequence of real numbers. The sufficient conditions of Derman (1966) were connected to the following system of equations:

$$g+v_{i}=w_{i}+\sum_{j=0}^{\infty}p_{ij}v_{j},\quad i\in I, $$

where g and v 0,v 1,… are unknown variables.

They used the auxiliary processes:

and quantities \(_{0}p^{*}_{ij}=E(\sum_{n=0}^{\infty}Z_{n}(j) | X_{0}=i)\), \(c_{i0}=E(\sum_{n=0}^{\infty} Y_{n} | X_{0}=i)= \sum_{j=0}^{\infty} {} _{0}p^{*}_{ij}w_{j}\), \(m_{i0}=\sum_{j=0}^{\infty} {} _{0}p^{*}_{ij}\) and they showed that if the numbers m i0 and c i0 are finite for all i∈I then the following statements are true.

- (i) A solution to the above system of equations is given by g=c 00/m 00 and v i =c i0−c 00 m i0/m 00; in addition the series \(\sum_{j=0}^{\infty}p_{ij}v_{j}\) converges absolutely.

- (ii) If {g,v 0,…} is a sequence with \(\sum_{j=0}^{\infty} {} _{0}p^{*}_{ij}v_{j}\) converging absolutely for all i, and if in addition \(\sum_{j=0}^{\infty} {} _{0}p^{*}_{ij}(c_{j0}-(c_{00}/m_{00}) m_{j0})\) converges absolutely for all i, then {g, v 0,…} is a solution to the above system if and only if there exists a real number r such that g=c 00/m 00 and v i =c i0−(c 00/m 00)m i0+r.
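The formulas g=c 00/m 00 and v i =c i0−(c 00/m 00)m i0 can be sanity-checked numerically on a small finite chain. The sketch below rests on two assumptions that are not spelled out in the text above: that the system of equations has the standard average-cost form \(g+v_{i}=w_{i}+\sum_{j}p_{ij}v_{j}\), and that m i0 and c i0 are, respectively, the expected number of periods and the expected cost accumulated from state i (counting period 0) until the first later visit to state 0. The three-state chain and costs are hypothetical.

```python
def taboo_expectations(P, w, sweeps=5000):
    """Approximate m_i0 and c_i0 by iterating the first-step equations
    m_i = 1 + sum_{j != 0} P[i][j] * m_j and c_i = w[i] + sum_{j != 0} P[i][j] * c_j."""
    n = len(P)
    m = [0.0] * n
    c = [0.0] * n
    for _ in range(sweeps):
        m = [1.0 + sum(P[i][j] * m[j] for j in range(1, n)) for i in range(n)]
        c = [w[i] + sum(P[i][j] * c[j] for j in range(1, n)) for i in range(n)]
    return m, c

# Hypothetical irreducible chain and one-step costs.
P = [
    [0.5, 0.3, 0.2],
    [0.4, 0.4, 0.2],
    [0.3, 0.3, 0.4],
]
w = [1.0, 4.0, 2.0]

m, c = taboo_expectations(P, w)
g = c[0] / m[0]                                   # g = c_00 / m_00
v = [c[i] - g * m[i] for i in range(len(P))]      # v_i = c_i0 - (c_00 / m_00) m_i0

# Residuals of the assumed system g + v_i = w_i + sum_j p_ij v_j.
residuals = [
    g + v[i] - w[i] - sum(P[i][j] * v[j] for j in range(len(P)))
    for i in range(len(P))
]
print(g, v, residuals)
```

Under these assumptions the residuals vanish up to rounding, and adding any constant r to every v i leaves them unchanged, which matches the one-parameter family of solutions in statement (ii).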

Derman (1970a) summarized the above advances on Markov decision processes with both finite and denumerable state spaces when the long-run average criterion is applied.

Derman and Veinott (1972) considered general finite state and action Markov decision processes when there are linear side conditions on the limit points of the expected state-action frequencies. This problem had been solved previously only for the case where every deterministic stationary policy gives rise to at most one ergodic class. In this paper they removed that restriction by applying the Dantzig-Wolfe decomposition principle.

In Brodheim et al. (1974), with Brodheim and Keyfitz, Cy studied a Markov chain that would arise in inventory control and blood management. The model involved a random walk that takes at most n steps to the right and has a reflecting barrier at the origin. Using complex analysis and other novel techniques, the authors thoroughly characterized the stationary probabilities of the chain.

6 Maintenance, quality, reliability

In many industries, continuous sampling of the output is essential when there is a need to rectify defective products. Multi-level continuous sampling is a generalization that is based on the specification of several levels of partial inspection. Derman et al. (1957) studied three generalizations of multi-level plans (MLPs). They obtained explicit relationships between the average outgoing quality limit (AOQL) and parameters for two of the plans. The relationship could be approximated for the third plan.

Derman and Solomon (1958) applied the theory of Markov processes to a sampling inspection problem. Lots of items are maintained, whereas the quality of each item deteriorates randomly over time. Lots are inspected and those failing are replaced with fresh ones. The objective is to maintain a high probability of having sufficient items of good quality at all times. The effectiveness of sampling plans and replacement policies was evaluated.

Derman et al. (1959) studied two inspection procedures that alternate between complete inspection and random sampling. Random sampling here refers to the inspection of one randomly chosen item from every k-item block. For both procedures, they derived the average outgoing quality limit without the assumption of control. That is, they let Nature play the adversary to each procedure and then derived its worst-case long-run proportion of undetected defective items. These average outgoing quality limit results were compared to those obtained under the “control assumption” that each item has the same probability p of being defective.

Derman (1961a) treated a surveillance-scheduling problem and derived the optimal schedule under a min-max criterion. In addition (Derman 1963a), he considered optimal replacement rules when changes of state are Markovian.

In Derman (1962b) he gave a simple rule for determining the most efficient sequence of independent inspections. Suppose test i costs \(c_{i}\), each product has an independent chance \(p_{i}\) of failing test i, and a product failing k out of n tests will be rejected. Then, he showed that the expected cost would be minimized by running the tests in increasing order of the ratio \(c_{i}/p_{i}\).
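The ratio rule can be illustrated by brute force in the simplest setting. The sketch below assumes the special case in which a product is rejected at its first failed test, so that testing stops there; the costs and failure probabilities are hypothetical, and the functions `expected_cost` and `ratio_order` are names introduced only for this illustration.

```python
from itertools import permutations


def expected_cost(order, cost, p_fail):
    """Expected testing cost when tests run in `order` and stop at the first failure."""
    total, p_reach = 0.0, 1.0
    for i in order:
        total += p_reach * cost[i]      # test i is run only if all earlier tests passed
        p_reach *= 1.0 - p_fail[i]
    return total


def ratio_order(cost, p_fail):
    """Indices of the tests sorted by increasing c_i / p_i."""
    return sorted(range(len(cost)), key=lambda i: cost[i] / p_fail[i])


# Hypothetical data: ratios c_i / p_i are 8, 10, 15 and 5.
cost = [4.0, 1.0, 3.0, 2.0]
p_fail = [0.5, 0.1, 0.2, 0.4]

rule = ratio_order(cost, p_fail)
best = min(permutations(range(len(cost))),
           key=lambda order: expected_cost(order, cost, p_fail))
```

An adjacent-interchange argument explains the result in this special case: running test a just before test b is cheaper exactly when \(c_{a}p_{b}\leq c_{b}p_{a}\).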

Derman (1963b) modeled the problem of making maintenance and replacement decisions to influence the random state evolution of a system as an MDP. His goal was to maximize the expected time length between replacements while considering potential side conditions that bound the probabilities of replacement through certain undesirable states. Using results from (Derman 1962a), Cy showed how linear programming could be used to formulate the problem.

Derman and Klein (1966) examined a stochastic traveling salesman problem, in which locations are to be inspected at required frequencies and traveling between locations is costly. The authors set up the problem as a Markovian decision process in order to find policies that minimize the average traveling cost in the long run while meeting inspection frequencies. They found Markov, potentially non-deterministic, stochastic decision rules to be advantageous.

Together with Lieberman and Ross, in Derman et al. (1972b) Derman studied the reliability of a system consisting of k components. When each component j is associated with a quality label \(a_{j}\), the probability that the system performs satisfactorily is given by some \(R(a_{1},\ldots,a_{k})\). Suppose each component j comes in n varieties with \(a^{j}_{1}\leq\cdots\leq a^{j}_{n}\). Then there are a huge number of ways in which n systems could be simultaneously assembled, each leading to a random number N of systems among the n that perform satisfactorily. Using an extension of a well-known result of Hardy, Littlewood, and Pólya on sums of products, the authors showed how to maximize E[N] when \(R(a_{1},\ldots,a_{k})\) acts as a joint cumulative distribution function: let the ith system have reliability \(R(a_{i}^{1},\ldots,a_{i}^{k})\); that is, assemble the worst components together, the second-worst components together, and so on.
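A small brute-force check may make the assembly rule concrete. The sketch assumes k=2 component types and the product form \(R(a_{1},a_{2})=a_{1}a_{2}\) (a series system of independent components, which is a joint distribution function on the unit square); the quality values and the function `expected_working` are hypothetical.

```python
from itertools import permutations


def expected_working(first, second, pairing):
    """E[N] when system i uses first[i] together with second[pairing[i]]."""
    return sum(first[i] * second[pairing[i]] for i in range(len(first)))


# Hypothetical component qualities, each list sorted in increasing order.
first = [0.55, 0.70, 0.85, 0.95]
second = [0.60, 0.75, 0.80, 0.90]

identity = tuple(range(len(first)))      # worst with worst, ..., best with best
best = max(permutations(identity),
           key=lambda pairing: expected_working(first, second, pairing))
```

With strictly increasing qualities, the search over all 24 pairings returns the identity pairing, in line with the rearrangement inequality.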

In a follow-up paper (Derman et al. 1974a), Derman, Lieberman, and Ross investigated two more reliability problems. The first problem is concerned with the assembly of independent components into parallel systems so as to maximize the expected number of systems that perform satisfactorily. Unlike a series system, which requires all its components to work, a parallel system performs satisfactorily so long as at least one of its components works. The authors showed that an optimal assembly can be obtained when the reliability of each assembled system can be made equal. Otherwise, bounds on the optimal number of performing systems were given. The second problem deals with the allocation of satisfactory-performance probabilities \(p_{m}\) among n components so as to maximize the reliability of a single system made out of these components. Optimal solutions were characterized for different system designs.

In the paper (Derman et al. 1978) Derman, Lieberman, and Ross were concerned with a system that needs to stay operational for T units of time. A key component of the system needs to be replaced every time it fails. Different types of this component have different exponential distributions for their life times and replacement costs. The authors studied how to assign the initial component and subsequent replacements so as to minimize the total expected cost over the time horizon. Later in Derman et al. (1979b) they continued to consider the case where the system’s operational time length T is random and there are two component types, one more costly and lasting longer and another less costly and lasting shorter. Results formalized the intuition that more expensive replacements would be used when the system is relatively new.

Derman and Smith (1979) followed with a more general case involving n component types, with positive component costs \(c_{1}<\cdots<c_{n}\) and exponential failure rates. They established the structure of an optimal policy when the distribution function F of T has increasing failure rate (IFR), i.e., \(F'(t)/(1-F(t))\) is increasing in t. They also developed an algorithm for finding an optimal policy. Some characterizations and properties of optimal policies were also obtained for the case where component life distributions are not exponential. Further, in Derman and Smith (1980), they offered an alternative proof for the result that, under reasonable conditions, the life distribution function F(t) of a system sustaining cumulative randomly arriving damages has the increasing failure rate on average (IFRA) property, i.e., \(-\frac{1}{t}\log (1-F(t))\) is increasing in t. The proof utilized the celebrated IFRA closure theorem in reliability theory.

Derman et al. (1982) studied a system that consists of n components that are linearly ordered. Each component either functions or fails and the system would be put out of service by the failure of any k consecutive components. When the status of each component is i.i.d., they provided recursive formulas and bounds for computing the system reliability. Some questions regarding optimal system design were also answered.
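One way to compute the reliability of such a system in the i.i.d. case is sketched below. It is a standard conditioning argument on the position of the first working component, not necessarily the recursion given in the paper; the function names and the parameter p (probability that a component works) are assumptions of this illustration. A brute-force enumeration is included as a cross-check.

```python
from itertools import product


def consecutive_k_reliability(n, k, p):
    """P(no k consecutive failures among n i.i.d. components), p = P(component works)."""
    q = 1.0 - p
    rel = [1.0] * (n + 1)               # fewer than k components can never fail the system
    for m in range(k, n + 1):
        # The first j components fail (j < k), the next one works, the rest start afresh.
        rel[m] = sum(q**j * p * rel[m - j - 1] for j in range(k))
    return rel[n]


def brute_force_reliability(n, k, p):
    """Same probability by enumerating all 2**n component states (small n only)."""
    total = 0.0
    for states in product([True, False], repeat=n):   # True means the component works
        run = longest = 0
        for works in states:
            run = 0 if works else run + 1
            longest = max(longest, run)
        if longest < k:
            prob = 1.0
            for works in states:
                prob *= p if works else 1.0 - p
            total += prob
    return total
```

For example, `consecutive_k_reliability(2, 2, 0.9)` equals \(1-0.1^{2}\) up to rounding, since a two-component system with k=2 fails only when both components fail.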

Derman et al. (1983) gave a probabilistic proof of the result that, for a birth and death process starting in state 0, the time of first passage into state n has an IFR distribution. A similar result was given for the nonnegative diffusion process. Derman, Lieberman, and Schechner in Derman et al. (1984b) gave formulas and tables that would enable users of the sequential probability ratio tests to make acceptance or rejection decisions when the test procedure is prematurely truncated. These tests would comply with the then-prevailing Military Standard MIL-STD 781C.

Derman et al. (1984a) considered an extreme replacement problem where a vital component such as a pacemaker has to be replaced before its failure knocks the system out of commission for good. With n spares with a common distribution of life, the objective was to schedule the replacements so as to maximize the expected life of the system. The authors showed that the maximum expected lifetime when n spares are available is increasing and concave in n. They also proved the intuitively expected result that the optimal length of time to use a component before replacement, when n spares are available, is decreasing in n. Also considered were various limiting relationships and equal-interval schedules.

Katehakis and Derman (1984) treated the optimal assignment over time of a single repairman to failed components in a series system with exponentially-distributed component failure and repair times. Component failures can occur even while the system is not functioning and the repairman can be freely re-assigned among failed components. They showed that assigning the repairman to the failed component with the smallest failure rate would always maximize the availability of the system. Their proof relied on establishing that the aforementioned policy solves the functional equations of an equivalent Markov decision problem of minimizing the expected first passage times to the functioning state. The same policy was shown by Katehakis and Derman (1987) to be optimal when discounting over time is involved. We note that this was an open problem at that time and many well known people in the field had worked on and provided solutions to versions of the problem.

Derman et al. (1987) studied a sampling inspection problem involving imperfect inspection, where a large lot is sampled by a number of inspectors with unknown probabilities of not detecting a defect for the purpose of ascertaining its number of defective units. A sampling plan was provided for estimating the number of defective units in the lot.

Derman and Koh (1988) used the mean square error criterion to compare two different estimators of software failure rate, and found that one tended to be better when the number of errors is small to moderate and the other when the number of errors is large. Also, Derman and Ross (1995) showed that a new estimator for the standard deviation parameter in the standard quality control model would have a smaller mean square error than the usual estimator when the data are normal. Unlike the usual estimator, it would remain consistent even when the data are not normal.

Katehakis and Derman (1989) investigated the problem of characterizing properties of optimal maintenance (i.e., server allocation to failed components) policies for systems composed of highly reliable components. The problem is to assign arriving failures to distinguishable servers so as to optimize a measure of system performance. This is an intractable problem due to the high dimension of the state space, even under the assumption of exponential distributions for the repair and failure-free times. To address this problem the authors employed Maclaurin series expansions of the value functions of related Markovian decision processes with respect to a parameter of the component failure or repair (service) rates. By studying the coefficients of these expansions they obtained solutions and characterizations for the important case of a highly reliable system of general structure function.

7 Inventory, assignment, allocation, investment

Derman worked on many operations research problems in which his mastery of probability and stochastic processes was put to good use. Roughly, we bin these problems into inventory control, stochastic assignment, stochastic sequential allocation, and investment. As in previous sections, we present his works in chronological order.

Derman and Klein (1958a) studied three problems, all concerning the optimal times to release items from storage to meet given demand schedules. The utility of an item is dependent on the age at which it is issued. The objective taken was the maximization of the total utility obtainable from the issuance of the items. The authors identified the convexity of the utility function, among other things, as the most prominent condition that would result in the optimality of the last in, first out (LIFO) issuance policies. Symmetrically, they showed the intimate link between a concave utility and first in, first out (FIFO) policies. In a follow-up note, Derman and Klein (1959) presented examples to which their earlier theory could be applied.

Derman (1959) considered a distribution-of-effort problem: how to divide T units of a product or service among k locations whose demands at the time of allocation are random, so that the total expected amount of demand satisfied is maximized. A general condition about demands was given under which an optimal allocation could be found in the interior of the feasible region for allocations \((a_{1},\ldots,a_{k})\). Also examined was the optimal decision on the total initial production T.

Derman and Lieberman (1967) worked on a discrete-time joint stocking and replacement problem. Items are purchased in N-sized batches, after each of which they are put into service one after another. Each item in service fails in a period with a certain probability, and before giving out or being replaced, it holds the same randomly generated performance level. The level in turn influences the cost incurred in each period the item is operational. The replenishment cost is of course dependent on the batch size N. The decision maker is to select the order size and the replacement policy that minimizes the expected cost per period in the long run.

The authors modeled the problem as a Markov decision problem with an average criterion and a denumerable state space. Based on a theoretical result concerning the optimality of deterministic Markovian policies under mild conditions, they showed that optimal replacement follows a threshold policy. They established that a policy improvement type procedure developed in Derman (1966) could be used to compute the optimal policy. Bounds were also established for the optimal replenishment batch size.

Derman et al. (1972a) studied a stochastic assignment problem, in which n men are available to perform n jobs that arrive in a sequential order. Each man i is associated with a positive number \(p_{i}\). Associated with the jth job is a random variable \(X_{j}\), the value of which is revealed upon arrival. The reward from assigning man i to job j when \(X_{j}=x_{j}\) is \(p_{i}x_{j}\). It is first assumed that the \(X_{j}\) are independent and identically distributed random variables. Let \(\pi(j)\) denote the man (identified by number) assigned to the jth arriving job by a policy \(\pi\). The objective is to find a \(\pi\) that maximizes the expectation \(E (\sum_{1}^{n} p_{\pi(j)}X_{j}) \).

A special case of this model is a generalization of the “asset disposal” problem studied previously by Samuel Karlin. Suppose that there are k≤n identical houses to be sold. Offers, assumed to be a sequence of independent identically distributed random variables \(X_{1},X_{2},\ldots\), arrive in a sequential manner. The seller must dispose of all k houses by no later than the nth offer. The problem is to determine which offers to accept in order to maximize the total expected profit. This problem becomes a special case of the stochastic assignment problem when one takes a sequence of \(p_{j}\) where the first k terms are 1 and the rest 0, with the interpretation that an assignment of an offer to a 1 (respectively to a 0) means acceptance (respectively rejection) of the offer.

The authors derived the structure of the optimal policy using induction and the Hardy, Littlewood, Pólya inequality, which states that for sequences of numbers \(p_{1},\ldots,p_{n}\) and \(x_{1},\ldots,x_{n}\), with \(p_{(1)}\leq p_{(2)}\leq\cdots\leq p_{(n)}\) and \(x_{(1)}\leq x_{(2)}\leq\cdots\leq x_{(n)}\) denoting the ordered values of the p’s and of the x’s, it is true that \(\sum_{1}^{n}p_{j}x_{j} \leq\sum_{1}^{n}p_{(j)}x_{(j)}\). They showed that at any stage when there are m pairs of men and jobs remaining, the real line ℜ could be partitioned into m intervals. When the value x of the emerging job falls into the kth interval, the remaining man with the kth ranked p value should be assigned to this job. More strikingly, the intervals do not depend on the p values, and they can be generated through an iterative procedure. When the reward is a supermodular function of \(p_{i}\) and \(x_{j}\) rather than just a simple product of them, the interval policy is still valid, albeit dependent on the p values and much more difficult to compute. A byproduct of this research is that the authors obtained the following generalization of the Hardy, Littlewood, Pólya inequality. When \(p_{1}\leq p_{2}\leq\cdots\leq p_{n}\) and the successive job values \(X_{1},X_{2},\ldots,X_{n}\) form a submartingale, the optimal policy is to assign man j to the jth arriving job for each j=1,…,n, because \(E[X_{j}]\) is increasing under the submartingale assumption. The point of this result is that for any value of \(X_{1}\) it is optimal to assign man 1 to job 1, and so on. The authors considered several extensions, such as the case in which there is a cost c(p) to retain a man having an associated p value, and general assignment reward functions r(p,x).
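The iterative generation of the intervals can be sketched for one concrete case. The code below assumes job values that are Uniform(0, 1) and uses the commonly quoted form of the recursion, in which each cut point for m+1 remaining jobs is the expected value of X clamped to the corresponding interval for m remaining jobs; this form is stated here as an assumption rather than quoted from the paper, and the function names are hypothetical.

```python
def clamp_mean_uniform(lo, hi):
    """E[min(max(X, lo), hi)] for X ~ Uniform(0, 1), with 0 <= lo <= hi <= 1."""
    return lo * lo + (hi * hi - lo * lo) / 2.0 + hi * (1.0 - hi)


def thresholds(stages):
    """Interior cut points when `stages` jobs remain (Uniform(0, 1) job values).

    Returns stages - 1 increasing numbers; with the end points 0 and 1 they
    split [0, 1] into `stages` intervals. No p values are needed.
    """
    cuts = []                            # one job left: a single interval
    for _ in range(stages - 1):
        ends = [0.0] + cuts + [1.0]
        cuts = [clamp_mean_uniform(ends[i], ends[i + 1])
                for i in range(len(ends) - 1)]
    return cuts


def assign(x, remaining_p):
    """p value of the man to assign to a job of value x under the interval policy."""
    cuts = thresholds(len(remaining_p))
    rank = sum(x > c for c in cuts)      # 0-based index of the interval containing x
    return sorted(remaining_p)[rank]
```

With three jobs remaining, the cut points under these assumptions are 3/8 and 5/8, so a job of value 0.7 goes to the man with the largest remaining p, whatever the p values are.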

Albright and Derman (1972) worked on the limiting behavior of the optimal assignment policy for the product-reward case. They showed that, as the number n of men and jobs approaches +∞, in the limit all men have the same 1/n chance of being assigned first.

Derman et al. (1974b) studied an optimal resource allocation problem, in which a fixed budget A was divided among the building of n components to ensure that k of them would be successful. The success rate of each component is a function P(x) of the money put into it. The log-concavity of P(⋅) was identified as a sufficient condition for the optimality of the equal-allocation rule. Cases with general P(⋅) but k=1 or P(x)=x were also analyzed. The authors further discuss this problem in Derman et al. (1975a).

Derman, Lieberman, and Ross in Derman et al. (1975b) addressed an often-encountered dilemma: whether to put more money into an available investment opportunity with decreasing marginal returns or wait for another opportunity whose appearance in the future is uncertain. Under both discrete- and continuous-time settings, the authors characterized the optimal policy as one advocating more caution when either money is tight or time is abundant. Under special profit functions, they gave closed-form expressions for the policy.

Brodheim et al. (1975) presented two inventory policies for perishable items such that the inventory level under one policy would dominate that under the other on each sample path. Derman and Ignall (1975) gave more general conditions under which the domination in steady-state distribution would still hold.

Derman et al. (1976) studied another sequential allocation problem. Here, a number of periods are allowed for the construction of a certain number of items. Each period is devoted to one item, with the success rate being an increasing function of the money invested into the construction. In the end, a convex cost is associated with the number of required items not yet constructed. An optimal investment policy was identified, in which the spending in a period is increasing in the number of items to be constructed and decreasing in the number of periods still remaining.

The same authors in Derman et al. (1979a) analyzed a classical model for selling an asset in which randomly distributed offers X come in daily and a decision must be made as to whether or not to sell. For each day the item remains unsold a maintenance cost c is incurred. Under a known offer-size distribution F, the optimal policy had been shown to be determined by a critical number \(x_{F}\), so that the first offer exceeding \(x_{F}\) is accepted.

Here, bounds on the returns of certain policies were obtained. Also established was a link between the NWUE property of F, which says that \(E_{F}[X-a\mid X>a]\geq E_{F}[X]\) for a≥0, and the monotone relationship between a resulting policy and that for an exponentially distributed offer with the same mean. For both cases where past offers may or may not be recalled, the authors derived properties regarding the optimal policies and value functions when Bayesian updating is used in learning the unknown offer-size distribution.
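For a known offer distribution, the critical number can be computed numerically. The sketch below assumes the classical textbook characterization that the critical number x solves \(E[(X-x)^{+}]=c\), that is, the expected gain from waiting one more day equals the daily cost; this characterization, the exponential example and the function `critical_number` are assumptions of the illustration, not details taken from the paper.

```python
import math


def critical_number(expected_excess, c, lo, hi, tol=1e-12):
    """Solve expected_excess(x) = c by bisection.

    expected_excess(x) should return E[(X - x)^+] and be decreasing in x,
    with expected_excess(lo) > c > expected_excess(hi).
    """
    while hi - lo > tol:
        mid = (lo + hi) / 2.0
        if expected_excess(mid) > c:
            lo = mid                     # waiting still pays at mid: threshold is higher
        else:
            hi = mid
    return (lo + hi) / 2.0


# Hypothetical example: exponential offers with mean 10 and daily cost 1.
mean = 10.0
c = 1.0


def excess_exp(x):
    """E[(X - x)^+] for an exponential offer with the given mean, x >= 0."""
    return mean * math.exp(-x / mean)


x_exp = critical_number(excess_exp, c, 0.0, 1000.0)
```

In the exponential case the equation has the closed form \(x=\mu\log(\mu/c)\) for mean \(\mu\), which gives a direct check on the bisection result.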

Derman et al. (1979c) studied a variant of the famous secretary problem. The problem is to find the best rule for when to stop interviewing candidates for a secretarial position. When there are M candidates, it is known that the interviewer should pick the first candidate who is the best seen so far after about M/e interviews, where e is the base of the natural logarithm. Here the authors let M be random and gave sufficient conditions on its distribution that lead to simple optimal policies.
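The M/e rule for a fixed, known M can be reproduced with a few lines. The sketch below covers only this classical fixed-M case, not the random-M variant studied in the paper; it uses the standard formula for the success probability of a cutoff rule, and the function names are hypothetical.

```python
def success_probability(M, r):
    """P(selecting the best of M) when the first r candidates are passed over
    and the first later candidate who beats all predecessors is chosen."""
    if r == 0:
        return 1.0 / M                   # the very first candidate is always chosen
    return (r / M) * sum(1.0 / (i - 1) for i in range(r + 1, M + 1))


def best_cutoff(M):
    """Number of initial candidates to pass over that maximizes the success probability."""
    return max(range(M), key=lambda r: success_probability(M, r))
```

For M=100 the maximizing cutoff is 37, close to 100/e ≈ 36.8, with a success probability of roughly 0.371.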

Furthermore, Derman et al. (1980) considered a dynamic assignment problem in which multiple servers with exponential service times receive customers that arrive at random times, and customers are lost when all servers are occupied. The authors showed that assigning an arrival to an available server with the highest (lowest) rate would stochastically minimize (maximize) the number of customers in the system. The result was applied to a particular component-repair problem with exponential repair times in which the policy of always repairing the component least prone to fail was shown to stochastically maximize the number of working components.

Katehakis and Derman (1986) formulated the problem of assigning one of several treatments in clinical trials as a discounted bandit problem, using a Bayes framework with Dirichlet priors. A solution involves comparison of certain treatment-dependent indices, where each index depends only on the past success-failure record of its own treatment. In each period it is optimal to select the treatment with the largest index. Here, the authors found an efficient way to calculate the indices, using a characterization of the index as a solution to a restart problem that had been obtained by Katehakis and Veinott. They further showed how solutions of any prescribed accuracy could be obtained with computations that involved a finite number of state variables.

8 Some additional pictures

Cy Derman with Ingram Olkin and Leon Gleser and a copy of their book at Stanford in 1986

Cy Derman at Columbia in 1973

Cy Derman elected fellow of ASA

Cy Derman with his father in 1945 at the occasion of his college graduation

Cy Derman (undated) with his violin

Cy Derman (undated)

Cy Derman with his daughter Hessy, at Imperial College London in 1970

References

Albright, S. C., & Derman, C. (1972). Asymptotic optimal policies for the stochastic sequential assignment problem. Management Science, 19(1), 46–51.

Brodheim, E., Derman, C., & Keyfitz, B. L. (1974). On the stationary probabilities for a certain class of denumerable Markov chains. Jnanabha (Sec. A), 4, 93–103.

Brodheim, E., Derman, C., & Prastacos, G. (1975). On the evaluation of a class of inventory policies for perishable products such as blood. Management Science, 21(11), 1320–1325.

Chung, K., & Derman, C. (1956). Non-recurrent random walks. Pacific Journal of Mathematics, 6(3), 441–447.

Derman, C. (1954a). Ergodic property of the Brownian motion process. Proceedings of the National Academy of Sciences of the United States of America, 40(12), 1155–1158.

Derman, C. (1954b). A solution to a set of fundamental equations in Markov chains. Proceedings of the American Mathematical Society, 5(2), 332–334.

Derman, C. (1955). Some contributions to the theory of denumerable Markov chains. Transactions of the American Mathematical Society, 79(2), 541–555.

Derman, C. (1956a). Some asymptotic distribution theory for Markov chains with a denumerable number of states. Biometrika, 43(3–4), 285–294.

Derman, C. (1956b). An application of Chung’s lemma to the Kiefer–Wolfowitz stochastic approximation procedure. The Annals of Mathematical Statistics, 27(2), 532–536.

Derman, C. (1956c). Stochastic approximation. The Annals of Mathematical Statistics, 27(4), 879–886.

Derman, C. (1957). Non-parametric up-and-down experimentation. The Annals of Mathematical Statistics, 28(3), 795–798.

Derman, C. (1959). A simple allocation problem. Management Science, 5(4), 453–459.

Derman, C. (1961a). On minimax surveillance schedules. Naval Research Logistics Quarterly, 8(4), 415–419.

Derman, C. (1961b). Remark concerning two-state semi-Markov processes. The Annals of Mathematical Statistics, 32(2), 615–616.

Derman, C. (1962a). On sequential decisions and Markov chains. Management Science, 9(1), 16–24.

Derman, C. (1962b). The sequencing of tests. In Columbia engineering quarterly (pp. 1175–1191).

Derman, C. (1963a). On optimal replacement rules when changes of state are Markovian. In R. Bellman (Ed.), Mathematical optimization techniques (Vol. 396, pp. 201–210). California: University of California Press.

Derman, C. (1963b). Optimal replacement and maintenance under Markovian deterioration with probability bounds on failure. Management Science, 9(3), 478–481.

Derman, C. (1963c). Stable sequential control rules and Markov chains. Journal of Mathematical Analysis and Applications, 6(2), 257–265.

Derman, C. (1964a). Markovian sequential decision processes. Proceedings of Symposia in Applied Mathematics, 16, 281–289.

Derman, C. (1964b). On sequential control processes. The Annals of Mathematical Statistics, 35(1), 341–349.

Derman, C. (1966). Denumerable state Markovian decision processes-average cost criterion. The Annals of Mathematical Statistics, 37(6), 1545–1553.

Derman, C. (1970a). Markovian decision processes—average cost criterion. Mathematics of the decision sciences, Part 2. American Mathematical Society, 11, 139–149.

Derman, C. (1970b). Finite state Markovian decision processes. New York: Academic Press.

Derman, C., & Ignall, E. (1969). On getting close to but not beyond a boundary (optimal rule for decision to stop or continue observation of random variables after observing sequence of variables with continuous distribution function). Journal of Mathematical Analysis and Applications, 28, 128–143.

Derman, C., & Ignall, E. (1975). On the stochastic ordering of Markov chains. Operations Research, 23(3), 574–576.

Derman, C., & Klein, M. (1958a). Inventory depletion management. Management Science, 4(4), 450–456.

Derman, C., & Klein, M. (1958b). On the feasibility of using a multiple linear regression model for verifying a declared inventory. In S. Melman (Ed.), Inspection for disarmament (pp. 220–229). Columbia: Columbia University Press.

Derman, C., & Klein, M. (1959). Discussion: a note on the optimal depletion of inventory. Management Science, 5(2), 210–213.

Derman, C., & Klein, M. (1965). Some remarks on finite horizon Markovian decision models. Operations Research, 13(2), 272–278.

Derman, C., & Klein, M. (1966). Surveillance of multi-component systems: a stochastic traveling salesman’s problem. Naval Research Logistics Quarterly, 13(2), 103–111.

Derman, C., & Klein, M. (1970). Probability and statistical inference for engineers: a first course. Oxford: Oxford University Press.

Derman, C., & Koh, S. P. (1988). On the comparison of two software reliability estimators. Probability in the Engineering and Informational Sciences, 2(1), 15–21.

Derman, C., & Lieberman, G. (1967). A Markovian decision model for a joint replacement and stocking problem. Management Science, 13(9), 609–617.

Derman, C., & Robbins, H. (1955). The strong law of large numbers when the first moment does not exist. Proceedings of the National Academy of Sciences of the United States of America, 41(8), 586–587.

Derman, C., & Ross, S. (1995). An improved estimator of σ in quality control. Probability in the Engineering and Informational Sciences, 9(3), 411–416.

Derman, C., & Ross, S. (1996). Statistical aspects of quality control. New York: Prentice Hall.

Derman, C., & Sacks, J. (1959). On Dvoretzky’s stochastic approximation theorem. The Annals of Mathematical Statistics, 30(2), 601–606.

Derman, C., & Sacks, J. (1960). Replacement of periodically inspected equipment (an optimal optional stopping rule). Naval Research Logistics Quarterly, 7(4), 597–607.

Derman, C., & Smith, D. (1979). Renewal decision problem-random horizon. Mathematics of Operations Research, 4(3), 225–232.

Derman, C., & Smith, D. (1980). An alternative proof of the IFRA property of some shock models. Naval Research Logistics Quarterly, 27(4), 703–707.

Derman, C., & Solomon, H. (1958). Development and evaluation of surveillance sampling plans. Management Science, 5(1), 72–88.

Derman, C., & Strauch, R. (1966). A note on memoryless rules for controlling sequential control processes. The Annals of Mathematical Statistics, 37(1), 276–278.

Derman, C., & Veinott, A. F. Jr. (1967). A solution to a countable system of equations arising in Markovian decision processes. The Annals of Mathematical Statistics, 38(2), 582–584.

Derman, C., & Veinott, A. F. Jr. (1972). Constrained Markov decision chains. Management Science, 19(4-Part-1), 389–390.

Derman, C., Littauer, S., & Solomon, H. (1957). Tightened multi-level continuous sampling plans. The Annals of Mathematical Statistics, 28(2), 395–404.

Derman, C., Johns, M. Jr., & Lieberman, G. (1959). Continuous sampling procedures without control. The Annals of Mathematical Statistics, 30(4), 1175–1191.

Derman, C., Lieberman, G., & Ross, S. (1972a). A sequential stochastic assignment problem. Management Science, 18(7), 349–355.

Derman, C., Lieberman, G., & Ross, S. (1972b). On optimal assembly of systems. Naval Research Logistics Quarterly, 19(4), 569–574.

Derman, C., Gleser, L. J., & Olkin, I. (1973). A guide to probability theory and application. Holt, Rinehart and Winston.

Derman, C., Lieberman, G., & Ross, S. (1974a). Assembly of systems having maximum reliability. Naval Research Logistics Quarterly, 21(1), 1–12.

Derman, C., Lieberman, G., & Ross, S. (1974b). Optimal allocations in the construction of k-out-of-n reliability systems. Management Science, 21(3), 241–250.

Derman, C., Lieberman, G., & Ross, S. (1975a). Optimal allocation of resources in systems. In SIAM, conference volume on reliability and fault tree analysis (pp. 307–317).

Derman, C., Lieberman, G., & Ross, S. (1975b). A stochastic sequential allocation model. Operations Research, 23(6), 1120–1130.

Derman, C., Lieberman, G., & Ross, S. (1976). Optimal system allocations with penalty cost. Management Science, 23(4), 399–403.

Derman, C., Lieberman, G., & Ross, S. (1978). A renewal decision problem. Management Science, 24(5), 554–561.

Derman, C., Lieberman, G., & Ross, S. (1979a). Adaptive disposal models. Naval Research Logistics Quarterly, 26(1), 33–40.

Derman, C., Lieberman, G., & Ross, S. (1979b). On renewal decisions. In Dynamic programming and its applications (pp. 307–317). New York: Academic Press.

Derman, C., Lieberman, G., & Ross, S. (1979c). On the candidate problem with a random number of candidates. Tech. Rep. 192, Stanford University.

Derman, C., Lieberman, G., & Ross, S. (1980). On the optimal assignment of servers and a repairman. Journal of Applied Probability, 17(2), 577–581.

Derman, C., Lieberman, G., & Ross, S. (1982). On the consecutive-k-of-n: F system. IEEE Transactions on Reliability, 31(1), 57–63.

Derman, C., Ross, S., & Schechner, Z. (1983). A note on first passage times in birth and death and nonnegative diffusion processes. Naval Research Logistics Quarterly, 30(2), 283–285.

Derman, C., Lieberman, G., & Ross, S. (1984a). On the use of replacements to extend system life. Operations Research, 32(3), 616–627.

Derman, C., Lieberman, G., & Schechner, Z. (1984b). Prematurely terminated sequential tests for MIL-STD 781C. In Frontiers in statistical quality control. Berlin: Springer.

Derman, C., Lieberman, G., & Ross, S. (1987). On sampling inspection in the presence of inspection errors. Probability in the Engineering and Informational Sciences, 1(02), 237–249.

Katehakis, M., & Derman, C. (1984). Optimal repair allocation in a series system. Mathematics of Operations Research, 9(4), 615–623.

Katehakis, M., & Derman, C. (1986). Computing optimal sequential allocation rules in clinical trials. In J. Van Ryzin (Ed.), Lecture notes-monograph series: Vol. 8. Adaptive statistical procedures and related topics (pp. 29–39).

Katehakis, M., & Derman, C. (1987). Optimal repair allocation in a series system, expected discounted operation time criterion. Stochastic Analysis and Applications, 5(4), 387–394.

Katehakis, M. N., & Derman, C. (1989). On the maintenance of systems composed of highly reliable components. Management Science, 35(5), 551–560.

Olkin, I., Gleser, L. J., & Derman, C. (1994). Probability models and applications. New York: Prentice Hall.

Acknowledgements

We would like to thank Hessy Derman, who provided many of the pictures of Cy. Martin Winn, a nephew of Cy, reminded us of the story of Cy’s meeting with Martha.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution License which permits any use, distribution, and reproduction in any medium, provided the original author(s) and the source are credited.

Cite this article

Katehakis, M.N., Olkin, I., Ross, S.M. et al. On the life and work of Cyrus Derman. Ann Oper Res 208, 5–26 (2013). https://doi.org/10.1007/s10479-013-1429-x

DOI: https://doi.org/10.1007/s10479-013-1429-x