Abstract

This paper deals with a projection least squares estimator of the drift function of a jump diffusion process X computed from multiple independent copies of X observed on [0, T]. Risk bounds are established on this estimator and on an associated adaptive estimator. Finally, some numerical experiments are provided.


References

Abramowitz, M., Stegun, I.A. (1964). Handbook of Mathematical Functions with Formulas, Graphs, and Mathematical Tables. USA: Dover Publications.

Amorino, C., Gloter, A. (2021). Invariant density adaptive estimation for ergodic jump diffusion processes over anisotropic classes. Journal of Statistical Planning and Inference, 213, 106–129.

Amorino, C., Dion-Blanc, C., Gloter, A., Lemler, S. (2022). On the nonparametric inference of coefficients of self-exciting jump-diffusion. Electronic Journal of Statistics, 16, 3212–3277.

Applebaum, D. (2009). Lévy Processes and Stochastic Calculus. Cambridge: Cambridge University Press.

Belomestny, D., Comte, F., Genon-Catalot, V. (2019). Sobolev-Hermite versus Sobolev nonparametric density estimation on \({\mathbb{R} }\). Annals of the Institute of Statistical Mathematics, 71(1), 29–62.

Bichteler, K., Jacod, J. (1983). Calcul de Malliavin pour les diffusions avec sauts : existence d’une densité dans le cas unidimensionnel. Séminaire de Probabilités, 17, 132–157.

Chen, Z.Q., Hu, E., Xie, L., Zhang, X. (2017). Heat Kernels for nonsymmetric diffusion operators with jumps. Journal of Differential Equations, 263(10), 6576–6634.

Clément, E., Gloter, A. (2019). Estimating Functions for SDE Driven by Stable Lévy Processes. Annales de l’Institut Henri Poincaré (B), 55(3), 1316–1348.

Clément, E., Gloter, A. (2020). Joint estimation for SDE driven by locally stable Lévy processes. Electronic Journal of Statistics, 14(2), 2922–2956.

Cohen, A., Davenport, M.A., Leviatan, D. (2013). On the stability and accuracy of least squares approximations. Foundations of Computational Mathematics, 13, 819–834.

Comte, F. (2001). Adaptive estimation of the spectrum of a stationary Gaussian sequence. Bernoulli, 7(2), 267–298.

Comte, F., Genon-Catalot, V. (2020a). Regression function estimation as a partly inverse problem. Annals of the Institute of Statistical Mathematics, 72(4), 1023–1054.

Comte, F., Genon-Catalot, V. (2020b). Nonparametric drift estimation for i.i.d. paths of stochastic differential equations. The Annals of Statistics, 48(6), 3336–3365.

Delattre, M., Genon-Catalot, V., Samson, A. (2013). Maximum likelihood estimation for stochastic differential equations with random effects. Scandinavian Journal of Statistics, 40, 322–343.

Dellacherie, C.A., Meyer, P.A. (1980). Probabilités et potentiel : théorie des martingales. Paris: Hermann.

Denis, C., Dion-Blanc, C., Martinez, M. (2021). A ridge estimator of the drift from discrete repeated observations of the solution of a stochastic differential equation. Bernoulli, 27, 2675–2713.

DeVore, R.A., Lorentz, G.G. (1993). Constructive Approximation. Berlin, Heidelberg: Springer.

Ditlevsen, S., De Gaetano, A. (2005). Mixed effects in stochastic differential equation models. REVSTAT, 3, 137–153.

Dussap, F. (2021). Anisotropic multivariate deconvolution using projection on the Laguerre basis. Journal of Statistical Planning and Inference, 215, 23–46.

Gloter, A., Loukianova, D., Mai, H. (2018). Jump filtering and efficient drift estimation for Lévy-Driven SDEs. The Annals of Statistics, 46(4), 1445–1480.

Marie, N., Rosier, A. (2023). Nadaraya-Watson Estimator for I.I.D paths of diffusion processes. Scandinavian Journal of Statistics, 50(2), 589–637.

Picchini, U., Ditlevsen, S. (2011). Practical estimation of high dimensional stochastic differential mixed-effects models. Computational Statistics and Data Analysis, 55, 1426–1444.

Schmisser, E. (2014). Non-parametric adaptive estimation of the drift for a jump diffusion process. Stochastic Processes and their Applications, 124, 883–914.


Appendices

Appendix A. Proofs

A.1 Proof of Lemma 1

First, let us show that the symmetric matrix \({\varvec{\Psi }}_{m,\sigma }\) is positive semidefinite. Indeed, for any \(y\in {\mathbb {R}}^m\),

Moreover, by the isometry property of Itô’s integral (with respect to B), by the isometry type property of the stochastic integral with respect to \({\mathfrak {Z}}\), and since \(\sigma \) and \(\gamma \) are bounded, for every \(j\in \{1,\dots ,m\}\),

Therefore, since \({\varvec{\Psi }}_{m,\sigma }\) is positive semidefinite, and by Inequality (),

\(\Box \)
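The mechanism behind this trace bound can be checked numerically. The sketch below is only an illustration, not the authors' computation. It assumes, as the use of Lemma 1 in the proof of Theorem 1 suggests, that the lemma controls \(\textrm{trace}({\varvec{\Psi }}_{m}^{-1/2}{\varvec{\Psi }}_{m,\sigma }{\varvec{\Psi }}_{m}^{-1/2})\) by m times a bound on the coefficients. The expectations are replaced by empirical Gram matrices; the monomial basis, the Gaussian sample points, the weight `w` and the constant `C` (a stand-in for \(\Vert \sigma \Vert _{\infty }^{2} +\lambda \mathfrak c_{\zeta ^2}\Vert \gamma \Vert _{\infty }^{2}\)) are all hypothetical choices.

```python
import numpy as np

rng = np.random.default_rng(0)
m, n_samples, C = 5, 5000, 2.5

# Hypothetical design: monomial basis 1, x, ..., x^(m-1) at Gaussian points.
x = rng.normal(size=n_samples)
Phi = np.vander(x, m, increasing=True)          # shape (n_samples, m)

# Nonnegative weights bounded by C (stand-in for the bounded coefficients).
w = C * rng.uniform(size=n_samples)

Psi = Phi.T @ Phi / n_samples                   # Gram matrix
Psi_w = (Phi * w[:, None]).T @ Phi / n_samples  # weighted Gram matrix

# trace(Psi^{-1/2} Psi_w Psi^{-1/2}) = trace(Psi^{-1} Psi_w) by cyclicity.
trace_value = float(np.trace(np.linalg.solve(Psi, Psi_w)))
min_eig_w = float(np.linalg.eigvalsh(Psi_w).min())

print(f"trace = {trace_value:.3f}, bound C*m = {C * m:.3f}")
```

Since `C * Psi - Psi_w` is an average of positive semidefinite rank-one matrices, the weighted Gram matrix is dominated by `C * Psi`, which is why the trace cannot exceed `C * m`.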

A.2 Proof of Theorem 1

The proof of Theorem 1 relies on the following two lemmas.

Lemma 2

There exists a constant \(\mathfrak c_{2} > 0\), not depending on m and N, such that

Lemma 3

Consider the event

Under Assumption 1, there exists a constant \({\mathfrak {c}}_{3} > 0\), not depending on m and N, such that

The proof of Lemma 2 is postponed to Subsubsection A.2.2, and the proof of Lemma 3 remains the same as the proof of Comte and Genon-Catalot (2020b), Lemma 6.1, because \((B^1,Z^1),\dots ,(B^N,Z^N)\) are independent.

A.2.1 Steps of the proof

First of all,

Let us find suitable bounds on \({\mathbb {E}}(U_1)\), \({\mathbb {E}}(U_2)\) and \({\mathbb {E}}(U_3)\).

- Bound on \({\mathbb {E}}(U_1)\). By Cauchy-Schwarz’s inequality,

$$\begin{aligned} {\mathbb {E}}(U_1)\leqslant & {} {\mathbb {E}}(\Vert b_I\Vert _{N}^{4})^{1/2}\mathbb P(\Lambda _{m}^{c})^{1/2} \leqslant \mathbb E\left( \frac{1}{T}\int _{0}^{T}b_I(X_t)^4dt\right) ^{1/2} {\mathbb {P}}(\Lambda _{m}^{c})^{1/2}\\\leqslant & {} {\mathfrak {c}}_1{\mathbb {P}}(\Lambda _{m}^{c})^{1/2}<\infty \quad \textrm{with}\quad {\mathfrak {c}}_1 =\left( \int _{-\infty }^{\infty }b_I(x)^4f_T(x)dx\right) ^{1/2} <\infty . \end{aligned}$$

- Bound on \({\mathbb {E}}(U_2)\). Let \(\Pi _{N,m}(.)\) be the orthogonal projection from \({\mathbb {L}}^2(I,f_T(x)dx)\) onto \(\mathcal S_m\) with respect to the empirical scalar product \(\langle .,.\rangle _N\). Then,

$$\begin{aligned} \Vert {\widehat{b}}_m - b_I\Vert _{N}^{2} = \Vert {\widehat{b}}_m -\Pi _{N,m}(b_I)\Vert _{N}^{2} + \min _{\tau \in {\mathcal {S}}_m}\Vert b_I -\tau \Vert _{N}^{2}. \end{aligned} \qquad (6)$$

As in the proof of Comte and Genon-Catalot (2020b), Proposition 2.1, on \(\Omega _m\),

$$\begin{aligned} \Vert {\widehat{b}}_m -\Pi _{N,m}(b_I)\Vert _{N}^{2} = \widehat{\textbf{E}}_{m}^{*}{\widehat{\varvec{\Psi }}}_{m}^{-1}\widehat{\textbf{E}}_m \leqslant 2\widehat{\textbf{E}}_{m}^{*}{\varvec{\Psi }}_{m}^{-1}\widehat{\textbf{E}}_m. \end{aligned}$$

So,

$$\begin{aligned} {\mathbb {E}}(\Vert {\widehat{b}}_m -\Pi _{N,m}(b_I)\Vert _{N}^{2} {\textbf {1}}_{\Lambda _m\cap \Omega _m})\leqslant & {} 2\mathbb E\left( \sum _{j,\ell = 1}^{m}[\widehat{\textbf{E}}_m]_j[\widehat{\textbf{E}}_m]_{\ell } [{\varvec{\Psi }}_{m}^{-1}]_{j,\ell }\right) \\= & {} \frac{2}{NT}\sum _{j,\ell = 1}^{m} [{\varvec{\Psi }}_{m,\sigma }]_{j,\ell } [{\varvec{\Psi }}_{m}^{-1}]_{j,\ell } = \frac{2}{NT}\textrm{trace}({\varvec{\Psi }}_{m}^{-1/2} {\varvec{\Psi }}_{m,\sigma } {\varvec{\Psi }}_{m}^{-1/2}). \end{aligned}$$

Then, by Equality (6) and Lemma 1,

$$\begin{aligned} {\mathbb {E}}(U_2)\leqslant & {} {\mathbb {E}}\left( \min _{\tau \in \mathcal S_m}\Vert b_I -\tau \Vert _{N}^{2}\right) + \frac{2}{NT}\textrm{trace}({\varvec{\Psi }}_{m}^{-1/2} {\varvec{\Psi }}_{m,\sigma } {\varvec{\Psi }}_{m}^{-1/2})\\\leqslant & {} \min _{\tau \in {\mathcal {S}}_m}\Vert b_I -\tau \Vert _{f_T}^{2} + \frac{2m}{NT} (\Vert \sigma \Vert _{\infty }^{2} +\lambda \mathfrak c_{\zeta ^2}\Vert \gamma \Vert _{\infty }^{2}). \end{aligned}$$

- Bound on \({\mathbb {E}}(U_3)\). Since

$$\begin{aligned} \Vert {\widehat{b}}_m -\Pi _{N,m}(b_I)\Vert _{N}^{2} = \widehat{\textbf{E}}_{m}^{*}{\widehat{\varvec{\Psi }}}_{m}^{-1}\widehat{\textbf{E}}_m, \end{aligned}$$

by the definition of the event \(\Lambda _m\), and by Lemma 2,

$$\begin{aligned} {\mathbb {E}}(\Vert {\widehat{b}}_m -\Pi _{N,m}(b_I)\Vert _{N}^{2} {\textbf {1}}_{\Lambda _m\cap \Omega _{m}^{c}})\leqslant & {} \mathbb E(\Vert {\widehat{\varvec{\Psi }}}_{m}^{-1}\Vert _{\textrm{op}} |\widehat{\textbf{E}}_{m}^{*}\widehat{\textbf{E}}_m| {{\textbf {1}}}_{\Lambda _m\cap \Omega _{m}^{c}})\\\leqslant & {} \frac{{\mathfrak {c}}_T}{L(m)}\cdot \frac{NT}{\log (NT)} {\mathbb {E}}(|\widehat{\textbf{E}}_{m}^{*}\widehat{\textbf{E}}_m|^2)^{1/2} {\mathbb {P}}(\Omega _{m}^{c})^{1/2} \leqslant \frac{\mathfrak c_2m^{1/2}}{\log (NT)}{\mathbb {P}}(\Omega _{m}^{c})^{1/2}, \end{aligned}$$

where the constant \({\mathfrak {c}}_2 > 0\) does not depend on m and N. Moreover,

$$\begin{aligned} \min _{\tau \in {\mathcal {S}}_m}\Vert \tau - b_I\Vert _{N}^{2} \leqslant \Vert b_I\Vert _{N}^{2} \quad \textrm{because}\quad 0\in {\mathcal {S}}_m, \end{aligned}$$

and then,

$$\begin{aligned} \Vert {\widehat{b}}_m - b_I\Vert _{N}^{2}= & {} \Vert {\widehat{b}}_m -\Pi _{N,m}(b_I)\Vert _{N}^{2} + \min _{\tau \in {\mathcal {S}}_m}\Vert \tau - b_I\Vert _{N}^{2}\\\leqslant & {} \Vert {\widehat{b}}_m -\Pi _{N,m}(b_I)\Vert _{N}^{2} +\Vert b_I\Vert _{N}^{2}. \end{aligned}$$

Therefore,

$$\begin{aligned} {\mathbb {E}}(U_3)\leqslant & {} {\mathbb {E}}(\Vert {\widehat{b}}_m -\Pi _{N,m}(b_I)\Vert _{N}^{2} {{\textbf {1}}}_{\Lambda _m\cap \Omega _{m}^{c}}) + {\mathbb {E}}(\Vert b_I\Vert _{N}^{2}{{\textbf {1}}}_{\Lambda _m\cap \Omega _{m}^{c}})\\\leqslant & {} \frac{{\mathfrak {c}}_2m^{1/2}}{\log (NT)} \mathbb P(\Omega _{m}^{c})^{1/2} + {\mathfrak {c}}_1\mathbb P(\Omega _{m}^{c})^{1/2}. \end{aligned}$$

So,

Therefore, by Lemma 3, there exists a constant \({\mathfrak {c}}_3 > 0\), not depending on m and N, such that

\(\square \)
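The quantities used in this proof (the empirical Gram matrix \({\widehat{\varvec{\Psi }}}_m\), the estimator \({\widehat{b}}_m\) and the empirical projection \(\Pi _{N,m}(b_I)\)) can be computed on simulated paths. The following minimal sketch is an illustration under stated assumptions, not the authors' implementation. It assumes that the coefficients of \({\widehat{b}}_m\) solve the linear system with matrix \({\widehat{\varvec{\Psi }}}_m\) and right-hand side \(\frac{1}{NT}\sum _i\int _{0}^{T}\varphi _j(X_{s}^{i})dX_{s}^{i}\), in the spirit of Comte and Genon-Catalot (2020b). The toy model (drift \(b(x) = -x\), unit coefficients, Gaussian jumps), the Euler discretization, the trigonometric basis on \(I = [-2,2]\) and all function names are hypothetical choices. The last lines check the Pythagoras-type Equality (6) for the discretized empirical norm.

```python
import numpy as np

def trig_basis(x, m, a=-2.0, b=2.0):
    """First m trigonometric functions on I = [a, b], set to 0 outside I."""
    x = np.asarray(x, dtype=float)
    u = (x - a) / (b - a)
    cols = [np.full_like(x, 1.0 / np.sqrt(b - a))]
    k = 1
    while len(cols) < m:
        cols.append(np.sqrt(2.0 / (b - a)) * np.cos(2 * np.pi * k * u))
        cols.append(np.sqrt(2.0 / (b - a)) * np.sin(2 * np.pi * k * u))
        k += 1
    inside = ((x >= a) & (x <= b)).astype(float)
    return np.stack(cols[:m], axis=-1) * inside[..., None]

def simulate_paths(N, n, T, rng, lam=1.0):
    """Euler scheme for dX = -X dt + dB + dZ, with Z a compound Poisson
    process of intensity lam and centered N(0, 0.25) jumps (toy choices)."""
    dt = T / n
    X = np.zeros((N, n + 1))
    for k in range(n):
        dB = rng.normal(0.0, np.sqrt(dt), size=N)
        n_jumps = rng.poisson(lam * dt, size=N)
        # Sum of n_jumps i.i.d. N(0, 0.25) variables, drawn in one shot.
        dZ = 0.5 * np.sqrt(n_jumps) * rng.normal(size=N)
        X[:, k + 1] = X[:, k] - X[:, k] * dt + dB + dZ
    return X

rng = np.random.default_rng(1)
N, n, T, m = 200, 200, 5.0, 5
dt = T / n
X = simulate_paths(N, n, T, rng)

x_left = X[:, :-1].reshape(N * n)                  # left endpoints of each step
Phi = trig_basis(x_left, m)                        # shape (N * n, m)
dX = np.diff(X, axis=1).reshape(N * n)             # increments of X

Psi_hat = Phi.T @ Phi * dt / (N * T)               # empirical Gram matrix
Z_hat = Phi.T @ dX / (N * T)                       # discretized stochastic integrals
theta_hat = np.linalg.solve(Psi_hat, Z_hat)        # coefficients of the estimator

# Empirical projection of b_I(x) = -x 1_I(x) and check of Equality (6).
b_I = -x_left * (np.abs(x_left) <= 2.0)
theta_proj = np.linalg.solve(Psi_hat, Phi.T @ b_I * dt / (N * T))
sq_norm = lambda v: float(np.mean(v ** 2))         # discretized empirical squared norm
lhs = sq_norm(Phi @ theta_hat - b_I)
rhs = sq_norm(Phi @ theta_hat - Phi @ theta_proj) + sq_norm(b_I - Phi @ theta_proj)
print(f"lhs = {lhs:.6f}, rhs = {rhs:.6f}")
```

The two sides agree up to rounding because the residual of the empirical projection is orthogonal to every column of `Phi`, whatever element of the approximation space is plugged in.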

A.2.2 Proof of Lemma 2

In the sequel, the quadratic variation of any piecewise continuous stochastic process \((\Gamma _t)_{t\in [0,T]}\) is denoted by \((\llbracket \Gamma \rrbracket _t)_{t\in [0,T]}\). First of all, note that since B and Z are independent, for every \(j\in \{1,\dots ,m\}\),

where, for every \(t\in [0,T]\),

By Jensen’s inequality and Burkholder-Davis-Gundy’s inequality (see Dellacherie and Meyer, 1980, p. 303), there exists a constant \({\mathfrak {c}}_1 > 0\), not depending on m and N, such that

By Jensen’s inequality,

Moreover, since \(\Vert {{\textbf {x}}}\Vert _{4,m}\leqslant \Vert {{\textbf {x}}}\Vert _{2,m}\) for every \({{\textbf {x}}}\in {\mathbb {R}}^m\) (indeed, \(\sum _{j}x_{j}^{4}\leqslant (\sum _{j}x_{j}^{2})^2\)),

So, by applying twice the Fubini–Tonelli theorem,

and by the isometry type property of the stochastic integral with respect to \({\mathfrak {Z}}^{(2)}\),

Therefore,

with

\(\square \)

A.3 Proof of Theorem 2

Let us consider the events

where

and let us recall that

As a reminder, the sets \(\widehat{{\mathcal {M}}}_N\) and \({\mathcal {M}}_N\) introduced in Sect. 4 are, respectively, defined by

and

The proof of Theorem 2 relies on the following three lemmas.

Lemma 4

Under Assumptions 1, 2 and 3, there exists a constant \({\mathfrak {c}}_{4} > 0\), not depending on N, such that

Lemma 5

(Bernstein type inequality) Consider the empirical process

Under Assumption 3, for every \(\xi ,v > 0\),

with

Lemma 6

Under Assumptions 1 and 3, there exists a constant \({\mathfrak {c}}_{7} > 0\), not depending on N, such that for every \(m\in {\mathcal {M}}_N\),

where, for every \(m'\in {\mathcal {M}}_N\),

The proof of Lemma 5 is postponed to Subsubsection A.3.2. Lemma 6 is a consequence of Lemma 5 thanks to the \(\mathbb L_{f_T}^{2}\)-\({\mathbb {L}}^{\infty }\) chaining technique (see Comte, 2001, Proposition 4). Finally, the proof of Lemma 4 remains the same as the proof of Comte and Genon-Catalot (2020b), Eq. (6.17), because \((B^1,{\mathfrak {Z}}^1),\dots ,(B^N,{\mathfrak {Z}}^N)\) are independent.

A.3.1 Steps of the proof

First of all,

Let us find suitable bounds on \({\mathbb {E}}(U_1)\) and \(\mathbb E(U_2)\).

- Bound on \({\mathbb {E}}(U_1)\). Since

$$\begin{aligned} \Vert {\widehat{b}}_{{\widehat{m}}} -\Pi _{N,{\widehat{m}}}(b_I)\Vert _{N}^{2} = \widehat{\textbf{E}}_{{\widehat{m}}}^{*}{\widehat{\varvec{\Psi }}}_{{\widehat{m}}}^{-1} \widehat{\textbf{E}}_{{\widehat{m}}}, \end{aligned}$$

by the definition of \(\widehat{{\mathcal {M}}}_N\), and by Lemma 2,

$$\begin{aligned} {\mathbb {E}}(\Vert {\widehat{b}}_{{\widehat{m}}} -\Pi _{N,{\widehat{m}}}(b_I)\Vert _{N}^{2} {{\textbf {1}}}_{\Xi _{N}^{c}})\leqslant & {} \mathbb E(\Vert {\widehat{\varvec{\Psi }}}_{{\widehat{m}}}^{-1}\Vert _{\textrm{op}} |\widehat{\textbf{E}}_{NT}^{*}\widehat{\textbf{E}}_{NT}| {{\textbf {1}}}_{\Xi _{N}^{c}})\\\leqslant & {} \left[ {\mathfrak {d}}_T \frac{NT}{\log (NT)}\right] ^{1/2} {\mathbb {E}}(|\widehat{\textbf{E}}_{NT}^{*}\widehat{\textbf{E}}_{NT}|^2)^{1/2} {\mathbb {P}}(\Xi _{N}^{c})^{1/2} \leqslant \frac{\mathfrak c_1N}{\log (NT)}{\mathbb {P}}(\Xi _{N}^{c})^{1/2}, \end{aligned}$$

where the constant \({\mathfrak {c}}_1 > 0\) does not depend on N. Then,

$$\begin{aligned} {\mathbb {E}}(U_1)\leqslant & {} {\mathbb {E}}(\Vert {\widehat{b}}_{{\widehat{m}}} -\Pi _{N,{\widehat{m}}}(b_I)\Vert _{N}^{2} {{\textbf {1}}}_{\Xi _{N}^{c}}) + {\mathbb {E}}(\Vert b_I\Vert _{N}^{2}{{\textbf {1}}}_{\Xi _{N}^{c}})\\\leqslant & {} \frac{{\mathfrak {c}}_1N}{\log (NT)}\mathbb P(\Xi _{N}^{c})^{1/2} + {\mathfrak {c}}_2{\mathbb {P}}(\Xi _{N}^{c})^{1/2} \end{aligned}$$

with

$$\begin{aligned} {\mathfrak {c}}_2 =\left( \int _{-\infty }^{\infty }b_I(x)^4f_T(x)dx\right) ^{1/2}. \end{aligned}$$

So, by Lemma 4, there exists a constant \({\mathfrak {c}}_3 > 0\), not depending on N, such that

$$\begin{aligned} {\mathbb {E}}(U_1) \leqslant \frac{{\mathfrak {c}}_3}{N}. \end{aligned}$$

- Bound on \({\mathbb {E}}(U_2)\). Note that

$$\begin{aligned} U_2= & {} \Vert {\widehat{b}}_{{\widehat{m}}} - b_I\Vert _{N}^{2}{\textbf {1}}_{\Xi _N\cap \Omega _{N}^{c}} + \Vert {\widehat{b}}_{{\widehat{m}}} - b_I\Vert _{N}^{2}{{\textbf {1}}}_{\Xi _N\cap \Omega _N}\\=: & {} U_{2,1} + U_{2,2}. \end{aligned}$$

On the one hand, by Lemma 3, there exists a constant \({\mathfrak {c}}_4 > 0\), not depending on N, such that

$$\begin{aligned} {\mathbb {P}}(\Xi _N\cap \Omega _{N}^{c}) \leqslant \sum _{m\in \mathcal M_{N}^{+}}{\mathbb {P}}(\Omega _{m}^{c}) \leqslant \frac{\mathfrak c_4}{N^6}. \end{aligned}$$

Then, as for \({\mathbb {E}}(U_1)\), there exists a constant \(\mathfrak c_5 > 0\), not depending on N, such that

$$\begin{aligned} {\mathbb {E}}(U_{2,1}) \leqslant \frac{{\mathfrak {c}}_5}{N}. \end{aligned}$$

On the other hand,

$$\begin{aligned} \gamma _N(\tau ') -\gamma _N(\tau ) = \Vert \tau ' - b\Vert _{N}^{2} -\Vert \tau - b\Vert _{N}^{2} - 2\nu _N(\tau ' -\tau ) \end{aligned}$$

for every \(\tau ,\tau '\in {\mathcal {S}}_1\cup \dots \cup {\mathcal {S}}_{N_T}\). Moreover, since

$$\begin{aligned} {\widehat{m}} = \arg \min _{m\in \widehat{{\mathcal {M}}}_N}\{-\Vert {\widehat{b}}_m\Vert _{N}^{2} + \textrm{pen}(m)\} = \arg \min _{m\in \widehat{\mathcal M}_N}\{\gamma _N({\widehat{b}}_m) + \textrm{pen}(m)\}, \end{aligned}$$

for every \(m\in \widehat{{\mathcal {M}}}_N\),

$$\begin{aligned} \gamma _N({\widehat{b}}_{{\widehat{m}}}) + \textrm{pen}({\widehat{m}}) \leqslant \gamma _N({\widehat{b}}_m) + \textrm{pen}(m). \end{aligned} \qquad (8)$$

On the event \(\Xi _N =\{{\mathcal {M}}_N\subset \widehat{\mathcal M}_N\subset {\mathcal {M}}_{N}^{+}\}\), Inequality (8) remains true for every \(m\in {\mathcal {M}}_N\). Then, on \(\Xi _N\), for any \(m\in {\mathcal {M}}_N\), since \({\mathcal {S}}_m +{\mathcal {S}}_{{\widehat{m}}}\subset \mathcal S_{m\vee {\widehat{m}}}\) under Assumption 2,

$$\begin{aligned} \Vert {\widehat{b}}_{{\widehat{m}}} - b_I\Vert _{N}^{2}\leqslant & {} \Vert {\widehat{b}_m} - b_I\Vert _{N}^{2} + 2\Vert {\widehat{b}}_{{\widehat{m}}} -{\widehat{b}_{m}}\Vert _{f_T} \nu _N \left( \frac{{\widehat{b}}_{{\widehat{m}}} -{\widehat{b}}_m}{ \Vert {\widehat{b}}_{{\widehat{m}}} -{\widehat{b}}_m\Vert _{f_T}}\right) + \textrm{pen}(m) - \textrm{pen}({\widehat{m}})\\\leqslant & {} \Vert {\widehat{b}}_m - b_I\Vert _{N}^{2} + \frac{1}{8}\Vert {\widehat{b}}_{{\widehat{m}}} -{\widehat{b}}_m\Vert _{f_T}^{2}\\{} & {} \hspace{1.5cm} + 8\left( \left[ \sup _{\tau \in \mathcal B_{m,{\widehat{m}}}}|\nu _N(\tau )|\right] ^2 - p(m,{\widehat{m}})\right) _+ + \textrm{pen}(m) + 8p(m,{\widehat{m}}) - \textrm{pen}({\widehat{m}}). \end{aligned}$$

Since \(\Vert .\Vert _{f_T}^{2}{{\textbf {1}}}_{\Omega _N}\leqslant 2\Vert .\Vert _{N}^{2}{{\textbf {1}}}_{\Omega _N}\) on \(\mathcal S_1\cup \dots \cup {\mathcal {S}}_{\max ({\mathcal {M}}_{N}^{+})}\), and since \(8p(m,{\widehat{m}})\leqslant \textrm{pen}(m) + \textrm{pen}({\widehat{m}})\), on \(\Xi _N\cap \Omega _N\),

$$\begin{aligned} \Vert {\widehat{b}}_{{\widehat{m}}} - b_I\Vert _{N}^{2} \leqslant 3\Vert {\widehat{b}}_m - b_I\Vert _{N}^{2} + 4\textrm{pen}(m) + 16\left( \left[ \sup _{\tau \in {\mathcal {B}}_{m,{\widehat{m}}}}|\nu _N(\tau )|\right] ^2 - p(m,{\widehat{m}})\right) _+. \end{aligned}$$

So, by Lemma 6,

$$\begin{aligned} {\mathbb {E}}(U_{2,2})\leqslant & {} \min _{m\in {\mathcal {M}}_N} \{\mathbb E(3\Vert {\widehat{b}}_m - b_I\Vert _{N}^{2}{{\textbf {1}}}_{\Xi _N}) + 4\textrm{pen}(m)\} + \frac{16{\mathfrak {c}}_{6}}{NT}\\\leqslant & {} {\mathfrak {c}}_6\min _{m\in {\mathcal {M}}_N} \left\{ \inf _{\tau \in {\mathcal {S}}_m} \Vert \tau - b_I\Vert _{f_T}^{2} +\frac{m}{NT} \right\} + \frac{{\mathfrak {c}}_6}{N} \end{aligned}$$

where \({\mathfrak {c}}_6 > 0\) is a deterministic constant not depending on N.

\(\square \)
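The selection rule for \({\widehat{m}}\) used in this proof can be sketched on simulated data. This is a hedged illustration, not the authors' procedure. It assumes the usual least squares contrast \(\gamma _N(\tau ) =\Vert \tau \Vert _{N}^{2} - \frac{2}{NT}\sum _i\int _{0}^{T}\tau (X_{s}^{i})dX_{s}^{i}\), which is consistent with the difference \(\gamma _N(\tau ') -\gamma _N(\tau )\) displayed above, and a penalty of the hypothetical form `kappa * m / (N * T)` suggested by the variance term m/(NT). The toy model, the Legendre basis on \(I = [-2,2]\) and the constant `kappa` are assumptions, and the restriction of the collection to \(\widehat{{\mathcal {M}}}_N\) is omitted for simplicity. The code also checks numerically that \(-\Vert {\widehat{b}}_m\Vert _{N}^{2}\) and \(\gamma _N({\widehat{b}}_m)\) coincide, so that both criteria select the same dimension.

```python
import numpy as np
from numpy.polynomial.legendre import legvander

rng = np.random.default_rng(2)
N, n, T, lam, M = 200, 200, 5.0, 1.0, 8
dt = T / n

# Toy jump diffusion dX = -X dt + dB + dZ (Euler scheme); Z is a compound
# Poisson process with intensity lam and centered N(0, 0.25) jumps.
X = np.zeros((N, n + 1))
for k in range(n):
    jumps = 0.5 * np.sqrt(rng.poisson(lam * dt, size=N)) * rng.normal(size=N)
    X[:, k + 1] = X[:, k] * (1.0 - dt) + rng.normal(0.0, np.sqrt(dt), size=N) + jumps

x = X[:, :-1].ravel()                       # left endpoints of each step
dX = np.diff(X, axis=1).ravel()             # increments of X
inside = (np.abs(x) <= 2.0).astype(float)   # indicator of I = [-2, 2]

# Nested Legendre basis on I (columns 0..m-1 span the m-dimensional space).
Phi_full = legvander(np.clip(x / 2.0, -1.0, 1.0), M - 1) * inside[:, None]

kappa = 2.0 * (1.0 + lam * 0.25)            # hypothetical penalty constant
neg_norms, contrasts, criteria = [], [], []
for m in range(1, M + 1):
    Phi = Phi_full[:, :m]
    Psi_hat = Phi.T @ Phi / (N * n)         # empirical Gram matrix
    Z_hat = Phi.T @ dX / (N * T)            # discretized stochastic integrals
    theta = np.linalg.solve(Psi_hat, Z_hat)
    fitted = Phi @ theta
    neg_norms.append(-np.mean(fitted ** 2))                       # minus squared empirical norm
    contrasts.append(np.mean(fitted ** 2) - 2.0 * theta @ Z_hat)  # contrast at the estimator
    criteria.append(neg_norms[-1] + kappa * m / (N * T))

m_hat = 1 + int(np.argmin(criteria))
print("selected dimension:", m_hat)
```

The two criteria agree because the coefficient vector solves the normal equations, so the squared empirical norm of the fitted function equals its inner product with `Z_hat`.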

A.3.2 Proof of Lemma 5

Consider \(\tau \in {\mathcal {S}}_1\cup \dots \cup {\mathcal {S}}_{N_T}\) and, for any \(i\in \{1,\dots ,N\}\), let \(M^i(\tau ) = (M^i(\tau )_t)_{t\in [0,T]}\) be the martingale defined by

Moreover, for every \(\varepsilon > 0\), consider

where \(A_{\varepsilon }^{i}(\tau ) = (A_{\varepsilon }^{i}(\tau )_t)_{t\in [0,T]}\) and \(B_{\varepsilon }^{i}(\tau ) = (B_{\varepsilon }^{i}(\tau )_t)_{t\in [0,T]}\) are the stochastic processes defined by

for every \(t\in [0,T]\). The proof is divided into three steps.

Step 1. Note that for any \(i\in \{1,\dots ,N\}\) and \(t\in [0,T]\),

and then, by Assumption 3,

for any \(\varepsilon \in (0,\varepsilon ^*)\) with \(\varepsilon ^* = ({\mathfrak {b}}\wedge 1)/(2\Vert \tau \Vert _{\infty ,I}\Vert \gamma \Vert _{\infty })\). So, \((\exp (Y_{\varepsilon }^{i}(\tau )_t))_{t\in [0,T]}\) is a local martingale by Applebaum (2009), Corollary 5.2.2. In other words, there exists an increasing sequence of stopping times \((T_{n}^{i})_{n\in {\mathbb {N}}}\) such that \(\lim _{n\rightarrow \infty }T_{n}^{i} =\infty \) a.s. and \((\exp (Y_{\varepsilon }^{i}(\tau )_{t\wedge T_{n}^{i}}))_{t\in [0,T]}\) is a martingale for every \(n\in {\mathbb {N}}\). Therefore, by Lebesgue’s theorem and Markov’s inequality, for every \(\rho > 0\), the stochastic process \(Y_{N,\varepsilon }(\tau ):= Y_{\varepsilon }^{1}(\tau ) +\dots + Y_{\varepsilon }^{N}(\tau )\) satisfies

Step 2. For any \(\varepsilon \in (0,\varepsilon ^*)\) and \(t\in [0,T]\), let us find suitable bounds on

On the one hand,

On the other hand, for every \(\beta \in (-{\mathfrak {b}}/2,\mathfrak b/2)\), by Taylor’s formula and Assumption 3,

Since \(\varepsilon \in (0,\varepsilon ^*)\), one can take \(\beta =\varepsilon \tau (X_{s}^{i})\gamma (X_{s}^{i})\) for any \(s\in [0,t]\) and \(i\in \{1,\dots ,N\}\), and then

Therefore, Inequalities (9) and (10) lead to

Step 3 (conclusion). Consider \(M_N(\tau ):= M^1(\tau ) +\dots + M^N(\tau )\). For any \(\varepsilon \in (0,\varepsilon ^*)\) and \(\xi ,v > 0\), thanks to Step 2,

Moreover, taking

leads to

Therefore, by Step 1,

\(\square \)

Appendix B

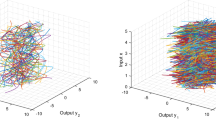

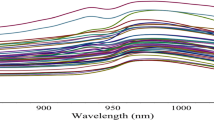

See Table 1 and Figs. 1, 2 and 3.

About this article

Cite this article

Halconruy, H., Marie, N. On a projection least squares estimator for jump diffusion processes. Ann Inst Stat Math 76, 209–234 (2024). https://doi.org/10.1007/s10463-023-00881-7


DOI: https://doi.org/10.1007/s10463-023-00881-7