Abstract

In this paper, we estimate the high-dimensional precision matrix under a weak sparsity condition, where many entries are nearly zero but not necessarily exactly zero. We revisit the sparse column-wise inverse operator (SCIO) estimator and derive general error bounds for it under this condition. A unified framework is established that covers heavy-tailed data, nonparanormal data, and matrix-variate data. The resulting methods achieve the same convergence rates as existing methods and can be implemented efficiently.


References

Avella-Medina, M., Battey, H. S., Fan, J., Li, Q. (2018). Robust estimation of high-dimensional covariance and precision matrices. Biometrika, 105(2), 271–284.

Bickel, P. J., Levina, E. (2008). Covariance regularization by thresholding. Annals of Statistics, 36(6), 2577–2604.

Bickel, P. J., Ritov, Y., Tsybakov, A. B. (2009). Simultaneous analysis of lasso and Dantzig selector. Annals of Statistics, 37(4), 1705–1732.

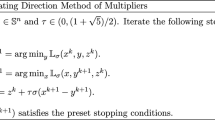

Boyd, S., Parikh, N., Chu, E., Peleato, B., Eckstein, J. (2011). Distributed optimization and statistical learning via the alternating direction method of multipliers. Foundations and Trends in Machine Learning, 3(1), 1–122.

Cai, T. (2017). Global testing and large-scale multiple testing for high-dimensional covariance structures. Annual Review of Statistics and Its Application, 4, 423–446.

Cai, T., Liu, W. (2011). Adaptive thresholding for sparse covariance matrix estimation. Journal of the American Statistical Association, 106(494), 672–684.

Cai, T., Liu, W., Luo, X. (2011). A constrained \(\ell _1\) minimization approach to sparse precision matrix estimation. Journal of the American Statistical Association, 106(494), 594–607.

Cai, T. T., Liu, W., Xia, Y. (2014). Two-sample test of high dimensional means under dependence. Journal of the Royal Statistical Society, Series B, 76(2), 349–372.

Cai, T. T., Liu, W., Zhou, H. H. (2016). Estimating sparse precision matrix: Optimal rates of convergence and adaptive estimation. Annals of Statistics, 44(2), 455–488.

Cai, T. T., Ren, Z., Zhou, H. H. (2016). Estimating structured high-dimensional covariance and precision matrices: Optimal rates and adaptive estimation. Electronic Journal of Statistics, 10(1), 1–59.

Candes, E., Tao, T. (2007). The Dantzig selector: Statistical estimation when \(p\) is much larger than \(n\). Annals of Statistics, 35(6), 2313–2351.

Datta, A., Zou, H. (2017). Cocolasso for high-dimensional error-in-variables regression. Annals of Statistics, 45(6), 2400–2426.

El Karoui, N. (2008). Operator norm consistent estimation of large-dimensional sparse covariance matrices. Annals of Statistics, 36(6), 2717–2756.

Fan, J., Feng, Y., Tong, X. (2012). A ROAD to classification in high dimensional space: The regularized optimal affine discriminant. Journal of the Royal Statistical Society, Series B, 74(4), 745–771.

Fan, J., Liao, Y., Liu, H. (2016). An overview of the estimation of large covariance and precision matrices. The Econometrics Journal, 1(19), C1–C32.

Friedman, J., Hastie, T., Tibshirani, R. (2008). Sparse inverse covariance estimation with the graphical lasso. Biostatistics, 9(3), 432–441.

Hastie, T., Tibshirani, R., Tibshirani, R. (2020). Best subset, forward stepwise or lasso? Analysis and recommendations based on extensive comparisons. Statistical Science, 35(4), 579–592.

Hornstein, M., Fan, R., Shedden, K., Zhou, S. (2019). Joint mean and covariance estimation with unreplicated matrix-variate data. Journal of the American Statistical Association, 114(526), 682–696.

Leng, C., Tang, C. Y. (2012). Sparse matrix graphical models. Journal of the American Statistical Association, 107(499), 1187–1200.

Li, X., Zhao, T., Yuan, X., Liu, H. (2015). The flare package for high dimensional linear regression and precision matrix estimation in R. Journal of Machine Learning Research, 16(18), 553–557.

Liu, H., Lafferty, J., Wasserman, L. (2009). The nonparanormal: Semiparametric estimation of high dimensional undirected graphs. Journal of Machine Learning Research 10(Oct):2295–2328.

Liu, H., Han, F., Yuan, M., Lafferty, J., Wasserman, L. (2012). High-dimensional semiparametric Gaussian copula graphical models. Annals of Statistics, 40(4), 2293–2326.

Liu, W., Luo, X. (2015). Fast and adaptive sparse precision matrix estimation in high dimensions. Journal of Multivariate Analysis, 135, 153–162.

Mai, Q., Zou, H., Yuan, M. (2012). A direct approach to sparse discriminant analysis in ultra-high dimensions. Biometrika, 99(1), 29–42.

Meinshausen, N., Bühlmann, P. (2006). High-dimensional graphs and variable selection with the lasso. Annals of Statistics, 34(3), 1436–1462.

Negahban, S. N., Ravikumar, P., Wainwright, M. J., Yu, B. (2012). A unified framework for high-dimensional analysis of \(M\)-estimators with decomposable regularizers. Statistical Science, 27(4), 538–557.

Raskutti, G., Wainwright, M. J., Yu, B. (2011). Minimax rates of estimation for high-dimensional linear regression over \(\ell _q\) balls. IEEE Transactions on Information Theory, 57(10), 6976–6994.

Ravikumar, P., Wainwright, M. J., Raskutti, G., Yu, B. (2011). High-dimensional covariance estimation by minimizing \(\ell _1\)-penalized log-determinant divergence. Electronic Journal of Statistics, 5, 935–980.

Ren, Z., Sun, T., Zhang, C. H., Zhou, H. H. (2015). Asymptotic normality and optimalities in estimation of large Gaussian graphical models. Annals of Statistics, 43(3), 991–1026.

Rothman, A. J., Levina, E., Zhu, J. (2009). Generalized thresholding of large covariance matrices. Journal of the American Statistical Association, 104(485), 177–186.

Sun, T., Zhang, C. H. (2012). Scaled sparse linear regression. Biometrika, 99(4), 879–898.

Sun, T., Zhang, C. H. (2013). Sparse matrix inversion with scaled lasso. Journal of Machine Learning Research, 14(1), 3385–3418.

Tong, T., Wang, C., Wang, Y. (2014). Estimation of variances and covariances for high-dimensional data: A selective review. Wiley Interdisciplinary Reviews: Computational Statistics, 6(4), 255–264.

Wainwright, M. J. (2009). Sharp thresholds for high-dimensional and noisy sparsity recovery using \(\ell _1\)-constrained quadratic programming (Lasso). IEEE Transactions on Information Theory, 55(5), 2183–2202.

Wainwright, M. J. (2019). High-dimensional statistics: A non-asymptotic viewpoint. Cambridge: Cambridge University Press.

Wang, C., Jiang, B. (2020). An efficient ADMM algorithm for high dimensional precision matrix estimation via penalized quadratic loss. Computational Statistics & Data Analysis, 142, 106812.

Wang, C., Yu, Z., Zhu, L. (2019). On cumulative slicing estimation for high dimensional data. Statistica Sinica, 31(2021), 223–242.

Wang, X., Yuan, X. (2012). The linearized alternating direction method of multipliers for Dantzig selector. SIAM Journal on Scientific Computing, 34(5), A2792–A2811.

Witten, D. M., Friedman, J. H., Simon, N. (2011). New insights and faster computations for the graphical lasso. Journal of Computational and Graphical Statistics, 20(4), 892–900.

Xue, L., Zou, H. (2012). Regularized rank-based estimation of high-dimensional nonparanormal graphical models. Annals of Statistics, 40(5), 2541–2571.

Ye, F., Zhang, C. H. (2010). Rate minimaxity of the Lasso and Dantzig selector for the \(\ell _q\) loss in \(\ell _r\) balls. Journal of Machine Learning Research, 11, 3519–3540.

Yuan, M. (2010). High dimensional inverse covariance matrix estimation via linear programming. Journal of Machine Learning Research, 11, 2261–2286.

Yuan, M., Lin, Y. (2007). Model selection and estimation in the Gaussian graphical model. Biometrika, 94(1), 19–35.

Zhang, T., Zou, H. (2014). Sparse precision matrix estimation via lasso penalized D-trace loss. Biometrika, 101(1), 103–120.

Zhou, S. (2014). Gemini: Graph estimation with matrix variate normal instances. Annals of Statistics, 42(2), 532–562.

Acknowledgements

We thank the Editor, an Associate Editor, and anonymous reviewers for their insightful comments.


Wang’s research is supported by the National Natural Science Foundation of China (11971017, 12031005) and the NSF of Shanghai (21ZR1432900). Liu’s research is supported by the National Program on Key Basic Research Project (2018AAA0100704), NSFC Grants No. 11825104 and 11690013, the Youth Talent Support Program, and the Shanghai Municipal Science and Technology Major Project (2021SHZDZX0102).

Appendix

This section includes all the technical proofs of the main theorems and some necessary lemmas.

1.1 Proof of Theorem 1

Let \(\varvec{e}\) be a column of the identity matrix \(\mathbf {I}\); then \(\varvec{\beta }^*=\varvec{\varSigma }^{-1} \varvec{e}\) is the corresponding column of the target precision matrix \(\varvec{\varOmega }\). For an arbitrary estimator \(\widehat{\varvec{\varSigma }}\) of \(\varvec{\varSigma }\), we consider the SCIO estimation

By the KKT condition, we have

which ensures the basic inequality

Writing the difference vector as \(\varvec{h}=\varvec{\beta }^*-\widehat{\varvec{\beta }}\), we have

Combined with the basic inequality (7), it reduces to

Since \(\lambda \ge 3\Vert \varvec{\varOmega }\Vert _{L_1}\Vert \widehat{\varvec{\varSigma }}-\varvec{\varSigma }\Vert _\infty \) by assumption, we obtain

and hence

For any index set J, we have

and by rearranging the inequality, we get an important relation

By the KKT condition (6), we have \(|\widehat{\varvec{\varSigma }}\widehat{\varvec{\beta }}-\varvec{e}|_\infty \le \lambda \) and

Thus, we can get

and

Then, we conclude that

and

Next, we split the argument into two cases.

Case 1: If \(|\varvec{h}_{J^c}|_1\ge 3|\varvec{h}_J|_1\), by the inequality (9), we have \(|\varvec{h}_J|_1\le 3|\varvec{\beta }^*_{J^c}|_1\) and then

where the first inequality uses the fact (9) again.

Case 2: Otherwise, we may assume \(|\varvec{h}_{J^c}|_1< 3 |\varvec{h}_J|_1\) and then \( |\varvec{h}|_1 \le 4|\varvec{h}_J|_1\). By the bound (11),

If the relation \(\lambda |J| \le \frac{1}{2}\) holds, we conclude that

Next we construct an index set J that controls the bounds (12) and (13) simultaneously. To do so, we consider the index set \(J=\{j|~|\varvec{\beta }_j^*|> t\}\)

for some \(t>0\). With this setting,

and for the cardinality of J, we have

Combining the bounds (12) and (13), we get

and setting \(t=\lambda \Vert \varvec{\varOmega }\Vert _{L_1}\) yields the conclusion

It remains to check that \(\lambda |J| \le \frac{1}{2}\). With \(t=\lambda \Vert \varvec{\varOmega }\Vert _{L_1}\), we have

which holds by the assumption.

Finally, invoking (10), we conclude

where we use the fact \(\lambda ^{1-q} ( \Vert \varvec{\varOmega }\Vert _{L_1})^{-q} s_p<\frac{1}{2}\) again. The proof is completed. \(\square \)
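The display equations of this proof are not reproduced in the text above. As a reading aid, the following sketch re-derives one chain of bounds that is consistent with every step named in the prose. It rests on several assumptions that are not confirmed by this section: the SCIO objective is taken in its standard form, \(\widehat{\varvec{\varSigma }}\) is assumed positive semidefinite, weak sparsity is read as \(\sum _j|\varvec{\beta }^*_j|^q\le s_p\) with \(0\le q<1\), and \(\Vert \cdot \Vert _{L_1}\) and \(\Vert \cdot \Vert _\infty \) are read as the maximum absolute column sum and the entrywise maximum. The constants (12, 16, 4) come from this sketch and may differ from those in the paper, and no correspondence with the paper's equation numbers is claimed.

```latex
% Assumed SCIO objective for one column and its KKT condition
\widehat{\varvec{\beta}}=\arg\min_{\varvec{\beta}}
  \Big\{\tfrac12\varvec{\beta}^{\top}\widehat{\varvec{\varSigma}}\varvec{\beta}
  -\varvec{e}^{\top}\varvec{\beta}+\lambda|\varvec{\beta}|_1\Big\},
\qquad
|\widehat{\varvec{\varSigma}}\widehat{\varvec{\beta}}-\varvec{e}|_\infty\le\lambda .

% Basic inequality with h = beta^* - \hat\beta (uses the KKT subgradient)
0\le\varvec{h}^{\top}\widehat{\varvec{\varSigma}}\varvec{h}
 \le \varvec{h}^{\top}(\widehat{\varvec{\varSigma}}\varvec{\beta}^*-\varvec{e})
 +\lambda\big(|\varvec{\beta}^*|_1-|\widehat{\varvec{\beta}}|_1\big).

% Noise term: Sigma beta^* = e and |beta^*|_1 <= ||Omega||_{L_1}
|\widehat{\varvec{\varSigma}}\varvec{\beta}^*-\varvec{e}|_\infty
 \le\Vert\widehat{\varvec{\varSigma}}-\varvec{\varSigma}\Vert_\infty
    \Vert\varvec{\varOmega}\Vert_{L_1}\le\tfrac{\lambda}{3}.

% For any index set J
|\varvec{\beta}^*|_1-|\widehat{\varvec{\beta}}|_1
 \le|\varvec{h}_J|_1-|\varvec{h}_{J^c}|_1+2|\varvec{\beta}^*_{J^c}|_1
\;\Longrightarrow\;
|\varvec{h}_{J^c}|_1\le 2|\varvec{h}_J|_1+3|\varvec{\beta}^*_{J^c}|_1 .

% Sup-norm control, using h = Omega Sigma h
|\varvec{h}|_\infty
 \le\Vert\varvec{\varOmega}\Vert_{L_1}
   \big(|\widehat{\varvec{\varSigma}}\varvec{h}|_\infty
   +\Vert\widehat{\varvec{\varSigma}}-\varvec{\varSigma}\Vert_\infty|\varvec{h}|_1\big)
 \le\tfrac43\lambda\Vert\varvec{\varOmega}\Vert_{L_1}+\tfrac{\lambda}{3}|\varvec{h}|_1 .

% Case 1: |h_{J^c}|_1 >= 3|h_J|_1
|\varvec{h}|_1\le 12\,|\varvec{\beta}^*_{J^c}|_1 .
% Case 2: |h_{J^c}|_1 < 3|h_J|_1 and lambda|J| <= 1/2
|\varvec{h}|_1\le 4|J|\,|\varvec{h}|_\infty
\;\Longrightarrow\;
|\varvec{h}|_1\le 16\,\lambda\Vert\varvec{\varOmega}\Vert_{L_1}|J| .

% Index set J = { j : |beta^*_j| > t } with t = lambda ||Omega||_{L_1}
|\varvec{\beta}^*_{J^c}|_1\le t^{1-q}s_p,\qquad
|J|\le t^{-q}s_p,\qquad
\lambda|J|\le\lambda^{1-q}\Vert\varvec{\varOmega}\Vert_{L_1}^{-q}s_p\le\tfrac12 .

% Conclusions
|\varvec{h}|_1\le 16\,(\lambda\Vert\varvec{\varOmega}\Vert_{L_1})^{1-q}s_p,
\qquad
|\varvec{h}|_\infty\le 4\,\lambda\Vert\varvec{\varOmega}\Vert_{L_1}.
```

The last sup-norm bound follows by substituting the \(\ell _1\) bound into the sup-norm control and using \(\lambda ^{1-q}\Vert \varvec{\varOmega }\Vert _{L_1}^{-q}s_p\le \frac{1}{2}\) once more, which mirrors the final step described in the prose.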

1.2 Proof of Theorem 2

Note that the result of Theorem 1 holds uniformly for all \(i=1,\dots ,p\), that is,

By the construction of \(\tilde{\varvec{\varOmega }}\), it is easy to show

Next we study the effect of the symmetrization step. For \(i \in \{1,\dots ,p\}\), we denote

Since \(|\tilde{\varvec{\beta }}|_1 \le |\widehat{\varvec{\beta }}|_1\) by our construction, for the index set J, we have

which yields

Recall the index set \(J=\{j|~|\varvec{\beta }_j^*|> \lambda \Vert \varvec{\varOmega }\Vert _{L_1}\}\) from the proof of Theorem 1, and also the bounds

Thus

and

Invoking the bound (16), we get

which ensures

Since the above bound holds uniformly for all \(i=1,\dots ,p\), we conclude

The proof is completed. \(\square \)
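To make the two-stage estimator analysed in Theorems 1 and 2 concrete, here is a minimal, dependency-free sketch: each column is fitted by minimizing the assumed SCIO objective, and the columns are then symmetrized. Several pieces are assumptions rather than the paper's specification: the objective \(\frac{1}{2}\varvec{\beta }^{\top }\widehat{\varvec{\varSigma }}\varvec{\beta }-\varvec{e}^{\top }\varvec{\beta }+\lambda |\varvec{\beta }|_1\), the use of plain coordinate descent (swapped in for simplicity; the paper's own algorithm is not described in this section), and the symmetrization rule that keeps the smaller-magnitude entry of each pair, which is consistent with the remark \(|\tilde{\varvec{\beta }}|_1 \le |\widehat{\varvec{\beta }}|_1\). The function names `scio_column`, `symmetrize_min_magnitude`, and `scio` are hypothetical.

```python
def soft_threshold(x, lam):
    """Soft-thresholding operator: sign(x) * max(|x| - lam, 0)."""
    if x > lam:
        return x - lam
    if x < -lam:
        return x + lam
    return 0.0


def scio_column(S, i, lam, n_sweeps=500, tol=1e-12):
    """Coordinate descent for min_b 0.5*b'Sb - b[i] + lam*|b|_1.

    S is a list of lists with strictly positive diagonal (assumed).
    """
    p = len(S)
    beta = [0.0] * p
    for _ in range(n_sweeps):
        max_change = 0.0
        for j in range(p):
            # Partial residual e_j - sum_{k != j} S[j][k] * beta[k].
            r = (1.0 if j == i else 0.0) - sum(
                S[j][k] * beta[k] for k in range(p) if k != j
            )
            new = soft_threshold(r, lam) / S[j][j]
            max_change = max(max_change, abs(new - beta[j]))
            beta[j] = new
        if max_change < tol:
            break
    return beta


def symmetrize_min_magnitude(B):
    """For each pair (i, j), keep whichever of B[i][j], B[j][i] is smaller in magnitude."""
    p = len(B)
    return [
        [B[i][j] if abs(B[i][j]) <= abs(B[j][i]) else B[j][i] for j in range(p)]
        for i in range(p)
    ]


def scio(S, lam):
    """Fit every column, assemble the matrix, then symmetrize."""
    p = len(S)
    cols = [scio_column(S, i, lam) for i in range(p)]
    # B[i][j] is the i-th entry of the fitted j-th column.
    B = [[cols[j][i] for j in range(p)] for i in range(p)]
    return symmetrize_min_magnitude(B)
```

Calling `scio(S, lam)` with a covariance estimate `S` returns the symmetrized matrix as nested lists. One caveat of this sketch: if two mirrored entries have equal magnitude but opposite sign, the rule above keeps each as is, so the output is not exactly symmetric in that degenerate case.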

1.3 Proof of Corollaries

We only prove Corollary 1. The proofs of the other corollaries follow a very similar procedure and are therefore omitted.

Proof of Corollary 1:

By the assumption (A2) and Proposition 1, we have \(\mathbf {P}\left( \Vert \widehat{\varvec{\varSigma }}_1-\varvec{\varSigma }\Vert _{\infty }\ge C\sqrt{\frac{\log {p}}{n}}\right) = O(p^{-\tau })\). Similarly, by the assumption (A3) and Proposition 1, we have \(\mathbf {P}\left( \Vert \widehat{\varvec{\varSigma }}_1-\varvec{\varSigma }\Vert _{\infty }\ge C\sqrt{\frac{\log {p}}{n}}\right) = O\left( p^{-\tau }+n^{-\frac{\delta }{8}}\right) \).

We take \(\lambda =C_0M_p\sqrt{\frac{\log {p}}{n}}\). Since \(\Vert \varvec{\varOmega }\Vert _{L_1}\le M_p\), for a sufficiently large \(C_0\) the condition \(\lambda \ge 3\Vert \varvec{\varOmega }\Vert _{L_1}\Vert \widehat{\varvec{\varSigma }}-\varvec{\varSigma }\Vert _\infty \) required in Theorem 2 holds with the probability stated above. By the assumption (A1), when n and p are large enough, we obtain \(\Vert \varvec{\varOmega }\Vert ^{-q}_{L_1}\lambda ^{1-q}s_p\le s_pM_p^{1-2q}\le \frac{1}{2}\). Applying Theorem 2 then yields the conclusion of Corollary 1. \(\square \)
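The tuning choice in this proof can be illustrated with a small sketch that evaluates \(\lambda =C_0M_p\sqrt{\log p/n}\) and checks the deterministic condition \(\lambda \ge 3\Vert \varvec{\varOmega }\Vert _{L_1}\Vert \widehat{\varvec{\varSigma }}-\varvec{\varSigma }\Vert _\infty \) for given matrices. The helper names are hypothetical, and the norm conventions (maximum absolute column sum for \(\Vert \cdot \Vert _{L_1}\), entrywise maximum for \(\Vert \cdot \Vert _\infty \)) are assumptions, since this section does not define them. In practice \(\varvec{\varOmega }\) and \(\varvec{\varSigma }\) are unknown, so the check is only meaningful in simulations.

```python
import math


def scio_lambda(c0, m_p, p, n):
    """Tuning parameter lambda = C0 * M_p * sqrt(log(p) / n)."""
    return c0 * m_p * math.sqrt(math.log(p) / n)


def matrix_l1_norm(A):
    """Maximum absolute column sum (assumed meaning of the L_1 matrix norm)."""
    p = len(A)
    return max(sum(abs(A[i][j]) for i in range(p)) for j in range(p))


def max_abs_diff(A, B):
    """Entrywise maximum of |A - B| (assumed meaning of the sup norm)."""
    return max(
        abs(a - b) for row_a, row_b in zip(A, B) for a, b in zip(row_a, row_b)
    )


def lambda_condition_holds(lam, omega, sigma_hat, sigma):
    """Check lam >= 3 * ||Omega||_{L_1} * ||Sigma_hat - Sigma||_inf."""
    return lam >= 3.0 * matrix_l1_norm(omega) * max_abs_diff(sigma_hat, sigma)
```

For example, with `c0=2.0`, `m_p=1.5`, `p=100` and `n=400`, `scio_lambda` returns a value of roughly 0.32; the constant `c0` is left to the user because the proof only requires it to be sufficiently large.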

About this article

Cite this article

Wu, Z., Wang, C. & Liu, W. A unified precision matrix estimation framework via sparse column-wise inverse operator under weak sparsity. Ann Inst Stat Math 75, 619–648 (2023). https://doi.org/10.1007/s10463-022-00856-0
