Abstract

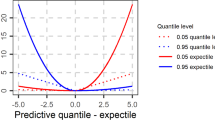

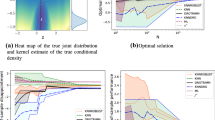

Statistical analysis of large-scale datasets is challenging because of limited memory and computational resources, and it calls for efficient distributed methods. In this paper, we study distributed estimation and inference for composite quantile regression (CQR). For computational and statistical efficiency, we propose applying a smoothing idea to the CQR loss function for distributed data and then successively refining the estimator via multiple rounds of aggregation. Based on the Bahadur representation, we derive the asymptotic normality of the proposed multi-round smoothed CQR estimator and show that it achieves the same efficiency as the ideal CQR estimator obtained by analyzing the entire dataset simultaneously. Moreover, to improve the efficiency of CQR, we propose a multi-round smoothed weighted CQR estimator. Extensive numerical experiments on both simulated and real data validate the superior performance of the proposed estimators.
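To make the idea of smoothing the CQR loss and aggregating over several rounds concrete, the following is a minimal sketch rather than the estimator developed in the paper. It assumes a Gaussian-kernel convolution smoothing of the check loss with a hypothetical bandwidth `h`, equally spaced quantile levels `tau_k = k/(K+1)`, and plain gradient averaging across machines as the aggregation step; the abstract does not specify any of these choices, and all function and parameter names here are illustrative.

```python
import math
import numpy as np

_erf = np.vectorize(math.erf)

def normal_cdf(z):
    """Standard normal CDF, applied elementwise."""
    return 0.5 * (1.0 + _erf(z / math.sqrt(2.0)))

def local_gradient(X, y, beta, b, taus, h):
    """Gradient of the Gaussian-smoothed CQR loss on one machine's data.

    The smoothed check loss has derivative tau - Phi(-r/h) in the residual r,
    so the gradient in (beta, b_k) is built from Phi(-r/h) - tau.
    """
    # r[i, k] = y_i - b_k - x_i' beta
    r = (y - X @ beta)[:, None] - b[None, :]
    psi = normal_cdf(-r / h) - taus[None, :]
    grad_beta = X.T @ psi.sum(axis=1) / len(y)
    grad_b = psi.mean(axis=0)
    return grad_beta, grad_b

def multi_round_smoothed_cqr(shards, K=5, h=0.3, step=0.5, rounds=150):
    """Illustrative multi-round scheme: each round, every machine sends its
    local gradient and the centre takes one averaged gradient step.
    (Assumed aggregation rule, not taken from the paper.)"""
    taus = np.arange(1, K + 1) / (K + 1)
    p = shards[0][0].shape[1]
    beta, b = np.zeros(p), np.zeros(K)
    n_total = sum(len(y) for _, y in shards)
    for _ in range(rounds):
        g_beta, g_b = np.zeros(p), np.zeros(K)
        for X, y in shards:
            gb, gk = local_gradient(X, y, beta, b, taus, h)
            weight = len(y) / n_total  # weight machines by sample size
            g_beta += weight * gb
            g_b += weight * gk
        beta -= step * g_beta
        b -= step * g_b
    return beta, b

# Simulated data split across 4 hypothetical machines.
rng = np.random.default_rng(0)
beta_true = np.array([1.0, -2.0, 0.5])
X = rng.normal(size=(2000, 3))
y = X @ beta_true + rng.normal(size=2000)
shards = [(X[i::4], y[i::4]) for i in range(4)]

beta_hat, b_hat = multi_round_smoothed_cqr(shards)
print("estimated slope:", beta_hat)
print("estimated quantile intercepts:", b_hat)
```

In this sketch only gradients of dimension `p + K` travel between machines in each round, which is the kind of communication saving that motivates distributed schemes. The smoothing makes the loss differentiable, so simple gradient steps are well defined, whereas the unsmoothed check loss has a kink at zero.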



Acknowledgements

We are grateful to the Editor, an associate editor and one anonymous referee for their insightful comments and suggestions, which have led to significant improvements. This paper was supported by the National Natural Science Foundation of China [grant numbers 11871287, 11771144, 11801359], the Natural Science Foundation of Tianjin [18JCYBJC41100], the Fundamental Research Funds for the Central Universities and the Key Laboratory for Medical Data Analysis and Statistical Research of Tianjin.

About this article

Cite this article

Di, F., Wang, L. Multi-round smoothed composite quantile regression for distributed data. Ann Inst Stat Math 74, 869–893 (2022). https://doi.org/10.1007/s10463-021-00816-0

DOI: https://doi.org/10.1007/s10463-021-00816-0