Abstract

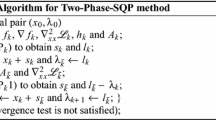

In this work, we consider the important class of standard quadratic programming problems (StQPs) and propose a simple and fast decomposition algorithm that updates, at each iteration, two variables chosen by a suitable selection rule. The algorithm has two main features: (1) the two variables are updated by solving a subproblem that, although nonconvex, can be solved analytically; (2) the adopted selection rule guarantees convergence towards stationary points of the problem. The proposed Sequential Minimal Optimization (SMO) algorithm, which optimizes the smallest possible subproblem at each step, can therefore be used as an efficient local solver within a global optimization strategy. Extensive computational experiments show that the proposed decomposition algorithm, equipped with a simple multi-start strategy, is a valuable alternative to state-of-the-art algorithms for StQPs.
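To make the two-variable update concrete, the following is a minimal Python sketch, not the authors' implementation. It assumes the problem is written as min x^T Q x over the unit simplex (sum of x equal to 1, x nonnegative) and it assumes a "most violating pair" selection rule borrowed from SMO methods for support vector machines; the abstract does not specify the actual rule. The names `smo_stqp` and `multistart_smo`, the tolerance, and the Dirichlet sampling of starting points are all illustrative choices.

```python
import numpy as np


def smo_stqp(Q, x0, tol=1e-8, max_iter=10_000):
    """Two-variable decomposition sketch for min x^T Q x over the unit simplex."""
    Q = np.asarray(Q, dtype=float)
    Q = 0.5 * (Q + Q.T)                      # work with the symmetric part
    x = np.asarray(x0, dtype=float).copy()   # x0 is assumed to lie in the simplex
    g = 2.0 * Q @ x                          # gradient of x^T Q x
    for _ in range(max_iter):
        # Assumed selection rule: most violating pair.
        i = int(np.argmin(g))                        # variable to increase
        support = np.flatnonzero(x > 0.0)
        j = int(support[np.argmax(g[support])])      # variable to decrease
        slope = g[i] - g[j]                  # directional derivative along e_i - e_j
        if slope >= -tol:                    # approximate stationarity
            break
        curv = Q[i, i] + Q[j, j] - 2.0 * Q[i, j]
        # Along x + t (e_i - e_j): phi(t) = f(x) + slope * t + curv * t^2, t in [0, x_j].
        if curv > 0.0:
            t = min(-slope / (2.0 * curv), x[j])     # clipped parabola vertex
        else:
            t = x[j]                         # concave or linear case: go to the bound
        x[i] += t
        x[j] -= t
        g += 2.0 * t * (Q[:, i] - Q[:, j])   # cheap gradient update
    return x, float(x @ Q @ x)


def multistart_smo(Q, n_starts=20, seed=0):
    """Run the local solver from random simplex points and keep the best result."""
    rng = np.random.default_rng(seed)
    n = np.asarray(Q).shape[0]
    best_x, best_f = None, np.inf
    for _ in range(n_starts):
        x, f = smo_stqp(Q, rng.dirichlet(np.ones(n)))
        if f < best_f:
            best_x, best_f = x, f
    return best_x, best_f
```

The one-dimensional subproblem has a closed-form solution even when the curvature is nonpositive, because a decreasing concave or linear function on an interval attains its minimum at the far endpoint. A call such as `multistart_smo(Q, n_starts=50)` returns the best stationary point found, with no certificate of global optimality.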

References

Bomze IM (1998) On standard quadratic optimization problems. J Global Optim 13:369–387

Bomze IM (2002a) Branch-and-bound approaches to standard quadratic optimization problems. J Global Optim 22:17–37

Bomze IM (2002b) Regularity versus degeneracy in dynamics, games, and optimization: A unified approach to different aspects. SIAM Rev. 44(3):394-414, ISSN 0036-1445. https://doi.org/10.1137/S00361445003756

Bomze IM, DeKlerk E (2002) Solving standard quadratic optimization problems via linear, semidefinite and copositive programming. J Global Optim 24:163–185

Bomze IM, Palagi L (2005) Quartic formulation of standard quadratic optimization problems. J Global Optim 32:181–205

Bomze IM, Grippo L, Palagi L (2012) Unconstrained formulation of standard quadratic optimization problems. Top 20:35–51

Bomze IM, Schachinger W, Ullrich R (2018) The complexity of simple models-a study of worst and typical hard cases for the standard quadratic optimization problem. Math Operat Res 43:651–674

Bottou L, Chapelle O, DeCoste D, Weston J (2007) Support Vector Machine Solvers. MIT Press, Ny

Bundfuss S, Dür M (2009) An adaptive linear approximation algorithm for copositive programs. SIAM J Optim 20:30–53

Chang C-C, Lin C-J (2011) LIBSVM: a library for support vector machines. ACM Trans Intell Syst Technol (TIST) 2:27

Cortes C, Vapnik V (1995) Support-vector networks. Mach Learn 20:273–297

Dellepiane U, Palagi L (2015) Using SVM to combine global heuristics for the standard quadratic problem. Euro J Oper Res 241:596–605

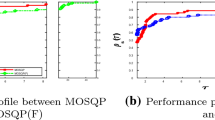

Dolan ED, Moré JJ (2002) Benchmarking optimization software with performance profiles. Math Programm 91:201–213

Fan R-E, Chen P-H, Lin C-J (2005) Working set selection using second order information for training support vector machines. J Mach Learn Res 6:1889–1918

Gibbons LE, Hearn DW, Pardalos PM, Ramana MV (1997) Continuous characterizations of the maximum clique problem. Math Oper Res 22:754–768

Gondzio J and Yıldırım EA (2021) Global solutions of nonconvex standard quadratic programs via mixed integer linear programming reformulations. J Global Optim 81:293–321

L.GurobiOptimization. Gurobi optimizer reference manual, 2021. URL http://www.gurobi.com

Horst R, Pardalos PM, VanThoai N (2000) Introduction to global optimization. Springer Science & Business Media, NY

Ibaraki T, Katoh N (1988) Resource Allocation Problems: Algorithmic Approaches. MIT Press, Cambridge, MA, USA. 0262090279

T.Joachims. Making large-scale support vector machine learning practical. In B.Schölkopf, C.J.C. Burges, and A.J. Smola, editors, Advances in Kernel Methods, pages 169–184. MIT Press, Cambridge, MA, USA, 1999. ISBN 0-262-19416-3

Keerthi SS, Shevade SK, Bhattacharyya C, Murthy KR (2001) Improvements to Platt’s SMO algorithm for SVM classifier design. Neural Comput 13:637–649

Kingman JF 1961 A mathematical problem in population genetics. In: Mathematical Proceedings of the Cambridge Philosophical Society, vol. 57, pages 574–582. Cambridge University Press

Kraft D (1994) Algorithm 733: Tomp-fortran modules for optimal control calculations. ACM Trans Math Softw (TOMS) 20:262–281

S.Lacoste-Julien. Convergence rate of frank-wolfe for non-convex objectives. arXiv preprintarXiv:1607.00345, 2016

Lacoste-Julien S and Jaggi M 2015 On the global linear convergence of frank-wolfe optimization variants. In: Proceedings of the 28th International Conference on Neural Information Processing Systems-Volume 1, pp. 496–504,

H.-T. Lin and C.-J. Lin. A study on sigmoid kernels for SVM and the training of nonpsd kernels by smo-type methods. Technical Report, 2003

Liuzzi G, Locatelli M, Piccialli V (2019) A new branch-and-bound algorithm for standard quadratic programming problems. Optim Methods Softw 34:79–97

Markowitz H (1952) Portfolio selection. J. Finance 7:77–91

Markowitz HM (1994) The general mean-variance portfolio selection problem. Philos Trans R Soc London Ser A Phys Eng Sci 347:543–549

Motzkin TS, Straus EG (1965) Maxima for graphs and a new proof of a theorem of Turán. Can J Math 17:533–540

Nowak I (1999) A new semidefinite programming bound for indefinite quadratic forms over a simplex. J Global Optim 14:357–364

Oliphant TE (2006) A guide to NumPy, vol Trelgol. Publishing USA, NY

Piccialli V, Sciandrone M (2018) Nonlinear optimization and support vector machines. 4OR 16:111–149

Platt JC (1999) Advances in kernel methods chapter Fast Training of Support Vector Machines Using Sequential Minimal Optimization. MIT Press, Cambridge, MA, USA, pp 185–208

Scozzari A, Tardella F (2008) A clique algorithm for standard quadratic programming. Dis Appl Math 156:2439–2448

Virtanen P, Gommers R, Oliphant TE, Haberland M, Reddy T, Cournapeau D, Burovski E, Peterson P, Weckesser W, Bright J et al (2020) Scipy 1.0: fundamental algorithms for scientific computing in python. Nature Methods 17:261–272

Wächter A, Biegler LT (2006) On the implementation of an interior-point filter line-search algorithm for large-scale nonlinear programming. Math Programm 106:25–57

Xu K, Boussemart F, Hemery F, Lecoutre C (2007) Random constraint satisfaction: easy generation of hard (satisfiable) instances. Artif Intell 171:514–534

Acknowledgements

The authors would like to thank Dr. Giampaolo Liuzzi for the useful discussions. We would also like to thank the two anonymous reviewers and the editor for their constructive comments, which greatly helped us improve the quality and significance of this manuscript. One of the referees deserves special mention for pointing out the link, highlighted in Remark 7, between the proposed method and a variant of the Frank-Wolfe algorithm.

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Detailed results tables

In this Appendix, we report in full the results of the experiment described in Sect. 3 on the global optimization of standard quadratic programming problems (StQPs). We consider 45 StQPs, each solved by multi-start SMO, multi-start IPOPT, and branch-and-bound. For the branch-and-bound method we report both the time taken to find the optimal solution and the total runtime, which also includes the time spent certifying optimality. Runtimes are shown in Table 7 and the best solutions attained in Table 8.

Cite this article

Bisori, R., Lapucci, M. & Sciandrone, M. A study on sequential minimal optimization methods for standard quadratic problems. 4OR-Q J Oper Res 20, 685–712 (2022). https://doi.org/10.1007/s10288-021-00496-9
