Abstract

Geometric, robust-to-noise features of curves in Euclidean space are of great interest for various applications such as machine learning and image analysis. We apply Fels–Olver’s moving-frame method (for geometric features) paired with the log-signature transform (for robust features) to construct a set of integral invariants under rigid motions for curves in \({\mathbb {R}}^d\) from the iterated-integrals signature. In particular, we show that one can algorithmically construct a set of invariants that characterize the equivalence class of the truncated iterated-integrals signature under orthogonal transformations, which yields a characterization of a curve in \({\mathbb {R}}^d\) under rigid motions (and tree-like extensions) and an explicit method to compare curves up to these transformations.


1 Introduction

A central problem in image science is constructing geometrically relevant features of curves that are robust to noise. In this sense, rigid motions of space make up a natural group of “nuisance” transformations of the data. For this reason, rotation- and translation-invariant features are often desired, for instance, in human activity recognition [39, Section 6] or in matching contours [52]. Classically, differential invariants such as curvature have been used for this purpose [25], and more recently, integral invariants of curves have been of interest [13, 16]. In this work, we construct a rigid motion-invariant representation of a curve through its iterated-integrals signature by applying the Fels–Olver moving-frame method. We show that this yields sets of integral invariants that characterize the truncated iterated-integrals signature up to rigid motions.

Iterated integrals, a subject of study introduced by Chen in the 1950s [6, 8], will be properly reviewed in Sect. 2.2. In a nutshell, they are descriptive features of continuous curves that moreover possess desirable stability properties. Regarding their use for invariant theory, we consider two concrete examples, reproduced from [13]. Given a smooth curve \(X = (X^{(1)}, X^{(2)}): [0,1] \rightarrow {\mathbb {R}}^2\), starting at \(X_0 = 0\), the squared norm of the total displacement is clearly invariant under the orthogonal group \({\text {O}}_2({\mathbb {R}})\) acting on the ambient space. Using the fundamental theorem of calculus, we can write this invariant as

where we introduced the shorthand \(dX^{(i)}_t := \dot{X}^{(i)}_t \mathrm{d}t\). We have expressed this invariant as a linear combination of iterated integrals. A less trivial invariant is given by the square of the signed area enclosed by the curve (for simplicity assume that the curve is closed, i.e., \(X_1 = 0\)). By Green’s theorem (see [48, Theorem 10.33] and [36, Proposition 1]), the signed area can be expressed in terms of iterated integrals, namely as

These examples illustrate that simple, and geometrically relevant, invariants can be found in the collection of iterated integrals.
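The two invariants above can be checked numerically. The following is a minimal sketch, assuming a piecewise-linear planar path and the standard expressions \(|X_1|^2 = 2\int \!\!\int dX^{(1)}dX^{(1)} + 2\int \!\!\int dX^{(2)}dX^{(2)}\) and \(\tfrac{1}{2}(\int \!\!\int dX^{(1)}dX^{(2)} - \int \!\!\int dX^{(2)}dX^{(1)})\) for the signed area; the function names are hypothetical and chosen for illustration only.

```python
import math

def iterated_integrals(points):
    """First- and second-order iterated integrals of the piecewise-linear
    path through `points` (a list of equal-length coordinate tuples)."""
    d = len(points[0])
    s1 = [0.0] * d                        # increments accumulated so far
    s2 = [[0.0] * d for _ in range(d)]    # second-order iterated integrals
    for p, q in zip(points, points[1:]):
        delta = [b - a for a, b in zip(p, q)]
        for i in range(d):
            for j in range(d):
                # On a linear segment, int (X^i_t - X^i_0) dX^j_t equals
                # (increment before the segment)^i * delta^j + delta^i * delta^j / 2.
                s2[i][j] += s1[i] * delta[j] + 0.5 * delta[i] * delta[j]
        for i in range(d):
            s1[i] += delta[i]
    return s1, s2

def squared_displacement(points):
    """|X_1 - X_0|^2 written as a linear combination of iterated integrals."""
    _, s2 = iterated_integrals(points)
    return 2.0 * (s2[0][0] + s2[1][1])

def signed_area(points):
    """Signed area of a closed planar path via second-order iterated integrals."""
    _, s2 = iterated_integrals(points)
    return 0.5 * (s2[0][1] - s2[1][0])

def transform(points, theta, reflect=False):
    """Apply an element of O_2: an optional reflection y -> -y, then a rotation."""
    c, s = math.cos(theta), math.sin(theta)
    out = []
    for x, y in points:
        if reflect:
            y = -y
        out.append((c * x - s * y, s * x + c * y))
    return out
```

On the unit square traversed counterclockwise, `signed_area` returns 1; a rotation leaves it unchanged, while a reflection flips its sign, which is why only the square of the area is invariant under the full group \({\text {O}}_2({\mathbb {R}})\).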

The Fels–Olver moving-frame method, introduced in [15], is a modern generalization of the classical moving-frame method formulated by Cartan [3]. In the general setting of a Lie group G acting on a manifold M, a moving frame is defined as a G-equivariant map from M to G. A moving frame is determined by a choice of cross-section to the orbits of G and hence a unique “canonical form” for elements of M under G. Thus, the moving-frame method provides a framework for algorithmically constructing G-invariants on M that characterize orbits and for determining equivalence of submanifolds of M under G.

The moving-frame method has been used to construct differential invariants of smooth planar and spatial curves under Euclidean, affine, and projective transformations, and, in certain cases, these differential invariants lead to a differential signature, which can be used to classify curves under these transformation groups [2]. The differential signature has been applied in a variety of image science applications from automatic jigsaw puzzle assembly [26] to medical imaging [22]. Also in the realm of image science, the moving-frame method has been used to construct invariants of grayscale images [1, 51].

We consider the induced action of the orthogonal group of rotations on the log-signature of a curve, which provides a compressed representation of a curve obtained by applying the log transform to the iterated-integrals signature, and provide an explicit cross-section for this action. We show that for most curves and any truncation of the curve’s log-signature, the orbit is characterized by the value on this cross-section. As a consequence, a curve is completely determined up to rigid motions and tree-like extensions by the invariantization of its iterated-integrals signature induced by this cross-section.

This yields a constructive method to compare curves up to rigid motions and to evaluate invariants that characterize the iterated-integrals signature under rotations. These invariants are constructed from integrals on the curve and hence are likely to be more noise-resistant than their differential counterparts such as curvature. One can easily set up an artificial example where this is visible. Consider, for instance, the circle of radius \(n^{-3/2}\) given by the parameterization \(\gamma : [0,1] \rightarrow {\mathbb {R}}^2\) where

which as \(n\rightarrow \infty \) converges to the constant curve (at the origin). Now the curvature of this curve does not converge (in fact, it blows up). In contrast, the iterated integrals do all converge (to zero) since \(\gamma \) converges in variation norm. Then, the invariants built out of the iterated integrals (Sect. 5.1) also converge to their value on the zero curve. On this toy example, these integral invariants are hence more “stable”. More precisely, iterated integrals are continuous in p-variation norm for \(p < 2\) [20, Proposition 6.11], and thus cover even paths that are not differentiable. Curvature is continuous only in the (much) stronger \(C^2\)-norm.

Additionally, in contrast to the methods in [13], the resulting set of integral invariants is shown to uniquely characterize the curve under rotations, and moreover, does so in a minimal fashion. Since the iterated-integrals signature of a curve is automatically invariant to translations, this provides rigid motion-invariant features of a curve, which can be used for applications such as machine learning or shape analysis.

This work is structured as follows: In Sect. 2, we provide background on the iterated-integrals signature and the moving-frame method, as well as some facts about algebraic groups and invariants. In Sect. 3, we construct the moving-frame map for paths in \({\mathbb {R}}^2\) and \({\mathbb {R}}^3\) motivating the construction of the moving-frame map for \({\mathbb {R}}^d\). We also provide explicit sets of invariants at these lower dimensions, which might be useful for applications. In Sect. 4, we consider the orthogonal action on the second-order truncation of the log-signature over the complex numbers. Using tools from algebraic invariant theory, we construct the linear space, which will form the basis for the cross-section in the following section. We also provide an explicit set of polynomial invariants that characterize the second-order truncation of the log-signature under the orthogonal group. In Sect. 5.1, we construct a general moving frame for paths in \({\mathbb {R}}^d\), and in Sect. 5.2 we introduce sufficient conditions for the resulting moving-frame invariants to be polynomial, showing these conditions are satisfied for some values of d. Finally, in Sect. 6 we discuss some of the interesting questions that arise as a result of our work.

2 Preliminaries

2.1 The Tensor Algebra

Let \(d \ge 1\) be an integer. A word, or multi-index, over the alphabet \(\{{\mathtt {1}},\dotsc ,{\mathtt {d}}\}\) is a tuple \(w=({\mathtt {w}}_1,\dotsc ,{\mathtt {w}}_n)\in \{{\mathtt {1}},\dotsc ,{\mathtt {d}}\}^n\) for some integer \(n\ge 0\), called its length, which is denoted by |w|. As is usual in the literature, we use the short-hand notation \(w={\mathtt {w}}_1\cdots {\mathtt {w}}_n\), where the \({\mathtt {w}}_i\), words of length one, are called letters. The concatenation of two words v, w is the word \(vw{:}{=}{\mathtt {v}}_1\cdots {\mathtt {v}}_n{\mathtt {w}}_1\cdots {\mathtt {w}}_m\) of length \(|vw|=n+m\). Observe that this product is associative and non-commutative. There is a unique element of length zero, called the empty word and denoted by \({\textsf {e}}\). It satisfies \(w{\textsf {e}}={\textsf {e}}w=w\) for all words w. If we denote by \(T({\mathbb {R}}^d)\) the real vector space spanned by words, the bilinear extension of the concatenation product endows it with the structure of an associative (and non-commutative) algebra. We also note that \(T({\mathbb {R}}^d)\) admits the direct sum decomposition

For \(d=4\), a typical element of \(T({\mathbb {R}}^d)\) might look like

We note that when writing elements of \(T({\mathbb {R}}^d)\), our notation distinguishes the letter \({\mathtt {3}}\) from the real coefficient \(3\) in the second term.

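There is a commutative product on \(T({\mathbb {R}}^d)\), known as the shuffle product, recursively defined by \(w\sqcup \!\sqcup {\textsf {e}}={\textsf {e}}\sqcup \!\sqcup w=w\) and

$$\begin{aligned} v{\mathtt {i}}\sqcup \!\sqcup w{\mathtt {j}}=(v\sqcup \!\sqcup w{\mathtt {j}}){\mathtt {i}}+(v{\mathtt {i}}\sqcup \!\sqcup w){\mathtt {j}}, \end{aligned}$$

where \(v{\mathtt {i}}\) denotes the concatenation of the word v and the letter \({\mathtt {i}}\), and analogously for \(w{\mathtt {j}}\).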
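As an illustration, the recursion for the shuffle product (peel off the last letter of either word, shuffle the rest, and re-append the letter) can be sketched in a few lines. This is a minimal sketch in which words are represented as tuples of integer letters and linear combinations as `Counter` objects; the representation is an assumption made for this example.

```python
from collections import Counter

def shuffle(u, v):
    """Shuffle product of two words (tuples of letters), returned as a
    Counter mapping each resulting word to its integer coefficient."""
    u, v = tuple(u), tuple(v)
    if not u:                      # e sh v = v
        return Counter({v: 1})
    if not v:                      # u sh e = u
        return Counter({u: 1})
    out = Counter()
    # (u' i) sh (v' j) = (u' sh v' j) i + (u' i sh v') j
    for w, c in shuffle(u[:-1], v).items():
        out[w + (u[-1],)] += c
    for w, c in shuffle(u, v[:-1]).items():
        out[w + (v[-1],)] += c
    return out
```

For instance, `shuffle((1,), (2,))` yields the words `(1, 2)` and `(2, 1)`, each with coefficient 1, and `shuffle((1, 2), (1, 2))` yields `(1, 2, 1, 2)` with coefficient 2 and `(1, 1, 2, 2)` with coefficient 4.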

Example 2.1

Suppose \(d=2\). The first few non-trivial shuffle products are

The commutator bracket \([u,v]{:}{=}uv-vu\) endows \(T({\mathbb {R}}^d)\) with the structure of a Lie algebra. The free Lie algebra over \({\mathbb {R}}^d\), denoted by \({{\mathfrak {g}}}({\mathbb {R}}^d)\), can be realized as the following subspace of \(T({\mathbb {R}}^d)\),

where \(W_1{:}{=}{\text {span}}_{\mathbb {R}}\{{\mathtt {1}},\dotsc ,{\mathtt {d}}\} \cong {\mathbb {R}}^d\) and

There are multiple choices of bases for \({{\mathfrak {g}}}({\mathbb {R}}^d)\), but we choose to work with the Lyndon basis (see [45] for further details). A Lyndon word is a word \(h\) such that whenever \(h=uv\), with \(u,v\ne {\textsf {e}}\), then \(u<v\) for the lexicographical order. We denote the set of Lyndon words over the alphabet \(\{{\mathtt {1}},\dotsc ,{\mathtt {d}}\}\) by \({\mathscr {L}}_{d}\). In particular, \(h\) with \(|h|\ge 2\) is Lyndon if and only if there exist non-empty Lyndon words \(u\) and \(v\) such that \(u<v\) and \(h=uv\). Although there might be multiple choices for this factorization, the one with \(v\) as long as possible is called the standard factorization of \(h\). The Lyndon basis \(b_{{\mathtt {h}}}\) is recursively defined by setting \(b_{{\mathtt {i}}}={\mathtt {i}}\) and \(b_{h}=[b_u,b_v]\) for all Lyndon words \(h\) with \(|h|\ge 2\), where \(h=uv\) is the standard factorization.

Example 2.2

Suppose \(d=2\). The Lyndon words up to length 4, their standard factorizations and the associated basis elements are shown in Table 1.
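The Lyndon words, their standard factorizations and the bracketings defining the basis elements can be enumerated mechanically. The sketch below is one way to do so; it assumes the equivalent characterization of a Lyndon word as a word that is strictly smaller than each of its proper suffixes, and all function names are hypothetical.

```python
from itertools import product

def is_lyndon(w):
    """True if the non-empty word w (a tuple) is strictly smaller, in
    lexicographic order, than each of its proper suffixes."""
    return len(w) > 0 and all(w < w[i:] for i in range(1, len(w)))

def standard_factorization(w):
    """Split a Lyndon word of length >= 2 as (u, v), where v is the longest
    proper suffix of w that is itself a Lyndon word."""
    for i in range(1, len(w)):
        if is_lyndon(w[i:]):
            return w[:i], w[i:]
    raise ValueError("standard factorization needs a word of length >= 2")

def bracket(w):
    """Nested-bracket string describing the Lyndon basis element b_w."""
    if len(w) == 1:
        return str(w[0])
    u, v = standard_factorization(w)
    return f"[{bracket(u)},{bracket(v)}]"

def lyndon_words(d, max_len):
    """All Lyndon words over the alphabet {1, ..., d} up to length max_len."""
    letters = range(1, d + 1)
    return [w for n in range(1, max_len + 1)
            for w in product(letters, repeat=n) if is_lyndon(w)]
```

For \(d=2\) and length at most 4, this produces the eight words 1, 2, 12, 112, 122, 1112, 1122, 1222; for example, `bracket((1, 1, 2))` gives `[1,[1,2]]` and `bracket((1, 2, 2))` gives `[[1,2],2]`.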

Elements of the dual space \(T(\!({\mathbb {R}}^d)\!){:}{=}T({\mathbb {R}}^d)^*\) can be identified with formal word series. For \(F\in T(\!({\mathbb {R}}^d)\!)\), we write

In particular, we have no growth requirement for the coefficients \(\langle F,w\rangle \in {\mathbb {R}}\). The above expression is meant only as a notation for treating the values of \(F\) on words as a single object. This space can be endowed with a multiplication given, for \(F,G\in T(\!({\mathbb {R}}^d)\!)\), by

Observe that since there is a finite number of pairs of words \(u,v\) such that \(uv=w\), the coefficients of \(FG\) are well defined for all \(w\), so the above formula is an honest element of \(T(\!({\mathbb {R}}^d)\!)\). It turns out that this product is dual to the deconcatenation coproduct \(\varDelta :T({\mathbb {R}}^d)\rightarrow T({\mathbb {R}}^d)\otimes T({\mathbb {R}}^d)\) given by

in the sense that

for all words. This formula is nothing but Eq. (2) componentwise. In this sense, one can say that \(\varDelta \) is the transposition of the concatenation product.

More explicitly, if \(w={\mathtt {w}}_1\cdots {\mathtt {w}}_n\) then

One can then think of the coefficient \(\langle FG,w\rangle \) as the coefficient in front of the word \(w\) in the product \(FG\), when the latter is computed by concatenation of words and then re-expanded in the word basis.
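Both descriptions of the coefficient \(\langle FG,w\rangle \) are easy to compare on finitely supported series. The following is a minimal sketch in which a series is stored as a dictionary from words (tuples) to coefficients and the product is truncated at a chosen word length; the data layout and the names `multiply` and `coefficient` are assumptions for illustration.

```python
from collections import defaultdict

def multiply(F, G, max_len):
    """Concatenation product of two word series, keeping only words of
    length at most max_len."""
    out = defaultdict(int)
    for u, a in F.items():
        for v, b in G.items():
            if len(u) + len(v) <= max_len:
                out[u + v] += a * b
    return dict(out)

def coefficient(F, G, w):
    """<FG, w> computed from the deconcatenation of w, i.e. by summing
    <F, u><G, v> over all splittings w = uv (including empty u or v)."""
    return sum(F.get(w[:k], 0) * G.get(w[k:], 0) for k in range(len(w) + 1))
```

With `F = {(): 1, (1,): 2, (1, 2): 3}` and `G = {(): 1, (2,): -1, (2, 1): 5}`, both routes give the coefficient 1 in front of the word `(1, 2)`: the splittings contribute \(0 - 2 + 3\).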

Example 2.3

Suppose \(d=2\), and let

be two elements in \(T(\!({\mathbb {R}}^d)\!)\). Then, their product is given by:

Remark 2.4

It is well known (see, for example, [37]) that \(T({\mathbb {R}}^d)\) with the shuffle product \(\sqcup \!\sqcup \), the coproduct \(\varDelta \) and canonical unit, counit and antipode, forms a Hopf algebra.

There are two distinct subsets of \(T(\!({\mathbb {R}}^d)\!)\) that will be important in what follows. The first one is the subspace \({{\mathfrak {g}}}(\!({\mathbb {R}}^d)\!)\) of infinitesimal characters, formed by linear maps \(F\) such that \(\langle F,u\sqcup \!\sqcup v\rangle =0\) whenever \(u\) and \(v\) are non-empty words, and such that \(\langle F,{\textsf {e}}\rangle =0\). It can be identified with the dual space

It is a Lie algebra under the commutator bracket \([F,G]=FG-GF\). The second one is the set \({{\mathscr {G}}}(\!({\mathbb {R}}^d)\!)\) of characters, i.e., linear maps \(F\) such that \(\langle F,u\sqcup \!\sqcup v\rangle =\langle F,u\rangle \langle F,v\rangle \) for all \(u,v\in T({\mathbb {R}}^d)\).

We may define an exponential map \(\exp :{{\mathfrak {g}}}(\!({\mathbb {R}}^d)\!)\rightarrow {{\mathscr {G}}}(\!({\mathbb {R}}^d)\!)\) by its power series

On a single word, the map is given by

and since \(F\) vanishes on the empty word, all terms with \(n>|w|\) also vanish, so that the sum is always finite. Therefore, \(\exp (F)\) is a well-defined element of \(T(\!({\mathbb {R}}^d)\!)\).

Example 2.5

Suppose that \(d=2\) and consider

First, we determine conditions on \(\alpha ,\beta ,\gamma ,\delta \in {\mathbb {R}}\) so that \(F\in {{\mathfrak {g}}}(\!({\mathbb {R}}^2)\!)\). Since the coefficient \(\langle F,w\rangle \) vanishes for \(|w|>2\), the only non-trivial shuffle products to check are of the form \({\mathtt {i}}\sqcup \!\sqcup {\mathtt {j}}\) for \({\mathtt {i}},{\mathtt {j}}\in \{1,2\}\). In particular, this means that there are no restrictions on \(\alpha ,\beta \), and

so we must have \(\eta =\lambda =0\) and \(\gamma +\delta =0\). Therefore,

Note that \(F\) is expressed in the Lyndon basis (see Example 2.2).

Now, using Eq. (4) (or equivalently Eq. (5)) we may compute

The reader can check that \(\exp (F)\in {{\mathscr {G}}}(\!({\mathbb {R}}^d)\!)\).

It can be shown that the image of \(\exp \) is equal to \({{\mathscr {G}}}(\!({\mathbb {R}}^d)\!)\) and that it is a bijection onto its image [38], with inverse \(\log :{{\mathscr {G}}}(\!({\mathbb {R}}^d)\!)\rightarrow {{\mathfrak {g}}}(\!({\mathbb {R}}^d)\!)\) defined by

where \(\varepsilon \) is the unique linear map such that \(\langle \varepsilon ,{\textsf {e}}\rangle =1\) and zero otherwise.
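Since both power series terminate on every word, the truncated exponential and logarithm can be evaluated exactly. The sketch below assumes series are stored as dictionaries from words to rational coefficients and works up to a truncation length `N`; the helper names are hypothetical.

```python
from fractions import Fraction

def multiply(F, G, N):
    """Concatenation product of two word series, truncated at word length N."""
    out = {}
    for u, a in F.items():
        for v, b in G.items():
            if len(u) + len(v) <= N:
                out[u + v] = out.get(u + v, 0) + a * b
    return out

def add(F, G, scale=1):
    """Return F + scale * G."""
    out = dict(F)
    for w, c in G.items():
        out[w] = out.get(w, 0) + scale * c
    return out

def exp(F, N):
    """exp(F) = sum_{n >= 0} F^n / n!, truncated at length N.
    Assumes <F, e> = 0, so that terms with n > N do not contribute."""
    result, term = {(): Fraction(1)}, {(): Fraction(1)}
    for n in range(1, N + 1):
        term = {w: c / n for w, c in multiply(term, F, N).items()}  # F^n / n!
        result = add(result, term)
    return result

def log(G, N):
    """log(G) = sum_{n >= 1} (-1)^(n-1) / n * (G - eps)^n, truncated at
    length N. Assumes <G, e> = 1."""
    H = {w: c for w, c in G.items() if w != ()}   # G - eps
    result, power = {}, {(): Fraction(1)}
    for n in range(1, N + 1):
        power = multiply(power, H, N)             # (G - eps)^n
        result = add(result, power, Fraction((-1) ** (n - 1), n))
    return result
```

For example, with \(F=2\cdot {\mathtt {1}}+3\cdot {\mathtt {2}}+5\cdot ({\mathtt {12}}-{\mathtt {21}})\), stored as `{(1,): 2, (2,): 3, (1, 2): 5, (2, 1): -5}`, the series `exp(F, 2)` has coefficient 8 on `(1, 2)` and -2 on `(2, 1)`. Their sum equals the product \(2\cdot 3\) of the level-one coefficients, as the character property requires, and `log` recovers `F` up to zero entries.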

Finally, we remark on some freeness properties of the tensor algebra and its subspaces. Below,

denotes the reduced tensor algebra over \({\mathbb {R}}^d\). The following result can be found in [18, Corollary 2.1].

Proposition 2.6

Let \(\phi :T^+({\mathbb {R}}^d)\rightarrow {\mathbb {R}}^e\) be a linear map. There exists a unique extension \({\tilde{\phi }}:T({\mathbb {R}}^d)\rightarrow T({\mathbb {R}}^e)\) such that

and \(\pi \circ \tilde{\phi }=\phi \), where \(\pi :T({\mathbb {R}}^e)\rightarrow {\mathbb {R}}^e\) denotes the projection of \(T({\mathbb {R}}^e)\) onto \({\mathbb {R}}^e\), orthogonal to \({\mathbb {R}}{\textsf {e}}\) and \(\bigoplus _{n\ge 2}{\text {span}}_{\mathbb {R}}\{w:|w|=n\}\), and \(\varDelta \) denotes the deconcatenation coproduct (3). Moreover, the extension is explicitly given by

By transposition, we obtain a unique map \(\varPhi :T(\!({\mathbb {R}}^e)\!)\rightarrow T(\!({\mathbb {R}}^d)\!)\) such that \(\langle \varPhi (F),w\rangle {:}{=}\langle F,{\tilde{\phi }}(w)\rangle \), i.e.,

In particular, we have that

for all \(F,G\in T(\!({\mathbb {R}}^e)\!)\). Moreover, by Eq. (6),

2.2 The Iterated-Integrals Signature

The iterated-integrals signature of (smooth enough) paths was introduced by Chen for homological considerations on loop space [7]. It played a vital role in the rough path analysis of Lyons, a pathwise approach to stochastic analysis [35]. Recently, it has found applications in statistics and machine learning (see, e.g., [9] and references therein), where it serves as a method of feature extraction for possibly non-smooth time-dependent data, as well as in shape analysis [5, 33].

Let \(Z= (Z^{\mathtt {1}}, \dots , Z^{\mathtt {d}}) :[0,1]\rightarrow {\mathbb {R}}^d\) be an absolutely continuous path. Given a word \(w={\mathtt {w}}_1\cdots {\mathtt {w}}_n\), define

This definition has a unique linear extension to \(T({\mathbb {R}}^d)\). We obtain thus an element \({{\,\mathrm{IIS}\,}}(Z) \in T(\!({\mathbb {R}}^d)\!)\), called the iterated-integrals signature (IIS) of \(Z\).

It was shown by Ree [47] that the coefficients of \({{\,\mathrm{IIS}\,}}(Z)\) satisfy the so-called shuffle relations:

In other words, \({{\,\mathrm{IIS}\,}}(Z)\in {{\mathscr {G}}}(\!({\mathbb {R}}^d)\!)\).

As a consequence of the shuffle relation, one obtains that the log-signature \(\log ({{\,\mathrm{IIS}\,}}(Z))\) is a Lie series, i.e., an element of \({{\mathfrak {g}}}(\!({\mathbb {R}}^d)\!)\). Moreover, the identity \({{\,\mathrm{IIS}\,}}(Z) = \exp \left( \log ( {{\,\mathrm{IIS}\,}}(Z) ) \right) \) holds. The log-signature therefore contains the same amount of information as the signature itself; in fact, it is a minimal (linear) depiction of it: there are no functional relations between the coefficients of a general log-signature.

The entire iterated-integrals signature \({{\,\mathrm{IIS}\,}}(Z)\) is an infinite-dimensional object and hence can never actually be numerically computed. We now provide more detail on the truncated, finite-dimensional setting.

For each integer \(n\ge 1\), the subspace \(I_n\subset T(\!({\mathbb {R}}^d)\!)\) consisting of the formal series \(F\) such that \(\langle F,w\rangle =0\) for all words with \(|w|\le n\) is a two-sided ideal, that is, the inclusion

holds. Therefore, the quotient space \(T_{\le n}(\!({\mathbb {R}}^d)\!){:}{=}T(\!({\mathbb {R}}^d)\!)/I_n\) inherits an algebra structure from \(T(\!({\mathbb {R}}^d)\!)\). Moreover, it can be identified with the direct sum

We denote by \({\text {proj}}_{\le n}:T(\!({\mathbb {R}}^d)\!)\rightarrow T_{\le n}(\!({\mathbb {R}}^d)\!)\) the canonical projection.

Denote by \({\mathfrak {g}}_{\le n}(\!({\mathbb {R}}^{d})\!)\) the free step-\(n\) nilpotent Lie algebra (over \({\mathbb {R}}^d\)). It can be realized as the following subspace of \(T_{\le n}(\!({\mathbb {R}}^d)\!)\), see [20, Section 7.3],

where, as before, \(W_1{:}{=}{\text {span}}_{\mathbb {R}}\{ {\mathtt {i}} : i =1 ,\dots , d\} \cong {\mathbb {R}}^d\) and \(W_{k+1}{:}{=}[W_1,W_k]\). In the case \(n=2\), this reduces to

where we denote with \(\mathfrak {so}(d,{\mathbb {R}})\) the space of skew-symmetric \(d\times d\) matrices. Indeed, an isomorphism is given by:

We remark that the coefficients \(c_{i}\) and \(c_{ij}\) are the coordinates with respect to the Lyndon basis (see Example 2.2).

The linear space \({\mathfrak {g}}_{\le n}(\!({\mathbb {R}}^{d})\!)\) is in bijection with its image under the exponential map. This image, denoted \({{\mathscr {G}}}_{\le n}({\mathbb {R}}^d){:}{=}\exp {\mathfrak {g}}_{\le n}(\!({\mathbb {R}}^{d})\!)\), is the free step-\(n\) nilpotent group (over \({\mathbb {R}}^d\)). It is exactly the set of all points in \(T_{\le n}({\mathbb {R}}^d)\) that can be reached by the truncated signature map, that is (see [20, Theorem 7.28])

(Equivalently, the truncated log-signature completely fills out the truncated Lie algebra \({{\mathfrak {g}}}_{\le n}(\!({\mathbb {R}}^d)\!)\).)

We have

where \(c_{h}(Z)=\langle {{\,\mathrm{IIS}\,}}(Z),\zeta _h\rangle \) for uniquely determined \(\zeta _h\in T({\mathbb {R}}^d)\). This inspires us to also denote the coordinates of an arbitrary \({{\textbf {c}}}_{\le n}\in {\mathfrak {g}}_{\le n}(\!({\mathbb {R}}^{d})\!)\) by \(c_{h}\), where analogously

Example 2.7

(Moment curve)

We consider the moment curve in dimension 3, which is the curve \(Z: [0,1] \rightarrow {\mathbb {R}}^3\) given as

It traces out part of the twisted cubic [23, Example 1.10], see also [29, Sect. 15].

We calculate, as an example,

The entire step-2 truncated signature is:

and the step-2 truncated log-signature is:

where

is seen to be skew-symmetric, as expected from (9).
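The step-2 quantities of a polynomial path can be computed exactly with rational arithmetic. The sketch below assumes that the moment curve is the standard one, \(Z_t=(t,t^2,t^3)\), and that the coefficient on a two-letter word \({\mathtt {ij}}\) is \(\int _0^1 Z^{i}_t\,dZ^{j}_t\) for a path starting at the origin; the polynomial helpers are hypothetical and written for this example.

```python
from fractions import Fraction

def poly_mul(p, q):
    """Product of two polynomials given as coefficient lists (lowest degree first)."""
    out = [0] * (len(p) + len(q) - 1)
    for a, x in enumerate(p):
        for b, y in enumerate(q):
            out[a + b] += x * y
    return out

def poly_diff(p):
    """Derivative of a polynomial."""
    return [k * c for k, c in enumerate(p)][1:] or [0]

def poly_int01(p):
    """Integral of a polynomial over [0, 1], as an exact fraction."""
    return sum(Fraction(c, k + 1) for k, c in enumerate(p))

def step2_signature(path):
    """Levels 1 and 2 of the signature of a polynomial path with Z_0 = 0."""
    level1 = [poly_int01(poly_diff(p)) for p in path]
    level2 = [[poly_int01(poly_mul(p, poly_diff(q))) for q in path] for p in path]
    return level1, level2

def step2_log_signature(path):
    """Level 1 and the skew-symmetric level-2 matrix of the log-signature."""
    level1, level2 = step2_signature(path)
    d = len(path)
    area = [[(level2[i][j] - level2[j][i]) / 2 for j in range(d)] for i in range(d)]
    return level1, area

# Assumed moment curve Z_t = (t, t^2, t^3), one coefficient list per component.
moment_curve = [[0, 1], [0, 0, 1], [0, 0, 0, 1]]
```

Under these assumptions, the level-2 coefficient on \({\mathtt {ij}}\) is \(j/(i+j)\), and the Lyndon coordinates of the step-2 log-signature come out as \(c_{12}=1/6\), \(c_{13}=1/4\) and \(c_{23}=1/10\), with a skew-symmetric second level.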

2.3 Invariants

In this section, let G be a subgroup of the general linear group acting linearly on \({\mathbb {R}}^d\). In this work, we are interested in functions on paths in \({\mathbb {R}}^d\) that factor through the signature and that are invariant under this action on the path’s ambient space. While we mostly focus on \(G = {\text {O}}_d({\mathbb {R}})\), the results in this section apply to any subgroup of the general linear group acting linearly. The action of \(A\in G\) on an \({\mathbb {R}}^d\)-valued path \(Z\) is given by \(AZ:[0,1]\rightarrow {\mathbb {R}}^d\), \(t\mapsto AZ_t\).

Using Proposition 2.6, we can extend the action of G on \({\mathbb {R}}^d\) to a diagonal action on words. The matrix \(A^\top \) acts on single letters by

and we set \(\phi _{A^{\top }}(w)=0\) whenever \(|w|\ge 2\). By Proposition 2.6, this induces an endomorphism \({\tilde{\phi }}_{A^\top }:T({\mathbb {R}}^d)\rightarrow T({\mathbb {R}}^d)\), satisfying

In particular, \({\tilde{\phi }}_{A^\top }(u{\mathtt {i}})={\tilde{\phi }}_{A^\top }(u){\tilde{\phi }}_{A^\top }({\mathtt {i}})\) for all words \(u\) and letters \({\mathtt {i}}\in \{{\mathtt {1}},\dotsc ,{\mathtt {d}}\}\). In order to be consistent with the notation in [13], we will denote its transpose map (\(\varPhi _A\) in Proposition 2.6) just by \(A:T(\!({\mathbb {R}}^d)\!)\rightarrow T(\!({\mathbb {R}}^d)\!)\).

Lemma 2.8

The map \({\tilde{\phi }}_{A^\top }:T({\mathbb {R}}^d)\rightarrow T({\mathbb {R}}^d)\) is a shuffle morphism, that is,

for all words \(u,v\).

Proof

We proceed by induction on \(|u|+|v|\ge 0\). If \(|u|+|v|=0\), then necessarily \(u=v={\textsf {e}}\), and the identity becomes

which is true by definition. Now, suppose that the identity is true for all words \(u',v'\) with \(|u'|+|v'|<n\). If \(|u|+|v|=n\) we suppose, without loss of generality, that \(u=u'{\mathtt {i}}, v=v'{\mathtt {j}}\) for some (possibly empty) words \(u',v'\) with \(|u'|+|v'|<n\). Then,

\(\square \)

Remark 2.9

Lemma 2.8 is a special case of [10, Theorem 1.2].

Corollary 2.10

Let \(A \in G\).

(i) The character group is invariant under \(A\), that is, \(A\cdot {{\mathscr {G}}}(\!({\mathbb {R}}^d)\!)\subset {{\mathscr {G}}}(\!({\mathbb {R}}^d)\!)\).

(ii) The restriction of \(A\) to \({{\mathfrak {g}}}(\!({\mathbb {R}}^d)\!)\) is a Lie endomorphism. In particular, the free Lie algebra is invariant under \(A\), that is, \(A\cdot {{\mathfrak {g}}}(\!({\mathbb {R}}^d)\!)\subset {{\mathfrak {g}}}(\!({\mathbb {R}}^d)\!)\).

(iii) \(\log : {{\mathscr {G}}}(\!({\mathbb {R}}^d)\!)\rightarrow {{\mathfrak {g}}}(\!({\mathbb {R}}^d)\!)\) is an equivariant map.

Proof

-

i.

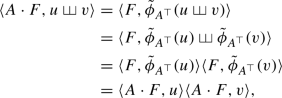

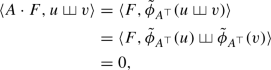

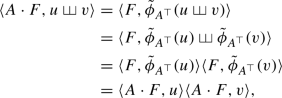

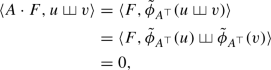

Let \(F\in {{\mathscr {G}}}(\!({\mathbb {R}}^d)\!)\), and \(u,v\) be words. Then

that is, \(A\cdot F\in {{\mathscr {G}}}(\!({\mathbb {R}}^d)\!)\).

(ii) Since \(A\cdot (FG)=(A\cdot F)(A\cdot G)\), \(A\) is automatically a Lie morphism. Now we check that \(A\cdot F\in {\mathfrak {g}}(\!({\mathbb {R}}^d)\!)\) whenever \(F\in \mathfrak g(\!({\mathbb {R}}^d)\!)\). It is clear that \(\langle A\cdot F,{\textsf {e}}\rangle =\langle F,{\textsf {e}}\rangle =0\). Now, if \(u,v\) are non-empty words, then

i.e. \(A\cdot F\in {\mathfrak {g}}(\!({\mathbb {R}}^d)\!)\).

(iii) Let \(G\in {{\mathscr {G}}}(\!({\mathbb {R}}^d)\!)\). Then, since \(A\cdot \varepsilon =\varepsilon \) we get

$$\begin{aligned} \log ( A \cdot G )&= \sum _{n=1}^\infty \frac{(-1)^{n-1}}{n}(A \cdot G-\varepsilon )^n \\&= A \cdot \sum _{n=1}^\infty \frac{(-1)^{n-1}}{n}(G-\varepsilon )^n \\&= A \cdot \log (G). \end{aligned}$$

\(\square \)

In particular, we easily see that (see also [13, Lemma 3.3])

The same is true for the truncated versions, and we note that, in the special case of \({\mathfrak {g}}_{\le 2}(\!({\mathbb {R}}^{d})\!)\), under the isomorphism in Eq. (10), the action has the simple form

where the operations on the right-hand side are matrix-vector resp. matrix–matrix multiplication. Indeed, for the first level we have that

In the same vein, we have that

It follows from Corollary 2.10 and (12) that \(\log ( {{\,\mathrm{IIS}\,}}(A Z) ) = A\cdot \log ( {{\,\mathrm{IIS}\,}}(Z) )\). As already remarked, \(\log \) is a bijection (with inverse \(\exp \)). To obtain invariant expressions in terms of \({{\,\mathrm{IIS}\,}}(Z)\), it is hence enough to obtain invariant expressions in terms of \(\log ({{\,\mathrm{IIS}\,}}(Z))\). Going this route has the benefit of working on a linear object. To be more specific, \({{\,\mathrm{IIS}\,}}(Z)\) is, owing to the shuffle relation, highly redundant. As an example in \(d=2\),

Now, both of these expressions are invariant under \({\text {O}}_2({\mathbb {R}})\). The left-hand side is a nonlinear expression in the signature, whereas the right-hand side is a linear one. To avoid dealing with this kind of redundancy, we work with the log-signature. We note that in [13] the linear invariants of the signature itself are presented. Owing to the shuffle relation, this automatically yields (all) polynomial invariants. But, as just mentioned, it also yields a lot of redundant information.
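The equivariance of the step-2 log-signature can be verified numerically on a piecewise-linear path. The sketch below assumes that, under the identification with pairs \((v,M)\) of a vector and a skew-symmetric matrix, the action of an orthogonal matrix \(A\) reads \((v,M)\mapsto (Av,AMA^\top )\); the helper names are hypothetical.

```python
import math

def log_signature_2(points):
    """Step-2 log-signature (v, M) of a piecewise-linear path: v is the
    total increment and M the skew-symmetric matrix of signed areas."""
    d = len(points[0])
    v = [0.0] * d
    s2 = [[0.0] * d for _ in range(d)]
    for p, q in zip(points, points[1:]):
        delta = [b - a for a, b in zip(p, q)]
        for i in range(d):
            for j in range(d):
                # second-order iterated integral, accumulated segment by segment
                s2[i][j] += v[i] * delta[j] + 0.5 * delta[i] * delta[j]
        v = [a + b for a, b in zip(v, delta)]
    M = [[0.5 * (s2[i][j] - s2[j][i]) for j in range(d)] for i in range(d)]
    return v, M

def matmul(A, B):
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]

def transpose(A):
    return [list(row) for row in zip(*A)]

def apply(A, x):
    return [sum(a * b for a, b in zip(row, x)) for row in A]

def rotation_z(theta):
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

def rotation_x(theta):
    c, s = math.cos(theta), math.sin(theta)
    return [[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]]
```

Computing `log_signature_2` on the transformed points `[apply(A, p) for p in points]` and comparing with `apply(A, v)` and `matmul(matmul(A, M), transpose(A))` gives agreement up to floating-point error for any orthogonal `A`, for instance a product of the two rotations above.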

Example 2.11

We continue with Example 2.7. The rotation

results in the curve

Its step-2 truncated signature is

The step-2 truncated log-signature is

In the present work, we consider general, nonlinear expressions of the log-signature. That way, we use the economical form of the log-signature, while still providing a complete—in a precise sense—set of nonlinear invariants.

2.4 Moving-Frame Method

We now provide a brief introduction to the Fels–Olver moving-frame method introduced in [15], a modern generalization of the classical moving-frame method formulated by Cartan [4]. For a comprehensive overview of the method and survey of many of its applications, see [14, 43]. We will assume in this subsection that G is a finite-dimensional Lie group acting smoothly on an m-dimensional manifold M.

Definition 2.12

A moving frame for the action of G on M is a smooth map \(\rho :M \rightarrow G\) such that \(\rho (g\cdot z) = \rho (z) \cdot g^{-1}\).

In general, one can define a moving frame as a smooth G-equivariant map \(\rho :M\rightarrow G\). For simplicity, we assume G acts on itself by right multiplication; this is often referred to as a right moving frame. A moving frame can be constructed through the use of a cross-section to the orbits of the action of G on M.

Definition 2.13

A cross-section for the action of G on M is a submanifold \({\mathcal {K}}\subset M\) such that \({\mathcal {K}}\) intersects each orbit transversally at a unique point.

Definition 2.14

The action of G is free if the stabilizer \(G_z\) of any point \(z\in M\) is trivial, i.e.,

where \({\text {id}}\in G\) denotes the identity transformation.

The following result appears in much of the previous literature on moving frames (see, for instance, [42, Thm. 2.4]).

Theorem 2.15

Let G act on M and assume that

(\(*\)) the action is free, and around each point \(z\in M\) there exist arbitrarily small neighborhoods whose intersection with each orbit is pathwise-connected.

If \({\mathcal {K}}\) is a cross-section, then the map \(\rho : M\rightarrow G\) defined by sending z to the unique group element \(g\in G\) such that \(g\cdot z\in {\mathcal {K}}\) is a moving frame.

Remark 2.16

The equivariance of the map \(\rho :M\rightarrow G\) such that \(\rho (z)\cdot z\in {\mathcal {K}}\) can be seen from the fact that \(\rho (z) \cdot z = \rho (g\cdot z) \cdot (g\cdot z)\) for any \(g\in G\). Since the action of G is free, this implies that \( \rho (z)= \rho (g\cdot z) \cdot g\), and hence, \(\rho \) satisfies Definition 2.12.

Similarly, in this setting, a moving frame \(\rho \) specifies a cross-section defined by \({\mathcal {K}}= \{\rho (z)\cdot z \in M \}\). This construction can be interpreted as a way to assign a “canonical form” to points \(z\in M\) under the action of G, thus producing invariant functions on M under G.

Definition 2.17

Let \(\rho : M\rightarrow G\) be a moving frame. The invariantization of a function \(F:M\rightarrow {\mathbb {R}}\) with respect to \(\rho \) is the invariant function \(\iota (F)\) defined by

Given a moving frame \(\rho \) and local coordinates \(z=(z_1,\ldots ,z_m)\) on M, the invariantizations of the coordinate functions \(\iota (z_1),\ldots , \iota (z_m)\) are the fundamental invariants associated with \(\rho \). In particular, we can compute \(\iota (F)\) by

Since \(\iota (I)(z)=I(z)\) for any invariant function I, the fundamental invariants provide a functionally generating set of invariants for the action of G on M. In general, we will call a set of invariants \({\mathfrak {I}}=\{J_1,\ldots ,J_m\}\) fundamental if it functionally generates all invariants, i.e., for any invariant I there is a function \(I'\) such that

Now, suppose further that G is an r-dimensional Lie group and that \(\rho \) is the moving frame associated with a coordinate cross-section \({\mathcal {K}}\) defined by equations

for some constants \(c_1,\ldots , c_r\). Then the first r fundamental invariants are the phantom invariants \(c_1,\ldots , c_r\), while the remaining \(m-r\) invariants \(\{I_1,\ldots , I_{m-r}\}\) form a functionally independent generating set. In this case, we can see that two points \(z_1,z_2\in M\) lie in the same orbit if and only if

Example 2.18

Consider the canonical action of \({\text {SO}}_2({\mathbb {R}})\) on \({\mathbb {R}}^2\setminus \{(0,0)\}\). This action satisfies the assumptions of Theorem 2.15, and a cross-section to the orbits is given by

The unique group element taking a point to the intersection of its orbit with \({\mathcal {K}}\) is the rotation (see Fig. 1)

The fundamental invariants associated with the moving frame \(\rho :{\mathbb {R}}^2\backslash \{(0,0)\}\rightarrow {\text {SO}}_2({\mathbb {R}})\) are given by

Thus, any invariant function for this action can be written as a function of \(\iota (y)\), the Euclidean norm. One can check that indeed for an invariant I(x, y), one has \(I(x,y)=I(0,\sqrt{x^2+y^2})\). This additionally implies that two points are related by a rotation if and only if they have the same Euclidean norm.
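This example is small enough to check directly. The sketch below assumes that the cross-section is the positive \(y\)-axis, as suggested by the identity \(I(x,y)=I(0,\sqrt{x^2+y^2})\), and that the moving frame sends \((x,y)\) to the rotation with rows \((y,-x)/r\) and \((x,y)/r\), where \(r=\sqrt{x^2+y^2}\); the function names are hypothetical.

```python
import math

def rho(z):
    """Moving frame: the rotation matrix sending z = (x, y) != (0, 0) to the
    point (0, |z|) on the positive y-axis (the assumed cross-section)."""
    x, y = z
    r = math.hypot(x, y)
    return [[y / r, -x / r], [x / r, y / r]]

def act(g, z):
    """Action of a 2x2 matrix g on a point z of the plane."""
    return (g[0][0] * z[0] + g[0][1] * z[1], g[1][0] * z[0] + g[1][1] * z[1])

def rotation(theta):
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s], [s, c]]

def matmul(A, B):
    return [[sum(A[i][k] * B[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def invariantize(F):
    """Invariantization: evaluate F at the canonical form rho(z) . z."""
    return lambda z: F(act(rho(z), z))
```

For example, `act(rho((3.0, 4.0)), (3.0, 4.0))` is the point \((0,5)\) up to rounding, and the invariantization of the coordinate function \(y\) is the Euclidean norm. The right-equivariance \(\rho (g\cdot z)=\rho (z)\cdot g^{-1}\) can be checked by comparing `rho(act(g, z))` with `matmul(rho(z), rotation(-theta))` for `g = rotation(theta)`.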

In practice, it is often difficult, or impossible, to find a global cross-section, and thus a global moving frame, to the orbits of G on M. For instance, in the above example, the origin was removed from \({\mathbb {R}}^2\) to ensure freeness of the action. If the action of G on M satisfies condition (\(*\)) from Theorem 2.15, then the existence of a local moving frame around each point \(z\in M\) is guaranteed by [15, Thm. 4.4]. In this case, the moving frame is a map \(\rho : U\rightarrow V\) from a neighborhood \(U\subset M\) of z to a neighborhood \(V\subset G\) of the identity. The fundamental invariants produced are also local in nature and thus only guaranteed to be invariant on U for elements \(g\in V\).

The condition (\(*\)) in Theorem 2.15 can be relaxed in certain cases. In [28, Sec. 1], the authors outline a method to construct a fundamental set of local invariants for actions of G that are only semi-regular, meaning that all orbits have the same dimension. In particular, Theorem 1.6 in [28] states that for a semi-regular action of G on M, there exists a local coordinate cross-section about every point \(z\in M\). In a neighborhood U containing z, such a linear space intersects transversally the connected component containing \({\overline{z}}\) of the orbit \(G\cdot {\overline{z}}\) at a unique point for each \({\overline{z}}\in U\) and is of complementary dimension to the orbits of the action.

Remark 2.19

The algebraic actions that we define in the next section are automatically semi-regular on a Zariski-open subset of the target space (Proposition 2.20(c)), and hence, a local cross-section exists around any point in this subset. Since orbits are algebraic subsets, a local coordinate cross-section is a submanifold of complementary dimension (to the dimension of orbits) intersecting each orbit about z transversally and hence in finitely many points. If every sufficiently small neighborhood about z does not have pathwise-connected intersection with each orbit, a local cross-section about z necessarily intersects some orbit at infinitely many points, and hence, a free algebraic group action necessarily satisfies condition (\(*\)) from Theorem 2.15.

2.5 Algebraic Groups and Invariants

In this work, we will be in the setting of an algebraic group G acting rationally on a variety X. In other words, G is an algebraic variety equipped with a group structure, and the action of G on X is given by a rational map \(\varPhi : G\times X \rightarrow X\). Here we outline some key facts and results about algebraic group actions and the invariants of such actions, following [46] for much of our exposition. Unless specified otherwise, G and X are both varieties over the algebraically closed field \({\mathbb {C}}\).

The orbit \(G \cdot p\) of a point \(p\in X\) under G is the image of \(G\times \{p\}\) under the rational map \(\varPhi \) defining the action, and hence is open in its closure \(\overline{G\cdot p}\) under the Zariski topology (see Footnote 7).

The following proposition summarizes a few basic results on orbits of algebraic groups that can be found in [46, Section 1.3].

Proposition 2.20

For any point \(p\in X\), the stabilizer \(G_p\) is an algebraic subgroup of G and \(G\cdot p\) satisfies the following:

(a) The orbit \(G\cdot p\) is a smooth, Zariski-open subset of \(\overline{G\cdot p}\).

(b) The dimension of \(G\cdot p\) satisfies \(\dim G\cdot p = \dim G - \dim G_p,\) where \(\dim G\cdot p=\dim T_p (G\cdot p)\).

(c) The dimension of \(G\cdot p\) is maximal on a non-empty Zariski-open subset of X.

For an arbitrary field k, we denote the ring of polynomial functions on the variety X as k[X], i.e., if \({\mathcal {I}}(X)\) is the ideal generated by the polynomials defining the variety \(X\subset k^d\), then \(k[X] = k[x_1,x_2,\dotsc ,x_d]/{\mathcal {I}}(X)\). If X is irreducible, then the field k(X) of rational functions on X is defined similarly. The polynomial invariants (for the action of G on the variety X) form a subring of k[X] defined by

and the rational invariants form a subfield of k(X) given by

respectively. Constructing invariant functions and finding generating sets (see Footnote 8) for \({\mathbb {C}}[X]^G\) is the subject of classical invariant theory [34, 41, 50]. In [24], Hilbert proved his finiteness theorem, showing that for linearly reductive groups acting on a vector space V, the polynomial ring \({\mathbb {C}}[V]^G\) is finitely generated, leading him to conjecture in his fourteenth problem that \({\mathbb {C}}[X]^G\) is always finitely generated. In [40], Nagata constructed a counter-example to this conjecture. For \({\mathbb {C}}(X)^G\), however, a finite generating set always exists and can be explicitly constructed (see, for instance, [11, 27]). Furthermore, a set of rational invariants is generating if and only if it is also separating.

Definition 2.21

A set of rational invariants \({{\mathfrak {I}}}\subset {\mathbb {C}}(X)^G\) separates orbits on a subset \(U\subset X\) if two points \(p,q\in U\) lie in the same orbit if and only if \(K(p)=K(q)\) for all \(K\in {{\mathfrak {I}}}\). If there exists a non-empty, Zariski-open subset of X on which \({{\mathfrak {I}}}\) separates orbits, then we say \({{\mathfrak {I}}}\) is separating.

Proposition 2.22

For the action of G on X, the field \({\mathbb {C}}(X)^G\) is finitely generated over \({\mathbb {C}}\). Moreover, a subset \({{\mathfrak {I}}}\subset {\mathbb {C}}(X)^G\) is generating if and only if it is separating.

Proof

The backward direction holds by [46, Lem. 2.1]. By [46, Thm. 2.4], there always exists a finite set of separating invariants in \({\mathbb {C}}(X)^G\) and hence a finite generating set. Additionally, this finite set can be rewritten in terms of any generating set, and hence, any generating set is also separating. \(\square \)

Under certain conditions, the polynomial ring \({\mathbb {C}}[X]^G\) is also separating, as the following proposition from [46, Prop. 3.4] shows.

Proposition 2.23

Suppose the variety X is irreducible. There exists a finite, separating set of invariants \({{\mathfrak {I}}}\subset {\mathbb {C}}[X]^G\) if and only if \({\mathbb {C}}(X)^G=Q{\mathbb {C}}[X]^G\) where \(Q{\mathbb {C}}[X]^G=\left. \left\{ \frac{f}{g}\, \right| \, f,g\in {\mathbb {C}}[X]^G\right\} \).

One way to understand the structure of invariant rings is by considering subsets of X that intersect a general orbit.

Definition 2.24

Let \(N \subset G\) be a subgroup. A subvariety S of X is a relative N-section for the action of G on X if the following hold:

- There exists a non-empty, G-invariant, and Zariski-open subset \(U\subset X\), such that S intersects each orbit that is contained in U. In other words, we have that \(\overline{\varPhi (G\times S)} = X\), where closure is taken in the Zariski topology.

- One has \(N = \{ n\in G\, |\, nS=S\}\).

We call the subgroup N the normalizer subgroup of S with respect to G. The following proposition summarizes a discussion in [46, Sec. 2.8].

Example 2.25

For the action of \({\text {SO}}_2({\mathbb {C}})\) on the Zariski-open subset of \({\mathbb {C}}^2\) defined by \(x^2+y^2\ne 0\), the variety S defined by \(x=0\) is a relative N-section for the action where N is the 2-element subgroup generated by a rotation of 180 degrees. Then, S intersects each orbit of the action in precisely two points.

Proposition 2.26

Let S be a relative N-section for the action of G on X. Then, the restriction map

restricts to a field isomorphism between \({\mathbb {C}}(X)^G\) and \({\mathbb {C}}(S)^N\).

Corollary 2.27

Let S be a relative N-section for the action of G on X and \({{\mathfrak {I}}}\subset {\mathbb {C}}(X)^G\) a set such that \({\textsf {R}}_{X\rightarrow S}({{\mathfrak {I}}})\) generates \({\mathbb {C}}(S)^N\) where \({\textsf {R}}_{X\rightarrow S}\) is the restriction map from Proposition 2.26. Then, \({{\mathfrak {I}}}\) is a generating set for \({\mathbb {C}}(X)^G\).

Relative sections can be used to construct generating sets of rational invariants for algebraic actions as in [21], which the authors refer to as the slice method. Similar in spirit to the approach in [28], considerations can be restricted to an algebraic subset of X. When the intersection of S with each orbit is zero-dimensional, a relative N-section can be thought of as the algebraic analog to a local cross-section for an action.

We end the section by considering algebraic actions on varieties defined over \({\mathbb {R}}\), where the issue is more delicate. For instance, in this setting Proposition 2.22 no longer holds, meaning that generating sets of invariants are not necessarily separating and vice versa (see [32, Rem. 2.7]). Suppose that \(X({\mathbb {R}})\) and \(G({\mathbb {R}})\) are real varieties with action given by \(\varPhi : G({\mathbb {R}}) \times X({\mathbb {R}}) \rightarrow X({\mathbb {R}})\) and that X and G are the associated complex varieties. Then, \(\varPhi \) defines an action of G on X.

Proposition 2.28

\({\mathbb {R}}(X({\mathbb {R}}))^{G({\mathbb {R}})}\) is a subfield of \({\mathbb {C}}(X)^G\).

Proof

If \(f\in {\mathbb {R}}(X({\mathbb {R}}))^{G({\mathbb {R}})}\), then the rational function \(f(g\cdot p) - f(p)\) is identically zero on \(G({\mathbb {R}})\times X({\mathbb {R}})\) and hence is identically zero on \(G\times X\). Thus, \(f\in {\mathbb {C}}(X)^G\).

\(\square \)

Corollary 2.29

If \({{\mathfrak {I}}}=\{I_1,\ldots ,I_s\}\subset {\mathbb {R}}(X({\mathbb {R}}))^{G({\mathbb {R}})}\) generates \({\mathbb {C}}(X)^G\) then \({{\mathfrak {I}}}\) generates \({\mathbb {R}}(X({\mathbb {R}}))^{G({\mathbb {R}})}\).

Proof

Suppose that \({{\mathfrak {I}}}\) generates \({\mathbb {C}}(X)^G\) and that \(f\in {\mathbb {R}}(X({\mathbb {R}}))^{G({\mathbb {R}})}\). Then, there exists a rational function \(g\in {\mathbb {C}}(y_1,\ldots ,y_s)\) such that \(f = g(I_1,\ldots ,I_s)\). We can decompose g as \(g= \text {Re}(g)+i\cdot \text {Im}(g)\) where \(\text {Re}(g), \text {Im}(g)\in {\mathbb {R}}(y_1,\ldots ,y_s)\). Since f is a real rational function

Thus, g must lie in \({\mathbb {R}}(y_1,\ldots ,y_s)\) proving the result. \(\square \)

Proposition 2.30

Suppose that \({\mathbb {R}}(X({\mathbb {R}}))^{G({\mathbb {R}})}\) separates orbits for the action of \(G({\mathbb {R}})\) on \(X({\mathbb {R}})\). Then so does any generating set for \({\mathbb {R}}(X({\mathbb {R}}))^{G({\mathbb {R}})}\).

Proof

Suppose that \({{\mathfrak {I}}}=\{I_1,I_2,\ldots \}\) generates \({\mathbb {R}}(X({\mathbb {R}}))^{G({\mathbb {R}})}\) and that \({\mathbb {R}}(X({\mathbb {R}}))^{G({\mathbb {R}})}\) separates orbits. Then, for any two points \(p_1, p_2\in X({\mathbb {R}})\) if

for all invariants in \({{\mathfrak {I}}}\), then we also have \(I(p_1)=I(p_2)\) for any invariant \(I\in {\mathbb {R}}(X({\mathbb {R}}))^{G({\mathbb {R}})}\) as \({{\mathfrak {I}}}\) generates \({\mathbb {R}}(X({\mathbb {R}}))^{G({\mathbb {R}})}\). Thus, \(p_1\) and \(p_2\) lie in the same orbit under \(G({\mathbb {R}})\). \(\square \)

3 Rigid-Motion Invariant Iterated-Integrals Signature in Low Dimensions

Here we showcase the moving-frame method and some results about invariantizing the iterated-integrals signature in \({\mathbb {R}}^2\) and \({\mathbb {R}}^3\). We later generalize these results to arbitrary \({\mathbb {R}}^d\) in Sect. 5.1. However, we feel that these low-dimensional cases are useful for understanding how the method works in higher dimensions and that these cases are the most useful for applications involving spatial data.

3.1 Planar Curves

In this section, we construct a moving-frame map for the action of \({\text {O}}_2({\mathbb {R}})\) on \({\mathfrak {g}}_{\le n}(\!({\mathbb {R}}^{2})\!)\) and show how this can be used to construct \({\text {O}}_2({\mathbb {R}})\)-invariants in \({\mathfrak {g}}_{\le n}(\!({\mathbb {R}}^{2})\!)\) and hence in the coefficients of the iterated-integrals signature of a curve \(Z\).

First consider the action on \({\mathfrak {g}}_{\le 2}(\!({\mathbb {R}}^{2})\!) = {\mathbb {R}}^2\oplus [{\mathbb {R}}^2,{\mathbb {R}}^2]\) (recall the notation from Sect. 2, in particular (1)). We can denote any element of \({\mathfrak {g}}_{\le 2}(\!({\mathbb {R}}^{2})\!)\) as \({{\textbf {c}}}_{\le 2}\) with coordinates \(c_{1}, c_{2}, \) and \(c_{12}\). Through the isomorphism in (10), we can consider \({{\textbf {c}}}_{\le 2}\) as an element of \({\mathbb {R}}^2\oplus \mathfrak {so}(2,{\mathbb {R}})\),

and with action as in (13). We will now show that the action of \({\text {O}}_2({\mathbb {R}})\) on \({\mathfrak {g}}_{\le 2}(\!({\mathbb {R}}^{2})\!)\) is free and that the following submanifold

is a cross-section for the action. Similarly to Example 2.18, we start by defining the group element

which is defined outside of \(\{c_{1}=c_{2}=0\}\). For any such element \({{\textbf {c}}}_{\le 2}\in {\mathfrak {g}}_{\le 2}(\!({\mathbb {R}}^{2})\!)\), we have that

Unlike in Example 2.18, the action is not free on \({\mathbb {R}}^2\), the submanifold defined by \(c_{1}=0, c_{2} >0\) is not a cross-section, and \(A({{\textbf {c}}}_{\le 2})\) does not define a moving-frame map. This is due to the fact that a reflection about the y-axis will fix v, but change the sign of M. Thus to define a moving-frame map, we must consider the diagonal action of \({\text {O}}_2({\mathbb {R}})\) on all of \({\mathfrak {g}}_{\le 2}(\!({\mathbb {R}}^{2})\!)\), not just the action on \({\mathfrak {g}}_{\le 1}(\!({\mathbb {R}}^{2})\!)={\mathbb {R}}^2\). The map \({\tilde{\rho }}_2: {\mathcal {U}}_{2;\le 2} \rightarrow {\text {O}}_2({\mathbb {R}})\) given by

defines the group element \({\tilde{\rho }}_2({{\textbf {c}}}_{\le 2})\) such that \({\tilde{\rho }}_2({{\textbf {c}}}_{\le 2})\cdot {{\textbf {c}}}_{\le 2}\in {\mathcal {K}}\) where

The (unique) intersection point of the orbit \({\text {O}}_2({\mathbb {R}})\cdot {{\textbf {c}}}_{\le 2}\) with \({\mathcal {K}}_{2; \le 2}\) is given by \({\tilde{\rho }}_2({{\textbf {c}}}_{\le 2})\cdot {{\textbf {c}}}_{\le 2}\). We later show that this action is free on \({\mathfrak {g}}_{\le 2}(\!({\mathbb {R}}^{2})\!)\) (Corollary 4.13), and hence, the map \({\tilde{\rho }}_2\) defines a moving frame with cross-section \({\mathcal {K}}\). This immediately implies that the coordinates of \({\tilde{\rho }}_2({{\textbf {c}}}_{\le 2})\cdot {{\textbf {c}}}_{\le 2}\) are invariants for the action of \({\text {O}}_2({\mathbb {R}})\) on \({\mathfrak {g}}_{\le 2}(\!({\mathbb {R}}^{2})\!)\) (see Footnote 9):

Furthermore, any two elements \({{\textbf {c}}}_{\le 2}, \tilde{{{\textbf {c}}}}_{\le 2} \in {\mathfrak {g}}_{\le 2}(\!({\mathbb {R}}^{2})\!)\) are related by an element of \({\text {O}}_2({\mathbb {R}})\) if and only if

For any path \(Z\) in \({\mathbb {R}}^2\), let \({{\textbf {c}}}_{\le 2}(Z)\) denote the element of \({\mathfrak {g}}_{\le 2}(\!({\mathbb {R}}^{2})\!)\) given by \({\text {proj}}_{\le 2}(\log ({{\,\mathrm{IIS}\,}}(Z)))\). Then, we can define the “invariantized” path \(Y:={\tilde{\rho }}_2({{\textbf {c}}}_{\le 2}(Z)) \cdot Z\). The above statement implies that for any two paths \(Z, {Z}'\), we have that \({{\textbf {c}}}_{\le 2}(Y) = {{\textbf {c}}}_{\le 2}({Y}')\) if and only if there exists some \(g\in {\text {O}}_2({\mathbb {R}})\) such that

In particular, since the \(\log \) map is an equivariant bijection, the same holds true for the \({{\,\mathrm{IIS}\,}}\) of a path under the projection \({\text {proj}}_{\le 2}\).

Given a path \(Z\) starting at the origin, the values of \(c_{1}(Z), c_{2}(Z)\) correspond to x and y values of \(Z(1)\). Similarly, the value of \(c_{12}(Z)\) corresponds to the so-called Lévy area traced by \(Z\) (see [13, Section 3.2] in the context of classical invariant theory). Thus, the moving-frame map applied to such a path \(Z\) rotates the end point \(Z(1)\) to the y-axis (and reflects about the y-axis if the Lévy area is negative).
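The geometric description above can be sketched numerically for piecewise-linear paths. The following Python sketch is only an illustration under stated assumptions: \(c_{12}\) is taken to be the Lévy area \(\tfrac{1}{2}\int (x\,dy-y\,dx)\), the group element is built as "rotate the end point to the positive y-axis, then reflect about the y-axis if the Lévy area is negative", and all function names are hypothetical.

```python
import math

def level2_coordinates(path):
    """Return (c1, c2, c12) for a piecewise-linear path given as a list of (x, y).

    c1, c2 are the coordinates of the total increment; c12 is the Levy area,
    assumed here to equal (1/2) * integral of (x dy - y dx) after translating
    the path so that it starts at the origin.
    """
    x0, y0 = path[0]
    pts = [(x - x0, y - y0) for x, y in path]
    c1, c2 = pts[-1]
    c12 = 0.5 * sum(a[0] * b[1] - a[1] * b[0] for a, b in zip(pts, pts[1:]))
    return c1, c2, c12

def planar_moving_frame(path):
    """Element of O_2(R) described in the text (hypothetical implementation)."""
    c1, c2, c12 = level2_coordinates(path)
    r = math.hypot(c1, c2)
    if r == 0.0 or c12 == 0.0:
        raise ValueError("the frame is only defined where (c1, c2) != 0 and c12 != 0")
    s = 1.0 if c12 > 0 else -1.0  # s = -1 adds a reflection about the y-axis
    return ((s * c2 / r, -s * c1 / r),
            (c1 / r, c2 / r))

def apply_to_path(g, path):
    """Apply a 2x2 matrix g to every point of the path."""
    return [(g[0][0] * x + g[0][1] * y, g[1][0] * x + g[1][1] * y) for x, y in path]

def invariantized_coordinates(path):
    """Level-2 coordinates of the invariantized path."""
    return level2_coordinates(apply_to_path(planar_moving_frame(path), path))

Z = [(0.0, 0.0), (1.0, 0.0), (1.0, 2.0), (-1.0, 3.0)]
t = 1.3  # arbitrary angle; g below is a reflection, hence an element of O_2(R)
g = ((math.cos(t), math.sin(t)),
     (math.sin(t), -math.cos(t)))

print(invariantized_coordinates(Z))
print(invariantized_coordinates(apply_to_path(g, Z)))
```

Both calls return the same triple up to rounding, with vanishing first coordinate and positive second and third coordinates, as expected for a point of the cross-section.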

The resulting invariants on \({\mathfrak {g}}_{\le 2}(\!({\mathbb {R}}^{2})\!)\) are perhaps unsurprising, but the above method also yields \({\text {O}}_2({\mathbb {R}})\)-invariants on \({\mathfrak {g}}_{\le n}(\!({\mathbb {R}}^{2})\!)\) for an arbitrary truncation order n, as we now show. We define a map \(\rho _2:{\mathcal {U}}_{2;\le n}\subset {\mathfrak {g}}_{\le n}(\!({\mathbb {R}}^{2})\!) \rightarrow {\text {O}}_2({\mathbb {R}})\) by

for any \({{\textbf {c}}}_{\le n}\in {{\mathcal {U}}}_{2;\le n}\) where

with \({\text {proj}}_{\le n\rightarrow \le 2}\) denoting the canonical projection from \({\mathfrak {g}}_{\le n}(\!({\mathbb {R}}^{2})\!)\) onto \({\mathfrak {g}}_{\le 2}(\!({\mathbb {R}}^{2})\!)\). Since \({\text {O}}_2({\mathbb {R}})\) acts diagonally on the whole of \({\mathfrak {g}}_{\le n}(\!({\mathbb {R}}^{2})\!)\), \(\rho _2\) is a moving-frame map on \({\mathfrak {g}}_{\le n}(\!({\mathbb {R}}^{2})\!)\) with cross-section \({{\mathcal {K}}}_{2;\le n}\) where

Then, the resulting coordinate functions of \(\rho _2({{\textbf {c}}}_{\le n})\cdot {{\textbf {c}}}_{\le n}\in {\mathfrak {g}}_{\le n}(\!({\mathbb {R}}^{2})\!)\) are \({\text {O}}_2({\mathbb {R}})\)-invariants for the action on \({\mathfrak {g}}_{\le n}(\!({\mathbb {R}}^{2})\!)\) (see Sect. 3.1 for a more detailed investigation of these invariants) and hence \({\text {O}}_2({\mathbb {R}})\)-invariants for paths in \({\mathbb {R}}^2\). Furthermore, for any truncation order n and paths \(Z,{Z}'\) in \({\mathbb {R}}^2\), we have that \({{\textbf {c}}}_{\le n}(Y) = {{\textbf {c}}}_{\le n}({Y}')\) if and only if there exists some \(g\in {\text {O}}_2({\mathbb {R}})\) such that \(g\cdot {{\textbf {c}}}_{\le n}(Z) ={{\textbf {c}}}_{\le n}({Z}').\)

Proposition 3.1

Let \(Z, {Z}'\) be paths in \({\mathbb {R}}^2\) such that

are elements of \({\mathcal {U}}_{2;\le 2}\). Define

Then there exists \(g\in {\text {O}}_2({\mathbb {R}})\) such that \({{\,\mathrm{IIS}\,}}(g\cdot Z) = {{\,\mathrm{IIS}\,}}({Z}')\) if and only if \({{\,\mathrm{IIS}\,}}(Y) = {{\,\mathrm{IIS}\,}}({Y}')\) if and only if \({{\textbf {c}}}_{\le n}(Y)={{\textbf {c}}}_{\le n}({Y}')\) for all \(n\in {\mathbb {N}}\).

Proof

The result holds by the moving-frame property of \({\tilde{\rho }}_2\) and the fact that the \(\log \) map is a bijection. For details, see the Proof of Theorem 5.5. \(\square \)

Therefore, two paths, starting at the origin, are equivalent up to tree-like extensions and action of \({\text {O}}_2({\mathbb {R}})\) if and only if \({{\,\mathrm{IIS}\,}}(Y) = {{\,\mathrm{IIS}\,}}({Y}')\). In this sense, the moving-frame map \({\tilde{\rho }}_2\) yields a method to invariantize a path \(Z\) (Fig. 2).

We end this section with a look at the invariants produced by the construction for truncation order 4, i.e., \({\text {O}}_2({\mathbb {R}})\)-invariants on \({\mathfrak {g}}_{\le 4}(\!({\mathbb {R}}^{2})\!)\). A (Lyndon) basis for \({\mathfrak {g}}_{\le 4}(\!({\mathbb {R}}^{2})\!)\) corresponds to the coordinates (see Example 2.2)

With Y as defined in Proposition 3.1, we have that

and that the coordinate functions of \(\log ({{\,\mathrm{IIS}\,}}(Y))\), in terms of the coordinates of \(\log ({{\,\mathrm{IIS}\,}}(Z))\), are \({\text {O}}_2({\mathbb {R}})\)-invariants. Using the action as defined in Sect. 2.3, one can compute

As implied by Proposition 3.1, for any two paths \(Z\) and \(\tilde{Z}\) starting at the origin, we have that \({{\textbf {c}}}_{\le 4}(Z)\) is related to \({{\textbf {c}}}_{\le 4}(\tilde{Z})\) under \({\text {O}}_2({\mathbb {R}})\) if and only if \({{\textbf {c}}}_{\le 4}(Y) ={{\textbf {c}}}_{\le 4}({\tilde{Y}})\). By inspection, we see that a simpler set of polynomial invariants also determines the equivalence class of the image of a path \(Z\) in \({\mathfrak {g}}_{\le 4}(\!({\mathbb {R}}^{2})\!)\).

The values of the above invariants on \(Z\) determine the value of \({{\textbf {c}}}_{\le 4}(Y)\). Thus, they provide a simpler invariant representation for \({{\textbf {c}}}_{\le 4}(Z) = {\text {proj}}_{\le 4}(\log ({{\,\mathrm{IIS}\,}}(Z)))\).

Remark 3.2

It is an interesting fact that by adding the invariants \(c_{1112}(Y)\) and \(c_{1222}(Y)\), we get the much simpler invariant

In the polynomial invariant set, one can likewise replace either \(p_5\) or \(p_{7}\) by

3.2 Spatial Curves

Here we replicate the results of the previous section, but instead for curves lying in \({\mathbb {R}}^3\). We believe this case is worth a detailed look for two reasons: (1) rigid-motion invariants of spatial curves are likely of interest for applications, and (2) the method of constructing a moving frame in this space more closely resembles the general procedure we outline later in Sect. 5.1. We will show that the subset of \({\mathfrak {g}}_{\le n}(\!({\mathbb {R}}^{3})\!)\) defined by

is a cross-section for the action of \({\text {O}}_3({\mathbb {R}})\) on a Zariski-open subset of \({\mathfrak {g}}_{\le n}(\!({\mathbb {R}}^{3})\!)\). In this section, we will denote by \({{\textbf {c}}}_{\le 2}\) an element of \({\mathfrak {g}}_{\le 2}(\!({\mathbb {R}}^{3})\!)\cong {\mathbb {R}}^3\oplus \mathfrak {so}(3,{\mathbb {R}})\) (see (10) for the explicit isomorphism) where

The group \({\text {O}}_3({\mathbb {R}})\) acts as in (13). As is common in constructing a moving frame, we will proceed iteratively. At each stage, we bring an arbitrary element to a successively smaller linear space containing the desired cross-section and then restrict our attention to elements of this linear space for the next stage. To start, we choose a transformation in \({\text {O}}_3({\mathbb {R}})\) to bring an arbitrary element to the subset

(We will later refer to this as \(L^{(1)}_3({\mathbb {R}})\subset {L_{3}^{(1)}}\); see (18).) Assuming that v is not the zero vector, we can accomplish this with the group element

with the further assumption that \(c_1^2+c_2^2\ne 0\) (we will see later that this assumption can be dropped). The resulting element is of the form

where

which we denote \({{\textbf {c}}}_{\le 2}^{(1)}\). We can now restrict our attention to elements of \({\mathfrak {g}}_{\le 2}(\!({\mathbb {R}}^{3})\!)\) of the form

where \(c^{(1)}_{12}\ne 0, c_{3}^{(1)}>0\). We can omit the formulas for the coordinates of \({{\textbf {c}}}_{\le 2}^{(1)}\) in terms of \({{\textbf {c}}}_{\le 2}\) to simplify the computation for the following step. We still have one degree of freedom left, as the subgroup of matrices of the form

with \(B\in {\text {O}}_2({\mathbb {R}})\), preserves the conditions that \(c_{1}^{(1)}=c_{2}^{(1)}=0, c_{3}^{(1)}>0\). For such a matrix \(A^2\), one can show that

Thus, we can choose

where

assuming that \(\left( c_{13}^{(1)}\right) ^2+\left( c_{23}^{(1)}\right) ^2\ne 0\). Thus, we can see the iterative procedure to bring a point of \({\mathfrak {g}}_{\le 2}(\!({\mathbb {R}}^{3})\!)\) to \({\mathcal {K}}_{3;\le 2}\). At this point, we can put this together to obtain the group element

where

and

Similarly by substituting in the coordinate functions of \({{\textbf {c}}}_{\le 2}^{(1)}\) in terms of the coordinates of \({{\textbf {c}}}_{\le 2}\), we have that

As the only element of \({\text {O}}_3({\mathbb {R}})\) that brings an element of \({\mathcal {K}}_{3;\le 2}\) to \({\mathcal {K}}_{3;\le 2}\) is the identity, this is the unique intersection point of \({\mathcal {K}}_{3;\le 2}\) with the orbit of an arbitrary \({{\textbf {c}}}_{\le 2}\) in the open subset defined by

and since this action is free (see Corollary 4.13), \({\mathcal {K}}_{3;\le 2}\) is a cross-section. As in the previous section, we can thus define a map \(\rho _3:{\mathcal {U}}_{3;\le n}\subset {\mathfrak {g}}_{\le n}(\!({\mathbb {R}}^{3})\!)\rightarrow {\text {O}}_3({\mathbb {R}})\) by

for any \({{\textbf {c}}}_{\le n}\in {\mathcal {U}}_{3;\le n}\) where

which defines a moving-frame map on \({\mathfrak {g}}_{\le n}(\!({\mathbb {R}}^{3})\!)\) with cross-section \({\mathcal {K}}_{3;\le n}\) where

All together this implies the following analogue of Proposition 3.1.

Proposition 3.3

Let \(Z, {Z}'\) be paths in \({\mathbb {R}}^3\) such that

are elements of \({\mathcal {U}}_{3;\le 2}\). Define

Then, there exists \(g\in {\text {O}}_3({\mathbb {R}})\) such that \({{\,\mathrm{IIS}\,}}(g\cdot Z) = {{\,\mathrm{IIS}\,}}({Z}')\) if and only if \({{\,\mathrm{IIS}\,}}(Y) = {{\,\mathrm{IIS}\,}}({Y}')\) if and only if \({{\textbf {c}}}_{\le n}(Y)={{\textbf {c}}}_{\le n}({Y}')\) for all \(n\in {\mathbb {N}}\).

Propositions 3.1 and 3.3 are both special cases of Theorem 5.2, proven later for general paths in \({\mathbb {R}}^d\). We end this section by looking at the invariants produced by this cross-section and an example of how we can use this procedure to invariantize the moment curve in \({\mathbb {R}}^3\).

For a curve Z in \({\mathbb {R}}^3\), the nonzero coordinate functions of \({\text {proj}}_{ \le 2}(\log ({{\,\mathrm{IIS}\,}}(Y)))\), where Y is defined in Proposition 3.3, are given by (see Footnote 10)

where

From this, we can conclude that the polynomial invariants \(p_1(Z), p_2(Z)^2,\) and \(p_3(Z)\) characterize the equivalence class of \({{\textbf {c}}}_{\le 2}(Z)\) under \({\text {O}}_3({\mathbb {R}})\).

Remark 3.4

Note that \(p_3(Z)\ge 0\) for any path \(Z\) in \({\mathbb {R}}^3\). There are two ways to see this: First by the Cauchy–Bunyakovsky–Schwarz inequality via

where \(v=(c_{1}(Z),c_{2}(Z),c_{3}(Z))^{\top }\), \(u=(c_{23}(Z),-c_{13}(Z),c_{12}(Z))^{\top }\). On the other hand, it can also be written as a sum of squares,

revealing that it is nothing but \(Mv\cdot Mv\) in the later Example 4.19, while \(p_1(Z)\) is \(v\cdot v\).
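The two descriptions of \(p_3\) in Remark 3.4 can be checked numerically. The sketch below is hedged: it assumes that M is the skew-symmetric matrix with upper-triangular entries \(c_{12}, c_{13}, c_{23}\), that the action (13) is \((v,M)\mapsto (Av, AMA^{\top })\), and that \(p_1=v\cdot v\) and \(p_3=Mv\cdot Mv\) as stated in the remark; the helper names are hypothetical.

```python
import math

def matmul(A, B):
    return [[sum(A[i][k] * B[k][j] for k in range(3)) for j in range(3)] for i in range(3)]

def matvec(A, v):
    return [sum(A[i][k] * v[k] for k in range(3)) for i in range(3)]

def transpose(A):
    return [list(row) for row in zip(*A)]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def skew(c12, c13, c23):
    """Assumed convention: M[0][1] = c12, M[0][2] = c13, M[1][2] = c23."""
    return [[0.0, c12, c13], [-c12, 0.0, c23], [-c13, -c23, 0.0]]

def p1(v, M):
    return dot(v, v)

def p3(v, M):
    Mv = matvec(M, v)
    return dot(Mv, Mv)

def p3_cauchy_schwarz(v, M):
    """|v|^2 |u|^2 - (v.u)^2 with u = (c23, -c13, c12)."""
    u = [M[1][2], -M[0][2], M[0][1]]
    return dot(v, v) * dot(u, u) - dot(v, u) ** 2

def rot_z(t):
    return [[math.cos(t), -math.sin(t), 0.0], [math.sin(t), math.cos(t), 0.0], [0.0, 0.0, 1.0]]

def rot_x(t):
    return [[1.0, 0.0, 0.0], [0.0, math.cos(t), -math.sin(t)], [0.0, math.sin(t), math.cos(t)]]

v = [1.0, 2.0, 3.0]
M = skew(0.5, -1.0, 2.0)

# An arbitrary orthogonal matrix with determinant -1.
A = matmul(matmul(rot_z(0.4), rot_x(1.1)), [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, -1.0]])
v_new = matvec(A, v)
M_new = matmul(matmul(A, M), transpose(A))

print(p1(v, M), p1(v_new, M_new))
print(p3(v, M), p3_cauchy_schwarz(v, M), p3(v_new, M_new))
```

Under these assumptions, the non-negativity of \(p_3\) is Lagrange's identity \(|u\times v|^2=|u|^2|v|^2-(u\cdot v)^2\), since \(Mv=-u\times v\).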

Example 3.5

Continuing with our running example, the moment curve, we have already seen (Example 2.7) that

The matrix

is such that

Note that \(A_1\) can be obtained via the equation in (15). Finally, the matrix

is such that

This invariantization of \({\text {proj}}_{\le 2}(\log ({{\,\mathrm{IIS}\,}}(Z)))\) can either be obtained via this iterative method, or by directly using (16). The advantage of this iterative method is that one does not need to know the invariant functions a priori to invariantize the curve. While we are able to succinctly provide the explicit moving-frame map for an arbitrary curve in \({\mathbb {R}}^3\), this will not always be practical in higher dimensions. Figure 3 shows the effects of these transformations on the path itself.

Geometrically, this process corresponds to first rotating the curve so that the end point lies on the z-axis. We then choose a rotation about this axis to force \(c_{13}({\hat{Z}})=0\), which corresponds to forcing the Lévy area of the projection of \({\hat{Z}}:={\tilde{\rho }}_3({{\textbf {c}}}_2(Z))\cdot Z\) onto the \( (x,z)\) plane to be zero. Figure 4 shows this projection; one can check that the total area under the curve vanishes.

Fig. 4: Area between the coordinates 1 and 3 of the invariantized curve in Example 3.5

Example 3.6

To get a sense of the robustness of these invariants, we run the following experiment: we perturb a curve, compute the resulting invariants, then repeat this 1,000,000 times, and compute the mean and standard deviation of the resulting values. We consider the smooth curve defined by \(t\mapsto (\cos (t),\sin (t),t)\) for \(t\in [0,2\pi ]\), and we perturb it by adding a standard 3-dimensional Brownian motion, scaled down by a parameter \(\varepsilon >0\), so that our curve looks like

where \(B^{(1)}\), \(B^{(2)}\) and \(B^{(3)}\) are independent Brownian motions on the same interval (see Fig. 5 for some samples of the perturbed curve). The jagged nature of each perturbed curve would make using differential invariants more difficult. In practice, one must often apply appropriate smoothing to the curve before using differential methods, such as the differential signature [2].

In our case, the resulting invariants (see Footnote 11) are quite stable, as shown by Table 2, even for relatively large values of \(\varepsilon \), although not all three are equally stable.
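A scaled-down version of this experiment can be sketched in Python. This is not the code behind Table 2: it uses far fewer samples, a simple discretization of Brownian motion, and only the two invariants \(p_1=v\cdot v\) and \(p_3=Mv\cdot Mv\) in the form given in Remark 3.4, with M assumed to be the matrix of Lévy areas. All function names and the parameters `n_samples`, `n_steps` and `seed` are hypothetical.

```python
import math
import random
import statistics

def helix(n_steps):
    """Sample t -> (cos t, sin t, t) on [0, 2*pi] at n_steps + 1 equidistant times."""
    ts = [2.0 * math.pi * k / n_steps for k in range(n_steps + 1)]
    return ts, [(math.cos(t), math.sin(t), t) for t in ts]

def perturb(ts, curve, eps, rng):
    """Add eps times a discretized 3-dimensional Brownian motion to the curve."""
    noise = [0.0, 0.0, 0.0]
    out = [curve[0]]
    for k in range(1, len(curve)):
        sd = math.sqrt(ts[k] - ts[k - 1])  # standard deviation of a Brownian increment
        noise = [b + rng.gauss(0.0, sd) for b in noise]
        out.append(tuple(x + eps * b for x, b in zip(curve[k], noise)))
    return out

def level2(path):
    """Increment v and matrix M of Levy areas of a piecewise-linear path in R^3."""
    p0 = path[0]
    pts = [tuple(x - x0 for x, x0 in zip(p, p0)) for p in path]
    v = pts[-1]
    M = [[0.0] * 3 for _ in range(3)]
    for a, b in zip(pts, pts[1:]):
        for i in range(3):
            for j in range(3):
                M[i][j] += 0.5 * (a[i] * b[j] - a[j] * b[i])
    return v, M

def invariants(path):
    """Return (p1, p3) = (v.v, Mv.Mv)."""
    v, M = level2(path)
    Mv = [sum(M[i][k] * v[k] for k in range(3)) for i in range(3)]
    return sum(x * x for x in v), sum(x * x for x in Mv)

def experiment(eps, n_samples=200, n_steps=200, seed=0):
    """Mean and sample standard deviation of (p1, p3) over perturbed curves."""
    rng = random.Random(seed)
    ts, curve = helix(n_steps)
    samples = [invariants(perturb(ts, curve, eps, rng)) for _ in range(n_samples)]
    return [(statistics.mean(col), statistics.stdev(col)) for col in zip(*samples)]

for eps in (0.01, 0.1):
    print(eps, experiment(eps))
```

The fixed seed makes runs reproducible; increasing `n_samples` and `n_steps` moves the sketch closer to the setting described above at the cost of running time.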

4 Orthogonal Invariants on \({\mathfrak {g}}_{\le 2}(\!({\mathbb {R}}^{d})\!)\)

In this section, we take a closer look at the action of \({\text {O}}_d({\mathbb {R}})\) on \({\mathfrak {g}}_{\le 2}(\!({\mathbb {R}}^{d})\!) \cong {\mathbb {R}}^d\oplus \mathfrak {so}_{d}({\mathbb {R}})\). In particular, we construct an explicit linear space, of complementary dimension to the orbits, intersecting each orbit in a large open subset of this space. To achieve this, we consider the associated action of the complex group \({\text {O}}_d({\mathbb {C}})\) on the space \({\mathbb {C}}^d\oplus \mathfrak {so}_{d}({\mathbb {C}})\) where

As described in Sect. 2.5, we can consider \({\text {O}}_d({\mathbb {R}})\) and \({\mathbb {R}}^d\oplus \mathfrak {so}_{d}({\mathbb {R}})\) as the real points of the varieties \({\text {O}}_d({\mathbb {C}})\) and \({\mathbb {C}}^d\oplus \mathfrak {so}_{d}({\mathbb {C}})\).

Remark 4.1

The real Lie group

can be considered as a subgroup of the Lie group

We note that we consider \({\text {O}}_d({\mathbb {C}})\) here as a complex Lie group, in contradistinction to the Lie group of unitary matrices

where \(A^*\) is the conjugate transpose of A. Even though \({\text {U}}_d\) contains matrices with complex entries, it can only be considered as a real Lie group.

By investigating the associated complex action, we can utilize tools such as the relative sections described in Definition 2.24 and then pass these results down to the real points. As before in (13), the action of \({\text {O}}_d({\mathbb {C}})\) on \({\mathbb {C}}^d\oplus \mathfrak {so}_{d}({\mathbb {C}})\) is given by

We denote the entries as

to make explicit the connection to Sect. 5.1.

Proposition 4.2

For any \(v\in {\mathbb {C}}^d\) such that \(c_1^2+\cdots +c_d^2\ne 0\), there exists \(A\in {\text {O}}_d({\mathbb {C}})\) such that \({\tilde{v}}=Av\) satisfies \(\tilde{c}_{1}=\cdots =\tilde{c}_{ d-1}=0\) and \(\tilde{c}_{d}\ne 0\).

Proof

The function \((d-1)(c_{1}^2+\cdots +c_{d}^2)\) can be written as the sum of all pairwise sums of squares, i.e.,

\((d-1)(c_{1}^2+\cdots +c_{d}^2)=\sum _{1\le i<j\le d}\left( c_{i}^2+c_{j}^2\right) .\)

Suppose that \(c_{1}^2+\cdots +c_{d}^2\ne 0\) and that there exists some \(c_{i}\ne 0\) where \(1\le i\le d-1\). (Otherwise we are done by choosing A as the identity.) By the above equation, there exists a pair of coordinates \(c_{i}\) and \(c_{j}\) such that \(c_{i}^2+c_{j}^2\ne 0\) for some \(1\le i < j\le d\).

Choose the matrix \(A \in {\text {O}}_d({\mathbb {C}})\) defined by

where w is an element of \({\mathbb {C}}\) that satisfies \(w^2 = c_i^2+c_j^2\). The transformation A is the complex analogue of a Givens rotation, which rotates only two coordinates. Then for \(Av = {\tilde{v}}\) we have that \(\tilde{c}_k=c_k\) for \(k\not \in \{ i,j \}\), \(\tilde{c}_i = 0\), and \(\tilde{c}_j = w \ne 0\). This process can be repeated until \({\tilde{v}}\) is of the desired form. \(\square \)
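A single step of this construction can be sketched in Python. The matrix below is one possible choice with the properties stated in the proof (it is orthogonal with respect to the plain transpose, sends \(c_i\) to 0 and \(c_j\) to w) and is not claimed to coincide entry by entry with the matrix A displayed above. Indices are zero-based, and the function name `givens_step` is hypothetical.

```python
import cmath

def givens_step(v, i, j):
    """Complex Givens-type step on coordinates i < j (zero-based).

    Returns (A, Av) where A is complex orthogonal (A A^T = I, no conjugation),
    (Av)[i] = 0 and (Av)[j] = w with w^2 = v[i]^2 + v[j]^2.
    """
    ci, cj = v[i], v[j]
    w = cmath.sqrt(ci * ci + cj * cj)
    if w == 0:
        raise ValueError("need v[i]^2 + v[j]^2 != 0")
    d = len(v)
    A = [[(1.0 + 0j) if r == c else 0j for c in range(d)] for r in range(d)]
    A[i][i] = cj / w
    A[i][j] = -ci / w
    A[j][i] = ci / w
    A[j][j] = cj / w
    Av = [sum(A[r][c] * v[c] for c in range(d)) for r in range(d)]
    return A, Av

# Example in C^3 where c_1^2 + c_2^2 = 0, so the pair (1, 3) is used first.
v = [1.0 + 0j, 1j, 1.0 + 0j]
A1, v1 = givens_step(v, 0, 2)   # zero out the first coordinate
A2, v2 = givens_step(v1, 1, 2)  # then the second
print(v1)
print(v2)
```

In the example, the sum of squares \(c_1^2+c_2^2+c_3^2=1\) is preserved at every step, and the final vector has only its last coordinate nonzero.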

We define a sequence of linear subspaces of \({\mathbb {C}}^d\oplus \mathfrak {so}_{d}({\mathbb {C}})\) as

In particular, the subspace \({L_{d}^{(d-1)}}\) is given by pairs (v, M) of the form

Example 4.3

For \(d=4\), elements in \({L_{4}^{(1)}}\) are of the form

elements in \({L_{4}^{(2)}}\) are of the form

and elements in \({L_{4}^{(3)}}\) are of the form

Note again that all \(\mathfrak {so}_{d}({\mathbb {C}})\) matrices are skew-symmetric and thus have zero diagonal.

We will show that \({L_{d}^{(1)}}, {L_{d}^{(2)}}, \ldots \) form a sequence of relative sections for the action of \({\text {O}}_d({\mathbb {C}})\) on \({\mathbb {C}}^d\oplus \mathfrak {so}_{d}({\mathbb {C}})\) (see Definition 2.24). For this aim, we need to identify the normalizer subgroup for each \({L_{d}^{(i)}}\), which will be achieved in Proposition 4.5.

The group \({\text {O}}_i({\mathbb {C}})\), for \(1\le i < d\), appears as a subgroup of \({\text {O}}_d({\mathbb {C}})\) in several natural ways, in particular the subgroup obtained by considering elements that rotate some fixed subset of i coordinates and fix the remaining coordinates. For \(B\in {\text {O}}_i({\mathbb {C}})\), denote

a matrix rotating the first i coordinates and fixing the last \(d-i\). The set of such E(B) forms a subgroup of \({\text {O}}_d({\mathbb {C}})\) isomorphic to \({\text {O}}_i({\mathbb {C}})\), which we will denote

Note that \({\text {O}}^i_d({\mathbb {C}})\subset {\text {O}}^{i+1}_d({\mathbb {C}})\).

Proposition 4.4

Let \(1\le i < d\) and \(B \in {\text {O}}_i({\mathbb {C}})\). The image of the coordinates \(c_{1(i+1)}, c_{2(i+1)},\ldots , c_{i(i+1)}\) under the action of \(E(B) \in {\text {O}}^i_d({\mathbb {C}})\) on \((v,M) \in {\mathbb {C}}^d\oplus \mathfrak {so}_{d}({\mathbb {C}})\) is given by

the standard action of \({\text {O}}_i({\mathbb {C}})\) on a vector in \({\mathbb {C}}^i\).

Proof

This follows from (17). \(\square \)
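The statement of Proposition 4.4 can be checked numerically in a small case. The sketch below assumes that the action is \(M\mapsto AMA^{\top }\) and that \(c_{jk}\) denotes the (j, k) entry of M; it uses a real rotation B for simplicity, and the helper names `embed` and `conjugate` are hypothetical.

```python
import math

def embed(B, d):
    """E(B): act by B on the first i coordinates and fix the remaining d - i."""
    i = len(B)
    E = [[1.0 if r == c else 0.0 for c in range(d)] for r in range(d)]
    for r in range(i):
        for c in range(i):
            E[r][c] = B[r][c]
    return E

def conjugate(A, M):
    """Return A M A^T (assumed form of the action on the skew-symmetric part)."""
    d = len(M)
    AM = [[sum(A[r][k] * M[k][c] for k in range(d)) for c in range(d)] for r in range(d)]
    return [[sum(AM[r][k] * A[c][k] for k in range(d)) for c in range(d)] for r in range(d)]

d, i = 4, 2
t = 0.9  # arbitrary angle
B = [[math.cos(t), -math.sin(t)], [math.sin(t), math.cos(t)]]

# An arbitrary skew-symmetric 4x4 matrix.
M = [[0.0] * d for _ in range(d)]
for r in range(d):
    for c in range(r + 1, d):
        M[r][c] = float(r + 2 * c + 1)
        M[c][r] = -M[r][c]

M_new = conjugate(embed(B, d), M)

# (c_{1(i+1)}, ..., c_{i(i+1)}) is column i (zero-based) restricted to the first i rows.
column = [M[r][i] for r in range(i)]
expected = [sum(B[r][k] * column[k] for k in range(i)) for r in range(i)]
actual = [M_new[r][i] for r in range(i)]
print(expected, actual)
```

The two printed vectors agree up to rounding, which is the standard action of B on the vector \((c_{1(i+1)},\ldots ,c_{i(i+1)})^{\top }\) in this example.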

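Consider the subgroup

\[ {W_d({\mathbb {C}})} := \{ {\text {diag}}(w_1,\ldots ,w_d) : w_i\in \{-1,1\} \} \subset {\text {O}}_d({\mathbb {C}}). \]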

The action of an element of \({W_d({\mathbb {C}})}\) changes the sign of various coordinates of \({\mathbb {C}}^d\oplus \mathfrak {so}_{d}({\mathbb {C}})\). We define the subgroup \({N_{d}^{i}({\mathbb {C}})}\) of \({\text {O}}^i_d({\mathbb {C}})\) as

Note that \({N_{d}^{i}({\mathbb {C}})}\) exactly contains matrices of the form

with \(B \in {\text {O}}^i_d({\mathbb {C}})\).

Proposition 4.5

For \(1\le i < d\), the normalizer of \({L_{d}^{(i)}}\) is equal to \({N_{d}^{d-i}({\mathbb {C}})}\).

Proof

It is immediate that \({N_{d}^{d-1}({\mathbb {C}})}\) leaves the space \({L_{d}^{(1)}}\) invariant.

Considering

we see that for \(g \in {\text {O}}_d({\mathbb {C}})\) to have

we must have \(g_{j d} = g_{d j} = 0\), \(j=1,\dots ,d-1\). This proves the claim for \(i=1\).

Let the statement be true for some \(1\le i \le d-2\). First, the normalizer of \({L_{d}^{(i+1)}}\) is contained in the normalizer of \({L_{d}^{(i)}}\), which is \({N_{d}^{d-i}({\mathbb {C}})}\) by the induction hypothesis. Diagonal entries of \(\pm 1\) leave every \({L_{d}^{(j)}}\) invariant, so it remains to investigate the matrix B in (21). Now, by Proposition 4.4, B acts by standard matrix multiplication on the vector \((c_{1(i+1)}, \dots , c_{i(i+1)})^\top \). We can hence apply the argument of the case \({L_{d}^{(1)}}\) to deduce that \({N_{d}^{d-(i+1)}({\mathbb {C}})}\) is the normalizer of \({L_{d}^{(i+1)}}\).

\(\square \)

We now show that \({L_{d}^{(d-1)}}\) is a relative \({W_d({\mathbb {C}})}\)-section, by constructing a sequence of relative sections for the action, drawing inspiration from recursive moving-frame algorithms (see [31] for instance).

Proposition 4.6

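The linear space \({L_{d}^{(d-1)}}\) is a relative \({W_d({\mathbb {C}})}\)-section for the action of \({\text {O}}_d({\mathbb {C}})\) on \({\mathbb {C}}^d\oplus \mathfrak {so}_{d}({\mathbb {C}})\). More precisely, there exists a set of rational invariants

\[ {{\mathfrak {I}}}_d = \{ f_1,\ldots ,f_d \} \subset {\mathbb {C}}({\mathbb {C}}^d\oplus \mathfrak {so}_{d}({\mathbb {C}}))^{{\text {O}}_d({\mathbb {C}})} \tag{22} \]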

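such that if we define the invariant, non-empty, Zariski-open subset

\[ {U_d({\mathbb {C}})} := \{ (v,M)\in {\mathbb {C}}^d\oplus \mathfrak {so}_{d}({\mathbb {C}}) : f_i(v,M)\ne 0 \text { for } 1\le i\le d \} \tag{23} \]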

we have that \({L_{d}^{(d-1)}}\) intersects each orbit that is contained in \({U_d({\mathbb {C}})}\). Furthermore, we can restrict each invariant to obtain

- \(f_1 = c_1^2+\cdots +c_d^2\),
- \(f_i|_{{L_{d}^{(i-1)}}} = c_{1(d-i+2)}^2+\cdots +c_{(d-i+1)(d-i+2)}^2\) for \(2\le i <d\),
- \(f_d|_{{L_{d}^{(d-1)}}} = c_{12}^2\).

Proof

By Proposition 4.2, outside of \(f_1= ||v||^2=0\), there exists a rotation \(A_1\in {\text {O}}_d({\mathbb {C}})\) such that \(A_1\cdot (v,M)\in {L_{d}^{(1)}}\). Thus, by Proposition 4.5, \({L_{d}^{(1)}}\) is a relative \({N_{d}^{d-1}({\mathbb {C}})}\)-section. We also have that \(f_1|_{{L_{d}^{(1)}}} = c_d^2\). We proceed by induction. Suppose that for each point in \(U_i = \{ \prod _{k=1}^{i} f_k(p) \ne 0\}\) there exists a rotation \(B_{i}\in {\text {O}}_d({\mathbb {C}})\) such that \(B_{i}\cdot (v,M) \in {L_{d}^{(i)}}\).

By Proposition 4.5, the linear space \({L_{d}^{(i)}}\) is a relative \({N_{d}^{d-i}({\mathbb {C}})}\)-section and, by Proposition 2.26, there exists a field isomorphism \(\sigma _i : {\mathbb {C}}({\mathbb {C}}^d\oplus \mathfrak {so}_{d}({\mathbb {C}}))^{{\text {O}}_d({\mathbb {C}})} \rightarrow {\mathbb {C}}({L_{d}^{(i)}})^{{N_{d}^{d-i}({\mathbb {C}})}}\). Using Proposition 4.4, one can show that on \({L_{d}^{(i)}}\) the polynomial \(c_{1(d-i+1)}^2+\cdots +c_{(d-i)(d-i+1)}^2\) lies in \({\mathbb {C}}({L_{d}^{(i)}})^{{N_{d}^{d-i}({\mathbb {C}})}}\). Let \(f_{i+1}\) be the unique element in \({\mathbb {C}}({\mathbb {C}}^d\oplus \mathfrak {so}_{d}({\mathbb {C}}))^{{\text {O}}_d({\mathbb {C}})}\) such that \(f_{i+1}= \sigma _i^{-1}( c_{1(d-i+1)}^2+\cdots +c_{(d-i)(d-i+1)}^2)\).

By Proposition 4.2, for any \((v,M)\in {L_{d}^{(i)}}\) outside of \(\{f_{i+1}(v,M)=0\}\), there exists a rotation \(A_{i+1}\in {N_{d}^{d-i}({\mathbb {C}})}\) such that \(A_{i+1}\cdot (v,M) \in {L_{d}^{(i+1)}}\). Thus, for any (v, M) in \(U_{i+1} = \{ \prod _{k=1}^{i+1} f_k(v,M) \ne 0\}\) there exists a rotation \(B_{i+1}=A_{i+1}B_i\in {\text {O}}_d({\mathbb {C}})\) such that \(B_{i+1}\cdot (v,M) \in {L_{d}^{(i+1)}}\). Using Proposition 4.5, again, we see that \({L_{d}^{(i+1)}}\) is a relative \({N_{d}^{d-i-1}({\mathbb {C}})}\)-section.

We can continue this induction until we have \(f_{d-1}\), where \(f_{d-1}|_{{L_{d}^{(d-2)}}} = c_{13}^2+c_{23}^2\). Finally, note that the polynomial \(c_{12}^2\) lies in \( {\mathbb {C}}({L_{d}^{(d-1)}})^{W_d({\mathbb {C}})}\). Since \({W_d({\mathbb {C}})}= {N_{d}^{1}({\mathbb {C}})}\), the space \({L_{d}^{(d-1)}}\) is a relative \({W_d({\mathbb {C}})}\)-section, and hence there exists \(f_d\in {\mathbb {C}}({\mathbb {C}}^d\oplus \mathfrak {so}_{d}({\mathbb {C}}))^{{\text {O}}_d({\mathbb {C}})}\) such that \(f_d|_{{L_{d}^{(d-1)}}} = c_{12}^2\). \(\square \)

Remark 4.7

Denoting \(\varsigma _1:=\sigma _1,\,\varsigma _{i+1}:=\sigma _{i+1}\circ \sigma _i^{-1}\), we have the following chain of \({\text {O}}_d({\mathbb {C}})\) transformations \(A_i\) and field isomorphisms \(\varsigma _i\):

Note, though, that while the \(\varsigma _i\) are uniquely determined, the \(A_i\) are not. The composition \(A_{d-1}A_{d-2}\cdots A_{2}A_{1}\), however, is unique up to left multiplication by a matrix in \({W_d({\mathbb {C}})}\).

In particular, the above proposition implies that \({L_{d}^{(d-1)}}\) is a relative \({W_d({\mathbb {C}})}\)-section for the action of \({\text {O}}_d({\mathbb {C}})\) on \({\mathbb {C}}^d\oplus \mathfrak {so}_{d}({\mathbb {C}})\), and hence, the function fields \({\mathbb {C}}({L_{d}^{(d-1)}})^{W_d({\mathbb {C}})}\) and \({\mathbb {C}}({\mathbb {C}}^d\oplus \mathfrak {so}_{d}({\mathbb {C}}))^{{\text {O}}_d({\mathbb {C}})}\) are isomorphic. By examining the action of \({W_d({\mathbb {C}})}\) on \({L_{d}^{(d-1)}}\) and the structure of \({\mathbb {C}}({L_{d}^{(d-1)}})^{W_d({\mathbb {C}})}\), we can therefore glean information about the action of \({\text {O}}_d({\mathbb {C}})\) on \({\mathbb {C}}^d\oplus \mathfrak {so}_{d}({\mathbb {C}})\). Consider a diagonal matrix \(D\in {W_d({\mathbb {C}})}\) given by

\[ D = {\text {diag}}(w_1,\ldots ,w_d), \]

where \(w_i \in \{-1,1\}\) for \(1\le i\le d \). Then, the image of a point \((v,M)\in L_d^{(d-1)}\) is \(D\cdot (v, M)=({\overline{v}}, {\overline{M}})\), where

\[ {\overline{v}} = (0,\ldots ,0,w_dc_d)^\top , \qquad {\overline{c}}_{i(i+1)} = w_iw_{i+1}c_{i(i+1)} \quad (1\le i\le d-1), \]

and all other entries of \({\overline{M}}\) above the diagonal vanish.

Proposition 4.8

The action of \({W_d({\mathbb {C}})}\) on \({L_{d}^{(d-1)}}\cap {U_d({\mathbb {C}})}\) is free.

Proof

Suppose that the action is not free. Then, there exist a point \((v,M)\in {L_{d}^{(d-1)}}\cap {U_d({\mathbb {C}})}\) and \(D\in {W_d({\mathbb {C}})}\) such that \(D\cdot (v,M) = (v,M)\) and D is not the identity. Necessarily, \(w_i=-1\) for some \(1\le i\le d\). If \(i<d\), then \(w_iw_{i+1}c_{i(i+1)} = c_{i(i+1)}\) and \(c_{i(i+1)}\ne 0\) force \(w_{i+1}=-1\). By the same argument, \(w_{i+2}=-1\), and so forth, up to \(w_d=-1\). However, \(w_dc_d=c_d\), where \(c_d\ne 0\), implies that \(w_d=1\), which is a contradiction. \(\square \)
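For small d, the argument can also be checked by brute force. The Python sketch below is only an illustration: it assumes that a point of \({L_{d}^{(d-1)}}\) is stored as the number \(c_d\) together with the list \(c_{12},\ldots ,c_{(d-1)d}\), and that \(D={\text {diag}}(w_1,\ldots ,w_d)\) acts by \(c_d\mapsto w_dc_d\) and \(c_{i(i+1)}\mapsto w_iw_{i+1}c_{i(i+1)}\), as used in the proof. The function names are hypothetical.

```python
from itertools import product

def act(w, c_d, superdiag):
    """Image of the point (c_d, c_12, ..., c_(d-1)d) under D = diag(w)."""
    new_cd = w[-1] * c_d
    new_sd = [w[i] * w[i + 1] * c for i, c in enumerate(superdiag)]
    return new_cd, new_sd

def stabilizer(c_d, superdiag):
    """All sign vectors w whose diagonal matrix fixes the given point."""
    d = len(superdiag) + 1
    return [w for w in product((1, -1), repeat=d)
            if act(w, c_d, superdiag) == (c_d, superdiag)]

# Point with all coordinates non-zero (d = 4).
generic = stabilizer(2.0, [1.0, -3.0, 0.5])
# Point with c_12 = 0, where the chain argument of the proof breaks down.
degenerate = stabilizer(2.0, [0.0, -3.0, 0.5])
```

Here `generic` contains only the identity `(1, 1, 1, 1)`, while `degenerate` additionally contains `(-1, 1, 1, 1)`, which shows why the non-vanishing of every \(c_{i(i+1)}\) is needed.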

Corollary 4.9

The action of \({\text {O}}_d({\mathbb {C}})\) on \({U_d({\mathbb {C}})}\subset {\mathbb {C}}^d\oplus \mathfrak {so}_{d}({\mathbb {C}})\) is free.

Proof

By Proposition 4.6, each orbit on \({U_d({\mathbb {C}})}\) meets the linear subspace \({L_{d}^{(d-1)}}\). We show that the stabilizer of a point in \({L_{d}^{(d-1)}}\cap {U_d({\mathbb {C}})}\) contains only the identity. This is sufficient to prove the result, as any two points in the same orbit have isomorphic stabilizer groups.

Let \((v,M)\in {L_{d}^{(d-1)}}\cap {U_d({\mathbb {C}})}\) and consider \(g\in {\text {O}}_d({\mathbb {C}})\) such that \(g\cdot (v,M)=(v,M)\). By the proof of Proposition 4.5, g must lie in \({W_d({\mathbb {C}})}\). However, by Proposition 4.8, the only element of \({W_d({\mathbb {C}})}\) fixing a point in \({L_{d}^{(d-1)}}\cap {U_d({\mathbb {C}})}\) is the identity. \(\square \)

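Since we have that \(w_i^2=1\) for any \(1\le i \le d\), clearly

\[ {{\mathfrak {I}}}_{W_d({\mathbb {C}})} := \{ c_d^2,\, c_{12}^2,\, c_{23}^2,\, \ldots ,\, c_{(d-1)d}^2 \} \tag{25} \]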

is a set of invariant functions on \({L_{d}^{(d-1)}}\).

Proposition 4.10

The set \({{\mathfrak {I}}}_{W_d({\mathbb {C}})}\) separates orbits and is a generating set for \({\mathbb {C}}({L_{d}^{(d-1)}})^{W_d({\mathbb {C}})}\).

Proof

Consider the map \(F: {L_{d}^{(d-1)}}\cap {U_d({\mathbb {C}})}\rightarrow {\mathbb {C}}^d\) defined by evaluating the invariants in \({{\mathfrak {I}}}_{W_d({\mathbb {C}})}\) on \({L_{d}^{(d-1)}}\cap {U_d({\mathbb {C}})}\), a non-empty, Zariski-open subset of \({L_{d}^{(d-1)}}\). We show that every fiber of this map is exactly an orbit of \({W_d({\mathbb {C}})}\). Consider any \((v,M)\in {L_{d}^{(d-1)}}\cap {U_d({\mathbb {C}})}\); then the set of points in the fiber of its image is given by

\[ \{ ({\tilde{v}},{\tilde{M}})\in {L_{d}^{(d-1)}}\cap {U_d({\mathbb {C}})} : \tilde{c}_d = \pm c_d,\ \tilde{c}_{i(i+1)} = \pm c_{i(i+1)} \text { for } 1\le i\le d-1 \}. \]

We can individually change the sign for any coordinate of (v, M). To change the sign of only \(c_d\), one can act by the matrix \(D\in {W_d({\mathbb {C}})}\) such that \(w_i=-1\) for all \(1\le i \le d\). Similarly for \(c_{i(i+1)}\), we can act by the matrix \(D\in {W_d({\mathbb {C}})}\) such that \(w_k = -1\) for \(1\le k \le i\) and \(w_k =1 \) otherwise. This implies that the above set is exactly the orbit of (v, M) under \({W_d({\mathbb {C}})}\), and hence, \({{\mathfrak {I}}}_{W_d({\mathbb {C}})}\) is separating on \({L_{d}^{(d-1)}}\cap {U_d({\mathbb {C}})}\). Then by Proposition 2.22, \({{\mathfrak {I}}}_{W_d({\mathbb {C}})}\) generates \({\mathbb {C}}({L_{d}^{(d-1)}})^{W_d({\mathbb {C}})}\). \(\square \)
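The two sign choices named in the proof can be verified mechanically. In the following Python sketch, a point of \({L_{d}^{(d-1)}}\) is stored as the tuple \((c_{12},\ldots ,c_{(d-1)d},c_d)\); this storage convention and the function names are assumptions made for illustration, while the sign action itself is the one used in the proof of Proposition 4.8.

```python
from itertools import product

def act(w, point):
    """point = (c_12, ..., c_(d-1)d, c_d); D = diag(w) acts by sign changes."""
    *sd, c_d = point
    return tuple(w[i] * w[i + 1] * c for i, c in enumerate(sd)) + (w[-1] * c_d,)

def flip_only(index, d):
    """Sign vector that flips only the coordinate at position `index` (0-based)."""
    if index == d - 1:                       # the coordinate c_d: take D = -Id
        return (-1,) * d
    # the coordinate c_(i)(i+1) with i = index + 1: w_k = -1 for k <= i
    return (-1,) * (index + 1) + (1,) * (d - index - 1)

point = (1.0, -3.0, 0.5, 2.0)                # d = 4, all coordinates non-zero
d = len(point)
# Orbit under all 2^d sign matrices.
orbit = {act(w, point) for w in product((1, -1), repeat=d)}
# Fiber of the squared coordinates: every independent choice of signs.
fiber = {tuple(s * c for s, c in zip(signs, point))
         for signs in product((1, -1), repeat=d)}
```

For this sample point, `orbit` and `fiber` coincide and each has \(2^4 = 16\) elements, in line with the claim that the fiber is exactly one orbit.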

Corollary 4.11

The set \({{\mathfrak {I}}}_d\) in (22) is a minimal-generating set of rational invariant functions for \({\mathbb {C}}({\mathbb {C}}^d\oplus \mathfrak {so}_{d}({\mathbb {C}}))^{{\text {O}}_d({\mathbb {C}})}\) and separates orbits.

Proof

By Proposition 4.6, \({L_{d}^{(d-1)}}\) is a relative \({W_d({\mathbb {C}})}\)-section for the action of \({\text {O}}_d({\mathbb {C}})\) on \({\mathbb {C}}^d\oplus \mathfrak {so}_{d}({\mathbb {C}})\), and \({{\mathfrak {I}}}_d\) restricts to the set of invariants \({{\mathfrak {I}}}_{W_d({\mathbb {C}})}\) in (25) for the action of \({W_d({\mathbb {C}})}\) on \({L_{d}^{(d-1)}}\). This means \({{\mathfrak {I}}}_{W_d({\mathbb {C}})}=\sigma _{d-1}({{\mathfrak {I}}}_d)\), where \(\sigma _{d-1}\) is the isomorphism from the proof of Proposition 4.6. By Proposition 4.10, the set \({{\mathfrak {I}}}_{W_d({\mathbb {C}})}\) is a generating set for \({\mathbb {C}}({L_{d}^{(d-1)}})^{W_d({\mathbb {C}})}\), and hence, by Corollary 2.27, \({{\mathfrak {I}}}_d\) is a generating set for \({\mathbb {C}}({\mathbb {C}}^d\oplus \mathfrak {so}_{d}({\mathbb {C}}))^{{\text {O}}_d({\mathbb {C}})}\). By Proposition 2.22, \({{\mathfrak {I}}}_d\) also separates orbits.

By Corollary 4.9, the action of \({\text {O}}_d({\mathbb {C}})\) is free on a non-empty, Zariski-open subset of \({\mathbb {C}}^d\oplus \mathfrak {so}_{d}({\mathbb {C}})\). Thus, the maximum dimension of an orbit on \({\mathbb {C}}^d\oplus \mathfrak {so}_{d}({\mathbb {C}})\) is \(\dim ({\text {O}}_d({\mathbb {C}}))=\frac{d(d-1)}{2}\). By [46, Corollary, Section 2.3], the transcendence degree (see footnote 12) of \({\mathbb {C}}({\mathbb {C}}^d\oplus \mathfrak {so}_{d}({\mathbb {C}}))^{{\text {O}}_d({\mathbb {C}})}\) is \(\frac{d(d+1)}{2} - \frac{d(d-1)}{2} =d\), and hence, any generating set must be at least of size d, implying that \({{\mathfrak {I}}}_d\) is minimal. \(\square \)

The above results for the action of \({\text {O}}_d({\mathbb {C}})\) on \({\mathbb {C}}^d\oplus \mathfrak {so}_{d}({\mathbb {C}})\) help uncover the structure of the action of \({\text {O}}_d({\mathbb {R}})\) on \({\mathbb {R}}^d\oplus \mathfrak {so}_{d}({\mathbb {R}})\). First we show that the intersection of the set \({U_d({\mathbb {C}})}\) defined in (23) with \({\mathbb {R}}^d\oplus \mathfrak {so}_{d}({\mathbb {R}})\) is a non-empty and well-defined Zariski open subset of \({\mathbb {R}}^d\oplus \mathfrak {so}_{d}({\mathbb {R}})\).

Proposition 4.12

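The set \({{\mathfrak {I}}}_d\) in (22) is a subset of \({\mathbb {R}}({\mathbb {R}}^d\oplus \mathfrak {so}_{d}({\mathbb {R}}))^{{\text {O}}_d({\mathbb {R}})}\). In particular,

\[ {U_{d}({\mathbb {R}})} := {U_d({\mathbb {C}})}\cap \left( {\mathbb {R}}^d\oplus \mathfrak {so}_{d}({\mathbb {R}})\right) \]

is a well-defined, \({\text {O}}_d({\mathbb {R}})\)-invariant, and non-empty Zariski open subset of \({\mathbb {R}}^d\oplus \mathfrak {so}_{d}({\mathbb {R}})\), and

\[ {L_{d}^{(d-1)}}\cap \left( {\mathbb {R}}^d\oplus \mathfrak {so}_{d}({\mathbb {R}})\right) \]

intersects each orbit (under \({\text {O}}_d({\mathbb {R}})\)) contained in \({U_{d}({\mathbb {R}})}\).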

Proof

In the proof of Proposition 4.6, each function \(f_i\) is obtained by taking the inverse image of a real invariant function under the field isomorphism \(\sigma _i : {\mathbb {C}}({\mathbb {C}}^d\oplus \mathfrak {so}_{d}({\mathbb {C}}))^{{\text {O}}_d({\mathbb {C}})} \rightarrow {\mathbb {C}}({L_{d}^{(i)}})^{{N_{d}^{d-i}({\mathbb {C}})}}\). The function \(f_i\) can be decomposed as \(f_i = h_1 + \sqrt{-1}\cdot h_2\), where \(h_1\) and \(h_2\) are elements of \({\mathbb {R}}({\mathbb {R}}^d\oplus \mathfrak {so}_{d}({\mathbb {R}}))^{{\text {O}}_d({\mathbb {R}})}\), and hence, by Proposition 2.28, are elements of \({\mathbb {C}}({\mathbb {C}}^d\oplus \mathfrak {so}_{d}({\mathbb {C}}))^{{\text {O}}_d({\mathbb {C}})}\). Thus, \(h_1|_{{L_{d}^{(i)}}}= f_i|_{{L_{d}^{(i)}}}\). Since \(\sigma _i\) is a field isomorphism, \(f_i\) must define the same rational function as \(h_1\) and hence is an element of \({\mathbb {R}}({\mathbb {R}}^d\oplus \mathfrak {so}_{d}({\mathbb {R}}))^{{\text {O}}_d({\mathbb {R}})}\).

Note that Proposition 4.2 also holds for any \(v\in {\mathbb {R}}^d\), as can be seen, for instance, by applying Gram–Schmidt to a linearly independent set of d vectors \(\{v,v_1,\ldots ,v_{d-1}\}\) in \({\mathbb {R}}^d\). Thus, if \(f_1(v,M)\ne 0\), there exists a rotation \(A\in {\text {O}}_d({\mathbb {R}})\) such that \(A\cdot (v,M)\in {L_{d}^{(1)}}\cap {\mathbb {R}}^d\oplus \mathfrak {so}_{d}({\mathbb {R}})\). As in the proof of Proposition 4.6, we can proceed by induction. Suppose \((v,M)\in {L_{d}^{(i)}}\cap {\mathbb {R}}^d\oplus \mathfrak {so}_{d}({\mathbb {R}})\) and \(f_{i+1}(v,M)\ne 0\). Then we have that

By Proposition 4.4, we can find a rotation \(A\in {N_{d}^{d-i}({\mathbb {C}})}\) such that \(A\cdot (v,M)\in {L_{d}^{(i+1)}}\). Therefore, if \((v,M)\in {U_{d}({\mathbb {R}})}\), there exists a rotation \(A\in {\text {O}}_d({\mathbb {R}})\) such that \(A\cdot (v,M)\in {L_{d}^{(d-1)}}\). \(\square \)
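The inductive reduction in the real case can be sketched numerically as follows. This is a minimal illustration under stated assumptions, not the construction used in the proof: the action is taken to be \(A\cdot (v,M)=(Av, AMA^\top )\) (the section refers to (17) without restating it), real Givens rotations are used as one concrete choice of the rotations whose existence is asserted, and all function names are hypothetical. Indices in the code are 0-based.

```python
import math

def rotate(v, M, r, p, c, s):
    """In place: (v, M) -> (G v, G M G^T), G the rotation by (c, s) in the (r, p)-plane."""
    d = len(v)
    v[r], v[p] = c * v[r] - s * v[p], s * v[r] + c * v[p]
    for k in range(d):   # rows r and p of G M
        M[r][k], M[p][k] = c * M[r][k] - s * M[p][k], s * M[r][k] + c * M[p][k]
    for k in range(d):   # columns r and p of (G M) G^T
        M[k][r], M[k][p] = c * M[k][r] - s * M[k][p], s * M[k][r] + c * M[k][p]

def normalize(v, M):
    """Move a real pair (v, M), with M skew-symmetric, into the section L_d^(d-1)."""
    v, M = list(v), [row[:] for row in M]
    d = len(v)
    for q in range(d, 1, -1):      # q = d: the vector v; q < d: column q of M
        p = q - 1                  # pivot coordinate that collects the norm
        for r in range(p):
            a, b = (v[r], v[p]) if q == d else (M[r][q], M[p][q])
            h = math.hypot(a, b)
            if h > 0:              # rotation sending (a, b) to (0, h)
                rotate(v, M, r, p, b / h, a / h)
    return v, M

def section_invariants(v, M):
    """Squares c_d^2, c_12^2, ..., c_(d-1)d^2 of the surviving coordinates."""
    d = len(v)
    return [v[d - 1] ** 2] + [M[i][i + 1] ** 2 for i in range(d - 1)]
```

Applying `normalize` to a pair (v, M) and to a rotated copy of it, and then comparing `section_invariants` of the two results, gives a simple numerical check of invariance; each later stage uses only rotations of the leading coordinates, so the zeros produced in earlier stages are preserved.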

The following follows directly from Corollary 4.9.

Corollary 4.13