Abstract

A location histogram is comprised of the number of times a user has visited locations as they move in an area of interest, and it is often obtained from the user in the context of applications such as recommendation and advertising. However, a location histogram that leaves a user’s computer or device may threaten privacy when it contains visits to locations that the user does not want to disclose (sensitive locations), or when it can be used to profile the user in a way that leads to price discrimination and unsolicited advertising (e.g., as “wealthy” or “minority member”). Our work introduces two privacy notions to protect a location histogram from these threats: Sensitive Location Hiding, which aims at concealing all visits to sensitive locations, and Target Avoidance/Resemblance, which aims at concealing the similarity/dissimilarity of the user’s histogram to a target histogram that corresponds to an undesired/desired profile. We formulate an optimization problem around each notion: Sensitive Location Hiding (\({ SLH}\)), which seeks to construct a histogram that is as similar as possible to the user’s histogram but associates all visits with nonsensitive locations, and Target Avoidance/Resemblance (\({ TA}\)/\({ TR}\)), which seeks to construct a histogram that is as dissimilar/similar as possible to a given target histogram but remains useful for getting a good response from the application that analyzes the histogram. We develop an optimal algorithm for each notion, which operates on a notion-specific search space graph and finds a shortest or longest path in the graph that corresponds to a solution histogram. In addition, we develop a greedy heuristic for the \({ TA}\)/\({ TR}\) problem, which operates directly on a user’s histogram. Our experiments demonstrate that all algorithms are effective at preserving the distribution of locations in a histogram and the quality of location recommendation. 
They also demonstrate that the heuristic produces near-optimal solutions while being orders of magnitude faster than the optimal algorithm for \({ TA}\)/\({ TR}\).


1 Introduction

A location histogram is a statistical summary of a user’s whereabouts, comprised of the number of times a user has visited each location in an area of interest. Location histograms are often obtained from users, in the context of applications including recommendation [28, 29, 65], advertising [14, 21], and location pattern discovery [64]. For example, a recommender application typically employs a set of location histograms each corresponding to a different user (i.e., a user-location matrix) as a training set, and it aims at recommending locations that a user may be interested in visiting based on the user’s histogram [65]. Location histograms are also often visualized or analyzed directly [68].

However, a location histogram that leaves a user’s computer or device may pose a threat to the user’s privacy. This happens when the histogram contains visits to sensitive locations that the user does not want to disclose, because they are associated with confidential information (e.g., a temple is associated with a religion, and the headquarters of a political organization with certain political beliefs), or when the histogram can be used to profile the user (e.g., as “wealthy” or “minority member”) leading to price discrimination [37, 38] and unsolicited advertising [5]. For example, if the histogram reveals that a user frequently visits expensive restaurants, a targeted-advertisement application may display to the user advertisements about products and services that are priced higher than normal [37, 38].

In this work, we introduce two novel notions of histogram privacy, Sensitive Location Hiding and Target Avoidance/Resemblance, for protecting against the disclosure of sensitive locations and user profiling, respectively. Sensitive Location Hiding aims at concealing all visits to user-specified sensitive locations, by producing a sanitized histogram, in which the frequencies associated with the sensitive locations are equal to zero. This protects a user from an adversary who receives the sanitized histogram, knows the set of locations considered to be sensitive, and tries to infer which of these sensitive locations were visited by the user. By enforcing the notion of Sensitive Location Hiding, users are able to disseminate their location histogram in order to benefit from location-based services, such as location recommendation, while being protected from the inference of their sensitive locations and the aforementioned consequences such inference may have.

Target Avoidance aims at concealing the fact that the user’s histogram is similar to an undesirable histogram that, if disseminated, would lead to undesired user profiling. For example, a user may wish to make their histogram dissimilar to a target histogram of a typical wealthy person, containing frequent visits to expensive restaurants, to avoid price discrimination [37]. As another example, a user’s location histogram may allow the inference of the user’s political affiliation, religious beliefs, and sexual orientation, which may lead to emotional distress, harassment, or even persecution. Thus, a user would wish to avoid disseminating a histogram that is similar to histograms that can lead to such undesirable inferences. This protects from adversaries who use the sanitized histogram and the target histogram of a person with an undesirable profile, to infer that the user’s histogram resembles the latter histogram.

Target Resemblance is a variant of Target Avoidance, in which the user expressly wishes to make their histogram similar to the target histogram representing a desirable profile. For example, the desirable target histogram for a tourist could be that of a local resident in order to avoid discriminatory practices toward tourists (e.g., price discrimination). As another example, consider a company that engages in secret discriminatory hiring practices by preferentially hiring members of a particular demographic group. There are cases where companies have been shown to discriminate based on sexual orientation when hiring [57]. In these cases, a person who wishes to be hired will want to make their histogram resemble that of a heterosexual person, so as to avoid discriminatory treatment. The target histogram may be specified by the users themselves, or selected with the help of domain experts (see Sect. 3.3). Enforcing Target Resemblance protects against adversaries who use the sanitized histogram and the target histogram of a person with a desirable profile, to infer that the user’s histogram does not resemble the latter histogram.

Comparing Target Avoidance and Target Resemblance, we see that in both cases the adversary aims to infer whether or not the sanitized histogram resembles a given target histogram. The difference is that, in Target Avoidance, the user wants the adversary to conclude that there is no resemblance, whereas in Target Resemblance the user wants the opposite.

Our privacy notions can be achieved by histogram sanitization, i.e., by changing the frequencies of location visits in the histogram. However, sanitization incurs a quality (utility) loss, which must be controlled to ensure that the user obtains a good response from the application which uses their sanitized histogram. To achieve this balance between privacy and quality, we define an optimization problem around each privacy notion: the Sensitive Location Hiding (\({ SLH}\)) problem, which seeks to construct a sanitized histogram with minimum quality loss, and the Target Avoidance/Resemblance (\({ TA}\)/\({ TR}\)) problem, which seeks to avoid/resemble the target to a level at least equal to a user-provided privacy parameter, while ensuring that the quality loss does not exceed a user-provided quality parameter. If it is impossible to satisfy both the privacy and the quality requirements, then the problem has no solution.

Neither notion can be achieved by existing methods for histogram sanitization. The aim of existing methods is to either (I) prevent the inference of the exact frequencies of the histogram (i.e., the number of visits to one or more locations) [2, 16, 23, 26, 45, 61, 68], or (II) make a user’s histogram indistinguishable from a set of histograms belonging to other users [18, 20, 60]. Their aim is neither to hide sensitive locations, nor to avoid/resemble a target histogram. The privacy notions we introduce in the paper are important to achieve in real applications, as we discuss in Examples 2.1 and 2.2 in Sect. 2.

Therefore, we develop new methods for achieving the \({ SLH}\) and the \({ TA}\)/\({ TR}\) notions: (I) an optimal algorithm for \({ SLH}\), called \({ LHO}\) (Location Hiding Optimal), (II) an optimal algorithm for \({ TR}\), called \({ RO}\) (Resemblance Optimal), and (III) a greedy heuristic for \({ TR}\), called \({ RH}\) (Resemblance Heuristic). Because \({ TA}\) and \({ TR}\) are similar, we focus on \({ TR}\) and discuss \({ TA}\) briefly.

Our methods are both effective and efficient, as demonstrated by experiments using two real datasets derived from the Foursquare location-based social network [62], which together contain approximately 3400 histograms. In terms of effectiveness, all algorithms achieve the corresponding notions, or announce that it is impossible to achieve them, and they are additionally able to preserve: (I) the distribution of locations in a histogram, which is useful in applications such as aggregate query answering and classification [32, 68], and (II) the quality of location recommendation based on collaborative filtering [35]. In addition, the heuristic produces near-optimal solutions (up to \(1.5\%\) worse than the optimal), with respect to preserving distribution similarity. In terms of efficiency, all algorithms scale well with the histogram parameters, requiring from less than 1 second (the \({ LHO}\) algorithm) to 5 minutes (the \({ RO}\) algorithm). In addition, the \({ RH}\) heuristic is more efficient than the optimal algorithm by at least two orders of magnitude.

We note that our notions are framed in the context of location histograms but can be applied to any histogram. For example, they could be applied to a histogram comprised of webpage visits. The resultant sanitized histogram would then conceal visits to webpages that a user does not want to disclose, or it would resemble/avoid a target histogram for protecting the user from targeted advertising based on their webpage visits.

Organization. We provide an overview of and motivation for our approach in Sect. 2; we introduce formal notation, and we formalize the privacy notions, the adversary models, and the optimization problems we solve in Sect. 3; we describe our algorithms and our heuristics in Sect. 4; we evaluate our approach in Sect. 5; we discuss related work in Sect. 6; and we conclude the paper in Sect. 7.

2 Overview and motivation of our approach

This section provides examples to motivate the need for Sensitive Location Hiding and Target Resemblance and also provides a high-level overview of the optimization problems and methods for solving them.

2.1 Sensitive Location Hiding

Given a set of sensitive locations, a histogram satisfies the notion of Sensitive Location Hiding when the frequency of each of its sensitive locations is zero. Clearly, one simple strategy to achieve this notion is by setting the frequency of each sensitive location of a given histogram to zero. However, this strategy may have a substantial negative impact on the quality (utility) of the histogram in location histogram applications. This is because it reduces the size (sum of frequencies) of the histogram. A size reduction should be avoided because some important statistics depend on the size of the histogram. An example of such statistics is the fraction of all users’ visits to a particular location in a city (i.e., the ratio between the sum of the frequency of the location over all users’ histograms and the sum of the sizes of these histograms), which is a simple indicator of the popularity of the location. Another example is the average number of visits to a location (i.e., the ratio between the size of the user’s histogram and the number of locations in the histogram), which is used in location recommendation [7, 35].

A different strategy that achieves the Sensitive Location Hiding notion, while preserving the size of the histogram, is to redistribute the frequency counts of the sensitive locations to nonsensitive ones. However, the redistribution needs to be performed in a way that preserves the quality (utility) of the histogram in location histogram applications. The impact of each possible redistribution on quality must be quantified, and the selected redistribution must be the one with the lowest impact. We quantify the impact of a redistribution with a quality distance function, similarly to most works on histogram sanitization [2, 26, 61, 68]. This function offers generality, because different functions can be chosen for different applications.

The above discussion motivates the formulation of the Sensitive Location Hiding (\({ SLH}\)) optimization problem: given a histogram H, a set of sensitive locations, and a quality distance function, produce a sanitized histogram \(H'\) such that the frequency of each sensitive location in \(H'\) is 0, \(H'\) is as similar as possible to H, and \(H'\) has the same size as H. Similarity is measured with the quality distance function.

In Sect. 3.2, we give a formal definition of the \({ SLH}\) optimization problem, discuss the adversary model it provides protection against, and show that the problem is weakly NP-hard [41]. In addition, we discuss a variation of the problem which relaxes the size requirement and can be easily dealt with by our algorithms.

To illustrate the \({ SLH}\) notion and the \({ SLH}\) problem, we now provide Example 2.1, which is inspired by approaches to privacy-preserving recommendation [48, 58]. However, the \({ SLH}\) notion and problem are not tied to recommendation and cannot be handled with existing approaches.

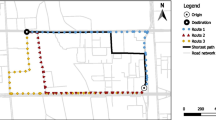

Fig. 1 a Location histogram. b Sanitized histogram produced by the \({ LHO}\) algorithm when g and h are sensitive locations and the quality distance function is Jensen–Shannon divergence [30] (only positive counts are illustrated). c Target histogram used in the \({ TR}\) problem. d Sanitized histogram produced by the \({ RO}\) algorithm when applied with the target histogram in c. e Sanitized histogram produced by the \({ RH}\) heuristic when applied with the target histogram in c

Example 2.1

(Illustration of the \({ SLH}\) notion and \({ SLH}\) problem) An application provides location recommendations to users by analyzing their location profiles. To obtain a recommended location, a user must send 50 location visits to the application in the form of a location histogram. To compute the recommended location, the application uses common mining tasks, such as discovering frequent location patterns in the user’s histogram and finding histograms similar to it [67]. The location histogram H of a user Alice is shown in Fig. 1a. The histogram contains the number of times Alice visited each of the locations a to h. Alice is not willing to provide H to the application, because the last two locations in H, g and h, are sensitive, but she still wishes to receive a “good” recommended location from the application. Therefore, Alice solves the \({ SLH}\) problem and obtains the sanitized histogram \(H'\) shown in Fig. 1b. The sanitized histogram preserves privacy, because it does not contain the sensitive locations. It can be sent to the application to receive a fairly accurate recommendation, because it contains 50 visits to nonsensitive locations (the visits to sensitive locations are zero and not shown) and is as “similar” as possible to H, to the extent permitted by the privacy requirement. \(\square \)

Fig. 2 Graph constructed by the Location Hiding Optimal (\({ LHO}\)) algorithm. The shortest path from s to t corresponds to the optimal solution of the \({ SLH}\) problem. Each node (i, j), \(i\in [1,m]\), \(j\in [0,K]\), in the path denotes the redistribution of j sensitive location visits into the nonsensitive bins \(1, \ldots , i\). The path corresponds to the optimal way of redistributing all K sensitive location visits into all m nonsensitive bins. The weight of the edge \(((i,j),(i+1,j+k))\) denotes the impact on quality caused by redistributing k sensitive location visits into the nonsensitive bin \(i+1\), and the sum of the edge weights of this path \(e_1 + \cdots + e_m\) quantifies the quality distance between the optimal solution and H

To optimally solve the \({ SLH}\) problem, the \({ LHO}\) algorithm finds the exact number of sensitive location visits that need to be redistributed into each nonsensitive bin (bin corresponding to a nonsensitive location), so that all sensitive location visits are redistributed and quality is optimally preserved, with respect to the quality distance function. That is, the algorithm determines the frequency of each nonsensitive location of the sanitized histogram \(H'\), so that \(H'\) has the same size as the given histogram H and is as similar as possible to it, with respect to the quality distance function. However, it is computationally prohibitive to directly compute the quality of each possible redistribution of the sensitive location visits into the nonsensitive bins and then select the optimal solution. This follows from the fact that there are \(O\left( \left( {\begin{array}{c}K+m-1\\ m-1\end{array}}\right) \right) \) ways to redistribute K sensitive location visits into m nonsensitive bins (each way corresponds to a weak composition of K [6]). Therefore, \({ LHO}\) solves the problem by modeling it as a shortest path problem between two specific nodes, s and t, of a directed acyclic graph (DAG) (see Fig. 2). The node s is labeled (0, 0), and each other node is labeled (i, j), where \(i\in [1,m]\) corresponds to a nonsensitive location \(L_i\) and \(j\in [0,K]\) corresponds to the number of sensitive location visits that will be redistributed into the nonsensitive bins \(1, \ldots , i\) of the sanitized histogram \(H'\). For example, the label (m, K) of the node t denotes the redistribution of all K sensitive location visits to all m nonsensitive bins of \(H'\). We may refer to a node using its label. The graph contains an edge from each node (i, j) to each node \((i+1,j+k)\) with \(k\in [0, K-j]\), where k denotes the number of sensitive location visits that are redistributed into the nonsensitive bin \(i+1\). 
For example, the edge \(((i,j), (i+1,j+k)) = ((1,1), (2,1))\) denotes that \(k=0\) visits are redistributed into the nonsensitive bin \(i+1=2\). Each edge \(((i,j), (i+1,j+k))\) has a weight that quantifies the impact on quality caused by the redistribution of k sensitive location visits into the nonsensitive bin \(i+1\). Every path from s to t corresponds to a feasible solution of the \({ SLH}\) problem. This is because the nodes in the path uniquely determine how all K sensitive location visits will be redistributed into all m nonsensitive bins of \(H'\) (see property (I) in Sect. 4.1). In addition, the length (sum of edge weights) of the path is equal to the quality distance between the corresponding solution \(H'\) and H (see property (II) in Sect. 4.1). Thus, the shortest path from s to t corresponds to a histogram \(H'\) that is as similar as possible to H, and therefore, it is the optimal solution of the \({ SLH}\) optimization problem. For example, applying the \({ LHO}\) algorithm to the histogram of Fig. 1a, when the locations g and h are sensitive and the quality distance function is Jensen–Shannon divergence (see Sect. 3.1.1), produces the sanitized histogram in Fig. 1b. Note that the visits to g and h are redistributed into all nonsensitive bins, so that the sanitized histogram is as similar as possible to the histogram of Fig. 1a. A formal description of the \({ LHO}\) algorithm and its analysis are provided in Sect. 4.1.
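The shortest-path formulation above can be sketched as a small dynamic program over the nodes (i, j). This is only an illustrative sketch, not the formal algorithm of Sect. 4.1: the names `lho_sketch` and `js_bin` are hypothetical, the per-bin cost is assumed to be the base-2 Jensen–Shannon term on frequencies normalized by the common histogram size, and the constant cost of zeroing the sensitive bins is added separately as an assumption, since it does not affect which path is shortest.

```python
import math

def js_bin(x, y, size):
    """Per-bin JS term for counts x, y of two histograms with the same size.
    Uses the convention 0 * log2(0) = 0."""
    a, b = x / size, y / size
    t = 0.0
    if a > 0:
        t += a * math.log2(2 * a / (a + b))
    if b > 0:
        t += b * math.log2(2 * b / (a + b))
    return t / 2

def lho_sketch(hist, sensitive, q):
    """hist: list of counts; sensitive: set of bin indices;
    q(x, y): per-bin quality cost of changing a count from x to y."""
    nonsens = [i for i in range(len(hist)) if i not in sensitive]
    K = sum(hist[i] for i in sensitive)   # visits to redistribute
    m = len(nonsens)
    # dist[i][j]: length of the shortest path from s = (0, 0) to node (i, j)
    dist = [[math.inf] * (K + 1) for _ in range(m + 1)]
    back = [[0] * (K + 1) for _ in range(m + 1)]
    dist[0][0] = 0.0
    for i in range(m):
        f = hist[nonsens[i]]
        for j in range(K + 1):
            if dist[i][j] == math.inf:
                continue
            for k in range(K - j + 1):    # edge ((i, j), (i+1, j+k))
                cand = dist[i][j] + q(f, f + k)
                if cand < dist[i + 1][j + k]:
                    dist[i + 1][j + k] = cand
                    back[i + 1][j + k] = k
    if dist[m][K] == math.inf:
        return None                       # e.g., no nonsensitive bin exists
    # Walk back from t = (m, K) to recover how many visits each bin received.
    out = list(hist)
    for i in sensitive:
        out[i] = 0
    j = K
    for i in range(m, 0, -1):
        k = back[i][j]
        out[nonsens[i - 1]] += k
        j -= k
    # Assumption: zeroing the sensitive bins contributes a constant cost.
    total = dist[m][K] + sum(q(hist[i], 0) for i in sensitive)
    return out, total
```

The triple loop visits every edge once, so the sketch runs in time proportional to \(m\cdot K^2\) rather than enumerating all weak compositions of K. A call such as `lho_sketch(hist, {6, 7}, lambda x, y: js_bin(x, y, sum(hist)))` would correspond to hiding the last two bins of an eight-bin histogram.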

2.2 Target Resemblance

Given a target histogram, a histogram satisfies the notion of Target Resemblance when it is similar enough to the target. A privacy distance function quantifies similarity, and a privacy parameter quantifies the threshold for determining whether the two histograms are similar enough.

Clearly, any histogram can be easily modified to be arbitrarily similar to a given target histogram, by simply redistributing all its frequency counts so that they are exactly equal to the counts in the target histogram. However, as in the case of \({ SLH}\), a simplistic redistribution can deteriorate quality unacceptably. The modification to the histogram must balance between resemblance to the target histogram and similarity to the original histogram. A quality distance function quantifies the quality loss caused by the modification, and a quality parameter quantifies the threshold for determining whether the loss is acceptable or not.

The above discussion motivates the formulation of the Target Resemblance (\({ TR}\)) optimization problem: given a histogram H, a target histogram \(H''\), a quality distance function and a quality parameter \(\epsilon \), a privacy distance function and a privacy parameter c, produce a sanitized histogram \(H'\) such that its quality distance from H is at most \(\epsilon \), its privacy distance from \(H''\) is minimized, and its size is the same as H. If the resulting privacy distance of \(H'\) from \(H''\) is larger than c, then there is no solution.

In Sect. 3.3, we give a formal definition of the \({ TR}\) problem, discuss the adversary model it provides protection against, and we show that it is weakly NP-hard. In addition, we discuss a variation that relaxes the size requirement and can be easily dealt with by our algorithms. To illustrate the \({ TR}\) privacy notion and optimization problem, we provide Example 2.2.

Example 2.2

(Illustration of the \({ TR}\) notion and problem, continuing from Example 2.1) Fig. 1a shows the location histogram H of a user, Bob, who wants to use the location recommendation application. Bob is not willing to provide H to the application, because he is concerned about price discrimination, as a result of frequent visits to locations f (“airport”) and g (“5-star hotel”). To address this concern, Bob can solve the Target Resemblance (\({ TR}\)) problem to generate a histogram that resembles the target histogram \(H''\) in Fig. 1c. \(H''\) reflects a budget-conscious person, because in \(H''\) the frequencies of locations a (“train station”), b (“2-star hotel”), and c (“3-star hotel”) are relatively high, whereas the frequencies of f and g are relatively low. Hence, \(H''\) is likely to attract lower-priced recommendations than H would, and it is more likely to prevent price discrimination [37, 38]. The resemblance to \(H''\) is satisfied by generating a sanitized histogram \(H'_{RO}\) (\({ RO}\) for “Resemblance Optimal”) that minimizes a privacy distance function between the sanitized histogram and \(H''\). At the same time, Bob still wishes to receive a “good” recommended location from the application. This quality requirement is satisfied by limiting the dissimilarity between H and the sanitized histogram \(H'_{RO}\) to a maximum of \(\epsilon =0.05\), as measured by a quality distance function, so that the sanitization preserves the similarity between H and other users’ histograms, which helps compute a “good” recommended location [35]. After solving the \({ TR}\) problem, Bob obtains the sanitized histogram \(H'_{RO}\) in Fig. 1d, which is almost identical to the target \(H''\). \(\square \)

To optimally solve the \({ TR}\) problem, the Resemblance Optimal (\({ RO}\)) algorithm finds the exact number of location visits that need to be added into, or removed from, each bin of a histogram H, so that the resultant sanitized histogram \(H'\) is as similar as possible to the target histogram \(H''\), and no more dissimilar from H than what is allowed by the quality threshold \(\epsilon \). Again, the large number of potential solutions, given by \(O\left( \left( {\begin{array}{c}N+n-1\\ n-1\end{array}}\right) \right) \), where N is the size of H and n is its length, prohibits directly computing the quality of each possible solution and selecting the optimal solution. Therefore, \({ RO}\) solves the problem by modeling it as a constrained shortest path problem in a DAG (see Fig. 3). The graph contains a path \((u^0_0,u^{N_1}_1,\ldots ,u^{N_n}_n)\) for each allocation of \(N = N_n\) counts to the n bins of the histogram (i.e., each allocation corresponds to a possible solution to the Target Resemblance problem, ignoring the quality constraint), where a node \(u_i^{N_i}\) corresponds to allocating \(N_i\) counts to bins 1 up to and including i. The length of a path is equal to the dissimilarity of the corresponding allocation to the target histogram \(H''\), whereas the cost of the path is equal to the quality loss as compared to the user’s histogram H. The algorithm finds the shortest path among those whose cost does not exceed the quality threshold \(\epsilon \). As the graph is a DAG, to find the optimal solution it suffices to explore it in breadth-first search order. 
First, we compute constrained shortest paths to all nodes that correspond to bin 1: \(u_1^{N_1}, N_1 = 0, \ldots , N\); then, we extend these paths to all nodes that correspond to bin 2: \(u_2^{N_2}, N_2 = 0, \ldots , N\) and we prune them if they violate the quality constraint; we continue all the way to \(u_{n-1}^{N_{n-1}}, N_{n-1} = 0, \ldots , N\) and finally to the node \(u_n^{N_n}, N_n = N\). The shortest path to that final node corresponds to the optimal valid allocation of N counts to bins \(1, \ldots , n\).
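The layer-by-layer exploration described above can be sketched as a label-setting dynamic program. This is an illustrative sketch under stated assumptions, not the formal \({ RO}\) algorithm: the names `ro_sketch`, `pareto`, and `js_term` are hypothetical, both distances are assumed to be base-2 Jensen–Shannon divergence on normalized frequencies, and each node keeps only its Pareto-optimal (privacy, quality) labels. Pruning on the quality threshold relies on the per-bin costs being nonnegative.

```python
import math

def js_term(a, b):
    """Per-bin JS term for normalized frequencies a, b (0 * log2(0) = 0)."""
    t = 0.0
    if a > 0:
        t += a * math.log2(2 * a / (a + b))
    if b > 0:
        t += b * math.log2(2 * b / (a + b))
    return t / 2

def pareto(labels):
    """Keep labels that are not dominated in (privacy, quality)."""
    kept, best_quality = [], math.inf
    for label in sorted(labels):          # ascending privacy distance
        if label[1] < best_quality:
            kept.append(label)
            best_quality = label[1]
    return kept

def ro_sketch(hist, target, eps):
    """Return (allocation, privacy distance, quality loss), or None."""
    n, size, tsize = len(hist), sum(hist), sum(target)
    # layer[j]: labels of paths ending at the node for j counts in bins 1..i
    layer = {0: [(0.0, 0.0, ())]}
    for i in range(n):
        nxt = {}
        for j, labels in layer.items():
            # The last bin must receive all remaining counts.
            ks = [size - j] if i == n - 1 else range(size - j + 1)
            for k in ks:
                dp = js_term(k / size, target[i] / tsize)  # privacy weight
                dq = js_term(k / size, hist[i] / size)     # quality weight
                for priv, qual, alloc in labels:
                    if qual + dq <= eps:                   # prune on quality
                        nxt.setdefault(j + k, []).append(
                            (priv + dp, qual + dq, alloc + (k,)))
        layer = {j: pareto(labels) for j, labels in nxt.items()}
    if size not in layer:
        return None
    priv, qual, alloc = min(layer[size])
    return list(alloc), priv, qual
```

Keeping a set of nondominated labels per node, rather than a single best value, is what lets the sketch respect the quality constraint: a path with a larger privacy distance but a smaller quality loss may be the only one that can still be extended within \(\epsilon \).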

Fig. 3 Graph constructed by the Resemblance Optimal (\({ RO}\)) algorithm. The shortest path from \(u^0_0\) to \(u^N_n\) with cost at most \(\epsilon \) corresponds to the optimal solution of the \({ TR}\) problem. The nodes of this path correspond to the optimal way of allocating counts to all bins. The edge weights of this path are (privacy, quality loss) pairs \((p_1,q_1), \ldots , (p_n,q_n)\). The two weights of an edge \(u_i^{N_i}\) to \(u_{i+1}^{N_i + k}\) are the privacy and quality effects of allocating exactly k counts to bin \(i+1\) of the solution histogram. The sum \(\sum _{i\in [1,n]}p_i\) quantifies the dissimilarity between the optimal solution and the target histogram (smaller is better) and the sum \(\sum _{i\in [1,n]}q_i\) quantifies the total quality loss, which should be at most \(\epsilon \)

When solution optimality is not necessary, the \({ TR}\) problem can be solved more efficiently by the \({ RH}\) heuristic. \({ RH}\) differs from the \({ RO}\) algorithm in that it restricts the set of bins in the histogram H whose number of location visits can increase or decrease. Specifically, it works in a greedy fashion, iteratively “moving” frequency counts from source bins to destination bins. The source bins have higher frequency in H than in the target histogram \(H''\), whereas the destination bins have lower frequency in H than in \(H''\). Thus, moving counts from source to destination bins makes the sanitized histogram more and more similar to the target histogram, but it incurs a quality loss due to changes in frequency counts. Therefore, to control the loss of quality, moves are performed for as long as the quality distance of the resultant sanitized histogram from H does not exceed the quality threshold. Example 2.3 below illustrates the \({ RO}\) algorithm and the \({ RH}\) heuristic.

Example 2.3

(Illustration of the \({ RO}\) algorithm and \({ RH}\) heuristic, continuing from Example 2.2) Bob applies \({ RO}\) to his histogram H in Fig. 1a, using the target histogram \(H''\) in Fig. 1c, the quality threshold \(\epsilon =0.05\), and the Jensen–Shannon divergence to measure dissimilarity from \(H''\) and from H. The algorithm produces the sanitized histogram \(H'_{RO}\) in Fig. 1d, which is as similar to \(H''\) as allowed by the specified threshold. Similarly, Bob applies \({ RH}\) and obtains the sanitized histogram \(H'_{RH}\) in Fig. 1e. Comparing \(H'_{RO}\) and \(H'_{RH}\) to \(H''\), we observe that \(H'_{RO}\) is very similar to \(H''\), while \(H'_{RH}\) is slightly less similar (e.g., the frequencies of f and g are equal in \(H''\) and \(H'_{RO}\), while they are not equal in \(H''\) and \(H'_{RH}\)). However, \(H'_{RH}\) is still useful for getting a good recommendation from the application, because the quality loss (dissimilarity to H) does not exceed \(\epsilon \). \(\square \)
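The greedy idea behind \({ RH}\) can be sketched as follows. The text above does not specify how a move is chosen, so this sketch makes two assumptions: it moves one count at a time, and it picks the source/destination pair that gives the largest reduction in privacy distance. The names `rh_sketch` and `js_divergence` are hypothetical, and base-2 Jensen–Shannon divergence is assumed for both distances.

```python
import math

def js_divergence(h1, h2):
    """Base-2 JS divergence between two count vectors of equal length."""
    n1, n2 = sum(h1), sum(h2)
    total = 0.0
    for x, y in zip(h1, h2):
        a, b = x / n1, y / n2
        if a > 0:
            total += a * math.log2(2 * a / (a + b)) / 2
        if b > 0:
            total += b * math.log2(2 * b / (a + b)) / 2
    return total

def rh_sketch(hist, target, eps, d_q=js_divergence, d_p=js_divergence):
    """Greedily move counts from source to destination bins while the
    quality distance from the original histogram stays within eps."""
    cur = list(hist)
    n = len(hist)
    while True:
        # The current histogram only moves toward the target, so these
        # sets are subsets of the source/destination bins defined on hist.
        sources = [i for i in range(n) if cur[i] > target[i]]
        dests = [i for i in range(n) if cur[i] < target[i]]
        base = d_p(cur, target)
        best = None
        for s in sources:
            for d in dests:
                cand = list(cur)
                cand[s] -= 1
                cand[d] += 1
                if d_q(hist, cand) > eps:
                    continue              # move would exceed quality budget
                gain = base - d_p(cand, target)
                if gain > 0 and (best is None or gain > best[0]):
                    best = (gain, cand)
        if best is None:
            return cur                    # no admissible improving move left
        cur = best[1]
```

Each accepted move strictly reduces the privacy distance, so the loop terminates. Because the search is restricted to single-count moves between source and destination bins, the result can be less similar to the target than an optimal solution, which is consistent with the behavior described in Example 2.3.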

3 Background, problem definitions, and adversary models

In this section, we define some preliminary concepts, and then, we formally define the \({ SLH}\), \({ TA}\), and \({ TR}\) optimization problems. A summary of the most important notation we introduce is in Table 1.

3.1 Preliminaries

We consider an area of interest, modeled as a finite set of semantic locations \(L=\{L_1, \ldots , L_{|L|}\}\) of cardinality |L|, where a location \(L_i\), \(i \in [1,|L|]\), is, e.g., “Italian Restaurant,” “Cinema,” or “Museum.”

We also consider a user who moves in this area. The user’s histogram is a vector of integer frequencies \(H=(f(L_1), \ldots , f(L_n))\), where \(n\le |L|\) is the length of the histogram. Each location \(L_i, i\in [1,n]\), has a frequency \(f(L_i)>0\), when \(L_i\) was visited by the user, or \(f(L_i)=0\) otherwise. We may refer to frequencies as counts.

We use H[i] to refer to the i-th element, or bin, of H, and N, or size, to refer to the \(L_1\)-norm \(|H|_1=\sum _{i\in [1,n]}H[i]\) of H. We use \({\mathcal {H}}_{n,N}\) to denote the set of all histograms of length n and size N.

Having compiled H, the user wishes to submit it to a location-based application. Before submitting it, the user transforms it into a sanitized histogram \(H'\) (in a way to be made concrete in Problems 3.1 and 3.2 below) and then submits \(H'\) to the application. Next, the application returns a response to the user. Depending on the sanitization required, \(H'\) may contain zero-frequency counts for some locations, or it may contain nonzero frequency counts for locations that the user never visited. If the user wishes, we can easily guarantee that \(H'\) will not contain nonzero frequency counts for locations that the user never visited, by assigning an infinite cost \(d_q(H[i],H'[i])\) for each such location \(L_i\).

3.1.1 Quality loss

Since the user submits \(H'\), which is in general different from H, there will be a negative impact on the quality of the application response. The resulting loss in quality is measured by a quality distance function \(d_q(H,H')\). For every pair \(H, H'\), we require that \(d_q(H, H') \ge 0\), and that \(H = H'\) implies \(d_q(H, H') = 0\). In addition, we require \(d_q\) to decompose as a sum over bins, i.e., there must be a function q such that \(d_q(H,H') = \sum _{i \in [1,n]} q(H[i],H'[i])\). Most distances used in data mining applications in which distances between histograms/vectors must be preserved (e.g., Jensen–Shannon divergence (\({ JS}\)-divergence) [30], Jeffrey’s divergence [45], \(L_2\)-distance (Euclidean distance) and squared Euclidean distance [66], variational distance [30], Pearson \(\chi ^2\) distance [30], and Neyman \(\chi ^2\) distance [30]) decompose as a sum over bins.

We use \({ JS}\)-divergence as the objective function \(d_q\) in our experiments (see Sect. 5). \({ JS}\)-divergence is a standard measure for quantifying distances between probability distributions, which is often used in histogram/vector classification [36] and clustering [40]. Given two histograms \(H_1, H_2\), the JS-divergence between them is defined as

with the convention \(0\cdot \log _2(0)=0\). \({ JS}\)-divergence is bounded in [0, 1] [30], and \(JS(H_1,H_2)=0\) implies no quality loss. As explained in [27], \({ JS}\)-divergence can also be easily extended to capture semantic similarity requirements (e.g., an Italian restaurant is more similar to a French restaurant than to an American cinema), when this is needed in applications. The extended measure, called smoothed \({ JS}\)-divergence, requires preprocessing the histogram by kernel smoothing and then applying \({ JS}\)-divergence to the preprocessed histogram. Incorporating smoothed \({ JS}\)-divergence in our methods is straightforward and left for future work.
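As an illustration of the bin-wise decomposition, the following minimal sketch computes the standard \({ JS}\)-divergence of two histograms in Python. The function name `js_divergence` is ours, and normalizing each histogram by its own size is an assumption that coincides with the equal-size setting used in this paper.

```python
import math

def js_divergence(h1, h2):
    """Jensen-Shannon divergence (base 2) between two nonempty histograms.

    Each histogram is normalized by its own size (an assumption of this
    sketch); zero counts contribute nothing, following 0 * log2(0) = 0.
    """
    if len(h1) != len(h2):
        raise ValueError("histograms must have the same length")
    n1, n2 = sum(h1), sum(h2)
    total = 0.0
    for a, b in zip(h1, h2):
        pa, pb = a / n1, b / n2
        mid = (pa + pb) / 2          # mixture probability of this bin
        if pa > 0:
            total += 0.5 * pa * math.log2(pa / mid)
        if pb > 0:
            total += 0.5 * pb * math.log2(pb / mid)
    return total
```

Identical histograms give 0, and histograms with disjoint support give 1, which matches the stated bounds.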

3.2 The Sensitive Location Hiding problem: adversary model and formal definition

As discussed in the Introduction and in Sect. 2, the Sensitive Location Hiding (\({ SLH}\)) privacy notion aims to conceal all visits to sensitive locations. We formulate the adversary model and the desired privacy property for the \({ SLH}\) notion as follows.

The adversary knows: (I) the sanitized histogram \(H'\) that the user submits, (II) the set of all possible sensitive locations \(L'\), and (III) the fact that, if \(H'\) is fake, then it must have been produced by the \({ LHO}\) algorithm in our paper. The adversary has no other background knowledge. The adversary succeeds if, based on their knowledge, they manage to determine whether or not the user visited one or more of the sensitive locations in \(L'\).

The desired privacy property is the negation of the adversary’s success criterion. That is, the adversary must not be able to infer, from the sanitized histogram, that the user has visited any of the sensitive locations.

We formally define the corresponding optimization problem as follows:

Problem 3.1

(Sensitive Location Hiding (\({ SLH}\))) Given a histogram \(H \in {\mathcal {H}}_{n,N}\), a subset \(L'\subseteq L\) of sensitive locations, and a quality distance function \(d_q()\), construct a sanitized histogram \(H' \in {\mathcal {H}}_{n,N}\) that minimizes \(d_q(H,H')\) subject to \(H'[i]=0\) for each sensitive location \(L_i\in L'\).

Intuitively, the \({ SLH}\) problem requires constructing a sanitized histogram by redistributing the counts of the sensitive locations of H into bins that correspond to nonsensitive locations, in the best possible way according to \(d_q\). The sensitive locations are specified by the user based on their preferences.

In the \({ SLH}\) problem formulation, we follow the user-centric (or personalized) approach to privacy that is employed in [1, 3, 13, 50]. This approach requires the users to specify their own privacy preferences, so that these preferences are best reflected in the produced solutions. However, not all users may possess knowledge allowing them to identify certain locations in their histograms as sensitive. Yet, such users often know that a class of locations is sensitive, or they do not want to be associated with a class of locations [31, 59]. For instance, several users may not want to be associated with visits to any type of clinic or adult entertainment location. In this case, users may employ a taxonomy (see Footnote 1) to identify classes of sensitive locations, which requires less detailed knowledge. This method is inspired by [31, 59] and simply requires a user to select one or more nodes in the taxonomy. If a node u that is not a leaf is selected, then all locations corresponding to leaves in the subtree rooted at u will be considered as sensitive. If the selected node u is a leaf, then its corresponding location will be considered as sensitive. Such taxonomies already exist for location-based data, and they can also be automatically constructed based on machine learning techniques [55]. For example, in the Foursquare taxonomy (see Sect. 5), there is an aggregate category (internal node) “Medical center” which contains more specific categories (leaves) “Hospital,” “Rehab center,” etc.
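The taxonomy-based selection can be sketched as a subtree expansion. The snippet below is only an illustration: the dictionary representation, the function name `sensitive_leaves`, and the toy taxonomy (including the "Nightlife" branch) are hypothetical and do not reproduce the actual Foursquare taxonomy.

```python
def sensitive_leaves(taxonomy, selected):
    """Expand the selected taxonomy nodes into the set of sensitive leaves.

    `taxonomy` maps each internal node to the list of its children; any
    node that is not a key is treated as a leaf (i.e., a location).
    """
    leaves = set()
    stack = list(selected)
    while stack:
        node = stack.pop()
        children = taxonomy.get(node)
        if children:                 # internal node: descend into subtree
            stack.extend(children)
        else:                        # leaf: the location itself is sensitive
            leaves.add(node)
    return leaves

# Toy taxonomy (hypothetical): two internal nodes with two leaves each.
toy_taxonomy = {
    "Medical center": ["Hospital", "Rehab center"],
    "Nightlife": ["Bar", "Casino"],
}
```

Selecting the internal node "Medical center" marks both of its leaves as sensitive, whereas selecting the leaf "Bar" marks only that location.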

Theorem 3.1

The \({ SLH}\) problem is weakly NP-hard.

Proof

See Appendix (Sect. A.1). \(\square \)

Clearly, the \({ SLH}\) problem seeks to produce a sanitized histogram \(H'\) with the same size as H. As discussed in the Introduction, this allows preserving statistics that depend on the size of the histogram, which are important in location-based applications, such as location recommendation. However, it is also possible to require the sanitized histogram \(H'\) to have a given size instead (e.g., when an application requires a histogram to have a certain number of location counts, or in pathological cases where redistribution leads to undesirable/implausible histograms). This leads to a variation of the \({ SLH}\) problem, referred to as \(SLH_{r}\), which requires redistributing \(r\ge 0\) counts of sensitive locations into the bins corresponding to nonsensitive locations. Note the following choices for r in \(SLH_{r}\): (I) For \(r=0\), the \(SLH_{r}\) problem requires constructing a sanitized histogram where each sensitive location has count 0 and each nonsensitive location has the same count as in H. Such a histogram is trivial to produce, by simply replacing the count of each sensitive location with 0. (II) For \(r=\sum _{L_i\in L'}f(L_i)\) (i.e., equal to the total count of sensitive locations), \(SLH_{r}\) becomes equivalent to the \({ SLH}\) problem. (III) For \(r>\sum _{L_i\in L'}f(L_i)\), the \(SLH_{r}\) problem requires constructing a sanitized histogram with larger size than H. As we will explain in Sect. 4.1, it is straightforward to optimally solve \(SLH_{r}\) based on our \({ LHO}\) algorithm.

3.2.1 Solutions to the \({ SLH}\) optimization problem satisfy the desired privacy property

The adversary cannot distinguish between a user A who has only visited nonsensitive locations and thus submits a non-sanitized histogram \(H_A\), and a user B who has visited some sensitive locations and the algorithm has produced a sanitized histogram \(H_B'\) that is identical to \(H_A\). This is because every possible sanitized histogram that the \({ LHO}\) algorithm can output is a valid histogram that could have legitimately been produced by a user. Note that, if there are histograms that cannot be produced by a legitimate user, \({ LHO}\) can be trivially adapted to never output such histograms. This adaptation is easy because all histograms are encoded as paths in a graph, so illegitimate histograms are also paths in the graph, referred to as illegitimate paths, and these histograms can be avoided by simply changing the shortest path finding algorithm to an algorithm that finds a shortest path which is not contained in a given subset of illegitimate paths [44].

3.3 The Target Resemblance problem: adversary model and formal definition

As discussed in the Introduction and in Sect. 2, for the Target Resemblance (\({ TR}\)) privacy notion the user specifies a target histogram \(H''\) to resemble, a quality parameter \(\epsilon \) and a privacy parameter c. The objective of the \({ TR}\) optimization problem is to create a sanitized histogram \(H'\) that is as similar as possible to \(H''\), subject to the quality constraint \(d_q(H,H')\le \epsilon \). The privacy distance function that quantifies the notion of similarity is denoted \(d_p(H',H'')\). If \(d_p(H',H'') > c\), then \(H'\) is not acceptable, because it is not similar enough to the target.

The function \(d_p(H',H'')\) is nonnegative, and it must decompose as a sum over bins, i.e., there must be a function p such that \(d_p(H', H'') = \sum _{i \in [1, n]} p(H'[i],H''[i])\), using zeros to fill in missing location counts. In \({ TR}\), privacy is maximum when \(H'=H''\) (\(d_p(H',H'') = 0\)), because there is no better resemblance than being identical. Any function with these properties would be suitable as \(d_p\) (e.g., \({ JS}\)-divergence, or \(L_2\)-distance). We use \({ JS}\)-divergence as \(d_p\) in our experiments (see Sect. 5).

We can formulate the adversary model and the desired privacy property for this problem as follows: the adversary knows (I) the histogram \(H'\) that the user submits, (II) a target histogram \(H''\), (III) a privacy distance function \(d_p()\), and (IV) a privacy parameter c.

Upon receiving \(H'\), the adversary compares it to the target \(H''\) in order to profile the user. For example, if an adversary wants to determine whether the user is a member of a particular ethnic/religious/social group, the target histogram is the histogram of a typical member of that group. Formally, the adversary makes this determination by comparing \(d_p(H', H'')\) to c, i.e., by comparing the privacy distance between the user’s submitted histogram \(H'\) and \(H''\) to the privacy parameter c. If \(d_p(H', H'') \le c\), the adversary concludes that the user is a member of the group, otherwise they conclude that the user is not a member of the group. The adversary has no other background knowledge. In particular, the adversary does not know whether the user submitted their true histogram or the user submitted a modified histogram aiming to resemble a particular target histogram. The adversary succeeds if they conclude that the user is not a member of the group, i.e., \(d_p(H', H'') > c\).

The desired privacy property is the negation of the adversary’s success criterion. In TR, the desired privacy property is \(d_p(H', H'') \le c\).

We formally define the corresponding optimization problem as follows:

Problem 3.2

(Target Resemblance (\({ TR}\))) Given two histograms \(H, H'' \in {\mathcal {H}}_{n,N}\), a privacy distance function \(d_p()\), a privacy parameter c, a quality distance function \(d_q()\), and maximum quality loss threshold \(\epsilon \ge 0\), construct a sanitized histogram \(H' \in {\mathcal {H}}_{n,N}\) that minimizes \(d_p(H',H'')\) subject to \(d_q(H,H')\le \epsilon \).

If the resulting \(H'\) is such that \(d_p(H', H'') > c\), then it is impossible to achieve both the desired privacy property and the desired quality constraint.

Intuitively, the \({ TR}\) problem requires constructing a sanitized histogram \(H'\) of the same length and size as H and \(H''\) that offers the best possible privacy by being as similar as possible to the target histogram \(H''\) according to \(d_p\), while incurring a quality loss of at most \(\epsilon \) according to \(d_q\).

The function \(d_q\) is selected by the location-based application provider (recipient of the sanitized histogram) and is provided to the user together with an intuitive explanation of what different values of \(d_q()\) mean for quality. For example, \(d_q() \ge 0.8\) means “very low quality,” \(0.6 \le d_q() \le 0.8\) means “low quality” etc., where “quality” refers to the quality of the application response (e.g., recommendation) that the user receives. Then, in the spirit of user-centric (or personalized) privacy [19, 51], the user uses the above explanation by the provider to choose a value of \(\epsilon \) that corresponds to his/her tolerance for quality loss.

The problem requires the user to specify the target histogram \(H''\). However, some users may not possess sufficient knowledge to perform this task, even though they want to resemble a person with certain characteristics (e.g., a wealthy person). In these cases, \(H''\) can be constructed as follows. The user chooses a target probability distribution \(h''\) from a repository of probability distributions that are constructed by domain experts and labeled accordingly (e.g., a distribution corresponding to a “wealthy” profile, a “tourist” profile, a “healthy person” profile [22, 56]), in the same way that experts compile, e.g., adblock filters (lists of URLs to block) or lists of virus signatures for antivirus software. To choose one of these profiles, the user looks for a label that they want to resemble. This setup is very similar to other papers in the literature [1, 13].

Note that the distribution \(h''\) may be defined on a different set of locations from the user’s histogram H, in which case both are expanded to cover all locations in either \(h''\) or H, with zero values for the new locations. Then, each entry \(h''[i]\) is multiplied by the size N of the user’s histogram H to create the target histogram \(H''[i]\) (see Sect. 4.2). So, in effect, \(H''\) is the expected histogram by a hypothetical user that picks N locations from the distribution \(h''\). By the above construction, \(H''\) and H are of the same length n and size N, but note that \(H''\) may not have integer counts, because \(H''[i] = N \cdot h''[i]\) is not necessarily an integer. Strictly speaking, this violates the requirement of histograms to have integer counts, but that is not a problem for our methods, because the privacy distance functions do not need integer arguments. However, we do require the histogram \(H'\) that the algorithms output to have integer counts.
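The construction of \(H''\) from \(h''\) can be sketched as follows. The dictionary-based representation, the function name `build_target`, and the example location names are assumptions made for illustration only.

```python
def build_target(hist, target_dist):
    """Align a user histogram with a target distribution and scale the latter.

    `hist` maps locations to integer counts (the histogram H) and
    `target_dist` maps locations to probabilities (the distribution h'').
    Both are expanded to the union of their locations, with zeros for the
    new entries, and each h''[i] is multiplied by the size N of H.
    """
    locations = sorted(set(hist) | set(target_dist))
    size = sum(hist.values())                      # N
    H = [hist.get(loc, 0) for loc in locations]
    H_target = [size * target_dist.get(loc, 0.0) for loc in locations]
    return locations, H, H_target

# Hypothetical example: the target profile mentions a location ("opera")
# that the user never visited, and omits one ("cafe") that the user did.
locs, H, H_target = build_target({"cafe": 3, "gym": 1},
                                 {"gym": 0.5, "opera": 0.5})
```

Here `H` becomes `[3, 1, 0]` and `H_target` becomes `[0.0, 2.0, 2.0]` over the locations `['cafe', 'gym', 'opera']`; in general the entries of `H_target` need not be integers.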

Theorem 3.2

The \({ TR}\) problem is weakly NP-hard.

Proof

See Appendix (Sect. A.2). \(\square \)

Clearly, the \({ TR}\) problem requires constructing a sanitized histogram \(H'\) with the same size as H and \(H''\). That is, it assumes that the desirable target histogram \(H''\) has the same counts as H, but these counts are distributed differently from H. However, it is also possible to relax this assumption. This leads to a variation of the \({ TR}\) problem, referred to as \({ TR}\)\(_{|H''|_1}\), which instead requires the sanitized histogram \(H'\) to only have the same size as \(H''\), while it can be different from the size of H. It is straightforward to optimally (resp., heuristically) solve \({ TR}\)\(_{|H''|_1}\) based on our \({ RO}\) algorithm (resp., based on our \({ RH}\) heuristic) (see Sect. 4.2).

3.3.1 Solutions to the TR optimization problem satisfy the desired privacy property

The TR problem tries to minimize \(d_p(H', H'')\), while satisfying the quality constraint \(d_q(H, H') \le \epsilon \). Of course, a particular choice of \(\epsilon \) affects privacy. If \(\epsilon \) is low, an algorithm for the \({ TR}\) problem may output an \(H'\) that is the same or very similar to H, because all histograms that satisfy the specified quality constraint are close to H. Then, the user has to decide whether this \(H'\) is safe to release.

Given the privacy parameter c, it is not safe to release \(H'\) when \(d_p(H',H'') > c\). If \(d_p(H',H'') > c\), the user will decide not to release any histogram at all. Alternatively, the user may want to re-run the algorithm with a larger \(\epsilon \), i.e., to sacrifice more quality in order to achieve the privacy requirement.

The user’s decision may depend on the intuitive meaning of the function used for \(d_p\). For example, if \(d_p\) is Pearson \(\chi ^2\) and the target \(H''\) models a “wealthy” user, then \(d_p(H',H'')\) quantifies how much more likely it is that \(H'\) has been produced by a user who follows the “wealthy” profile compared to any other profile (see Footnote 2). Thus, if this likelihood ratio exceeds c, then the user may not want to release that \(H'\).

It is also trivial to exclude solutions with \(d_p(H',H'')>c\) by modifying our methods to disregard such solutions and terminate if no solution exists. In conclusion, the user either submits a histogram that satisfies the privacy property, or nothing at all.

3.4 The Target Avoidance problem

As mentioned above, Target Avoidance (\({ TA}\)) is a variant of the Target Resemblance (\({ TR}\)) problem, which we briefly discuss below. The algorithms for the \({ TA}\) problem are very similar to those for \({ TR}\) and are omitted; for details see [33].

Problem 3.3

(Target Avoidance (\({ TA}\))) Given two histograms \(H, H'' \in {\mathcal {H}}_{n,N}\), a privacy distance function \(d_p()\), a privacy parameter c, a quality distance function \(d_q()\), and maximum quality loss threshold \(\epsilon \ge 0\), construct a sanitized histogram \(H' \in {\mathcal {H}}_{n,N}\) that maximizes \(d_p(H',H'')\) subject to \(d_q(H,H')\le \epsilon \).

If the resulting \(H'\) is such that \(d_p(H', H'') < c\), then it is impossible to achieve both the desired privacy property and the desired quality constraint.

Intuitively, the \({ TA}\) problem requires constructing a sanitized histogram \(H'\) of the same length and size as H and \(H''\). The sanitized histogram must offer the best possible privacy by being as dissimilar as possible to the target histogram \(H''\) according to \(d_p\), while incurring a quality loss of at most \(\epsilon \) according to \(d_q\). The threshold \(\epsilon \) and target histogram \(H''\) are specified by the user based on their preferences. For example, the user can set \(H''\) to H, in order to avoid H itself, or to a part of H that contains the locations that characterize an undesirable profile (e.g., frequent visits to airports) or are frequented by a certain ethnic minority (which may help infer that an individual belongs to the minority). The user could also choose \(H''\) with the help of domain experts, as in the \({ TR}\) problem.

In terms of an adversary model, the adversary has the same knowledge as in \({ TR}\) and they succeed if \(d_p(H', H'') < c\). If the algorithm does not produce an \(H'\) such that \(d_p(H', H'') \ge c\), then the user can either not submit any histogram at all, or re-run the algorithm with a larger \(\epsilon \). The proof that the \({ TA}\) problem leads to a solution satisfying the desired privacy property is similar to that for \({ TR}\) (omitted).

Theorem 3.3

The \({ TA}\) problem is weakly NP-hard.

Proof

Omitted (see [33]). \(\square \)

The \({ TA}\) problem is very similar to the \({ TR}\) problem. This is established through a reduction from \({ TA}\) to \({ TR}\) that is given in Appendix (Sect. A.3). There is also a variation of \({ TA}\), referred to as \({ TA}\)\(_{|H''|_1}\), which requires the sanitized histogram \(H'\) to have the same size as \(H''\), but not necessarily as H. Again, our methods can easily deal with this variation.

4 Algorithms

Since the \({ SLH}\), \({ TR}\), and \({ TA}\) problems are weakly NP-hard, it is possible to design pseudopolynomial algorithms to optimally solve them. Such algorithms run in polynomial time in the numerical value of the input [41]. We present optimal algorithms based on shortest path problems for the \({ SLH}\) and \({ TR}\) problems. In addition, we present a heuristic algorithm for the \({ TR}\) problem, which is more efficient than the optimal algorithm by two orders of magnitude and finds solutions of comparable quality. Furthermore, we explain how our methods can deal with the variations \(SLH_{r}\) and \(TR_{|H''|_1}\) of the \({ SLH}\) and \({ TR}\) problems, respectively.

4.1 \({ LHO}\): an optimal algorithm for \({ SLH}\)

This section presents Location Hiding Optimal (\({ LHO}\)), which optimally solves the \({ SLH}\) problem. Before presenting \({ LHO}\), as motivation, we consider a simple algorithm which distributes the counts of the sensitive location(s) to the nonsensitive bin(s) proportionally to the counts of the nonsensitive bins. Thus, it aims to construct an \(H'\) by initializing it to H and then increasing the count of each nonsensitive bin \(H'[i]\) by \(x[i]=H[i]\cdot \frac{\sum _{i\in L'}H[i]}{\sum _{i \in L{\setminus } L'}H[i]}=H[i]\cdot \frac{K}{N-K}\), while assigning 0 to each sensitive bin. While intuitive, this algorithm fails to construct an \(H'\), for a given histogram H and distance function \(d_q\), when x[i] is not an integer, and also it may lead to solutions with large \(d_q(H,H')\) (i.e., low data utility), as it does not take into account the input distance function \(d_q\).
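To see why the proportional scheme can fail, the sketch below evaluates the increments \(x[i]=H[i]\cdot \frac{K}{N-K}\) exactly, using rational arithmetic. The function name `proportional_increments` is ours, and the input is the histogram of Example 4.1 with its last two bins treated as sensitive.

```python
from fractions import Fraction

def proportional_increments(hist, sensitive):
    """Exact increments x[i] = H[i] * K / (N - K) for the nonsensitive bins.

    `sensitive` is a set of bin indices. The function assumes that at
    least one nonsensitive bin has a nonzero count, so that N - K > 0.
    """
    K = sum(hist[i] for i in sensitive)      # total sensitive count
    rest = sum(hist) - K                     # N - K
    return {i: Fraction(hist[i] * K, rest)
            for i in range(len(hist)) if i not in sensitive}

x = proportional_increments([7, 2, 3, 2, 13, 12, 8, 3], {6, 7})
```

For this input, \(K=11\) and \(N-K=39\), so the first increment is \(77/39\) and none of the six increments is an integer, even though they sum to 11. The simple algorithm therefore cannot produce a valid \(H'\) here without an additional rounding rule.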

We now discuss the \({ LHO}\) algorithm. Without loss of generality, we assume that the nonsensitive locations correspond to the first \(n-|L'|\) bins of the original histogram \(H=(f(L_1),\ldots ,f(L_n))\), while the remaining \(|L'|\) bins correspond to the sensitive locations. The total count of sensitive locations in H is \(K=\sum _{L_i\in L'}f(L_i)\). \({ LHO}\) must move (redistribute) these counts into the nonsensitive bins, while minimizing the quality error \(d_q()\).

The \({ LHO}\) algorithm is founded on the following observation: the optimal way of redistributing counts to each nonsensitive bin of \(H'\) corresponds to a shortest path between two specific nodes of a search space graph \(G_{{ LHO}}(V,E)\), where V and E are the set of nodes and set of edges of \(G_{{ LHO}}\), respectively. In the following, we discuss the construction of \(G_{{ LHO}}\) and the correspondence between this shortest path and the solution to the \({ SLH}\) problem. Then, we discuss the \({ LHO}\) algorithm.

Fig. 4 Search space graph \(G_{{ LHO}}\) for the Sensitive Location Hiding problem. Layer 0 contains the node (0, 0) and each of the layers 1 to \(n-|L'|\) contains \(K+1\) nodes. Each edge connects nodes of consecutive layers and has a weight equal to the error \(E_{i+1,k}\), where \(i+1\) is the layer of the end node of the edge and k is the count of sensitive locations. \(E_{i+1, k}\) represents the impact of redistributing (i.e., adding) k counts into bin \(i+1\) of the sanitized histogram \(H'\), which is initialized to the original histogram H. That is, \(E_{i+1,k}=q(H[i+1], H[i+1]+k)\). The missing nodes and edges are denoted with “\(\ldots \)”.

\(G_{{ LHO}}\) is a multipartite directed acyclic graph (DAG) (see Fig. 4) such that:

It contains \(n-|L'|+1\) layers of nodes. Layer 0 comprises a single node, and layers \(1,\ldots , n-|L'|\) comprise \(K+1\) nodes each. Each layer \(1,\ldots , n-|L'|\) corresponds to a nonsensitive bin.

The single node in layer 0 is labeled (0, 0), and each node in layer \(i\in [1,n-|L'|]\) is labeled (i, j), where j denotes the redistribution (i.e., addition) of j counts to bins 1 up to and including i of the sanitized histogram. We may refer to nodes of \(G_{{ LHO}}\) using their labels.

There is an edge \(((i,j), (i+1, j+k))\) from node (i, j) to node \((i+1, j+k)\), for each \(i\in [0, n-|L'|-1]\), where \(k\ge 0\), \(j+k\le K\). That is, each node labeled (i, j) is connected to every node in the following layer \(i+1\) that corresponds to a count of at least j.

Each edge \(((i,j), (i+1, j+k))\) is associated with a weight equal to the error \(E_{i+1, k} =q(H[i+1], H[i+1] + k)\). The error \(E_{i+1, k}\) quantifies the impact on quality that is incurred by redistributing (i.e., adding) k counts into bin \(i+1\).

Let P be a path comprised of nodes (0, 0), \((1,k_1)\), \(\ldots \), \((n-|L'|,k_{n-|L'|})\) of \(G_{{ LHO}}\). The properties below easily follow from the construction of \(G_{{ LHO}}\):

- (I) The path P corresponds to an addition of \(k_{i}-k_{i-1}\) counts to the i-th bin of the histogram, for each \(i\in [1, n-|L'|]\), where \(k_0=0\).

- (II) The length of P is equal to the total weight \(E_{1, k_1-k_0}+\ldots +E_{n-|L'|,k_{n-|L'|}-k_{n-|L'|-1}}\) of the edges in P. This total weight is the total quality loss incurred by the allocation corresponding to P.

Thus, the path P corresponds to a sanitized histogram \(H'\) whose first \(n-|L'|\) bins have counts \(H[i] + (k_i - k_{i-1}), i = 1, \ldots , n-|L'|\), and \(d_q(H, H')\) is equal to \(\sum _{i=1}^{n-|L'|}q(H[i], H[i] + k_i - k_{i-1})\). For example, the path comprised of nodes \((0,0), (1,K), \ldots , (n-|L'|,K)\) in Fig. 4 corresponds to a sanitized histogram \(H'\) in which all K sensitive counts have been moved to the first bin. The quality loss in this case is just \(d_q(H, H') = q(H[1], H[1]+K)\), as all other bins have the same counts in both H and \(H'\).

Conversely, each possible allocation of the K sensitive counts into nonsensitive bins corresponds to a path between the nodes (0, 0) and \((n-|L'|, K)\) of \(G_{{ LHO}}\), which represents a feasible solution to the \({ SLH}\) problem. Therefore, the shortest path between the nodes (0, 0) and \((n-|L'|, K)\) of \(G_{{ LHO}}\) (i.e., the path with the minimum length \(E_{1, k_1-k_0}+\ldots +E_{n-|L'|,K-k_{n-|L'|-1}}\); ties are broken arbitrarily) represents a sanitized histogram \(H'=(H[1]+(k_1-k_0), \ldots , H[n-|L'|]+(K-k_{n-|L'|-1}), 0,\ldots , 0)\), which is the optimal solution to \({ SLH}\). This is because \(H'\) has minimum \(d_q(H,H')\), the same size as H, and a zero count for each sensitive location.

We now present the pseudocode of the \({ LHO}\) algorithm. In step 1, the algorithm constructs the search space graph \(G_{{ LHO}}\). In step 2, the algorithm finds a shortest path between the nodes (0, 0) and \((n-|L'|,K)\) of \(G_{{ LHO}}\). In step 3, the sanitized histogram \(H'\) corresponding to the shortest path (i.e., the optimal solution to the \({ SLH}\) problem) is created and, last, in step 4, \(H'\) is returned.
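The four steps can be sketched in Python as below. This is a minimal illustration rather than the authors' pseudocode: instead of materializing \(G_{{ LHO}}\), it relaxes the edges layer by layer, which yields the same shortest path because the graph is a layered DAG. The names `js_term` and `lho`, the index-set representation of \(L'\), and the per-bin \({ JS}\) cost with normalization by 2N are assumptions of this sketch.

```python
import math

def js_term(a, b, size):
    """Per-bin JS cost q(a, b) for two histograms of equal size (assumed form)."""
    mid = (a + b) / 2
    cost = 0.0
    for x in (a, b):
        if x > 0:                      # convention: 0 * log2(0) = 0
            cost += x * math.log2(x / mid)
    return cost / (2 * size)

def lho(hist, sensitive, q=None):
    """Move all sensitive counts into nonsensitive bins at minimum total cost.

    `sensitive` is a set of bin indices; `q(a, b)` is the per-bin quality
    cost and defaults to the JS term above. Returns (H', d_q(H, H')).
    """
    size = sum(hist)
    if q is None:
        q = lambda a, b: js_term(a, b, size)
    keep = [i for i in range(len(hist)) if i not in sensitive]
    if not keep:
        raise ValueError("no nonsensitive bin can receive the counts")
    K = sum(hist[i] for i in sensitive)
    # dist[j]: length of a shortest path from (0, 0) to node (layer, j).
    dist = [0.0] + [math.inf] * K      # layer 0 only contains node (0, 0)
    moves = []                         # moves[layer][j]: counts added on the best edge
    for i in keep:
        nxt = [math.inf] * (K + 1)
        arg = [0] * (K + 1)
        for j in range(K + 1):
            if dist[j] == math.inf:
                continue
            for k in range(K - j + 1):             # edge (layer, j) -> (layer + 1, j + k)
                cand = dist[j] + q(hist[i], hist[i] + k)
                if cand < nxt[j + k]:
                    nxt[j + k], arg[j + k] = cand, k
        dist = nxt
        moves.append(arg)
    # Walk back from the last layer's node with j = K to recover the histogram.
    out = [0] * len(hist)              # sensitive bins stay at zero
    j = K
    for i, arg in zip(reversed(keep), reversed(moves)):
        out[i] = hist[i] + arg[j]
        j -= arg[j]
    return out, dist[K]
```

Under these assumptions, calling `lho([7, 2, 3, 2, 13, 12, 8, 3], {6, 7})` returns the sanitized histogram `[9, 3, 4, 3, 16, 15, 0, 0]` reported in Example 4.1. The sketch runs in \(O\left( (n-|L'|)\cdot K^2\right) \) time and keeps only one layer of distances in memory at a time.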

Example 4.1

\({ LHO}\) is applied to the histogram \(H=(7,2,3,2,13,12,8,3)\) in Fig. 1a. The set of sensitive locations \(L'\) contains the locations g and h with counts 8 and 3, respectively, and the quality distance function \(d_q\) is \({ JS}\)-divergence. In step 1, the algorithm constructs the search space graph in Fig. 5. The graph has \(n-|L'|+1=7\) layers of nodes, where \(n=8\) is the length of H and \(|L'|=2\) is the number of sensitive locations. Layer 0 contains the node (0, 0) and each other layer contains \(K+1=12\) nodes, where \(K=11\) is the total count of sensitive locations in H. Each node in layers \(1, \ldots , 6\) is labeled (i, j); i denotes the layer of the node and j denotes the counts of sensitive locations that are redistributed into bins \(1, \ldots , i\). For example, the node (6, 11) denotes that all 11 counts of the sensitive locations are added into bins \(1,\ldots , 6\). In addition, there is an edge with weight \(E_{i+1,k}\) between each node (i, j) and every node \((i+1, j+k)\), for each \(k\in [0, K-j]\). The weight \(E_{i+1,k}\) quantifies the increase to \({ JS}\)-divergence incurred by redistributing (i.e., adding) k counts of sensitive locations into bin \(i+1\). For example, the node (0, 0) is connected to the nodes \((1,0), \ldots , (1,11)\), and the edge ((0, 0), (1, 2)) has weight \(E_{1,2}\approx 1.8\times 10^{-3}\), because adding 2 counts into the first bin increases \({ JS}\)-divergence by approximately \(1.8\times 10^{-3}\). In step 2, \({ LHO}\) finds the shortest path from the node (0, 0) to the node (6, 11), shown in Fig. 5, and in step 3 it constructs the sanitized histogram \(H'=(9,3,4,3,16,15,0,0)\) (see Fig. 1b) that corresponds to the shortest path. Note that j in the label (i, j) of each node in the shortest path corresponds to the counts of sensitive locations that are added into bins \(1, \ldots , i\) in \(H'\). Last, in step 4, \(H'\) is returned. \(\square \)
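The edge weight quoted in the example can be checked by hand. The following calculation assumes that q is the per-bin term of \({ JS}\)-divergence normalized by 2N, with \(N=50\) being the size of H:

\[ E_{1,2} = q(7, 9) = \frac{1}{2\cdot 50}\left( 7\cdot \log _2\frac{14}{16} + 9\cdot \log _2\frac{18}{16}\right) \approx \frac{-1.3485 + 1.5293}{100} \approx 1.8\times 10^{-3} \]

This agrees with the weight of the edge ((0, 0), (1, 2)) stated above.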

Fig. 5 Search space graph \(G_{{ LHO}}\) for Example 4.1 (the missing nodes and edges are denoted with “\(\ldots \)”), and shortest path along with its corresponding weights.

The time complexity of \({ LHO}\) is \(O\left( (n-|L'|)\cdot K^2+S\right) \), where \((n-|L'|)\cdot K^2\) is the cost of constructing \(G_{{ LHO}}\) (step 1) and S is the cost of finding the shortest path (step 2). Constructing \(G_{{ LHO}}\) takes \(O\left( (n-|L'|) \cdot K^2\right) \) time, because \(G_{{ LHO}}\) contains \(O\left( (n-|L'|)\cdot K\right) \) nodes and \(O\left( K+1+(n-|L'|-1)\cdot \binom{K}{2}\right) = O\left( (n-|L'|)\cdot K^2\right) \) edges, and the computation of each edge weight \(E_{i+1,k}\) takes O(1) time, because it is computed by accessing a single pair of bins from H and \(H'\). The cost S is determined by the shortest path algorithm (e.g., it is \(O\left( (n-|L'|)\cdot K^2\cdot \log \left( (n-|L'|)\cdot K^2\right) \right) \) for Dijkstra’s algorithm with binary heap [47]).

Note, the variation \(SLH_r\) in Sect. 3.2 can be optimally solved by applying \({ LHO}\) with \(K=r\).

4.2 Optimal algorithm for Target Resemblance

In this section, we model and solve the \({ TR}\) problem as a constrained shortest path problem on a specially constructed search space graph \(G_{{ TR}}\). It follows immediately that the \({ TA}\) problem can be seen as a longest path problem on the same graph. Because the graph is a DAG, computing longest and shortest paths has the same complexity [47]: by visiting the graph nodes in breadth-first search order, we can simply keep track of the shortest (or longest) path to each node. We can even solve the two problems in one pass. We focus on the \({ TR}\) problem, which we solve optimally with the Resemblance Optimal (\({ RO}\)) algorithm.

In the following, we discuss the construction of \(G_{{ TR}}\) and then provide the pseudocode of \({ RO}\).

From the histogram H and the distance functions \(d_p\) and \(d_q\), we construct a multipartite DAG \(G_{{ TR}}=(V,E)\), as follows (see also Fig. 6):

There are \(n\cdot (N + 1) + 1\) nodes in V, where n and N are the length and the size of the histogram H, respectively.

The nodes are arranged in layers \(0, 1,\ldots , n\), with layer 0 having a single node and layers \(1,\ldots ,n\) having \(N+1\) nodes each. Layer \(i \in [1, n]\) corresponds to bin i (location \(L_i\)) in the histogram. Node \(j \in [0, N]\) in layer i corresponds to the allocation of a total of j frequency counts to histogram bins 1 up to and including i.

The single node in layer 0 is labeled (0, 0), and each node j in every other layer i is labeled (i, j). We may refer to nodes of \(G_{{ TR}}\) using their labels.

The edges in E go from each node (i, j) to each node \((i+1, j+k), k\ge 0, j+k \le N\), i.e., to each node in the following layer that has a frequency count at least equal to j.

The weight of an edge from (i, j) to \((i+1, j+k)\) is the pair \( (p_{\mathrm{err}}, q_{\mathrm{err}})^{i+1,k}\) of the privacy and quality errors of allocating exactly k counts to bin \(i+1\) of the sanitized histogram: \(p_{\mathrm{err}} = p(k,H''[i+1]), q_{\mathrm{err}} = q(H[i+1], k)\). The p-length of a path is the sum of its \(p_{\mathrm{err}}\) weights. We will refer to the path with the minimum p-length as the p-shortest path. Similarly, the q-length of a path is the sum of its \(q_{\mathrm{err}}\) weights.

Fig. 6 Search space graph \(G_{{ TR}}\) for the Target Resemblance problem. Layer 0 is an auxiliary layer that just contains the node (0, 0). Layer \(i = 1, \ldots , n\) corresponds to bin i of the sanitized histogram, and node (i, j) corresponds to allocating \(j = 0, \ldots , N\) counts to bins 1 up to and including i. A path from (0, 0) to (n, N) completely defines an allocation of N counts to n bins. The weight of the edge from (i, j) to \((i+1, j+k)\) is the pair of privacy and quality errors of allocating exactly k counts to bin \(i+1\). As these errors are additive, the admissible paths are those whose total q-length is at most the threshold \(\epsilon \). Among them, the p-shortest path from (0, 0) to (n, N) corresponds to the optimal solution to \({ TR}\).

At this point, note two important differences between the edge weights of \(G_{{ TR}}\) and \(G_{{ LHO}}\) (Sect. 4.1): first, and most obvious, the edge weights in \(G_{{ TR}}\) are pairs of (privacy error, quality error), whereas in \(G_{{ LHO}}\) the weights are quality errors. Second, in \(G_{{ TR}}\) the weight of the edge from (i, j) to \((i+1, j+k)\) corresponds to setting \(H'[i+1]\) exactly equal to k, whereas in \(G_{{ LHO}}\) that edge weight would correspond to setting \(H'[i+1]\) equal to \(H[i+1] + k\).

From the construction of \(G_{{ TR}}\), it follows that there is a \(1-1\) correspondence between a sanitized histogram \(H' \in {\mathcal {H}}_{n,N}\) and a path from (0, 0) to (n, N) in \(G_{{ TR}}\). Therefore, to solve the \({ TR}\) problem, we need to find the path from (0, 0) to (n, N) with minimum p-length among the paths whose q-length is at most \(\epsilon \). Then, it is straightforward to construct the histogram from the path.

We now provide the pseudocode of the \({ RO}\) algorithm. We assume that the preprocessing needed to construct \(H''\) from \(h''\) is done before the actual algorithm runs, and also H and \(H''\) have been expanded to be defined on the same set of locations, if needed (see Sect. 3.3 for details on \(h''\)). Also, for the moment, we assume that \(d_q\) takes nonnegative integer values.

In step 1, \({ RO}\) constructs the graph \(G_{{ TR}}\). In steps 2–6, the algorithm iterates over each node v of the graph and associates with it a vector \({\mathcal {V}}_v\), indexed by all possible values of \(d_q\). The elements of \({\mathcal {V}}_v\) are initialized to 0 for node (0, 0), and to \(\infty \) for any other node of \(G_{{ TR}}\). Next, in steps 7–9, \({ RO}\) iterates over the nodes of \(G_{{ TR}}\) in increasing lexicographic order, starting from node (1, 0), and for each node v it updates all the elements of \({\mathcal {V}}_v\). Each element \({\mathcal {V}}_v[k]\) is updated using the following dynamic programming equation:
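A recurrence consistent with this description can be written in the edge-weight notation of Example 4.2, where \((p_{\mathrm{err}}, q_{\mathrm{err}})^{i,c}\) is the weight of an edge that allocates c counts to bin i. The form below is a sketch under that assumed notation, and any entry with a negative index is taken to be \(\infty \):

\[ {\mathcal {V}}_{(i,j)}[k] \;=\; \min _{0 \le j' \le j} \left\{ {\mathcal {V}}_{(i-1,j')}\left[ k - q_{\mathrm{err}}^{\,i,\,j-j'} \right] + p_{\mathrm{err}}^{\,i,\,j-j'} \right\} \]

For \(i = 1\), only \(j' = 0\) contributes, because layer 0 contains the single node (0, 0).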

The element \({\mathcal {V}}_v[k]\) is equal to the p-length of the p-shortest path from (0, 0) to node v with q-length exactly equal to k. Thus, for \(v = (n, N)\) and every \(k \le \epsilon \), this path is a feasible solution to the \({ TR}\) problem, and so the optimal solution to \({ TR}\) is the p-shortest such path from (0, 0) to (n, N) (i.e., the path corresponding to the minimum element of the vector \({\mathcal {V}}_{(n, N)}\) among the entries with index \(k \le \epsilon \)). The nodes of this path are found in step 10, and its corresponding histogram \(H'\) is constructed in step 11.
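The steps above can be sketched in Python for the integer-valued case. This is a minimal illustration rather than the pseudocode of \({ RO}\): the function `ro_sketch`, the callables `p_cost` and `q_cost`, and the per-bin absolute differences used in the usage lines are all assumptions made for the example. The sketch also initializes only the entry of node (0, 0) with index 0, so that every stored entry refers to a q-length of exactly k.

```python
import math

def ro_sketch(H, H_target, eps, p_cost, q_cost):
    """Constrained shortest path on the layered graph, for integer q-costs.

    p_cost(i, k) / q_cost(i, k): privacy / quality error of putting k counts
    into bin i (0-based). Returns the sanitized histogram, or None if no
    path has q-length <= eps.
    """
    n, N = len(H), sum(H)
    INF = math.inf
    # V[i][j][q]: p-length of the p-shortest path (0,0) -> (i,j) with q-length q.
    V = [[[INF] * (eps + 1) for _ in range(N + 1)] for _ in range(n + 1)]
    V[0][0][0] = 0
    back = {}  # (i, j, q) -> number of counts placed in bin i on the best path
    for i in range(1, n + 1):
        for j in range(N + 1):
            for k in range(j + 1):  # incoming edge from node (i-1, j-k)
                pe, qe = p_cost(i - 1, k), q_cost(i - 1, k)
                for q in range(qe, eps + 1):
                    cand = V[i - 1][j - k][q - qe] + pe
                    if cand < V[i][j][q]:
                        V[i][j][q] = cand
                        back[(i, j, q)] = k
    # Best admissible entry of the vector at node (n, N).
    best_q = min(range(eps + 1), key=lambda q: V[n][N][q])
    if V[n][N][best_q] == INF:
        return None
    # Walk the back-pointers to rebuild the histogram.
    H_prime, j, q = [0] * n, N, best_q
    for i in range(n, 0, -1):
        k = back[(i, j, q)]
        H_prime[i - 1] = k
        q -= q_cost(i - 1, k)
        j -= k
    return H_prime

# Toy usage with per-bin absolute differences as stand-in integer costs.
H, H_target = [3, 1, 0], [1, 1, 2]
p_cost = lambda i, k: abs(k - H_target[i])
q_cost = lambda i, k: abs(k - H[i])
print(ro_sketch(H, H_target, 2, p_cost, q_cost))
```

Raising the budget in the usage lines lets the result move closer to the target, and a budget of 0 leaves the histogram unchanged.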

We now consider the general case in which the q-length of a path from (0, 0) to (n, N) is not necessarily an integer. We first show, in Theorem 4.1 below, that the q-length of such a path can take only a polynomial (in N) number of values. Then, we show that the number of q-length values over all paths, including paths to intermediate nodes, is also polynomial in N, which implies that these values are not too many to store in the vectors \({\mathcal {V}}_v\).

Theorem 4.1

The q-length of a path from (0, 0) to (n, N) can only take a polynomial (in N) number of values.

Proof

The number of possible allocations of N elements to n bins, where some of the bins may be left empty, is equal to the number of n-tuples of nonnegative integers \(f_1,\ldots ,f_n\) that sum to N. Such tuples are called weak compositions of N into n parts (weak, because zeros are allowed), and their total number is

\[ \left( {\begin{array}{c}N + n - 1\\ n - 1\end{array}}\right) , \tag{4.2} \]

which is a polynomial in N of degree \(n-1\) for fixed n [6]. \(\square \)

Equation 4.2 bounds the number of possible q-lengths for a path from (0, 0) to (n, N). We also need to keep intermediate q-length values in the vectors \({\mathcal {V}}_v\), i.e., q-lengths for paths from (0, 0) to nodes in any layer \(1,\ldots , n\). But each intermediate allocation has at most as many q-length values as the final one, because an intermediate allocation allocates at most N elements to at most n bins. As there are n stages of intermediate allocations, we have in total at most \(n\cdot \left( {\begin{array}{c}N + n - 1\\ n - 1\end{array}}\right) \) values, i.e., a polynomial in N.
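The counting argument can be checked numerically for small inputs. The sketch below compares the closed-form count of weak compositions with a brute-force enumeration; the function names are hypothetical and the enumeration is only practical for tiny n and N.

```python
from itertools import product
from math import comb

def count_weak_compositions(N, n):
    """Closed-form number of ways to allocate N counts to n (possibly empty) bins."""
    return comb(N + n - 1, n - 1)

def enumerate_weak_compositions(N, n):
    """Brute force: all n-tuples of nonnegative integers that sum to N."""
    return [f for f in product(range(N + 1), repeat=n) if sum(f) == N]

# Small sanity check: 4 counts into 3 bins.
print(count_weak_compositions(4, 3), len(enumerate_weak_compositions(4, 3)))

# Upper bound on the number of stored q-length values for n = 8, N = 50.
n, N = 8, 50
print(n * count_weak_compositions(N, n))
```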

Example 4.2

\({ RO}\) is applied to the histogram \(H=(7,2,3,2,13,12,8,3)\) in Fig. 1a, using the target histogram \(H''=(10,8,6,2,13,4,4,3)\) in Fig. 1c, \({ JS}\)-divergence as the quality distance function \(d_q\) and the privacy distance function \(d_p\), and \(\epsilon =0.05\). In step 1, the algorithm constructs the search space graph in Fig. 7. The graph has \(n+1=9\) layers of nodes, where \(n=8\) is the length of H. Layer 0 contains the node (0, 0) and each other layer contains \(N+1=51\) nodes, where \(N=50\) is the size of H. Each node in layers \(1, \ldots , 8\) is labeled (i, j); \(i\in [1,8]\) denotes the layer of the node and corresponds to bin i, while \(j\in [0,50]\) denotes the counts allocated to bins \(1, \ldots , i\). For example, the node (8, 50) denotes that all 50 counts of H are allocated to bins \(1,\ldots , 8\). In addition, there is an edge from each node (i, j) to every node \((i+1, j+k)\), for each \(k\in [0, 50-j]\). The edge weight is a pair \((p_{\mathrm{err}}, q_{\mathrm{err}})\), where the privacy error \(p_{\mathrm{err}}\) (respectively, quality error \(q_{\mathrm{err}}\)) quantifies the error with respect to \({ JS}\)-divergence that is incurred by allocating k counts to bin \(i+1\) of the sanitized histogram (see Fig. 7). For example, the node (0, 0) is connected to the nodes \((1,0), \ldots , (1,50)\), and the edge ((0, 0), (1, 10)) has \((p_{\mathrm{err}},q_{\mathrm{err}})^{1,10}=(0, 3.8\times 10^{-3})\), incurred by allocating 10 counts to the first bin. In steps 2–9, \({ RO}\) computes the vector \({\mathcal {V}}_u\) for each node u. In step 10, the algorithm finds the shortest path from node (0, 0) to node (8, 50) with \(q_{\mathrm{err}} \le \epsilon \) (see Fig. 7), and in step 11 it constructs the sanitized histogram \(H'=(10,6,5,2,14,5,5,3)\) that corresponds to the shortest path (see Fig. 1d). 
Note that j in the label (i, j) of each node in the shortest path corresponds to the counts that are allocated to bins \(1, \ldots , i\) in \(H'\). Last, in step 12, \(H'\) is returned. \(\square \)
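The edge weights in this example can be reproduced with a short Python sketch. It assumes that \({ JS}\)-divergence is computed with base-2 logarithms on histograms normalized by their size, and that it is split into one additive term per bin; both are assumptions, chosen because they yield a quality error of about \(3.8\times 10^{-3}\) and a privacy error of 0 for the edge ((0, 0), (1, 10)). The names `js_term`, `edge_weight` and `path_length` are hypothetical.

```python
from math import log2

def js_term(a, b):
    """Contribution of one bin, with probabilities a and b, to JS-divergence (bits)."""
    m = (a + b) / 2
    term = 0.0
    if a > 0:
        term += 0.5 * a * log2(a / m)
    if b > 0:
        term += 0.5 * b * log2(b / m)
    return term

def edge_weight(i, k, H, H_target):
    """(p_err, q_err) of allocating k counts to bin i (1-based) of the sanitized histogram."""
    N = sum(H)
    p_err = js_term(k / N, H_target[i - 1] / sum(H_target))
    q_err = js_term(k / N, H[i - 1] / N)
    return p_err, q_err

def path_length(H_prime, H, H_target):
    """(p-length, q-length) of the path that encodes H_prime."""
    weights = [edge_weight(i, k, H, H_target) for i, k in enumerate(H_prime, start=1)]
    return sum(p for p, _ in weights), sum(q for _, q in weights)

H = [7, 2, 3, 2, 13, 12, 8, 3]
H_target = [10, 8, 6, 2, 13, 4, 4, 3]
H_prime = [10, 6, 5, 2, 14, 5, 5, 3]

print(edge_weight(1, 10, H, H_target))   # weight of edge ((0, 0), (1, 10))
print(path_length(H_prime, H, H_target)) # totals along the path of H'
print(path_length(H, H, H_target))       # totals if H were left unchanged
```

Comparing the last two lines shows how much closer \(H'\) is to the target than H, and how much quality is spent to get there.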

Search space graph \(G_{{ TR}}\) for Example 4.2 (the missing nodes and edges are denoted with “\(\ldots \)”), and shortest path along with its corresponding edge weights

The time complexity of the \({ RO}\) algorithm is \(O((n\cdot N)^2\cdot \left( {\begin{array}{c}N + n - 1\\ n - 1\end{array}}\right) )\). The total cost is the sum of the cost of constructing \(G_{{ TR}}\) and of finding the constrained shortest path from (0, 0) to (n, N).

The construction of \(G_{{ TR}}\) takes \(O(n\cdot N^2)\) time. This is because the algorithm constructs \(O(n\cdot (N + 1) + 1)=O(n\cdot N)\) nodes, each of which has O(N) outgoing edges, for a total of \(O(n\cdot N^2)\) edges. Note also that the computation of each edge weight takes O(1) time. The cost of computing the shortest path is \(O((n\cdot N)^2\cdot \left( {\begin{array}{c}N + n - 1\\ n - 1\end{array}}\right) )\). This is because it requires (I) constructing a vector \({\mathcal {V}}_v\) with \(O(n\cdot \left( {\begin{array}{c}N + n - 1\\ n - 1\end{array}}\right) )\) entries, for each node v of the \(O(n\cdot N)\) nodes of \(G_{{ TR}}\), which takes \(O(n^2\cdot N\cdot \left( {\begin{array}{c}N + n - 1\\ n - 1\end{array}}\right) )\) time, and (II) updating each entry of \({\mathcal {V}}_v\) once, which takes O(N) time per entry since there are O(N) incoming edges to each node (see Eq. 4.1), for a total of \(O\left( (n \cdot N)^2 \cdot \left( {\begin{array}{c}N + n - 1\\ n - 1\end{array}}\right) \right) \) across all nodes.
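The node and edge counts behind the \(O(n\cdot N^2)\) construction cost can be made concrete with a small sketch. It assumes the edge rule of Example 4.2, i.e., an edge from (i, j) to \((i+1, j+k)\) for every \(k\in [0, N-j]\); the function `graph_size` is a hypothetical helper.

```python
def graph_size(n, N):
    """Count the nodes and edges of the layered search space graph."""
    nodes = 1 + n * (N + 1)      # node (0, 0) plus N + 1 nodes in each of n layers
    edges = N + 1                # edges from (0, 0) to (1, 0), ..., (1, N)
    for _layer in range(1, n):   # layers 1 .. n-1 have outgoing edges
        for j in range(N + 1):
            edges += N - j + 1   # one edge for each k in [0, N - j]
    return nodes, edges

# Size of the graph in Example 4.2 (n = 8, N = 50).
print(graph_size(8, 50))
```

The edge count equals \((N+1) + (n-1)(N+1)(N+2)/2\), which is quadratic in N and linear in n.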

Note, the variation \({ TR}\)\(_{|H''|_1}\) in Sect. 3.3 can be optimally solved by using \({ RO}\) to allocate \(|H''|_{1}\) counts instead.

4.3 Heuristic for Target Resemblance

Our heuristic \({ RH}\) for the Target Resemblance problem works in a greedy fashion to avoid the cost of constructing and searching the search space graph.

The main idea in \({ RH}\) is to try to greedily reduce the differences in the counts of corresponding bins between H and \(H''\). As can be seen in the pseudocode (steps 1 and 2), \({ RH}\) identifies source bins, i.e., bins in H with more counts in H than in \(H''\), and destination bins, bins with fewer counts in H than in \(H''\). Bins with equal counts in H and \(H''\) are ignored. Then, in steps 3 and 4, \(H'\) is initialized to the original histogram H and the remaining quality budget \(\epsilon _{rem}\) to the quality threshold \(\epsilon \). In steps 5 and 6, \({ RH}\) moves some counts from a source bin to a destination bin using a function BestMove.

As can be seen in the pseudocode of BestMove (steps 4–6), the function searches all possible ways (“moves”) to move k counts from a source bin i to a destination bin j. For each move, BestMove computes the privacy effect \(\Delta d_p\) and the quality effect \(\Delta d_q\) (steps 10 and 11), and it selects the move that maximizes the ratio \(\frac{\Delta d_p}{\Delta d_q}\), subject to the constraint that \(\Delta d_q\) cannot exceed the remaining quality budget \(\epsilon _{rem}\) (steps 12–15; see Footnote 3). The rationale is to prioritize moves with a large improvement in privacy \(\Delta d_p\) and a small reduction in quality \(\Delta d_q\).

Next, in step 7, \({ RH}\) checks whether the remaining budget is exhausted. If it is, no more moves are performed (step 8). Otherwise, in steps 9–11, \({ RH}\) reduces the quality budget by \(Opt\Delta _{d_q}\) (i.e., by the quality effect of the best move) and updates the sets of source and destination bins by no longer considering as source or destination bins any bins whose count has become equal to the corresponding bin in \(H''\). Moves continue until the budget is exhausted or there are no more source/destination bins. Since a move can neither increase the count of a source bin nor increase the remaining quality budget, \({ RH}\) always terminates.
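The greedy procedure described above can be sketched in Python as follows. This is an illustration under stated assumptions, not the pseudocode of \({ RH}\): the names `js`, `best_move` and `rh_sketch` are hypothetical, \({ JS}\)-divergence with base-2 logarithms is used for both \(d_p\) and \(d_q\), H and \(H''\) are assumed to have the same size, and each move is capped so that a source bin never drops below, and a destination bin never exceeds, its count in \(H''\).

```python
from math import log2

def js(A, B):
    """JS-divergence (bits) between two count vectors, each normalized to sum 1."""
    sa, sb = sum(A), sum(B)
    total = 0.0
    for a, b in zip(A, B):
        p, q = a / sa, b / sb
        m = (p + q) / 2
        if p > 0:
            total += 0.5 * p * log2(p / m)
        if q > 0:
            total += 0.5 * q * log2(q / m)
    return total

def best_move(Hp, H, T, eps_rem):
    """Return (ratio, i, j, k, dq) for the best affordable move, or None."""
    base_p, base_q = js(Hp, T), js(H, Hp)
    sources = [i for i in range(len(Hp)) if Hp[i] > T[i]]
    dests = [j for j in range(len(Hp)) if Hp[j] < T[j]]
    best = None
    for i in sources:
        for j in dests:
            # Assumed cap: do not overshoot the target in either bin.
            for k in range(1, min(Hp[i] - T[i], T[j] - Hp[j]) + 1):
                cand = list(Hp)
                cand[i] -= k
                cand[j] += k
                dp = base_p - js(cand, T)   # privacy improvement
                dq = js(H, cand) - base_q   # quality loss
                if dq <= 0 or dq > eps_rem:
                    continue
                if best is None or dp / dq > best[0]:
                    best = (dp / dq, i, j, k, dq)
    return best

def rh_sketch(H, T, eps):
    """Greedily move counts from source to destination bins within budget eps."""
    Hp, eps_rem = list(H), eps
    while True:
        move = best_move(Hp, H, T, eps_rem)
        if move is None:          # budget exhausted or no source/destination bins
            break
        _, i, j, k, dq = move
        Hp[i] -= k
        Hp[j] += k
        eps_rem -= dq
    return Hp

H = [7, 2, 3, 2, 13, 12, 8, 3]
H_target = [10, 8, 6, 2, 13, 4, 4, 3]
print(rh_sketch(H, H_target, 0.05))
```

Because each move removes at least one surplus count from a source bin, the loop in `rh_sketch` stops after at most N moves.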

The time complexity of \({ RH}\) is \(O(n^3\cdot N)\). This is because the loop in step 5 runs O(n) times (once per source bin), and each time there is a cost of \(O(n^2\cdot N)\) incurred by BestMove. The cost of BestMove is \(O(n^2\cdot N)\), because there are \(O(n^2)\) source/destination bin pairs, and for each pair O(N) temporary moves are performed. The time complexity analysis refers to the worst case. In practice, a histogram can be sanitized with a smaller number of moves (i.e., executions of BestMove); for example, in our experiments, the heuristics scale close to linearly with respect to n.

Last, we note that the \({ RH}\) heuristic can also directly deal with the variation \({ TR}\)\(_{|H''|_1}\) of the \({ TR}\) problem (see Sect. 3.3). This is because \({ RH}\) does not pose any restriction on the size of \(H''\), so \(H''\) can have a different size than that of H.

5 Evaluation

In this section, we evaluate our algorithms and heuristics in terms of effectiveness and efficiency. We do not compare against existing histogram sanitization methods, because they cannot be used to solve the problems we consider (see Sect. 6.2).

5.1 Setup and datasets

To calculate the loss in quality (utility) incurred by replacing the original histogram H with the sanitized histogram \(H'\), we compute the distance \(d_q(H,H')\), where \(d_q\) is the Jensen–Shannon (JS) divergence (Sect. 3.1.1). Our algorithms can optimize other measures, leading to qualitatively similar results [33]. In addition, we measure how well sanitization preserves the quality of location recommendation.

Location recommendation suggests to a user, referred to as the active user and denoted by \(\alpha \), a location that might interest them. We measure the impact of sanitization on recommendation quality based on the recommendation error [35], defined, for an active user \(\alpha \) and a location \(L_{\mathrm{test}}^\alpha \), as the difference between \(f_\alpha (L_{\mathrm{test}}^\alpha )\), the user’s true frequency of visits to \(L_{\mathrm{test}}^\alpha \), and \(r_\alpha (L_{\mathrm{test}}^\alpha )\), the frequency of visits as predicted by the recommendation algorithm. We use both the absolute error \(|f_\alpha (L_{\mathrm{test}}^\alpha ) - r_\alpha (L_{\mathrm{test}}^\alpha )|\) and the square error \((f_\alpha (L_{\mathrm{test}}^\alpha ) - r_\alpha (L_{\mathrm{test}}^\alpha ))^2\). We compute recommendations based on the dataset of original user histograms, and then based on the dataset of sanitized histograms, as follows: (I) each of these datasets is randomly partitioned into a training set \(D_{\mathrm{train}}\) with 90% of the histograms and a test set \(D_{\mathrm{test}}\) with 10% of the histograms, (II) the absolute (or square) recommendation error considering each user in \(D_{\mathrm{test}}\) as \(\alpha \) is computed, and (III) the errors are averaged to obtain two popular measures: Mean Absolute Error (MAE) and Root Mean Square Error (RMSE). MAE averages the absolute errors, \(MAE(D_{\mathrm{test}}) = \frac{1}{|D_{\mathrm{test}}|}\sum _{(\alpha , L_{\mathrm{test}}^\alpha )} |f_\alpha (L_{\mathrm{test}}^\alpha ) - r_\alpha (L_{\mathrm{test}}^\alpha )|\), and RMSE is the square root of the average square error, \(RMSE(D_{\mathrm{test}}) = \sqrt{\frac{1}{|D_{\mathrm{test}}|}\sum _{(\alpha , L_{\mathrm{test}}^\alpha )} (f_\alpha (L_{\mathrm{test}}^\alpha ) - r_\alpha (L_{\mathrm{test}}^\alpha ))^2}\).
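The two measures can be computed as in the following minimal sketch. It assumes one (true frequency, predicted frequency) pair per test user, so that the number of pairs equals \(|D_{\mathrm{test}}|\); the function names and the sample values are hypothetical.

```python
from math import sqrt

def mae(pairs):
    """Mean Absolute Error over (true frequency, predicted frequency) pairs."""
    return sum(abs(f - r) for f, r in pairs) / len(pairs)

def rmse(pairs):
    """Root Mean Square Error over (true frequency, predicted frequency) pairs."""
    return sqrt(sum((f - r) ** 2 for f, r in pairs) / len(pairs))

# Illustrative values only: one pair per test user.
test_pairs = [(3, 1), (2, 2), (5, 4), (0, 2)]
print(mae(test_pairs), rmse(test_pairs))
```

RMSE penalizes large individual errors more heavily than MAE, so the two measures are reported together.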

All algorithms are implemented in Python and applied to the New York City (NYC) and Tokyo (TKY) datasets, which were also used in [39, 63, 64]. The datasets were downloaded from [62] and include long-term check-in data in New York City and Tokyo, collected from Foursquare from April 12, 2012 to February 16, 2013. Each record in the datasets contains a location that was visited by a user at a certain time and corresponds to a leaf in the Foursquare taxonomy (available at https://developer.foursquare.com/docs/resources/categories). There are in total 713 locations in the taxonomy, and on average each user visits fewer than 41 locations. For each dataset, we produce the input histograms for our algorithms by constructing one histogram H per user. The histogram H contains a count \(f(L_i)>0\) for every location \(L_i\) visited by the user. That is, H is constructed based on the user’s values (location visits), which is in line with histogram sanitization methods [2, 16, 23, 26, 45, 61, 68]. Table 2 shows the characteristics of NYC and TKY, and Table 3 shows the default values used in our experiments.
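The construction of one histogram per user can be sketched as follows. The record layout (a user identifier and a location category per check-in) and the sample values are assumptions for illustration; the actual files contain further fields, such as the visit time, which this sketch ignores.

```python
from collections import Counter, defaultdict

def build_histograms(checkins):
    """Map each user to a histogram {location: count} with counts > 0 only."""
    histograms = defaultdict(Counter)
    for user_id, location in checkins:
        histograms[user_id][location] += 1
    return {user: dict(counts) for user, counts in histograms.items()}

# Hypothetical records: (user identifier, leaf category of the visited location).
checkins = [
    ("u1", "Coffee Shop"),
    ("u1", "Coffee Shop"),
    ("u1", "Gym"),
    ("u2", "Park"),
]
print(build_histograms(checkins))
```

Locations that a user never visited do not appear in that user's histogram, which matches the requirement \(f(L_i)>0\).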

We also construct synthetic histograms by appending zeros to a histogram of length \(n=78\) and size \(N=192\) in NYC and to a histogram of length \(n=99\) and size \(N=642\) in TKY. The resulting histograms have length up to 400, including the zero-frequency bins. We use the synthetic histograms to test the impact of length on the runtime performance of our methods. In total, we test the algorithms on approximately 3400 different histograms. All experiments ran on an Intel Xeon at 2.60 GHz with 256 GB of RAM.
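Appending zero-frequency bins changes the length of a histogram but not its size, as the following sketch shows. The function `pad_histogram` and the base histogram are hypothetical.

```python
def pad_histogram(H, target_length):
    """Append zero-frequency bins until the histogram has target_length bins."""
    if target_length < len(H):
        raise ValueError("target_length must be at least the current length")
    return list(H) + [0] * (target_length - len(H))

# Illustrative base histogram; the padded versions keep the same size N.
base = [7, 2, 3, 2, 13, 12, 8, 3]
synthetic = [pad_histogram(base, length) for length in (100, 200, 400)]
print([(len(h), sum(h)) for h in synthetic])
```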

5.2 Evaluation of the \({ LHO}\) algorithm

We evaluate the quality and runtime of \({ LHO}\) as a function of (I) n, the length of the original histogram, (II) K, the total frequency of sensitive locations, and (III) \(|L'|\), the number of sensitive locations. We consider JS-divergence as \(d_q()\) and an \(L'\) comprised of 5 sensitive locations selected randomly, unless stated otherwise.

5.2.1 Quality preservation for the \({ LHO}\) algorithm

Impact of histogram length n As shown in Fig. 8, JS-divergence decreases with n (the y-axis is in logarithmic scale). This is because there are more bins whose counts may increase: the space considered by \({ LHO}\) is larger and the change can be “smoothed” over more bins. Also, the JS-divergence scores are low, suggesting that sanitization preserves the distribution of nonsensitive locations fairly well.

Impact of total frequency of sensitive locations K As shown in Fig. 9, JS-divergence increases with K (the y-axes are in logarithmic scale). This is because there are more counts that must be redistributed into the bins of nonsensitive locations, and this incurs more distortion. Note that the JS-divergence scores are low, suggesting that the distribution of nonsensitive locations is preserved fairly well.