Abstract

Through changes in policy and practice, the inherent intent of the ecosystem services (ES) concept is to safeguard ecosystems for human wellbeing. While impact is intrinsic to the concept, little is known about how and whether ES science leads to impact. Evidence of impact is needed. Given the lack of consensus on what constitutes impact, we differentiate between attributional impacts (transitional impacts on policy, practice, awareness or other drivers) and consequential impacts (real, on-the-ground impacts on biodiversity, ES, ecosystem functions and human wellbeing). We conduct rigorous statistical analyses on three extensive databases for evidence of attributional impact (the form most prevalently reported): the IPBES catalogue (n = 102), the Lautenbach systematic review (n = 504) and a 5-year in-depth survey of the OPERAs Exemplars (n = 13). To understand the drivers of impacts, we statistically analyse associations between study characteristics and impacts. Our findings show that there exists much confusion with regard to defining ES science impacts, and that evidence of attributional impact is scarce: only 25% of the IPBES assessments self-reported impact (7% with evidence); in our meta-analysis of Lautenbach’s systematic review, 33% of studies provided recommendations indicating intent of impacts. Systematic impact reporting was imposed by design on the OPERAs Exemplars: 100% reported impacts, suggesting the importance of formal impact reporting. The generalised linear models and correlations between study characteristics and attributional impact dimensions highlight four characteristics as a minimum baseline for impact: study robustness, integration of policy instruments into study design, stakeholder involvement and type of stakeholders involved.
Further in-depth examination of the OPERAs Exemplars showed that study characteristics associated with impact on awareness and practice differ from those associated with impact on policy: to achieve impact along specific dimensions, bespoke study designs are recommended. These results inform targeted recommendations for ES science to break its impact glass ceiling.

Introduction

Westman (1977) first argued that to sustainably manage our global natural resources, we need to formally recognise how nature’s diverse services support human wellbeing. The benefits derived from nature were thereafter labelled as ‘ecosystem services (ES)’ (Ehrlich and Ehrlich 1981). The novelty and compelling aspect of the ES concept lie in its representation of nature as a provider of services benefiting humankind. Forty years on, the concept has seen a dramatic evolution and underpins research that views ecological, economic and social systems as inextricable social-ecological systems (Anderies et al. 2004; Balvanera et al. 2017; Berkes et al. 2003; Folke 2006). The activities driving this field internationally include the MA (2005a), TEEB (Kumar 2010; ten Brink 2011) and more recently, the IPBES (2015) and the WAVES partnership (2017).

Ways to help support and sustain the services essential for human wellbeing are diverse, but at the outset, the concept of ES must shift from a heuristic framework to unambiguous practical applications (Polasky et al. 2011): a process which requires enactment in decision-making by policy makers and practitioners in a salient, credible and replicable manner (Daily and Matson 2008). While challenging, evidence that such a transition is under way is emerging: the concept is being formalised into systematic definitions, e.g. the Common International Classification of ES (CICES; Haines-Young and Potschin 2013), and instruments, tools and standards are being developed (Hein et al. 2016; Maes et al. 2016; OECD 2012). As such, the intellectual community is converging towards a clarification of definitions, standards, tools and methods.

Progress in adoption of the concept in policy is also evident. The concept is being incorporated into directives at various governance levels, including international (Convention on Biological Diversity 2010), European (Bouwma et al. 2017; European Commission 2011; Maes et al. 2016) and national (DEFRA 2012). Applying ES in decision-making is a way to quantify and qualify the ecological and socioeconomic costs and benefits of different management plans (Luck et al. 2012). Therefore, ES valuation is considered to have a high potential for application in decision-making by individuals, institutions, organisations and governments (Wallace 2008; de Groot et al. 2010). At the European level, the European Union has explicitly included the valuing of ES in its Biodiversity Strategy for 2020 (European Commission 2011). The report ties the protection of biodiversity to the services that it provides, making the conservation management of both vitally important (Jax et al. 2013). As a result, many European countries are working on incorporating ES assessments into their management plans, thereby influencing the implementation of ES assessment globally (IPBES 2015). There is also evidence of application of the concept in urban planning discourses, such as in Berlin, New York, Salzburg, Seattle and Stockholm (see Hansen et al. 2015). Yet, while some progress is seen at policy levels, applications of the concept in practice are slower to materialise (Hein et al. 2016). Hansen et al. (2015) argue that the challenges of operationalisation are many, including translating the ES concept into legal systems for implementation (also extensively discussed in Stępniewska et al. (2017), de Graaf et al. (2017) and Mauerhofer (2017)).

Hence, while much progress has been made in the design of procedures aiming to guide decision-making, little is known about how the knowledge of ES leads to practice impacts or to on-the-ground impacts on biodiversity, ecosystem functions, ES and the various aspects of wellbeing (Folke et al. 2002; Nahlik et al. 2012; Shanley and Lopez 2009). The knowledge of whether and how ES science translates into impacts is fragmented and disjointed. Yet, to succeed in its mission, it is essential that the science engages with the realm of its impacts.

Fundamentally mission-oriented (Cowling et al. 2008), ES science has indeed impact at its core: ‘with appropriate actions it is possible to reverse the degradation of many ES over the next 50 years, but the changes in policy and practice required are substantial and not currently underway’ (MA 2005b). Underpinning this statement lies a causal assumption that for impacts to happen on the ground, impacts on policy and practice are needed. This suggests the existence of two distinct categories of impacts with a causal relationship between them: attributional impacts refer to transitory effects (impacts on policy, practice, awareness or other direct or indirect drivers of changes) which ideally lead to consequential impacts (ultimate real on-the-ground changes on biodiversity, ecosystem functions, ES and well-being as defined within the MA).

In this paper, we focus our attention on attributional impacts as this is the category of impact most systematically reported to date (see e.g. IPBES 2015). We specifically focus on awareness, policy and practice dimensions. We define awareness as the acquisition of ‘knowledge that something exists, or [an] understanding of […] a subject […] based on information […]’ (Cambridge online dictionary). This also considers literacy, namely, the acquisition of ‘knowledge of a particular subject […]’ (Cambridge online dictionary). Policy refers to a course of action adopted or proposed at any governance level. Practice refers to the implementation of human actions and practices on the ground. Our overarching aim is to understand how ES science and associated interventions have impacted awareness, policy and practice. To address this, we answer the following three questions:

- Has impact been defined in ES science, and if so, how?
- What evidence exists that ES studies generate attributional impact?
- What ES study characteristics are associated with attributional impacts?

By answering these, our intention is to contribute to breaking the broad ES impact glass ceiling: first, by highlighting gaps in the coverage (definition and evidence) of impacts, and second, by identifying potential study attributes associated with attributional impacts. We conclude by proposing a systematic approach for studying and reporting the diversity of attributional as well as consequential impacts for a robust evaluation of the breadth and depth of promised transformation by ES science.

Approach

Defining Impact for the Science of Ecosystem Services

Definitions are fundamental for concerted action, as they determine the way we think and act. Here, a common understanding of ‘impact’ is fundamental to the way in which we conduct ES interventions and assess their success (let alone that of the science). Hence, to have impact, ES science must first define how it understands it. In this paper, we explore the ES literature to evaluate whether there exists a common and well-established definition of ES impacts. We also examine how impact is defined broadly in fields as diverse as higher education, health and international development, where it is set firmly as a criterion of success (incl. the World Bank, the OECD, the WHO). We believe this range to be a fair representation of impact definitions found in other, yet related, domains. Based on these findings, in the discussion, we propose a systemic definition of ES impact, a typology of impacts, and an approach for ES impact reporting which builds on the attributional and consequential impact definitions introduced above. Next, we examine whether there exists evidence that ES science generates attributional impact.

What evidence is there that the Science of Ecosystem Services generates attributional impacts?

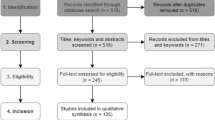

Three databases were consulted to explore whether ES science generates attributional impacts. These were:

- IPBES catalogue: a searchable compendium of global to subnational scale studies of biodiversity and ES (restricted access under https://www.ipbes.net/deliverables/4a-catalogue-assessments, IPBES 2015). Selected because, of the 29 ES databases reviewed by Schmidt and Seppelt (2018, see Table 1), it is the only one with a specific focus on impact.
- Lautenbach review: a systematic review of peer-reviewed scientific ES studies assessing how current ES studies are conducted in practice (Lautenbach et al. 2019, this issue). Selected because it is the only ES study database derived from a systematic review, enabling robust statistical analyses.
- OPERAs Exemplars: a 5-year, in-depth investigation of 13 collaboratively designed ES studies (OPERAs 2017). Selected because the database includes several impact indicators, thereby providing a sample for in-depth understanding of study characteristics related to attributional impact.

Given the different nature of the databases, these are analysed independently.

IPBES catalogue (n = 102)

On 24 October 2017, the catalogue contained 246 studies, of which more than 40% were randomly selected (n = 102). Within the database, each entered study can elect to self-report its impacts and associated evidence ‘[…]on policy and/or decision making, as evidenced through policy actions’ (IPBES 2015).

Lautenbach et al. review (n = 504)

The database comprises a random sample of peer-reviewed ES studies published in the ISI Web of Knowledge up to 31 March 2016 (n = 504). The database states whether the studies provided policy recommendations, used here as a proxy of intent for impact.

OPERAs Exemplars (n = 13)

The database comprises in-depth characteristics (namely, specific attributes) from 13 complementary ES studies (Table 1, referred to as Exemplars) and was created as part of the OPERAs EU FP7 project (http://www.operas-project.eu/). To create the database, information on a suite of Exemplar characteristics was sought through an exhaustive adaptive questionnaire (referred to online as the ‘blueprint protocol’). The questionnaire was completed three times over a 5-year period (December 2012 to November 2017) and can be freely accessed on the Oppla marketplace (https://www.oppla.eu/product/18033) for consultation and use by the wider ES community. It builds on the work of Seppelt et al. (2012) and a rigorous assessment of other published ES frameworks and protocols (see link above). It takes on average 1 hour to complete, and acted as our modus operandi for standardising the comparison, evaluation and synthesis of these 13 Exemplars, their operationalisation and their impacts. The questionnaire engaged only the study team leads, not the broader stakeholders affected or impacted by the teams’ studies. In the questionnaire, Exemplar teams were asked to self-report whether their study generated impact along the three dimensions (awareness, policy and practice) and to provide relevant evidence of such impacts.

What study characteristics are associated with attributional impacts?

Within each database, study characteristics were selected for this analysis because of their availability and their similarity across the three databases. These include the class and number of ES assessed (as well as related analytical considerations such as bundling of ES and trade-offs); stakeholder engagement attributes; the geographical scale considered; and others (see full lists in Tables 2, 3 and 4). Some characteristics unique to each database were also included if deemed particularly pertinent to generating attributional impact (e.g. scope of assessment in IPBES). We describe below the methodology used to assess associations between study characteristics and impacts within each database.

IPBES catalogue (n = 102)

For each study entry, the IPBES catalogue collates information on ten themes (subdivided into a total of 48 characteristics). Here, five of the ten IPBES themes (labelled 1–5 in Table 2) were used and coded, each including between four and ten single characteristics. An additional theme was created by assessing the stated intention of the study for integration of its findings into decision-making (typology of characteristics described in Daily et al. (2009)). Generalised linear models (GLMs) were used to test the relationship between characteristics of the studies and policy impacts. The GLMs were derived for each theme individually.

Lautenbach et al. review (n = 504)

Twenty study characteristics from the Lautenbach database were used here (Table 3) and tested against the presence or absence of specific policy recommendations. A binomial GLM with the logit link (logistic regression) was used to test relationships between the response and the predictors.
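To illustrate the kind of model described above, the following is a minimal sketch of a logistic regression (a binomial GLM, canonically fitted with the logit link) with a single binary predictor, estimated by Newton-Raphson. It is not the authors' code (their analyses were run in R), and the counts are hypothetical, chosen only so that the result can be checked by hand: with one binary predictor, the fitted slope equals the log odds ratio.

```python
import math

def fit_logistic(x, y, iterations=25):
    """Fit y ~ b0 + b1 * x by Newton-Raphson (binomial GLM, logit link)."""
    b0, b1 = 0.0, 0.0
    for _ in range(iterations):
        # Gradient (g) and negative Hessian (h) of the log-likelihood
        g0 = g1 = h00 = h01 = h11 = 0.0
        for xi, yi in zip(x, y):
            p = 1.0 / (1.0 + math.exp(-(b0 + b1 * xi)))  # inverse logit
            w = p * (1.0 - p)
            g0 += yi - p
            g1 += (yi - p) * xi
            h00 += w
            h01 += w * xi
            h11 += w * xi * xi
        det = h00 * h11 - h01 * h01
        # Newton step: solve the 2x2 system h * delta = g
        b0 += (h11 * g0 - h01 * g1) / det
        b1 += (h00 * g1 - h01 * g0) / det
    return b0, b1

# HYPOTHETICAL data (not from the Lautenbach database):
# x = 1 if stakeholders were involved, y = 1 if recommendations were given.
# Involved: 20 of 40 studies with recommendations; not involved: 15 of 60.
x = [1] * 40 + [0] * 60
y = [1] * 20 + [0] * 20 + [1] * 15 + [0] * 45

b0, b1 = fit_logistic(x, y)
odds_ratio = (20 / 20) / (15 / 45)  # = 3 for these made-up counts
print(round(b1, 4), round(math.log(odds_ratio), 4))
```

A positive slope means that the odds of providing recommendations are higher when the predictor is present; exponentiating the slope gives the odds ratio.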

OPERAs Exemplars (n = 13)

The characteristics extracted from the OPERAs Exemplars are presented in Table 4. Impacts along the three dimensions were scored as presented in Table 5.

Relationships between the three impact dimensions and Exemplar characteristics were analysed by means of nonparametric pairwise correlation analysis using Kendall’s tau (Kendall 1938). The strength of the associations between numeric and categorical variables was calculated based on point biserial (two categories) or point polyserial (more than two categories) correlation coefficients (Olsson et al. 1982). The differences in correlation between the impact dimensions were visualised in a circular plot. All analyses were done in R (R Core Team 2017) using the additional packages circlize (Gu et al. 2014) and polycor (Fox 2016).
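The two association measures named above can be sketched as follows. This is an illustrative Python version, not the authors' R code: the tau-b variant (which corrects for ties, common with ordinal scores) is an assumption, since the text does not state which variant was used, and the point-biserial coefficient is computed here as a plain Pearson correlation with a 0/1 variable rather than with the polycor estimators. The input values are toy data.

```python
import math

def kendall_tau_b(x, y):
    """Kendall's tau-b: rank correlation corrected for ties."""
    concordant = discordant = ties_x = ties_y = 0
    n = len(x)
    for i in range(n):
        for j in range(i + 1, n):
            dx, dy = x[i] - x[j], y[i] - y[j]
            if dx == 0 and dy == 0:
                continue          # tied on both variables: ignored
            elif dx == 0:
                ties_x += 1       # tied on x only
            elif dy == 0:
                ties_y += 1       # tied on y only
            elif dx * dy > 0:
                concordant += 1
            else:
                discordant += 1
    pairs = concordant + discordant
    return (concordant - discordant) / math.sqrt(
        (pairs + ties_x) * (pairs + ties_y))

def point_biserial(group, score):
    """Pearson correlation between a 0/1 variable and a numeric score."""
    n = len(group)
    mean_g, mean_s = sum(group) / n, sum(score) / n
    cov = sum((g - mean_g) * (s - mean_s) for g, s in zip(group, score))
    var_g = sum((g - mean_g) ** 2 for g in group)
    var_s = sum((s - mean_s) ** 2 for s in score)
    return cov / math.sqrt(var_g * var_s)

# Toy ordinal scores (hypothetical, not Exemplar data)
robustness = [1, 2, 2, 3]
impact = [1, 2, 3, 3]
print(kendall_tau_b(robustness, impact))

# Toy binary characteristic and impact score (hypothetical)
print(point_biserial([0, 0, 1, 1], [1, 2, 3, 4]))
```

The sign of the point-biserial coefficient depends on which category is coded as 1, so a negative value only indicates that the category coded 1 has the lower mean score.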

Results and discussion

Defining Impact for the Science of Ecosystem Services: A typology

Discourses of impact are omnipresent in medical sciences (Greenhalgh and Fahy 2015), international development (Cameron et al. 2015; Hearn and Buffardi 2016), and education (Papastergiou 2009), yet Table 6 highlights a large variability in definitions adopted in these different fields, from comprehensive (OECD-DAC and DFID, Table 6) to narrow (impact as meeting a predefined set of objectives, World Bank, Table 6).

Meanwhile, in the ES science literature, the term does not seem to have permeated widely. When impact is considered, it is narrowly defined as policy impact (IPBES 2015; Posner et al. 2016) or as effectiveness, i.e. the degree to which something is successful in producing a desired result (especially in the context of payments for ES). The latter definition is possibly a legacy of environmental impact assessments (EIAs) which evaluate the environmental consequences of major development projects in situ and focus on improving the quality of decision-making to avoid unwarranted development effects. Yet, even in the EIA literature, impact and effectiveness are used either synonymously, or their distinction is tangled (compare Ervin (2003); Hockings (2003) with van Doren et al. (2013)). This confusion contributes to obscuring the potential demonstration of the breadth of impacts arising from ES science.

To achieve and demonstrate impact, it is fundamental to distinguish between effectiveness and impacts clearly. Effectiveness, as defined above, refers to a measure of success at achieving a desired result. An intervention may be effective, but if broader impacts were considered (such as trade-offs, or off-site effects), the results may not be hailed as successful overall. A more nuanced and comprehensive understanding of ES impacts is needed to encompass the diversity of effects and changes observed within the social-ecological system considered. Such a definition must also be sufficiently flexible to allow for both prospective and retrospective impact appraisals and reporting.

We therefore define ES science impact as the attributional and consequential, positive and negative, long-term effects generated through ES science (and by associated interventions) foreseen or unforeseen, intended or unintended (adapted from Independent Commission for Aid Impact 2014; OECD 2010). This definition is therefore fully inclusive of effectiveness, typically used within EIAs.

In Fig. 1, we present a framework for ES science reporting, which entails the impact definition, a typology and a reporting approach.

Fig. 1 Systemic impact reporting framework. The figure presents (a.) a definition of impact for the Science of Ecosystem Services, (b.) a typology of impacts and (c.) a comprehensive reporting framework. (b.) Attributional impacts are precursory to consequential impacts and include transitional impacts on dimensions such as awareness, policy and practice (but could also include transitional effects on other direct or indirect drivers of change, e.g. technological developments). Attributional impacts can occur without consequential impacts. Consequential impacts are, ultimately, the real, on-the-ground impacts on dimensions such as biodiversity, ecosystem functions, ecosystem services and well-being. (c.) We exemplify how this framework (adapted from Hearn and Buffardi 2016) can be used in practice as a reporting, thinking or impact mapping tool using a hypothetical reforestation intervention under a PES scheme.

The typology characterises the different dimensions of ES science impacts, and thereby contributes towards a consistent and systematic reporting approach. A distinction between attributional and consequential impacts is fundamental, as the former is only transitory, and does not capture on-the-ground impacts (desired or not). An ES intervention may impact policy yet never realise the desired effects on biodiversity, ES, ecosystem functions or human wellbeing. Attributional impacts (notably impacts on policy) remain nevertheless the category of impacts most systematically reported to date (see IPBES (2015) and Schmidt and Seppelt (2018) for their description of 29 ES databases).

The definition and the systemic typology further differentiate between what are seen as positive or negative impacts, as well as foreseen, unforeseen, intended or unintended effects. Using a hypothetical reforestation intervention under a PES scheme, in Fig. 1, we exemplify how this framework can be used in practice as a reporting, thinking or impact mapping tool. This framework for understanding ES impacts and their reporting offers clear boundaries to categorise the depth with which impact is considered and reported.
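One way to make the typology operational is to record each impact as a structured entry carrying the attributes named in the definition. The sketch below is only an illustration under stated assumptions: the class and field names are hypothetical, and the three entries are invented examples for a reforestation intervention under a PES scheme; they are not taken from Fig. 1.

```python
from dataclasses import dataclass
from enum import Enum

class Category(Enum):
    # Transitional impacts: awareness, policy, practice, other drivers
    ATTRIBUTIONAL = "attributional"
    # On-the-ground impacts: biodiversity, ecosystem functions, ES, wellbeing
    CONSEQUENTIAL = "consequential"

@dataclass(frozen=True)
class ImpactRecord:
    description: str
    category: Category
    dimension: str
    positive: bool
    foreseen: bool
    intended: bool

# HYPOTHETICAL entries, for illustration only
records = [
    ImpactRecord("Landowners sign tree-planting contracts",
                 Category.ATTRIBUTIONAL, "practice", True, True, True),
    ImpactRecord("Carbon storage increases on enrolled land",
                 Category.CONSEQUENTIAL, "ecosystem services", True, True, True),
    ImpactRecord("Farming displaced to land outside the scheme",
                 Category.CONSEQUENTIAL, "ecosystem services", False, False, False),
]

def summarise(entries):
    """Count entries by (category, positive/negative)."""
    counts = {}
    for entry in entries:
        key = (entry.category.value,
               "positive" if entry.positive else "negative")
        counts[key] = counts.get(key, 0) + 1
    return counts

print(summarise(records))
```

Keeping positive or negative, foreseen or unforeseen, and intended or unintended as separate fields lets a report show, for example, unintended negative consequential impacts alongside the intended ones, for both prospective and retrospective appraisals.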

What evidence exists that ES studies generate attributional impact? Towards systematic reporting

The lack of definition and consensus as to how impact is defined has undoubtedly contributed to the shortage of concerted effort to report impact, and the accompanying limited evidence that ES science generates impacts. Evidence of the extent to which ES science has generated attributional impacts is thin. Within the IPBES catalogue, we found that only 26 (25%) of the 102 randomly selected studies self-reported policy impact. Seven (7%) were independently reviewed. Within the Lautenbach database, the results are similar: only 33% of studies provide some policy recommendations. The findings from the Exemplars contrast, however, with those above: all Exemplars reported impact along at least one of the three dimensions (examples of which are presented in Table 7) and 62% believed they had had some impact on policy. The systematic reporting imposed by the adaptive questionnaire in the OPERAs Exemplars (which also supported the study design) may have contributed to such attainment.
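The IPBES percentages quoted above follow directly from the reported counts, as the short check below shows. The helper name `share` is hypothetical; the counts (26 and 7 of 102 sampled studies, and 102 of 246 catalogue entries) are those given in the text.

```python
def share(count, total):
    """Percentage of `count` in `total`, rounded to the nearest integer."""
    return round(100 * count / total)

sampled, catalogue = 102, 246

print(share(sampled, catalogue))  # sampling fraction of the catalogue
print(share(26, sampled))         # studies self-reporting policy impact
print(share(7, sampled))          # studies independently reviewed
```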

Until impact becomes systematically reported, the evidence that the ES concept does generate impact may remain thin. A few simple steps could go a long way in addressing this dearth. Impact being core to ES science, it would seem reasonable that peer-reviewed journals in the field request short statements of impact (intended and/or realised), alongside abstracts and keywords. This would promote the importance of thoughtful impact planning and reporting. The use of protocols for study design and reporting (such as the blueprint protocol questionnaire briefly introduced here) could also be considered as a tool to remind teams of the importance of impact planning and reporting. Such protocols serve as vehicles to summarise outcomes from a diverse set of studies, but also, as a thinking tool to integrate specific study considerations (including impact). Finally, global efforts at creating compendia of ES studies need to capture ES science impacts more broadly and consistently. Adopting a comprehensive definition and reporting framework such as the one presented here is essential to fully capture the breadth and depth of ES science impacts.

What study characteristics are associated with impacts? Designing studies for impact

Planning for impact requires an understanding of drivers of impact. Within each database, we explored which study characteristics were associated with different dimensions of attributional impacts. In IPBES and Lautenbach, policy impacts alone were considered. In OPERAs Exemplars, the three dimensions of awareness, policy and practice impacts were analysed.

IPBES catalogue (n = 102)

The results from the GLMs are presented in Table 8. Studies with policy impact show a significantly broader coverage of characteristics for all ordinal characteristics (sign. levels *** column B). However, they did not cover a significantly different selection of ES, but differed in the scales addressed: in these studies, site (*) and subnational scales ( ) were significantly more often studied. Furthermore, they are characterised by stakeholder engagement (**), and engage significantly more with affected people (*), people who actively manage the ecosystems (*), and policy makers ( ). They also have an increased emphasis on drivers of change of ES (**) and explicitly address the role of biodiversity (*). Finally, they differ methodologically by focusing on the ecosystems themselves (*) and on the valuation of the ES ().

Lautenbach et al. review (n = 504)

Studies providing policy recommendations were significantly associated with a number of characteristics (Table 9). Stakeholder involvement mattered: a higher frequency of recommendations was observed in studies where stakeholders were involved. Studies involving distant beneficiaries and experts or that involved stakeholders in a consultation role provided significantly more recommendations than others. Studies that assessed trade-offs by map overlay also provided significantly more recommendations than others—this is likely related to the effect of mapping ES at all: studies that mapped ES reported significantly more recommendations. Studies that assessed bundles of ES reported more specific recommendations than others; however, the case number was low and the effect only marginally significant. Significantly higher frequencies of specific recommendations were also observed for studies that considered policy instruments (low number of cases), that used models different from lookup tables and combined demand and supply side analysis.

OPERAs Exemplars (n = 13)

The three dimensions of impact assessed were correlated across the 13 Exemplars. A moderately strong positive correlation was identified between impact on awareness and impact on practice (τ = 0.56) and between impact on policy and impact on practice (τ = 0.44) but not between impact on awareness and policy (τ = 0.06: policy makers are possibly sufficiently aware of the ES concept, such that mainly practitioners and stakeholders other than policy makers achieve greater awareness through ES engagement).

Associations between Exemplar characteristics and impact were manifold: studies that addressed a more specific problem were positively associated with a larger impact on policy (τ = 0.45) and with impact on awareness (τ = 0.20). Studies that took place at a smaller scale were positively associated with impact on awareness (τ = 0.35). For impact on policy, the impact increased with scale (τ = 0.23). Studies that scored higher with respect to robustness of the analysis were positively associated with impact on all three dimensions: τ = 0.59, 0.50 and 0.57 for impact on awareness, policy and practice respectively. The number of ES categories addressed in an Exemplar was associated with the impact on awareness (τ = 0.45).

The indicator measuring the different aspects of stakeholder involvement was positively associated with impact on awareness and impact on practice (τ = 0.42 and 0.39, respectively). The diversity of approaches used to engage with stakeholders was positively associated with impact on practice (τ = 0.49). The diversity of the stakeholders involved was positively associated with impact on awareness (τ = 0.49). The number of stakeholders involved was positively associated with impact on awareness (τ = 0.23) and impact on practice (τ = 0.37).

Exemplars that addressed the effects of policy instruments directly instead of indirectly had a higher impact in all dimensions: the biserial correlation coefficients were r = −0.78 for impact on policy, r = −0.44 for impact on awareness and r = −0.52 for impact on practice; that is, Exemplars that did not address the effects of policy instruments directly had on average a lower impact in those categories compared to those that did. Interventional studies were associated with a higher impact on policy compared to observational studies (polyserial correlation coefficient r = 0.54) but a lower impact on practice (polyserial correlation coefficient r = −0.46).

Despite relatively low correlation coefficients (between 0.2 and 0.5) overall, the relative importance of single factors becomes obvious from Fig. 2. In this figure, the upper half of the circle represents the different types of impacts and the lower half, the characteristics of the studies. The width of each connection indicates the strength of the Kendall’s correlation between study characteristic and impact (negative correlations are indicated with a dashed outline). Scientific robustness shows the highest positive correlation with all types of impacts: awareness, policy and practice. Additional important factors include the number of ES investigated, the specificity of the study, the inclusion of stakeholders at various study stages, the degree of organisation of the stakeholders, the diversity of stakeholders included and the scale of the study. However, the correlation coefficients for these factors are overall not as high; they vary strongly between impacts on awareness, policy and practice, and some are negatively correlated with single types of impacts. One illustrative example is scale, where larger scale is negatively correlated with impact on practice. Whether or not a study includes stakeholders of opposing views shows the least correlation with any of the impacts. Similarly, the diversity of approaches to address stakeholders is not highly correlated with impacts.

Fig. 2 Comprehensive graphical overview of associations between Exemplar characteristics and attributional impact dimensions. The upper half of the circle represents the different types of impacts and the lower half, the characteristics of the studies. Only the characteristics which are statistically significant are included here. The width of each connection indicates the strength of the Kendall’s correlation between study characteristic and impact. Negative correlations are indicated with a dashed outline. Specificness refers to the specificity of the study. The abbreviations Sc, Instr, Sth, Div, Var and Opp stand for scientific, instruments, stakeholders, diversity, various and opposing, respectively (see Table 4 for corresponding characteristics).

ES study characteristics associated with impacts: synthesis from the three databases

Table 10 presents a summary of results from the IPBES catalogue, the Lautenbach review and the OPERAs Exemplars analyses. For the OPERAs Exemplars, correlations greater than or equal to 0.35 are included (* indicates negative correlation). For the Lautenbach review and the IPBES catalogue, significant characteristics and predictors of studies with recommendations/impacts are included (p < 0.1). Characteristics in italics are common to all databases.
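The 0.35 inclusion rule for the OPERAs Exemplars can be sketched as a simple filter. The snippet below applies it to the Kendall's tau values quoted in the results text; it does not reproduce Table 10. The dictionary labels are paraphrases, the function name is hypothetical, and scale is omitted because the sign convention of its coding is not stated in the text.

```python
# Kendall's tau values as quoted in the text (scale omitted, see lead-in)
TAU = {
    "robustness of analysis": {"awareness": 0.59, "policy": 0.50, "practice": 0.57},
    "specificity of problem": {"awareness": 0.20, "policy": 0.45},
    "number of ES categories": {"awareness": 0.45},
    "stakeholder involvement": {"awareness": 0.42, "practice": 0.39},
    "diversity of engagement approaches": {"practice": 0.49},
    "diversity of stakeholders": {"awareness": 0.49},
    "number of stakeholders": {"awareness": 0.23, "practice": 0.37},
}

def select_associations(table, threshold=0.35):
    """Keep impact dimensions whose |tau| meets the inclusion threshold."""
    selected = {}
    for characteristic, taus in table.items():
        dimensions = [d for d, t in taus.items() if abs(t) >= threshold]
        if dimensions:
            selected[characteristic] = dimensions
    return selected

for characteristic, dimensions in select_associations(TAU).items():
    print(f"{characteristic}: {', '.join(dimensions)}")
```

Using the absolute value keeps strong negative associations in the summary, consistent with the table marking negative correlations separately.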

While there does not exist a single sweeping intervention or study design affecting consistently all dimensions of attributional impact, our findings highlight four characteristics which appear more frequently (see rows with three Xs, Table 10). These are study robustness, integration of policy instruments into study design, stakeholder involvement and type of stakeholders involved. One could argue that these are the minimum baseline characteristics for impact. Our findings further suggest that to achieve impact along specific dimensions, studies must have a bespoke design: the characteristics associated with impacts on awareness, policy and practice were shown to differ. As a corollary, planning for impact matters. If impact on practice is desired, then a study conducted at smaller scale (e.g. local) which engages with many stakeholders may be more effective than a global modelling exercise testing different policy options and instruments.

The questionnaire can aid in structuring assessments, studies and monitoring programs for impact, by providing various elements for consideration. By highlighting topics such as the analysis of uncertainties, offsite effects or the effects of different policy instruments, it helps researchers track the requirements for, and think early about, desired impacts.

Conclusions

A few lessons can be learned from this study, from which we propose a suite of recommendations.

Lesson-learned 1: There are no clear agreed definitions of impacts in ES science. Such lack of consensus in (and confusion between) definitions of effectiveness, impacts and other measures of a study or intervention’s effects contributes to obscuring the potential demonstration of outcomes arising from ES science. Recommendations 1: given the diversity of ES, functions, associated interventions and studies as well as the breadth of potential impacts, impact ought to be defined broadly as the attributional and consequential, positive and negative, long-term effects generated through ES science (and by associated interventions) foreseen or unforeseen, intended or unintended. A recognition of the different categories of impacts is also important. Attributional impacts (impacts on policy, practice, awareness or other direct/indirect drivers) refer to transitory effects which ideally, but not necessarily, lead to consequential impacts (real, on-the-ground effects on biodiversity, ES, ecosystem functions and wellbeing).

Lesson-learned 2: There is no broad and systematic collection of ES science impact. This leads to a limited body of evidence that ES studies or interventions yield impact. Even the IPBES catalogue does not collate broad or diverse evidence of impact beyond policy, let alone consequential impacts. Recommendations 2: Systematic reporting is critically needed for both attributional and consequential impacts. With minimal effort, the existing compendia (e.g. IPBES) could be expanded to include broader, systemic and comprehensive impact reporting. The impact framework (definition, typology and reporting framework) presented in Fig. 1 offers such a flexible framework, and clarifies the depth with which impact is considered. It considers foreseen and unforeseen effects, and allows for both prospective and retrospective reporting. In parallel, statements of expected or realised impact should be presented alongside abstracts and keywords in ES-focused peer-reviewed journals. Finally, the use of well-grounded reporting frameworks, such as the questionnaire (blueprint protocol) introduced here, can help teams engage more with the realm of impact.
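As an illustration only, the impact typology described in these recommendations could be captured in a small structured record, so that each reported impact states its category, dimension and whether it was foreseen, intended and evidenced. The sketch below is a minimal Python example under stated assumptions: the class, field and function names are hypothetical, the example entries are invented, and none of this reproduces the framework of Fig. 1 or the questionnaire itself.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    ATTRIBUTIONAL = "attributional"  # transitory effects on drivers
    CONSEQUENTIAL = "consequential"  # real, on-the-ground effects


# Dimensions named in the text, grouped by category (labels are assumptions).
DIMENSIONS = {
    Category.ATTRIBUTIONAL: {"policy", "practice", "awareness", "other drivers"},
    Category.CONSEQUENTIAL: {
        "biodiversity",
        "ecosystem services",
        "ecosystem functions",
        "human wellbeing",
    },
}


@dataclass(frozen=True)
class ImpactRecord:
    """One reported impact of one study (hypothetical schema)."""

    study: str
    category: Category
    dimension: str
    positive: bool
    foreseen: bool
    intended: bool
    retrospective: bool  # False means a prospective (expected) impact
    evidence: str = ""   # empty string means self-reported, no evidence given

    def __post_init__(self):
        # Reject dimensions that do not belong to the stated category.
        if self.dimension not in DIMENSIONS[self.category]:
            raise ValueError(
                f"{self.dimension!r} is not a {self.category.value} dimension"
            )


def count_by_dimension(records):
    """Count reported impacts per (category, dimension) pair."""
    return Counter((r.category.value, r.dimension) for r in records)


def share_with_evidence(records):
    """Fraction of reported impacts that come with supporting evidence."""
    records = list(records)
    if not records:
        return 0.0
    return sum(1 for r in records if r.evidence) / len(records)


# Invented example entries, not data from any of the three databases.
records = [
    ImpactRecord("study A", Category.ATTRIBUTIONAL, "policy",
                 positive=True, foreseen=True, intended=True,
                 retrospective=True, evidence="cited in a regional plan"),
    ImpactRecord("study A", Category.ATTRIBUTIONAL, "awareness",
                 positive=True, foreseen=False, intended=False,
                 retrospective=True),
    ImpactRecord("study B", Category.CONSEQUENTIAL, "biodiversity",
                 positive=True, foreseen=True, intended=True,
                 retrospective=False),
]

print(count_by_dimension(records))
print(share_with_evidence(records))
```

Keeping evidence as a separate field mirrors the distinction drawn in this paper between self-reported impact and impact reported with evidence; a compendium could then summarise both without conflating them.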

Lesson-learned 3: Across the three databases considered here (IPBES, Lautenbach review and OPERAs Exemplars), a few key study characteristics were found to be more frequently associated with attributional impacts: study robustness, integration of policy instruments into study design, stakeholder involvement and type of stakeholders involved. We see these as the minimum baseline for impact. Meanwhile, the 5-year investigation of the OPERAs Exemplars enabled a more in-depth examination of associations between study characteristics and specific dimensions of attributional impact. While many characteristics were associated with impacts on both awareness and practice, the characteristics linked with impact on policy tended to be distinct. Recommendations 3: Designing studies for robustness and reliability is fundamental to all dimensions of attributional impact: study robustness was consistently associated with impact on awareness, policy and practice. Meaningful engagement with stakeholders (see Durham et al. 2014 for guidelines) and careful selection of stakeholder types were also consistently associated with attributional impact. To effect impact along specific dimensions, however, studies must have a bespoke design. Studies conducted at a smaller scale (e.g. local) and engaging with many stakeholders were shown to be more effective at generating impact on practice than global modelling studies testing policy options.

In this paper, we have demonstrated that there is currently no clear consensus on a definition of ES science impacts. We have also shown that limited information is collated on attributional impacts (let alone consequential impacts). Through robust statistical analyses of three extensive databases of ES studies, we show that certain study characteristics (including study robustness and stakeholder engagement) are key to achieving attributional impact.

To break the ecosystem services impact glass ceiling, however, a concerted, global and systematic effort to define, assess and collate evidence of both attributional and consequential impacts is urgently needed.

References

3ie (2012) 3ie Impact evaluation glossary. International Initiative for Impact Evaluation, New Delhi, India http://bit.ly/1Urz3zU. Accessed 15 February 2018

Anderies JM, Janssen MA, Ostrom E (2004) A framework to analyze the robustness of social-ecological systems from an institutional perspective. Ecol Soc 9:18. https://doi.org/10.5751/ES-00610-090118

Balvanera P, Daw TM, Gardner TA, Martin-Lopez B, Norstrom AV, Speranza CI, Spierenburg M, Bennett EM, Farfan M, Hamann M, Kittinger JN, Luthe T, Maass M, Peterson GD, Perez-Verdin G (2017) Key features for more successful place-based sustainability research on social-ecological systems: a Programme on Ecosystem Change and Society (PECS) perspective. Ecol Soc 22. https://doi.org/10.5751/Es-08826-220114

Berkes F, Colding J, Folke C (2003) Navigating social-ecological systems: building resilience for complexity and change. Cambridge University Press, Cambridge http://assets.cambridge.org/052181/5924/sample/0521815924ws.pdf

Bouwma I, Schleyer C, Primmer E, Winkler KJ, Berry P, Young J, Carmen E, Špulerová J, Bezák P, Preda E, Vadineanu A (2017) Adoption of the ecosystem services concept in EU policies. Ecosystem Services 29:213–222. https://doi.org/10.1016/j.ecoser.2017.02.014

Cameron DB, Mishra A, Brown AN (2015) The growth of impact evaluation for international development: how much have we learned? J Dev Eff 8:1–21. https://doi.org/10.1080/19439342.2015.1034156

Convention on Biological Diversity (2010). Cop 10 Decision X/2: Strategic plan for biodiversity 2011–2020. www.cbd.int/decision/cop/?id=12268. Accessed 17 December 2017

Cowling RM, Egoh B, Knight AT, O'Farrell PJ, Reyers B, Rouget M, Roux DJ, Welz A, Wilhelm-Rechman A (2008) An operational model for mainstreaming ecosystem services for implementation. Proc Natl Acad Sci 105:9483–9488. https://doi.org/10.1073/pnas.0706559105

Daily GC, Matson PA (2008) Ecosystem services: from theory to implementation. Proc Natl Acad Sci 105:9455–9456. https://doi.org/10.1073/pnas.0804960105

Daily GC, Polasky S, Goldstein J, Kareiva PM, Mooney HA, Pejchar L, Ricketts TH, Salzman J, Shallenberger R (2009) Ecosystem services in decision making: time to deliver. Front Ecol Environ 7:21–28. https://doi.org/10.1890/080025

de Groot RS, Alkemade R, Braat L, Hein L, Willemen L (2010) Challenges in integrating the concept of ecosystem services and values in landscape planning, management and decision making. Ecol Complex 7(3):260–272. https://doi.org/10.1016/j.ecocom.2009.10.006

van Doren D, Driessen PPJ, Schijf B, Runhaar HAC (2013) Evaluating the substantive effectiveness of SEA: towards a better understanding. Environ Impact Assess Rev 38:120–130. https://doi.org/10.1016/j.eiar.2012.07.002

Durham E, Baker H, Smith M, Moore E, Morgan V (2014). The Biodiversa Stakeholder Engagement handbook. Paris. http://www.biodiversa.org/702

Ehrlich PR, Ehrlich AH (1981) Extinction: the causes and consequences of the disappearance of species, 1st edn. Random House, New York

Ervin J (2003) Rapid assessment of protected area management effectiveness in four countries. Bioscience 53:833–841. https://doi.org/10.1641/0006-3568(2003)053[0833:Raopam]2.0.Co;2

European Commission (2011). Our life insurance, our natural capital: an EU biodiversity strategy to 2020. Commission Communication No. COM(2011) 244. European Commission. Http://Ec.Europa.Eu/Environment/Nature/Biodiversity/Comm2006/Pdf/Ep_Resolution_April2012.Pdf. Accessed 27 July 2017

Folke C (2006) Resilience: the emergence of a perspective for social-ecological systems analyses. Global Environ Change-Human and Policy Dimen 16:253–267. https://doi.org/10.1016/j.gloenvcha.2006.04.002

Folke C, Carpenter S, Elmqvist T, Gunderson L, Holling CS, Walker B (2002) Resilience and sustainable development: building adaptive capacity in a world of transformations. Ambio 31:437–440. https://doi.org/10.1579/0044-7447-31.5.437

Fox J (2016). Polycor: polychoric and polyserial correlations, R Package Version 0.7–9. https://CRAN.R-project.org/package=polycor. Accessed 20 June 2017

Global Environmental Facility (2010). Climate change and the GEF: findings and recommendations from the fourth overall performance study of the GEF. OPS4 Learning Products #2:11. http://www.gefieo.org/documents/ops4-l02-climate-change-and-gef

de Graaf KJ, Platjouw FM, Tolsma HD (2017) The future Dutch environment and planning act in light of the ecosystem approach. Ecosystem Services 29:306–315. https://doi.org/10.1016/j.ecoser.2016.12.018

Greenhalgh T, Fahy N (2015) Research impact in the community-based health sciences: an analysis of 162 case studies from the 2014 UK research excellence framework. BMC Med 13:232. https://doi.org/10.1186/s12916-015-0467-4

Gu Z, Gu L, Eils R, Schlesner M, Brors B (2014) Circlize implements and enhances circular visualization in R. Bioinformatics 30:2811–2812. https://doi.org/10.1093/bioinformatics/btu393

Haines-Young R, Potschin M (2013). CICES V4.3-Report Prepared Following Consultation 440 on CICES Version 4. https://cices.eu/resources/

Hansen R, Frantzeskaki N, McPhearson T, Rall E, Kabisch N, Kaczorowska A, Kain JH, Artmann M, Pauleit S (2015) The uptake of the ecosystem services concept in planning discourses of European and American cities. Ecosystem Services 12:228–246. https://doi.org/10.1016/j.ecoser.2014.11.013

Hearn S, Buffardi AL (2016). What is impact? A Methods Lab Publication. London. https://www.odi.org/sites/odi.org.uk/files/odi-assets/publications-opinion-files/10302.pdf

Hein L, Bagstad K, Edens B, Obst C, de Jong R, Lesschen JP (2016) Defining ecosystem assets for natural capital accounting. PLoS One 11(11):e0164460. https://doi.org/10.1371/journal.pone.0164460

Hockings M (2003) Systems for assessing the effectiveness of management in protected areas. Bioscience 53:823–832. https://doi.org/10.1641/0006-3568(2003)053[0823:Sfateo]2.0.Co;2

Independent Commission for Aid Impact (2014). Dfid’s approach to delivering impact. http://bit.ly/1noYc3e Accessed 15 February 2018

IPBES (2015). Intergovernmental science-policy platform on biodiversity and ecosystem services. Guide on Production and Integration of Assessments from and across All Scales. 2015. Deliverable No. 2(a). www.ipbes.net/work-programme/guide-production-assessments. Accessed 27 July 2017

Jax K, Barton DN, Chan KMA, de Groot R, Doyle U, Eser U, Gorg C, Gomez-Baggethun E, Griewald Y, Haber W, Haines-Young R, Heink U, Jahn T, Joosten H, Kerschbaumer L, Korn H, Luck GW, Matzdorf B, Muraca B, Nesshover C, Norton B, Ott K, Potschin M, Rauschmayer F, von Haaren C, Wichmann S (2013) Ecosystem services and ethics. Ecol Econ 93:260–268. https://doi.org/10.1016/j.ecolecon.2013.06.008

Kendall MG (1938) A new measure of rank correlation. Biometrika 30:81–93. https://doi.org/10.1093/biomet/30.1-2.81

Kumar P (ed) (2010) The economics of ecosystems and biodiversity: ecological and economic foundations, vol 5. Earthscan, London

Lautenbach S, Mupepele A-C, Dormann CF, Lee H, Schmidt S, Scholte SSK, Seppelt R, van Teeffelen AJA, Verhagen W, Volk M (2019). Blind spots in ecosystem services research and implementation. Reg Environ Chang. https://doi.org/10.1007/s10113-018-1457-9

Luck GW, Chan KMA, Eser U, Gomez-Baggethun E, Matzdorf B, Norton B, Potschin MB (2012) Ethical considerations in on-ground applications of the ecosystem services concept. Bioscience 62:1020–1029. https://doi.org/10.1525/bio.2012.62.12.4

MA (2005a). Framework reports: introduction and conceptual framework. Millennium Ecosystem Assessment. http://www.maweb.org/en/index.aspx. Accessed 15 February 2018

MA (2005b) Millennium ecosystem assessment: ecosystems and human well-being: synthesis. Millennium Ecosystem Assessment, Washington, DC

Maes J, Liquete C, Teller A, Erhard M, Paracchini ML, Barredo JI, Grizzetti B, Cardoso A, Somma F, Petersen JE, Meiner A, Gelabert ER, Zal N, Kristensen P, Bastrup-Birk A, Biala K, Piroddi C, Egoh B, Degeorges P, Fiorina C, Santos-Martin F, Narusevicius V, Verboven J, Pereira HM, Bengtsson J, Gocheva K, Marta-Pedroso C, Snall T, Estreguil C, San-Miguel-Ayanz J, Perez-Soba M, Gret-Regamey A, Lillebo AI, Malak DA, Conde S, Moen J, Czucz B, Drakou EG, Zulian G, Lavalle C (2016) An indicator framework for assessing ecosystem services in support of the EU biodiversity strategy to 2020. Ecosystem Services 17:14–23. https://doi.org/10.1016/j.ecoser.2015.10.023

Mauerhofer V (2017). The law, ecosystem services and ecosystem functions: an in-depth overview of coverage and interrelation. Ecosystem Services:1–9. https://doi.org/10.1016/j.ecoser.2017.05.011

Mupepele AC, Walsh JC, Sutherland WJ, Dormann CF (2016) An evidence assessment tool for ecosystem services and conservation studies. Ecol Appl 26:1295–1301. https://doi.org/10.1890/15-0595

ten Brink P (ed) (2011). The Economics of Ecosystems and Biodiversity (TEEB) in national and international policy making. London

Nahlik AM, Kentula ME, Fennessy MS, Landers DH (2012) Where is the consensus? A proposed foundation for moving ecosystem service concepts into practice. Ecol Econ 77:27–35. https://doi.org/10.1016/j.ecolecon.2012.01.001

OECD (2010). Glossary of key terms in evaluation and results based management. http://bit.ly/1KG9WUk Accessed 15 January 2018

OECD (2012). System of environmental economic accounting. UN https://doi.org/10.1787/9789210562850-en

Olsson U, Drasgow F, Dorans NJ (1982) The polyserial correlation coefficient. Psychometrika 47:337–347. https://doi.org/10.1007/Bf02294164

OPERAs (2017). Ecosystem science for policy & practice. http://www.operas-project.eu/. Accessed 15 November 2017

Papastergiou M (2009) Digital game-based learning in high school computer science education: impact on educational effectiveness and student motivation. Comput Educ 52:1–12. https://doi.org/10.1016/j.compedu.2008.06.004

Polasky S, Caldarone G, Duarte TK, Goldstein J, Hannahs N, Ricketts T, Tallis H (2011). Putting ecosystem service models to work: conservation, management, and trade-offs. Nat Cap: Theory & Practice of Mapping Ecosystem Services https://doi.org/10.1093/acprof:oso/9780199588992.003.0014

Posner SM, McKenzie E, Ricketts TH (2016) Policy impacts of ecosystem services knowledge. Proc Natl Acad Sci U S A 113:1760–1765. https://doi.org/10.1073/pnas.1502452113

R Core Team (2017) R: a language and environment for statistical computing. R Foundation for Statistical Computing, Vienna https://www.R-project.org/. Accessed 15 February 2018

Schmidt S, Seppelt R (2018) Information content of global ecosystem service databases and their suitability for decision advice. Ecosystem Services 32:22–40. https://doi.org/10.1016/j.ecoser.2018.05.007

Seppelt R, Fath B, Burkhard B, Fisher JL, Grêt-Regamey A, Lautenbach S, Pert P, Hotes S, Spangenberg J, Verburg PH, Van Oudenhoven APE (2012) Form follows function? Proposing a blueprint for ecosystem service assessments based on reviews and case studies. Ecol Indic 21:145–154. https://doi.org/10.1016/j.ecolind.2011.09.003

Shanley P, Lopez C (2009) Out of the loop: why research rarely reaches policy makers and the public and what can be done. Biotropica 41:535–544. https://doi.org/10.1111/j.1744-7429.2009.00561.x

Stępniewska M, Zwierzchowska I, Mizgajski A (2017) Capability of the Polish legal system to introduce the ecosystem services approach into environmental management. Ecosystem Services 29:271–281. https://doi.org/10.1016/j.ecoser.2017.02.025

Wallace K (2008) Ecosystem services: Multiple classifications or confusion? Biological Conservation 141(2):353–354. https://doi.org/10.1016/j.biocon.2007.12.014

WAVES (2017). World Bank wealth accounting and the valuation of ecosystem services. https://www.wavespartnership.org/en. Accessed 10 January 2018

Westman WE (1977) How much are Nature’s services worth? Science 197:960–964. https://doi.org/10.1126/science.197.4307.960

White H (2009). Some reflections on current debates in impact evaluation (Working Paper 1). International Initiative for Impact Evaluation. http://www.3ieimpact.org/media/filer_public/2012/05/07/Working_Paper_1.pdf

WHO (2017). The results chain. http://www.who.int/about/resources_planning/WHO_GPW12_results_chain.pdf. Accessed 20 January 2018

Acknowledgements

We are grateful to the Exemplar teams for contributing tirelessly to this work, to Stefan Schmidt and Anne-Cristine Mupepele for their early comments on the blueprint protocol, and to the reviewers for their collaborative and insightful feedback on an earlier version of this manuscript.

Funding

This work was supported by the EU’s Seventh Framework Programme for Research (FP7) as part of the project OPERAs, Grant Agreement No. 308393.

Additional information

Publisher’s Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Patenaude, G., Lautenbach, S., Paterson, J.S. et al. Breaking the ecosystem services glass ceiling: realising impact. Reg Environ Change 19, 2261–2274 (2019). https://doi.org/10.1007/s10113-018-1434-3

DOI: https://doi.org/10.1007/s10113-018-1434-3