Abstract

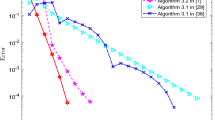

Minimax problems have recently gained tremendous attention across the optimization and machine learning communities. In this paper, we introduce a new quasi-Newton method for minimax problems, which we call the J-symmetric quasi-Newton method. The method is obtained by exploiting the J-symmetric structure of the second-order derivative of the objective function in minimax problems. We show that the Hessian estimate (as well as its inverse) can be updated by a rank-2 operation, and it turns out that the update rule is a natural generalization of the classic Powell symmetric Broyden method from minimization problems to minimax problems. In theory, we show that our proposed quasi-Newton algorithm enjoys local Q-superlinear convergence to a desirable solution under standard regularity conditions. Furthermore, we introduce a trust-region variant of the algorithm that enjoys global R-superlinear convergence. Finally, we present numerical experiments that verify our theory and show the effectiveness of our proposed algorithms compared to Broyden’s method and the extragradient method on three classes of minimax problems.
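
To make the J-symmetric structure concrete, the following minimal sketch checks it on a small quadratic objective. The conventions used here are assumptions for illustration rather than definitions taken from the text above: the objective is \(L(x,y)=\tfrac{1}{2}x^TAx+x^TCy-\tfrac{1}{2}y^TBy\), the operator is \(F(z)=[\nabla _xL;\,-\nabla _yL]\), and \(J=\textrm{diag}(I_n,-I_m)\). The matrices `A`, `B`, `C` are hypothetical data.

```python
# Illustrative check (assumed conventions, hypothetical data): the Jacobian M of
# F(z) = [grad_x L; -grad_y L] is generally not symmetric, but J M is symmetric
# when J = diag(I_n, -I_m).

def matmul(P, Q):
    """Dense matrix product for lists of lists."""
    return [[sum(P[i][k] * Q[k][j] for k in range(len(Q)))
             for j in range(len(Q[0]))] for i in range(len(P))]

def transpose(P):
    return [list(row) for row in zip(*P)]

n, m = 2, 1
A = [[2.0, 0.5], [0.5, 1.0]]   # symmetric n x n block (hypothetical)
B = [[3.0]]                    # symmetric m x m block (hypothetical)
C = [[1.0], [-2.0]]            # n x m coupling block (hypothetical)

# For the quadratic L above, F(z) = [A x + C y; -C^T x + B y],
# so the Jacobian is M = [[A, C], [-C^T, B]].
Ct = transpose(C)
jac = [A[i] + C[i] for i in range(n)] + \
      [[-Ct[i][j] for j in range(n)] + B[i] for i in range(m)]

# J = diag(I_n, -I_m)
J = [[(1.0 if i < n else -1.0) if i == j else 0.0
      for j in range(n + m)] for i in range(n + m)]

JM = matmul(J, jac)
is_symmetric = (jac == transpose(jac))
is_j_symmetric = (JM == transpose(JM))
print(is_symmetric, is_j_symmetric)
```

Under these assumptions, a symmetric update such as Powell symmetric Broyden would not preserve the structure of `jac`, whereas a structure-aware update can work with the symmetric matrix `JM`.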

Notes

See Definition A.6 in the appendix for a formal definition of uniform linear independence.

References

Abdi, F., Shakeri, F.: A globally convergent BFGS method for pseudo-monotone variational inequality problems. Optim. Methods Softw. 34(1), 25–36 (2019)

Applegate, D., Díaz, M., Hinder, O., Lu, H., Lubin, M., O’Donoghue, B., Schudy, W.: Practical large-scale linear programming using primal-dual hybrid gradient. arXiv preprint arXiv:2106.04756 (2021)

Arjovsky, M., Chintala, S., Bottou, L.: Wasserstein generative adversarial networks. In: PMLR, International Conference on Machine Learning, pp. 214–223 (2017)

Asl, A., Overton, M.L.: Analysis of the gradient method with an Armijo-Wolfe line search on a class of non-smooth convex functions. Optim. Methods Softw. 35(2), 223–242 (2020)

Atkinson, D.S., Vaidya, P.M.: A cutting plane algorithm for convex programming that uses analytic centers. Math. Program. 69(1), 1–43 (1995)

Benzi, M., Golub, G.H.: A preconditioner for generalized saddle point problems. SIAM J. Matrix Anal. Appl. 26(1), 20–41 (2004)

Benzi, M., Golub, G.H., Liesen, J.: Numerical solution of saddle point problems. Acta Numer. 14, 1–137 (2005)

Berthold, T., Perregaard, M., Mészáros, C.: Four good reasons to use an interior point solver within a MIP solver, Operations Research Proceedings. Springer 2018, 159–164 (2017)

Bertsekas, D.P.: Constrained optimization and Lagrange multiplier methods. Computer Science and Applied Mathematics (1982)

Boyd, S., Vandenberghe, L.: Convex Optimization. Cambridge University Press, Cambridge (2004)

Broyden, C.G.: A class of methods for solving nonlinear simultaneous equations. Math. Comput. 19(92), 577–593 (1965)

Broyden, C.G.: The convergence of single-rank quasi-Newton methods. Math. Comput. 24, 365–382 (1970)

Broyden, C.G., Dennis, J.E., Moré, J.J.: On the local and superlinear convergence of quasi-Newton methods. IMA J. Appl. Math. 12(3), 223–245 (1973)

Burke, J.V., Qian, M.: On the superlinear convergence of the variable metric proximal point algorithm using Broyden and BFGS matrix secant updating. Math. Program. 88, 157–181 (1997)

Burke, J.V., Qian, M.: A variable metric proximal point algorithm for monotone operators. SIAM J. Control. Optim. 37(2), 353–375 (1999)

Byrd, R.H., Nocedal, J., Yuan, Y.X.: Global convergence of a class of quasi-Newton methods on convex problems. SIAM J. Numer. Anal. 24(5), 1171–1190 (1987)

Chambolle, A., Pock, T.: A first-order primal-dual algorithm for convex problems with applications to imaging. J. Math. Imaging Vis. 40(1), 120–145 (2011)

Chen, X., Fukushima, M.: Proximal quasi-Newton methods for nondifferentiable convex optimization. Math. Program. Publ. Math. Program. Soc. Ser. A 85(2), 313–334 (1999)

Dai, B., Shaw, A., Li, L., Xiao, L., He, N., Liu, Z., Chen, J., Song, L.: SBEED: Convergent reinforcement learning with nonlinear function approximation. In: Dy, J., Krause, A. (eds.) Proceedings of the 35th International Conference on Machine Learning. Proceedings of Machine Learning Research, vol. 80, pp. 1125–1134. PMLR (2018)

Daskalakis, C., Ilyas, A., Syrgkanis, V., Zeng, H.: Training GANs with optimism. In: International Conference on Learning Representations, (2018)

Davidon, W.C.: Variable metric method for minimization. SIAM J. Optim. 1(1), 1–17 (1991)

Dennis, J.E., Moré, J.J.: A characterization of superlinear convergence and its application to quasi-Newton methods. Math. Comput. 28(126), 549–560 (1974)

Dennis, J.E., Moré, J.J.: Quasi-Newton methods, motivation and theory. SIAM Rev. 19(1), 46–89 (1977)

Du, S.S., Hu, W.: Linear convergence of the primal-dual gradient method for convex-concave saddle point problems without strong convexity. In: The 22nd International Conference on Artificial Intelligence and Statistics, PMLR, pp. 196–205 (2019)

Essid, M., Tabak, E., Trigila, G.: An implicit gradient-descent procedure for minimax problems (2019)

Fletcher, R., Powell, M.J.D.: A rapidly convergent descent method for minimization. Comput. J. 6, 163–168 (1963)

Fletcher, R.: A new approach to variable metric algorithms. Comput. J. 13(3), 317–322 (1970)

Fletcher, R., Powell, M.J.D.: A rapidly convergent descent method for minimization. Comput. J. 6(2), 163–168 (1963)

Gidel, G., Berard, H., Vignoud, G., Vincent, P., Lacoste-Julien, S.: A variational inequality perspective on generative adversarial networks. arXiv preprint arXiv:1802.10551 (2018)

Gidel, G., Berard, H., Vignoud, G., Vincent, P., Lacoste-Julien, S.: A variational inequality perspective on generative adversarial networks (2020)

Gidel, G., Hemmat, R.A., Pezeshki, M., Le Priol, R., Huang, G., Lacoste-Julien, S., Mitliagkas, I.: Negative momentum for improved game dynamics. In: The 22nd International Conference on Artificial Intelligence and Statistics, PMLR, pp. 1802–1811 (2019)

Goffin, J.L., Sharifi-Mokhtarian, F.: Primal-dual-infeasible Newton approach for the analytic center deep-cutting plane method. J. Optim. Theory Appl. 101(1), 35–58 (1999)

Goffin, J.-L., Vial, J.-P.: On the computation of weighted analytic centers and dual ellipsoids with the projective algorithm. Math. Program. 60(1), 81–92 (1993)

Goldfarb, D.: A family of variable-metric methods derived by variational means. Math. Comput. 24(109), 23–26 (1970)

Goodfellow, I., Pouget-Abadie, J., Mirza, M., Xu, B., Warde-Farley, D., Ozair, S., Bengio, Y.: Generative adversarial networks (2014)

Grimmer, B., Lu, H., Worah, P., Mirrokni, V.: The landscape of the proximal point method for nonconvex-nonconcave minimax optimization. arXiv preprint arXiv:2006.08667 (2020)

Horn, R.A., Johnson, C.R.: Matrix Analysis. Cambridge University Press, Cambridge (2012)

Karras, T., Aila, T., Laine, S., Lehtinen, J.: Progressive growing of GANs for improved quality, stability, and variation (2018)

Korpelevich, G.M.: The extragradient method for finding saddle points and other problems. Matecon 12 (1976)

Ledig, C., Theis, L., Huszar, F., Caballero, J., Cunningham, A., Acosta, A., Shi, W.: Photo-realistic single image super-resolution using a generative adversarial network. CoRR abs/1609.04802 (2016)

Liang, T., Stokes, J.: Interaction matters: A note on non-asymptotic local convergence of generative adversarial networks. In: The 22nd International Conference on Artificial Intelligence and Statistics, PMLR, pp. 907–915 (2019)

Lu, H.: An \(O(s^r)\)-resolution ODE framework for understanding discrete-time algorithms and applications to the linear convergence of minimax problems (2021)

Lu, H.: An \(O(s^r)\)-resolution ODE framework for understanding discrete-time algorithms and applications to the linear convergence of minimax problems. Math. Program. 194(1), 1061–1112 (2022)

Mackey, D.S., Mackey, N., Tisseur, F.: Structured tools for structured matrices. Electron. J. Linear Algebra 10, 106–145 (2003)

Madry, A., Makelov, A., Schmidt, L., Tsipras, D., Vladu, A.: Towards deep learning models resistant to adversarial attacks. In: International Conference on Learning Representations (2018)

Mescheder, L., Nowozin, S., Geiger, A.: The numerics of GANs. Adv. Neural Inf. Process. Syst. 30 (2017)

Mokhtari, A., Ozdaglar, A., Pattathil, S.: A unified analysis of extra-gradient and optimistic gradient methods for saddle point problems: Proximal point approach. In: International Conference on Artificial Intelligence and Statistics (2020)

Moré, J.J., Trangenstein, J.A.: On the global convergence of Broyden’s method. Math. Comput. 30(135), 523–540 (1976)

Nemirovski, A.: Prox-method with rate of convergence O(1/t) for variational inequalities with Lipschitz continuous monotone operators and smooth convex-concave saddle point problems. SIAM J. Optim. 15(1), 229–251 (2004)

Nesterov, Yu.: Complexity estimates of some cutting plane methods based on the analytic barrier. Math. Program. 69(1), 149–176 (1995)

Nesterov, Y., Nemirovskii, A.: Interior-point polynomial algorithms in convex programming. SIAM, Philadelphia (1994)

Nicholas J. Higham on the top 10 algorithms in applied mathematics, https://press.princeton.edu/ideas/nicholas-higham-on-the-top-10-algorithms-in-applied-mathematics. Accessed 01 Oct 2022

Nocedal, J., Wright, S.J.: Numerical Optimization, 2nd edn. Springer, New York (2006)

Ortega, J. M., Rheinboldt, W. C.: Iterative solution of nonlinear equations in several variables, Classics in Applied Mathematics, Society for Industrial and Applied Mathematics (1970)

Osborne, M.J., Rubinstein, A.: A course in game theory. MIT Press, Cambridge (1994)

Pearlmutter, B.A.: Fast exact multiplication by the Hessian. Neural Comput. 6(1), 147–160 (1994)

Powell, M.J.D.: A hybrid method for nonlinear equations, Numerical methods for nonlinear algebraic equations. In: Proceedings of the Conference on University, Essex, Colchester, 1969, pp. 87–114 (1970)

Powell, M.J.D.: A new algorithm for unconstrained optimization. In: Nonlinear Programming Proceedings of the Symposium, University of Wisconsin, Madison, WI. Academic Press. New York 1970, 31–65 (1970)

Powell, M.J.D.: Some global convergence properties of a variable metric algorithm for minimization without exact line searches. In: Nonlinear Programming (Providence), Am. Math. Soc., SIAM-AMS Proc., Vol. IX, pp. 53–72 (1976)

Rockafellar, R.T.: Monotone operators and the proximal point algorithm. SIAM J. Control. Optim. 14(5), 877–898 (1976)

Schraudolph, N.N.: Fast curvature matrix-vector products for second-order gradient descent. Neural Comput. 14(7), 1723–1738 (2002)

Shanno, D.F.: Conditioning of quasi-Newton methods for function minimization. Math. Comput. 24(111), 647–656 (1970)

Sidi, A.: A zero-cost preconditioning for a class of indefinite linear systems. WSEAS Trans. Math. 2 (2003)

Ye, Y.: Complexity analysis of the analytic center cutting plane method that uses multiple cuts. Math. Program. 78(1), 85–104 (1996)

Zhu, J.-Y., Park, T., Isola, P., Efros, A.A.: Unpaired image-to-image translation using cycle-consistent adversarial networks. IEEE Int. Conf. Comput. Vis. (ICCV) 2017, 2242–2251 (2017)

Existing definitions and results used in the proofs

Lemma A.1

(Sherman–Woodbury Formula) ([37, page 19]) Suppose \(A\in {\mathbb {R}}^{n\times n}\) is an invertible matrix and \(u,v\in {\mathbb {R}}^{n}\) are vectors. Then \(A+uv^T\) is invertible if and only if \(1+v^TA^{-1}u\ne 0\). In this case,

$$\begin{aligned} (A+uv^T)^{-1} = A^{-1} - \frac{A^{-1}uv^TA^{-1}}{1+v^TA^{-1}u}. \end{aligned}$$
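
As a sanity check on Lemma A.1, the sketch below applies the standard rank-one inverse update \((A+uv^T)^{-1}=A^{-1}-A^{-1}uv^TA^{-1}/(1+v^TA^{-1}u)\) to a small example and verifies the result by multiplication. The function name `rank_one_inverse_update`, the singularity tolerance, and the test data are illustrative choices, not part of the paper.

```python
# Minimal sketch of a rank-one inverse update (names and data are hypothetical).

def mat_vec(M, x):
    """Compute M x."""
    return [sum(mij * xj for mij, xj in zip(row, x)) for row in M]

def vec_mat(x, M):
    """Compute x^T M as a flat list."""
    return [sum(x[i] * M[i][j] for i in range(len(x))) for j in range(len(M[0]))]

def rank_one_inverse_update(A_inv, u, v):
    """Return (A + u v^T)^{-1} given A^{-1}; raise if 1 + v^T A^{-1} u is ~0."""
    Ainv_u = mat_vec(A_inv, u)
    vT_Ainv = vec_mat(v, A_inv)
    denom = 1.0 + sum(vi * wi for vi, wi in zip(v, Ainv_u))
    if abs(denom) < 1e-12:  # arbitrary tolerance chosen for this sketch
        raise ValueError("A + u v^T is (numerically) singular")
    n = len(u)
    return [[A_inv[i][j] - Ainv_u[i] * vT_Ainv[j] / denom for j in range(n)]
            for i in range(n)]

A_inv = [[0.5, 0.0], [0.0, 0.25]]      # inverse of A = diag(2, 4)
u, v = [1.0, 1.0], [1.0, 0.0]
new_inv = rank_one_inverse_update(A_inv, u, v)

# A + u v^T = [[3, 0], [1, 4]]; its product with new_inv should be the identity.
updated = [[3.0, 0.0], [1.0, 4.0]]
product = [[sum(updated[i][k] * new_inv[k][j] for k in range(2)) for j in range(2)]
           for i in range(2)]
print(product)
```

Applying the update twice gives the inverse of a rank-2 modification, which is how an inverse estimate can be maintained without refactorizing at each iteration.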

Lemma A.2

(Banach Perturbation Lemma) ([54, page 45]) Consider square matrices \(A,B \in {\mathbb {R}}^{d\times d}\). Suppose that A is invertible with \(\Vert A^{-1}\Vert \le a\). If \(\Vert A-B\Vert \le b\) and \(ab < 1\), then B is also invertible and

$$\begin{aligned} \Vert B^{-1}\Vert \le \frac{a}{1-ab}. \end{aligned}$$
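
The following sketch illustrates Lemma A.2 numerically on a \(2\times 2\) example, using the standard form of the bound, \(\Vert B^{-1}\Vert \le a/(1-ab)\). The choice of the induced infinity norm (maximum absolute row sum) and the matrices `A` and `B` are assumptions made only for this illustration.

```python
# Numerical illustration of the perturbation bound (hypothetical data,
# infinity norm chosen for convenience).

def inf_norm(M):
    """Induced infinity norm: maximum absolute row sum."""
    return max(sum(abs(x) for x in row) for row in M)

def inv2(M):
    """Inverse of a 2 x 2 matrix via the adjugate formula."""
    (p, q), (r, s) = M
    det = p * s - q * r
    return [[s / det, -q / det], [-r / det, p / det]]

A = [[2.0, 0.0], [0.0, 4.0]]
B = [[2.1, -0.2], [0.1, 3.9]]   # a small perturbation of A

a = inf_norm(inv2(A))
b = inf_norm([[A[i][j] - B[i][j] for j in range(2)] for i in range(2)])
bound = a / (1.0 - a * b)
actual = inf_norm(inv2(B))
print(a * b < 1.0, actual <= bound)
```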

Lemma A.3

([23, Eq. (1.2)]) Consider square matrices \(A,B \in {\mathbb {R}}^{d\times d}\). Then

Definition A.4

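(R-superlinear and Q-superlinear convergence rates [48]) We say the sequence \(\{z_k\}\) converges to \(z^*\) R-superlinearly if

$$\begin{aligned} \lim _{k\rightarrow \infty } \Vert z_k - z^*\Vert ^{1/k} = 0, \end{aligned}$$

and \(\{z_k\}\) converges to \(z^*\) Q-superlinearly if there exists a sequence \(\{q_k\}\) converging to zero such that

$$\begin{aligned} \Vert z_{k+1} - z^*\Vert \le q_k \Vert z_k - z^*\Vert . \end{aligned}$$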
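
To illustrate the two rates in Definition A.4, the sketch below compares a hypothetical error sequence \(e_k = 1/k!\) with a linearly convergent one, \(e_k = 2^{-k}\). For the first, both the successive ratios \(e_{k+1}/e_k\) and the roots \(e_k^{1/k}\) tend to zero; for the second, both stay at \(1/2\). The helper names `q_ratios` and `r_roots` are illustrative.

```python
import math

def q_ratios(errors):
    """Successive ratios e_{k+1} / e_k (the Q-rate diagnostic)."""
    return [errors[k + 1] / errors[k] for k in range(len(errors) - 1)]

def r_roots(errors):
    """Roots e_k ** (1/k) for k >= 1 (the R-rate diagnostic)."""
    return [errors[k] ** (1.0 / k) for k in range(1, len(errors))]

superlinear = [1.0 / math.factorial(k) for k in range(12)]   # hypothetical errors
linear = [0.5 ** k for k in range(12)]                       # hypothetical errors

print(q_ratios(superlinear)[-1], r_roots(superlinear)[-1])
print(q_ratios(linear)[-1], r_roots(linear)[-1])
```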

Theorem A.5

(Dennis–Moré Q-superlinear Characterization Identity) ([22, Theorem 2.2]) Let the mapping F be differentiable in the open convex set \(\mathbb {D}\) and assume that for some \(z^* \in \mathbb {D}\), \(\nabla F\) is continuous at \(z^*\) and \(\nabla F(z^*)\) is invertible. Let \(\{B_k \}\) be a sequence of invertible matrices and suppose \(\{z_k\}\), with \(z_{k+1} = z_k -B_k^{-1}F(z_k)\), remains in \(\mathbb {D}\) and converges to \(z^*\). Then \(\{z_k\}\) converges Q-superlinearly to \(z^*\) and \(F(z^*) = 0\) if and only if

$$\begin{aligned} \lim _{k\rightarrow \infty } \frac{\Vert (B_k - \nabla F(z^*))(z_{k+1}-z_k)\Vert }{\Vert z_{k+1}-z_k\Vert } = 0. \end{aligned}$$
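
A scalar example may help make the Dennis–Moré condition tangible. The sketch below runs the secant iteration, which has the form \(z_{k+1}=z_k-B_k^{-1}F(z_k)\) with \(B_k\) a difference quotient, on the hypothetical test function \(F(z)=z^2-2\), and records the quantity \(\Vert (B_k-\nabla F(z^*))(z_{k+1}-z_k)\Vert /\Vert z_{k+1}-z_k\Vert \). The test function, starting points, and iteration count are assumptions chosen for illustration.

```python
import math

def F(z):
    """Hypothetical scalar test function with root sqrt(2)."""
    return z * z - 2.0

z_star = math.sqrt(2.0)
dF_star = 2.0 * z_star            # derivative of F at the root

z_prev, z = 1.0, 2.0              # arbitrary starting points
dm_ratios = []
for _ in range(6):
    B = (F(z) - F(z_prev)) / (z - z_prev)   # scalar secant approximation B_k
    z_next = z - F(z) / B                   # z_{k+1} = z_k - B_k^{-1} F(z_k)
    step = z_next - z
    # Dennis-More quantity; in one dimension it reduces to |B_k - F'(z*)|.
    dm_ratios.append(abs((B - dF_star) * step) / abs(step))
    z_prev, z = z, z_next

print(dm_ratios)
print(abs(z - z_star))
```

The recorded ratios shrink toward zero as the iterates approach the root, consistent with Q-superlinear convergence of the secant iteration on this example.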

Definition A.6

(Uniform linear independence) ([48, Definition 5.1]) A sequence of unit vectors \(\{u_j\}\) in \({\mathbb {R}}^{n+m}\) is uniformly linearly independent if there are \(\beta >0\), \(k_0\ge 0\) and \(t\ge n+m\) such that for \(k\ge k_0\) and \(\Vert x\Vert =1\), we have:

$$\begin{aligned} \max \left\{ |x^Tu_j| : j=k+1,\ldots ,k+t \right\} \ge \beta . \end{aligned}$$

Theorem A.7

([48, Theorem 5.3]) Let \(\{ u_k\}\) be a sequence of unit vectors in \({\mathbb {R}}^{n+m}\). Then the following statements are equivalent.

- The sequence \(\{ u_k\}\) is uniformly linearly independent.

- For any \({\hat{\beta }} \in [0,1) \) there is a constant \(\theta \in (0,1)\) such that if \(|\beta _j-1| \le {\hat{\beta }}\) then:

$$\begin{aligned} \left\| \prod _{j=k+1}^{k+t} \big ( I -\beta _j u_ju_j^T \big )\right\| \le \theta , ~~\textrm{for}~~k\ge k_0 \mathrm {~~and~~} t\ge n+m. \end{aligned}$$

Lemma A.8

([48, Lemma 5.5]) Let \(\{ \phi _k\}\) and \(\{ \delta _k\}\) be sequences of nonnegative numbers such that \(\phi _{k+t} \le \theta \phi _k + \delta _k\) for some fixed integer \(t \ge 1\) and \(\theta \in (0,1)\). If \(\{ \delta _k\}\) is bounded then \(\{ \phi _k\}\) is also bounded, and if, in addition, \(\{ \delta _k\}\) converges to zero, then \(\{ \phi _k\}\) converges to zero.
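
The behaviour described in Lemma A.8 can be observed with a short simulation. The sketch below builds the worst-case sequence that satisfies the recursion with equality, \(\phi _{k+t} = \theta \phi _k + \delta _k\), for the hypothetical choices \(\theta = 0.5\), \(t = 3\), and \(\delta _k = 1/(k+1)\); the helper name `worst_case_phi` and all numerical values are illustrative assumptions.

```python
def worst_case_phi(phi_init, deltas, theta):
    """Extend phi_init (the first t values) via phi[k+t] = theta*phi[k] + deltas[k]."""
    phi = list(phi_init)
    for k in range(len(deltas)):
        # At this point len(phi) == k + t, so the appended entry is phi[k + t].
        phi.append(theta * phi[k] + deltas[k])
    return phi

theta, t = 0.5, 3                                   # hypothetical parameters
deltas = [1.0 / (k + 1) for k in range(300)]        # bounded and converging to zero
phi = worst_case_phi([1.0] * t, deltas, theta)

print(max(phi), phi[-1])
```

The sequence stays bounded by \(\max \{\max _{j<t}\phi _j,\ \sup _k \delta _k/(1-\theta )\}\) and its tail decays along with \(\delta _k\), matching the two conclusions of the lemma on this example.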

About this article

Cite this article

Asl, A., Lu, H. & Yang, J. A J-symmetric quasi-newton method for minimax problems. Math. Program. 204, 207–254 (2024). https://doi.org/10.1007/s10107-023-01957-1

DOI: https://doi.org/10.1007/s10107-023-01957-1