Abstract

Lifelogging tools aim to precisely capture daily experiences of people from the first-person perspective. Although there have been numerous lifelogging tools developed for users to record the external environment around them, the internal part of experience characterized by emotions seems to be neglected in the lifelogging field. However, the internal experiences of people are important and, therefore, lifelogging tools should be able to capture not only the environmental data, but also emotional experiences, thereby providing a more complete archive of past events. Moreover, there are implicit emotions that cannot be consciously experienced, but still influence human behaviors and memories. It has been proven that conscious emotions can be recognized from physiological signals of the human body. This fact may be used to enhance life-logs with information about unconscious emotions, which otherwise would remain hidden. On the other hand, it is not clear if unconscious emotions can be recognized from physiological signals and differentiated from conscious emotions. Therefore, an experiment was designed to elicit emotions (both conscious and unconscious) with visual and auditory stimuli and to record cardiovascular responses of 34 participants. The experimental results showed that heart rate responses to the presentation of the stimuli are unique for every category of the emotional stimuli and allow differentiation between various emotional experiences of the participants.

1 Introduction

Keeping a diary is a very traditional way of lifelogging. Some people tend to write down in their diaries all the details of what they saw and did, while others like to note moods and emotions they had during a day. Presently, there are various kinds of lifelogging tools (e.g., [1–3]) that have been developed to assist people with recording their life experiences. However, these tools can only record the surrounding environment of people, which ultimately includes everything that they encounter, but not the internal world, which comprises moods, thoughts and emotions. Therefore, current lifelogging tools do not provide people with a possibility to keep records of their mental life, which is crucial for some people who keep diaries [4, 5].

To offer capabilities that are superior to diaries, lifelogging applications should try to capture the complete experiences of people including data from both their external and internal worlds. Since mental experiences of people are too broad and complex to start with, it is reasonable to focus on the individual components of one’s internal world. We propose to first consider emotions because they are undoubtedly one of the most important components of mental life. Moreover, emotions can be recognized from physiological signals of a human body [6–9]. This latter fact is important for the development of an automatic lifelogging tool. It should be noted that questions about emotions are fundamental in psychology and play an important role in understanding mind and behavior [10]. Recently, an interesting idea about this role was presented: emotion is a medium of communication between the unconscious and the conscious in the human mind [11]. According to this idea, emotion is seen as the conscious perception of the complex mapping processes from the unconscious space into the low-dimensional space of the conscious.

Recent studies have shown that it is possible to recognize emotion based on physiological signals. This research direction looks rather promising [12], and its primary focus is on conscious emotions, which people are aware of and can report. However, Berridge and Winkielman [13] argue that emotion can be unconscious as well. According to Kihlstrom [14, p. 432], “‘explicit emotion’ refers to the person’s conscious awareness of an emotion, feeling, or mood state; ‘implicit emotion’, by contrast, refers to changes in experience, thought or action that are attributable to one’s emotional state, independent of his or her conscious awareness of that state”. For this reason, we believe that lifelogging tools should take into account both conscious and unconscious emotions. Unconscious emotions might even be of higher interest and importance than conscious emotions for some users of lifelogging tools because they are hidden from their conscious awareness.

To the best of our knowledge, recognition of unconscious emotions has not been studied yet, and, thus, it is necessary to investigate if physiological signals can be used for this purpose. In our study, we focused on heart rate, which has been shown to be one of the physiological signals related to emotional states [15–18]. Importantly for the development of lifelogging tools, heart rate can be measured unobtrusively with wearable sensors.

In emotion recognition studies, various sets of stimuli are used to elicit emotions in participants. For this purpose, specialized databases of stimuli have been developed and validated [19, 20]. However, in the case of unconscious emotions, such sets of stimuli have not been clearly identified yet. Therefore, in our study, we had to introduce archetypal stimuli [21] as a new kind of stimuli intended to evoke unconscious emotions.

Another difference between our study and much of the previous work in this direction is that we targeted five different emotional states, while other studies tended to focus on fewer emotions [22].

Based on the above, an experiment was set up in a laboratory setting to elicit emotions (both conscious and unconscious) with visual and auditory stimuli and to measure the heart rate changes of participants in response to the presentation of the stimuli. Conscious emotions were included in the experiment for control purposes. This experiment should clarify (1) if different types of emotional stimuli evoke different cardiovascular responses and (2) if unconscious emotions can be recognized from heart rate.

1.1 Visual and auditory affective stimuli

To elicit emotional feelings under laboratory conditions, it is common to use visual and auditory stimuli. Based on the previous work in this field, there are publicly available databases with affective pictures and sounds that cover the most popular emotions and have been successfully tested [19, 20, 23]. Unfortunately, there are no databases that contain stimuli capable of eliciting unconscious emotional experiences. In view of the aforementioned, for the experiment, it is necessary to pick appropriate content from one of the available databases for regular emotion elicitation and to choose stimuli that are capable of evoking unconscious emotion.

1.1.1 Affective pictures and sounds

In the field of emotion research, the International Affective Picture System (IAPS) [19] and the International Affective Digital Sound System (IADS) [20] are widely used to investigate the correlation between the self-reported feelings of subjects and the stimuli presented to them. In our study, IAPS and IADS were selected as the sources of experimental material because these databases consistently cover the affective space, have relatively complete content including pictures and sound clips, and provide detailed instructions on their usage.

Now, we have to identify the remaining stimuli for unconscious emotion.

1.1.2 Archetypal pictures and sounds

Jung [24] postulated the concept of collective unconsciousness, arguing that in contrast to the personal psyche, the unconsciousness has some contents and modes of behavior that are identical in all individuals. This means that the collective unconsciousness constitutes a common psychic substrate of a universal nature, which is present in every human being. Jung further posited that the collective unconsciousness contains archetypes: ancient motifs and predispositions to patterns of behavior that manifest symbolically as archetypal images in dreams, art or other cultural forms [25]. In his personal confrontation with the unconsciousness, Jung tried to translate emotions into images, or rather to find the images that were concealed in the emotions [26]. According to the records of Jung’s patients, archetypal symbols are essential for the representation of one’s emotions at an unconscious level. Jung further argued that the mandala (Fig. 1b, c), a circular art form, is an archetypal symbol representing the self and wholeness [24, 26]. The fundamental and more generic form of the mandala consists of a circle with a dot at its center (Fig. 1a). This pattern can also be found in different cultural symbols, such as the Celtic cross, the aureole and rose windows.

Since Jung’s argument, mandala drawings have been applied in the art and psychotherapeutic fields as basic tools for self-awareness, self-expression, conflict resolution and healing [29–33]. Recent studies have suggested that the mandala could be a promising tool for non-verbal emotional communication [32, 34–37]. For patients with post-traumatic stress disorder (PTSD), therapists can assess the patients’ emotional status through the mandalas they draw, even when these patients are not willing or not able to discuss sensitive information regarding childhood abuse [36]. Furthermore, in another case concerning breast cancer patients, mandala drawings, as a non-invasive assessment tool, allowed the physician to extract valuable information that might otherwise have been blocked by conscious processes [34]. The above studies indicate the potential of the mandala as a tool for conveying unconscious emotions.

Based on the work of Jung, the Archive for Research in Archetypal Symbolism (ARAS) was established [21]. ARAS is a pictorial and written archive of mythological, ritualistic and symbolic pictures from all over the world and from all epochs of human history [38]. Therefore, we assumed that the archetypal content of ARAS might enable us to elicit unconscious emotion and included the archetypal symbols in our experiment.

Very little information is available about archetypal sounds. We found that ‘Om’ and Solfeggio frequencies are considered to be archetypal sounds [39, 40]. ‘Om’ or ‘Aum’ represents a sacred syllable in Indian religions [40]. ‘Om’ is the reflection of the absolute reality, without beginning or end and embracing all that exists [41]. Solfeggio frequencies are a set of six tones that were used long ago in Gregorian chants and Indian Sanskrit chants. These chants had special tones that were believed to impart spiritual blessings during religious ceremonies [39]. Solfeggio frequencies represent a common fundamental sound that is used in both Western Christianity and Eastern Indian religions; therefore, we considered them as archetypal sounds.

In addition to the archetypal symbols, we also included the archetypal sounds in our experiment to take into account the effect of auditory stimuli. Our expectations are that unlike the content of IAPS and IADS, the visual and auditory archetypal stimuli might evoke unconscious emotions.

2 Materials and methods

2.1 Participants

Thirty-four healthy subjects, including 15 males and 19 females, participated in our experiment. Most of the participants were students and researchers associated with the Eindhoven University of Technology. The participants had diverse nationalities: 15 from Asia (China, India, Indonesia, and Taiwan), 8 from Europe (Belgium, The Netherlands, Russia, Spain and Ukraine), 8 from the Middle East (Turkey and United Arab Emirates) and 3 from South America (Colombia and Mexico). The subjects had a mean age of 26 years and 9 months, ranging from 18 to 50 years (one under 20 years old, two above 40 years old). The participants provided informed consent prior to the start of the experiment and were financially compensated for their time.

2.2 Stimuli

IAPS and IADS contain large numbers of visual and auditory stimuli: 1,194 pictures and 167 sound clips. Because of limited time and resources, we had to reduce the amount of material for the experiment. To preserve the validity of the two databases at the reduced size, three selection principles were applied. First, four characteristic categories in the affective space of IAPS and IADS had to remain: positive and arousing (PA), positive and relaxing (PR), neutral (NT) and negative (NG). Second, the selected stimuli of each category had to reflect the original data set. For example, the PA picture category mainly consisted of Erotic Couple, Adventure, Sports and Food. Thus, the selected PA picture category should contain these clusters as well. Last, the stimuli that best represent a category should be selected first. For example, for the PA category, the most positive and arousing content should be included first.

The same criteria were used to select the materials for the fifth category, archetypal content (AR), with the only difference that the distribution of archetypal content in the affective space is not yet defined. To sum up, there were two kinds of media: pictures and sound clips. Each medium contained the five categories mentioned above (PA, PR, NT, NG and AR), and each category comprised 6 stimuli (see Table 1). In total, the materials for the experiment included 30 pictures and 30 sound clips. The study followed the method used in IAPS and IADS [19, 20].
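The stimulus counts above follow directly from the design (2 media × 5 categories × 6 stimuli). A minimal sketch that enumerates this design is given below; the tuple layout and the function name `build_stimulus_set` are illustrative assumptions and do not reflect the actual presentation system.

```python
from itertools import product

MEDIA = ("picture", "sound")
CATEGORIES = ("PA", "PR", "NT", "NG", "AR")
STIMULI_PER_CATEGORY = 6


def build_stimulus_set():
    """Return one (medium, category, index) tuple per stimulus (hypothetical layout)."""
    return [
        (medium, category, index)
        for medium, category in product(MEDIA, CATEGORIES)
        for index in range(1, STIMULI_PER_CATEGORY + 1)
    ]


stimuli = build_stimulus_set()
pictures = [s for s in stimuli if s[0] == "picture"]
sounds = [s for s in stimuli if s[0] == "sound"]
print(len(pictures), len(sounds), len(stimuli))
```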

2.3 Procedure

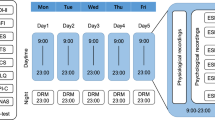

The experiment followed a within-subjects design. After briefing the subject and obtaining informed consent, the electrodes for the electrocardiogram (ECG) recording were placed. The subjects then completed a number of questionnaires, which are reported in [45], to allow time for acclimatization to the laboratory setting prior to emotion manipulation. After filling in the pre-experiment questionnaires, each participant was asked to sit in front of a monitor for displaying visual stimuli and two speakers for playing audio stimuli. The experiment was built with a web-based system, and all the experimental data were stored online in a database for further analysis. Before the real experiment started, each participant went through a tutorial to become familiar with the controls and the interface. After the introduction, and once it was clear that the participant was calm and ready for the experiment, two sessions were performed: a picture session and a sound session. Once a session began, pictures were displayed on the screen or sound clips were played through the speakers, one at a time and in a random order. Each picture or sound clip was presented to the participant for 6 s. Then the stimulus was replaced with a black screen and the interface paused for 5 s. The rating scales for self-reporting emotional feelings were shown after the pause. The information obtained from these self-reports is discussed in another paper [46]. Participants had unlimited time to report their emotional feelings. Another 5-s pause with a black screen followed the self-report, which was meant to let participants calm down and recover from the previously induced emotion. Then, the next picture or sound clip was shown or played. In Fig. 2, we show the sequence of events during the stimulus demonstration and specify the time intervals that are important in this experiment. The meaning of the time intervals will be discussed later. All 34 participants went through the whole procedure individually.

2.4 Physiological measures

2.4.1 Recording equipment

The ECG was taken with four Ag/AgCl electrodes with gel placed on the left and right arms (close to shoulders), and left and right sides of a belly. The electrode placed on the right side of the belly served as a reference. The signal was recorded at 1,024 Hz using an amplifier included in ASA-Lab (ANT BV) and Advanced Source Analysis v.4.7.3.1 (ANT BV, 2009).

2.4.2 Derivation of measures

At the end of the experiment, two data files per participant were obtained. The first file was retrieved from the presentation system and contained information about the time intervals of stimuli presentations. For every stimulus, the system stored the time when it appeared and disappeared, as well as the time when the rating scales were presented to a subject and the time when the subject submitted the ratings. The second file contained signals from the three ECG electrodes together with the timestamps of beginning and ending of the recording.

Normally, the ECG signal was taken from the electrodes that represent lead II of Einthoven’s triangle, but for some of the participants we had to use lead I because the signal from the electrode placed on the left side of the belly contained strong noise. Next, QRS complexes were identified in the ECG signal using a self-developed computer program, which implemented the method described in [47]. The same computer program matched the ECG recording with the time intervals of stimuli presentation. Interval-1 (see Fig. 2) was used to calculate the average heart rate just before a stimulus was displayed; this heart rate, therefore, served as a reference. During interval-2 (see Fig. 2), a stimulus was presented, and for this reason we expected changes in heart rate relative to interval-1. Interval-3 (see Fig. 2) included both a stimulus demonstration and a pause with a black screen. We expected that, due to the latency of physiological signals, the changes in heart rate might not be visible during the stimulus presentation. Thus, interval-3 gave extra time to observe changes in heart rate. Additionally, we took into account interval-4 (see Fig. 2) to investigate if there was a difference in heart rate during and after the presentation of a stimulus.
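As a rough illustration of how the four intervals could be derived from the timestamps stored by the presentation system, consider the sketch below. It relies on several assumptions that are not stated in the text: the length of interval-1 (the hypothetical parameter `baseline_s`), the reading of interval-4 as the black-screen pause that follows the stimulus, and the names `TrialLog` and `analysis_intervals`. Only the 6-s stimulus and 5-s pause durations come from the procedure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrialLog:
    """Timestamps (in seconds) logged for one stimulus; field names are hypothetical."""
    stimulus_on: float   # stimulus appears
    stimulus_off: float  # stimulus disappears, black screen starts
    rating_on: float     # rating scales appear


def analysis_intervals(trial, baseline_s=5.0):
    """Map interval names to (start, end) windows.

    Assumptions: interval-1 covers the last `baseline_s` seconds before
    stimulus onset, and interval-4 covers the pause after the stimulus.
    """
    return {
        "interval-1": (trial.stimulus_on - baseline_s, trial.stimulus_on),
        "interval-2": (trial.stimulus_on, trial.stimulus_off),
        "interval-3": (trial.stimulus_on, trial.rating_on),
        "interval-4": (trial.stimulus_off, trial.rating_on),
    }


# One trial with the nominal timing: 6 s of stimulus followed by a 5 s pause.
trial = TrialLog(stimulus_on=100.0, stimulus_off=106.0, rating_on=111.0)
windows = analysis_intervals(trial)
for name, (start, end) in windows.items():
    print(name, start, end, end - start)
```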

The number of heartbeats, time between the beats and average heart rate per second were calculated with our computer program for every interval mentioned above. In the statistical analysis, the type of media (i.e., picture or sound) and the category of stimuli (i.e., archetypal, positive and relaxing, positive and arousing, neutral, and negative) were treated as independent within-subject variables, and the average heart rates for interval-2, interval-3 and interval-4 were treated as dependent variables.
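The per-interval measures can be sketched as follows, assuming that QRS detection has already produced a list of R-peak times in seconds. The function name `interval_measures` and the choice to express heart rate in beats per minute, derived from the mean inter-beat interval, are assumptions made for illustration; they are not taken from the program used in the study.

```python
def interval_measures(r_peaks, start, end):
    """Beat count, inter-beat intervals and mean heart rate inside [start, end].

    `r_peaks` is a sorted list of R-peak times in seconds. The mean heart
    rate is returned in beats per minute, or None if fewer than two beats
    fall inside the window.
    """
    beats = [t for t in r_peaks if start <= t <= end]
    ibis = [later - earlier for earlier, later in zip(beats, beats[1:])]
    mean_hr = 60.0 / (sum(ibis) / len(ibis)) if ibis else None
    return {"n_beats": len(beats), "ibis": ibis, "mean_hr_bpm": mean_hr}


# Synthetic example: one beat every 0.75 s.
r_peaks = [0.0, 0.75, 1.5, 2.25, 3.0, 3.75, 4.5, 5.25, 6.0]
measures = interval_measures(r_peaks, start=0.0, end=6.0)
print(measures["n_beats"], measures["mean_hr_bpm"])
```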

All statistical tests used a 0.05 significance level and were performed using SPSS (IBM SPSS Statistics, Version 19).

3 Results

After the experiment, three types of heart rate analyses were performed. The first type of analysis studied the values of heart rate that corresponded to each category of the stimuli. The second type of analysis examined the changes in heart rate during the stimuli demonstrations with regard to the heart rate calculated for the reference intervals. The third type of analysis aimed to investigate the classification of the emotional categories based on heart rate.

3.1 Heart rate measures

As the experiment followed a within-subject design and the stimuli for every participant were presented in a random order, the effect of ECG baseline drift was leveled out. Therefore, it is reasonable to compare the average heart rates during the presentations of different stimuli.

Multivariate analysis of variance (MANOVA) for repeated measurements showed a significant main effect of the category of stimuli on the average heart rate of participants during interval-2 [F (4, 32) = 3.772, p = 0.013 (Wilks’ lambda)]. The same test showed a significant main effect of the category of stimuli on the average heart rate of participants during interval-3 [F (4, 32) = 5.793, p = 0.001 (Wilks’ lambda)] and during interval-4 [F (4, 32) = 5.089, p = 0.003 (Wilks’ lambda)]. However, the average values of heart rate measured for different categories of stimuli are very close to each other (see Table 2).

There was a significant relationship between the type of media (i.e., picture or sound) and the average heart rate of the participants. MANOVA for repeated measurements demonstrated a significant main effect of the media on interval-2 [F (1, 35) = 9.992, p = 0.003 (Wilks’ lambda)], on interval-3 [F (1, 35) = 9.296, p = 0.004 (Wilks’ lambda)] and on interval-4 [F (1, 35) = 8.296, p = 0.007 (Wilks’ lambda)].

3.2 Measures of changes in heart rate

At the next step of our analysis, the changes of heart rate during the presentation of stimuli relative to the reference intervals were examined. For every stimulus, first, an average heart rate for the reference interval (interval-1) was calculated, and then the differences between the calculated value and the average heart rates at interval-2, interval-3 and interval-4 were determined.
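The computation of heart rate changes described here amounts to a per-stimulus subtraction of the reference value. A minimal sketch is shown below; the numeric values are invented for illustration only, and the function name `heart_rate_changes` is an assumption.

```python
def heart_rate_changes(mean_hr):
    """Subtract the interval-1 reference from the mean heart rate of every other interval.

    `mean_hr` maps interval names to mean heart rates for one stimulus.
    A negative change corresponds to a deceleration relative to the reference.
    """
    reference = mean_hr["interval-1"]
    return {
        name: value - reference
        for name, value in mean_hr.items()
        if name != "interval-1"
    }


# Hypothetical values for a single stimulus (not experimental data).
example = {
    "interval-1": 72.0,
    "interval-2": 70.5,
    "interval-3": 71.0,
    "interval-4": 71.5,
}
changes = heart_rate_changes(example)
print(changes)
```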

In the statistical analysis, the type of stimuli (i.e., picture or sound) and the category of stimuli (i.e., archetypal, positive and relaxing, positive and arousing, neutral, and negative) were treated as independent within-subject variables. The changes in heart rate for interval-2, interval-3 and interval-4 were treated as dependent variables.

MANOVA for repeated measurements showed a significant main effect of the category of stimuli on the changes in the heart rate of participants during interval-2 [F (4, 32) = 5.413, p = 0.002 (Wilks’ lambda)], though the same statistical test did not show significance during interval-3 [F (4, 32) = 2.446, p = 0.067 (Wilks’ lambda)] and interval-4 [F (4, 32) = 1.518, p = 0.220 (Wilks’ lambda)]. Descriptive statistics for the changes in heart rate during observation of the stimuli for different intervals of time can be found in Table 3.

The influence of different types of media on the changes in heart rate has also been analyzed, and MANOVA for repeated measurements displayed a significant main effect of the media type during interval-2 [F (1, 35) = 5.171, p = 0.029 (Wilks’ lambda)] and during interval-3 [F (1, 35) = 5.633, p = 0.023 (Wilks’ lambda)].

To graphically illustrate the dynamic of changes in heart rate during interval-3, which lasted 11 s, we plotted two diagrams (Figs. 3, 4). In Fig. 3, the data series that correspond to different types of media are presented. Changes in heart rate during interval-3, which are related to the categories of emotional stimuli, can be seen in Fig. 4.

3.3 Classification analysis

Finally, a discriminant analysis was conducted to investigate if heart rate data could be used to predict the categories of emotional stimuli. The changes of heart rate from the baseline during interval-3 were used as predictor variables. Significant mean differences between the categories of emotional stimuli were observed at seconds 4, 5 and 6. Although the log determinants for different categories of stimuli were quite similar, Box’s M test was significant, which indicated that the assumption of equality of covariance matrices was violated. However, taking into account the large sample size (N = 2040), the results of Box’s M test can be neglected [48]. A combination of the discriminant functions revealed a significant relation (p = 0.019) between the predictor variables and the categories of emotional stimuli, and the classification results showed that 25 % of the original cases and 23.3 % of the cross-validated grouped cases were correctly classified. Although these classification rates are not very high, they are still above the chance level, which, in the case of five categories of stimuli, equals 20 %. Therefore, relative to the chance level, the achieved classification rates represent an improvement of 25 % for the original cases and 16.5 % for the cross-validated grouped cases.
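The relative improvements quoted above can be reproduced with simple arithmetic, as the sketch below shows. It also checks that the sample size matches 34 participants with 60 stimuli each. The function name `relative_improvement` is illustrative.

```python
def relative_improvement(accuracy, n_classes):
    """Improvement of a classification rate over the chance level, relative to chance."""
    chance = 1.0 / n_classes
    return (accuracy - chance) / chance


N_PARTICIPANTS = 34
N_STIMULI = 60      # 30 pictures + 30 sound clips
N_CATEGORIES = 5

n_cases = N_PARTICIPANTS * N_STIMULI
original = relative_improvement(0.25, N_CATEGORIES)
cross_validated = relative_improvement(0.233, N_CATEGORIES)
print(n_cases, f"{original:.1%}", f"{cross_validated:.1%}")
```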

4 Discussion

The content of the IAPS and IADS databases has previously been used successfully to induce a range of emotions in studies similar to our experiment [15, 49]. Therefore, we expected that every category of stimuli that comes from the databases would result in a unique combination of emotional feelings in the participants. Based on the literature review, we also expected that each of the categories of stimuli would result in a distinct pattern of physiological responses.

In addition to the content of IAPS and IADS, the archetypal stimuli were included in the experiment. To the best of our knowledge, no one has used the archetypal stimuli in the emotion research so far; hence, we did not exactly know what kind of physiological changes they might induce. However, we assumed that the physiological reaction should be different from the response to the IAPS and IADS stimuli.

The experimental results enabled us to draw several conclusions. The first and the most important conclusion was that the results confirmed our hypotheses regarding the unique pattern of physiological response for every (including archetypal) category of stimuli. Indeed, for both types of analysis that were performed, namely, for the analysis of the average heart rate during the demonstration of a stimulus and for the analysis of the change in average heart rate during the demonstration of a stimulus, the statistical tests showed a significant main effect of the category of the stimuli on heart rate. However, it is necessary to note that for the change of the average heart rate, the test was significant only for interval-2, while for the average heart rate the test was significant for interval-2, interval-3 and interval-4. According to the previous research on the response of heart rate to emotional stimuli [15], the absence of the significant main effect of category during interval-3 and interval-4 can be explained by the fact that the largest change in heart rate happens during the first 2 s of a stimulus presentation.

Figure 5 illustrates the average heart rate changes measured on interval-2 with a reference to interval-1. It can be observed that, independent of the media type and the category of stimuli, heart rate exhibits a general decelerating response. This finding agrees with the previously reported results [15, 49] and is explained by Laceys’ model [50], which describes the effect of attention on heart rate. According to Laceys’ theory of intake and rejection, the deceleration of heart rate occurs due to the diversion of attention to an external task, for instance, perception of a visual or auditory stimulus. On the other hand, when the attention has to be focused on an internal task and the environment has to be rejected, heart rate tends to accelerate.

The next conclusion is that negative stimuli evoked a larger deceleration of heart rate in comparison to positive and neutral stimuli. This pattern is also in agreement with previous studies [49] and is usually explained by Laceys’ theory. The fact that our experimental results are consistent with the literature supports the validity of our study.

We also analyzed changes in heart rate for different types of media and found that auditory stimuli led to a faster heart rate deceleration in comparison to visual stimuli. From our point of view, the participants might consider sounds to be more significant and unexpected events, because they could not influence the perception of a sound stimulus. For example, if a subject did not like a negative visual stimulus, she could always close her eyes and avoid the stimulus. However, she could not avoid an auditory stimulus in the same manner. Therefore, the intake of an auditory stimulus affected heart rate more strongly than that of a visual stimulus.

It is particularly interesting to look at the effect of the archetypal stimuli on the heart rates of participants. According to the statistical analysis, there is a significant difference between the influence of visual and auditory archetypal stimuli on the heart rate of the participants during interval-3 [F (11, 24) = 2.875, p = 0.015 (Wilks’ lambda)]. Surprisingly, the archetypal sounds evoked even stronger heart rate deceleration than the negative sounds. This phenomenon is difficult to explain; however, we have an idea that is based on one of the original purposes of the archetypal sounds, which is to support people in meditation practice. Indeed, to achieve a proper mental state during meditation, people have to move away from their conscious experiences and free their mind from thoughts [51]. This exercise is difficult because the conscious mind can hardly be idle. Therefore, people use the archetypal sounds (for instance, the famous ‘Om’ sound) to keep the conscious mind concentrated on these sounds while they meditate. One can thus infer that the archetypal sounds efficiently capture the attention of individuals. This, in turn, allows us to explain the strong heart rate deceleration with Laceys’ theory.

The pattern of heart rate change is influenced by the archetypal pictures to a lesser extent than by the archetypal sounds. A possible reason is that, for the archetypal features of the pictures to be revealed, participants have to be deeply engaged in the contemplation of the pictures.

As mandalas and meditative sounds are religious symbols and, therefore, can possibly elicit conscious emotions in some people, one might question if the emotional responses of participants to the archetypal stimuli are indeed unconscious. To investigate this question, we assumed that people from Asia are more familiar with the mandala than people from other regions of the world because the mandala is a religious symbol in Hinduism and Buddhism. Therefore, a presentation of a mandala to Asian people might elicit a conscious emotional response. However, the mandala does not appear in, for example, European religions, and, for this reason, it is unlikely that European people consciously know this symbol. As in our study we had participants from various geographical locations (15 from Asia, 8 from Europe, 8 from the Middle East and 3 from South America), it was possible to compare emotional responses to the mandala between the participants who come from Asia and the participants who come from other regions of the world. Analysis showed that for the archetypal stimuli, there is no statistically significant main effect of the geographical region (Asia or non-Asia) on changes in heart rate of the participants [F (11, 23) = 1.724, p = 0.13 (Wilks’ lambda)]. Therefore, we can conclude that emotional responses to the archetypal stimuli do not depend on the familiarity of the participants with these symbols.

5 Conclusion and future directions

As was pointed out earlier, we see emotion as an important component of one’s mental life and, therefore, lifelogging tools should be able to capture emotions. Recent research in the area of affective computing has demonstrated that emotional states of people can be recognized from their physiological signals [52]. However, based on this research, it is not clear if unconscious emotions have corresponding patterns of physiological signals. Our study provided evidence which implied that unconscious emotions also affect the heart rate. Moreover, the deceleration of heart rate that followed the presentation of the archetypal stimuli to the participants was different from the deceleration of heart rate after the demonstration of other stimuli. Even in theory, heart rate alone cannot be expected to allow precise classification of emotions because emotion is a multidimensional phenomenon, and heart rate provides just one dimension. Our experimental results support this point of view: the classification rate was 25 % above the chance level in relative terms (25 % of the original cases correctly classified against a chance level of 20 %), which is lower than in some studies that focus on fewer emotions and utilize various physiological signals in addition to heart rate (e.g., [53]). This result is probably best explained by the fact that we targeted many broad emotional categories, which considerably complicates recognition of emotion. Nevertheless, this study provided an important foundation for the development of lifelogging tools that take into account the emotional experiences of people. Therefore, it is necessary to continue the work in this direction and further investigate the patterns of physiological responses to the archetypal stimuli. From our point of view, other features of the ECG signal could also be useful for measurement of emotions. Along with heart activity, other physiological signals, such as galvanic skin response and respiration rate, should be taken into account. Additional physiological signals are important because eventually they might allow establishing one-to-one links between the profile of physiological response patterns and emotional experiences across time [8].

References

Kalnikaitė V, Sellen A, Whittaker S, Kirk D (2010) Now let me see where I was: Understanding how lifelogs mediate memory. Proceedings of the 28th international conference on human factors in computing systems. ACM, Atlanta, Georgia, USA, pp 2045–2054

Byrne D, Doherty AR, Snoek CGM, Jones GJF, Smeaton AF (2010) Everyday concept detection in visual lifelogs: validation, relationships and trends. Multimedia Tools Appl 49:119–144

Blum M, Pentland A, Troster G (2006) InSense: interest-based life logging. IEEE Multimedia 13:40–48

Ståhl A, Höök K, Svensson M, Taylor AS, Combetto M (2008) Experiencing the affective diary. Pers Ubiquit Comput 13:365–378

Clarkson B, Mase K, Pentland A (2001) The familiar: a living diary and companion. CHI’01 extended abstracts on human factors in computing systems. pp 271–272

Villon O, Lisetti C (2006) A user-modeling approach to build user’s psycho-physiological maps of emotions using bio-sensors. The 15th IEEE international symposium on robot and human interactive communication 2006 (ROMAN 2006). IEEE, Hatfield, pp 269–276

Healey J (2000) Wearable and automotive systems for affect recognition from physiology. Ph.D. thesis, Massachusetts Institute of Technology. http://dspace.mit.edu/handle/1721.1/9067

Cacioppo JT, Tassinary LG (1990) Inferring psychological significance from physiological signals. Am Psychol 45:16–28

Fairclough SH (2009) Fundamentals of physiological computing. Interact Comput 21:133–145

Barrett LF (2006) Are emotions natural kinds? Perspect Psychol Sci 1:28–58

Rauterberg M (2010) Emotions: the voice of the unconscious. Proceeding of 2010 international conference of entertainment computing. pp 205–215

Picard RW (2010) Affective computing: from laughter to IEEE. IEEE Trans Affect Comput 1:11–17

Berridge K, Winkielman P (2003) What is an unconscious emotion? (The case for unconscious “liking”). Cogn Emot 17:181–211

Kihlstrom JF (1999) The psychological unconscious. In Pervin LA, John OP (eds) Handbook of personality: theory and research, 2nd ed. Guilford Press, New York, NY, pp 424–442

Palomba D, Angrilli A, Mini A (1997) Visual evoked potentials, heart rate responses and memory to emotional pictorial stimuli. Int J Psychophysiol 27:55–67

Villon O, Lisetti C (2007) A user model of psycho-physiological measure of emotion. In: Conati C, McCoy K, Paliouras G (eds) User modeling 2007. Springer, Berlin, pp 319–323

Gunes H, Pantic M (2010) Automatic, dimensional and continuous emotion recognition. Int J Synth Emot 1:68–99

Mandryk R, Atkins M (2007) A fuzzy physiological approach for continuously modeling emotion during interaction with play technologies. Int J Hum Comput Stud 65:329–347

Lang PJ, Bradley MM, Cuthbert BN (2005) International affective picture system (IAPS): affective ratings of pictures and instruction manual. NIMH, Center for the Study of Emotion and Attention

Bradley MM, Lang PJ (2007) The international affective digitized sounds (IADS-2): affective ratings of sounds and instruction manual. University of Florida, Gainesville, FL, Technical Report B-3

Gronning T, Sohl P, Singer T (2007) ARAS: archetypal symbolism and images. Visual Resour 23:245–267

van den Broek EL, Janssen JH, Westerink JHDM (2009) Guidelines for affective signal processing (ASP): from lab to life. 2009 3rd international conference on affective computing and intelligent interaction and workshops. IEEE, Hatfield, pp 1–6

Dan-Glauser ES, Scherer KR (2011) The Geneva Affective Picture Database (GAPED): a new 730-picture database focusing on valence and normative significance. Behav Res Methods 43:468–477

Jung CG (1969) The archetypes and the collective unconscious. Princeton University Press, Princeton, NJ

Jung CG (1964) Man and his symbols. Doubleday, Garden City, NY

Jung CG (1989) Memories, dreams, reflections. Vintage, New York

Kazmierczak E (1990) Principal choremes in semiography: Circle, square, and triangle. Historical outline. Institute for Industrial Design, Warszawa

Mandala of the Six Chakravartins—Wikipedia, the free encyclopedia, http://en.wikipedia.org/wiki/File:Mandala_of_the_Six_Chakravartins.JPG

Bush CA (1988) Dreams, mandalas, and music imagery: therapeutic uses in a case study. Arts Psychother 15:219–225

Curry NA, Kasser T (2005) Can coloring mandalas reduce anxiety? Art Ther 22:81–85

Kim S, Kang HS, Kim YH (2009) A computer system for art therapy assessment of elements in structured mandala. Arts Psychother 36:19–28

Schrade C, Tronsky L, Kaiser DH (2011) Physiological effects of mandala making in adults with intellectual disability. Arts Psychother 38:109–113

Slegelis MH (1987) A study of Jung’s mandala and its relationship to art psychotherapy. Arts Psychother 14:301–311

Elkis-Abuhoff D, Gaydos M, Goldblatt R, Chen M, Rose S (2009) Mandala drawings as an assessment tool for women with breast cancer. Arts Psychother 36:231–238

DeLue CH (1999) Physiological effects of creating mandalas. In: Malchiodi C (ed) Medical art therapy with children. Jessica Kingsley, London, pp 33–49

Cox CT, Cohen BM (2000) Mandala artwork by clients with DID: clinical observations based on two theoretical models. Art Ther J Am Art Ther Assoc 17:195–201

Henderson P, Rosen D, Mascaro N (2007) Empirical study on the healing nature of mandalas. Psychol Aesthet Creat Arts 1:148–154

ARAS—the archive for research in archetypal symbolism, http://aras.org/index.aspx

Solfeggio frequencies— Wikipedia, the free encyclopedia, http://en.wikipedia.org/wiki/Solfeggio_frequencies

Om—Wikipedia, the free encyclopedia, http://en.wikipedia.org/wiki/Om

Maheshwarananda PS (2004) The hidden power in humans: Chakras and Kundalini. Ibera Verlag, Austria

Bhavacakra—Wikipedia, the free encyclopedia, http://en.wikipedia.org/wiki/Wheel_of_Existence

Welch D Mountainmystic9’s Channel, http://www.youtube.com/user/mountainmystic9

Om Meditation, http://www.youtube.com/watch?v=imWRQpY0P58

Chang H-M, Ivonin L, Chen W, Rauterberg M (in press) Multimodal symbolism in affective computing: people, emotions, and archetypal contents. Informatik Spektrum

Ivonin L, Chang H-M, Chen W, Rauterberg M (2012) A new representation of emotion in affective computing. Proceeding of 2012 international conference on affective computing and intelligent interaction (ICACII 2012). Lecture notes in information technology, Taipei, Taiwan, pp 337–343

Chesnokov Y, Nerukh D, Glen R (2006) Individually adaptable automatic QT detector. Computers in cardiology, 2006. IEEE, Hatfield, pp 337–340

Burns RB, Burns RA (2008) Business research methods and statistics using SPSS. SAGE Publications Ltd, Beverly, CA

Winton WM, Putnam LE, Krauss RM (1984) Facial and autonomic manifestations of the dimensional structure of emotion. J Exp Soc Psychol 20:195–216

Lacey JI, Lacey BC (1970) Some autonomic-central nervous system interrelationships. In: Black P (ed) Physiological correlates of emotion. Academic Press, New York, pp 205–227

Jain S, Shapiro SL, Swanick S, Roesch SC, Mills PJ, Bell I, Schwartz GER (2007) A randomized controlled trial of mindfulness meditation versus relaxation training: effects on distress, positive states of mind, rumination, and distraction. Ann Behav Med Publ Soc Behav Med 33:11–21

van den Broek EL, Janssen JH, Westerink JHDM (2009) Guidelines for affective signal processing (ASP): from lab to life. 2009 3rd international conference on affective computing and intelligent interaction and workshops. IEEE, Hatfield, pp 1–6

Kreibig SD, Wilhelm FH, Roth WT, Gross JJ (2007) Cardiovascular, electrodermal, and respiratory response patterns to fear- and sadness-inducing films. Psychophysiology 44:787–806

Acknowledgments

This work was supported in part by the Erasmus Mundus Joint Doctorate (EMJD) in Interactive and Cognitive Environments (ICE), which is funded by Erasmus Mundus under the FPA no. 2010-2012.

Open Access

This article is distributed under the terms of the Creative Commons Attribution License which permits any use, distribution, and reproduction in any medium, provided the original author(s) and the source are credited.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 2.0 International License (https://creativecommons.org/licenses/by/2.0), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

About this article

Cite this article

Ivonin, L., Chang, HM., Chen, W. et al. Unconscious emotions: quantifying and logging something we are not aware of. Pers Ubiquit Comput 17, 663–673 (2013). https://doi.org/10.1007/s00779-012-0514-5

DOI: https://doi.org/10.1007/s00779-012-0514-5