Abstract

We propose an enriched finite element formulation to address the computational modeling of contact problems and the coupling of non-conforming discretizations in the small deformation setting. The displacement field is augmented by enriched terms that are associated with generalized degrees of freedom collocated along non-conforming interfaces or contact surfaces. The enrichment strategy effectively produces an enriched node-to-node discretization that can be used with any constraint enforcement criterion; this is demonstrated with both multi-point constraints and Lagrange multipliers, the latter in a generalized Newton implementation where both primal and Lagrange multiplier fields are updated simultaneously. We show that the node-to-node enrichment ensures continuity of the displacement field without locking in mesh coupling problems, and that tractions are transferred accurately at contact interfaces without the need for stabilization. We also show that, with a simple Jacobi-like diagonal preconditioner, the formulation is stable with respect to the condition number of the stiffness matrix.

1 Introduction

The computational modeling of problems in contact mechanics requires careful consideration to prevent interpenetration between contacting bodies and to ensure a proper transfer of contact tractions. A related problem arises in the coupling of non-conforming finite element discretizations, where the presence of hanging nodes, if not handled properly, yields a discontinuous displacement field. In this work these problems are addressed by means of an enriched finite element method that naturally leads to a simple computer implementation and inherently avoids the emergence of locking due to an over-constrained interface.

The numerical analysis of many engineering problems requires the coupling of meshes of different components. These meshes are often non-conforming, i.e., the locations of their nodes do not coincide along the coupling interface, resulting in hanging nodes that call for special treatment. More importantly, the coupling procedure has to avoid over-constraining the coupling interface, which would cause locking, and has to ensure a proper transfer of tractions between subdomains. Similar issues arise in engineering applications that involve contact between bodies, such as forging processes, gear systems, and impact problems [1]. While the coupling of non-matching meshes is enforced by means of equality constraints, contact problems involve inequality constraints, which make contact notoriously challenging to model because of its intrinsically nonlinear nature. In general, numerical methods for contact problems either fail to transfer tractions accurately between contacting bodies or require a very intricate computer implementation.

Both the coupling of non-conforming interfaces and contact are handled numerically by enforcing constraints: in the former to guarantee continuity, and in the latter to avoid interpenetration while allowing the bodies to slide or detach. Although constraints can be enforced in several ways, their application often leads to locking due to an over-constrained interface. In the multi-point constraint (MPC) method, also known as the master/slave approach, non-conformity is handled by constraining slave nodes on one side of the interface to those on the master side. Whether this method guarantees continuity, however, has been shown to depend on the choice of master and slave surfaces. A two-pass MPC approach, where each side is taken as both master and slave of the other [2, 3], ensures continuity but suffers from locking due to an over-constrained interface. Dual space methods, such as finite element tearing and interconnecting (FETI) [4] and the mortar method [5, 6], use a Lagrange multiplier field to enforce compatibility at the interface. In both methods, the Lagrange multiplier field must be chosen carefully so that it satisfies the inf-sup condition, also known as the Ladyzhenskaya–Babuška–Brezzi (LBB) condition [7, 8], and thus avoids locking. A detailed account of several methodologies proposed in the literature to enforce contact constraints is given in Sect. 2.

Enriched finite element methods, whereby the primal field is enhanced or enriched by means of appropriate enrichment functions, provide an elegant approach to dealing with non-conforming meshes and contact. For instance, Haikal and Hjelmstad [3] proposed an enriched stabilized discontinuous Galerkin formulation for coupling non-conforming meshes that recovers accurate tractions and is devoid of locking. This was accomplished by modifying the finite element (FE) shape functions and adding enriched nodes, which effectively recovers a node-to-node (NTN) type of constraint enforcement. The eXtended/Generalized Finite Element Method (X/GFEM) has also been applied to solve contact and non-conforming interface problems [9,10,11,12,13]. Duarte et al. [9] modified the FE partition of unity by means of clustering and used X/GFEM to deal with non-matching interfaces. For contact problems, Khoei and Nikbakht [13] and Dolbow et al. [10] used X/GFEM to model frictional contact, where contact surfaces were treated as embedded strong discontinuities. Hirmand et al. [11] proposed an augmented Lagrangian-based stabilization technique to model frictional contact and thus obtain smooth contact tractions. Similarly, Akula et al. [12] used the augmented Lagrangian method (ALM) within the mortar method to model complex contact surfaces as embedded discontinuities in a background mesh using X/GFEM; a special stabilization technique was required when the contacting bodies have a high contrast in stiffness and/or mesh density. Nevertheless, X/GFEM in general either yields inaccurate interface tractions or requires stabilization techniques. Aragón and Simone [14] reported the oscillatory nature of the traction field recovered by X/GFEM on a notched beam where a cohesive formulation was used to model perfect bonding. It has also been shown that X/GFEM cannot be used as an immersed boundary (or fictitious domain) method, since tractions recovered along Dirichlet boundaries oscillate even when the Barbosa–Hughes stabilization is used [15]. The issue is caused by an over-constrained interface (or boundary), for which the inf-sup condition is not satisfied [16]. Putting aside the issue of oscillatory tractions, X/GFEM may be unstable with respect to the condition number of system matrices, a problem that has prompted recent efforts in pursuit of a stable GFEM (SGFEM) [17, 18]. Finally, X/GFEM may have poor accuracy in blending elements (elements containing both standard and enriched nodes), prescribing non-homogeneous Dirichlet boundary conditions requires special techniques, and the computer implementation is far from trivial [14].

Inspired by GFEM, another family of enriched FE formulations seeks to solve problems with discontinuities by placing enrichments at nodes created along the discontinuities, as opposed to X/GFEM, where enrichments are added to the nodes of the original mesh. The Interface-enriched Generalized Finite Element Method (IGFEM) [19] was first proposed to resolve weak discontinuities, i.e., situations where the gradient of the primal field is discontinuous, as in interface problems. The method later evolved into the Hierarchical Interface-enriched Finite Element Method (HIFEM) [20], whereby multiple interfaces within a single element are resolved via a hierarchical implementation of enrichment functions. The Discontinuity-Enriched Finite Element Method (DE-FEM) [14] then generalized IGFEM/HIFEM to treat both weak and strong discontinuities (e.g., fracture) in a unified formulation. Because enrichments are placed along discontinuities, this family of methods avoids many of the disadvantages of X/GFEM: the implementation is straightforward; the method is stable, i.e., the condition number grows with the problem size as in standard FEM [21]; and there are no blending elements, since enrichment functions are localized to cut elements and vanish at all original mesh nodes. The original mesh nodes therefore retain the Kronecker-\(\delta \) property, which significantly simplifies the enforcement of non-homogeneous essential boundary conditions [14, 16]. Unlike in most non-standard FEMs in use today, in IGFEM/HIFEM/DE-FEM Dirichlet boundary conditions can be enforced strongly, as in standard FEM. This holds even along non-matching Dirichlet boundaries, where HIFEM/DE-FEM has been used as an immersed boundary procedure with smooth recovered traction fields [22, 23]. This property is particularly appealing for coupling non-conforming discretizations and for contact, and it largely inspired the present study.
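
The statement that the condition number grows as in standard FEM can be made concrete with the standard reference case. The following sketch (hypothetical function names; plain linear bar elements, not the enriched formulation) assembles the stiffness matrix of a bar fixed at one end, applies a Jacobi (diagonal) scaling \(\varvec{D}^{-1/2}\varvec{K}\varvec{D}^{-1/2}\) with \(\varvec{D} = \mathrm{diag}(\varvec{K})\), and prints the 2-norm condition number for decreasing element size h.

```python
import numpy as np


def bar_stiffness(n_elem, length=1.0, EA=1.0):
    """Stiffness matrix of a 1D bar discretized with n_elem linear elements.

    The bar is fixed at x = 0 (that DOF is removed) and free at x = length.
    Returns the reduced matrix and the element size h.
    """
    h = length / n_elem
    n_nodes = n_elem + 1
    K = np.zeros((n_nodes, n_nodes))
    ke = (EA / h) * np.array([[1.0, -1.0], [-1.0, 1.0]])
    for e in range(n_elem):
        dofs = [e, e + 1]
        K[np.ix_(dofs, dofs)] += ke          # standard assembly
    return K[1:, 1:], h                      # homogeneous Dirichlet at x = 0


def jacobi_condition_number(K):
    """2-norm condition number of D^(-1/2) K D^(-1/2), with D = diag(K)."""
    d = 1.0 / np.sqrt(np.diag(K))
    K_scaled = d[:, None] * K * d[None, :]
    return np.linalg.cond(K_scaled)


for n in (8, 16, 32, 64):
    K, h = bar_stiffness(n)
    print(f"h = {h:.5f}   scaled condition number = {jacobi_condition_number(K):10.1f}")
```

For this unit-length bar the scaled condition number works out to \(\cot ^2(\pi h/4) \approx 16/(\pi ^2 h^2)\), so halving h multiplies it by roughly four; this is the \(\mathcal {O}\left( h^{-2}\right) \) rate that serves as the benchmark for the enriched system.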

In this paper we adopt the IGFEM paradigm for coupling non-conforming discretizations and for contact problems. To this end, we place enriched nodes along non-conforming interfaces and contact surfaces, collocated at the locations of the non-conforming mesh nodes on the opposite surface. Their associated enrichment functions are \(C^0\)-continuous and local to the enriched elements on one side of the non-conforming interface or contact surface. Notably, because the shape functions of the original mesh are kept intact, the partition of unity property is lost in enriched elements, i.e., wherever an enrichment function is nonzero, the sum of shape and enrichment functions does not equal one. The formulation yields an enriched NTN discretization, which is why we call it a node-to-node enrichment. Constraints can therefore be enforced by any procedure, as we show in this paper with both MPC and ALM. The proposed procedure is a two-pass method and therefore shows no bias with respect to the choice of master/slave surfaces. The procedure is applied to several examples with linearized kinematics. Results show that the method passes Taylor and Papadopoulos’ contact patch test [24] and avoids locking due to an over-constrained interface; furthermore, traction transfer is fairly accurate without the need for stabilization techniques. This is an advantage over methods such as the two-pass mortar method, which passes the patch test and shows no locking but requires an interpolation of the pressure field that results in a complex formulation and computer implementation. We also show that the method is stable with respect to the condition number of the stiffness matrix: after a simple diagonal preconditioner is applied, the condition number grows with mesh size h as \(\mathcal {O}\left( h^{-2}\right) \), i.e., at the same rate as in standard FEM.
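
The loss of the partition of unity, together with the preservation of the Kronecker-\(\delta \) property at standard nodes, can be illustrated in one dimension. The functions below (hypothetical names) evaluate the trace of the basis along a single element edge of length L carrying one enriched node at x_e. We assume that the enrichment restricted to that edge is the piecewise-linear hat formed by the two integration segments on either side of x_e, which is what a linear Lagrange-based enrichment reduces to on the edge; the scaling factor is written as s.

```python
import numpy as np


def edge_basis(x, x_e, L=1.0):
    """Trace of the basis on an edge [0, L] with one enriched node at x_e.

    Returns the standard linear shape functions (N1, N2) and the enrichment
    psi, all evaluated at the points x. Requires 0 < x_e < L.
    """
    x = np.asarray(x, dtype=float)
    N1 = 1.0 - x / L                     # standard functions are left intact
    N2 = x / L
    # Hat function over the integration segments [0, x_e] and [x_e, L]:
    # equal to 1 at the enriched node and 0 at both standard nodes.
    psi = np.where(x <= x_e, x / x_e, (L - x) / (L - x_e))
    return N1, N2, psi


def u_h(x, u1, u2, alpha, x_e, s=1.0, L=1.0):
    """Enriched interpolation N1*u1 + N2*u2 + s*psi*alpha along the edge."""
    N1, N2, psi = edge_basis(x, x_e, L)
    return N1 * u1 + N2 * u2 + s * psi * alpha


N1, N2, psi = edge_basis([0.0, 0.3, 1.0], x_e=0.3)
print(psi)              # enrichment vanishes at the standard nodes
print(N1 + N2 + psi)    # sum exceeds one wherever psi is nonzero
```

Because psi vanishes at the standard nodes, u_h returns u1 and u2 there regardless of alpha, which is the Kronecker-\(\delta \) property; at x_e the three functions sum to two.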

2 Previous work on contact discretizations

We limit the scope of this survey to contact between deformable bodies, i.e., deformable-deformable contact. Many contact discretization procedures have been proposed over the years. Node-to-node (NTN) [25] and node-to-segment (NTS) [26,27,28] discretizations are widely used, where constraints are enforced between a node pair or a node-segment pair, respectively. Their applicability is, however, hindered by some intrinsic limitations: even though NTN can transfer tractions accurately, it can only be used with conforming discretizations along master/slave surfaces and only for infinitesimal sliding. The NTS approach overcomes this restriction, but its one-pass version, where the nodes of one contacting surface are constrained to the segments of the other surface, is biased with respect to the choice of master/slave surfaces. A one-pass NTS approach not only fails at times to prevent interpenetration, but it has also been shown to fail the contact patch test [24], i.e., it cannot recover the correct contact tractions. Several works have attempted to address this issue. Papadopoulos and Taylor [2] proposed to enforce constraints in an average sense and to integrate the contact pressure using Simpson’s rule, thereby passing the patch test. Later, Zavarise and De Lorenzis [29] improved the one-pass NTS contact formulation so that it passes the contact patch test, at the expense of losing symmetry in the contact contribution to the stiffness matrix.

A two-pass NTS approach could also be used to avoid interpenetration of contacting bodies, but it leads to an over-constrained contact interface and ultimately to locking: the traditional two-pass NTS contact discretization does not fulfill the LBB condition [2, 30] and therefore does not converge with mesh refinement. Papadopoulos et al. [31] and Papadopoulos and Solberg [32] proposed a method that enforces continuity of tractions weakly, whereby nodes are divided into groups in which the gap is constrained and pressure continuity is enforced. Their approach avoids locking and passes the patch test when the same interpolation is used for the geometry and the traction field. The method was later extended to 3D by Jones and Papadopoulos [33].

The idea of enforcing traction continuity or constraints in a weak sense can be understood as a segment-to-segment (STS) method, first proposed by Simo et al. [34], where the displacement field is interpolated with linear shape functions, and the pressure field is defined as piecewise constant over the contact segment, so that the local variables (gaps) are evaluated in an “average” sense. Another STS-based procedure by Zavarise and Wriggers [35] also enforces contact constraints in a weak sense, whereby local variables are evaluated at integration points and then contact contributions to the stiffness matrix and force vector are calculated based on the integration of these local variables. El-Abbasi and Bathe [36] proposed yet another STS method in which integration points are projected onto the opposite contact surface. Although their formulation is capable of handling both linear and quadratic elements in contact, the LBB condition is satisfied only when the pressure is interpolated with linear continuous functions. In addition, a “composite” integration rule that combines Gaussian and Newton-Cotes rules is required to pass the patch test. All these formulations and their corresponding computer implementations are more complex than those of NTS contact.

The mortar method was first used to handle domain decomposition problems [5] and later contact [37,38,39]. The method also has single-pass and two-pass versions. The traction field is defined on a mortar surface, which is either one of the two contacting surfaces or an additional intermediate surface, and contact constraints are enforced in a weak sense. The single-pass mortar method has been shown to pass the contact patch test [40]. This method was later extended to 3D and large deformations [40,41,42,43,44,45,46,47,48]. For large deformation kinematics, gap functions interpolated by shape functions on the mortar surface and nodally averaged normal vectors are used [44]. To avoid non-smooth normals, special techniques are also employed to obtain smooth surfaces, for instance by means of Hermite functions [41] or by other averaging techniques [49]. Results of this method are generally much more accurate than those of traditional NTS procedures, but the computer implementation is more involved and requires more computational resources [50]. Although some mortar-based methods satisfy the LBB condition, they are biased with respect to the choice of contact surfaces, and an appropriate choice of dual (Lagrange multiplier) shape functions is still required. To avoid the contact surface bias, Solberg et al. [51] proposed a two-pass mortar method with contact constraints enforced by means of Lagrange multipliers, where nodes are separated into active and inactive sets; however, a penalty-based stabilization is needed to avoid traction oscillations, and this method is not guaranteed to work for arbitrary geometries, particularly in 3D. Puso and Solberg [52] recently extended this method to plasticity and self-contact. Park et al. [53] and Rebel et al. [54] proposed a method, different from the mortar method, where nodes from both contact surfaces are also projected onto an intermediate surface, and an independent variable is defined to describe the motion of this surface. By enforcing traction continuity weakly, constant stress can be transferred exactly in the contact patch test. This method was also extended to frictional contact by Gonzalez [55]. Building on the mortar method, Flemisch et al. [6] proposed a dual mortar method to solve contact problems with curved interfaces in 2D, where discontinuous shape functions are used for the dual variables because they are more stable and yield more accurate solutions. This method was then extended to 3D contact problems with large deformation by Popp et al. [56] and Popp and Wall [57], and to frictional contact by Gitterle et al. [58]. In general, the dual mortar method allows the multipliers to be condensed out of the system matrix (another advantage of dual shape functions), resulting in a symmetric positive definite matrix. In their overview, Popp and Wall [57] highlight several advantages of the dual mortar method for contact over the traditional NTS approach. They show that, although smooth interpolations of the contact surfaces are needed (for instance using NURBS), especially for large deformation contact, solving problems with dual mortar can be more efficient than with the traditional single-pass mortar procedure.

Because the smoothness of contact surfaces greatly influences the transfer of tractions, several surface smoothing techniques have been proposed over the years: Belytschko et al. [59] suggested computing gap functions from a least-squares fit of the original non-smooth surface, obtaining a smooth signed distance function that reduces traction oscillations; Padmanabhan and Laursen [60] and Sauer [61] proposed using Hermite functions to interpolate the contact surface and solution fields; El-Abbasi et al. [62] suggested cubic splines; Krstulovic-Opara et al. [63] proposed a Bézier formulation; Sauer [64] used high-order Lagrange shape functions; and Stadler et al. [65] used a NURBS-based formulation. These smoothing techniques aim at reducing traction oscillations and improving convergence.

Contact constraints have also been enforced recently in the context of isogeometric analysis (IGA), whereby CAD features are used directly in the numerical analysis. Lu [66] and De Lorenzis et al. [67] proposed IGA formulations for frictionless and frictional contact, respectively; an NTS-like approach, the so-called knot-to-surface method, was established in IGA, which differs from the traditional NTS in that knots are used instead of mesh nodes and NURBS are used to describe contact surfaces. This method was later extended to 3D [68,69,70], and a mortar-based IGA procedure was found to be more accurate than the standard knot-to-surface method. Corbett and Sauer [71, 72] proposed a NURBS interpolation along contact surfaces only, with the rest of the domain discretized using linear elements, which makes the approach more efficient. For contact with large deformations, accurate results are still generally hard to obtain without mesh refinement; Dimitri et al. [73, 74] proposed using T-splines to refine the discretization. Similar to the dual mortar method with FEM, a dual mortar method in IGA was also proposed by Seitz et al. [75] and offers better efficiency than the mortar-based IGA contact formulation [76]. An isogeometric collocation method has also been used to solve contact problems [77, 78], whereby contact forces are regarded as Neumann boundary conditions and contact constraints are enforced as Dirichlet conditions. Since these conditions are fulfilled strongly, the quadrature of Neumann boundary conditions is eliminated and efficiency is greatly improved. Recently, Duong and Sauer [79] and Duong et al. [80] proposed an IGA contact formulation based on the surface potential method [81] and equipped with the refined boundary quadrature method [82]. In this procedure the “two-half-pass” method [83] is used, i.e., only tractions on the slave surface are considered in each pass. XFEM is also used in this method to capture weak/strong discontinuities around contact boundaries [80]. With a smoothing technique for post-processing, results are much more accurate than those obtained with traditional two-pass methods. However, IGA-based methods still suffer from locking, with the exception of the two-half-pass method, and they require a completely different discretization based on NURBS, which may not be straightforward to implement in general displacement-based FEM codes.

For most of the methods above, obtaining accurate contact tractions for complex problems is still not trivial because of numerical artifacts [29]. Even mortar methods generate traction oscillations along curved contact interfaces, although with the correct choice of mortar surface these oscillations are relatively small and concentrated near the contact edge [12]. These numerical errors do not necessarily decrease with mesh refinement. Some STS and mortar-based methods [32, 33, 51,52,53, 84] are quite accurate in the definition of contact tractions, pass the contact patch test, and avoid locking. Their formulations and implementations are, however, much more complicated than those of traditional NTN or NTS procedures.

There are also procedures that aim at transforming the contact problem into an equivalent NTN contact constraint enforcement. For instance, the virtual element method (VEM) has recently been explored to solve problems in contact mechanics [85]. In VEM, non-conforming contacting nodes are projected onto the opposite contact surface and new nodes are generated at those locations, which transforms the problem into a VEM-conforming mesh for which NTN contact constraints can be enforced straightforwardly. Moreover, adding contact constraints to a general VEM code is simple. Results show that VEM passes the patch test; however, a penalty-based stabilization is needed to avoid traction oscillations. The method was later extended to frictional contact with large deformation kinematics by Wriggers and Rust [86] and to curved contact surfaces by Aldakheel et al. [87].

Enriched formulations have also been used to convert non-conforming contact discretizations into an equivalent NTN enforcement. For instance, the enriched discontinuous Galerkin formulation for coupling non-conforming discretizations by Haikal and Hjelmstad [88] was later extended to frictionless contact with finite deformation kinematics [3]. Masud et al. [89] further combined the ideas put forward by Haikal and Hjelmstad [3, 88] with Nitsche’s method in a variational multiscale framework that can be used not only for coupling non-conforming meshes but also for solving frictional contact problems. The methodology proposed in this paper follows the enrichment strategy of Haikal and Hjelmstad [3], except that, instead of modifying the shape functions of elements in contact, we keep the standard basis intact. Both strategies can be understood as a form of h-hierarchical refinement along contact surfaces and thus share some similarities with the non-hierarchical h-refinement approach proposed by Jiao and Heath [90, 91] to handle non-conforming meshes in 3D. Their approach is based on the concept of a common refinement of two meshes, defined as “the intersections of the elements of the input meshes”. The new discretization therefore contains the nodes of both non-matching meshes. Our approach follows a similar strategy in that all standard nodes from one contact surface are projected onto the other and vice versa.
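
On a straight non-conforming interface, the two-pass pairing described above reduces to comparing node coordinates measured along the interface. The sketch below (hypothetical function names; coincidence decided by a tolerance; no projection is needed because the interface is assumed straight) returns, for each side, the positions of the opposite side's nodes that would become enriched nodes, together with the common refinement in the sense of Jiao and Heath.

```python
def enriched_node_locations(side_a, side_b, tol=1e-12):
    """Two-pass node pairing along a straight, non-conforming interface.

    side_a and side_b hold the coordinates (measured along the interface) of
    the standard nodes of each side. For each side, returns the coordinates of
    the opposite side's nodes that do not coincide with one of its own nodes,
    i.e., where enriched nodes would be placed on that side.
    """
    def unmatched(own, other):
        return [x for x in other if all(abs(x - y) > tol for y in own)]

    return unmatched(side_a, side_b), unmatched(side_b, side_a)


def common_refinement(side_a, side_b, tol=1e-12):
    """Sorted union of both node sets, merging coincident nodes."""
    merged = []
    for x in sorted(list(side_a) + list(side_b)):
        if not merged or abs(x - merged[-1]) > tol:
            merged.append(x)
    return merged


side_a = [0.0, 0.5, 1.0]            # two elements on side A
side_b = [0.0, 1/3, 2/3, 1.0]       # three elements on side B
enr_a, enr_b = enriched_node_locations(side_a, side_b)
print(enr_a, enr_b)
print(common_refinement(side_a, side_b))
```

After enrichment both sides carry a node (standard or enriched) at every point of the common refinement, five per side in this example, which is what allows the constraints to be written node to node.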

3 Problem description and formulation

3.1 Governing equations

Figure 1 shows a domain \(\Omega \subset \mathbb {R}^d\) composed of three d-dimensional subdomains \(\Omega _i \subset \Omega \) such that \(\overline{\Omega } = \cup _i \overline{\Omega }_i\). The subdomain boundaries are denoted \(\partial \Omega _i \equiv \Gamma _i = \overline{\Omega }_i \setminus \Omega _i\) and their outer normals \(\varvec{n}_i\). Domains \(\Omega _1\) and \(\Omega _2\) are in frictionless contact along the surface \(\Gamma _{12} = \Gamma _1 \cap \Gamma _2 \equiv \Gamma ^c\), and domains \(\Omega _2\) and \(\Omega _3\) are perfectly bonded along the interface \(\Gamma _{23} = \Gamma _2 \cap \Gamma _3 \equiv \Gamma ^g\). Such an interface could be physical (e.g., the interface between two different materials), numerical (e.g., a non-conforming discretization with hanging nodes), or a combination thereof. Boundaries \(\Gamma _i^u\) and \(\Gamma _i^t\) denote regions with prescribed Dirichlet and Neumann boundary conditions, respectively. These regions are disjoint, i.e., \(\Gamma _i^u \cap \Gamma _i^t = \emptyset \). The displacement (primal) field is denoted by \(\varvec{u}\) and the traction (dual) field by \(\varvec{t}\). Both are composed of the fields within all domains \(\Omega _i\); we denote by \(\varvec{u}_i\) the restriction of \(\varvec{u}\) to the ith domain. The same notation applies to the traction field \(\varvec{t}_i\) and to other subscripted quantities.

We are interested in solving the elastostatic frictionless contact boundary value problem, whose strong form reads: given body force \(\varvec{b}_i : \Omega _i \rightarrow \mathbb {R}^d\), prescribed displacement \(\bar{\varvec{u}}_i : \Gamma _i^u \rightarrow \mathbb {R}^d\), and traction \(\bar{\varvec{t}}_i : \Gamma _i^t \rightarrow \mathbb {R}^d\) fields, find \(\varvec{u}_i\) such that

with boundary conditions

interface conditions for perfect bonding

and contact conditions

In Eq. (1), \(\varvec{\sigma }_i = \varvec{\mathcal {D}}_i : \varvec{\varepsilon }_i\) is the Cauchy stress tensor, which obeys Hooke’s law, \(\varvec{\mathcal {D}}_i\) is a fourth-order constitutive tensor, and \(\varvec{\varepsilon }_i = \frac{1}{2} \left( {\varvec{\nabla }}\varvec{u}_i + \left( {\varvec{\nabla }}\varvec{u}_i\right) ^{\intercal } \right) \) is the linearized strain tensor (small deformation theory). For the perfect bonding between domains \(\Omega _2\) and \(\Omega _3\), continuity of displacements and tractions is enforced across the interface \(\Gamma ^g\). Contact between domains \(\Omega _1\) and \(\Omega _2\) is enforced through the classical Hertz–Signorini–Moreau conditions, also known as Karush–Kuhn–Tucker conditions in the theory of optimization [1]; these involve a contact pressure \(p_n\), which can only be compressive, and a gap function \(g_n\), which ensures that the contact surfaces do not penetrate each other. Finally, Eq. (8) ensures that the pressure vanishes when the gap between the two contact surfaces is open, and that the gap vanishes when the surfaces are in contact.
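
Checking a discrete solution against these conditions is a useful sanity test at contact node pairs. The function below (hypothetical name) reports the largest violation of the three conditions over a set of contact points. It assumes the convention \(g_n \ge 0\) for an admissible gap and \(p_n \le 0\) for compression, which may differ from the sign convention adopted in the contact conditions above.

```python
def kkt_violation(gaps, pressures):
    """Largest violation of the Hertz-Signorini-Moreau (KKT) conditions.

    Sign convention assumed here (not necessarily the paper's):
    g_n >= 0 means no penetration and p_n <= 0 means compression.
    """
    worst = 0.0
    for g, p in zip(gaps, pressures):
        worst = max(worst,
                    max(-g, 0.0),   # penetration: g_n < 0
                    max(p, 0.0),    # tensile (adhesive) pressure: p_n > 0
                    abs(p * g))     # complementarity: p_n * g_n = 0
    return worst


# Closed gap with compressive pressure, and open gap with zero pressure.
print(kkt_violation([0.0, 0.2], [-3.0, 0.0]))
```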

The solution of Eqs. (1)–(8) can be seen as the solution of a constrained optimization problem for the functional given by

where the first term represents the potential energy in all domains. For the ith domain, the potential energy is

The other two terms in (9) are associated with the constraints at the perfectly bonded interface \(\Gamma ^g\) and at the contact interface \(\Gamma ^c\), enforced with Lagrange multipliers \(\varvec{\lambda }^g\) and \(\varvec{\lambda }^c\), respectively; their form depends on how the constraints are enforced. In this work we use the standard Lagrange multiplier method for the perfectly bonded interface and an augmented Lagrangian enforcement for contact (which makes the saddle-point contact problem convex close to the solution). The two terms are therefore written, respectively, as

where \(\langle \bullet \rangle \) denotes the Macaulay brackets, \(\lambda _n = \varvec{\lambda }^c \cdot \varvec{n}\) the normal component of the Lagrange multiplier (where we could use either \(\varvec{n}_1\) or \(\varvec{n}_2\), as at this stage the choice is arbitrary), and \(\hat{\lambda }_n\) the augmented Lagrange multiplier given by \(\hat{\lambda }_n = \lambda _n + \epsilon _n g_n = p_n\), with \(\epsilon _n\) the augmentation (penalty) parameter in the normal direction.
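
The role of the Macaulay bracket and of the augmented multiplier can be seen on a single degree of freedom: a spring of stiffness k pushed by a force f toward a rigid obstacle at distance g0. The sketch below uses its own sign convention (gap g = g0 - u, admissible when g ≥ 0; multiplier λ ≥ 0 as the magnitude of the compressive force), so the augmented multiplier reads ⟨λ - εg⟩ and need not match the sign convention behind \(\hat{\lambda }_n\) above. It also swaps in a nested Uzawa-type multiplier update, which is simpler than, and different from, the simultaneous generalized Newton update used in this work. Function names are hypothetical.

```python
def inner_solve(k, f, g0, lam, eps):
    """Minimize the augmented Lagrangian over u for a fixed multiplier lam.

    Assumed convention: gap g(u) = g0 - u (admissible if g >= 0) and lam >= 0
    is the magnitude of the compressive contact force. Stationarity reads
        k*u - f + <lam - eps*g(u)> = 0,   <x> = max(x, 0).
    The residual is piecewise linear and increasing in u, so the active branch
    is tried first and the free (inactive) branch is used otherwise.
    """
    u = (f - lam + eps * g0) / (k + eps)   # root of the active branch
    if lam - eps * (g0 - u) > 0.0:
        return u
    return f / k                          # bracket vanishes: no contact force


def uzawa_alm(k, f, g0, eps, tol=1e-12, max_iter=200):
    """Nested (Uzawa-type) augmented Lagrangian iteration for one DOF."""
    lam, u = 0.0, 0.0
    for it in range(1, max_iter + 1):
        u = inner_solve(k, f, g0, lam, eps)
        lam_new = max(lam - eps * (g0 - u), 0.0)   # augmented multiplier update
        if abs(lam_new - lam) < tol:
            return u, lam_new, it
        lam = lam_new
    return u, lam, max_iter


u, lam, iters = uzawa_alm(k=1.0, f=2.0, g0=1.0, eps=10.0)
print(u, lam, iters)   # approaches u = g0 and lam = f - k*g0
```

When contact is active, the multiplier error shrinks by the factor k/(k + ε) per outer update, so a larger augmentation parameter speeds up this loop; a generalized Newton scheme avoids the nested loop altogether.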

3.2 Variational formulation

The solution of the boundary value problem makes the functional in (9) stationary with respect to variations of all its arguments. The three resulting equations are obtained by taking directional derivatives along the variations:

for all admissible variations \(\delta \varvec{u}\), \(\delta \varvec{\lambda }^g\), and \(\delta \varvec{\lambda }^c\). For the variations of the displacement, we define the vector-valued function space

where \(\mathcal {H}^1 \left( \Omega _i \right) \) denotes the first-order Sobolev space on \(\Omega _i\). The primal field is chosen from the set

Lagrange multipliers \(\varvec{\lambda }\) and their variations \(\delta \varvec{\lambda }\) are taken from a fractional Sobolev space, i.e., \(\varvec{\lambda }^a \in \varvec{\Lambda } \left( \Gamma ^a \right) \equiv \left[ \mathcal {H}^{-1/2} \left( \Gamma ^a \right) \right] ^d\), with \(a = g,c\).

Multiple-point constraints (MPCs) are also investigated in this work to enforce both perfect bonding and contact. In this case, simple constraint equations express the slave degrees of freedom (DOFs) as functions of the master DOFs. As there is no need for Lagrange multipliers, the rightmost two terms in (9) disappear.
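
A common way to apply homogeneous MPCs is to write the full DOF vector as \(\varvec{u} = \varvec{T}\varvec{u}_m\), with \(\varvec{u}_m\) the master DOFs, and to solve the reduced system \(\varvec{T}^{\intercal }\varvec{K}\varvec{T}\,\varvec{u}_m = \varvec{T}^{\intercal }\varvec{F}\). The toy problem below (hypothetical names; two unit springs representing two bars whose interface nodes are tied) is only a sketch of this elimination and not the specific constraint set used in this work.

```python
import numpy as np


def apply_mpc(K, f, T):
    """Eliminate slave DOFs through u = T @ u_m (homogeneous MPCs).

    Each row of T expresses one DOF of the full system as a linear
    combination of the retained (master) DOFs.
    """
    K_r = T.T @ K @ T
    f_r = T.T @ f
    return K_r, f_r


k, P = 1.0, 1.0
# Full DOF order: [a1, b0, b1]; bar A is fixed at its other end,
# bar B is loaded by P at b1 and is disconnected until the tie is imposed.
K = np.array([[k, 0.0, 0.0],
              [0.0, k, -k],
              [0.0, -k, k]])
f = np.array([0.0, 0.0, P])
# Tie b0 (slave) to a1 (master); the masters are [a1, b1].
T = np.array([[1.0, 0.0],
              [1.0, 0.0],
              [0.0, 1.0]])
K_r, f_r = apply_mpc(K, f, T)
u = T @ np.linalg.solve(K_r, f_r)   # recover all DOFs, slaves included
print(u)
```

The tied system behaves as two springs in series, so the loaded end moves by 2P/k; without the tie, K is singular.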

Next we discuss the discretization of Eqs. (13) through (15). We start with the enriched formulation used in elements where constraints are to be enforced.

3.3 The finite-dimensional interface-enriched generalized finite element formulation

It is not straightforward to couple meshes or simulate contact when the discretizations of the domains are non-matching, i.e., when there are hanging nodes in the former case or contacting nodes do not occupy the same location in space in the latter (no node-to-node contact). To alleviate the burden associated with the lack of mesh conformity, we employ an enrichment scheme inspired by the Interface-enriched Generalized Finite Element Method (IGFEM) [19, 21], whereby enriched nodes are created along material interfaces to resolve weak discontinuities (those in the field gradient). All domains are discretized into non-overlapping finite elements \(e_k\) such that \(\Omega _i^h = \cup _k e_k\) and \(e_k \cap e_l = \emptyset \, (\forall k \ne l)\), with the entire computational domain given by \(\cup _i \Omega _i^h = \Omega ^h \approx \Omega \). To solve the finite-dimensional form of Eqs. (13)–(15) numerically, the trial solution and the weight function are chosen from the interface-enriched generalized finite element space.

In (18) the first term represents the standard finite element component and the second term the enrichment. In the former, \(\iota _h\) is the index set of all standard nodes in the mesh, and \(N_i\) is the ith standard Lagrange interpolation function associated with degrees of freedom \(\varvec{u}_i\) (which physically represent the nodal displacement at standard node \(\varvec{x}_i\)). In the enrichment term, \(\iota _e\) is the index set of enriched nodes, and \(\psi _i\) is the enrichment function associated with enriched DOFs \(\varvec{\alpha }_i\). Finally, \(s_i\) is a scaling factor used to improve the condition number of the system matrix after assembly. This factor, which was studied thoroughly in a recent publication [21], is required for a robust implementation that handles interfaces that come arbitrarily close to standard mesh nodes.
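
Collecting these definitions, the enriched trial function can be sketched as follows, assuming that \(s_i\) multiplies the enrichment term and that each \(\psi _i\) is the linear Lagrange shape function of the integration elements associated with enriched node \(\varvec{x}_i^\perp \), so that it equals one there and vanishes at every other node of the integration mesh. Evaluating the approximation at the nodes then shows what the standard and enriched DOFs represent:

```latex
\[
\varvec{u}^h(\varvec{x}) = \sum_{i \in \iota_h} N_i(\varvec{x})\, \varvec{u}_i
  + \sum_{i \in \iota_e} s_i\, \psi_i(\varvec{x})\, \varvec{\alpha}_i ,
\]
\[
\varvec{u}^h(\varvec{x}_j) = \varvec{u}_j \quad (j \in \iota_h), \qquad
\varvec{u}^h(\varvec{x}_j^\perp) = \sum_{i \in \iota_h} N_i(\varvec{x}_j^\perp)\, \varvec{u}_i
  + s_j\, \varvec{\alpha}_j \quad (j \in \iota_e).
\]
```

The second relation is what lets an enriched node act as a node-to-node partner: prescribing \(\varvec{u}^h(\varvec{x}_j^\perp)\) in terms of the displacement of the opposite standard node is a linear relation between the standard DOFs of the enriched element, \(\varvec{\alpha }_j\), and that node's DOFs.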

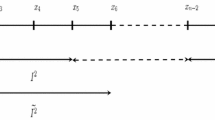

The enrichment function \(\psi _i\) is constructed with the aid of Lagrange shape functions in integration elements (see enrichment \(\psi _i\) corresponding to enriched node \(\varvec{x}_{i}^\perp \) in Fig. 2), which not only form the support of \(\psi _i\) but, as their name implies, are also used for the numerical quadrature of the local stiffness and force arrays. Unlike the original IGFEM formulation, where the support of an enrichment comprises integration elements on both sides of a material interface, here the support comprises only integration elements on one side of the non-conforming interface or contact surface. In our implementation, a computational geometry engine creates enriched nodes along non-conforming interfaces or contact surfaces so that each enriched node corresponds to a standard mesh node on the surface of the opposite domain. As shown in Fig. 1, the location of mismatching mesh nodes can be determined directly along the interface \(\Gamma ^g\) in mesh coupling problems, whereas for contact problems the closest-point projection [1, 92] onto an element edge is used to determine the location of enriched nodes on either side of the contact surfaces.
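
The projection step can be sketched in 2D for straight element edges. The functions below (hypothetical names, brute-force search over all edges) project each node of the opposite surface onto its closest edge and keep the projected point as an enriched-node location, skipping projections that land on an edge end point because those coincide with an existing standard node. A production geometry engine would also need spatial search structures and normal-orientation checks, which are omitted here.

```python
import math


def project_onto_segment(p, a, b):
    """Closest-point projection of point p onto the straight edge [a, b] in 2D.

    Returns (xi, q, dist): the normalized coordinate xi in [0, 1] along the
    edge, the projected point q = a + xi*(b - a), and the distance |p - q|.
    """
    ex, ey = b[0] - a[0], b[1] - a[1]
    length2 = ex * ex + ey * ey
    xi = ((p[0] - a[0]) * ex + (p[1] - a[1]) * ey) / length2
    xi = min(max(xi, 0.0), 1.0)               # clamp to the edge
    q = (a[0] + xi * ex, a[1] + xi * ey)
    return xi, q, math.hypot(p[0] - q[0], p[1] - q[1])


def locate_enriched_nodes(opposite_nodes, edges, tol=1e-12):
    """Return (edge index, xi, point) for every opposite node whose closest
    projection falls strictly inside an edge."""
    located = []
    for p in opposite_nodes:
        candidates = [project_onto_segment(p, a, b) + (e,)
                      for e, (a, b) in enumerate(edges)]
        xi, q, dist, e = min(candidates, key=lambda c: c[2])
        if tol < xi < 1.0 - tol:              # end points are standard nodes
            located.append((e, xi, q))
    return located


edges = [((0.0, 0.0), (1.0, 0.0)), ((1.0, 0.0), (2.0, 0.0))]   # side A
nodes_b = [(0.0, 0.01), (0.5, 0.01), (1.5, 0.02), (2.0, 0.0)]  # side B
print(locate_enriched_nodes(nodes_b, edges))
```

Running the same routine with the roles of the two surfaces exchanged gives the second pass.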

3.4 Stiffness matrix contributions

In this section we derive the discrete expressions for the stiffness contributions given by the first term in (13). The assembly of the local stiffness matrix \(\varvec{k}_i\) and force vector \(\varvec{f}_i\) for finite elements that do not contain enriched nodes follows standard procedures. For enriched integration elements, and with reference to [14, 19], local arrays can be expressed as

with

where \(\varvec{D}\) is the constitutive matrix, and \(\varvec{B}_u\) and \(\varvec{B}_\alpha \) are the strain-displacement matrices of shape and enrichment functions, respectively [22, 93]. Finally, the global stiffness matrix \(\varvec{K}\) and global force vector \(\varvec{F}\) are obtained by standard assembly of their local counterparts. Denoting by \(\mathbb {A}\) the standard finite element assembly operator, global arrays are therefore

which, by making explicit the contributions of standard and enriched components, can be expressed as
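The local arrays of enriched integration elements and their assembly can be illustrated with a 1D analogue: a bar of axial stiffness \(EA\) (standing in for \(\varvec{D}\)) whose parent element \([x_0, x_1]\) is split at an enriched node \(x_e\). Since \(B_u\) and \(B_\alpha\) are constant on each integration element, a one-point rule integrates \(\varvec{B}^\intercal EA\,\varvec{B}\) exactly. This is a sketch under these simplifying assumptions, not the authors' 2D implementation; the helper names are hypothetical.

```python
import numpy as np

def enriched_bar_stiffness(x0, x1, xe, EA=1.0, s=1.0):
    """Local stiffness of a 2-node bar with one enriched node xe in (x0, x1).

    DOF order is [u0, u1, alpha], i.e. the block matrix [[k_uu, k_ua], [k_au, k_aa]].
    The parent element is split into integration elements [x0, xe] and [xe, x1].
    """
    h = x1 - x0
    B_u = np.array([-1.0 / h, 1.0 / h])        # derivatives of the parent shape functions
    k = np.zeros((3, 3))
    # dpsi/dx is +1/(xe - x0) on the left and -1/(x1 - xe) on the right integration element
    for a, b, dpsi in ((x0, xe, 1.0 / (xe - x0)), (xe, x1, -1.0 / (x1 - xe))):
        B = np.append(B_u, s * dpsi)            # [B_u, B_alpha] on this integration element
        k += (b - a) * EA * np.outer(B, B)      # one-point rule, exact for constant B
    return k

def assemble(n_dofs, local_mats, dof_maps):
    """Standard assembly operator: scatter-add local matrices into a global one."""
    K = np.zeros((n_dofs, n_dofs))
    for k_loc, dofs in zip(local_mats, dof_maps):
        K[np.ix_(dofs, dofs)] += k_loc
    return K
```

In this 1D setting the coupling block \(k_{u\alpha}\) vanishes and \(k_{\alpha\alpha} = EA\, s^2 \left[ 1/(x_e - x_0) + 1/(x_1 - x_e) \right]\), which grows without bound as \(x_e\) approaches a mesh node when \(s = 1\). For \(x_0 = 0\), \(x_1 = 1\) and \(s = \sqrt{2\zeta(1-\zeta)}\) with \(\zeta = x_e\), this diagonal entry becomes \(2EA\) regardless of \(\zeta\).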

3.5 Constraint enforcement

The enriched space alone does not ensure continuity across non-conforming interfaces and does not prevent interpenetration of contact surfaces. To properly resolve the field at mesh coupling and contact interfaces, constraints are imposed between enriched and standard DOFs. Although the use of discrete MPCs and the augmented Lagrange method (ALM) is well established, their application in conjunction with this enriched framework differs from the standard setting, and both methods are therefore discussed in detail in this section.

3.5.1 Multiple-point constraint method

Conventionally, multiple-point constraints (MPCs) are used in a “master and slave” setting to enforce some form of compatibility between nodes, e.g., continuity along an interface or periodicity at opposite sides of a domain; an MPC can simply be regarded as a way to create a “tie” among nodes. The method can be used in a single-pass or a two-pass approach: in the former, one side is selected as the master surface; in the latter, each side serves as the master to the opposite side. In both approaches, the constraint is expressed as a linear combination of displacement vectors as in

where \(\varvec{u}_s\) is the displacement of the slave node \(\varvec{x}_s\), \(\iota _m\) is the index set of the slave’s master nodes, and \(\varvec{g}\) is the gap vector. In mesh coupling problems, as the objective is to ensure continuity across an interface, the homogeneous MPC is used, i.e., \(\varvec{g} = \varvec{0}\). This constraint method is simple and straightforward to implement. However, as already discussed in the introduction, continuity cannot be ensured in a single-pass approach, and the system may become over-constrained in a two-pass approach [3]. In the following, we provide an alternative formulation of MPCs, combined with the enriched approach outlined in the previous section, for handling mesh coupling and contact problems without slip or separation. In the context of IGFEM, MPCs have been employed in immersed domain problems [22] and in the enforcement of Bloch-Floquet periodic boundary conditions [94]. Note, however, that ALM is preferred for general contact problems, for reasons explained in the next section. Where MPCs are applicable, the objective is to ensure \(C^0\)-continuity across non-conforming interfaces or contact surfaces in a given direction.

For a standard hanging node \(\varvec{x}_i\), an enriched node \(\varvec{x}_{i}^\perp \) is created at the same location, although placed in the adjacent element (see Fig. 2). The constraint equation enforces that the displacements of the standard and enriched nodes, \(\varvec{u}_i\) and \(\varvec{u}_{i}^\perp \), respectively, be equal. Referring back to (18), this condition is expressed as

\[\varvec{u}_i = \sum _{j \in \iota _{h}^\perp } N_j \left( \varvec{x}_{i}^\perp \right) \varvec{u}_j + s_i \varvec{\alpha }_i , \tag{28}\]

where \(\smash {\iota _{h}^\perp } \subset \iota _h\) is the subset of mesh nodes with standard shape functions whose supports intersect the support of the enrichment function. Mathematically, if the support of a standard shape function is defined as \(\omega _i = \left\{ \varvec{x} \vert \, N_i \left( \varvec{x} \right) \ne 0 \right\} \), and similarly for an enriched function \( \smash {\omega _i^\perp } = \left\{ \varvec{x} \vert \, \psi _i \left( \varvec{x} \right) \ne 0 \right\} \), then \(\smash {\iota _{h}^\perp } = \left\{ i \in \iota _h \vert \, \omega _{i} \cap \smash {\omega _i^\perp } \ne \emptyset \right\} \). Note that in Eq. (28) we use \(\psi _i \left( \smash {\varvec{x}_{i}^\perp } \right) = 1\).

A similar constraint is enforced for every pair of hanging standard node and enriched node on both sides of the non-conforming interface, so the proposed procedure actually corresponds to a two-pass approach. However, compared to a two-pass approach based on Eq. (27) alone, the enriched DOFs render the interface response less stiff. These constraints can be written in matrix form for all enriched nodes in the system as

where \(\varvec{U} = \begin{bmatrix} \varvec{u}_1 \ldots&\varvec{\alpha }_1 \ldots \end{bmatrix}^\intercal \) is the vector containing all standard and enriched DOFs, \(\bar{\varvec{U}}\) is the vector of independent (unconstrained) DOFs, and \(\varvec{T}\) is a transformation matrix storing coefficients \(N_i\) and \(s_i\) in Eq. (28). Following standard procedures for MPCs [22], the unconstrained system \(\varvec{K} \varvec{U} = \varvec{F} \), where \(\varvec{K} \) and \(\varvec{F}\) were given in (26), is transformed into \(\bar{\varvec{K}}\bar{\varvec{U}} = \bar{\varvec{F}}\), where \(\bar{\varvec{K}} = \varvec{T}^{\intercal } \varvec{K} \varvec{T}\), \(\bar{\varvec{F}} = \varvec{T}^{\intercal } \varvec{F}\), and the transformation matrix \(\varvec{T}\) ties both standard and enriched nodes.
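The transformation \(\bar{\varvec{K}} = \varvec{T}^\intercal \varvec{K} \varvec{T}\), \(\bar{\varvec{F}} = \varvec{T}^\intercal \varvec{F}\) can be sketched for scalar DOFs (in 2D, one such constraint per displacement component). As an assumption made here for illustration, each constraint of type (28) is solved for the enriched DOF, \(\alpha_i = (u_i - \sum_j N_j(\varvec{x}_i^\perp) u_j)/s_i\), so that all standard DOFs stay independent even when both sides of the interface carry hanging nodes; the text does not state which DOF is eliminated. All function names are hypothetical.

```python
import numpy as np

def mpc_transformation(n_dofs, constraints):
    """Build T with U = T @ U_bar for homogeneous linear MPCs.

    constraints: {slave_dof: [(master_dof, coeff), ...]}; masters must be
    independent (non-slave) DOFs. Returns T and the list of independent DOFs.
    """
    free = [d for d in range(n_dofs) if d not in constraints]
    col = {d: j for j, d in enumerate(free)}
    T = np.zeros((n_dofs, len(free)))
    for d in free:
        T[d, col[d]] = 1.0                  # independent DOFs map to themselves
    for slave, terms in constraints.items():
        for master, coeff in terms:
            T[slave, col[master]] += coeff  # slave = sum(coeff * master)
    return T, free

def solve_with_mpc(K, F, constraints):
    """Solve K U = F subject to the MPCs via K_bar = T^T K T and F_bar = T^T F."""
    T, _ = mpc_transformation(K.shape[0], constraints)
    U_bar = np.linalg.solve(T.T @ K @ T, T.T @ F)
    return T @ U_bar

def enriched_dof_constraint(u_i, alpha_i, masters, s_i):
    """Constraint (28) solved for alpha_i; masters = [(dof_j, N_j(x_perp)), ...]."""
    terms = [(u_i, 1.0 / s_i)] + [(j, -N_j / s_i) for j, N_j in masters]
    return {alpha_i: terms}
```

For a symmetric positive definite \(\varvec{K}\), the displacements obtained this way coincide with those of the equivalent Lagrange multiplier (saddle point) formulation, since both minimize the same energy over the same constrained space.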

Notice that only a few additional enriched DOFs need to be added, and applying the MPC method between master and slave DOFs is straightforward. Numerical examples for coupling non-conforming discretizations will be shown in Sect. 5 to illustrate the accuracy and robustness of the approach.

3.5.2 Augmented Lagrange method

For general contact problems where relative slip and separation occur, it is not convenient to use MPCs to enforce constraints because the contact status needs to be detected during the nonlinear iterative contact step. The augmented Lagrange method can be regarded as a combination of the penalty and Lagrange multiplier methods, whereby the inequality-constrained minimization contact problem is transformed into an unconstrained saddle point problem. Compared with the pure penalty and Lagrange multiplier methods, this approach decreases the condition number of the stiffness matrix and is able to satisfy constraints exactly with finite penalty parameters [95]. In this section we obtain the discrete form corresponding to the weak form (13)-(15). When considering Lagrange multipliers, we seek a solution to the system \(\hat{\varvec{K}} \hat{\varvec{U}} = \hat{\varvec{F}}\) with

where \(\hat{\varvec{U}} = \begin{bmatrix}\varvec{u}&\varvec{\alpha }&\varvec{\lambda }\end{bmatrix}^\intercal \) is the vector of unknowns in terms of standard DOFs, enriched DOFs, and Lagrange multipliers. The solution is found incrementally by making use of a generalized Newton loop [96, 97], i.e., \(\hat{\varvec{K}} \Delta \hat{\varvec{U}} = \Delta \hat{\varvec{F}}\), where \(\hat{\varvec{K}}\) is mostly linear (we assume linear kinematics) and the nonlinear components arise due to contact. In the above expressions, a hat explicitly differentiates this system from that used in the MPC method. Notice that a hat is also used for submatrix components, implying that the original matrices are modified by applying coupling terms. In other words, the system arrays (26) are augmented with the contributions of the Lagrange multipliers, which are obtained by assembling the contributions of \(n_\lambda \) constraints:

The explicit expressions of the Lagrange multiplier contributions \(\varvec{k}_i^c\) and \(\varvec{f}_i^c\) are given next.

3.5.3 Contact contribution

Figure 3 shows three standard mesh nodes \(\varvec{x}_i\), \(\varvec{x}_j\), and \(\varvec{x}_k\). Without loss of generality and with reference to mesh node \(\varvec{x}_i\), we denote by \(\varvec{x}_{i}^\perp \) the position of the corresponding enriched node on the opposite surface. The position of \(\varvec{x}_{i}^\perp \) is determined by projecting \(\varvec{x}_i\) along the unit normal vector \(\varvec{n}_i\). The gap function \(g_{n,i}\) along \(\varvec{n}_i\) between \(\varvec{x}_i\) and \(\varvec{x}_{i}^\perp \) is expressed as

\[g_{n,i} = \left( \varvec{x}_i - \varvec{x}_{i}^\perp \right) \cdot \varvec{n}_i = g_{0,i} + \left( \varvec{u}_i - \varvec{u}_{i}^\perp \right) \cdot \varvec{n}_i , \tag{32}\]

where \(g_{0,i} = \left( \varvec{X}_i - \varvec{X}_{i}^\perp \right) \cdot \varvec{n}_i\) is the initial gap, \(\varvec{X}_i\) and \(\varvec{X}_{i}^\perp \) represent the initial positions of standard and enriched nodes, respectively, and \(\varvec{u}_i = \varvec{x}_i - \varvec{X}_i\) and \(\varvec{u}_{i}^\perp = \varvec{x}_{i}^\perp - \varvec{X}_{i}^\perp \) their corresponding displacements. Referring back to Fig. 3, the standard FEM shape functions attached to nodes \(\varvec{x}_j\) and \(\varvec{x}_k\) are the only ones that contribute to the displacement field at the location of enriched node \( \varvec{x}_{i}^\perp \). By using (18) to express its displacement, the gap reads

where \( \otimes \) denotes the Kronecker product, \(\varvec{N}_i =\begin{bmatrix} 1&- N_j(\varvec{x}_{i}^\perp )&-N_k(\varvec{x}_{i}^\perp )&- s_i\psi _i \left( \varvec{x}_{i}^\perp \right) \end{bmatrix}\) with \(\psi _i \left( \varvec{x}_{i}^\perp \right) = 1\), \(\varvec{U}_{i}^\perp = \begin{bmatrix} \varvec{u}_i&\varvec{u}_j&\varvec{u}_k&\varvec{\alpha }_i \end{bmatrix}^\intercal \), and \(\varvec{I}\) is the \(d \times d\) identity matrix. Since the initial gap is constant during the analysis, the variation of (33) is given by

The weak form of the contact contribution given by (15) is approximated by a summation over the \(n_\lambda \) active contact nodes. Substituting \(g_{n,i}\) and \(\delta g_{n,i}\) with (33) and (34), respectively, the total contact contribution reads

Notably, in the discretized right-hand side of (35) the Lagrange multipliers represent the forces acting on the enriched nodes. By introducing \(\delta \hat{\varvec{U}}_i = \begin{bmatrix} \delta \varvec{U}_{i}^\perp&\delta \lambda _{n,i} \end{bmatrix} ^\intercal \), (35) can be expressed as:

with

Equation (36) can be solved iteratively, and in this work we use the generalized Newton method [96, 97], where Lagrange multipliers are updated together with the primal field in the same iterative loop. In this method, which converges faster than Uzawa’s algorithm, linearization of the contact contribution is required, leading to

where \(\Delta \hat{\varvec{U}}_i = \begin{bmatrix} \Delta \varvec{U}_{i}^\perp&\Delta \lambda _{n, i} \end{bmatrix}^\intercal \) and

Here \(\varvec{k}_i^c\) and \(\varvec{f}_i^c\) refer to the contact contribution of mesh node i to the stiffness matrix and the load vector in a generalized Newton loop.

Note that this formulation and its solution procedure are similar to those of NTN contact (we refer the reader to [1]). The only modification required concerns the vector \(\varvec{C}_i\), which is based on the vector \(\varvec{N}_i\) of enriched node \(\varvec{x}_{i}^\perp \).
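The gap of an enriched node pair follows directly from the Kronecker-product form of \(\varvec{N}_i\) given above. The sketch below assumes 2D (\(d = 2\)) and \(\psi_i(\varvec{x}_i^\perp) = 1\), and takes \(\varvec{C}_i = (\varvec{N}_i \otimes \varvec{I})^\intercal \varvec{n}_i\), i.e., the gradient of \(g_{n,i}\) with respect to \(\varvec{U}_i^\perp\); that identification, and the function name, are assumptions of this sketch.

```python
import numpy as np

def gap_and_C(n, N_j, N_k, s, g0, U_perp):
    """Normal gap g = g0 + n . ((N_i kron I) U_perp) and its gradient C_i.

    U_perp stacks [u_i, u_j, u_k, alpha_i], each a d-vector; psi_i(x_perp) = 1.
    """
    d = n.size
    N_vec = np.array([1.0, -N_j, -N_k, -s])   # row vector N_i
    NI = np.kron(N_vec, np.eye(d))             # d x 4d operator N_i kron I
    C = NI.T @ n                               # dg/dU_perp, length 4d
    return g0 + C @ U_perp, C
```

Since \(N_j(\varvec{x}_i^\perp) + N_k(\varvec{x}_i^\perp) = 1\) on the edge, a rigid translation with \(\varvec{\alpha}_i = \varvec{0}\) leaves the gap unchanged, whereas moving node \(\varvec{x}_i\) towards the opposite surface reduces it.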

3.5.4 Inactive constraints

In an inactive state of contact, i.e., when \(\hat{\lambda }_n > 0\), Eq. (15) is approximated by

where \(n_i\) is the number of inactive constraints, and the incremental equation is therefore expressed as

For the inactive constraints, the contributions

are considered in (31), which in practice amounts to updating the Lagrange multipliers as described in Algorithm 1. As in the active case described above, the solution increments are obtained with the generalized Newton method. It is worth noting that, compared to the standard way of applying constraints, only the enriched part described in Sect. 3.4 needs to be added.
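A scalar toy problem illustrates the switch between active and inactive constraints while displacement and multiplier are updated together in each Newton iteration: a spring of stiffness \(k\) under load \(f\) approaches a rigid obstacle at initial distance \(g_0\). The conventions are assumptions borrowed from the standard augmented Lagrangian treatment of contact rather than taken from this paper: gap \(g = g_0 - u\), augmented multiplier \(\hat\lambda = \lambda + \varepsilon g\), contact active if \(\hat\lambda \le 0\), active contribution \(\lambda g + \tfrac{\varepsilon}{2} g^2\), and inactive contribution \(-\lambda^2/(2\varepsilon)\). The function name is hypothetical.

```python
import numpy as np

def alm_newton_1d(k, f, g0, eps, tol=1e-10, max_iter=20):
    """Newton iterations on (u, lam) for one spring pressed against a rigid obstacle.

    Assumed conventions: gap g = g0 - u (g >= 0 means no penetration),
    augmented multiplier lam_hat = lam + eps * g, contact active iff lam_hat <= 0.
    Returns (u, lam, number_of_iterations).
    """
    u, lam = 0.0, 0.0
    for it in range(max_iter):
        g = g0 - u
        lam_hat = lam + eps * g
        if lam_hat <= 0.0:
            # active: stationarity of 1/2 k u^2 - f u + lam g + eps/2 g^2
            R = np.array([k * u - f - lam_hat, g])
            J = np.array([[k + eps, -1.0], [-1.0, 0.0]])
        else:
            # inactive: stationarity of 1/2 k u^2 - f u - lam^2 / (2 eps)
            R = np.array([k * u - f, -lam / eps])
            J = np.array([[k, 0.0], [0.0, -1.0 / eps]])
        if np.linalg.norm(R) < tol:
            return u, lam, it
        du, dlam = np.linalg.solve(J, -R)   # primal and dual increments solved together
        u, lam = u + du, lam + dlam
    raise RuntimeError("Newton iterations did not converge")
```

For \(k = 10\), \(f = 30\), \(g_0 = 1\), \(\varepsilon = 100\) the spring closes the gap and the sketch returns \(u = 1\), \(\lambda = -20\) (a compressive contact force); for \(f = 5\) the constraint stays inactive with \(u = 0.5\) and \(\lambda = 0\).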

4 Implementation

In this section we discuss the implementation of the method in a displacement-based FEM framework. Since the calculation of the element local arrays considering enrichment functions has been detailed elsewhere [14, 19], here we only focus on the implementation of the constraints to enforce non-conforming mesh coupling and contact. Because the use of MPCs for coupling non-conforming meshes is standard [98, pp. 325–340], we only provide the detailed pseudo-code for handling contact problems (where the coupling using LMs is also included).

4.1 Coupling of non-conforming meshes

For each non-conforming node, an enriched node with the same coordinates is created on the other side of the non-conforming interface as shown in Fig. 2. The element that contains this enriched node is then split into integration elements [93]. An ordered tree data structure is recommended to store the associations among integration elements and their mesh parent elements. Integration elements are used to perform the numerical quadrature of elemental local stiffness and force arrays given by (19)–(24). The assembly of these contributions into the corresponding global counterparts follows standard procedures. MPCs or LMs are then used to enforce continuity constraints among non-conforming mesh nodes and their enriched slaves; while the application of MPCs is done according to standard procedures, the use of LMs can be regarded as a simplified version of the contact problem without a convergence test (more on this below).
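The splitting of parent elements into integration elements can be sketched for linear triangles, assuming for simplicity that each cut element contains a single enriched node lying on one of its edges (elements receiving several enriched nodes would need repeated splitting). A flat list of (parent, integration element) pairs stands in here for the ordered tree recommended above; all names are hypothetical.

```python
import numpy as np

def signed_area(t):
    """Signed area of a triangle given as a 3x2 array (positive if counter-clockwise)."""
    (x1, y1), (x2, y2), (x3, y3) = t
    return 0.5 * ((x2 - x1) * (y3 - y1) - (x3 - x1) * (y2 - y1))

def split_at_edge_node(tri, edge, p):
    """Split a linear triangle into two integration triangles at point p on edge (a, b).

    If (a, b) follows the parent's counter-clockwise vertex order, both
    sub-triangles are counter-clockwise as well.
    """
    a, b = edge
    c = 3 - a - b                      # local index of the vertex opposite the edge
    p = np.asarray(p, dtype=float)
    return [np.array([tri[a], p, tri[c]]), np.array([p, tri[b], tri[c]])]

def build_integration_elements(mesh_tris, cuts):
    """Return (parent_id, integration_triangle) pairs.

    cuts maps a parent element id to (edge, point) for its single enriched node;
    uncut elements act as their own integration element.
    """
    out = []
    for eid, tri in enumerate(mesh_tris):
        if eid in cuts:
            edge, p = cuts[eid]
            out.extend((eid, t) for t in split_at_edge_node(tri, edge, p))
        else:
            out.append((eid, np.asarray(tri, dtype=float)))
    return out
```

Keeping the parent index with each integration element is what allows the quadrature on integration elements to be scattered back to the parent element's standard DOFs during assembly.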

4.2 Contact

We use a generalized Newton method to solve the nonlinear contact load increment, following the procedure outlined in Algorithm 1, which is now described. At each load increment we create enriched nodes, using the closest projection method [1, 92] to determine their location (refer to Fig. 3). Integration elements are created afterwards, similarly to the procedure just discussed for the coupling of non-conforming meshes. Here, as the relative displacement between contacting bodies can be neglected, we only determine the locations of enriched nodes at the beginning of each contact step, in analogy with the active set strategy in NTS contact [1]. For simplicity, we first detect enriched nodes without checking their gap in the normal direction; we then compute the gap and, if it is larger than zero, these nodes make no contribution to the stiffness or internal force calculation, based on the inactive constraint formulation described in Sect. 3.5. This approach helps convergence within a step when nodes are not in contact at the start of a step but come into contact during the iterations.
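The closest-point projection that locates an enriched node on the opposite contact surface can be sketched for a single straight edge. The orientation convention (unit normal taken as the left-hand normal of the edge direction, so that a positive value means separation) is an assumption of this sketch, as is the function name. The returned local coordinate \(\zeta\) gives the shape function values \(1-\zeta\) and \(\zeta\) of the edge nodes at the enriched node, and is also the quantity entering the scaling \(s_i = \sqrt{2\zeta(1-\zeta)}\) of Sect. 5.3.

```python
import numpy as np

def project_onto_segment(x, xa, xb):
    """Closest-point projection of node x onto the segment [xa, xb] in 2D.

    Returns the local coordinate zeta in [0, 1], the projected point, and the
    signed distance along the left-hand unit normal of xa -> xb. Points projecting
    beyond the segment are clipped to an end point; a full search would test all
    candidate edges and keep the nearest.
    """
    x, xa, xb = (np.asarray(v, dtype=float) for v in (x, xa, xb))
    t = xb - xa
    L2 = t @ t
    zeta = np.clip((x - xa) @ t / L2, 0.0, 1.0)
    xp = xa + zeta * t
    n = np.array([-t[1], t[0]]) / np.sqrt(L2)   # assumed outward normal convention
    return zeta, xp, (x - xp) @ n
```

A negative signed distance indicates penetration, which is the situation the contact constraints are meant to exclude.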

Once the number of enriched nodes is determined, the number of required Lagrange multipliers is also determined (the cardinality of set \(\mathcal {N}\) is denoted \(\vert \mathcal {N} \vert \) in Algorithm 1). The total number of DOFs, which includes the DOFs for displacement and Lagrange multipliers in the generalized Newton method, is used to initialize the global arrays. Notice that in the displacement and Lagrange multiplier vectors, only the elements that correspond to active constraints are kept (and zeros are added for newly added enriched DOFs and multipliers). The unconstrained global arrays are then assembled considering also the enriched contributions [14].

The subsequent loop over the index set of enriched nodes adds the contribution of contact constraints to the global arrays. Augmented Lagrange multipliers are computed from the gap and the penalty parameter. Note that contributions are added only if there is contact, i.e., \(\hat{\lambda }_{n,i} \le 0\).

After solving the system of equations and updating the primal (displacement) and dual (Lagrange multiplier) fields, a convergence check is performed. When the norms of the increments of both fields are lower than a user-specified tolerance, the analysis proceeds to the next contact step. As mentioned above, LMs can also be used for the mesh coupling problem, which can be regarded as a contact analysis where the enriched positions (mismatching nodes) are known at the beginning of the analysis; its solution can then be obtained directly in one iteration.

5 Numerical examples

The accuracy and robustness of the proposed method are now demonstrated by means of numerical examples. Homogeneous linearly elastic materials and plane strain conditions are chosen. For convenience, no units are adopted, so results are valid for any consistent unit system. Constant strain triangular elements are used with a one-point quadrature rule for both standard and integration elements.

5.1 Contact patch test

The commonly used contact patch test of Taylor and Papadopoulos [24] is used to study the ability of our method to correctly transfer contact tractions. The problem consists of an elastic substrate with a rectangular punch as illustrated in Fig. 4. Both the punch and the substrate are subject to a uniformly distributed vertical unit traction \(\bar{\varvec{t}}\) along their top edges. The substrate and the punch have the same Young’s modulus \(E = 10\) and Poisson’s ratio \(\nu = 0.3\). The problem is set up so that the punch comes into contact with the substrate because of the applied pressure. Since the same material is used for the punch and substrate, and the pressure is uniformly distributed on the top surfaces, the substrate should experience a constant state of strain and stress.

The problem is then solved using the following discretization methods: (a) Standard FEM using a single-pass MPC; (b) Standard FEM with a two-pass MPC; (c) Our enriched method using a two-pass MPC; and (d) Our enriched method using ALM.

For the single-pass MPC example, we defined the top surface of the substrate as the master and the bottom surface of the punch as the slave (switching master and slave surfaces leads to an unconstrained system). Finally, the same strategy is used to integrate the applied pressure in all cases.

Deformed configurations (\(4\times \) magnification) for the contact patch test showing the element stress in the \(\varvec{e}_2\) direction and the contact tractions plotted at nodes with red arrows: Standard FEM with single- (a) and two-pass (b) MPC; Enriched approach with a two-pass MPC (c) and ALM (d)

a Infinite plate with circular hole subjected to uniform traction at \(x_1 = \pm \infty \). The square region indicates the computational domain; b Typical conforming standard FEM mesh; c Typical mesh with a non-conforming interface. Convergence results are shown for the cases of horizontal and vertical interfaces (for the latter the mesh is rotated)

Figure 5 shows the stress field on the deformed configuration for all methods. The standard FEM single-pass MPC method (panel (a)) is unable to ensure continuity and results in a non-constant stress field; notice also the interpenetration between the substrate and the punch. The standard FEM two-pass MPC method (panel (b)) ensures continuity along the contact boundary and passes the patch test. The results obtained with our enriched formulation (two-pass MPC in panel (c) and ALM in panel (d)) show that the method ensures \(C^0\)-continuity and passes the patch test. In addition, exact integration of the applied pressure is readily possible because of the presence of integration elements with an enriched node at the location where the applied pressure is discontinuous.

Convergence results for the horizontal (top row) and vertical (bottom row) non-conforming interface. Figures show the error in \(\mathcal {L}^2\)-norm (left column) and energy norm (right column) as a function of the total number of DOFs \(n_d\). The curves for enriched methods with MPC and LM overlap

5.2 Convergence study

The convergence of the proposed method is investigated by means of the classical problem of a circular hole in an infinite plate, for which the exact solution can be found in Refs. [99, 100]. As shown in the schematic of Fig. 6a, a square computational domain of size \(L=20\) with a centered hole of radius \(r=4\) is chosen. The material properties of the plate are \(E=10\) and \(\nu = 0.3\). On the boundary of the square domain we prescribe the exact displacement field corresponding to the uniform far-field traction \(\bar{\varvec{t}} = \pm \sigma _\infty \varvec{e}_1\), with \(\sigma _\infty = 1\).

Figure 6 shows the two discretizations for this problem: a standard conforming FE mesh in panel (b) and, in panel (c), a mesh composed of two parts that are non-conforming along the coupling interface, where the ratio between the element sizes in the top and bottom domains equals 2 and is kept constant with mesh refinement. Four different analysis approaches are compared: i) standard FEM using conforming meshes; ii) two-pass MPC on non-conforming discretizations; iii) our enriched method using a two-pass MPC; and iv) our enriched method using LM. Since the single-pass MPC cannot ensure continuity along the coupling interface, as demonstrated in the previous example, it is not considered in this analysis.

Convergence is studied via the standard, \(\mathcal {L}^2\), and energy, \(\mathcal {E}\), norms of the error defined as

where quantities with and without the superscript h refer to approximate and exact solutions, respectively.

The plots in the top row of Fig. 7 show the convergence results for the configuration shown in Fig. 6c (horizontal interface). While the two-pass MPC method does not converge due to locking originating from an over-constrained interface [3, 101], the curves of the two enriched approaches overlap and achieve the same rate of convergence as standard FEM with conforming discretizations: about 1 for the \(\mathcal {L}^2\) norm and 0.5 for the energy norm, with respect to the total number of DOFs \(n_d\). Similar results, reported in the second row of Fig. 7, are obtained when the non-conforming mesh is rotated by \(90^\circ \), resulting in a vertical interface.
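For linear triangles in 2D, \(n_d \propto h^{-2}\), so the expected errors of order \(h^2\) in the \(\mathcal{L}^2\)-norm and of order \(h\) in the energy norm translate into rates of 1 and 0.5 with respect to \(n_d\), as reported above. The observed rates can be computed from consecutive refinement levels or from a least-squares fit in log-log scale; the sketch below is a generic post-processing helper with hypothetical names, not the authors' script.

```python
import numpy as np

def pairwise_rates(n_dofs, errors):
    """Rates r in e ~ C * n_d**(-r) between consecutive refinement levels."""
    log_n = np.log(np.asarray(n_dofs, dtype=float))
    log_e = np.log(np.asarray(errors, dtype=float))
    return -np.diff(log_e) / np.diff(log_n)

def fitted_rate(n_dofs, errors):
    """Least-squares slope of log(e) versus log(n_d), with the sign flipped."""
    slope, _ = np.polyfit(np.log(n_dofs), np.log(errors), 1)
    return -slope
```

Pairwise rates expose pre-asymptotic behavior or stagnation (such as the locking of the two-pass MPC), while the fitted rate summarizes the whole refinement sequence.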

The energy norm of the error corresponding to the four methods using the mesh with horizontal and vertical non-conforming interfaces are shown in Fig. 8, plotted per element (average value). The results corresponding to the enriched methods and standard FEM are in good agreement, whereas those obtained by the two-pass MPC (panel (b)) show some clear differences along the coupling interface.

This example shows that the performance of the proposed method for mesh coupling is essentially identical to that of standard FEM on conforming meshes. The fact that the same convergence rates are obtained indicates that the LBB condition is fulfilled. In contrast to the two-pass MPC method, our method avoids interface locking because enriching the primal field gives the interface more kinematic freedom. The enrichment therefore enables an accurate representation of the mechanical behavior at the coupling interface.

5.3 Stability

Following our previous work on enriched FEM [21, 22, 93], we investigate the stability of the proposed method. Using the same geometry as the contact patch test of Sect. 5.1, we examine the influence of punch location and mesh size on the condition number of the system matrix. We compute the condition number of the global system matrix \(\varvec{K}\) as

\[\kappa \left( \varvec{K} \right) = \frac{\lambda _\text {max}}{\lambda _\text {min}},\]

where \(\lambda _\text {max}\) and \(\lambda _\text {min}\) denote, respectively, the highest and lowest (non-zero) eigenvalues of the system matrix. No Dirichlet boundary conditions are enforced on the system and therefore we discard the lowest six eigenvalues, which correspond to the rigid body modes of both blocks.

We investigate the condition number of the matrices with MPC and ALM. For each approach, three variations of the enriched method are compared: \(\mathrm {ns}\)) the enriched method without scaling of the enrichment functions, i.e., \(s_i = 1\) in Eq. (18); \(\mathrm {os}\)) the enriched method with the optimal scaling proposed in Ref. [21], i.e., \(s_i = \sqrt{2 \zeta \left( 1- \zeta \right) }\), where \(0 \le \zeta \le 1\) denotes the (relative) location of the enriched node on the finite element side that contains it; and \(\mathrm {pc}\)) the enriched method without scaling, but using a diagonal (Jacobi-like) preconditioner such that \(\varvec{K}_{\mathrm {pc}} = \varvec{\Delta }\varvec{K} \varvec{\Delta }\), where \(\Delta _{ij} =\delta _{ij}/\sqrt{K_{ii}}\) is a diagonal matrix with \(\delta _{ij}\) denoting the Kronecker delta.
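The two ingredients of this study can be sketched with the helpers below: one evaluates \(\lambda_\text{max}/\lambda_\text{min}\) after discarding a given number of (near-)zero eigenvalues such as rigid body modes, the other applies the diagonal scaling \(\varvec{\Delta}\varvec{K}\varvec{\Delta}\). Taking absolute values of the eigenvalues (relevant for an indefinite saddle point matrix) and requiring a strictly positive diagonal are assumptions of this sketch; the names are hypothetical. For a 1D two-node bar with an unscaled enriched node at relative position \(\zeta\), the enriched diagonal entry grows like \(1/(\zeta(1-\zeta))\), and the diagonal scaling removes this growth.

```python
import numpy as np

def condition_number(K, n_zero=0):
    """kappa = lambda_max / lambda_min of symmetric K, ignoring the n_zero
    eigenvalues of smallest magnitude (e.g. rigid body modes)."""
    lam = np.sort(np.abs(np.linalg.eigvalsh(K)))[n_zero:]
    return lam[-1] / lam[0]

def jacobi_scale(K):
    """Symmetric diagonal scaling D K D with D_ii = 1 / sqrt(K_ii); assumes K_ii > 0."""
    d = 1.0 / np.sqrt(np.diag(K))
    return K * np.outer(d, d)
```

In the unscaled bar example the condition number is \(1/(2\zeta(1-\zeta))\), which blows up as \(\zeta \to 0\), whereas after scaling it equals 2 for any \(\zeta\).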

5.3.1 Effect of punch location

In the first test, the influence of the punch location on the condition number as it moves on the substrate is evaluated (see Fig. 9a). Both substrate and punch are discretized with two triangular elements (see Fig. 9b). Their material properties are, respectively, \(E_1=10, E_2=10{,}000\) and \(\nu _1=\nu _2=0.3\).

Figure 10 shows the condition number of the stiffness matrix as a function of the punch location. For MPC, the condition number for the unscaled enriched method (labelled \(\kappa ( \bar{\varvec{K}}_{\mathrm {ns}} ) \)) rises slightly when the punch approaches the sides of the substrate. When using the optimal scaling proposed in Ref. [21] (labelled \(\kappa ( \bar{\varvec{K}}_{\mathrm {os}}) \)), however, the condition number is the same as that of the unscaled method, indicating that the ineffectiveness of the scaling factor demonstrated by the one-dimensional example in Appendix A also holds in this case. Applying the diagonal preconditioner (labelled \(\kappa ( \bar{\varvec{K}}_{\mathrm {pc}})\)) improves the condition number. Overall, when dealing with MPCs, the condition number remains bounded as enriched nodes are placed arbitrarily close to standard finite element nodes.

For ALM, the condition number of the original stiffness matrix without enrichment scaling (labelled \(\kappa ( \hat{\varvec{K}}_{\mathrm {ns}} ) \)) again coincides with that obtained using optimal enrichment scaling (labelled \(\kappa ( \hat{\varvec{K}}_{\mathrm {os}} )\)). With the diagonal preconditioner (labelled \(\kappa ( \hat{\varvec{K}}_{\mathrm {pc}} )\)), the condition number improves significantly, but remains higher than that of the MPC method.

5.3.2 Effect of mesh size

In this second test, which is illustrated schematically in Fig. 11a, we study the influence of mesh refinement with a fixed punch. The material properties are the same as those used in the first test. The results of our enriched approach are compared to those of standard FEM using conforming node-to-node contact discretizations, as shown in Fig. 11b. Figure 11c shows a typical finite element discretization used for all other results.

The results in Fig. 12 show the condition number as a function of mesh size (left) and total number of DOFs (right). The reference curve (labeled \(\kappa ( \varvec{K}_{\mathrm {std}} )\)) is computed using the conforming mesh shown in Fig. 11b. It is well known that the condition number in standard FEM scales as \(\mathcal {O}\left( h^{-2}\right) \) with mesh size h and \(\mathcal {O}\left( n_d \right) \) with the total number of DOFs \(n_d\). For MPC, the condition number of the original enriched system matrix indeed deteriorates with mesh refinement (curve \(\kappa (\bar{\varvec{K}}_{\mathrm {ns}})\)). Also in this case, the optimal scaling proposed in Ref. [21] (curve \(\kappa (\bar{\varvec{K}}_{\mathrm {os}})\)) has no effect on the conditioning. However, applying the simple diagonal preconditioner improves the condition number significantly (curve \(\kappa (\bar{\varvec{K}}_{\mathrm {pc}})\)). Notably, the condition number of the enriched system matrices increases at the same rate as that of standard FEM with node-to-node contact. Therefore, the enriched method constrained using MPCs with a simple preconditioner is as stable as standard FEM.

For ALM, the condition numbers are generally worse than those obtained with MPCs. The condition number also deteriorates with mesh refinement, and its magnitude with and without scaling is again the same (curves \(\kappa (\hat{\varvec{K}}_{\mathrm {ns}})\) and \(\kappa (\hat{\varvec{K}}_{\mathrm {os}})\), respectively). We find that the condition number of the enriched system matrix with a simple preconditioner (curve \(\kappa (\hat{\varvec{K}}_{\mathrm {pc}})\)) is close to that of the preconditioned matrix using MPCs and grows at the same rate as FEM with node-to-node contact.

5.4 Hertzian contact problem

Figure 13 illustrates a Hertzian contact problem, where a semi-circular punch with radius \(r=10\) and material properties \(E_2 = 700{,}000\) and \(\nu _2 = 0.3\) is subject to a uniformly distributed load \(\bar{\varvec{t}}=-25\varvec{e}_2\) and pushed against a substrate with material properties \(E_1 = 7000\) and \(\nu _1=0.3\). The substrate has length \(l = 20\) and height \(h= 10\) and is simply supported along the bottom edge. The solution to this problem is given in Ref. [85]:

where \(\bar{t}\) is the magnitude of applied traction, \(p_n\) denotes the contact pressure, b the contact area, and \(E^{*}\) the effective stiffness.

Traction profile for the Hertz contact problem: a Comparison of the numerical profiles obtained with the discretizations shown in Fig. 14; b Relative error with respect to the analytical solution

The load is applied in twenty load increments of equal magnitude. We used the enriched method with ALM, and convergence (with a tolerance \(\Vert {\Delta \varvec{U}} \Vert /\Vert {\varvec{U}} \Vert < 10^{-5}\)) was reached within four iterations per step. Two different meshes are used in this numerical test: in the first, the substrate discretization is coarser than that of the punch, while in the second, punch and substrate are discretized with elements of similar size. In both cases, Fig. 14 shows that enriched nodes are also present far from the contact area; they are added there for convenience (in practice, all standard nodes of one contact surface are projected onto the other and vice versa). Enriched nodes that do not come into contact with the corresponding standard node are regarded as inactive in the calculation of the stiffness matrix and force vector components. The results are reported in terms of the stress distribution \(\sigma _{22}\) in Fig. 14 and the contact pressure profile in Fig. 15. The stress field shows a typical Hertzian contact distribution. Figure 15b shows the relative error of the contact pressure, computed as \(e = \vert p_n-p_n^h \vert / \vert p_n\vert \), where \(p_n^h\) is the pressure obtained numerically. We find that the steep pressure gradients in elements that transition from contact to no contact are responsible for the inaccurate contact tractions there. The error in the interior region is within 7% for both discretizations studied; in this region, therefore, the numerical contact pressure profile closely approximates the analytical solution.
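For reference, the classical plane-strain Hertz solution for a cylinder pressed against an elastic half-space can be evaluated as below, together with the relative error \(e\) defined above. The notation may differ from Ref. [85], and two assumptions are made in this sketch: the effective modulus is \(1/E^* = (1-\nu_1^2)/E_1 + (1-\nu_2^2)/E_2\), and the resultant load per unit thickness is \(P = \vert\bar{t}\vert\, 2r = 500\), i.e., the traction acts on the flat top of the semicircular punch. The function names are hypothetical.

```python
import numpy as np

def hertz_line_contact(P, R, E1, nu1, E2, nu2):
    """Plane-strain Hertz contact of a cylinder (radius R, load P per unit
    thickness) on an elastic half-space. Returns the half-width b, the peak
    pressure p0, and a function giving the (positive) contact pressure p(x)."""
    E_star = 1.0 / ((1.0 - nu1**2) / E1 + (1.0 - nu2**2) / E2)  # effective modulus
    b = np.sqrt(4.0 * P * R / (np.pi * E_star))                  # contact half-width
    p0 = 2.0 * P / (np.pi * b)                                   # peak pressure at x = 0

    def pressure(x):
        xi = np.asarray(x, dtype=float) / b
        return p0 * np.sqrt(np.clip(1.0 - xi**2, 0.0, None))     # zero for |x| >= b

    return b, p0, pressure

def relative_error(p_exact, p_num):
    """e = |p_n - p_n^h| / |p_n|; only meaningful where p_n is not close to zero."""
    p_exact = np.asarray(p_exact, dtype=float)
    return np.abs(p_exact - np.asarray(p_num, dtype=float)) / np.abs(p_exact)
```

Because \(e\) divides by \(p_n\), which vanishes at \(x = \pm b\), this measure is only informative in the interior of the contact zone. The elliptical profile integrates to the applied load, \(\pi p_0 b/2 = P\).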

6 Discussion

Compared to traditional contact and coupling formulations, the proposed method has a number of advantages. As the enriched formulation essentially transforms the problem into a node-to-node discretization, it is possible to utilize the most straightforward coupling and contact techniques. This also facilitates the implementation in existing standard displacement-based finite element packages. Furthermore, as the tractions are properly transferred and over-constrained locking is avoided, no contact stabilization techniques are required. This important intrinsic property of the formulation, which was first noticed while comparing DE-FEM with X/GFEM in Ref. [14], relates also to the recovery of smooth tractions in immersed analysis using IGFEM and DE-FEM [16, 22]. Notably, our traction profiles are on par with those obtained by the virtual element method (VEM) [85], whereby a non-conforming problem is transformed into a node-to-node VEM-conforming discretization; in our procedure, however, formulation and computer implementation are much simpler than VEM. It is important to note that the shape functions of the original element are kept intact, and enrichments are only nonzero in the elements along the contact boundary (thus the partition of unity property in these elements is lost). Because enrichment functions are local by construction and vanish at the original mesh nodes, the Kronecker delta property at those nodes is retained, and all standard DOFs preserve their physical interpretation. As a result, post-processing is only required to compute the solution at enriched nodes, and because every enriched node is matched to a standard node, obtaining the DOFs of the latter further reduces post-processing.

It has been acknowledged in previous works that interface- and discontinuity-enriched formulations may have difficulties in properly reconstructing field gradients (strains and thus stresses). This issue stems from the construction of the enriched finite element space, which may involve sliver integration elements that degrade the accuracy of field gradients (as sliver elements do in standard FEM). While the issue is more pronounced for material interfaces [102, 103], it has recently been shown to be negligible near Dirichlet boundaries [22] and near traction-free cracks [93]. In the context of contact and mesh coupling problems, our numerical experiments indicate that this issue is not present. As enriched nodes are only placed along the contact/coupling interfaces, we conjecture that the presence of sliver integration elements does not adversely affect the accuracy of the gradient field, and thus the method can properly recover strains and stresses. It is worth noting that work aimed at improving the accuracy of gradient fields recovered from enriched FEMs (and, in fact, from unfitted FEMs in general) is available [104].

Although in this work we considered linearized kinematics and frictionless contact, the extension of the current enriched framework to more advanced problems, such as frictional contact, contact in 3D, and contact in large deformations, is expected to be relatively straightforward. The only drawbacks we foresee at the moment relate to the non-symmetric global stiffness matrix stemming from the frictional contact formulation, the need for a more efficient contact detection strategy in a three-dimensional implementation, and the possible need for smoothing techniques to achieve better convergence properties in large deformation problems. Finally, the proposed method could also be applied to high-order approximations, although high-order enrichment functions would then be needed to properly describe curved edges (assuming that the geometry is described nonlinearly).

References

Wriggers P (2006) Computational contact mechanics. Springer, Berlin. https://doi.org/10.1007/978-3-540-32609-0

Papadopoulos P, Taylor RL (1992) A mixed formulation for the finite element solution of contact problems. Comput Methods Appl Mech Eng 94(3):373–389. https://doi.org/10.1016/0045-7825(92)90061-N

Haikal G, Hjelmstad KD (2010) An enriched discontinuous Galerkin formulation for the coupling of non-conforming meshes. Finite Elem Anal Des 46(6):496–503. https://doi.org/10.1016/j.finel.2009.12.008

Farhat C, Roux F-X (1991) A method of finite element tearing and interconnecting and its parallel solution algorithm. Int J Numer Methods Eng 32(6):1205–1227. https://doi.org/10.1002/nme.1620320604

Bernardi C, Maday Y, Patera AT (1992) A new nonconforming approach to domain decomposition: the Mortar element method. In: Brezis H, Lions JL (eds) Nonlinear partial differential equations and their applications, vol XI. Pitman Press, New York, pp 13–51

Flemisch B, Puso MA, Wohlmuth BI (2005) A new dual mortar method for curved interfaces: 2D elasticity. Int J Numer Methods Eng 63(6):813–832. https://doi.org/10.1002/nme.1300

Babuška I (1973) The finite element method with Lagrangian multipliers. Numer Math 20(3):179–192. https://doi.org/10.1007/BF01436561

Brezzi F, Fortin M (2012) Mixed and hybrid finite element methods. Springer, New York. https://doi.org/10.1007/978-1-4612-3172-1

Duarte CA, Liszka TJ, Tworzydlo WW (2007) Clustered generalized finite element methods for mesh unrefinement, non-matching and invalid meshes. Int J Numer Methods Eng 69(11):2409–2440. https://doi.org/10.1002/nme.1862

Dolbow J, Moës N, Belytschko T (2001) An extended finite element method for modeling crack growth with frictional contact. Comput Methods Appl Mech Eng 190(51–52):6825–6846. https://doi.org/10.1016/S0045-7825(01)00260-2

Hirmand M, Vahab M, Khoei AR (2015) An augmented Lagrangian contact formulation for frictional discontinuities with the extended finite element method. Finite Elem Anal Des 107:28–43. https://doi.org/10.1016/j.finel.2015.08.003

Akula BR, Vignollet J, Yastrebov VA (2019) MorteX method for contact along real and embedded surfaces: coupling X-FEM with the Mortar method

Khoei AR, Nikbakht M (2007) An enriched finite element algorithm for numerical computation of contact friction problems. Int J Mech Sci 49(2):183–199. https://doi.org/10.1016/j.ijmecsci.2006.08.014

Aragón AM, Simone A (2017) The discontinuity-enriched finite element method. Int J Numer Methods Eng 112(11):1589–1613. https://doi.org/10.1002/nme.5570

Haslinger J, Renard Y (2009) A new fictitious domain approach inspired by the extended finite element method. SIAM J Numer Anal 47(2):1474–1499. https://doi.org/10.1137/070704435

Cuba Ramos A, Aragón AM, Soghrati S, Geubelle PH, Molinari J-F (2015) A new formulation for imposing Dirichlet boundary conditions on non-matching meshes. Int J Numer Methods Eng 103(6):430–444. https://doi.org/10.1002/nme.4898

Babuška I, Banerjee U (2012) Stable generalized finite element method (SGFEM). Comput Methods Appl Mech Eng 201–204:91–111. https://doi.org/10.1016/j.cma.2011.09.012

Babuška I, Banerjee U, Kergrene K (2017) Strongly stable generalized finite element method: application to interface problems. Comput Methods Appl Mech Eng 327:58–92. https://doi.org/10.1016/j.cma.2017.08.008

Soghrati S, Aragón AM, Duarte CA, Geubelle PH (2012) An interface-enriched generalized FEM for problems with discontinuous gradient fields. Int J Numer Methods Eng 89(8):991–1008. https://doi.org/10.1002/nme.3273

Soghrati S (2014) Hierarchical interface-enriched finite element method: an automated technique for mesh-independent simulations. J Comput Phys 275:41–52. https://doi.org/10.1016/j.jcp.2014.06.016

Aragón AM, Liang B, Ahmadian H, Soghrati S (2020) On the stability and interpolating properties of the hierarchical interface-enriched finite element method. Comput Methods Appl Mech Eng 362:112671. https://doi.org/10.1016/j.cma.2019.112671

van den Boom SJ, Zhang J, van Keulen F, Aragón AM (2019) A stable interface-enriched formulation for immersed domains with strong enforcement of essential boundary conditions. Int J Numer Methods Eng 120(10):1163–1183. https://doi.org/10.1002/nme.6139

van den Boom SJ, Zhang J, van Keulen F, Aragón AM (2019) Cover image. Int J Numer Methods Eng 120(10)

Taylor RL, Papadopoulos P (1991) On a patch test for contact problems in two dimensions. In: Wriggers P, Wagner W (eds) Computational methods in nonlinear mechanics. Springer, Berlin, pp 690–702

Oden JT (1981) Exterior penalty methods for contact problems in elasticity. In: Nonlinear finite element analysis in structural mechanics. Springer, Berlin, pp 655–665. https://doi.org/10.1007/978-3-642-81589-8_33

Wriggers P, Simo JC (1985) A note on tangent stiffness for fully nonlinear contact problems. Commun Appl Numer Methods 1(5):199–203. https://doi.org/10.1002/cnm.1630010503

Benson DJ, Hallquist JO (1990) A single surface contact algorithm for the post-buckling analysis of shell structures. Comput Methods Appl Mech Eng 78(2):141–163. https://doi.org/10.1016/0045-7825(90)90098-7

Papadopoulos P, Taylor RL (1993) A simple algorithm for three-dimensional finite element analysis of contact problems. Comput Struct 46(6):1107–1118. https://doi.org/10.1016/0045-7949(93)90096-V

Zavarise G, de Lorenzis L (2009) A modified node-to-segment algorithm passing the contact patch test. Int J Numer Methods Eng 79(4):379–416. https://doi.org/10.1002/nme.2559

Kikuchi N, Oden JT (1988) Contact problems in elasticity: a study of variational inequalities and finite element methods

Papadopoulos P, Jones RE, Solberg JM (1995) A novel finite element formulation for frictionless contact problems. Int J Numer Methods Eng 38(15):2603–2617. https://doi.org/10.1002/nme.1620381507

Papadopoulos P, Solberg JM (1998) A Lagrange multiplier method for the finite element solution of frictionless contact problems. Math Comput Model 28(4–8):373–384. https://doi.org/10.1016/S0895-7177(98)00128-9

Jones RE, Papadopoulos P (2001) A novel three-dimensional contact finite element based on smooth pressure interpolations. Int J Numer Methods Eng 51(7):791–811. https://doi.org/10.1002/nme.163

Simo JC, Wriggers P, Taylor RL (1985) A perturbed Lagrangian formulation for the finite element solution of contact problems. Comput Methods Appl Mech Eng 50(2):163–180. https://doi.org/10.1016/0045-7825(85)90088-X

Zavarise G, Wriggers P (1998) A segment-to-segment contact strategy. Math Comput Model 28(4–8):497–515. https://doi.org/10.1016/S0895-7177(98)00138-1

El-Abbasi N, Bathe KJ (2001) Stability and patch test performance of contact discretizations and a new solution algorithm. Comput Struct 79(16):1473–1486. https://doi.org/10.1016/S0045-7949(01)00048-7

Belgacem FB, Hild P, Laborde P (1998) The mortar finite element method for contact problems. Math Comput Model 28(4–8):263–271. https://doi.org/10.1016/S0895-7177(98)00121-6

McDevitt TW, Laursen TA (2000) A mortar-finite element formulation for frictional contact problems. Int J Numer Methods Eng 48(10):1525–1547. https://doi.org/10.1002/1097-0207(20000810)48:10<1525::AID-NME953>3.0.CO;2-Y

Wohlmuth BI (2001) A mortar finite element method using dual spaces for the Lagrange multiplier. SIAM J Numer Anal 38(3):989–1012. https://doi.org/10.1137/S0036142999350929

Puso MA (2004) A 3D mortar method for solid mechanics. Int J Numer Methods Eng 59(3):315–336. https://doi.org/10.1002/nme.865

Puso MA, Laursen TA (2004) A mortar segment-to-segment contact method for large deformation solid mechanics. Comput Methods Appl Mech Eng 193(6–8):601–629. https://doi.org/10.1016/j.cma.2003.10.010

Fischer KA, Wriggers P (2005) Frictionless 2D contact formulations for finite deformations based on the mortar method. Comput Mech 36(3):226–244. https://doi.org/10.1007/s00466-005-0660-y

Yang B, Laursen TA, Meng X (2005) Two dimensional mortar contact methods for large deformation frictional sliding. Int J Numer Methods Eng 62(9):1183–1225. https://doi.org/10.1002/nme.1222

Puso MA, Laursen TA, Solberg J (2008) A segment-to-segment mortar contact method for quadratic elements and large deformations. Comput Methods Appl Mech Eng 197(6–8):555–566. https://doi.org/10.1016/j.cma.2007.08.009

Tur M, Fuenmayor FJ, Wriggers P (2009) A mortar-based frictional contact formulation for large deformations using Lagrange multipliers. Comput Methods Appl Mech Eng 198(37–40):2860–2873. https://doi.org/10.1016/j.cma.2009.04.007

Hüeber S, Stadler G, Wohlmuth BI (2007) A primal-dual active set algorithm for three-dimensional contact problems with Coulomb friction. SIAM J Sci Comput 30(2):572–596. https://doi.org/10.1137/060671061

Laursen TA, Puso MA, Sanders J (2012) Mortar contact formulations for deformable-deformable contact: past contributions and new extensions for enriched and embedded interface formulations. Comput Methods Appl Mech Eng 205–208(1):3–15. https://doi.org/10.1016/j.cma.2010.09.006

Temizer I (2012) A mixed formulation of mortar-based frictionless contact. Comput Methods Appl Mech Eng 223–224:173–185. https://doi.org/10.1016/j.cma.2012.02.017

Popp A (2012) Mortar methods for computational contact mechanics and general interface problems. Dissertation, Technische Universität München, München

Farah P, Popp A, Wall WA (2015) Segment-based vs. element-based integration for mortar methods in computational contact mechanics. Comput Mech 55(1):209–228. https://doi.org/10.1007/s00466-014-1093-2

Solberg JM, Jones RE, Papadopoulos P (2007) A family of simple two-pass dual formulations for the finite element solution of contact problems. Comput Methods Appl Mech Eng 196(4–6):782–802. https://doi.org/10.1016/j.cma.2006.05.011

Puso MA, Solberg JM (2020) A dual pass mortar approach for unbiased constraints and self-contact. Comput Methods Appl Mech Eng 367:113092. https://doi.org/10.1016/j.cma.2020.113092

Park KC, Felippa CA, Rebel G (2002) A simple algorithm for localized construction of non-matching structural interfaces. Int J Numer Methods Eng 53(9):2117–2142. https://doi.org/10.1002/nme.374

Rebel G, Park KC, Felippa CA (2002) A contact formulation based on localized Lagrange multipliers: formulation and application to two-dimensional problems. Int J Numer Methods Eng 54(2):263–297. https://doi.org/10.1002/nme.426

González JA, Park KC, Felippa CA (2006) Partitioned formulation of frictional contact problems using localized Lagrange multipliers. Commun Numer Methods Eng 22(4):319–333. https://doi.org/10.1002/cnm.821

Popp A, Gitterle M, Gee MW, Wall WA (2010) A dual mortar approach for 3D finite deformation contact with consistent linearization. Int J Numer Methods Eng 83(11):1428–1465. https://doi.org/10.1002/nme.2866

Popp A, Wall WA (2014) Dual mortar methods for computational contact mechanics—overview and recent developments. GAMM Mitteilungen 37(1):66–84. https://doi.org/10.1002/gamm.201410004

Gitterle M, Popp A, Gee MW, Wall WA (2010) Finite deformation frictional mortar contact using a semi-smooth Newton method with consistent linearization. Int J Numer Methods Eng 84(5):543–571. https://doi.org/10.1002/nme.2907

Belytschko T, Daniel WJT, Ventura G (2002) A monolithic smoothing-gap algorithm for contact-impact based on the signed distance function. Int J Numer Methods Eng 55(1):101–125. https://doi.org/10.1002/nme.568