Abstract

Background

Annotated data are foundational to applications of supervised machine learning. However, the field of surgical data science seems to lack a common language.

The aim of this study is to review the annotation process and the semantics used to create surgical process models (SPMs) for minimally invasive surgery videos.

Methods

For this systematic review, we reviewed articles indexed in the MEDLINE database from January 2000 until March 2022. We selected articles that used surgical video annotations to describe a surgical process model in the field of minimally invasive surgery. We excluded studies focusing only on instrument detection or recognition of anatomical areas. The risk of bias was evaluated with the Newcastle–Ottawa quality assessment tool. Data from the studies were presented in tables using the SPIDER tool.

Results

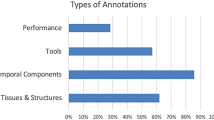

Of the 2806 articles identified, 34 were selected for review. Twenty-two were in the field of digestive surgery, six in ophthalmologic surgery only, one in neurosurgery, three in gynecologic surgery, and two in mixed fields. Thirty-one studies (88.2%) were dedicated to phase, step, or action recognition, and most relied on a very simple formalization (29, 85.2%). Clinical information was lacking in studies that used publicly available datasets. The annotation process for surgical process models was missing or poorly described, and the description of the surgical procedures varied widely between studies.
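The distribution of the included studies by surgical field can be tallied in a few lines. The sketch below uses only the per-field counts reported in this paragraph; the dictionary keys and the `share` helper are illustrative names, not part of the study.

```python
# Counts of included studies per surgical field, as reported in the Results.
field_counts = {
    "digestive": 22,
    "ophthalmologic": 6,
    "neurosurgery": 1,
    "gynecologic": 3,
    "mixed": 2,
}

total = sum(field_counts.values())


def share(count: int, total: int) -> float:
    """Percentage of the total, rounded to one decimal place."""
    return round(100 * count / total, 1)


field_shares = {field: share(n, total) for field, n in field_counts.items()}

for field, pct in field_shares.items():
    print(f"{field}: {field_counts[field]}/{total} ({pct}%)")
```

The per-field counts add up to the 34 studies selected for review, with digestive surgery accounting for roughly two thirds of them.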

Conclusion

Surgical video annotation lacks a rigorous and reproducible framework. This makes it difficult to share videos between institutions and hospitals, because each uses a different annotation language. There is a need to develop and use a common ontology to improve libraries of annotated surgical videos.
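To make the idea of a shared annotation vocabulary concrete, here is a minimal sketch of how annotations could be checked against a common list of terms. Everything in it is an assumption made for illustration: the `Annotation` fields, the `unknown_labels` helper, and the example terms are hypothetical and are not taken from the reviewed studies or from any published ontology. Only the three levels (phase, step, action) come from the abstract.

```python
from dataclasses import dataclass
from enum import Enum


class Granularity(Enum):
    """Levels of description mentioned in the abstract."""
    PHASE = "phase"
    STEP = "step"
    ACTION = "action"


@dataclass(frozen=True)
class Annotation:
    """One labeled time interval in a surgical video (hypothetical schema)."""
    video_id: str
    granularity: Granularity
    label: str        # term expected to come from a shared vocabulary
    start_s: float    # interval start, in seconds
    end_s: float      # interval end, in seconds

    def __post_init__(self) -> None:
        if self.end_s <= self.start_s:
            raise ValueError("end_s must be greater than start_s")


def unknown_labels(annotations, vocabulary):
    """Return the sorted labels that are absent from the vocabulary of their level."""
    return sorted(
        {
            a.label
            for a in annotations
            if a.label not in vocabulary.get(a.granularity, set())
        }
    )


# Placeholder vocabulary and annotations; the terms are not a real ontology.
vocabulary = {
    Granularity.PHASE: {"preparation", "dissection", "closure"},
    Granularity.STEP: {"clip cystic duct"},
}

annotations = [
    Annotation("video_001", Granularity.PHASE, "dissection", 120.0, 900.0),
    Annotation("video_001", Granularity.STEP, "clipping of duct", 600.0, 660.0),
]

print(unknown_labels(annotations, vocabulary))  # ['clipping of duct']
```

In this sketch, two institutions that describe the same step with different wording would be flagged before their videos are pooled, which is the kind of mismatch a common ontology is meant to prevent.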

References

Maier-Hein L, Eisenmann M, Sarikaya D, März K, Collins T, Malpani A, Fallert J, Feussner H, Giannarou S, Mascagni P, Nakawala H, Park A, Pugh C, Stoyanov D, Vedula SS, Cleary K, Fichtinger G, Forestier G, Gibaud B, Grantcharov T, Hashizume M, Heckmann-Nötzel D, Kenngott HG, Kikinis R, Mündermann L, Navab N, Onogur S, Roß T, Sznitman R, Taylor RH, Tizabi MD, Wagner M, Hager GD, Neumuth T, Padoy N, Collins J, Gockel I, Goedeke J, Hashimoto DA, Joyeux L, Lam K, Leff DR, Madani A, Marcus HJ, Meireles O, Seitel A, Teber D, Ückert F, Müller-Stich BP, Jannin P, Speidel S (2022) Surgical data science - from concepts toward clinical translation. Med Image Anal 76:102306

Maier-Hein L, Vedula SS, Speidel S, Navab N, Kikinis R, Park A, Eisenmann M, Feussner H, Forestier G, Giannarou S, Hashizume M, Katic D, Kenngott H, Kranzfelder M, Malpani A, März K, Neumuth T, Padoy N, Pugh C, Schoch N, Stoyanov D, Taylor R, Wagner M, Hager GD, Jannin P (2017) Surgical data science for next-generation interventions. Nat Biomed Eng 1:691–696

Cleary K, Kinsella A (2005) OR 2020: the operating room of the future. J Laparoendosc Adv Surg Tech A 15(495):497–573

Lalys F, Jannin P (2014) Surgical process modelling: a review. Int J Comput Assist Radiol Surg 9:495–511

Jannin P, Raimbault M, Morandi X, Riffaud L, Gibaud B (2003) Model of surgical procedures for multimodal image-guided neurosurgery. Comput Aided Surg 8:98–106

Riffaud L, Neumuth T, Morandi X, Trantakis C, Meixensberger J, Burgert O, Trelhu B, Jannin P (2010) Recording of surgical processes: a study comparing senior and junior neurosurgeons during lumbar disc herniation surgery. Neurosurgery 67:325–332

Moglia A, Georgiou K, Georgiou E, Satava RM, Cuschieri A (2021) A systematic review on artificial intelligence in robot-assisted surgery. Int J Surg 95:106151

Anteby R, Horesh N, Soffer S, Zager Y, Barash Y, Amiel I, Rosin D, Gutman M, Klang E (2021) Deep learning visual analysis in laparoscopic surgery: a systematic review and diagnostic test accuracy meta-analysis. Surg Endosc 35:1521–1533

Meireles OR, Rosman G, Altieri MS, Carin L, Hager G, Madani A, Padoy N, Pugh CM, Sylla P, Ward TM, Hashimoto DA (2021) SAGES consensus recommendations on an annotation framework for surgical video. Surg Endosc 35:4918–4929

Page MJ, Moher D, Bossuyt PM, Boutron I, Hoffmann TC, Mulrow CD, Shamseer L, Tetzlaff JM, Akl EA, Brennan SE, Chou R, Glanville J, Grimshaw JM, Hróbjartsson A, Lalu MM, Li T, Loder EW, Mayo-Wilson E, McDonald S, McGuinness LA, Stewart LA, Thomas J, Tricco AC, Welch VA, Whiting P, McKenzie JE (2021) PRISMA 2020 explanation and elaboration: updated guidance and exemplars for reporting systematic reviews. BMJ 372:n160

Khan DZ, Luengo I, Barbarisi S, Addis C, Culshaw L, Dorward NL, Haikka P, Jain A, Kerr K, Koh CH, Layard Horsfall H, Muirhead W, Palmisciano P, Vasey B, Stoyanov D, Marcus HJ (2021) Automated operative workflow analysis of endoscopic pituitary surgery using machine learning: development and preclinical evaluation (IDEAL stage 0). J Neurosurg. https://doi.org/10.1016/j.bas.2021.100580

Kitaguchi D, Takeshita N, Matsuzaki H, Oda T, Watanabe M, Mori K, Kobayashi E, Ito M (2020) Automated laparoscopic colorectal surgery workflow recognition using artificial intelligence: experimental research. Int J Surg 79:88–94

Cheng K, You J, Wu S, Chen Z, Zhou Z, Guan J, Peng B, Wang X (2022) Artificial intelligence-based automated laparoscopic cholecystectomy surgical phase recognition and analysis. Surg Endosc 36:3160–3168

Hashimoto DA, Rosman G, Witkowski ER, Stafford C, Navarette-Welton AJ, Rattner DW, Lillemoe KD, Rus DL, Meireles OR (2019) Computer vision analysis of intraoperative video: automated recognition of operative steps in laparoscopic sleeve gastrectomy. Ann Surg 270:414–421

Yeh HH, Jain AM, Fox O, Wang SY (2021) PhacoTrainer: a multicenter study of deep learning for activity recognition in cataract surgical videos. Transl Vis Sci Technol 10:23

Derathé A, Reche F, Moreau-Gaudry A, Jannin P, Gibaud B, Voros S (2020) Predicting the quality of surgical exposure using spatial and procedural features from laparoscopic videos. Int J Comput Assist Radiol Surg 15:59–67

Garcia Nespolo R, Yi D, Cole E, Valikodath N, Luciano C, Leiderman YI (2022) Evaluation of artificial intelligence-based intraoperative guidance tools for phacoemulsification cataract surgery. JAMA Ophthalmol 140:170–177

Huaulmé A, Jannin P, Reche F, Faucheron JL, Moreau-Gaudry A, Voros S (2020) Offline identification of surgical deviations in laparoscopic rectopexy. Artif Intell Med 104:101837

Bodenstedt S, Rivoir D, Jenke A, Wagner M, Breucha M, Müller-Stich B, Mees ST, Weitz J, Speidel S (2019) Active learning using deep Bayesian networks for surgical workflow analysis. Int J Comput Assist Radiol Surg 14:1079–1087

Bodenstedt S, Wagner M, Mündermann L, Kenngott H, Müller-Stich B, Breucha M, Mees ST, Weitz J, Speidel S (2019) Prediction of laparoscopic procedure duration using unlabeled, multimodal sensor data. Int J Comput Assist Radiol Surg 14:1089–1095

Dergachyova O, Bouget D, Huaulmé A, Morandi X, Jannin P (2016) Automatic data-driven real-time segmentation and recognition of surgical workflow. Int J Comput Assist Radiol Surg 11:1081–1089

Jin Y, Li H, Dou Q, Chen H, Qin J, Fu CW, Heng PA (2020) Multi-task recurrent convolutional network with correlation loss for surgical video analysis. Med Image Anal 59:101572

Lecuyer G, Ragot M, Martin N, Launay L, Jannin P (2020) Assisted phase and step annotation for surgical videos. Int J Comput Assist Radiol Surg 15:673–680

Ramesh S, Dall’Alba D, Gonzalez C, Yu T, Mascagni P, Mutter D, Marescaux J, Fiorini P, Padoy N (2021) Multi-task temporal convolutional networks for joint recognition of surgical phases and steps in gastric bypass procedures. Int J Comput Assist Radiol Surg 16:1111–1119

Shi X, Jin Y, Dou Q, Heng PA (2021) Semi-supervised learning with progressive unlabeled data excavation for label-efficient surgical workflow recognition. Med Image Anal 73:102158

Twinanda AP, Shehata S, Mutter D, Marescaux J, de Mathelin M, Padoy N (2017) EndoNet: a deep architecture for recognition tasks on laparoscopic videos. IEEE Trans Med Imaging 36:86–97

Twinanda AP, Yengera G, Mutter D, Marescaux J, Padoy N (2019) RSDNet: learning to predict remaining surgery duration from laparoscopic videos without manual annotations. IEEE Trans Med Imaging 38:1069–1078

Meeuwsen FC, van Luyn F, Blikkendaal MD, Jansen FW, van den Dobbelsteen JJ (2019) Surgical phase modelling in minimal invasive surgery. Surg Endosc 33:1426–1432

Kitaguchi D, Takeshita N, Matsuzaki H, Takano H, Owada Y, Enomoto T, Oda T, Miura H, Yamanashi T, Watanabe M, Sato D, Sugomori Y, Hara S, Ito M (2020) Real-time automatic surgical phase recognition in laparoscopic sigmoidectomy using the convolutional neural network-based deep learning approach. Surg Endosc 34:4924–4931

Malpani A, Lea C, Chen CC, Hager GD (2016) System events: readily accessible features for surgical phase detection. Int J Comput Assist Radiol Surg 11:1201–1209

Guédon ACP, Meij SEP, Osman K, Kloosterman HA, van Stralen KJ, Grimbergen MCM, Eijsbouts QAJ, van den Dobbelsteen JJ, Twinanda AP (2021) Deep learning for surgical phase recognition using endoscopic videos. Surg Endosc 35:6150–6157

Blum T, Padoy N, Feußner H, Navab N (2008) Workflow mining for visualization and analysis of surgeries. Int J Comput Assist Radiol Surg 3:379–386

Quellec G, Lamard M, Cochener B, Cazuguel G (2014) Real-time segmentation and recognition of surgical tasks in cataract surgery videos. IEEE Trans Med Imaging 33:2352–2360

Zhang B, Ghanem A, Simes A, Choi H, Yoo A (2021) Surgical workflow recognition with 3DCNN for sleeve gastrectomy. Int J Comput Assist Radiol Surg 16:2029–2036

Zhang Y, Bano S, Page AS, Deprest J, Stoyanov D, Vasconcelos F (2022) Large-scale surgical workflow segmentation for laparoscopic sacrocolpopexy. Int J Comput Assist Radiol Surg 17:467–477

Huaulmé A, Despinoy F, Perez SAH, Harada K, Mitsuishi M, Jannin P (2019) Automatic annotation of surgical activities using virtual reality environments. Int J Comput Assist Radiol Surg 14:1663–1671

Katić D, Schuck J, Wekerle AL, Kenngott H, Müller-Stich BP, Dillmann R, Speidel S (2016) Bridging the gap between formal and experience-based knowledge for context-aware laparoscopy. Int J Comput Assist Radiol Surg 11:881–888

Mascagni P, Alapatt D, Urade T, Vardazaryan A, Mutter D, Marescaux J, Costamagna G, Dallemagne B, Padoy N (2021) A computer vision platform to automatically locate critical events in surgical videos: documenting safety in laparoscopic cholecystectomy. Ann Surg 274:e93–e95

Lalys F, Bouget D, Riffaud L, Jannin P (2013) Automatic knowledge-based recognition of low-level tasks in ophthalmological procedures. Int J Comput Assist Radiol Surg 8:39–49

Lalys F, Riffaud L, Bouget D, Jannin P (2012) A framework for the recognition of high-level surgical tasks from video images for cataract surgeries. IEEE Trans Biomed Eng 59:966–976

Mascagni P, Alapatt D, Laracca GG, Guerriero L, Spota A, Fiorillo C, Vardazaryan A, Quero G, Alfieri S, Baldari L, Cassinotti E, Boni L, Cuccurullo D, Costamagna G, Dallemagne B, Padoy N (2022) Multicentric validation of EndoDigest: a computer vision platform for video documentation of the critical view of safety in laparoscopic cholecystectomy. Surg Endosc. https://doi.org/10.1007/s00464-022-09112-1

Yu F, Silva Croso G, Kim TS, Song Z, Parker F, Hager GD, Reiter A, Vedula SS, Ali H, Sikder S (2019) Assessment of automated identification of phases in videos of cataract surgery using machine learning and deep learning techniques. JAMA Netw Open 2:e191860

Gibaud B, Forestier G, Feldmann C, Ferrigno G, Gonçalves P, Haidegger T, Julliard C, Katić D, Kenngott H, Maier-Hein L, März K, de Momi E, Nagy D, Nakawala H, Neumann J, Neumuth T, Rojas Balderrama J, Speidel S, Wagner M, Jannin P (2018) Toward a standard ontology of surgical process models. Int J Comput Assist Radiol Surg 13:1397–1408

Gholinejad M, Loeve AJ, Dankelman J (2019) Surgical process modelling strategies: which method to choose for determining workflow? Minim Invasive Ther Allied Technol 28:91–104

Garrow CR, Kowalewski KF, Li L, Wagner M, Schmidt MW, Engelhardt S, Hashimoto DA, Kenngott HG, Bodenstedt S, Speidel S, Müller-Stich BP, Nickel F (2021) Machine learning for surgical phase recognition: a systematic review. Ann Surg 273:684–693

Marcus HJ, Khan DZ, Borg A, Buchfelder M, Cetas JS, Collins JW, Dorward NL, Fleseriu M, Gurnell M, Javadpour M, Jones PS, Koh CH, Layard Horsfall H, Mamelak AN, Mortini P, Muirhead W, Oyesiku NM, Schwartz TH, Sinha S, Stoyanov D, Syro LV, Tsermoulas G, Williams A, Winder MJ, Zada G, Laws ER (2021) Pituitary society expert Delphi consensus: operative workflow in endoscopic transsphenoidal pituitary adenoma resection. Pituitary 24:839–853

Rosse C, Mejino JL Jr (2003) A reference ontology for biomedical informatics: the foundational model of anatomy. J Biomed Inform 36:478–500

Lomax J, McCray AT (2004) Mapping the gene ontology into the unified medical language system. Comp Funct Genomics 5:354–361

Grenon P, Smith B, Goldberg L (2004) Biodynamic ontology: applying BFO in the biomedical domain. Stud Health Technol Inform 102:20–38

Moglia A, Georgiou K, Morelli L, Toutouzas K, Satava RM, Cuschieri A (2022) Breaking down the silos of artificial intelligence in surgery: glossary of terms. Surg Endosc 36:7986–7997

Protégé. https://protege.stanford.edu/

Huaulmé A, Dardenne G, Labbe B, Gelin M, Chesneau C, Diverrez JM, Riffaud L, Jannin P (2022) Surgical declarative knowledge learning: concept and acceptability study. Comput Assist Surg (Abingdon) 27:74–83

Hung AJ, Ma R, Cen S, Nguyen JH, Lei X, Wagner C (2021) Surgeon automated performance metrics as predictors of early urinary continence recovery after robotic radical prostatectomy-a prospective bi-institutional study. Eur Urol Open Sci 27:65–72

Ma R, Lee RS, Nguyen JH, Cowan A, Haque TF, You J, Robert SI, Cen S, Jarc A, Gill IS, Hung AJ (2022) Tailored feedback based on clinically relevant performance metrics expedites the acquisition of robotic suturing skills-an unblinded pilot randomized controlled trial. J Urol. https://doi.org/10.1097/JU.0000000000002691

Pangal DJ, Kugener G, Cardinal T, Lechtholz-Zey E, Collet C, Lasky S, Sundaram S, Zhu Y, Roshannai A, Chan J, Sinha A, Hung AJ, Anandkumar A, Zada G, Donoho DA (2021) Use of surgical video-based automated performance metrics to predict blood loss and success of simulated vascular injury control in neurosurgery: a pilot study. J Neurosurg 137(3):840–849. https://doi.org/10.3171/2021.10.JNS211064

Guerin S, Huaulmé A, Lavoue V, Jannin P, Timoh KN (2022) Review of automated performance metrics to assess surgical technical skills in robot-assisted laparoscopy. Surg Endosc 36:853–870

Acknowledgements

The authors thank Felicity Neilson, a native English speaker specialized in scientific writing, for English editing.

Ethics declarations

Disclosure

Dr. Krystel NYANGOH TIMOH, Dr. Arnaud HUAULMÉ, Dr. Kevin CLEARY, Mrs. Myrah A ZAHEER, Dr. Dan DONOHO, and Dr. Pierre JANNIN have no conflict of interest or financial ties to disclose. Prof. Vincent LAVOUÉ has a contract with Intuitive® for proctoring.

Appendix: Risk of bias and the Newcastle–Ottawa quality assessment scale

| Study | Selection: ascertainment of exposure (secure record, e.g., surgical records) | Outcome: assessment of outcome (independent blind assessment, record linkage) |
|---|---|---|
| Blum et al. [32] | * | * |
| Bodenstedt et al. [19] | * | * |
| Bodenstedt et al. [20] | * | * |
| Cheng et al. [13] | * | * |
| Derathé et al. [16] | * | * |
| Dergachyova et al. [21] | * | * |
| Guédon et al. [31] | * | * |
| | * | * |
| Hashimoto et al. [14] | * | * |
| Huaulmé et al. [18] | * | * |
| Jalal et al. [56] | * | * |
| Jin et al. [22] | * | * |
| Katić et al. [37] | * | * |
| Khan et al. [11] | * | * |
| Kitaguchi et al. [12] | * | * |
| Kitaguchi et al. [29] | * | * |
| Pangal et al. [55] | * | * |
| Lalys et al. [39] | * | * |
| Lalys et al. [40] | * | * |
| Lecuyer et al. [23] | * | * |
| | * | * |
| Malpani et al. [30] | * | * |
| Mascagni et al. [38] | * | * |
| Mascagni et al. [41] | * | * |
| Meeuwsen et al. [28] | * | * |
| Nespolo et al. [17] | * | * |
| Guerin et al. [56] | * | * |
| Quellec et al. [33] | * | * |
| Ramesh et al. [24] | * | * |
| Shi et al. [25] | * | * |
| | * | * |
| Twinanda et al. [26] | * | * |
| | * | * |
| Twinanda et al. [27] | * | * |
| | * | * |
| Yeh et al. [15] | * | * |
| Yu et al. [42] | * | * |
| Zhang et al. [35] | * | * |
| Zhang et al. [34] | * | * |

About this article

Cite this article

Nyangoh Timoh, K., Huaulme, A., Cleary, K. et al. A systematic review of annotation for surgical process model analysis in minimally invasive surgery based on video. Surg Endosc 37, 4298–4314 (2023). https://doi.org/10.1007/s00464-023-10041-w

DOI: https://doi.org/10.1007/s00464-023-10041-w