Abstract

Background

The laparoscopic approach to liver resection may reduce morbidity and hospital stay. However, uptake has been slow due to concerns about patient safety and oncological radicality. Image guidance systems may improve patient safety by enabling 3D visualisation of critical intra- and extrahepatic structures. Current systems suffer from non-intuitive visualisation and a complicated setup process. A novel image guidance system (SmartLiver), offering augmented reality visualisation and semi-automatic registration, has been developed to address these issues. A clinical feasibility study evaluated the performance and usability of SmartLiver with either manual or semi-automatic registration.

Methods

Intraoperative image guidance data were recorded and analysed in patients undergoing laparoscopic liver resection or cancer staging. Stereoscopic surface reconstruction and iterative closest point matching facilitated semi-automatic registration. The primary endpoint was defined as successful registration as determined by the operating surgeon. Secondary endpoints were system usability as assessed by a surgeon questionnaire and comparison of manual vs. semi-automatic registration accuracy. Since SmartLiver is still in development no attempt was made to evaluate its impact on perioperative outcomes.

Results

The primary endpoint was achieved in 16 out of 18 patients. Initially semi-automatic registration failed because the IGS could not distinguish the liver surface from surrounding structures. Implementation of a deep learning algorithm enabled the IGS to overcome this issue and facilitate semi-automatic registration. Mean registration accuracy was 10.9 ± 4.2 mm (manual) vs. 13.9 ± 4.4 mm (semi-automatic) (Mean difference − 3 mm; p = 0.158). Surgeon feedback was positive about IGS handling and improved intraoperative orientation but also highlighted the need for a simpler setup process and better integration with laparoscopic ultrasound.

Conclusion

The technical feasibility of using SmartLiver intraoperatively has been demonstrated. With further improvements semi-automatic registration may enhance user friendliness and workflow of SmartLiver. Manual and semi-automatic registration accuracy were comparable but evaluation on a larger patient cohort is required to confirm these findings.

Laparoscopic liver resection (LLR) reduces pain and complications, resulting in a shorter hospital stay with oncological outcomes comparable to open liver resection [1,2,3,4]. Uptake of the laparoscopic approach has been slow [3, 5] but is progressing [4], with most HPB centres carrying out at least minor liver resections laparoscopically whilst only a few centres perform major hepatectomies or complex liver resections (e.g. superior-posterior segments) laparoscopically [4, 5]. Expansion of laparoscopic liver surgery is slowed by inherent limitations to depth perception, tactile feedback and field of view, which are compounded by the liver's varied and complex anatomy [6, 7]. These limitations have given rise to concern over controlling bleeding and ensuring adequate oncological clearance [3,4,5, 8,9,10].

Navigated image guidance systems (IGS) have been shown to improve outcomes in neurosurgery [11,12,13], and have also been applied to LLR with the aim of enhancing intraoperative orientation and to improve safety [7, 14,15,16]. IGS allow surgeons to view structures, such as tumours and blood vessels, that can be seen on preoperative scans but that are not visible with a laparoscopic camera [16, 17].

Laparoscopic ultrasound, an alternative technique for operative imaging, is limited by its two-dimensionality and poor contrast between tumours and normal liver [7, 18,19,20]. Video-based IGS using augmented reality (AR) can superimpose a 3D liver model directly onto the laparoscopic screen [21, 22]. Generally, application of these systems requires three key steps: the creation of a personalised 3D liver model from a preoperative CT or MRI scan, intraoperative image registration, and tracking of the laparoscope to guide the image overlay.

Two commercial IGS designed for open liver surgery [23, 24] have been adapted for LLR, with studies demonstrating comparable accuracy to open surgery [7, 22]. These systems, however, are limited by the need for separate screens to demonstrate image guidance [7] and the use of manual registration [7, 22], which is a source of errors and delay to the intraoperative workflow [25]. To address these issues, an IGS is being developed with capabilities for AR and semi-automatic registration [26, 27]. These features may improve the performance and usability of navigated image guidance. The current study tests the feasibility of using the new IGS, SmartLiver, in a clinical setting and is the first study to compare manual with semi-automatic registration [28].

Methods

A novel image-guided surgery system (SmartLiver) was designed for use in LLR through a programme of basic research and clinical development commissioned by the Wellcome Trust in partnership with the Department of Health (UK) [21, 26, 27, 29].

System description

The 3D models used for AR visualisation (Fig. 1) were produced by Visible Patient™ (Strasbourg, France). In brief, 3D models were constructed from the contrast enhanced CT carried out as part of routine investigations for patients with suspected hepato-pancreato-biliary malignancy. The Polaris Spectra™ system (NDI Medical, Waterloo, Canada) was employed for optical tracking of the laparoscope [26]. SmartLiver is being developed to function with either manual or semi-automatic registration. Manual registration is controlled by a touch screen monitor or mouse, which enables manipulation of the 3D model into an anatomically appropriate position. Semi-automatic registration is facilitated by a computer vision technique called stereoscopic surface reconstruction, which enables the acquisition of biometrical liver surface features (dense surface reconstruction) that are subsequently represented as a 3D point cloud. Stereoscopic surface reconstruction functions by triangulating the right and left video channels of a 3D laparoscope (IMAGE 1S—TIPCAM, KARL STORZ™, Tuttlingen, Germany) and therefore this technique cannot be applied to standard monocular laparoscopes [30]. Using the iterative closest point matching method, the corresponding 3D point clouds from the patient liver and the 3D liver model are aligned to complete the registration [26, 27] (Fig. 2). Iterative closest point matching is initialised by manually positioning the 3D model in proximity to the patient liver. Following liver mobilisation, the registration process was usually repeated to adjust for liver position changes. For in-depth details on SmartLiver technology please see [21, 26, 27].
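The triangulation principle behind stereoscopic surface reconstruction can be illustrated with a minimal sketch. It assumes an idealised, rectified pinhole stereo pair; the function name, the camera parameters and the pixel values are hypothetical and are not taken from SmartLiver, whose reconstruction pipeline is described in [27].

```python
def triangulate_rectified(u_left, u_right, v, focal_px, baseline_mm, cx, cy):
    """Back-project one matched pixel pair from a rectified stereo rig to 3D (mm).

    Assumes both channels share the same focal length (in pixels) and principal
    point (cx, cy), and that the images are rectified so matches lie on one row v.
    """
    disparity = u_left - u_right
    if disparity <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    z = focal_px * baseline_mm / disparity   # depth grows as disparity shrinks
    x = (u_left - cx) * z / focal_px
    y = (v - cy) * z / focal_px
    return (x, y, z)


# Hypothetical values: 4 mm baseline, 1000 px focal length, 40 px disparity.
point = triangulate_rectified(
    u_left=1000.0, u_right=960.0, v=540.0,
    focal_px=1000.0, baseline_mm=4.0, cx=960.0, cy=540.0,
)
```

Because depth is inversely proportional to disparity, a monocular laparoscope, which provides no disparity, cannot supply this measurement.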

Augmented reality visualisation of a 3D liver model overlaid onto the laparoscopic view. The liver surface outline (arrows) is not displayed to allow a clearer view of blood vessels and bile ducts (hepatic veins: blue; portal veins: purple; arteries: red; bile ducts and gallbladder: green) (Color figure online)

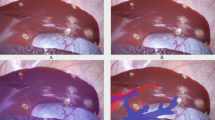

A Several patches of point clouds (yellow dots) represent the shape of the liver surface. The un-registered (non-aligned) position of the 3D model can be seen as a brown liver shape below the patches. B Following iterative closest point matching, the semi-automatic registration algorithm has positioned the 3D liver model optimally to reflect the intraoperative anatomy
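The alignment step described above can be sketched as a basic point-to-point iterative closest point loop. This is a generic textbook variant written with NumPy under stated assumptions (rigid transform, brute-force nearest neighbours, a good manual initialisation); it is not the SmartLiver implementation, and all names and the synthetic test surface are hypothetical.

```python
import numpy as np


def best_rigid_transform(src, dst):
    """Least-squares rotation R and translation t such that R @ src[i] + t ~ dst[i]."""
    src_c, dst_c = src.mean(axis=0), dst.mean(axis=0)
    H = (src - src_c).T @ (dst - dst_c)        # 3x3 cross-covariance matrix
    U, _, Vt = np.linalg.svd(H)
    d = np.sign(np.linalg.det(Vt.T @ U.T))     # guard against reflections
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    return R, dst_c - R @ src_c


def icp(model_pts, surface_pts, iterations=20):
    """Rigidly align model_pts (N, 3) to a partial surface_pts (M, 3) point cloud."""
    R_total, t_total = np.eye(3), np.zeros(3)
    moved = model_pts.copy()
    for _ in range(iterations):
        # For every reconstructed surface point, find the closest model point.
        d2 = ((surface_pts[:, None, :] - moved[None, :, :]) ** 2).sum(axis=2)
        nearest = moved[d2.argmin(axis=1)]
        # Move the model so the matched points land on the surface points.
        R, t = best_rigid_transform(nearest, surface_pts)
        moved = moved @ R.T + t
        R_total, t_total = R @ R_total, R @ t_total + t
    return R_total, t_total


# Synthetic check in arbitrary units: a bumpy grid stands in for the 3D model,
# and a slightly rotated and shifted part of it stands in for the surface patches.
xs = np.linspace(-1.0, 1.0, 9)
gx, gy = np.meshgrid(xs, xs)
gx, gy = gx.ravel(), gy.ravel()
model = np.column_stack([gx, gy, 0.2 * np.sin(2 * gx) * np.cos(2 * gy)])

angle = np.deg2rad(2.0)
R_true = np.array([[np.cos(angle), -np.sin(angle), 0.0],
                   [np.sin(angle),  np.cos(angle), 0.0],
                   [0.0,            0.0,           1.0]])
t_true = np.array([0.02, -0.01, 0.015])

patch = model[model[:, 0] < 0.5]               # only part of the surface is visible
surface = patch @ R_true.T + t_true

R_est, t_est = icp(model, surface)
residual = np.linalg.norm(patch @ R_est.T + t_est - surface, axis=1).max()
```

The loop only converges to the correct pose when the starting position is already close, which is consistent with the manual initialisation step described in the text.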

Workflow in theatre

Two separate stacks are used: one 3D laparoscope stack with its own screen, and a second stack that contains all components of SmartLiver, including a flexible arm for the optical tracking sensor, which is positioned at the head end of the operating table to obtain an unobstructed line of sight to the laparoscope (Fig. 3). Finally, the laparoscope is calibrated according to the Zhang [31] chequerboard method or, in later cases, according to the novel “cross-hair” method that was developed by our team [32].

The surgeon uses a standard laparoscopic screen (1) whilst the research team uses a separate screen (2) for calibration, registration and data capture. In the later phase of the study the surgeon is allowed to visualise the AR view through this screen. The optical tracking camera (3) is attached to an adjustable arm

Patients

The study was approved by the local research ethics committee (Reference: 14/LO/1264 & 10/HO720/87) and registered with ISRCTN (ID: 77923416). Written consent was obtained from recruited patients. Because the accuracy of SmartLiver was unknown at the outset, the ethics approval did not permit surgeons to use the IGS to adjust operative strategy. For this reason this study did not evaluate SmartLiver’s impact on surgical outcomes but rather the feasibility of intraoperative use.

Patients who were 18 years or older undergoing staging laparoscopy or LLR were eligible for recruitment. Demographic information and perioperative data were recorded for all patients. In addition, the conversion rate, need for perioperative blood transfusion, postoperative complications (Clavien-Dindo grade), resection margin status and length of hospital stay were recorded for LLR patients [33]. Clinical evaluation was supervised by an HPB surgeon with over 15 years' experience in LLR.

Task description and endpoints

The aim of the study was to assess the feasibility of using SmartLiver for image guidance in laparoscopic liver surgery and to compare navigation accuracy between manual and semi-automatic registration. The primary endpoint was defined as successful registration as determined by the operating surgeon who had reviewed the preoperative imaging and was judged on whether the 3D model maintained an anatomically appropriate and stable position during surgery. Failure of semi-automatic registration resulted in an error message.

Secondary endpoints were system usability and comparison of the accuracy of manual and semi-automatic registration. Data for registration were obtained by recording the liver surface from different laparoscope angles. Registration was carried out by a technical developer. Because of ongoing system development intraoperative registration was found to be very time consuming. Therefore postoperative registration, based on intraoperatively recorded data, was performed in phase one. This approach allowed us to obtain the data required to improve workflow and system functionality, in a more time efficient manner. In the second phase, registration was carried out intraoperatively and additional surface data were acquired to facilitate semi-automatic registration (Fig. 4). In cases where intraoperative registration failed, retrospective registration was performed.

Usability evaluation

Usability was assessed by a structured Likert scale survey which was completed by the primary surgeon, postoperatively. The survey also encouraged comments in a free text section. In the first study phase surgeons could not view the AR visualisation because it was carried out retrospectively. Therefore survey questions were changed in the second phase to include feedback on how well AR visualisation reflected the anatomical situation. To aid in this assessment surgeons compared the congruence of external liver landmarks (e.g. extrahepatic bile duct, left liver margin, umbilical fissure) between laparoscopic display and registered 3D model.

Comparison of manual and semi-automatic registration

Due to the lack of standardised methods for assessing IGS navigation accuracy [34], our group previously proposed a landmark based method which was also employed here [21, 26]. In brief, distances between corresponding anatomical landmarks on laparoscopic images and the 3D liver model are measured and compared (Fig. 5). Essentially, an increase in the measured distance results in an increased registration error (i.e. decreased accuracy). Distance measurements are possible because the registration process between the 3D model, its inherent volumetric data and laparoscopic images creates fixed reference points akin to a 3D coordinate system. These reference points subsequently enable extrapolation of distance measurements from laparoscopic images [26]. Common liver landmarks used for accuracy calculation were the left lateral margin, lower margin, falciform ligament and umbilical notch. Occasionally patients had unique anatomical features such as liver indentations, scarring or superficial liver cysts that could also be used as landmarks. To provide an estimate of the optimal target registration error (TRE), the root mean square value of all individual distance errors (i.e. landmark distances) is calculated across multiple video frames and stated in millimetres root mean square (abbreviated to mm). Root mean square is defined as the square root of the mean square, which is the arithmetic mean of the squares of all distance errors. Shapiro-Wilk testing was conducted to check for normal distribution of data. TRE values for groups of patients are stated as mean ± standard deviation (SD). To test accuracy results for statistically significant differences between groups, independent or paired t-tests were used as appropriate. For in-depth details on accuracy evaluation please see [21, 26].
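The root mean square definition given above can be written out as a short sketch. It assumes that corresponding landmarks are already available as paired 3D coordinates in millimetres, pooled over all evaluated video frames; the function name and the coordinates are hypothetical and only illustrate the arithmetic.

```python
import math


def rms_error_mm(model_landmarks, observed_landmarks):
    """Root mean square of the distances between paired 3D landmarks (mm)."""
    if not model_landmarks or len(model_landmarks) != len(observed_landmarks):
        raise ValueError("landmark lists must be non-empty and of equal length")
    squared = [
        math.dist(p, q) ** 2                   # squared distance error per landmark
        for p, q in zip(model_landmarks, observed_landmarks)
    ]
    return math.sqrt(sum(squared) / len(squared))


# Hypothetical landmark pairs with distance errors of 6 mm and 8 mm.
model_landmarks = [(0.0, 0.0, 0.0), (10.0, 0.0, 0.0)]
observed_landmarks = [(6.0, 0.0, 0.0), (10.0, 8.0, 0.0)]
tre = rms_error_mm(model_landmarks, observed_landmarks)
```

Because the errors are squared before averaging, a single badly aligned landmark raises the root mean square more than it would raise a plain mean of the distances.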

A A registration with a low error results in relative proximity of patient anatomy (blue landmarks) and 3D model anatomy (green landmarks). B In contrast to this a registration with a substantial error results in long distance between the corresponding landmarks. NB: The landmarks have been highlighted to enhance visibility. Landmark 3 is outside the visible area of the screen

Results

Patient characteristics and data acquisition

Eighteen patients underwent image-guided surgery, of which 7 were scheduled for LLR and 11 for staging laparoscopy. The gender ratio was 8 women to 10 men and the median age was 61.5 years (range 38–87). All staging laparoscopies were done as day case surgery. The only complication was urinary retention in one patient who was discharged on the 4th postoperative day. LLR patient characteristics are summarised in Table 1. Median operating time was 150 min (range 75–330 min). All patients had clear resection margins on histopathological evaluation. There was one significant (Clavien-Dindo ≥ 3) postoperative complication (grade 4) in a patient who required re-intubation on postoperative day 2 for respiratory failure. Including patients converted to open surgery the median length of hospital stay was 6.5 days (range 3–14). None of the patients had significant blood loss.

Success of registration

Registration failed in two patients. In one patient a software failure of the graphic user interface prevented registration. In the other patient tracking markers became dislodged during surgery which caused tracking issues. Therefore 16 patients had successful registration and were suitable for further analysis.

Usability evaluation

Preoperative setup took 20–35 min. Because it was carried out before anaesthetic induction it did not impact on overall operating time. Intraoperative setup took approximately 10–15 min. Following the introduction of the crosshair calibration method [32] intraoperative setup time decreased to approximately 5–10 min.

Usability assessment

Feedback data were available from 10 individual surgeons carrying out 16 cases. Feedback from phase one where AR was demonstrated after the operative procedure is given in the first section of Table 2. Free text comments expressed the desire for a simplified setup process and a more compact system. Feedback from phase two where AR visualisation was presented throughout surgery is given in the second section of Table 2. Free text comments requested a better way of combining SmartLiver with laparoscopic ultrasound and increasing setup speed. One surgeon indicated that the camera optics made it difficult to reach certain angles (Table 2).

SmartLiver upgrades before phase two

User handling was improved by the implementation of a graphic user interface with touchscreen controls. Laparoscope calibration was simplified and rendered less time consuming by replacing the chequerboard with the crosshair calibration method [32].

Despite encouraging results from pre-clinical studies [26], stereoscopic surface reconstruction was initially unsuccessful in patients. An error analysis indicated that the stereoscopic surface reconstruction algorithm was unable to discriminate between surface points from the liver and surrounding structures (e.g. diaphragm). Based on data from phase one, a convolutional neural network was trained to perform automatic segmentation (i.e. recognition) of the liver surface [35]. Following integration into SmartLiver, this algorithm enabled successful stereoscopic surface reconstruction and semi-automatic registration in study phase two.
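How a segmentation result can keep non-liver points out of the registration is sketched below. The sketch assumes that the network output is available as a per-pixel boolean mask and that each reconstructed 3D point remembers the pixel it came from; the data layout, names and values are hypothetical, and the network itself [35] is not reproduced here.

```python
def keep_liver_points(reconstructed, liver_mask):
    """Keep 3D points whose source pixel (u, v) is labelled as liver.

    reconstructed: list of ((u, v), (x, y, z)) pairs from surface reconstruction.
    liver_mask: 2D list of booleans indexed as liver_mask[v][u] (row, column).
    """
    return [xyz for (u, v), xyz in reconstructed if liver_mask[v][u]]


# Hypothetical 2 x 3 pixel mask: True marks pixels classified as liver.
liver_mask = [
    [False, False, True],
    [False, True,  True],
]
reconstructed = [
    ((0, 0), (10.0, 5.0, 80.0)),   # e.g. diaphragm, discarded
    ((2, 0), (14.0, 5.0, 95.0)),   # liver, kept
    ((1, 1), (12.0, 7.0, 92.0)),   # liver, kept
]
liver_points = keep_liver_points(reconstructed, liver_mask)
```

Only the retained points would then be passed to the point cloud alignment, so that surrounding structures cannot pull the 3D model out of position.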

Comparison of manual and semi-automatic registration

In phase one, retrospective manual registration was performed in 6 patients. In phase two, both manual and semi-automatic registration were performed in 10 patients. Accuracy for manual registration in phase one was 15.8 ± 14.2 mm. In phase two, manual registration accuracy improved to 10.9 ± 4.2 mm. This improvement did not reach statistical significance, with a mean difference of 4.9 mm (− 1.1 to 10.9 mm 95% CI; p = 0.104). Semi-automatic registration accuracy in phase two was 13.9 ± 4.4 mm, which was not significantly different from manual registration, with a mean difference of − 3 mm (− 7.4 to 1.4 mm 95% CI; p = 0.158) (Table 3).
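As a reading aid, the phase two comparison can be checked against the reported confidence interval. The arithmetic below assumes that a paired t-test on the 10 phase two patients was used (9 degrees of freedom), which the text does not state explicitly; the critical value 2.262 is the standard two-sided 95% value of the t-distribution for 9 degrees of freedom.

```latex
\begin{align*}
\text{CI half-width} &= \tfrac{1}{2}\bigl(1.4 - (-7.4)\bigr) = 4.4~\text{mm} \\
\mathrm{SE}_{\bar{d}} &= \frac{4.4}{t_{0.975,\,9}} = \frac{4.4}{2.262} \approx 1.95~\text{mm} \\
t &= \frac{\bar{d}}{\mathrm{SE}_{\bar{d}}} = \frac{-3}{1.95} \approx -1.54
\end{align*}
```

Under this assumption, a t statistic of about − 1.54 with 9 degrees of freedom corresponds to a two-sided p-value of roughly 0.16, which agrees with the reported p = 0.158.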

Discussion

This study has described the development and current performance of the SmartLiver IGS. Focus was on feasibility as opposed to clinical impact because, at the outset, navigation accuracy was unknown and therefore no ethical approval was sought to use SmartLiver to adjust surgical strategy. Evaluation was carried out on 18 patients undergoing either LLR or staging laparoscopy. There were no patient safety incidents associated with the use of SmartLiver, and perioperative outcomes for patients undergoing LLR were similar to previous reports [4, 36, 37]. Although IGS are widely used in neurosurgery, orthopaedic surgery and otolaryngology, implementation in LLR has been slow and difficult [38]. Major challenges are the lack of fixed bony landmarks, paucity of liver surface features, organ motion secondary to diaphragmatic and cardiac movement, as well as soft tissue deformation due to pneumoperitoneum and surgical manipulation [7, 38, 39].

The experimental work leading up to this study demonstrated that liver motion and deformation contribute approximately 7.5 mm to the TRE of SmartLiver [26, 39, 40]. Achieving a greater level of accuracy requires a deformable 3D model that can adjust its shape and position to reflect intraoperative changes [26]. The research community has attempted to develop deformable 3D models with varying degrees of success. Because modelling of soft tissue deformation is exceedingly complex and computationally expensive, this technology has not yet reached sufficient maturity for clinical studies [41, 42].

The primary endpoint of successful registration as assessed by the operating surgeon was achieved in 16 out of 18 patients. Success was indicated by the 3D model maintaining an anatomically appropriate and stable position. It has been previously reported that liver mobilisation results in significant positional shift of landmarks and therefore necessitates repeat registration [43]. Although not formally quantified, this was also the case in the current study. Hypothetically, image guidance should be most beneficial during dissection at the liver hilum and parenchymal transection, since the exact position of intrahepatic structures and tumours is crucial during these steps. Liver mobilisation is a standardised process and therefore registration and image guidance may be less important at this stage. Although re-registration issues affect all current IGS, we strongly believe that semi-automatic registration renders this process less cumbersome.

During the first study phase, registration was carried out postoperatively, which meant that surgeons could not evaluate the quality of AR visualisation. Feedback about equipment handling was positive, whereas negative feedback mainly centred on the complexity of the intraoperative setup (e.g. tracker installation) and its impact on surgical workflow. To simplify the setup process, our group developed the crosshair calibration method and a graphic user interface [29]. In the second study phase, AR visualisation was evaluated intraoperatively. Positive feedback points were that SmartLiver improved intraoperative orientation, aided in the detection of extrahepatic structures and was consistent in the way it displayed anatomy (Table 2). Feedback about the combination of SmartLiver with laparoscopic ultrasound was less favourable because viewing both the AR screen and the ultrasound screen simultaneously was challenging. Our group previously demonstrated how SmartLiver can effectively integrate ultrasound images into AR [44]. This approach, however, requires electromagnetically tracked ultrasound, which was not ethically approved for this study. The anatomical precision of the overlay also received negative feedback, which probably reflects the fact that there were obvious discrepancies between 3D model position and corresponding liver sections in 4 patients with a higher than average TRE. To improve anatomical precision, efforts were increased to improve manual registration accuracy. In contrast to our group's experience from porcine studies [26], it was observed that, in patients, stereoscopic surface reconstruction may misalign different anatomical regions (e.g. diaphragm with liver) if they have a similar surface structure. Hypothetically, the coarser, more lobulated surface of the porcine liver may be more amenable to stereoscopic surface reconstruction because it contains more features to distinguish it from surrounding structures.
As demonstrated on this data set, stereoscopic surface reconstruction for the purpose of semi-automatic registration of the human liver is feasible if the liver surface is automatically segmented prior to registration [35, 45]. To the best of our knowledge this is the first clinical study to compare accuracy of manual and semi-automatic registration in a group of patients. Although the accuracy for manual registration was better than for semi-automatic registration, this did not reach statistical significance. Accuracy of manual registration is comparable to that from other groups previously published in the literature [22, 46, 47]. Various methodologies for accuracy evaluation have been proposed over time which makes direct comparison between different IGS challenging [34]. Generally the best published accuracies for video-based IGS are in the range of 10 mm and thus at the current state of the art, any IGS should perhaps be considered as an orientation aid rather than a precise navigation tool [18, 23, 24]. The utility for visualising intrahepatic structures depended mainly on the quality of the registration. In patients where accuracy ≤ 10 mm was achieved, it was feasible to approximate the position of sectoral branches (e.g. right anterior sector) and major hepatic vein branches. To maximise the potential of IGS it is important to enable smooth integration into the surgical workflow. The main benefit of AR is the intuitive use of image guidance information by obviating the need for two separate screens, therefore reducing the potential for associated errors [14]. Pending further validation, semi-automatic registration could improve user friendliness and render accuracy less operator dependent compared to manual registration [48]. Indeed, time efficiency and operator dependence may be crucial advantages of IGS compared to laparoscopic ultrasound. 
AR visualisation can be switched on and off within seconds whereas ultrasound requires insertion of a laparoscopic probe and manual scanning of the liver surface. Precise use of laparoscopic ultrasound is heavily operator dependent and has a steep learning curve [49, 50], whereas surgeon feedback indicates that SmartLiver’s AR is easy to mentally integrate.

Although the results from this study have demonstrated the technical feasibility of using SmartLiver intraoperatively, there are some limitations that have to be taken into account. To confirm that the accuracy of semi-automatic registration is non-inferior to manual registration, validation on a larger patient cohort is required. In the second study phase manual registration accuracy was comparable to that of other systems [22, 46, 47]. Since this is the first clinical report on semi-automatic registration in a clinical series, there are no published data to compare our results to.

It is often argued that liver surface features may be an inadequate representation of intrahepatic anatomy. Our group and others, however, recently demonstrated that liver surface landmarks have a good correlation with the anatomical location of intrahepatic structures (e.g. blood vessels) [21, 51]. Simultaneous localisation and mapping (SLAM) and 3D pose estimation are alternative approaches to image guidance that do not require tracking and therefore may reduce complexity of IGS setup and handling. It remains to be seen, however, if these technologies can be successfully applied to an intraoperative, clinical setting [15, 52]. Other groups have demonstrated the feasibility of image-guided laparoscopic liver ablation [19, 53]. Hypothetically, SmartLiver has the potential to provide image guidance for laparoscopic liver ablation as well, but at present further evaluation is required to verify this. In principle, IGS can be applied to robotic assisted surgery [54] without requiring significant alterations. Again, our group has not explored this option yet because the main focus is on improving SmartLiver’s performance for LLR first.

In summary, this article has described the clinical development and usability of a novel IGS for laparoscopic surgery. For the first time, accuracy metrics for manual and semi-automatic registration have been compared in a clinical series. The next stage of system development will focus on improving SmartLiver’s setup process and exploring alternative methods of semi-automatic registration.

Abbreviations

- 3D: Three-dimensional
- AR: Augmented reality
- CI: Confidence interval
- CT: Computed tomography
- IGS: Image guidance system
- LLR: Laparoscopic liver resection
- MRI: Magnetic resonance imaging
- TRE: Target registration error

References

Wakabayashi G, Cherqui D, Geller DA, Buell JF, Kaneko H, Han HS et al (2015) Recommendations for laparoscopic liver resection. Ann Surg 261(4):619–629

Fuks D, Cauchy F, Ftériche S, Nomi T, Schwarz L, Dokmak S et al (2015) Laparoscopy decreases pulmonary complications in patients undergoing major liver resection. Ann Surg 00(00):1

Kirchberg J, Reißfelder C, Weitz J, Koch M (2013) Laparoscopic surgery of liver tumors. Langenbecks Arch Surg 398:931–938

Ciria R, Cherqui D, Geller DA, Briceno J, Wakabayashi G (2015) Comparative short-term benefits of laparoscopic liver resection. Ann Surg 4:761–777

Nomi T, Fuks D, Kawaguchi Y, Mal F, Nakajima Y, Gayet B (2015) Learning curve for laparoscopic major hepatectomy. Br J Surg 102(7):796–804

Fusaglia M, Hess H, Schwalbe M, Peterhans M, Tinguely P, Weber S et al (2015) A clinically applicable laser-based image-guided system for laparoscopic liver procedures. Int J Comput Assist Radiol Surg 11:1499–1513

Kingham TP, Jayaraman S, Clements LW, Scherer MA, Stefansic JD, Jarnagin WR et al (2013) Evolution of image-guided liver surgery: transition from open to laparoscopic procedures. J Gastrointest Surg 17(7):1274–1282

Buell JF, Cherqui D, Geller DA, Orourke N, Iannitti D, Dagher I et al (2009) The international position on laparoscopic liver surgery: the Louisville Statement, 2008. Ann Surg 250(5):825–830

Cai X, Li Z, Zhang Y, Yu H, Liang X, Jin R et al (2014) Laparoscopic liver resection and the learning curve: a 14-year, single-center experience. Surg Endosc 28:1334–1341

Cauchy F, Fuks D, Nomi T, Schwarz L, Barbier L, Dokmak S et al (2015) Risk factors and consequences of conversion in laparoscopic major liver resection. Br J Surg 102:785–795

Azagury DE, Dua MM, Barrese JC, Henderson JM, Buchs NC, Ris F et al (2015) Image-guided surgery. Curr Probl Surg 52:476–520

Pessaux P, Diana M, Soler L, Piardi T, Mutter D, Marescaux J (2014) Towards cybernetic surgery: robotic and augmented reality-assisted liver segmentectomy. Langenbeck’s Arch Surg 400(3):381–385

Miner RC (2017) Image-guided neurosurgery. J Med Imaging Radiat Sci 48(4):328–335

Kang X, Azizian M, Wilson E, Wu K, Martin AD, Kane TD et al (2014) Stereoscopic augmented reality for laparoscopic surgery. Surg Endosc 28(7):2227–2235

Reichard D, Bodenstedt S, Suwelack S, Mayer B, Preukschas A, Wagner M et al (2015) Intraoperative on-the-fly organ-mosaicking for laparoscopic surgery. J Med Imaging 2(4):045001

Nicolau S, Soler L, Mutter D, Marescaux J (2011) Augmented reality in laparoscopic surgical oncology. Surg Oncol 20(3):189–201

Soler L, Nicolau S, Pessaux P, Mutter D, Marescaux J (2014) Real-time 3D image reconstruction guidance in liver resection surgery. Hepatobiliary Surg Nutr 3(2):73–81

Hammill CW, Clements LW, Stefansic JD, Wolf RF, Hansen PD, Gerber DA (2014) Evaluation of a minimally invasive image-guided surgery system for hepatic ablation procedures. Surg Innov 21(4):419–426

Kingham TP, Scherer MA, Neese BW, Clements LW, Stefansic JD, Jarnagin WR (2012) Image-guided liver surgery: intraoperative projection of computed tomography images utilizing tracked ultrasound. HPB (Oxford) 14(9):594–603

Tummers QRJG, Verbeek FPR, Prevoo HAJM, Braat AE, Baeten CIM, Frangioni JV et al (2014) First experience on laparoscopic near-infrared fluorescence imaging of hepatic uveal melanoma metastases using indocyanine green. Surg Innov 22(1):20–25

Thompson S, Schneider C, Bosi M, Gurusamy K, Ourselin S, Davidson B et al (2018) In vivo estimation of target registration errors during augmented reality laparoscopic surgery. Int J Comput Assist Radiol Surg 13(6):865–874

Prevost GA, Eigl B, Paolucci I, Rudolph T, Peterhans M, Weber S et al (2019) Efficiency, accuracy and clinical applicability of a new image-guided surgery system in 3D laparoscopic liver surgery. J Gastrointest Surg. https://doi.org/10.1007/s11605-019-04395-7

Peterhans M, vom Berg A, Dagon B, Inderbitzin D, Baur C, Candinas D et al (2011) A navigation system for open liver surgery: design, workflow and first clinical applications. Int J Med Robot 7(1):7–16

Cash DM, Miga MI, Glasgow SC, Dawant BM, Clements LW, Cao Z et al (2007) Concepts and preliminary data toward the realization of image-guided liver surgery. J Gastrointest Surg 11(7):844–859

Teatini A, Pelanis E, Aghayan DL, Kumar RP, Palomar R, Fretland ÅA et al (2019) The effect of intraoperative imaging on surgical navigation for laparoscopic liver resection surgery. Sci Rep 9(1):18687

Thompson S, Totz J, Song YY, Johnsen S, Stoyanov D, Gurusamy K et al (2015) Accuracy validation of an image guided laparoscopy system for liver resection. SPIE Proc 9415(7):941509

Totz J, Thompson S, Stoyanov D, Gurusamy K, Davidson BR, Hawkes DJ et al (2014) Fast semi-dense surface reconstruction from stereoscopic video in laparoscopic surgery. IPCAI 8498:206–215

Kleemann M, Deichmann S, Esnaashari H, Besirevic A, Shahin O, Bruch H-P et al (2012) Laparoscopic navigated liver resection: technical aspects and clinical practice in benign liver tumors. Case Rep Surg 2012:265918

Thompson S, Stoyanov D, Schneider C, Gurusamy K, Ourselin S, Davidson B et al (2016) Hand–eye calibration for rigid laparoscopes using an invariant point. Int J Comput Assist Radiol Surg 11(6):1071–1080

Stoyanov D, Scarzanella MV, Pratt P, Yang G-Z (2010) Real-time stereo reconstruction in robotically assisted minimally invasive surgery. Med Image Comput Comput Assist Interv 13(Pt 1):275–282

Zhang Z (2000) A flexible new technique for camera calibration. IEEE Trans Pattern Anal Mach Intell 22(11):1330–1334

Thompson S, Stoyanov D, Schneider C, Gurusamy K, Ourselin S, Davidson B et al (2016) Hand–eye calibration for rigid laparoscopes using an invariant point. Int J Comput Assist Radiol Surg 11(6):1071–1080

Dindo D, Demartines N, Clavien P-A (2004) Classification of surgical complications: a new proposal with evaluation in a cohort of 6336 patients and results of a survey. Ann Surg 240(2):205–213

Luo H, Yin D, Zhang S, Xiao D, He B, Meng F et al (2019) Augmented reality navigation for liver resection with a stereoscopic laparoscope. Comput Methods Progr Biomed 7:105099

Gibson E, Robu MR, Thompson S, Edwards PE, Schneider C, Gurusamy K et al (2017) Deep residual networks for automatic segmentation of laparoscopic videos of the liver. In: Webster RJ, Fei B (eds) Prog Biomed Opt Imaging—Proc SPIE. International Society for Optics and Photonics, London

Polignano FM, Quyn AJ, De Figueiredo RSM, Henderson NA, Kulli C, Tait IS (2008) Laparoscopic versus open liver segmentectomy: prospective, case-matched, intention-to-treat analysis of clinical outcomes and cost effectiveness. Surg Endosc Other Interv Tech 22(12):2564–2570

Abu Hilal M, Di Fabio F, Syed S, Wiltshire R, Dimovska E, Turner D et al (2013) Assessment of the financial implications for laparoscopic liver surgery: a single-centre UK cost analysis for minor and major hepatectomy. Surg Endosc Other Interv Tech 27:2542–2550

Okamoto T, Onda S, Yanaga K, Suzuki N, Hattori A (2015) Clinical application of navigation surgery using augmented reality in hepatobiliary pancreatic surgery. Surg Today 45(4):397–406

Plantefève R, Peterlik I, Haouchine N, Cotin S (2016) Patient-specific biomechanical modeling for guidance during minimally-invasive hepatic surgery. Ann Biomed Eng 44(1):139–153

Haouchine N, Cotin S, Peterlik I, Dequidt J, Kerrien E, Berger M et al (2015) Impact of soft tissue heterogeneity on augmented reality for liver surgery. IEEE Trans Vis Comput Graph 21(5):584–597

Haouchine N, Dequidt J, Peterlik I (2014) Towards an accurate tracking of liver tumors for augmented reality in robotic assisted surgery. Springer, Berlin

Suwelack S, Röhl S, Bodenstedt S, Reichard D, Dillmann R, dos Santos T et al (2014) Physics-based shape matching for intraoperative image guidance. Med Phys 41(11):111901

Tinguely P, Fusaglia M, Freedman J, Banz V, Weber S, Candinas D et al (2017) Laparoscopic image-based navigation for microwave ablation of liver tumors: a multi-center study. Surg Endosc 31(10):4315–4324

Song Y, Totz J, Thompson S, Johnsen S, Barratt D, Schneider C et al (2015) Locally rigid, vessel-based registration for laparoscopic liver surgery. Int J Comput Assist Radiol Surg 10(12):1951–1961

Fu Y, Robu MR, Koo B, Schneider C, van Laarhoven S, Stoyanov D et al (2019) More unlabelled data or label more data? A study on semi-supervised laparoscopic image segmentation. Springer, Cham

Hayashi Y, Misawa K, Oda M, Hawkes DJ, Mori K (2015) Clinical application of a surgical navigation system based on virtual laparoscopy in laparoscopic gastrectomy for gastric cancer. Int J Comput Assist Radiol Surg 11:827–836

Yasuda J, Okamoto T, Onda S, Fujioka S, Yanaga K, Suzuki N et al (2019) Application of image-guided navigation system for laparoscopic hepatobiliary surgery. Asian J Endosc Surg 13:39–45

Robu MR, Edwards P, Ramalhinho J, Thompson S, Davidson B, Hawkes D et al (2017) Intelligent viewpoint selection for efficient CT to video registration in laparoscopic liver surgery. Int J Comput Assist Radiol Surg 12(7):1079–1088

Chmarra MK, Hansen R, Hofstad EF, Våpenstad C, Marvik R, Langø T (2013) Development of laparoscopic ultrasound training system. Surg Endosc Other Interv Tech 27:S165

Solberg OV, Langø T, Tangen GA, Mårvik R, Ystgaard B, Rethy A et al (2009) Navigated ultrasound in laparoscopic surgery. Minim Invasive Ther Allied Technol 18(1):36–53

Modrzejewski R, Collins T, Seeliger B, Bartoli A, Hostettler A, Marescaux J (2019) An in vivo porcine dataset and evaluation methodology to measure soft-body laparoscopic liver registration accuracy with an extended algorithm that handles collisions. Int J Comput Assist Radiol Surg 14:1237–1245

Stoyanov D (2012) Surgical vision. Ann Biomed Eng 40(2):332–345

Beermann M, Lindeberg J, Engstrand J, Galmén K, Karlgren S, Stillström D et al (2019) 1000 consecutive ablation sessions in the era of computer assisted image guidance: lessons learned. Eur J Radiol Open 6:1–8

Buchs NC, Volonte F, Pugin F, Toso C, Fusaglia M, Gavaghan K et al (2013) Augmented environments for the targeting of hepatic lesions during image-guided robotic liver surgery. J Surg Res 184(2):825–831

Funding

This publication presents independent research commissioned by the Health Innovation Challenge Fund (HICF-T4-317), a parallel funding partnership between the Wellcome Trust and the Department of Health. The views expressed in this publication are those of the author(s) and not necessarily those of the Wellcome Trust or the Department of Health. In addition, this work was supported by the Wellcome/EPSRC [203145Z/16/Z].

Ethics declarations

Disclosures

Professor Hawkes is a co-founder of IXICO Ltd. Drs. Schneider, Thompson, Allam, Sodergren, Song, Totz and Clarkson as well as Profs. Davidson, Gurusamy, Desjardins, Stoyanov, Ourselin and Barratt have no conflicts of interest or financial ties to disclose.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Schneider, C., Thompson, S., Totz, J. et al. Comparison of manual and semi-automatic registration in augmented reality image-guided liver surgery: a clinical feasibility study. Surg Endosc 34, 4702–4711 (2020). https://doi.org/10.1007/s00464-020-07807-x

DOI: https://doi.org/10.1007/s00464-020-07807-x