Abstract

In this paper, we consider a general notion of convolution. Let \(D\) be a finite domain and let \(D^n\) be the set of n-length vectors (tuples) of \(D\). Let \(f :D\times D\rightarrow D\) be a function and let \(\oplus _f\) be a coordinate-wise application of f. The \(f\)-Convolution of two functions \(g,h :D^n \rightarrow \{-M,\ldots ,M\}\) is

for every \(\textbf{v}\in D^n\). This problem generalizes many fundamental convolutions such as Subset Convolution, XOR Product, Covering Product or Packing Product, etc. For arbitrary function f and domain \(D\) we can compute \(f\)-Convolution via brute-force enumeration in \(\widetilde{{\mathcal {O}}}(|D|^{2n} \cdot \textrm{polylog}(M))\) time. Our main result is an improvement over this naive algorithm. We show that \(f\)-Convolution can be computed exactly in \(\widetilde{{\mathcal {O}}}( (c \cdot |D|^2)^{n} \cdot \textrm{polylog}(M))\) for constant \(c {:}{=}3/4\) when \(D\) has even cardinality. Our main observation is that a cyclic partition of a function \(f :D\times D\rightarrow D\) can be used to speed up the computation of \(f\)-Convolution, and we show that an appropriate cyclic partition exists for every f. Furthermore, we demonstrate that a single entry of the \(f\)-Convolution can be computed more efficiently. In this variant, we are given two functions \(g,h :D^n \rightarrow \{-M,\ldots ,M\}\) alongside with a vector \(\textbf{v}\in D^n\) and the task of the \(f\)-Query problem is to compute integer \((g \mathbin {\circledast _{f}}h)(\textbf{v})\). This is a generalization of the well-known Orthogonal Vectors problem. We show that \(f\)-Query can be computed in \(\widetilde{{\mathcal {O}}}(|D|^{\frac{\omega }{2} n} \cdot \textrm{polylog}(M))\) time, where \(\omega \in [2,2.372)\) is the exponent of currently fastest matrix multiplication algorithm.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

Convolutions occur naturally in many algorithmic applications, especially in the exact and parameterized algorithms. The most prominent example is a subset convolution procedure [22, 37], for which an efficient \(\widetilde{{\mathcal {O}}}(2^n \cdot \textrm{polylog}(M))\) time algorithm for subset convolution dates back to Yates [40] but in the context of exact algorithms it was first used by Björklund et al. [6].Footnote 1 Researchers considered a plethora of other variants of convolutions, such as: Cover Product, XOR Product, Packing Product, Generalized Subset Convolution, Discriminantal Subset Convolution, Trimmed Subset Convolution or Lattice-based Convolution [6,7,8, 10, 11, 20, 24, 35]. These subroutines are crucial ingredients in the design of efficient algorithms for many exact and parameterized algorithms such as Hamiltonian Cycle, Feedback Vertex Set, Steiner Tree, Connected Vertex Cover, Chromatic Number, Max k-Cut or Bin Packing [5, 10, 19, 28, 39, 41]. These convolutions are especially useful for dynamic programming algorithms on tree decompositions and occur naturally during join operations (e.g., [19, 34, 35]). Usually, in the process of algorithm design, the researcher needs to design a different type of convolution from scratch to solve each of these problems. Often this is a highly technical and laborious task. Ideally, we would like to have a single tool that can be used as a blackbox in all of these cases. This motivates the following ambitious goal in this paper:

Towards this goal, we consider the problem of computing f-Generalized Convolution (\(f\)-Convolution) introduced by van Rooij [34]. Let \(D\) be a finite domain and let \(D^n\) be the n length vectors (tuples) of \(D\). Let \(f :D\times D\rightarrow D\) be an arbitrary function and let \(\oplus _f\) be a coordinate-wise application of the function f.Footnote 2 For two functions \(g,h :D^n \rightarrow {\mathbb {Z}}\) the \(f\)-Convolution, denoted by \((g \mathbin {\circledast _{f}}h) :D^n \rightarrow {\mathbb {Z}}\), is defined for all \(\textbf{v}\in D^n\) as

Here we consider the standard \({\mathbb {Z}}(+,\cdot )\) ring. Through the paper we assume that M is the absolute value of the maximum integer given on the input.

In the \(f\)-Convolution problem the functions \(g,h :D^n \rightarrow {\{-M,\ldots , M\}}\) are given as an input and the output is the function \((g \mathbin {\circledast _{f}}h)\). Note, that the input and output of the \(f\)-Convolution problem consist of \(3\cdot |D|^n\) integers. Hence it is conceivable that \(f\)-Convolution could be solved in \(\widetilde{{\mathcal {O}}}(|D|^n \cdot \textrm{polylog}(M))\). Such a result for arbitrary f would be a real breakthrough in how we design parameterized algorithms. So far, however, researchers have focused on characterizing functions f for which \(f\)-Convolution can be solved in \(\widetilde{{\mathcal {O}}}(|D|^n \cdot \textrm{polylog}(M))\) time. In [34] van Rooij considered specific instances of this setting, where for some constant \(r \in {\mathbb {N}}\) the function f is defined as either (i) standard addition: \(f(x,y) {:}{=}x+y\), or (ii) addition with a maximum: \(f(x, y) {:}{=}\min (x+y,r-1)\), or (iii) addition modulo r, or (iv) maximum: \(f(x,y) {:}{=}\max (x,y)\). Van Rooij [34] showed that for these special cases the \(f\)-Convolution can be solved in \(\widetilde{{\mathcal {O}}}(|D|^n \cdot \textrm{polylog}(M))\) time. His results allow the function f to differ between coordinates. A recent result regarding generalized Discrete Fourier Transform [32] can be used in conjunction with Yates’s algorithm [40] to compute \(f\)-Convolution in \(\widetilde{{\mathcal {O}}}(|D|^{\omega \cdot n / 2} \cdot \textrm{polylog}(M))\) time when f is a finite-group operation and \(\omega \) is the exponent of the currently fastest matrix-multiplication algorithms.Footnote 3 To the best of our knowledge these are the most general settings where convolution has been considered so far.

Nevertheless, for an arbitrary function f, to the best of our knowledge the state-of-the-art for \(f\)-Convolution is a straightforward quadratic time enumeration.

Similar questions were studied from the point of view of the Fine-Grained Complexity. In that setting the focus is on convolutions with sparse representations, where the input size is only the size of the support of the functions g and h. It is conjectured that even subquadratic algorithms are highly unlikely for these representations [18, 25]. However, these lower bounds do not answer Question 1, because they are highly dependent on the sparsity of the input.

1.1 Our Results

We provide a positive answer to Question 1 and show an exponential improvement (in n) over a naive \(\widetilde{{\mathcal {O}}}(|D|^{2n} \cdot \textrm{polylog}(M))\) algorithm for every function f.

Theorem 1.1

(Generalized Convolution) Let \(D\) be a finite set and \(f:D\times D\rightarrow D\). There is an algorithm for \(f\)-Convolution with the following running time \(\widetilde{{\mathcal {O}}}\left( \big ( \frac{3}{4} \cdot |D|^2 \big )^{n} \cdot \textrm{polylog}(M)\right) \) when \(\vert {D} \vert \) is even, or \(\big (\big ( \frac{3}{4} \cdot |D|^2+\frac{1}{4}\cdot |D| \big )^{n}\big ) \) when \(\vert {D} \vert \) is odd.

Observe that the running time obtained by Theorem 1.1 improves upon the brute-force for every \(|D| \ge 2\). Our technique works in a more general setting when \(g :L^n \rightarrow {\mathbb {Z}}\) and \(h:R^n \rightarrow {\mathbb {Z}}\) and \(f:L \times R \rightarrow T\) for arbitrary domains L, R and T (see Sect. 2 for the exact running time dependence).

Our Technique: Cyclic Partition Now, we briefly sketch the idea behind the proof of Theorem 1.1. We say that a function is k-cyclic if it can be represented as an addition modulo k (after relabeling the entries of the domain and image). These functions are somehow simple, because as observed in [33, 34] \(f\)-Convolution can be computed in \(\widetilde{{\mathcal {O}}}(k^n \cdot \textrm{polylog}(M))\) time if f is k-cyclic. In a nutshell, our idea is to partition the function \(f:D\times D\rightarrow D\) into cyclic functions and compute the convolution on these parts independently.

More formally, a cyclic minor of the function \(f:D\times D\rightarrow D\) is a (combinatorial) rectangle \(A \times B\) with \(A,B\subseteq D\) and a number \(k\in {\mathbb {N}}\) such that f restricted to A, B is a k-cyclic function. The cost of the cyclic minor (A, B, k) is \( \textrm{cost}(A,B) {:}{=}k \). A cyclic partition is a set \(\{(A_1,B_1,k_1),\ldots ,(A_m,B_m,k_m)\}\) of cyclic minors such that for every \((a,b) \in D\times D\) there exists a unique \(i \in [m]\) with \((a,b) \in A_i \times B_i\). The cost of the cyclic partition \({\mathcal {P}}= \{(A_1,B_1,k_1),\ldots ,(A_m,B_m,k_m)\}\) is \(\textrm{cost}({\mathcal {P}}) {:}{=}\sum _{i=1}^m k_i\). See Fig. 1 for an example of a cyclic partition.

Left figure illustrates exemplar function \(f :D\times D\rightarrow D\) over domain \(D{:}{=}\{a,b,c,d\}\). We highlighted a cyclic partition with red, blue, yellow and blue colors. Each color represents a different minor of f. On the right figure we demonstrate that the red-highlighted minor can be represented as addition modulo 3 (after relabeling \(a \mapsto 0\), \(b\mapsto 1\) and \(c \mapsto 2\)). Hence the red minor has cost 3. The reader can further verify that green and blue minors have cost 2 and yellow minor has cost 1, hence the cost of that particular partition is \(3+2+2+1 = 8\) (Color figure online)

Our first technical contribution is an algorithm to compute \(f\)-Convolution when the cost of a cyclic partition is small.

Lemma 1.2

(Algorithm for \(f\)-Convolution ) Let \(D\) be an arbitrary finite set, \(f:D\times D\rightarrow D\) and let \({\mathcal {P}}\) be the cyclic partition of f. Then there exists an algorithm which given \(g,h:D^n \rightarrow {\mathbb {Z}}\) computes \((g \mathbin {\circledast _{f}}h)\) in \(\widetilde{{\mathcal {O}}}((\textrm{cost}({\mathcal {P}})^n + |D|^n) \cdot \textrm{polylog}(M))\) time.

The idea behind the proof of Lemma 1.2 is as follows. Based on the partition \({\mathcal {P}}\), for any pair of vectors \(\textbf{u},\textbf{w}\in D^n\), we can define a type \({\varvec{p}}\in [m]^n\) such that \((\textbf{u}_i,\textbf{w}_i) \in A_{{\varvec{p}}_i} \times B_{{\varvec{p}}_i}\) for every \(i \in [n]\). Our main idea is to go over each type \({\varvec{p}}\) and compute the sum in the definition of \(f\)-Convolution only for pairs \((\textbf{v}_g,\textbf{v}_h)\) that have type \({\varvec{p}}\). In order to do this, first we select the vectors \(\textbf{v}_g\) and \(\textbf{v}_h\) that are compatible with this type \({\varvec{p}}\). For instance, consider the example in Fig. 1. Whenever \({\varvec{p}}_i\) refers to, say, the red-colored minor, then we consider \(\textbf{v}_g\) only if its i-th coordinate is in \(\{b,c,d\}\) and consider \(\textbf{v}_h\) only if its i-th coordinate is in \(\{b,d\}\). After computing all these vectors \(\textbf{v}_g\) and \(\textbf{v}_h\), we can transform them according to the cyclic minor at each coordinate. Continuing our example, as the red-colored minor is 3-cyclic, we can represent the i-th coordinate of \(\textbf{v}_g\) and \(\textbf{v}_h\) as \(\{0,1,2\}\) and then the problem reduces to addition modulo 3 at that coordinate. Therefore, using the algorithm of van Rooij [34] for cyclic convolution we can handle all pairs of type \({\varvec{p}}\) in \(\widetilde{{\mathcal {O}}}((\prod _{i=1}^n k_{{\varvec{p}}_i}) \cdot \textrm{polylog}(M))\) time. As we go over all \(m^n\) types \({\varvec{p}}\) the sum of \(m^n\) terms is

Hence, the overall running time is \(\widetilde{{\mathcal {O}}}(\textrm{cost}({\mathcal {P}})^n \cdot \textrm{polylog}(M))\). This running time evaluation ignores the generation of the vectors given as input for the cyclic convolution algorithm. The efficient computation of these vectors is nontrivial and requires further techniques that we explain in Sect. 3.

It remains to provide the low-cost cyclic partition of an arbitrary function f.

Lemma 1.3

For any finite set \(D\) and any function \(f:D\times D\rightarrow D\) there is a cyclic partition \({\mathcal {P}}\) of f such that \(\textrm{cost}({\mathcal {P}}) \le \frac{3}{4} |D|^2\) when \(\vert {D} \vert \) is even, or \(\textrm{cost}({\mathcal {P}}) \le \frac{3}{4} |D|^2 + \frac{1}{4} |D|\) when \(\vert {D} \vert \) is odd.

For the sake of presentation let us assume that \(|D|\) is even. In order to show Lemma 1.3, we partition \(D\) into pairs \(A_1,\ldots ,A_{k}\) where \(k {:}{=}|D|/2\) and consider the restrictions of f to \(A_j \times D\) one by one. Intuitively, we partition the \(D\times D\) table describing f into pairs of rows and give a bound on the cost of each pair. This partition allows us to encode f on \(A_j \times D\) as a directed graph G with \(|D|\) edges and \(|D|\) vertices. We observe that directed cycles and directed paths can be represented as cyclic minors. Our goal is to partition graph G into such subgraphs in a way that the total cost of the resulting cyclic partition is small. Following this argument, the proof of Lemma 1.3 becomes a graph-theoretic analysis. The proof of Lemma 1.3 is included in Sect. 4. We also give an example which suggests that the constant \(\frac{3}{4}\) in Lemma 1.3 cannot be improved further while using the partition of \(D\) into arbitrary pairs (see Lemma 4.16).

Our method applies for more general functions \(f :L \times R \rightarrow T\), where domains L, R, T can be different and have arbitrary cardinality. We note that a weaker variant of Lemma 1.3 in which the guarantee is \(\textrm{cost}({\mathcal {P}}_f) \le \frac{7}{8} |D|^2\) is easier to attain (see Sect. 4).

Efficient Algorithm for Convolution Query Our next contribution is an efficient algorithm to query a single value of \(f\)-Convolution. In the \(f\)-Query problem, the input is \(g,h :D^n \rightarrow {\mathbb {Z}}\) and a single vector \(\textbf{v}\in D^n\). The task is to compute a value \((g \mathbin {\circledast _{f}}h)(\textbf{v})\). Observe that this task generalizesFootnote 4 the fundamental problem of Orthogonal Vectors. We show that computing \(f\)-Query is much faster than computing the full output of \(f\)-Convolution.

Theorem 1.4

(Convolution Query) For any finite set \(D\) and function \(f:D\times D\rightarrow D\) there is a \(\widetilde{{\mathcal {O}}}(|D|^{\omega \cdot n / 2} \cdot \textrm{polylog}(M))\) time algorithm for the \(f\)-Query problem.

Here \(\widetilde{{\mathcal {O}}}(m^\omega \cdot \textrm{polylog}(M))\) is the time needed to multiply two \(m \times m\) integer matrices with values in \({\{-M,\ldots , M\}}\) and currently \(\omega \in [2,2.372)\) [2, 21]. Note, that under the assumption that two matrices can be multiplied in the linear in the input time (i.e., \(\omega = 2\)) then Theorem 1.4 runs in the nearly-optimal \(\widetilde{{\mathcal {O}}}(|D|^n \cdot \textrm{polylog}(M))\) time. Theorem 1.4 is significantly faster than Theorem 1.1, which can be used to solve \(f\)-Query in time \(\widetilde{{\mathcal {O}}}\left( \left( \frac{3}{4} \cdot |D|^2 \right) ^{n} \cdot \textrm{polylog}(M)\right) \) when \(\vert {D} \vert \) is even, or \(\widetilde{{\mathcal {O}}}\left( \left( \frac{3}{4} \cdot |D|^2+\frac{1}{4}\cdot |D| \right) ^{n} \cdot \textrm{polylog}(M)\right) \) when \(\vert {D} \vert \) is odd. This holds true even if we plug-in the naive algorithm for matrix multiplication (i.e., \(\omega = 3\)). The proof of Theorem 1.4 is inspired by an interpretation of the \(f\)-Query problem as counting length-4 cycles in a graph.

1.2 Related Work

Arguably, the problem of computing the Discrete Fourier Transform (DFT) is the prime example of convolution-type problems in computer science. Cooley and Tukey [17] proposed the fast algorithm to compute DFT. Later, Beth [4] and Clausen [16] initiated the study of generalized DFTs whose goal has been to obtain a fast algorithm for DFT where the underlying group is arbitrary. After a long line of works (see [31] for the survey), the currently best algorithm for generalized DFT concerning group G runs in \({\mathcal {O}}(|G|^{\omega /2+\epsilon })\) operations for every \(\epsilon > 0\) [32].

A similar technique to ours was introduced by Björklund et al. [9]. The paper gave a characterization of lattices that admit a fast zeta transform and a fast Möbius transform.

From the lower-bounds perspective to the best of our knowledge only a naive \(\Omega (|D|^n)\) lower bound is known for \(f\)-Convolution (as this is the output size). We note that known lower bounds for different convolution-type problems, such as \((\min ,+)\)-convolution [18, 25], \((\min ,\max )\)-convolution [13], min-witness convolution [26], convolution-3SUM [14] or even skew-convolution [12] cannot be easily adapted to \(f\)-Convolution as the hardness of these problems comes primarily from the ring operations.

The Orthogonal Vectors problem is related to the \(f\)-Query problem. In the Orthogonal Vectors problem we are given two sets of n vectors \(A,B \subseteq \{0,1\}^d\) and the task is to decide if there is a pair \(a \in A\), \(b \in B\) such that \(a \cdot b = 0\). In [38] it was shown that there is no algorithm with a running time of \(n^{2-\epsilon } \cdot 2^{o(d)}\) for the Orthogonal Vectors problem for any \(\epsilon > 0\), assuming SETH [36]. The currently best algorithm for Orthogonal Vectors runs in time \(n^{2-1/{\mathcal {O}}(\log (d)/\log (n))}\) [1, 15], \({\mathcal {O}}(n \cdot 2^{cd})\) for some constant \(c < 0.5\) [30], or \({\mathcal {O}}(|{\downarrow }A| + |{\downarrow }B|)\) [7] (where \(|{\downarrow }F|\) is the total number of vectors whose support is a subset of the support of input vectors).

1.3 Organization

In Sect. 2 we provide the formal definitions of the problems alongside the general statements of our results. In Sect. 3 we give an algorithm for \(f\)-Convolution that uses a given cyclic partition. In Sect. 4 we show that for every function \(f :D\times D\rightarrow D\) there exists a cyclic partition of low cost. Finally, in Sect. 5 we give an algorithm for \(f\)-Query and prove Theorem 1.4. In Sect. 6 we conclude the paper and discuss future work.

2 Preliminaries

Throughout the paper, we use Iverson bracket notation, where for the logic expression P, the value of \(\llbracket {P}\rrbracket \) is 1 when P is true and 0 otherwise. For \(n \in {\mathbb {N}}\) we use [n] to denote \(\{1,\ldots ,n\}\). Through the paper we denote vectors in bold, for example, \(\textbf{q}\in {\mathbb {Z}}^k\) denotes a k-dimensional vector of integers. We use subscripts to denote the entries of the vectors, e.g., \(\textbf{q}{:}{=}(\textbf{q}_1,\ldots ,\textbf{q}_k)\).

Let L, R and T be arbitrary sets and let \(f :L \times R \rightarrow T\) be an arbitrary function. We extend the definition of such an arbitrary function f to vectors as follows. For two vectors \(\textbf{u}\in L^n\) and \(\textbf{w}\in R^n \) we define

In this paper, we consider the \(f\)-Convolution problem with a more general domain and image. We define it formally as follows:

Definition 2.1

(f-Convolution) Let L, R and T be arbitrary sets and let \(f:L \times R \rightarrow T\) be an arbitrary function. The \(f\)-Convolution of two functions \(g:L^n \rightarrow {\mathbb {Z}}\) and \(h:R^n\rightarrow {\mathbb {Z}}\), where \(n\in {\mathbb {N}}\), is the function \((g \mathbin {\circledast _{f}}h):T^n \rightarrow {\mathbb {Z}}\) defined by

for every \(\textbf{v}\in T^n\).

As before the operations are taken in the standard \({\mathbb {Z}}(+,\cdot )\) ring and M is the maximum absolute value of the integers given on the input.

Now, we formally define the input and output to the \(f\)-Convolution problem.

Definition 2.2

(f-Convolution Problem (\(f\)-Convolution)) Let L, R and T be arbitrary finite sets and let \(f:L \times R \rightarrow T\) be an arbitrary function. The f-Convolution Problem is the following.

Input: Two functions \(g:R^n\rightarrow {\{-M,\ldots , M\}}\) and \(h:L^n\rightarrow {\{-M,\ldots , M\}}\).

Task: Compute \(g \mathbin {\circledast _{f}}h\).

Our main result stated in the most general form is the following.

Theorem 2.3

Let \(f:L\times R\rightarrow T\) such that L, R and T are finite. There is an algorithm for the \(f\)-Convolution problem with \(\widetilde{{\mathcal {O}}}(c^n \cdot \textrm{polylog}(M))\) time, where

Theorem 1.1 is a corollary of Theorem 2.3 by setting \(L = R = T = D\).

The proof of Theorem 2.3 utilizes the notion of cyclic partition. For any \(k\in {\mathbb {N}}\), let \({\mathbb {Z}}_k=\{0,1,\ldots , k-1\}\). We say a function \(f:A\times B\rightarrow C\) is k-cyclic if, up to a relabeling of the sets A, B and C, it is an addition modulo k. Formally, \(f:A\times B\rightarrow C\) is k-cyclic if there are \(\sigma _A:A\rightarrow {\mathbb {Z}}_k\), \(\sigma _B:B\rightarrow {\mathbb {Z}}_k\), and \(\sigma _C :{\mathbb {Z}}_k \rightarrow C\) such that

We refer to the functions \(\sigma _A\), \(\sigma _B\) and \(\sigma _C\) as the relabeling functions of f. For example, a constant function \(f:A \times B \rightarrow \{0\}\) defined by \(f(a,b)=0\) for all \((a,b)\in A\times B\) is 1-cyclic.

The restriction of \(f:L\times R \rightarrow T\) to \(A\subseteq L\) and \(B\subseteq R\) is the function \(g:A\times B\rightarrow T\) defined by \(g(a,b) = f(a,b)\) for all \(a\in A\) and \(b\in B\). We say (A, B, k) is a cyclic minor of \(f:L\times R \rightarrow T\) if the restriction of f to A and B is a k-cyclic function.

A cyclic partition of \(f:L\times R \rightarrow T\) is a set of minors \({\mathcal {P}}=\{(A_1,B_1,k_1),\ldots , (A_m,B_m,k_m)\}\) such that \((A_i,B_i,k_i)\) is a cyclic minor of f and for every \((a,b)\in L\times R\) there is a unique \(1\le i\le m\) such that \((a,b)\in A_i\times B_i\). The cost of the cyclic partition is \(\textrm{cost}({\mathcal {P}})=\sum _{i=1}^{m} k_i\).

Theorem 2.3 follows from the following lemmas.

Lemma 3.1

(Algorithm for Generalized Convolution) Let L, R and T be finite sets. Also, let \(f:L\times R\rightarrow T\) be a function and let \({\mathcal {P}}\) be a cyclic partition of f. Then there is an \(\widetilde{{\mathcal {O}}}((\textrm{cost}({\mathcal {P}})^n + |L|^n + |R|^n + |T|^n) \cdot \textrm{polylog}(M))\) time algorithm for \(f\)-Convolution.

Lemma 4.1

Let \(f:L\times R\rightarrow T\) where L, R and T are finite sets. Then there is a cyclic partition \({\mathcal {P}}\) of f such that \(\textrm{cost}({\mathcal {P}})\le \frac{\vert {L} \vert }{2} \cdot (\vert {R} \vert + \frac{\vert {T} \vert }{2})\) when \( \vert {L} \vert \) is even, and \(\textrm{cost}({\mathcal {P}}) \le \vert {R} \vert + \frac{\vert {L} \vert -1}{2} \cdot (\vert {R} \vert + \frac{\vert {T} \vert }{2})\) when \(\vert {L} \vert \) is odd.

The proof of Lemma 3.1 is included in Sect. 3 and proof of Lemma 4.1 is included in Sect. 4. The proof of Lemma 3.1 uses an algorithm for Cyclic Convolution.

Definition 2.4

(Cyclic Convolution) Let \(k\in {\mathbb {N}}\) and \(\textbf{r}\in {\mathbb {N}}^k\). Also, let \(g,h:Z\rightarrow {\mathbb {N}}\) be two functions where \(Z={\mathbb {Z}}_{\textbf{r}_1}\times \cdots \times {\mathbb {Z}}_{\textbf{r}_k}\). The Cyclic Convolution of g and h is the function \((g\mathbin {\odot }h):Z \rightarrow {\mathbb {N}}\) defined by

for every \(\textbf{v}\in Z\).

For any \(K\subseteq {\mathbb {N}}\) we define the K-\({\textsc {Cyclic Convolution Problem}} \) in which we restrict the entries of the vector \(\textbf{r}\) in Definition 2.4 to be in K.

Definition 2.5

(K-\({\textsc {Cyclic Convolution Problem}} \)) For any \(K\subseteq {\mathbb {N}}\) the K-Cyclic Convolution Problem is defined as follows.

Input: Integers \(k, M \in {\mathbb {N}}\), a vector \(\textbf{r}\in {\mathbb {N}}^k\) such that \(\textbf{r}_j \in K\) for every \(j\in [k]\) and two functions \(g,h:Z\rightarrow {\{-M,\ldots , M\}}\) where \(Z={\mathbb {Z}}_{\textbf{r}_1}\times \cdots \times {\mathbb {Z}}_{\textbf{r}_k}\).

Task: Compute the \({\textsc {Cyclic Convolution}} \) \(g\mathbin {\odot }h :Z \rightarrow {\mathbb {Z}}\).

Van Rooij [33] claimed that the \({\mathbb {N}}\)-Cyclic Convolution Problem can be solved in \(\widetilde{{\mathcal {O}}}\left( \big (\prod _{i=1}^k \textbf{r}_i\big ) \cdot \textrm{polylog}(M)\right) \) time. However, for his algorithm to work it must be given an appropriate large prime p and several primitives roots of unity in \({{\mathbb {F}}}_p\). We are unaware of a method which deterministically finds such a prime and roots while retaining the running time. To overcome this obstacle we present an algorithm for the K-Cyclic Convolution Problem when \(K\subseteq {\mathbb {N}}\) is a fixed finite set. Our solution uses multiple smaller primes and the Chinese Reminder Theorem. We include the details in Appendix A.

Theorem 2.6

(K-Cyclic Convolution) For any finite set \(K\subseteq {\mathbb {N}}\), there is an \(\widetilde{{\mathcal {O}}}\left( (\prod _{i=1}^k \textbf{r}_i) \cdot \textrm{polylog}(M)\right) \) algorithm for the K-Cyclic Convolution Problem.

3 Generalized Convolution

In this section we prove Lemma 3.1.

Lemma 3.1

(Algorithm for Generalized Convolution) Let L, R and T be finite sets. Also, let \(f:L\times R\rightarrow T\) be a function and let \({\mathcal {P}}\) be a cyclic partition of f. Then there is an \(\widetilde{{\mathcal {O}}}((\textrm{cost}({\mathcal {P}})^n + |L|^n + |R|^n + |T|^n) \cdot \textrm{polylog}(M))\) time algorithm for \(f\)-Convolution.

Throughout the section we fix L, R and T, and \(f :L \times R \rightarrow T\) to be as in the statement of Lemma 3.1. Additionally, fix a cyclic partition \({\mathcal {P}}= \{(A_1, B_1, k_1), \ldots , (A_m, B_m, k_m)\}\). Furthermore, let \(\sigma _{A,i}\), \(\sigma _{B,i}\) and \(\sigma _{C,i}\) be the relabeling functions of the cyclic minor \((A_i,B_i,k_i)\) for every \(i \in [m]\). We assume the labeling functions are also fixed throughout this section.

In order to describe our algorithm for Lemma 3.1, we first need to establish several technical definitions.

Definition 3.2

(Type) The type of two vectors \(\textbf{u}\in L^{n}\) and \(\textbf{w}\in R^{n}\) is the unique vector \({\varvec{p}}\in [m]^{n}\) for which \(\textbf{u}_i \in A_{{\varvec{p}}_i}\) and \(\textbf{w}_i \in B_{{\varvec{p}}_i}\) for all \(i \in [n]\).

Observe that the type of two vectors is well defined as \({\mathcal {P}}\) is a cyclic partition. For any type \({\varvec{p}}\in \{1, \ldots , m\}^{n}\) we define

to be vector domains restricted to type \({\varvec{p}}\). For any type \({\varvec{p}}\) we introduce relabeling functions on its restricted domains. The relabeling functions of \({\varvec{p}}\) are the functions \(\varvec{\sigma }_{{\varvec{p}}}^{L}:L_{{\varvec{p}}}\rightarrow Z_{{\varvec{p}}}\), \(\varvec{\sigma }_{{\varvec{p}}}^{R}:R_{{\varvec{p}}}\rightarrow Z_{{\varvec{p}}}\), and \(\varvec{\sigma }_{{\varvec{p}}}^{T}:Z_{{\varvec{p}}}\rightarrow T^{n}\) defined as follows:

Our algorithm heavily depends on constructing the following projections.

Definition 3.3

(Projection of function) The projection of a function \(g:L^{n} \rightarrow {\mathbb {Z}}\) with respect to the type \({\varvec{p}}\in [m]^{n}\), is the function \(g_{\varvec{p}}:Z_{{\varvec{p}}}\rightarrow {\mathbb {Z}}\) defined as

Similarly, the projection \(h_{\varvec{p}}:Z_{{\varvec{p}}}\rightarrow {\mathbb {Z}}\) of a function \(h :R^{n} \rightarrow {\mathbb {Z}}\) with respect to the type \({\varvec{p}}\in [m]^n\) is defined as

The projections are useful due to the following connection with \(g\mathbin {\circledast _{f}}h\).

Lemma 3.4

Let \(g :L^{n} \rightarrow {\mathbb {Z}}\) and \(h :R^{n} \rightarrow {\mathbb {Z}}\), then for every \(\textbf{v}\in T^n\) it holds that:

where \(g_{\varvec{p}}\mathbin {\odot }h_{\varvec{p}}\) is the cyclic convolution of \(g_{\varvec{p}}\) and \(h_{\varvec{p}}\).

We give the proof of Lemma 3.4 in Sect. 3.1. It should be noted that the naive computation of the projection functions of g and h with respect to all types \({\varvec{p}}\) is significantly slower than the running time stated in Lemma 3.1. To adhere to the stated running time we use a dynamic programming procedure for the computations, as stated in the following lemma.

Lemma 3.5

There exists an algorithm which given a function \(g:L^n \rightarrow {\{-M,\ldots , M\}}\) returns the set of its projections, \(\{g_{\varvec{p}}\mid {\varvec{p}}\in [m]^{n} \}\), in time \(\left( \left( \textrm{cost}({\mathcal {P}})^n + |L|^n\right) \right) \).

Remark 3.6

Analogously, we can also construct every projection of a function \(h :R^n \rightarrow {\{-M,\ldots , M\}}\) in \(\widetilde{{\mathcal {O}}}\left( \left( \textrm{cost}({\mathcal {P}})^n + |R|^n\right) \cdot \textrm{polylog}(M)\right) \) time.

The proof of Lemma 3.5 in given in Sect. 3.1.

Our algorithm for \(f\)-Convolution (see Algorithm 1 for the pseudocode) is a direct implication of Lemmas 3.4 and 3.5. First, the algorithm computes the projections of g and h with respect to every type \({\varvec{p}}\). Subsequently, the cyclic convolution of \(g_{\varvec{p}}\) and \(h_{\varvec{p}}\) is computed efficiently as described in Theorem 2.6. Finally, the values of \((g \mathbin {\circledast _{f}}h)\) are reconstructed by the formula in Lemma 3.4.

Proof of Lemma 3.1

Observe that Algorithm 1 returns \({\textsf{r}} :T^n\rightarrow {\mathbb {Z}}\) such that for every \(\textbf{v}\in T^n\) it holds that

where the last equality is by Lemma 3.4. Thus, the algorithm returns \((g\mathbin {\circledast _{f}}h)\) as required. It therefore remains to bound the running time of the algorithm.

By Lemma 3.5, Line 1 of Algorithm 1 runs in time \(\widetilde{{\mathcal {O}}}((\textrm{cost}({\mathcal {P}})^n + |L|^n+|R|^n) \cdot \textrm{polylog}(M))\). Define \(K=\{ k \mid (A,B,k) \in {\mathcal {P}}\}=\{k_1,\ldots , k_m\}\) be different costs of cyclic minors in \({\mathcal {P}}\). By Theorom 2.6, for any type \({\varvec{p}}\in [m]^n\) the computation of \(g_{\varvec{p}}\mathbin {\odot }h_{{\varvec{p}}}\) in Line 2 is an instance of K-Cyclic Convolution Problem which can be solved in time \(\widetilde{{\mathcal {O}}}((\prod _{ i=1}^{n} k_{{\varvec{p}}_i}) \cdot \textrm{polylog}(M)) \). Thus the overall running time of Line 2 is \(\widetilde{{\mathcal {O}}}\left( (\sum _{{\varvec{p}}\in [m]^{n} } \prod _{i=1}^n k_{{\varvec{p}}_i}) \cdot \textrm{polylog}(M)\right) \).

Finally, observe that the construction of \({\textsf{r}}\) in Line 3 can be implemented by initializing \({\textsf{r}}\) to be zeros and iteratively adding the value of \({\textsf{c}}_{{\varvec{p}}}(\textbf{q})\) to \({\textsf{r}}(\sigma ^T_{{\varvec{p}}}(\textbf{q}))\) for every \({\varvec{p}}\in [m]^n\) and \(\textbf{q}\in Z_{{\varvec{p}}}\). The required running time is thus \(\widetilde{{\mathcal {O}}}(|T|^n \cdot \textrm{polylog}(M))\) for the initialization and \(\widetilde{{\mathcal {O}}}\left( (\sum _{{\varvec{p}}\in [m]^n} |Z_{{\varvec{p}}}|) \cdot \textrm{polylog}(M)\right) =\left( (\sum _{{\varvec{p}}\in [m]^n} \prod _{i=1}^{n} k_{{\varvec{p}}_i})\right) \) for the addition operations. Thus, the overall running time of Line 3 is

Combining the above, with \(\sum _{{\varvec{p}}\in [m]^{n} } \prod _{i=1}^n k_{{\varvec{p}}_i}= \left( \sum _{i=1}^{m} k_i \right) ^n = \left( \textrm{cost}({\mathcal {P}})\right) ^n\) means that the running time of Algorithm 1 is

This concludes the proof of Lemma 3.1. \(\square \)

3.1 Properties of Projections

In this section we provide the proofs for Lemmas 3.4 and 3.5. The proof of Lemma 3.4 uses the following definitions of coordinate-wise addition with respect to a type \({\varvec{p}}\).

Definition 3.7

(Coordinate-wise addition modulo for type) For any \({\varvec{p}}\in [m]^n\) we define a coordinate-wise addition modulo as

Proof of Lemma 3.4

By Definition 2.1 it holds that:

Recall that the type of every two vectors \((\textbf{u},\textbf{w})\in L^n \times R^n\) is unique and \([m]^n\) contains all possible types and hence, we can rewrite (3.1) as

By the properties of the relabeling functions, we get

Observe that we can partition \(L_{{\varvec{p}}}\) (respectively \(R_{{\varvec{p}}}\)) by considering the inverse images of \(\textbf{r}\in Z_{{\varvec{p}}}\) under \(\varvec{\sigma }_{{\varvec{p}}}^{L}\) (respectively \(\varvec{\sigma }_{{\varvec{p}}}^{R}\)), i.e. \(L_{{\varvec{p}}}= \biguplus _{\textbf{r}\in Z_{{\varvec{p}}}} \{\textbf{u}\in L_{{\varvec{p}}}\mid \varvec{\sigma }_{{\varvec{p}}}^{L}(\textbf{u}) = \textbf{r}\}\). Hence, for every \({\varvec{p}}\in [m]^n\) and \(\textbf{q}\in Z_{{\varvec{p}}}\) it holds that

By plugging (3.3) into (3.2) we get

as required. \(\square \)

Proof of Lemma 3.5

The idea is to use a dynamic programming algorithm loosely inspired by Yates’s algorithm [40].

Define \(X^{(\ell )} =\left\{ ({\varvec{p}}, \textbf{q})~\big |~{\varvec{p}}\in [m]^{\ell },~\textbf{q}\in {\mathbb {Z}}_{{\varvec{p}}_1} \times \dots \times {\mathbb {Z}}_{{\varvec{p}}_\ell }\right\} \) for every \(\ell \in \{0,\ldots , n\}\). We use \(X^{(\ell )}\) to define a dynamic programming table \({\textsf{DP}}^{(\ell )}:X^{(\ell )} \times L^{n-\ell } \rightarrow {\mathbb {Z}}\) for every \(\ell \in \{0,\ldots n\}\) by:

The tables \({\textsf{DP}}^{(0)},{\textsf{DP}}^{(1)},\ldots , {\textsf{DP}}^{(n)}\) are computed consecutively where the computation of \({\textsf{DP}}^{(\ell )}\) relies on the values of \({\textsf{DP}}^{(\ell -1)}\) for any \(\ell \in [n]\). Observe that \(g_{\varvec{p}}(\textbf{q}) = {\textsf{DP}}^{(n)}[({\varvec{p}}_1,\ldots ,{\varvec{p}}_n),(\textbf{q}_1,\ldots ,\textbf{q}_n)][\varepsilon ]\) for every \({\varvec{p}}\) and \(\textbf{q}\), which means that computing \({\textsf{DP}}^{(n)}\) is equivalent to computing the projection functions \(g_{{\varvec{p}}}\) of g for every type \({\varvec{p}}\).Footnote 5

It holds that \({\textsf{DP}}^{(0)}[\varepsilon ,\varepsilon ][\textbf{t}] = g(\textbf{t})\). Hence, \({\textsf{DP}}^{(0)}\) can be trivially computed in \(|L|^n\) time. We use the following straightforward recurrence to compute \({\textsf{DP}}^{(\ell )}\):

A dynamic programming algorithm which computes \({\textsf{DP}}^{(n)}\) can be easily derived from (3.4) and the formula for \({\textsf{DP}}^{(0)}\). The total number of states in the dynamic programming table \({\textsf{DP}}^{(\ell )}\) is

This is bounded by \(\textrm{cost}({\mathcal {P}})^n + |L|^n\) for every \(\ell \in [n]\). To transition between states we spend polynomial time per entry because we assume that \(|L| = {\mathcal {O}}(1)\). Hence, we can compute \(g_{\varvec{p}}\) for every \({\varvec{p}}\) in \(\widetilde{{\mathcal {O}}}((\textrm{cost}({\mathcal {P}})^n + |L|^n) \cdot \textrm{polylog}(M))\) time. \(\square \)

4 The Existence of a Low-Cost Cyclic Partition

In this section we prove Lemma 4.1.

Lemma 4.1

Let \(f:L\times R\rightarrow T\) where L, R and T are finite sets. Then there is a cyclic partition \({\mathcal {P}}\) of f such that \(\textrm{cost}({\mathcal {P}})\le \frac{\vert {L} \vert }{2} \cdot (\vert {R} \vert + \frac{\vert {T} \vert }{2})\) when \( \vert {L} \vert \) is even, and \(\textrm{cost}({\mathcal {P}}) \le \vert {R} \vert + \frac{\vert {L} \vert -1}{2} \cdot (\vert {R} \vert + \frac{\vert {T} \vert }{2})\) when \(\vert {L} \vert \) is odd.

We first consider the special case when \(\vert {L} \vert =2\). Later we reduce the general case to this scenario and use the result as a black-box.

As a warm-up we construct a cyclic partition of cost at most \(\frac{7}{8} \vert {D} \vert ^2\) assuming that \(L=R=T=D\) and that \(\vert {D} \vert \) is even. For this, we first partition \(D\) into pairs \(d_1^{(i)},d_2^{(i)}\) where \(i\in [\vert {D} \vert /2]\) and show for each such pair that f restricted to \(\{d_1^{(i)},d_2^{(i)}\}\) and \(D\) has a cyclic partition of cost at most \(\frac{7}{4} \vert {D} \vert \). The union of these cyclic partitions forms a cyclic partition of f with cost at most \(\frac{\vert {D} \vert }{2} \cdot \frac{7}{4} \vert {D} \vert = \frac{7}{8} \vert {D} \vert ^2\).

To construct the cyclic partition for a fixed \(i\in [\vert {D} \vert /2]\), we find a maximal number r of pairwise disjoint pairs \(e_1^{(j)},e_2^{(j)} \in D\) such that \(\vert {\{f(d_{a}^{(i)},e_b^{(j)}) \mid a,b \in \{1,2\} \}} \vert \le 3\) for each \(j \in [r]\), i.e. for each j at least one of the four values \(f(d_1^{(i)},e_1^{(j)}),f(d_1^{(i)},e_2^{(j)}),f(d_2^{(i)},e_1^{(j)}),f(d_2^{(i)},e_2^{(j)})\) repeats. With this assumption, f restricted to \(\{d_1^{(i)},d_2^{(i)}\}\) and \(\{e_1^{(j)},e_2^{(j)}\}\) is either a cyclic minor of cost at most 3 or can be decomposed into 3 trivial cyclic minors of the total cost at most 3. We claim that \(r \ge \vert {D} \vert /4\). Indeed, assume that there are fewer than |D|/4 such pairs, i.e. \(r < \vert {D} \vert /4\). Let \({\overline{D}}\) denote the \(\vert {D} \vert -2 \cdot r > \vert {D} \vert /2\) remaining values in \(D\). As the set \(\{f(d_a^{(i)}, d) \mid d \in {\overline{D}}, a \in \{1,2\} \}\) can only contain at most \(\vert {D} \vert \) values, we can find another pair \(e_1^{(r+1)},e_2^{(r+1)}\) with the above constraints. Note that f restricted to \(\{d_1^{(i)},d_2^{(i)}\}\) and \(\overline{D}\) can be decomposed into at most \(2\vert {{\overline{D}}} \vert \) trivial minors. Hence, the cyclic partition for f restricted to \(\{d_1^{(i)},d_2^{(i)}\}\) and \(D\) has cost at most

4.1 Special Case: \(|L| = 2\)

In this section, we prove the following lemma that is a special case of Lemma 4.1.

Lemma 4.2

If \(f:L \times R \rightarrow T\) with \(\vert {L} \vert =2\), then there is a cyclic partition \({\mathcal {P}}\) of f such that \(\textrm{cost}({\mathcal {P}}) \le \vert {R} \vert + \vert {T} \vert /2\).

To construct the cyclic partition we proceed as follows. First, we define, for a function f, the representation graph \(G_f\). Next, we show that if this graph has a special structure, which we later call nice, then we can easily find a cyclic partition for the function f. Afterwards we decompose (the edges of) an arbitrary representation graph \(G_f\) into nice structures and then combine the cyclic partitions coming from these parts to a cyclic partition for the original function f.

Definition 4.3

(Graph Representation) Let \(f:L \times R \rightarrow T\) be such that \(\vert {L} \vert =2\) with \(L = \{\ell _0, \ell _1\}\).

We say a function \(\lambda _f:R \rightarrow T \times T\) with \(\lambda _f:r \mapsto (f(\ell _0,r),f(\ell _1,r))\) is the edge mapping of f. We say that a directed graph \(G_f\) (which might have self-loops) with vertex set \(V(G_f) {:}{=}T\) and edge set \(E(G_f) {:}{=}\{ \lambda _f(r) \mid r \in R\}\) is the representation graph of f.

We say that the representation graph \(G_f\) is nice if \(G_f\) is a directed cycle or a directed path (potentially with a single edge).

As a next step we define the restriction of a function based on a subgraph of the corresponding representation graph.

Definition 4.4

(Restriction of f) Let \(f:L \times R \rightarrow T\) be a function such that \(\vert {L} \vert =2\)

and let \(G_f\) be the representation graph of f. Let \(E'\subseteq E(G_f)\) be a given subset of edges inducing the subgraph \(G'\) of \(G_f\).

Based on \(E'\) (and thus, \(G'\)) we define a new function \(f'\) in the following and say that \(f'\) is the function represented by \(G'\) or \(E'\).

With \(T'{:}{=}V(G')\) and \(R'{:}{=}\{ r \in R \mid \lambda _f(r) \in E'\}\), we define \(f' :L \times R' \rightarrow T'\) as the restriction of f such that the representation graph of \(f'\) is \(G'\). Formally, we set \(f'(\ell ,r) {:}{=}f(\ell ,r)\) for all \(\ell \in L\) and \(r \in R'\).

A decomposition of a directed graph G is a family \({\mathcal {F}}\) of edge-disjoint subgraphs of G, such that each edge belongs to exactly one subgraph in \({\mathcal {F}}\). The following observation follows directly from the previous definition.

Observation 4.5

Let \(\{G_1,\dots ,G_k\}\) be a decomposition of the graph \(G_f\) into k subgraphs, let \(f_i\) be the function represented by \(G_i\), and let \({\mathcal {P}}_i\) be a cyclic partition of \(f_i\).

Then \({\mathcal {P}}=\bigcup _{i\in [k]} {\mathcal {P}}_i\) is a cyclic partition of f with cost \(\textrm{cost}({\mathcal {P}}) = \sum _{i\in [k]} \textrm{cost}({\mathcal {P}}_i)\).

Example of the construction of a representation graph from the function f to obtain a cyclic partition. We put an edge between vertices u and v if there is an \(r_i\) with \(u = f(\ell _0,r_i)\) and \(v = f(\ell _1,r_i)\). We highlight an example decomposition of the edges into a cycle with 4 vertices (highlighted red) and three paths with 5, 2 and 4 vertices (highlighted blue, yellow and green respectively). The cost of this cyclic partition is \(4 + 5 + 2 + 4 = 15\) (Color figure online)

Cyclic Partitions Using Nice Representation Graphs As a next step, we show that functions admit cyclic partitions if the representation graph is nice. We extend these results to functions with arbitrary representation graphs by decomposing these graphs into nice subgraphs. Finally, we combine these results to obtain a cyclic partition for the original function f (Fig. 2).

Lemma 4.6

Let \(f:L \times R \rightarrow T\) be a function such that \(G_f\) is nice. Then f has a cyclic partition of cost at most \(\vert {T} \vert =\vert {V(G_f)} \vert \).

Proof

By definition, a nice graph is either a cycle or a path. We handle each case separately in the following. Let \(L = \{\ell _0, \ell _1\}\).

- \(G_f\) is a cycle.:

-

We first define the relabeling functions of f to show that f is \(\vert {T} \vert \)-cyclic. For the elements in L, let \(\sigma _L :L \rightarrow {\mathbb {Z}}_2\) with \(\sigma _L(\ell _i) = i\). To define \(\sigma _R\) and \(\sigma _T\), fix an arbitrary \(t_0 \in T\). Let \(t_1,\dots ,t_{\vert {T} \vert }\) be the elements in T with \(t_{\vert {T} \vert }=t_0\) such that, for all \(j\in {\mathbb {Z}}_{\vert {T} \vert }\), there is some \(r_j\in R\) with \(\lambda _f(r_j) = (t_j, t_{j+1})\).Footnote 6 Note that these \(r_i\) exist since \(G_f\) is a cycle. Using this notation, we define \(\sigma _T :{\mathbb {Z}}_{\vert {T} \vert } \rightarrow T\) with \(\sigma _T(j) = t_j\), for all \(j \in {\mathbb {Z}}_{\vert {T} \vert }\). For the elements in R we define \(\sigma _R:R \rightarrow {\mathbb {Z}}_{\vert {R} \vert }\) with \(\sigma _R(r) = j\) whenever \(\lambda _f(r)=(t_j, t_{j+1})\) for some j. It is easy to check that f can be seen as addition modulo \(\vert {T} \vert \). Indeed, let \(i \in \{0,1\}\) and \(r \in R\) with \(\lambda _f(r) = (t_j, t_{j+1})\). Then we get

$$\begin{aligned}{} & {} \sigma _T( \sigma _L(\ell _i) + \sigma _R(r) \bmod \vert {T} \vert ) = \sigma _T(i + j \bmod \vert {T} \vert )\\{} & {} = t_{(i+j \bmod \vert {T} \vert )} = f(\ell _i, r_j) = f(\ell _i, r). \end{aligned}$$Thus, f is \(\vert {T} \vert \)-cyclic and \(\{ (L, R, \vert {T} \vert ) \}\) is a cyclic partition of f.

- \(G_f\) is a path.:

-

Similarly to the previous case, f can be represented as addition modulo \(\vert {T} \vert \). The proof is essentially identical to the cyclic case and we include it for completeness. Let \(\sigma _L :L \rightarrow {\mathbb {Z}}_2\) with \(\sigma _L(\ell _i) = i\). Let \(t_0,\dots ,t_{\vert {T} \vert -1}\) be the elements of T such that, for all \(j\in {\mathbb {Z}}_{\vert {T} \vert -1}\), there exist \(r_j\in R\) with \(\lambda _f(r_j) = (t_j, t_{j+1})\). Since \(G_f\) is a path, such \(r_j\)’s must exist. We let \(\sigma _T(j) = t_j\) for every \(j \in {\mathbb {Z}}_{\vert {T} \vert }\). We define \(\sigma _R:R \rightarrow {\mathbb {Z}}_{\vert {R} \vert }\) with \(\sigma _R(r) = j\) whenever \(\lambda _f(r)=(t_j, t_{j+1})\) for some j. Now, we verify that f can be interpreted as addition modulo \(\vert {T} \vert \). Consider \(i \in \{0,1\}\) and \(r \in R\) with \(\lambda _f(r) = (t_j, t_{j+1})\) for some \(j \in {\mathbb {Z}}_{\vert {T} \vert -1}\). Observe that \(j < \vert {T} \vert -1\), hence \(t_{j+1 \bmod \vert {T} \vert } = t_{j+1}\). Therefore, we get

$$\begin{aligned}{} & {} \sigma _T( \sigma _L(\ell _i) + \sigma _R(r) \bmod \vert {T} \vert ) = \sigma _T(i + j \bmod \vert {T} \vert )\\{} & {} = t_{(i+j \bmod \vert {T} \vert )} = f(\ell _i, r_j) = f(\ell _i, r). \end{aligned}$$Hence, f is \(\vert {T} \vert \)-cyclic with cyclic partition \(\{(L,R,\vert {T} \vert )\}\).

\(\square \)

In the next step, we decompose arbitrary graphs into nice subgraphs. To present our decomposition we need to introduce the following notation related to the degree of vertices.

Definition 4.7

(Sources, Sinks and Middle Vertices) Let \(G=(V,E)\) be a directed graph. We denote by \(\textrm{indeg}(v)\) the in-degree of v, i.e., the number of edges terminating at v, and by \(\textrm{outdeg}(v)\) the out-degree of v, i.e., the number of edges starting at v.

We partition V into the three sets \(V_{\textsf {src} }(G)\), \(V_{\textsf {mid} }(G)\), and \(V_{\textsf {snk} }(G)\) defined as follows:

-

Set \(V_{\textsf {src} }(G)\) contains all source vertices of G, that is, vertices with no incoming edges (i.e., \(\textrm{indeg}(v)=0\)). This includes all isolated vertices.

-

Set \(V_{\textsf {mid} }(G)\) contains all middle vertices of G, that is vertices with incoming and outgoing edges (i.e., \(\textrm{indeg}(v),\textrm{outdeg}(v)\ge 1\)).

-

Set \(V_{\textsf {snk} }(G)\) contains the (remaining) sink vertices of G, that is, vertices with incoming but no outgoing edges (i.e., \(\textrm{indeg}(v)\ge 1\) and \(\textrm{outdeg}(v)=0\)).

We additionally introduce the notion of deficiency which we use in the following proofs.

Definition 4.8

(Deficiency) Let \(G=(V,E)\) be a directed graph. For all \(v\in V\), we denote by \( \textrm{defi}(v) {:}{=}\max \{ \textrm{outdeg}(v) - \textrm{indeg}(v), 0 \} \) the deficiency of v.

We define \(\textrm{Defi}(G) {:}{=}\sum _{v \in V} \textrm{defi}(v)\) as the total deficiency of the graph G.

We omit the graph G from the notation if it is clear from the context.

We use the deficiency to decompose the acyclic graphs into paths.

Lemma 4.9

Every directed graph G can be decomposed into \(\textrm{Defi}(G)\) paths and an arbitrary number of cycles.

Proof

We construct the decomposition \({\mathcal {F}}\) of G as follows. In the first phase, we exhaustively find a directed cycle C in G. We add cycle C to the decomposition \({\mathcal {F}}\) and remove the edges of C from G. We continue the above procedure until graph G becomes acyclic. Next, in the second phase we exhaustively find a directed maximum length path P (note that P may be a single edge). We add P to the decomposition \({\mathcal {F}}\) and remove the edges of P from G. We repeat the second phase until the graph G becomes edgeless.

This concludes the construction of decomposition \({\mathcal {F}}\). For correctness observe that the above procedure always terminates because in each step we decrease the number of edges of G. Moreover, at the end of the above procedure \({\mathcal {F}}\) is a decomposition of G that consists only of paths and cycles.

We are left to show that the number of paths in \({\mathcal {F}}\) is exactly \(\textrm{Defi}(G)\). Note that deleting a cycle in G does not change the value of \(\textrm{Defi}(G)\), hence the first phase of the procedure does not influence \(\textrm{Defi}(G)\) and we can assume that G is acyclic.

Next, we show that deleting a maximum length path from an acyclic graph decrements its deficiency by exactly 1. This then conclude the proof, because in the second phase of the procedure the deficiency of G decreases from \(\textrm{Defi}(G)\) down to 0, which means that exactly \(\textrm{Defi}(G)\) maximum length paths were added to \({\mathcal {F}}\).

Let P be a maximum length, directed path in the acyclic graph G. Let \(s,t \in V(G)\) be the starting and terminating vertices of path P. Path P must start at a vertex with a positive deficiency, because otherwise P could have been extended at the start which would contradict the fact that P is of maximum length. Similarly, since P is of maximum length it must terminate in a sink vertex. Hence \(\textrm{defi}(s) > 0\) and \(\textrm{defi}(t) = 0\). Moreover, every vertex \(v \in P {\setminus } \{s,t\}\) has exactly one incoming and one outgoing edge in P. Therefore, in the graph \(G \setminus P\) the contribution to the total deficiency decreased only in the vertex s and only by 1. This means that \(\textrm{Defi}(G) = \textrm{Defi}(G {\setminus } P) + 1\) which concludes the proof. \(\square \)

Now we combine Lemmas 4.6 and 4.9 to show Lemma 4.10.

Lemma 4.10

Let \(f:L \times R \rightarrow T\) be a function with \(\vert {L} \vert =2\) and let \(G_f\) be the representation graph of f. Then, there exists a cyclic partition \({\mathcal {P}}\) for f with \(\textrm{cost}({\mathcal {P}}) \le \vert {E(G_f)} \vert + \textrm{Defi}(G_f) \).

Proof

First, use Lemma 4.9 to decompose the graph into cycles and \(\textrm{Defi}(G_f)\) paths. Then, for each of these paths and cycles, use Lemma 4.6 to obtain the cyclic minor. By Observation 4.5, these minors form a cyclic partition for the function represented by \(G_f\). Let \({\mathcal {P}}\) be the resulting cyclic partition.

It remains to analyze the cost of the cyclic partition \({\mathcal {P}}\). By construction, each cyclic minor in \({\mathcal {P}}\) corresponds to a path or a cycle (possibly of length 1). By Lemma 4.6 the cost of a path or a cycle is the number of vertices it contains. Thus, for a path, the cost is equal to the number of edges plus one, and for a cycle the cost is equal to the number of edges. Hence, the cost of \({\mathcal {P}}\) is bounded by the number of edges of \(G_f\) plus the number of paths in the decomposition. The latter is precisely \(\textrm{Defi}(G_f)\) by Lemma 4.9. \(\square \)

Cyclic Partitions Using a Direct Construction In the following, we use a different method to construct a cyclic partition of the function f. Instead of decomposing the graph into nice subgraphs, we directly construct a partition and bound its cost.

Lemma 4.11

Let \(f:L \times R \rightarrow T\) be a function with \(\vert {L} \vert =2\) and let \(G_f\) be the representation graph of f. Then, there is a cyclic partition \({\mathcal {P}}\) of f with \(\textrm{cost}({\mathcal {P}}) \le \vert {V(G_f)} \vert + \vert {V_{\textsf {mid} }(G_f)} \vert \).

Proof

For each \(\ell \in L\), we use a single cyclic minor. Let \(L=\{\ell _0, \ell _1\}\). For \(i\in \{0,1\}\) define \(T_i = \{f(\ell _i,r) \mid r\in R\}\) and \(k_i =\vert {T_i} \vert \). Then, \({\mathcal {P}}{:}{=}\{ (\ell _i, R, k_i) \mid i\in \{0,1\}\}\) is the cyclic partition of f.

To see that \((\{\ell _i\}, R, k_i)\) is a cyclic minor for \(i\in \{0,1\}\), assume w.l.o.g. that \(T_i = \{0,1,\ldots , k_i-1\}\) and define \(\sigma _L(\ell _i)=0\), \(\sigma _R(r) = f(\ell _i,r)\), and \(\sigma _T(t) =t\). Thus, \({\mathcal {P}}\) is a cyclic partition of f of cost \(k_0+k_1 = |T_0|+|T_1|\).

Observe that \(\vert {T_0} \vert = \vert {V_{\textsf {src} }(G_f)} \vert + \vert {V_{\textsf {mid} }(G_f)} \vert \) as every \(t\in T_0\) has an outgoing edge in \(G_f\), and \(\vert {T_1} \vert = \vert {V_{\textsf {snk} }(G_f)} \vert + \vert {V_{\textsf {mid} }(G_f)} \vert \) as every \(t\in T_1\) has an incoming edge in \(G_f\). Hence,

which finishes the proof. \(\square \)

Bounding the Cost of Cyclic Partitions Now, we combine the results from Lemmas 4.10 and 4.11,. We first show how the number of edges relates to the total deficiency of a graph and the number of middle vertices.

Lemma 4.12

For every directed graph G it holds that \( \vert {V_{\textsf {mid} }(G)} \vert + \textrm{Defi}(G) \le \vert {E(G)} \vert \).

Proof

Let m be the number of edges of G and let \(e_1,\ldots ,e_m \in E(G)\) be some arbitrarily fixed order of its edges. For every \(i \in \{0,\ldots ,m\}\) let \(G_i\) be the graph with vertices V(G) and edges \(E(G_i) = \{e_1,\ldots ,e_i\}\). Hence \(G_0\) is an independent set of V(G) and \(G_m = G\).

For every \(i \in \{0,\ldots ,m\}\) let \(\textrm{LHS}(G_i) {:}{=}\vert {V_{\textsf {mid} }(G_i)} \vert + \textrm{Defi}(G_i)\) be the quantity we need to bound. We show that

which then concludes the proof because

From now, we focus on the proof of Eq. 4.1. For every \(v \in V(G)\) and \(i \in \{0,\ldots ,m\}\), let \(\textrm{defi}_i(v)\) be the deficiency of vertex v in graph \(G_i\). Next, for every \(v \in V(G)\) and \(i \in [m]\), we define

Consider a step \(i \in [m]\). Let \(e_i = (s,t)\) be an ith edge that starts at a vertex s and terminates at a vertex t. It holds that

Therefore \(\textrm{LHS}(G_i) - \textrm{LHS}(G_{i-1}) = \Delta _i(s) + \Delta _i(t)\) and to establish Eq. 4.1 it is enough to show that \(\Delta _i(s) \le 1\) and \(\Delta _i(t) \le 0\).

Claim 4.13

It holds that \(\Delta _i(s) \le 1\).

Proof

We consider two cases depending on whether u became a middle vertex. If it happened that \(s \in V_{\textsf {mid} }(G_i) {\setminus } V_{\textsf {mid} }(G_{i-1})\), then \(s \in V_{\textsf {snk} }(G_{i-1})\) which means that s has more incoming than outgoing edges in \(G_{i-1}\). Hence \(\textrm{defi}_{i-1}(s) = \textrm{defi}_i(s) = 0\) and we conclude that \(\Delta _i(s)=1\).

Otherwise \(s \notin V_{\textsf {mid} }(G_i)\setminus V_{\textsf {mid} }(G_{i-1})\). Because the edge \(e_i\) starts at s, the deficiency of s can increase by at most 1. Hence, by \((\textrm{defi}_i(s) - \textrm{defi}_{i-1}(s)) \le 1\) we conclude that \(\Delta _i(s) \le 1\). \(\square \)

Finally, we consider the end vertex t of the edge \(e_i\).

Claim 4.14

It holds that \(\Delta _i(t) \le 0\).

Proof

We again distinguish two cases depending on whether t became a middle vertex. If \(t \in V_{\textsf {mid} }(G_i) \setminus V_{\textsf {mid} }(G_{i-1})\), then \(t \in V_{\textsf {src} }(G_{i-1})\) and moreover, t has no incoming edges and the positive number of outgoing edges in \(G_{i-1}\). Therefore \(\textrm{defi}_i(t) = \textrm{defi}_{i-1}(t)-1\) which means that \(\Delta _i(t) \le 0\).

It remains to analyse the case when \(t \notin V_{\textsf {mid} }(G_i) {\setminus } V_{\textsf {mid} }(G_{i-1})\). Since the edge \(e_i\) ends at t, the deficiency of t cannot increase and \(\textrm{defi}_i(v) \le \textrm{defi}_{i-1}(v)\). This means that \(\Delta _i(t) \le 0\). \(\square \)

By Claims 4.13 and 4.14, it follows that \(\Delta _i(s) + \Delta _i(t) \le 1\). This establishes Eq. 4.1 and concludes the proof. \(\square \)

Now we are ready to combine Lemmas 4.10 and 4.11, and prove Lemma 4.2.

Proof of Lemma 4.2

As before, we denote by \(G_f\) the representation graph of f. Let V and E be the set of vertices and edges of graph \(G_f\).

Let \({\mathcal {P}}_1\) be the cyclic partition of f from Lemma 4.10 with cost at most \(\vert {E} \vert +\textrm{Defi}(G_f)\) and let \({\mathcal {P}}_2\) be the cyclic partition of f from Lemma 4.11 with cost at most \(\vert {V} \vert + \vert {V_{\textsf {mid} }(G_f)} \vert \).

We define \({\mathcal {P}}\) as the minimum cost partition among \({\mathcal {P}}_1\) and \({\mathcal {P}}_2\). This implies that

Next, we use the inequality \(\vert {V_{\textsf {mid} }(G_f)} \vert + \textrm{Defi}(G_f)\le \vert {E} \vert \) from Lemma 4.12, and get

Since \(\vert {E} \vert \le \vert {R} \vert \) and \(\vert {V} \vert =\vert {T} \vert \) this concludes the proof. \(\square \)

4.2 General Case: Proof of Lemma 4.1

Now we have everything ready to prove the main result of this section.

Proof of Lemma 4.1

We first handle the case when \(\vert {L} \vert \) is even. We partition L into \(\lambda =\vert {L} \vert /2\) sets \(L_1,\dots ,L_\lambda \) consisting of exactly two elements. We use Lemma 4.2 to find a cyclic partition \({\mathcal {P}}_i\) for each \(f_i:L_i \times R \rightarrow T\). By definition of the cyclic partition, \({\mathcal {P}}= \bigcup _{i\in [\lambda ]} {\mathcal {P}}_i\) is a cyclic partition for f, hence it remains to analyze the cost of \({\mathcal {P}}\).

Observe that for each \(G_i\) we have that \(\vert {V_i} \vert \le \vert {T} \vert \) and \(\vert {E_i} \vert \le \vert {R} \vert \). By the definition of the cost of the cyclic partition, we immediately get that

If \(\vert {L} \vert \) is odd, then we remove one element \(\ell \) from L and let \(L_0=\{\ell \}\). There is a trivial cyclic partition \({\mathcal {P}}_0\) for \(f_0:L_0\times R \rightarrow T\) of cost at most \(\vert {R} \vert \). Then we use the above procedure to find a cyclic partition \({\mathcal {P}}'\) for the restriction of f to \(L{\setminus }\{\ell \}\) and R. Hence, setting \({\mathcal {P}}= {\mathcal {P}}_0 \cup {\mathcal {P}}'\) gives a cyclic partition for f with cost

\(\square \)

Remark 4.15

If \(\vert {L} \vert \) and \(\vert {R} \vert \) are both even, one can easily achieve a cost of

by swapping the role of L and R and considering the function \(f':R \times L \rightarrow T\) with \(f'(r,\ell )=f(\ell ,r)\) for all \(\ell \in L\) and \(r \in R\).

4.3 Tight Example: Lower Bound on Lemma 4.2

To complement the previous results, we show that Lemma 4.2 is tight. That is, there is a function \(f:L\times R\rightarrow T\) with \(\vert {L} \vert =2\) such that no cyclic partition \({\mathcal {P}}\) of f has smaller cost, i.e., \(\textrm{cost}({\mathcal {P}}) < \vert {R} \vert + \vert {T} \vert /2\). In particular, this demonstrates that to improve the constant \(c {:}{=}3/4\) in Theorem 1.1 new ideas are needed.

The definition of the function f used by Lemma 4.16, which shows that the bound from Lemma 4.2 is tight. The representation graph of f is depicted on the right. We highlight the cyclic partition returned by Lemma 4.16. The red path contains 4 vertices and the blue path contains 2 vertices. Hence, the cost of that cyclic partition is 6. Lemma 4.16 shows that this is the best possible (Color figure online)

Lemma 4.16

There exist sets L, R, and T with \(\vert {L} \vert =2\) and a function \(f:L\times R\rightarrow T\) such that, every cyclic partition \({\mathcal {P}}\) of f has \(\textrm{cost}({\mathcal {P}}) \ge \vert {R} \vert + \vert {T} \vert /2\).

Proof

Define \(L =\{\ell _0,\ell _1\}\), \(R=\{r_1,r_2,r_3,r_4\}\), and \(T=\{a,b,c,d\}\). Let f be the function as defined in Fig. 3. Note that we need to show that every cyclic partition of f has cost at least 6.

Let \({\mathcal {P}}\) be a cyclic partition of f. We first claim that the cyclic partition \({\mathcal {P}}\) of f contains a single cyclic minor, i.e., \({\mathcal {P}}=\{(L,R,k)\}\) for some integer k. For contradictions sake, we analyse every other remaining structure of \({\mathcal {P}}\) and argue that in each case \(\textrm{cost}({\mathcal {P}})\ge 6=\vert {R} \vert +\vert {T} \vert /2\).

-

Every cyclic minor in \({\mathcal {P}}\) is of the form \((\{\ell _i\}, B, k)\) (i.e., uses only values from a single row). Then, \(\textrm{cost}({\mathcal {P}})\ge 6\) as each row has 3 distinct values.

-

There is a cyclic minor \((\{\ell _0,\ell _1\}, \{r_j\}, k)\) in \({\mathcal {P}}\). Since each column contains two distinct elements, It must hold that \(k\ge 2\). Furthermore, the cyclic minors which cover the remainder of the graph must have a total cost of 4 (or more) as all values in T appear in the remainder of the graph. Hence \(\textrm{cost}({\mathcal {P}})\ge 6\).

-

There is a cyclic minor \((\{\ell _0, \ell _1\}, \{r_j,r_{j'}\},k)\) in \({\mathcal {P}}\). Since each pair of two columns contains (at least) three values, it must hold that \(k\ge 3\). There are at least 3 distinct values in the remainder of the graph, hence, the cost of the remaining minors in \({\mathcal {P}}\) is at least 3. Thus \(\textrm{cost}({\mathcal {P}})\ge 6\).

-

There is a cyclic minor \((\{\ell _0, \ell _1\}, R\setminus \{r_j\},k)\) in \({\mathcal {P}}\). It holds that \(k\ge 4\) as every three columns include all values in T. In each case, there are two different values in the remaining column. Hence, the cost of the remaining minors is at least 2. Therefore \(\textrm{cost}({\mathcal {P}})\ge 6\).

With this, we know that \({\mathcal {P}}\) contains only the single cyclic minor (L, R, k). Let \(\sigma _L, \sigma _R\) and \(\sigma _T\) be the relabelling functions of (L, R, k). From the definition of the relabeling functions, we get that \(F{:}{=}\{ \sigma _L(\ell _i) + \sigma _R(r_j) \mod k \mid i \in \{0,1\} \text { and } j\in \{1,2,3\} \}\) contains at least four elements.

We claim that \((\sigma _L(\ell _0)+\sigma _R(r_4) \mod k) \notin F\). For the sake of contradiction assume otherwise. Then, by the definition of \(\sigma _T\), it must hold that \(\sigma _L(\ell _0)+\sigma _R(r_1) \equiv _k \sigma _L(\ell _0)+\sigma _R(r_4)\). As this implies \(\sigma _R(r_1)=\sigma _R(r_4)\), we get

which is a contradiction.

Similarly, we get that \((\sigma _L(\ell _1)+\sigma _R(r_4) \mod k) \notin F\). Again assuming otherwise, we have that \(\sigma _R(r_3)=\sigma _R(r_4)\) which then implies

which is a contradiction.

Since, \(F \cup \{ \sigma _L(\ell _0)+\sigma _R(r_4) \mod k, \sigma _L(\ell _1)+\sigma _R(r_4) \mod k \} \subseteq {{\mathbb {Z}}}_k\), contains at least six distinct elements, we get \(k \ge 6\) and therefore, \(\textrm{cost}({\mathcal {P}}) \ge 6\). \(\square \)

5 Querying a Generalized Convolution

In this section, we prove Theorem 1.4. The main idea is to represent the \(f\)-Query problem as a matrix multiplication problem, inspired by a graph interpretation of \(f\)-Query.

Let \(D\) be an arbitrary set and \(f:D\times D\rightarrow D\). We assume D and f are fixed throughout this section. Let \(g,h:D^n\rightarrow {\{-M,\ldots , M\}}\) and \(\textbf{v}\in D^n\) be a \(f\)-Query instance. We use \(\textbf{a}\Vert \textbf{b}\) to denote the concatenation of \(\textbf{a}\in D^{m}\) and \(\textbf{b}\in D^{k}\). That is \((\textbf{a}_1,\ldots , \textbf{a}_{m})\Vert (\textbf{b}_1,\ldots ,\textbf{b}_{k}) = (\textbf{a}_1,\ldots , \textbf{a}_{m}, \textbf{b}_1,\ldots , \textbf{b}_{k})\). If we assume that n is even, then, for a vector \(\textbf{v}\in D^n\), let \(\textbf{v}^{(\textrm{high})},\textbf{v}^{{(\textrm{low})}}\in D^{n/2}\) be the unique vectors such that \(\textbf{v}^{{(\textrm{high})}} \Vert \textbf{v}^{{(\textrm{low})}} = \textbf{v}\). Indeed, to achieve this assumption let n be odd, fix an arbitrary \(d \in D\), and define \({\widetilde{g}}, {\widetilde{h}} :D^{n+1}\rightarrow {\{-M,\ldots , M\}}\) as \({\widetilde{g}}(\textbf{u}_1,\ldots \textbf{u}_{n+1}) = \llbracket {\textbf{u}_{n+1} = d}\rrbracket \cdot g(\textbf{u}_1,\ldots \textbf{u}_{n}) \) and \({\widetilde{h}}(\textbf{u}_1,\ldots \textbf{u}_{n+1}) = \llbracket {\textbf{u}_{n+1} = d}\rrbracket \cdot h(\textbf{u}_1,\ldots \textbf{u}_{n})\) for all \(\textbf{u}\in D^{n+1}\). It can be easily verified that \((g\mathbin {\circledast _{f}}h)(\textbf{v}) = ({\widetilde{g}} \mathbin {\circledast _{f}}{\widetilde{h}})(\textbf{v}\Vert (f(d,d)))\). Thus, we can solve the \(f\)-Query instance \({\widetilde{g}}\), \({\widetilde{h}}\) and \(\textbf{v}\Vert (f(d,d))\) and obtain the correct result.

We first provide the intuition behind the algorithm and then formally present the algorithm and show correctness.

Intuition We define a directed multigraph G where the vertices are partitioned into four layers \(\text {L}^{{(\textrm{high})}}\), \(\text {L}^{{(\textrm{low})}}\), \(\text {R}^{{(\textrm{low})}}\), and \(\text {R}^{{(\textrm{high})}}\). Each of these sets consists of \(|D|^{n/2}\) vertices representing every vector in \(D^{n/2}\). For ease of notation, we use the vectors to denote the associated vertices; furthermore, the intuition assumes g and h are non-negative. The multigraph G contains the following edges:

-

\(g(\textbf{w}\Vert \textbf{x})\) parallel edges from \(\textbf{w}\in D^{n/2}\) in \(\text {L}^{{(\textrm{high})}}\) to \(\textbf{x}\in D^{n/2}\) in \(\text {L}^{{(\textrm{low})}}\).

-

One edge from \(\textbf{x}\in D^{n/2}\) in \(\text {L}^{{(\textrm{low})}}\) to \(\textbf{y}\in D^{n/2}\) in \(\text {R}^{{(\textrm{low})}}\) if and only if \(\textbf{x}\oplus _f \textbf{y}=v^{(\textrm{low})}\).

-

\(h(\textbf{z}\Vert \textbf{y})\) parallel edges from \(\textbf{y}\in D^{n/2}\) in \(\text {R}^{{(\textrm{low})}}\) to \(\textbf{z}\in D^{n/2}\) in \(\text {R}^{{(\textrm{high})}}\).

-

One edge from \(\textbf{z}\in D^{n/2}\) in \(\text {R}^{{(\textrm{high})}}\) to \(\textbf{w}\in D^{n/2}\) in \(\text {L}^{{(\textrm{high})}}\) if and only if \(\textbf{w}\oplus _f\textbf{z}=v^{(\textrm{high})}\).

In the formal proof, we denote the adjacency matrix between \(\text {L}^{{(\textrm{high})}}\) and \(\text {L}^{{(\textrm{low})}}\) by W, between \(\text {L}^{{(\textrm{low})}}\) and \(\text {R}^{{(\textrm{low})}}\) by X, between \(\text {R}^{{(\textrm{low})}}\) and \(\text {R}^{{(\textrm{high})}}\) by Y, and between \(\text {R}^{{(\textrm{high})}}\) and \(\text {L}^{{(\textrm{high})}}\) by Z. See Fig. 4 for an example of this construction.

Construction of the directed multigraph G. Each vertex in a layer corresponds to the vector in \(D^{n/2}\). We highlighted 4 vectors \(\textbf{w},\textbf{x},\textbf{y},\textbf{z}\in D^{n/2}\) each in a different layer. Note that the number of 4 cycles that go through all four \(\textbf{w},\textbf{x},\textbf{y},\textbf{z}\) is equal to \(g(\textbf{w}\Vert \textbf{x}) \cdot h(\textbf{z}\Vert \textbf{y})\). The total number of directed 4-cycles in this graph corresponds to the value \((g\mathbin {\circledast _{f}}h)(\textbf{v})\) and \(\text {tr}(W\cdot X \cdot Y\cdot Z)\)

Let \(\textbf{w}, \textbf{x}, \textbf{y},\textbf{z}\in D^{n/2}\) be vertices in \(\text {L}^{{(\textrm{high})}}\), \(\text {L}^{{(\textrm{low})}}\), \(\text {R}^{{(\textrm{low})}}\), and \(\text {R}^{{(\textrm{high})}}\). It can be observed that if \((\textbf{w}\Vert \textbf{x}) \oplus _f (\textbf{y}\Vert \textbf{z}) \ne \textbf{v}\), then G does not contain any cycle of the form \(\textbf{w}\rightarrow \textbf{x}\rightarrow \textbf{y}\rightarrow \textbf{z}\rightarrow \textbf{w}\) as one of the edges \((\textbf{x}, \textbf{y})\) or \((\textbf{z}, \textbf{w})\) is not present in the graph. Conversely, if \((\textbf{w}\Vert \textbf{x})\oplus _f (\textbf{y}\Vert \textbf{z})= \textbf{v}\), then one can verify that there are \(g(\textbf{w}\Vert \textbf{x})\cdot h(\textbf{z}\Vert \textbf{y})\) cycles of the form \(\textbf{w}\rightarrow \textbf{x}\rightarrow \textbf{y}\rightarrow \textbf{z}\rightarrow \textbf{w}\). We therefore expect that \((g \mathbin {\circledast _{f}}h)(\textbf{v}) \) is the number of cycles in G that start at some \(\textbf{w}\in D^{n/2}\) in \(\text {L}^{{(\textrm{high})}}\), have length four, and end at the same vertex \(\textbf{w}\) in \(\text {L}^{{(\textrm{high})}}\) again.

Formal Proof We use the notation \(\textsf{Mat}_{\mathbb {Z}}(D^{n/2}\times D^{n/2})\) to refer to a \(|D|^{n/2} \times |D|^{n/2}\) matrix of integers where we use the values in \(D^{n/2}\) as indices. The transition matrices of g, h and \(\textbf{v}\) are the matrices \(W,X,Y,Z\in \textsf{Mat}_{\mathbb {Z}}(D^{n/2}\times D^{n/2})\) defined by

Recall that the trace \(\text {tr}(A)\) of a matrix \(A \in \textsf{Mat}_{\mathbb {Z}}(m \times m)\) is defined as \(\text {tr}(A) {:}{=}\sum _{i=1}^m A_{i,i}\). The next lemma formalizes the correctness of this construction.

Lemma 5.1

Let \(n\in {\mathbb {N}}\) be an even number, \(g,h :D^n\rightarrow {\mathbb {Z}}\) and \(\textbf{v}\in D^n\). Also, let \(W,X,Y,Z\in \textsf{Mat}_{\mathbb {Z}}(D^{n/2}\times D^{n/2})\) be the transition matrices of g, h and \(\textbf{v}\). Then,

Proof

For any \(\textbf{w}, \textbf{y}\in D^{n/2}\) it holds that,

Similarly, for any \(\textbf{y},\textbf{w}\in D^{n/2}\) it holds that,

Therefore, for any \(\textbf{w}\in D^{n/2}\),

where the second equality follows by (5.1) and (5.2). Thus,

\(\square \)

Now we have everything ready to give the algorithm for \(f\)-Query.

Proof of Theorem 1.4

The algorithm for solving \(f\)-Query works in two steps:

-

1.

Compute the transition matrices W, X, Y, and Z of g, h and \(\textbf{v}\) as described above.

-

2.

Compute and return \(\text {tr}(W \cdot X \cdot Y \cdot Z)\).

By Lemma 5.1 this algorithm returns \((g\mathbin {\circledast _{f}}h)(\textbf{v})\). Computing the transition matrices in Step 1. requires \(\widetilde{{\mathcal {O}}}(|D|^n \cdot \textrm{polylog}(M))\) time. Observe the maximal absolute values of an entry in the transition matrices is M. The computation of \(W\cdot X\cdot Y\cdot Z\) in Step 2. requires three matrix multiplications of \(|D|^{n/2}\times |D|^{n/2}\) matrices, which can be done in \(\widetilde{{\mathcal {O}}}((|D|^{n /2})^\omega \cdot \textrm{polylog}(M))\) time. Thus, the overall running time of the algorithm is \(\widetilde{{\mathcal {O}}}(|D|^{\omega \cdot n / 2} \cdot \textrm{polylog}(M))\). \(\square \)

6 Conclusion and Future Work

In this paper, we studied the \(f\)-Convolution problem and demonstrated that the naive brute-force algorithm can be improved for every \(f :D \times D \rightarrow D\). We achieve that by introducing a cyclic partition of a function and showing that there always exists a cyclic partition of bounded cost. We give an \(\widetilde{{\mathcal {O}}}((c|D|^2)^{n} \cdot \textrm{polylog}(M))\) time algorithm that computes \(f\)-Convolution for \(c {:}{=}3/4\) when \(|D|\) is even.

The cyclic partition is a very general tool and potentially it can be used to achieve greater improvements for certain functions f. For example, in multiple applications (e.g., [19, 23, 29, 34]) the function f has a cyclic partition with a single cyclic minor. Nevertheless, in our proof we only use cyclic minors where one domain is of size is at most 2. We suspect that larger minors have to be considered to obtain better results. Indeed, the lower bound from Lemma 4.16 implies that our technique of considering two arbitrary rows together cannot give a faster algorithm than \(\widetilde{{\mathcal {O}}}((3/4 \cdot \vert {D} \vert ^2)^n \cdot \textrm{polylog}(M))\) in general. An improved algorithm would have to select these rows very carefully or consider three or more rows at the same time.

We leave several open problems. Our algorithm offers an exponential (in n) improvement over a naive algorithm for domains \(D\) of constant size. Can we hope for an \(\widetilde{{\mathcal {O}}}(|D|^{(2-\epsilon )n} \cdot \textrm{polylog}(M))\) time algorithm for \(f\)-Convolution for some \(\epsilon > 0\)? We are not aware of any lower bounds, so in principle even an \(\widetilde{{\mathcal {O}}}(|D|^n \cdot \textrm{polylog}(M))\) time algorithm is plausible.

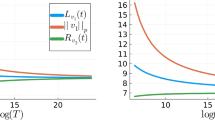

Ideally, we would expect that the \(f\)-Convolution problem can be solved in \(\widetilde{{\mathcal {O}}}((|L|^n+|R|^n+|T|^n) \cdot \textrm{polylog}(M))\) for any function \(f :L \times R \rightarrow T\). In Fig. 5 we include three examples of functions that are especially difficult for our methods.

Here are three concrete examples of functions f for which we expect that the running times for \(f\)-Convolution should be \(\widetilde{{\mathcal {O}}}(3^n \cdot \textrm{polylog}(M))\), \(\widetilde{{\mathcal {O}}}(3^n \cdot \textrm{polylog}(M))\) and \(\widetilde{{\mathcal {O}}}(4^n \cdot \textrm{polylog}(M))\). However, the best cyclic partitions for this functions have costs 4, 4 and 5 (the partitions are highlighted appropriately). This implies that the best running time, which may be attained using our techniques are \(\widetilde{{\mathcal {O}}}(4^n \cdot \textrm{polylog}(M))\), \(\widetilde{{\mathcal {O}}}(4^n \cdot \textrm{polylog}(M))\) and \(\widetilde{{\mathcal {O}}}(5^n \cdot \textrm{polylog}(M))\) (Color figure online)

Finally, we gave an \(\widetilde{{\mathcal {O}}}(|D|^{\omega \cdot n / 2} \cdot \textrm{polylog}(M))\) time algorithm for \(f\)-Query problem. For \(\omega = 2\) this algorithm runs in almost linear-time, however for the current bound \(\omega < 2.372\) our algorithm runs in time \(\widetilde{{\mathcal {O}}}(|D|^{1.19n} \cdot \textrm{polylog}(M))\). Can \(f\)-Query be solved in \(\widetilde{{\mathcal {O}}}(|D|^n \cdot \textrm{polylog}(M))\) time without assuming \(\omega =2\)?

Notes

We use \(\widetilde{{\mathcal {O}}}(x)=x\cdot {{\,\mathrm{\textrm{polylog}}\,}}(x)\) notation to hide polylogarithmic factors. We assume that M is the maximum absolute value of the integers on the input.

We provide a formal definition of \(\oplus _f\) in Sect. 2.

This observation was brought to our attention by Nederlof [27].

It is a special case with \(D= \{0,1\}\), \(\textbf{v}= 0^n\) and \(f(x,y) = x \cdot y\)

We use \(\varepsilon \) to denote the vector of length 0.

Note that there might be multiple \(r \in R\) with \(\lambda _f(r)=(t_j,t_{j+1})\).

References

Abboud, A., Williams, R.R., Yu, H.: More applications of the polynomial method to algorithm design. In: Indyk, P. (ed.) Proceedings of the Twenty-Sixth Annual ACM-SIAM Symposium on Discrete Algorithms, SODA 2015, San Diego, CA, USA, January 4–6, 2015, pp. 218–230. SIAM (2015)

Alman, J., Williams, V.V.: A refined laser method and faster matrix multiplication. In: Marx D (ed.) Proceedings of the 2021 ACM-SIAM Symposium on Discrete Algorithms, SODA 2021, Virtual Conference, January 10–13, 2021. SIAM, pp. 522–539 (2021)

Bennett, M.A., Martin, G., O’Bryant, K., Rechnitzer, A.: Explicit bounds for primes in arithmetic progressions. Ill. J. Math. 62(1–4), 427–532 (2018)

Beth, T.: Verfahren der schnellen Fourier-Transformation: die allgemeine diskrete Fourier-Transformation–ihre algebraische Beschreibung, Komplexität und Implementierung, vol. 61. Teubner (1984)

Björklund, A., Husfeldt, T.: The parity of directed Hamiltonian cycles. In: 54th Annual IEEE Symposium on Foundations of Computer Science, FOCS 2013, 26–29 October, 2013, Berkeley, CA, USA, pp. 727–735. IEEE Computer Society (2013)

Björklund, A., Husfeldt, T., Kaski, P., Koivisto, M.: Fourier meets Möbius: fast subset convolution. In: Johnson, D.S., Feige, U. (eds.) Proceedings of the 39th Annual ACM Symposium on Theory of Computing, San Diego, California, USA, June 11–13, 2007, pp. 67–74. ACM (2007)

Björklund, A., Husfeldt, T., Kaski, P., Koivisto, M.: Counting paths and packings in halves. In: Fiat A, Sanders P (eds.) Algorithms—ESA 2009, 17th Annual European Symposium, Copenhagen, Denmark, September 7–9, 2009. Proceedings, volume 5757 of Lecture Notes in Computer Science, pp. 578–586. Springer (2009)

Björklund, A., Husfeldt, T., Kaski, P., Koivisto, M.: Covering and packing in linear space. Inf. Process. Lett. 111(21–22), 1033–1036 (2011)

Björklund, A., Husfeldt, T., Kaski, P., Koivisto, M., Nederlof, J., Parviainen, P.: Fast zeta transforms for lattices with few irreducibles. ACM Trans. Algorithms 12(1), 4:1-4:19 (2016)

Björklund, A., Husfeldt, T., Koivisto, M.: Set partitioning via inclusion–exclusion. SIAM J. Comput. 39(2), 546–563 (2009)

Brand, C.: Discriminantal subset convolution: Refining exterior-algebraic methods for parameterized algorithms. J. Comput. Syst. Sci. 129, 62–71 (2022)

Bringmann, K., Fischer, N., Hermelin, D., Shabtay, D., Wellnitz, P.: Faster minimization of tardy processing time on a single machine. Algorithmica 84(5), 1341–1356 (2022)

Bringmann, K., Künnemann, M., Węgrzycki, K.: Approximating APSP without scaling: equivalence of approximate min-plus and exact min-max. In: Proceedings of the 51st Annual ACM SIGACT Symposium on Theory of Computing, pp. 943–954 (2019)

Chan, T.M., He, Q.: Reducing 3SUM to convolution-3SUM. In: Farach-Colton, M., Gørtz, I.L. (eds.) 3rd Symposium on Simplicity in Algorithms, SOSA 2020, Salt Lake City, UT, USA, January 6–7, 2020, pp. 1–7. SIAM (2020)

Chan, T.M., Williams, R.R.: Deterministic APSP, Orthogonal Vectors, and more: quickly derandomizing Razborov–Smolensky. ACM Trans. Algorithms 17(1), 2:1-2:14 (2021)

Clausen, M.: Fast generalized Fourier transforms. Theor. Comput. Sci. 67(1), 55–63 (1989)

Cooley, J.W., Tukey, J.W.: An algorithm for the machine calculation of complex Fourier series. Math. Comput. 19(90), 297–301 (1965)

Cygan, M., Mucha, M., Węgrzycki, K., Włodarczyk, M.: On problems equivalent to \((\min , +)\)-convolution. ACM Trans. Algorithms 15(1), 14:1-14:25 (2019)

Cygan, M., Nederlof, J., Pilipczuk, M., Pilipczuk, M., van Rooij, J.M.M., Wojtaszczyk, J.O.: Solving connectivity problems parameterized by treewidth in single exponential time. ACM Trans. Algorithms 18(2), 17:1-17:31 (2022)

Cygan, M., Pilipczuk, M.: Exact and approximate bandwidth. Theor. Comput. Sci. 411(40–42), 3701–3713 (2010)

Duan, R., Wu, H., Zhou, R.: Faster Matrix Multiplication via Asymmetric Hashing. CoRR arXiv:2210.10173 (2022)

Hall, P.: A contribution to the theory of groups of prime-power order. Proc. Lond. Math. Soc. 2(1), 29–95 (1934)

Hegerfeld, F., Kratsch, S.: Solving connectivity problems parameterized by treedepth in single-exponential time and polynomial space. In: Paul, C., Bläser, M. (eds.) 37th International Symposium on Theoretical Aspects of Computer Science, STACS 2020, March 10–13, 2020, Montpellier, France, volume 154 of LIPIcs, pp. 29:1–29:16. Schloss Dagstuhl - Leibniz-Zentrum für Informatik (2020)

Hegerfeld, F., Kratsch, S.: Tight algorithms for connectivity problems parameterized by clique-width. In: Proceedings of ESA (2023) (to appear)

Künnemann, M., Paturi, R., Schneider, S.: On the fine-grained complexity of one-dimensional dynamic programming. In: Chatzigiannakis, I., Indyk, P., Kuhn, F., Muscholl, A. (eds.) 44th International Colloquium on Automata, Languages, and Programming, ICALP 2017, July 10–14, 2017, Warsaw, Poland, volume 80 of LIPIcs, pp. 21:1–21:15. Schloss Dagstuhl - Leibniz-Zentrum für Informatik (2017)

Lincoln, A., Polak, A., Williams, V.V.: Monochromatic triangles, intermediate matrix products, and convolutions. In: Vidick, T. (ed.) 11th Innovations in Theoretical Computer Science Conference, ITCS 2020, January 12–14, 2020, Seattle, Washington, USA, volume 151 of LIPIcs, pp. 53:1–53:18. Schloss Dagstuhl - Leibniz-Zentrum für Informatik (2020)

Nederlof, J.: Personal communication (2022)