Abstract

Purpose

This PRISMA-compliant systematic review aims to analyze the existing applications of artificial intelligence (AI), machine learning, and deep learning for rhinological purposes and compare works in terms of data pool size, AI systems, input and outputs, and model reliability.

Methods

MEDLINE, Embase, Web of Science, Cochrane Library, and ClinicalTrials.gov databases. Search criteria were designed to include all studies published until December 2021 presenting or employing AI for rhinological applications. We selected all original studies specifying AI models reliability. After duplicate removal, abstract and full-text selection, and quality assessment, we reviewed eligible articles for data pool size, AI tools used, input and outputs, and model reliability.

Results

Among 1378 unique citations, 39 studies were deemed eligible. Most studies (n = 29) were technical papers. Input included compiled data, verbal data, and 2D images, while outputs were in most cases dichotomous or selected among nominal classes. The most frequently employed AI tools were support vector machine for compiled data and convolutional neural network for 2D images. Model reliability was variable, but in most cases was reported to be between 80% and 100%.

Conclusions

AI has vast potential in rhinology, but an inherent lack of accessible code sources does not allow for sharing results and advancing research without reconstructing models from scratch. While data pools do not necessarily represent a problem for model construction, presently available tools appear limited in allowing employment of raw clinical data, thus demanding immense interpretive work prior to the analytic process.

Similar content being viewed by others

Explore related subjects

Discover the latest articles, news and stories from top researchers in related subjects.Avoid common mistakes on your manuscript.

Introduction

“The Skynet Funding Bill is passed. The system went online August 4th, 1997. Human decisions are removed from strategic defense. Skynet begins to learn at a geometric rate. It becomes self-aware at 2:14 a.m. Eastern time, August 29th” [1]. Introducing the second chapter of the Terminator franchise, director James Cameron insinuates a gritty, yet precise, definition of what is usually defined as general artificial intelligence (AI), i.e., a machine perfectly mimicking human intelligence. Outside of science fiction and speculation, we are limited to working with narrow AI: electronic systems created with the capacity to substitute for humans in various specific tasks. When integrated with machine learning (ML) algorithms, an AI is allowed to learn and improve from experience, becoming progressively capable to learn how to execute specific tasks even if it has not been specifically programmed to do it ab initio. However, ML algorithms still require human intervention in the training phase. More recently introduced deep learning (DL) models are specific ML applications whose complex algorithms and neural nets (consisting of many hierarchical layers—i.e., deep—of non-linear processing units) train models, with little to no explicit human data input. These progressive developments make AI an incredible tool in various fields, including healthcare, where it has been deemed suitable for repetitive analytic tasks [2], complex calculations [3], and complex forecasts [4, 5].

Rhinology is not immune to such tasks considering procedures, such as nasal cytology smears analysis (repetitive task), nasal airflow computational fluid dynamics modeling (complex calculations), and radiomics-based oncological risk stratification (complex forecast).

Several intrinsic technical issues make AI applications in rhinology challenging and embryonic at best. First, researchers must choose from several computational techniques for ML, many of which have been used in different situations based on complex program decision-making [6]. Different computational techniques require different input data (qualitative and quantitative) for algorithm training, results validation, and so-called “truth” imposition on the AI. Furthermore, commonly used clinical data, particularly those in graphical forms, such as radiologic studies or histology slides, require heavy manipulation before being fed to the AI. Finally, currently available rhinological AI studies rely on different algorithms developed de novo for nearly every study rather than sharing open-access infrastructures facilitating progressive development.

This systematic review aims at analyzing the existing literature on AI applications in rhinology, defining technologies, data sets, and inputs appropriate for AI/ML/DL, verifying the real-world verified applications, and determining whether AI in rhinology might benefit from a stricter commitment to open science.

Methods

Search strategy

After PROSPERO database registration (ID CRD42022298020), a systematic review was conducted between December 15, 2021, and April 30, 2022, according to the PRISMA reporting guidelines [7]. We conducted systematic electronic searches for studies in the English, Italian, German, French and Spanish languages reporting original data concerning AI, ML, or DL applications in human rhinology.

On December 15, 2021, we searched the MEDLINE, Embase, Web of Science, Cochrane Library, and ClinicalTrials.gov databases for AI-related terms in association with rhinology-, nose- or paranasal sinuses-related terms. Full search strategies and the number of items retrieved from each database are available in Table 1.

We included articles, where AI, ML, or DL was explicitly used by the authors for any rhinological purpose in humans providing model reliability metrics. We excluded meta-analyses and systematic and narrative reviews, which were nevertheless hand-checked for additional potentially relevant studies. No minimum study population was required.

Abstracts and full texts were reviewed in duplicate by different authors. At the abstract review stage, we included all studies deemed eligible by at least one rater. At the full-text review stage, disagreements were resolved by consensus between raters.

PICOS criteria

The PICOS (Population, Intervention, Comparison, Outcomes, and Study) framework [7] for the review was:

P: any patient with confirmed or potential rhinological conditions or simply acting as a model of sinonasal anatomy or rhinological conditions.

I: any application of artificial intelligence for rhinological diagnostic, therapeutic, classification, or speculative purposes.

C: no comparator available.

O: effectiveness of created models.

S: all original study types.

For each article, we recorded: country of origin, type of article (whether technical or clinical and indicating the study type for the latter group), data set numerosity with train:validation:test split ratios, type of input, type of output, type of AI model, broad field of application, specific model application, model reliability, and source code availability. Data extraction was performed in duplicate by different authors (AMB and AMS) and disagreements were solved by consensus.

Clinical studies were assessed for both quality and methodological bias according to the National Heart, Lung, and Blood Institute Study Quality Assessment Tools (NHI-SQAT) [8]. Articles were rated in duplicate by two authors and disagreements were resolved by consensus. Items were rated as good if they fulfilled at least 80% of the items required by the NHI-SQAT, fair if they fulfilled between 50% and 80% of the items, and poor if they fulfilled less than 50% of the items, respectively.

The level of evidence for clinical studies was scored according to the Oxford Centre for evidence-based medicine (OCEBM) level of evidence guide [9].

Due to the significant heterogeneity of study populations and methods and the predominantly qualitative nature of collected data, no meta-analysis was originally planned or performed a posteriori.

Results

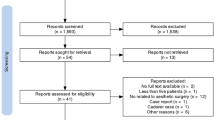

Among the 1378 unique research items initially identified, a total of 133 articles were selected for full-text evaluation. No further study was identified for full-text evaluation after reference checking. Thirty-nine studies published between 1997 and 2021 were retained for analysis (see Fig. 1) [2,3,4, 6, 10,11,12,13,14,15,16,17,18,19,20,21,22,23,24,25,26,27,28,29,30,31,32,33,34,35,36,37,38,39,40,41,42,43,44]. Most studies were published in the last 5 years. Eleven of these studies were completed in the United States (US), with South Korea being the second most productive country (n = 5). Publications were collected from 14 different countries on four continents. Twenty-nine studies were purely technical in their structure. The remaining 10 clinical articles were retrospective cohort studies (n = 3), prospective cohort studies (n = 6), and a single case series. Accordingly, their level of evidence according to the OCEBM scale was IV (n = 1), III (n = 3), and II (n = 2). Clinical articles were rated as good (n = 7) or fair (n = 3) according to the NHI-SQAT tools, with no article being rated as low quality. No significant biases toward the objectives of our systematic review were identified. Table 2 reports the country of origin, evidence, and quality rating (where available) for all studies.

Data set numerosity order of magnitude ranged from 101 to 104. Train:validation:test splits (reported for 29 articles) were extremely varied. Four articles used variable train:validation:test splits. With one exception, train data sets outweighed test data sets, with ratios ranging from 2:1 to 30:1. There were multiple inputs including: manually compiled binary, continuous and/or categorical variables (n = 15), pre-elaborated bidimensional graphics (n = 12), native bidimensional graphics (n = 8), native tridimensional graphics (n = 3), and verbal fragments (n = 1). Most outputs (n = 23) were binary classifications of items processed from the AI (e.g., presence or absence of maxillary inflammation on a radiologic image), 9 were categorical classifications of items, and 7 were continuous estimates, such as cell counts and radiological volumes segmentations.

Regarding AI models and architectures employed, a convolutional neural network (CNN) was the most frequently used, particularly for graphic input elaboration, while support vector machine (SVM) was the most prevalent model for compiled data analysis. Eight articles purposely employed different AI models, often comparing them in terms of reliability. Sinonasal anatomy (n = 8), rhinosinusitis (n = 24), and allergy (n = 7) were the most frequent broad fields of application of AI models. These were also applied to endoscopic sinus surgery, sinonasal neoplasms, and rhinoplasty, although in fewer instances.

Specific AI applications were protean and extremely well-defined. Anatomical structure identification and segmentation, in addition to disease diagnosis from radiologic studies, represented the most frequent scenarios. Authors chose different metrics for AI model reliability, with accuracy (32–100%) and area under the curve (0.6–0.974) being the most frequently employed. AI software code availability was scarce. No code was available for two studies, six were built on a third-party open-source framework, three used precompiled free software (usually R), three used commercial software, and three others provided links to the code employed or formally stated free availability of such code upon request. Table 3 reports specific information on the AI models presented in the studies.

Discussion

To the authors’ knowledge, this is the first systematic review addressing the role of AI in rhinology. Our reviews showed that several AI rhinological applications have been developed recently, yet none has been validated in a real-world setting, with a sporadical application of open science principles.

While AI studies often enable claims of boasting efficiency and superiority to human analytical accuracy and speed, their application to real-world scenarios remains far off [45], thus emphasizing the need for an analytical breakdown of articles technical frameworks. Our review revealed that rhinology is not immune to this issue and AI applications remain more theoretical than useful in day-to-day clinics.

Rhinological AI applications appear generally restricted to extremely specific tasks, specifically regulated by the input homogeneity required by AI models and the oversimplifications required to provide answers. Therefore, inputs are often numerically compiled from a prior set of variables. Likewise, graphical information undergoes heavy preprocessing before AI submission. For example, only three reviewed articles used three-dimensional native volume information to allow segmentation of sinonasal structures [25, 27, 33], eight studies used native bidimensional images, and all others used some form of data manipulation.

Theoretically simple analyses such as locating the sinuses in a CT volume remain challenging for AI and only volume estimates have been performed on three-dimensional models. Narrow categorization of answers is required at the output level, therefore, that nearly, half of the reviewed models used dichotomous outputs, while the remaining used predefined categorical answers or continuous numerical scales. This rigid input–output relationship, pivotal for understanding AIs development, is often only hinted at.

The review shows that inconsistent use of reporting parameters hinders an accurate evaluation of rhinological AIs reliability. Reviewed articles employ more than ten different model fitting metrics, the most common being accuracy and area under the curve. As this issue is common to many AI applications, the choice of reporting metrics is a matter of debate among data scientists [45], which led to the development of dedicated metrics, such as F1 score and Matthews correlation coefficient to replace accuracy, which might be affected by data set imbalances.

The strikingly good performances of reviewed models might nevertheless point toward a potential reporting bias, where less-than-optimal models are not allowed enough editorial space. Publication of negative/intermediate results might allow tackling structural issues and highlight subjects requiring further research or finer model tuning.

Only three studies stated their code was publicly available [12, 23, 37], and few others were adapted from free software or built upon open frameworks. Such source code unavailability hinders testing models on different data sets, thus preventing overfitting which, along with small samples, arbitrary selection of samples, and poor handling of missing data has been exposed as one of the most frequent sources of bias in medical AI studies [46].

Our review further shows that no univocal indications can be drawn for data pool sizes, as published works suggest that models can rely on minimal numbers of patients, though most data sets collected between 103 and 104 items. Analogously train:validation:test splits—required to evaluate algorithm performance with new data—are extremely variable and unrelated to reliability.

Conversely, the choice of AI model appears more consistent. Without consideration of proprietary software, our review shows that the use of CNN for graphical data analysis, SVM for numerical and compiled data analysis, and decision tree/random forest algorithms for making predictions from compiled data represent most scenarios. CNNs are artificial neural networks using a mathematical operation called convolution to fulfill their design task, i.e., process pixel data for image recognition and processing. SVMs are supervised learning models built to analyze data for classification and regression analysis purposes. Although they are able also to handle graphic data, they are not especially designed for this task which is usually addressed with CNNs. Last, decision tree learning is a method commonly used in data mining that aims to create a predictive model of a target variable based on several input variables.

It is also of interest to note how the terms “machine learning” and “deep learning” are used almost interchangeably in the reviewed articles (occasionally in the context of the same article), though they represent different aspects of AI technology. Even if we acknowledge that there is no rigid classification of what constitutes AI, ML or DL, this further supports the notion that there may be a lack of cohesion in AI research. While not intrinsically wrong, such interlabeling hinders the understanding of articles.

There are some limitations to our work that should be considered. In the context of this systematic review, we strived to minimize bias articles selection and data extraction, therefore, not imposing time limits for our searches and including all potential applications. For this purpose, we also decided to include both clinical studies of any design and purely technical studies, though they offer radically different perspectives. While including only articles reporting model reliability minimizes inclusion of purely theoretical studies, it might also have restricted the potential applications presented in this review.

At present, the best AI models available in health sciences are considered non-inferior to expert specialists [47] and are still characterized by technical limits and demands. It comes naturally that rhinology experiences the same distance between AI and everyday practice as other fields of medicine.

Conclusions

Our review suggests that rhinological AI applications remain only speculative due to the complexities of using data in real-world scenarios. Until more agile algorithms become available on a larger scale, AI will not be able to substitute for clinician work in rhinology. Widespread use of open software policies and lean methodological and technical reporting might allow swifter advances in this field.

Data availability

All data pertaining to this systematic review are available from the corresponding author upon reasonable request.

References

Frakes R, Cameron J, Wisher W (1991) Terminator 2: Judgment Day. Spectra

Kim DK, Lim HS, Eun KM et al (2021) Subepithelial neutrophil infiltration as a predictor of the surgical outcome of chronic rhinosinusitis with nasal polyps. Rhinology 59:173–180. https://doi.org/10.4193/rhin20.373

Lamassoure L, Giunta J, Rosi G et al (2021) Anatomical subject validation of an instrumented hammer using machine learning for the classification of osteotomy fracture in rhinoplasty. Med Eng Phys 95:111–116. https://doi.org/10.1016/j.medengphy.2021.08.004

Kim HG, Lee KM, Kim EJ, Lee JS (2019) Improvement diagnostic accuracy of sinusitis recognition in paranasal sinus X-ray using multiple deep learning models. Quant Imaging Med Surg 9:942–951. https://doi.org/10.21037/qims.2019.05.15

Barkana DE, Masazade E (2014) Classification of the Emotional State of a Subject Using Machine Learning Algorithms for RehabRoby. In: Habib MK (ed) Handbook of Research on Advancements in Robotics and Mechatronics, 1st edn. IGI Global, Hershey, PA, pp 2160–2187.

Arfiani A, Rustam Z, Pandelaki J, Siahaan A (2019) Kernel spherical K-means and support vector machine for acute sinusitis classification. IOP Conf Ser Mater Sci Eng 546:052011. https://doi.org/10.1088/1757-899X/546/5/052011

Liberati A, Altman DG, Tetzlaff J et al (2009) The PRISMA statement for reporting systematic reviews and meta-analyses of studies that evaluate healthcare interventions: explanation and elaboration. BMJ 339:b2700. https://doi.org/10.1136/bmj.b2700

National Heart, Lung and Blood Institute (2013) Study Quality Assessment Tools. https://www.nhlbi.nih.gov/health-topics/study-quality-assessment-tools. Accessed 8 Apr 2022

Centre for Evidence-Based Medicine (2011) OCEBM levels of evidence. https://www.cebm.ox.ac.uk/resources/levels-of-evidence/ocebm-levels-of-evidence Accessed 8 Apr 2022

Aggelides X, Bardoutsos A, Nikoletseas S, Papadopoulos N, Raptopoulos C, Tzamalis P (2020) A Gesture Recognition approach to classifying Allergic Rhinitis gestures using Wrist-worn Devices : a multidisciplinary case study. In: 16th Int Conf Distr Comp Sens Syst (DCOSS), 2020:1–10. https://doi.org/10.1109/DCOSS49796.2020.00015

Bieck R, Heuermann K, Pirlich M, Neumann J, Neumuth T (2020) Language-based translation and prediction of surgical navigation steps for endoscopic wayfinding assistance in minimally invasive surgery. Int J Comput Assist Radiol Surg 15:2089–2100. https://doi.org/10.1007/s11548-020-02264-2

Borsting E, DeSimone R, Ascha M, Ascha M (2020) Applied deep learning in plastic surgery: classifying rhinoplasty with a mobile app. J Craniofac Surg 31:102–106. https://doi.org/10.1097/scs.0000000000005905

Chowdhury NI, Li P, Chandra RK, Turner JH (2020) Baseline mucus cytokines predict 22-item Sino-Nasal Outcome Test results after endoscopic sinus surgery. Int Forum Allergy Rhinol 10:15–22. https://doi.org/10.1002/alr.22449

Chowdhury NI, Smith TL, Chandra RK, Turner JH (2019) Automated classification of osteomeatal complex inflammation on computed tomography using convolutional neural networks. Int Forum Allergy Rhinol 9:46–52. https://doi.org/10.1002/alr.22196

Hassid S, Decaestecker C, Hermans C et al (1997) Algorithm analysis of lectin glycohistochemistry and Feulgen cytometry for a new classification of nasal polyposis. Ann Otol Rhinol Laryngol 106:1043–1051. https://doi.org/10.1177/000348949710601208

Dimauro G, Ciprandi G, Deperte F et al (2019) (2019) Nasal cytology with deep learning techniques. Int J Med Inform 122:13–19. https://doi.org/10.1016/j.ijmedinf.2018.11.010

Dimauro G, Deperte F, Maglietta R et al (2020) A novel approach for biofilm detection based on a convolutional neural network. Electronics 9:881. https://doi.org/10.3390/electronics9060881

Dimauro G, Bevilacqua V, Fina P et al (2020) Comparative analysis of rhino-cytological specimens with image analysis and deep learning techniques. Electronics 9:952. https://doi.org/10.3390/electronics9060952

Dorfman R, Chang I, Saadat S, Roostaeian J (2020) Making the subjective objective: machine learning and rhinoplasty. Aesthet Surg J 40:493–498. https://doi.org/10.1093/asj/sjz259

Elgin Christo VR, Kannan A, Khanna Nehemiah H, Nahato KB, Brighty J (2020) Computer assisted medical decision-making system using genetic algorithm and extreme learning machine for diagnosing allergic rhinitis. Int J Bio-Inspir Comp 16:148. https://doi.org/10.1504/IJBIC.2020.111279

Farhidzadeh H, Kim JY, Scott JG, Goldgof DB, Hall LO, Harrison LB (2016) Classification of progression free survival with nasopharyngeal carcinoma tumors. In: Proceedings of SPIE 9785, Medical Imaging 2016: Computer-Aided Diagnosis. 87851l. https://doi.org/10.1117/12.2216976

Fujima N, Shimizu Y, Yoshida D et al (2019) Machine-learning-based prediction of treatment outcomes using MR imaging-derived quantitative tumor information in patients with sinonasal squamous cell carcinomas: a preliminary study. Cancers 11:800. https://doi.org/10.3390/cancers11060800

Girdler B, Moon H, Bae MR, Ryu SS, Bae J, Yu MS (2021) Feasibility of a deep learning-based algorithm for automated detection and classification of nasal polyps and inverted papillomas on nasal endoscopic images. Int Forum Allergy Rhinol 11:1637–1646. https://doi.org/10.1002/alr.22854

Huang J, Habib AR, Mendis D et al (2020) An artificial intelligence algorithm that differentiates anterior ethmoidal artery location on sinus computed tomography scans. J Laryngol Otol 134:52–55. https://doi.org/10.1017/s0022215119002536

Humphries SM, Centeno JP, Notary AM et al (2020) Volumetric assessment of paranasal sinus opacification on computed tomography can be automated using a convolutional neural network. Int Forum Allergy Rhinol 10:1218–1225. https://doi.org/10.1002/alr.22588

Jeon Y, Lee K, Sunwoo L et al (2021) Deep learning for diagnosis of paranasal sinusitis using multi-view radiographs. Diagnostics (Basel) 11:250. https://doi.org/10.3390/diagnostics11020250

Jung SK, Lim HK, Lee S, Cho Y, Song IS (2021) Deep active learning for automatic segmentation of maxillary sinus lesions using a convolutional neural network. Diagnostics (Basel) 11:688. https://doi.org/10.3390/diagnostics11040688

Kim Y, Lee KJ, Sunwoo L et al (2019) Deep learning in diagnosis of maxillary sinusitis using conventional radiography. Invest Radiol 54:7–15. https://doi.org/10.1097/rli.0000000000000503

Kuwana R, Ariji Y, Fukuda M et al (2021) Performance of deep learning object detection technology in the detection and diagnosis of maxillary sinus lesions on panoramic radiographs. Dentomaxillofac Radiol 50:20200171. https://doi.org/10.1259/dmfr.20200171

Laura CO, Hofmann P, Drechsler K, Wesarg S (2019) Automatic detection of the nasal cavities and paranasal sinuses using deep neural networks. In: 2019 IEEE 16th international symposium on biomedical imaging (ISBI 2019), 2019:1154–1157. https://doi.org/10.1109/ISBI.2019.8759481

Lötsch J, Hintschich CA, Petridis P, Pade J, Hummel T (2021) Machine-learning points at endoscopic, quality of life, and olfactory parameters as outcome criteria for endoscopic paranasal sinus surgery in chronic rhinosinusitis. J Clin Med Res 10:4245. https://doi.org/10.3390/jcm10184245

Murata M, Ariji Y, Ohashi Y et al (2019) Deep-learning classification using convolutional neural network for evaluation of maxillary sinusitis on panoramic radiography. Oral Radiol 35:301–307. https://doi.org/10.1007/s11282-018-0363-7

Neves CA, Tran ED, Blevins NH, Hwang PH (2021) Deep learning automated segmentation of middle skull-base structures for enhanced navigation. Int Forum Allergy Rhinol 11:1694–1697. https://doi.org/10.1002/alr.22856

Parmar P, Habib AR, Mendis D et al (2020) An artificial intelligence algorithm that identifies middle turbinate pneumatisation (concha bullosa) on sinus computed tomography scans. J Laryngol Otol 134:328–331. https://doi.org/10.1017/s0022215120000444

Parsel SM, Riley CA, Todd CA, Thomas AJ, McCoul ED (2021) Differentiation of clinical patterns associated with rhinologic disease. Am J Rhinol Allergy 35:179–186. https://doi.org/10.1177/1945892420941706

Putri AM, Rustam Z, Pandelaki J, Wirasati I, Hartini S (2021) Acute sinusitis data classification using grey wolf optimization-based support vector machine. IAES Int J Artif Intell 10:438–445. https://doi.org/10.11591/ijai.v10.i2.pp438-445

Quinn SP, Zahid MJ, Durkin JR, Francis RJ, Lo CW, Chennubhotla SC (2015) Automated identification of abnormal respiratory ciliary motion in nasal biopsies. Sci Transl Med 7:299ra124. https://doi.org/10.1126/scitranslmed.aaa1233

Ramkumar S, Ranjbar S, Ning S et al (2017) MRI-based texture analysis to differentiate sinonasal squamous cell carcinoma from inverted papilloma. AJNR Am J Neuroradiol 38:1019–1025. https://doi.org/10.3174/ajnr.a5106

Soloviev N, Khilov A, Shakhova M et al (2020) Machine learning aided automated differential diagnostics of chronic rhinitis based on optical coherence tomography. Laser Phys Lett 17:115608. https://doi.org/10.1088/1612-202X/abbf48

Staartjes VE, Volokitin A, Regli L, Konukoglu E, Serra C (2021) Machine vision for real-time intraoperative anatomic guidance: a proof-of-concept study in endoscopic pituitary surgery. Oper Neurosurg (Hagerstown) 21:242–247. https://doi.org/10.1093/ons/opab187

Thorwarth RM, Scott DW, Lal D, Marino MJ (2021) Machine learning of biomarkers and clinical observation to predict eosinophilic chronic rhinosinusitis: a pilot study. Int Forum Allergy Rhinol 11:8–15. https://doi.org/10.1002/alr.22632

Wirasati I, Rustam Z, Wibowo VVP (2020) Combining convolutional neural network and long short-term memory to classify sinusitis. In: 2020 International conference on decision aid sciences and application (DASA), vol 2020, pp 991–995. https://doi.org/10.1109/DASA51403.2020.9317280

Wu Q, Chen J, Deng H et al (2020) Expert-level diagnosis of nasal polyps using deep learning on whole-slide imaging. J Allergy Clin Immunol 145:698-701.e6. https://doi.org/10.1016/j.jaci.2019.12.002

Wu Q, Chen J, Ren Y et al (2021) Artificial intelligence for cellular phenotyping diagnosis of nasal polyps by whole-slide imaging. EBioMedicine 66:103336. https://doi.org/10.1016/j.ebiom.2021.103336

Chicco D, Jurman G (2020) The advantages of the Matthews correlation coefficient (MCC) over F1 score and accuracy in binary classification evaluation. BMC Genom 21:6. https://doi.org/10.1186/s12864-019-6413-7

Andaur Navarro CL, Damen JAA, Takada T et al (2021) Risk of bias in studies on prediction models developed using supervised machine learning techniques: systematic review. BMJ 375:n2281. https://doi.org/10.1136/bmj.n2281

Topol EJ (2019) High-performance medicine: the convergence of human and artificial intelligence. Nat Med 25:44–56. https://doi.org/10.1038/s41591-018-0300-7

Acknowledgements

None

Funding

Open access funding provided by Università degli Studi di Milano within the CRUI-CARE Agreement. The authors received no financial support for the research, authorship, and/or publication of this article.

Author information

Authors and Affiliations

Contributions

All authors contributed to the study conception and design. Study selection was performed by AMB and FF. Data extraction was performed by CR and CP. AMM and EF drafted the article. GF and AMS conceptualized the study and designed the methodology. All authors commented on previous versions of the manuscript. All authors read and approved the final manuscript.

Corresponding author

Ethics declarations

Conflict of interest

The authors have no potential conflict of interest or financial disclosures pertaining to this article.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Bulfamante, A.M., Ferella, F., Miller, A.M. et al. Artificial intelligence, machine learning, and deep learning in rhinology: a systematic review. Eur Arch Otorhinolaryngol 280, 529–542 (2023). https://doi.org/10.1007/s00405-022-07701-3

Received:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s00405-022-07701-3