Abstract

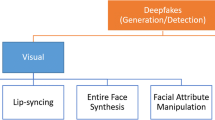

Fake portrait video generation techniques pose a new threat to society, as photorealistic deepfakes are used for political propaganda, celebrity imitation, forged evidence, and other identity-related manipulations. In response to these generation techniques, several detection approaches have proven useful owing to their high classification accuracy. Nevertheless, almost no effort has been spent on tracking down the source of deepfakes. We propose an approach that not only separates deepfakes from real videos but also identifies the specific generative model behind a deepfake. Some purely deep learning-based approaches classify deepfakes using CNNs, which in effect learn the residuals of the generator. Our key observation is that the spatiotemporal patterns in biological signals can be regarded as a representative projection of these residuals. To justify this observation, we extract photoplethysmography (PPG) cells from real and fake videos and feed them to a state-of-the-art classification network in order to detect which generative model was used to create a given fake video. Our results indicate that our approach detects fake videos with 97.29% accuracy and the source model with 93.39% accuracy. We further evaluate and compare our approach on six datasets to assess its extensibility to new models and its generalizability across skin tones and genders, run ablation studies for its various components, and analyze its robustness to compression, landmark noise, and postprocessing operations. The experiments show that our approach outperforms the state of the art.

Data availability

The datasets used and analyzed during the current study are available in the following repositories. No new data collection or generation has been performed by this study.

FaceForensics++ [30]: https://github.com/ondyari/FaceForensics

Celeb-DF [32]: https://github.com/yuezunli/celeb-deepfakeforensics

DeeperForensics-1.0 [97]: https://github.com/EndlessSora/DeeperForensics-1.0

FakeAVCeleb [31]: https://sites.google.com/view/fakeavcelebdash-lab/download

Deep Fakes in the Wild [27]: http://cs.binghamton.edu/ncilsal2/DeepFakesDataset/

References

Thies, J., Zollhöfer, M., Nießner, M.: Deferred neural rendering: image synthesis using neural textures. ACM Trans. Graph. 38(4), 1–12 (2019)

Karras, T., Laine, S., Aila, T.: A style-based generator architecture for generative adversarial networks. In: The IEEE conference on computer vision and pattern recognition (CVPR) (2019)

Karras, T., Aila, T., Laine, S., Lehtinen, J.: Progressive growing of gans for improved quality, stability, and variation. CoRR abs/1710.10196 (2017) arXiv:1710.10196

Wang, S.-Y., Wang, O., Owens, A., Zhang, R., Efros, A.A.: Detecting photoshopped faces by scripting photoshop. In: The IEEE international conference on computer vision (ICCV) (2019)

FaceSwap. https://github.com/MarekKowalski/FaceSwap. Accessed: 2020-03-16

DeepFakes. https://github.com/deepfakes/faceswap. Accessed: 2020-03-16

FakeApp. https://www.malavida.com/en/soft/fakeapp/. Accessed: 2020-03-16

Deepfakes are being used to dub adverts into different languages. https://www.newscientist.com/article/2220628-deepfakes-are-being-used-to-dub-adverts-into-different-languages/. Accessed: 2020-05-27

Here’s Harrison Ford Starring in ‘Solo’ Thanks to Deepfakes. https://www.popularmechanics.com/culture/movies/a23867069/harrison-ford-han-solo-deepfakes/. Accessed: 2020-05-27

Are Deepfakes the future of advertising? https://gritdaily.com/deepfakes-in-advertising/. Accessed: 2020-05-27

Yuan, M., Khan, I.R., Farbiz, F., Yao, S., Niswar, A., Foo, M.: A mixed reality virtual clothes try-on system. IEEE Trans Multimed 15(8), 1958–1968 (2013)

Deepfake technology in the entertainment industry: potential, limitations and protections. https://amt-lab.org/blog/2020/3/deepfake-technology-in-the-entertainment-industry-potential-limitations-and-protections. Accessed: 2020-05-27

Lawmakers warn of ’deepfake’ videos ahead of 2020 election. https://www.cnn.com/2019/01/28/tech/deepfake-lawmakers/index.html. Accessed: 2020-05-27

Deepfake porn nearly ruined my life. https://www.elle.com/uk/life-and-culture/a30748079/deepfake-porn/. Accessed: 2020-05-27

Chu, D., Demir, İ., Eichensehr, K., Foster, J.G., Green, M.L., Lerman, K., Menczer, F., O’Connor, C., Parson, E., Ruthotto, L., et al.: White paper: Deep fakery – an action plan. Technical Report http://www.ipam.ucla.edu/wp-content/uploads/2020/01/Whitepaper-Deep-Fakery.pdf, Institute for pure and applied mathematics (IPAM), University of California Los Angeles, Los Angeles, CA (January 2020)

Yadav, D., Salmani, S.: Deepfake: a survey on facial forgery technique using generative adversarial network. In: 2019 International conference on intelligent computing and control systems (ICCS), pp. 852–857 (2019)

Nadeem, M.S., Franqueira, V.N.L., Zhai, X., Kurugollu, F.: A survey of deep learning solutions for multimedia visual content analysis. IEEE Access 7, 84003–84019 (2019)

Li, Y., Chang, M.-C., Lyu, S.: In ictu oculi: Exposing ai created fake videos by detecting eye blinking. In: 2018 IEEE International workshop on information forensics and security (WIFS), pp. 1–7 (2018). https://doi.org/10.1109/WIFS.2018.8630787

Sun, Y., Xiong, H., Yiu, S.M.: Understanding deep face anti-spoofing: from the perspective of data. The Visual Computer 37(5), 1015–1028 (2021)

Arora, S., Bhatia, M., Mittal, V.: A robust framework for spoofing detection in faces using deep learning. Vis. Comput. 38, 2461–2472 (2022)

Çiftçi, U.A., Demir, İ., Yin, L.: How do the hearts of deep fakes beat? deep fake source detection via interpreting residuals with biological signals. In: 2020 IEEE International joint conference on biometrics (IJCB), pp. 1–10 (2020). https://doi.org/10.1109/IJCB48548.2020.9304909

Wang, W., den Brinker, A.C., Stuijk, S., de Haan, G.: Algorithmic principles of remote ppg. IEEE Trans Biomed Eng 64(7), 1479–1491 (2017)

McDuff, D.J., Estepp, J.R., Piasecki, A.M., Blackford, E.B.: A survey of remote optical photoplethysmographic imaging methods. In: 2015 37th annual international conference of the IEEE engineering in medicine and biology society (EMBC), pp. 6398–6404 (2015). https://doi.org/10.1109/EMBC.2015.7319857

Çiftçi, U.A., Yin, L.: Heart rate based face synthesis for pulse estimation. In: ISVC (2019)

Chen, M., Liao, X., Wu, M.: Pulseedit: editing physiological signals in facial videos for privacy protection. IEEE Trans Inf Forensics Secur (2022). https://doi.org/10.1109/TIFS.2022.3142993

Conotter, V., Bodnari, E., Boato, G., Farid, H.: Physiologically-based detection of computer generated faces in video. In: 2014 IEEE International conference on image processing (ICIP), pp. 248–252 (2014)

Çiftçi, U.A., Demir, İ, Yin, L.: FakeCatcher: detection of synthetic portrait videos using biological signals. IEEE Trans Pattern Anal Mach Intell (PAMI) (2020). https://doi.org/10.1109/TPAMI.2020.3009287

Yang, X., Li, Y., Lyu, S.: Exposing deep fakes using inconsistent head poses. In: ICASSP 2019 - 2019 IEEE International conference on acoustics, speech and signal processing (ICASSP), pp. 8261–8265 (2019)

Hsiao, C.: Logit and Probit Models. Springer, Dordrecht (1996)

Rossler, A., Cozzolino, D., Verdoliva, L., Riess, C., Thies, J., Niessner, M.: Faceforensics++: Learning to detect manipulated facial images. In: The IEEE International conference on computer vision (ICCV) (2019)

Khalid, H., Tariq, S., Kim, M., Woo, S.S.: Fakeavceleb: A novel audio-video multimodal deepfake dataset (2021) arXiv:2108.05080 [cs.CV]

Li, Y., Sun, P., Qi, H., Lyu, S.: Celeb-DF: A Large-scale Challenging Dataset for DeepFake Forensics. In: IEEE conference on computer vision and pattern recognition (CVPR), Seattle, WA, United States (2020)

Rössler, A., Cozzolino, D., Verdoliva, L., Riess, C., Thies, J., Nießner, M.: FaceForensics: A large-scale video dataset for forgery detection in human faces. arXiv e-prints (2018) arXiv:1803.09179 [cs.CV]

Mirsky, Y., Lee, W.: The creation and detection of deepfakes: a survey. ACM Comput. Surv. (2021). https://doi.org/10.1145/3425780

Thies, J., Zollhöfer, M., Stamminger, M., Theobalt, C., Nießner, M.: Face2Face: Real-time face capture and reenactment of RGB videos. In: Proceedings of computer vision and pattern recognition (CVPR), IEEE (2016)

Thies, J., Zollhöfer, M., Nießner, M., Valgaerts, L., Stamminger, M., Theobalt, C.: Real-time expression transfer for facial reenactment. ACM Trans. Graph. (2015). https://doi.org/10.1145/2816795.2818056

Huang, Y., Juefei-Xu, F., Wang, R., Guo, Q., Ma, L., Xie, X., Li, J., Miao, W., Liu, Y., Pu, G.: Fakepolisher: Making deepfakes more detection-evasive by shallow reconstruction. In: Proceedings of the 28th ACM International conference on multimedia (2020)

Choi, Y., Choi, M., Kim, M., Ha, J.-W., Kim, S., Choo, J.: Stargan: Unified generative adversarial networks for multi-domain image-to-image translation. In: Proceedings of the IEEE conference on computer vision and pattern recognition (2018)

Demir, I., Ciftci, U.A.: Mixsyn: Learning composition and style for multi-source image synthesis. arXiv preprint arXiv:2111.12705 (2021)

Nirkin, Y., Masi, I., Tran Tuan, A., Hassner, T., Medioni, G.: On face segmentation, face swapping, and face perception. In: 2018 13th IEEE International conference on automatic face gesture recognition (FG 2018), pp. 98–105 (2018)

Garrido, P., Valgaerts, L., Rehmsen, O., Thormahlen, T., Perez, P., Theobalt, C.: Automatic face reenactment. In: The IEEE conference on computer vision and pattern recognition (CVPR) (2014)

Wu, W., Zhang, Y., Li, C., Qian, C., Change Loy, C.: Reenactgan: Learning to reenact faces via boundary transfer. In: The European conference on computer vision (ECCV) (2018)

Prajwal, K., Mukhopadhyay, R., Namboodiri, V., Jawahar, C.: A lip sync expert is all you need for speech to lip generation in the wild. In: Proceedings of the 28th ACM International conference on multimedia, pp. 484–492 (2020)

Tolosana, R., Vera-Rodriguez, R., Fierrez, J., Morales, A., Ortega-Garcia, J.: Deepfakes and beyond: a survey of face manipulation and fake detection. Inf Fus 64, 131–148 (2020)

Masi, I., Killekar, A., Mascarenhas, R.M., Gurudatt, S.P., AbdAlmageed, W.: Two-branch recurrent network for isolating Deepfakes in videos. arXiv e-prints (2020) arXiv:2008.03412 [cs.CV]

Pan, D., Sun, L., Wang, R., Zhang, X., Sinnott, R.O.: Deepfake detection through deep learning. In: 2020 IEEE/ACM International conference on big data computing, applications and technologies (BDCAT), pp. 134–143 (2020). https://doi.org/10.1109/BDCAT50828.2020.00001

Liu, J., Zhu, K., Lu, W., Luo, X., Zhao, X.: A lightweight 3d convolutional neural network for deepfake detection. Int J Intell Syst 36(9), 4990–5004 (2021)

Sebyakin, A., Soloviev, V.A.Z.: Spatio-temporal deepfake detection with deep neural networks. In: Toeppe, K., Yan, H., Chu, S.K.W. (eds.) Diversity, Divergence, Dialogue. iConference. Lecture Notes in Computer Science. Springer, Cham (2021)

Zhao, H., Wei, T., Zhou, W., Zhang, W., Chen, D., Yu, N.: Multi-attentional deepfake detection. In: 2021 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pp. 2185–2194. IEEE Computer Society, Los Alamitos, CA, USA (2021). https://doi.org/10.1109/CVPR46437.2021.00222

Amerini, I., Galteri, L., Caldelli, R., Del Bimbo, A.: Deepfake video detection through optical flow based cnn. In: 2019 IEEE/CVF International conference on computer vision workshop (ICCVW), pp. 1205–1207 (2019). https://doi.org/10.1109/ICCVW.2019.00152

Trinh, L., Tsang, M., Rambhatla, S., Liu, Y.: Interpretable and trustworthy deepfake detection via dynamic prototypes. In: 2021 IEEE Winter conference on applications of computer vision (WACV), pp. 1972–1982 (2021). https://doi.org/10.1109/WACV48630.2021.00202

Rana, M.S., Sung, A.H.: Deepfakestack: A deep ensemble-based learning technique for deepfake detection. In: 2020 7th IEEE International conference on cyber security and cloud computing (CSCloud)/2020 6th IEEE International conference on edge computing and scalable cloud (EdgeCom), pp. 70–75 (2020). https://doi.org/10.1109/CSCloud-EdgeCom49738.2020.00021

Lu, C., Liu, B., Zhou, W., Chu, Q., Yu, N.: Deepfake video detection using 3d-attentional inception convolutional neural network. In: 2021 IEEE International conference on image processing (ICIP), pp. 3572–3576 (2021). https://doi.org/10.1109/ICIP42928.2021.9506381

Nawaz, M., Javed, A., Irtaza, A.: Resnet-swish-dense54: a deep learning approach for deepfakes detection. Vis Comput (2022). https://doi.org/10.1007/s00371-022-02732-7

Farid, H.: Fake Photos. The MIT Press essential knowledge series (2019)

Zhang, Y., Zheng, L., Thing, V.L.L.: Automated face swapping and its detection. In: 2017 IEEE 2nd International conference on signal and image processing (ICSIP), pp. 15–19 (2017). https://doi.org/10.1109/SIPROCESS.2017.8124497

Zhou, P., Han, X., Morariu, V.I., Davis, L.S.: Two-stream neural networks for tampered face detection. In: 2017 IEEE Conference on computer vision and pattern recognition workshops (CVPRW), pp. 1831–1839 (2017). https://doi.org/10.1109/CVPRW.2017.229

Khodabakhsh, A., Ramachandra, R., Raja, K., Wasnik, P., Busch, C.: Fake face detection methods: Can they be generalized? In: 2018 International conference of the biometrics special interest group (BIOSIG), pp. 1–6 (2018). https://doi.org/10.23919/BIOSIG.2018.8553251

Wang, S.-Y., Wang, O., Zhang, R., Owens, A., Efros, A.A.: CNN-generated images are surprisingly easy to spot... for now. In: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (2020)

Li, L., Bao, J., Zhang, T., Yang, H., Chen, D., Wen, F., Guo, B.: Face x-ray for more general face forgery detection. In: 2020 IEEE/CVF Conference on computer vision and pattern recognition (CVPR), pp. 5000–5009 (2020). https://doi.org/10.1109/CVPR42600.2020.00505

Huh, M., Liu, A., Owens, A., Efros, A.A.: Fighting fake news: Image splice detection via learned self-consistency. In: Proceedings of the European conference on computer vision (ECCV) (2018)

Carlini, N., Wagner, D.: Towards evaluating the robustness of neural networks. In: 2017 IEEE Symposium on Security and Privacy (SP), pp. 39–57. IEEE computer society, Los Alamitos, CA, USA (2017)

Saremsky, S.R., Çiftçi, U.A., Greene, E.A., Demir, İ.: Adversarial deepfake generation for detector misclassification. In: IEEE/CVF Conference on computer vision and pattern recognition (CVPR) Workshops (2022)

Demir, İ., Çiftçi, U.A.: Where do deep fakes look? synthetic face detection via gaze tracking. In: ACM Symposium on eye tracking research and applications. Association for Computing Machinery, New York, NY, USA (2021). https://doi.org/10.1145/3448017.3457387

Li, Y., Lyu, S.: Exposing deepfake videos by detecting face warping artifacts. In: The IEEE Conference on computer vision and pattern recognition (CVPR) Workshops (2019)

Li, H., Li, B., Tan, S., Huang, J.: Identification of deep network generated images using disparities in color components. Signal Proc 174, 107616 (2020)

Matern, F., Riess, C., Stamminger, M.: Exploiting visual artifacts to expose deepfakes and face manipulations. In: 2019 IEEE winter applications of computer vision workshops (WACVW), pp. 83–92 (2019). https://doi.org/10.1109/WACVW.2019.00020

Korshunov, P., Marcel, S.: Speaker inconsistency detection in tampered video. In: 2018 26th European signal processing conference (EUSIPCO), pp. 2375–2379 (2018). https://doi.org/10.23919/EUSIPCO.2018.8553270

McCloskey, S., Albright, M.: Detecting gan-generated imagery using saturation cues. In: 2019 IEEE International conference on image processing (ICIP), pp. 4584–4588 (2019)

Yu, N., Davis, L.S., Fritz, M.: Attributing fake images to gans: Learning and analyzing gan fingerprints. In: The IEEE International conference on computer vision (ICCV) (2019)

Roy, A., Bhalang Tariang, D., Subhra Chakraborty, R., Naskar, R.: Discrete cosine transform residual feature based filtering forgery and splicing detection in jpeg images. In: The IEEE Conference on computer vision and pattern recognition (CVPR) Workshops (2018)

Afchar, D., Nozick, V., Yamagishi, J., Echizen, I.: Mesonet: a compact facial video forgery detection network. In: 2018 IEEE International workshop on information forensics and security (WIFS), pp. 1–7 (2018). https://doi.org/10.1109/WIFS.2018.8630761

Tariq, S., Lee, S., Kim, H., Shin, Y., Woo, S.S.: Detecting both machine and human created fake face images in the wild. In: Proceedings of the 2nd International workshop on multimedia privacy and security. MPS ’18, pp. 81–87. ACM, New York, NY, USA (2018). https://doi.org/10.1145/3267357.3267367

Güera, D., Delp, E.J.: Deepfake video detection using recurrent neural networks. In: 2018 15th IEEE International conference on advanced video and signal based surveillance (AVSS), pp. 1–6 (2018). https://doi.org/10.1109/AVSS.2018.8639163

Nguyen, H.H., Yamagishi, J., Echizen, I.: Capsule-forensics: Using capsule networks to detect forged images and videos. In: ICASSP 2019 - 2019 IEEE International conference on acoustics, speech and signal processing (ICASSP), pp. 2307–2311 (2019)

Boulkenafet, Z., Komulainen, J., Hadid, A.: Face spoofing detection using colour texture analysis. IEEE Trans Inf Forensics Secur 11(8), 1818–1830 (2016). https://doi.org/10.1109/TIFS.2016.2555286

Barni, M., Bondi, L., Bonettini, N., Bestagini, P., Costanzo, A., Maggini, M., Tondi, B., Tubaro, S.: Aligned and non-aligned double jpeg detection using convolutional neural networks. J. Vis. Commun. Image Represent. 49, 153–163 (2017)

Qi, H., Guo, Q., Juefei-Xu, F., Xie, X., Ma, L., Feng, W., Liu, Y., Zhao, J.: Deeprhythm: Exposing deepfakes with attentional visual heartbeat rhythms. In: Proceedings of the 28th ACM International conference on multimedia (2020)

Straub, J.: Using subject face brightness assessment to detect ‘deep fakes’ (Conference Presentation). In: Kehtarnavaz, N., Carlsohn, M.F. (eds.) Real-Time Image Processing and Deep Learning 2019, vol. 10996 (2019). International Society for Optics and Photonics. https://doi.org/10.1117/12.2520546

Korshunov, P., Halstead, M., Castan, D., Graciarena, M., McLaren, M., Burns, B., Lawson, A., Marcel, S.: Tampered speaker inconsistency detection with phonetically aware audio-visual features. In: ICML Workshop "Synthetic Realities: deep learning for detecting AudioVisual Fakes" (2019). http://infoscience.epfl.ch/record/270130

Le, N., Odobez, J.-M.: Learning multimodal temporal representation for dubbing detection in broadcast media. In: Proceedings of the 24th ACM International conference on multimedia. MM ’16, pp. 202–206. Association for Computing Machinery, New York, NY, USA (2016). https://doi.org/10.1145/2964284.2967211

Cozzolino, D., Verdoliva, L.: Noiseprint: a cnn-based camera model fingerprint. IEEE Trans Inf Forensics Secur 15, 144–159 (2020)

Marra, F., Gragnaniello, D., Verdoliva, L., Poggi, G.: Do gans leave artificial fingerprints? In: 2019 IEEE Conference on multimedia information processing and retrieval (MIPR), pp. 506–511 (2019)

Guarnera, L., Giudice, O., Battiato, S.: Deepfake detection by analyzing convolutional traces. In: Proceedings of the IEEE/CVF Conference on computer vision and pattern recognition (CVPR) workshops (2020)

Asnani, V., Yin, X., Hassner, T., Liu, X.: Reverse engineering of generative models: inferring model hyperparameters from generated images (2021) arXiv:2106.07873 [cs.CV]

Lukas, J., Fridrich, J., Goljan, M.: Digital camera identification from sensor pattern noise. IEEE Trans Inf Forensics Secur 1(2), 205–214 (2006)

Albright, M., McCloskey, S.: Source generator attribution via inversion. In: The IEEE Conference on computer vision and pattern recognition (CVPR) Workshops (2019)

Marra, F., Saltori, C., Boato, G., Verdoliva, L.: Incremental learning for the detection and classification of gan-generated images. In: 2019 IEEE International workshop on information forensics and security (WIFS), pp. 1–6 (2019)

Miyato, T., Kataoka, T., Koyama, M., Yoshida, Y.: Spectral normalization for generative adversarial networks. CoRR abs/1802.05957 (2018) arXiv:1802.05957

Bellemare, M.G., Danihelka, I., Dabney, W., Mohamed, S., Lakshminarayanan, B., Hoyer, S., Munos, R.: The cramer distance as a solution to biased wasserstein gradients. CoRR abs/1705.10743 (2017) arXiv:1705.10743

Bińkowski, M., Sutherland, D.J., Arbel, M., Gretton, A.: Demystifying MMD GANs. In: International conference on learning representations (2018). https://openreview.net/forum?id=r1lUOzWCW

100,000 Faces Generated by AI, 2018. https://generated.photos. Accessed: 2020-05-27

Neves, J.C., Tolosana, R., Vera-Rodriguez, R., Lopes, V.: Ganprintr: Improved fakes and evaluation of the state of the art in face manipulation detection. IEEE J Sel Top Signal Proc 14(5), 1038–1048 (2020)

Dufour, N., Gully, A., Karlsson, P., Vorbyov, A., Leung, T., Childs, J., Bregler, C.: Deepfakes detection dataset (2019) https://ai.googleblog.com/2019/09/contributing-data-to-deepfake-detection.html

Sanderson, C., Lovell, B.C.: Multi-region probabilistic histograms for robust and scalable identity inference. In: Tistarelli, M., Nixon, M.S. (eds.) Advances in biometrics, pp. 199–208. Springer, Berlin (2009)

FaceSwap-GAN. https://github.com/shaoanlu/faceswap-GAN. Accessed: 2020-03-16

Jiang, L., Li, R., Wu, W., Qian, C., Loy, C.C.: Deeperforensics-1.0: A large-scale dataset for real-world face forgery detection. In: 2020 IEEE/CVF conference on computer vision and pattern recognition (CVPR), pp. 2886–2895 (2020). https://doi.org/10.1109/CVPR42600.2020.00296

Li, L., Bao, J., Yang, H., Chen, D., Wen, F.: Faceshifter: Towards high fidelity and occlusion aware face swapping. arXiv preprint arXiv:1912.13457 (2019)

Dolhansky, B., Howes, R., Pflaum, B., Baram, N., Ferrer, C.C.: The deepfake detection challenge (dfdc) preview dataset (2019) arXiv:1910.08854 [cs.CV]

Dolhansky, B., Bitton, J., Pflaum, B., Lu, J., Howes, R., Wang, M., Ferrer, C.C.: The deepfake detection challenge (dfdc) dataset (2020) arXiv:2006.07397 [cs.CV]

Korshunova, I., Shi, W., Dambre, J., Theis, L.: Fast face-swap using convolutional neural networks. In: 2017 IEEE International Conference on computer vision (ICCV), pp. 3697–3705 (2017). https://doi.org/10.1109/ICCV.2017.397

Perov, I., Gao, D., Chervoniy, N., Liu, K., Marangonda, S., Umé, C., Dpfks, M., Facenheim, C.S., RP, L., Jiang, J., Zhang, S., Wu, P., Zhou, B., Zhang, W.: Deepfacelab: Integrated, flexible and extensible face-swapping framework (2021) arXiv:2005.05535 [cs.CV]

Nirkin, Y., Keller, Y., Hassner, T.: Fsgan: Subject agnostic face swapping and reenactment. In: 2019 IEEE/CVF International Conference on computer vision (ICCV), pp. 7183–7192 (2019). https://doi.org/10.1109/ICCV.2019.00728

Jia, Y., Zhang, Y., Weiss, R.J., Wang, Q., Shen, J., Ren, F., Chen, Z., Nguyen, P., Pang, R., Moreno, I.L., Wu, Y.: Transfer learning from speaker verification to multispeaker text-to-speech synthesis (2019) arXiv:1806.04558 [cs.CL]

Pu, J., Mangaokar, N., Kelly, L., Bhattacharya, P., Sundaram, K., Javed, M., Wang, B., Viswanath, B.: Deepfake videos in the wild: analysis and detection (2021) arXiv:2103.04263 [cs.CR]

Baltrusaitis, T., Zadeh, A., Lim, Y.C., Morency, L.-P.: Openface 2.0: Facial behavior analysis toolkit. In: 2018 13th IEEE International Conference on automatic face & gesture recognition (FG 2018), pp. 59–66 (2018). IEEE

Fortune, S.: Voronoi diagrams and Delaunay triangulations. In: Handbook of discrete and computational geometry, pp. 377–388. CRC Press, Inc., Boca Raton, FL, USA (1997). http://dl.acm.org/citation.cfm?id=285869.285891

Wang, W., Stuijk, S., de Haan, G.: Living-skin classification via remote-ppg. IEEE Trans Biomed Eng 64(12), 2781–2792 (2017)

Simonyan, K., Zisserman, A.: Very deep convolutional networks for large-scale image recognition. In: International conference on learning representations (2015)

Szegedy, C., Vanhoucke, V., Ioffe, S., Shlens, J., Wojna, Z.: Rethinking the inception architecture for computer vision. In: The IEEE conference on computer vision and pattern recognition (CVPR) (2016)

Chollet, F.: Xception: Deep learning with depthwise separable convolutions. In: The IEEE conference on computer vision and pattern recognition (CVPR) (2017)

He, K., Zhang, X., Ren, S., Sun, J.: Deep residual learning for image recognition. In: The IEEE conference on computer vision and pattern recognition (CVPR) (2016)

Huang, G., Liu, Z., Weinberger, K.Q.: Densely connected convolutional networks. CoRR (2016) arXiv:1608.06993

Dosovitskiy, A., Beyer, L., Kolesnikov, A., Weissenborn, D., Zhai, X., Unterthiner, T., et al.: An image is worth 16x16 words: Transformers for image recognition at scale (2020). arXiv:2010.11929

vit-pytorch. https://github.com/lucidrains/vit-pytorch. Accessed 12 Nov 2022

Mutegeki, R., Han, D.S.: A cnn-lstm approach to human activity recognition. In: 2020 international conference on artificial intelligence in information and communication (ICAIIC), IEEE, pp. 362–366 (2020)

Zhang, X., Zhou, X., Lin, M., Sun, J.: Shufflenet: An extremely efficient convolutional neural network for mobile devices. In: Proceedings of the IEEE conference on computer vision and pattern recognition, pp. 6848–6856 (2018)

Howard, A.G., Zhu, M., Chen, B., Kalenichenko, D., Wang, W., Weyand, T., Andreetto, M., Adam, H.: Mobilenets: Efficient convolutional neural networks for mobile vision applications. CoRR (2017) arXiv:1704.04861

Bradski, G.: The openCV library. Dr. Dobbs J Softw Tools Prof Prog 25(11), 120–123 (2000)

Chollet, F., et al.: Keras. https://keras.io (2015)

Ethics declarations

Ethical approval

This work is supported in part by the National Science Foundation under grant CNS-1629898. Dr. Demir receives a salary from Intel Corporation; Drs. Ciftci and Yin receive a salary from Binghamton University.

About this article

Cite this article

Çiftçi, U.A., Demir, İ. & Yin, L. Deepfake source detection in a heart beat. Vis Comput 40, 2733–2750 (2024). https://doi.org/10.1007/s00371-023-02981-0

DOI: https://doi.org/10.1007/s00371-023-02981-0