Abstract

The main contribution of this paper is the extension to group lasso of a Mallows’ Cp-like information criterion used to fine-tune the lasso selection in a high-dimensional, sparse regression model. Optimising an information criterion paired with the \(\ell _1\)-norm regularisation of the lasso leads to an overestimation of the model size. This is because the shrinkage resulting from \(\ell _1\) regularisation is too permissive towards false positives, as shrinkage reduces their effect. The problem does not arise with \(\ell _0\)-norm regularisation, but that is a combinatorial problem, which is computationally infeasible in the high-dimensional setting. The strategy adopted in this paper is to select the non-zero variables with the \(\ell _1\) method and to estimate their values as in \(\ell _0\) regularisation: the lasso is used for selection, followed by an orthogonal projection onto the selected variables, i.e., debiasing after selection. This approach requires the information criterion to be adapted, in particular by including what is called a “mirror correction”, which leads to smaller models. A second contribution of the paper is methodological: the corrected information criterion is developed using random hard thresholds as a model for the selection process.
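To make the selection-then-projection strategy concrete, consider the simplest case of an orthogonal design, in which the observations are \(y_i = \mu _i + \varepsilon _i\). There the lasso reduces to soft-thresholding, and lasso selection followed by an orthogonal projection reduces to hard-thresholding with the same threshold. The Python sketch below illustrates this under that assumption only. The function names, the simulated signal and the candidate thresholds are hypothetical, and `mallows_cp` is the classical, uncorrected criterion for a projection onto k selected entries with known noise level, not the mirror-corrected criterion developed in the paper.

```python
import math
import random


def soft_threshold(y, t):
    """Lasso estimate in an orthogonal design: shrink each observation towards zero by t."""
    return [math.copysign(max(abs(v) - t, 0.0), v) for v in y]


def hard_threshold(y, t):
    """Lasso selection (|y_i| > t) followed by projection: selected values are kept unshrunk."""
    return [v if abs(v) > t else 0.0 for v in y]


def mallows_cp(y, estimate, sigma):
    """Classical Mallows' Cp (per observation) for a projection onto the k nonzero
    entries of `estimate`, with known sigma and no correction for data-driven selection."""
    n = len(y)
    sse = sum((a - b) ** 2 for a, b in zip(y, estimate))
    k = sum(1 for b in estimate if b != 0.0)
    return sse / n + 2.0 * k * sigma ** 2 / n - sigma ** 2


def naive_cp_threshold(y, sigma):
    """Hard threshold minimising the uncorrected Cp over the candidates 0 and |y_i|."""
    candidates = [0.0] + sorted(abs(v) for v in y)
    return min(candidates, key=lambda t: mallows_cp(y, hard_threshold(y, t), sigma))


# Usage on a hypothetical sparse signal observed in Gaussian noise.
random.seed(0)
n, sigma = 200, 1.0
mu = [5.0] * 10 + [0.0] * (n - 10)
y = [m + random.gauss(0.0, sigma) for m in mu]
t = naive_cp_threshold(y, sigma)
print("threshold:", round(t, 3), "selected:", sum(1 for v in hard_threshold(y, t) if v != 0.0))
```

The uncorrected Cp treats the selected set as if it had been fixed in advance; the adaptation described above, including the mirror correction, is not implemented in this sketch.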

Appendices

Appendix A: Proof of Proposition 1

Proof

The key point in the proof is that the largest contributions to the approximation error come from the components of \(\mu \) that are away from zero. Under the assumption of asymptotic sparsity, these contributions become less and less important.

Defining

we can write from (9),

The value of \({\bar{h}}(t; {\varvec{\mu }})\) is depicted as a function of t in Fig. 2. The individual contributions \(h(t; \mu _i)\) for a typical sparse signal are plotted in Fig. 3.

We construct an upper bound for \(h(t; \mu )\), consisting of three parts, depending on the value of \(\mu \). First, we have a general upper bound

as indeed, on the positive axis, \(|G_{\varepsilon }(x)|\) is unimodal and attains its global maximum at \(x = \sigma \).

Remark 1

The upper bound is pessimistic: since \(\lim _{t \rightarrow \infty } |G_{\varepsilon }(t)| = 0\), for every \(\eta > 0\) there exists a \(t^{*}\) such that for \(t > t^{*}\) we find \( 2 |G_{\varepsilon }(t) - G_{\varepsilon }(t + \mu _i)| \le |2 G_{\varepsilon }(t)| < 2 \eta , \) and so \( |2 G_{\varepsilon }(t) - G_{\varepsilon }(t-\mu _i) - G_{\varepsilon }(t+\mu _i)| < 2|G_{\varepsilon }(\sigma )| + 2 \eta . \)
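The function \(G_{\varepsilon }\) is defined in the main text rather than in this appendix. Purely to visualise the properties used in this proof (oddness, which follows from the symmetry of \(f_{\varepsilon }\); unimodality of \(|G_{\varepsilon }|\) on the positive axis with its maximum at \(\sigma \); decay to zero as \(t \rightarrow \infty \)), the Python sketch below uses the hypothetical stand-in \(g(x) = x \phi _{\sigma }(x)\), with \(\phi _{\sigma }\) the zero-mean normal density with variance \(\sigma ^2\), and checks these properties on a grid. It illustrates the properties under that assumption; it is not the paper's \(G_{\varepsilon }\).

```python
import math

SIGMA = 1.5  # hypothetical noise standard deviation


def g(x, sigma=SIGMA):
    """Hypothetical stand-in for G_eps: x * phi_sigma(x). Not the paper's definition."""
    return x * math.exp(-x * x / (2 * sigma * sigma)) / (sigma * math.sqrt(2 * math.pi))


def is_unimodal(values):
    """True if the sequence rises (weakly) to its largest value and then falls (weakly)."""
    k = max(range(len(values)), key=values.__getitem__)
    rising = all(a <= b for a, b in zip(values[:k], values[1:k + 1]))
    falling = all(a >= b for a, b in zip(values[k:], values[k + 1:]))
    return rising and falling


# Evaluate |g| on a grid over [0, 10] with spacing 1e-3.
grid = [i / 1000 for i in range(10001)]
abs_values = [abs(g(x)) for x in grid]
x_max = grid[max(range(len(grid)), key=abs_values.__getitem__)]
print("unimodal:", is_unimodal(abs_values), "maximiser:", x_max)
print("odd:", g(-2.0) == -g(2.0), "value at t = 50:", g(50.0))
```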

The second part of the upper bound covers small values of \(\mu \), as illustrated in Fig. 4. Let M be a constant, which a priori may depend on t, such that for \(|\mu | \le t - \sigma \) we have

This construction is possible since \(h'(t; 0) = 0\).

Remark 2

One can, of course, take \([M - h(t; 0)] / (t - \sigma )^2\) to be equal to \(\max _{x \in \mathbb {R}} 2 |G_{\varepsilon }''(x)|\), but that choice would lead to a pessimistic upper bound as t grows. We keep \(2 \max _{x \in \mathbb {R}} |G_{\varepsilon }''(x)|\) as an upper bound when \([M - h(t; 0)] / (t - \sigma )^2\) is replaced by its random version \([M - h(t; 0)] / (T_{k,i} - \sigma )^2\).

For \(t \ge \sigma \) and \(\mu \ge \tau \), we have \(-G_{\varepsilon }(t + \tau + \mu ) \le -G_{\varepsilon }(t - \tau + \mu )\), so that \(h(t + \tau ; \mu ) \le h(t; \mu - \tau )\), and thus

For t sufficiently large, \(h(t; 0) - h(t + \tau ; 0)\) is small enough for any \(\tau \), so that

This is equivalent to

This implies that on \([\tau , t + \tau - \sigma ]\),

as indeed both quadratic forms take the same value, M, at \(\mu = t + \tau - \sigma \), while for \(\mu = \tau \) this reduces to (22). The right-hand side of (23) has the same form as the right-hand side of (21). As a result, for t sufficiently large, the constant M in (21) does not depend on t. By choosing M larger than \(4 |G_{\varepsilon }(\sigma )|\), the upper bound in (21) holds for any \(\mu \). Taking Remark 2 into account, we can write

where

For large values of \(\mu \), however, we need a third, tighter upper bound. Because of the symmetry of \(f_{\varepsilon }(x)\), we have for \(\mu = 2 t\) that \(h(t; 2t) = -G_{\varepsilon }(3 t) - G_{\varepsilon }(-t) = -G_{\varepsilon }(3 t) + G_{\varepsilon }(t)\), and since 2t lies far beyond the largest local maximum of \(|h(t; \mu )|\) as a function of \(\mu \), it holds for \(\mu > 2 t\) that \(h(t; \mu ) > h(t; 2 t)\), and so

The three parts of the analysis allow us to conclude that the approximation error of \({\widetilde{m}}_k\) is bounded by

Moreover, it is easy to find a constant K such that \(q(t) \le K/t\) for all values of t, and, because q(t) is a monotonically non-increasing function, we have

Combining this with Assumption (11), we find for the first sum in (24),

For the second sum in (24), we see that if \(P(T_{k,i} \le |\mu _i|/2)\) does not tend to zero, then by Markov’s inequality, we have

With \(|\mu _i| \rightarrow \infty \) and \(E\left( 1/T_{k,i}\right) \rightarrow 0\), the value of \(E\left( |G_{\varepsilon }(T_{k,i})|~|~T_{k,i} \le |\mu _i|/2\right) \) then tends to \(E\left( |G_{\varepsilon }(T_{k,i})|\right) \), which in turn tends to zero: under Assumption (A2), there exists a constant L such that \(|G_{\varepsilon }(t)| \le L/t\), so that \(E\left( |G_{\varepsilon }(T_{k,i})|\right) \le L\, E\left( 1/T_{k,i}\right) \rightarrow 0\). As a result, we have

thereby completing the proof. \(\square \)
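Two elementary inequalities of the kind used in the final step are easy to check numerically. For a positive random variable T, Markov's inequality applied to 1/T gives \(P(T \le a) = P(1/T \ge 1/a) \le a\, E(1/T)\), and a pointwise bound \(|G_{\varepsilon }(t)| \le L/t\) gives \(E|G_{\varepsilon }(T)| \le L\, E(1/T)\). Both hold exactly for the empirical distribution of any positive sample, so the Python sketch below can assert them without tolerances. The uniform sample standing in for \(T_{k,i}\), the values of a, and the stand-in \(g(t) = t \phi _{\sigma }(t)\) for \(G_{\varepsilon }\) (with its exact constant L) are hypothetical choices for illustration.

```python
import math
import random

SIGMA = 1.0  # hypothetical noise scale of the stand-in g


def g(t, sigma=SIGMA):
    """Hypothetical stand-in for G_eps: t * phi_sigma(t). Not the paper's definition."""
    return t * math.exp(-t * t / (2 * sigma * sigma)) / (sigma * math.sqrt(2 * math.pi))


# For this stand-in, t * |g(t)| is maximal at t = sqrt(2) * sigma,
# so |g(t)| <= L / t holds for every t > 0 with the constant below.
L = 2 * SIGMA / (math.e * math.sqrt(2 * math.pi))


def empirical_checks(sample, a):
    """For the empirical distribution of a positive sample T, check
    (i)  P(T <= a) <= a * E(1/T)   (Markov's inequality applied to 1/T),
    (ii) E|g(T)| <= L * E(1/T)     (from the pointwise bound |g(t)| <= L/t)."""
    n = len(sample)
    mean_inv = sum(1.0 / t for t in sample) / n
    prob_small = sum(1 for t in sample if t <= a) / n
    mean_abs_g = sum(abs(g(t)) for t in sample) / n
    return prob_small <= a * mean_inv, mean_abs_g <= L * mean_inv


random.seed(1)
thresholds = [random.uniform(0.5, 50.0) for _ in range(10000)]  # hypothetical T values
print(empirical_checks(thresholds, a=2.0), empirical_checks(thresholds, a=30.0))
```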

Appendix B: Derivation of Eqs. (17) and (18)

With \(\varGamma \) the gamma function and \(F_{\chi ^2_w}\) and \(f_{\chi ^2_w}\) the cumulative distribution function and density of the \(\chi ^2_w\) distribution, we find the following result for Eq. (17):

Also, when the group size w is 1 (singletons), Eq. (17) reduces to Eq. (18):

as \(e^{-\frac{u^2}{2}} - u^2 e^{-\frac{u^2}{2}}\) is the derivative of \(u e^{-\frac{u^2}{2}}\) and \(\varGamma (\frac{1}{2}) = \sqrt{\pi }\), \(\phi _{\sigma }\) being the density of a zero-mean normal random variable with variance \(\sigma ^2\).
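The two facts invoked in this reduction can be checked numerically, together with the standard link between the \(\chi ^2_1\) density and \(\phi _{\sigma }\): if \(X \sim N(0, \sigma ^2)\), then \(X^2/\sigma ^2 \sim \chi ^2_1\), so that \(\phi _{\sigma }(u) = (u/\sigma ^2)\, f_{\chi ^2_1}(u^2/\sigma ^2)\) for \(u > 0\). The Python sketch below uses the standard library only; the evaluation points u and \(\sigma \) are arbitrary illustrative choices, and the full expressions of Eqs. (17) and (18) are not reproduced.

```python
import math


def chi2_pdf(x, w):
    """Density f_{chi^2_w}(x) of the chi-squared distribution with w degrees of freedom, x > 0."""
    return x ** (w / 2 - 1) * math.exp(-x / 2) / (2 ** (w / 2) * math.gamma(w / 2))


def normal_pdf(x, sigma):
    """Density phi_sigma(x) of a zero-mean normal variable with variance sigma**2."""
    return math.exp(-x * x / (2 * sigma * sigma)) / (sigma * math.sqrt(2 * math.pi))


def check_identities(u=0.7, sigma=1.3, h=1e-6):
    """Return three booleans, one per identity, evaluated at the given u > 0 and sigma."""
    def f(v):
        return v * math.exp(-v * v / 2)

    # (1) d/du [u exp(-u^2/2)] = exp(-u^2/2) - u^2 exp(-u^2/2), via a central difference.
    numeric = (f(u + h) - f(u - h)) / (2 * h)
    exact = math.exp(-u * u / 2) - u * u * math.exp(-u * u / 2)
    # (2) Gamma(1/2) = sqrt(pi).
    gamma_half = math.gamma(0.5)
    # (3) phi_sigma(u) = (u / sigma^2) * f_{chi^2_1}(u^2 / sigma^2) for u > 0.
    lhs = normal_pdf(u, sigma)
    rhs = u / sigma ** 2 * chi2_pdf(u * u / sigma ** 2, 1)
    return (
        math.isclose(numeric, exact, rel_tol=1e-8),
        math.isclose(gamma_half, math.sqrt(math.pi), rel_tol=1e-12),
        math.isclose(lhs, rhs, rel_tol=1e-12),
    )


print(check_identities(), check_identities(u=2.5, sigma=0.4))
```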

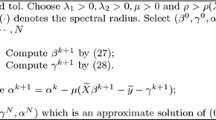

Appendix C: Illustration for image denoising

In its first column, Fig. 5 presents a sample of five noise-free images, and in its second column the corresponding noisy images. Finally, the images denoised using Mallows’ Cp and the mirror-corrected Cp, in unstructured and group selection, are shown in the third to fourth and the fifth to sixth columns, respectively.
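In an orthogonal design, where the observations are \(y_i = \mu _i + \varepsilon _i\) (an assumption made only for this illustration), group-lasso selection followed by projection keeps or discards whole groups according to the Euclidean norm of each group; for pure-noise Gaussian coefficients, the squared norm of a group of size w divided by \(\sigma ^2\) follows a \(\chi ^2_w\) distribution. The Python sketch below contrasts group soft-thresholding (the group-lasso estimate) with group hard-thresholding (selection followed by projection). The grouping into consecutive blocks of size w, the unweighted penalty, the example values and the function names are hypothetical and do not describe the set-up behind Fig. 5.

```python
import math


def group_norms(y, w):
    """Euclidean norms of consecutive groups of size w (the last group may be shorter)."""
    return [math.sqrt(sum(v * v for v in y[s:s + w])) for s in range(0, len(y), w)]


def group_soft_threshold(y, w, t):
    """Group-lasso estimate in an orthogonal design (penalty t times the sum of group norms)."""
    out = []
    for s, norm in zip(range(0, len(y), w), group_norms(y, w)):
        scale = max(1.0 - t / norm, 0.0) if norm > 0.0 else 0.0
        out.extend(scale * v for v in y[s:s + w])
    return out


def group_hard_threshold(y, w, t):
    """Group selection (norm > t) followed by projection: selected groups are kept unshrunk."""
    out = []
    for s, norm in zip(range(0, len(y), w), group_norms(y, w)):
        out.extend(y[s:s + w] if norm > t else [0.0] * len(y[s:s + w]))
    return out


# Usage with hypothetical values: groups of size 4, threshold 1.5.
y = [0.9, 1.0, 0.8, 1.1, 0.1, -0.2, 0.0, 0.1]
print(group_hard_threshold(y, 4, 1.5))
print([v if abs(v) > 1.5 else 0.0 for v in y])  # unstructured hard-thresholding at 1.5
```

With these hypothetical values, the first group is kept as a whole because its norm exceeds the threshold, although no single coefficient does.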

About this article

Marquis, B., Jansen, M. Information criteria bias correction for group selection. Stat Papers 63, 1387–1414 (2022). https://doi.org/10.1007/s00362-021-01283-8

DOI: https://doi.org/10.1007/s00362-021-01283-8