Abstract

Objective

To explore the ability of artificial intelligence (AI) to classify breast cancer by mammographic density in an organized screening program.

Materials and method

We included information about 99,489 examinations from 74,941 women who participated in BreastScreen Norway, 2013–2019. All examinations were analyzed with an AI system that assigned a malignancy risk score (AI score) from 1 (lowest) to 10 (highest) for each examination. Mammographic density was classified into Volpara density grade (VDG), VDG1–4; VDG1 indicated fatty and VDG4 extremely dense breasts. Screen-detected and interval cancers with an AI score of 1–10 were stratified by VDG.

Results

We found 10,406 (10.5% of the total) examinations to have an AI risk score of 10, of which 6.8% (704/10,406) were breast cancers. These cancers represented 89.7% (617/688) of the screen-detected and 44.6% (87/195) of the interval cancers. In total, 20.3% (20,178/99,489) of the examinations were classified as VDG1 and 6.1% (6047/99,489) as VDG4. For screen-detected cancers, 84.0% (68/81, 95% CI, 74.1–91.2) had an AI score of 10 for VDG1, 88.9% (328/369, 95% CI, 85.2–91.9) for VDG2, 92.5% (185/200, 95% CI, 87.9–95.7) for VDG3, and 94.7% (36/38, 95% CI, 82.3–99.4) for VDG4. For interval cancers, the percentages with an AI score of 10 were 33.3% (3/9, 95% CI, 7.5–70.1) for VDG1 and 48.0% (12/25, 95% CI, 27.8–68.7) for VDG4.

Conclusion

The tested AI system performed well in terms of cancer detection across all density categories, especially for extremely dense breasts. The highest proportion of screen-detected cancers with an AI score of 10 was observed for women classified as VDG4.

Clinical relevance statement

Our study demonstrates that AI can correctly classify the majority of screen-detected and about half of the interval breast cancers, regardless of breast density.

Key Points

• Mammographic density is important to consider in the evaluation of artificial intelligence in mammographic screening.

• Given a threshold representing about 10% of those with the highest malignancy risk score by an AI system, we found an increasing percentage of cancers with increasing mammographic density.

• Artificial intelligence risk score and mammographic density combined may help triage examinations to reduce workload for radiologists.

Introduction

Most European countries have implemented mammographic screening programs over the last decades, aiming to reduce breast cancer mortality [1, 2]. In Norway, the national screening program for breast cancer, BreastScreen Norway, started in 1996 and invites all women aged 50–69 to two-view mammographic screening biennially [3]. All examinations are independently double read, and more than 99% of the women are not diagnosed with breast cancer at screening.

The target group for mammographic screening is asymptomatic women with average risk of breast cancer [4]. However, breast cancer risk varies substantially across the general screening population [5]. Mammographic breast density, i.e., the proportion of fibroglandular tissue in the breast relative to the proportion of fatty tissue, is an independent risk factor for breast cancer, and women with extremely dense breasts reportedly have 4–6 times higher risk of breast cancer compared to women with fatty breasts [6, 7]. Further, the sensitivity of mammographic screening for these women is below 70% compared to 85–90% for women with fatty breasts, due to a masking effect [8].

In March 2022, screening recommendations for women with extremely dense breasts were published by the European Society of Breast Imaging (EUSOBI) [9] and further supported by several EU groups [10, 11]. These risk-based screening recommendations stated that women with extremely dense breasts should be offered supplemental screening with breast MRI every 2 to 4 years [9]. Other suggestions to detect cancers in dense breasts are additional ultrasound [12] and contrast-enhanced spectral mammography [13] as well as annual mammography [14, 15].

The introduction of artificial intelligence (AI) as an adjunct tool for cancer detection in mammographic screening has shown promising results in retrospective and prospective studies [16,17,18,19,20,21,22,23,24,25,26]. However, results from studies reporting the accuracy of AI breast cancer risk scores based on mammographic density categories are sparse. In a retrospective study from Norway with cancer-enriched data from a screening setting, AI scored the highest risk of malignancy on all cancer cases in women with extremely dense breasts [13]. Knowledge about the AI risk scores and cancer detection across all mammographic densities is thus important, especially for women with extremely dense breasts who already experience lower screening sensitivity.

In this study, we aimed to analyze the ability of an AI system to classify breast cancers by mammographic density in BreastScreen Norway, an organized screening environment that utilizes independent double reading. Further, we analyzed histopathological characteristics of screen-detected and interval cancers by AI score.

Materials and methods

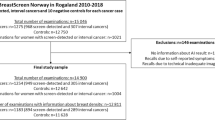

This retrospective cohort study included images from digital mammography and screening data obtained from two breast centers, Rogaland (Stavanger) and Hordaland (Bergen), during the period from January 2013 to December 2019 (Fig. 1). The study was approved by the Regional Committee for Medical and Health Research Ethics (REC # 2018/2574) and has a legal basis in accordance with Articles 6 (1) (e) and 9 (2) (j) of the General Data Protection Regulation (GDPR). The data was disclosed with legal basis in the Cancer Registry Regulations section 3–1 and the Personal Health Data Filing System Act section 19 a to 19 h [27, 28]. Parts of the study sample have been used in previous publications, but for different study objectives [13, 15].

In BreastScreen Norway, all women aged 50–69 years are invited to two-view biennial digital mammographic screening. All mammograms are interpreted independently by two breast radiologists. Each breast is given an interpretation score between 1 and 5, where 1 indicates negative for abnormality; 2, probably benign; 3, intermediate suspicion; 4, probably malignant; and 5, high suspicion of malignancy. If either radiologist assigns a score 2 or higher, the examination is discussed in a consensus meeting to determine whether or not to recall the woman for further assessment [3].

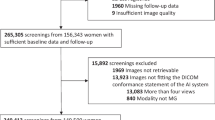

Examinations performed in Hordaland 2016–2019 were excluded due to a tomosynthesis trial in Bergen [29]. All women were screened with GE Senographe Pristina or GE Senographe Essential. After excluding examinations without information on mammographic breast density and examinations without AI score, the final study sample included 99,489 screening examinations (Fig. 1).

AI risk score and mammographic breast density

The commercial AI system Transpara version 1.7.0, developed by ScreenPoint Medical, was used to score mammography exams in this study [18, 30]. The AI system uses convolutional neural networks to analyze mammographic images and provide one malignancy risk score for each view of each breast [18]. The algorithm has been trained on mammograms from different screening programs and vendors. In this study, the highest score of all views was referred to as the overall AI score of an examination. The AI score ranged from 1 to 10; a score of 1–7 was considered low risk of breast cancer, a score of 8–9 intermediate risk, and a score of 10 was considered high risk of breast cancer, as prescribed by ScreenPoint Medical.
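The aggregation and grouping rules described above can be sketched as follows. This is a minimal illustration under stated assumptions, not ScreenPoint Medical's implementation: the function names and the dictionary of per-view scores are hypothetical.

```python
def overall_ai_score(view_scores):
    """Return the examination-level AI score: the highest per-view score.

    `view_scores` is a hypothetical mapping from view name to score,
    e.g. {"L-CC": 3, "L-MLO": 4, "R-CC": 2, "R-MLO": 10}.
    """
    if not view_scores:
        raise ValueError("at least one view score is required")
    scores = list(view_scores.values())
    if any(not 1 <= s <= 10 for s in scores):
        raise ValueError("AI scores must lie between 1 and 10")
    return max(scores)


def risk_category(ai_score):
    """Map an AI score to the risk groups used in this study."""
    if not 1 <= ai_score <= 10:
        raise ValueError("AI scores must lie between 1 and 10")
    if ai_score <= 7:
        return "low"            # scores 1-7
    if ai_score <= 9:
        return "intermediate"   # scores 8-9
    return "high"               # score 10


exam = {"L-CC": 3, "L-MLO": 4, "R-CC": 2, "R-MLO": 10}
print(overall_ai_score(exam), risk_category(overall_ai_score(exam)))
```

Taking the maximum means that a single suspicious view is enough to place the whole examination in the high-risk group.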

Volumetric breast density was obtained from the automated software Volpara, versions 1.5.0 and 1.5.4.0 [31]. Examinations were classified into four Volpara density grades (VDG) based on the maximum percentage of volumetric breast density between the left and right breast per examination. Examinations with a volumetric density of ≤ 3.4% were classified as VDG1, 3.5–7.4% as VDG2, 7.5–15.4% as VDG3, and ≥ 15.5% as VDG4 [32]. The classification system is analogous to the four-category BI-RADS, 5th edition system, categories: almost entirely fatty, scattered fibroglandular tissue, heterogeneously dense tissue, and extremely dense tissue.
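The density grading described above can be sketched as a small function. This is an illustration only, not Volpara's software: the function name is hypothetical, and the cut points 3.5, 7.5, and 15.5 are assumed to apply to unrounded densities so that values such as 3.45% are not left unclassified.

```python
def volpara_density_grade(left_pct, right_pct):
    """Classify an examination into VDG1-4.

    Uses the higher volumetric density (in percent) of the two breasts,
    as described in the text. Cut points are assumed to be half-open
    intervals: [0, 3.5), [3.5, 7.5), [7.5, 15.5), [15.5, 100].
    """
    density = max(left_pct, right_pct)
    if not 0 <= density <= 100:
        raise ValueError("volumetric density must be a percentage")
    if density < 3.5:
        return 1  # almost entirely fatty
    if density < 7.5:
        return 2  # scattered fibroglandular tissue
    if density < 15.5:
        return 3  # heterogeneously dense
    return 4      # extremely dense


print(volpara_density_grade(6.8, 15.9))
```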

Breast cancer and histopathological tumor characteristics

A negative screening examination was defined as an examination scored 1 by both radiologists, an examination with score ≥ 2, but dismissed at consensus, or a recall from screening with negative diagnostic outcome (false-positive screening result). Screen-detected cancer was defined as histologically verified ductal carcinoma in situ (DCIS) or invasive breast cancer diagnosed after a recall assessment and within 6 months after the screening examination. Interval cancer was defined as DCIS or invasive breast cancer diagnosed within 24 months of a negative screening result or 6–24 months after a false-positive screening result [3]. For the interval cancer cases, mammograms from the screening examination prior to diagnosis were scored by the AI system.

Histopathological tumor characteristics were collected for invasive screen-detected and interval cancers, and included histologic type (invasive carcinoma of no special type, invasive lobular carcinoma, invasive tubular carcinoma, and other invasive carcinomas), tumor diameter in millimeters, histologic grade 1–3 [33], lymph node involvement, and immunohistochemical subtypes. Subtypes were categorized into Luminal A-like, Luminal B-like Her2 + , Luminal B-like Her2 − , Her2 + , and triple negative [34].

Statistical analysis

Using descriptive analyses, we assessed the overall performance of the AI system in successfully categorizing cancers into high-risk categories, stratified by VDG. We provided frequencies and percentages of all screening examinations, examinations with a negative screening result, screen-detected cancers, interval cancers, and all cancers combined, stratified by AI scores 1–10. Cumulative frequencies and percentages were presented for screen-detected and interval cancers, and the percentage of screen-detected + interval cancers among all cancers was presented by cumulative AI score. 95% confidence intervals were computed using the exact binomial distribution. The percentages of screen-detected or screen-detected + interval cancer could be considered the sensitivity of the AI system at a given threshold. Histopathological tumor characteristics of invasive screen-detected and interval cancers (median tumor diameter with interquartile range (IQR) and numbers and percentages for lymph node status, grade, and immunohistochemical subtypes) are presented for AI score 10 and AI score 1–9. Associations between each tumor characteristic and AI score were tested with bivariate tests. Frequencies and percentages of all examinations, and for screen-detected cancers separately, were presented by VDG and by AI score 1 to 10. For screen-detected and interval cancers with an AI score of 10, the percentages of cases classified into each density category were graphically presented. Further, theoretical triage scenarios for the AI system were explored based on combinations of AI scores and mammographic density. All statistical analyses were performed in Stata version 17.0.
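For readers who want to reproduce the confidence intervals, the exact binomial (Clopper-Pearson) interval can be sketched with the Python standard library alone. The analyses in this study were run in Stata; the code below is an independent illustration, and all function names are hypothetical.

```python
from math import exp, lgamma, log, log1p


def _binom_cdf(k, n, p):
    """P(X <= k) for X ~ Binomial(n, p), assuming 0 < p < 1."""
    if k < 0:
        return 0.0
    log_n_fact = lgamma(n + 1)
    total = 0.0
    for i in range(k + 1):
        # Work in log space so large n does not overflow.
        log_pmf = (log_n_fact - lgamma(i + 1) - lgamma(n - i + 1)
                   + i * log(p) + (n - i) * log1p(-p))
        total += exp(log_pmf)
    return min(total, 1.0)


def _solve(f, target, increasing, steps=50):
    """Bisection on (0, 1) for a monotone f, returning p with f(p) ~ target."""
    lo, hi = 0.0, 1.0
    for _ in range(steps):
        mid = (lo + hi) / 2
        if (f(mid) < target) == increasing:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


def exact_binomial_ci(x, n, alpha=0.05):
    """Clopper-Pearson interval for x events in n trials."""
    if n <= 0 or not 0 <= x <= n:
        raise ValueError("need n > 0 and 0 <= x <= n")
    # Lower limit: p at which P(X >= x) equals alpha / 2.
    lower = 0.0 if x == 0 else _solve(
        lambda p: 1 - _binom_cdf(x - 1, n, p), alpha / 2, increasing=True)
    # Upper limit: p at which P(X <= x) equals alpha / 2.
    upper = 1.0 if x == n else _solve(
        lambda p: _binom_cdf(x, n, p), alpha / 2, increasing=False)
    return lower, upper


low, high = exact_binomial_ci(3, 9)  # interval cancers with AI score 10, VDG1
print(f"{100 * low:.1f}-{100 * high:.1f}")
```

The example uses the 3 of 9 interval cancers in VDG1, for which the text reports a 95% CI of 7.5–70.1. The wide interval reflects the small number of cases.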

Results

Overall performance

The final study sample included data from 99,489 screening examinations among 74,941 women, including 883 breast cancers (688 screen-detected, 195 interval cancers) (Fig. 1). Among all screening examinations, 20.5% (20,378/99,489, 95% CI, 20.2–20.7) had an AI score of 1, and 10.5% (10,406/99,489, 95% CI, 10.3–10.7) had an AI score of 10 (Table 1). We found that 89.7% (617/688, 95% CI, 87.2–91.9) of the screen-detected and 44.6% (87/195, 95% CI, 37.5–51.9) of the interval cancers were assigned an AI score of 10 (Table 1), and that 5.9% (617/10,406, 95% CI, 5.5–6.4) of the examinations assigned an AI score of 10 were screen-detected cancers (Table 2). In total, the sensitivity of the AI system at an AI score of 10 was 79.7% (704/883, 95% CI, 76.9–82.3). In Stavanger, 94.8% (290/306) of the screen-detected and 42.9% (42/98) of the interval cancers had an AI score of 10, while in Bergen, the corresponding percentages were 85.6% (327/382) and 46.4% (45/97), respectively (Supplementary Table 1).

Histopathological tumor characteristics

Among screen-detected cancers with an AI score of 10, 18.6% (115/617) were DCIS, compared with 5.6% (4/71, p = 0.01) of those with an AI score of 1–9 (Table 3). For interval cancers, the percentages were 6.9% (6/87) for an AI score of 10 and 7.4% (8/108, p = 0.89) for an AI score of 1–9. Tumor diameter and Van Nuys grade for screen-detected and interval DCIS included a small number of cases and are shown in Supplementary Table 2.

Among screen-detected cancers with an AI score of 10, 81.4% (502/617) were invasive (Table 3). For these cancers, median tumor diameter was 14 mm (IQR, 10–21), 23.0% (112/486) were histologic grade 3, 16.5% (81/491) were lymph node positive, and 7.4% (35/475) triple negative. For screen-detected cancers with an AI score of 1–9, 94.4% (67/71, p = 0.01) were invasive, median tumor diameter was 13 mm (IQR, 8–16, p = 0.01), 20.0% (13/65, p = 0.83) histologic grade 3, 12.3% (8/65, p = 0.39) lymph node positive, and 4.9% (3/61, p = 0.48) triple negative.

For interval cancers, 93.1% (81/87) with an AI score of 10 were invasive versus 92.6% (100/108, p = 0.89) for AI score 1–9 (Table 3). Among the cases with an AI score of 10, median tumor diameter was 19 mm (IQR, 13–30) and 32.4% (24/74) were histologic grade 3 compared to median tumor diameter of 24 mm (IQR, 15–30, p = 0.80) and 45.9% (39/85, p = 0.17) histologic grade 3 for those with an AI score of 1–9. A total of 35.5% (27/76) of interval cancers with an AI score of 10 were lymph node positive and 13.2% (10/76) were triple negative. The percentages for lymph node positive and triple negative were 30.8% (28/91, p = 0.52) and 19.8% (18/91, p = 0.11), respectively, for interval cancer cases with an AI score of 1–9.

Mammographic density

In our study sample, 20.3% (20,178/99,489) of the examinations were classified as VDG1, 49.6% (49,345/99,489) as VDG2, 24.0% (23,919/99,489) as VDG3, and 6.1% (6047/99,489) as VDG4 (Fig. 1). The highest rate of screen-detected cancers was observed for VDG3 (8.4 per 1000), while the lowest observed was for VDG1 (4.0 per 1000). The highest interval cancer rate (4.1 per 1000) was observed for VDG4 while the lowest rate was observed for VDG1 (0.4 per 1000).
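The rates per 1000 examinations can be recomputed from counts reported in the text. The sketch below is illustrative only: the examination counts are those given above, while the cancer counts per density grade are the denominators reported in the abstract and in the results on AI score by density. The variable and function names are hypothetical.

```python
# Examinations per Volpara density grade (from the text above).
EXAMS = {"VDG1": 20178, "VDG2": 49345, "VDG3": 23919, "VDG4": 6047}
# Cancers per density grade (denominators reported elsewhere in the article).
SCREEN_DETECTED = {"VDG1": 81, "VDG2": 369, "VDG3": 200, "VDG4": 38}
INTERVAL = {"VDG1": 9, "VDG2": 81, "VDG3": 80, "VDG4": 25}


def rate_per_1000(cases, exams):
    """Number of cancers per 1000 screening examinations."""
    return 1000 * cases / exams


for vdg, n_exams in EXAMS.items():
    sdc = rate_per_1000(SCREEN_DETECTED[vdg], n_exams)
    ic = rate_per_1000(INTERVAL[vdg], n_exams)
    print(f"{vdg}: screen-detected {sdc:.1f}, interval {ic:.1f} per 1000")
```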

Among the examinations classified as VDG1, 7.4% (1484/20,178) had the highest AI score of 10 (Table 4). The percentage was 11.0% (5405/49,345) for those with VDG2, 11.3% (2705/23,919) for VDG3, and 13.4% (812/6047) for VDG4. In Stavanger and Bergen, 12.7% (417/3276) and 14.3% (395/2771) of those with VDG4 had an AI score of 10, respectively (Supplementary Table 3).

For screen-detected cancers, 84.0% (68/81, 95% CI, 74.1–91.2) of the women classified as VDG1 had an AI score of 10 (Table 5, Fig. 2). The percentage was 88.9% (328/369, 95% CI, 85.2–91.9) for women with VDG2, 92.5% (185/200, 95% CI, 87.9–95.7) for VDG3, and 94.7% (36/38, 95% CI, 82.3–99.4) for VDG4. Of the interval cancer cases, 33.3% (3/9, 95% CI, 7.5–70.1) of women with VDG1, 37.0% (30/81, 95% CI, 26.6–48.5) with VDG2, 52.5% (42/80, 95% CI, 41.0–63.8) with VDG3, and 48.0% (12/25, 95% CI, 27.8–68.7) with VDG4 had the highest AI score of 10 (Supplementary Table 4, Fig. 2).

Triaging examinations based on AI score and mammographic density

In a hypothetical triage setting, where examinations with an AI score of 1–5 are classified as negative and excluded from the radiologist interpretive workflow, the reader volume would be reduced by 55.4% (55,150/99,489), while 2.2% (15/688) of the screen-detected cancers would be classified as negative (Table 1). Defining AI scores 1–7 as negative, the reading volume would be reduced by 70.8% (70,447/99,489), at the cost of classifying 3.1% (21/688) of the screen-detected cancers as negative (Table 1).

Hypothetically, if examinations were triaged based on AI scores and mammographic density, where VDG1–3 examinations with an AI score of 1–7 (low risk) and VDG4 examinations with an AI score of 1–9 (low and intermediate risk) were excluded from the radiologists’ workflow, then the reading volume would be reduced by 72.1% (71,715/99,489, 95% CI, 71.8–72.4) at the cost of 3.3% (23/688, 95% CI, 2.1–5.0) of the screen-detected cancers classified as negative (scenario 1 in Fig. 3, Table 4, Table 5). The reader volume would be reduced by 77.0% (76,619/99,489, 95% CI, 76.7–77.3) at the cost of classifying 4.8% (33/688, 95% CI, 3.3–6.7) of the screen-detected cancers as negative if VDG1–2 examinations with an AI score of 1–7 and VDG3–4 examinations with an AI score of 1–9 were interpreted only by AI (scenario 2 in Fig. 3). In a setting where VDG3–4 examinations with an AI score of 1–9 were interpreted only by AI, 26.6% (26,449/99,489, 95% CI, 26.3–26.9) of the examinations would be removed from the radiologist’s workflow and 2.5% (17/688, 95% CI, 1.4–3.9) of the screen-detected cancers would be classified as negative (scenario 3 in Fig. 3).
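The arithmetic behind scenario 3 can be checked from counts given in the text, since the number of examinations with an AI score of 1–9 is the total minus those with a score of 10. The sketch below is illustrative only. Scenarios 1 and 2 additionally require the counts for AI scores 1–7 per density grade (Table 4 and Table 5) and are therefore not reproduced. All names are hypothetical.

```python
# Counts per Volpara density grade, taken from the results text.
EXAMS = {"VDG1": 20178, "VDG2": 49345, "VDG3": 23919, "VDG4": 6047}
EXAMS_SCORE_10 = {"VDG1": 1484, "VDG2": 5405, "VDG3": 2705, "VDG4": 812}
SDC = {"VDG1": 81, "VDG2": 369, "VDG3": 200, "VDG4": 38}
SDC_SCORE_10 = {"VDG1": 68, "VDG2": 328, "VDG3": 185, "VDG4": 36}


def triage_scores_1_to_9(grades):
    """Leave examinations with AI score 1-9 in `grades` to AI only.

    Returns (examinations removed from the radiologists' workflow,
             screen-detected cancers that would be classified as negative).
    """
    removed = sum(EXAMS[g] - EXAMS_SCORE_10[g] for g in grades)
    missed = sum(SDC[g] - SDC_SCORE_10[g] for g in grades)
    return removed, missed


removed, missed = triage_scores_1_to_9(["VDG3", "VDG4"])
total_exams = sum(EXAMS.values())
total_sdc = sum(SDC.values())
print(f"removed {removed}/{total_exams} = {100 * removed / total_exams:.1f}%")
print(f"missed {missed}/{total_sdc} = {100 * missed / total_sdc:.1f}%")
```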

Discussion

In this retrospective cohort study including nearly 100,000 examinations performed with mammography equipment from GE Healthcare, we found that 89.7% (617/688) of screen-detected cancers and 44.6% (87/195) of the interval cancers were assigned an AI score of 10, indicating the highest suspicion of malignancy. For women with extremely dense breasts (VDG4), 94.7% (36/38) of the screen-detected cancers and 48.0% (12/25) of the interval cancers were given an AI score of 10. For VDG2, the corresponding numbers were 88.9% (328/369) and 37.0% (30/81).

A prior retrospective AI-study with data from two breast centers in the Central Norway Regional Health Authority, where examinations were performed on Siemens machines, reported that 86.8% (653/752) of the screen-detected and 44.9% (92/205) of the interval cancers had an AI score of 10 [25]. This is comparable with the findings in this study of examinations performed on GE machines. There was variability across our two sites, Bergen (85.6%, 327/382) and Stavanger (94.8%, 290/306); however, Stavanger used GE Senographe Pristina from 2018 and Bergen only used GE Senographe Essential. Another study using the AI system from Kheiron Medical Technologies has reported similar results across different mammography vendors [35].

The figures above focus on cancer detection for cases with an AI score of 10 among all cancers. Using all cases with an AI score of 10 in the denominator results in a screen-detected cancer rate of about 6%. This means that 94 out of 100 cases are negative for breast cancer and should be interpreted as negative by the radiologists or dismissed at consensus. Doing so requires knowledge about, e.g., the mammographic features of cases with a high AI score but no cancer.

A study using data from one of the breast centers included in this study, Rogaland (Stavanger), reported a higher percentage of screen-detected cancers with an AI score of 10 (92.7% versus 89.7%), but a lower percentage for interval cancers with an AI score of 10 (40.0% versus 44.6%) compared to this study [13]. However, their data was from women screened 2010–2018 and included a cancer-enriched sample where 10 negative cases were included for each cancer case. Further, in contrast to the study with a cancer-enriched sample, we found that the highest proportion of an AI score of 10 for examinations prior to interval cancers was observed for VDG3 and not VDG4. Despite including almost 100,000 examinations in this study, the number of interval cancers was lower as the data was from a regular screening setting, without enrichments. The low number of cases weakens the validity and power of our results. All screen-detected cancers among women with VDG4 had an AI score of 10 in the enriched study compared to 94.7% in the present study; however, the cancer-enriched study included only 59 screen-detected cancers for women with VDG4.

AI markings indicate areas suspicious for cancer and direct the radiologists’ attention. However, the validity of the AI markings has been explored only to a limited extent, and review studies are needed to fill this knowledge gap. A review study from Norway showed that all screen-detected (n = 126) and 78% (93/120) of interval cancers with AI score 10 were correctly located by the AI system [36]. Among the interval cancers with AI score 10 and correctly located AI marking, 60% (56/93) were classified as false negative or minimal sign. Informed review studies have classified 20–30% of the screen-detected and interval cancers as missed at prior screening [37, 38]. We found that 44.6% (87/195) of interval cancers had an AI score of 10. A study from Sweden reported that 58% (83/143) of the interval cancers with an AI score of 10 were classified as false negative or minimal sign and could potentially be detected at screening with support from AI in the reading process [16]. The results might indicate a potential for earlier detection of interval cancer when using AI in screen reading.

The scenarios illustrated in Fig. 3 assume AI as a stand-alone interpreter. Interpretations of mammograms without involvement of radiologists require ethical and legal considerations. A study has shown that 23% of the screen-detected cancers were interpreted negatively by one of the two readers in an independent double reading setting [39]. This is important evidence in the discussion we must have before making the decision about how to use AI in the interpretation procedure in screen reading. However, the most common way of using AI is as decision support, which might influence the reading time [40] and recall rate [26, 41].

When comparing the percentage of screen-detected cancers with an AI score of 10 between density categories, the highest percentage was observed for VDG4. Furthermore, the percentage of all examinations, including negative examinations, with an AI score of 10 was also highest for VDG4. This means that, at an AI score of 10, specificity was lower for VDG4 than for the other density categories, and the risk of increasing the rate of false-positive screening results must be balanced against possible increased sensitivity for women with extremely dense breasts. We must also bear in mind that about 83% (569/688) of the screen-detected cancers in our study were among women with VDG2 or VDG3, and only 6% among those with VDG4.
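The point about specificity can be illustrated with counts from the results text. The sketch below is a rough approximation under a stated assumption, not an analysis from the study: only screen-detected cancers are treated as positives, so examinations preceding interval cancers are counted among the non-cancers. All names are hypothetical.

```python
# Counts per Volpara density grade, taken from the results text.
EXAMS = {"VDG1": 20178, "VDG2": 49345, "VDG3": 23919, "VDG4": 6047}
EXAMS_SCORE_10 = {"VDG1": 1484, "VDG2": 5405, "VDG3": 2705, "VDG4": 812}
SDC = {"VDG1": 81, "VDG2": 369, "VDG3": 200, "VDG4": 38}
SDC_SCORE_10 = {"VDG1": 68, "VDG2": 328, "VDG3": 185, "VDG4": 36}


def flagged_fraction_without_cancer(vdg):
    """Share of examinations without screen-detected cancer that score 10.

    Assumption: interval cancers are counted as non-cancers here, so this
    is an approximation of 1 - specificity at an AI score of 10.
    """
    flagged = EXAMS_SCORE_10[vdg] - SDC_SCORE_10[vdg]
    without_cancer = EXAMS[vdg] - SDC[vdg]
    return flagged / without_cancer


for vdg in EXAMS:
    print(f"{vdg}: {100 * flagged_fraction_without_cancer(vdg):.1f}% flagged")
```

Under this assumption the flagged share rises with density, which is the trade-off against sensitivity described above.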

Strengths of our study include the use of data from a regular screening setting, mammographic density measurements from an automated software, and the use of a CE-marked and FDA-approved AI system. Limitations include a relatively low number of cancer cases, especially for VDG1 and VDG4 examinations. The retrospective approach and the limited generalizability to a prospective screening setting also represent shortcomings of our study. Furthermore, we did not have information about the location of the AI marking versus the location of the cancer, which is important for the validity of the AI system. We did not include interval cancers in the scenarios, which might underestimate the potential of cancer detection by the AI system, as including interval cancers would have added cancer cases. However, this might also have resulted in lower percentages for reduction of screen reading volume in all scenarios.

In conclusion, this study showed promising results for AI to classify cancer cases into different risk score categories regardless of mammographic density. Future prospective studies are needed to support our findings and to establish the evidence needed for the safe implementation of AI in the interpretation process in mammographic screening and as a potential tool to offer personalized screening.

Abbreviations

- AI: Artificial intelligence

- DCIS: Ductal carcinoma in situ

- GDPR: General Data Protection Regulation

- Her2: Human epidermal growth factor receptor 2

- IQR: Interquartile range

- VDG: Volpara density grade

References

Lauby-Secretan B, Scoccianti C, Loomis D et al (2015) Breast-cancer screening–viewpoint of the IARC Working Group. N Engl J Med 372(24):2353–2358

European Commission initiative on breast cancer (2021) Manual for Breast Cancer Services. European Quality Assurance Scheme for Breast Cancer Services. Cited February 2024: https://cancer-screening-and-care.jrc.ec.europa.eu/en/ecibc/breast-quality-assurance-scheme

Bjørnson EW, Holen ÅS, Sagstad S et al (2022) BreastScreen Norway: 25 years of organized screening. Oslo: Cancer Registry of Norway. Cited February 2024. Available from: https://www.kreftregisteret.no/Generelt/Rapporter/Mammografiprogrammet/25-arsrapport-mammografiprogrammet/

Sardanelli F, Aase HS, Álvarez M et al (2017) Position paper on screening for breast cancer by the European Society of Breast Imaging (EUSOBI) and 30 national breast radiology bodies from Austria, Belgium, Bosnia and Herzegovina, Bulgaria, Croatia, Czech Republic, Denmark, Estonia, Finland, France, Germany, Greece, Hungary, Iceland, Ireland, Italy, Israel, Lithuania, Moldova, The Netherlands, Norway, Poland, Portugal, Romania, Serbia, Slovakia, Spain, Sweden, Switzerland and Turkey. Eur Radiol 27(7):2737–2743

Harkness EF, Astley SM, Evans DG (2020) Risk-based breast cancer screening strategies in women. Best Pract Res Clin Obstet Gynaecol 65:3–17

Boyd NF, Huszti E, Melnichouk O et al (2014) Mammographic features associated with interval breast cancers in screening programs. Breast Cancer Res 16(4):417

McCormack VA, dos Santos SI (2006) Breast density and parenchymal patterns as markers of breast cancer risk: a meta-analysis. Cancer Epidemiol Biomarkers Prev 15(6):1159–1169

Freer PE (2015) Mammographic breast density: impact on breast cancer risk and implications for screening. Radiographics 35(2):302–315

Mann RM, Athanasiou A, Baltzer PAT et al (2022) Breast cancer screening in women with extremely dense breasts recommendations of the European Society of Breast Imaging (EUSOBI). Eur Radiol 32(6):4036–4045

SAPEA, Science Advice for Policy by European Academies (2022) Improving cancer screening in the European Union. Berlin: SAPEA

Council Recommendation on cancer screening (update). Cited February 2024. Available from: https://www.europarl.europa.eu/legislative-train/theme-promoting-our-european-way-of-life/file-cancer-screening?sid=7701

Scheel JR, Lee JM, Sprague BL, Lee CI, Lehman CD (2015) Screening ultrasound as an adjunct to mammography in women with mammographically dense breasts. Am J Obstet Gynecol 212(1):9–17

Koch HW, Larsen M, Bartsch H, Kurz KD, Hofvind S (2023) Artificial intelligence in BreastScreen Norway: a retrospective analysis of a cancer-enriched sample including 1254 breast cancer cases. Eur Radiol 33(5):3735–3743

Seely JM, Peddle SE, Yang H et al (2022) Breast density and risk of interval cancers: the effect of annual versus biennial screening mammography policies in Canada. Can Assoc Radiol J 73(1):90–100

Larsen M, Lynge E, Lee CI, Lång K, Hofvind S (2023) Mammographic density and interval cancers in mammographic screening: moving towards more personalized screening. Breast 69:306–311

Lång K, Hofvind S, Rodríguez-Ruiz A, Andersson I (2021) Can artificial intelligence reduce the interval cancer rate in mammography screening? Eur Radiol 31(8):5940–5947

Rodriguez-Ruiz A, Lång K, Gubern-Merida A et al (2019) Can we reduce the workload of mammographic screening by automatic identification of normal exams with artificial intelligence? A feasibility study. Eur Radiol 29(9):4825–4832

Rodriguez-Ruiz A, Lång K, Gubern-Merida A et al (2019) Stand-alone artificial intelligence for breast cancer detection in mammography: comparison with 101 radiologists. J Natl Cancer Inst 111(9):916–922

Salim M, Wåhlin E, Dembrower K et al (2020) External evaluation of 3 commercial artificial intelligence algorithms for independent assessment of screening mammograms. JAMA Oncol 6(10):1581–1588

McKinney SM, Sieniek M, Godbole V et al (2020) International evaluation of an AI system for breast cancer screening. Nature 577(7788):89–94

Schaffter T, Buist DSM, Lee CI et al (2020) Evaluation of combined artificial intelligence and radiologist assessment to interpret screening mammograms. JAMA Netw Open 3(3):e200265

Bahl M (2020) Artificial intelligence: a primer for breast imaging radiologists. J Breast Imaging 2(4):304–314

Sechopoulos I, Teuwen J, Mann R (2021) Artificial intelligence for breast cancer detection in mammography and digital breast tomosynthesis: state of the art. Semin Cancer Biol 72:214–225

Johansson G, Olsson C, Smith F, Edegran M, Björk-Eriksson T (2021) AI-aided detection of malignant lesions in mammography screening - evaluation of a program in clinical practice. BJR Open 3(1):20200063

Larsen M, Aglen CF, Lee CI et al (2022) Artificial intelligence evaluation of 122 969 mammography examinations from a population-based screening program. Radiology 303(3):502–511

Lång K, Josefsson V, Larsson AM et al (2023) Artificial intelligence-supported screen reading versus standard double reading in the Mammography Screening with Artificial Intelligence trial (MASAI): a clinical safety analysis of a randomised, controlled, non-inferiority, single-blinded, screening accuracy study. Lancet Oncol 24(8):936–944

Lovdata. Kreftregisterforskriften. 2001. Cited February 2024. Available from: https://lovdata.no/dokument/SF/forskrift/2001-12-21-1477

Lov om helseregistre og behandling av helseopplysninger (helseregisterloven) [21.04.2023]. Cited February 2024. Available from: https://lovdata.no/dokument/NL/lov/2014-06-20-43

Hofvind S, Holen ÅS, Aase HS et al (2019) Two-view digital breast tomosynthesis versus digital mammography in a population-based breast cancer screening programme (To-Be): a randomised, controlled trial. Lancet Oncol 20(6):795–805

https://screenpoint-medical.com/ Cited February 2024

https://www.volparahealth.com/ Cited February 2024

Aitken Z, McCormack VA, Highnam RP et al (2010) Screen-film mammographic density and breast cancer risk: a comparison of the volumetric standard mammogram form and the interactive threshold measurement methods. Cancer Epidemiol Biomarkers Prev 19(2):418–428

Balleine RL, Webster LR, Davis S et al (2008) Molecular grading of ductal carcinoma in situ of the breast. Clin Cancer Res 14(24):8244–8252

Perou CM, Sørlie T, Eisen MB et al (2000) Molecular portraits of human breast tumours. Nature 406(6797):747–752

Sharma N, Ng AY, James JJ et al (2023) Multi-vendor evaluation of artificial intelligence as an independent reader for double reading in breast cancer screening on 275,900 mammograms. BMC Cancer 23(1):460

Koch HW, Larsen M, Bartsch H et al (2024) How do AI-markings on screening mammograms correspond to cancer location? An informed review of 270 breast cancer cases in BreastScreen Norway. Eur Radiol. https://doi.org/10.1007/s00330-024-10662-2

Houssami N, Hunter K (2017) The epidemiology, radiology and biological characteristics of interval breast cancers in population mammography screening. NPJ Breast Cancer 3:12

Hovda T, Hoff SR, Larsen M, Romundstad L, Sahlberg KK, Hofvind S (2022) True and missed interval cancer in organized mammographic screening: a retrospective review study of diagnostic and prior screening mammograms. Acad Radiol Suppl 1:S180–S191. https://doi.org/10.1016/j.acra.2021.03.022

Martiniussen MA, Sagstad S, Larsen M et al (2022) Screen-detected and interval breast cancer after concordant and discordant interpretations in a population based screening program using independent double reading. Eur Radiol 32(9):5974–5985

Pinto MC, Rodriguez-Ruiz A, Pedersen K et al (2021) Impact of artificial intelligence decision support using deep learning on breast cancer screening interpretation with single-view wide-angle digital breast tomosynthesis. Radiology 300(3):529–536

Dembrower K, Salim M, Eklund M, Lindholm P, Strand F (2023) Implications for downstream workload based on calibrating an artificial intelligence detection algorithm by standalone-reader or combined-reader sensitivity matching. J Med Imaging (Bellingham) 10(Suppl 2):S22405

Funding

Open access funding provided by Norwegian Institute of Public Health (FHI). This study was supported by the Pink Ribbon campaign in Norway (#214931) and the Research Council of Norway (#309755).

Ethics declarations

Guarantor

The scientific guarantor of this publication is Solveig Hofvind.

Conflict of interest

The authors declare no competing interests.

Statistics and biometry

One of the authors (ML) has significant statistical expertise, but no complex statistical methods were necessary for this paper.

Informed consent

The study was approved by the Regional Committees for Medical and Health Research Ethics (#13294) and had a legal basis in accordance with Articles 6 (1) (e) and 9 (2) (j) of the GDPR. Pursuant to Section 35 of the Health Research Act, the Regional Committees for Medical Research Ethics have granted the project exemption from the requirement of consent.

Ethical approval

Institutional review board approval was obtained. The study was approved by the Regional Committee for Medical and Health Research Ethics (#2018/2574).

Study subjects or cohorts overlap

A retrospective study using a cancer-enriched sample from Rogaland, 2010–2018, has been published (Koch HW, Larsen M, Bartsch H, Kurz KD, Hofvind S (2023) Artificial intelligence in BreastScreen Norway: a retrospective analysis of a cancer-enriched sample including 1254 breast cancer cases. Eur Radiol 33:3735–3743). This study uses data from a regular screening setting in Rogaland, 2017–2019, so there are some overlapping examinations from 2017 and 2018.

Methodology

• retrospective

• registry study/observational study

• performed at two breast centers

Additional information

Publisher's note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Bergan, M.B., Larsen, M., Moshina, N. et al. AI performance by mammographic density in a retrospective cohort study of 99,489 participants in BreastScreen Norway. Eur Radiol (2024). https://doi.org/10.1007/s00330-024-10681-z

DOI: https://doi.org/10.1007/s00330-024-10681-z