Abstract

The majority of epidemic models are described by non-linear differential equations which do not have a closed-form solution. Due to the absence of a closed-form solution, the understanding of the precise dynamics of a virus is rather limited. We solve the differential equations of the N-intertwined mean-field approximation of the susceptible-infected-susceptible epidemic process with heterogeneous spreading parameters around the epidemic threshold for an arbitrary contact network, provided that the initial viral state vector is small or parallel to the steady-state vector. Numerical simulations demonstrate that the solution around the epidemic threshold is accurate, also above the epidemic threshold and for general initial viral states that are below the steady-state.

1 Introduction

Epidemiology originates from the study of infectious diseases such as gonorrhoea, cholera and the flu (Bailey 1975; Anderson and May 1992). Human beings do not only transmit infectious diseases from one individual to another, but also opinions, on-line social media content and innovations. Furthermore, man-made structures exhibit epidemic phenomena, such as the propagation of failures in power networks or the spread of a malicious computer virus. Modern epidemiology has evolved into the study of general spreading processes (Pastor-Satorras et al. 2015; Nowzari et al. 2016). Two properties are essential to a broad class of epidemic models. First, individuals are either infected with the disease (respectively, possess the information, opinion, etc.) or healthy. Second, individuals can infect one another only if they are in contact (e.g., by a friendship). In this work, we consider an epidemic model which describes the spread of a virus between groups of individuals.

We consider a contact network of N nodes, and every node \(i=1,\ldots , N\) corresponds to a group (footnote 1) of individuals. If the members of two groups i, j are in contact, then group i and group j can infect one another with the virus. We denote the symmetric \(N \times N\) adjacency matrix by A and its elements by \(a_{ij}\). If there is a link between node i and node j, then \(a_{ij}=1\), and \(a_{ij}=0\) otherwise. Hence, the virus directly spreads between two nodes i and j only if \(a_{ij}=1\). We stress that in most applications it holds that \(a_{ii}\ne 0\), since infected individuals in group i usually do infect susceptible individuals in the same group i. At any time \(t\ge 0\), we denote the viral state of node i by \(v_i(t)\). The viral state \(v_i(t)\) is in the interval [0, 1] and is interpreted as the fraction of infected individuals of group i. The N-intertwined mean-field approximation (NIMFA) with heterogeneous spreading parameters (Lajmanovich and Yorke 1976; Van Mieghem and Omic 2014) assumes that the curing rates \(\delta _i\) and infection rates \(\beta _{ij}\) depend on the nodes i and j.

Definition 1

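(Heterogeneous NIMFA) At any time \(t\ge 0\), the NIMFA governing equation is

$$\begin{aligned} \frac{d v_i (t)}{d t } = - \delta _i v_i(t) + \left( 1 - v_i(t)\right) \sum _{j = 1}^N {\tilde{\beta }}_{ij} a_{ij} v_j(t) \end{aligned}$$(1)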

for every group \(i=1,\ldots , N\), where \(\delta _i >0\) is the curing rate of node i, and \({\tilde{\beta }}_{i j} > 0\) is the infection rate from node j to node i.

For a vector \(x\in {\mathbb {R}}^N\), we denote the diagonal matrix with x on its diagonal by \({\text {diag}}(x)\). We denote the \(N\times N\) curing rate matrix \(S = {\text {diag}}(\delta _1,\ldots , \delta _N)\). Then, the matrix form of (1) is a vector differential equation

$$\begin{aligned} \frac{d v (t)}{d t } = - S v(t) + {\text {diag}}\left( u - v(t)\right) B v(t), \end{aligned}$$(2)

where \(v(t) = (v_1(t),\ldots , v_N(t))^T\) is the viral state vector at time t, the \(N\times N\) infection rate matrix B is composed of the elements \(\beta _{ij} = {\tilde{\beta }}_{ij} a_{ij}\), and u is the \(N\times 1\) all-one vector. In this work, we assume that the matrix B is symmetric.
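As an illustration, the right-hand side of the governing equations can be evaluated in both the node-wise and the matrix form. The sketch below assumes the matrix form reads \(dv/dt = -Sv + {\text {diag}}(u-v)Bv\) with \(\beta _{ij} = {\tilde{\beta }}_{ij} a_{ij}\); the function names and the 3-node example values are hypothetical and not taken from the paper.

```python
import numpy as np

def nimfa_rhs(v, B, delta):
    """Matrix form: -S v + diag(u - v) B v, with S = diag(delta)."""
    v = np.asarray(v, dtype=float)
    return -delta * v + (1.0 - v) * (B @ v)

def nimfa_rhs_nodewise(v, B, delta):
    """Node-wise form: -delta_i v_i + (1 - v_i) * sum_j beta_ij v_j."""
    n = len(v)
    out = np.zeros(n)
    for i in range(n):
        infection = sum(B[i, j] * v[j] for j in range(n))
        out[i] = -delta[i] * v[i] + (1.0 - v[i]) * infection
    return out

# Hypothetical symmetric infection rate matrix with self-infection (beta_ii > 0).
B = np.array([[0.5, 0.4, 0.0],
              [0.4, 0.5, 0.6],
              [0.0, 0.6, 0.5]])
delta = np.array([0.9, 1.0, 1.1])   # curing rates delta_i
v = np.array([0.1, 0.2, 0.3])       # viral state, each entry in [0, 1]

print(nimfa_rhs(v, B, delta))
```

Both functions return the same vector; the vectorised version is the one that would typically be handed to a numerical integrator.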

Definition 2

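(Steady-State Vector) The \(N \times 1\) steady-state vector \(v_\infty \) is the non-zero equilibrium of NIMFA, which satisfies

$$\begin{aligned} \left( B - S \right) v_\infty = {\text {diag}}\left( v_\infty \right) B v_\infty . \end{aligned}$$(3)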

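In its simplest form, NIMFA (Van Mieghem et al. 2009) assumes the same infection rate \(\beta \) and curing rate \(\delta \) for all nodes. More precisely, for homogeneous NIMFA the governing equations (2) reduce to

$$\begin{aligned} \frac{d v (t)}{d t } = - \delta v(t) + \beta {\text {diag}}\left( u - v(t)\right) A v(t). \end{aligned}$$(4)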

For the vast majority of epidemiological, demographical, and ecological models, the basic reproduction number \(R_0\) is an essential quantity (Hethcote 2000; Heesterbeek 2002). The basic reproduction number \(R_0\) is defined (Diekmann et al. 1990) as “The expected number of secondary cases produced, in a completely susceptible population, by a typical infective individual during its entire period of infectiousness”. Originally, the basic reproduction number \(R_0\) was introduced for epidemiological models with only \(N=1\) group of individuals. Van den Driessche and Watmough (2002) proposed a definition of the basic reproduction number \(R_0\) to epidemic models with \(N>1\) groups. For NIMFA (1), the basic reproduction number \(R_0\) follows (Van den Driessche and Watmough 2002) as \(R_0 = \rho (S^{-1}B)\), where \(\rho (M)\) denotes the spectral radius of a square matrix M. For the stochastic Susceptible-Infected-Removed (SIR) epidemic process on data-driven contact networks, Liu et al. (2018) argue that the basic reproduction number \(R_0\) is inadequate to characterise the behaviour of the viral dynamics, since the number of secondary cases produced by an infectious individual varies greatly with time t. In contrast to the stochastic SIR process, for the deterministic NIMFA equations (1), the basic reproduction number \(R_0 = \rho (S^{-1}B)\) is of crucial importance for the viral state dynamics. Lajmanovich and Yorke (1976) showed that there is a phase transition at the epidemic threshold criterion \(R_0 = 1\): If \(R_0 \le 1\), then the only equilibrium of NIMFA (1) is the origin, which is globally asymptotically stable. Else, if \(R_0 > 1\), then there is a second equilibrium, the steady-state \(v_\infty \), whose components are positive, and the steady-state \(v_\infty \) is globally asymptotically stable for every initial viral state \(v(0) \ne 0\). 
For real-world epidemics, the regime around the epidemic threshold criterion \(R_0 = 1\) is of particular interest. In practice, the basic reproduction number \(R_0\) cannot be arbitrarily large, since natural immunities and vaccinations lead to significant curing rates \(\delta _i\) and the frequency and intensity of human contacts constrain the infection rates \(\beta _{ij}\). Beyond the spread of infectious diseases, many real-world systems seem to operate in the critical regime around a phase transition (Kitzbichler et al. 2009; Nykter et al. 2008).
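The threshold criterion can be checked numerically by computing \(R_0 = \rho (S^{-1}B)\). The following is a minimal sketch; the helper name and the homogeneous 3-node example are assumptions made for illustration.

```python
import numpy as np

def basic_reproduction_number(B, delta):
    """Spectral radius of S^{-1} B, where S = diag(delta)."""
    W = B / np.asarray(delta, dtype=float)[:, None]   # row i of B divided by delta_i
    return float(np.max(np.abs(np.linalg.eigvals(W))))

# Hypothetical homogeneous example: complete graph on 3 nodes without self-loops.
A = np.ones((3, 3)) - np.eye(3)       # the spectral radius of A is 2
beta, delta = 0.3, 0.5
R0 = basic_reproduction_number(beta * A, np.full(3, delta))

# Phase transition at R0 = 1: the origin is the only equilibrium if R0 <= 1.
regime = "endemic (R0 > 1)" if R0 > 1 else "die-out (R0 <= 1)"
print(R0, regime)
```

For this example, \(R_0 = \beta \rho (A)/\delta \), so the virus persists in the NIMFA model.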

The basic reproduction number \(R_0\) only provides a coarse description of the dynamics of NIMFA (1). Recently (Prasse and Van Mieghem 2019), we analysed the viral state dynamics for the discrete-time version of NIMFA (1), provided that the initial viral state v(0) is small (see also Assumption 2 in Sect. 3). Three results of Prasse and Van Mieghem (2019) are worth mentioning, since we believe that they could also apply to NIMFA (1) in continuous time. First, the steady-state \(v_\infty \) is exponentially stable. Second, the viral state is (almost always) monotonically increasing. Third, the viral state v(t) is bounded by linear time-invariant systems at any time t. In this work, we go a step further in analysing the dynamics of the viral state v(t), and we focus on the region around the threshold \(R_0 =1\). More precisely, we find the closed-form expression of the viral state \(v_i(t)\) for every node i at every time t when \(R_0 \downarrow 1\), given that the initial state v(0) is small or parallel (footnote 2) to the steady-state vector \(v_\infty \).

We introduce the assumptions in Sect. 3. Section 4 gives an explicit expression for the steady-state vector \(v_\infty \) when \(R_0 \downarrow 1\). In Sect. 5, we derive the closed-form expression for the viral state vector v(t) at any time \(t\ge 0\). The closed-form solution for \(R_0 \downarrow 1\) gives an accurate approximation also for \(R_0 >1\) as demonstrated by numerical evaluations in Sect. 6.

2 Related work

Lajmanovich and Yorke (1976) originally proposed the differential equations (1) to model the spread of gonorrhoea and proved the existence and global asymptotic stability of the steady-state \(v_\infty \) for strongly connected directed graphs. In Lajmanovich and Yorke (1976), Fall et al. (2007), Wan et al. (2008), Rami et al. (2013), Prasse and Van Mieghem (2018) and Paré et al. (2018), the differential equations (1) are considered as the exact description of the virus spread between groups of individuals. Van Mieghem et al. (2009) derived the differential equations (1) as an approximation of the Markovian Susceptible-Infected-Susceptible (SIS) epidemic process (Pastor-Satorras et al. 2015; Nowzari et al. 2016), which led to the acronym “NIMFA” for “N-Intertwined Mean-Field Approximation” (Van Mieghem 2011; Van Mieghem and Omic 2014; Devriendt and Van Mieghem 2017). The approximation of the SIS epidemic process by NIMFA is least accurate around the epidemic threshold (Van Mieghem et al. 2009; Van Mieghem and van de Bovenkamp 2015). Thus, the solution of NIMFA when \(R_0\downarrow 1\), which is derived in this work, might be inaccurate for the description of the probabilistic SIS process.

Fall et al. (2007) analysed the generalisation of the differential equations (1) of Lajmanovich and Yorke (1976) to a non-diagonal curing rate matrix S. Khanafer et al. (2016) showed that the steady-state \(v_\infty \) is globally asymptotically stable, also for weakly connected directed graphs. Furthermore, NIMFA (1) has been generalised to time-varying parameters. Paré et al. (2017) consider that the infection rates \(\beta _{ij}(t)\) (footnote 3) depend continuously on time t. Rami et al. (2013) consider a switched model in which both the infection rates \(\beta _{ij}(t)\) and the curing rates \(\delta _i(t)\) change with time t. NIMFA (1) in discrete time has been analysed in Ahn and Hassibi (2013), Paré et al. (2018), Prasse and Van Mieghem (2019) and Liu et al. (2020).

In Van Mieghem (2014b), NIMFA (4) was solved for a special case: If the adjacency matrix A corresponds to a regular graph and the initial state \(v_i(0)\) is the same (footnote 4) for every node i, then NIMFA with time-varying, homogeneous spreading parameters \(\beta (t), \delta (t)\) has a closed-form solution. In this work, we focus on time-invariant but heterogeneous spreading parameters \(\delta _i, \beta _{ij}\). We solve NIMFA (1) for arbitrary graphs around the threshold criterion \(R_0 = 1\) and for an initial viral state v(0) that is small or parallel to the steady-state vector \(v_\infty \).

3 Notations and assumptions

The basic reproduction number \(R_0= \rho (S^{-1}B)\) is determined by the infection rate matrix B and the curing rate matrix S. Thus, the notation \(R_0 \downarrow 1\) is imprecise, since there are infinitely many matrices B, S such that the basic reproduction number \(R_0\) equals 1. To be more precise, we consider a sequence \(\left\{ \left( B^{(n)}, S^{(n)}\right) \right\} _{n \in {\mathbb {N}}}\) of infection rate matrices \(B^{(n)}\) and curing rate matrices \(S^{(n)}\) that converges (footnote 5) to a limit \((B^*, S^*)\), such that \(\rho \left( \left( S^* \right) ^{-1}B^*\right) =1\) and

$$\begin{aligned} \rho \left( \left( S^{(n)} \right) ^{-1}B^{(n)}\right) > 1 \quad \forall n \in {\mathbb {N}}. \end{aligned}$$

For ease of exposition, we drop the index n and replace \(B^{(n)}\) and \(S^{(n)}\) by the notation B and S, respectively. In particular, we emphasise that the assumptions below apply to every element \(\left( B^{(n)}, S^{(n)}\right) \) of the sequence. In Sects. 4 to 6, we formally abbreviate the limit process \(\left( B^{(n)}, S^{(n)}\right) \rightarrow \left( B^*, S^*\right) \) by the notation \(R_0\downarrow 1\). For the proofs in the appendices, we use the lengthier but clearer notation \(\left( B, S\right) \rightarrow \left( B^*, S^*\right) \). Furthermore, we use the superscript notation \(\varXi ^*\) to denote the limit of any variable \(\varXi \) that depends on the infection rate matrix B and the curing rate matrix S. For instance, \(\delta ^*_i\) denotes the limit of the curing rate \(\delta _i\) of node i when \(\left( B, S\right) \rightarrow \left( B^*, S^*\right) \). The Landau-notation \(f(R_0) = {\mathcal {O}}(g(R_0))\) as \(R_0 \downarrow 1\) denotes that \(|f(R_0)| \le \sigma |g(R_0)|\) for some constant \(\sigma \) as \(R_0 \downarrow 1\). For instance, it holds that \((R_0-1)^2 = {\mathcal {O}}( R_0-1 )\) as \(R_0 \downarrow 1\).

In the remainder of this work, we rely on three assumptions, which we state for clarity in this section.

Assumption 1

For every basic reproduction number \(R_0>1\), the curing rates are positive and the infection rates are non-negative, i.e., \(\delta _i >0\) and \(\beta _{ij} \ge 0\) for all nodes i, j. Furthermore, in the limit \(R_0\downarrow 1\), it holds that \(\delta _i \not \rightarrow 0\) and \(\delta _i \not \rightarrow \infty \) for all nodes i.

We consider Assumption 1 a rather technical assumption, since only non-negative rates \(\delta _i\) and \(\beta _{ij}\) have a physical meaning. Furthermore, if the curing rates \(\delta _i\) were zero, then the differential equations (1) would describe a Susceptible-Infected (SI) epidemic process. In this work, we focus on the SIS epidemic process, for which it holds that \(\delta _i>0\).

Assumption 2

For every basic reproduction number \(R_0>1\), it holds that \(v_i(0) \ge 0\) and \(v_i(0) \le v_{\infty , i}\) for every node \(i=1,\ldots , N\). Furthermore, it holds that \(v_i(0)>0\) for at least one node i.

For the description of most real-world epidemics, Assumption 2 is reasonable for two reasons. First, the total number of infected individuals often is small in the beginning of an epidemic outbreak. (Sometimes, there is even a single patient zero.) Second, a group i often contains many individuals. For instance, the viral state \(v_i(t)\) could describe the prevalence of virus in municipality i. Thus, even if there is a considerable total number of infected individuals in group i, the initial fraction \(v_i(0)\) would be small.

Assumption 3

For every basic reproduction number \(R_0>1\), the infection rate matrix B is symmetric and irreducible. Furthermore, in the limit \(R_0\downarrow 1\), the infection rate matrix B converges to a symmetric and irreducible matrix.

Assumption 3 holds if and only if the infection rate matrix B (and its limit) corresponds to a connected undirected graph (Van Mieghem 2014a).
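Assumption 3 can be verified for a given matrix by checking symmetry and graph connectivity. The sketch below is one possible check, using a breadth-first search over the non-zero entries of B; the function name and the example matrices are hypothetical.

```python
from collections import deque
import numpy as np

def is_symmetric_irreducible(B):
    """True if B is symmetric and its non-zero pattern is a connected undirected graph."""
    B = np.asarray(B, dtype=float)
    if B.ndim != 2 or B.shape[0] != B.shape[1] or not np.allclose(B, B.T):
        return False
    n = B.shape[0]
    seen = {0}
    queue = deque([0])
    while queue:                      # breadth-first search starting from node 0
        i = queue.popleft()
        for j in range(n):
            if j not in seen and B[i, j] > 0:
                seen.add(j)
                queue.append(j)
    return len(seen) == n             # connected iff every node was reached

path = np.array([[0.0, 0.5, 0.0],
                 [0.5, 0.0, 0.4],
                 [0.0, 0.4, 0.0]])
split = np.array([[0.0, 0.5, 0.0],
                  [0.5, 0.0, 0.0],
                  [0.0, 0.0, 0.3]])   # node 2 only infects itself
print(is_symmetric_irreducible(path), is_symmetric_irreducible(split))
```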

4 The steady-state around the epidemic threshold

We define the \(N\times N\) effective infection rate matrix W as

$$\begin{aligned} W = S^{-1} B. \end{aligned}$$(5)

In this section, we state an essential property that we apply to solve the NIMFA equations (1) when the basic reproduction number \(R_0\) is close to 1: The steady-state vector \(v_\infty \) converges to a scaled version of the principal eigenvector \(x_1\) of the effective infection rate matrix W when \(R_0 \downarrow 1\).

Under Assumptions 1 and 3, the effective infection rate matrix W is non-negative and irreducible. Hence, the Perron–Frobenius Theorem (Van Mieghem 2014a) implies that the matrix W has a unique eigenvalue \(\lambda _1\) which equals the spectral radius \(\rho (W)\). As we show in the beginning of Appendix B, the eigenvalues of the effective infection rate matrix W are real and satisfy \(\lambda _1 = \rho (W) > \lambda _2\ge \cdots \ge \lambda _N\). In particular, under Assumptions 1 and 3, the largest eigenvalue \(\lambda _1\), the spectral radius \(\rho (W)\) and the basic reproduction number \(R_0\) are the same quantity, i.e., \(R_0 = \rho (W) = \lambda _1\).

In Van Mieghem (2012, Lemma 4) it was shown that, for homogeneous NIMFA (4), the steady-state vector \(v_\infty \) converges to a scaled version of the principal eigenvector of the adjacency matrix A when \(R_0 \downarrow 1\). We generalise the results of Van Mieghem (2012) to heterogeneous NIMFA (1):

Theorem 1

Under Assumptions 1 and 3, the steady-state vector \(v_\infty \) obeys

$$\begin{aligned} v_\infty = \gamma x_1 + \eta , \end{aligned}$$(6)

where the scalar \(\gamma \) equals

and the \(N\times 1\) vector \(\eta \) satisfies \(\Vert \eta \Vert _2 \le {\mathcal {O}}\left( \left( R_0-1\right) ^2\right) \) when the basic reproduction number \(R_0\) approaches 1 from above.

Proof

Appendix B. \(\square \)
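The alignment of the steady-state vector with the principal eigenvector can be observed numerically. The sketch below is an illustration under stated assumptions: it takes \(W = S^{-1}B\), obtains \(v_\infty \) from the fixed-point iteration \(v_i \leftarrow (Bv)_i / (\delta _i + (Bv)_i)\) (a rearrangement of the steady-state equation, not a method from the paper), and uses a hypothetical 4-node network. It measures only the direction of \(v_\infty \), not the scalar \(\gamma \).

```python
import numpy as np

def steady_state(B, delta, iterations=20_000):
    """Fixed-point iteration v_i <- (Bv)_i / (delta_i + (Bv)_i), started at v = u."""
    v = np.ones(len(delta))
    for _ in range(iterations):
        Bv = B @ v
        v = Bv / (delta + Bv)
    return v

def principal_eigenpair(B, delta):
    """Largest eigenvalue and positive unit eigenvector of S^{-1} B."""
    s = 1.0 / np.sqrt(delta)
    # S^{-1/2} B S^{-1/2} is symmetric and similar to S^{-1} B.
    lam, Y = np.linalg.eigh(s[:, None] * B * s[None, :])
    x1 = np.abs(s * Y[:, -1])         # S^{-1/2} y is an eigenvector of S^{-1} B
    return lam[-1], x1 / np.linalg.norm(x1)

def misalignment(B, delta0, R0):
    """1 - cos(angle between v_inf and x_1) after scaling delta0 to reach R0."""
    lam0, _ = principal_eigenpair(B, delta0)
    delta = delta0 * lam0 / R0        # rho(S^{-1} B) is now equal to R0
    _, x1 = principal_eigenpair(B, delta)
    v_inf = steady_state(B, delta)
    return 1.0 - float(v_inf @ x1) / float(np.linalg.norm(v_inf))

# Hypothetical heterogeneous network on 4 nodes.
B = np.array([[0.50, 0.45, 0.55, 0.00],
              [0.45, 0.50, 0.60, 0.40],
              [0.55, 0.60, 0.50, 0.50],
              [0.00, 0.40, 0.50, 0.50]])
delta0 = np.array([0.40, 0.50, 0.60, 0.45])

results = {R0: misalignment(B, delta0, R0) for R0 in (1.2, 1.05, 1.01)}
for R0, value in results.items():
    print(R0, value)
```

The printed misalignment shrinks as \(R_0\) approaches 1, in line with the statement that \(\eta \) is of higher order than \(\gamma x_1\).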

5 The viral state dynamics around the epidemic threshold

In Sect. 5.1, we give an intuitive motivation of our solution approach for the NIMFA equations (1) when \(R_0 \downarrow 1\). In Sect. 5.2, we state our main result.

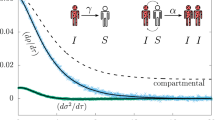

5.1 Motivation of the solution approach

For simplicity, this subsection is confined to the homogeneous NIMFA equations (4). In numerical simulations (Prasse and Van Mieghem 2018), we observed that the \(N \times N\) viral state matrix \(V=(v(t_1),\ldots , v(t_N))\), for arbitrary observation times \(t_1<\cdots < t_N\), is severely ill-conditioned. Thus, the viral state v(t) at any time \(t\ge 0\) approximately equals the linear combination of \(m \ll N\) orthogonal vectors \(y_1,\ldots , y_m\), and we can write \(v(t) \approx c_1(t) y_1 + \cdots + c_m(t) y_m\), see also Prasse and Van Mieghem (2020). Here, the functions \(c_1(t),\ldots , c_m(t)\) are scalar. We consider the most extreme case by representing the viral state v(t) by a scaled version of only \(m=1\) vector \(y_1\), which corresponds to \(v(t)\approx c(t) y_1\) for a scalar function c(t). The viral state v(t) converges to the steady-state vector \(v_\infty \) as \(t\rightarrow \infty \). Hence, a natural choice for the vector \(y_1\) is \(y_1 = v_\infty \), which implies that \(c(t)\rightarrow 1\) as \(t\rightarrow \infty \). If \(R_0 \approx 1\) and \(v(0)\approx 0\), then the approximation \(v(t) \approx c(t) v_\infty \) is accurate at all times \(t\ge 0\) due to two intuitive reasons.

1. If \(v(t)\approx 0\) when \(t \approx 0\), then NIMFA (4) is approximated by the linearisation around zero. Hence, it holds that

$$\begin{aligned} \frac{d v (t)}{d t } \approx \left( \beta A - \delta I \right) v(t) \end{aligned}$$(8)

when \(t\approx 0\). The state v(t) of the linear system (8) converges rapidly to a scaled version of the principal eigenvector \(x_1\) of the matrix \(\left( \beta A - \delta I \right) \). Furthermore, Theorem 1 states that \(v_\infty \approx \gamma x_1\) when \(R_0 \approx 1\). Thus, the viral state v(t) rapidly converges to a scaled version of the steady-state \(v_\infty \).

2. Suppose that the viral state v(t) approximately equals a scaled version of the steady-state vector \(v_\infty \). (In other words, the viral state v(t) is “almost parallel” to the vector \(v_\infty \).) Then, it holds that

$$\begin{aligned} v(t) \approx c(t) v_\infty \end{aligned}$$(9)

for some scalar c(t). We insert (9) into the NIMFA equations (4), which yields that

$$\begin{aligned} \frac{d c(t)}{d t } v_\infty&\approx c(t) \left( \beta A - \delta I \right) v_\infty - \beta c^2(t) {\text {diag}}(v_\infty ) A v_\infty . \end{aligned}$$(10)

For homogeneous NIMFA (4), the steady-state equation (3) becomes

$$\begin{aligned} \left( \beta A - \delta I \right) v_\infty = \beta {\text {diag}}\left( v_\infty \right) A v_\infty . \end{aligned}$$(11)

We substitute (11) in (10) and obtain that

$$\begin{aligned} \frac{d c(t)}{d t } v_\infty&\approx \left( c(t) - c^2(t) \right) \left( \beta A - \delta I \right) v_\infty . \end{aligned}$$(12)

Since \(v_\infty \approx \gamma x_1\) around the epidemic threshold, it holds that \(A v_\infty \approx \rho (A) v_\infty \). Hence, we obtain that

$$\begin{aligned} \frac{d c(t)}{d t } v_\infty&\approx \left( c(t) - c^2(t) \right) \left( \beta \rho (A) - \delta \right) v_\infty . \end{aligned}$$(13)

Left-multiplying (13) by \(v^T_\infty \) and dividing by \(v^T_\infty v_\infty \) yields that

$$\begin{aligned} \frac{d c(t)}{d t }&\approx \left( c(t) - c^2(t) \right) \left( \beta \rho (A) - \delta \right) . \end{aligned}$$(14)

The logistic differential equation (14) has been introduced by Verhulst (1838) as a population growth model and has a closed-form solution.

Due to the two intuitive steps above, NIMFA (4) reduces around the threshold \(R_0 \approx 1\) to the one-dimensional differential equation (14). Solving (14) for the function c(t) gives an approximation of the viral state v(t) by (9). The solution approach is applicable to other dynamics on networks, see for instance Devriendt and Lambiotte (2020).
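The reduction to the logistic equation (14) can be made concrete with its standard closed-form solution \(c(t) = c_0 / \left( c_0 + (1 - c_0) e^{-wt}\right) \), where \(w = \beta \rho (A) - \delta \) is used here as shorthand for the growth rate. The sketch below compares this expression with a forward Euler integration; the parameter values are hypothetical, and the formula is the textbook logistic solution rather than the paper's final expression.

```python
import math

def logistic_closed_form(t, c0, w):
    """Solution of dc/dt = w * (c - c^2) with c(0) = c0."""
    return c0 / (c0 + (1.0 - c0) * math.exp(-w * t))

def logistic_euler(t_end, c0, w, steps=100_000):
    """Forward Euler integration of the same equation, for comparison."""
    h = t_end / steps
    c = c0
    for _ in range(steps):
        c += h * w * (c - c * c)
    return c

def time_to_reach(c0, c1, w):
    """Time at which the logistic solution started at c0 reaches c1, for 0 < c0 <= c1 < 1."""
    return math.log(c1 * (1.0 - c0) / (c0 * (1.0 - c1))) / w

# Hypothetical values: beta * rho(A) = 1.1 and delta = 1.0, so that w = 0.1.
w, c0 = 0.1, 0.01
print(logistic_closed_form(50.0, c0, w), logistic_euler(50.0, c0, w))
print(time_to_reach(c0, 0.9, w))
```

Since the time to reach a given level scales with \(1/w\), the logistic model already indicates that convergence slows down as \(w\) approaches zero.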

However, the reasoning above is not rigorous for two reasons. First, the viral state vector v(t) is not exactly parallel to the steady state \(v_\infty \). To be more specific, instead of (9) it holds that

$$\begin{aligned} v(t) = c(t) v_\infty + \xi (t) \end{aligned}$$(15)

for some \(N\times 1\) error vector \(\xi (t)\) which is orthogonal to the steady-state vector \(v_\infty \). In Sect. 5.2, we use (15) as an ansatz for solving NIMFA (1).

Second, the steady-state vector \(v_\infty \) is not exactly parallel to the principal eigenvector \(x_1\). More precisely, we must consider the vector \(\eta \) in (6). Since \(\eta \ne 0\), the step from (12) to (13) is affected by an error.

5.2 The solution around the epidemic threshold

Based on the motivation in Sect. 5.1, we aim to solve the NIMFA differential equations (1) around the epidemic threshold criterion \(R_0 = 1\). The ansatz (15) forms the basis for our solution approach. From the orthogonality of the error vector \(\xi (t)\) and the steady-state vector \(v_\infty \), it follows that the function c(t) at time t equals

$$\begin{aligned} c(t) = \frac{v^T_\infty v(t)}{\Vert v_\infty \Vert ^2_2}. \end{aligned}$$(16)

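The error vector \(\xi (t)\) at time t follows from (15) and (16) as

$$\begin{aligned} \xi (t) = v(t) - \frac{v^T_\infty v(t)}{\Vert v_\infty \Vert ^2_2} v_\infty . \end{aligned}$$(17)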

Our solution approach is based on two steps. First, we show that (footnote 6) the error term \(\xi (t)\) satisfies \(\xi (t)= {\mathcal {O}}((R_0 - 1)^2)\) at every time t when \(R_0 \downarrow 1\). Hence, the error term \(\xi (t)\) converges to zero uniformly in time t. Second, we find the solution of the scalar function c(t) at the limit \(R_0 \downarrow 1\).
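The ansatz splits the viral state into a component along \(v_\infty \) and an orthogonal remainder. A minimal sketch of this orthogonal projection is given below, assuming \(c(t) = v^T_\infty v(t) / \Vert v_\infty \Vert ^2_2\) and \(\xi (t) = v(t) - c(t) v_\infty \); the function name and the numerical vectors are hypothetical.

```python
import numpy as np

def decompose(v, v_inf):
    """Split v into c * v_inf plus an error vector xi that is orthogonal to v_inf."""
    c = float(v_inf @ v) / float(v_inf @ v_inf)
    xi = v - c * v_inf
    return c, xi

# Hypothetical steady state and viral state at some time t.
v_inf = np.array([0.02, 0.05, 0.03])
v = np.array([0.010, 0.020, 0.018])

c, xi = decompose(v, v_inf)
print(c, xi, float(xi @ v_inf))
```

If the viral state is exactly parallel to \(v_\infty \), the error vector vanishes, and in general its norm cannot exceed the norm of the viral state because it is an orthogonal projection.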

Assumption 2 implies that (footnote 7) the viral state v(t) does not overshoot the steady-state \(v_\infty \):

Lemma 1

Under Assumptions 1 to 3, it holds that \(v_i(t) \le v_{\infty , i}\) for all nodes i at every time \(t \ge 0\). Furthermore, it holds that \(0 \le c(t) \le 1\) at every time \(t \ge 0\).

Proof

Appendix C. \(\square \)

Theorem 2 states that the error term \(\xi (t)\) converges to zero in the order of \((R_0 - 1)^2\) when \(R_0 \downarrow 1\).

Theorem 2

Under Assumptions 1 to 3, there exist constants \(\sigma _1, \sigma _2 >0\) such that the error term \(\xi (t)\) at any time \(t\ge 0\) is bounded by

when the basic reproduction number \(R_0\) approaches 1 from above.

Proof

Appendix D. \(\square \)

Under Assumption 2, the steady-state \(v_\infty \) is exponentially stable for NIMFA in discrete time (Prasse and Van Mieghem 2019). If the steady-state \(v_\infty \) is exponentially stable, then the error vector \(\xi (t)\) goes to zero exponentially fast, since \(\xi (t)\) is orthogonal to \(v_\infty \). Thus, the first addend on the right-hand side in (18) is to be expected, under the conjecture that the steady-state \(v_\infty \) is exponentially stable also for continuous-time NIMFA (1). Regarding this work, the most important implication of Theorem 2 is that \(\xi (t) = {\mathcal {O}}\left( (R_0-1)^2\right) \) uniformly in time t when \(R_0 \downarrow 1\), provided the initial value \(\xi (0)\) of the error vector is negligibly small.

We define the constant \(\varUpsilon (0)\), which depends on the initial viral state v(0), as

Furthermore, we define the viral slope w, which determines the speed of convergence to the steady-state \(v_\infty \), as

Then, building on Theorems 1 and 2, we obtain our main result:

Theorem 3

Suppose that Assumptions 1 to 3 hold and that, for some constant \(p>1\), \(\Vert \xi (0) \Vert _2 = {\mathcal {O}}\left( (R_0 - 1)^p\right) \) when \(R_0 \downarrow 1\). Furthermore, define

Then, there exists some constant \(\sigma >0\) such that

where \(s = {\mathrm{min}}\{p, 2\}\), when the basic reproduction number \(R_0\) approaches 1 from above.

Proof

Appendix E. \(\square \)

We emphasise that Theorem 3 holds for any connected graph corresponding to the infection rate matrix B. Theorem 3 is in agreement with the universality of the SIS prevalence (Van Mieghem 2016). The bound (21) states a convergence of the viral state v(t) to the approximation \(v_{\text {apx}}(t)\) which is uniform in time t. Furthermore, since both the viral state v(t) and the approximation \(v_{\text {apx}}(t)\) converge to the steady-state \(v_\infty \), it holds that \(\Vert v(t) - v_{\mathrm{apx}}(t)\Vert _2\rightarrow 0\) when \(t \rightarrow \infty \). At time \(t=0\), we obtain from Theorem 3 and (17) that

Since \(\Vert \xi (0) \Vert _2 = {\mathcal {O}}\left( (R_0 - 1)^p\right) \) and, by Theorem 1, \(\Vert v_\infty \Vert _2 = {\mathcal {O}}\left( R_0 - 1\right) \), we obtain that

Hence, for general \(t\ge 0\) the approximation error \(\Vert v(t) - v_{\mathrm{apx}}(t)\Vert _2 / \Vert v_\infty \Vert _2\) does not converge to zero faster than \({\mathcal {O}}\left( (R_0 - 1)^{p-1}\right) \), and the bound (21) is best possible (up to the constant \(\sigma \)) when \(p\le 2\). With (17), the term \(\Vert \xi (0) \Vert _2\) in Theorem 2 can be expressed explicitly with respect to the initial viral state v(0) and the steady-state \(v_\infty \). In particular, it holds that \(\Vert \xi (0) \Vert _2 \le \Vert v(0)\Vert _2\). Furthermore, if the initial viral state v(0) is parallel to the steady-state vector \(v_\infty \), then it holds that \(\xi (0)=0\). Thus, if the initial viral state v(0) is small or parallel to the steady-state vector \(v_\infty \), then it holds that \(\xi (0)=0\) and the bound (21) on the approximation error vector becomes

The time-dependent solution to NIMFA (1) at the epidemic threshold criterion \(R_0 = 1\) depends solely on the viral slope w, the steady-state vector \(v_\infty \) and the initial viral state v(0). The viral slope w converges to zero as \(R_0 \downarrow 1\). Thus, Theorem 3 implies that the convergence time to the steady-state \(v_\infty \) goes to infinity when \(R_0 \downarrow 1\), even though the steady-state \(v_\infty \) converges to zero. More precisely, it holds:

Corollary 1

Suppose that Assumptions 1 and 3 hold and that the initial viral state v(0) equals \(v(0) = r_0 v_\infty \) for some scalar \(r_0 \in (0, 1)\). Then, for any scalar \(r_1 \in [r_0 , 1)\), the largest time \(t_{01}\) at which the viral state satisfies \(v_i(t_{01}) \le r_1 v_{\infty , i}\) for every node i converges to

when the basic reproduction number \(R_0\) approaches 1 from above.

Proof

Appendix F. \(\square \)

We combine Theorem 1 and Theorem 3 to obtain Corollary 2.

Corollary 2

Suppose that Assumptions 1 to 3 hold and that, for some constant \(p>1\), \(\Vert \xi (0) \Vert _2 = {\mathcal {O}}\left( (R_0 - 1)^p\right) \) when \(R_0 \downarrow 1\). Furthermore, define

Then, there exists some constant \(\sigma >0\) such that

where \(s = {\mathrm{min}}\{p, 2\}\), when the basic reproduction number \(R_0\) approaches 1 from above.

In contrast to Theorem 3, the approximation error \(\Vert v(t) - {\tilde{v}}_{\mathrm{apx}}(t)\Vert _2\) in Corollary 2 does not converge to zero when \(t \rightarrow \infty \), since we replaced the steady-state \(v_\infty \) by the first-order approximation of Theorem 1. Corollary 2 implies that

at every time t when \(R_0 \downarrow 1\), provided that the initial viral state v(0) is small or parallel to the steady-state vector \(v_\infty \). From (24) it follows that, around the epidemic threshold criterion \(R_0 = 1\), the eigenvector centrality (Van Mieghem 2010) fully determines the “dynamical importance” of node i versus node j.

For homogeneous NIMFA (4), the infection rate matrix B and the curing rate matrix S reduce to \(B = \beta A\) and \(S = \delta I\), respectively. Hence, the effective infection rate matrix becomes \(W = \frac{\beta }{\delta } A\), and the principal eigenvector \(x_1\) of the effective infection rate matrix W equals the principal eigenvector of the adjacency matrix A. Furthermore, the limit process \(R_0 \downarrow 1\) reduces to \(\tau \downarrow \tau _c\), with the effective infection rate \(\tau = \frac{\beta }{\delta }\) and the epidemic threshold \(\tau _c = 1/\rho (A)\). For homogeneous NIMFA (4), Theorem 3 reduces to:

Corollary 3

Suppose that Assumptions 1 to 3 hold and consider the viral state v(t) of homogeneous NIMFA (4). Furthermore, suppose that \(\Vert \xi (0) \Vert _2 = {\mathcal {O}}\left( (\tau - \tau _c)^p\right) \) for some constant \(p>1\) when \(\tau \downarrow \tau _c\) and define

Then, there exists some constant \(\sigma >0\) such that

where \(s = {\mathrm{min}}\{p, 2\}\), when the effective infection rate \(\tau \) approaches the epidemic threshold \(\tau _c\) from above.

Proof

Appendix G. \(\square \)
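For the homogeneous case, the epidemic threshold \(\tau _c = 1/\rho (A)\) and the relation \(R_0 = \tau / \tau _c\) can be evaluated directly from the adjacency matrix. The sketch below uses a hypothetical ring graph and hypothetical rates.

```python
import numpy as np

def epidemic_threshold(A):
    """tau_c = 1 / rho(A) for a symmetric, non-negative adjacency matrix A."""
    # For such a matrix the largest eigenvalue equals the spectral radius.
    return 1.0 / float(np.linalg.eigvalsh(A)[-1])

# Hypothetical ring graph on 6 nodes: every node has degree 2, so rho(A) = 2.
N = 6
A = np.zeros((N, N))
for i in range(N):
    A[i, (i + 1) % N] = A[(i + 1) % N, i] = 1.0

tau_c = epidemic_threshold(A)
beta, delta = 0.55, 1.0
tau = beta / delta                    # effective infection rate
R0 = tau / tau_c                      # equals rho((beta / delta) * A)
print(tau_c, R0)
```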

From Corollary 3, we can obtain the analogue to Corollary 2 for NIMFA (4) with homogeneous spreading parameters \(\beta , \delta \). Furthermore, the approximation \(v_{\mathrm{apx}}(t)\) defined by (25) equals the exact solution (Van Mieghem 2014b) of homogeneous NIMFA (4) on a regular graph, provided that the initial state \(v_i(0)\) is the same for every node i. In particular, the net dose \(\varrho (t)\), a crucial quantity in Van Mieghem (2014b); Kendall (1948), is related to the viral slope w via \(\varrho (t)=wt\).

Theorem 3 and Corollary 3 suggest that, around the epidemic threshold criterion \(R_0 =1\), the dynamics of heterogeneous NIMFA (1) closely resembles the dynamics of homogeneous NIMFA (4). In particular, we pose the question: Can heterogeneous NIMFA (1) be reduced to homogeneous NIMFA (4) around the epidemic threshold criterion \(R_0 = 1\) by choosing the homogeneous spreading parameters \(\beta , \delta \) and the adjacency matrix A accordingly?

Theorem 4

Consider heterogeneous NIMFA (1) with given spreading parameters \(\beta _{ij}, \delta _i\). Suppose that Assumptions 1 to 3 hold and that, for some constant \(p>1\), \(\Vert \xi (0) \Vert _2 = {\mathcal {O}}\left( (R_0 - 1)^p\right) \) when the basic reproduction number \(R_0\) approaches 1 from above. Define the homogeneous NIMFA system

where the homogeneous curing rate \(\delta _{{\mathrm{hom}}}\) equals

the homogeneous infection rate \(\beta _{{\mathrm{hom}}}\) equals

with the variable \(\gamma \) defined by (7), and the self-infection rates \(\beta _{ii, {{\mathrm{hom}}}}\) equal

Then, if \(v_{{\mathrm{hom}}}(0)=v(0)\), there exists some constant \(\sigma >0\) such that

where \(s = {\mathrm{min}}\{p, 2\}\), when the basic reproduction number \(R_0\) approaches 1 from above.

Proof

Appendix H. \(\square \)

In other words, when \(R_0 \downarrow 1\), for any contact network and any spreading parameters \(\delta _i, \beta _{ij}\), heterogeneous NIMFA (1) can be reduced to homogeneous NIMFA (4) on a complete graph plus self-infection rates \(\beta _{ii, {{\mathrm{hom}}}}\). We emphasise that the sole influence of the topology on the viral spread is given by the self-infection rates \(\beta _{ii, {{\mathrm{hom}}}}\). Thus, under Assumptions 1 to 3, the network topology has a surprisingly small impact on the viral spread around the epidemic threshold.

6 Numerical evaluation

We are interested in evaluating the accuracy of the closed-form expression \(v_{\text {apx}}(t)\), given by (20), when the basic reproduction number \(R_0\) is close, but not equal, to one. We generate an adjacency matrix A according to different random graph models. If \(a_{ij}= 1\), then we set the infection rates \(\beta _{ij}\) to a uniformly distributed random number in [0.4, 0.6] and, if \(a_{ij}= 0\), then we set \(\beta _{ij}=0\). We set the initial curing rates \(\delta ^{(0)}_l\) to a uniformly distributed random number in [0.4, 0.6]. To set the basic reproduction number \(R_0\), we set the curing rates \(\delta _l\) to a multiple of the initial curing rates \(\delta ^{(0)}_l\), i.e. \(\delta _l = \sigma \delta ^{(0)}_l\) for every node l and some scalar \(\sigma \) such that \(\rho (W) = R_0\). Thus, we realise the limit process \(R_0 \downarrow 1\) by changing the scalar \(\sigma \). Only in Sect. 6.2, we consider homogeneous spreading parameters by setting \(\beta _{ij}=0.5\) and \(\delta ^{(0)}_i=0.5\) for all nodes i, j. Numerically, we obtain the “exact” NIMFA viral state sequence v(t) by Euler’s method for discretisation, i.e.,

$$\begin{aligned} v[k+1] = v[k] + T \left( - S v[k] + {\text {diag}}\left( u - v[k]\right) B v[k] \right) \end{aligned}$$(29)

for a small sampling time T and a discrete time slot \(k\in {\mathbb {N}}\). In Prasse and Van Mieghem (2019), we derived an upper bound \(T_{\text {max}}\) on the sampling time T which ensures that the discretisation (29) of NIMFA (1) converges to the steady-state \(v_\infty \). We set the sampling time T to \(T = T_{\text {max}}/100\). Except for Sect. 6.3, we set the initial viral state to \(v(0)= 0.01 v_\infty \). We define the convergence time \(t_{\text {conv}}\) as the smallest time t at which

holds for every node i. Thus, at the convergence time \(t_{\text {conv}}\) the viral state \(v(t_{\text {conv}})\) has practically converged to the steady-state \(v_\infty \). We evaluate Theorem 3 with respect to the approximation error \(\epsilon _V\), which we define as

All results are averaged over 100 randomly generated networks.
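The simulation set-up described above can be sketched in a few lines. The block below is a minimal illustration, not the authors' code: it assumes that NIMFA (1) has the standard form \(\frac{d v_i}{dt} = -\delta _i v_i + (1 - v_i)\sum _j \beta _{ij} v_j\), the function names are hypothetical, and the sampling time T is chosen by hand instead of from the bound \(T_{\text {max}}\), which is not reproduced here. The scalar \(\sigma \) follows from \(\rho (W) = \rho (W^{(0)})/\sigma \), where \(W^{(0)}\) is the effective infection rate matrix for the initial curing rates.

```python
def spectral_radius(W, iters=100_000, tol=1e-14):
    """Spectral radius of a non-negative irreducible matrix W (list of rows).

    Runs power iteration on W + I, whose spectral radius is rho(W) + 1;
    the shift avoids oscillation when W is periodic (e.g. bipartite graphs).
    """
    n = len(W)
    x = [1.0] * n
    rho = 0.0
    for _ in range(iters):
        y = [x[i] + sum(W[i][j] * x[j] for j in range(n)) for i in range(n)]
        new_rho = max(y)  # x has max-norm 1, so max(y) estimates rho(W + I)
        x = [a / new_rho for a in y]
        if abs(new_rho - rho) < tol:
            break
        rho = new_rho
    return new_rho - 1.0


def scale_curing_rates(B, delta0, R0):
    """Return delta = sigma * delta0 such that rho(S^{-1} B) equals R0."""
    n = len(B)
    W0 = [[B[i][j] / delta0[i] for j in range(n)] for i in range(n)]
    sigma = spectral_radius(W0) / R0
    return [sigma * d for d in delta0]


def euler_step(v, B, delta, T):
    """One forward-Euler step of the assumed NIMFA form with sampling time T."""
    n = len(v)
    return [
        v[i] + T * (-delta[i] * v[i]
                    + (1.0 - v[i]) * sum(B[i][j] * v[j] for j in range(n)))
        for i in range(n)
    ]


def simulate(v0, B, delta, T, steps):
    """Iterate the Euler recursion for a fixed number of time slots."""
    v = list(v0)
    for _ in range(steps):
        v = euler_step(v, B, delta, T)
    return v


if __name__ == "__main__":
    # Triangle graph with homogeneous rates, as in Sect. 6.2.
    B = [[0.0, 0.5, 0.5], [0.5, 0.0, 0.5], [0.5, 0.5, 0.0]]
    delta = scale_curing_rates(B, [0.5, 0.5, 0.5], R0=1.1)
    print(simulate([0.001] * 3, B, delta, T=0.1, steps=5000))
```

Scaling all curing rates by one scalar keeps the heterogeneity pattern of the rates fixed while moving \(R_0\), which is what allows the limit \(R_0 \downarrow 1\) to be traced with a single parameter.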

6.1 Approximation accuracy around the epidemic threshold

We generate a Barabási–Albert random graph (Barabási and Albert 1999) with \(N=500\) nodes and the parameters \(m_0 = 5\), \(m= 2\). Figure 1 gives an impression of the accuracy of the approximation of Theorem 3 around the epidemic threshold criterion \(R_0 = 1\). For a basic reproduction number \(R_0 \le 1.1\), the difference of the closed-form expression of Theorem 3 to the exact NIMFA viral state trace is negligible.

For a Barabási–Albert random graph with \(N=500\) nodes, the approximation accuracy of Theorem 3 is depicted. Each of the sub-plots shows the viral state traces \(v_i(t)\) of seven different nodes i, including the node i with the greatest steady-state \(v_{\infty , i}\)

We aim for a better understanding of the accuracy of the closed-form expression of Theorem 3 when the basic reproduction number \(R_0\) converges to one. We generate Barabási–Albert and Erdős–Rényi connected random graphs with \(N=100,\ldots , 1000\) nodes. The link probability of the Erdős–Rényi graphs (Erdős and Rényi 1960) is set to \(p_{\text {ER}}=0.05\). Figure 2 illustrates the convergence of the approximation of Theorem 3 to the exact solution of NIMFA (1). Around the threshold criterion \(R_0=1\), the approximation error \(\epsilon _V\) converges linearly to zero with respect to the basic reproduction number \(R_0\), which is in agreement with Theorem 3. The greater the network size N, the greater is the approximation error \(\epsilon _V\) for Barabási–Albert networks. The greater the network size N, the lower is the approximation error \(\epsilon _V\) for Erdős–Rényi graphs.

6.2 Impact of degree heterogeneity on the approximation accuracy

For NIMFA (4) with homogeneous spreading parameters \(\beta , \delta \), the approximation \(v_{\text {apx}}(t)\) defined by (20) is exact if the contact network is a regular graph. We are interested in how the approximation accuracy changes with respect to the heterogeneity of the node degrees. We generate Watts–Strogatz (Watts and Strogatz 1998) random graphs with \(N=100\) nodes and an average node degree of 4. We vary the link rewiring probability \(p_{\text {WS}}\) from \(p_{\text {WS}}=0\), which corresponds to a regular graph, to \(p_{\text {WS}}= 1\), which corresponds to a “completely random” graph. Figure 3 depicts the approximation error \(\epsilon _V\) versus the rewiring probability \(p_{\text {WS}}\) for homogeneous spreading parameters \(\beta , \delta \). Interestingly, the approximation error reaches a maximum and then decreases as the adjacency matrix A becomes more random.
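To make the regular-graph case concrete, the following worked equation sketches why homogeneous NIMFA collapses to a scalar logistic equation there. It rests on assumptions that are not spelled out in this section: NIMFA (4) is taken in the standard form \(\frac{d v_i}{dt} = -\delta v_i + \beta (1 - v_i)\sum _j a_{ij} v_j\), every node has the same degree d (a symbol introduced here for illustration), and all nodes start from the same initial value, so that \(v_i(t) = y(t)\) for every node i.

```latex
% Assumptions: d is the common node degree, y(t) = v_i(t) for every node i.
\frac{d y(t)}{dt}
  = -\delta\, y(t) + \beta d \bigl(1 - y(t)\bigr) y(t)
  = (\beta d - \delta)\, y(t) - \beta d\, y^2(t),
\qquad
y(t) = \frac{y_\infty}{1 + \left( \frac{y_\infty}{y(0)} - 1 \right) e^{-(\beta d - \delta) t}},
\qquad
y_\infty = 1 - \frac{\delta}{\beta d}.
```

With \(R_0 = \beta d/\delta \) in this special case, the steady state reads \(y_\infty = 1 - 1/R_0\) and the growth rate equals \(\delta (R_0 - 1)\), so both vanish as \(R_0 \downarrow 1\).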

6.3 Impact of general initial viral states on the approximation accuracy

Theorem 3 requires that the initial error \(\xi (0)\) converges to zero, which means that the initial viral state v(0) must be parallel to the steady-state \(v_\infty \) or, since \(\Vert \xi (0)\Vert _2 \le \Vert v(0)\Vert _2\), converge to zero. To investigate whether the approximation of Theorem 3 is accurate also when the initial error \(\xi (0)\) does not converge to zero, we set the initial viral state \(v_i(0)\) of every node i to a uniformly distributed random number in \((0, r_0 v_{\infty , i}]\) for some scalar \(r_0 \in (0, 1]\). By increasing the scalar \(r_0\), the initial viral state v(0) becomes “more random”. Figure 4 shows that the approximation error \(\epsilon _V\) is almost unaffected by an initial viral state v(0) that is neither parallel to the steady-state \(v_\infty \) nor small. Figure 5 shows that the viral state v(t) converges rapidly to the approximation \(v_{\text {apx}}(t)\) as time t increases.

For general initial viral states v(0) with \(\xi (0)\ne 0\), it holds that \(v_{\text {apx}}(0) \ne v(0)\) since the approximation \(v_{\text {apx}}(0)\) is parallel to the steady-state vector \(v_\infty \). Hence, the approximation \(v_{\text {apx}}(t)\) does not converge point-wise to the viral state v(t) when \(R_0 \downarrow 1\). However, based on the results shown in Figs. 4 and 5, we conjecture convergence with respect to the \(L_2\)-norm for general initial viral states v(0) when \(R_0 \downarrow 1\).

Conjecture 1

Suppose that Assumptions 1 to 3 hold. Then, it holds for the approximation \(v_{\mathrm{apx}}(t)\) defined by (20) that

when the basic reproduction number \(R_0\) approaches 1 from above.

For a Barabási–Albert random graph with \(N=500\) nodes, a basic reproduction number \(R_0=1.01\) and a randomly generated initial viral state v(0), the approximation accuracy of Theorem 3 is depicted. The viral state traces \(v_i(t)\) of seven different nodes i are depicted

6.4 Directed infection rate matrix

The proof of Theorem 3 relies on a symmetric infection rate matrix B as stated by Assumption 3. We perform the same numerical evaluation as shown in Fig. 2 in Sect. 6.1 with the only difference that we generate strongly connected directed Erdős–Rényi random graphs. Figure 6 demonstrates the accuracy of the approximation \(v_{\text {apx}}(t)\) for a directed infection rate matrix B, which leads us to:

Conjecture 2

Suppose that Assumptions 1 and 2 hold and that the infection rate matrix B is irreducible but, in contrast to Assumption 3, not necessarily symmetric. Then, the viral state v(t) is “accurately described” by the approximation \(v_{\mathrm{apx}}(t)\) when the basic reproduction number \(R_0\) approaches 1 from above.

6.5 Accuracy of the approximation of the convergence time

Corollary 1 gives the expression of the convergence time \(t_{01}\) from the initial viral state \(v(0) = r_0 v_\infty \) to the viral state \(v(t_{01}) \le r_1 v_\infty \) for any scalars \(0< r_0 \le r_1 <1\) around the epidemic threshold criterion \(R_0 = 1\). We set the scalars to \(r_0 = 0.01\) and \(r_1= 0.9\) and define the approximation error

where \(t_{01}\) denotes the exact convergence time and \({\hat{t}}_{01}\) denotes the approximate expression of Corollary 1. We generate Barabási–Albert and Erdős–Rényi random graphs with \(N=100,\ldots , 1000\) nodes. Figure 7 shows that Corollary 1 gives an accurate approximation of the convergence time \(t_{01}\) when the basic reproduction number \(R_0\) is reasonably close to one.
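How the convergence time scales with \(R_0\) can be illustrated in the one case where NIMFA is solvable by elementary means. The sketch below is not the expression of Corollary 1: it assumes a regular graph with homogeneous rates and identical initial states, for which the viral state follows a scalar logistic equation with growth rate \(\delta (R_0 - 1)\). The function name and the example curing rate 0.5 are hypothetical choices for illustration.

```python
import math


def logistic_convergence_time(r0, r1, delta, R0):
    """Time for y(t) to grow from r0 * y_inf to r1 * y_inf.

    Assumes dy/dt = w * y * (1 - y / y_inf) with w = delta * (R0 - 1),
    i.e. the regular-graph special case, not the general Corollary 1.
    """
    if not (0.0 < r0 <= r1 < 1.0):
        raise ValueError("need 0 < r0 <= r1 < 1")
    if R0 <= 1.0:
        raise ValueError("need R0 > 1")
    w = delta * (R0 - 1.0)
    # Invert y(t) / y_inf = 1 / (1 + (1/r0 - 1) * exp(-w t)) at the level r1.
    return math.log(r1 * (1.0 - r0) / (r0 * (1.0 - r1))) / w


# The time grows like 1 / (R0 - 1) as R0 approaches one from above.
for R0 in (1.5, 1.1, 1.01, 1.001):
    print(R0, logistic_convergence_time(0.01, 0.9, 0.5, R0))
```

In this special case the dependence on the scalars \(r_0, r_1\) is only logarithmic, whereas the dependence on \(R_0 - 1\) is inversely proportional.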

6.6 Reduction to a complete graph with homogeneous spreading parameters

Theorem 4 states that, around the epidemic threshold, heterogeneous NIMFA (1) on any graph can be reduced to homogeneous NIMFA (4) on a complete graph. Figures 8 and 9 show the approximation accuracy of Theorem 4 for Erdős–Rényi and Barabási–Albert random graphs, respectively. To accurately approximate heterogeneous NIMFA on Barabási–Albert graphs by homogeneous NIMFA on a complete graph, the basic reproduction number \(R_0\) must be closer to 1 than for Erdős–Rényi graphs.

The approximation accuracy of Theorem 4 on an Erdős–Rényi random graph with \(N=100\) nodes. Each of the sub-plots shows the viral state traces \(v_i(t)\) of seven different nodes i, including the node i with the greatest steady-state \(v_{\infty , i}\)

The approximation accuracy of Theorem 4 on a Barabási–Albert random graph with \(N=100\) nodes. Each of the sub-plots shows the viral state traces \(v_i(t)\) of seven different nodes i, including the node i with the greatest steady-state \(v_{\infty , i}\)

7 Conclusion

We solved the NIMFA governing equations (1) with heterogeneous spreading parameters around the epidemic threshold when the initial viral state v(0) is small or parallel to the steady-state \(v_\infty \), provided that the infection rates are symmetric (\(\beta _{ij}=\beta _{ji}\)). Numerical simulations demonstrate the accuracy of the solution when the basic reproduction number \(R_0\) is close, but not equal, to one. Furthermore, the solution serves as an accurate approximation also when the initial viral state v(0) is neither small nor parallel to the steady-state \(v_\infty \). We observe four important implications of the solution of NIMFA around the epidemic threshold.

First, the viral state v(t) is almost parallel to the steady-state \(v_\infty \) for every time \(t\ge 0\). On the one hand, since the viral dynamics approximately remain in a one-dimensional subspace of \({\mathbb {R}}^N\), an accurate network reconstruction is numerically not viable around the epidemic threshold (Prasse and Van Mieghem 2018). Furthermore, when the basic reproduction number \(R_0\) is large, then the viral state v(t) rapidly converges to the steady-state \(v_\infty \), which, again, prevents an accurate network reconstruction. On the other hand, only the principal eigenvector \(x_1\) of the effective infection rate matrix W and the viral slope w are required to predict the viral state dynamics around the epidemic threshold. Thus, around the epidemic threshold, the prediction of an epidemic does not require an accurate network reconstruction.

Second, the eigenvector centrality (with respect to the principal eigenvector \(x_1\) of the effective infection rate matrix W) gives a complete description of the dynamical importance of a node i around the epidemic threshold. In particular, the ratio \(v_i(t)/v_j(t)\) of the viral states of two nodes i, j does not change over time t.

Third, around the epidemic threshold, we gave an expression of the convergence time \(t_{01}\) to approach the steady-state \(v_\infty \). The viral state v(t) converges to the steady-state \(v_\infty \) exponentially fast. However, as the basic reproduction number \(R_0\) approaches one, the convergence time \(t_{01}\) goes to infinity.

Fourth, around the epidemic threshold, NIMFA with heterogeneous spreading parameters on any graph can be reduced to NIMFA with homogeneous spreading parameters on the complete graph plus self-infection rates.

Potential generalisations of the solution of NIMFA to non-symmetric infection rate matrices B or time-dependent spreading parameters \(\beta _{ij}(t), \delta _l(t)\) stand on the agenda of future research.

Notes

In this work, we use the words node and group interchangeably.

The initial state vector v(0) is parallel to the steady-state vector \(v_\infty \) if \(v(0) = \alpha v_\infty \) for some scalar \(\alpha \in {\mathbb {R}}\).

More precisely, Paré et al. (2017) assume that the adjacency matrix A(t) is time-varying but not necessarily symmetric nor binary-valued, which is equivalent to time-varying infection rates \(\beta _{ij}(t)\).

The steady-state \(v_{\infty , i}\) is the same for every node i in a regular graph for homogeneous spreading parameters \(\beta , \delta \). Hence, the initial state \(v_i(0)\) is the same for every node i if and only if the initial state v(0) is parallel to the steady-state vector \(v_\infty \).

By convergence of the sequence of tuples \(\left( B^{(n)}, S^{(n)}\right) \) to the limit \((B^*, S^*)\), we mean that, for all \(\epsilon >0\), there exists an \(n_0(\epsilon )\in {\mathbb {N}}\) such that both \(\Vert B^{(n)} - B^* \Vert _2 < \epsilon \) and \(\Vert S^{(n)} - S^* \Vert _2 < \epsilon \) hold for all \(n\ge n_0(\epsilon )\).

Theorem 1 implies that the steady-state \(v_\infty \) satisfies \(\Vert v_\infty \Vert _2 = {\mathcal {O}}\left( R_0 - 1\right) \) when \(R_0 \downarrow 1\). Thus, also \(\Vert c(t) v_\infty \Vert _2= {\mathcal {O}}\left( R_0 - 1\right) \) at every time t. Thus, a linear convergence of the error term \(\xi (t)\) to zero, i.e., \(\Vert \xi (t)\Vert _2= {\mathcal {O}}\left( R_0 - 1\right) \), would not be sufficient to show that the viral state v(t) converges to \(c(t)v_\infty \) when \(R_0 \downarrow 1\).

The numerical radius is not a matrix norm, since the numerical radius is not sub-multiplicative.

References

Abramowitz M, Stegun IA (1965) Handbook of mathematical functions: with formulas, graphs, and mathematical tables, vol 55. Courier Corporation, North Chelmsford

Ahn HJ, Hassibi B (2013) Global dynamics of epidemic spread over complex networks. In: Proceedings of 52nd IEEE conference on decision and control, CDC, IEEE, pp 4579–4585

Anderson RM, May RM (1992) Infectious diseases of humans: dynamics and control. Oxford University Press, Oxford

Arfken GB, Weber HJ (1999) Mathematical methods for physicists. Am J Phys 67:165

Bailey NTJ (1975) The mathematical theory of infectious diseases and its applications, 2nd edn. Charles Griffin & Company, London

Barabási AL, Albert R (1999) Emergence of scaling in random networks. Science 286(5439):509–512

Devriendt K, Lambiotte R (2020) Non-linear network dynamics with consensus-dissensus bifurcation. arXiv preprint arXiv:2002.08408

Devriendt K, Van Mieghem P (2017) Unified mean-field framework for susceptible-infected-susceptible epidemics on networks, based on graph partitioning and the isoperimetric inequality. Phys Rev E 96(5):052314

Diekmann O, Heesterbeek JAP, Metz JA (1990) On the definition and the computation of the basic reproduction ratio \(R_0\) in models for infectious diseases in heterogeneous populations. J Math Biol 28(4):365–382

Van den Driessche P, Watmough J (2002) Reproduction numbers and sub-threshold endemic equilibria for compartmental models of disease transmission. Math Biosci 180(1–2):29–48

Erdős P, Rényi A (1960) On the evolution of random graphs. Publ Math Inst Hung Acad Sci 5(1):17–60

Fall A, Iggidr A, Sallet G, Tewa JJ (2007) Epidemiological models and Lyapunov functions. Math Model Nat Phenom 2(1):62–83

He Z, Van Mieghem P (2020) Prevalence expansion in NIMFA. Physica A 540:123220

Heesterbeek JAP (2002) A brief history of \(R_0\) and a recipe for its calculation. Acta Biotheor 50(3):189–204

Hethcote HW (2000) The mathematics of infectious diseases. SIAM Rev 42(4):599–653

Horn RA, Johnson CR (1990) Matrix analysis. Cambridge University Press, Cambridge

Issos JN (1966) The field of values of non-negative irreducible matrices. PhD thesis, Auburn University

Kendall DG (1948) On the generalized birth-and-death process. Ann Math Stat 19(1):1–15

Khanafer A, Başar T, Gharesifard B (2016) Stability of epidemic models over directed graphs: a positive systems approach. Automatica 74:126–134

Kitzbichler MG, Smith ML, Christensen SR, Bullmore E (2009) Broadband criticality of human brain network synchronization. PLoS Comput Biol 5(3):e1000314

Lajmanovich A, Yorke JA (1976) A deterministic model for gonorrhea in a nonhomogeneous population. Math Biosci 28(3–4):221–236

Li CK, Tam BS, Wu PY (2002) The numerical range of a nonnegative matrix. Linear Algebra Appl 350(1–3):1–23

Liu QH, Ajelli M, Aleta A, Merler S, Moreno Y, Vespignani A (2018) Measurability of the epidemic reproduction number in data-driven contact networks. Proc Natl Acad Sci 115(50):12680–12685

Liu F, Cui S, Li X, Buss M (2020) On the stability of the endemic equilibrium of a discrete-time networked epidemic model. arXiv preprint arXiv:2001.07451

Maroulas J, Psarrakos P, Tsatsomeros M (2002) Perron-Frobenius type results on the numerical range. Linear Algebra Appl 348(1–3):49–62

Nowzari C, Preciado VM, Pappas GJ (2016) Analysis and control of epidemics: a survey of spreading processes on complex networks. IEEE Control Syst Mag 36(1):26–46

Nykter M, Price ND, Aldana M, Ramsey SA, Kauffman SA, Hood LE, Yli-Harja O, Shmulevich I (2008) Gene expression dynamics in the macrophage exhibit criticality. Proc Natl Acad Sci 105(6):1897–1900

Paré PE, Beck CL, Nedić A (2017) Epidemic processes over time-varying networks. IEEE Trans Control Netw Syst 5(3):1322–1334

Paré PE, Liu J, Beck CL, Kirwan BE, Başar T (2018) Analysis, estimation, and validation of discrete-time epidemic processes. IEEE Trans Control Syst Technol 28(1):79–93

Pastor-Satorras R, Castellano C, Van Mieghem P, Vespignani A (2015) Epidemic processes in complex networks. Rev Mod Phys 87(3):925

Prasse B, Van Mieghem P (2018) Network reconstruction and prediction of epidemic outbreaks for NIMFA processes. arXiv preprint arXiv:1811.06741

Prasse B, Van Mieghem P (2019) The viral state dynamics of the discrete-time NIMFA epidemic model. IEEE Trans Netw Sci Eng 7(3):1667–1674

Prasse B, Van Mieghem P (2020) Predicting dynamics on networks hardly depends on the topology. arXiv preprint arXiv:2005.14575

Rami MA, Bokharaie VS, Mason O, Wirth FR (2013) Stability criteria for SIS epidemiological models under switching policies. arXiv preprint arXiv:1306.0135

Van Mieghem P (2010) Graph spectra for complex networks. Cambridge University Press, Cambridge

Van Mieghem P (2011) The N-intertwined SIS epidemic network model. Computing 93(2–4):147–169

Van Mieghem P (2012) The viral conductance of a network. Comput Commun 35(12):1494–1506

Van Mieghem P (2014a) Performance analysis of complex networks and systems. Cambridge University Press, Cambridge

Van Mieghem P (2014b) SIS epidemics with time-dependent rates describing ageing of information spread and mutation of pathogens. Delft Univ Technol 1(15):1–11

Van Mieghem P (2016) Universality of the SIS prevalence in networks. arXiv preprint arXiv:1612.01386

Van Mieghem P, van de Bovenkamp R (2015) Accuracy criterion for the mean-field approximation in susceptible-infected-susceptible epidemics on networks. Phys Rev E 91(3):032812

Van Mieghem P, Omic J (2014) In-homogeneous virus spread in networks. arXiv preprint arXiv:1306.2588

Van Mieghem P, Omic J, Kooij R (2009) Virus spread in networks. IEEE/ACM Trans Netw 17(1):1–14

Verhulst PF (1838) Notice sur la loi que la population suit dans son accroissement. Corresp Math Phys 10:113–126

Wan Y, Roy S, Saberi A (2008) Designing spatially heterogeneous strategies for control of virus spread. IET Syst Biol 2(4):184–201

Watts DJ, Strogatz SH (1998) Collective dynamics of “small-world” networks. Nature 393(6684):440

Acknowledgements

We are grateful to Karel Devriendt for his help in proving Theorem 4.

Appendices

Nomenclature

The eigenvalues of the effective infection rate matrix W are denoted, in decreasing order of modulus, by \(| \lambda _1 | \ge \cdots \ge |\lambda _N|\). The principal eigenvector of unit length of the matrix W is denoted by \(x_1\) and satisfies \(W x_1 = \lambda _1 x_1\). The greatest and smallest curing rates in \(\{\delta _1,\ldots , \delta _N\}\) are denoted by \(\delta _{\text {max}}\) and \(\delta _{\text {min}}\), respectively. The numerical radius r(M) for an \(N \times N\) matrix M is defined as (Horn and Johnson 1990)

\(r(M) = \max _{z \in {\mathbb {C}}^N, \, z^H z = 1} \left| z^H M z \right| ,\)

where \(z^H\) is the conjugate transpose of a complex \(N\times 1\) vector z. For a square matrix M, we denote the 2-norm by \(\Vert M \Vert _2\), which equals the largest singular value of M. In particular, it holds that the 2-norm of the curing rate matrix S equals \(\Vert S \Vert _2 = \delta _{\text {max}}\). Table 1 summarises the nomenclature.
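The numerical radius can be computed cheaply for the non-negative matrices that appear in this work. The sketch below is an illustration under stated assumptions: it uses the property that, for a real non-negative matrix M, the numerical radius equals the largest eigenvalue of the symmetric part \((M + M^T)/2\), and it evaluates that eigenvalue by a shifted power iteration. The function names are hypothetical. The example also checks the remark from the Notes that the numerical radius is not sub-multiplicative.

```python
import math


def numerical_radius_nonneg(M, iters=100_000, tol=1e-14):
    """Numerical radius of a real non-negative square matrix M (list of rows).

    Assumes r(M) = lambda_max((M + M^T) / 2), valid for non-negative M.
    Power iteration runs on H + I so that the top eigenvalue dominates.
    """
    n = len(M)
    H = [[0.5 * (M[i][j] + M[j][i]) for j in range(n)] for i in range(n)]
    x = [1.0] * n
    lam = 0.0
    for _ in range(iters):
        y = [x[i] + sum(H[i][j] * x[j] for j in range(n)) for i in range(n)]
        # Rayleigh quotient of H + I at the current iterate x.
        new_lam = sum(a * b for a, b in zip(x, y)) / sum(a * a for a in x)
        norm = math.sqrt(sum(a * a for a in y))
        x = [a / norm for a in y]
        if abs(new_lam - lam) < tol:
            break
        lam = new_lam
    return new_lam - 1.0


def matmul(A, B):
    """Plain matrix product of two square matrices given as lists of rows."""
    n = len(A)
    return [[sum(A[i][k] * B[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


# Counterexample to sub-multiplicativity: r(A A^T) exceeds r(A) * r(A^T).
A = [[0.0, 1.0], [0.0, 0.0]]
A_T = [[0.0, 0.0], [1.0, 0.0]]
print(numerical_radius_nonneg(A), numerical_radius_nonneg(matmul(A, A_T)))
```

For a symmetric non-negative matrix the symmetric part is the matrix itself, so the numerical radius then coincides with the spectral radius.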

Proof of Theorem 1

The steady-state \(v_\infty \) solely depends on the effective infection rate matrix W: By left-multiplication of (3) with the diagonal matrix \(S^{-1}\), we obtain that

In general, the effective infection rate matrix W, defined in (5) as \(W=S^{-1}B\), is asymmetric, which prevents a straightforward adaptation of the proof in Van Mieghem (2012, Lemma 4). However, the matrix W is similar to the matrix

\({\tilde{W}} = S^{-\frac{1}{2}} B S^{-\frac{1}{2}} = S^{\frac{1}{2}} W S^{-\frac{1}{2}}.\) (32)

Since the infection rate matrix B is symmetric under Assumption 3, the matrix \({\tilde{W}}\) is symmetric. Hence, the matrix \({\tilde{W}}\), and also the effective infection rate matrix W, are diagonalisable. With (32), we write the steady-state (31) with respect to the symmetric matrix \({\tilde{W}}\) as

We decompose the matrix \({\tilde{W}}\) as

\({\tilde{W}} = \sum _{k=1}^N \lambda _k {\tilde{x}}_k {\tilde{x}}^T_k,\) (34)

where the eigenvalues of \({\tilde{W}}\) are real and equal to \(\lambda _1 > \lambda _2 \ge \cdots \ge \lambda _N\) with the corresponding normalised eigenvectors denoted by \({\tilde{x}}_1,\ldots , {\tilde{x}}_N\). Then, the steady-state vector \(v_\infty \) can be expressed as a linear combination

where the coefficients equal \(\psi _l = v^T_\infty {\tilde{x}}_l\). To prove Theorem 1, we would like to express the coefficients \(\psi _1,\ldots , \psi _N\) as a power series around \(R_0 = 1\). However, in the limit process \(\left( B, S\right) \rightarrow \left( B^*, S^*\right) \), the eigenvectors \({\tilde{x}}_1,\ldots , {\tilde{x}}_N\) of the matrix \({\tilde{W}}\) are not necessarily constant. Hence, the coefficients \(\psi _l\) depend on the full matrix \({\tilde{W}}\) and not only on the basic reproduction number \(R_0\). To overcome the challenge of non-constant eigenvectors \({\tilde{x}}_1,\ldots , {\tilde{x}}_N\) in the limit process \(\left( B, S\right) \rightarrow \left( B^*, S^*\right) \), we define the symmetric auxiliary matrix

\(M(z) = z {\tilde{x}}_1 {\tilde{x}}^T_1 + \sum _{k=2}^N \lambda _k {\tilde{x}}_k {\tilde{x}}^T_k\) (35)

for a scalar \(z \ge 1\). Thus, the matrix M(z) is obtained from the matrix \({\tilde{W}}\) by replacing the largest eigenvalue \(\lambda _1\) of \({\tilde{W}}\) by z. In particular, the definition of the matrix M(z) in (35) and (34) illustrate that \(M(\lambda _1) = {\tilde{W}}\). When the matrix \({\tilde{W}}\) is formally replaced by the matrix M(z), the steady-state equation (33) becomes

where the \(N\times 1\) vector \({\tilde{v}}(z)\) denotes the solution of (36). Since \(M(R_0) = {\tilde{W}}\), the solution of (36) at \(z=R_0\) and the solution to (33) coincide, i.e., \({\tilde{v}}(R_0)=v_\infty \). Lemma 2 expresses the solution of the equation (36) as a power series.
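The similarity between the matrices W and \({\tilde{W}}\) that underlies this construction can be checked numerically. The sketch below assumes that (32) reads \({\tilde{W}} = S^{-\frac{1}{2}} B S^{-\frac{1}{2}}\), which is the symmetric matrix similar to \(W = S^{-1}B\); the function names and the example rates are hypothetical.

```python
import math


def effective_matrices(B, delta):
    """Return W = S^{-1} B and W_tilde = S^{-1/2} B S^{-1/2} for S = diag(delta)."""
    n = len(B)
    W = [[B[i][j] / delta[i] for j in range(n)] for i in range(n)]
    W_tilde = [[B[i][j] / math.sqrt(delta[i] * delta[j]) for j in range(n)]
               for i in range(n)]
    return W, W_tilde


def spectral_radius(M, iters=100_000, tol=1e-14):
    """Spectral radius of a non-negative irreducible matrix via power iteration on M + I."""
    n = len(M)
    x = [1.0] * n
    rho = 0.0
    for _ in range(iters):
        y = [x[i] + sum(M[i][j] * x[j] for j in range(n)) for i in range(n)]
        new_rho = max(y)  # x has max-norm 1, so max(y) estimates rho(M + I)
        x = [a / new_rho for a in y]
        if abs(new_rho - rho) < tol:
            break
        rho = new_rho
    return new_rho - 1.0


# Symmetric infection rates on a path graph with two self-infection rates.
B = [[0.5, 0.4, 0.0], [0.4, 0.0, 0.6], [0.0, 0.6, 0.5]]
delta = [0.4, 0.5, 0.6]
W, W_tilde = effective_matrices(B, delta)
print(spectral_radius(W), spectral_radius(W_tilde))  # the two values agree
```

Working with \({\tilde{W}}\) instead of W costs nothing spectrally, since similar matrices share their eigenvalues, but it provides real eigenvalues and an orthonormal eigenbasis.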

Lemma 2

Suppose that Assumptions 1 and 3 hold. If (B, S) is sufficiently close to \((B^*, S^*)\), then the \(N\times 1\) vector \({\tilde{v}}(z)\) which satisfies (36) equals

where the \(N\times 1\) vector \(\phi (z)\) satisfies \(\Vert \phi (z) \Vert _2 \le \sigma (B, S) (z - 1)^2\) for some scalar \(\sigma (B, S)\) when z approaches 1 from above.

Proof

The proof is an adaptation of the proof of Van Mieghem (2012, Lemma 4). We express the solution \({\tilde{v}}(z)\) of (36) as a linear combination of the vectors \(S^{-\frac{1}{2}}{\tilde{x}}_1,\ldots , S^{-\frac{1}{2}}{\tilde{x}}_N\), i.e.,

Since the diagonal matrix \(S^{-\frac{1}{2}}\) is full rank, the vectors \(\left( S^{-\frac{1}{2}} {\tilde{x}}_k\right) \), where \(k=1,\ldots , N\), are linearly independent. Furthermore, we express the coefficients \(\psi _k(z)\) as a power series

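where \(g_0(k) = 0\) for every \(k=1,\ldots , N\), since (Lajmanovich and Yorke 1976) it holds that \({\tilde{v}}(z) = 0\) when \(z = 1\). We denote the eigenvalues of the matrix M(z) by

\(\lambda _k(z) = {\left\{ \begin{array}{ll} z &{} \text {if } k = 1, \\ \lambda _k &{} \text {if } k \ge 2. \end{array}\right. }\) (40)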

By substituting (38) into (36), we obtain that

and left-multiplying with the eigenvector \({\tilde{x}}^T_m\), for any \(m=1,\ldots , N\), yields

We define

Then, we rewrite (41) as

First, we focus on the left-hand side of (42), which we denote by

With the power series (39), we obtain that

Further rewriting yields that

Second, we rearrange the right-hand side of (42) as

By the definition of \(\lambda _k(z)\) in (40) it holds that \(\lambda _1(z) = z\), and we obtain that

where

Introducing the power series (39) into (44) and executing the Cauchy product for \(\psi _l(z) \psi _k(z)\) yields

We shift the index j in the first term and obtain

Finally, we equate powers in \((z-1)^j\) in (43) and (45), which yields for \(j=1\) that

for every \(m=1,\ldots , N\). The spectral radius of the limit \(W^*\) of the effective infection rate matrix W equals 1. Furthermore, the limit \(W^*\) is a non-negative and irreducible matrix. Thus, the eigenvalues of the limit \(W^*\) obey \(\lambda ^*_1 = 1 > |\lambda ^*_m|\) for every \(m\ge 2\), which implies that \(|\lambda _m| < 1\) for every \(m\ge 2\) provided that (B, S) is sufficiently close to \((B^*, S^*)\). With the definition of \(\lambda _m(z)\) in (40), we obtain from (46) that \(g_1(m) = 0\) when \(m \ge 2\) provided that (B, S) is sufficiently close to \((B^*, S^*)\), since \(z\ge 1\).

For \(j\ge 2\), equating powers in (45) yields that

In particular, for the case \(j=2\), we obtain

since \(g_1(l)=0\) for all \(l\ge 2\) and \({\tilde{\lambda }}_1 = 1\). Since \(\lambda _1(z) = z\), we obtain for \(m=1\) from (48) that

and, hence,

Since \(g_1(m) = 0\) for \(m\ge 2\), we obtain that the power series (38) for the solution \({\tilde{v}}(z)\) of (36) becomes

where the \(N\times 1\) vector \(\phi (z)\) equals

Thus, it holds \(\Vert \phi (z)\Vert _2 = {\mathcal {O}}\left( (z-1)^2\right) \) when z approaches 1 from above, which proves Lemma 2. \(\square \)

We believe that, based on (47), a recursion for the coefficients \(g_j(k)\) can be obtained for powers \(j\ge 2\), similar to the proof of Van Mieghem (2012, Lemma 4). The radius of convergence of the power series (49) is an open problem, see also He and Van Mieghem (2020). To express the solution \({\tilde{v}}(z)\) in (37) in terms of the principal eigenvector \(x_1\) of the effective infection rate matrix W, we propose Lemma 3.

Lemma 3

Under Assumptions 1 and 3, it holds that

Proof

From (32), it follows that the principal eigenvector \({\tilde{x}}_1\) of the matrix \({\tilde{W}}\) and the principal eigenvector \(x_1\) of the effective infection rate matrix W are related via

or, component-wise,

Then, we rewrite the left-hand side of (50) as

which simplifies to

Writing out the quadratic form in the numerator completes the proof. \(\square \)

The basic reproduction number \(R_0\) converges to 1 when \((B, S) \rightarrow (B^*, S^*)\). Hence, if (B, S) is sufficiently close to \((B^*, S^*)\), then the basic reproduction number \(R_0\) is smaller than the radius of convergence of the power series (38). Thus, if (B, S) is sufficiently close to \((B^*, S^*)\), then the solution \({\tilde{v}}(R_0)\) to (36) at \(z=R_0\) follows with Lemma 2 as

where the last equality follows from Lemma 3 and the definition of the scalar \(\gamma \) in (7). We emphasise that Lemma 2 implies that \(\gamma = {\mathcal {O}}(R_0 -1)\) and, hence, \(\Vert {\tilde{v}}(R_0) \Vert _2= {\mathcal {O}}(R_0 -1)\) as \((B, S) \rightarrow (B^*, S^*)\). Since \(M(R_0) = {\tilde{W}}\), the solution of (36) at \(z=R_0\) and the solution to (33) coincide, i.e., \({\tilde{v}}(R_0)=v_\infty \). Thus, from the definition of the vector \(\eta \) in (6), we obtain that

when \((B, S) \rightarrow (B^*, S^*)\). Lemma 2 states that \(\left\Vert \phi (z) \right\Vert _2 = {\mathcal {O}}\left( (z-1)^2\right) \) as \(z \downarrow 1\). Hence, we obtain from (51) that

for some scalar \(\sigma (B, S)\) when \((B, S) \rightarrow (B^*, S^*)\).

Furthermore, when (B, S) converges to the limit \((B^*, S^*)\), the scalar \(\sigma (B, S)\) converges to some limit \(\sigma (B^*, S^*)\). Hence, by defining the constant

for some \(\epsilon _\sigma > 0\), it holds that

for all (B, S) which are sufficiently close to \((B^*, S^*)\). Finally, we obtain from (52) that

when (B, S) approaches \((B^*, S^*)\).

Proof of Lemma 1

We divide Lemma 1 into two parts. In Sect. C.1, we prove that the viral state v(t) does not overshoot the steady-state \(v_\infty \). In Sect. C.2, we show that the function c(t) lies in the interval [0, 1].

C.1 Absence of overshoot

The proof follows the same reasoning as Prasse and Van Mieghem (2019, Corollary 1). Assume that at some time \(t_0\) it holds \(v_i(t_0) = v_{\infty , i}\) for some node i and that \(v_j(t_0) \le v_{\infty , j}\) for every node j. Since \(v_i (t_0) = v_{\infty , i}\), the NIMFA equation (1) yields that

Since \(v_j(t_0) \le v_{\infty , j}\) for every node j, we obtain that

where the last equality follows from the steady-state equation (3). Thus, \(v_i (t_0) = v_{\infty , i}\) implies that \(\left. \frac{d v_i (t)}{d t } \right| _{t = t_0} \le 0\), which means that, at time \(t_0\), the viral state \(v_i(t)\) does not increase. Hence, the viral state \(v_i(t)\) cannot exceed the steady-state \(v_{\infty , i}\) at any time \(t \ge 0\).
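The chain of relations behind this argument can be written out as a worked equation. The sketch assumes that NIMFA (1) has the standard form \(\frac{d v_i}{dt} = -\delta _i v_i + (1 - v_i)\sum _j \beta _{ij} v_j\) and that the steady-state equation (3) is its right-hand side set to zero at \(v = v_\infty \); the inequality uses \(\beta _{ij}\ge 0\), \(1 - v_{\infty , i} \ge 0\) and \(v_j(t_0) \le v_{\infty , j}\).

```latex
% Assumed form of NIMFA (1); evaluated at a time t_0 with v_i(t_0) = v_{\infty,i}.
\left. \frac{d v_i(t)}{dt} \right|_{t = t_0}
  = -\delta_i v_{\infty,i} + \bigl(1 - v_{\infty,i}\bigr) \sum_{j=1}^{N} \beta_{ij} v_j(t_0)
  \le -\delta_i v_{\infty,i} + \bigl(1 - v_{\infty,i}\bigr) \sum_{j=1}^{N} \beta_{ij} v_{\infty,j}
  = 0 .
```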

C.2 Boundedness of the function c(t)

Relation (16) indicates that

Section C.1 shows that Assumption 2 implies that \(v_i(t)\le v_{\infty , i}\) for all nodes i and every time t. Thus, we obtain from (53) that

Analogously, since \(v_i(t)\ge 0\) for all nodes i and every time t, we obtain from (53) that \(c(t) \ge 0\).
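The bound on c(t) is easy to verify on a small example. The sketch below assumes that relation (16) defines c(t) as the orthogonal projection coefficient of v(t) onto \(v_\infty \), which is what the decomposition \(v(t) = c(t) v_\infty + \xi (t)\) together with \(\xi ^T(t) v_\infty = 0\) implies; the function name and the numbers are hypothetical.

```python
def decompose(v, v_inf):
    """Split v into c * v_inf + xi with xi orthogonal to v_inf."""
    c = sum(a * b for a, b in zip(v, v_inf)) / sum(b * b for b in v_inf)
    xi = [a - c * b for a, b in zip(v, v_inf)]
    return c, xi


# A viral state with 0 <= v_i <= v_inf_i that is not parallel to v_inf.
v_inf = [0.2, 0.1, 0.3]
v = [0.1, 0.1, 0.0]
c, xi = decompose(v, v_inf)
print(c, xi)
```

Since \(\xi (t)\) is orthogonal to \(v_\infty \), the Pythagorean identity \(\Vert v(t)\Vert ^2_2 = c^2(t) \Vert v_\infty \Vert ^2_2 + \Vert \xi (t)\Vert ^2_2\) holds, which also yields \(\Vert \xi (t)\Vert _2 \le \Vert v(t)\Vert _2\).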

Proof of Theorem 2

By inserting the ansatz (15) into the NIMFA equations (2), we obtain that

Here, the function \(\varLambda _1(t)\) is given by

which simplifies, with the steady-state equation (3), to

The function \(\varLambda _2(t)\) is given by

With \({\text {diag}}(\xi (t)) B v_\infty = {\text {diag}}(B v_\infty ) \xi (t)\), we obtain that

To show that the error term \(\xi (t)\) converges to zero at every time t when \(\left( B, S\right) \rightarrow \left( B^*, S^*\right) \), we consider the squared Euclidean norm \(\Vert \xi (t)\Vert ^2_2\). The convergence of the squared norm \(\Vert \xi (t)\Vert ^2_2\) to zero implies the convergence of the error term \(\xi (t)\) to zero. The derivative of the squared norm \(\Vert \xi (t)\Vert ^2_2\) is given by

Thus, we obtain from (54) that

since \(\xi ^T(t) v_\infty =0\) by definition of \(\xi (t)\). We do not know how to solve (57) exactly, and we resort to bounding the two addends on the right-hand side of (57) in Sects. D.1 and D.2, respectively. In Sect. D.3 we complete the proof of Theorem 2 by deriving an upper bound on the squared norm \(\Vert \xi (t)\Vert ^2_2\).

D.1 Upper bound on \(\xi ^T(t) \varLambda _1(t)\)

We obtain an upper bound on the projection of the function \(\varLambda _1(t)\) onto the error vector \(\xi (t)\), which is linear with respect to the norm \(\Vert \xi (t)\Vert _2\):

Lemma 4

Under Assumptions 1 to 3, it holds at every time \(t\ge 0\) that

Proof

From (55) and the definition of the matrix W in (5) it follows that

With Theorem 1, we obtain

The triangle inequality yields that

With the Cauchy–Schwarz inequality, the first addend in (58) is upper-bounded by

since \(\Vert x_1 \Vert _2 = 1\) and the matrix S is symmetric. The matrix 2-norm is sub-multiplicative, which yields that

Thus, (58) gives that

since \(\gamma > 0\) and \(R_0 >1\). We consider the second addend in (59), which we write with (32) as

From the Cauchy–Schwarz inequality and the sub-multiplicativity of the matrix norm we obtain

The triangle inequality and the symmetry of the matrix \({\tilde{W}}\) imply that

Thus, we can upper bound the second addend in (59) by

since \(\Vert S^{\frac{1}{2}} \Vert _2 = \sqrt{\delta _{\text {max}}}\). Hence, (59) yields the upper bound

Finally, Lemma 1 states that \(0\le c(t)\le 1\), which implies that

and completes the proof. \(\square \)

D.2 Upper bound on \(\xi ^T(t) \varLambda _2(t)\)

Lemma 5 states an intermediate result, which we will use to bound the projection of the function \(\varLambda _2(t)\) onto the error vector \(\xi (t)\).

Lemma 5

Suppose that Assumptions 1 to 3 hold. Then, at every time \(t \ge 0\), it holds that

Proof

From (56) it follows that

To simplify (60), we aim to bound the last addend of (60) by an expression that is quadratic in the error vector \(\xi (t)\). The last addend equals

Since \(v(t) = c(t) v_\infty + \xi (t)\) and \(v_i(t) \ge 0\) for every node i at every time t, it holds that

By inserting (62) in (61), the last addend of (60) is upper bounded by

which simplifies to

By applying the upper bound (63) to (60), we obtain that

With the definition of the matrix \({\tilde{W}}\) in (32), we obtain

and further rearranging completes the proof. \(\square \)

For any scalar \(\varsigma \in [0, 1]\) and any vector \(\upsilon \in {\mathbb {R}}^N\), we define

Then, we obtain from Lemma 5 that

To upper-bound the term \(\varTheta ( c(t), \xi (t), B, S )\), we make use of (parts of) the results of Issos (1966), which are analogues of the Perron–Frobenius Theorem for the numerical radius of a non-negative, irreducible matrix:

Theorem 5

(Issos 1966) Let M be a real irreducible and non-negative \(N\times N\) matrix. Then, there is a positive vector \(z \in {\mathbb {R}}^N\) of length \(z^T z = 1\) such that \(z^T M z = r(M)\). Furthermore, if \({\tilde{z}}^T M {\tilde{z}} = r(M)\) holds for a vector \({\tilde{z}} \in {\mathbb {R}}^N\) of length \({\tilde{z}}^T {\tilde{z}} = 1\), then either \({\tilde{z}} = z\) or \({\tilde{z}} = -z\).
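Theorem 5 can be explored numerically. For real unit vectors z, the quadratic form satisfies \(z^T M z = z^T H z\) with the symmetric part \(H = (M + M^T)/2\), so its maximum is the largest eigenvalue of H. The sketch below uses this observation, which for non-negative matrices is understood here to give the numerical radius r(M). The example matrix `M` and the helper `numerical_radius_nonneg` are hypothetical and serve only as an illustration.

```python
import numpy as np

def numerical_radius_nonneg(M):
    """Maximise z^T M z over real unit vectors z via the symmetric part of M."""
    H = (M + M.T) / 2.0
    eigvals, eigvecs = np.linalg.eigh(H)  # eigenvalues in ascending order
    z = eigvecs[:, -1]                    # unit eigenvector of the largest eigenvalue
    if z.sum() < 0:                       # fix the sign so that z is the positive maximiser
        z = -z
    return eigvals[-1], z

# Hypothetical irreducible, non-negative matrix (directed cycle 1 -> 2 -> 3 -> 1).
M = np.array([[0.0, 2.0, 0.0],
              [0.0, 0.0, 1.0],
              [3.0, 0.0, 0.5]])

r, z = numerical_radius_nonneg(M)
quadratic_form = z @ M @ z  # attains r at the positive maximiser z
```

In line with Theorem 5, the maximiser returned here is entrywise positive, and flipping its sign gives the only other unit vector that attains the maximum.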

We refer the reader to Issos (1966), Maroulas et al. (2002) and Li et al. (2002) for further results on the numerical radius of non-negative matrices. We apply Theorem 5 to obtain:

Lemma 6

Denote the set of \(N\times 1\) vectors with at least one positive and at least one negative component as

Then, it holds that \(\varTheta ( \varsigma , \upsilon , B, S ) < R_0\) for every scalar \(\varsigma \in [0,1]\) and for every vector \(\upsilon \in {\mathcal {S}}\).

Proof

By introducing the \(N\times 1\) vector

and by using (32), we rewrite the term \(\varTheta ( \varsigma , \upsilon , B, S )\) as

For every scalar \(\varsigma \in [0,1]\) the matrix \(({\text {diag}}(u - \varsigma v_\infty ) {\tilde{W}})\) is irreducible and non-negative. Since \(\upsilon \in {\mathcal {S}}\) and the matrix S is a diagonal matrix with non-negative entries, it holds that \({\tilde{\upsilon }}_i < 0\) and \({\tilde{\upsilon }}_j>0\) for some i, j. Hence, at least two components of the vector \({\tilde{\upsilon }}\) have different signs, and Theorem 5 implies that (65) is upper-bounded by

Since the matrix \({\tilde{W}}\) is irreducible and \({\text {diag}}(u - \varsigma v_\infty ) {\tilde{W}} \le {\tilde{W}}\) for every \(\varsigma \in [0,1]\), where the inequality holds element-wise, it holds (Li et al. 2002, Corollary 3.6) that

The matrix \({\tilde{W}}\) is symmetric, and, hence, the numerical radius \(r\left( {\tilde{W}} \right) \) equals the spectral radius \(\rho \left( {\tilde{W}} \right) = R_0\), which yields that

\(\square \)
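The two matrix facts used at the end of the proof of Lemma 6 can be checked numerically: the numerical radius does not increase when a non-negative matrix is scaled row-wise by factors in [0, 1], and for the symmetric matrix \({\tilde{W}}\) the numerical radius equals the spectral radius. The sketch below assumes \({\tilde{W}} = S^{-\frac{1}{2}} B S^{-\frac{1}{2}}\) and \(W = S^{-1} B\) (the latter is an assumption about definition (5)). The rates and the vector `v_inf` are random placeholders, not an actual steady state.

```python
import numpy as np

rng = np.random.default_rng(1)
N = 6

# Hypothetical symmetric infection rates and positive curing rates.
B = rng.uniform(0.1, 0.3, (N, N))
B = (B + B.T) / 2.0
delta = rng.uniform(0.5, 1.5, N)

S_inv_half = np.diag(delta ** -0.5)
W_tilde = S_inv_half @ B @ S_inv_half   # symmetric by construction
W = B / delta[:, None]                  # S^{-1} B, assumed form of (5)

def r_real(M):
    """Largest value of z^T M z over real unit vectors z."""
    return np.linalg.eigvalsh((M + M.T) / 2.0)[-1]

rho_W_tilde = np.max(np.abs(np.linalg.eigvals(W_tilde)))
rho_W = np.max(np.abs(np.linalg.eigvals(W)))   # W and W_tilde are similar matrices
r_W_tilde = r_real(W_tilde)

# Placeholder vector with entries in [0, 1] standing in for the steady state.
v_inf = rng.uniform(0.0, 0.2, N)
radii = [r_real(np.diag(1.0 - s * v_inf) @ W_tilde)
         for s in np.linspace(0.0, 1.0, 5)]
```

Every entry of `radii` stays at or below `r_W_tilde`, which in turn coincides with the spectral radius of both \({\tilde{W}}\) and W in this example.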

Finally, we obtain a bound on the projection of the function \(\varLambda _2(t)\) onto the error vector \(\xi (t)\):

Lemma 7

Under Assumptions 1 to 3, there is some constant \(\omega > 0\) such that

holds at every time \(t\ge 0\) when \(\left( B, S\right) \) approaches \(\left( B^*, S^*\right) \).

Proof

We denote the maximum of the function \(\varTheta ( \varsigma , \upsilon , B, S )\) with respect to \(\varsigma \in [0, 1]\) and \(\upsilon \in {\mathcal {S}}\) by

As a first step, we consider the value of \(\varTheta _{\text {max}}( B^*, S^* )\) at the limit \((B^*, S^*)\). Since the steady-state \(v_\infty \) equals zero at the limit \((B^*, S^*)\), we obtain from (65) that

where we denote \({\tilde{W}}^* = \left( S^*\right) ^{-\frac{1}{2}} B^* \left( S^*\right) ^{-\frac{1}{2}}\). Since it holds \(R_0 =1\) at the limit \((B^*, S^*)\), Lemma 6 implies that

As a second step, we consider the case that the infection rate matrix B and the curing rate matrix S do not equal the respective limits \(B^*\) and \(S^*\). Thus, there are non-zero \(N\times N\) matrices \(\varDelta B, \varDelta S\) and \(\varDelta {\tilde{W}}\) such that \(B = B^* +\varDelta B\), \(S = S^* + \varDelta S\), and \({\tilde{W}} = {\tilde{W}}^* + \varDelta {\tilde{W}}\). Then, we obtain from (65) that

which is upper-bounded by

Maximising every addend in (69) independently yields an upper bound on \(\varTheta _{\text {max}}( B, S )\) as

In the following, we state upper bounds for each of the three addends in (70) separately. With (67), we write the first addend in (70) as

where the last equality follows from the definition of \(\varTheta _{\text {max}}( B^*, S^* )\) in (66). Regarding the second addend in (70), it holds that

where the last equality follows from the definition of the numerical radius. Hence, the second addend in (70) is upper-bounded by

for some \(\varsigma ^{(1)}_{\text {opt}} \in [0,1]\). Similarly, we obtain an upper bound on the third addend in (70) as

for some \(\varsigma ^{(2)}_{\text {opt}} \in [0,1]\). With (71), (72) and (73), we obtain from (70) that

The numerical radius r(M) is a vector norm (see Footnote 8; Horn and Johnson 1990) on the space of \(N\times N\) matrices M. Thus, the numerical radius r(M) converges to zero if the matrix M converges to zero. Since \(v_\infty \rightarrow 0\) and \(\varDelta {\tilde{W}} \rightarrow 0\) as \((B, S) \rightarrow (B^*, S^*)\) and \(\varsigma ^{(1)}_{\text {opt}}, \varsigma ^{(2)}_{\text {opt}}\) are bounded, the last two addends in (74) converge to zero as \((B, S) \rightarrow (B^*, S^*)\). Hence, for every scalar \(\omega >0\) there is a \(\vartheta (\omega )\) such that \(\Vert B - B^* \Vert _2 < \vartheta (\omega )\) and \(\Vert S - S^* \Vert _2 < \vartheta (\omega )\) implies that

We choose the scalar \(\omega = (1- \varTheta _{\text {max}}( B^*, S^* ))/2\), which is positive due to (68). Then, the right-hand side of (75) becomes

Thus, we obtain from (75) that

holds for all (B, S) which are sufficiently close to the limit \((B^*, S^*)\).

By definition, the error vector \(\xi (t)\) at any time \(t\ge 0\) is orthogonal to the steady-state vector \(v_\infty \). Since the steady-state \(v_\infty \) is positive, the error vector \(\xi (t)\) has at least one positive and one negative element, and, hence, it holds that \(\xi (t) \in {\mathcal {S}}\). Thus, we obtain from the definition of the term \(\varTheta _{\text {max}}(B, S)\) in (66) that

With (76), we obtain from (64) that

From the sub-multiplicativity of the matrix norm, we obtain

which completes the proof, since \(\Vert S^{\frac{1}{2}} \Vert ^2_2 = \delta _{\mathrm{max}}\). \(\square \)
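The proof of Lemma 7 relies on the observation that a non-zero vector orthogonal to a positive vector must have entries of both signs. A minimal sketch of this step, with a randomly drawn positive vector `v_inf` and viral state `v` as hypothetical stand-ins for \(v_\infty \) and v(t):

```python
import numpy as np

rng = np.random.default_rng(2)
N = 8

v_inf = rng.uniform(0.05, 0.5, N)  # hypothetical positive steady-state vector
v = rng.uniform(0.0, 1.0, N)       # hypothetical viral state in [0, 1]^N

# Decompose v = c * v_inf + xi with xi orthogonal to v_inf.
c = (v_inf @ v) / (v_inf @ v_inf)
xi = v - c * v_inf

has_positive_entry = bool(np.any(xi > 0))
has_negative_entry = bool(np.any(xi < 0))
```

Since \(\sum _i (v_\infty )_i \xi _i = 0\) with all weights \((v_\infty )_i\) positive, the entries of a non-zero `xi` cannot all share the same sign.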

D.3 Bound on the error vector \(\xi (t)\)

With Lemma 4 and Lemma 7, we upper-bound (57) by

From

it follows that

We denote

and we obtain that

The upper bound (78) is a linear first-order ordinary differential inequality, which is solved by (Arfken and Weber 1999)

which simplifies to

The triangle inequality yields that

Furthermore, since \(e^{-\omega \delta _{\text {max}} t} \le 1\) at every time \(t\ge 0\), we obtain from (79) that

The maximum \(\delta _{\text {max}}\) of the curing rates converges to some limit \(\delta ^*_{\text {max}}\) when \(\left( B, S\right) \rightarrow \left( B^*, S^*\right) \). Hence, for any \(\epsilon >0\) it holds that \(\delta ^*_{\text {max}} - \epsilon < \delta _{\text {max}}\) when \(\left( B, S\right) \) approaches \(\left( B^*, S^*\right) \). For some \(\epsilon \in (0, \delta ^*_{\text {max}})\), we set the constant

Then, it holds that \(\sigma _1 < \omega \delta _{\text {max}}\) when \(\left( B, S\right) \) approaches \(\left( B^*, S^*\right) \), and we obtain from (80) that

Theorem 1 states that \(\gamma = {\mathcal {O}}(R_0 - 1)\) and \(\Vert \eta \Vert _2 = {\mathcal {O}}\left( (R_0 - 1)^2\right) \) when \(\left( B, S\right) \) approaches \(\left( B^*, S^*\right) \). Thus, it follows from the definition of the term \(\varphi \left( B, S \right) \) in (77) that \(\varphi \left( B, S \right) = {\mathcal {O}}\left( (R_0-1)^2\right) \). Hence, there is a constant \(\sigma _2 >0\) such that (81) yields

when \(\left( B, S\right) \) approaches \(\left( B^*, S^*\right) \).
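The key step of this section is the solution of a linear first-order differential inequality. As a generic illustration, not tied to the specific constants of (78), any function y(t) with \(y'(t) \le -a y(t) + b\) for constants \(a, b > 0\) satisfies \(y(t) \le y(0) e^{-a t} + \frac{b}{a}\left( 1 - e^{-a t}\right) \). The sketch below checks this for a forward-Euler trajectory; the values of `a`, `b`, `y0` and the slack term are hypothetical.

```python
import numpy as np

a, b = 2.0, 0.5   # hypothetical decay rate and forcing bound
y0 = 1.0          # hypothetical initial value
dt, n_steps = 1e-3, 5000
t = dt * np.arange(n_steps + 1)

# Trajectory obeying y' = -a*y + b - slack(t) with slack >= 0,
# hence y' <= -a*y + b at every time.
y = np.empty(n_steps + 1)
y[0] = y0
for k in range(n_steps):
    slack = 0.2 * (1.0 + np.sin(3.0 * t[k]))  # non-negative by construction
    y[k + 1] = y[k] + dt * (-a * y[k] + b - slack)

# Closed-form solution of the bounding equation z' = -a*z + b, z(0) = y0.
bound = y0 * np.exp(-a * t) + (b / a) * (1.0 - np.exp(-a * t))
```

The trajectory `y` remains below `bound` at every time step, and `bound` itself settles at b/a, the generic counterpart of a residual error level that persists after the exponential transient has decayed.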

Proof of Theorem 3

By projecting the differential equation (54) onto the steady-state vector \(v_\infty \), we obtain that

since \(v^T_\infty \xi (t) = 0\) by definition of the error term \(\xi (t)\). We divide by \(\Vert v_\infty \Vert ^2_2\) and obtain with (55) that

The first addend in the differential equation (82) can be expressed in a simpler manner when \(\left( B, S\right) \) approaches \(\left( B^*, S^* \right) \):

Lemma 8

Under Assumptions 1 and 3, it holds

where \(\zeta = {\mathcal {O}}\left( (R_0 - 1)^2\right) \) when \(\left( B, S\right) \) approaches \(\left( B^*, S^* \right) \).

Proof

With Theorem 1 and the definition of the matrix W in (5), the numerator of the left-hand side of (83) becomes

where the last equality follows from \(Wx_1 = R_0 x_1\). Thus, it holds that

Under Assumption 3, both matrices B and S are symmetric, which implies that

Hence, we obtain from (84) that

Since \(\gamma = {\mathcal {O}}(R_0 - 1)\) and \(\Vert \eta \Vert _2 = {\mathcal {O}}\left( (R_0 - 1)^2 \right) \), we finally rewrite the numerator of the left-hand side of (83) as

With Theorem 1, the denominator of the left-hand side of (83) equals

Combining the approximate expressions for the numerator (85) and the denominator (86) completes the proof. \(\square \)
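The orders \(\gamma = {\mathcal {O}}(R_0 - 1)\) and \(\Vert \eta \Vert _2 = {\mathcal {O}}\left( (R_0 - 1)^2\right) \) can be observed numerically. The sketch below assumes the standard NIMFA steady-state equation \(\delta _i v_{\infty , i} = (1 - v_{\infty , i}) \sum _j \beta _{ij} v_{\infty , j}\), the form \(W = S^{-1} B\) for (5), and the decomposition \(v_\infty = \gamma x_1 + \eta \), where \(\eta \) is taken here as the component orthogonal to \(x_1\) (an assumption made for illustration). All rates are random placeholders, and `steady_state` is a hypothetical helper based on a fixed-point iteration.

```python
import numpy as np

def steady_state(B, delta, iters=20000):
    """Fixed-point iteration v <- s / (delta + s), s = B v, started at v = 1."""
    v = np.ones(len(delta))
    for _ in range(iters):
        s = B @ v
        v = s / (delta + s)
    return v

rng = np.random.default_rng(3)
N = 6
B0 = rng.uniform(0.5, 1.0, (N, N))
B0 = (B0 + B0.T) / 2.0                 # hypothetical symmetric infection rates
delta = rng.uniform(0.8, 1.2, N)       # hypothetical curing rates
rho0 = np.max(np.abs(np.linalg.eigvals(B0 / delta[:, None])))

results = []
for R0 in (1.02, 1.04, 1.08):
    B = B0 * (R0 / rho0)               # rescale so that the spectral radius of W is R0
    W = B / delta[:, None]             # S^{-1} B, assumed form of (5)
    eigvals, eigvecs = np.linalg.eig(W)
    x1 = np.real(eigvecs[:, np.argmax(np.real(eigvals))])
    x1 = x1 / np.linalg.norm(x1)
    if x1.sum() < 0:
        x1 = -x1
    v_inf = steady_state(B, delta)
    residual = np.max(np.abs(-delta * v_inf + (1.0 - v_inf) * (B @ v_inf)))
    gamma = x1 @ v_inf
    eta_norm = np.linalg.norm(v_inf - gamma * x1)
    results.append((R0, gamma, eta_norm, residual))
```

In this experiment, `gamma` grows roughly in proportion to \(R_0 - 1\), while `eta_norm` is considerably smaller, consistent with the stated orders.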

We define the viral slope w as

and the function n(t) as

Then, we obtain from (82) that

The function n(t) is complicated and depends on the error vector \(\xi (t)\). Hence, we cannot solve the differential equation (89) for the function c(t) without knowing the solution for the error vector \(\xi (t)\). However, as \((B, S) \rightarrow (B^*, S^*)\), the function n(t) converges to zero uniformly in time t as stated by the bound in Lemma 9.

Lemma 9

Under Assumptions 1 to 3, it holds at every time \(t \ge 0\) that

for some constants \(\sigma _1, \sigma _2, \sigma _3 >0\) when (B, S) approaches \((B^*, S^*)\).

Proof

Regarding the first addend in the definition of the function n(t) in (88), Lemma 1 implies that \(0 \le c(t) - c^2(t) \le 1/4\) at every time t. Hence, Lemma 8 yields that there is a constant \({\tilde{\sigma }}_0\) such that

at every time t when (B, S) approaches \((B^*, S^*)\). Regarding the second addend of the function n(t) defined in (88), it follows from the definition of the function \(\varLambda _2(t)\) in (56) that

since \(v(t)=c(t)v_\infty + \xi (t)\). Thus, it holds that

With the definition of the matrix \({\tilde{W}}\) in (32), we obtain that

The Cauchy–Schwarz inequality yields an upper bound as

With \(\left\Vert S^{\frac{1}{2}} \xi (t) \right\Vert _2 \le \sqrt{\delta _{\text {max}}}\left\Vert \xi (t) \right\Vert _2\) and the triangle inequality, we obtain

In the following, we consider the three addends in (90) separately. Regarding the first addend, we obtain with the definition of the matrix \({\tilde{W}}\) in (32) that

where the last equality follows from Theorem 1. Thus, the triangle inequality yields

With the sub-multiplicativity of the matrix 2-norm, we obtain

since \( \left\Vert \left( W - I \right) \right\Vert _2 \le R_0 + 1\). Since \(\gamma = {\mathcal {O}}(R_0 - 1)\) and \(\left\Vert \eta \right\Vert _2 = {\mathcal {O}}((R_0 - 1)^2)\) when \((B, S)\rightarrow (B^*, S^*)\), there is a constant \({\tilde{\sigma }}_1\) such that

when (B, S) approaches \((B^*, S^*)\). Regarding the second addend in (90), it holds that

Since \(\left\Vert v_{\infty }\right\Vert _2 = {\mathcal {O}}(R_0 - 1)\) when \((B, S)\rightarrow (B^*, S^*)\), it follows that there is a constant \({\tilde{\sigma }}_2\) such that

when (B, S) approaches \((B^*, S^*)\). Regarding the third addend in (90), it holds per definition of the matrix 2-norm that

where the last inequality follows from \(c(t)\le 1\), as stated by Lemma 1, and the definition of the effective infection rate matrix W in (5). Hence, we obtain the upper bound

for some constant \({\tilde{\sigma }}_3\) when (B, S) approaches \((B^*, S^*)\). We apply the three upper bounds (91), (92) and (93) to (90) and obtain that

when (B, S) approaches \((B^*, S^*)\). Since \(\left\Vert v_{\infty }\right\Vert ^2_2 = {\mathcal {O}}((R_0 - 1)^2)\) when \((B, S)\rightarrow (B^*, S^*)\), there is a constant \({\tilde{\sigma }}_4\) such that, as (B, S) approaches \((B^*, S^*)\), it holds

at every time t. Thus, we have obtained an upper bound, which is proportional to the norm of the error vector \(\xi (t)\). Finally, we apply Theorem 2 to bound the norm \(\left\Vert \xi (t) \right\Vert _2 \), which completes the proof. \(\square \)

Lemma 9 suggests that, since \(n(t)\rightarrow 0\) when \(\left( B, S\right) \rightarrow \left( B^*, S^*\right) \), the differential equation (89) for the function c(t) is approximated by the logistic differential equation

To make the statement (94) precise, we define the function \(c_b(t, x)\), for any scalar x with \(|x|<w\), as

where the constant \(\varUpsilon (x)\) is set such that \(c_b(0, x) = c(0)\), i.e.,

Lemma 10 states an upper and a lower bound on the function c(t).
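As a point of reference, the unperturbed logistic equation has a closed-form solution. Assuming that (94) takes the standard form \(\frac{d c}{d t} = w \left( c - c^2\right) \), which the term \(c(t) - c^2(t)\) in the proof of Lemma 9 suggests, the solution is \(c(t) = \frac{1}{2}\left( 1 + \tanh \left( \frac{w}{2} t + \varUpsilon \right) \right) \) with \(\varUpsilon = {\text {artanh}}(2 c(0) - 1)\). The sketch below compares this expression with a Runge–Kutta integration; the values of `w` and `c0` are hypothetical.

```python
import numpy as np

w = 0.3     # hypothetical viral slope
c0 = 0.05   # hypothetical initial value c(0)
upsilon = np.arctanh(2.0 * c0 - 1.0)

def c_closed_form(t):
    """Closed-form solution of dc/dt = w (c - c^2) with c(0) = c0."""
    return 0.5 * (1.0 + np.tanh(0.5 * w * t + upsilon))

def rhs(c):
    return w * (c - c * c)

# Classical fourth-order Runge-Kutta integration.
dt, n_steps = 0.01, 5000
t = dt * np.arange(n_steps + 1)
c = np.empty(n_steps + 1)
c[0] = c0
for k in range(n_steps):
    k1 = rhs(c[k])
    k2 = rhs(c[k] + 0.5 * dt * k1)
    k3 = rhs(c[k] + 0.5 * dt * k2)
    k4 = rhs(c[k] + dt * k3)
    c[k + 1] = c[k] + dt * (k1 + 2.0 * k2 + 2.0 * k3 + k4) / 6.0

max_err = np.max(np.abs(c - c_closed_form(t)))
```

The closed form follows from \(c - c^2 = \frac{1}{4}\left( 1 - \tanh ^2(\cdot )\right) \), so both sides of the differential equation equal \(\frac{w}{4}\left( 1 - \tanh ^2(\cdot )\right) \).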

Lemma 10

Suppose that Assumptions 1 to 3 hold and that

for some constants \(\sigma _1>0\) and \(p>1\) when \(\left( B, S\right) \) approaches \(\left( B^*, S^*\right) \). Then, the function c(t) is bounded by

where the scalar \(\kappa \) equals \(\kappa = \sigma _2 (R_0 - 1)^s\) with \(s = {\mathrm{min}}\{p, 2\}\) and some constant \(\sigma _2 >0\) as \(\left( B, S\right) \) approaches \(\left( B^*, S^*\right) \).
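The idea behind Lemma 10 is a comparison argument: if the perturbation is bounded by \(\kappa \), the solution is sandwiched between the solutions obtained with the constant perturbations \(-\kappa \) and \(+\kappa \). The sketch below illustrates this for an additively perturbed logistic equation \(\frac{d c}{d t} = w \left( c - c^2\right) + n(t)\) with \(|n(t)| \le \kappa \). This additive form is an assumption made for illustration; the exact form of (89) and of the function \(c_b(t, x)\) may differ. The values of `w`, `kappa`, `c0` and the perturbation `n` are hypothetical.

```python
import numpy as np

w = 0.3        # hypothetical viral slope
kappa = 0.005  # hypothetical bound on |n(t)|
c0 = 0.2       # hypothetical initial value c(0)
dt, n_steps = 0.01, 5000
t = dt * np.arange(n_steps + 1)

def n(time):
    """Hypothetical perturbation with |n(t)| <= kappa."""
    return kappa * np.cos(5.0 * time) * np.exp(-0.1 * time)

def euler(perturbation):
    """Forward-Euler solution of dc/dt = w (c - c^2) + perturbation(t)."""
    c = np.empty(n_steps + 1)
    c[0] = c0
    for k in range(n_steps):
        c[k + 1] = c[k] + dt * (w * (c[k] - c[k] ** 2) + perturbation(t[k]))
    return c

c_mid = euler(n)                                      # time-varying perturbation
c_low = euler(lambda time: -kappa)                    # constant lower perturbation
c_up = euler(lambda time: kappa)                      # constant upper perturbation
```

For the small step size used here, the Euler update is increasing in c, so the ordering `c_low <= c_mid <= c_up` is preserved at every step.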

Proof

With (96), Lemma 9 implies that it holds

for some constants \({\tilde{\sigma }}_1, {\tilde{\sigma }}_2, {\tilde{\sigma }}_3>0\). Since \(e^{-{\tilde{\sigma }}_2 t}\le 1\), we obtain that \(\left| n(t)\right| \le \kappa \) at every time t, where we define the scalar