Abstract

A common view in evolutionary biology is that mutation rates are minimised. However, studies in combinatorial optimisation and search have shown a clear advantage of using variable mutation rates as a control parameter to optimise the performance of evolutionary algorithms. Much biological theory in this area is based on Ronald Fisher’s work, who used Euclidean geometry to study the relation between mutation size and expected fitness of the offspring in infinite phenotypic spaces. Here we reconsider this theory based on the alternative geometry of discrete and finite spaces of DNA sequences. First, we consider the geometric case of fitness being isomorphic to distance from an optimum, and show how problems of optimal mutation rate control can be solved exactly or approximately depending on additional constraints of the problem. Then we consider the general case of fitness communicating only partial information about the distance. We define weak monotonicity of fitness landscapes and prove that this property holds in all landscapes that are continuous and open at the optimum. This theoretical result motivates our hypothesis that optimal mutation rate functions in such landscapes will increase when fitness decreases in some neighbourhood of an optimum, resembling the control functions derived in the geometric case. We test this hypothesis experimentally by analysing approximately optimal mutation rate control functions in 115 complete landscapes of binding scores between DNA sequences and transcription factors. Our findings support the hypothesis and find that the increase of mutation rate is more rapid in landscapes that are less monotonic (more rugged). We discuss the relevance of these findings to living organisms.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

Mutation is one of the most important biological processes that influence evolutionary dynamics. During replication mutation leads to a loss of information between the offspring and its parent, but it also allows the offspring to acquire new features. These features are likely to be deleterious, but have the potential to be beneficial for adaptation. Thus, mutation can be seen as a process of innovation, which is particularly important as the number of all living organisms is tiny relative to the number of all possible organisms. A question that naturally arises with regards to mutation is whether there is an optimal balance between the amount of information lost and potential fitness gained.

The seminal mathematical work to investigate biological mutation is by Fisher (1930), who considered mutation as a random motion in Euclidean space, the points of which are vectors representing collections of phenotypic traits of organisms. Using the geometry of Euclidean space, Fisher showed that probability of adaptation decreases exponentially as a function of mutation size (defined using the ratio of mutation radius and distance to the optimum), and concluded, therefore, that adaptation is more likely to occur by small mutations. Several studies, however, suggested that large mutations can be quite frequent in nature, thereby prompting re-examination of the theory (Orr 2005). Thus, Kimura (1980) extended the theory to take into account differences in probabilities of fixation for mutations of small and large size. Subsequently Orr (1998) considered the effect of mutation across several replications. Interestingly, while Fisher had a critical role in developing mathematical theory around discrete alleles, in his geometric model he used Euclidean space of traits as the domain of mutation, which is uncountably infinite and unbounded. This important issue only became apparent after the realisation that biological evolution occurs in a countable or even finite space of discrete molecular sequences (Smith 1970). However, subsequent geometric models based on Fisher’s, while explicitly modelling discrete mutational steps (e.g. Orr 2002), continue to assume that they occur within the same infinite Euclidean space. This issue may contribute to the fact that the predictions of such models have at best only been partially verified in actual biological systems (McDonald et al. 2011; Bataillon et al. 2011; Kassen and Bataillon 2006; Rokyta et al. 2008). In this and previous work, we consider mutation using the geometry and combinatorics of Hamming spaces (Belavkin et al. 2011; Belavkin 2011), which are finite, and this leads to a radically different view about the role of large mutations.

Independent of such biological concerns, researchers in evolutionary computation and operations research have a long history of considering variable mutation rates in genetic algorithms (GAs) (e.g. see Eiben et al. 1999; Ochoa 2002; Falco et al. 2002; Cervantes and Stephens 2006; Vafaee et al. 2010, for reviews). In particular, Ackley (1987) suggested that mutation probability is analogous to temperature in simulated annealing, which decreases with time through optimisation. A gradual reduction of mutation rate was also proposed by Fogarty (1989). Markov chain analysis of GAs was used by Yanagiya (1993) to show that a sequence of optimal mutation rates maximising the probability of obtaining the global solution exists in any problem. In particular, Bäck (1993) studied the probability of adaptation in the space of binary strings and derived optimal mutation rates depending on the distance from the global optimum. More recently, numerical methods have been used to optimise a mutation operator (Vafaee et al. 2010) that was based on the Markov chain model of GA by Nix and Vose (1992), although the complexity of this method may restrict its application to small spaces and populations. More recently, several authors have analysed the run-time of the co-called \((1+1)\)-evolutionary algorithm using constant and adaptive mutation rates and demonstrating some advantages of the latter (Böttcher et al. 2010; Sutton et al. 2011). Thus, the idea of using variable mutation rates to optimise evolutionary dynamics is not new. Unfortunately, these results in the field of evolutionary computation (EC) have specific computational focus, which limits their appeal for biology.

First, theoretical work on EC has focused almost exclusively on systems of binary strings. Optimisation of mutation rates of DNA strings, which have the alphabet of four bases, involves analysis of a significantly more difficult combinatorics and geometry. Previously, we presented some results on optimal mutation rates (Belavkin et al. 2011; Belavkin 2011), which used formula (2) for the intersection of spheres in general Hamming spaces. Here we give the derivation of this formula in Appendix 1 and show how it can be used to generalise Fisher’s geometric model of adaptation in Sect. 2.

Second, the run-time analysis and optimisation of evolutionary algorithms is concerned with their long term behaviour, which may have little relevance for biological systems. For example, Böttcher et al. (2010) show that the run time of the \((1+1)\)-evolutionary algorithm is on the order of \(l^2\), where l is the length of a binary string. In biological organisms, the typical length of DNA sequence is \(l\in [10^8,10^{11}]\) (and the alphabet size is \(\alpha =4\)). Assuming the minimum of 20 minutes between replications, the run-time of order \(l^2\) will significantly exceed \(10^{14}\) years—the estimated time after which stars will cease to exist in the Universe (Adams and Laughlin 1997). Moreover, biological landscapes may fail to have a global optimum to converge to, because the set of all DNA sequences with variable lengths is infinite. In addition, biological landscapes are not static, and change on a regular basis. Thus, the short-term behaviour, perhaps within one or several replications, is more important for optimisation of parameters in biological systems. Here we develop these insights regarding mutation rate variation towards the particular issues presented by biological systems.

In Sect. 2 we show how the problem of optimal control of mutation rate can be defined in different ways leading to different solutions. In some cases, these solutions can be obtained analytically. For example, in the idealised geometric model, when maximisation of fitness is equivalent to minimisation of distance to a global optimum, the optimal mutation rates can be derived as functions of the distance (Belavkin 2012, 2013). This, however, is not the case for more realistic landscapes, which can be rugged. In Sect. 3, we address how the control functions can be obtained numerically. Although fitness landscapes have been analysed and classified in terms of hardness for evolutionary algorithms (He et al. 2015), there is no general theory about optimal mutation rates in arbitrary landscapes. The development of such theory is the main focus and contribution of this paper. In Sect. 4, we consider a fitness landscape as a communication channel between fitness values and distances from a nearest optimum. We introduce various notions of monotonicity of a fitness landscape, and discuss how these properties are related to the genotype-phenotype mapping. The main theoretical result is a theorem about weak monotonicity of continuous landscapes, which establishes the condition for a similarity between fitness and distance to an optimum in a broad class of landscapes. This suggests a similarity between fitness-based and distance-based optimal control functions for mutations rates.

These theoretical results allow us to formulate hypotheses about monotonicity and mutation rate control in biological fitness landscapes. We test these hypotheses by numerically obtaining optimal mutation rate control functions for 115 published complete landscapes of transcription factor binding (Badis et al. 2009). Our results presented in Sect. 5 show that all the optimal mutation rate control functions in these biological landscapes do indeed converge to non-trivial forms consistent with the theory developed here. We also observe differences among optimal mutation rate control functions, variation that relates to variation in the landscapes’ monotonic properties. We conclude in Sect. 6 by discussing how mutation rate control as considered here may be manifested in living organisms.

2 Fisher’s geometric model of adaptation in Hamming space

In this section, we consider an abstract problem, in which organisms are represented as points in some metric space and adaptation as a motion in this space towards some target point (an optimal organism), and fitness is negative distance to target. Minimisation of distance to the target is therefore equivalent to maximisation of fitness. Geometry of the metric space allows us to solve the optimisation problem precisely. These abstract results will be used in the following sections to develop the theory further bringing it closer to biology.

2.1 Representation and assumptions

Let \(\varOmega \) be the set of all possible genotypes representing organisms. This set is usually equipped with a metric \(d:\varOmega \times \varOmega \rightarrow [0,\infty )\) related to the mutation operator, such that large mutations correspond to large distance d(a, b) and vice versa. For example, the set of all DNA sequences of length \(l\in \mathbb {N}\) can be represented by vectors in the Hamming space \(\mathcal {H}_\alpha ^l:=\{1,\ldots ,\alpha \}^l\) equipped with the Hamming metric \(d_H(a,b)=\sum _{i=1}^l\delta _{a_i}(b_i)\) counting the number of different letters in two strings. This choice of metric is particularly suitable for a simple point-mutation, which will be the focus of this paper. A sphere S(a, r) and a closed ball B[a, r] of radius \(r\in [0,\infty )\) around \(a\in \varOmega \) are defined as usual:

We refer to \(r=d(a,b)\) as the mutation radius.

Environment defines a preference relation \(\lesssim \) (a total pre-order) so that \(a\lesssim b\) means genotype b represents an organism that is better adapted to or has a higher replication rate in a given environment than an organism represented by genotype a. We shall consider only countable or even finite \(\varOmega \), so that there always exists a real function \(f:\varOmega \rightarrow \mathbb {R}\) such that

In game theory, such a function is called utility, but in the biological context it is called fitness, and usually it is assumed to have non-negative values representing replication rates of the organisms. The non-negativity assumption is not essential, however, because the preference relation \(\lesssim \) induced by f does not change under a strictly increasing transformation of f. Thus, our interpretation of fitness simply as a numerical representation of a preference relation is distinct from population genetic definitions of fitness (e.g. see Orr 2009). We shall assume also that there exists a top (optimal) genotype \(\top \in \varOmega \) such that \(f(\top )=\sup f(\omega )\), which represents the most adapted or quickly replicating organism. Note that a finite set \(\varOmega \) always contains at least one top \(\top \) as well as at least one bottom element \(\bot \).

Generally, one should consider also the set of all environments (including other organisms), because different environments impose different preference relations on \(\varOmega \), which have to be represented by different fitness functions. In this paper, however, we shall assume that fitness in any particular environment has been fixed.

During replication, genotype a can mutate into b with transition probability \(P(b{\,\mid \,} a)\). Mutation can have different effects on fitness: It can be deleterious, if \(f(a)>f(b)\); neutral, if \(f(a)=f(b)\); or beneficial, if \(f(a)<f(b)\).

In this section, we consider a simple picture \(f(\omega )=-d(\top ,\omega )\), so that maximization of fitness \(f(\omega )\) is equivalent to minimization of distance \(d(\top ,\omega )\), and adaptation (beneficial mutation) corresponds to a transition from a sphere of radius \(n=d(\top ,a)\) into a sphere of a smaller radius \(m=d(\top ,b)\), which is depicted in Fig. 1. This geometric view of mutation and adaptation is based on Ronald Fisher’s idea (Fisher 1930), which was, perhaps, the earliest mathematical work on the role of mutation in adaptation. Fisher represented individual organisms by points of Euclidean space \(\mathbb {R}^l\) of \(l\in \mathbb {N}\) traits, and equipped with the Euclidean metric \(d_E(a,b)=(\sum _{i=1}^l |b_i-a_i|^2)^{1/2}\). The top element \(\top \) was identified with the origin in \(\mathbb {R}^l\), and fitness \(f(\omega )\) with the negative distance \(-d_E(\top ,\omega )\). Then Fisher used the geometry of the Euclidean space to show that the probability of beneficial mutation decreases exponentially as the mutation radius increases, and therefore mutations of small radii are more likely to be beneficial. Despite subsequent development of the theory (Orr 2005), the use of Euclidean space for representation was not revised.

Euclidean space is unbounded (and therefore non-compact) and the interior of any ball has always smaller volume than its exterior. Therefore, assuming mutation in random directions, a point on the surface of a ball around an optimum is always more likely to mutate into the exterior than the interior of this ball. This simple property is key for Fisher’s conclusion that adaptation is more likely to occur by small mutations. We showed previously, however, that the geometry of a finite space, such as the Hamming space of strings, implies a different relation between the radius of mutation and adaptation (Belavkin et al. 2011; Belavkin 2011). In particular, the mutation radius maximising the probability of adaptation varies as a function of the distance to the optimum.

2.2 Probability of adaptation in a Hamming space

Consider mutation of genotype \(a\in S(\top ,n)\) in a Hamming space \(\mathcal {H}_\alpha ^l\) into \(b\in S(\top ,m)\) with mutation radius \(r=d(a,b)\), as shown on Fig. 1. Assuming equal probabilities for all points in the sphere S(a, r), the probability that the offspring is in the sphere \(S(\top ,m)\) is given by the number of points in the intersection of spheres \(S(\top ,m)\) and S(a, r):

where \(|\cdot |\) denotes cardinality of a set (the number of its elements). The cardinality of the intersection \(S(\top ,m)\cap S(a,r)\) with condition \(d(\top ,a)=n\) is computed as follows:

where summation runs over indexes \(r_+\in [0,\lfloor (n-|r-m|)/2\rfloor ]\) and \(r_-\in [0,\lfloor (r+m-n)/2\rfloor ]\) (here \(\lfloor \cdot \rfloor \) denotes the floor operation) and satisfying conditions \(r_0+r_-+r_+=\min \{r,m\}\) and \(r_+-r_-=n-\max \{r,m\}\). See Appendix 1 for the derivation of this combinatorial result. We point out that for \(r\le m\), the indexes \(r_+\), \(r_-\) and \(r_0\) count respectively the numbers of beneficial, deleterious and neutral substitutions in \(r\in [0,l]\).

The cardinality of sphere \(S(a,r)\subset \mathcal {H}_\alpha ^l\) is

Equations (1)–(3) allow us to compute the probability of adaptation, which is the probability that the offspring is in the interior of ball \(B[\top ,n]\):

Figure 2 shows the probability of adaptation for Hamming space \(\mathcal {H}_4^{100}\) as a function of mutation radius r for different values of \(n=d(\top ,a)\). One can see that when \(n<75\) (more generally when \(n<l(1-1/\alpha )\)), the probabilities of adaptation decrease with increasing radius \(r>0\), similar to Fisher’s conclusion for the Euclidean space. However, for \(n=75\) there is no such decrease, and when \(n>75\) (i.e. for \(n>l(1-1/\alpha )\)), the probability of adaptation actually increases with r. This is due to the fact that, unlike Euclidean space, Hamming space is finite, and the interior of ball \(B[\top ,n]\) can be larger than its exterior. The geometry of a Hamming space has a number of interesting properties (Ahlswede and Katona 1977). For example, every point \(\omega \) has \((\alpha -1)^l\) diametric opposite points \(\lnot \omega \), such that \(d_H(\omega ,\lnot \omega )=l\), and the complement of a ball \(B[\omega ,r]\) in \(\mathcal {H}_\alpha ^l\) is the union of \((\alpha -1)^l\) balls \(B[\lnot \omega ,l-r-1]\).

2.3 Random mutation

By mutation we understand a random process of transforming the parent string a into offspring b, so that the mutation radius is a random variable. The simplest form of mutation, called point mutation, is the random process of independently substituting each letter in the parent string \(a\in \{1,\ldots ,\alpha \}^l\) to any of the other \(\alpha -1\) letters with probability \(\mu \). At its simplest, with one parameter, there is an equal probability \(\mu /(\alpha -1)\) of mutating to each of the \(\alpha -1\) letters. The parameter \(\mu \) is called the mutation rate. For point mutation, the probability of mutating by radius \(r\in [0,l]\) is given by the binomial distribution:

We assume that the mutation rate \(\mu \) may depend on the distance \(n=d(\top ,a)\) from the top string n, and therefore the probability is also conditional on n.

Optimisation of the mutation rate requires knowledge of the probability \(P_\mu (m\mid n)\) that mutation of a into b leads to a transition from sphere \(S(\top ,n)\) into \(S(\top ,m)\). This transition probability can be expressed as follows:

Substituting (1) and (5) into the above equation, and taking into account (3), we obtain the following expression:

where the number \(|S(\top ,m)\cap S(a,r)|_{d(\top ,a)=n}\) is given by Eq. (2). The case \(\alpha =2\) was investigated previously by several authors (e.g. Bäck 1993; Braga and Aleksander 1994). The expressions for arbitrary alphabets were first presented in (Belavkin et al. 2011) (see also Belavkin 2011).

We note that simple, one parameter point mutation is optimal in a certain sense: it is the solution of a variational problem of minimisation of expected distance between points a and b in a Hamming space subject to a constraint on mutual information between a and b (see Belavkin 2011, 2013). The constraint on mutual information between strings a and b represents the fact that perfect copying is not possible. The optimal solutions to this problem are conditional probabilities having exponential form \(P_\beta (b\mid a)\propto \exp [-\beta \,d(a,b)]\), where parameter \(\beta >0\), called the inverse temperature, is related to the mutation rate, and it is defined from the constraint on mutual information. The reason why this exponential solution in the Hamming space corresponds to independent substitutions with the same probability \(\mu /(\alpha -1)\) is because Hamming metric is computed as the sum \(d_H(a,b)=\sum _{i=1}^l\delta _{a_i}(b_i)\) of elementary distances \(\delta _{a_i}(b_i)\) between letters \(a_i\) and \(b_i\) in ith position in the string, and the values \(\delta _{a_i}(b_i)\) are equal to zero or one independent of the specific letters of the alphabet or their position i. Other, more complex mutation operators, which incorporate multiple parameters or non-independent substitutions (the phenomenon known in biology as epistasis) can be considered as optimal solutions of the same variational problem, but applied to a different representation space \(\mathcal {H}\) with a different metric.

2.4 Optimal control of mutation rates

The fact that the transition probability \(P_\mu (m\mid n)\), defined by Eq. (6), depends on the mutation rate \(\mu \) introduces the possibility of organisms maximising the expected fitness of their offspring by controlling the mutation rate. We call the collection of pairs \((n,\mu )\) the mutation rate control function \(\mu (n)\). Indeed, let \(P_t(a)\) be the distribution of parent genotypes in \(\mathcal {H}_\alpha ^l\) at time t, and let \(P_t(n)=\sum _{a:d(\top ,a)=n}P_t(a)\) be the distribution of their distances \(n=d_H(\top ,a)\) from the optimum. Transition probabilities \(P_\mu (m\mid n)\) define a linear transformation \(T_{\mu (n)}(\cdot ):=\sum _{n=0}^lP_\mu (m\mid n)(\cdot )\) of distribution \(P_t(n)\) into distribution \(P_{t+1}(m)\) of distances \(m=d_H(\top ,b)\) of their offspring at time \(t+1\):

If this transformation does not change with time, then the distribution \(P_{t+s}(m)\) after s generations is defined by \(T_{\mu (n)}^s\), the sth power of \(T_{\mu (n)}\). The optimal mutation rates can be found (at least in principle) by minimising the expected distance subject to additional constraints, such as the time horizon \(\lambda \):

For example, mutation rates minimising the expected distance at \(\lambda =1\) generation should depend on n according to the following step function:

This function is shown on Fig. 3 for Hamming space \(\mathcal {H}_4^{10}\). The sudden change of the optimal mutation rate from \(\mu =0\) at \(n<l(1-1/\alpha )\) to \(\mu =1\) at \(n>l(1-1/\alpha )\) corresponds to the sudden change of the effect of the mutation radius on the probability of adaptation shown on Fig. 2. Note that this mutation control function is not optimal for minimisation of the expected distance up to \(\lambda >1\) generations, because strings that are closer to the optimum than \(l(1-1/\alpha )\) do not mutate, so that there is no chance of improvement.

Different optimal mutation rate control functions derived mathematically to optimise different criteria in Hamming space \(\mathcal {H}_4^{10}\): step function minimising expected distance to optimum in one generation, linear function maximising probability of mutating directly into optimum, a function maximising conditional probability \(P(m<n\mid n)\) that an offspring is closer to optimum than its parent, cumulative distribution function \(P_0(m<n)=\sum _{m=0}^{n-1}{l \atopwithdelims ()m}\frac{(\alpha -1)^m}{\alpha ^l}\) minimising expected distance to optimum subject to an information constraint (Belavkin 2012)

The variational problem for the optimal control of the mutation rate, such as problem (7), can be formulated in different ways optimising different criteria (e.g. instantaneous or cumulative expected distance, probability of adaptation, probability of mutating directly into the optimum) or taking into account additional constraints (e.g. the time horizon, information constraints), and generally they lead to different solutions. Previously, we investigated various types of such problems and obtained their solutions (Belavkin et al. 2011; Belavkin 2011, 2012), some of which are shown on Fig. 3. One can see that there is no single optimal mutation rate control function. However, it is also evident that all these control functions have a common property of monotonically increasing mutation rate with increasing distance from the optimum. The main question that we are interested in this paper is whether such monotonic control of mutation rate is beneficial in a broader class of landscapes, when fitness is not equivalent to distance. In Sect. 4, we shall further develop the theory from the simple case considered in this section to more general fitness landscapes and formulate hypotheses which will be tested in biological landscapes in Sect. 5. To generate data for this testing, we develop an evolutionary technique in Sect. 3 to obtain approximations to the optimal control functions in a broad class of problems, when the derivation of exact solutions is impractical or impossible.

3 Evolutionary optimisation of mutation rate control functions

Analytical approaches cannot always be applied to derive optimal mutation rate control functions due to high problem complexity. Moreover, when fitness is not equivalent to negative distance, the transition probabilities between fitness levels may be unknown, so that analytical solutions are impossible. Another approach is to use numerical optimisation to obtain approximately optimal solutions. In this section, we describe an evolutionary technique that uses two genetic algorithms. The first, which we refer to as the Inner-GA, evolves individual string with the mutation rate controlled by some function \(\mu (y)\) that maps fitness value \(y=f(\omega )\) of a string to its mutation rate \(\mu \in [0,1]\). The second, which we refer to as the Meta-GA, evolves a population \(\{\mu _1(y),\ldots ,\mu _n(y)\}\) of such mutation rate control functions for better performance of the Inner-GAs. Note that the Inner-GA can use any fitness function. In this section, we shall apply the technique to the case when fitness is equivalent to negative distance from an optimum (a selected point in a Hamming space). The purpose of this exercise is to demonstrate that the functions \(\mu (y)\) evolved by the Meta-GA have monotonic properties, similar to those possessed by the optimal mutation rate control function obtained analytically. Later we shall apply the technique to more general fitness landscapes.

3.1 Inner-GA

The Inner-GA is a simple generational genetic algorithm, where each genotype is a string in Hamming space \(\mathcal {H}_\alpha ^l\), and the optimal string is defined by a fitness function \(y=f(\omega )\). The initial population of 100 individuals had equal numbers of individuals at each fitness value, and they were evolved by the Inner-GA for 500 generations using simple point mutation. The mutation rates were controlled according to function \(\mu (y)\), specified by the Meta-GA, with fitness values as the input. In the experiments described, we used no selection and no recombination in order to isolate the effect on evolution of the mutation rate control from other evolutionary operators.

Note that the parameters of the Inner-GA (e.g. population size, the number of generations) were chosen empirically to satisfy two conflicting objectives: On one hand, the parameters should be large enough to get any sort of convergence at the Meta-GA level; on the other hand, the parameters should be small enough for the system to obtain satisfactory results in feasible time (in our case several months of run-time using a cluster of 72 GPUs).

3.2 Meta-GA

The Meta-GA is a simple generational genetic algorithm that uses tournament selection, which is known to be robust for fitness scores on arbitrary scales and shifts, and because of its suitability for highly parallel implementation. Each genotype in the Meta-GA is a mutation rate function \(\mu (y)\) of fitness values y. The domain of \(\mu (y)\) is an ordered partition of the range \(\{y:f(\omega )=y,\ \omega \in \mathcal {H}_\alpha ^l\}\) of the Inner-GA fitness function. Thus, individuals in the Meta-GA are strings of real values \(\mu \in [0,1]\) representing probabilities of mutation at different fitnesses, as used in the Inner-GA.

At each generation of the Meta-GA, multiple copies of the Inner-GA were evolved for 500 generations, with the mutation rate in each copy controlled by a different function \(\mu (y)\) taken from the Meta-GA population. We used populations of 100 individual functions, which were initialised to \(\mu (y)=0\). All runs within the same Meta-GA generation were seeded with the same initial population of the Inner-GA. The Meta-GA evolved functions \(\mu (y)\) for \(5\cdot 10^5\) generations to maximise the average fitness \(\bar{y}(t)\approx \mathbb {E}\{y\}(t)\) in the final generation of the Inner-GA.

The Meta-GA used the following selection, recombination and mutation methods:

-

Randomly select (without replacement) three individuals from the population and replace the least fit of these with a mutated crossover of the other two; repeat with the remaining individuals until all individuals from the population have been selected or fewer than three remain.

-

Crossover recombines the start of the numerical string representing one mutation rate function with the end of another using a single cut point chosen randomly, excluding the possibility of being at either end, so that there are no clones.

-

Mutation adds a uniform-random number \(\varDelta \mu \in [-.1,.1]\) to one randomly selected value \(\mu \) (mutation rate) on the individual mutation rate function, but then bounds that value to be within [0, 1].

The Meta-GA returns the fittest mutation rate function \(\mu (y)\). In this study, the parameters in the Meta-GA were not optimised, as this would probably take more computational time than conducting the study itself. However, given that Meta-GA converged to the same result, the only difference the parameters could make were how quickly the result was found.

3.3 Evolved control functions

The kind of mutation rate control function the Meta-GA evolves depends greatly on properties of the fitness landscape used in the Inner-GA. In Sect. 2.4 we showed theoretically that for \(f(\omega )\) corresponding to negative distance to optimum \(-d_H(\top ,\omega )\), the optimal mutation rate increases with \(n=d_H(\top ,\omega )\). Therefore, the population of mutation rate functions in the Meta-GA should evolve the same characteristics in such a landscape. Figure 4 shows the average and standard deviations of the fittest control functions evolved in 20 runs of the Meta-GA using Inner-GAs with strings in \(\mathcal {H}_4^{10}\) (i.e. \(\alpha =4\), \(l=10\)) and fitness defined by \(f(\omega )=-d_H(\top ,\omega )\). As predicted, the mutation rate increases with \(n=d_H(\top ,\omega )\). We shall now consider more complex landscapes.

4 Weakly monotonic fitness landscapes

The derivation of variable (or adaptive) optimal mutation rates described in Sect. 2.4 was based on the assumption that fitness \(f(\omega )\) is equivalent to negative distance \(-d(\top ,\omega )\) from the top genotype. Biological fitness landscapes, however, can be rugged (Lobkovsky et al. 2011), meaning that fitness may have very little relation to distance in the space of genotypes. In this section, we consider a more general relation between fitness and distance to study the effect of variable mutation rates on adaptation in more biologically realistic landscapes. We begin by considering fitness as a noisy or partially observed distance, and then discuss monotonic relation between these ordered random variables. We introduce several notions of monotonicity and then prove a theorem on weak monotonicity in a general class of fitness landscapes.

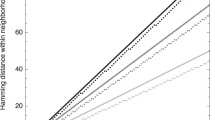

Means and standard deviations of mutation rates evolved to minimise expected distance to the optimum in Hamming space \(\mathcal {H}_4^{10}\) after 500 generations. The results are based on 20 runs of the Meta-GA, each evolving mutation rates for \(5\cdot 10^5\) generations. Each generation of the Meta-GA involved running the Inner-GAs for 500 generations with 100 individuals. Dashed lines represent theoretical functions optimising short-term (step) and long-term (linear) criteria

4.1 Fitness-distance communication

If fitness \(y=f(\omega )\) is not isomorphic with distance \(n=d(\top ,\omega )\), but there is some degree of dependency between the two variables, then one could try to estimate unobserved distance from observed values of fitness and employ the control function \(\mu (n)\) of mutation rate based on the estimated distance. Such a control becomes \(\varepsilon \)-optimal, where \(\varepsilon \) represents some deviation from optimality. The estimation of distance could be done sequentially using, for example, the filtering theory (Stratonovich 1959). Here, however, we shall limit our discussion to a simple case of using just the current fitness value \(y_t\) instead of current distance \(n_t\) to control the mutation rate.

Given a distribution \(P(\omega )\) of strings in \(\varOmega \) (e.g. a uniform distribution \(P(\omega )=\alpha ^{-l}\) on a Hamming space \(\mathcal {H}_\alpha ^l\)), the fitness \(y=f(\omega )\) and distance \(n=d(\top ,\omega )\) is a pair of random variables with joint distribution P(y, n). Note that if \(\varOmega \) has multiple optima \(\top \), then n should be understood as the distance from the nearest optimum: \(n=\inf \{d(\top ,\omega ):\top \in \varOmega \}\). Joint distribution P(y, n) defines conditional probabilities \(P(n\mid y)\) and \(P(y\mid n)\) by the Bayes formula. Mutation of string a into string b results in the change of distance from \(n_t=d(\top ,a)\) to \(n_{t+1}=d(\top ,b)\) and the change of fitness from \(y_t=f(a)\) to \(y_{t+1}=f(b)\). If fitness does not communicate more information about the distance than distance itself (i.e. fitness is a ‘noisy’ distance), then one can show that fitness and distance are conditionally independent: \(P(y_{t+1},y_t\mid n_{t+1},n_t)=P(y_{t+1}\mid n_{t+1})\,P(y_t\mid n_t)\) (see Remark 1 in Appendix 2). In this case, the transition probability \(P_\mu (y_{t+1}\mid y_t)\) between fitness values is expressed using the following composition of transition probabilities \(P(n_t\mid y_t)\), \(P_\mu (n_{t+1}\mid n_t)\) and \(P(y_{t+1}\mid n_{t+1})\):

(see Appendix 2 for details). The transition probability \(P_\mu (n_{t+1}\mid n_t)\) is defined by the geometry of the mutation operator in the space of genotypes \(\varOmega \), and for simple point mutation in a Hamming space it is given by Eq. (6). Conditional probabilities \(P(n_t\mid y_t)\) and \(P(y_{t+1}\mid n_{t+1})\) are defined by the fitness landscape, and they represent dependency between fitness and distance.

The simplest and, perhaps, the most important such relationship is linear dependency, represented by correlation. The fitness-distance correlation has been used previously to describe problem difficulty for evolutionary algorithms (Jones and Forrest 1995; Jansen 2001) and neutral mutations (Poli and Galvan-Lopez 2012). The fitness-distance correlation reflects global monotonic dependency between the pair of ordered random variables. In biological context, however, such a global measure of monotonicity may be less important, because biological organisms tend to populate some neighbourhoods of local optima of fitness landscapes due to selection. Thus, we define the concepts of local and weak monotonicity relative to a chosen metric. We shall also prove that all landscapes that are continuous and open at local optima are weakly monotonic. This result will allow us to formulate three hypotheses about control of mutation rates in biological landscapes, which we shall test experimentally in Sect. 5.

4.2 Monotonicity of fitness and distance

We first consider monotonic relationships between values of fitness function \(f:\varOmega \rightarrow \mathbb {R}\) at points a, \(b\in \varOmega \) and their distances to an arbitrary point \(\omega \) given by a metric \(d:\varOmega \times \varOmega \rightarrow \mathbb {R}_+\) (e.g. a Hamming metric in \(\mathcal {H}_\alpha ^l\)). If all a and b inside some ball \(B[\omega ,n]\), \(n>0\), satisfy the properties below, we say that:

-

f is locally monotonic relative to metric d at \(\omega \) if:

$$\begin{aligned} -d(\omega ,a)\le -d(\omega ,b)\quad \Longrightarrow \quad f(a)\le f(b) \end{aligned}$$ -

d is locally monotonic relative to f at \(\omega \) if:

$$\begin{aligned} -d(\omega ,a)\le -d(\omega ,b)\quad \Longleftarrow \quad f(a)\le f(b) \end{aligned}$$ -

f and d are locally isomorphic at \(\omega \) if both implications hold.

-

We say that d or f are globally monotonic (isomorphic) at \(\top \) relative to each other if the relevant property holds over \(B[\omega ,l]\equiv \varOmega \).

Schematic representation of monotonic properties described. Abscissae represent string space, ordinates represent fitness. a Fitness is monotonic relative to distance to optimum (fitness landscape can have ‘plateaus’); b distance to optimum is monotonic relative to fitness (landscape can have ‘cliffs’); c fitness and distance to optimum are isomorphic (neither cliffs nor plateaus are allowed)

The three monotonic relations between fitness and distance defined above are illustrated on Fig. 5. These cases represent idealised situations, because usually the value of distance does not define the value of fitness uniquely and vice versa. Indeed, the pre-image of distance \(d(\top ,\omega )=n\) is a sphere \(S(\top ,n):=\{\omega :d(\top ,\omega )=n\}\), and there can be strings with different fitness values within the sphere. Similarly, the pre-image of fitness \(f(\omega )=y\) is the set \(f^{-1}(y)=\{\omega :f(\omega )=y\}\), and strings within this set may have different distances from \(\top \). Thus, to describe monotonicity in realistic landscapes, one can modify the definitions by considering the ‘average’ (i.e. expected) fitness or distance within the sets. In particular, we shall denote the average fitness at distance \(d(\top ,\omega )=n\) and the average distance at fitness \(f(\omega )=y\) respectively as follows:

The above averages are particular cases of conditional expectations under the assumption of a uniform distribution \(P(\omega )=\alpha ^{-l}\) of strings in a Hamming space \(\mathcal {H}_\alpha ^l\). Appendix 3 gives definitions for arbitrary Borel probability measure on a metric space.

In what follows, we shall use specific notation \(\mathbb {E}[f(a)]\), with letter ‘a’ instead of \(\omega _n\), to denote average fitness at distance \(d(\top ,\omega )=d(\top ,a)\) (instead of \(d(\top ,\omega )=n\)). Similarly, we shall use notation \(\mathbb {E}[d(\top ,a)]\), with a specific letter ‘a’ instead of \(\omega _y\), to denote average distance at fitness \(f(\omega )=f(a)\) (instead of \(f(\omega )=y\)). Such notation is convenient to define average (mean) monotonicity. If all a and b inside some ball \(B[\omega ,n]\), \(n>0\), satisfy the properties below, we say that:

-

f is on average locally monotonic relative to metric d at \(\omega \) if:

$$\begin{aligned} -d(\omega ,a)\le -d(\omega ,b)\quad \Longrightarrow \quad \mathbb {E}[f(a)]\le \mathbb {E}[f(b)] \end{aligned}$$ -

d is on average locally monotonic relative to f at \(\omega \) if:

$$\begin{aligned} -\mathbb {E}[d(\omega ,a)]\le -\mathbb {E}[d(\omega ,b)]\quad \Longleftarrow \quad f(a)\le f(b) \end{aligned}$$ -

f and d are on average locally isomorphic at \(\omega \) if both implications hold.

If \(\omega \) is a local optimum in a finite space (e.g. a Hamming space), then fitness and distance are always locally monotonic relative to each other (and hence isomorphic) at least inside the ball \(B[\omega ,1]\) of radius one (otherwise, \(\omega \) cannot be a local optimum). However, if the size \(|\varOmega |\) of the space is large, then the neighbourhood becomes negligible, and therefore the notions of local monotonicity become less important in larger landscapes. For larger neighbourhoods one can speak only about the probability that the implications above are true for some pair of points a and b. Thus, for larger neighbourhoods we can define monotonicity in probability (or in measure). However, because monotonicity always holds with some (possibly zero) probability, and it holds trivially with probability one at each point (i.e. in a zero-radius ball \(B[\omega ,0]\)), we should make such a definition more useful by distinguishing, for example, landscapes, in which the probability of monotonicity gradually increases as points get closer to a local optimum. We refer to this notion as weak monotonicity.

Let \(\{\omega _n\}_{n\in \mathbb {N}}\) be a sequence of points such that the distances \(d(\omega ,\omega _n)\) converge to zero or fitness values \(f(\omega _n)\) converge to \(f(\omega )\). If a, \(b\in \{\omega _n\}_{n\in \mathbb {N}}\) of any such sequence satisfy the properties below, we say that:

-

f is weakly monotonic relative to metric d at \(\omega \) if:

$$\begin{aligned} \lim _{d(\omega ,b)\rightarrow 0} P\{-d(\omega ,a)\le -d(\omega ,b)\quad \Longrightarrow \quad \mathbb {E}[f(a)]\le \mathbb {E}[f(b)]\}=1 \end{aligned}$$ -

d is weakly monotonic relative to f at \(\omega \) if:

$$\begin{aligned} \lim _{f(b)\rightarrow f(\omega )} P\{-\mathbb {E}[d(\omega ,a)]\le -\mathbb {E}[d(\omega ,b)]\quad \Longleftarrow \quad f(a)\le f(b)\}=1 \end{aligned}$$ -

f and d are weakly isomorphic at \(\omega \) if both conditions hold.

Weak monotonicity is implied by the average local monotonicity in some ball \(B[\omega ,n]\) with \(n>0\), because the latter means that the implications above hold with probability one in \(B[\omega ,n]\). The average local monotonicity is in turn implied by the (strong) local monotonicity. The relation between the three notions is shown by the implications below:

Moreover, one may consider an increasing sequence of finite landscapes such that in the limit \(|\varOmega |\rightarrow \infty \) the landscape is modelled by a continuum metric space. In this case, fitness may fail to be monotonic at any point, including the global optimum, even if fitness is a continuous function. Indeed, it is well known that almost all continuous functions are nowhere differentiable, and therefore they are also nowhere monotonic (Banach 1931; Mazurkiewicz 1931). However, as will be shown by the theorem below, weaker monotonicity may still hold in such landscapes.

Like weak monotonicity, fitness-distance correlation can also be applied to infinite landscapes, including nowhere monotonic landscapes. However, while fitness-distance correlation describes global property of a landscape, weak monotonicity effectively describes a gradual increase of fitness-distance correlation in decreasing neighbourhoods of a point. Thus, although weak monotonicity is related to fitness-distance correlation, these notions are not equivalent. In fact, unlike fitness-distance correlation, weak monotonicity holds in a very broad class of landscapes, including infinite landscapes.

Theorem 1

Let \((\varOmega ,d)\) be a metric space equipped with a Borel probability measure P, and let \(f:\varOmega \rightarrow \mathbb {R}\) be P-measurable. Let \(\top \) be a local optimum: \(f(\top )=\sup \{f(\omega ):\omega \in E\subseteq \varOmega \}\). Then

- \((\Rightarrow )\) :

-

If f is continuous at \(\top \), then f is weakly monotonic relative to d at \(\top \).

- \((\Leftarrow )\) :

-

If f maps open balls \(B[\top ,\delta )\subseteq E\) to open intervals \((f(\top )-\varepsilon ,f(\top )]\), then d is weakly monotonic relative to f at \(\top \).

- \((\iff )\) :

-

If f satisfies both conditions then f and d are weakly isomorphic at \(\top \).

The proof of this theorem is given in Appendix 3, and it is based on the construction of a decreasing sequence \(\{\delta _n\}_{n\in \mathbb {N}}\) of radii \(\delta _n>0\) around \(\top \) for any increasing sequence \(\{f(\top )-\varepsilon _n\}_{n\in \mathbb {N}}\), which is guaranteed by continuity of f at \(\top \). Note that we used metric in the theorem, because metric spaces are well-understood, but the theorem and its proof can be reformulated in terms of a quasi-pseudometric. Every quasi-uniform space with countable base (and hence every corresponding topological space) is quasi-pseudometrisable (e.g. see Fletcher and Lindgren 1982, Theorem 1.5), which probably subsumes any topology on DNA or RNA structures (Stadler et al. 2001).

Weak monotonicity implies increasing probability of positive correlation between fitness and negative distance to a local or global optimum in decreasing neighbourhoods. This suggests that the fitness-based control \(\mu (y_t)\) of mutation rate in any continuous and open landscape should resemble the distance-based control \(\mu (n_t)\) in some neighbourhood of an optimum. This forms our first hypothesis:

Hypothesis 1

Optimal mutation rate increases with a decrease in fitness in some neighbourhood of an optimum for realistic fitness landscapes (e.g. biological landscapes), where fitness is not globally isomorphic to distance.

Further, the more monotonic the landscape, the more the optimal mutation rate control function will resemble theoretical functions derived and discussed in Sect. 2; this forms our second hypothesis:

Hypothesis 2

The larger the neighbourhood of weak monotonicity, the more mutation rate control may contribute to evolution towards high fitness.

We test these hypotheses in Sect. 5.

4.3 On the role of genotype-phenotype mapping

Mutation occurs at the microscopic level as a random change of a genotype, whereas fitness is defined by the interaction of an organism with its environment, and therefore is a property of the phenotype rather than genotype. If we denote by X the set of all phenotypes, then fitness of genotypes \(f:\varOmega \rightarrow \mathbb {R}\) can be factorised into a composition \(f=\varphi \circ \kappa \) of a genotype-phenotype mapping \(\kappa :\varOmega \rightarrow X\) and phenotypic fitness \(\varphi :X\rightarrow \mathbb {R}\). We use a function \(\kappa \) for genotype-phenotype mapping, because we assume for simplicity that one genotype cannot be decoded into two or more phenotypes. On the other hand, there are usually many genotypes corresponding to the same phenotype (Schuster et al. 1994). The genotype-phenotype mapping \(\kappa \) can be seen as a black-box model of DNA decoding via translation and transcription.

The set X of phenotypes is pre-ordered by the values of phenotypic fitness (\(x\lesssim _X z\) iff \(\varphi (x)\le \varphi (z)\)), while the set \(\varOmega \) of genotypes is pre-ordered by the values of distance from the nearest top genotype (\(a\lesssim _\varOmega b\) iff \(-d(\top ,a)\le -d(\top ,b)\)). It is clear from factorisation \(f=\varphi \circ \kappa \) that the relation between fitness f of genotypes and their distance from an optimum depends on monotonic properties of the genotype-phenotype mapping. For example, genotypic fitness is order-isomorphic with distance when the genotype-phenotype mapping satisfies the condition: \(a\lesssim _\varOmega b\) if and only if \(\kappa (a)\lesssim _X\kappa (b)\).

The factorisation \(f=\varphi \circ \kappa \) shows that part of the fitness function, specifically \(\kappa \), is property of an organism, and therefore a monotonic relation between fitness and distance can be an adaptive and evolving property. This forms our third hypothesis:

Hypothesis 3

The extent to which mutation rate control may contribute to the evolution of high fitness is itself a trait, which will evolve across biological organisms.

We analyse data that may support this hypothesis in Sect. 5.

5 Evolving fitness-based mutation rate control functions

In this section, we conduct a computational experiment using landscapes with biological origins to test the hypotheses arising from our theory in Sect. 4. We used the earlier described Meta-GA technique (see Sect. 3) to evolve approximately optimal functions for 115 published complete landscapes of transcription factor binding (Badis et al. 2009). This also allows us to establish the range of fitness values over which monotonicity of optimal mutation rate holds, quantifying the extent to which Hypothesis 1 holds for these biological landscapes. TFs have evolved over very long periods to bind to specific DNA sequences. The landscapes show experimentally measured strengths of interaction (DNA-TF binding score) between the double-stranded DNA sequences of length \(l=8\) of base pairs each and a particular transcription factor. Thus, we represent the set of all DNA sequences by Hamming space \(\mathcal {H}_4^8\) (i.e. \(\alpha =4\), \(l=8\)), and consider the DNA-TF binding score as their fitness, which is clearly different from the negative Hamming distance from the top string (a sequence with the maximum DNA-TF binding score).

5.1 Evolved control functions

We used the Meta-GA evolutionary optimisation technique, described in Sect. 3, to obtain for each landscape an approximately optimal mutation rate control function maximising the average DNA-TF binding score in the population (expected fitness) after 500 replications. Our experiments showed that 16 replicate runsFootnote 1 were sufficient to achieve satisfactory convergence in feasible time for each of the 115 transcription factor landscapes.

Examples of GA-evolved optimal mutation rate control functions. Data are shown for the transcription factors Srf, Glis2 and Zfp740. Each curve represents the average of 16 independently evolved optimal mutation rate functions on a particular transcription factor DNA-binding landscape (Badis et al. 2009). Error bars represent standard deviations from the mean. Similar curves for all 115 landscapes are shown in supplementary Fig. 9. The arrows indicate the monotonicity radius \(\varepsilon \), that defines an interval of fitness values below the maximum, where mutation rate monotonically increases

Figure 6 shows the average values and standard deviations of the evolved mutation rates for three transcription factors: Srf, Glis2 and Zfp740. Evolved functions for all landscapes are shown on Figure 9 in supplementary material. One can see that the evolved functions for each transcription factor landscape is approximately monotonic in the direction predicted: close to zero mutation at the maximum fitness, rising to high levels further from the maximum fitness value. This supports Hypothesis 1 as developed from the theory in Sect. 4.

Small standard deviations indicate good convergence to a particular control function. Observe that there is poor convergence at low fitness areas of the landscape that are poorly explored by the genetic algorithm. Once the mutation rate has peaked near the maximum value \(\mu =1\), the mutation rates tend to decrease and become chaotic. As will be shown in the next section, this occurs at lower fitness values at which the landscape is no longer monotonic (i.e. further from the peak of fitness).

5.2 Landscapes for transcription factors

The variation in the evolved mutation rate control function is clearly related to a variation in the properties of the landscapes. Our theoretical analysis suggests that the main property affecting mutation rate control is monotonicity of the landscape relative to a metric measuring the mutation radius. In particular, the radius of point-mutation is measured by the Hamming metric, and we shall look into the local and weak monotonic properties of the transcription factors landscapes relative to the Hamming metric.

Examples of fitness landscapes based on the binding score between DNA sequences and transcription factors (TF) from (Badis et al. 2009). Data are shown for the transcription factors: Srf, Glis2 and Zfp740. Lines connect mean values of the binding score shown as functions of the Hamming distance from the top string (a sequence with the highest DNA-TF binding score). Error bars represent standard deviations. Similar curves for all 115 landscapes are shown in supplementary Fig. 10

Figure 7 shows average DNA-TF binding scores within spheres \(S(\top ,n)\) around the optimal string as a function of Hamming distance \(n=d_H(\top ,\omega )\) from the optimum. Data is shown for three transcription factors: Srf, Glis2 and Zfp740. Lines connect average values at discrete distances for visualisation purposes. Error bars show standard deviations of the DNA-TF binding scores within the spheres. Distributions of fitness with respect to Hamming distance \(d_H(\top ,\omega )\) for all 115 transcription factors are shown on Figure 10 (supplementary material).

One can see from Fig. 7 that the landscape for the Srf factor has monotonic properties: the average values increase steadily for strings that are closer to the optimum, and the deviations from the mean within the spheres are relatively small. This is in contrast to the other two landscapes. We note also that the average values for Glis2 decrease quite sharply around the optimum, while the landscape for Zfp740 has a relatively flat plateau area around the optimum, which means that there are many sequences with high DNA-TF binding score. This difference may explain different gradients of optimal mutation rates near the maximum fitness shown on Fig. 6.

Linear relation between monotonicity of the landscapes measured by the Kendall’s \(\tau \) correlation (ordinates) and the monotonicity radius \(\varepsilon \) (abscissae) of the corresponding evolved mutation rate control functions. Three labels show data for three transcription factors shown in Figs. 6 and 7

5.3 Monotonicity and controllability

Our results have confirmed that the evolved optimal mutation rates rise from zero to very high levels as fitness decreases from the maximum value \(f(\top )\) to some value \(f(\top )-\varepsilon \) (see Fig. 6 and supplementary Fig. 9). We refer to the corresponding value \(\varepsilon >0\) as the monotonicity radius, as it defines the neighbourhood of \(\top \) in terms of fitness values in which the evolved mutation rate control function has monotonic properties. We find substantial variation in monotonicity radius among transcription factors.

We hypothesised that the variation in the optimal mutation rate control functions relates to variation in the monotonicity of the transcription factor landscapes (Hypothesis 2). Various measures have been proposed for the roughness of biological landscapes (Lobkovsky et al. 2011). Here we focus on Kendall’s \(\tau \) correlation, which is directly concerned with monotonicity; specifically, \(\tau \) measures the proportion of mutations that, in moving closer to the optimum in string space, also increase in fitness. As shown in Fig. 8, we find that the value of \(\tau \) of the landscape does indeed have a relationship with the monotonicity radius \(\varepsilon \) of the evolved mutation rate control functions (Spearman’s \(\rho = 0.77\), \(P \approx 10^{-16}\), \(N=115\)), supporting Hypothesis 2.

Finally, we investigated whether these related features of the TF landscapes and mutation rate functions themselves relate to the biological evolution of these TF systems. To test this we looked at the evolutionary origins of the TF families, to which the 115 TFs tested above belonged, using an integer scale indicating key splits in the tree of eukaryotic life (Weirauch and Hughes 2011). We find a significant relationship between this scale of biological evolution and the monotonicity radius \(\varepsilon \) (Spearman’s \(\rho = 0.21\), \(P = 0.021\), \(N = 115\)). This indicates that TFs in families that originated more recently (e.g. in families restricted to Deuterostomes, rather than being present across all eukaryotic life) tend to have broader regions over which the optimal mutation rate monotonically increases with distance from the binding optimum. This is consistent with Hypothesis 3, indicating that the extent to which mutation rate control may contribute to the evolution of high fitness itself evolves through the tree of life.

6 Discussion

In this paper we have developed and tested theory relating to the control of the mutation rate in biological sequence landscapes. To do so, we had to move the theory closer to the biology in three ways. Firstly (in Sect. 2), we generalised Fisher’s geometric model of adaptation, from its Euclidean space (continuous and infinite) to a discrete, finite Hamming space of strings. Doing so demonstrated that, in contrast to the behaviour in Euclidean space, where the probability of beneficial mutation behaves similarly at different distances from the optimum (Orr 2003), the probability of beneficial mutation, for a given mutation size, varies markedly depending on the distance from the optimum (Fig. 2). Secondly, we analytically derived functions for optimal control of the mutation rate minimising the expected Hamming distance to a particular point (optimal string). We also demonstrated a variation of these control functions dependent on specific formulations of the optimisation problem. Nonetheless we observed consistency: all optimal functions increase monotonically (Fig. 3). Thirdly, we developed theory concerning monotonic properties of fitness landscapes and establishing sufficient conditions of weak monotonicity. The theory demonstrated that all biological landscapes over discrete spaces, however rugged, are characterised by monotonic properties in some neighbourhood of the optimum. Therefore, optimal solutions to the geometric problem of optimal mutation rate control based on distance can be applied more broadly to problems of \(\varepsilon \)-optimal control of mutation rate based on fitness in biological systems.

Empirical biological fitness landscapes mapping genotypes to fitness values within a small, defined, subset of genotypic space are becoming increasingly available (de Visser and Krug 2014). Here we use the test case of the affinities of 115 different transcription factors for all possible eight base-pair DNA sequences (Badis et al. 2009). We used these landscapes to test hypotheses arising from the theory, relating to the nature of optimal mutation rate functions (Hypothesis 1; Figs. 6, 7, 8). In each case we find evidence to support the hypothesis, consistent with the idea that our theory is not only correct, but, as expected, substantively relevant to such biological fitness landscapes.

Given that we find this theory to be relevant to biological fitness landscapes, we need to ask how it might manifest itself within biology. There are several requirements if biological organisms are to exert any approximation to optimal mutation rate control. The first requirement is variation in mutation rate. There is evidence for abundant variation in biological mutation rates, both across species (Sung et al. 2012) and among populations of a species (Bjedov et al. 2003). Variation is therefore possible. However, for this theory to be relevant, that variation needs to be controllable by the organism. This in turn requires that mutation rate varies right down to the level of an individual genotype, i.e. mutation rate plasticity (MRP). There is evidence for MRP in ‘stress-induced mutagenesis’ (Galhardo et al. 2007) and related phenomena, such as the increased number of mutations in sperm from older males (Kong et al. 2012). However, while this constitutes MRP, the possibility of control requires that this plasticity is not merely the inevitable result of an organism’s environment (e.g. the accumulation of damage with time or due to stress factors), but controllable by the organism in response to that environment. The proximate and ultimate causes of stress-induced mutagenesis are much debated, but that they include any form of ‘control’ is far from clear (MacLean et al. 2013). Clearer evidence of control is, however, present in a novel example of MRP we described recently (Krašovec et al. 2014). In this case, there is environmentally dependent MRP that can be switched on or off by the presence or absence of a particular gene (luxS).

The next requirement for a biological analogue of the theory described here is that control of the mutation rate may be exercised as a decreasing function of fitness. This requires that an organism can somehow assay its own fitness. This is a non-trivial requirement in that fitness is a function of one or more generations of an organism’s offspring, not of an organism itself. Various proxies are conceivable that might give an organism an indication of its fitness. These include counting its offspring relative to some internal or external clock, counting the population as a whole, or testing aspects of the environment that may correlate with the future likelihood of offspring. The last of these could include stressors, meaning that stress-induced mutagenesis might meet this requirement. In our recently identified example, the aspect of the environment with which mutation rate varies is the density of a bacterial culture. Population density can act as a good proxy for fitness in some circumstances (e.g. in a fixed volume bacterial culture), and the mutation rate does indeed decrease with increasing density (Krašovec et al. 2014), consistent with the fitness-associated control of mutation rate we here determine to be optimal.

The final requirement for the existence of biological mutation-rate control of the sort addressed here is that it is possible for it to evolve and be maintained by the processes of biological evolution. This is not trivial in that it involves the evolution of plasticity, which is not as straight-forward or common in biology as might be expected (Scheiner and Holt 2012). It also involves so-called ‘second-order selection’ (Tenaillon et al. 2001). This is because any particular mutation rate or MRP is unlikely to affect an individual’s fitness (and therefore selection) directly; rather, MRP must be selected for indirectly via the genetic effects it produces. Nonetheless, phenotypic plasticity occurs widely and, while rare, there are clear examples of second-order selection occurring in biology (Woods et al. 2011). Furthermore, here we demonstrate MRP rapidly evolving de novo to particular forms (Fig. 6). The genetic algorithm (GA) in this case was not created to mimic biology, and the group-selection used by the outer GA in particular is rather un-biological. However, others, working with explicitly biological population genetic models, also find the evolution of MRP (Ram and Hadany 2012). This implies that not only is the MRP predicted here possible for biological organisms, but it may reasonably be expected to evolve and be maintained. It remains to be tested whether the precise range and nature of the MRP identified by Krašovec et al. (2014) does indeed fulfil this role i.e. to enable populations to evolve faster and/or further in realised, whole organism biological fitness landscapes in a similar fashion to the evolutionary advantage seen for in silico, molecular interaction landscapes tested here (Fig. 6). Nonetheless, such density-dependent MRP (Krašovec et al. 2014) is a prime candidate for a biological manifestation of the mutation rate control which we have addressed here.

We have focused on fitness-associated control of mutation rate. However, mutation is only one evolutionary process where fitness-associated control may be beneficial. Recombination and dispersal are also evolutionary processes that may be under the control of the individual and therefore open to similar effects. Fitness-associated recombination has been demonstrated to be advantageous theoretically (Hadany and Beker 2003; Agrawal et al. 2005) and identified in biology (Agrawal and Wang 2008; Zhong and Priest 2011). Similarly, the idea that dispersal associated with low fitness might be advantageous has a basis in simulation of spatially differentiated populations (Aktipis 2004, 2011). This association might perhaps be framed more generally in terms of ‘fitness-associated dispersal’. Thus, the framework for control of mutation rate in response to fitness that we have developed here may in future be applicable to both recombination and dispersal.

Overall, our development of theory and testing its predictions in silico not only clarifies ideas around the monotonicity of fitness landscapes and mutation rate control, it leads directly to hypotheses about specific systems in living organisms. At the same time there is the potential for greater insight through further development of the theory. Three directions seem particularly likely to be fruitful.

First, while it is striking how effective mutation rate control is at enabling adaptive evolution, without invoking selection in our in silico experiments, it will be important to consider the role of selection strategies. Such strategies may implicitly modify fitness functions. For instance, one of the analytically derived functions shown in Fig. 3 is the mutation rate function for a DNA space (\(\mathcal {H}_4^{10}\)) which maximises the probability of adaptation (as derived by Bäck (1993) for binary strings). As outlined in Sect. 2.4, maximising the probability of adaptation is equivalent to maximising expected fitness of the offspring relative to its parent. This effect may be implicit in a selection strategy that removes the offspring of reduced fitness that will inevitably be produced by maximising offspring expected fitness. Given the importance of selection in biology, we therefore anticipate that such functions may be closer to mutation rate control functions in living organisms. This requires further work.

A second area for development is in variable adaptive landscapes. The importance of time-varying adaptive landscapes in biological evolution is becoming increasingly appreciated (Mustonen and Lassig 2009; Collins 2011) and variable mutation rates have a particular role here (Stich et al. 2010). It is worth noticing, however, that our derivation of optimal mutation rate functions is not dependent on a fixed landscape, as it depends only on the fitness values. Nonetheless, as we demonstrate for the transcription factor landscapes, variation in landscapes’ monotonic properties relates to the shape of mutation rate functions in predictable ways (Fig. 8). This deserves further exploration both theoretically and empirically: measuring variation in the monotonic properties of real biological landscapes will be informative about optimal mutation rate functions and vice versa.

Finally, there is potential to develop theory around the role of the genotype-phenotype mapping. Landscape monotonicity, as explored here, is not absolute; it may depend on this mapping. That is, if the decoding of DNA changes, it may be possible to convert a non-monotonic landscape into a monotonic one. Biology uses a variety of such decoding schemes which may themselves evolve. For the transcription factor landscapes used here, the decoding scheme is defined by the biochemical interactions between the transcription factor (a protein molecule) and DNA. Thus, evolution of transcription factors constitutes evolution of DNA-decoding, and indeed we do find a relationship between the evolutionary age of gene families and the monotonic properties of the associated landscapes. A more familiar example is the genetic code, where there is much existing work on its evolution (e.g. Freeland et al. 2000). Determining how evolution of such codes affects the monotonic properties of biological landscapes as explored here may, therefore, provide novel insights into large-scale evolutionary patterns. Ultimately, theory such as this that identifies analytically or empirically optimal mutation rate control functions may help make predictions about evolutionary responses to future environmental change (Chevin et al. 2010) or inferences about the environment(s) within which particular organisms evolved. In the meantime, mutation rate control as developed here may assist directed evolution within biological and other complex landscapes, for instance in the evolution of DNA-protein binding (Knight et al. 2009).

Notes

We used a multiple of 4 due to 4 GPUs used in one node.

References

Ackley DH (1987) An empirical study of bit vector function optimization. In: Davis L (ed) Genetic algorithms and simulated annealing, Pitman, chap 13, pp 170–204

Adams FC, Laughlin G (1997) A dying universe: the long-term fate and evolutionof astrophysical objects. Rev Mod Phys 69:337–372

Agrawal AF, Wang AD (2008) Increased transmission of mutations by low-condition females: evidence for condition-dependent DNA repair. PLoS Biol 6(2):e30

Agrawal AF, Hadany L, Otto SP (2005) The evolution of plastic recombination. Genetics 171(2):803–12

Ahlswede R, Katona G (1977) Contributions to the geometry of Hamming spaces. Discrete Math 17(1):1–22

Aktipis CA (2004) Know when to walk away: contingent movement and the evolution of cooperation. Journal of Theoretical Biology 231(2):249–60

Aktipis CA (2011) Is cooperation viable in mobile organisms? Simple walk away rule favors the evolution of cooperation in groups. Evol Human Behav Off J Human Behav Evol Soc 32(4):263–276

Bäck T (1993) Optimal mutation rates in genetic search. In: Forrest S (ed) Proceedings of the 5th international conference on genetic algorithms. Morgan Kaufmann, Burlington, pp 2–8

Badis G, Berger MF, Philippakis AA, Talukder S, Gehrke AR, Jaeger SA, Chan ET, Metzler G, Vedenko A, Chen X, Kuznetsov H, Wang CF, Coburn D, Newburger DE, Morris Q, Hughes TR, Bulyk ML (2009) Diversity and complexity in DNA recognition by transcription factors. Science 324(5935):1720–3

Banach S (1931) Über die Baire’sche kategorie gewisser funktionenmengen. Studia Math 3:174–179

Bataillon T, Zhang T, Kassen R (2011) Cost of adaptation and fitness effects of beneficial mutations in pseudomonas fluorescens. Genetics 189(3):939–49

Belavkin RV (2011) Mutation and optimal search of sequences in nested Hamming spaces. In: IEEE information theory workshop. IEEE, New York

Belavkin RV (2012) Dynamics of information and optimal control of mutation in evolutionary systems. In: Sorokin A, Murphey R, Thai MT, Pardalos PM (eds) Dynamics of information systems: mathematical foundations. In: Springer proceedings in mathematics and statistics, vol 20. Springer, Berlin, pp 3–21

Belavkin RV (2013) Minimum of information distance criterion for optimal control of mutation rate in evolutionary systems. In: Accardi L, Freudenberg W, Ohya M (eds) Quantum bio-informatics V, QP-PQ: quantum probability and white noise analysis, vol 30. World Scientific, Singapore, pp 95–115

Belavkin RV, Channon A, Aston E, Aston J, Knight CG (2011) Theory and practice of optimal mutation rate control in Hamming spaces of DNA sequences. In: Lenaerts T, Giacobini M, Bersini H, Bourgine P, Dorigo M, Doursat R (eds) Advances in artificial life, ECAL 2011: proceedings of the 11th European conference on the synthesis and simulation of living systems. MIT Press, Cambridge, pp 85–92

Bjedov I, Tenaillon O, Gerard B, Souza V, Denamur E, Radman M, Taddei F, Matic I (2003) Stress-induced mutagenesis in bacteria. Science 300(5624):1404–9

Böttcher S, Doerr B, Neumann F (2010) Optimal fixed and adaptive mutation rates for the leadingones problem. In: Schaefer R, Cotta C, Koodziej J, Rudolph G (eds) Parallel Problem Solving from Nature, PPSN XI, vol 6238. Lecture Notes in Computer ScienceSpringer, Berlin Heidelberg, pp 1–10

Braga ADP, Aleksander I (1994) Determining overlap of classes in the \(n\)-dimensional Boolean space. In: Neural networks, 1994. In: 1994 IEEE international conference on IEEE world congress on computational intelligence, vol 7, pp 8–13

Cervantes J, Stephens CR (2006) ‘Optimal’ mutation rates for genetic search. In: Cattolico M (ed) Proceedings of genetic and evolutionary computation conference (GECCO-2006). ACM, Seattle, pp 1313–1320

Chevin LM, Lande R, Mace GM (2010) Adaptation, plasticity, and extinction in a changing environment: towards a predictive theory. PLoS Biol 8(4):e1000,357

Collins S (2011) Many possible worlds: expanding the ecological scenarios in experimental evolution. Evol Biol 38(1):3–14

de Visser JA, Krug J (2014) Empirical fitness landscapes and the predictability of evolution. Nat Rev Genet 15(7):480–490

Eiben AE, Hinterding R, Michalewicz Z (1999) Parameter control in evolutionary algorithms. IEEE Trans Evol Comput 3(2):124–141

Falco ID, Cioppa AD, Tarantino E (2002) Mutation-based genetic algorithm: performance evaluation. Appl Soft Comput 1(4):285–299

Fisher RA (1930) The genetical theory of natural selection. Oxford University Press, Oxford

Fletcher P, Lindgren WF (1982) Quasi-uniform spaces. In: Lecture notes in pure and applied mathematics, vol 77. Marcel Dekker, New York

Fogarty TC (1989) Varying the probability of mutation in the genetic algorithm. In: Schaffer JD (ed) Proceedings of the 3rd International Conference on Genetic Algorithms, Morgan Kaufmann, pp 104–109

Freeland SJ, Knight RD, Landweber LF, Hurst LD (2000) Early fixation of an optimal genetic code. Mol Biol Evol 17(4):511–518

Galhardo RS, Hastings PJ, Rosenberg SM (2007) Mutation as a stress response and the regulation of evolvability. Crit Rev Biochem Mol Biol 42(5):399–435

Hadany L, Beker T (2003) On the evolutionary advantage of fitness-associated recombination. Genetics 165(4):2167–79

He J, Chen T, Yao X (2015) On the easiest and hardest fitness functions. IEEE Trans Evol Comput 19(2):295–305

Jansen T (2001) On classifications of fitness functions. In: Kallel L, Naudts B, Rogers A (eds) Theoretical aspects of evolutionary computing. Natural computing series. Springer, Berlin, pp 371–385

Jones T, Forrest S (1995) Fitness distance correlation as a measure of problem difficulty for genetic algorithms. In: Eshelman L (ed) Proceedings of the sixth international conference on genetic algorithms, San Francisco, pp 184–192

Kassen R, Bataillon T (2006) Distribution of fitness effects among beneficial mutations before selection in experimental populations of bacteria. Nat Genet 38(4):484–8

Kimura M (1980) Average time until fixation of a mutant allele in a finite population under continued mutation pressure: Studies by analytical, numerical, and pseudo-sampling methods. Proc Natl Acad Sci 77(1):522–526

Knight CG, Platt M, Rowe W, Wedge DC, Khan F, Day PJ, McShea A, Knowles J, Kell DB (2009) Array-based evolution of DNA aptamers allows modelling of an explicit sequence-fitness landscape. Nucl Acids Res 37(1):e6

Kong A, Frigge ML, Masson G, Besenbacher S, Sulem P, Magnusson G, Gudjonsson SA, Sigurdsson A, Jonasdottir A, Jonasdottir A, Wong WSW, Sigurdsson G, Walters GB, Steinberg S, Helgason H, Thorleifsson G, Gudbjartsson DF, Helgason A, Magnusson OT, Thorsteinsdottir U, Stefansson K (2012) Rate of de novo mutations and the importance of father’s age to disease risk. Nature 488(7412):471–475

Krašovec R, Belavkin RV, Aston JA, Channon A, Aston E, Rash BM, Kadirvel M, Forbes S, Knight CG (2014a) Where antibiotic resistance mutations meet quorum-sensing. Microbial Cell 1(7):250–252

Krašovec R, Belavkin RV, Aston JAD, Channon A, Aston E, Rash BM, Kadirvel M, Forbes S, Knight CG (2014b) Mutation rate plasticity in rifampicin resistance depends on escherichia coli cell-cell interactions. Nature Commun 5(3742)

Lobkovsky AE, Wolf YI, Koonin EV (2011) Predictability of evolutionary trajectories in fitness landscapes. PLoS Comput Biol 7(12):e1002,302

MacLean RC, Torres-Barcelo C, Moxon R (2013) Evaluating evolutionary models of stress-induced mutagenesis in bacteria. Nat Rev Genet 14(3):221–7

Mazurkiewicz S (1931) Sur les fonctions non dérivables. Studia Math 3:92–94

McDonald MJ, Cooper TF, Beaumont HJ, Rainey PB (2011) The distribution of fitness effects of new beneficial mutations in pseudomonas fluorescens. Biol Lett 7(1):98–100

Mustonen V, Lassig M (2009) From fitness landscapes to seascapes: non-equilibrium dynamics of selection and adaptation. Trends Genet 25(3):111–9

Nix AE, Vose MD (1992) Modeling genetic algorithms with Markov chains. Ann Math Artif Intell 5(1):77–88

Ochoa G (2002) Setting the mutation rate: scope and limitations of the \(1/l\) heuristics. In: Proceedings of genetic and evolutionary computation conference (GECCO-2002). Morgan Kaufmann, San Francisco, pp 315–322

Orr HA (1998) The population genetics of adaptation: the distribution of factors fixed during adaptive evolution. Evolution 52(4):935–949

Orr HA (2002) The population genetics of adaptation: the adaptation of DNA sequences. Evolution 56(7):1317–30

Orr HA (2003) The distribution of fitness effects among beneficial mutations. Genetics 163(4):1519–26

Orr HA (2005) The genetic theory of adaptation: a brief history. Nat Rev Genet 6(2):119–27

Orr HA (2009) Fitness and its role in evolutionary genetics. Nat Rev Genet 10(8):531–539

Poli R, Galvan-Lopez E (2012) The effects of constant and bit-wise neutrality on problem hardness, fitness distance correlation and phenotypic mutation rates. IEEE Trans Evol Comput 16(2):279–300

Ram Y, Hadany L (2012) The evolution of stress-induced hypermutation in asexual populations. Evol Int J Org Evol 66(7):2315–2328

Rokyta DR, Beisel CJ, Joyce P, Ferris MT, Burch CL, Wichman HA (2008) Beneficial fitness effects are not exponential for two viruses. J Mol Evol 67(4):368–376

Scheiner SM, Holt RD (2012) The genetics of phenotypic plasticity. x. Variation versus uncertainty. Ecol Evol 2(4):751–767

Schuster P, Fontana W, Stadler PF, Hofacker IL (1994) From sequences to shapes and back: a case study in RNA secondary structures. Proc R Soc Lond B Biol Sci 255(1344):279–284

Smith JM (1970) Natural selection and concept of a protein space. Nature 225(5232):563–564

Stadler BMR, Stadler PF, Wagner GP, Fontana W (2001) The topology of the possible: formal spaces underlying patterns of evolutionary change. J Theor Biol 213(2):241–274

Stich M, Manrubia SC, Lazaro E (2010) Variable mutation rates as an adaptive strategy in replicator populations. PLoS ONE 5(6):e11,186

Stratonovich RL (1959) On the theory of optimal non-linear filtration of random functions. Theory Probab Appl 4:223–225 (English translation)