Abstract

A distributed optimal control problem for a semilinear parabolic partial differential equation is investigated. The stability of locally optimal solutions with respect to perturbations of the initial data is studied. Based on different types of sufficient optimality conditions for a local solution of the unperturbed problem, Lipschitz or Hölder stability with respect to perturbations is proved. Moreover, a particular example with a semilinear equation, constant initial data, and a standard quadratic tracking-type objective functional is constructed that has at least two different locally optimal solutions. By the perturbation analysis, the existence of a problem with non-constant initial data is shown that also has at least two different locally optimal solutions.

1 Introduction

We consider the optimal control problem

where \(y_u\) denotes the solution of the semilinear parabolic Neumann problem

and the set of admissible controls \({\mathcal {U}}_{ad}\) is defined by

with numbers \(-\infty \le \alpha < \beta \le \infty \). We assume that \(\gamma \) and \(\kappa \) are nonnegative real numbers, and \(y_Q\), \(y_\Omega \), and f are given functions to be specified later.
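For orientation, a tracking-type formulation consistent with the data named above can be written as follows. This is a sketch rather than a quotation: the notation \(Q = \Omega \times (0,T)\) and \(\Sigma = \Gamma \times (0,T)\), the choice of the Laplacian as elliptic operator, and the normalization of the \(y_Q\) and \(y_\Omega \) terms are assumptions. Only the factor \(\frac{\kappa }{2}\) of the Tikhonov term is confirmed by the estimate used in Remark 2.4.

\[
\hbox {(P)} \qquad \min _{u \in {\mathcal {U}}_{ad}} J(u) := \frac{1}{2}\int _Q (y_u - y_Q)^2 \,dx\,dt + \frac{\gamma }{2}\int _\Omega (y_u(T) - y_\Omega )^2 \,dx + \frac{\kappa }{2}\int _Q u^2 \,dx\,dt,
\]

\[
\frac{\partial y}{\partial t} - \Delta y + f(x,t,y) = u \ \text{ in } Q, \qquad \partial _n y = 0 \ \text{ on } \Sigma , \qquad y(0) = y_0 \ \text{ in } \Omega ,
\]

\[
{\mathcal {U}}_{ad} = \{u \in L^\infty (Q) : \alpha \le u(x,t) \le \beta \ \text{ for a.a. } (x,t) \in Q\}.
\]

In this reading, the second display plays the role of the state equation cited as (1.1).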

We address two main issues. The first is the stability of selected local solutions of the optimal control problem with respect to a perturbation of the initial function \(y_0\). We select a fixed local minimizer \({{\bar{u}}}\) of the problem and estimate the distance to an associated local minimizer of the problem with perturbed initial function \(y_0 + \phi \), where \( \Vert \phi \Vert _{L^2(\Omega )}\) is small enough. Such stability results might be of interest for investigations of the value function in the context of feedback control, although the case \(T = \infty \) is needed there. We refer, for instance, to the recent contributions [2, 20, 27]. For feedback control, the unbounded case (\(\alpha = -\infty \), \(\beta = \infty \)) is important; it is allowed in our paper as a particular case.

In Sect. 3, the associated stability analysis is performed for Tikhonov parameter \(\kappa >0\) under a second order sufficient optimality condition imposed on \({{\bar{u}}}\). The main result of this section is Theorem 3.4 on Lipschitz stability of local solutions with respect to \(\phi \). In Sect. 4, we investigate the same issue for \(\kappa =0\), where the second order sufficient optimality condition of Sect. 3 cannot be expected to hold. Here, we apply a second order condition that is sufficient for strong local minimizers in the sense of calculus of variations. Under this second order condition, in Theorem 4.4 we derive Hölder stability of associated optimal states with respect to the perturbation. For the stability of strong locally optimal controls we invoke the known condition (4.13) on the level set of optimal adjoint states, cf. [14]. Under this assumption, in Theorem 4.6 we are able to prove Hölder or even Lipschitz stability of strong locally optimal controls.

To the best of our knowledge, these results are new. In the literature on optimal control of partial differential equations, several contributions to the stability analysis with respect to perturbations have been published; we mention [1, 11, 19, 21, 22, 23, 28, 29].

Moreover, we refer to the discussion of general control and optimization problems in [18] and to the case of optimal control problems for ODEs in [17]. However, we are not aware of works in which perturbations of the initial data were addressed in the context of PDE control. Moreover, the application of our type of critical cone to such problems is new. In the above-mentioned papers on the control of PDEs, perturbations appeared in the differential equation, in its boundary condition, in the objective functional, or in inequality constraints. Handling perturbations of the initial data is more complicated for a nonlinear state equation, in particular because bounded initial data are needed to have a differentiable control-to-state mapping.

In Sect. 5, we construct a particular example of (P) that has two different local minimizers. For optimal control problems with semilinear PDEs and quadratic objective, it was a longstanding open question whether more than one optimal solution can exist. Recently, in [24] this question was answered for a semilinear elliptic boundary control problem by constructing a problem with two different optimal solutions. The reader is also referred to [16], where the non-uniqueness of minimizers is established for abstract tracking type problems with quite general state equations generating non affine-linear control-to-state mappings.

While [16] and [24] prove the existence of problems with non-unique minimizers, they do not construct a concrete example. In our paper, we proceed in a different way and provide a concrete example with two different local minimizers. It uses the nonlinearity \(f(y) = y^3-y\). For a nonconvex objective functional, an example with two different global solutions was given in [4].

2 Assumptions and Preliminary Results

We impose the following assumptions on the problem \(\hbox {(P)}\):

(A1) \(\Omega \) is an open bounded set in \({\mathbb {R}}^n\), \(1 \le n \le 3\), with a Lipschitz boundary \(\Gamma \). The time T is finite, \(0< T < \infty \).

(A2) We assume that \(f:Q\times {\mathbb {R}}\rightarrow {\mathbb {R}}\) is a Carathéodory function of class \(C^2\) with respect to the last variable satisfying the following properties:

for almost all \((x,t) \in Q\).

(A3) Functions \(y_0 \in L^\infty (\Omega )\), \(y_Q \in L^{{{\hat{r}}}}(0,T;L^{{{\hat{s}}}}(\Omega ))\) for some \({{\hat{r}}},{{\hat{s}}}\ge 2\) with \(\frac{1}{{{\hat{r}}}} + \frac{n}{2{{\hat{s}}}}<1\), and \(y_\Omega \in L^\infty (\Omega )\) are given.

(A4) We assume that \(\kappa > 0\) or \(-\infty< \alpha< \beta < +\infty \).

Remark 2.1

In the quite common case \({\hat{p}} = {{\hat{q}}} \), the inequality in (2.2) amounts to \({{\hat{p}}} > \frac{n}{2} + 1\).
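The computation behind this remark is elementary. Assuming, by analogy with (A3), that the inequality in (2.2) reads \(\frac{1}{{\hat{p}}} + \frac{n}{2{\hat{q}}} < 1\), the choice \({\hat{p}} = {\hat{q}}\) turns the left-hand side into a single fraction:

\[
\frac{1}{{\hat{p}}} + \frac{n}{2{\hat{p}}} = \frac{n+2}{2{\hat{p}}} < 1 \quad \Longleftrightarrow \quad {\hat{p}} > \frac{n}{2} + 1 .
\]

For \(n = 1, 2, 3\) this means \({\hat{p}} > \frac{3}{2}\), \({\hat{p}} > 2\), and \({\hat{p}} > \frac{5}{2}\), respectively.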

Assumption (A2) is fulfilled in particular by any polynomial f of odd degree with a positive leading coefficient and by \(f(y) = e^y\). In particular, the function \(f(y) = (y-y_1)(y-y_2)(y-y_3)\) with fixed real numbers \(y_i, \, i=1,2,3\), satisfies our assumptions. This function, which appears in the so-called Schlögl model, will be used in Sect. 5.
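As a quick check for the cubic nonlinearity, suppose (as is customary for this class of problems, and assumed here) that (A2) asks for a lower bound on \(\partial f/\partial y\) and for local boundedness of the derivatives up to order two. Write \(e_1 = y_1 + y_2 + y_3\) and \(e_2 = y_1y_2 + y_1y_3 + y_2y_3\); these two symbols are introduced only for this illustration. Then

\[
f(y) = y^3 - e_1 y^2 + e_2 y - y_1y_2y_3, \qquad f'(y) = 3y^2 - 2e_1 y + e_2 \ge f'\Big (\frac{e_1}{3}\Big ) = e_2 - \frac{e_1^2}{3}, \qquad f''(y) = 6y - 2e_1 .
\]

Hence \(f'\) is bounded from below on \({\mathbb {R}}\), and \(f\), \(f'\), \(f''\) are bounded on every bounded interval. For \(y_1 = -1\), \(y_2 = 0\), \(y_3 = 1\), one obtains \(f(y) = y^3 - y\) and \(f'(y) \ge -1\).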

Throughout the paper, we use the standard space \(W(0,T) = L^2(0,T;H^1(\Omega )) \cap H^1(0,T;H^1(\Omega )^*)\).

Let us recall the following known results:

Theorem 2.1

Under the previous assumptions, for every \(u \in L^r(0,T;L^s(\Omega ))\) with \(\frac{1}{r} + \frac{n}{2s} < 1\) and \(r, s \ge 2\), there exists a unique solution \(y_u \in L^\infty (Q) \cap W(0,T)\) of (1.1). Moreover, the following estimates hold:

for a monotone non-decreasing function \(\eta :[0,\infty ) \longrightarrow [0,\infty )\) and some constant K both independent of u. Finally, if \(u_k \rightharpoonup u\) in \(L^r(0,T;L^s(\Omega ))\), then

holds.

The reader is referred to [5] and [6] for the proof of this result. Then, the mapping \(G:L^r(0,T;L^s(\Omega )) \longrightarrow L^\infty (Q) \cap W(0,T)\) given by \(G(u) = y_u\), the solution of (1.1), is well defined. The following differentiability properties of G are known:

Theorem 2.2

The mapping G is of class \(C^2\). For \(u,v,v_1,v_2 \in L^r(0,T;L^s(\Omega ))\), the derivatives \(z_v = G'(u)v\) and \(z_{v_1,v_2} = G''(u)(v_1,v_2)\) are the solutions of the equations

We refer to [5] for the proof of these theorems. Though the proof of Theorem 2.1 in [5] is performed for Dirichlet condition, the same arguments can be applied for the Neumann case with obvious modifications. In [5], the proof of Theorem 2.2 was carried out for \(s = 2\), but it remains valid for our setting of r and s.

As a consequence of Theorem 2.2 and the chain rule, we deduce the following result:

Corollary 2.1

The functional \(J:L^r(0,T;L^s(\Omega )) \longrightarrow {\mathbb {R}}\) is of class \(C^2\). Its first and second order derivatives are given by the expressions

where \(z_{v_i} = G'(u)v_i\), \(i = 1, 2\), and \(\varphi _u \in W(0,T) \cap L^\infty (Q)\) is the solution of the adjoint state equation
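For the reader's convenience, here is the form these objects usually take. The sketch assumes the tracking-type functional \(J(u) = \frac{1}{2}\Vert y_u - y_Q\Vert ^2_{L^2(Q)} + \frac{\gamma }{2}\Vert y_u(T) - y_\Omega \Vert ^2_{L^2(\Omega )} + \frac{\kappa }{2}\Vert u\Vert ^2_{L^2(Q)}\), the Laplacian as elliptic operator in (1.1), and the notation \(\Sigma = \Gamma \times (0,T)\); all three are assumptions, and the assignment to the numbers (2.8)–(2.12) is inferred from the way these numbers are cited in the text. The linearized equations for \(z_v\) and \(z = z_{v_1,v_2}\) would read

\[
\frac{\partial z_v}{\partial t} - \Delta z_v + \frac{\partial f}{\partial y}(x,t,y_u)\,z_v = v \ \text{ in } Q, \qquad \partial _n z_v = 0 \ \text{ on } \Sigma , \qquad z_v(0) = 0 \ \text{ in } \Omega ,
\]

\[
\frac{\partial z}{\partial t} - \Delta z + \frac{\partial f}{\partial y}(x,t,y_u)\,z + \frac{\partial ^2 f}{\partial y^2}(x,t,y_u)\,z_{v_1}z_{v_2} = 0 \ \text{ in } Q, \qquad \partial _n z = 0 \ \text{ on } \Sigma , \qquad z(0) = 0 \ \text{ in } \Omega ,
\]

the derivatives of \(J\) would be

\[
J'(u)v = \int _Q (\varphi _u + \kappa u)\,v \,dx\,dt,
\]

\[
J''(u)(v_1,v_2) = \int _Q \Big [1 - \frac{\partial ^2 f}{\partial y^2}(x,t,y_u)\,\varphi _u\Big ] z_{v_1}z_{v_2} \,dx\,dt + \gamma \int _\Omega z_{v_1}(T)z_{v_2}(T) \,dx + \kappa \int _Q v_1v_2 \,dx\,dt,
\]

and the adjoint state equation would be

\[
-\frac{\partial \varphi _u}{\partial t} - \Delta \varphi _u + \frac{\partial f}{\partial y}(x,t,y_u)\,\varphi _u = y_u - y_Q \ \text{ in } Q, \qquad \partial _n \varphi _u = 0 \ \text{ on } \Sigma , \qquad \varphi _u(T) = \gamma (y_u(T) - y_\Omega ) \ \text{ in } \Omega .
\]

The sign in front of \(\frac{\partial ^2 f}{\partial y^2}\varphi _u\) comes from testing the equation for \(z_{v_1,v_2}\) with \(\varphi _u\) and integrating by parts.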

Remark 2.2

Though J is neither differentiable nor well defined in \(L^2(Q)\) for \(n > 1\), the linear and bilinear forms \(J'(u)\) and \(J''(u)\) can be extended to continuous forms defined on \(L^2(Q)\) and \(L^2(Q) \times L^2(Q)\), respectively, by the same expressions (2.10) and (2.11).

Problem \(\hbox {(P)}\) is a non-convex problem in general; see [24]. Therefore, we will distinguish between local and global minimizers for \(\hbox {(P)}\).

Definition 2.1

Given \(r, s \in [1,\infty ]\), we say that \({{\bar{u}}}\) is an \(L^r(0,T;L^s(\Omega ))\)-local minimizer of \(\hbox {(P)}\), if \({{\bar{u}}} \in {\mathcal {U}}_{ad}\) and there exists an \(L^r(0,T;L^s(\Omega ))\) ball \(B_\varepsilon ({{\bar{u}}})\) such that \(J({{\bar{u}}}) \le J(u)\) \(\forall u \in {\mathcal {U}}_{ad}\cap B_\varepsilon ({{\bar{u}}})\). If this inequality is strict whenever \(u \ne {{\bar{u}}}\), then \({{\bar{u}}}\) is called an \(L^r(0,T;L^s(\Omega ))\)-strict local minimizer of \(\hbox {(P)}\). We say that \({{\bar{u}}}\) is a solution of \(\hbox {(P)}\) or a global minimizer if \({{\bar{u}}} \in {\mathcal {U}}_{ad}\) and \(J({{\bar{u}}}) \le J(u)\) \(\forall u \in {\mathcal {U}}_{ad}\).

Theorem 2.3

Problem \(\hbox {(P)}\) has at least one solution.

This result is well known if \(-\infty< \alpha< \beta < +\infty \). In the other case, due to Assumption (A4) we have that \(\kappa > 0\). Then, the existence of a solution for \(\hbox {(P)}\) is also true; see [4] or [8]. This is remarkable because the \(L^2(Q)\)-Tikhonov term implies the boundedness of minimizing sequences only in \(L^2(Q)\). This is not sufficient for dealing with the state equation, see Theorem 2.1.

From Corollary 2.1, the following well-known results are deduced; see, for instance, [10] or [12]:

Theorem 2.4

Let \({{\bar{u}}}\) be an \(L^r(0,T;L^s(\Omega ))\)-local minimizer of \(\hbox {(P)}\) with \(\frac{1}{r} + \frac{n}{2s} < 1\) and \(r, s \ge 2\). Then, there exist unique functions \({{\bar{y}}}, {{\bar{\varphi }}} \in W(0,T) \cap L^\infty (Q)\) such that

Moreover, the inequality \(J''({{\bar{u}}})v^2 \ge 0\) \(\forall v \in C_{{{\bar{u}}}}\) holds, where \(C_{{{\bar{u}}}}\) is the cone of critical directions defined by

This theorem provides the first and second order necessary conditions for local optimality. To establish our stability results of \(\hbox {(P)}\), we need sufficient second order conditions. They will be addressed in Sects. 3 and 4.

Remark 2.3

Observe that (2.15) implies

for every \(u \in L^2(Q)\) satisfying the control constraints. Indeed, it is enough to take into account that these controls u can be approximated in \(L^2(Q)\) by controls of \({\mathcal {U}}_{ad}\).

Remark 2.4

It is important to remark that there exists a constant \(K_U\) such that any global minimizer \({{\bar{u}}}\) for \(\hbox {(P)}\) satisfies \(\Vert {{\bar{u}}}\Vert _{L^\infty (Q)} \le K_U\). Indeed, this is obvious if \(-\infty< \alpha< \beta < +\infty \). If this is not the case, then from Assumption (A4) we have that \(\kappa > 0\) and we argue as follows: Let \(u_0 \in {\mathcal {U}}_{ad}\) be a fixed control. From the optimality of \({{\bar{u}}}\) we know that \(\frac{\kappa }{2}\Vert {{\bar{u}}}\Vert ^2_{L^2(Q)} \le J({{\bar{u}}}) \le J(u_0)\). This yields \(\Vert {{\bar{u}}}\Vert _{L^2(Q)} \le \sqrt{\frac{2}{\kappa }J(u_0)}\). Then, we get with (2.6)

Hence, invoking \(y_Q \in L^{{{\hat{r}}}}(0,T;L^{{{\hat{s}}}}(\Omega ))\) with \(\frac{1}{{{\hat{r}}}} + \frac{n}{2{{\hat{s}}}} < 1\) and \(y_\Omega \in L^\infty (\Omega )\), we infer from (2.14)

It is well known that (2.15) implies

Hence, the inequality \(\Vert {{\bar{u}}}\Vert _{L^\infty (Q)} \le \max \big \{\frac{C_3}{\kappa }, \alpha ^+,(-\beta )^+ \big \}\) follows.
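The last step can be checked numerically. The sketch below assumes that the projection formula has the usual form \({{\bar{u}}} = {\text {Proj}}_{[\alpha ,\beta ]}(-{{\bar{\varphi }}}/\kappa )\) and that \(|{{\bar{\varphi }}}| \le C_3\) holds pointwise. The function names `proj`, `pos`, `control_bound`, and `check_bound` as well as the sample values are purely illustrative.

```python
def proj(s, alpha, beta):
    """Pointwise projection of the real number s onto [alpha, beta]."""
    return min(max(s, alpha), beta)


def pos(a):
    """Positive part a^+ = max(a, 0)."""
    return max(a, 0.0)


def control_bound(c3, kappa, alpha, beta):
    """Candidate bound max{C_3/kappa, alpha^+, (-beta)^+} for |u|."""
    return max(c3 / kappa, pos(alpha), pos(-beta))


def check_bound(c3, kappa, alpha, beta, n=200):
    """Sample adjoint values in [-c3, c3] and test |Proj(-phi/kappa)| <= bound."""
    bound = control_bound(c3, kappa, alpha, beta)
    for i in range(n + 1):
        phi = -c3 + 2.0 * c3 * i / n
        u = proj(-phi / kappa, alpha, beta)
        if abs(u) > bound + 1e-12:
            return False
    return True


# Unbounded case, a box with alpha > 0, a box with beta < 0, and a mixed box.
cases = [(float("-inf"), float("inf")), (6.0, 8.0), (-5.0, -2.0), (-1.0, 2.0)]
print(all(check_bound(2.0, 0.5, a, b) for a, b in cases))
```

The terms \(\alpha ^+\) and \((-\beta )^+\) matter only when the interval \([\alpha ,\beta ]\) does not contain zero; otherwise the projection can only reduce the modulus of \(-{{\bar{\varphi }}}/\kappa \).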

Remark 2.5

(1) Let \({{\bar{u}}} \in {\mathcal {U}}_{ad}\) be a global minimizer for \(\hbox {(P)}\) and let \(u \in L^r(0,T;L^s(\Omega ))\) with \(r, s \ge 2\) and \(\frac{1}{r} + \frac{n}{2s} < 1\) satisfy the control constraints \(\alpha \le u(x,t) \le \beta \) for almost all \((x,t) \in Q\). Since \({\mathcal {U}}_{ad}\) was selected as a subset of \(L^\infty (Q)\), this control u is not necessarily admissible for \(\hbox {(P)}\). Can it happen that \(J(u) < J({{\bar{u}}})\)? The answer is no. Indeed, for every integer \(k \ge 1\) we set \(u_k(x,t) = {\text {Proj}}_{[-k,+k]}(u(x,t))\). Then \(u_k \in {\mathcal {U}}_{ad}\) holds for every \(k \ge \max \{\alpha ^+,(-\beta )^+\}\). The convergence \(u_k \rightarrow u\) in \(L^r(0,T;L^s(\Omega ))\) follows from Lebesgue’s dominated convergence theorem. Using the estimates (2.5) and (2.6), it is easy to prove that \(y_{u_k} \rightarrow y_u\) in W(0, T). From the optimality of \({{\bar{u}}}\), we get that \(J({{\bar{u}}}) \le J(u_k)\) for every such \(k\) and, consequently, \(J({{\bar{u}}}) \le \lim _{k \rightarrow \infty }J(u_k) = J(u)\).

(2) Assume now that \({{\bar{u}}} \in L^r(0,T;L^s(\Omega ))\) satisfies the control constraints and that \({{\bar{u}}}\) is a local minimizer for J in the following sense: there exists \(\rho > 0\) such that \(J({{\bar{u}}}) \le J(u)\) for every u satisfying the control constraints and such that \(\Vert u - {{\bar{u}}}\Vert _{L^r(0,T;L^s(\Omega ))} \le \rho \). Then, \({{\bar{u}}} \in L^\infty (Q)\) holds. Once again this is obvious if \(-\infty< \alpha< \beta < +\infty \). Otherwise, we observe that (2.10) leads to (2.15) and, hence, (2.17) is satisfied. Then, we can argue as in Remark 2.4 to deduce that \({{\bar{u}}} \in L^\infty (Q)\). These observations justify the selection of \({\mathcal {U}}_{ad}\) as a subset of \(L^\infty (Q)\).

3 Lipschitz Stability. Case \(\kappa > 0\)

Let \({{\bar{u}}} \in {\mathcal {U}}_{ad}\) satisfy the first order necessary optimality conditions (2.13)–(2.15). A sufficient condition for strict local optimality of \({{\bar{u}}}\) is the following

where the critical cone \(C_{{{\bar{u}}}}\) is defined above. Even more, the next theorem establishes that, under this assumption, the quadratic growth condition holds.

Theorem 3.1

Let us assume that \({{\bar{u}}} \in {\mathcal {U}}_{ad}\) satisfies the first order optimality conditions (2.13)–(2.15) and the second order condition (3.1). Then, given \(r, s \ge 2\) such that \(\frac{1}{r} + \frac{n}{2s} < 1\), it holds that

where \(B_\varepsilon ({{\bar{u}}})\) is the \(L^r(0,T;L^s(\Omega ))\)-ball. Therefore, \({{\bar{u}}}\) is a local minimizer in the sense of \(L^r(0,T;L^s(\Omega ))\). Moreover, if \(-\infty< \alpha< \beta < +\infty \), then (3.2) holds with \(r = s = 2\).

Proof

In the case \(-\infty< \alpha< \beta < +\infty \), the proof of (3.2) with \(r = s = 2\) can be found in [10]. In the other case, due to (A4) we have that \(\kappa > 0\). Then the proof follows the same steps as in [10] with some technical differences. Thus, we argue by contradiction: If (3.2) fails, then there exists a sequence \(\{u_k\}_{k = 1}^\infty \subset {\mathcal {U}}_{ad}\) such that

We set \(\rho _k = \Vert u_k - {{\bar{u}}}\Vert _{L^2(Q)}\) and \(v_k = \frac{1}{\rho _k}(u_k - {{\bar{u}}})\). By taking a subsequence, we can assume that \(v_k \rightharpoonup v\) in \(L^2(Q)\). Then, the proof follows as in [10]. The differences concern the proof of the following facts:

where \(\theta _k \in [0,1]\). To prove this, we first observe that \({{\bar{u}}} + \rho _kv_k = u_k \rightarrow {{\bar{u}}}\) and \( {{\bar{u}}} + \theta _k\rho _kv_k = {{\bar{u}}} + \theta _k(u_k - {{\bar{u}}}) \rightarrow {{\bar{u}}}\) strongly in \(L^r(0,T;L^s(\Omega ))\). Hence, from Theorem 2.1 we get \(y_k \rightarrow {{\bar{y}}}\) and \(y_{\theta _k} \rightarrow {{\bar{y}}}\) strongly in \(L^\infty (Q) \cap W(0,T)\). Denote by \(\varphi _k\) and \(\varphi _{\theta _k}\) the adjoint states corresponding to \(u_k\) and \({{\bar{u}}} + \theta _k(u_k - {{\bar{u}}})\), respectively. Subtracting the equations satisfied by them and invoking (2.3), it is easy to deduce that \(\varphi _k \rightarrow {{\bar{\varphi }}}\) and \(\varphi _{\theta _k} \rightarrow {{\bar{\varphi }}}\) strongly in \(L^\infty (Q) \cap W(0,T)\). Finally, looking at the equation satisfied by \(z_{k,{v_k}} = G'({{\bar{u}}} + \theta _k(u_k - {{\bar{u}}}))v_k\), it is easy to confirm that \(z_{k,{v_k}} \rightharpoonup z_v\) in W(0, T), where \(z_v\) is the solution of (2.8) for \(y_u = {{\bar{y}}}\). Therefore, \(z_{k,{v_k}} \rightarrow z_v\) strongly in \(L^2(Q)\). Combining all these convergence properties and recalling the expressions for the derivatives of J, (2.10) and (2.11), we readily confirm the desired convergences. \(\square \)

Let us point out that, as proved in [10], the condition (3.1) is equivalent to

where

Now, we consider perturbations in the initial condition of (1.1) leading to a family of perturbed optimal control problems \((\hbox {P}\!_\varepsilon )\). Let \(\{\phi _\varepsilon \}_{\varepsilon > 0} \subset L^\infty (\Omega )\) be a family of functions satisfying

Let us comment on this class of admissible perturbations: We need \(\phi _\varepsilon \in L^\infty (\Omega )\) to have associated states in \(L^\infty (Q)\). Otherwise we cannot prove the differentiability of the control-to-state mapping that is needed for first and second order optimality conditions. The selection of the \(L^2\)-norm in (3.7) is to obtain better and more practical perturbation results. In practice, perturbations are bounded in \(L^\infty (\Omega )\). However, the requirement \(\lim _{\varepsilon \rightarrow 0}\Vert \phi _\varepsilon \Vert _{L^\infty (\Omega )} = 0\) is too strong. For instance, let \(\Omega _\varepsilon \subset \Omega \) be a sequence of measurable subsets with \(|\Omega _\varepsilon | \rightarrow 0\) and \(\phi _\varepsilon = \delta _\varepsilon \chi _{\Omega _\varepsilon }\) with \(\{\delta _\varepsilon \}_{\varepsilon > 0} \subset {\mathbb {R}}\) bounded. This sequence of perturbation functions converges to zero in \(L^2(\Omega )\), as required by (3.7), but in general not in the norm of \(L^\infty (\Omega )\).
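A one-dimensional instance makes the difference between the two norms concrete. With the hypothetical choices \(\Omega = (0,1)\), \(\Omega _\varepsilon = (0,\varepsilon )\), and \(\delta _\varepsilon = 1\), one has \(\Vert \phi _\varepsilon \Vert _{L^2(\Omega )} = \sqrt{\varepsilon } \rightarrow 0\), while \(\Vert \phi _\varepsilon \Vert _{L^\infty (\Omega )} = 1\) for every \(\varepsilon \). The following sketch reproduces this with a midpoint rule; the helper name and the discretization are assumptions made only for illustration.

```python
import math


def indicator_perturbation_norms(eps, delta=1.0, n=10_000):
    """Approximate the L^2 and L^infinity norms of delta * chi_(0, eps) on (0, 1).

    The interval (0, 1) is sampled at the n cell midpoints, so the L^2 norm
    is the square root of the mean of the squared samples.
    """
    xs = [(i + 0.5) / n for i in range(n)]
    phi = [delta if x < eps else 0.0 for x in xs]
    l2 = math.sqrt(sum(p * p for p in phi) / n)
    linf = max(abs(p) for p in phi)
    return l2, linf


for eps in (1e-1, 1e-2, 1e-3):
    l2, linf = indicator_perturbation_norms(eps)
    print(f"eps={eps:g}  L2={l2:.4f}  Linf={linf:.1f}")
```

The \(L^2\) norm decays like \(\sqrt{\varepsilon }\), whereas the \(L^\infty \) norm does not decay at all unless \(\delta _\varepsilon \rightarrow 0\).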

We associate with this family the state equations

For given \(\varepsilon \) and u, the solution of this equation will be denoted by \(y^\varepsilon _u\). Then, we consider the perturbed optimal control problems

Analogously to problem \(\hbox {(P)}\), every problem \((\hbox {P}\!_\varepsilon )\) has at least one global minimizer \(u_\varepsilon \). All these minimizers are uniformly bounded in \(L^\infty (Q)\) by a constant depending on \(\Vert y_0 + \phi _\varepsilon \Vert _{L^\infty (\Omega )}\); see Remark 2.4. Then, due to (3.6), this constant can be selected independently of \(\varepsilon \), hence

The next two theorems analyze the relation between the solutions of \(\hbox {(P)}\) and \((\hbox {P}\!_\varepsilon )\).

Theorem 3.2

Let \(\{u_\varepsilon \}_{\varepsilon > 0}\) be a family of global minimizers of problems \((\hbox {P}\!_\varepsilon )\). Any control \({{\bar{u}}}\) that is the weak\(^*\) limit in \(L^\infty (Q)\) of a sequence \(\{u_{\varepsilon _k}\}_{k = 1}^\infty \) with \(\varepsilon _k \rightarrow 0\) as \(k \rightarrow \infty \) is a global minimizer of \(\hbox {(P)}\). Moreover, the convergence is strong in \(L^2(Q)\).

Proof

Notice that the existence of such weakly\(^*\) converging sequences \(\{u_{\varepsilon _k}\}_{k = 1}^\infty \) follows from (3.9). We denote by \(y^{\varepsilon _k}\) and \({{\bar{y}}}\) the states associated with \(u_{\varepsilon _k}\) and \({{\bar{u}}}\), solutions of (3.8) and (1.1), respectively. From Theorem 2.1, (3.7), and (3.9), we infer that \(y^{\varepsilon _k} \rightharpoonup {{\bar{y}}}\) in W(0, T), hence strongly in \(L^2(Q)\). Using this fact and the optimality of \(u_{\varepsilon _k}\), we deduce for every \(u \in {\mathcal {U}}_{ad}\)

Since \({{\bar{u}}} \in {\mathcal {U}}_{ad}\), the above inequalities imply that \({{\bar{u}}}\) is a global minimizer of \(\hbox {(P)}\). With the convergence \(y^{\varepsilon _k} \rightarrow {{\bar{y}}}\) in \(L^2(Q)\) and \(\kappa > 0\), the strong convergence \(u_{\varepsilon _k} \rightarrow {{\bar{u}}}\) in \(L^2(Q)\) follows. \(\square \)

Conversely, we have the following result:

Theorem 3.3

Let \({{\bar{u}}}\) be a strict local minimizer of \(\hbox {(P)}\) in the \(L^r(0,T;L^s(\Omega ))\)-sense. Then, there exists a set \(\{u_{\varepsilon }\}_{\varepsilon > 0}\) of local minimizers of the problems \((\hbox {P}\!_\varepsilon )\) such that \(u_\varepsilon \rightarrow {{\bar{u}}}\) strongly in \(L^2(Q)\) when \(\varepsilon \rightarrow 0\).

Proof

Since \({{\bar{u}}}\) is a strict local minimizer for \(\hbox {(P)}\), there exists a closed \(L^2(Q)\)-ball \(B_\rho ({{\bar{u}}})\) such that \(J({{\bar{u}}}) < J(u)\) for every \(u \in {\mathcal {U}}_{ad}\cap B_\rho ({{\bar{u}}}) \setminus \{{{\bar{u}}}\}\). Let us consider the control problems

Obviously, \({{\bar{u}}}\) is the unique solution of \(({\mathcal {P}})\) and every \(({\mathcal {P}}\!_\varepsilon )\) has at least one solution \(u_\varepsilon \). As in Theorem 3.2, we deduce that every weak limit of a converging sequence \(\{u_{\varepsilon _k}\}_{k = 1}^\infty \) is a solution of \(({\mathcal {P}})\). Since \({{\bar{u}}}\) is the unique solution of \(({\mathcal {P}})\), we deduce that the whole family \(\{u_\varepsilon \}_{\varepsilon > 0}\) converges to \({{\bar{u}}}\). Moreover, arguing as in the proof of Theorem 3.2, we infer that this convergence is strong in \(L^2(Q)\). This implies that there exists \(\varepsilon _0 > 0\) such that \(\Vert u_\varepsilon - {{\bar{u}}}\Vert _{L^2(Q)} < \rho \) for every \(\varepsilon < \varepsilon _0\). Therefore, \(u_\varepsilon \) is a local minimizer of \((\hbox {P}\!_\varepsilon )\) for every \(\varepsilon < \varepsilon _0\). Indeed, for each \(\varepsilon < \varepsilon _0\) we take a constant \(\rho _\varepsilon > 0\) such that \(\rho _\varepsilon + \Vert u_\varepsilon - {{\bar{u}}}\Vert _{L^2(Q)} < \rho \). Then, for every \(u \in {\mathcal {U}}_{ad}\cap B_{\rho _\varepsilon }(u_\varepsilon )\) we have

Then, u is an admissible control for \(({\mathcal {P}}\!_\varepsilon )\) and, consequently, \(J(u_\varepsilon ) \le J(u)\). \(\square \)

In the remainder of this section, \({{\bar{u}}}\) will denote a local minimizer of \(\hbox {(P)}\) satisfying the sufficient second order condition (3.1). Its corresponding state and adjoint state will be denoted by \({{\bar{y}}}\) and \({{\bar{\varphi }}}\), respectively. Hence, Theorem 3.3 implies the existence of a set \(\{u_\varepsilon \}_{\varepsilon > 0}\) of local minimizers of the problems \((\hbox {P}\!_\varepsilon )\) such that \(u_\varepsilon \rightarrow {{\bar{u}}}\) strongly in \(L^2(Q)\) as \(\varepsilon \rightarrow 0\). The next theorem estimates \(u_\varepsilon -{{\bar{u}}}\).

Theorem 3.4

Let \({{\bar{u}}}\) be a local minimizer of \(\hbox {(P)}\) satisfying the sufficient second order condition (3.1). Then, with the notation above, there exist \(\varepsilon _0 > 0\) and \(L_\kappa \) such that

Before proving this theorem, we establish two auxiliary results. First, we fix the following notation: \(y_\varepsilon \) and \(y^\varepsilon \) denote the solutions of the unperturbed equation (1.1) and the perturbed equation (3.8), respectively, corresponding to \(u = u_\varepsilon \). Analogously, \(\varphi _\varepsilon \) and \(\varphi ^\varepsilon \) stand for the corresponding adjoint states.

Lemma 3.1

There exist constants \(C > 0\) and \(\varepsilon _1 > 0\) such that

Proof

Since \(u_\varepsilon \rightarrow {{\bar{u}}}\) in \(L^2(Q)\), there exist constants \(C_1 > 0\) and \(\varepsilon _1 > 0\) such that \(\Vert u_\varepsilon \Vert _{L^2(Q)} \le C_1\) for every \(\varepsilon \in (0,\varepsilon _1)\). From (2.6) and (3.6) we infer

With the adjoint state equations satisfied by \(\varphi ^\varepsilon \), we obtain

Since \(u_\varepsilon \) is a local minimizer for \((\hbox {P}\!_\varepsilon )\), the projection formula (2.17) yields

Therefore, the estimate \(\Vert u_\varepsilon \Vert _{L^\infty (Q)} \le M_\kappa = \max \big \{\frac{C_4}{\kappa },\alpha ^+,(-\beta )^+\big \}\) holds for every \(\varepsilon \in (0,\varepsilon _1)\). Now, invoking (2.5) and (3.6) we infer the estimates

for every \(\varepsilon \in (0,\varepsilon _1)\). This leads to the existence of a constant M such that

We set \(w_\varepsilon = y^\varepsilon - y_\varepsilon \) and subtract the equations for \(y^\varepsilon \) and \(y_\varepsilon \). By the mean value theorem for real-valued functions, we get for some intermediate function \({{\hat{y}}}^\varepsilon = y^\varepsilon + \theta _\varepsilon (y_\varepsilon - y^\varepsilon )\) with measurable \(0 \le \theta _\varepsilon (x,t) \le 1\),

Using (3.12) and Assumption (2.3), we deduce that \(\frac{\partial f}{\partial y}(x,t,{{\hat{y}}}^\varepsilon )\) is uniformly bounded in \(L^\infty (Q)\) by a constant \(C_{f,M}\). Hence, (2.6) implies that

which proves the first part of (3.11). To prove the second part, we put \(\psi _\varepsilon = \varphi ^\varepsilon - \varphi _\varepsilon \). Subtracting their corresponding equations we obtain

With (2.3), (3.12), the mean value theorem, and the estimate \(\Vert \varphi ^\varepsilon \Vert _{L^\infty (Q)} \le C_4\) established above, we infer

Thus, the estimate

follows from the partial differential equation above, which concludes the proof. \(\square \)

Lemma 3.2

There exists \(\varepsilon _0 \in (0,\varepsilon _1]\) such that

where \(\mu \) is given by (3.3).

Proof

Let us take \(\tau > 0\) as in (3.3). We first prove that \(u_\varepsilon -{{\bar{u}}} \in E_{{{\bar{u}}}}^\tau \) for every sufficiently small \(\varepsilon \). Since obviously \(u_\varepsilon - {{\bar{u}}}\) satisfies the sign conditions (3.5), it is enough to show that \(J'({{\bar{u}}})(u_\varepsilon - {{\bar{u}}}) \le \tau \Vert u_\varepsilon - {{\bar{u}}}\Vert _{L^2(Q)}\) for \(\varepsilon \) small enough. To this end we set \(v_\varepsilon = \frac{u_\varepsilon - {{\bar{u}}}}{\Vert u_\varepsilon - {{\bar{u}}}\Vert _{L^2(Q)}}\). Taking a subsequence, we can assume that \(v_\varepsilon \rightharpoonup v\) in \(L^2(Q)\). In the proof of Lemma 3.1, the boundedness of \(\{u_\varepsilon \}_{\varepsilon > 0}\) in \(L^\infty (Q)\) was established. Therefore, \(u_\varepsilon \rightarrow {{\bar{u}}}\) strongly in every \(L^p(Q)\) for \(p < \infty \). Using this fact along with the optimality of \({{\bar{u}}}\) and \(u_\varepsilon \), we obtain

Hence, \(\lim _{\varepsilon \rightarrow 0} J'({{\bar{u}}})v_\varepsilon = J'({{\bar{u}}})v = 0\) holds. Since this is true for any convergent subsequence of \(\{v_\varepsilon \}_{\varepsilon > 0}\), we infer that the convergence \(J'({{\bar{u}}})v_\varepsilon \rightarrow 0\) as \(\varepsilon \rightarrow 0\) holds for the whole family. Therefore, there exists \(\varepsilon _0\) such that \(J'({{\bar{u}}})v_\varepsilon \le \tau \) for every \(\varepsilon \in (0,\varepsilon _0)\) or equivalently \(J'({{\bar{u}}})(u_\varepsilon - {{\bar{u}}}) \le \tau \Vert u_\varepsilon - {{\bar{u}}}\Vert _{L^2(Q)}\). This implies that \(u_\varepsilon - {{\bar{u}}} \in E^\tau _{{{\bar{u}}}}\). Then, (3.3) yields \(J''({{\bar{u}}})(u_\varepsilon - {{\bar{u}}})^2 \ge \mu \Vert u_\varepsilon - {{\bar{u}}}\Vert ^2_{L^2(Q)}\). Hence, (3.14) follows from the fact that J is of class \(C^2\) in \(L^r(0,T;L^s(\Omega ))\) with \(\frac{1}{r} + \frac{n}{2s} < 1\), \(r, s \ge 2\), and the convergence \({{\bar{u}}} + \theta (u_\varepsilon - {{\bar{u}}}) \rightarrow {{\bar{u}}}\) in this space. \(\square \)

Proof of Theorem 3.4

From the local optimality of \({{\bar{u}}}\) and \(u_\varepsilon \), we infer

Adding these inequalities, we get

Then, using (3.14), the mean value theorem, (2.10), and (3.11), we deduce for \(\varepsilon \) small enough

These inequalities imply (3.10). \(\square \)
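The chain of estimates can be sketched as follows, under assumptions about displays that the text cites only by number. Here \(J_\varepsilon \) denotes the objective of \((\hbox {P}\!_\varepsilon )\) (a notation introduced for this sketch), the derivative is taken in the form \(J'(u)v = \int _Q (\varphi _u + \kappa u)v \,dx\,dt\), the estimate (3.11) is assumed to control \(\Vert \varphi ^\varepsilon - \varphi _\varepsilon \Vert _{L^2(Q)}\) by \(C\Vert \phi _\varepsilon \Vert _{L^2(\Omega )}\), and (3.14) is assumed to read \(J''({{\bar{u}}} + \theta (u_\varepsilon - {{\bar{u}}}))(u_\varepsilon - {{\bar{u}}})^2 \ge \frac{\mu }{2}\Vert u_\varepsilon - {{\bar{u}}}\Vert ^2_{L^2(Q)}\) for \(\theta \in [0,1]\). The first order conditions give \(J'({{\bar{u}}})(u_\varepsilon - {{\bar{u}}}) \ge 0\) and \(J_\varepsilon '(u_\varepsilon )({{\bar{u}}} - u_\varepsilon ) \ge 0\). Adding them and rearranging,

\[
[J'(u_\varepsilon ) - J'({{\bar{u}}})](u_\varepsilon - {{\bar{u}}}) \le [J'(u_\varepsilon ) - J_\varepsilon '(u_\varepsilon )](u_\varepsilon - {{\bar{u}}}) = \int _Q (\varphi _\varepsilon - \varphi ^\varepsilon )(u_\varepsilon - {{\bar{u}}}) \,dx\,dt .
\]

By the mean value theorem, the left-hand side equals \(J''({{\bar{u}}} + \theta _\varepsilon (u_\varepsilon - {{\bar{u}}}))(u_\varepsilon - {{\bar{u}}})^2\) for some \(\theta _\varepsilon \in [0,1]\), so that

\[
\frac{\mu }{2}\Vert u_\varepsilon - {{\bar{u}}}\Vert ^2_{L^2(Q)} \le \Vert \varphi _\varepsilon - \varphi ^\varepsilon \Vert _{L^2(Q)}\Vert u_\varepsilon - {{\bar{u}}}\Vert _{L^2(Q)} \le C\Vert \phi _\varepsilon \Vert _{L^2(\Omega )}\Vert u_\varepsilon - {{\bar{u}}}\Vert _{L^2(Q)} .
\]

Dividing by \(\Vert u_\varepsilon - {{\bar{u}}}\Vert _{L^2(Q)}\) yields \(\Vert u_\varepsilon - {{\bar{u}}}\Vert _{L^2(Q)} \le \frac{2C}{\mu }\Vert \phi _\varepsilon \Vert _{L^2(\Omega )}\), which would correspond to a Lipschitz constant \(L_\kappa = \frac{2C}{\mu }\) in this reading.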

4 Stability Analysis. Case \(\kappa = 0\)

In this case, due to Assumption (A4), the set \({\mathcal {U}}_{ad}\) is bounded in \(L^\infty (Q)\). This simplifies some aspects of the analysis of \(\hbox {(P)}\). However, the second order analysis is more complicated. We follow [6] to formulate sufficient second order conditions for local optimality. Given \({{\bar{u}}} \in {\mathcal {U}}_{ad}\) satisfying the first order necessary optimality conditions (2.13)–(2.15), we define the following cones for arbitrary \(\tau > 0\):

The next result was proved in [6].

Theorem 4.1

Let \({{\bar{u}}} \in {\mathcal {U}}_{ad}\) satisfy the first order optimality conditions (2.13)–(2.15). Suppose in addition that there exist \(\mu > 0\) and \(\tau > 0\) such that

where \(z_v = G'({{\bar{u}}})v\). Then, there exist \(\delta > 0\) and \(\varepsilon > 0\) such that

for all \(u \in {\mathcal {U}}_{ad}\) such that \(\Vert y_u - {{\bar{y}}}\Vert _{L^\infty (Q)} \le \varepsilon \).

Definition 4.1

We say that \({{\bar{u}}}\) is a strong local minimizer of \(\hbox {(P)}\) if there exists \(\varepsilon > 0\) such that \(J({{\bar{u}}}) \le J(u)\) for every \(u \in {\mathcal {U}}_{ad}\) with \(\Vert y_u - {{\bar{y}}}\Vert _{L^\infty (Q)} \le \varepsilon \). If the inequality is strict for \(u \ne {{\bar{u}}}\), we say that \({{\bar{u}}}\) is a strict strong local minimizer. If \(J({{\bar{u}}}) \le J(u)\) holds for every \(u \in {\mathcal {U}}_{ad}\) such that \(\Vert y_u - {{\bar{y}}}\Vert _{L^\infty (0,T;L^2(\Omega ))} \le \varepsilon \), then \({{\bar{u}}}\) is said to be a strong local minimizer in the sense of \(L^\infty (0,T;L^2(\Omega ))\).

Remark 4.1

The following properties hold:

1. \({{\bar{u}}}\) is an \(L^1(Q)\)-local minimizer of \(\hbox {(P)}\) iff it is an \(L^r(0,T;L^s(\Omega ))\)-local minimizer of \(\hbox {(P)}\) for every \(r , s \in [1,+\infty )\).

2. If \({{\bar{u}}}\) is an \(L^r(0,T;L^s(\Omega ))\)-local minimizer of \(\hbox {(P)}\) for some \(s, r \in [1,\infty )\), then it is an \(L^\infty (Q)\)-local minimizer of \(\hbox {(P)}\).

3. If \({{\bar{u}}} \in {\mathcal {U}}_{ad}\) is a strong local minimizer of \(\hbox {(P)}\), then it is an \(L^r(0,T;L^s(\Omega ))\)-local minimizer of \(\hbox {(P)}\) for every \(s, r \in [1,\infty ]\).

The reader is referred to [6, Lemma 2.8] for the proof of these statements.

Corollary 4.1

The control \({{\bar{u}}}\) is a strong local minimizer of \(\hbox {(P)}\) if and only if it is a strong local minimizer of \(\hbox {(P)}\) in the sense of \(L^\infty (0,T;L^2(\Omega ))\).

Proof

If \({{\bar{u}}}\) is a strong local minimizer of \(\hbox {(P)}\) in the sense of \(L^\infty (0,T;L^2(\Omega ))\), then from the inequality \(\Vert y_u - {{\bar{y}}}\Vert _{L^\infty (0,T;L^2(\Omega ))} \le \sqrt{|\Omega |}\,\Vert y_u - {{\bar{y}}}\Vert _{L^\infty (Q)}\) we infer that \({{\bar{u}}}\) is also a strong local minimizer of \(\hbox {(P)}\). The converse is proved by contradiction:

Let \(\{u_k\}_{k = 1}^\infty \subset {\mathcal {U}}_{ad}\) with associated states \(\{y_k\}_{k = 1}^\infty \) satisfy

Since \(\{u_k\}_{k = 1}^\infty \) is a bounded sequence in \(L^\infty (Q)\), we can extract a subsequence denoted in the same way such that \(u_k {\mathop {\rightharpoonup }\limits ^{*}} {{\tilde{u}}}\) in \(L^\infty (Q)\). Moreover, it follows from Theorem 2.1 that \(y_k \rightarrow y_{{{\tilde{u}}}}\) in \(L^\infty (Q)\). Then, (4.6) implies that \(y_{{{\tilde{u}}}} = {{\bar{y}}}\) and, hence, \({{\tilde{u}}} = {{\bar{u}}}\). We select \(\varepsilon > 0\) such that \(J({{\bar{u}}}) \le J(u)\) for every \(u \in {\mathcal {U}}_{ad}\) with \(\Vert y_u - {{\bar{y}}}\Vert _{L^\infty (Q)} \le \varepsilon \). However, there exists \(k_0\) such that \(\Vert y_k - {{\bar{y}}}\Vert _{L^\infty (Q)} \le \varepsilon \) for all \(k \ge k_0\). This contradicts (4.6). \(\square \)
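The norm inequality used in the first part of this proof follows directly from the definitions. For a function \(w \in L^\infty (Q)\) and almost every \(t \in (0,T)\),

\[
\Vert w(t)\Vert ^2_{L^2(\Omega )} = \int _\Omega |w(x,t)|^2 \,dx \le |\Omega |\,\Vert w\Vert ^2_{L^\infty (Q)} ,
\]

so taking the square root and the essential supremum over \(t\) gives \(\Vert w\Vert _{L^\infty (0,T;L^2(\Omega ))} \le \sqrt{|\Omega |}\,\Vert w\Vert _{L^\infty (Q)}\). Applied to \(w = y_u - {{\bar{y}}}\), this shows that the \(L^\infty (Q)\)-ball of radius \(\varepsilon /\sqrt{|\Omega |}\) around \({{\bar{y}}}\) is contained in the \(L^\infty (0,T;L^2(\Omega ))\)-ball of radius \(\varepsilon \).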

Corollary 4.2

Let \({{\bar{u}}} \in {\mathcal {U}}_{ad}\) satisfy the first and second order optimality conditions (2.13)–(2.15) and (4.4) for some \(\mu > 0\) and \(\tau > 0\). Then, there exist \(\delta > 0\) and \(\varepsilon > 0\) such that (4.5) holds for all \(u \in {\mathcal {U}}_{ad}\) such that \(\Vert y_u - {{\bar{y}}}\Vert _{L^\infty (0,T;L^2(\Omega ))} \le \varepsilon \).

Proof

We argue again by contradiction. Let \(\{u_k\}_{k = 1}^\infty \subset {\mathcal {U}}_{ad}\) with associated states \(\{y_k\}_{k = 1}^\infty \) satisfy

As in the proof of Corollary 4.1, we find that \(y_k \rightarrow {{\bar{y}}}\) in \(L^\infty (Q)\). From Theorem 4.1, we deduce the existence of \(\varepsilon > 0\) and \(\delta > 0\) such that (4.5) holds. Let us take \(k_0\) such that \(\Vert y_k - {{\bar{y}}}\Vert _{L^\infty (Q)} \le \varepsilon \) and \(\frac{1}{k} < \delta \) for every \(k \ge k_0\). Then, (4.8) contradicts (4.5). \(\square \)

From this corollary, we deduce that any control \({{\bar{u}}} \in {\mathcal {U}}_{ad}\) satisfying the first and second order optimality conditions (2.13)–(2.15) and (4.4) is a strict strong local minimizer of \(\hbox {(P)}\) in the sense of \(L^\infty (0,T;L^2(\Omega ))\). Now, we analyze the relationship between \(\hbox {(P)}\) and the perturbed problems \((\hbox {P}\!_\varepsilon )\) introduced in Sect. 3. We adopt the notation introduced there for the states \(y^\varepsilon = y^\varepsilon _{u_\varepsilon }\) and \(y_\varepsilon = y_{u_\varepsilon }\), as well as for the adjoint states \(\varphi ^\varepsilon = \varphi ^\varepsilon _{u_\varepsilon }\) and \(\varphi _\varepsilon = \varphi _{u_\varepsilon }\). Theorems 3.2 and 3.3 are reformulated as follows:

Theorem 4.2

Let \(\{u_\varepsilon \}_{\varepsilon > 0}\) be a family of global minimizers of problems \((\hbox {P}\!_\varepsilon )\). Any control \({{\bar{u}}}\) that is a weak\(^*\) limit in \(L^\infty (Q)\) of a sequence \(\{u_{\varepsilon _k}\}_{k = 1}^\infty \) with \(\varepsilon _k \rightarrow 0\) as \(k \rightarrow \infty \) is a global minimizer of \(\hbox {(P)}\). Moreover, the strong convergence \(y^{\varepsilon _k} \rightarrow {{\bar{y}}}\) in \(L^\infty (0,T;L^2(\Omega ))\) holds.

The proof of this theorem is almost the same as that of Theorem 3.2. The only difference is that we cannot prove the strong convergence of \(\{u_{\varepsilon _k}\}_{k = 1}^\infty \) to \({{\bar{u}}}\) in \(L^2(Q)\), because \(\kappa = 0\). Instead, the strong convergence \(y^{\varepsilon _k} \rightarrow {{\bar{y}}}\) in \(L^\infty (0,T;L^2(\Omega ))\) can be obtained. Indeed, if we subtract the equations satisfied by \(y^{\varepsilon _k}\) and \({{\bar{y}}}\) we get \(y^{\varepsilon _k} - {{\bar{y}}} = \psi _k + \eta _k\), where \(\psi _k\) and \(\eta _k\) satisfy

From the first equation, we deduce that \(\{\psi _k\}_{k = 1}^\infty \) is bounded in \(C^{0,\theta }({{\bar{Q}}})\) for some \(\theta \in (0,1)\). From this boundedness, we immediately obtain that \(\psi _k \rightarrow 0\) in \(C({{\bar{Q}}}) \subset L^\infty (0,T;L^2(\Omega ))\) as \(k \rightarrow \infty \). From the second equation, we infer \(\Vert \eta _k\Vert _{C([0,T],L^2(\Omega ))} \le C\Vert \phi _{\varepsilon _k}\Vert _{L^2(\Omega )}\) for some constant C independent of k. Hence, \(\{\eta _k\}_{k = 1}^\infty \) converges to zero in \(C([0,T],L^2(\Omega ))\). This proves the convergence \(y^{\varepsilon _k} \rightarrow {{\bar{y}}}\) in \(L^\infty (0,T;L^2(\Omega ))\).

Theorem 4.3

Let \({{\bar{u}}}\) be a strict strong local minimizer of \(\hbox {(P)}\). Then, there exist \(\varepsilon _ 0 > 0\) and a family \(\{u_{\varepsilon }\}_{\varepsilon \le \varepsilon _0}\) of strong local minimizers of the problems \((\hbox {P}\!_\varepsilon )\) such that \(u_\varepsilon {\mathop {\rightharpoonup }\limits ^{*}} {{\bar{u}}}\) in \(L^\infty (Q)\) as \(\varepsilon \rightarrow 0\). Moreover, \(\{y^\varepsilon \}_{\varepsilon > 0}\) converges strongly to \({{\bar{y}}}\) in \(L^\infty (0,T;L^2(\Omega ))\).

Proof

Since \({{\bar{u}}}\) is a strict local minimizer for \(\hbox {(P)}\), in view of Corollary 4.1 there exists \(\rho > 0\) such that \(J({{\bar{u}}}) < J(u)\) for every \(u \in {\mathcal {U}}_{ad}^\rho \setminus \{{{\bar{u}}}\}\), where

Let us consider the control problems

Although \({\mathcal {U}}_{ad}^\rho \) is not convex in general, with (2.7) it is easy to prove that \({\mathcal {U}}_{ad}^\rho \) is bounded and weakly\(^*\) sequentially closed in \(L^\infty (Q)\). Hence, every problem \(({\mathbb {P}}\!_\varepsilon )\) has at least one solution \(u_\varepsilon \). Moreover, \({{\bar{u}}}\) is the unique solution of \(({\mathbb {P}})\). As in Theorem 3.3, every weak\(^*\) limit in \(L^\infty (Q)\) of a weakly\(^*\) converging subsequence of \(\{u_\varepsilon \}_{\varepsilon > 0}\) is \({{\bar{u}}}\). Therefore, the whole family \(\{u_\varepsilon \}_{\varepsilon > 0}\) converges weakly\(^*\) to \({{\bar{u}}}\) in \(L^\infty (Q)\). In addition, arguing as in the previous theorem, the associated states \(\{y^\varepsilon \}_{\varepsilon > 0}\) converge strongly to \({{\bar{y}}}\) in \(L^\infty (0,T;L^2(\Omega ))\). This implies the existence of \(\varepsilon _1 > 0\) such that \(\Vert y^\varepsilon - {{\bar{y}}}\Vert _{L^\infty (0,T;L^2(\Omega ))} < \rho /3\) for every \(\varepsilon \le \varepsilon _1\). We prove that \(u_\varepsilon \) is a strong local minimizer of \((\hbox {P}\!_\varepsilon )\) in the sense of \(L^\infty (0,T;L^2(\Omega ))\). Let \(u \in {\mathcal {U}}_{ad}\) be such that \(\Vert y^\varepsilon _u - y^\varepsilon \Vert _{L^\infty (0,T;L^2(\Omega ))} < \rho /3\). Using (2.5) and (3.6) we infer that \(\{y_u^\varepsilon \}_{\varepsilon > 0}\) is bounded in \(L^\infty (Q)\). Hence, we argue as in (3.13) to deduce the existence of a constant K independent of \(u \in {\mathcal {U}}_{ad}\) such that

Selecting \(\varepsilon _0 \in (0,\varepsilon _1]\) such that \(K\Vert \phi _\varepsilon \Vert _{L^2(\Omega )} < \rho /3\) for every \(\varepsilon \le \varepsilon _0\), we obtain

Therefore, \(u \in {\mathcal {U}}_{ad}^\rho \) and, consequently, \(J_\varepsilon (u_\varepsilon ) \le J_\varepsilon (u)\) holds. This proves that \(u_\varepsilon \) is a strong local minimizer of \((\hbox {P}\!_\varepsilon )\) in the sense of \(L^\infty (0,T;L^2(\Omega ))\) for every \(\varepsilon < \varepsilon _0\). By Corollary 4.1 this also holds in the sense of \(L^\infty (Q)\), hence \(u_\varepsilon \) is a strong local minimizer of \((\hbox {P}\!_\varepsilon )\). \(\square \)

Let \({{\bar{u}}}\) be a strong local minimizer of \(\hbox {(P)}\) satisfying the sufficient second order condition (4.4). Its corresponding state and adjoint state will be denoted by \({{\bar{y}}}\) and \({{\bar{\varphi }}}\), respectively. From the quadratic growth condition (4.5) we know that \({{\bar{u}}}\) is a strict strong local minimizer of \(\hbox {(P)}\). In view of this, Theorem 4.3 implies the existence of a set \(\{u_\varepsilon \}_{\varepsilon > 0}\) of strong local minimizers of the problems \((\hbox {P}\!_\varepsilon )\) such that \(u_\varepsilon {\mathop {\rightharpoonup }\limits ^{*}} {{\bar{u}}}\) in \(L^\infty (Q)\) and \(y^\varepsilon \rightarrow {{\bar{y}}}\) strongly in \(L^\infty (0,T;L^2(\Omega ))\).

Remark 4.2

Though \(\kappa = 0\), due to the boundedness of \({\mathcal {U}}_{ad}\) in \(L^\infty (Q)\), Lemma 3.1 is still valid. Moreover, taking \(w_\varepsilon = y^\varepsilon _{{{\bar{u}}}} - {{\bar{y}}}\) and \(\psi _\varepsilon = \varphi ^\varepsilon _{{{\bar{u}}}} - {{\bar{\varphi }}}\) and arguing as in the last part of the proof of Lemma 3.1, we deduce the existence of \(\varepsilon _0 > 0\) such that

Now, we are able to prove a result on Hölder stability of optimal states. We recall the notation \(y^\varepsilon = y^\varepsilon _{u_\varepsilon }\) and \(\varphi ^\varepsilon = \varphi ^\varepsilon _{u_\varepsilon }\).

Theorem 4.4

If \(\{u_\varepsilon \}_{\varepsilon > 0}\) is a sequence of strong local minimizers of the problems \((\hbox {P}\!_\varepsilon )\) according to Theorem 4.3, there exists a constant \(L_0\) such that

Proof

From (3.11) and the triangle inequality we infer

As in the proof of Theorem 4.2, we have that \(y_\varepsilon \rightarrow {{\bar{y}}}\) strongly in \(L^\infty (0,T;L^2(\Omega ))\) as \(\varepsilon \rightarrow 0\). Therefore, we can apply (4.5) to deduce for every sufficiently small \(\varepsilon \)

Using again (3.11), we estimate \(I_1\) as follows:

Since \(u_\varepsilon \) is a global minimizer of \(({\mathbb {P}}\!_\varepsilon )\) and \({{\bar{u}}}\) is a feasible control for this problem, we have that \(I_2 \le 0\). Finally, we can estimate \(I_3\) as \(I_1\) by using (4.9) instead of (3.11). Inserting these estimates in (4.12) and invoking (4.11), we obtain (4.10). \(\square \)

Unlike in the case \(\kappa > 0\), we need an extra assumption to prove a stability estimate for the controls when \(\kappa = 0\). The proof of the stability inequality (3.10) was based on the second order condition (3.3). This condition does not hold if \(\kappa = 0\) except for a few extreme cases; see [3]. That is why we have used (4.4).

Nevertheless, (4.4) leads only to the quadratic growth condition (4.5) that has been crucial in the proof of the stability estimate (4.10). The question is whether we could have an inequality of type

for all \(u \in {\mathcal {U}}_{ad}\) such that \(\Vert y_u - {{\bar{y}}}\Vert _{L^\infty (Q)} \le \varepsilon \) with some \(\delta > 0\), \(\varepsilon > 0\), and \(r \in [1,\infty )\). An inequality of this type would allow us to get some stability estimate for optimal controls. It was proved in [15] that an inequality of this type is impossible unless \({{\bar{u}}}\) is a bang-bang control. In [14], an inequality of the above type was proved under a structural assumption on the adjoint state \({{\bar{\varphi }}}\). Following this idea, we assume

This assumption includes that \({{\bar{u}}}\) is a bang-bang control. Indeed, (4.13) implies that the set of points where \({{\bar{\varphi }}}\) vanishes has a zero Lebesgue measure. Moreover, (2.15) with \(\kappa = 0\) yields that \({{\bar{u}}}(x,t) = \alpha \) if \({{\bar{\varphi }}}(x,t) > 0\) and \({{\bar{u}}}(x,t) = \beta \) if \({{\bar{\varphi }}}(x,t) < 0\). Therefore, \({{\bar{u}}}(x,t)\) belongs to \(\{\alpha ,\beta \}\) for almost every point \((x,t) \in Q\). We will prove that (4.13), along with the sufficient second order optimality condition (4.4), implies the stability of the optimal control with respect to the perturbations of the initial condition. We prepare the proof by some technical results.

Lemma 4.1

There exists a constant \(C_{\alpha ,\beta }\) such that

where \(z_{u - {{\bar{u}}}} = G'({{\bar{u}}})(u - {{\bar{u}}})\).

Proof

From Theorem 2.1 and the boundedness of \({\mathcal {U}}_{ad}\) in \(L^\infty (Q)\), we deduce the existence of a constant M such that \(\Vert y_u\Vert _{L^\infty (Q)} \le M\) \(\forall u \in {\mathcal {U}}_{ad}\). Moreover, taking \(u = {{\bar{u}}}\) and \(v = u - {{\bar{u}}}\) in (2.8) with \(u \in {\mathcal {U}}_{ad}\) arbitrary, we conclude that \(z_{u - {{\bar{u}}}} \in C({{\bar{Q}}})\). Subtracting the equations satisfied by \(y_u\), \({{\bar{y}}}\), and \(z_{u - {{\bar{u}}}}\), and setting \(w = y_u - {{\bar{y}}} - z_{u -{{\bar{u}}}}\), we get

Then, using (2.3) we obtain

This yields

which proves the lemma. \(\square \)

Lemma 4.2

There exists a constant \(C_\gamma \) such that

where \(z_v = G'({{\bar{u}}})v\).

Proof

Let \(\psi \in W(0,T) \cap L^\infty (Q)\) be the solution of

Then, we have that \(\Vert \psi \Vert _{L^\infty (Q)} \le C_\gamma \) and consequently

\(\square \)

Lemma 4.3

Let \({{\bar{u}}} \in {\mathcal {U}}_{ad}\) satisfy the first and second order optimality conditions (2.13)–(2.15) and (4.4). Then, to every \(\rho > 0\) an \(\varepsilon _\rho > 0\) can be found such that

for all \(\theta \in [0,1]\) and for all \(u \in {\mathcal {U}}_{ad}\) satisfying \(\Vert y_u - {{\bar{y}}}\Vert _{L^\infty (Q)} < \varepsilon _\rho \).

Proof

The proof of this lemma follows the steps of the corresponding proof for [7, Lemma 2] established for the elliptic case. We will use the following property: for all \(\varrho > 0\) there exists \(\varepsilon _\varrho > 0\) such that, if \(u \in {\mathcal {U}}_{ad}\) and \(\Vert y_u - {{\bar{y}}}\Vert _{L^\infty (Q)} < \varepsilon _\varrho \), we have

where \(u_\theta = {{\bar{u}}} + \theta (u - {{\bar{u}}})\), \(z_v = G'({{\bar{u}}})v\), and \(z_{u - {{\bar{u}}}} = G'({{\bar{u}}})(u - {{\bar{u}}})\). For the proof of (4.17), the reader is referred to [13, Lemma 6]; see also [9, Lemma 3.5]. Moreover, we will use the following fact established in the proof of Corollary 3 in [13]: there exists \(\varepsilon _0 > 0\) such that

for every \(u \in {\mathcal {U}}_{ad}\) such that \(\Vert y_u - {{\bar{y}}}\Vert _{L^\infty (Q)} < \varepsilon _0\). We will prove that

for all \(u \in {\mathcal {U}}_{ad}\) satisfying that \(\Vert y_u - {{\bar{y}}}\Vert _{L^\infty (Q)} < \varepsilon _\rho \) for some \(\varepsilon _{\rho } \in (0,\varepsilon _{0}]\). Then, (4.16) is a straightforward consequence of (4.18) and (4.19). The proof is split into three cases.

Case I - \(u - {{\bar{u}}} \in C_{{{\bar{u}}}}^\tau \). Since \({{\bar{u}}}\) satisfies the first order optimality conditions, we have that \(J'({{\bar{u}}})(u - {{\bar{u}}}) \ge 0\). Moreover, from (4.4) we get

Then, taking \(\varrho = \frac{\mu }{2}\) in (4.17) and using the above inequality, (4.16) follows with \(\varepsilon _\rho = \varepsilon _\varrho \).

Case II - \(u - {{\bar{u}}} \not \in G_{{{\bar{u}}}}^\tau \). Since \(u - {{\bar{u}}}\) satisfies the sign condition (3.5), we infer from (4.1) that

From (4.14) we obtain

Inserting this inequality in (4.20), we obtain

Moreover, from (2.11) we infer the existence of a constant \(C_1\) such that

for all \(u \in {\mathcal {U}}_{ad}\) and all \(v_1, v_2 \in L^2(Q)\). It is enough to select \(\varepsilon _\rho \) such that \(\frac{\rho \tau }{C_{\alpha ,\beta }\varepsilon _\rho } - C_1 \ge \frac{\mu }{2}\) to get (4.19).

Case III - \(u - {{\bar{u}}} \not \in D_{{{\bar{u}}}}^\tau \) and \(u - {{\bar{u}}} \in G_{{{\bar{u}}}}^\tau \). Let \(C_\gamma \) be the constant introduced in (4.15) and set \(\tau ^{*} = \tau / \max \{1,C_{\gamma }\}\). If \(u - {{\bar{u}}} \not \in G_{{{\bar{u}}}}^{\tau ^*}\), then case II applies. Otherwise, we define the sets

Associated with these sets, we consider the controls \(u_1 = (u - {{\bar{u}}})\chi _{Q_1}\) and \(u_2 = (u - {{\bar{u}}})\chi _{Q_2}\), where \(\chi _{Q_i}\) is the characteristic function of \(Q_i\). It is an immediate consequence of (2.10) that

The definition of \(u_1\) yields \(u_1 \in D^\tau _{{{\bar{u}}}}\). Let us prove that \(u_1 \in G^\tau _{{{\bar{u}}}}\) holds as well. Using (4.22), (4.15), and recalling the definition of \(\tau ^*\), we infer

Moreover, since \(u - {{\bar{u}}} \in G^{\tau ^*}_{{{\bar{u}}}}\), we get

From the last two estimates for \(J'({{\bar{u}}})(u - {{\bar{u}}})\) and the fact that \(\tau ^* \le \tau \), we get

This implies \(u_1 \in G^\tau _{{{\bar{u}}}}\) and, consequently, \(u_1 \in C^\tau _{\bar{u}}\). Let us confirm that \(u_2\) is a small perturbation of \(u_1\). Indeed, using (4.22), the fact that \(u - {{\bar{u}}} \in G^\tau _{{{\bar{u}}}}\), and (4.14) it follows

This leads to

Moreover, since \(|u_2| \le |u - {{\bar{u}}}| \le \beta - \alpha \), we obtain

which proves the smallness of \(u_2\). This implies

To prove (4.19), we first analyze the term \(\rho J'({{\bar{u}}})(u - {{\bar{u}}})\). From (4.22) and (4.15) we infer

To estimate the term \(J''(u_\theta )(u - {{\bar{u}}})^2\), we proceed as follows: using (4.17) with \(\varrho = \mu /12\), (4.4) together with the fact that \(u_1 \in C^\tau _{{{\bar{u}}}}\), and (4.21), we obtain

Combining this inequality with (4.23) and (4.24), we get

Then, it is enough to select \(\varepsilon _\rho \) such that \(\frac{\rho \tau }{C_\gamma } > C_2C_3C_4\sqrt[3]{\varepsilon _\rho }\) to conclude the proof. \(\square \)

Lemma 4.4

Let \({{\bar{u}}} \in {\mathcal {U}}_{ad}\) satisfy the first order optimality conditions (2.13)–(2.15) and the structural assumption (4.13). Then, the following inequality holds:

where \(\sigma = \big (2[2(\beta - \alpha )C_\lambda ]^{\frac{1}{\lambda }}\big )^{-1}\).

Proof

Given \(\varepsilon > 0\) to be fixed later, we set \(Q_\varepsilon =\{(x,t) \in Q : |{{\bar{\varphi }}}(x,t)| > \varepsilon \}\). From (4.13) we have \(|Q \setminus Q_\varepsilon | \le C_\lambda \varepsilon ^\lambda \). Using this we get

Then, taking \(\varepsilon = [2(\beta - \alpha )C_\lambda ]^{-\frac{1}{\lambda }}\Vert u - {{\bar{u}}}\Vert _{L^1(Q)}^{\frac{1}{\lambda }}\), (4.25) follows from the above inequality. \(\square \)
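The choice of \(\varepsilon \) in the last step can be made explicit. The following computation is a sketch under stated assumptions, since the displayed formulas are not reproduced here: we assume that (4.13) is the measure estimate \(|\{(x,t) \in Q : |{{\bar{\varphi }}}(x,t)| \le \varepsilon \}| \le C_\lambda \varepsilon ^\lambda \) used above, that (2.10) with \(\kappa = 0\) gives \(J'({{\bar{u}}})(u - {{\bar{u}}}) = \int _Q {{\bar{\varphi }}}(u - {{\bar{u}}})\,dx\,dt\), and that (4.25) is the estimate \(J'({{\bar{u}}})(u - {{\bar{u}}}) \ge \sigma \Vert u - {{\bar{u}}}\Vert _{L^1(Q)}^{1 + \frac{1}{\lambda }}\). By the bang-bang structure of \({{\bar{u}}}\), the product \({{\bar{\varphi }}}(u - {{\bar{u}}})\) equals \(|{{\bar{\varphi }}}|\,|u - {{\bar{u}}}|\) almost everywhere, and \(|u - {{\bar{u}}}| \le \beta - \alpha \). Hence

\[
J'({{\bar{u}}})(u - {{\bar{u}}}) \ge \varepsilon \int _{Q_\varepsilon } |u - {{\bar{u}}}|\,dx\,dt = \varepsilon \Big (\Vert u - {{\bar{u}}}\Vert _{L^1(Q)} - \int _{Q \setminus Q_\varepsilon } |u - {{\bar{u}}}|\,dx\,dt\Big ) \ge \varepsilon \Vert u - {{\bar{u}}}\Vert _{L^1(Q)} - (\beta - \alpha )C_\lambda \varepsilon ^{\lambda + 1}.
\]

With the stated choice of \(\varepsilon \) we have \((\beta - \alpha )C_\lambda \varepsilon ^\lambda = \frac{1}{2}\Vert u - {{\bar{u}}}\Vert _{L^1(Q)}\), so the right-hand side becomes

\[
\frac{\varepsilon }{2}\Vert u - {{\bar{u}}}\Vert _{L^1(Q)} = \frac{1}{2[2(\beta - \alpha )C_\lambda ]^{\frac{1}{\lambda }}}\Vert u - {{\bar{u}}}\Vert _{L^1(Q)}^{1 + \frac{1}{\lambda }} = \sigma \Vert u - {{\bar{u}}}\Vert _{L^1(Q)}^{1 + \frac{1}{\lambda }},
\]

which is consistent with the value of \(\sigma \) given in the lemma.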

The reader is referred to [26, Lemma 6.3] for an extension of this result to sparse optimal control; see also [25]. Using the previous lemmas, we obtain the following result:

Theorem 4.5

Let \({{\bar{u}}} \in {\mathcal {U}}_{ad}\) satisfy the first and second order optimality conditions (2.13)–(2.15) and (4.4) along with the structural assumption (4.13). Then, there exists \(\varepsilon > 0\) such that

\(\forall u \in {\mathcal {U}}_{ad}\) such that \(\Vert y_u - {{\bar{y}}}\Vert _{L^\infty (Q)} \le \varepsilon \), where \(\sigma \) and \(\mu \) are introduced in Lemma 4.4 and in the assumption (4.4), respectively.

Proof

Performing a Taylor expansion, from (4.25) and (4.16) with \(\rho = 1\), we infer

\(\square \)

We finish this section by establishing a stability result for optimal controls and associated states. Let \({{\bar{u}}}\) be a strong local minimizer of \(\hbox {(P)}\) satisfying the sufficient second order condition (4.4) and Assumption (4.13). Its corresponding state and adjoint state will be denoted by \({{\bar{y}}}\) and \({{\bar{\varphi }}}\), respectively. From the growth condition (4.5), we know that \({{\bar{u}}}\) is a strict strong local minimizer of \(\hbox {(P)}\). Hence, Theorem 4.3 implies the existence of a set \(\{u_\varepsilon \}_{\varepsilon > 0}\) of strong local minimizers of the problems \((\hbox {P}\!_\varepsilon )\) such that \(u_\varepsilon {\mathop {\rightharpoonup }\limits ^{*}} {{\bar{u}}}\) in \(L^\infty (Q)\) and \(y^\varepsilon \rightarrow {{\bar{y}}}\) strongly in \(L^\infty (0,T;L^2(\Omega ))\).

Theorem 4.6

Under the above notation, there exist \(L_\lambda \) and \(\varepsilon _0 > 0\) such that

hold for every \(\varepsilon < \varepsilon _0\).

Proof

The convergence \(u_\varepsilon {\mathop {\rightharpoonup }\limits ^{*}} {{\bar{u}}}\) in \(L^\infty (Q)\) and Theorem 2.1 yield \(y_\varepsilon = y_{u_\varepsilon } \rightarrow {{\bar{y}}}\) in \(L^\infty (Q)\). Taking \(\rho = \frac{1}{2}\) in (4.16), we deduce the existence of \(\varepsilon _0 > 0\) such that \(\Vert y_\varepsilon - {{\bar{y}}}\Vert _{L^\infty (Q)} < \varepsilon _\rho \) \(\forall \varepsilon < \varepsilon _0\). Therefore, every \(u_\varepsilon \) with \(\varepsilon < \varepsilon _0\) satisfies (4.16) for \(\rho = \frac{1}{2}\).

Since \(u_\varepsilon \) is a strong local minimizer of \((\hbox {P}\!_\varepsilon )\), we have \(J_\varepsilon '(u_\varepsilon )({{\bar{u}}} - u_\varepsilon ) \ge 0\). Using (4.25), this variational inequality, and (2.10) we obtain

Invoking (4.16) with \(\rho = \frac{1}{2}\) and (3.11), we infer from the above inequality

Estimate (4.27) follows from this inequality. Now, inserting (4.27) in (4.31), we infer

Combining this inequality with (3.11) and applying the triangle inequality, we get (4.29). Finally, (4.28) and (4.30) are obtained using in (4.31) the estimate

\(\square \)

5 A Problem with at Least Two Local Minimizers

5.1 The Problem

Although semilinear parabolic control problems have been studied for decades, it was an open question whether problems with a semilinear parabolic equation and a standard quadratic tracking type objective functional may have more than one solution. Due to the quadratic structure of the objective functional, one could think of a hidden convexity of the problem. In general, there is no such convexity. Indeed, in [24] a semilinear elliptic optimal boundary control problem with quadratic objective functional was constructed that has two different optimal controls. The author also proved a remarkable result for functionals of the form

in real Hilbert spaces H and U, where a mapping \(G:U \rightarrow H\) and \(z \in H\) are given. He proved the following: The functional J is convex for all \(z\in H\) if and only if G is affine. This result was extended in [16] to quite general tracking type functionals and applied to control-to-state mappings G associated with a nonsmooth elliptic equation, a Signorini type variational inequality, and an evolutionary obstacle problem.

In our class of optimal control problems, G stands for the nonlinear control-to-state mapping and \(z = y_Q\). The result of [24] shows that there exists a desired state function \(y_Q\) such that J is nonconvex. This does not yet imply the existence of multiple local minima, but it is an indication that they might exist for a suitable \(y_Q\).
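To make the loss of convexity concrete, the following toy sketch may help. It is not taken from [24] or from our control problem: it assumes the scalar setting \(H = U = {\mathbb {R}}\), the hypothetical non-affine map \(G(u) = u^2\), and \(z = 1\). The resulting tracking functional violates midpoint convexity and has two distinct global minimizers.

```python
def G(u):
    """Hypothetical non-affine control-to-state map on the real line (toy assumption)."""
    return u * u


def J(u, z=1.0):
    """Scalar tracking functional J(u) = 0.5 * (G(u) - z)**2."""
    return 0.5 * (G(u) - z) ** 2


def violates_midpoint_convexity(a, b, z=1.0):
    """True if J at the midpoint exceeds the average of J at the endpoints."""
    return J(0.5 * (a + b), z) > 0.5 * (J(a, z) + J(b, z))


if __name__ == "__main__":
    # J vanishes at u = -1 and u = 1, but is positive at the midpoint u = 0.
    print(J(-1.0), J(1.0), J(0.0))
    print(violates_midpoint_convexity(-1.0, 1.0))
```

In this toy case both \(u = -1\) and \(u = 1\) are global minimizers. For the parabolic problem, the existence of several local minimizers requires the construction carried out in this section.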

Indeed, in this section we present a semilinear parabolic optimal control problem with at least two different local solutions, one of them being globally optimal. We also constructed a problem with two different global solutions. However, it has a slightly different and more academic structure and will be discussed elsewhere.

We consider the problem

subject to

and to the pointwise control constraints \(\alpha \le u(x,t) \le \beta \) a.e. in Q. The desired state \(y_Q\) is defined by

and the nonlinearity f is \(f(y) = y(y-1)(y+1) = y^3 - y\). Therefore, the PDE is a particular case of the so-called Schlögl model of theoretical chemistry.

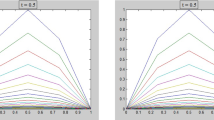

For the spatial domain \(\Omega \subset {\mathbb {R}}^n\), we assume \(|\Omega |=1\), for instance, \(\Omega = (0,1)^n\). The other data are taken as: \(T=4\), \(\alpha = 0\), \(\beta = 10\), \(\kappa \in [0,0.3]\).

5.2 A First Locally Optimal Control

As in the previous sections, we denote by \(y_u\) the state associated with the control u. For \(u = 0\), we obtain \(y_u = 0\) as associated state, because zero is one of the so-called fixed points of f, i.e. we have \(f(0)=0\).

The idea for the example is as follows: We shall show that \({{\bar{u}}}=0\) is a strict local minimizer. Next, we construct another control that has a smaller objective value than \({{\bar{u}}}\). Because the problem has a (global) solution, there must be a global solution distinct from the zero control. Therefore, at least two different local solutions must exist. To proceed in this way, we consider the adjoint equation for \({{\bar{u}}} = 0\). It is

where \({{\bar{y}}} = y_{{{\bar{u}}}} = 0\). Hence, we have \(f'({{\bar{y}}}) = 3 {{\bar{y}}}^2 - 1 = -1\). The reader can easily check that

It is easy to verify that \({{\bar{\varphi }}}(x,t) \ge 0\) in Q if and only if \(0 \le t_s\le {{\hat{t}}} = \ln (\frac{1}{2}(e^4+1))\); notice that \({{\bar{\varphi }}}(x,0)=0\) iff \(t_s = {{\hat{t}}}\).
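The threshold \({{\hat{t}}}\) can be checked numerically. The sketch below rests on assumptions, because the displayed formulas for \(y_Q\) and \({{\bar{\varphi }}}\) are not reproduced here: it takes \(y_Q = 1\) for \(t < t_s\) and \(y_Q = -1\) for \(t > t_s\) (consistent with \(J({{\bar{u}}}) = 2\) and with the expression \(\text {e}^{T - t} - 1\) used in the proof of Theorem 5.2), and it solves \(-\varphi ' - \varphi = -y_Q\), \(\varphi (T) = 0\) in closed form. The function names are illustrative.

```python
import math

T = 4.0  # final time of the example


def t_hat(T=T):
    """Largest switching time t_s for which the adjoint state stays nonnegative."""
    return math.log(0.5 * (math.exp(T) + 1.0))


def phi_bar(t, t_s, T=T):
    """Closed-form adjoint state for u = 0, y = 0 under the assumed y_Q.

    On [t_s, T]: -phi' - phi = 1, phi(T) = 0, hence phi = exp(T - t) - 1.
    On [0, t_s): -phi' - phi = -1 with continuity at t = t_s.
    """
    if t >= t_s:
        return math.exp(T - t) - 1.0
    return 1.0 + (math.exp(T - t_s) - 2.0) * math.exp(t_s - t)


if __name__ == "__main__":
    print(t_hat())                 # compare with the value 3.325003... quoted in the text
    print(phi_bar(0.0, t_hat()))   # vanishes up to rounding
    print(min(phi_bar(4.0 * i / 400, 3.3) for i in range(401)))
```

Under these assumptions, \({{\bar{\varphi }}}(x,0) = 1 + \text {e}^{T} - 2\text {e}^{t_s}\), which is nonnegative exactly when \(t_s \le {{\hat{t}}}\).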

Theorem 5.1

If \(0 \le t_s \le {\hat{t}}\), then \({{\bar{u}}} \equiv 0\) is an \(L^p(Q)\)-strict local minimum of \(\hbox {(E)}\) for every \(p \in [1,\infty ]\).

Proof

First of all, we observe that \({{\bar{u}}}\) is an admissible control for \(\hbox {(E)}\). Moreover, \({{\bar{u}}}\) satisfies the first order optimality conditions (2.13)–(2.15). Indeed, the inequality

obviously holds because \({{\bar{\varphi }}} \ge 0\), \({{\bar{u}}} = 0\), and every control \(u \in {\mathcal {U}}_{ad}\) is nonnegative. In addition, according to (2.11), the second-order derivative of J is

Therefore, (3.3) and (4.4) hold for \(\kappa > 0\) and \(\kappa = 0\), respectively. Then, Theorems 3.1 and 4.1 along with Remark 4.1 imply that \({{\bar{u}}} \equiv 0\) is an \(L^p(Q)\)-strict local minimum of \(\hbox {(E)}\) for every \(p \in [1,\infty ]\). \(\square \)

Finally, we mention that the objective value for \({{\bar{u}}}=0\) is

we recall that \(|\Omega | = 1\) and \({{\bar{y}}} = 0\). This value is independent of \(\kappa \), because the Tikhonov regularization term vanishes for \({{\bar{u}}} = 0\).

5.3 Existence of Another (Globally) Optimal Control

Once and for all, we select \(t_s =3.3\). Notice that \(t_s < {{\hat{t}}} = 3.325003...\). We recall that \(t_s\) defines the location of the switching point of \(y_Q\). We define the following bang-bang control with switching point \(\tau = 0.02\):

Let us compute an upper bound for \(J(u_\tau )\). We denote by \(y_\tau \) the state associated with \(u_\tau \). Since \(u_\tau \) does not depend on x and \(y_0 = 0\), we get that \(y_\tau \) is independent of x. With a slight abuse of notation we write \(y_\tau (t) = y_\tau (x,t)\). Then \(y_\tau \) satisfies the ordinary differential equation

In the interval \([0,\tau ]\), we have \(y'_\tau (t) = 10 + y_\tau (t) - y_\tau ^3(t)\). Using that \(0 \le s - s^3 \le \frac{2}{3\sqrt{3}}\) for every \(s \in [0,1]\) and taking the functions

we infer that \(\eta _1(t) = 10t \le y_\tau (t) \le \eta _2(t) = (10 + \frac{2}{3\sqrt{3}})t\) for every \(t \in [0,\tau ]\). Let us set \(v_i = \eta _i(\tau )\), \(i = 1, 2\). Then we have that \(v_1 = 0.2 \le v_\tau = y_\tau (\tau ) \le v_2 = 0.2 + \frac{0.04}{3\sqrt{3}}\). By separation of variables, we can solve the differential equation (5.3) in \([\tau ,T]\), where the control \(u_\tau \) is zero and get

Setting \(c_i = \frac{1}{v_i^2}-1\) and \(y_i(t) = \frac{1}{\sqrt{1 + c_i\,\text {e}^{-2(t-\tau )}}}\), \(i = 1, 2\), we deduce that \(c_2 \approx 22.18117 \le c_\tau \le c_1 = 24\) and, consequently, \(y_1(t) \le y_\tau (t) \le y_2(t)\) for all \(t \in [\tau ,T]\). Then, recalling that \(|\Omega | = 1\), we have

Above we have used

for \(c = c_i, \, i = 1,2\). Hence, we know that \(J(u_\tau ) < J({{\bar{u}}}) = 2\) if \(0 \le \kappa \le 0.3\). Actually, the numerical computation of \(y_\tau \) as well as \(J(u_\tau )\) delivers the value \(J(u_\tau ) \approx 1.6864 + \kappa < J({{\bar{u}}})\). As a consequence, we deduce that a global minimizer of \(\hbox {(E)}\) distinct from \({{\bar{u}}}\) must exist. For comparison, the parabolic optimal control problem has been solved numerically. The computed optimal objective value is 1.6140 for \(\kappa = 0.3\). All these numerical computations were performed by Mariano Mateos (University of Oviedo). We gratefully acknowledge his support.
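The value of \(J(u_\tau )\) can be reproduced approximately with a few lines of code. The following is a minimal sketch and not the computation referred to above. It assumes that \(y_Q = 1\) on \([0,t_s)\) and \(y_Q = -1\) on \((t_s,T]\), that the objective consists only of the tracking term \(\frac{1}{2}\Vert y - y_Q\Vert _{L^2(Q)}^2\) plus \(\frac{\kappa }{2}\Vert u\Vert _{L^2(Q)}^2\), and that \(|\Omega | = 1\). It integrates the ordinary differential equation \(y' = u_\tau + y - y^3\), \(y(0) = 0\), with a classical Runge-Kutta scheme; all function names are illustrative.

```python
import math

# Data of example (E) as stated in the text.
T, T_S, TAU, BETA = 4.0, 3.3, 0.02, 10.0


def rk4_phase(y, cost, t0, t1, u, target, n=2000):
    """Advance y' = u + y - y**3 and cost' = 0.5*(y - target)**2 over [t0, t1].

    u and target are constant on each phase, so no RK4 step straddles a jump.
    """
    h = (t1 - t0) / n

    def f(s):
        return u + s - s ** 3

    def g(s):
        return 0.5 * (s - target) ** 2

    for _ in range(n):
        y2 = y + 0.5 * h * f(y)
        y3 = y + 0.5 * h * f(y2)
        y4 = y + h * f(y3)
        cost += h * (g(y) + 2.0 * g(y2) + 2.0 * g(y3) + g(y4)) / 6.0
        y += h * (f(y) + 2.0 * f(y2) + 2.0 * f(y3) + f(y4)) / 6.0
    return y, cost


def tracking_cost_u_tau():
    """Return (y_tau(tau), tracking part of J(u_tau)) under the assumed y_Q."""
    y, cost = rk4_phase(0.0, 0.0, 0.0, TAU, BETA, 1.0)   # control on, y_Q = 1
    y_at_tau = y
    y, cost = rk4_phase(y, cost, TAU, T_S, 0.0, 1.0)     # control off, y_Q = 1
    y, cost = rk4_phase(y, cost, T_S, T, 0.0, -1.0)      # control off, y_Q = -1
    return y_at_tau, cost


def tikhonov_factor():
    """0.5 * ||u_tau||^2 in L^2(Q) for |Omega| = 1; this multiplies kappa in J(u_tau)."""
    return 0.5 * BETA ** 2 * TAU


if __name__ == "__main__":
    v_tau, track = tracking_cost_u_tau()
    print(v_tau, track, track + 0.3 * tikhonov_factor())
```

Under these assumptions the Tikhonov factor equals 1, which explains the form \(1.6864 + \kappa \) of the reported value, and the computed tracking part should be close to 1.686, so that \(J(u_\tau ) < 2\) for \(\kappa \le 0.3\).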

5.4 Example with Perturbed Initial Data

In the previous subsection, the considered controls depended on t only, i.e. they were constant with respect to x. In this way, we were able to solve the state and adjoint equations as ordinary differential equations. We do not know whether other local minimizers exist for \(\hbox {(E)} \) that depend also on x. Nevertheless, the example had the flavour of an example for ODEs. By our perturbation analysis, we are able to construct an example that cannot be reduced to the discussion of ODEs. To this aim, we consider the perturbed example

subject to

and to the pointwise control constraints \(\alpha \le u(x,t) \le \beta \) a.e. in Q, where

Theorem 5.2

For every \(\varepsilon > 0\), problem \((\hbox {E}_\varepsilon )\) has a strict local minimizer \(u_\varepsilon \) in the \(L^p(Q)\)-sense, for every \(p \in [1,\infty ]\), with associated state \(y^\varepsilon \) such that

for all \(\varepsilon > 0\) and for some constants \(L_i > 0\), \(i = 0, 1\).

Proof

We have already proved that \({{\bar{u}}} \equiv 0\) is a strict local minimizer of \(\hbox {(E)}\) in the \(L^p(Q)\)-sense for every \(p \in [1,\infty ]\). Moreover, \({{\bar{u}}}\) satisfies the second order optimality condition (3.3) if \(\kappa > 0\) or (4.4) if \(\kappa = 0\). Let us prove that \({{\bar{\varphi }}}\) satisfies (4.13) with \(\lambda = 1\). For this purpose, without loss of generality we assume \(\varepsilon \le 1\) in (4.13). Looking at the expression for \({{\bar{\varphi }}}\) in (5.1) and recalling that \(t_s = 3.3\), we have that \({{\bar{\varphi }}}(x,t) > 1\) \(\forall (x,t) \in \Omega \times [0, t_s]\). Then, \(|{{\bar{\varphi }}}(x,t)| \le \varepsilon \) if and only if \(\text {e}^{T - t} - 1 \le \varepsilon \) or equivalently \(t \ge T - \ln (1 + \varepsilon )\). Therefore, with \(|\Omega | = 1\) we have

Now, Theorems 3.3 and 4.3 with Remark 4.1, and estimates (3.10) and (4.28) imply the existence of a family of strict local minimizers \(\{u_\varepsilon \}_{\varepsilon > 0}\) in the \(L^p(Q)\)-sense, for every \(p \in [1,\infty ]\), such that (5.6) and (5.7) hold. To prove (5.8), we first note that the boundedness of \(\{u_\varepsilon \}_{\varepsilon }\) in \(L^\infty (Q)\) and equation (5.4) imply the boundedness of \(\{y^\varepsilon \}_{\varepsilon > 0}\) in \(L^\infty (Q)\). Then, recalling that \({{\bar{y}}} = 0\), \(\alpha = 0\), \(\beta = 10\), and using (5.6) and (5.7), we get

which implies (5.8). In the last estimate, we used \(u_\varepsilon \le \beta = 10\). \(\square \)

Next, we prove that \(J_\varepsilon (u_\tau ) < J_\varepsilon (u_\varepsilon )\) is satisfied for all sufficiently small \(\varepsilon > 0\). Then, we conclude that \((\hbox {E}_\varepsilon )\) has at least two different local minimizers. Let us denote by \(y^\varepsilon _\tau \) the solution of (5.4) for \(u = u_\tau \). Subtracting the equations satisfied by \(y^\varepsilon _\tau \) and \(y_\tau \), we obtain

where \(y^\varepsilon _{\tau ,\theta } = y_\tau +\theta (y^\varepsilon _\tau - y_\tau )\) with \(0 \le \theta (x,t) \le 1\). From (5.4) we get that \(\{y^\varepsilon _\tau \}_{\varepsilon > 0}\) is uniformly bounded in \(L^\infty (Q)\). Then, from the above equation we infer that

This leads to

Selecting \(\varepsilon > 0\) such that \(\max \{C',L_0\}\varepsilon \Vert \phi \Vert _{L^\infty (\Omega )} < 0.0001\) and taking into account that \(J({{\bar{u}}}) = 2\) and \(J(u_\tau ) \le 1.6899 + \kappa \), we infer from (5.8) and the above inequality that \(J_\varepsilon (u_\varepsilon ) > 1.9999\) and \(J_\varepsilon (u_\tau ) < 1.69 + \kappa \le 1.99\) because \(0 \le \kappa \le 0.3\). Therefore, \((\hbox {E}_\varepsilon )\) has a global minimizer different from \(u_\varepsilon \).

References

Alt, W., Griesse, R., Metla, N., Rösch, A.: Lipschitz stability for elliptic optimal control problems with mixed control-state constraints. Optimization 59(5–6), 833–849 (2010)

Breiten, T., Kunisch, K., Rodrigues, S.S.: Feedback stabilization to nonstationary solutions of a class of reaction diffusion equations of FitzHugh-Nagumo type. SIAM J. Control Optim. 55(4), 141–148 (2017)

Casas, E.: Second order analysis for bang-bang control problems of PDEs. SIAM J. Control Optim. 50(4), 2355–2372 (2012)

Casas, E.: The influence of the Tikhonov term in optimal control of partial differential equations. In: Guillén-González, F., González-Burgos, M., Doubova, A., Marín-Beltrán, M. (eds.) Recent advances in PDEs: Analysis, Numerics and Control. SEMA SIMAI Springer Series, vol. 17. Springer, Berlin (2018)

Casas, E., Kunisch, K.: Optimal control of semilinear parabolic equations with non-smooth pointwise-integral control constraints in time-space. Appl. Math. Optim. 85(12), 1–40 (2022)

Casas, E., Mateos, M.: Critical cones for sufficient second order conditions in PDE constrained optimization. SIAM J. Optim. 30(1), 585–603 (2020)

Casas, E., Mateos, M.: State error estimates for the numerical approximation of sparse distributed control problems in the absence of Tikhonov regularization. Vietnam J. Math. 49, 713–738 (2021)

Casas, E., Mateos, M., Rösch, A.: Approximation of sparse parabolic control problems. Math. Control Relat. Fields 7(3), 393–417 (2017)

Casas, E., Mateos, M., Rösch, A.: Error estimates for semilinear parabolic control problems in the absence of Tikhonov term. SIAM J. Control Optim. 57(4), 2515–2540 (2019)

Casas, E., Tröltzsch, F.: Second order analysis for optimal control problems: improving results expected from abstract theory. SIAM J. Optim. 22(1), 261–279 (2012)

Casas, E., Tröltzsch, F.: Second-order and stability analysis for state-constrained elliptic optimal control problems with sparse controls. SIAM J. Control Optim. 52(2), 1010–1033 (2014)

Casas, E., Tröltzsch, F.: Second order optimality conditions and their role in PDE control. Jahresber. Dtsch. Math.-Ver. 117(1), 3–44 (2015)

Casas, E., Tröltzsch, F.: Second order optimality conditions for weak and strong local solutions of parabolic optimal control problems. Vietnam J. Math. 44(1), 181–202 (2016)

Casas, E., Wachsmuth, D., Wachsmuth, G.: Sufficient second-order conditions for bang-bang control problems. SIAM J. Control Optim. 55(5), 3066–3090 (2017)

Casas, E., Wachsmuth, D., Wachsmuth, G.: Second order analysis and numerical approximation for bang-bang bilinear control problems. SIAM J. Control Optim. 56(6), 4203–4227 (2018)

Christof, C., Hafemeyer, D.: On the nonuniqueness and instability of solutions of tracking type optimal control problems. Math. Control Relat. Fields 12(2), 421–431 (2022)

Dontchev, A.L., Kolmanovsky, I., Krastanov, M.I., Nicotra, M., Veliov, V.M.: Lipschitz stability in discretized optimal control with application to SQP. SIAM J. Control Optim. 57(1), 468–489 (2019)

Dontchev, A.L., Hager, W.W.: Lipschitz stability in nonlinear control and optimization. SIAM J. Control Optim. 31, 569–603 (1993)

Griesse, R.: Lipschitz stability of solutions to some state-constrained elliptic optimal control problems. Z. Anal. Anwend. 25(4), 435–455 (2006)

Kunisch, K., Rodrigues, S.S., Walter, D.: Learning an optimal feedback operator semiglobally stabilizing semilinear parabolic equations. Appl. Math. Optim. 84, 277–318 (2021)

Malanowski, K., Tröltzsch, F.: Lipschitz stability of solutions to parametric optimal control for parabolic equations. Z. Anal. Anwend. 18, 469–489 (1999)

Malanowski, K., Tröltzsch, F.: Lipschitz stability of solutions to parametric optimal control for elliptic equations. Control Cybern. 29, 237–256 (2000)

Mordukhovich, B.S., Nghia, T.T.A.: Full Lipschitzian and Hölderian stability in optimization with applications to mathematical programming and optimal control. SIAM J. Optim. 24(3), 1344–1381 (2014)

Pighin, D.: Nonuniqueness of minimizers for semilinear optimal control problems. J. Eur. Math. Soc., To appear

Pörner, F., Wachsmuth, D.: An iterative Bregman regularization method for optimal control problems with inequality constraints. Optimization 65, 2195–2215 (2016)

Pörner, F., Wachsmuth, D.: Tikhonov regularization of optimal control problems governed by semi-linear partial differential equations. Math. Control Relat. Fields 8, 315–335 (2018)

Rodrigues, S.S.: Oblique projection output-based feedback exponential stabilization of nonautonomous parabolic equations. Autom. J. IFAC 129, 109621 (2021)

Roubíček, T., Tröltzsch, F.: Lipschitz stability of optimal controls for the steady state Navier-Stokes equations. Control Cybern. 32, 683–705 (2003)

Tröltzsch, F.: Lipschitz stability of solutions of linear-quadratic parabolic control problems with respect to perturbations. Dyn. Contin. Discret. Impuls. Syst. 7, 289–306 (2000)

Funding

Open Access funding enabled and organized by Projekt DEAL.

Eduardo Casas was supported by MCIN/ AEI/10.13039/501100011033/ under research project PID2020-114837GB-I00.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Casas, E., Tröltzsch, F. Stability for Semilinear Parabolic Optimal Control Problems with Respect to Initial Data. Appl Math Optim 86, 16 (2022). https://doi.org/10.1007/s00245-022-09888-7

Keywords

- Semilinear parabolic equations

- Optimal control

- Stability of local solutions

- Non-uniqueness of local minima