Abstract

We prove a sharp quantitative version of the Faber–Krahn inequality for the short-time Fourier transform (STFT). To do so, we consider a deficit \(\delta (f;\Omega )\) which measures by how much the STFT of a function \(f\in L^{2}(\mathbb{R})\) fails to be optimally concentrated on an arbitrary set \(\Omega \subset \mathbb{R}^{2}\) of positive, finite measure. We then show that an optimal power of the deficit \(\delta (f;\Omega )\) controls both the \(L^{2}\)-distance of \(f\) to an appropriate class of Gaussians and the distance of \(\Omega \) to a ball, through the Fraenkel asymmetry of \(\Omega \). Our proof is completely quantitative and hence all constants are explicit. We also establish suitable generalizations of this result in the higher-dimensional context.

1 Introduction

1.1 Main results

Given a function \(g\in L^{2}(\mathbb{R})\) (called the window), the short-time Fourier transform (STFT) of a function \(f \in L^{2}(\mathbb{R})\) is usually defined as

\[ V_{g} f(x,\omega ) := \int _{\mathbb{R}} e^{-2\pi i y\omega } f(y)\,\overline{g(y-x)}\operatorname{d\!}y, \qquad x,\omega \in \mathbb{R}. \tag{1.1} \]

This transform plays a distinguished role in different areas of mathematics, including time-frequency analysis [19] and signal processing [30], mathematical physics [29], where it is also known as the coherent state transform, and semiclassical and microlocal analysis [21, 37].

From the point of view of time-frequency analysis, the STFT is a measure of the “instantaneous frequency” of the signal \(f\) at each point, in analogy to what a music score does. As the notion of “instantaneous frequency” is not well-defined for generic signals, due to the uncertainty principle, the STFT can only concentrate a limited amount of its \(L^{2}\)-norm on any set \(\Omega \subset \mathbb{R}^{2}\) with finite Lebesgue measure \(|\Omega |\), and finding explicit bounds in terms of \(|\Omega |\) is an important issue in time-frequency analysis. For a general window \(g\), this appears to be extremely challenging and only suboptimal bounds have been obtained: we refer the reader to the work of E. Lieb [28] for what is, to our knowledge, the current best result at this level of generality.

For very regular windows, however, the situation improves. In particular, in the relevant case (extensively studied in the literature also in connection with the spectrum of localization operators in the radially symmetric case, see e.g. [1, 9, 18, 35]) where \(g=\varphi \) is the Gaussian window

\[ \varphi (x) = 2^{1/4} e^{-\pi x^{2}}, \qquad x\in \mathbb{R}, \tag{1.2} \]

a complete solution to this concentration problem has recently been given in [33], thus proving a conjecture from [2] (see also [10]). Denoting by \(\mathcal{V}f := V_{\varphi}f\) the STFT with the Gaussian window \(\varphi \) defined in (1.2), the main result of [33] can be stated as follows:

Theorem A

[33]; Faber–Krahn inequality for the STFT

If \(\Omega \subset \mathbb{R}^{2}\) is a measurable set with finite Lebesgue measure \(|\Omega |>0\), and \(f\in L^{2}(\mathbb{R})\setminus \{0\}\) is an arbitrary function, then

\[ \frac{\int _{\Omega }|\mathcal{V}f(x,\omega )|^{2}\operatorname{d\!}x\operatorname{d\!}\omega }{\|f\|_{L^{2}}^{2}} \leq 1-e^{-|\Omega |}. \tag{1.3} \]

Moreover, equality is attained if and only if \(\Omega \) coincides (up to a set of measure zero) with a ball centered at some \(z_{0}=(x_{0}, \omega _{0})\in \mathbb{R}^{2}\) and, at the same time, \(f\) is a function of the kind

\[ f(x) = c\,\varphi _{z_{0}}(x), \qquad \varphi _{z_{0}}(x) := e^{2\pi i \omega _{0} x}\varphi (x-x_{0}), \qquad x\in \mathbb{R}, \tag{1.4} \]

for some \(c\in \mathbb{C}\setminus \{0\}\).

Note that the optimal functions in (1.4) are scalar multiples of the Gaussian window defined in (1.2), translated and modulated according to the center of the ball \(\Omega \).
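
As a quick numerical illustration of the equality case (not part of the original argument), the sketch below assumes the standard fact that, for the normalized Gaussian window \(\varphi (t)=2^{1/4}e^{-\pi t^{2}}\), the spectrogram \(|\mathcal{V}\varphi (x,\omega )|^{2}\) equals \(e^{-\pi (x^{2}+\omega ^{2})}\). It then checks that the integral of this function over a centered ball \(\Omega \) agrees with \(1-e^{-|\Omega |}\). The function name `gaussian_concentration` and the midpoint rule are illustrative choices.

```python
import math

def gaussian_concentration(area, n_steps=20000):
    """Integrate exp(-pi*(x^2 + w^2)) over the centred disc of the given area.

    Assumption (standard, not derived here): this integrand is the spectrogram
    of the normalized Gaussian window with itself. Polar coordinates reduce
    the planar integral to a 1D midpoint rule in the radius.
    """
    radius = math.sqrt(area / math.pi)
    h = radius / n_steps
    total = 0.0
    for k in range(n_steps):
        rho = (k + 0.5) * h
        total += math.exp(-math.pi * rho * rho) * 2.0 * math.pi * rho * h
    return total

areas = [0.5, 1.0, 3.0]
# Discrepancy between the numerical integral and the bound 1 - exp(-|Omega|).
errors = [abs(gaussian_concentration(a) - (1.0 - math.exp(-a))) for a in areas]
print(errors)
```

Up to quadrature error, the printed discrepancies vanish, which is consistent with the Gaussian on a centered ball saturating (1.3).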

This result, which improves upon Lieb’s uncertainty principle [28], has inspired several subsequent works: [36], where a similar result is extended to the case of wavelet transforms; [4], where Kulikov uses techniques inspired by those of [33] to prove some contractivity conjectures; and [14], where R. Frank uses the same circle of ideas to generalize a series of entropy-like inequalities (see also the recent preprint [15]). We refer the reader to [22–25, 27, 31, 34] and the references therein for further closely related work.

In the present paper we investigate the stability of Theorem A: given \(\Omega \subset \mathbb{R}^{2}\) and \(f\in L^{2}(\mathbb{R})\) which are almost optimal, in the sense that they almost saturate inequality (1.3), can we infer (and to what extent) that \(\Omega \) is close to a ball and that \(f\) is close to a function of the form (1.4)? To formulate this question precisely, a crucial point is choosing how to measure almost optimality as well as closeness.

To measure almost optimality in (1.3) for a pair \((f,\Omega )\), we will consider the combined deficit

while we will use the Fraenkel asymmetry of \(\Omega \subset \mathbb{R}^{2}\) to measure its distance to a ball:

The Fraenkel asymmetry is a natural notion of asymmetry and it is often used to formulate the stability of geometric and functional inequalities, such as the isoperimetric inequality [8, 12, 16, 17] or the Faber–Krahn inequality for the Dirichlet Laplacian [5, 7].
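
For readers who want a feel for this quantity, the sketch below assumes the common normalization in which the Fraenkel asymmetry is the infimum of \(|\Omega \triangle B|/|\Omega |\) over all balls \(B\) with \(|B|=|\Omega |\); the normalization used in (1.6) may differ by a constant factor. The hypothetical helper `concentric_asymmetry` evaluates this ratio on a grid for an ellipse and the concentric disc of equal area only, so it returns an upper bound for the asymmetry rather than the asymmetry itself.

```python
def concentric_asymmetry(a, b, n=400):
    """Grid estimate of |E Δ B| / |E| for the ellipse E with semi-axes a, b
    and the concentric disc B of equal area (radius sqrt(a*b)).

    Only the concentric disc is tested, so this is an upper bound for the
    infimum over all discs of the same area.
    """
    r2 = a * b                      # squared radius of the equal-area disc
    half = max(a, b)                # the square [-half, half]^2 contains E and B
    h = 2.0 * half / n
    sym_diff = 0
    inside_e = 0
    for i in range(n):
        x = -half + (i + 0.5) * h
        for j in range(n):
            y = -half + (j + 0.5) * h
            in_e = x * x / (a * a) + y * y / (b * b) < 1.0
            in_b = x * x + y * y < r2
            inside_e += in_e
            sym_diff += in_e != in_b
    return sym_diff / inside_e

circle = concentric_asymmetry(1.0, 1.0)
mild = concentric_asymmetry(1.1, 1.0 / 1.1)
elongated = concentric_asymmetry(2.0, 0.5)
print(circle, mild, elongated)
```

The ratio is zero for a disc and grows as the ellipse becomes more elongated, always staying below 2.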

Our main result reads as follows:

Theorem 1.1

Stability of the Faber–Krahn inequality for the STFT

There is an explicitly computable constant \(C>0\) such that, for all measurable sets \(\Omega \subset \mathbb{R}^{2}\) with finite measure \(|\Omega |>0\) and all functions \(f \in L^{2}(\mathbb{R})\backslash \{0\}\), we have

Moreover, for some explicit constant \(K(|\Omega |)\) we also have

Remark 1.2

Sharpness

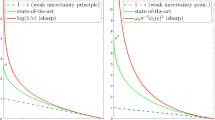

In Theorem 1.1 the factor \(\delta (f;\Omega )^{1/2}\) in (1.7) and (1.8) cannot be replaced by \(\delta (f;\Omega )^{\beta}\), for any \(\beta > 1/2\). Similarly, the dependence on \(|\Omega |\) in (1.7) is also sharp, in the sense that the factor \(e^{|\Omega |/2}\) cannot be replaced by \(e^{\beta |\Omega |}\) for any \(\beta <1/2\). We refer to Sect. 6 for proofs of these claims.

Remark 1.3

Higher dimensions

There is a natural generalization of the STFT to functions \(f\in L^{2}(\mathbb{R}^{d})\), for any \(d\geq 1\). In Sect. 7 we show that a more general version of Theorem 1.1 holds in all dimensions. It is worth noting that, although \(\delta (f;\Omega )^{1/2}\) still controls the distance of \(f\) to the set of optimizers, there is a dimensional dependence of this estimate on \(|\Omega |\).

As observed in [33], if the set \(\Omega \) is fixed and has finite measure, Theorem A (and consequently also Theorem 1.1) can be interpreted in terms of the well-known localization operator [6, 9] defined, in terms of the STFT operator \(\mathcal{V}\colon L^{2}(\mathbb{R})\to L^{2}(\mathbb{R}^{2})\) with Gaussian window, by

\[ L_{\Omega } := \mathcal{V}^{*}\,\mathbf{1}_{\Omega }\,\mathcal{V}. \]

This is a positive trace-class operator, hence its norm coincides with its largest eigenvalue

\[ \lambda _{1}(\Omega ) := \max _{f\in L^{2}(\mathbb{R})\setminus \{0\}} \frac{\langle L_{\Omega }f,f\rangle }{\|f\|_{L^{2}}^{2}} = \max _{f\in L^{2}(\mathbb{R})\setminus \{0\}} \frac{\int _{\Omega }|\mathcal{V}f(x,\omega )|^{2}\operatorname{d\!}x\operatorname{d\!}\omega }{\|f\|_{L^{2}}^{2}}. \tag{1.9} \]

In particular, due to the arbitrariness of \(f\), (1.3) entails that

\[ \lambda _{1}(\Omega ) \leq 1-e^{-|\Omega |}, \tag{1.10} \]

with equality if and only if \(\Omega \) is a ball, and so we call (1.10) a Faber–Krahn inequality, by analogy with the Dirichlet Laplacian. Clearly, for any fixed \(\Omega \), the functions \(f_{\Omega}\) that achieve the maximum in (1.9) (i.e. the eigenfunctions of \(L_{\Omega}\) associated with its first eigenvalue \(\lambda _{1}(\Omega )\)) are those functions whose STFT is optimally concentrated in that particular set \(\Omega \). When \(\Omega \) is a ball, these eigenfunctions are the functions described in (1.4) and appearing also in (1.7). Therefore, specializing Theorem 1.1 to the case where \(f = f_{\Omega }\) is the first eigenfunction of \(L_{\Omega}\), normalized so that \(\|f_{\Omega }\|_{L^{2}}=1\), we obtain the following stability result for the first eigenvalue and eigenfunction of localization operators:

Corollary 1.4

Let \(\Omega \subset \mathbb{R}^{2}\) be a measurable set of positive finite Lebesgue measure, and let \(\lambda _{1}(\Omega )\) be the first eigenvalue of the localization operator \(L_{\Omega}\) as in (1.9), with unit-norm eigenfunction \(f_{\Omega}\). Then (1.10) holds true, and

for some universal (explicitly computable) constant \(C\). Moreover, for some explicit constant \(K(|\Omega |)\) we also have

This result is the analogue of the stability results for the Faber–Krahn inequality for the Dirichlet Laplacian [5, 7, 13]. Note, however, that the stability estimate (1.7) is more general than (1.11), because it holds for arbitrary functions \(f \in L^{2}(\mathbb{R})\) which are not assumed to be eigenfunctions of the localization operator \(L_{\Omega }\). Indeed, the results of Theorem 1.1 are stronger than the available stability results for the Faber–Krahn inequality for the Dirichlet Laplacian also in that, in contrast to [5, 7], our proof of Theorem 1.1 is quantitative and does not rely on compactness arguments such as those used in the penalization method [8]. It is for this reason that the constants in estimates (1.7)–(1.8) can be made explicit. Note, moreover, that the set \(\Omega \) in Theorem 1.1 is not assumed to be smooth; in fact, since \(\mathcal{V}f\) is essentially an entire function via the Bargmann transform, we can replace \(\Omega \) with a suitable super-level set of a holomorphic function, which in Sect. 3 we prove to be very well-behaved (we then use the rigidity of the problem to come back from super-level sets of holomorphic functions to the original set \(\Omega \)).

We saw in Remark 1.2 that (1.7) is sharp, but whether Corollary 1.4 is sharp as well is a more delicate question. To answer it, one would need to either (i) compute the first eigenfunctions of \(L_{\Omega}\) for domains \(\Omega \) close to a ball, or (ii) given a function \(f\) close to the Gaussian \(\varphi \), construct a domain \(\Omega _{f} \subset \mathbb{R}^{2}\) such that \(f\) is the first eigenfunction of \(L_{\Omega _{f}}\). Strategy (i) appears rather difficult: to the best of our knowledge, the eigenfunctions of \(L_{\Omega}\) are not known even in the simple case where \(\Omega \) is an ellipse of small eccentricity; see [1, 9]. Implementing strategy (ii) involves tools essentially disjoint from those of this manuscript and so we decided not to address the question of optimality of Corollary 1.4 here; instead, this is one of the main goals of an upcoming work by the third author [35].

To discuss the main ideas behind the proofs of our results, we now briefly recall some facts and background notions from [33], which we shall use throughout the paper. We point out, however, that the proof of Theorem A in [33] cannot be readily adapted to yield quantitative results such as (1.7) or (1.8). Instead, the proof of these inequalities requires a set of new geometric ideas and estimates in the Fock space, which are the core of the present paper and which (often being of a general character, such as Lemma 2.1 or the results in Sect. 3) are of interest in their own right.

1.2 Proof strategy in the Bargmann–Fock space

As shown in [33], energy concentration problems for the STFT can be very cleanly formulated (and dealt with) in terms of the Fock space [39], i.e. the Hilbert space \(\mathcal{F}^{2}(\mathbb{C})\) of all holomorphic functions \(F \colon \mathbb{C}\to \mathbb{C}\) for which

\[ \|F\|_{\mathcal{F}^{2}}^{2} := \int _{\mathbb{C}} |F(z)|^{2} e^{-\pi |z|^{2}}\operatorname{d\!}z < \infty , \]

endowed with the natural scalar product

\[ \langle F,G\rangle _{\mathcal{F}^{2}} := \int _{\mathbb{C}} F(z)\,\overline{G(z)}\, e^{-\pi |z|^{2}}\operatorname{d\!}z. \]

Here and throughout, \(z=x+i y\) and \(\operatorname{d\!}z=\operatorname{d\!}x\operatorname{d\!}y\) denotes Lebesgue measure on ℂ, always identified with \(\mathbb{R}^{2}\). This Hilbert space is closely connected to the STFT through the Bargmann transform \(\mathcal{B}\colon L^{2}(\mathbb{R})\to \mathcal{F}^{2}(\mathbb{C})\), defined for \(f \in L^{2}(\mathbb{R})\) as

\[ \mathcal{B}f(z) := 2^{1/4}\int _{\mathbb{R}} f(y)\, e^{2\pi y z-\pi y^{2}-\frac{\pi }{2}z^{2}}\operatorname{d\!}y, \qquad z\in \mathbb{C}, \]

see e.g. [19, Sect. 3.4]. The Bargmann transform is a unitary isomorphism which maps the orthonormal basis of Hermite functions on ℝ onto the orthonormal basis of \(\mathcal{F}^{2}(\mathbb{C})\) given by the normalized monomials

\[ e_{n}(z) := \left (\frac{\pi ^{n}}{n!}\right )^{1/2} z^{n}, \qquad n=0,1,2,\ldots \tag{1.13} \]

More importantly for us, the definition of ℬ encodes the crucial property that

\[ \mathcal{V}f(x,-\omega ) = e^{\pi i x\omega }\,\mathcal{B}f(z)\, e^{-\pi |z|^{2}/2}, \qquad z=x+i\omega , \]

which allows us to express the energy concentration in the time-frequency plane in terms of functions in the Fock space, since

\[ \int _{\Omega }|\mathcal{V}f(x,\omega )|^{2}\operatorname{d\!}x\operatorname{d\!}\omega = \int _{\Omega '}|\mathcal{B}f(z)|^{2}e^{-\pi |z|^{2}}\operatorname{d\!}z, \]

where \(\Omega ' = \left \{ (x, \omega ): (x,-\omega ) \in \Omega \right \}\). In this new setting, the image via ℬ of the functions \(\varphi _{z_{0}}\) defined in (1.4) takes the form

\[ F_{z_{0}}(z) := e^{-\frac{\pi }{2}|z_{0}|^{2}}\, e^{\pi z\overline{z_{0}}}, \qquad z\in \mathbb{C}, \]

and therefore Theorem A can be rephrased in terms of the Fock space as follows, cf. [33, Theorem 3.1]:

Theorem B

If \(\Omega \subset \mathbb{R}^{2}\) is a measurable set with positive and finite Lebesgue measure, and if \(F \in \mathcal{F}^{2}(\mathbb{C}) \setminus \left \{ 0 \right \}\) is an arbitrary function, then

\[ \frac{\int _{\Omega }|F(z)|^{2}e^{-\pi |z|^{2}}\operatorname{d\!}z}{\|F\|_{\mathcal{F}^{2}}^{2}} \leq 1-e^{-|\Omega |}. \tag{1.16} \]

Moreover, equality is attained if and only if \(\Omega \) coincides (up to a set of measure zero) with a ball centered at some \(z_{0}\in \mathbb{C}\) and, at the same time, \(F = c F_{z_{0}}\) for some \(c\in \mathbb{C}\setminus \{0\}\).

Similarly, we can rephrase Theorem 1.1 over the Bargmann–Fock space, as follows:

Theorem 1.5

Fock space version of Theorem 1.1

There is an explicitly computable constant \(C>0\) such that, for all measurable sets \(\Omega \subset \mathbb{R}^{2}\) with positive finite measure and all functions \(F\in \mathcal{F}^{2}(\mathbb{C})\backslash \{0\}\), we have

where

Moreover, for some explicit constant \(K(|\Omega |)\) we also have

We warn the reader that, in (1.18), we used the same notation as in (1.5) to denote the Fock-counterpart of the deficit. However, no confusion should arise from this conflict of notation, since we always use an upper-case letter to denote elements \(F\) of the Fock space, corresponding to elements \(f\) of \(L^{2}\).

We will provide two different proofs of this theorem, based on a careful study of the real analytic function

\[ u(z) := |F(z)|^{2}e^{-\pi |z|^{2}} \tag{1.20} \]

and the properties of its super-level sets

\[ A_{t} := \{u>t\} = \{z\in \mathbb{C} : u(z)>t\}, \tag{1.21} \]

where \(F\) is an arbitrary function in \(\mathcal{F}^{2}(\mathbb{C})\setminus \{0\}\). This study was initiated in [33], where it was proved that the distribution function

\[ \mu (t) := |A_{t}|, \qquad t>0, \tag{1.22} \]

is locally absolutely continuous on \((0,\infty )\) and satisfies

\[ \mu '(t) \leq -\frac{1}{t} \quad \text{for a.e. } t\in (0,T), \qquad T := \max _{z\in \mathbb{C}} u(z), \tag{1.23} \]

from which one readily obtains that

\[ \mu (t) \geq \log _{+}\frac{T}{t}, \qquad t>0. \tag{1.24} \]

Notice that, when \(F=c F_{z_{0}}\) as in the last part of Theorem B, then \(T=|c|^{2}\) and \(\mu (t)=\log _{+}(T/t)\). In [33], (1.23) can be found in the equivalent form

\[ (u^{*})'(s) + u^{*}(s) \geq 0 \quad \text{for a.e. } s\geq 0, \tag{1.25} \]

where \(u^{*}\colon \mathbb{R}^{+}\to (0,T]\) is the decreasing rearrangement of \(u\), usually defined as

\[ u^{*}(s) := \sup \{t\geq 0 : \mu (t)>s\}, \qquad s\geq 0. \tag{1.26} \]

The function \(u^{*}\) is proved to be invertible, with \(\mu |_{(0,T]}\) as inverse function (see [4] for a direct usage of (1.23) in this form). This fact enables one to find, for any number \(s\geq 0\), a unique super-level set \(A_{t}=A_{u^{*}(s)}\) of measure \(s\), which is the set where \(u\) is most concentrated among all sets of measure \(s\), namely

\[ \int _{\Omega }u(z)\operatorname{d\!}z \leq I(s), \qquad I(s) := \int _{\{u>u^{*}(s)\}} u(z)\operatorname{d\!}z, \qquad \text{for every measurable } \Omega \text{ with } |\Omega |=s. \tag{1.27} \]

Based on (1.25), it was proved in [33] that the function \(G(\sigma ) := I(- \log \sigma )\) is convex on \([0,1]\). Since

the convexity of \(G\) yields the upper bound \(G(\sigma ) \le \|F\|_{\mathcal{F}^{2}}^{2}(1-\sigma )\) or, equivalently,

\[ I(s) \leq \|F\|_{\mathcal{F}^{2}}^{2}\left (1-e^{-s}\right ), \qquad s\geq 0, \tag{1.28} \]

which, combined with (1.27), proves (1.16).

It was then observed in [33] that, if equality holds in (1.16), then by convexity we must have \(G(\sigma )\equiv \|F\|_{\mathcal{F}^{2}}^{2} (1-\sigma )\) on \([0,1]\) or, equivalently, \(I(s)=\|F\|_{\mathcal{F}^{2}}^{2} ( 1- e^{-s})\) for every \(s\geq 0\), and in particular

\[ I'(0) = \|F\|_{\mathcal{F}^{2}}^{2}. \tag{1.29} \]

But since \(\mathcal{F}^{2}(\mathbb{C})\) is a Hilbert space with reproducing kernel \(K_{w}(z) = e^{\frac{\pi}{2} \left | w \right |^{2}} F_{w}(z)\), we have

\[ |F(z)|^{2}e^{-\pi |z|^{2}} \leq \|F\|_{\mathcal{F}^{2}}^{2}, \qquad z\in \mathbb{C}, \tag{1.30} \]

for all \(F\in \mathcal{F}^{2}(\mathbb{C})\), with equality at some \(z=z_{0}\) if and only if \(F = c F_{z_{0}}\) for some \(c \in \mathbb{C}\) (see e.g. [33, Proposition 2.1]). Since in any case \(I'(0)=T:=\max _{z \in \mathbb{C}} |F(z)|^{2} e^{-\pi |z|^{2}}\), (1.29) shows that equality in (1.16) forces equality (for at least one \(z\)) also in (1.30), and this proves the last part of Theorem B.
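
The pointwise bound behind (1.30) can be sanity-checked numerically. The sketch below is only an illustration and rests on two assumptions: the orthonormal basis is the family of normalized monomials \(e_{n}(z)=(\pi ^{n}/n!)^{1/2}z^{n}\), and the series is truncated to 60 terms, which is harmless for the sample points used. The helper names `monomials` and `u` are hypothetical. It checks that \(\sum _{n}|e_{n}(z)|^{2}e^{-\pi |z|^{2}}\approx 1\) (the diagonal of the reproducing kernel) and that a random unit-norm \(F\) satisfies \(|F(z)|^{2}e^{-\pi |z|^{2}}\leq 1\).

```python
import math
import random

def monomials(z, n_terms=60):
    """Values e_0(z), ..., e_{n_terms-1}(z), with e_n(z) = sqrt(pi^n / n!) * z^n,
    computed by the stable recursion e_n = e_{n-1} * z * sqrt(pi / n)."""
    vals = [1.0 + 0.0j]
    for n in range(1, n_terms):
        vals.append(vals[-1] * z * math.sqrt(math.pi / n))
    return vals

def u(coeffs, z):
    """u(z) = |F(z)|^2 exp(-pi |z|^2) for F = sum_n coeffs[n] * e_n."""
    basis = monomials(z, len(coeffs))
    value = sum(a * e for a, e in zip(coeffs, basis))
    return abs(value) ** 2 * math.exp(-math.pi * abs(z) ** 2)

rng = random.Random(0)
raw = [complex(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(60)]
norm = math.sqrt(sum(abs(a) ** 2 for a in raw))
coeffs = [a / norm for a in raw]          # unit norm in the (truncated) Fock space

points = [complex(rng.uniform(-1.5, 1.5), rng.uniform(-1.5, 1.5)) for _ in range(200)]
max_u = max(u(coeffs, z) for z in points)
kernel_gap = max(
    abs(sum(abs(e) ** 2 for e in monomials(z)) * math.exp(-math.pi * abs(z) ** 2) - 1.0)
    for z in points
)
print(max_u, kernel_gap)
```

By the Cauchy–Schwarz inequality the first printed value cannot exceed 1 (up to rounding), and the second measures only the truncation and rounding error in the kernel identity.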

In the rest of this introduction (and also in Sect. 2) we assume without loss of generality the normalization condition \({\|F\|_{\mathcal{F}^{2}}=1}\). A simple but fundamental observation underlying both our proofs of Theorem 1.5 is that equality in (1.30) can be precisely quantified: indeed,

cf. Lemma 2.5 below. Thus, to prove estimate (1.17) in Theorem 1.5, we need to show that the deficit controls \((1-T)\).
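
To make this observation concrete, here is a short worked derivation under two assumptions that are not spelled out in the text above: the reproducing kernel is the standard one, \(K_{w}(z)=e^{\pi z\overline{w}}\), so that \(F_{w}(z)=e^{-\frac{\pi }{2}|w|^{2}}e^{\pi z\overline{w}}\) has unit norm, and the distance is minimized over unimodular constants \(c\). The exact normalization in (1.31) and Lemma 2.5 may differ. Write \(u(z)=|F(z)|^{2}e^{-\pi |z|^{2}}\) and \(T=\max _{z}u(z)\). For \(\|F\|_{\mathcal{F}^{2}}=1\), the reproducing property gives \(\langle F,F_{z_{0}}\rangle = e^{-\frac{\pi }{2}|z_{0}|^{2}}F(z_{0})\), hence \(|\langle F,F_{z_{0}}\rangle |^{2}=u(z_{0})\) and

\[
\min _{|c|=1,\ z_{0}\in \mathbb{C}} \|F-cF_{z_{0}}\|_{\mathcal{F}^{2}}^{2}
= \min _{z_{0}\in \mathbb{C}} \left (2-2\,|\langle F,F_{z_{0}}\rangle |\right )
= 2\left (1-\sqrt{T}\right ),
\qquad
1-T \leq 2\left (1-\sqrt{T}\right ) \leq 2(1-T).
\]

The two-sided bound follows from \(2(1-\sqrt{T})-(1-T)=(1-\sqrt{T})^{2}\geq 0\) and \(\sqrt{T}\geq T\) on \([0,1]\). Under these assumptions, the squared distance from \(F\) to the optimizers is therefore comparable to \(1-T\).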

Our first proof of Theorem 1.5 is based on a careful study of the area between the graphs of \(s\mapsto u^{*}(s)\) and \(s\mapsto e^{-s}\). Consider a parameter \(s^{*}>0\), defined to be a solution of the equation

Such a solution always exists and, as soon as \(T<1\), it is unique. An argument relying on the convexity inequality (1.25) yields

cf. Lemma 2.3. Thus, to prove the desired stability estimate (1.17), by (1.31) and (1.32) it is enough to show that the integral above controls \((1-T)\). In fact, it is not difficult to see that this integral controls \((1-T)\) to a suboptimal power, as we have

Thus, by (1.31), (1.33) already yields a suboptimal form of stability.

To upgrade (1.33) to an optimal estimate, we need to estimate the integral in (1.32) much more precisely, and our approach is to give a precise quantification of the equality cases in (1.24). By passing to the inverse functions we have

cf. (2.50) below, and our proof proceeds by establishing a sharp estimate for the distribution function \(\mu (t)\): precisely, there is a universal constant \(C>0\) such that

provided that \(t\) and \(T\) are sufficiently close to 1 (see Lemma 2.1); in this paper, \(C\) always denotes a universal constant, which however may change from line to line. Note that, by the suboptimal estimate (1.33), this restriction on \(t\), \(T\) does not restrict generality. Establishing (1.35) is the most delicate part of the whole argument, as this estimate relies on a cancellation effect due to the analyticity of \(F\). The desired estimate (1.17) then follows by an elementary analysis, after plugging (1.35) into (1.34) and using again (1.31) and (1.32).

Concerning the stability of the set in (1.19), we note that it is not clear how to quantify inequality (1.27) used in the proof of Theorem B described above, at least for general sets \(\Omega \). Nonetheless, since we already have estimate (1.17), we know that \(u\) is close to a Gaussian. This allows us to first compare \(\Omega \) with \(A_{u^{*}(|\Omega |)}\), and then compare \(A_{u^{*}(|\Omega |)}\) with a ball.

The described strategy also works to show the stability of a similar Faber–Krahn inequality for wavelet transforms (see [36]), after adapting the current arguments. We plan to address this in a future work.

1.3 The geometry of super-level sets and a variational approach

As mentioned above, we will give two different proofs of Theorem 1.5, the first one having been described in the previous subsection. We now describe our second proof, which is variational in nature and based on the following result, which is of independent interest:

Proposition 1.6

There are small explicit constants \(\delta _{0},c>0\) such that the following holds: for all \(F\in \mathcal{F}^{2}(\mathbb{C})\) such that

and for all \(s< c\log (1/\delta _{0})\), the super-level set

has smooth boundary and convex closure.

Proposition 1.6 shows in particular that level sets of \(u\) sufficiently close to its maximum can be seen as smooth graphs over a circle, thus they can be deformed to a circle through an appropriate flow. This observation, in turn, allows us to give a variational approach to Theorem 1.5, in the spirit of Fuglede’s computation [16] for the quantitative isoperimetric inequality. We refer the reader to [20] for a detailed introduction to variational methods in shape-optimization problems.

To be precise, and comparing with (1.28), for some fixed \(s>0\) we consider the functional

We study perturbations of \(F_{0}\equiv 1\), i.e. we consider \(F=1+\varepsilon G\) for some small \(\varepsilon >0\). Taking \(\Omega =A_{u^{*}(s)}\), we note that by a formal Taylor expansion we have

since \(\nabla \mathcal {K}[1](G)=0\) for all \(G\in \mathcal{F}^{2}(\mathbb{C})\) satisfying the orthogonality conditions

according to Theorem 5.1 and Lemma 5.2. Thus, once the Taylor expansion above has been justified (and this is achieved in Appendix A), we see that for small perturbations of \(F_{0}\equiv 1\) the deficit is governed by the second variation of \(\mathcal {K}\). For stability to hold, this variation ought to be uniformly negative definite, since \(\varepsilon \) is essentially the left-hand side in (1.17). In Proposition 5.3 we show that, under the above orthogonality conditions, we have

This inequality is interesting for several reasons. Firstly, it is sharp, as highlighted by taking \(G(z)=z^{2}\). Secondly, by the suboptimal stability result (1.33), to prove (1.17) it is enough to consider functions with small deficit. Therefore, the above Taylor expansion, combined with (1.36), easily yields the stability estimate (1.17), although with a suboptimal dependence of the constant on \(|\Omega |\). Finally, the non-degeneracy of \(\nabla ^{2} \mathcal {K}\) provided by (1.36), combined once again with the above Taylor expansion, shows that the deficit behaves quadratically near \(F_{0}\equiv 1\), which leads to a direct proof of the optimality of our estimates, as claimed in Remark 1.2.

1.4 Outline

In Sect. 2 we give a first proof of (1.17), following the strategy described in Sect. 1.2 above. In Sect. 3 we study the geometry of the super-level sets of functions with small deficit and, in particular, we prove Proposition 1.6 above. In Sect. 4 we prove the set stability estimate (1.8). Section 5 contains the variational proof described in Sect. 1.3 and in particular the proof of (1.36). In Sect. 6 we prove the claims from Remark 1.2. Finally, in Sect. 7 we extend our results to the higher-dimensional setting, as claimed in Remark 1.3.

2 First proof of the function stability part

The goal of this section is to prove (1.17), by combining a series of new results (potentially of independent interest) valid for arbitrary functions \(F\in \mathcal{F}^{2}\), which for convenience will be assumed to be normalized by

\[ \|F\|_{\mathcal{F}^{2}} = 1. \tag{2.1} \]

In these statements, we will make extensive use of the notation and the background results recalled in Sect. 1.2, concerning the functions \(u(z)\), \(\mu (t)\) and \(u^{*}(s)\) that can be associated with a given \(F\in \mathcal{F}^{2}\). In particular, as in (1.23), in our statements we will let

\[ T := \max _{z\in \mathbb{C}} u(z) = \max _{z\in \mathbb{C}} |F(z)|^{2}e^{-\pi |z|^{2}}, \tag{2.2} \]

recalling that \(T\in [0,1]\) whenever (2.1) is assumed.

We also note that, since \(u^{*}\) is (by its definition) equimeasurable with \(u\) and decreasing, there holds

\[ \int _{\{u>u^{*}(s_{0})\}} u(z)\operatorname{d\!}z = \int _{0}^{s_{0}} u^{*}(s)\operatorname{d\!}s, \qquad s_{0}\geq 0. \tag{2.3} \]

Moreover, as recalled in Sect. 1.2, when \(F=c F_{z_{0}}\) (with \(|c|=1\)) is one of the optimal functions described in Theorem B, one has \(\mu (t)=\log _{+} \frac {1}{t}\) or, equivalently, \(u^{*}(s)=e^{-s}\). For this reason, a careful comparison between \(e^{-s}\) and \(u^{*}(s)\) (for an arbitrary \(F\) satisfying (2.1)) will be the core of the results of this section. Since, when (2.1) holds, letting \(s_{0}\to \infty \) in (2.3) we have

\[ \int _{0}^{\infty } u^{*}(s)\operatorname{d\!}s = \int _{\mathbb{C}} u(z)\operatorname{d\!}z = 1, \tag{2.4} \]

as noted in [27] there exists at least one value \(s^{*}>0\) for which

\[ u^{*}(s^{*}) = e^{-s^{*}} \tag{2.5} \]

or, equivalently, in terms of the inverse functions, a value \(t^{*} \in (0,T)\) for which

\[ \mu (t^{*}) = \log \frac{1}{t^{*}} \tag{2.6} \]

(note \(\mu (t)=0\) for \(t\geq T\)). When \(T=1\) (or, equivalently, if \(F\) is one of the optimal functions described in Theorem B, see [33, Proposition 2.1]) and hence \(u^{*}(s)=e^{-s}\), clearly all values of \(s^{*}\) (or \(t^{*}\)) have this property, but when \(T<1\) we will prove in Corollary 2.2 that \(s^{*}\) and \(t^{*}\) are in fact unique, with an unexpected universal upper bound on \(t^{*}\) (or lower bound on \(s^{*}\)).

With this background, we are now ready to state and prove the results of this section, starting with a sharp estimate for \(\mu (t)\), which shows that (1.24) becomes almost an equality when \(T\) is close to 1.

Lemma 2.1

For every \(t_{0}\in (0,1)\), there exists a threshold \(T_{0}\in (t_{0},1)\) and a constant \(C_{0}>0\) with the following property. If \(F\in \mathcal{F}^{2}(\mathbb{C})\) is such that \(\Vert F\Vert _{\mathcal{F}^{2}}=1\) and \(T \geq T_{0}\), then

\[ \mu (t) \leq \left (1+C_{0}(1-T)\right )\log \frac{T}{t} \qquad \text{for all } t\in [t_{0},T]. \tag{2.7} \]

We note, before proving such a result, that the proof presented below shows that one can choose \(C_{0}=C/t_{0}^{3}\), where \(C\) is some universal constant.

Proof

Given \(t_{0}\in (0,1)\) and \(F\) as in the statement, we split the proof into several steps.

Step I. We may assume that \(u(z)\) achieves its absolute maximum \(T\) at \(z=0\) and that \(F(0)\) is a real number, so that \(F(0)=\sqrt {T}\). Expanding \(F\) with respect to the orthonormal basis of monomials (1.13), we have

where \(R(z)\) is the entire function

The fact that \(a_{1}=0\), i.e. \(F'(0)=0\), follows easily from our assumption that \(u(z)\) has a critical point at \(z=0\), which by (1.20) forces a critical point for \(|F(z)|^{2}\) and ultimately for \(F(z)\). The assumption that \(1=\Vert F\Vert _{\mathcal{F}^{2}}^{2}\) takes the form \(1=T+\sum _{n=2}^{\infty }|a_{n}|^{2}\), which we record in the form

hereby defining \(\delta \). In the sequel we will often tacitly assume that \(\delta \) is small enough, depending only on \(t_{0}\); in the end, the required smallness of \(\delta \) will determine the threshold \(T_{0}\) in the statement of Lemma 2.1.

From (2.9), Cauchy-Schwarz and (2.10) we obtain

In particular, \(|R(z)|^{2}\leq \delta ^{2}\left (e^{\pi |z|^{2}}-1\right )\), hence squaring (2.8) we have

where \(h(z)\) is the real valued harmonic function

Step II: Estimates for \(h\). Since \(|h(z)|\leq 2 |R(z)|\), the elementary inequality \(e^{x}-1-x\leq \frac {x^{2}}{2} e^{x}\), written with \(x=\pi |z|^{2}\) and combined with (2.11), implies

Differentiating (2.9), and then using Cauchy-Schwarz and (2.10) as in (2.11), we have

having used the inequality \(\frac {n^{2}}{n!}\leq \frac {2}{(n-2)!}\) in the last passage. Similarly, differentiating (2.9) twice, using Cauchy-Schwarz and estimating the resulting power series, we find

By (2.13) and the Cauchy-Riemann equations \(|\nabla h(z)|=2 |R'(z)|\) and \(|D^{2} h(z)|=2\sqrt {2} |R''(z)|\), we obtain the following uniform estimates with respect to the angular variable \(\theta \) for the first and second radial derivatives of \(h(r e^{i\theta})\):

and

Step III: Definition of \(E_{\sigma}\) and \(r_{\!\sigma }(\theta )\). Assuming \(T>t_{0}\), we consider any \(t\in [t_{0},T)\) and any complex number \(z=r e^{i\theta}\) (\(r\geq 0\)), and we observe that

Hence, by virtue of (2.12), we obtain the implication

where, for every fixed \(\theta \in [0,2\pi ]\), \(g_{\theta}\) is defined as

The variable \(\sigma \in [0,1]\) plays the role of a parameter that defines the family of planar sets

Since (2.19) is equivalent to the set inclusion \(\{u>t\}\subseteq E_{1}\), (2.7) will be proved if we show that

The advantage of the parameter \(\sigma \) is that we can easily prove the analogous estimate for \(E_{0}\) (which is a disc, since \(g_{\theta}(r,0)\) is independent of \(\theta \)), and then show that this estimate is inherited by every \(E_{\sigma}\) (including \(E_{1}\)), by exploiting a cancellation effect due to the harmonicity of \(h\).

We first show that each set \(E_{\sigma}\) is star-shaped with respect to the origin, by showing that \(g_{\theta}(r,\sigma )\) is increasing in \(r\) (for fixed \(\theta \) and \(\sigma \)). Using (2.17) and assuming e.g. that \(\delta ^{2}+\sqrt {2}\delta \leq t_{0}/2\), we have from (2.20)

Since \(g_{\theta}(0,\sigma )=t/T\geq t_{0}\), integrating the previous bound we also obtain that

and hence, since \(g_{\theta}(0,\sigma )=t/T< 1\), for every \(\sigma \in [0,1]\) the equation in \(r>0\)

has a unique solution \(r_{\!\sigma }> 0\), which we shall also denote by \(r_{\!\sigma }(\theta )\) when the dependence of \(r_{\!\sigma }\) on the angle \(\theta \) is to be stressed, as in (2.25) below. Since \(E_{\sigma}\) is star-shaped, using polar coordinates we can compute its area \(|E_{\sigma}|\) in terms of \(r_{\!\sigma }\), as

Notice that \(f(1)\) is the area of \(E_{1}\) that we want to estimate as in (2.21), while

since when \(\sigma =0\), equation (2.24) simplifies to

so \(r_{0}\) is independent of \(\theta \) and \(E_{0}\) is a ball of radius \(r_{0}\). Note that the sets \(E_{\sigma}\) are uniformly bounded, since (2.23) and (2.24) entail that

Step IV: Estimates for \(r_{\!\sigma }'\) and \(r_{\!\sigma }''\). By (2.22) and the implicit function theorem, \(r_{\!\sigma }\) is, for every fixed value of \(\theta \in [0,2\pi ]\), a smooth, bounded function of the parameter \(\sigma \in [0,1]\). Denoting for simplicity by \(r_{\!\sigma }'\) its derivative with respect to \(\sigma \), we have

and using (2.14) and (2.22) we find the bound

In particular, this implies that

since by (2.30) \(|r_{\!\sigma }'|/r_{\!\sigma }\leq \sqrt {2}\,\delta /t_{0} \leq \log \sqrt{2}\) provided \(\delta \) is small enough, we have for every \(\sigma \in [0,1]\)

and (2.31) follows.

Differentiating (2.29) with respect to \(\sigma \), we have

from (2.32) and (2.14) we see that

Combining with (2.30),

Step V: Proof of (2.21). Now, recalling the bounds (2.28) and (2.30), one can differentiate under the integral in (2.25), obtaining

Differentiating (2.34) again, and then using (2.33) and (2.30), we obtain the estimate

This, combined with (2.31) and recalling (2.26), gives

We now claim that \(f'(0)=0\), which is the crucial step of the proof. Indeed, when \(\sigma =0\), we see from (2.27) and (2.22) that \(r_{0}\) and \(\partial g_{\theta}/\partial r\) are independent of \(\theta \), and therefore, by (2.29), when \(\sigma =0\) we may write

where \(\phi (r_{0})\neq0\) depends on \(r_{0}\) but is independent of \(\theta \). Therefore, from (2.34),

On the other hand, the last integral vanishes, since the mean value property of the harmonic function \(h\) gives

Hence, as \(f'(0)=0\), we may write, through Taylor’s formula,

and taking \(s=1\) and using (2.35) gives

Now, as we may assume that \(2\delta ^{2}\leq t_{0}\), we claim that

which, according to (2.27), is equivalent to

Setting for convenience \(\kappa =1/t_{0}\) and defining the function

we observe that (2.38) is equivalent to \(\psi (T/t)\geq 1\). Since \(\psi (1)=1\), \(T/t\in [1,\kappa ]\) and \(\psi \) is concave (note that \(2\delta ^{2}\kappa \leq 1\) by assumption), it suffices to prove that \(\psi (\kappa )\geq 1\). Indeed, we have

having used \(1-\delta ^{2}\kappa \geq \frac {1}{2}\) in the last passage. This shows that \(\psi (\kappa )\geq 1\), hence (2.37) is established.

Thus, (2.21) follows by combining (2.36) with (2.26) and (2.37). □
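
The two elementary inequalities invoked in Step II, namely \(e^{x}-1-x\leq \frac {x^{2}}{2}e^{x}\) for \(x\geq 0\) and \(\frac {n^{2}}{n!}\leq \frac {2}{(n-2)!}\) for \(n\geq 2\), can be sanity-checked numerically. The following sketch is only a spot check on a finite grid, not a proof; the helper names are illustrative.

```python
import math

def exp_remainder_gap(x):
    """Return (x^2 / 2) e^x - (e^x - 1 - x), which should be >= 0 for x >= 0."""
    return 0.5 * x * x * math.exp(x) - (math.exp(x) - 1.0 - x)

def factorial_gap(n):
    """Return 2/(n-2)! - n^2/n!, which should be >= 0 for integers n >= 2."""
    return 2.0 / math.factorial(n - 2) - n * n / math.factorial(n)

xs = [0.01 * k for k in range(0, 2001)]      # grid on [0, 20]
min_exp_gap = min(exp_remainder_gap(x) for x in xs)
min_fact_gap = min(factorial_gap(n) for n in range(2, 60))
print(min_exp_gap, min_fact_gap)
```

Both minima are attained at the endpoint cases (\(x=0\) and \(n=2\)), where the inequalities hold with equality. The second inequality is simply \(n/(n-1)\leq 2\) after cancelling \((n-2)!\).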

Corollary 2.2

Uniqueness and non-degeneracy of \(t^{*}\)

If \(F\in \mathcal{F}^{2}(\mathbb{C})\) is such that \(\Vert F\Vert _{\mathcal{F}^{2}}=1\) and \(T<1\), then there is a unique value \(t^{*}\in (0,T)\) satisfying (2.6). Moreover,

\[ t^{*} \leq \tau ^{*} \]

for some universal constant \(\tau ^{*}\in (0,1)\).

Note that the uniqueness of \(t^{*}\) implies the uniqueness of \(s^{*}\) defined in (2.5), and \(t^{*}=e^{-s^{*}}\). We also note that there cannot be any universal lower bound on \(t^{*}\), since \(t^{*}\leq T\) and \(T\) can be arbitrarily small.

Proof

If (2.6) were true for two distinct values \(t_{1}< t_{2}< T\) of \(t^{*}\), then we would have \(\mu (t)=\log (1/t)\) for every \(t\in [t_{1},t_{2}]\), whence \(\mu '(t)=-1/t \) for every \(t\in (t_{1},t_{2})\). But the proof of [33, Remark 3.5] shows that this happens if and only if the corresponding sets \(\{ u > t \}\) are balls, \(|\nabla u|\) being constant on each boundary \(\partial \{ u > t \} = \{ u = t \}\): this in turn implies that \(u(z) = e^{-\pi |z-z_{0}|^{2}}\) for some \(z_{0} \in \mathbb{C}\), and hence \(u(z_{0}) = 1\) (or equivalently that \(u^{*}(s)\equiv e^{-s}\)), contradicting our assumption that \(T<1\).

Now let \(T_{0}\) and \(C_{0}\) be the constants provided by Lemma 2.1 when \(t_{0}=\frac {1}{2}\), and define

Given \(F\) as in our statement, if \(t^{*}\leq 1/2\) then clearly \(t^{*}\leq \tau ^{*}\), and the same is true if \(T< T_{0}\), because certainly \(t^{*}\leq T\). Finally, if \(t^{*}>1/2\) and \(T\geq T_{0}\), then (2.7) written with \(t=t^{*}\) becomes

which is equivalent to

But then, since \(T\leq 1\), we obtain

and \(t^{*}\leq \tau ^{*}\) also in this case (notice that \(x^{\frac {1}{1-x}}\leq e^{-1}\) for every \(x\in (0,1)\)). □
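
The elementary inequality quoted in the last step of the proof has a one-line justification, recorded here only for the reader's convenience. For \(x\in (0,1)\) we have \(\log x\leq x-1=-(1-x)\), and dividing by \(1-x>0\) gives

\[
\frac{\log x}{1-x} \leq -1
\quad \Longrightarrow \quad
x^{\frac{1}{1-x}} = \exp \left (\frac{\log x}{1-x}\right ) \leq e^{-1}.
\]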

We are now ready to start the comparison between \(u^{*}(s)\) and \(e^{-s}\), where the number \(s^{*}\), uniquely defined by (2.5) if \(T<1\), will play a crucial role. In the next two lemmas, however, it is not necessary to assume that \(T<1\), since when \(T=1\) (and \(u^{*}(s)=e^{-s}\)) their claims remain true (though trivial) for all values of \(s^{*}\).
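For orientation, the extremal case \(T=1\) can be made fully explicit. The computation below is a sketch that uses only the definition \(\mu (t)=|\{u>t\}|\) and the fact that \(u^{*}\) is the inverse of \(\mu \); the centre \(z_{0}\) is arbitrary.

```latex
% Gaussian case: u(z) = exp(-pi |z - z_0|^2), so that T = max u = 1.
\[
  \{u>t\}
  =\Bigl\{z\in\mathbb{C}\colon |z-z_{0}|^{2}<\tfrac{1}{\pi}\log\tfrac{1}{t}\Bigr\},
  \qquad
  \mu(t)=\pi\cdot\tfrac{1}{\pi}\log\tfrac{1}{t}=\log\tfrac{1}{t},
  \qquad t\in(0,1).
\]
% Inverting s = log(1/t) gives the decreasing rearrangement:
\[
  u^{*}(s)=e^{-s},\qquad s\geq 0 .
\]
```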

Lemma 2.3

For every \(F\in \mathcal{F}^{2}(\mathbb{C})\) such that \(\Vert F\Vert _{\mathcal{F}^{2}}=1\) and every \(s_{0}>0\), there holds

where \(T\) is as in (2.2) and

Note that \(\delta _{s_{0}}\) coincides with the deficit \(\delta (F;\Omega )\) of Theorem 1.5 when \(\Omega =\{u>u^{*}(s_{0})\}\) is the super-level set of \(u\), with measure \(s_{0}\).
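To get a feeling for the size of the deficit, one can evaluate it on simple examples. The sketch below is an illustration only and rests on two assumptions that are not displayed in this section: that the deficit has the form \(\delta (F;\Omega )=1-\frac{1}{1-e^{-|\Omega |}}\int _{\Omega }|F|^{2}e^{-\pi |z|^{2}}\operatorname{d\!}z\) for \(\Vert F\Vert _{\mathcal{F}^{2}}=1\), and that \(\Omega \) is a disc centred at the origin (which is a super-level set only for \(k=0\)). The names `disc_concentration` and `deficit` are hypothetical helpers.

```python
import math

def disc_concentration(k, s):
    """Integral of |e_k(z)|^2 exp(-pi|z|^2) over the centred disc of area s,
    where e_k(z) = sqrt(pi^k / k!) z^k is the normalized monomial.
    In polar coordinates this equals (1/k!) * int_0^s t^k e^{-t} dt."""
    partial = sum(s ** j / math.factorial(j) for j in range(k + 1))
    return 1.0 - math.exp(-s) * partial

def deficit(k, s):
    """Deficit of e_k on the centred disc of area s (assumed form, see lead-in)."""
    return 1.0 - disc_concentration(k, s) / (1.0 - math.exp(-s))

# The constant function (k = 0) has zero deficit; higher monomials do not.
SAMPLE = [deficit(k, 1.0) for k in range(4)]
```

In this example the deficit vanishes for \(k=0\) and is strictly positive for \(k\geq 1\), consistent with the constant function being the extremizer on centred discs.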

Proof

Instead of writing explicitly \(e^{-s}\), we will use the notation

This will be particularly useful in Sect. 7, when we adapt the current proof to higher dimensions.

Since \(u^{*}(s)\leq T\) and \(v^{*}(s)\geq 1-s\), the first inequality in (2.41) follows from

To prove the second inequality, note that \(1-e^{-s_{0}}=\int _{0}^{s_{0}} v^{*}(s)\operatorname{d\!}s\), and hence we can rewrite (2.42) as

The key of the proof is that the ratio

as follows immediately from (1.25) since \(r(s)=e^{s}u^{*}(s)\). In order to implement such an idea, we must now distinguish between some cases:

Case 1: \(s_{0} > s^{*}\). Since \(r(s^{*})=1\) and \(r(s)\) is increasing by the convexity inequality (1.25), we have from (2.45)

On the other hand, for the same reason,

which, combined with the previous estimate, gives

Thus, recalling (2.45) and using the last inequality, we find that

having used (2.4) for the numerator, and the fact that \(u^{*}(s)\geq v^{*}(s)\) when \(s\geq s^{*}\), for the denominator. Given that clearly \(\varepsilon \leq \delta _{s_{0}}\), the second inequality in (2.41) follows immediately since \(\int _{s_{0}}^{\infty} v^{*}(s)\operatorname{d\!}s=e^{-s_{0}}\).

Case 2: \(s_{0} \leq s^{*}\). As \(r(s^{*})=1\) and \(r(s)\) is increasing, we have from (2.45) again that

On the other hand, for the same reason,

which combined with the previous estimate gives

Thus, using the last inequality, we find

and the second inequality in (2.41) follows also in this case. □

We are now ready to show that, in (2.41), the first inequality holds in fact in a much stronger form.

Lemma 2.4

Under the same assumptions as in Lemma 2.3, there holds

where \(C>0\) is a universal constant.

Proof

Passing to the inverse functions, and recalling that \(\mu (t)\), restricted to \((0,T)\), is the inverse of \(u^{*}(s)\), we have

Observe that, given any universal constant \(\tau \in (0,1)\), in proving (2.49) we may assume (if convenient) that

because otherwise (2.49) would immediately follow from the first inequality in (2.41), as soon as \(C\geq 2/(1-\tau )\). In particular, letting \(T_{0}\) and \(C_{0}\) be the constants provided by Lemma 2.1 when \(t_{0}=\tau ^{*}\), where \(\tau ^{*}\) is the constant obtained in Corollary 2.2, we may assume that \(T\geq T_{0}\), so that (2.7) reads

Relying on (2.40), we now use (2.52) to minorize the last integral in (2.50). More precisely, letting \(\tau _{1}\in [\tau ^{*},1)\) denote a universal constant to be chosen later, and further assuming (in addition to \(T\geq T_{0}\)) that (2.51) holds also with \(\tau =\tau _{1}\), from (2.40), (2.52) and (2.50) we find

Using \(-\log T\geq 1-T\), for every \(t\in (\tau _{1},T)\) we have

and choosing now \(\tau _{1}\in [\tau ^{*},1)\) sufficiently close to 1 in such a way that

from (2.53) and the subsequent estimate we obtain

Finally, choosing a larger number \(\tau _{2}\in (\tau _{1},1)\) and further assuming that (2.51) holds also with \(\tau =\tau _{2}\), we obtain

and (2.49) follows, by letting \(C^{-1}=\varepsilon _{1}(\tau _{2}-\tau _{1})\). □

The final ingredient we need is a lemma whose statement and proof are well known in the theory of reproducing kernel Hilbert spaces. For completeness, we provide its proof here.

Lemma 2.5

If \(F\in \mathcal{F}^{2}(\mathbb{C})\) and \(\Vert F\Vert _{\mathcal{F}^{2}}=1\), then

Proof

Since \(\Vert F_{z_{0}}\Vert _{\mathcal{F}^{2}}=1\) for every \(z_{0}\in \mathbb{C}\), for any \(c\) with \(|c|=1\) we have

and, since \(\mathcal{F}^{2}(\mathbb{C})\) is a reproducing kernel Hilbert space with kernel \(K_{w}(z) = e^{\frac{\pi}{2} \left | w \right |^{2}} F_{w}(z)\), we have \(\left \langle F,F_{z_{0}} \right \rangle _{\mathcal {F}^{2}} = F(z_{0}) e^{- \frac{\pi}{2} \left | z_{0} \right |^{2}}\). Therefore,

and choosing the unimodular \(c\) that minimizes the last term, we obtain for every \(z_{0}\)

The equality in (2.56) then follows by minimizing over \(z_{0}\in \mathbb{C}\), while the inequality is a direct consequence thereof. □
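The computation in the proof can be tested numerically. The sketch below is not part of the argument: it expands everything in the orthonormal basis \(e_{k}(z)=\sqrt{\pi ^{k}/k!}\,z^{k}\) of \(\mathcal{F}^{2}(\mathbb{C})\), truncates the series at an illustrative order, and compares \(\min _{|c|=1}\Vert F-cF_{z_{0}}\Vert _{\mathcal{F}^{2}}^{2}\) with the value \(2-2|F(z_{0})|e^{-\frac{\pi}{2}|z_{0}|^{2}}\), which is how we read the outcome of the argument above when \(\Vert F\Vert _{\mathcal{F}^{2}}=1\). The sample coefficients, the point \(z_{0}\) and all function names are assumptions made for illustration.

```python
import math

N_TERMS = 60  # truncation order of the power series (illustrative choice)

def basis_powers(w, n_terms):
    """Return [e_k(w)] = [(sqrt(pi) * w)^k / sqrt(k!)] for k < n_terms."""
    out, term = [], 1.0 + 0.0j
    step = math.sqrt(math.pi) * w
    for k in range(n_terms):
        out.append(term)
        term = term * step / math.sqrt(k + 1)
    return out

def evaluate(a, z):
    """F(z) = sum_k a_k e_k(z) for a finite coefficient list a."""
    return sum(ak * ek for ak, ek in zip(a, basis_powers(z, len(a))))

def coherent_coeffs(z0, n_terms=N_TERMS):
    """Coefficients of F_{z0}(z) = exp(-pi|z0|^2/2) exp(pi z conj(z0)) in the basis e_k."""
    pref = math.exp(-math.pi * abs(z0) ** 2 / 2)
    return [pref * p for p in basis_powers(complex(z0).conjugate(), n_terms)]

def dist_sq(a, c, z0):
    """Squared Fock distance ||F - c F_{z0}||^2, computed from coefficients."""
    b = coherent_coeffs(z0)
    a_pad = list(a) + [0j] * (len(b) - len(a))
    return sum(abs(x - c * y) ** 2 for x, y in zip(a_pad, b))

def check(a, z0):
    """Return (numerical minimum over |c| = 1, predicted value 2 - 2|<F, F_{z0}>|)."""
    inner = evaluate(a, z0) * math.exp(-math.pi * abs(z0) ** 2 / 2)  # <F, F_{z0}>
    c_opt = inner / abs(inner)  # optimal unimodular constant (assumes inner != 0)
    return dist_sq(a, c_opt, z0), 2.0 - 2.0 * abs(inner)

# Hypothetical sample: a normalized polynomial of degree 2 and an arbitrary point z0.
raw = [0.9, 0.3 + 0.2j, -0.1j]
norm = math.sqrt(sum(abs(x) ** 2 for x in raw))
A_SAMPLE = [x / norm for x in raw]
Z0_SAMPLE = 0.2 - 0.1j
NUMERIC, PREDICTED = check(A_SAMPLE, Z0_SAMPLE)
```

Working with coefficients avoids any numerical integration over \(\mathbb{C}\); the truncation error is negligible here because \(|z_{0}|\) is small.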

We are now ready to prove (1.17).

Proof of (1.17)

By homogeneity, in (1.17) one can assume that \(F\in \mathcal{F}^{2}(\mathbb{C})\) and \(\Vert F\Vert _{\mathcal{F}^{2}}=1\). Then, given \(\Omega \) as in Theorem 1.5 and letting \(s_{0}=|\Omega |\), on combining (2.56) with (2.49) and the second inequality in (2.41), one finds

where \(\delta _{s_{0}}\) is the deficit defined in (2.42), relative to the super-level set \(\{u>u^{*}(s_{0})\}\). But (1.27) (rewritten with \(s=s_{0}\)) reveals that \(\delta _{s_{0}}\leq \delta (F;\Omega )\), where \(\delta (F;\Omega )\) is the deficit relative to \(\Omega \) as defined in (1.18). Then (1.17) follows from (2.58), taking square roots. □

3 The geometry of super-level sets

In this section we study, for a fixed number \(t>0\), some basic geometric properties of the super-level sets \(\{z\in \mathbb{C}:u_{F}(z)>t\}\). In the proof of Lemma 2.1 we saw that the function \(g_{\theta}(r,\sigma )\), defined in (2.20), is monotone increasing in \(r\) and, in particular, its sub-level sets are star-shaped. We will soon see that, by doing a finer analysis, we can prove a stronger version of this result, namely Proposition 1.6.

We begin by discussing some useful normalizations that we will use throughout the next sections. Let us first consider the quantity

Without loss of generality, we will assume that

that is, the closest function to \(F\) in \(\{c F_{z_{0}}\}_{z_{0} \in \mathbb{C}, c\in \mathbb{C}}\) is a multiple of the constant function \(F_{0}\equiv 1\). This follows by (2.57), since \(\rho (F)^{2} = \min _{z_{0} \in \mathbb{C}} \frac{\|F\|_{\mathcal {F}^{2}}^{2} - |F(z_{0})|^{2} e^{-\pi |z_{0}|^{2}}}{\|F\|_{\mathcal {F}^{2}}^{2}}\). Moreover, we can also assume that

Now, we note that, by the previous assumptions, we have

This shows that \(\rho (F)\) is attained at \(z_{0} = 0\) if and only if 0 is a maximum for \(u_{F}\); hence, our normalization also implies

Observe that \(\rho \) differs slightly from the distance to the extremizing class used in (1.17), due to the condition on \(c\). However, it is equivalent to this distance: indeed, by Lemma 2.5, we have

Lemma 2.5 also shows that

Note that, by our normalizations, we have \(F(0)=1\leq \|F\|_{\mathcal {F}^{2}}\). On the other hand, (3.3), Lemma 2.5, and (2.41) show that

provided that the deficit is sufficiently small.

In addition to \(\rho (F)\), it will be convenient to consider the slightly different quantity

This allows us to write

and then the above assumptions are translated as

Note that, by (3.4), we can assume that \(\varepsilon \) is sufficiently small: indeed, if \(e^{|\Omega |}\delta (F;\Omega )\) is sufficiently small, then

We are now ready for the main part of this section. We begin with a key technical lemma which shows that, above a certain threshold, all level sets of the function \(u_{F}\) behave like those of the standard Gaussian, as long as \(\varepsilon (F)\) is sufficiently small.

Lemma 3.1

Let \(F \in \mathcal{F}^{2}(\mathbb{C})\) satisfy the normalizations at the beginning of this section. There are constants \(\varepsilon _{0}, c_{1} >0\) with the following property: if \(\varepsilon (F)\leq \varepsilon _{0}\), then for any \(\alpha \in [0,2\pi ]\), the function

is strictly decreasing on the interval \(\left [0,c_{1} \sqrt{\log (1/\varepsilon (F))}\right ]\).

Proof

Without loss of generality we will take \(\alpha =0\). In order to prove the desired assertion, we shall divide our analysis into two cases.

Case 1: \(1/10 < r < c_{1} \sqrt{\log (1/\varepsilon )}\). We differentiate the function \(\mathcal{G}_{0}\) with respect to \(r\), which gives us

where in the last line we used (3.5). In order to bound the last term we note that, by the Cauchy integral formula,

since \(|G(z)|\leq e^{\pi |z|^{2}/2}\), and in addition

since \(\|1+\varepsilon G\|_{\mathcal {F}^{2}}\le 2\). Thus, as the \(\varepsilon ^{2}\)-term in (3.8) is negative, and \(|\operatorname{Re}G(r)|e^{-\pi r^{2}}\leq 1\) as \(r>1/10\), we can estimate

Since \(r < c_{1} \sqrt{\log (1/\varepsilon )}\), we obtain that \(e^{\pi r^{2}} \le e^{\pi c_{1}^{2} \log (1/\varepsilon )} = \varepsilon ^{-\pi c_{1}^{2}}\). For all \(\varepsilon \) small enough, we have \(e^{-\pi r^{2}}- 2 \varepsilon \geq \varepsilon ^{\pi c_{1}^{2}}- 2 \varepsilon > 0\) provided that \(\pi c_{1}^{2} < 1\). Since also \(1/10\leq r\), we have

Hence, as long as

the term \(-2 \pi r \varepsilon ^{\pi c_{1}^{2}}\) dominates over the others. Thus, for sufficiently small \(\varepsilon \), we have \(\mathcal{G}_{0}'(r) < 0\).

Case 2: \(0 < r \leq 1/10\). Notice that this case is more subtle, as \(\mathcal{G}_{0}'(r) \to 0\) when \(r \to 0\). We will show that the second derivative of \(\mathcal{G}_{0}\) is strictly negative for \(r \in (0,1/10)\): thus the first derivative decreases in \((0,1/10)\) and, as \(\mathcal{G}_{0}'(0) = 0\), it follows that \(\mathcal{G}_{0}'(r) < 0\) in this interval, proving the claim.

Starting from (3.8), we compute:

We now follow the same strategy as in the first case. For \(|w|\leq 1\), we find the estimates

Therefore we have

where \(\mathfrak{h} \colon \mathbb{R}\to [0,\infty )\) is a smooth function. Since \(r<1/10\), we have \(1-2\pi r^{2}>0\) and so the first term above is negative. Hence, if \(\varepsilon \) is sufficiently small, it holds that \(\mathcal{G}_{0}''(r) < 0\) for all \(r \in (0,1/10)\), and the conclusion follows. □
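The monotonicity asserted in Lemma 3.1 is easy to observe numerically on a concrete example. The sketch below is an illustration, not a proof: it takes the hypothetical perturbation \(G(z)=\frac{\pi}{\sqrt{2}}z^{2}\) (which has unit Fock norm and satisfies \(G(0)=G'(0)=0\); we assume this is compatible with the normalizations used here), the illustrative values \(\varepsilon =10^{-2}\) and \(c_{1}=0.3\), and checks on a grid that \(r\mapsto |F(re^{i\alpha })|^{2}e^{-\pi r^{2}}\) decreases along several rays.

```python
import cmath
import math

def u(z, eps):
    """u_F(z) = |F(z)|^2 exp(-pi|z|^2) for F = 1 + eps*G, G(z) = (pi/sqrt(2)) z^2."""
    g = (math.pi / math.sqrt(2)) * z ** 2  # unit Fock norm, G(0) = G'(0) = 0
    return abs(1 + eps * g) ** 2 * math.exp(-math.pi * abs(z) ** 2)

def is_strictly_decreasing_on_ray(alpha, eps, c1=0.3, steps=400):
    """Check on a uniform grid that r -> u(r e^{i alpha}) strictly decreases
    on [0, c1 * sqrt(log(1/eps))]. The value c1 = 0.3 is an illustrative choice."""
    r_max = c1 * math.sqrt(math.log(1.0 / eps))
    direction = cmath.exp(1j * alpha)
    vals = [u(r_max * j / steps * direction, eps) for j in range(steps + 1)]
    return all(x > y for x, y in zip(vals, vals[1:]))

# Sample twelve equally spaced directions for eps = 0.01.
RESULTS = [is_strictly_decreasing_on_ray(2 * math.pi * k / 12, 0.01) for k in range(12)]
```

A grid check of this kind can of course miss oscillations below the grid scale; it is only meant to make the statement of the lemma concrete.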

In spite of its simple nature, we can derive several important conclusions from Lemma 3.1, such as the following result.

Lemma 3.2

Under the same hypotheses as in Lemma 3.1, one may find a small constant \(c_{2}> 0\) such that, for \(t > \varepsilon (F)^{c_{2}}\), the super-level sets

are all star-shaped with respect to the origin. Moreover, for such \(t\), the boundary \(\partial \{ u_{F} > t\} = \{ u_{F} = t \}\) is a smooth, closed curve.

Proof

Let \(c_{1} >0\) be given by Lemma 3.1. We first prove the following assertion: if \(|z| > c_{1} \sqrt{\log (1/\varepsilon )}\) then

As before, we use the decomposition (3.5) to write

For \(|z| > c_{1} \sqrt{\log (1/\varepsilon )}\), and \(\varepsilon \) sufficiently small, since \(\|G\|_{\mathcal {F}^{2}}=1\) one readily sees that

since we can choose \(\pi c_{1}^{2} \leq \frac {1}{2}\), cf. (3.10).

We now claim that the conclusion of the lemma holds with \(c_{2} =\frac{\pi c_{1}^{2}}{2}\). If this is not the case, there is \(t_{0} > \varepsilon ^{c_{2}}\) such that \(A_{t_{0}} := \{z \in \mathbb{C}\colon u_{F}(z) > t_{0} \}\) is not star-shaped with respect to 0. Thus, there would be a point \(w_{0} \in A_{t_{0}}\), such that, for some \(r \in (0,1)\), \(r\cdot w_{0} \notin A_{t_{0}}\). By (3.12), we must have that

indeed, if \(|w_{0}|>c_{1} \sqrt{\log (1/\varepsilon )}\) then, by choosing \(\varepsilon \) even smaller if need be, we would have \(u(w_{0}) < 4 \varepsilon ^{\pi c_{1}^{2}} < \varepsilon ^{\pi c_{1}^{2}/2}<t_{0}\), contradicting the fact that \(w_{0}\in A_{t_{0}}\). However, (3.13) leads to a contradiction already: if we write \(e^{i \alpha _{0}} = \frac{w_{0}}{|w_{0}|}\) then Lemma 3.1 ensures that the function \(s \mapsto |F(s e^{i \alpha _{0}})|^{2} e^{-\pi s^{2}}\) is strictly decreasing for \(s < c_{1} \sqrt{\log (1/\varepsilon )}\) and thus we would have

which is a contradiction. Hence \(A_{t_{0}}\) is star-shaped with respect to the origin.

The final claim of the lemma, concerning the smoothness of the boundary \(\partial \{u_{F} > t\} = \{ u_{F} =t\}\), follows from the Inverse Function Theorem. Indeed, by (3.12) we see that if \(z\) is such that \(u_{F}(z) = t > \varepsilon ^{c_{2}}\) then \(|z| < c_{1} \sqrt{\log (1/\varepsilon )}\), and Lemma 3.1 then guarantees that \(\nabla u_{F}(z) \neq 0\). Thus \(t\) is a regular value of \(u_{F}\) and the set \(\{u_{F}=t\}\) is a smooth curve. □

Lemmata 3.1 and 3.2 already show that the super-level sets of \(u_{F}\) are regular and have controlled geometry. We now show that they are in fact convex:

Proposition 3.3

Under the same assumptions as in Lemma 3.1, there are small constants \(\varepsilon _{0},c_{3} > 0\) such that, as long as \(\varepsilon (F)\leq \varepsilon _{0}\) and \(s<-c_{3} \log (\varepsilon (F))\), the set

has convex closure.

Proof

Choosing \(\varepsilon _{0}\) appropriately, we can apply Lemmas 3.1 and 3.2 to conclude that, for \(t > \varepsilon (F)^{c_{2}}\), the level sets \(\{z \in \mathbb{C}\colon u_{F}(z) > t\}\) are all star-shaped with respect to the origin and have smooth boundary.

We write, for brevity, \(u=u_{F}\) and \(u_{0}=e^{-\pi |\cdot |^{2}}\) throughout the rest of this proof. By the triangle inequality and (3.4), we have

and so

This implies that

or, rearranging,

In particular, if \(e^{-s} >\varepsilon (F)^{c_{3}}\), then

provided \(c_{3}\) and \(\varepsilon _{0}\) are chosen sufficiently small. Thus, for our choice of parameters, the set \(A_{u^{*}(s)}\) is star-shaped and has a smooth boundary.

Arguing similarly to Lemma 3.1 we see that, by further shrinking \(c_{3}\) if needed, we have

whenever \(s \le - c_{3} \log (\varepsilon (F))\). Indeed, recalling again (3.5), (3.18) is equivalent to

Using (3.9), (3.11), a suitable version of the first of those estimates for the second derivative, and (3.14)–(3.17), we see that

If \(w\in A_{u^{*}(s)}\), by (3.15) and similarly to (3.16), we have

and hence (3.19) implies (3.18) with \(C_{s} = C \cdot e^{4s}\), where \(C\) is an absolute constant.

Let then \(\kappa _{s}\) denote the curvature of \(\partial A_{u^{*}(s)}= \{ u = u^{*}(s)\}\), thus

For \(0< s<-c_{3} \log (\varepsilon (F))\), by (3.15) and (3.17) we have \(\{u_{0}>\frac{1}{4}\varepsilon (F)^{c_{3}}\}\supset A_{u^{*}(s)}\), and hence

Let us denote by \(\tilde{\kappa}_{s}>0\) the curvature of the circle \(\{u_{0} = u^{*}(s) \}\) and notice that, by (3.17), \(\tilde{\kappa}_{s}\to \infty \) as \(s\to 0\). By (3.18) and (3.20), choosing \(c_{3}\) and \(\varepsilon _{0}\) sufficiently small, we have an estimate

where we used the bound on \(s\) in the last inequality. Combining the last two facts, we see that we can choose \(\varepsilon _{0}\) small enough so that \(\varepsilon _{0}^{1/4} \leq \tilde{\kappa}_{s}\) and \(\varepsilon _{0}^{1/2}\leq \frac {1}{2} \varepsilon _{0}^{1/4}\). These choices ensure that

for all \(s<-c_{3} \log \varepsilon (F)\). This lower bound implies that \(A_{u^{*}(s)}\) is locally convex. We then use the well-known Tietze–Nakajima theorem (see [32, 38]) which asserts that, as \(\overline{A_{u^{*}(s)}}\) is a closed, connected set, its local convexity implies its convexity, and the assertion is proved. □

Proof of Proposition 1.6

Proposition 1.6 follows immediately from Proposition 3.3 and (3.7), taking \(\Omega =A_{u_{F}^{*}(s)}\) as usual. □

4 Proof of the set stability

In this section we complete the proof of our main Theorem 1.1. As explained in the introduction, it suffices to prove its Fock space analogue, Theorem 1.5.

Proof of Theorems 1.1 and 1.5

Since the stability for the function has already been proved in Sect. 2, it remains to prove stability of the set, i.e. estimate (1.8).

Fix \(f \in L^{2}\) as in the statement of Theorem 1.1, let \(F = \mathcal{B}f\), \(u_{F}(z) = |F(z)|^{2} e^{-\pi \left | z \right |^{2}}\) and let us write \(\delta = \delta (F;\Omega )\) for simplicity. Clearly we may assume that \(\delta \leq \delta _{0}\), for some arbitrarily small constant \(\delta _{0}\). We may also suppose that \(F\) is normalized as at the beginning of Sect. 3 and so, as in (3.5), we can write \(F=1+\varepsilon G\), where \(\|G\|_{\mathcal{F}^{2}}=1\) satisfies (3.6) and \(\varepsilon \) satisfies (3.7).

Let \(A_{\Omega} := A_{u_{F}^{*}(|\Omega |)}\), as in (1.21). Let \(\mathcal{T}\) be any transport map \(\mathcal{T}\colon A_{\Omega } \setminus \Omega \to \Omega \setminus A_{ \Omega }\), that is,

cf. [11, page 12] for details on the existence of such a map. Define

where \(C_{|\Omega |}\), \(\gamma \) are constants to be chosen later. Since \(\mathcal{T}\) is a transport map,

In (4.1), the inequality holds because \(u_{F}(z) > u_{F}^{*}(| \Omega |)\) for \(z \in A_{\Omega }\setminus \Omega \), while the reverse inequality holds for \(z \in \Omega \setminus A_{\Omega }\). Note that from (1.28) we have the bound

by (3.4) and the assumption that \(\delta \) is sufficiently small.

Step I. Control over \(B\). In this step, we will show that

after choosing \(C_{|\Omega |}\) and \(\gamma \) correctly. To see this, we begin by writing

Since \(|G|^{2}e^{-\pi |\cdot |^{2}} \le 1\), we immediately have

whenever \(z \in B\). Moreover, since \(z \in B\subset A_{\Omega }\), (3.14) shows that

here we used also

cf. (3.15) and (3.16). If \(\pi (|\mathcal{T}(z)|^{2}-|z|^{2}) \geq 1\) then we find

On the other hand, if \(\pi (|\mathcal{T}(z)|^{2}-|z|^{2}) \leq 1\), from (4.4),

Choosing \(C_{|\Omega |} = 20 e^{|\Omega |}\) and \(\gamma \ge \varepsilon \), the previous estimates yield the desired (4.3).

Step II. Showing that \(\Omega \) is close to \(A_{\Omega }\). Note the identities

hence

In this step, we want to estimate both terms on the right-hand side. The estimate for the first term follows by combining (4.1), (4.2) and (4.3):

To estimate the second term, note that \(\Omega \setminus \mathcal{T}(B)\) is contained in a \(C_{|\Omega |} \gamma \)-neighborhood of \(A_{\Omega}\); in turn, by (3.15), \(A_{\Omega}\) is nested between two concentric balls:

Combining this information with (4.5), and setting \(\lambda _{\pm} = \sqrt{-\pi ^{-1} \log (u^{*}(s) \pm 3\varepsilon )}\) we can estimate

provided that \(\varepsilon \) is sufficiently small, depending on \(|\Omega |\). Choosing \(\varepsilon \leq \gamma = C (e^{|\Omega |}\delta )^{1/2}\), where \(C\) is the constant provided by Theorem 1.5, and combining (4.6), (4.7) and (4.9), we get

for some new but still explicitly computable constant \(C=C(|\Omega |)\).

Step III. Conclusion. To conclude, we just need to compare \(\Omega \) with the ball \(S_{\Omega}:=\{z:e^{-\pi |z|^{2}}\geq e^{-|\Omega |}\}\). By (4.5) and (4.8), we have \(S_{\Omega }\subset E_{\Omega }\) and

where we also used (3.7). It follows that

and so it is enough to bound \(|E_{\Omega }\triangle \Omega |\). We then estimate

where in the last inequality we estimate \(|E_{\Omega}\setminus A_{\Omega}|\) as in (4.10) and we also used the estimate from the last step. We have now proved (1.19) and thus also (1.8). □

We remark that, in spite of the sharp exponent of \(\delta \) in the result above, the asymptotic growth of the constant \(K(|\Omega |)\) in (1.8) from the proof above is likely not sharp: as we shall see in Sect. 6, one expects, from the functional stability part, that the sharp growth of the constant should be of the form \(\sim e^{|\Omega |/2}\), while the proof above yields \(K(|\Omega |) \sim e^{2|\Omega |}\).

Although there is room for improving such a constant with the current methods, it is unlikely that these will suffice in order to upgrade \(K(|\Omega |)\) to the aforementioned conjectured optimal growth rate. For that reason, we consider this to be a genuinely interesting problem, which we wish to revisit in a future work.

5 An alternative variational approach to the function stability

The purpose of this section is to give a variational proof of the function stability in Theorem 1.5. Fix \(s>0\) and consider the functional

where we recall that \(I_{F}(s)\) is the integral of \(u_{F}\) over its superlevel set of measure \(s\), cf. (1.27). We will prove the following result:

Theorem 5.1

Fix \(s\in (0,\infty )\). There are explicit constants \(\varepsilon _{0}(s),C(s)>0\) such that, for all \(\varepsilon \in (0,\varepsilon _{0})\), we have

whenever \(\|G\|_{\mathcal {F}^{2}}=1\) satisfies (3.6).

The proof of Theorem 5.1 is almost independent of the results of Sect. 2, as we will only rely on the suboptimal stability result from Lemma 2.3. This lemma, in turn, does not rely on the other results from that section.

Let us first note that Theorem 5.1 indeed implies the function stability part of Theorem 1.5, although without the optimal dependence of the constant on \(|\Omega |\).

Alternative proof of (1.17), assuming Theorem 5.1

Without loss of generality, we can assume the normalizations detailed at the beginning of Sect. 3. By the same argument as in (3.7), if the deficit is sufficiently small we see that

where now the last inequality follows by combining Lemma 2.3 with the simple Lemma 2.5, instead of using (1.17). Here, we take \(\Omega =A_{u_{F}^{*}(s)}=\{u_{F}>u_{F}^{*}(s)\}\). Hence, we can write

where \(G\) satisfies (3.6), and we can assume that \(\varepsilon \) is sufficiently small. Theorem 5.1 then implies that

To complete the proof it suffices to note that, by our normalizations, \(F(0)=1\leq \|F\|_{\mathcal {F}^{2}}\). Thus

where the last inequality follows from (3.2). □

The proof of Theorem 5.1 is based on the following technical result:

Lemma 5.2

There is \(\varepsilon _{0}=\varepsilon _{0}(s)\) and a modulus of continuity \(\eta \), depending only on \(s\), such that

for all \(0\leq \varepsilon \leq \varepsilon _{0}(s)\) and \(G\in \mathcal {F}^{2}(\mathbb{C})\) such that \(\|G\|_{\mathcal {F}^{2}}=1\) and which satisfy (3.6). Here we have defined

The proof of Lemma 5.2 is standard but rather technical, so we defer it to Appendix A. Lemma 5.2 shows that \(\mathcal {K}[1+\varepsilon G]-\mathcal {K}[1]\) is essentially controlled by the second variation of \(\mathcal {K}\) at 1, in the direction of \(G\). Since 1 is a local maximum for \(\mathcal {K}\), this variation is negative definite, but to prove Theorem 5.1 we need to show that it is uniformly negative definite. This is the content of the next proposition, which is the main result of this section.

Proposition 5.3

For all \(G\in \mathcal {F}^{2}(\mathbb{C})\) such that \(\|G\|_{\mathcal {F}^{2}}=1\) and which satisfy (3.6), we have

It is clear that Theorem 5.1 is an immediate consequence of the above two results:

Proof of Theorem 5.1

Combining Lemma 5.2 and Proposition 5.3, we have

The conclusion now follows by choosing \(\varepsilon _{0}=\varepsilon _{0}(s)\) even smaller so that \(\frac{C(s)}{4}\geq \eta (\varepsilon _{0})\). □

The rest of this section is dedicated to the proof of Proposition 5.3. Clearly we first need to compute the second variation of \(\mathcal {K}\) and, in order to do so, our strategy is to consider the sets

and to write

for a suitable volume-preserving flow \(\Phi _{\varepsilon }\). In order to construct such a flow, we first prove a general lemma which allows us to build a flow that deforms the unit disk into a given family of graphical domains over the unit circle. This type of result is well-known, and we refer the reader for instance to [3, Theorem 3.7] for a more general statement.

Lemma 5.4

Denote by \(D_{0} \subset \mathbb{R}^{2}\) the unit disk, and suppose that we are given a one-parameter family \(\{D_{\varepsilon }\}_{\varepsilon \in [0,\varepsilon _{0}]}\) of domains, whose boundaries are given by smooth graphs over the unit circle:

We assume that the family \(\{g_{\varepsilon }\}_{\varepsilon \in [0,\varepsilon _{0}]}\) depends smoothly on \((\varepsilon , \omega )\).

Then there exists a family \(\{Y_{\varepsilon }\}_{\varepsilon \in [0,\varepsilon _{0}]}\) of smooth vector fields, which depends smoothly on the parameter \(\varepsilon \), such that, if \(\Psi _{\varepsilon }\) denotes the flow associated with \(Y_{\varepsilon }\), i.e. if

then \(\Psi _{\varepsilon }(D_{0}) = D_{\varepsilon }\). In addition, \(Y_{\varepsilon }\) is such that \(\operatorname*{div}(Y_{\varepsilon }) = 0\) in a neighbourhood of \(\mathbb{S}^{1}\).

Proof

By translating into polar coordinates \(r=|z|\) and \(\omega = z/|z|\) we see that, if we define a vector field \(Y_{\varepsilon }\) locally on a neighbourhood of \(\mathbb{S}^{1}\) by

then \(Y_{\varepsilon }\) satisfies \(\operatorname*{div}(Y_{\varepsilon }) = 0\) in a neighbourhood of \(\mathbb{S}^{1}\). Moreover, in the same neighbourhood of \(\mathbb{S}^{1}\), we may write the flow \(\Psi _{\varepsilon }\) of \(Y_{\varepsilon }\) explicitly as

We then extend \(\Psi _{\varepsilon }\) from the neighbourhood of \(\mathbb{S}^{1}\) to the whole complex plane, in such a way that \(\Psi _{\varepsilon }(D_{0}) = D_{\varepsilon }\) and the map \((\varepsilon ,x) \mapsto \Psi _{\varepsilon }(x)\) is smooth. Taking \(\tilde{Y}_{\varepsilon }\) to be the vector field of the extended version of \(\Psi _{\varepsilon }\), we see that \(\tilde{Y}_{\varepsilon }\) is an extension of \(Y_{\varepsilon }\) to the whole space, and moreover, the map \((\varepsilon ,x) \mapsto \tilde{Y}_{\varepsilon }(x)\) is smooth. □

Using the results of Sect. 3 we can readily apply Lemma 5.4 to the sets \(\Omega _{\varepsilon }\):

Lemma 5.5

Let \(G\in \mathcal {F}^{2}(\mathbb{C})\) satisfy (3.6). There is \(\varepsilon _{0} =\varepsilon _{0}(s,\|G\|_{\mathcal {F}^{2}})> 0\) such that, for all \(\varepsilon \in [0,\varepsilon _{0}]\), there are globally defined smooth vector fields \(X_{\varepsilon }\), with associated flows \(\Phi _{\varepsilon }\), such that

Moreover, \(X_{\varepsilon }\) depends smoothly on \(\varepsilon \) and is divergence-free in a neighborhood of \(\partial \Omega _{0}\). We also have

where \(\nu _{\varepsilon }\) denotes the outward-pointing unit vector field on \(\partial \Omega _{\varepsilon }\).

Proof

Up to dilating by a constant (which depends only on \(s\)) we can assume that \(\Omega _{0}=B_{1}\). Lemma 3.2 shows that, if \(\varepsilon _{0}\) is chosen sufficiently small, the boundaries \(\partial \Omega _{\varepsilon }\) are smooth and the sets \(\Omega _{\varepsilon }\) are star-shaped with respect to zero, hence they can be written as graphs over \(\mathbb{S}^{1}\):

We now claim that the function \((\varepsilon ,\omega ) \mapsto f_{\varepsilon }(\omega )\) is smooth as long as \(\varepsilon \) is sufficiently small.

Indeed, for fixed \(\varepsilon \), the function \(\omega \mapsto f_{\varepsilon }(\omega )\) is smooth, by Lemma 3.2, since it is implicitly defined by \(u_{\varepsilon }((1+f_{\varepsilon }(\omega ))\cdot \omega ) = u_{ \varepsilon }^{*}(s)\). Moreover, since \(\nabla u_{\varepsilon }\) is bounded by a constant depending only on \(s\) when restricted to \(\{u_{\varepsilon }= u_{\varepsilon }^{*}(s)\}\) (this follows, for instance, from the proof of Lemma 3.1), any careful quantification of the proof of the implicit function theorem (cf. [26]) implies that there is a universal \(\varepsilon _{0}(s) > 0\) such that, if \(\varepsilon < \varepsilon _{0}(s)\), then \(\varepsilon \mapsto f_{\varepsilon }(\omega )\) is smooth for any fixed \(\omega \in \mathbb{S}^{1}\). This proves the desired smoothness claim.

By Lemma 5.4, the associated vector fields are explicitly given in a neighbourhood of \(\mathbb{S}^{1}\) by

and they are divergence-free in a neighbourhood of \(\mathbb{S}^{1}\). Their smoothness then follows from the smoothness of \(f_{\varepsilon }\) in \(\varepsilon \).

To prove the final claim we note that, since \(\Omega _{\varepsilon}\) has constant measure equal to \(s\) for all \(\varepsilon \in [0,\varepsilon _{0}]\), by a calculation in polar coordinates we see that the function

is constant in the interval \([0,\varepsilon _{0}]\). Thus,

and so the integral above has to vanish; here we used the fact that \(\Omega _{0}\) is a ball. Since \(\operatorname*{div}(X_{\varepsilon }) = 0\) in a neighbourhood of \(\partial \Omega _{0}\), and as \(\int _{\partial \Omega _{0}} \langle X_{\varepsilon }, \nu \rangle \operatorname{d\!}\mathcal{H}^{1}= 0\), the divergence theorem shows that, for any Lipschitz Jordan curve \(\gamma \) in the same neighbourhood of \(\partial \Omega _{0}\), we have

where \(\nu _{\gamma}\) denotes the outward-pointing normal field on \(\gamma \). Thus (5.3) follows. □

Having the previous lemma at our disposal, we can now obtain an explicit formula for \(\nabla ^{2} \mathcal {K}[1]\).

Lemma 5.6

For all \(G\in \mathcal{F}^{2}(\mathbb{C})\) which satisfy (3.6), we have

Proof

Setting \(\operatorname{d\!}\sigma (z) := e^{-\pi |z|^{2}} \operatorname{d\!}z\) for brevity, let us introduce the auxiliary functions

we also write \(K_{\varepsilon }:= \mathcal {K}[1+\varepsilon G]\), where we recall that \(\Omega _{\varepsilon }:= \{u_{\varepsilon }>u_{\varepsilon }^{*}(s) \}\), cf. (5.1). We will always take \(\varepsilon \leq \varepsilon _{0}\), where \(\varepsilon _{0}\) is as in Lemma 5.5. Here and henceforth, we denote derivatives of the quantities \(K_{\varepsilon }\), \(I_{\varepsilon }\), \(J_{\varepsilon }\) in the \(\varepsilon \) variable with primes, that is, \(K_{\varepsilon }'\), \(I_{\varepsilon }'\), \(J_{\varepsilon }'\), etc. With that in mind, we have:

and using the Reynolds transport theorem we further compute

Here and in what follows, we write \(X_{\varepsilon }\) to be the vector fields built in Lemma 5.5. Note that, to obtain (5.9), we used the fact that \(u_{\varepsilon }\) is constant on \(\partial \Omega _{\varepsilon }\), together with the cancelling property (5.3) of the vector fields.

Since \(\langle G, 1\rangle _{\mathcal {F}^{2}}=0\), \(\Omega _{0}\) is a ball and \(G\) is holomorphic, from (5.9) it is easy to see that

where the implication follows from the first equation in (5.8).

Combining (5.8)–(5.11), we arrive at

Since \(\partial \Omega _{0}\) is a circle of radius \(r_{0}\), where \(\pi r_{0}^{2} =s\), our main task is to simplify the last term: specifically, we want to show that

In order to prove (5.13), we have to understand how to write \(X_{0}\) in terms of \(G\) on \(\partial \Omega _{0}\).

We first claim that

where \(\mu _{\varepsilon }(t) := \mu _{1+\varepsilon G}(t) =|\{u_{ \varepsilon }>t\}|\). To prove this claim we build, exactly as in Lemma 5.5, a family of vector fields \(Y_{\varepsilon }\) with associated flows \(\Psi _{\varepsilon }\) such that \(\Psi _{\varepsilon }(\{u_{0}>t\}) = \{u_{\varepsilon }>t\}\) (note that, by Lemma 3.2, these sets have smooth boundaries, hence we can apply Lemma 5.4). We compute, for \(z \in \partial \{ u_{0} > t \} = \{u_{0} = t\}\),

and thus, since the first order term in \(\varepsilon \) vanishes, we have

We can now prove (5.14): again by the Reynolds transport theorem, we have

since \(\partial \{u_{0}>t\}\) is a circle and \(\operatorname{Re}G\) is harmonic, where the last equality follows from (3.6).

As we explain in Remark 5.7 below, the function \(\varepsilon \mapsto \mu _{\varepsilon }(t)\) is smooth in \(\varepsilon \), whenever \(\varepsilon \) is sufficiently small, and also smooth in \(t\), for \(t \in (\varepsilon ^{c_{2}},\max u_{\varepsilon })\), where \(c_{2}\) is as in Lemma 3.2. Now let us fix \(s>0\) and recall that \(G(0)=0\). Using the smoothness of \(\varepsilon \mapsto \mu _{\varepsilon }\) first and then the smoothness of \(\mu _{0}\) on a neighbourhood of \(u_{0}^{*}(s)\), we obtain:

where we used the fact that \(G(0)=0\) by (3.6). Thus, after rearranging, we find

Since \(\Phi _{\varepsilon }\) is the flow of \(X_{\varepsilon }\), we have

We now compare the two expansions

and we deduce that, on \(\partial \{u_{0}>u_{0}^{*}(s)\} = \partial \Omega _{0}\), the first order terms in \(\varepsilon \) must be the same, thus

Finally, since \(G\) is holomorphic and \(G(0)=0\), we have

Now (5.13) follows by combining this identity with (5.19):

as wished. □

Proof of Proposition 5.3

Since \(G\in \mathcal {F}^{2}(\mathbb{C})\) satisfies (3.6), we can write

It is direct to see that (5.6) can be rewritten using the power series for \(G\) as

where

We claim that \(V_{k}(s) \le 0\) for all \(s \ge 0\). This follows by a simple calculus observation:

where \(\Gamma (a,s) := \int _{s}^{\infty} r^{a-1} e^{-r} \operatorname{d\!}r\) denotes the upper incomplete Gamma function. In order to conclude the desired bound, notice that \(\lim _{k \to \infty} V_{k}(s) = e^{-s} - 1 <0\), and, since \(V_{k}(s)\) is decreasing in \(k\) for \(s>0\) fixed,

The conclusion of Proposition 5.3 follows then directly from (5.20). □

Remark 5.7

In the proof of Lemma 5.6 above we used the fact that the function \((\varepsilon ,t)\mapsto \mu _{\varepsilon }(t)\) is smooth provided that \(\varepsilon \) is sufficiently small and that \(t\in (\varepsilon ^{c_{2}},\max u_{\varepsilon })\), where \(c_{2}\) is as in Lemma 3.2. This can be seen explicitly as follows: the smoothness in \(\varepsilon \) follows by Lemma 5.4 and the fact that \(\Psi _{\varepsilon }(\{u_{0} > t\}) = \{u_{\varepsilon }> t\}\). On the other hand, by [33, Lemma 3.2] we have

By the proof of Lemma 3.1 we see that

hence \(\mu _{\varepsilon }\in C^{0,1}_{\mathrm{loc}}(\varepsilon ^{c_{2}}, \max u_{\varepsilon })\). Moreover, the divergence theorem allows us to write

for a.e. \(t < t_{0}\). By (5.22), \(|\nabla u_{\varepsilon }|^{-2}\) is bounded and smooth in the set \(\{t_{0} > u_{\varepsilon }> t\}\), which shows that \(\partial _{t} \mu _{\varepsilon }\in C^{0,1}_{\mathrm{loc}}( \varepsilon ^{c_{2}}, \max u_{\varepsilon })\). By a straightforward use of the coarea formula, iterating such an argument yields the desired smoothness property of \(\mu _{\varepsilon }\). Moreover, we also have that \(\partial _{t}\mu _{\varepsilon }(t) \le - \frac{1}{t}\), cf. (1.23), and so by the Implicit Function Theorem the functions \(u_{\varepsilon }^{*}(s)\) are differentiable in the variable \(\varepsilon \) for all fixed \(s\), for \(\varepsilon < \varepsilon _{0}(s)\) sufficiently small.

It is important to note that (3.6) is crucial as a normalization for the above proof to work: as a matter of fact, many of the cancellations in the proof of Lemma 5.6 occur only because \(G(0) = \langle G, 1 \rangle = 0\). Moreover, if \(\langle G,z \rangle \neq 0\), it could happen that \(\nabla ^{1} \mathcal{K}[1](G,G) = 0\), which would cause the proof of sharp stability to collapse.

The argument implicit in the reduction to (3.6) is hence a vital part of the proof: heuristically, it provides us with a single point \(z_{0}\) – which, through translations, may be assumed to be the origin – for which one can compare the level sets of the functions \(u_{\varepsilon }\) to balls centered at \(z_{0}\). The fact that \(z_{0}\) is the point where each \(u_{\varepsilon }\) attains its maximum thus allows for a connection between the analytic and geometric natures of the problem, further highlighting the importance of the aforementioned reduction.

6 Sharpness of the stability estimates

In this short section we prove the sharpness claimed in Remark 1.2 concerning the estimates in Theorem 1.1. We will see that the variational approach of the previous section is quite useful in this regard. The following is the key proposition we require:

Proposition 6.1

Let \(s>0\) be fixed. There are a constant \(C>0\) and families \(\{\Omega _{\varepsilon }\}_{\varepsilon }\) of sets and \(\{\tilde{F}_{\varepsilon }\}_{\varepsilon } \subset \mathcal {F}^{2}( \mathbb{C}) \) of functions, defined for all sufficiently small \(\varepsilon \ge 0\), with \(\|\tilde{F}_{\varepsilon }\|_{\mathcal {F}^{2}} = 1\) for all such \(\varepsilon \), and such that:

(i) \(\Omega _{0}\) is a ball and \(|\Omega _{\varepsilon }| = s\);

(ii) \(\inf _{c,z_{0} \in \mathbb{C}} \|\tilde{F}_{\varepsilon }- c \cdot F_{z_{0}} \|_{\mathcal {F}^{2}} \ge \frac{\varepsilon }{C}\);

(iii) the deficit satisfies \(\delta (\tilde{F}_{\varepsilon };\Omega _{\varepsilon }) \le C \frac{s e^{-s}}{1-e^{-s}} \varepsilon ^{2}\).

Proof

Let \(F_{\varepsilon }(z) = 1 + \varepsilon z^{2}\) and as usual let us write \(u_{\varepsilon }(z):= |F_{\varepsilon }(z)|^{2} e^{-\pi |z|^{2}}\). Consider, as in (5.1), the domains

where \(s> 0\) is fixed. We then have

where we used Lemma 5.2 to pass to the second line and also (5.6) in the last equality. Now note that taking \(\varepsilon \) sufficiently small yields the desired upper bound if we choose \(\tilde{F}_{\varepsilon }= \frac{F_{\varepsilon }}{\|F_{\varepsilon }\|_{\mathcal {F}^{2}}}\). For the lower bound on \(\|\tilde{F}_{\varepsilon }- c F_{z_{0}}\|_{\mathcal {F}^{2}}\), we recall from (3.1) that

In order to finish, we only need to show that the only global maximum of \(|F_{\varepsilon }(z)|^{2} e^{-\pi |z|^{2}}\) occurs at \(z=0\), which is equivalent to showing that

for each \(z \in \mathbb{C}\setminus \{0\}\). As \(1 + \pi |z|^{2} + \frac{\pi ^{2}}{2} |z|^{4}< e^{\pi |z|^{2}}\), this inequality is true if \(\varepsilon < \frac{\pi}{4}\). Thus, for such \(\varepsilon \),

which concludes the proof. □
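The two facts used at the end of this proof can be checked numerically. The sketch below is an illustration only, not part of the argument: the function names, the grid and its radius are hypothetical choices, the norm is computed from \(\|z^{k}\|_{\mathcal {F}^{2}}^{2} = k!/\pi ^{k}\), and we assume that (3.1) is the usual identity expressing the squared distance to the multiples of the \(F_{z_{0}}\) as \(\|F\|^{2} - \sup u\).

```python
import math


def u_eps(z: complex, eps: float) -> float:
    """u_eps(z) = |1 + eps z^2|^2 exp(-pi |z|^2)."""
    return abs(1 + eps * z * z) ** 2 * math.exp(-math.pi * abs(z) ** 2)


def max_off_origin(eps: float, radius: float = 3.0, n: int = 200) -> float:
    """Largest value of u_eps on a polar grid that excludes z = 0."""
    best = 0.0
    for i in range(1, n + 1):
        r = radius * i / n
        for j in range(n):
            theta = 2 * math.pi * j / n
            z = complex(r * math.cos(theta), r * math.sin(theta))
            best = max(best, u_eps(z, eps))
    return best


def fock_norm_sq(eps: float) -> float:
    """||1 + eps z^2||^2 = 1 + 2 eps^2 / pi^2, since ||z^2||^2 = 2 / pi^2."""
    return 1.0 + 2.0 * eps ** 2 / math.pi ** 2


def distance_to_gaussians(eps: float) -> float:
    """Distance of F_eps / ||F_eps|| to the set {c F_z0}.

    Assumes dist^2 = 1 - sup(u) / ||F||^2 and sup(u_eps) = u_eps(0) = 1.
    """
    return math.sqrt(1.0 - 1.0 / fock_norm_sq(eps))
```

For small \(\varepsilon \) the computed distance behaves like \(\sqrt{2}\,\varepsilon /\pi \), which is linear in \(\varepsilon \), in line with item (ii) of the proposition.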

We are now ready to prove the claims in Remark 1.2.

Corollary 6.2

The following assertions hold:

(i) The factor \(\delta (f;\Omega )^{1/2}\) cannot be replaced by \(\delta (f;\Omega )^{\beta}\), for any \(\beta > 1/2\), in (1.7) and (1.8);

(ii) There is no \(c\in (0,1)\) such that, for all measurable sets \(\Omega \subset \mathbb{C}\) of finite measure, we have

$$ \min _{z_{0}\in \mathbb{C}, |c|=\|f\|_{2}} \frac{\|f - c\, \varphi _{z_{0}} \|_{2}}{\|f\|_{2}} \leq C \Big(e^{c | \Omega |} \delta (f;\Omega )\Big)^{1/2}. $$

Proof

Notice that (ii) follows directly from the statement of Proposition 6.1 by taking \(s \to \infty \), so we just have to prove (i). The fact that one cannot improve the exponent in (1.7) follows directly from Proposition 6.1 above.
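The limit \(s\to \infty \) in (ii) can be spelled out as follows. This is a sketch under two assumptions: the constant \(C\) of Proposition 6.1 does not depend on \(s\), and \(C'\) denotes the (hypothetical) constant in the inequality excluded by (ii). Restricting to \(|c| = \|f\|_{2}\) can only increase the distance, so items (ii) and (iii) of Proposition 6.1, applied with \(|\Omega _{\varepsilon }| = s\), would give

$$ \frac{\varepsilon }{C} \le C' \Big( e^{cs}\, C\, \frac{s e^{-s}}{1-e^{-s}}\, \varepsilon ^{2} \Big)^{1/2}, \qquad \text{that is,} \qquad \frac{1}{C^{3/2}\, C'} \le \Big( \frac{s\, e^{-(1-c)s}}{1-e^{-s}} \Big)^{1/2}. $$

Since \(c<1\), the right-hand side tends to \(0\) as \(s \to \infty \), which is a contradiction.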

To see that one cannot improve the exponent in (1.8) we argue as follows. For the domains \(\Omega _{\varepsilon }\) built in Proposition 6.1, we may use Lemma 5.5 to write \(\Omega _{\varepsilon }= \Phi _{\varepsilon }(\Omega _{0})\), provided that \(\varepsilon \) is small enough. As we saw in (5.18) we may write

for some scalar function \(h_{0}\colon \mathbb{C}\backslash \{0\}\to \mathbb{R}\). Indeed, that \(X_{0}\) has this form follows from its explicit formula (5.5) in Lemma 5.5. Since \(\pi \langle X_{0}(z), z \rangle = \operatorname{Re}(z^{2})\) on \(\partial \Omega _{0}\) by (5.19), we have

for \(z = r(\Omega _{0})e^{i \theta} \in \partial \Omega _{0}\), where \(r(\Omega _{0})\) denotes the radius of the ball \(\Omega _{0}\). Hence,

which concludes the proof. □

7 Generalizations to higher dimensions

In this section we will provide the generalization of Theorems 1.1 and 1.5 to higher dimensions \(d\geq 1\). We believe that the results of Sects. 5 and 6 also have higher-dimensional counterparts, but as the focus of this paper is on the 1-dimensional case we do not elaborate further on this.

Given a window function \(g \in L^{2}(\mathbb{R}^{d})\), the STFT of a function \(f \in L^{2}(\mathbb{R}^{d})\) is defined as

coherently with (1.1). As in dimension 1, we will only be interested in the case where \(g(x)=\varphi (x)\) is the standard Gaussian window defined as

so as before we set \(\mathcal{V}f := V_{\varphi} f\). Note that (7.1) reduces to (1.2) when \(d=1\).

The \(d\)-dimensional version of Theorem A, proved in [33], can be stated as follows.

Theorem C

[33]; Faber-Krahn inequality for the STFT in dimension \(d\)

If \(\Omega \subset \mathbb{R}^{2d}\) is a measurable set with finite Lebesgue measure \(|\Omega |>0\), and \(f\in L^{2}(\mathbb{R}^{d})\setminus \{0\}\) is an arbitrary function, then

Moreover, equality is attained if and only if \(\Omega \) coincides (up to a set of measure zero) with a ball centered at some \(z_{0}=(x_{0}, \omega _{0})\in \mathbb{R}^{2d}\) and, at the same time, for some \(c\in \mathbb{C}\setminus \{0\}\)

where \(\varphi \) is the Gaussian defined in (7.1).

We point out that in [33, Theorem 4.1] the right hand side of (7.2) is expressed in terms of the incomplete Gamma function and implicit constants depending on \(d\), whereas the present formulation (which is equivalent but more explicit) is taken from [34] (see the remark after Theorem 2.3 therein).
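The equivalence of the two formulations can be checked numerically. The sketch below is only an illustration and rests on two assumptions: that the incomplete-Gamma form of the bound is \(\gamma (d, \pi (|\Omega |/\boldsymbol{\omega }_{2d})^{1/d})/(d-1)!\) with \(\boldsymbol{\omega }_{2d}=\pi ^{d}/d!\), and that the explicit form is \(\int _{0}^{|\Omega |} e^{-(d!\, s)^{1/d}} \operatorname{d\!}s\). The function names and the midpoint rule are hypothetical choices.

```python
import math


def v_star(s: float, d: int) -> float:
    """exp(-(d! s)^(1/d)), the assumed integrand of the explicit bound."""
    return math.exp(-((math.factorial(d) * s) ** (1.0 / d)))


def bound_integral(size: float, d: int, n: int = 20000) -> float:
    """Midpoint-rule approximation of the integral of v_star over (0, size)."""
    h = size / n
    return h * sum(v_star((i + 0.5) * h, d) for i in range(n))


def bound_gamma(size: float, d: int) -> float:
    """gamma(d, x) / (d-1)! with x = pi * (size / omega_2d)^(1/d).

    For integer d, the regularized lower incomplete Gamma function has the
    closed form 1 - exp(-x) * sum_{j < d} x^j / j!.
    """
    omega = math.pi ** d / math.factorial(d)
    x = math.pi * (size / omega) ** (1.0 / d)
    return 1.0 - math.exp(-x) * sum(x ** j / math.factorial(j) for j in range(d))
```

The agreement reflects the substitution \(\tau = (d!\, s)^{1/d}\), which turns the integral into \(\frac{1}{(d-1)!}\int _{0}^{(d!\,|\Omega |)^{1/d}} \tau ^{d-1} e^{-\tau } \operatorname{d\!}\tau \); for \(d=1\) both expressions equal \(1-e^{-|\Omega |}\).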

It appears from (7.2) that, in dimension \(d\geq 1\), the function

plays a crucial role, since when \(d=1\), \(v^{*}(s)=e^{-s}\) and the right hand side of (7.2) reduces to \(1-e^{-|\Omega |}\), as in (1.16). To state a stability result in dimension \(d\), we must modify the deficit \(\delta \) defined in (1.5) to suit the right hand side of (7.2), so we let

which once again reduces to (1.5) when \(d=1\). Redefining the asymmetry index \(\mathcal{A}(\Omega )\) by simply replacing \(\mathbb{R}^{2}\) with \(\mathbb{R}^{2d}\) in (1.6), our extension of Theorem 1.1 to dimension \(d\) can be stated as follows.

Theorem 7.1

Stability of the FK inequality for the STFT in dimension \(d\)

There is an explicitly computable constant \(C=C(d)>0\) such that, for all measurable sets \(\Omega \subset \mathbb{R}^{2d}\) with finite measure \(|\Omega |>0\) and all functions \(f \in L^{2}(\mathbb{R}^{d})\backslash \{0\}\), we have

Moreover, for some explicit constant \(K=K(d,|\Omega |)\) we also have

As in the case of Theorem 1.1, the first step is to translate the problem into the Fock space \(\mathcal{F}^{2}(\mathbb{C}^{d})\), now defined as the Hilbert space of all holomorphic functions \(F \colon \mathbb{C}^{d} \to \mathbb{C}\) such that

with its induced inner product. An orthonormal basis – that reduces to (1.13) when \(d=1\) – is given, using multi-index notation, by the normalized monomials

while the reproducing kernels are the functions \(K_{w}(z)=e^{\frac {\pi }{2} |w|^{2}} F_{w}(z)\), where, in analogy to (1.15),

The Bargmann transform is now a unitary operator from \(L^{2}(\mathbb{R}^{d})\) onto \(\mathcal{F}^{2}(\mathbb{C}^{d})\), defined as in (1.12), with \(\mathbb{R}^{d}\) and \(\mathbb{C}^{d}\) in place of \(\mathbb{R}\) and \(\mathbb{C}\), and with multi-index notation. Moreover, the functions \(F_{z_{0}}\) in (7.9) are, much as in (1.15), the Bargmann transforms of the optimal functions \(\varphi _{z_{0}}\) defined in (7.3). In this setting, since by an identity similar to (1.14) the concentration of a function \(f\) on \(\Omega \) can still be expressed in terms of its Bargmann transform, one can rephrase Theorem 7.1 in terms of Fock spaces.
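For orientation, it may help to record the form these objects take in the usual normalization. This is a sketch under the assumption that the norm is \(\|F\|_{\mathcal{F}^{2}}^{2} = \int _{\mathbb{C}^{d}} |F(z)|^{2} e^{-\pi |z|^{2}} \operatorname{d\!}z\), and it is consistent with the relation \(K_{w}(z)=e^{\frac {\pi }{2} |w|^{2}} F_{w}(z)\) stated above. With \(z\cdot \overline{w} = \sum _{j} z_{j} \overline{w_{j}}\), one then has

$$ e_{\alpha }(z) = \Big( \frac{\pi ^{|\alpha |}}{\alpha !} \Big)^{1/2} z^{\alpha }, \qquad K_{w}(z) = e^{\pi z\cdot \overline{w}}, \qquad F_{w}(z) = e^{-\frac{\pi }{2}|w|^{2}}\, e^{\pi z\cdot \overline{w}}, $$

and a direct computation gives

$$ |F_{z_{0}}(z)|^{2} e^{-\pi |z|^{2}} = e^{-\pi |z_{0}|^{2} + 2\pi \operatorname{Re}(z\cdot \overline{z_{0}}) - \pi |z|^{2}} = e^{-\pi |z-z_{0}|^{2}}. $$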

Theorem 7.2

Fock space version of Theorem 7.1

There is a computable constant \(C=C(d)>0\) such that, for all measurable sets \(\Omega \subset \mathbb{R}^{2d}\) with finite measure \(|\Omega |>0\) and all functions \(F\in \mathcal{F}^{2}(\mathbb{C}^{d})\backslash \{0\}\), we have

where

Moreover, for some explicit constant \(K=K(d,|\Omega |)\) we also have

We point out that (7.10) reduces to (1.17), when \(d=1\) and \(v^{*}(s)=e^{-s}\).

The proof of (7.10) can be obtained by arguments similar to those given in Sect. 2, where every result has a suitable analogue in dimension \(d\). Therefore, we limit ourselves to describing the relevant, and not always trivial, changes that are necessary to adapt Sect. 2 to dimension \(d\).

We start with the necessary background results from [33], which we discussed in Sect. 1.2 only in dimension one. We warn the reader that in [33] some numerical constants were written in terms of \(\boldsymbol{\omega }_{2d}\), the volume of the unit ball in \(\mathbb{R}^{2d}\): here, in (7.13) and (7.14), we write them explicitly using the fact that \(\boldsymbol{\omega }_{2d}=\pi ^{d}/d!\), as done in [34].

Given \(F\in \mathcal{F}^{2}(\mathbb{C}^{d})\), the function \(u\) and its super-level sets \(A_{t}\) are defined as in (1.20) and (1.21), now and henceforth with \(z\in \mathbb{C}^{d}\). The distribution function \(\mu (t)\) is defined as in (1.22), with the adopted convention that \(|\cdot |\) denotes Lebesgue measure in \(\mathbb{R}^{2d}\), but with (1.23) being replaced (see [33, §4]) by

while (1.24) becomes

Similarly, the decreasing rearrangement \(u^{*}(s)\) (i.e., the inverse function of \(\mu (t)\)) is defined exactly as in (1.26), but now with (1.25) being replaced by

These changes are natural in dimension \(d\), since when \(F\) equals one of the optimal functions defined in (7.9), we have \(u(z)=e^{-\pi |z-z_{0}|^{2}}\) and its distribution function is

as the explicit volume of the unit ball is \(\boldsymbol{\omega }_{2d}=\pi ^{d}/d!\). Note that, for the particular \(\mu \) in (7.15), (7.13) is an equality. Moreover, if in (7.15) we let \(\mu (t)=s>0\) and solve for \(t\), the resulting inverse function is just the function \(v^{*}(s)\) defined in (7.4), much as \(e^{-s}\) is the inverse of \(\log _{+} \frac {1}{t}\) when \(d=1\). In particular, note that (7.14) becomes an equality when \(u^{*}=v^{*}\).
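The computation behind these two statements is short. The sketch below uses only the formula \(u(z)=e^{-\pi |z-z_{0}|^{2}}\) and the value \(\boldsymbol{\omega }_{2d}=\pi ^{d}/d!\) quoted above. For \(0<t<1\), the set \(\{u>t\}\) is the ball centered at \(z_{0}\) whose radius \(r\) satisfies \(\pi r^{2} = \log \frac{1}{t}\), so that

$$ \mu (t) = \boldsymbol{\omega }_{2d}\, r^{2d} = \frac{\pi ^{d}}{d!} \Big( \frac{1}{\pi } \log \frac{1}{t} \Big)^{d} = \frac{1}{d!} \Big( \log \frac{1}{t} \Big)^{d}. $$

Setting \(\mu (t) = s\) and solving for \(t\) gives \(\log \frac{1}{t} = (d!\, s)^{1/d}\), that is,

$$ v^{*}(s) = e^{-(d!\, s)^{1/d}}, \qquad s \ge 0, $$

which is \(e^{-s}\) when \(d=1\).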

Finally, the fact, expressed by (1.27), that super-level sets maximize the concentration under a volume constraint is clearly still valid, and so is (1.30), with equality if and only if \(F\) is a multiple of some \(F_{z_{0}}\).