Abstract

The detection of unattended visual changes is investigated by the visual mismatch negativity (vMMN) component of event-related potentials (ERPs). The vMMN is measured as the difference between the ERPs to infrequent (deviant) and frequent (standard) stimuli irrelevant to the ongoing task. In the present study, we used human faces expressing different emotions as deviants and standards. In such studies, participants perform various tasks, so their attention is diverted from the vMMN-related stimuli. If such tasks vary in their attentional demand, they might influence the outcome of vMMN studies. In this study, we compared four kinds of frequently used tasks: (1) a tracking task that demanded continuous performance, (2) a detection task where the target stimuli appeared at any time, (3) a detection task where target stimuli appeared only in the inter-stimulus intervals, and (4) a task where target stimuli were members of the stimulus sequence. This fourth task elicited robust vMMN, while in the other three tasks, deviant stimuli elicited moderate posterior negativity (vMMN). We concluded that the ongoing task had a marked influence on vMMN; thus, it is important to consider this effect in vMMN studies.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

Introduction

Information processing of events outside the set of task-related stimuli is important in a complex environment. Electrophysiological methods provide exceptional tools for the investigation of the system capable of dealing with such information. This is because the auditory and visual mismatch components of event-related potentials (ERPs) are signatures of environmental changes without the requirement of overt responses (for a review of auditory mismatch negativity (MMN) and visual mismatch negativity (vMMN) see Fitzgerald and Todd 2020; Stefanics et al. 2014, respectively). MMN and vMMN are usually investigated in the passive oddball paradigm, where within a stream of a frequent stimulus category (standard), there are infrequent (deviant) stimuli from a different category. MMN and vMMN are the ERP differences between the deviant and standard stimuli. To investigate the responses to task-irrelevant events, a usual solution in both auditory and visual modality is the introduction of visual tasks. In the passive oddball paradigm, the task-related events are supposed to prevent attentional processing of MMN- and vMMN-related events.

Recently, auditory and visual MMN is discussed in the predictive coding framework (Friston 2008, 2010; Garrido et al. 2008). According to this framework, the standard events build up a short-term predictive representation of environmental regularities, and in a cascade of processes, the difference between the model and the input as error signal modifies the model. The match between the input and the modified model is the result of perceptual representation of an event. The vMMN is an indicator how this system is working in case of task-irrelevant visual events. Results of vMMN studies using facial stimuli show that this system is capable of dealing with fairly complex events. However, it is important to investigate how much the various methods of diverting attention from vMMN-related events succeed. That is, to what extent do they inform us about the automaticity (i.e., unrelated to attentive processing) of the mechanism proposed by the predictive theory?

Thus, the aim of the present study was to assess the effects of the frequently applied tasks on change detection within the stream of visual events, unrelated to the ongoing task. In vMMN studies (277 items in the WOS database on August 28, 2022), there is a large variety of tasks. In our study, the vMMN-related stimuli were photographs of human faces. We chose these stimuli because (1) it is the most frequently investigated stimulus type in vMMN research (see Czigler and Kojouharova 2022; Kovarski et al. 2017), (2) human faces are highly important stimuli in social interactions.

We investigated vMMN to deviant emotions in human faces. This selection is motivated by the fact, that a search with the term “visual mismatch negativity” in the Web of Science database resulted in 278 items (on September 6, 2022), and 25 percent of the items appeared to be “visual mismatch negativity” AND “face”. Within the face-related studies, we obtained 55 studies on facial emotion.

In the present study, we selected four vMMN paradigms with different sequential and spatial relations to the vMMN-related events. In three cases, the task- and vMMN-related events are spatially separated. In these studies, task-related stimuli are presented to the center of the visual field, and the vMMN-related stimuli appear in eccentric locations. In one set of studies, the task-related stimuli appear in the inter-stimulus interval (ISI) of stimuli of the oddball sequence (for representative studies, see He et al. 2019; Li et al. 2018; Liu et al. 2015; She et al. 2017; Wang et al. 2014; Yin et al. 2018; Zhang et al. 2018). In the present study, this is the ISI task. In other studies, task-related stimuli were presented at any time (i.e., sometimes together with the oddball stimuli; as representative studies, see Amado and Kovács 2016; Fan et al. 2021; Kovács-Bálint et al. 2014; Stefanics et al. 2012). In the present study, this is the ALL task. In the third set of studies, continuous tasks are introduced like visual tracking. For such studies, see Csizmadia et al. 2021; Durant et al. 2017; Heslenfeld 2003; Kojouharova et al. 2019; Sulykos et al. 2018. In the present study, a continuous task was introduced in the TRACK condition. In the ISI task, the participants may discover the temporal separation of task-related and vMMN-related events, and outside the ISI periods, they can observe the stimuli of the oddball sequence. Due to the continuous performance in the TRACK task, participants have less opportunity to observe the oddball stimuli. The FRAME task is a typical three-stimulus oddball paradigm (e.g., Polich and Criado 2006). In the sequence, there are infrequent (standard), infrequent non-target (deviant), and infrequent target stimuli. In such tasks, the stimuli are presented in the center of the screen, and the task requires discrimination between the non-target (standard and deviant) and target stimuli (e.g., Baus et al. 2021; Kask et al. 2021; Kimura et al. 2011; Kovarski et al. 2017, 2021; Sel et al. 2016; Susac et al. 2010). In the FRAME task, the target feature is a colored frame around the facial stimuli. Being members of the same sequence, and there is no spatial separation, participants have the opportunity to observe the vMMN-related stimuli.

It should be noted that, in some study, the task was presented in the auditory modality (e.g., Astikainen and Hietanen 2009; Gayle et al. 2012; Zhao and Li 2006). However, in this respect, the two modalities are not symmetrical. Irrelevant auditory sequences easily become background events, whereas the onset of a sole visual event on the screen attracts attention automatically. In the present study, we do not apply auditory task.

Method

Participants

Twenty students (one left handed and one male, mean age = 20.7 years, SD = 2.13) with normal, or corrected-to-normal vision (at least 5/5 in a version of the Snellen charts), participated in the experiment for course credits, who had no known neurological or psychiatric disorder. The reason to choose this sample size was that through the search of the Web of Science database (“visual mismatch negativity”) AND (face or facial) AND (emotion OR emotional OR affective OR affect), we identified 55 datasets (06.09.2022). The average sample size of these datasets was 19.5 (SD = 8.16). Furthermore, according to a G*Power calculation (Faul et al. 2009), it was reported (Chen et al. 2020) that at effect size of d = 0.7, at least 19 participants would be required for 80% power to detect the effect with an alpha level of 0.05.

Written informed consent was obtained from all participants prior to the experimental procedure. The study was conducted in accordance with the Declaration of Helsinki and approved by the United Ethical Review Committee for Research in Psychology (EPKEB).

Stimuli and procedure

The participants were seated in a dark, electrically shielded and sound-attenuated room. The stimuli were presented on a 24-in. LCD monitor (Asus VS229na) with a 60 Hz refresh rate. Participants were seated with their head 1.4 m from the computer screen.

The vMMN-related stimuli were photos of happy and sad faces from the Karolinska Directed Emotional Faces (KDEF) database (Lundqvist et al. 1998). The set of photographs consisted of greyscale, portrait photos of three male and three female models with both expressions. In all four paradigms, the size of the photographs was 7.4 degrees of vertical visual arc and 5.5 degrees of horizontal visual arc, and they were displayed on a light grey background (47 cd/m2). The photos appeared quasi-randomly, so that all of them were presented in equal number, and the same face never appeared consecutively. There were four conditions. In the three-stimulus oddball condition (FRAME), the photos appeared in the middle of the screen. In the other three conditions (TRACK, ISI, ALL), two identical photographs were presented on the left and right side of the display with a distance of 5.57 degrees of visual arc between them. The stimulus duration was 150 ms, the inter-stimulus interval was 450 ± 33 ms in all conditions. In the FRAME condition, the participants had to press the space button on a computer keyboard as fast as possible, whenever the photo appeared with a red (0.6, 0, 0 in RGB values, 14 cd/m2) frame (30 pixel wide). There were 800 standard stimuli, 100 standards with a frame (target), and 100 deviant stimuli. Framed standards and deviants were interspersed randomly in the standard sequence with at least two standards between targets or deviants. In the other three conditions, 100 deviants were interspersed in the sequence of 400 standards randomly in a similar manner. In the ISI and ALL conditions, the participants’ task was to press the space button of the keyboard as fast as possible, whenever the dimension of the fixation cross (white, 230 cd/m2) changed (the length of the horizontal line was 0.66 grade of visual arc and that of the vertical line was 0.33, and vice versa after a change). These changes occurred randomly between 5 and 15 s. In the ALL condition, this change could occur at any time. In the ISI condition, the fixation cross changed only in the inter-stimulus interval. In the TRACK condition, the participants performed a similar tracking task as in the study of Sulykos et al. (2018). In the middle of the screen between the photos, there was a red fixation point (diameter: 3 min of visual arc), with a green disc (‘ball’; diameter: 6 min of visual arc) moving horizontally across this, and the task being to keep this moving ball on the red fixation point using the left and right arrow keys on the keyboard. Maintaining the disc on the fixation point, or no more than 0.4 min of visual arc beyond this was taken to be on the fixation point, as at a further distance, this color changed to blue to indicate an error. This tracking task demanded continuous visual attention on the center of the screen as the direction of the moving disc changed randomly.

Reverse control procedure was applied in all four conditions. That is, the standard and deviant stimuli (happy or sad faces) were interchanged in separate sequences in a counterbalanced order between participants. We split the sequences within each condition into 2.5 min-long blocks to avoid fatigue. These blocks were presented consecutively. So, the whole experiment was 20 × 2.5 min = 50 min long (net recording time). The order of conditions was varied and counterbalanced between participants. The participants received feedback after each block on their performance (mean reaction time, or the number of errors in the tracking task). At the beginning of a new task type, the participants received some verbal information, and instructions were displayed on the screen.

Measurement and analysis of brain electric activity

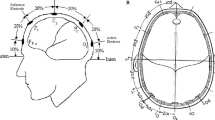

Electrical brain activity was recorded from 64 locations according to the international 10–20 system (BrainVision Recorder 1.21.0303, ActiChamp amplifier, Ag/AgCl active electrodes, EasyCap (Brain Products GmbH), sampling rate: 500 Hz, DC-70 Hz online filtering). The reference electrode was on the nose tip, and the ground electrode was placed on the forehead (FPz). Both horizontal and vertical electrooculogram (HEOG and VEOG) were recorded with bipolar configurations between two electrodes (placed lateral to the outer canthi of the two eyes and above and below the left eye, respectively). The EEG signal was bandpass filtered offline with a non-causal Kaiser-windowed Finite Impulse Response filter (low pass filter parameters: 30 Hz of cutoff frequency, beta of 12.265, a transition bandwidth of 10 Hz; high pass filter parameters: 0.1 Hz of cutoff frequency). Stimulus onset was measured by a photodiode, providing exact zero value for averaging. Epochs ranging from − 100 to 450 ms relative to the onset of stimuli were extracted for further analysis. The first 100 ms of each epoch served as the baseline. Epochs with larger than 100 μV voltage change at any electrode were considered artefacts and rejected from further processing. The EEG data were processed with MATLAB R2014a (MathWorks, Natick, MA). ERPs to standard stimuli that preceded deviants were involved to the averaging.

VMMN is expected in the 150–300 ms post-stimulus range as the difference between the ERP amplitudes between the deviant and standard. Deviant minus standard difference potentials frequently consists of an earlier and a later part (e.g., Kimura et al. 2009; Maekawa et al. 2005; Sulykos et al. 2018). Therefore, we divided this epoch into two parts (150–225 and 226–300 ms), and measured the average amplitudes elicited by the deviant and standard at the PO7 and PO8 locations (i.e., the most frequent locations of vMMN to facial emotions). The two epochs were analyzed separately. To analyze deviance effects, we applied the following calculations. The TRACK, ISI, and ALL conditions were different only in the tasks. Therefore, these conditions were compared in three-way ANOVAs with factors of condition (TRACK, ALL, ISI), stimulus (deviant, standard), and side (left, right). Separate two-way ANOVAs with factors of stimulus (deviant, standard) and side (left, right) was calculated for the FRAME condition, because the stimulation was different (photographs appeared centrally) in this condition. Difference potentials of the four conditions were compared in two-way ANOVA with factors of Condition (TRACK, ISI, ALL, FRAME) and Side (left, right). When appropriate, the Greenhouse–Geisser procedure was applied. Effect size was calculated as partial eta square (ƞp2). In post hoc analyses, Bonferroni test was calculated, and in reported differences, the alpha levels were 0.05. We used the Statistica package (Version 13.4.0.14, TIBCO Software Inc.) for statistical analyses.

We conducted Bayesian analyses (van den Bergh et al. 2020) with the same factors, as suggested by van den Bergh et al. (2020). As an indicator of the evidence for the alternative and null hypothesis, we applied BFincl. In these analyses, the JASP (https://jasp-stats.org/) programs were used.

Results

Behavioral results

Performance in the tracking task of the TRACK condition was expressed as the number of color changes of the disc, i.e., the incidents when the disc was outside of the target field. The mean of such erroneous events in the four blocks was 0.66 (S.E.M. = 0.14). In the ISI and ALL conditions, the mean reaction time for the occasional changes in the fixation cross was 473 ms (S.E.M. = 12.62) and 471 ms (S.E.M. = 11.98), respectively. In both conditions, there were on average 14.6 cross-flips per block. Participants missed the cross-flips 0.8 times/block (S.E.M. = 0.29) in the ISI condition, and 0.32 times/block (S.E.M. = 0.1) in the ALL condition. This difference between the two conditions is significant, t(19) = 2.1, p < 0.05, Cohen’s d = 0.47. In the FRAME condition, the average reaction time for the appearance of framed picture was 449 ms (S.E.M. = 8.58). There were 24 framed pictures per block, and participants missed 0.84 times/block (S.E.M. = 0.18). Accordingly, performance in the four conditions was fairly high, showing that participants attended to the task-related stimuli. Due to the high performance in the ISI condition, the slightly lower performance in this condition than in the ALL condition should be treated carefully, even if this could indicate that, in the ISI conditions, the saliency of the faces was higher.

ERPs

Figure 1 shows the ERPs to the deviant and standard stimuli at PO7 and PO8 locations (a), and the difference potentials at these locations (b). Table 1 shows the mean amplitude of the difference potentials, and Fig. 2 shows the scalp distribution of the difference potentials in the four conditions in the 150–225 and 226–300 ms ranges. As this figure shows, robust negativity emerged in the FRAME condition in both epochs, but deviant stimuli elicited less positivity (i.e., the difference potential was negative) also in all other conditions, with a more typical posterior distribution in the ISI condition. In the TRACK condition, anterior locations also indicated negative difference. However, as Fig. 2 and Table 1 show, the amplitude differences in TRACK, ISI, and ALL conditions were low, it was in the − 0.23 to − 0.49 µV range in the earlier epoch, and + 0.11 to − 0.67 µV range in the later epoch. The ANOVA results corresponded to this notion.

In the 150–225 ms epoch, the three-way ANOVA on the TRACK, ISI, and ALL conditions resulted only significant main effect of stimulus, F(1,19) = 4.88, ƞp2 = 0.20, p < 0.05 (3.94 vs. 4.30 µV). However, the Bayesian analysis provided only anecdotal evidence of this difference, BFincl. = 1.790. Concerning the differences among the conditions, there was moderate evidence for the null hypothesis, BFincl. = 0.195. In the twoway analysis on the FRAME condition, the main effects of stimulus and side were significant, F(1,19) = 25.34, p < 0001, ƞp2 = 0.57 and F(1,19) = 5.24, p < 0.05, ƞp2 = 0.22. The Bayesian analysis resulted in similar results, it provided moderate evidence for the stimulus difference, BFincl. = 5.629, and very strong evidence for the lateral (side) difference, BFincl. = 63.579, i.e., deviants elicited less positive ERPs (7.94 vs. 9.21 µV, and the ERPs were larger on the right side (9.44 vs. 7.71 µV).

The two-way ANOVA on the difference potentials of the four conditions resulted significant condition main effect, F(3,57) = 3.38, ɛ = 0.90, ƞp2 = 0.15, p < 0.05. According to the Bonferroni calculations, the FRAME condition resulted in larger negativity than the other conditions. According to the Bayesian analysis, the BFincl. = 127.177 value indicated extreme evidence of the difference.

In the 226–300 ms epoch, the three-way ANOVA on the TRACK, ISI, and ALL conditions resulted in F(2,38) = 4.56, ɛ = 0.82, p < 0.05, ƞp2 = 0.19 main effect of condition and condition × side interaction, F(2,38) = 6.49, p < 0.01, ƞp2 = 0.25. Positivity in the ERPs in the ALL condition was larger than in the other conditions, and it was smaller in the TRACK condition. The Bayesian analysis indicated anecdotal evidence for the condition difference, BFincl. = 5.367 and extreme evidence for lateral difference, BFincl. = 9412.63, but no evidence of condition × side interaction, BFincl. = 0.194. In the two-way analysis on the FRAME condition, the condition and side main effects were significant, F(1,19) = 45.58, p < 0001, ƞp2 = 0.71 and F(1,19) = 12.52, p < 0.01, ƞp2 = 0.40, respectively. Deviants elicited smaller positivity, (5.98 vs. 7.79 µV), and ERPs were smaller on the left side (5.72 vs. 8.05 µV). The Bayesian analysis resulted in similar results, extreme evidence for the stimulus and side difference, BFincl. = 406.653 and 22,736.057, respectively.

The two-way ANOVA on the difference potentials resulted in significant condition main effect, F(3,57) = 7.54, ɛ = 0.98, p < 0.001, ƞp2 = 0.28. According to the Bonferroni calculation, the negative difference was larger in the FRAME condition than in the other three ones. The Bayesian analysis resulted in similar result, i.e., extreme evidence for the alternative hypothesis, BFincl. = 2.189*106.

Discussion

The aim of this study was to investigate the effect of various tasks on the ERP signature of detecting task-irrelevant changes (i.e., vMMN). The compared tasks varied in their demand of attention. As a summary of our results, the two sets of analyses (ANOVAs and Bayesian calculations) resulted in robust deviant–standard difference in the FRAME condition (three-stimulus oddball), whereas in the other three conditions, i.e., tracking task (TRACK), target only in the inter-stimulus interval (ISI), and target at any time (ALL) resulted in moderate evidence for vMMN emergence. Although, as Table 1 shows, from the 12 values of the deviant–standard, there was only one positive value, negativities were only in the − 0.14 to − 0.67 μV range. However, studies with similar sample size and design (e.g., Gong et al. 2011; Li et al. 2018; She et al. 2017; Yin et al. 2018) reported vMMN similar to our ISI condition.

Concerning the main question of the present study, i.e., the effect of the ongoing task on vMMN, the results show that central presentation of stimuli together with the temporal separation of task-related and task-unrelated stimuli resulted in a large deviant–standard ERP difference. However, the parafoveal presentation of the task-unrelated stimuli in the other three conditions did not result in detectable vMMN difference. The emergence of a large deviant–standard ERP difference in the FRAME condition corresponds to results of previous studies (e.g., Baus et al. 2021; Kask et al. 2021; Kimura et al. 2011; Kovarski et al. 2017, 2021; Sel et al. 2016; Susac et al. 2010). These studies applied various target stimuli, like having faces with different skin color, spectacle wearers, or marked faces. However, central presentation of the vMMN-related stimuli is not a requirement for vMMN to emerge. Beside the studies at the domain of face processing, parafoveally presented stimuli elicited reliable vMMN (e.g., Berti 2011; Clifford et al. 2010; Czigler et al. 2004; Lorenzo-López et al. 2004; Müller et al. 2012).

We investigated two latency ranges, 150–225 and 226–300 ms. As Fig. 1 shows, in all conditions, the peak of negative component (N1/N170) is within the epoch of the earlier component. N1/N170 is an ERP component characteristic at the onset of visual, and especially facial stimuli (for a review, see Schindler and Bublatzky 2020; Tüttenberg and Wiese 2022). Earlier interpretation of the deviant–standard difference in the range of stimulus-specific (exogenous) negativities proposed that such difference is due to the refractoriness of input neurons (Näätänen and Picton 1987; for a detailed explanation, see May and Tiitinen 2010), in contrast to the deviant-related “genuine vMMN” (e.g., Kimura et al. 2009). O’Shea (2015) proposed that instead of refractoriness, it is a stimulus-specific adaptation process. Within the framework of predictive coding theory, stimulus-specific adaptation means a process of building up a model of the characteristic of expected events (Lieder et al. 2013; Stefanics et al. 2014). Therefore, even if adaptation processes played some role in the difference, a parsimonious interpretation of the results is that the system underlying detection of emotional difference did not require that the faces are involved in the ongoing task. As another and also important point is that in the present study, we involved into the averaging process only standard stimuli followed by a deviant. Accordingly, the averaged standards were always preceded by another standard. In the visual modality, initial stimuli of a sequence elicit fairly large exogenous components, but after the second stimuli, there is hardly any further amplitude decrement (see e.g., Johnston et al. 2017, Fig. 1). Finally, and most importantly, as the results of recent studies (Baker et al. 2021; Johnston et al. 2017) show, increased amplitude in the N1/N170 latency range is a direct consequence of a violated prediction. Therefore, we concluded that even in the earlier period, we registered vMMN, although a small one in the TRACK, ISI, and ALL conditions.

Studies in the field of vMMN to facial stimuli use different set of photography. East-Asian studies applied pictures with standardized East-Asian sets. In European and American studies, a large variation of sets were applied. For example, Astikainen and Heitanen (2009) and Stefanics et al. (2014) applied pictures from the Ekman and Friesen (1976) picture set, Kovács-Bálint et al. (2014) from the Trustworthiness Face Database (Oosterhof and Todorov 2008), and Vogel et al. (2015) applied the Max-Planck Institute of Biological Cybernetics set (Troje and Bülthoff 1996). Furthermore, many studies applied schematic faces. Therefore, it is possible that emotional discrimination among the sets were different.

In light of the present results, the question is the automaticity of processes underlying vMMN. In other words: to elicit vMMN, is it necessary or unnecessary to process attentively deviant events within the stimulus sequence? Although “Everyone knows what attention is” (James 1890, p. 403), in fact “No one knows what attention is” (Hommel et al. 2019). The aim of this study was far from defining attention or to select from the various attempts to define this term. In the field of ERP research, a signature of orienting to unexpected stimuli in the three-stimulus oddball task is a positivity (P3a, for a review, see Polich and Criado 2006) corresponding to the later vMMN range (226–300 ms) of the present analysis. As the scalp distribution in the FRAME condition shows, in this range, the ERP was negative. Kovarski et al. (2017, 2021) obtained similar results. Therefore, even if studies on auditory MMN and vMMN sometimes report that the mismatch component is followed by positivity, the present results did not show P3a emergence. As the attentional issue is unsolved at both theoretical and empirical levels, we suggest the following description for characterizing vMMN: this ERP component is a signature of the detection of events violating task-unrelated sequential regularities.

As limitations of the present study, we investigated a specific type of stimuli, emotional faces. It is possible that in case of other types of stimuli (e.g., different stimulus features, object-related differences, or different perceptual categories), there were different effects of the various tasks. Furthermore, it would be useful to involve an ALL-type of task with central presentation of facial stimuli, because in a study using this paradigm (Kecskés-Kovács et al. 2013), fairly large vMMN emerged. Finally, increased sample size may serve to discriminate among the TRACK, ISI, and ANY tasks, but our purpose in the present study was to use a sample size typical in the field.

Availability of data and materials

The datasets generated and analyzed during the current study are available here: https://gin.g-node.org/gaalzs/Method.

References

Amado C, Kovács G (2016) Does surprise enhancement or repetition suppression explain visual mismatch negativity? Eur J Neurosci 43(12):1590–1600. https://doi.org/10.1111/ejn.13263

Astikainen P, Hietanen JK (2009) Event-related potentials to task-irrelevant changes in facial expressions. Behav Brain Funct 5(1):30. https://doi.org/10.1186/1744-9081-5-30

Baker KS, Pegna AJ, Yamamoto N, Johnston P (2021) Attention and prediction modulations in expected and unexpected visuospatial trajectories. PLoS ONE 16(10):e0242753. https://doi.org/10.1371/journal.pone.0242753

Baus C, Ruiz-Tada E, Escera C, Costa A (2021) Early detection of language categories in face perception. Sci Rep 11(1):9715. https://doi.org/10.1038/s41598-021-89007-8

Berti S (2011) The attentional blink demonstrates automatic deviance processing in vision. NeuroReport 22(13):664–667. https://doi.org/10.1097/WNR.0b013e32834a8990

Chen B, Sun P, Fu S (2020) Consciousness modulates the automatic change detection of masked emotional faces: evidence from visual mismatch negativity. Neuropsychologia 144:107459. https://doi.org/10.1016/j.neuropsychologia.2020.107459

Clifford A, Holmes A, Davies IRL, Franklin A (2010) Color categories affect pre-attentive color perception. Biol Psychol 85(2):275–282. https://doi.org/10.1016/j.biopsycho.2010.07.014

Csizmadia P, Petro B, Kojouharova P, Gaál ZA, Scheiling K, Nagy B, Czigler I (2021) Older adults automatically detect age of older adults’ photographs: a visual mismatch negativity study. Front Hum Neurosci 15:707702. https://doi.org/10.3389/fnhum.2021.707702

Czigler I, Kojouharova P (2022) Visual mismatch negativity: a mini-review of non-pathological studies with special populations and stimuli. Front Hum Neurosci 15:781234. https://doi.org/10.3389/fnhum.2021.781234

Czigler I, Balázs L, Pató LG (2004) Visual change detection: event-related potentials are dependent on stimulus location in humans. Neurosci Lett 364(3):149–153. https://doi.org/10.1016/j.neulet.2004.04.048

Durant S, Sulykos I, Czigler I (2017) Automatic detection of orientation variance. Neurosci Lett 658:43–47. https://doi.org/10.1016/j.neulet.2017.08.027

Ekman P, Friesen WV (1976) Pictures of facial affect [slides]. Department of Psychology, San Francisco State University, San Francisco

Fan Z, Guo Y, Hou X, Lv R, Nie S, Xu S, Chen J, Hong Y, Zhao S, Liu X (2021) Selective impairment of processing task-irrelevant emotional faces in cerebral small vessel disease patients. Neuropsychiatr Dis Treat 17:3693–3703. https://doi.org/10.2147/NDT.S340680

Faul F, Erdfelder E, Buchner A, Lang A-G (2009) Statistical power analyses using G*Power 3.1: tests for correlation and regression analyses. Behav Res Methods 41(4):1149–1160. https://doi.org/10.3758/BRM.41.4.1149

Fitzgerald K, Todd J (2020) Making sense of mismatch negativity. Front Psych 11:468. https://doi.org/10.3389/fpsyt.2020.00468

Friston K (2008) Hierarchical models in the brain. PLoS Comput Biol 4(11):e1000211. https://doi.org/10.1371/journal.pcbi.1000211

Friston K (2010) The free-energy principle: a unified brain theory? Nat Rev Neurosci 11(2):127–138. https://doi.org/10.1038/nrn2787

Garrido MI, Friston KJ, Kiebel SJ, Stephan KE, Baldeweg T, Kilner JM (2008) The functional anatomy of the MMN: a DCM study of the roving paradigm. Neuroimage 42(2):936–944. https://doi.org/10.1016/j.neuroimage.2008.05.018

Gayle LC, Gal DE, Kieffaber PD (2012) Measuring affective reactivity in individuals with autism spectrum personality traits using the visual mismatch negativity event-related brain potential. Front Hum Neurosci. https://doi.org/10.3389/fnhum.2012.00334

Gong X, Huang Y-X, Wang Y, Luo Y-J (2011) Revision of the Chinese facial affective picture system. Chin Ment Health J 25(1):40–46

He J, Zheng Y, Fan L, Pan T, Nie Y (2019) Automatic processing advantage of cartoon face in internet gaming disorder: evidence from P100, N170, P200, and MMN. Front Psych 10:824. https://doi.org/10.3389/fpsyt.2019.00824

Heslenfeld DJ (2003) Visual mismatch negativity. In: Polich J (ed) Detection of change. Springer US, p 41–59 https://doi.org/10.1007/978-1-4615-0294-4_3

Hommel B, Chapman CS, Cisek P, Neyedli HF, Song J-H, Welsh TN (2019) No one knows what attention is. Atten Percept Psychophys 81(7):2288–2303. https://doi.org/10.3758/s13414-019-01846-w

James W (1890) The principles of psychology. Max Millan. https://rauterberg.employee.id.tue.nl/lecturenotes/DDM110%20CAS/James-1890%20Principles_of_Psychology_vol_I.pdf. Accessed 8 June 2022

Johnston P, Robinson J, Kokkinakis A, Ridgeway S, Simpson M, Johnson S, Kaufman J, Young AW (2017) Temporal and spatial localization of prediction-error signals in the visual brain. Biol Psychol 125:45–57. https://doi.org/10.1016/j.biopsycho.2017.02.004

Kask A, Põldver N, Ausmees L, Kreegipuu K (2021) Subjectively different emotional schematic faces not automatically discriminated from the brain’s bioelectrical responses. Conscious Cogn 93:103150. https://doi.org/10.1016/j.concog.2021.103150

Kecskés-Kovács K, Sulykos I, Czigler I (2013) Is it a face of a woman or a man? Visual mismatch negativity is sensitive to gender category. Front Hum Neurosci. https://doi.org/10.3389/fnhum.2013.00532

Kimura M, Katayama J, Ohira H, Schröger E (2009) Visual mismatch negativity: new evidence from the equiprobable paradigm. Psychophysiology 46(2):402–409. https://doi.org/10.1111/j.1469-8986.2008.00767.x

Kimura M, Schröger E, Czigler I (2011) Visual mismatch negativity and its importance in visual cognitive sciences. NeuroReport 22(14):669–673. https://doi.org/10.1097/WNR.0b013e32834973ba

Kojouharova P, File D, Sulykos I, Czigler I (2019) Visual mismatch negativity and stimulus-specific adaptation: the role of stimulus complexity. Exp Brain Res 237(5):1179–1194. https://doi.org/10.1007/s00221-019-05494-2

Kovács-Bálint Z, Stefanics G, Trunk A, Hernádi I (2014) Automatic detection of trustworthiness of the face: a visual mismatch negativity study. Acta Biol Hung 65(1):1–12. https://doi.org/10.1556/ABiol.65.2014.1.1

Kovarski K, Latinus M, Charpentier J, Cléry H, Roux S, Houy-Durand E, Saby A, Bonnet-Brilhault F, Batty M, Gomot M (2017) Facial expression related vMMN: disentangling emotional from neutral change detection. Front Hum Neurosci. https://doi.org/10.3389/fnhum.2017.00018

Kovarski K, Charpentier J, Roux S, Batty M, Houy-Durand E, Gomot M (2021) Emotional visual mismatch negativity: a joint investigation of social and non-social dimensions in adults with autism. Transl Psychiatry 11(1):1–12. https://doi.org/10.1038/s41398-020-01133-5

Li Q, Zhou S, Zheng Y, Liu X (2018) Female advantage in automatic change detection of facial expressions during a happy-neutral context: an ERP study. Front Hum Neurosci 12:146. https://doi.org/10.3389/fnhum.2018.00146

Lieder F, Stephan KE, Daunizeau J, Garrido MI, Friston KJ (2013) A neurocomputational model of the mismatch negativity. PLoS Comput Biol 9(11):e1003288. https://doi.org/10.1371/journal.pcbi.1003288

Liu T, Xiao T, Li X, Shi J (2015) Fluid intelligence and automatic neural processes in facial expression perception: an event-related potential study. PLoS ONE 10(9):e0138199. https://doi.org/10.1371/journal.pone.0138199

Lorenzo-López L, Amenedo E, Pazo-Alvarez P, Cadaveira F (2004) Pre-attentive detection of motion direction changes in normal aging. NeuroReport 15(17):2633–2636

Lundqvist D, Flykt A, Öhman A (1998) Karolinska directed emotional faces [Data set]. American Psychological Association. https://doi.org/10.1037/t27732-000.

Maekawa T, Goto Y, Kinukawa N, Taniwaki T, Kanba S, Tobimatsu S (2005) Functional characterization of mismatch negativity to a visual stimulus. Clin Neurophysiol 116(10):2392–2402. https://doi.org/10.1016/j.clinph.2005.07.006

May PJC, Tiitinen H (2010) Mismatch negativity (MMN), the deviance-elicited auditory deflection, explained. Psychophysiology 47(1):66–122. https://doi.org/10.1111/j.1469-8986.2009.00856.x

Müller D, Roeber U, Winkler I, Trujillo-Barreto N, Czigler I, Schröger E (2012) Impact of lower- vs. upper-hemifield presentation on automatic colour-deviance detection: a visual mismatch negativity study. Brain Res 1472:89–98. https://doi.org/10.1016/j.brainres.2012.07.016

Näätänen R, Picton T (1987) The N1 wave of the human electric and magnetic response to sound: a review and an analysis of the component structure. Psychophysiology 24(4):375–425. https://doi.org/10.1111/j.1469-8986.1987.tb00311.x

O’Shea RP (2015) Refractoriness about adaptation. Front Hum Neurosci. https://doi.org/10.3389/fnhum.2015.00038

Oosterhof NN, Todorov A (2008) The functional basis of face evaluation. Proc Natl Acad Sci 105(32):11087–11092. https://doi.org/10.1073/pnas.0805664105

Polich J, Criado JR (2006) Neuropsychology and neuropharmacology of P3a and P3b. Int J Psychophysiol 60(2):172–185. https://doi.org/10.1016/j.ijpsycho.2005.12.012

Schindler S, Bublatzky F (2020) Attention and emotion: an integrative review of emotional face processing as a function of attention. Cortex 130:362–386. https://doi.org/10.1016/j.cortex.2020.06.010

Sel A, Harding R, Tsakiris M (2016) Electrophysiological correlates of self-specific prediction errors in the human brain. Neuroimage 125:13–24. https://doi.org/10.1016/j.neuroimage.2015.09.064

She S, Li H, Ning Y, Ren J, Wu Z, Huang R, Zhao J, Wang Q, Zheng Y (2017) Revealing the dysfunction of schematic facial-expression processing in schizophrenia: a comparative study of different references. Front Neurosci 11:314. https://doi.org/10.3389/fnins.2017.00314

Stefanics G, Csukly G, Komlósi S, Czobor P, Czigler I (2012) Processing of unattended facial emotions: a visual mismatch negativity study. Neuroimage 59(3):3042–3049. https://doi.org/10.1016/j.neuroimage.2011.10.041

Stefanics G, Kremláček J, Czigler I (2014) Visual mismatch negativity: a predictive coding view. Front Hum Neurosci. https://doi.org/10.3389/fnhum.2014.00666

Sulykos I, Gaál ZA, Czigler I (2018) Automatic change detection in older and younger women: a visual mismatch negativity study. Gerontology 64(4):318–325. https://doi.org/10.1159/000488588

Susac A, Ilmoniemi RJ, Pihko E, Ranken D, Supek S (2010) Early cortical responses are sensitive to changes in face stimuli. Brain Res 1346:155–164. https://doi.org/10.1016/j.brainres.2010.05.049

Troje NF, Bülthoff HH (1996) Face recognition under varying poses: the role of texture and shape. Vis Res 36(12):1761–1771. https://doi.org/10.1016/0042-6989(95)00230-8

Tüttenberg SC, Wiese H (2022) Event-related brain potential correlates of the other-race effect: a review. Br J Psychol. https://doi.org/10.1111/bjop.12591

van den Bergh D, van Doorn J, Marsman M, Draws T, van Kesteren E-J, Derks K, Dablander F, Gronau QF, Kucharský Š, Gupta ARKN, Sarafoglou A, Voelkel JG, Stefan A, Ly A, Hinne M, Matzke D, Wagenmakers E-J (2020) A tutorial on conducting and interpreting a Bayesian ANOVA in JASP. L’année Psychologique 120(1):73–96. https://doi.org/10.3917/anpsy1.201.0073

Vogel BO, Shen C, Neuhaus AH (2015) Emotional context facilitates cortical prediction error responses. Hum Brain Mapp 36(9):3641–3652. https://doi.org/10.1002/hbm.22868

Wang W, Miao D, Zhao L (2014) Automatic detection of orientation changes of faces versus non-face objects: a visual MMN study. Biol Psychol 100:71–78. https://doi.org/10.1016/j.biopsycho.2014.05.004

Yin G, She S, Zhao L, Zheng Y (2018) The dysfunction of processing emotional faces in schizophrenia revealed by expression-related visual mismatch negativity. NeuroReport 29(10):814–818. https://doi.org/10.1097/WNR.0000000000001037

Zhang S, Wang H, Guo Q (2018) Sex and physiological cycles affect the automatic perception of attractive opposite-sex faces: a visual mismatch negativity study. Evol Psychol 16(4):147470491881214. https://doi.org/10.1177/1474704918812140

Zhao L, Li J (2006) Visual mismatch negativity elicited by facial expressions under non-attentional condition. Neurosci Lett 410(2):126–131. https://doi.org/10.1016/j.neulet.2006.09.081

Acknowledgements

This work has been implemented with the support provided by the Ministry of Innovation and Technology of Hungary from the National Research, Development and Innovation Fund, financed under the funding scheme (NKFIH 143178).

Funding

Open access funding provided by ELKH Research Centre for Natural Sciences.

Author information

Authors and Affiliations

Corresponding author

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Additional information

Communicated by Melvyn A. Goodale.

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Petro, B., Gaál, Z.A., Kojouharova, P. et al. The role of attention control in visual mismatch negativity (vMMN) studies. Exp Brain Res 241, 1001–1008 (2023). https://doi.org/10.1007/s00221-023-06573-1

Received:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s00221-023-06573-1