Abstract

We reformulate expected utility theory, from the viewpoint of bounded rationality, by introducing probability grids and a cognitive bound; we restrict permissible probabilities only to decimal (\(\ell \)-ary in general) fractions of finite depths up to a given cognitive bound. We distinguish between measurements of utilities from pure alternatives and their extensions to lotteries involving more risks. Our theory is constructive from the viewpoint of the decision maker. When a cognitive bound is small, the preference relation involves many incomparabilities, but these diminish as the cognitive bound is relaxed. Similarly, the EU hypothesis would hold more for a larger bound. The main part of the paper is a study of preferences including incomparabilities in cases with finite cognitive bounds; we give representation theorems in terms of a 2-dimensional vector-valued utility functions. We also exemplify the theory with one experimental result reported by Kahneman and Tversky.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

We reconsider EU theory from the viewpoints of bounded rationality and preference formation. We restrict permissible probabilities to decimal (\( \ell \)-ary, in general) fractions up to a given cognitive bound \(\rho ;\) if \(\rho \) is a natural number k, the set of permissible probabilities is given as \(\varPi _{\rho }=\varPi _{k}=\{\frac{0}{10^{k}},\frac{1}{10^{k}},\ldots , \frac{10^{k}}{10^{k}}\}\). The decision maker makes preference comparisons step by step using probabilities with small k to those with larger \( k^{\prime }\) to obtain accurate comparisons. The derived preference relation is incomplete in general, but the EU hypothesis holds for some lotteries and it would hold more when there is no cognitive bound, i.e., \(\rho =\infty \) and \(\varPi _{\infty }=\cup _{k<\infty }\varPi _{k}\). However, our main concern is the case of finite and small \(\rho \). Since the theory involves various entangled aspects, we first disentangle them.

The concepts of probability grids and cognitive bounds are introduced based on the idea of “bounded rationality.” The idea can be interpreted in many ways such as bounded logical inference, bounded perception ability, though Simon’s (1956) original concept meant a relaxation of utility maximization. The mathematical components involved in EU theory are classified into two types; object components used by the decision maker and meta-components used by the outside analyst and possibly by the decision maker himself. The former are primary targets in EU theory, and the latter such as highly complex rational as well as irrational probabilities are added for analytic convenience. A free use of the latter leads to a critique that the theory presumes “super rationality” (Simon 1983).

As a significance level for statistical hypothesis testing is typically \(5\%\) or \(1\%\), probability values \(\frac{t}{10^{2}} (t=0,\ldots ,10^{2})\) are already quite accurate for ordinary people. However, the classical EU theory starts with the full real number theory and makes no separation between the viewpoints of the decision maker and the outside analyst for available probabilities. This appears to be a problem of degree, but it would be meaningful if they are separated in some manner. The concepts of probability grids and a cognitive bound \(\rho \) make this separation.

Turing (1937) in his attempt to define computable numbers faced a similar situation: “...The differences from our point of view between the single and compound symbols is that the compound symbols, if they are too lengthy, cannot be observed at one glace. This is in accordance with experience. We cannot tell at a glance whether \(\underline{0.}9999999999999999\) and \(\underline{0.}999999999999999\) (the underlined parts by the author) are the same (1937, p. 250).”Footnote 1 In contrast, it is easy for us to distinguish between 0.999 and 0.99. Turing’s theory tried to abstract calculation in the human mind, but a built machine has no such problem since it reads each primitive symbol one by one. This is, so far, due to the difference in human and machine cognition. A cognitive bound \(\rho \) is a bound on such distinguishability and also on how deep the decision maker cares about those probabilities.

The set of probability grids up to depth k is given as \(\varPi _{k}=\{\frac{0 }{10^{k}},\frac{1}{10^{k}},\ldots ,\frac{10^{k}}{10^{k}}\}\). The decision maker thinks about his preferences with \(\varPi _{k}\) from a small k to a larger k up to bound \( \rho ;\) for example, when \(\rho =2\), \(\varPi _{0}\), \(\varPi _{1}\), and \(\varPi _{2}\) are only allowed. This is a constructive approach from the viewpoint of the decision maker in the sense that he finds/forms his own preferences.Footnote 2\(^{,}\)Footnote 3

We turn our attention to the development of our constructive EU theory. Construction needs a basis; we take a hint from Von Neumann and Morgenstern (1944), Section 3.3.2, p.17. They mentioned a separation of their argument into the following two steps, though this was not reflected in their mathematical development:

- Step B::

-

measurements of utilities from pure alternatives in terms of probabilities;

- Step E::

-

extensions of these measurements to lotteries involving more risks.

These steps differ in their natures: Step B is to measure a “satisfaction”, “desire”, etc. from a pure alternative, while Step E is to extend the measured satisfactions given by Step B to lotteries including more risks. An important difference is that Step B is to find the subjective preferences hidden in the mind of the decision maker, while Step E is to extend logically the preferences found in Step B to lotteries with more risks.

We develop our theory based on the above two steps and also take two approaches in terms of preferences and numerical utilities; each approach consists of Steps B and E. In this introduction, we focus mainly on the former theory, and we give a brief explanation of the latter.Footnote 4

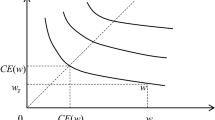

We assume two pure alternatives \({\overline{y}}\) and \({\underline{y}}\), called the upper and lower benchmarks; these together with the probability grids \(\varPi _{k}\) form the benchmark scale \(B_{k}({\overline{y}}; {\underline{y}})\) in layer k. In Step B, pure alternatives are measured by this scale. Preferences are constructed in shallow to deeper layers, where preferences are incomplete in the beginning, except for benchmark lotteries as measurement units, and in deeper layers, more precise preferences may be found. In Fig. 1, the benchmark scale for layer k is depicted as the right broken line with dots; x is measured exactly by the scale, y need a more precise scale within \(\rho \). However, z is not done within \(\rho \).

Two different roles of probability grids appear for evaluation of a lottery:

-

(i)

probability grids used for measurement of a pure alternative in Step B;

-

(ii)

probability coefficients to pure alternatives.

By these, relevant cognitive depths of lotteries become more complex, especially with a finite cognitive bound; this leads to incomparabilities in preferences and a violation of the EU hypothesis. This is central in our development and is closely related to the issue of “bounded rationality”.

Let us illustrate (i) and (ii) via an example, which is a lottery for choice in the Allais paradox in Sect. 8. Consider one example with the upper and lower benchmarks \({\overline{y}}\), \({\underline{y}}\), and the third pure alternative y with strict preferences \({\overline{y}}\succ y\succ {\underline{y}}\). In Step B, the decision maker looks for a probability \(\lambda \) so that y is indifferent to a lottery \([{\overline{y}},\lambda ; {\underline{y}}]=\lambda {\overline{y}}*(1-\lambda ){\underline{y}}\) with probability \(\lambda \) for \({\overline{y}}\) and \(1-\lambda \) for \({\underline{y}} ;\) this indifference is denoted by

Suppose that this \(\lambda \) is uniquely determined as \(\lambda =\lambda _{y}=\frac{83}{10^{2}}\in \varPi _{2}\). Here, exact measurement of y is successful in layer 2, where Step B is enough here.

We have the other source of cognitive depths as mentioned in (ii). Consider lottery \(d=\tfrac{25}{10^{2}}y*\tfrac{75}{10^{2}}{\underline{y}}\), which includes the third pure alternative y. The independence condition of the classical EU theory dictates that because of (1), \([{\overline{y}}, \frac{83}{10^{2}};{\underline{y}}]\) is substituted for y in d, and d is reduced to:

where \(\frac{2075}{10^{4}}=\frac{25}{10^{2}}\times \frac{75}{10^{2}}\) and \(\frac{7925}{10^{4}}=1-\frac{2075}{10^{4}}\). Thus, y is evaluated as being indifferent to \([{\overline{y}},\frac{83}{10^{2}};{\underline{y}}]\) in Step B, but y also has a probability coefficient \(\frac{25}{10^{2}} \) in d, which is taken into account in Step E. These steps lead to probability \(\frac{2075}{10^{4}}\), which is much more precise than either of \(\tfrac{83}{10^{2}}\) and \(\tfrac{25}{10^{2}}\).

As indicated in (i) and (ii), lottery \(d=\tfrac{25}{10^{2}}y*\tfrac{75}{ 10^{2}}{\underline{y}}\) has two types of cognitive depths; one is simply a probability coefficient \(\frac{25}{10^{2}}\) and the other is \(\lambda _{y}= \tfrac{83}{10^{2}}\) from (1). Although d itself is expressed as a lottery of depth 2, the total depths including these two are 4, which is beyond the cognitive bound \(\rho =2\). One point is that the resulting probability may become more precise from lotteries of a relatively small bound, and the other is that this is intimately related to the EU hypothesis. When \(\rho \) is small, the EU hypothesis does not hold typically, while it would hold more as \(\rho \) is getting larger.

The preference formation by Steps B and E is formulated as a form of mathematical induction; Step B is the inductive base and Step E is the inductive step. Step B is spread out to layers of various depths, i.e., the induction base is spread, too. These steps are described in Table 1: the relation \(\unrhd _{k}\) for layer k of row B expresses preferences measured in Step B . In layer k, \(\succsim _{k}\) is derived from \(\unrhd _{k}\) and \( \succsim _{k-1};\) the former is a part of the inductive base and the latter is the inductive step. This is a weak form of “independence condition.”

As stated earlier, we provide another approach in terms of a 2-dimensional vector-valued utility functions \(\langle \varvec{\upsilon }_{k}\rangle _{k<\rho +1}=\langle [{\overline{\upsilon }}_{k},{\underline{\upsilon }} _{k}]\rangle _{k<\rho +1}\) and \(\langle {\varvec{u}}_{k}\rangle _{k<\rho +1}=\langle [{\overline{u}}_{k},{\underline{u}}_{k}]\rangle _{k<\rho +1}\) with Fishburn’s (1970) interval order \(\ge _{I}\). In each of Steps B and E, this approach is entirely equivalent to the preference approach, as depicted in Table 2. This may be interpreted as what Von Neumann and Morgenstern (1944), p.29, indicated. The approaches in terms of preferences and utilities enable us to view Steps B and E in different ways as well as they serve different analytic tools for studies of incomparabilities/comparabilities involved.

Our theory enjoys a weak form of the expected utility hypothesis. This will be discussed in Sect. 6. In the case of \(\rho =\infty \), restricting our attention to the set of measurable pure alternatives, Sect. 7 shows that our theory exhibits a form of the classical EU theory. We provide a further extension of \(\succsim _{\infty }\) to have the full form of the classical EU theory; this extension involves some unavoidable non-constructive step, which may be interpreted as the criticism of “super rationality” by Simon (1983).

A remark is on the relationship between k and \(\rho \) exhibiting a layer and a cognitive bound. The former is a variable in our theory, and the latter is a parameter of the theory. We talk about the sequences \(\langle \succsim _{k}\rangle _{k<\rho +1}\) and \(\langle {\varvec{u}}_{k}\rangle _{k<\rho +1} \) describing the process of preference/utility formation, layer to layer, up to \(\rho \). Nevertheless, the final target preferences and utilities are \(\succsim _{\rho }\) and \({\varvec{u}}_{\rho }\). In the context of the quotation from Turing (1937), within the layers up to \(\rho \), the decision maker can distinguish each probability as a single symbol, but beyond \(\rho \), he would have a difficulty; here, it is assumed that he does not think about his decision problem beyond \(\rho \). When \(\rho =\infty \), he can treat any grid probability as a single entity. This remark leads to the view that our theory is a generalization of the classical EU theory, which is discussed in Sect. 7.

In Sect. 8, we apply our theory to the Allais paradox, specifically to an experimental result from Kahneman and Tversky (1979). We show that the paradoxical results remains when the cognitive bound \(\rho \ge 3\). However, when \(\rho =2\), the resultant preference relation \( \succsim _{\rho }\) is compatible with their experimental result, where incomparabilities play crucial roles in explaining them.

The paper is organized as follows: Section 2 explains the concept of probability grids and other basic concepts. Section 3 formulates Step B in terms of preferences and utilities and states their equivalence. Section 4 discusses Step E in terms of preferences, and Sect. 5 does it in terms of utilities. Section 6 discusses the measurable/non-measurable lotteries and shows that the expected utility hypothesis holds for the measurable lotteries. Section 7 discusses the connection from our theory to the classical EU theory. In Sect. 8, we exemplify our theory with an experimental result in Kahneman and Tversky (1979). Section 9 concludes this paper with comments on further possible studies. Proofs of all the results in each section are given in a separate subsection; only proof of Lemma 2.1 is given in “Appendix.”

2 Preliminaries

Our theory is about preference formation in the context of EU theory. The classical EU theory is the reference point, but our theory deviates from it in various manners. To have clear relations between the classical EU theory and our development, we first mention the classical theory (cf. Herstein and Minor 1953; Fishburn 1982), and then, we start our development. In Sect. 2.2, we give various basic concepts for our theory and one basic lemma. In Sect. 2.3, we give definitions of preferences, indifferences, incomparabilities, and their counterparts in terms of vector-valued utility functions.

2.1 Classical EU theory

Let X be a given set of pure alternatives with cardinality \( \left| X\right| \ge 2\). A lottery f is a function over X taking values in [0, 1] such that for some finite subset S of X, \( \sum \nolimits _{x\in S}f(x)=1\), \(f(x)>0\) if \(x\in S\), and \(f(x)=0\) if \(x\in X-S\). This subset S is called the support of f. We define \( L_{[0,1]}(X)= \{f:f:X\rightarrow [0,1]\) is a lottery\(\}\). The set \( L_{[0,1]}(X)\) is uncountable. We define compound lotteries: for any \(f,g\in L_{[0,1]}(X)\) and \(\lambda \in [0,1]\), \(\lambda f*(1-\lambda )g\) is a lottery in \(L_{[0,1]}(X)\) defined by \((\lambda f*(1-\lambda )g)(x)=\lambda f(x)+(1-\lambda )g(x)\) for all \(x\in X\).

Let \(\succsim _{E}\) be a binary relation over \(L_{[0,1]}(X);\) we assume NM0 to NM2 on \(\succsim _{E}\). This system is one among various equivalent systems.

-

Axiom NM0 (Complete preordering): \(\succsim _{E}\) is a complete and transitive relation on \(L_{[0,1]}(X)\).

-

Axiom NM1 (Intermediate value): For any \(f, g, h\in L_{[0,1]}(X)\), if \(f\succsim _{E}g\succsim _{E}h\), then \(\lambda f*(1-\lambda )h\sim _{E}g\) for some \( \lambda \in [0,1]\).

-

Axiom NM2 (Independence): For any \(f,g,h\in L_{[0,1]}(X)\) and \(\lambda \in (0,1]\),

-

ID1: \(f\succ _{E}g\) implies \(\lambda f*(1-\lambda )h\succ _{E}\lambda g*(1-\lambda )h;\)

-

ID2: \(f\sim _{E}g\) implies \(\lambda f*(1-\lambda )h\sim _{E}\lambda g*(1-\lambda )h\),

-

where the indifference part and strict preference part of \( \succsim _{E}\) are denoted by \(\sim _{E}\) and \(\succ _{E};\) that is, \(f\sim _{E}g\) means \(f\succsim _{E}g\) & \(g\succsim _{E}f;\) and \(f\succ _{E}g\) does \(f\succsim _{E}g\) & not \((g\succsim _{E}f)\).

The following two are the key theorems in the classical EU theory. For a fruitful development of our theory, we should be conscious of how they remain in our theory.

Theorem 2.1

(Classical EU theorem). A preference relation \(\succsim _{E}\) satisfies Axioms NM0 to NM2 if and only if there is a function \(u:X\rightarrow {\mathbb {R}}\) so that for any \(f,g\in L_{[0,1]}(X)\),

where the expected utility functional \(E_{f}(u)\) is defined as:

Theorem 2.2

(Uniqueness up to Affine transformations). Suppose that \(\succsim _{E}\) satisfies Axioms NM0 to NM2. If two functions \(u,v:X\rightarrow {\mathbb {R}}\) satisfy (3), then there are two real numbers \(\alpha >0\) and \(\beta \) such that \(u(x)=\alpha v(x)+\beta \) for all \(x\in X\).

This theory is silent about how a decision maker finds/forms his preferences and does not separate between object components and meta-components for decision making. The above two theorems are meta-components, and some structural components such as lotteries and a preference relation \( \succsim _{E}\) in \((L_{[0,1]},\succsim _{E})\) are object components, but some include both such as highly complex probabilities. Another difficulty is the presumption that a well-formed preference relation \(\succsim _{E}\) already exists somewhere in the mind of decision maker. Here, our theory studies a formation of such a preference relation by reflecting upon his mind (with past experiences) from the simplest case to complex cases. We target to describe this process; Sects. 2.2 and 2.3 prepare basic concepts for the description of the process. Using these basic concepts, Sect. 3 describes Measurement Step B and Sects. 4 and 5 describe Extension Step E.

Since we target a process of preference formation, a preference relation contains almost necessarily some or many incomparabilities at least in the beginning. In the literature, we find some studies on expected utility theory without the completeness axiom. Aumann (1962) and Fishburn (1971) considered one-way representation theorem [i.e., the only-if of (3)], dropping completeness. See Fishburn (1972) for further studies. Dubra and Ok (2002) and Dubra et al. (2004) developed representation theorems in terms of utility comparisons based on all possible expected utility functions for the relation without completeness. In this literature, incomparabilities are given in the preference relation. In contrast, in our approach, incomparabilities are changing with a cognitive bound and may disappear when there are no cognitive bounds.

2.2 Probability grids, lotteries, and decompositions

Let \(\ell \) be an integer with \(\ell \ge 2\). This \(\ell \) is the base for describing probability grids; we take \(\ell =10\) in the examples in the paper. The set of probability grids \(\varPi _{k}\) is defined as

Here, \(\varPi _{1}=\{\tfrac{\nu }{\ell }:\nu =0,\ldots ,\ell \}\) is the base set of probability grids for measurement, whereas \(\varPi _{0}=\{0,1\}\) is needed for completeness of our discourse; Table 1 starts with layer 0 and continues up to layer \(\rho \). Each \(\varPi _{k}\) is a finite set, and \( \varPi _{\infty }:=\cup _{k<\infty }\varPi _{k}\) is countably infinite. We use the standard arithmetic rules over \(\varPi _{\infty }\); sum and multiplication are needed.Footnote 5 We allow reduction by eliminating common factors; for example, \(\tfrac{20}{10^{2}}\) is the same as \(\tfrac{2}{10}\). Hence, \(\varPi _{k}\subseteq \varPi _{k+1}\) for \( k=0,1,\ldots \) The parameter k is the precision of probabilities that the decision maker uses. We define the depth of each \(\lambda \in \varPi _{\infty }\) by: \(\delta (\lambda )=k\) iff \(\lambda \in \varPi _{k}-\varPi _{k-1}\). For example, \(\delta (\tfrac{25}{10^{2}})=2\) but \(\delta (\tfrac{20}{10^{2}} )=\delta (\tfrac{2}{10})=1\). The concept of a layer of probability grids up to a given depth k is well defined. The decision maker thinks about his preferences along probability grids from a shallow layer to a deeper one.

We use the standard equality \(=\) and strict inequality > over \(\varPi _{k}\). Then, trichotomy holds: for any \(\lambda ,\lambda ^{\prime }\in \varPi _{k}\),

Each element in \(\varPi _{k}\) is obtained by taking the weighted sums of elements in \(\varPi _{k-1}\) with the equal weights:

This is basic for the connection between layer \(k-1\) to the next. A proof of (7) is not given here, but an extension will be given in Lemma 2.1 with a proof in “Appendix.”

The union \(\varPi _{\infty }=\cup _{k<\infty }\varPi _{k}\) is a proper and dense subset of \([0,1]\cap \mathrm {{\mathbb {Q}}}\), where \({\mathbb {Q}}\) is the set of rational numbers. For example, when \(\ell =10\), \(\varPi _{\infty }\) has no recurring decimals, but they are rationals. We also note that \(\varPi _{\infty }\) depends upon the base \(\ell ;\) for example, \(\varPi _{1}\) with \(\ell =3\) has \(\frac{1}{3}\), but \(\varPi _{\infty }\) with \( \ell =10\) has no element corresponding to \(\frac{1}{3}\).

For any \(k<\infty \), we define \(L_{k}(X)\) by

We identify each pure alternative x with the lottery having x as its support; so X is regarded as a subset of \(L_{k}(X)\). Specifically, \( L_{0}(X)=X\). Since \(\varPi _{k}\) is a finite set, every \(f\in L_{k}(X)\) has a finite support. Since \(\varPi _{k}\subseteq \varPi _{k+1}\), it holds that \( L_{k}(X)\subseteq L_{k+1}(X)\). We denote \(L_{\infty }(X)= \cup _{k<\infty }L_{k}(X)\). As long as X is finite, \(L_{k}(X)\) is also a finite set, but \( L_{\infty }(X)\) is a countable set and is dense in \(L_{[0,1]}(X)\).

We define the depth of a lottery f in \(L_{\infty }(X)\) by \(\delta (f)=k\) iff \(f\in L_{k}(X)-L_{k-1}(X)\). We use the same symbol \(\delta \) for the depth of a lottery and the depth of a probability. It holds that \(\delta (f)=k\) if and only if \(\max _{x\in X}\delta (f(x))=k\). This is relevant in Sect. 6. Lottery \(d=\frac{25}{10^{2}}y*\frac{75}{10^{2}} {\underline{y}}\) of (2) is in \(L_{2}(X)-L_{1}(X)\) and its depth \( \delta (d)=2,\) but since \(d^{\prime }=\frac{20}{10^{2}}y*\frac{80}{10^{2} }{\underline{y}}= \frac{2}{10}y*\frac{8}{10}{\underline{y}}\in L_{1}(X)\), we have \(\delta (d^{\prime })=1\).

The decision maker thinks about and/or forms his own preferences from shallow layers to deeper ones. This stops at a cognitive bound \(\rho , \) which is a natural number or infinity \(\infty \). If \(\rho =k<\infty \), he eventually reaches the set of lotteries \(L_{\rho }(X)=L_{k}(X)\), and if \( \rho =\infty \), he has no cognitive limit; we define \(L_{\rho }(X)=L_{\infty }(X)=\cup _{k<\infty }L_{k}(X)\).

We formulate a connection from \(L_{k-1}(X)\) to \(L_{k}(X);\) we say that \( {\widehat{f}}=(f_{1},\ldots ,f_{\ell })\) in \(L_{k-1}(X)^{\ell }=L_{k-1}(X)\times \cdots \times L_{k-1}(X)\) is a decomposition of \(f\in L_{k}(X)\) iff for all \(x\in X\),

We denote this by \(\sum _{t=1}^{\ell }\frac{1}{\ell }*f_{t}\), and letting \({\widehat{e}}=(\tfrac{1}{\ell },\ldots ,\tfrac{1}{\ell })\), it is written as \({\widehat{e}} \mathbf {*} {\widehat{f}}\). We can regard \({\widehat{e}} \mathbf { *} {\widehat{f}}\) as a compound lottery connecting \(L_{k-1}(X)\) to \( L_{k}(X) \) by reducing \({\widehat{e}} \mathbf {*} {\widehat{f}}\) to f in (9). Our theory allows only this form of compound lotteries and reduction with the depth constraint. The next lemma states that \(L_{k}(X)\) is generated from \(L_{k-1}(X)\) by taking all compound lotteries of this kind. It facilitates our induction method described in Table 1 reducing an assertion in layer k to layer \(k-1\). A proof of Lemma 2.1 is given in “Appendix.”Footnote 6

Lemma 2.1

(Decomposition of lotteries). Let \(1\le k<\infty \). Then,

Furthermore, for any \(f\in L_{k}(X)\) with \(\delta (f)>0\), there is a decomposition of \({\widehat{f}}\) of f so that

The right-hand side of (10) is the set of composed lotteries from \( L_{k-1}(X)\) with the equal weights. The inclusion \(\supseteq \) states that the composed lotteries from \(L_{k-1}(X)\) belong to \(L_{k}(X)\). The converse inclusion \(\subseteq \) is essential and means that each lottery in \(L_{k}(X)\) is decomposed to an equally weighted sum of some \( (f_{1},\ldots ,f_{\ell })\) in \(L_{k-1}(X)^{\ell }\) with the depth constraint in (9). In the trivial case that \(f=x\in L_{0}(X)\) is decomposed to \( {\widehat{f}}=(x,\ldots ,x)\). This will be used in Lemma 4.2. The latter with (11) asserts the choice of a strictly shallower decomposition for f with \(\delta (f)>0\).

Here, we give three remarks. One is that when f is a benchmark lottery in \( B_{k}({\overline{y}};{\underline{y}})\), for its decomposition \({\widehat{f}} =(f_{1},\ldots ,f_{\ell })\), each \(f_{t}\) is a benchmark lottery in \(B_{k-1}( {\overline{y}};{\underline{y}})\). This fact will be used without referring. The second is that when \(f\in L_{k-1}(X)\), \({\widehat{f}}=(f,\ldots ,f)\) is a decomposition of f. To allow this triviality, we require only the weak inequality in the depth constraint in (9). The third is that for any subset \(X^{\prime }\) of X, we define \(L_{k}(X^{\prime })=\{f\in L_{k}(X): f(x)>0\) implies \(x\in X^{\prime }\}\). Hence, \(L_{k}(X^{\prime })\) is a subset of \(L_{k}(X)\). Lemma 2.1 holds for \(L_{k}(X^{\prime })\) and \(L_{k-1}(X^{\prime })\).

The lottery \(d=[y,\frac{25}{10^{2}};{\underline{y}}]\) has three types of decompositions:

Here, a decomposition \({\widehat{f}}= (f_{1},\ldots ,f_{10})\) is given as \( f_{1}=\cdots =f_{t}=y\), \(f_{t+1}=\cdots =f_{5-t}=[y,\frac{5}{10};{\underline{y}}]\), and \(f_{5-t+1}=\cdots =f_{10}={\underline{y}}\). We use this short-hand expressions rather than a full specification of \({\widehat{f}}= (f_{1},\ldots ,f_{10})\). We should be careful about this multiplicity.

The reason for explicit considerations of layers for \(L_{k}(X)\) and also preference relation \(\succsim _{k}\) is to avoid collapse from a layer to a shallower one. Without them, we may have a difficulty in identifying the sources for preferences. For example, the weighted sum \(\frac{5}{10}[\frac{25 }{10^{2}}y*\frac{75}{10^{2}}{\underline{y}}] *\frac{5}{10}[\frac{ 75}{10^{2}}y*\frac{25}{10^{2}}{\underline{y}}]\) is reduced to \(\frac{5}{10} y*\frac{5}{10}{\underline{y}};\) preferences about \(\frac{5}{10}y*\frac{ 5}{10}{\underline{y}}\) may possibly come from layer 2 or from layer 0. To prohibit such collapse, we take depths of layers explicitly into account in (9).

2.3 Incomplete preference relations and vector-valued utility functions

We consider two methods to express the decision maker’s desires: a preference relation and a utility function. We starts with an incomplete preference relation and then works on a vector-valued utility function with the interval order. These are the first departures from the classical EU theory.

Let \(\succsim \) be a preference relation over a given set, say A. For \( f,g\in A\), the expression \(f \succsim g\) means that f is strictly preferred to g or is indifferent to g. We define the strict (preference) relation \(\succ \), indifference relation \(\sim \), and incomparability relation \(\bowtie \) by

All the axioms are given on the relations \(\succsim \), \(\succ \), \(\sim \), and the relation \(\bowtie \) is defined as the residual part of \(\succsim \). Although \(\sim \) and \(\bowtie \) are sometimes regarded as closely related (cf. Shafer 1986, p. 469), they are well separated in Theorem 6.2 in our theory.

In the classical theory in Sect. 2.1, the preference relation \( \succsim _{E}\) is assumed to be complete. Since, however, we consider a formation of preferences, our theory should avoid this completeness assumption. Nevertheless, it appears as a result when a domain of lotteries is restricted.

Another method of measurement of desires is by a vector-valued function \( {\varvec{u}}\) with the interval order introduced by Fishburn (1970). Let \({\varvec{u}}(f)=[{\overline{u}}(f),{\underline{u}}(f)]\) be a 2 -dimensional vector-valued function from its domain A to the set \(\mathbb {Q }^{2}=\mathbb {Q\times Q}\) with \({\overline{u}}(f)\ge {\underline{u}}(f)\) for each \(f\in A\). The components \({\overline{u}}(f)\) and \({\underline{u}}(f)\) are interpreted as the least upper and greatest lower bounds of possible utilities from f. We say that \({\varvec{u}}(f)\) is effectively single-valued iff \({\overline{u}}(f)={\underline{u}}(f);\) in this case, we write \({\overline{u}}(f)={\underline{u}}(f)=u(f)\), dropping the upper and lower bars. We use the interval order \(\ge _{I}\) over the values of \(\varvec{ u};\) for \(f,g\in A\),

That is, f and g are ordered if and only if the greatest lower bound \( {\underline{u}}(f)\) from f is larger than or equal to the least upper bound \({\overline{u}}(g)\) from g. This \(\ge _{I}\) allows incomparabilities, for example, if \({\varvec{u}}(f)=[\frac{9}{10},\frac{7}{10}]\) and \(\varvec{ u}(g)=[\frac{83}{10^{2}},\frac{83}{10^{2}}]\), then f and g are incomparable by \(\ge _{I}\). The relation \(\ge _{I}\) is transitive, but is not reflexive; \({\varvec{u}}(f)\ge _{I}{\varvec{u}}(g)\) and \( {\varvec{u}}(g)\ge _{I}{\varvec{u}}(f)\) are equivalent to \(u(f)= {\underline{u}}(f)={\overline{u}}(g)={\underline{u}}(g)\), i.e., this is the case only when the values \({\varvec{u}}(f)\) and \({\varvec{u}}(g)\) are effectively single-valued and identical.

3 Measurement Step B

We formulate Step B of measurement of pure alternatives up to cognitive bound \(\rho \). This has two sides: in terms of preference relations \(\langle \trianglerighteq _{k}\rangle _{k<\rho +1}\) and in terms of vector-valued utility functions \(\langle \varvec{\upsilon } _{k}\rangle _{k<\rho +1}\). We show the representation theorem on \(\langle \trianglerighteq _{k}\rangle _{k<\rho +1}\) by \(\langle \varvec{\upsilon } _{k}\rangle _{k<\rho +1}\), and the uniqueness theorem on \(\langle \varvec{\upsilon }_{k}\rangle _{k<\rho +1}\) up to positive linear transformations. Finally, we mention that these are well interpreted in terms of Simon’s (1956) satisficing/aspiration argument.

3.1 Base preference streams

The set of pure alternatives X is assumed to contain two distinguished elements \({\overline{y}}\) and \({\underline{y}}\), which we call the upper and lower benchmarks. Let \(k<\infty \). We call an \(f\in L_{k}(X)\) a benchmark lottery of depth (at most) k iff \(f(\overline{ y})=\lambda \) and \(f({\underline{y}})=1-\lambda \) for some \(\lambda \in \varPi _{k}\), which we denote by \([{\overline{y}},\lambda ;{\underline{y}}]\). The benchmark scale of depth k is the set \(B_{k}({\overline{y}};{\underline{y}} )=\{[{\overline{y}},\lambda ;{\underline{y}}]:\lambda \in \varPi _{k}\}\). In particular, \(B_{0}({\overline{y}};{\underline{y}})=\{{\overline{y}},{\underline{y}} \}\). The grids (dots) in Fig. 1 express the benchmark lotteries. We define \( B_{\infty }({\overline{y}};{\underline{y}})=\cup _{k<\infty }B_{k}({\overline{y}}; {\underline{y}})\). The depth of a benchmark lottery \([{\overline{y}},\lambda ; {\underline{y}}]\) is the same as the depth of \(\lambda \), i.e., \(\delta ([ {\overline{y}},\lambda ;{\underline{y}}])=\delta (\lambda )\).

We denote a cognitive bound by \(\rho \), which is a natural number or \( \rho =\infty \). We use k as a variable expressing a natural number of a layer, but \(\rho \ \)as a constant parameter of the theory. Stipulating \( \infty +1=\infty \), “\(k<\rho +1\)” expresses the two statements “\(k\le \rho \) if \(\rho <\infty \)” and “\(k<\rho \) if \(\rho =\infty \)”. This constant \(\rho \) plays an active role as a small constraint such as \(\rho =2\) or 3 in Example 5.1 and Sect. 8, and as \(\rho =\infty \) in Sect. 7 for consideration of the expected utility hypothesis.

Let \(\trianglerighteq _{k}\) be a subset of

Thus, \(\trianglerighteq _{k}\) consists of the scale part of the benchmarks and the measurement part of pure alternatives. The scale part allows the decision maker to make comparisons between any grids of depth k. For a pure alternative \(x\in X\), he thinks about where x is located in the benchmark scale \(B_{k}({\overline{y}};{\underline{y}});\) it may or may not correspond to a grid, which is seen in Fig. 1. For example, if \((x,g)\in \trianglerighteq _{k}\) but \((g,x)\notin \trianglerighteq _{k}\), then x is strictly better than the grid g, and if \((x,g)\notin \trianglerighteq _{k}\) and \((g,x)\notin \trianglerighteq _{k}\), then x and g are incomparable for him.

A stream of basic preference relations is expressed as \(\langle \trianglerighteq _{k}\rangle _{k<\rho +1};\) when \(\rho <\infty \), it is expressed as \(\langle \trianglerighteq _{0},\trianglerighteq _{1},\ldots ,\trianglerighteq _{\rho }\rangle \), and when \(\rho =\infty \), it is as \(\langle \trianglerighteq _{0},\trianglerighteq _{1},\ldots \rangle \). We make four axioms on \(\langle \trianglerighteq _{k}\rangle _{k<\rho +1};\) the first requires a property on measurement on layer 0, the second and third require properties on measurement on each layer k, and the fourth connects measurements in layers k and \(k+1\).

Specifically, Axiom B0 requires pure alternatives to be between the upper and lower benchmarks \({\overline{y}}\), \({\underline{y}}\).

Axiom B0 (Benchmarks): \({\overline{y}}\trianglerighteq _{0}x\) and \( x\trianglerighteq _{0}{\underline{y}}\) for all \(x\in X\).

The next states that preferences over \(B_{k}({\overline{y}};{\underline{y}})\) are the same as the natural order on \(\varPi _{k}\).

Axiom B1 (Benchmark scale): For \(\lambda ,\lambda ^{\prime }\in \varPi _{k}\), \([{\overline{y}},\lambda ;{\underline{y}}]\trianglerighteq _{k}[ {\overline{y}},\lambda ^{\prime };{\underline{y}}]\) if and only if \(\lambda \ge \lambda ^{\prime }\).

It follows from Axiom B1 that for \(\lambda ,\lambda ^{\prime }\in \varPi _{k}\),

Also, \(\lambda =\lambda ^{\prime }\) if and only if \([{\overline{y}},\lambda ; {\underline{y}}]\) and \([{\overline{y}},\lambda ^{\prime };{\underline{y}}]\) are indifferent. Thus, \(\trianglerighteq _{k}\) is a complete relation over \(B_{k}({\overline{y}};{\underline{y}})\) by (6). This is the scale part of \(\trianglerighteq _{k}\) and is precise up to \(\varPi _{k}\). Since \({\overline{y}}=[{\overline{y}},1;{\underline{y}}]\) and \({\underline{y}} =[{\overline{y}},0;{\underline{y}}]\), it follows from (16) that \( {\overline{y}}\vartriangleright _{0}{\underline{y}}\).

Measurement is required to be coherent with the scale part given by Axiom B1.

Axiom B2 (Monotonicity): For all \(x\in X\) and \(\lambda ,\lambda ^{\prime }\in \varPi _{k}\), if \([{\overline{y}},\lambda ;{\underline{y}} ]\trianglerighteq _{k}x\) and \(\lambda ^{\prime }>\lambda \), then \([{\overline{y}},\lambda ^{\prime };{\underline{y}}]\vartriangleright _{k}x,\) and if \(x\trianglerighteq _{k}[{\overline{y}},\lambda ;{\underline{y}}]\) and \(\lambda >\lambda ^{\prime }\), then \(x\vartriangleright _{k}[{\overline{y}},\lambda ^{\prime };{\underline{y}} ]. \)

This implies no reversals with Axiom B1; if \([{\overline{y}},\lambda ; {\underline{y}}]\trianglerighteq _{k}x\) and \(x\trianglerighteq _{k}[\overline{y },\lambda ^{\prime };{\underline{y}}]\), then \(\lambda \ge \lambda ^{\prime }\). Indeed, if \(\lambda <\lambda ^{\prime }\), then \([{\overline{y}},\lambda ^{\prime };{\underline{y}}]\vartriangleright _{k}x\) by B2, which implies not \( x\trianglerighteq _{k}[{\overline{y}},\lambda ^{\prime };{\underline{y}}]\). If we assume transitivity for \(\trianglerighteq _{k}\) over \(D_{k}\), B2 could be derived from B1, but we adopt B2 instead of transitivity, since B2 gives a more specific property to the measurement step.

The last requires the preferences in layer \(k\ \) to be preserved in the next layer \(k+1\). This is expressed by the set-theoretical inclusion \(\subseteq \) in Table 1.

Axiom B3 (Preservation): For all \(f,g\in D_{k}\), \( f\trianglerighteq _{k}g\) implies \(f\trianglerighteq _{k+1}g\).

The above axioms still allow great freedom for base preference relations \( \langle \trianglerighteq _{k}\rangle _{k<\rho +1}\). To see this fact and how the measurement step B of utilities from pure alternatives goes on, we consider vector-valued utility functions with the interval order \(\ge _{I}\) in Sect. 3.2.

3.2 Base utility streams

Let \(\langle \varvec{\upsilon }_{k}\rangle _{k<\rho +1}=\langle [{\overline{\upsilon }}_{k},{\underline{\upsilon }}_{k}]\rangle _{k<\rho +1}\) be a sequence of vector-valued functions, where \(\langle \varvec{\upsilon } _{k}\rangle _{k<\rho +1}=\langle \varvec{\upsilon }_{0},\varvec{ \upsilon }_{1},\ldots ,\varvec{\upsilon }_{\rho }\rangle \) if \(\rho <\infty \) and \(\langle \varvec{\upsilon }_{k}\rangle _{k<\rho +1}=\langle \varvec{\upsilon }_{0},\varvec{\upsilon }_{1},\ldots \rangle \) if \(\rho =\infty \). For each \(k<\rho +1\), \(\varvec{\upsilon }_{k} =[{\overline{\upsilon }}_{k},{\underline{\upsilon }}_{k}]\) is a function from \(B_{k}( {\overline{y}};{\underline{y}})\cup X\) to \({\mathbb {Q}}^{2}\varvec{\ }\)such that \(\overline{\upsilon }_{k}(f)\ge {\underline{\upsilon }}_{k}(f)\) for all \( f\in B_{k}({\overline{y}};{\underline{y}})\cup X\), which are intended to be the least upper utility and greatest lower utility from lottery f. Recall that when \(\varvec{\upsilon }_{k}(f)\) is effectively single-valued, we write \(\overline{\upsilon }_{k}(f)={\underline{\upsilon }}_{k}(f)=\upsilon _{k}(f)\). The following conditions on \(\langle \varvec{\upsilon } _{k}\rangle _{k<\rho +1}\) are not exactly parallel to Axioms B0 to B3, but these two systems are equivalent, which is stated in Theorem 3.1 :

- b0::

-

\(\upsilon _{0}({\overline{y}} )>\upsilon _{0}({\underline{y}});\)

- b1::

-

for \(k <\rho +1\), \(\upsilon _{k}([ {\overline{y}},\lambda ;{\underline{y}}])=\lambda \upsilon _{k}({\overline{y}} )+(1-\lambda )\upsilon _{k}({\underline{y}})\) for all \([{\overline{y}},\lambda ;{\underline{y}}]\in B_{k}({\overline{y}};{\underline{y}});\)

- b2::

-

for \(k <\rho +1\) and \(x\in X\), \( {\overline{\upsilon }}_{k}(x)=\upsilon _{k}([{\overline{y}},{\overline{\lambda }} _{x};{\underline{y}}])\) and \({\underline{\upsilon }}_{k}(x)=\upsilon _{k}([ {\overline{y}},{\underline{\lambda }}_{x};{\underline{y}}])\) for some \({\overline{\lambda }}_{x}\) and \( {\underline{\lambda }}_{x}\) in \(\varPi _{k};\)

- b3::

-

for \(k<\rho \) and \(x\in X\), \( {\overline{\upsilon }}_{k}(x)\ge {\overline{\upsilon }}_{k+1}(x)\ge \underline{ \upsilon }_{k+1}(x)\ge {\underline{\upsilon }}_{k}(x).\)

Condition b0 fixes the utility values from the upper and lower benchmarks \( {\overline{y}}\) and \({\underline{y}}\), which corresponds to the implication of B0. Then, b1 means that for each benchmark lottery \([{\overline{y}},\lambda ; {\underline{y}}]\in B_{k}({\overline{y}};{\underline{y}})\), \(\varvec{\upsilon }_{k}([{\overline{y}},\lambda ;{\underline{y}}])\) is effectively single-valued and takes the expected utility value of \({\overline{y}}\) and \({\underline{y}}\), which corresponds to B1. b2 states that for each pure alternative \(x\in X\), the least upper and greatest lower utilities from x are measured by the benchmark scale \(B_{k}({\overline{y}};{\underline{y}});\) this does not exactly correspond to B2, but it does an implication of B2 with the help of transitivity of the interval order \(\ge _{I}\). Corresponding to B3, b3 states that \({\overline{\upsilon }}_{k}(x)\) and \({\underline{\upsilon }}_{k}(x)\) are getting more accurate as k increases.

We observe that by b3, \(\overline{\upsilon }_{k}(x)={\underline{\upsilon }} _{k}(x)\) implies \(\overline{\upsilon }_{k}(x)={\overline{\upsilon }}_{k+1}(x)= \underline{\upsilon }_{k+1}(x)={\underline{\upsilon }}_{k}(x)\), i.e., if \( \varvec{\upsilon }_{k}(x)=[\overline{\upsilon }_{k}(x),{\underline{\upsilon }}_{k}(x)]\) is effectively single-valued, then \(\varvec{\upsilon }_{k^{\prime }}(x)\) is constant and effectively single-valued for any \( k^{\prime }>k\). In particular,

This and b1 imply that a benchmark lottery takes the same utility value as long as it belongs \(B_{k}({\overline{y}};{\underline{y}})\). Also, the values \( \lambda _{{\overline{y}}}\) and \(\lambda _{{\underline{y}}}\) given by b2 are 1 and 0, since \({\overline{y}}=[{\overline{y}},1;{\underline{y}}]\) and \( {\underline{y}}=[{\overline{y}},0;{\underline{y}}]\). Hence,

These observations will be used later.

Now, we have Theorem 3.1. As stated, all proofs are given in separate subsections.

Theorem 3.1

(Representation in Step B). A base preference stream \(\langle \trianglerighteq _{k}\rangle _{k<\rho +1}\) satisfies Axioms B0 to B3 if and only if there is a base utility stream \( \langle \varvec{\upsilon }_{k}\rangle _{k<\rho +1}\) satisfying b0 to b3 such that for any \(k<\rho +1\) and \((f,g)\in D_{k}\),

Without difficulty, we can construct a sequence \(\langle \varvec{ \upsilon }_{k}\rangle _{k<\rho +1}\) satisfying b0 to b3. Thus, by Theorem 3.1, there is a sequence \(\langle \trianglerighteq _{k}\rangle _{k<\rho +1}\) satisfying B0 to B3, which implies the consistency of Axioms B0 to B3.

We have the uniqueness theorem.

Theorem 3.2

(Uniqueness up to affine transformations). Let \(\langle \trianglerighteq _{k}\rangle _{k<\rho +1}\) satisfy Axioms B0 to B3. If \(\langle \varvec{\upsilon }_{k}\rangle _{k<\rho +1}\) and \(\langle \varvec{\upsilon }_{k}^{\prime }\rangle _{k<\rho +1}\) satisfying b0 to b3 represent \(\langle \trianglerighteq _{k}\rangle _{k<\rho +1}\) in the sense of (19), there are rational numbers \(\alpha >0\) and \(\beta \) such that \(\varvec{\upsilon }_{k}^{\prime }(x)=\alpha \varvec{ \upsilon }_{k}(x)+\beta =[\alpha {\overline{\upsilon }}_{k}(x)+\beta ,\alpha {\underline{\upsilon }}_{k}(x)+\beta ]\) for all \(x\in X\) and \(k<\rho +1\).

Conditions b0 to b3 require \(\varvec{\upsilon }_{k}(x)=[{\overline{\upsilon }}_{k}(x),\underline{\upsilon }_{k}(x)]\) to be represented essentially by two values \({\overline{\lambda }}_{x}\) and \({\underline{\lambda }}_{x}\) in \( \varPi _{k}\) with \(\upsilon _{0}({\overline{y}})\) and \(\upsilon _{0}({\underline{y}} )\). However, \(\upsilon _{0}({\overline{y}})\) and \(\upsilon _{0}({\underline{y}})\) are allowed to take any values in \({\mathbb {Q}}\) only with \(\upsilon _{0}( {\overline{y}})> \upsilon _{0}({\underline{y}})\). Hence, for two streams \( \langle \varvec{\upsilon }_{k}\rangle _{k<\rho +1}\) and \(\langle \varvec{\upsilon }_{k}^{\prime }\rangle _{k<\rho +1}\) representing the same \(\langle \trianglerighteq _{k}\rangle _{k<\rho +1}\), one is expressed as a positive linear transformation of the other, which is the above uniqueness result.

The uniqueness up to a positive linear transformation plays a crucial role in the literature on bargaining such as Nash (1950) and also the Nash welfare function theory by Kaneko and Nakamura (1979). The rational number scalars are enough for the 2-person case (cf. Kaneko 1992) and the real-algebraic numbers are enough for the general n-person case . It is easy to generalize Theorem 3.2 for the real numbers scalars, but the problem is how much we can restrict the scalars. Theorem 3.2 is suggestive of how bounded rationality is incorporated to these theories.

The processes described in terms of \(\langle \trianglerighteq _{k}\rangle _{k<\rho +1}\) and/or \(\langle \varvec{\upsilon }_{k}\rangle _{k<\rho +1}\) are regarded as thought experiments by the decision maker to search preferences/ utilities in his mind. These preference and utility comparisons are object components for the decision maker, but the above theorems belong to the level of meta-components. From the viewpoint of “bounded rationality”, he may stop his search when he is satisfied and/or tired. This is the same as Simon’s (1956) argument of satisficing/aspiration. We consider one example and exemplify the satisficing/aspiration argument.

Example 3.1

Let \(X=\{{\overline{y}},y,{\underline{y}}\}\), \( \varvec{\upsilon }_{0}({\overline{y}})=[1,1]\), \(\varvec{\upsilon }_{0}( {\underline{y}})=[0,0]\), and \(\varvec{\upsilon }_{0}(y)=[1,0]\). Also, let \( \varvec{\upsilon }_{1}(y)=[\frac{9}{10},\frac{7}{10}]\). For \(f=[ {\overline{y}},\frac{8}{10};{\underline{y}}]\), \(\varvec{\upsilon }_{1}(f)=[ \frac{8}{10},\frac{8}{10}]\) by (17) and b1. Then \(\varvec{ \upsilon }_{1}(y)\ngeq _{I}\varvec{\upsilon }_{1}(f)\) and \(\varvec{ \upsilon }_{1}(f)\ngeq _{I}\varvec{\upsilon }_{1}(y);\) so y and f are incomparable with respect to \(\trianglerighteq _{1}\) by (19). In Fig. 2, \(\langle \varvec{\upsilon }_{k}(y)\rangle _{k<\rho +1}=\langle [{\overline{\upsilon }}_{k}(y),\underline{\upsilon }_{k}(y)]\rangle _{k<\rho +1}\) is described as solid lines in cases A, B, and C. Since \(\varvec{\upsilon }_{0}(y)=[1,0]\), we have \({\overline{y}}\vartriangleright _{0}y\vartriangleright _{0}{\underline{y}}\) by (19). For \(k=2\), in A, \(\varvec{\upsilon }_{2}(y)=[\frac{77}{10^{2}}, \frac{77}{10^{2}}]\) and the decision maker prefers \(f=[{\overline{y}},\frac{8}{ 10};{\underline{y}}]\) to y; and in B, \(\varvec{\upsilon } _{2}(y)=[\frac{83}{10^{2}},\frac{83}{10^{2}}];\) he prefers y to f. In C, \(\varvec{\upsilon }_{k}(y)=[\frac{9}{10},\frac{7}{10}]\) is constant for \(k\ge 2;\) he gives up comparisons between y and f after \( k=1\).

This example is interpreted in terms of Simon’s satisficing/aspiration. The decision maker starts evaluating the pure alternative y the benchmark scale \(B_{0}({\overline{y}};\underline{y })\). Suppose that he finds \(\varvec{\upsilon }_{0}(y)=[1,0]\), i.e., he attaches the upper value 1 and lower value 0 to y. When \(\rho =0\), he immediately concludes that y is between \({\overline{y}}\) and \({\underline{y}}\) . When \(\rho \ge 1\), he goes to layer 1 and uses the more precise scale \( B_{1}({\overline{y}};{\underline{y}})\) to measure y. Since \(\varvec{ \upsilon }_{1}(y)=[\frac{9}{10},\frac{7}{10}],\ y\) is better than \([ {\overline{y}},\frac{7}{10};{\underline{y}}]\) but worse than \([{\overline{y}}, \frac{9}{10};{\underline{y}}]\). Still, he has not reached an exact measurement. If \(\rho =1\), he stops introspection and is satisfied with these evaluations of y. If \(\rho \ge 2\), he goes to layer \(k=2;\) in A of Fig. 2, he reaches the exact utility value \(\varvec{\upsilon } _{2}(y)= [\frac{77}{10^{2}},\frac{77}{10^{2}}]\),Footnote 7 but in C, he has still imprecise values \({\varvec{\upsilon }}_{2}(y)=[ \frac{9}{10},\frac{7}{10}]\) and does not improve them any more even for \(k>2\).

3.3 Proofs

Proof of Theorem 3.1

(If): Suppose that \( \langle \varvec{\upsilon }_{k}\rangle _{k<\rho +1}\) satisfies b0 to b3 and that (19) holds for \(\langle \trianglerighteq _{k}\rangle _{k<\rho +1}\) and \(\langle \varvec{\upsilon }_{k}\rangle _{k<\rho +1}\)

B0: We have, by b0, b1, and b2, \(\upsilon _{0}({\overline{y}} )\ge {\overline{\upsilon }}_{0}(x)\) and \({\underline{\upsilon }}_{0}(x)\ge \upsilon _{0}({\underline{y}})\), i.e., \({\overline{y}}\trianglerighteq _{0}x\trianglerighteq _{0}{\underline{y}}\). Thus, B0.

B1: By (19), b1, and b2, we have \([{\overline{y}},\lambda ; {\underline{y}}]\trianglerighteq _{k}[{\overline{y}},\lambda ^{\prime }; {\underline{y}}]\) if and only if \(\varvec{\upsilon }_{k}([{\overline{y}} ,\lambda ;{\underline{y}}])\ge _{I} \varvec{\upsilon }_{k}([{\overline{y}} ,\lambda ^{\prime };{\underline{y}}])\) if and only if \(\lambda \upsilon _{0}( {\overline{y}})+(1-\lambda )\upsilon _{0}({\underline{y}})\ge \lambda ^{\prime }\upsilon _{0}({\overline{y}})+(1-\lambda ^{\prime })\upsilon _{0}(\underline{y })\). By b0, this is equivalent to \(\lambda \ge \lambda ^{\prime }\). That is, B1.

B2: Let \([{\overline{y}},\lambda ;{\underline{y}}]\trianglerighteq _{k}x \) and \(\lambda ^{\prime }>\lambda \). By b2, (19), (17 ), and b0, we have \(\lambda ^{\prime }\upsilon _{0}({\overline{y}} )+(1-\lambda ^{\prime })\upsilon _{0}({\underline{y}})> \lambda \upsilon _{0}({\overline{y}})+ (1-\lambda )\upsilon _{0}({\underline{y}}) \ge {\overline{\upsilon }}_{k}(x)\). Thus, \(\upsilon _{k}([{\overline{y}},\lambda ^{\prime };{\underline{y}}])=\lambda ^{\prime }\upsilon _{k}({\overline{y}} )+(1-\lambda ^{\prime })\upsilon _{k}({\underline{y}})>{\overline{\upsilon }} _{k}(x)\). By (19), we have \([{\overline{y}},\lambda ^{\prime }; {\underline{y}}]\vartriangleright _{k}x\). The other case is symmetric.

B3: Let \(f\trianglerighteq _{k}g\). By (19), we have \( {\underline{\upsilon }}_{k}(f)\ge {\overline{\upsilon }}_{k}(g)\). Let \(f=x\in X\) and \(g=[{\overline{y}},\lambda ;{\underline{y}}]\in B_{k}({\overline{y}}; {\underline{y}})\). Then, by b3, we have \({\underline{\upsilon }}_{k+1}(f)\ge {\underline{\upsilon }}_{k}(f)\ge \upsilon _{k}(g)=\upsilon _{k+1}(g)\). By ( 19), \(f\trianglerighteq _{k+1}g\). The case \(f\in B_{k}({\overline{y}}; {\underline{y}})\), \(g=x\in X\) is parallel. The case \(f=[{\overline{y}},\lambda ; {\underline{y}}]\), \(g=[{\overline{y}},\lambda ^{\prime };{\underline{y}}]\in B_{k}( {\overline{y}};{\underline{y}})\) is similar.

(Only-if): Suppose that \(\langle \trianglerighteq _{k}\rangle _{k<\rho +1}\) satisfying Axioms B0 to B3 is given. We construct a base utility stream \(\langle \varvec{\upsilon }_{k}\rangle _{k<\rho +1}=[ {\overline{\upsilon }}_{k},{\underline{\upsilon }}_{k}]\) satisfying (19 ), as follows: for any \(f\in B_{k}({\overline{y}};{\underline{y}})\cup X\),

Note that when \(f=[{\overline{y}},\lambda _{f};{\underline{y}}]\in B_{k}( {\overline{y}};{\underline{y}})\), we have \({\overline{\upsilon }}_{k}(f)= {\underline{\upsilon }}_{k}(f)=\lambda _{f}\) for \(f\in B_{k}({\overline{y}}; {\underline{y}})\) by (20). Consider \(f=[{\overline{y}},\lambda _{f}; {\underline{y}}],g =[{\overline{y}},\lambda _{g};{\underline{y}}]\in B_{k}( {\overline{y}};{\underline{y}})\). Then, \(f\trianglerighteq _{k}g\) if and only if \([{\overline{y}},\lambda _{f};{\underline{y}}]\trianglerighteq _{k} [\overline{ y},\lambda _{g};{\underline{y}}]\) if and only if\(\ \lambda _{f}\ge \lambda _{g}\), i.e., \(\varvec{\upsilon }_{k}(f)\ge _{I}\varvec{\upsilon } _{k}(g)\) by B1. Let \(f\in B_{k}({\overline{y}};{\underline{y}})\) and \(g=x\in X\). Denote \(\upsilon _{k}(f)=\lambda _{f}\) and \({\overline{\upsilon }}_{k}(x)= {\overline{\lambda }}_{x}\). Suppose \(f\trianglerighteq _{k}x\). By (20 ), \([{\overline{y}},\lambda _{f};{\underline{y}}]=f \trianglerighteq _{k}[ {\overline{y}},{\overline{\lambda }}_{x};{\underline{y}}]\). By B1, \(\lambda _{f}\ge {\overline{\lambda }}_{x}\), i.e., \(\varvec{\upsilon }_{k}(f)\ge _{I}\varvec{\upsilon }_{k}(x)\). The converse is obtained by tracing this back. Thus, \(f\trianglerighteq _{k}x\) if and only if\(\ \varvec{\upsilon } _{k}(f)\ge _{I}\varvec{\upsilon }_{k}(x)\). The case \(f=x\in X\), \(g\in B_{k}({\overline{y}};{\underline{y}})\) is parallel.

By (16) and B0, we have b0. Consider b1. Since \(\upsilon _{k}({\overline{y}})=1\) and \(\upsilon _{k}({\underline{y}})=0\), it follows from the note immediately after (20) that \(\upsilon _{k}(f)=\lambda =\lambda \upsilon _{k}({\overline{y}})+(1-\lambda )\upsilon _{k}({\underline{y}})\), which is b1. By (20), we have b2 and b3. \(\square \)

Proof of Theorem 3.2

Let \(\alpha =(\upsilon _{0}^{\prime }({\overline{y}})-\upsilon _{0}^{\prime }({\underline{y}} ))/(\upsilon _{0}({\overline{y}})-\upsilon _{0}({\underline{y}}))\) and \(\beta = (\upsilon _{0}({\overline{y}})\upsilon _{0}^{\prime }({\underline{y}})-\upsilon _{0}^{\prime }({\overline{y}})\upsilon _{0}({\underline{y}}))/(\upsilon _{0}( {\overline{y}})-\upsilon _{0}({\underline{y}}))\). By b1 and (17), we have \(\varvec{\upsilon }_{k}^{\prime }({\overline{y}})=\alpha \varvec{ \upsilon }_{k}({\overline{y}})+\beta \) and \(\varvec{\upsilon }_{k}^{\prime }({\underline{y}})=\alpha \varvec{\upsilon }_{k}({\underline{y}}) +\beta \) . For \([{\overline{y}},\lambda ;{\underline{y}}]\in B_{k}({\overline{y}}; {\underline{y}})\), we have \(\varvec{\upsilon }_{k}^{\prime }([{\overline{y}} ,\lambda ;{\underline{y}}])=\lambda \varvec{\upsilon }_{k}^{\prime }( {\overline{y}})+ (1-\lambda )\varvec{\upsilon }_{k}^{\prime }({\underline{y}}) = \alpha \varvec{\upsilon }_{k}([{\overline{y}},\lambda ; {\underline{y}}]) +\beta \) by b1.

For \(x\in X\), we have \({\overline{\lambda }}_{x}\) and \( {\underline{\lambda }}_{x}\) in \(\varPi _{k}\) by b2 for \(\varvec{\upsilon } _{k}\) such that \(\varvec{\upsilon }_{k}(x)= [\upsilon _{k}([\overline{ y},{\overline{\lambda }}_{x};{\underline{y}}]),\upsilon _{k}([{\overline{y}}, {\underline{\lambda }}_{x};{\underline{y}}])]\). Let \({\overline{\lambda }} _{x}^{\prime }\) and \({\underline{\lambda }}_{x}^{\prime }\) be given by b2 for \(\varvec{\upsilon }_{k}^{\prime }\). Suppose \({\overline{\lambda }} _{x}\ne {\overline{\lambda }}_{x}^{\prime }\), say, \({\overline{\lambda }}_{x}> {\overline{\lambda }}_{x}^{\prime }\). Then, \(\varvec{\upsilon }_{k}([ {\overline{y}},{\overline{\lambda }}_{x};{\underline{y}}])\ge _{I}\varvec{ \upsilon }_{k}(x)\), but \({\overline{\upsilon }}_{k}(x)=\upsilon _{k}([ {\overline{y}},{\overline{\lambda }}_{x};{\underline{y}}])> \upsilon _{k}([ {\overline{y}},{\overline{\lambda }}_{x}^{\prime };{\underline{y}}])\). Hence, \( \varvec{\upsilon }_{k}([{\overline{y}},{\overline{\lambda }}_{x}^{\prime }; {\underline{y}}])\ngeq _{I}\varvec{\upsilon }_{k}(x)\). However, by definition of \({\overline{\lambda }}_{x}^{\prime }\), we have \(\varvec{ \upsilon }_{k}^{\prime }([{\overline{y}},{\overline{\lambda }}_{x}^{\prime }; {\underline{y}}])\ge _{I}\varvec{\upsilon }_{k}^{\prime }(x)\). This is impossible since \(\varvec{\upsilon }_{k}\) and \(\varvec{\upsilon } _{k}^{\prime }\) represent the same \(\trianglerighteq _{k}\). The case \( {\overline{\lambda }}_{x}<{\overline{\lambda }}_{x}^{\prime }\) is parallel. Thus, \({\overline{\lambda }}_{x}^{\prime }={\overline{\lambda }}_{x}\), and similarly, \({\underline{\lambda }}_{x}^{\prime }={\underline{\lambda }}_{x}\). It was shown in the above paragraph that \(\varvec{\upsilon }_{k}(f)= \alpha \varvec{\upsilon }_{k}^{\prime }(f)+\beta \) for any \(f\in B_{k}( {\overline{y}};{\underline{y}})\). This together with \({\overline{\lambda }} _{x}^{\prime }={\overline{\lambda }}_{x}\) and \({\underline{\lambda }} _{x}^{\prime }={\underline{\lambda }}_{x}\) implies \(\varvec{\upsilon } _{k}^{\prime }(x)= [\upsilon _{k}^{\prime }([{\overline{y}},\overline{ \lambda }_{x};{\underline{y}}])\), \(\upsilon _{k}^{\prime }([{\overline{y}}, {\underline{\lambda }}_{x};{\underline{y}}])] = \alpha [\upsilon _{k}([{\overline{y}},{\overline{\lambda }}_{x};{\underline{y}}]),\upsilon _{k}([ {\overline{y}},{\underline{\lambda }}_{x};{\underline{y}}])]+\beta =\alpha [{\overline{\upsilon }}_{k}(x),{\underline{\upsilon }}_{k}(x)]+\beta =\varvec{ \upsilon }_{k}(x)+\beta \). \(\square \)

4 Extension Step E: preferences

Step B is an introspection process to find preferences hidden in the mind of the decision maker. On the other hand, Step E is a logical process to extend base preferences found in Step B; it involves a possible difficulty generated by a new type of probability depths, \(\delta (f(x))\), interacting with a finite cognitive bound \(\rho \). This requires our axiomatic system, Axiom E1 in particular, to take a certain specific form. Keeping this remark in mind, we present our axiomatic system for Step E. Throughout this section, let \(\langle \trianglerighteq _{k}\rangle _{k<\rho +1}\) be a given base preference stream satisfying Axioms B0 to B3.

4.1 Extended preference streams

We consider how \(\trianglerighteq _{k}\) is extended to \( L_{k}(X)\) for \(k<\rho +1\). We formulate this derivation by a kind of mathematical induction from the base preferences \( \trianglerighteq _{k}\) and the previously derived relation \( \succsim _{k-1};\) this process starts at layer 0 to the last layer \(\rho \) (or goes to any layer if \(\rho =\infty \) ). These are formulated by three axioms; the first axiom E0 corresponds to the start, and the other two, E1 and E2, describe the extension process. It is shown by Theorem 4.1 that our formalism involves no logical difficulty. Then, we give one additional axiom to capture the central part determined by E0 to E2.

First, Axiom E0 is to convert base preferences \(\trianglerighteq _{k}\) to \( \succsim _{k}\) for each \(k<\rho +1\), depicted as the vertical arrows in Table 1.

Axiom E0 (Extension)(i): For any \((f,g)\in D_{0}\), \( f\trianglerighteq _{0}g\) if and only if \(f\succsim _{0}g\).

(ii): For any \(k (1\le k<\rho +1)\) and \((f,g)\in D_{k}\), if \( f\trianglerighteq _{k}g\), then \(f\succsim _{k}g\).

This states that \(\langle \trianglerighteq _{k}\rangle _{k<\rho +1}\) is the ultimate source for \(\langle \succsim _{k}\rangle _{k<\rho +1}\) in Step E. For \(k=0\), the base preferences are only the direct source for \( \succsim _{0};\) thus, (i) has both directions. For \(k\ge 1\), in addition to the base preferences, there is another source from the previous \(\succsim _{k-1};\) (ii) requires only one direction. We will show that as long as the domain \(D_{k}\) is concerned, the converse of (ii) holds for our intended preference stream \(\langle \succsim _{k}\rangle _{k<\rho +1}\).

Now, we consider the connection between layers \(k-1\) and k. For \({\widehat{f}}=(f_{1},\ldots ,f_{\ell })\) and \({\widehat{g}}=(g_{1},\ldots ,g_{\ell })\), we write \( {\widehat{f}}\succsim _{k}{\widehat{g}}\) iff \(f_{t}\succsim _{k}g_{t}\) for all \( t=1,\ldots ,\ell \). Recall that a decomposition of \(f\in L_{k}(X)\) is defined by (9)\(\mathbf {.}\) We formulate a derivation of \(\succsim _{k}\) from \(\succsim _{k-1}\) as follows: let \(1\le k<\rho +1\).

Axiom E1 (Derivation from the previous layer): Let \(f\in L_{k}(X)\), \(g\in B_{k}({\overline{y}};{\underline{y}})\), and \({\widehat{f}}\), \({\widehat{g}}\) their decompositions. If \({\widehat{f}} \succsim _{k-1}{\widehat{g}}\) or \({\widehat{g}} \succsim _{k-1}{\widehat{f}}\), then \( f\succsim _{k}g\) or \(g\succsim _{k}f\), respectively.

In layer \(k-1\), each \(f_{t}\) of \({\widehat{f}}=(f_{1},\ldots ,f_{\ell })\) is compared with the corresponding benchmark lottery \(g_{t}\) of \({\widehat{g}} =(g_{1},\ldots ,g_{\ell })\). These preferences are extended to layer k. In Table 1, the horizontal arrows indicate this derivation. A lottery \(f\in L_{k}(X)\) may involve the depth \(\delta (f(x))\) of the probability value f(x) and the depths of \(\delta ({\overline{\lambda }}_{x})\), \(\delta ({\underline{\lambda }} _{x})\) of \({\overline{\lambda }}_{x}\), \({\underline{\lambda }}_{x}\) given in b2, for each pure alternative \(x\in X\) with \(f(x)>0\). In the lottery \(d=\frac{25}{10^{2}}y*\frac{75}{ 10^{2}}{\underline{y}}\) in Example 3.1, the former is \(\delta ( \frac{25}{10^{2}})=2\) and the latter is \(\delta ({\overline{\lambda }} _{x})=\delta ({\underline{\lambda }}_{x})= \delta (\frac{77}{10^{2}})=2\) in case A. On the other hand, benchmark lotteries involve only the former depths since \(\delta (\lambda _{{\overline{y}}})=\delta (\lambda _{ {\underline{y}}})=0\) by (18). In Axiom E1, extension is always made based on the benchmark scale. In fact, Lemma 4.1 does not take this constraint into account, but Theorem 4.1 does.

Preferences extended through the benchmark scale \(B_{k}({\overline{y}}; {\underline{y}})\) in E1 are further extended by transitivity, which is the next axiom. Let \(0\le k<\rho +1\).

Axiom E2 (Transitivity): For any \(f,g,h\in L_{k}(X)\), if \( f\succsim _{k}g\) and \(g\succsim _{k}h\), then \(f\succsim _{k}h\).

We interpret Axioms E1 and E2 as inference rules with Axiom E0 as the bases for \(\succsim _{k}\). This means that the decision maker constructs \(\succsim _{0},\succsim _{1},\ldots \), step by step, using these axioms. This may involve some subtlety; Axioms E0 to E2 may lead to new unintended preferences. We will show Theorem 4.1, implying that this is not the case for the constructed preference relations.

The following are strengthened versions of E0 and E1:

E0\(^{*}:\) for all \(k<\rho +1\) and \((f,g)\in D_{k}\), \( f\trianglerighteq _{k}g\) if and only if \(f\succsim _{k}g;\)

E1\(^{*}:\) E1 holds and if the premise of E1 includes strict preferences, so does the conclusion.

Condition E0\(^{*}\) states that \(\langle \succsim _{k}\rangle _{k<\rho +1} \) is a faithful extension of \(\langle \trianglerighteq _{k}\rangle _{k<\rho +1}\) as long as the pairs of lotteries in \(D_{k}\) are concerned. The other, E1\(^{*}\), is a strengthening of E1, too. Without these, some preferences would be added in the derivation process of \(\succsim _{0},\succsim _{1},\ldots \) Note that E2 (transitivity) preserves strict preferences in the same way as E1\(^{*}\).

To prove that our extended stream \(\langle \succsim _{k}\rangle _{k<\rho +1}\ \)enjoys E0\(^{*}\), E1\(^{*}\), and E2, we first show the following lemma using the EU hypothesis, which is an auxiliary step to Theorem 4.1. A by-product is the consistency of E0\(^{*}\), E1\(^{*}\), and E2. For the lemma, a base utility stream \(\langle \varvec{\upsilon }_{k}\rangle _{k<\rho +1}\) satisfying (19) in Theorem 3.1 is given.

Lemma 4.1

(Direct application of the EU hypothesis). Let \(\langle \succsim _{k}^{*}\rangle _{k<\rho +1}\) be defined as follows: for all \(k<\rho +1\),

Then, \(\langle \succsim _{k}^{*}\rangle _{k<\rho +1}\) satisfying Axioms E0\(^{*}\), E1\(^{*}\), and E2.

The right-hand side is given by comparisons of the expected values of the vector-valued utility function \(\varvec{\upsilon }_{k}\). The point of the lemma is not the representation of expected utility values; instead, it is the consistency of E0\(^{*}\), E1\(^{*}\), and E2, which will be used in Theorem 4.1. The consistency of Axioms E0, E1, and E2 is straightforward since E0 takes only preferences given by B0 to B3, and E1 and E2 introduce new preferences from them. On the other hand, E0\(^{*}\ \text { and E1}^{*}\) may generate strict preferences, including negations. Hence, the consistency implied by Lemma 4.1 is a basis of our development.

It will be argued in Sect. 7 that when \(\rho =\infty \), the limit preference relation \(\succsim _{\infty }^{*}\) is determined E0, E1, and E2 under some additional condition on \(L_{\infty }(X)=\cup _{k<\infty }L_{k}(X)\).

Now, we prepare a few concepts for Theorem 4.1. Let \(\langle \succsim _{k}\rangle _{k<\rho +1}\) be a stream satisfying E0 to E2. We say that \(\langle \succsim _{k}\rangle _{k<\rho +1}\) is the smallest stream iff for any \(\langle \succsim _{k}^{\prime }\rangle _{k<\rho +1}\) satisfying E0 to E2, and \(f,g\in L_{k}(X)\), \(k<\rho +1\),

Also, the set of preferences over \(L_{k}(X)\) derived from \(\succsim _{k-1}\) by E1 is denoted by \((\succsim _{k-1})^{\text {E1}}\), and the set of transitive closure of \(F\subseteq L_{k}(X)^{2}\) is denoted by \(F^{tr}\), i.e., \((f,g)\in F^{tr}\) if and only if there is a finite sequence \(f=h_{0}\), \(h_{1},\ldots ,h_{m}=g\) such that \((h_{t},h_{t+1})\in F\) for \(t=0,\ldots ,m-1\).

Using these concepts, we construct the smallest stream \(\langle \succsim _{k}\rangle _{k<\rho +1}\), each of which is a binary relation on \(L_{k}(X)\), and show, using Lemma 4.1, that it satisfies E0\(^{*}\) and E1\(^{*}\) as well as E2.

Theorem 4.1

(Smallest extended stream). The stream \( \langle \succsim _{k}\rangle _{k<\rho +1}\) of the sets generated by the following induction:

is the smallest stream satisfying E0 to E2. This \(\langle \succsim _{k}\rangle _{k<\rho +1}\) satisfies E0\(^{*}\) and E1\(^{*}\).

The construction of \(\langle \succsim _{k}\rangle _{k<\rho +1}\) starts with \( \succsim _{0} = (\trianglerighteq _{0})^{tr}\), which is well defined since \(\trianglerighteq _{0}\) is a binary relation in \(D_{0}\). Then, provided that \(\succsim _{k-1}\) and \(\trianglerighteq _{k}\) are already given, \(\succsim _{k}\) is defined to be \([(\succsim _{k-1})^{\text {E1}}\cup (\trianglerighteq _{k})]^{tr}\). This is a subset of \(L_{k}(X)^{2};\) thus, it is a binary relation. Thus, the stream \(\langle \succsim _{k}\rangle _{k<\rho +1}\) is unique and is the smallest among the streams satisfying E0 to E2. Furthermore, it satisfies E0\(^{*}\) and E1\(^{*}\), where Lemma 4.1 is used. This assertion guarantees that the constructed stream \(\langle \succsim _{k}\rangle _{k<\rho +1}\) is a faithful extension of \(\langle \trianglerighteq _{k}\rangle _{k<\rho +1}\).Footnote 8

The stream \(\langle \succsim _{k}\rangle _{k<\rho +1}\) constructed in Theorem 4.1 differs from the stream \(\langle \succsim _{k}^{*}\rangle _{k<\rho +1}\) given in Lemma 4.1 in that the two types of depths, \(\delta ({\overline{\lambda }}_{x})\), \(\delta ({\underline{\lambda }}_{x})\) , and \(\delta (f(x))\) are taken into account in the former, but for the latter, (21) defines the expected utility, ignoring them. These depths are interactive with cognitive bound \(\rho \). When \(\rho =\infty \), \(\succsim _{\infty }=\cup _{k<\rho +1}\succsim _{k}\) and \(\succsim _{\infty }^{*}=\cup _{k<\rho +1}\succsim _{k}^{*}\) coincide under some additional restriction on \(L_{\infty }(X)\), which will be discussed in Sect. 7.1. In general, \(\langle \succsim _{k}\rangle _{k<\rho +1}\) and \(\langle \succsim _{k}^{*}\rangle _{k<\rho +1}\) differ and coincide only in partial domains, which will be discussed in Sect. 6.

In the constructing process of \(\langle \succsim _{k}\rangle _{k<\rho +1}\), E0 to E2 are all extension axioms, and the resulting stream of (23) is uniquely constructed, while there are multiple preference streams satisfying E0 to E2. It would be easier for various purposes to extract the essence of (23) by formulating one axiom. It is formulated as Axiom E3, which requires that a preference \(f\succsim _{k}g\) be based on comparisons with the benchmark scale \(B_{k}({\overline{y}};{\underline{y}})\) with either \(\trianglerighteq _{k}\) or \(\succsim _{k-1}\), that is, it eliminates preferences from other possible sources. Note that h in E3 may be the same as f or g.

Axiom E3.Let \(k<\rho +1\) and \(f,g\in L_{k}(X)\) with \( f\succsim _{k}g\). Then \(f\succsim _{k}h \succsim _{k}g\) for some \(h\in B_{k}({\overline{y}};{\underline{y}})\). When \(k\ge 1\), for the pair (f, h), \(f\trianglerighteq _{k}h\) holds or f, h have decompositions \({\widehat{f}},{\widehat{h}}\) with \({\widehat{f}}\succsim _{k-1}{\widehat{h}}\); the same holds for the pair (h, g).

The stream \(\langle \succsim _{k}\rangle _{k<\rho +1}\) given by (23) is characterized by adding E3 to E0 to E2.

Theorem 4.2

(Unique determination by E0 to E3). Any extended stream satisfying E0 to E3 is the same as the preference stream \( \langle \succsim _{k}\rangle _{k<\rho +1}\) given by Theorem 4.1.

Throughout the following, the stream given by (23) is denoted by \( \langle \succsim _{k}\rangle _{k<\rho +1}\). Other streams may have some additional superscripts such as \(^{\prime }\), \(*\).

Lemma 4.2 will be used in the subsequent analyses; (1) is the horizontal arrows in Table 1; and (2) means that \( \succsim _{k}\) is bounded in \(L_{k}(X)\) by the upper and lower benchmarks \({\overline{y}}\) and \({\underline{y}}\).

Lemma 4.2

Let \(\langle \succsim _{k}\rangle _{k<\rho +1}\) satisfies E0 to E3, and \(1\le k<\rho +1\).

- (1):

-

(Preservation of preferences): For any \(f,g\in L_{k-1}(X)\), \( f\succsim _{k-1}g\) implies \(f\succsim _{k}g\).

- (2):

-

\({\overline{y}}\succsim _{k}f\succsim _{k}{\underline{y}}\) for any \(f\in L_{k}(X)\).

The EU hypothesis is included in Axiom B1 and condition b1 along the benchmark scale \(B_{k}({\overline{y}};{\underline{y}})\), and E1 is a weak form of Axiom NM2 (independence). It follows from Lemma 4.1 and Theorem 4.1 that there are possibly multiple preference streams satisfying E0 to E2, among which some satisfy the EU representation in (21) but not in general. This is caused by two types of depths included in a lottery. For example, lottery \(d=\frac{25}{10^{2}}y*\frac{75}{10^{2}}{\underline{y}}\) involves the depths of coefficient \(\frac{25}{10^{2}}\) and of evaluation \(\lambda _{y}\). This is the reason for the EU hypothesis to hold only for some partial domain, which will be explicitly studied in Sect. 6.

4.2 Proofs

Proof of Lemma 4.1

We show that \(\langle \succsim _{k}^{*}\rangle _{k<\rho +1}\) given by (21) satisfies E0\(^{*}\), E1\(^{*}\), and E2. By Theorem 3.2, we can assume that \( \upsilon _{k}({\overline{y}})=1\) and \(\upsilon _{k}({\underline{y}})=0\).

Since \(E_{f}({\underline{\upsilon }}_{k})=\lambda \) if \( f=[{\overline{y}},\lambda ;{\underline{y}}]\in B_{k}({\overline{y}};{\underline{y}})\) and \(E_{x}(\overline{\upsilon }_{k})={\overline{\upsilon }}_{k}(x)\) if \(f=x\in X\). Hence, by (19) and b2, \(f\trianglerighteq _{k}x\) if and only if \(\lambda \ge {\overline{\upsilon }}_{k}(x)\) if and only if \(E_{f}({\underline{\upsilon }}_{k})\ge E_{x}(\overline{\upsilon }_{k})\). The other cases are symmetric. Thus, E0\(^{*}\) holds for any \((f,g)\in D_{k}\).

It remains to show that \(\langle \succsim _{k}^{*}\rangle _{k<\rho +1}\) satisfies E1\(^{*}\) and E2. Since (21) gives the interval order over the set \(\{[E_{f}({\overline{\upsilon }} _{k}),E_{f}({\underline{\upsilon }}_{k})]:f\in L_{k}(X)\}\), E2 holds. We show E1\(^{*}\). Let \(f\in L_{k}(X),g\in B_{k}[{\overline{y}};{\underline{y}}]\) and their decompositions \({\widehat{f}}\) and \({\widehat{g}}\) with \({\widehat{f}} \succsim _{k-1}^{*}{\widehat{g}}\). By (21), \(E_{f_{t}}(\underline{ \upsilon }_{k})\ge E_{g_{t}}({\overline{\upsilon }}_{k})\) for all \( t=1,\ldots ,\ell \). Then, \(E_{f}({\underline{\upsilon }}_{k})=E_{{\widehat{e}}*{\widehat{f}}}({\underline{\upsilon }}_{k})=\sum _{t=1}^{\ell }\tfrac{1}{\ell } E_{f_{t}}({\underline{\upsilon }}_{k})\ge \sum _{t=1}^{\ell }\tfrac{1}{\ell } E_{g_{t}}({\overline{\upsilon }}_{k})=E_{{\widehat{e}}*{\widehat{g}}}( {\overline{\upsilon }}_{k})=E_{g}({\overline{\upsilon }}_{k})\). If strict preferences are included in the decompositions, the conclusion is strict; thus, we have E1\(^{*}\). \(\square \)

Proof of Theorem 4.1

This has the three assertions: ( a) \(\langle \succsim _{k}\rangle _{k<\rho +1}\) is a sequence of a binary relations satisfying Axioms E0 to E2; (b) it is the smallest in the sense of (22) among the streams \(\langle \succsim _{k}^{\prime }\rangle _{k<\rho +1}\) satisfying E0 to E2; and (c) E0\(^{*}\), E1\( ^{*}\) hold for \(\langle \succsim _{k}\rangle _{k<\rho +1}\).

(a): E2 follows directly from (23). Consider E0. (ii) follows from (23). We show that \(\succsim _{0} =(\trianglerighteq _{0})^{tr} \) satisfies that for any \((f,g)\in D_{0}\), \(f\succsim _{0}g\) implies \(f\trianglerighteq _{0}g\). Since \(\succsim _{0} =(\trianglerighteq _{0})^{tr}\), there is a sequence \(f=h_{0}\trianglerighteq _{0}\ldots \trianglerighteq _{0}h_{m}=g\). If \(h_{t}\in X-B_{0}({\overline{y}}; {\underline{y}})\), then \(h_{t-1}\in B_{0}({\overline{y}};{\underline{y}})\) and \( h_{t+1}\in B_{0}({\overline{y}};{\underline{y}})\). By B2, \(\lambda _{t-1}\ge \lambda _{t+1}\), where \(h_{t-1}=[{\overline{y}};\lambda _{t-1},{\underline{y}}]\) and \(h_{t+1}=[{\overline{y}};\lambda _{t+1},{\underline{y}}]\). If \( h_{t},h_{t+1}\in B_{0}({\overline{y}};{\underline{y}})\), then \(\lambda _{t}\ge \lambda _{t+1}\). Hence, we can shorten the sequence to \(f=h_{0} \trianglerighteq _{0}h_{m}=g\). Thus, \(f\trianglerighteq _{0}g\).

Consider E1. Suppose that \(f\in L_{k}(X)\) and \(g\in B_{k}[{\overline{y}};{\underline{y}}]\) have decompositions \({\widehat{f}},{\widehat{g}}\) with \({\widehat{f}}\succsim _{k-1}{\widehat{g}}\). By (23), we have \( f=e*{\widehat{f}}\succsim _{k}e*{\widehat{g}}=g\). This causes no difficulty, even if \(f\in B_{k}(X)\) and \(g\in B_{k}[{\overline{y}};{\underline{y}}]\). The symmetric case \({\widehat{g}}\succsim _{k-1}{\widehat{f}}\) is similar.

(b): We prove by induction on k that \(\langle \succsim _{k}\rangle _{k<\rho +1}\) satisfies (22) for any \(\langle \succsim _{k}^{\prime }\rangle _{k<\rho +1}\) satisfying E0 to E2. When \(k=0\), we have \(\succsim _{0} = (\trianglerighteq _{0})^{tr}\) by (23). Let \(f\succsim _{0}g\), i.e., \(f (\trianglerighteq _{0})^{tr}g\), which implies that there is a sequence \(f=h_{0}\trianglerighteq _{0}h_{1}\trianglerighteq _{0}\ldots \trianglerighteq _{0}h_{m}=g\). By E0.(i), we have \(f=h_{0}\succsim _{0}^{\prime }h_{1}\succsim _{0}^{\prime }\ldots \succsim _{0}^{\prime }h_{m}=g\). By E2 for \(\succsim _{0}^{\prime }\), we have \(f\succsim _{0}^{\prime }g\).

Now, we assume that (22) holds for \(k-1\). Let \( f\succsim _{k}g\). By (23), there is a sequence \(f=h_{0}\succsim _{k}\ldots \succsim _{k}h_{m}=g\) such that each \(h_{t}\succsim _{k}h_{t+1}\) is a consequence of E1 or \(h_{t}\succsim _{k}h_{t+1}\) is \(h_{t}\trianglerighteq _{k}h_{t+1}\). In the first case, there are decompositions \({\widehat{h}}_{t}, {\widehat{h}}_{t+1}\) of \(h_{t},h_{t+1}\) such that \({\widehat{h}}_{t}\succsim _{k-1}{\widehat{h}}_{t+1}\). By the induction hypothesis, we have \({\widehat{h}} _{t}\succsim _{k-1}^{\prime }{\widehat{h}}_{t+1}\). Thus, \(h_{t}\succsim _{k}^{\prime }h_{t+1}\) by E1 for \(\succsim _{k}^{\prime }\). In the second case, \(h_{t}\trianglerighteq _{k}h_{t+1}\) implies \(h_{t}\succsim _{k}^{\prime }h_{t+1}\) by E0.(ii) for \(\succsim _{k}^{\prime }\). Hence, \( f\succsim _{k}^{\prime }g\) by E2 for \(\succsim _{k}^{\prime }\).

(c): Take \(\langle \succsim _{k}^{*}\rangle _{k<\rho +1}\) given by Lemma 4.1. Since \(\langle \succsim _{k}^{*}\rangle _{k<\rho +1}\) satisfies E0 to E2, it holds by (b) that for all \( k<\rho +1\) and \(f,g\in L_{k}(X)\),

E0\(^{*}\): Since E0\(^{*}\) holds for \(\succsim _{k}^{*}\) by Lemma 4.1, for any \((f,g)\in D_{k}\), \(f\succsim _{k}^{*}g\) implies \(f\trianglerighteq _{k}g\). Thus, if \(f\succsim _{k}g\), then \(f\succsim _{k}^{*}g\) by (24), which implies \(f\trianglerighteq _{k}g\). For the converse, \(f\trianglerighteq _{k}g\) implies \(f\succsim _{k}g\) by (23).

\(E1^{*}\): First, we prove by induction on k that for all \(k<\rho +1\) and \(f,g\in L_{k}(X)\),

We make the induction hypothesis that (25) holds for \(k-1\). Now, let \(f,g\in L_{k}(X)\) with \(f\succ _{k}g\). By (23), there are \( h_{0}=f,h_{1},\ldots ,h_{m}=g\) in \(L_{k}(X)\ \)such that \((h_{l},h_{l+1})\in (\succsim _{k-1})^{\text {E1}}\) or \((h_{l},h_{l+1})\in (\trianglerighteq _{k}) \) for each \(l=0,\ldots ,m-1\).

Consider case \((i): (h_{l},h_{l+1})\in (\trianglerighteq _{k})\). Then by E0\(^{*}\) for \(\langle \succsim _{k}^{*}\rangle _{k<\rho +1}\), it holds that \(h_{l}\succsim _{k}^{*}h_{l+1}\), and also, if the premise is strict, it follows from E0\(^{*}\) for \(\langle \succsim _{k}^{*}\rangle _{k<\rho +1}\) that \(h_{l}\succ _{k}^{*}h_{l+1}\). Now, consider case \((ii): (h_{l},h_{l+1})\in (\succsim _{k-1})^{\text {E1}}\). Let \({\widehat{h}}_{l},{\widehat{h}}_{k+1}\) be decompositions of \(h_{l},h_{l+1}\) so that \({\widehat{h}}_{l}\succsim _{k-1} {\widehat{h}}_{l+1}\) with/without strict preferences for some components. Hence, by (24) and (25) (the induction hypothesis), the same holds for \(\succsim _{k-1}^{*}\). By E1\(^{*}\) for \(\langle \succsim _{k}^{*}\rangle _{k<\rho +1}\), we have \(h_{l}\succsim _{k}^{*}h_{l+1} \) and \(h_{l}\succ _{k}^{*}h_{l+1}\) if strict preferences hold for some components. At least one of \(l=0,\ldots ,m-1\), we have strict preferences for \((h_{l},h_{l+1})\in (\trianglerighteq _{k})\) or \({\widehat{h}} _{l}\succsim _{k-1}{\widehat{h}}_{l+1}\), because of \(f\succ _{k}g\). This and the above assertions in (i) and (ii) imply \(f\succ _{k}^{*}g\).

Finally, we verify E1\(^{*}\) for \(\langle \succsim _{k}\rangle _{k<\rho +1}\). Let \(f\in L_{k}(X)\) and \(g\in B_{k}({\overline{y}}; {\underline{y}})\), and let their decompositions be \({\widehat{f}},{\widehat{g}}\) with \({\widehat{f}}\succsim _{k-1}{\widehat{g}}\). Suppose that one of these preferences is strict. By E1, we have \(f\succsim _{k}g\). It suffices to show not \(g\succsim _{k}f\). However, \({\widehat{f}}\succsim _{k-1}{\widehat{g}}\) implies \({\widehat{f}}\succsim _{k-1}^{*}{\widehat{g}}\) by (24), and some components of the latter hold strictly by (25). By E1\(^{*}\) for \(\langle \succsim _{k}^{*}\rangle _{k<\rho +1}\), we have \(f\succ _{k}^{*}g\), implying not \(g\succsim _{k}^{*}f\). By the contrapositive of (24), we have not \(g\succsim _{k}f\). \(\square \)

Proof of Theorem 4.2

Let \(\langle \succsim _{k}^{*}\rangle _{k<\rho +1}\) be any extended stream satisfying E0 to E3. We prove by induction on \(k<\rho +1\) that for any \(f,g\in L_{k}(X)\),

Since \(\langle \succsim _{k}\rangle _{k<\rho +1}\) is the smallest stream satisfying E0 to E2 by Theorem 4.1, the if part holds for any \(k<\rho +1\).

Consider the only-if part. Let \(k=0\). Let \( f,g\in L_{0}(X)\) with \(f\succsim _{0}^{*}g\). Then, by E3 for \(\succsim _{0}^{*}\), we have an \(h\in B_{0}({\overline{y}};{\underline{y}})\) with \( f\succsim _{0}^{*}h\succsim _{0}^{*}g\). Thus, \(f\trianglerighteq _{0}h\trianglerighteq _{0}g\) by E0 for \(\succsim _{0}^{*}\). Hence, \(f (\trianglerighteq _{0})^{tr}g\), i.e., \(f\succsim _{0}g\) by (23). Now, we make the induction hypothesis that the only-if part holds for \( k-1. \) Let \(f\succsim _{k}^{*}g\). Then, by E3, we have \( h_{0}:=f\succsim _{k}^{*}h_{1}\succsim _{k}^{*}h_{2}:=g\) for some \( h_{1}\in B_{k}({\overline{y}};{\underline{y}})\). Let \(h_{0}=x\in X\). If \( h_{0}\trianglerighteq _{k}h_{1}\), then \(h_{0}\succsim _{k}h_{1}\) by E0\( ^{*}\) for \(\succsim _{k} \). Suppose \({\widehat{h}}_{0}\succsim _{k-1}^{*}{\widehat{h}}_{1}\) for some decompositions \({\widehat{h}}_{0}\), \( {\widehat{h}}_{1}\) of \(h_{0}\), \(h_{1}\). By the induction hypothesis, we have \( {\widehat{h}}_{0}\succsim _{k-1}{\widehat{h}}_{1}\). Thus, by E1 for \(\succsim _{k}\), we have \(h_{0}\succsim _{k}h_{1}\). In the above two cases, we have \( h_{0}\succsim _{k}h_{1}\). By the same argument, we have \(h_{1}\succsim _{k}h_{2}\). Thus, by E2 for \(\succsim _{k}\), we have \(f=h_{0}\succsim _{k}h_{2}=g\), i.e., \(f\succsim _{k}g\). \(\square \)

Proof of Lemma 4.2