Abstract

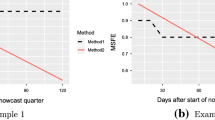

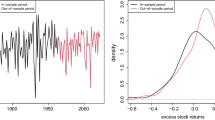

Empirical studies in the forecast combination literature have shown that it is notoriously difficult to improve upon the simple average despite the availability of optimal combination weights. In particular, combination approaches based on historical performance do not select forecasters that improve upon the simple average going forward. This paper shows that this is due to the high correlation among forecasters, which causes some individuals to have a lower root-mean-squared error (RMSE) than the simple average purely by chance. We introduce a new nonparametric approach that eliminates both poor performers and forecasters who perform well by chance. This leaves a subset of forecasters with better performance in subsequent periods. The average of this group improves upon the simple average in the Survey of Professional Forecasters (SPF), particularly for bond yields, where some forecasters may be more likely to have superior forecasting ability.

Notes

Note that this paper focuses on point forecasts. Jore et al. (2010) and Kascha and Ravazzolo (2010) show that estimating time-varying weights provides gains over equal weights for density forecasts. Kenny et al. (2015) evaluate macroeconomic density forecasts and show that many forecasters could systematically improve their forecast performance. Lahiri et al. (2015) show that density forecasts can be used to create a subset of forecasts that improves over the simple average. Lahiri and Yang (2015) show that nonlinear combination schemes perform well for binary outcomes.

Our analysis focuses on the mean of the forecasters, which is typically used in the forecast combination literature. For the SPF, the median is often used instead. Our results are robust to using the median and are available from the authors upon request.

The higher minimum number of forecasts is necessary here so that forecasters overlap more over the entire sample.

This result would not change if forecasters had different variances but were still independent of each other.

We do not assume different regimes or more complicated environments in this paper. Zhao (2015) conducts simulations for a number of different environments for a range of approaches.

We found that the median pairwise correlation and the median number of periods forecasters contributed to the survey correspond well to the share of individual forecasters beating the simple average in the empirical distribution.
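The link between pairwise correlation and the share of forecasters beating the simple average can be illustrated with a small simulation. The sketch below is not the paper's procedure: it assumes equally skilled forecasters whose errors share a common shock, a balanced panel without missing values, and hypothetical parameter names (`rho`, `n_forecasters`, `n_periods`, `n_sims`). Since nobody has superior ability in this setup, any forecaster with a lower RMSE than the simple average gets there by chance.

```python
import numpy as np

def share_beating_average(rho, n_forecasters=30, n_periods=40, n_sims=500, seed=0):
    """Average share of equally skilled forecasters whose RMSE is below that of the simple average."""
    rng = np.random.default_rng(seed)
    shares = []
    for _ in range(n_sims):
        # Error = common shock + idiosyncratic noise, so every pair has correlation rho
        # and every forecaster has unit error variance (assumed, not taken from the paper).
        common = rng.standard_normal((n_periods, 1))
        idio = rng.standard_normal((n_periods, n_forecasters))
        errors = np.sqrt(rho) * common + np.sqrt(1.0 - rho) * idio
        rmse_individual = np.sqrt((errors ** 2).mean(axis=0))
        # The error of the simple average is the cross-sectional mean of the errors.
        rmse_average = np.sqrt((errors.mean(axis=1) ** 2).mean())
        shares.append((rmse_individual < rmse_average).mean())
    return float(np.mean(shares))

for rho in (0.0, 0.5, 0.9, 0.99):
    share = share_beating_average(rho)
    print(f"rho={rho:.2f}: share beating the simple average = {share:.3f}")
```

Under these assumptions the share should be close to zero for uncorrelated forecasters and rise as `rho` approaches one, because averaging removes little of the shared error.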

While our approach might lead to a slightly higher share of truly better forecasters not being detected than other methods in complete samples, this is outweighed by being less affected by missing values and the lower false-positive rate at high correlations.

This approach is similar to the method used by Gamber et al. (2014). Their aim is to compare a subset of the best forecasters in the SPF to the Federal Reserve forecasts, which is very different from our objective. To obtain the subset, they use a static RMSE percentile performance threshold in the first stage instead of the rank-based performance threshold relative to the mean that we use. The second selection stage is the same as ours. While the first stage of their approach guarantees a subset in every period, unlike ours, it does not require forecasters in the subset to perform well relative to the mean. Assuming that performance remains the same before and after selection into the subset, their approach might not perform better than the mean for certain distributions of forecasters.
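The difference between the two first-stage rules can be sketched in a few lines. This is a simplified illustration only: `select_by_percentile` stands in for a static RMSE percentile threshold, and `select_relative_to_mean` reduces the mean-relative idea to a plain RMSE comparison rather than reproducing the paper's rank-based threshold. The function names, the cutoff `q`, and the RMSE values are all hypothetical.

```python
import numpy as np

def select_by_percentile(rmse, q=0.25):
    """Static percentile rule: keep forecasters at or below the q-quantile of RMSE (never empty)."""
    rmse = np.asarray(rmse, dtype=float)
    return rmse <= np.quantile(rmse, q)

def select_relative_to_mean(rmse, rmse_of_mean):
    """Mean-relative rule (simplified): keep forecasters with lower RMSE than the simple average."""
    return np.asarray(rmse, dtype=float) < rmse_of_mean

# Hypothetical RMSEs: every individual is worse than the simple average.
rmse = np.array([1.10, 1.05, 1.20, 1.15, 1.30])
rmse_of_mean = 1.00

percentile_subset = select_by_percentile(rmse)
mean_relative_subset = select_relative_to_mean(rmse, rmse_of_mean)
print("percentile rule keeps:   ", np.flatnonzero(percentile_subset))
print("mean-relative rule keeps:", np.flatnonzero(mean_relative_subset))
```

In this made-up case the percentile rule still returns a subset even though none of its members beats the simple average, while the mean-relative rule returns an empty subset.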

As an alternative to this nonparametric threshold approach, one could also use an estimated approach like impulse indicator saturation as described in Ericsson and Reisman (2012), but this would require more modifications to the dataset due to the large gaps.

We will evaluate this in the next section.

The three approaches tend to perform worse on an expanding window basis.

As a crosscheck, we also compared MSE directly, which led to a worse performance of the subsets of the alternative approaches.

Note that while both the subset and the simple average are biased for 10-year bond yields, the subset is clearly less biased.

While it might be preferable to use the subset of the previous period instead of the simple average, that subset might also not contain any forecast for the current period, due to (re-)entry and exit of forecasters.
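The fallback described in this note can be sketched as follows. This is a minimal illustration under assumed data structures, not the authors' code: `current_forecasts` is a hypothetical mapping from forecaster id to the current-period forecast (NaN or absent if the forecaster did not respond), and `subset_ids` holds the forecasters selected from past performance.

```python
import numpy as np

def combined_forecast(current_forecasts, subset_ids):
    """Average the selected subset's forecasts; use the simple average if none are available."""
    # Keep only forecasters who actually submitted a forecast this period.
    available = {k: v for k, v in current_forecasts.items() if not np.isnan(v)}
    if not available:
        raise ValueError("no forecasts submitted for this period")
    chosen = [available[k] for k in subset_ids if k in available]
    if chosen:
        return float(np.mean(chosen))
    # Subset is empty or none of its members responded: fall back to the simple average.
    return float(np.mean(list(available.values())))

forecasts = {"a": 2.0, "b": 4.0, "c": float("nan")}
print(combined_forecast(forecasts, ["a"]))  # subset member "a" responded
print(combined_forecast(forecasts, ["c"]))  # "c" is missing, so the simple average is used
```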

Note that this would also reduce the lag between forecasts being made and evaluated for longer horizons.

References

Batchelor R (1990) All forecasters are equal. J Bus Econ Stat 8(1):143–144

Bates J, Granger C (1969) The combination of forecasts. J Oper Res Soc 20(4):451–468

Blix M, Wadefjord J, Wienecke U, Adahl M (2001) How good is the forecasting performance of major institutions? Sveriges Riksbank Econ Rev 3:38–68

Bürgi C, Stekler H (2015) Forecast evaluation with re-entry of forecasters. Unpublished

Capistrán C, Timmermann A (2009) Forecast combination with entry and exit of experts. J Bus Econ Stat 27(4):428–440

Clark TE, McCracken MW (2010) Averaging forecasts from VARs with uncertain instabilities. J Appl Econ 25(1):5–29

Clemen RT (1989) Combining forecasts: a review and annotated bibliography. Int J Forecast 5(4):559–583

Conflitti C, De Mol C, Giannone D (2015) Optimal combination of survey forecasts. Int J Forecast 31(4):1096–1103

D’Agostino A, McQuinn K, Whelan K (2012) Are some forecasters really better than others? J Money Credit Bank 44(4):715–732

Davies A, Lahiri K (1995) A new framework for analyzing survey forecasts using three-dimensional panel data. J Econ 68(1):205–227

Diebold FX, Mariano RS (1995) Comparing predictive accuracy. J Bus Econ Stat 13(3):253–263

Elliott G (2011) Forecast combination when outcomes are difficult to predict. UCSD working paper available at: http://econweb.ucsd.edu/~gelliott/fcforecastability.pdf

Ericsson N, Reisman E (2012) Evaluating a global vector autoregression for forecasting. Int Adv Econ Res 18(3):247–258

Gamber EN, Smith JK, McNamara DC (2014) Where is the Fed in the distribution of forecasters? J Policy Model 36(2):296–312

Genre V, Kenny G, Meyler A, Timmermann A (2013) Combining expert forecasts: can anything beat the simple average? Int J Forecast 29(1):108–121

Harvey D, Leybourne S, Newbold P (1997) Testing the equality of prediction mean squared errors. Int J Forecast 13(2):281–291

Jore AS, Mitchell J, Vahey SP (2010) Combining forecast densities from VARs with uncertain instabilities. J Appl Econ 25(4):621–634

Kascha C, Ravazzolo F (2010) Combining inflation density forecasts. J Forecast 29(1–2):231–250

Kenny G, Kostka T, Masera F (2015) Density characteristics and density forecast performance: a panel analysis. Empir Econ 48(3):1203–1231

Lahiri K, Peng H, Zhao Y (2014) On-line learning and forecast combination in unbalanced panels. Econ Rev (Forthcoming)

Lahiri K, Peng H, Zhao Y (2015) Testing the value of probability forecasts for calibrated combining. Int J Forecast 31(1):113–129

Lahiri K, Yang L (2015) A non-linear forecast combination procedure for binary outcomes. Nonlinear Dyn Econ (5175) (Forthcoming)

Poncela P, Rodríguez J, Sánchez-Mangas R, Senra E (2011) Forecast combination through dimension reduction techniques. Int J Forecast 27(2):224–237

Stekler HO (1987) Who forecasts better? J Bus Econ Stat 5(1):155–158

Timmermann A (2006) Chapter 4 forecast combinations. In: Elliott G, Timmermann A (eds) Handbook of economic forecasting, vol 1. Elsevier, London, pp 135–196

Zhao Y (2015) Robustness of forecast combination in unstable environment: a Monte Carlo study of advanced algorithms. Research program on forecasting working paper no. 2015-005

Additional information

We would like to thank the editor and an anonymous referee for helpful suggestions. We also thank Neil Ericsson, Tatevik Sekhposyan, Herman Stekler, Benjamin Williams, and Herbert Zhao for their valuable comments and support, as well as participants in the Federal Forecasters Conference, the Georgetown Center for Economic Research (GCER) conference, the GWU SAGE seminar series, the 16th IWH-CIREQ Macroeconometric Workshop, and the Towson University Economics Seminar.

About this article

Cite this article

Bürgi, C., Sinclair, T.M. A nonparametric approach to identifying a subset of forecasters that outperforms the simple average. Empir Econ 53, 101–115 (2017). https://doi.org/10.1007/s00181-016-1152-y

DOI: https://doi.org/10.1007/s00181-016-1152-y

Keywords

- Forecast combination

- Forecast evaluation

- Multiple model comparisons

- Real-time data

- Survey of professional forecasters