Abstract

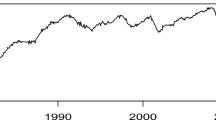

Claims about the distributions of time series are often unproven assertions rather than substantiated conclusions, for lack of hypothesis testing tools. In this work, Kolmogorov–Smirnov type simultaneous confidence bands (SCBs) are constructed based on simple random samples (SRSs) drawn from realizations of time series, together with smooth SCBs that use the kernel distribution estimator (KDE) instead of the empirical cumulative distribution function of the SRS. All SCBs are shown to enjoy the same limiting distribution as the standard Kolmogorov–Smirnov statistic for an i.i.d. sample, which is validated in simulation experiments on various time series. Computing these SCBs for the standardized S&P 500 daily returns data leads to some rather unexpected findings: Student’s t-distributions with degrees of freedom no less than 3 and the normal distribution are all acceptable versions of the standardized daily returns series’ distribution, with proper rescaling. These findings challenge the long-held belief that the distribution of daily financial returns is fat-tailed and leptokurtic.

References

Billingsley P (1999) Convergence of probability measures, 2nd edn. Wiley, New York

Brockwell PJ, Davis RA (1991) Time series: theory and methods, 2nd edn. Springer, New York

Cai L, Yang L (2015) A smooth simultaneous confidence band for conditional variance function. TEST 24:632–655

Cao G, Wang L, Li Y, Yang L (2016) Oracle-efficient confidence envelopes for covariance functions in dense functional data. Stat Sin 26:359–383

Cao G, Yang L, Todem D (2012) Simultaneous inference for the mean function based on dense functional data. J Nonparametr Stat 24:359–377

Cardot H, Degras D, Josserand E (2013) Confidence bands for Horvitz–Thompson estimators using sampled noisy functional data. Bernoulli 19:2067–2097

Cardot H, Josserand E (2011) Horvitz–Thompson estimators for functional data: asymptotic confidence bands and optimal allocation for stratified sampling. Biometrika 98:107–118

Cheng M, Peng L (2002) Regression modeling for nonparametric estimation of distribution and quantile functions. Stat Sin 12:1043–1060

Deo CM (1973) A note on empirical processes of strong-mixing sequences. Ann Probab 1:870–875

Degras D (2011) Simultaneous confidence bands for nonparametric regression with functional data. Stat Sin 21:1735–1765

Falk M (1985) Asymptotic normality of the kernel quantile estimator. Ann Stat 13:428–433

Francq C, Zakoian J (2010) GARCH models: structure, statistical inference and financial applications. Wiley, Paris

Gu L, Wang L, Härdle W, Yang L (2014) A simultaneous confidence corridor for varying coefficient regression with sparse functional data. TEST 23:806–843

Gu L, Yang L (2015) Oracally efficient estimation for single-index link function with simultaneous band. Electron J Stat 9:1540–1561

Kong J, Gu L, Yang L (2018) Prediction interval for autoregressive time series via oracally efficient estimation of multi-step ahead innovation distribution function. J Time Ser Anal 39:690–708

Liu R, Yang L (2008) Kernel estimation of multivariate cumulative distribution function. J Nonparametr Stat 20:661–677

Ma S, Yang L, Carroll R (2012) A simultaneous confidence band for sparse longitudinal regression. Stat Sin 22:95–122

Reiss R (1981) Nonparametric estimation of smooth distribution functions. Scand J Stat 8:116–119

Rosén B (1964) Limit theorems for sampling from finite populations. Ark Mat 5:383–424

Shao Q, Yang L (2017) Oracally efficient estimation and consistent model selection for auto-regressive moving average time series with trend. J R Stat Soc Ser B Stat Methodol 79:507–524

Song Q, Liu R, Shao Q, Yang L (2014) A simultaneous confidence band for dense longitudinal regression. Commun Stat A-Theory Methods 43:5195–5210

Song Q, Yang L (2009) Spline confidence bands for variance functions. J Nonparametr Stat 21:589–609

Wang J, Cheng F, Yang L (2013) Smooth simultaneous confidence bands for cumulative distribution functions. J Nonparametr Stat 25:395–407

Wang J, Liu R, Cheng F, Yang L (2014) Oracally efficient estimation of autoregressive error distribution with simultaneous confidence band. Ann Stat 42:654–668

Wang J, Wang S, Yang L (2016) Simultaneous confidence bands for the distribution function of a finite population and its superpopulation. TEST 25:692–709

Wang J, Yang L (2009) Polynomial spline confidence bands for regression curves. Stat Sin 19:325–342

Xue L, Wang J (2010) Distribution function estimation by constrained polynomial spline regression. J Nonparametr Stat 22:443–457

Yamato H (1973) Uniform convergence of an estimator of a distribution function. Bull Math Stat 15:69–78

Zheng S, Liu R, Yang L, Härdle W (2016) Statistical inference for generalized additive models: simultaneous confidence corridors and variable selection. TEST 25:607–626

Zheng S, Yang L, Härdle W (2014) A smooth simultaneous confidence corridor for the mean of sparse functional data. J Am Stat Assoc 109:661–673

Zhang Y, Liu R, Shao Q, Yang L (2020) Two-step estimation for time varying ARCH models. J Time Ser Anal 41:551–570

Zhang Y, Yang L (2018) A smooth simultaneous confidence band for correlation curve. TEST 27:247–269

Supported by National Natural Science Foundation of China awards 11771240, 11801272, 12026242, Natural Science Foundation of Jiangsu Province of China BK20180820, Qinglan Project of Jiangsu Province of China, Philosophy and Social Science Research in Colleges and Universities of Jiangsu Province 2019SJA0353.

Appendix

Throughout this section, c denotes any positive constant and \(\mathcal {O}_{p}\) (or \( o_{p}\)) a sequence of random variables of the corresponding order in probability. In addition, \(u_{p}\) denotes a sequence of random functions that are \(o_{p}\) uniformly over their domain. For any continuous function \(\phi \) defined on an interval \(\mathcal {I}\), the modulus of continuity is defined as \(\omega \left( \phi ,\varDelta \right) =\sup _{x,x^{\prime }\in \mathcal {I} ,\left| x-x^{\prime }\right| \le \varDelta }\left| \phi \left( x^{\prime }\right) -\phi \left( x\right) \right| \).

Lemma 1

(Theorem 7.1.2, Brockwell and Davis (1991)) If \(\left\{ X_t\right\} \) is the stationary process

\[X_t=\mu +\sum _{j=-\infty }^{\infty }\psi _j Z_{t-j},\quad \left\{ Z_t\right\} \sim \text {IID}\left( 0,\sigma ^2\right) ,\]

with \(\sum _{j=-\infty }^{\infty }\left| \psi _j\right| <\infty \) and \(\sum _{j=-\infty }^{\infty }\psi _j \ne 0\), then \(\overline{X}_n\) is AN\(\left( \mu ,n^{-1}v\right) \), where \(\overline{X}_n=n^{-1}\sum _{t=1}^n X_t\), \(v=\sum _{h=-\infty }^{\infty }\gamma (h)=\sigma ^2\left( \sum _{j=-\infty }^{\infty }\psi _j \right) ^2\), and \(\gamma (\cdot )\) is the autocovariance function of \(\left\{ X_t\right\} \).
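As a rough numerical illustration of Lemma 1 (not part of the original proof), the sketch below simulates an MA(1) process \(X_t=Z_t+\theta Z_{t-1}\), for which \(\psi _0=1\), \(\psi _1=\theta \) and hence \(v=\sigma ^2(1+\theta )^2\), and compares \(n\,\mathrm {Var}(\overline{X}_n)\) with \(v\) by Monte Carlo. The choice of model, the Gaussian innovations, and all parameter values are assumptions made only for this example.

```python
import numpy as np


def ma1_sample_means(theta, sigma, n, reps, rng):
    """Sample means of `reps` independent MA(1) paths X_t = Z_t + theta * Z_{t-1}."""
    z = rng.normal(0.0, sigma, size=(reps, n + 1))  # one extra innovation for the lag
    x = z[:, 1:] + theta * z[:, :-1]                # each row is one path of length n
    return x.mean(axis=1)


rng = np.random.default_rng(0)
theta, sigma, n, reps = 0.5, 1.0, 500, 4000         # illustrative values only

means = ma1_sample_means(theta, sigma, n, reps, rng)

# Asymptotic variance from Lemma 1: v = sigma^2 * (psi_0 + psi_1)^2.
v_theory = sigma**2 * (1.0 + theta) ** 2
# Monte Carlo counterpart: n times the variance of the simulated sample means.
v_monte_carlo = n * means.var()

print(f"v (theory)      = {v_theory:.3f}")
print(f"n * Var(X_bar)  = {v_monte_carlo:.3f}")
```

The two printed numbers should be close for large `n` and `reps`; the agreement is only approximate because of Monte Carlo error and the finite sample size.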

Lemma 2

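(Theorem 8.1.1, Brockwell and Davis (1991)) If \(\left\{ X_t\right\} \) is the zero-mean causal AR(p) process

\[X_t-\phi _1X_{t-1}-\cdots -\phi _pX_{t-p}=Z_t,\quad \left\{ Z_t\right\} \sim \text {IID}\left( 0,\sigma ^2\right) ,\]

and \(\hat{\varvec{\phi }}\) is the Yule–Walker estimator of \({\varvec{\phi }}=\left( \phi _1,\ldots ,\phi _p\right) ^{\top }\), that is \(\hat{\varvec{\phi }}=\hat{\varvec{\varGamma }}_p^{-1}\hat{\varvec{\gamma }}_p\) with \(\hat{\varvec{\varGamma }}_p=\left\{ {\hat{\gamma }}(i-j)\right\} _{i,j=1}^p\) and \(\hat{\varvec{\gamma }}_p=\left( {\hat{\gamma }}(1),\ldots ,{\hat{\gamma }}(p)\right) ^{\top }\), then

\[n^{1/2}\left( \hat{\varvec{\phi }}-{\varvec{\phi }}\right) \overset{d}{\rightarrow }N\left( {\varvec{0}},\sigma ^2{\varvec{\varGamma }}_p^{-1}\right) ,\]

where \({\varvec{\varGamma }}_p\) is the covariance matrix with \({\varvec{\varGamma }}_p=\left\{ \gamma (i-j)\right\} _{i,j=1}^p\). Moreover,

\[{\hat{\sigma }}^2\overset{p}{\rightarrow }\sigma ^2,\]

where \({\hat{\sigma }}^2={\hat{\gamma }}(0)-\hat{\varvec{\phi }}^{\top }\hat{{\varvec{\gamma }}}_p\).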
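The Yule–Walker estimator in Lemma 2 can be sketched in a few lines of NumPy. The code below is a minimal illustration, assuming the usual sample autocovariance \({\hat{\gamma }}(h)=n^{-1}\sum _{t=1}^{n-h}(X_t-\overline{X}_n)(X_{t+h}-\overline{X}_n)\); the function names, the AR(2) coefficients, and the Gaussian innovations are hypothetical choices made for the example, not taken from the paper.

```python
import numpy as np


def sample_autocov(x, max_lag):
    """Sample autocovariances gamma_hat(0), ..., gamma_hat(max_lag) with divisor n."""
    x = np.asarray(x, dtype=float)
    n = x.size
    xc = x - x.mean()
    return np.array([np.dot(xc[: n - h], xc[h:]) / n for h in range(max_lag + 1)])


def yule_walker(x, p):
    """Yule-Walker estimates (phi_hat, sigma2_hat) for an AR(p) model."""
    gamma = sample_autocov(x, p)
    lags = np.abs(np.subtract.outer(np.arange(p), np.arange(p)))
    Gamma_hat = gamma[lags]                      # p x p matrix {gamma_hat(i - j)}
    gamma_p = gamma[1:]                          # (gamma_hat(1), ..., gamma_hat(p))
    phi_hat = np.linalg.solve(Gamma_hat, gamma_p)
    sigma2_hat = gamma[0] - phi_hat @ gamma_p
    return phi_hat, sigma2_hat


def simulate_ar(phi, sigma, n, rng, burn_in=500):
    """Simulate a causal AR(p) path with Gaussian innovations, discarding a burn-in."""
    phi = np.asarray(phi, dtype=float)
    p = phi.size
    z = rng.normal(0.0, sigma, size=n + burn_in)
    x = np.zeros(n + burn_in)
    for t in range(p, n + burn_in):
        # x[t - p:t][::-1] is (x[t-1], ..., x[t-p]), matching (phi_1, ..., phi_p).
        x[t] = np.dot(phi, x[t - p:t][::-1]) + z[t]
    return x[burn_in:]


rng = np.random.default_rng(1)
phi_true = np.array([0.5, -0.3])                 # illustrative causal AR(2) coefficients
x = simulate_ar(phi_true, sigma=1.0, n=20000, rng=rng)
phi_hat, sigma2_hat = yule_walker(x, p=2)
print("phi_hat    =", np.round(phi_hat, 3))
print("sigma2_hat =", round(float(sigma2_hat), 3))
```

With a long simulated path the estimates should lie close to the true coefficients and innovation variance, in line with the consistency implied by Lemma 2.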

A.1 Preliminary results on weak convergence

The next weak convergence result extends Donsker’s Theorem (1) to strongly mixing time series.

Lemma 3

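(Deo 1973) Let \(\{\xi _n:-\infty<n <\infty \}\) be a strictly stationary sequence of random variables, and let \(\{F_n\left( t\right) : 0\le t\le 1\}\) be the empirical process for \(\xi _1, \xi _2, \ldots , \xi _n\), i.e., \(F_n(t) =n^{-1}\sum _{i=1}^nI_{\left[ 0,t\right] }\left( \xi _i\right) \), where \(I_{\left[ 0,t\right] }\left( \cdot \right) \) is the indicator function of the interval [0, t]. Suppose that \(0\le \xi _0 \le 1\) and that \(\xi _0\) has a continuous distribution function F with \(F(0)=0\) and \(F(1)=1\). Normalize \(F_n(t)\) as

\[Y_n(t)=n^{1/2}\left\{ F_n(t)-F(t)\right\} ,\quad 0\le t\le 1.\]

For \(0\le t\le 1\), define the function \(g_t\) by

\[g_t(x)=I_{\left[ 0,t\right] }\left( x\right) -F(t),\]

and suppose further that \(\{\xi _n\}\) satisfies the mixing condition

\[\sum _{n=1}^{\infty }n^2\alpha (n)^{1/2-\tau }<\infty \quad \text {for some } \tau \in \left( 0,1/2\right) ,\]

where \(\alpha (n)\) denotes the strong mixing coefficients of \(\{\xi _n\}\). Then the sequence \(\{Y_n(t): 0\le t\le 1\}\) of normalized empirical processes converges weakly in \(\mathcal {D}[0,1]\) to a Gaussian random function \(\{Y(t): 0\le t\le 1\}\) specified by \(\mathbb {E}\left( Y\left( t\right) \right) =0\) and

\[\mathbb {E}\left\{ Y(s)Y(t)\right\} =\mathbb {E}\left\{ g_s\left( \xi _0\right) g_t\left( \xi _0\right) \right\} +\sum _{k=1}^{\infty }\mathbb {E}\left\{ g_s\left( \xi _0\right) g_t\left( \xi _k\right) \right\} +\sum _{k=1}^{\infty }\mathbb {E}\left\{ g_s\left( \xi _k\right) g_t\left( \xi _0\right) \right\} . \tag{14}\]

Furthermore, the series in (14) converges absolutely and the sample paths of Y are continuous with probability one.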

The following lemma yields a uniformly continuous Gaussian limiting process \(\zeta \left( \cdot \right) \) on \(\mathbb {R}\) for the empirical process \(N_{k}^{1/2}\left\{ F_{N_{k}}\left( \cdot \right) -F\left( \cdot \right) \right\} \), which is used in the proof of Theorem 2.

Lemma 4

Under Assumptions (A1) and (A2), there exists a mean-zero Gaussian process \(Y\left( \cdot \right) \) whose sample path is continuous on \(\left[ 0,1\right] \) with probability one such that as \(k\rightarrow \infty \), \(N_{k}^{1/2}\left\{ F_{N_{k}}\left( \cdot \right) -F\left( \cdot \right) \right\} \overset{d}{ \rightarrow }\zeta \left( \cdot \right) =Y\left( F\left( \cdot \right) \right) \). Furthermore, the process \(\zeta \left( \cdot \right) \) is uniformly continuous on \(\mathbb {R}\) with modulus of continuity \(\omega \left( \zeta , \varDelta \right) \le \omega \left( Y, \omega \left( F,\varDelta \right) \right) \rightarrow 0 \ a.s.\ \text { as } \varDelta \rightarrow 0\).

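Proof. Define a transformed time series \(u_{i}=F\left( x_{i}\right) \), \(i=0,\pm 1,\pm 2,\ldots \). For any \(x\in \mathbb {R}\), let \(t=F(x)\in [0,1]\), then \(F_{N_{k}}(x)=F_{U,N_{k}}(t)\), in which

\[F_{U,N_{k}}(t)=N_{k}^{-1}\sum _{i=1}^{N_{k}}I\left( u_{i}\le t\right) .\]

The \(\alpha \)-mixing coefficients for \(\left\{ u_{i}\right\} _{i=-\infty }^{\infty }\) are the same as those for \(\left\{ x_{i}\right\} _{i=-\infty }^{\infty }\), which satisfy Assumption (A1) that \(\alpha (n) \ll n^{ -6 -\epsilon }\). Hence there exists \(\tau \in \left( 0,1/2\right) \) such that \(\alpha (n)^{1/2-\tau } \ll n^{-3}\), and thus \(\sum _{n=1}^{\infty } n^2\alpha (n)^{1/2-\tau }<\infty \). Applying Lemma 3 with \(\xi _{i}\) replaced by \(u_{i}\), one has \(N_k^{1/2}\{F_{U,N_{k}}(t)-t\}\overset{d}{\rightarrow } Y(t)\).

Define \(\zeta (x)=Y\left( F\left( x\right) \right) \), then \(N_{k}^{1/2}\left\{ F_{N_{k}}\left( \cdot \right) -F\left( \cdot \right) \right\} \overset{d}{ \rightarrow }\zeta \left( \cdot \right) \) as \(k\rightarrow \infty \) and

\[\omega \left( \zeta ,\varDelta \right) =\sup _{x,x^{\prime }\in \mathbb {R},\left| x-x^{\prime }\right| \le \varDelta }\left| Y\left( F\left( x^{\prime }\right) \right) -Y\left( F\left( x\right) \right) \right| \le \sup _{t,t^{\prime }\in \left[ 0,1\right] ,\left| t-t^{\prime }\right| \le \omega \left( F,\varDelta \right) }\left| Y\left( t^{\prime }\right) -Y\left( t\right) \right| =\omega \left( Y,\omega \left( F,\varDelta \right) \right) .\]
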
The uniform continuity of \(F(\cdot )\) is guaranteed by Assumption (A2), and almost sure uniform continuity of \(Y(\cdot )\) by the fact that sample paths of \(Y(\cdot )\) are almost surely continuous over the compact interval \(\left[ 0,1\right] \). These facts imply that \(\omega \left( Y, \omega \left( F,\varDelta \right) \right) \rightarrow 0 \ a.s.\ \text { as } \varDelta \rightarrow 0\), thus \(\zeta \) is continuous with probability one and \(\omega \left( \zeta , \varDelta \right) \le \omega \left( Y, \omega \left( F,\varDelta \right) \right) \).
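The transformation used in this proof, \(u_i=F(x_i)\) with \(F_{N_k}(x)=F_{U,N_k}(F(x))\), can be checked numerically. The sketch below is only an illustration under assumptions not made in the paper: a Gaussian AR(1) series whose stationary distribution \(F\) is known in closed form, an arbitrary evaluation grid, and hypothetical helper names.

```python
import math

import numpy as np


def normal_cdf(x, sd):
    """CDF of N(0, sd^2), evaluated elementwise via math.erf."""
    x = np.atleast_1d(np.asarray(x, dtype=float))
    return np.array([0.5 * (1.0 + math.erf(v / (sd * math.sqrt(2.0)))) for v in x])


def ecdf(sample, points):
    """Empirical CDF of `sample` evaluated at `points` (right-continuous)."""
    s = np.sort(np.asarray(sample, dtype=float))
    return np.searchsorted(s, points, side="right") / s.size


rng = np.random.default_rng(2)
phi, N = 0.6, 2000                              # illustrative AR(1) coefficient and length
sd = 1.0 / math.sqrt(1.0 - phi**2)              # stationary standard deviation

# Simulate a stationary Gaussian AR(1) realization x_1, ..., x_N.
x = np.empty(N)
x[0] = rng.normal(0.0, sd)
for i in range(1, N):
    x[i] = phi * x[i - 1] + rng.normal()

u = normal_cdf(x, sd)                           # transformed series u_i = F(x_i)
grid = np.linspace(-3.0, 3.0, 13)

F_N_at_x = ecdf(x, grid)                        # F_N(x)
F_UN_at_t = ecdf(u, normal_cdf(grid, sd))       # F_{U,N}(t) with t = F(x)
print(np.max(np.abs(F_N_at_x - F_UN_at_t)))
```

Because \(F\) is strictly increasing, the indicators \(I(x_i\le x)\) and \(I(u_i\le F(x))\) coincide, so the printed maximum difference should be zero up to floating-point effects.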

A.2 Proof of Theorem 1

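Define a transformed time series \(u_{i}=F\left( x_{i}\right) \), \(i=0,\pm 1,\pm 2,\ldots \) and for any \(k=1,2,\ldots \), a finite population \(\pi _{k,U}=\left\{ u_{1},u_{2},\ldots ,u_{N_{k}}\right\} \) together with a simple random sample \(U_{i}=F\left( X_{i}\right) \), \(1\le i\le n_{k}\), from population \(\pi _{k,U}\). For any \(x\in \mathbb {R}\), let \(t=F(x)\in \left[ 0,1 \right] \), then

\[F_{n_{k}}(x)-F_{N_{k}}(x)=F_{U,n_{k}}(t)-F_{U,N_{k}}(t),\]

in which

\[F_{U,n_{k}}(t)=n_{k}^{-1}\sum _{i=1}^{n_{k}}I\left( U_{i}\le t\right) ,\quad F_{U,N_{k}}(t)=N_{k}^{-1}\sum _{i=1}^{N_{k}}I\left( u_{i}\le t\right) .\]

By Assumption (A1), the time series \(\left\{ u_{t},t=0,\pm 1,\pm 2,\ldots \right\} \) is ergodic and has stationary distribution \(\mathcal {U}\left( 0,1\right) \), hence almost surely \(\lim _{k\rightarrow \infty }F_{U,N_{k}}(t)=t\) for \(0\le t\le 1\). Since Assumption (A3) contains the condition \(\lim _{k\rightarrow \infty }\min \left( n_{k},N_{k}-n_{k}\right) =\infty \), applying Theorem 14.1 of Rosén (1964), one obtains that as random elements taking values in the space \(\mathcal {D}\left[ 0,1\right] \) of cadlag functions:

\[l_{k}\left\{ F_{U,n_{k}}\left( \cdot \right) -F_{U,N_{k}}\left( \cdot \right) \right\} \overset{d}{\rightarrow }B\left( \cdot \right) \]

almost surely, where \(B\left( \cdot \right) \) denotes a Brownian bridge. Lastly, Skorohod’s Representation Theorem (Theorem 6.7, Billingsley 1999) provides versions \(B_{k}^{*}\) of Brownian bridge such that

\[\sup _{t\in \left[ 0,1\right] }\left| l_{k}\left\{ F_{U,n_{k}}(t)-F_{U,N_{k}}(t)\right\} -B_{k}^{*}(t)\right| \rightarrow 0\ a.s.,\]

which implies that

\[\sup _{x\in \mathbb {R}}\left| l_{k}\left\{ F_{n_{k}}(x)-F_{N_{k}}(x)\right\} -B_{k}^{*}\left\{ F(x)\right\} \right| \rightarrow 0\ a.s.\]

Theorem 1 is proved.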
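The content of Theorem 1 can be illustrated by a small Monte Carlo experiment: draw simple random samples without replacement from a fixed finite population and compare the scaled statistic \(l_k\sup _x|F_{n_k}(x)-F_{N_k}(x)|\) with the distribution of \(\sup _t|B(t)|\). The sketch below rests on two assumptions that are not stated in this appendix: the scaling is taken to be \(l_k=(1/n_k-1/N_k)^{-1/2}\), the usual finite-population correction, and the limiting distribution function is computed from the classical alternating series \(1-2\sum _{j\ge 1}(-1)^{j-1}e^{-2j^2x^2}\). Since the statistic depends only on the ranks of the sampled units, the population values themselves are not needed.

```python
import numpy as np


def kolmogorov_cdf(x, terms=100):
    """P(sup_t |B(t)| <= x) for a Brownian bridge B, via the alternating series."""
    if x <= 0.0:
        return 0.0
    j = np.arange(1, terms + 1)
    return 1.0 - 2.0 * np.sum((-1.0) ** (j - 1) * np.exp(-2.0 * j**2 * x**2))


def kolmogorov_quantile(prob, lo=0.2, hi=3.0, iters=60):
    """Quantile of sup_t |B(t)| by bisection on kolmogorov_cdf."""
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if kolmogorov_cdf(mid) < prob:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def scaled_srs_statistic(N, n, rng):
    """l_k * sup_x |F_n(x) - F_N(x)| for one SRS of size n from a population of size N."""
    in_sample = np.zeros(N, dtype=bool)          # population units in sorted order
    in_sample[rng.choice(N, size=n, replace=False)] = True
    F_n = np.cumsum(in_sample) / n               # sample ECDF at the sorted population points
    F_N = np.arange(1, N + 1) / N                # population ECDF at the same points
    l_k = (1.0 / n - 1.0 / N) ** -0.5            # assumed form of the scaling l_k
    return l_k * np.max(np.abs(F_n - F_N))


rng = np.random.default_rng(3)
N, n, reps = 5000, 200, 2000                     # illustrative sizes only

q95 = kolmogorov_quantile(0.95)
stats = np.array([scaled_srs_statistic(N, n, rng) for _ in range(reps)])
coverage = np.mean(stats <= q95)
print(f"95% quantile of sup|B| = {q95:.4f}")
print(f"Monte Carlo coverage   = {coverage:.3f}")
```

The empirical coverage should be near the nominal 0.95, with small deviations from Monte Carlo error and the discreteness of the statistic at finite sample sizes.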

A.3 Proof of Theorem 2

Lemma 5

Under Assumptions (A1) to (A3), (A5), as \(k \rightarrow \infty \),

Proof

For the Brownian bridges \(B_{k}^{*}\left\{ \cdot \right\} \) in Theorem 1,

Since \(F\left( \cdot \right) \) is uniformly continuous by Assumption (A2) and Assumption (A5) implies that \(h \rightarrow 0\) as \(k\rightarrow \infty \), one has \(\omega \left( F,h\right) \rightarrow 0\) as \(k\rightarrow \infty \). Assumptions (A1) and (A3) ensure Theorem 1, so \(l_{k}\left\{ F_{n_{k}}\left( \cdot \right) -F_{N_{k}}\left( \cdot \right) \right\} -B_{k}^{*}\left\{ F\left( \cdot \right) \right\} \rightarrow 0\ a.s. \) as \(k\rightarrow \infty \). Thus, the expression in (6) is bounded by

In other words,

\(\square \)

Lemma 6

Under Assumptions (A1), (A2), (A5), as \(k \rightarrow \infty \),

Proof

Since Lemma 4 implies that \(N_{k}^{1/2}\left\{ F_{N_{k}}\left( \cdot \right) -F\left( \cdot \right) \right\} \overset{d}{ \rightarrow }\zeta \left( \cdot \right) \), Skorohod’s Representation Theorem (Theorem 6.7, Billingsley 1999) provides versions \(\zeta _{k}\left( \cdot \right) \) of \(\zeta \left( \cdot \right) \) such that

Consequently

Hence the following holds

Lemma 7

Under Assumptions (A2), (A5) and (A6), as \(k\rightarrow \infty \),

Proof

Following the smoothness assumptions on the cumulative distribution function \(F\), we consider two cases.

Case 1: \(\nu \ge 1\). Note that by Assumption (A6) \( \int _{-1}^{1}K\left( w\right) w^{r}dw\equiv 0,r=1,\ldots ,l-1\), and by Assumption (A2) \(F(\cdot )\in C^{\left( \nu ,\mu \right) }\left( \mathbb {R} \right) \). Hence

Furthermore, by Assumption (A2) \(F^{\left( \nu \right) }(\cdot )\in C^{\left( 0,\mu \right) }\left( \mathbb {R}\right) \) and

which follows from Assumption (A5) that \(\lim _{k\rightarrow \infty }l_{k}h_{n_{k}}^{\nu +\mu }=0\).

Case 2: \(\nu =0\). By Assumption (A2) \(F(x)\in C^{\left( 0,\mu \right) }\left( \mathbb {R}\right) \). Hence

which follows from Assumption (A5) that \(\lim _{k\rightarrow \infty }l_{k}h_{n_{k}}^{\nu +\mu }=0\). \(\square \)

Proof of Theorem 2

Define \(G(x)=\int _{-\infty }^{x}K\left( u\right) du\). By the definition of \( \hat{F}_{k}(x)\), one obtains

Therefore, by the definition of \(F_{n_{k}}(x)=n_{k}^{-1}\sum _{i=1}^{n_{k}}I \left( X_{i}\le x\right) \) in (4)

using integration by parts and a change of variable \(w=\left( x-u\right) /h\). The following decomposition plays an important role:

Since Assumption (A4) requires that \(n_{k}/N_{k}=o\left( 1\right) \) and consequently \(N_{k}^{-1/2}=o\left( l_{k}^{-1}\right) \), Lemma 5 and Lemma 6 together with the triangle inequality imply that as \(k\rightarrow \infty \)

By Lemma 7 and applying (23), (24), (21) and (22), the following holds

Applying Theorem 1, one has \(l_{k}\left\{ \hat{F} _{k}(x)-F_{N_{k}}(x)\right\} \overset{d}{\rightarrow }B\left\{ F(x)\right\} \), proving (11).

Notice that under Assumption (A4), \(n_{k}^{-1/2}/l_{k}^{-1}\rightarrow 1\), \( N_{k}^{-1/2}=o\left( l_{k}^{-1}\right) \), \(N_{k}^{-1/2}=o\left( n_{k}^{-1/2}\right) \) as \(k\rightarrow \infty \), and that \( N_{k}^{1/2}\left\{ F_{N_{k}}\left( \cdot \right) -F\left( \cdot \right) \right\} \overset{d}{\rightarrow }\zeta \left( \cdot \right) \) by Lemma 4. Hence, as \(k\rightarrow \infty \)

Likewise, \(l_{k}D\left( F_{N_{k}},F\right) =o_{p}\left( 1\right) \). These, together with (11) establish (12). The proof of Theorem 2 is complete by applying Slutsky’s Theorem.

Cite this article

Li, J., Wang, J. & Yang, L. Kolmogorov–Smirnov simultaneous confidence bands for time series distribution function. Comput Stat 37, 1015–1039 (2022). https://doi.org/10.1007/s00180-021-01149-5
