Abstract

Additive manufacturing (AM) offers exciting opportunities to manufacture parts of unprecedented complexity. Topology optimization is essential to fully exploit this capability. However, AM processes have specific limitations as well. When these are not considered during design optimization, modifications are generally needed in post-processing, which add costs and reduce the optimized performance. This paper presents a filter that incorporates the main characteristics of a generic AM process, and that can easily be included in conventional density-based topology optimization procedures. Use of this filter ensures that optimized designs comply with typical geometrical AM restrictions. Its performance is illustrated on compliance minimization problems, and a 2D Matlab implementation is provided.

1 Introduction

Additive layer manufacturing, or additive manufacturing (AM) in short, comprises a collection of techniques that allow the creation of components in a layer-by-layer, additive fashion. AM techniques are developing rapidly, and processes for a wide variety of materials are commercially available (Gibson et al. 2015). Compared to conventional subtractive fabrication techniques, AM offers a much larger design freedom, and a completely different cost structure: for AM, geometrical complexity of a component is no longer a main indicator of manufacturing costs.

Specifically in metal-based AM, advances over the past decade have improved the quality and reliability of the technology to a level that is suitable for creating fully functional end products instead of prototypes. Many branches of industry are looking to benefit from the opportunities this development offers (Gao et al. 2015). However, simply using an existing design and manufacturing it using AM is not an effective approach. Instead, parts need to be redesigned to truly benefit from AM opportunities (Atzeni and Salmi 2012). To fully exploit the large design freedom of AM, topology optimization (TO) in particular is widely recognized as a key enabling design technology (Rosen 2014; Zhu et al. 2015).

While AM technologies can create parts of unprecedented complexity, AM processes also have certain limitations. The dominant processes in metal-based AM currently use a powder bed, combined with laser or electron beams (SLM/EBM) that selectively melt metal powder to create structures. A universal limitation in these processes is that the inclination of downward facing (overhanging) surfaces is limited to a maximum angle with respect to the build direction. This overhang angle limitation has been extensively characterized, and typically the critical angle amounts to 40–50° (Wang et al. 2013; Mertens et al. 2014; Kranz et al. 2015).

In order to successfully print parts, one solution is to find a build orientation where no overhanging section exceeds the maximum overhang angle. This is often not possible for complex parts. Common practice is therefore to support the overhanging sections using sacrificial support structures, which are removed in a post-processing step. This solution consumes extra material, energy and time, and one has to take care that the added support structures are accessible for removal. Various strategies to automate the addition of minimal support structures have been proposed, see e.g. Strano et al. (2013), Vanek et al. (2014), and Calignano (2014). Others have proposed a procedure to augment a previously optimized design with additional structures, to make it self-supporting (Leary et al. 2014). The added structures are not sacrificial but become part of the design, which however alters the mass and performance of the original part. This paper presents another alternative: by including AM design limitations in the TO process, optimized designs can be generated that do not require any support structures.

The elimination of the need for additional supports at the TO design phase has been recognized and pursued by other authors: Brackett et al. (2011) proposed an overhang angle detection procedure to be combined with TO, but no integrated results were reported. A critical overhang angle of 45° was used. Gaynor and Guest (2014) introduced a wedge-shaped spatial filter for use during TO, that should ensure the presence of sufficient material in a region underneath all parts of the design. When the density average in the wedge exceeded a set threshold, the part above was considered to be properly supported. The published results show that generated designs are indeed self-supporting to a degree, but intermediate density material can readily be used by the optimizer to support fully dense structures, which is undesirable.

This paper proposes a new method to generate self-supporting, print-ready designs. It is also based on the idea of only instantiating material that is sufficiently supported, but applies a more detailed procedure to capture the essence of the targeted SLM/EBM AM processes. Using a simplified virtual AM fabrication model, implemented as a filter applied in a layer-by-layer fashion, at every optimization iteration an ‘as-printed’ design is created from a given blueprint. This operation can be classified as a nonlinear, adaptive spatial filter (Weeks 1996). The performance of this printed design is evaluated and optimized. In this way, unprintable designs are rigorously banned from the design space.

The filter is defined such that it adds little computational effort and consistent sensitivities can be computed efficiently. While effective, it is not perfect: the employed numerical approximations allow for a small but gradual increase of density in the build direction, which sometimes shows in converged results. While it is significantly less problematic than in previous approaches, this tendency is still undesired. We show it can be controlled using the filter parameters, or by applying the AM filter in combination with established techniques to enforce crisp designs. Numerical examples are treated in Section 3, but first the following section introduces the formulation of the virtual layer-by-layer fabrication model.

2 Formulation

2.1 Fabrication model

The AM fabrication model is defined on a regular mesh, as is typically used in TO in an early design stage. For clarity we limit the discussion to the 2D case for a rectangular domain discretized by \(n_i \times n_j\) elements, where the vertical direction is the printing direction. The extension to 3D is straightforward but will be discussed elsewhere (Langelaar 2016). Every element in the mesh is associated with a blueprint density variable \(x_{(i,j)}\), where i and j denote the vertical and horizontal location of the element. The first layer on the base plate has index i = 1. Our aim is to express the printed densities \(\xi_{(i,j)}\) in terms of the blueprint densities.

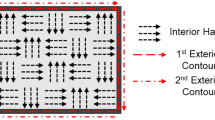

For an element at position (i,j) to be printable, it needs to be sufficiently supported by printed elements in the underlying layer \(i-1\), which in turn rely on the layers below them. By definition, all elements supported by the base plate (i = 1) can be printed. For subsequent layers, we define that the printed density \(\xi_{(i,j)}\) of an element cannot be higher than the maximum printed density \(\Xi_{(i,j)}\) present in its supporting region \(S_{(i,j)}\). This supporting region is chosen to consist of the element directly below the element, and the direct neighbours thereof, see Fig. 1. This choice is motivated by the fact that the critical self-supporting overhang angle for the considered processes typically amounts to 45° (Wang et al. 2013; Mertens et al. 2014; Kranz et al. 2015). In 2D, this results in a region of \(n_S = 3\) elements. In 3D, one may use a support region of 5 or 9 elements. Mathematically, for the 2D case, this is expressed as:
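Written out from the description above (a reconstruction from the text, using the three-element support region of the 2D case), relations (1) and (2) read:

\[
\xi_{(i,j)} = \min\left( x_{(i,j)},\; \Xi_{(i,j)} \right), \qquad (1)
\]

\[
\Xi_{(i,j)} = \max\left( \xi_{(i-1,j-1)},\; \xi_{(i-1,j)},\; \xi_{(i-1,j+1)} \right). \qquad (2)
\]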

At the domain boundaries the supporting region only consists of 2 elements, and either the left- or rightmost element is omitted from (2). This special consideration is omitted in the following for clarity. By sweeping through the domain from layer 1 to \(n_i\), the printed density field can be constructed. The process is illustrated in Fig. 2.
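The layer sweep with exact min and max operators can be sketched in a few lines of Python. This is a minimal illustration, not the Matlab implementation provided with the paper: the function name `am_filter_exact` and the list-of-layers data layout (index 0 is the layer on the base plate) are assumptions made for this example.

```python
def am_filter_exact(x):
    """Exact (non-smooth) AM filter sketch.

    x: list of layers, x[0] is the layer on the base plate; each layer
    is a list of blueprint densities. Returns the printed densities.
    """
    xi = [list(x[0])]  # first layer rests on the base plate: printed as-is
    for i in range(1, len(x)):
        below = xi[i - 1]
        n = len(below)
        layer = []
        for j in range(n):
            # Support region: element directly below plus its direct
            # neighbours, truncated to 2 elements at the domain boundaries.
            support = below[max(j - 1, 0):min(j + 2, n)]
            layer.append(min(x[i][j], max(support)))
        xi.append(layer)
    return xi


# Blueprint, bottom layer first: a column carrying a long horizontal arm.
blueprint = [
    [0, 0, 1, 0, 0, 0, 0],
    [0, 0, 1, 0, 0, 0, 0],
    [0, 0, 1, 1, 1, 1, 1],
    [0, 0, 0, 0, 0, 0, 1],
]
printed = am_filter_exact(blueprint)
for layer in reversed(printed):  # print top layer first, like a side view
    print(layer)
```

In this example only the first element of the arm next to the column survives, since each layer can extend sideways by at most one element relative to the layer below. The remainder of the arm and the element resting on its tip are removed.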

In this form, the fabrication model is not differentiable due to the nonsmooth min and max operators in (1) and (2). As gradient information is essential in the TO process, the model is cast in a differentiable form using smooth approximations smin and smax. In this paper we opt for the following approximations:
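One pair of approximations that is consistent with the properties described in the surrounding text is given below. The explicit expressions are a reconstruction and should be read as an assumption:

\[
\mathrm{smin}(x, \Xi) \equiv \tfrac{1}{2} \left( x + \Xi - \sqrt{(x - \Xi)^{2} + \varepsilon} + \sqrt{\varepsilon} \right), \qquad (3)
\]

\[
\mathrm{smax}(\xi_{1}, \xi_{2}, \xi_{3}) \equiv \left( \sum_{k=1}^{n_S} \xi_{k}^{P} \right)^{1/P}. \qquad (4)
\]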

Here the parameters ε and P control the accuracy and smoothness of the approximations. For \(\varepsilon \!\rightarrow \!0\) and \(P\!\rightarrow \!\infty \) the exact min and max operators are obtained, but smoothness is lost. For other values, deviations arise in particular situations. For ε > 0 the smin operator gives exact results for equal inputs, i.e. smin(a,a) = a. For dissimilar inputs however the true minimum is slightly overestimated. The smax operator (P-norm) gives exact results for finite P for cases of the form smax(a,0,0) with a≥0, which is necessary to represent critical overhanging sections. For other inputs, the maximum is overestimated. The largest overshoot occurs for equal inputs, i.e. layers of uniform printed density. This overshoot is proportional to the density of the layer. For fully solid layers, this error is mitigated by the subsequent application of (3), with the fact that x (i,j) ≤ 1. However, for intermediate densities a build-up of layers of gradually increasing density could occur, which does not match the fabrication model assumptions. To counteract this without requiring extreme P values, a simple and effective solution is to slightly penalize the output of smax, such that the overestimation of the maximum of intermediate density regions is reduced. Thus, we redefine smax as follows:

with \(Q \le P\). Lower values of Q result in stronger penalization of lower densities. Given a layer density value \(0 \le \xi_0 \le 1\) for which zero overshoot is desired, it follows that Q should be chosen as:
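As a sketch of where this choice comes from, assume that the penalized operator (5) keeps the P-norm sum but replaces the outer exponent \(1/P\) by \(1/Q\) (an assumption, consistent with the statement that \(P = Q\) recovers the regular P-norm). Requiring zero overshoot for a uniform layer of density \(\xi_0\) with \(n_S\) support elements then gives:

\[
\left( n_S\, \xi_0^{P} \right)^{1/Q} = \xi_0
\;\;\Longrightarrow\;\;
n_S\, \xi_0^{P} = \xi_0^{Q}
\;\;\Longrightarrow\;\;
Q = P + \frac{\log n_S}{\log \xi_0}. \qquad (6)
\]

For example, with \(n_S = 3\), \(P = 40\) and \(\xi_0 = 0.5\) this gives \(Q = 40 + \log 3 / \log 0.5 \approx 38.42\). Since \(\log \xi_0 < 0\) for \(\xi_0 < 1\), the condition \(Q \le P\) is satisfied, and \(Q \rightarrow P\) for \(\xi_0 \rightarrow 0\).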

In this paper, we choose ξ 0 = 0.5 as default value. The support capability (maximum density) of uniform printed layers below this density is underestimated by (5), while for printed densities above ξ 0 the support capability is slightly overestimated. The effect of this penalization of the P-norm is investigated in Section 3. The reformulated, differentiable AM fabrication model now becomes:

with smin and smax according to (3) and (5), respectively. Note that printed densities in layer i depend on densities in all underlying layers.
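The behaviour of the smooth operators can be explored with a small Python sketch. The operator expressions used here (a square-root based smooth minimum and a P-norm with outer exponent 1/Q) are assumed forms that match the properties stated in the text. The function names and the list-of-layers layout are hypothetical and do not mirror the provided Matlab code.

```python
import math


def smin(x, Xi, eps=1e-4):
    """Smooth minimum (assumed form): exact for equal inputs."""
    return 0.5 * (x + Xi - math.sqrt((x - Xi) ** 2 + eps) + math.sqrt(eps))


def smax(values, P=40.0, xi0=0.5, n_s=3):
    """Penalized P-norm maximum; xi0 = 0 gives the regular P-norm (Q = P).

    xi0 must lie in [0, 1); inputs are assumed to be nonnegative.
    """
    Q = P if xi0 <= 0.0 else P + math.log(n_s) / math.log(xi0)
    return sum(v ** P for v in values) ** (1.0 / Q)


def am_filter_smooth(x, eps=1e-4, P=40.0, xi0=0.5):
    """Layer-by-layer sweep; x[0] is the layer on the base plate."""
    xi = [list(x[0])]
    for i in range(1, len(x)):
        # Zero padding makes the boundary support regions act as 2 elements.
        padded = [0.0] + xi[i - 1] + [0.0]
        xi.append([smin(x[i][j], smax(padded[j:j + 3], P, xi0), eps)
                   for j in range(len(x[i]))])
    return xi


# A uniform layer of density 0.5 carrying nine fully dense blueprint layers.
blueprint = [[0.5] * 7] + [[1.0] * 7 for _ in range(9)]
top_regular = am_filter_smooth(blueprint, xi0=0.0)[-1][3]
top_modified = am_filter_smooth(blueprint, xi0=0.5)[-1][3]
print(top_regular, top_modified)
```

Comparing the two printed values shows the density build-up in the build direction for the regular P-norm, and its reduction when overshoot is suppressed at \(\xi_0 = 0.5\).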

2.2 Sensitivity analysis

Optimization of printed parts involves performance criteria f p that depend on the printed geometry, which in turn depends on the blueprint design, i.e. f p (ξ(x)), where bold symbols indicate the entire density fields organized in vector form. Response sensitivities with respect to x are given by:

Responses are computed using the as-printed design ξ, often involving finite element analysis. The term ∂ f p /∂ ξ is then obtained by (adjoint) sensitivity analysis of the performance criterion. The term ∂ ξ/∂ x expresses the dependence of printed densities on blueprint densities, which can be obtained through direct differentiation of (7) and (8). However, as the printed densities in a given layer depend on blueprint densities of all underlying layers, ∂ ξ/∂ x is a densely populated triangular matrix. For large problems, the computational cost and required memory become prohibitive.

For a more efficient approach, an adjoint formulation is employed. Combining (7) and (8), the following relation holds between printed and blueprint densities:

Here \(\breve {s}\) is introduced as shorthand notation for smin, and the single subscripts are layer indices. Using (10) as constraint equations, an augmented response \(\tilde {f}_{p}\) can be defined as:

with λ i as multiplier vectors. From this point, for brevity the arguments of \(\breve {s}\) are omitted, and instead the layer of its first argument is given as a subscript. At the first layer, we define \(\boldsymbol {\xi }_{1}=\breve {s}_{1}\equiv \mathbf {x}_{1}\) and thus \(\partial {\breve {s}_{1}} / \partial{\mathbf {x}_{1}}={\mathbf {I}}\). Differentiation of (11) yields:

where \(\delta_{ij}\) denotes the Kronecker delta, and \(1 \le j \le n_i\). Since \(\partial \boldsymbol{\xi}_{i} / \partial \textbf{x}_{j} = 0\) for \(i < j\) (printed densities only depend on blueprint densities in underlying layers), terms in the summations with \(i < j\) vanish. Taking terms with \(i = j\) outside of the summations, and using \(\partial {\boldsymbol {\xi }}_{j} / \partial \textbf {x}_{j}=\partial \breve {s}_{j} / \partial \textbf {x}_{j}\), gives:

Next, the last term in the summation is written as a separate sum and the first term (i = j + 1) is taken out:

By reindexing, the last sum can be changed into a summation from i = j + 1 to n i − 1. By taking the last term i = n i out of the first summation, both sums regain the same limits. Again using \(\partial {\boldsymbol {\xi }}_{j} / \partial \textbf {x}_{j}=\partial \breve {s}_{j} / \partial \textbf {x}_{j}\) and recombining summations gives:

From this equation, which holds for 1 ≤ j ≤ n i , it follows that computation of \(\frac {\partial {\boldsymbol {\xi }}_{i}}{\partial \textbf {x}_{j}}\)-terms can be avoided when choosing the multipliers as:

As each multiplier depends on the one associated with the layer above, the sequence of evaluation starts at the top layer and proceeds downwards. Note the resemblance to adjoint sensitivity analysis of transient problems (Van Keulen et al. 2005), caused by the layerwise, sequential nature of the AM filter. With multipliers according to (13), sensitivities of response f p follow from (12) as:

The derivatives of \(\breve {s}\) follow from (3), (5), (7) and (8) as:

and

In this last expression, only printed densities ξ k in the support region of an element in the next layer affect Ξ and give a nonzero contribution to the derivative. The local support of the filter operation thus limits the number of operations needed to evaluate the multipliers in each layer. The transformation of design sensitivities ∂ f p /∂ ξ to ∂ f p /∂ x using this adjoint approach involves simple operations with sparse or even diagonal matrices per layer, and is inexpensive compared to e.g. the finite element analysis of the design.
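The top-down multiplier sweep can be illustrated with the following Python sketch. It assumes the operator forms used for illustration here (square-root based smooth minimum, P-norm with outer exponent 1/Q) and a recursion in which the multiplier of a layer equals the response derivative for that layer plus the contribution passed down from the layer above. All function names are hypothetical, and the small `TINY` guard is a simplification of this sketch.

```python
import math

EPS, P, XI0, NS = 1e-4, 40.0, 0.5, 3   # default parameters from the text
Q = P + math.log(NS) / math.log(XI0)    # assumed penalized exponent
TINY = 1e-300                           # guard for (nearly) empty support


def support_sum(layer_below, j):
    """Sum of xi^P over the zero-padded support region of element j."""
    pad = [0.0] + layer_below + [0.0]
    return sum(v ** P for v in pad[j:j + 3])


def forward(x):
    """Smooth AM filter; returns printed densities xi and support maxima Xi."""
    xi, Xi = [list(x[0])], [None]
    for i in range(1, len(x)):
        Xi_i = [support_sum(xi[-1], j) ** (1.0 / Q) for j in range(len(x[i]))]
        xi.append([0.5 * (a + b - math.sqrt((a - b) ** 2 + EPS) + math.sqrt(EPS))
                   for a, b in zip(x[i], Xi_i)])
        Xi.append(Xi_i)
    return xi, Xi


def adjoint(x, xi, Xi, dfdxi):
    """Transform df/dxi into df/dx with one sweep from the top layer down."""
    n_layers, n = len(x), len(x[0])
    dfdx = [[0.0] * n for _ in range(n_layers)]
    lam = list(dfdxi[-1])                       # top layer multiplier
    for i in range(n_layers - 1, 0, -1):
        lam_below = list(dfdxi[i - 1])
        for j in range(n):
            d = x[i][j] - Xi[i][j]
            r = d / math.sqrt(d * d + EPS)
            dfdx[i][j] = lam[j] * 0.5 * (1.0 - r)      # lambda * dsmin/dx
            dsmin_dXi = 0.5 * (1.0 + r)
            S = support_sum(xi[i - 1], j)
            if S > TINY:
                for k in (j - 1, j, j + 1):            # local support only
                    if 0 <= k < n:
                        dXi = (P / Q) * xi[i - 1][k] ** (P - 1.0) * S ** (1.0 / Q - 1.0)
                        lam_below[k] += lam[j] * dsmin_dXi * dXi
        lam = lam_below
    dfdx[0] = lam        # first layer: printed density equals blueprint
    return dfdx
```

A typical use is `xi, Xi = forward(x)` followed by `adjoint(x, xi, Xi, dfdxi)`, where `dfdxi` holds the response derivatives with respect to the printed densities. Only the current multiplier vector and the stored layer data are needed, so the dense matrix ∂ ξ/∂ x is never formed.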

2.3 Integration in the TO process

The AM filter can be easily integrated in a conventional TO process, similar to other filters commonly used in density-based approaches (e.g. Bourdin (2001), Sigmund (2007)). A sample Matlab™ implementation, AMfilter.m, is digitally provided with this paper. It has been prepared for integration with the well-known 88-line topology optimization code by Andreassen et al. (2011). The implementation is adapted to the element numbering convention used in the 88-line code, and differs from the paper in the orientation of the vertical axis. Instructions to integrate AMfilter.m in the 88-line code are given in the Appendix.

By default, the 88-line code uses the optimality criteria (OC) optimizer. This procedure contains an inner loop where the volume constraint is evaluated repeatedly. This constraint involves the volume of the as-printed design ξ. This means that the AM filter is called multiple times in this inner loop, which raises the computation time. With other optimizers, e.g. the popular MMA method (Svanberg 1987), this inconvenience does not occur and only a single AM filter evaluation per design iteration is needed. In all cases the sensitivity transformation discussed in the previous section is performed once per iteration.

The proposed AM-fabrication filter can also easily be combined with other filtering techniques. As an illustration, in a setting of density variables associated with element centroids r e , by filtering a field of optimizer-controlled element densities x e a blueprint design field \(\tilde {\textbf {x}}\) can be defined as follows (Bruns and Tortorelli (2001), Bourdin (2001), Sigmund (2007)):

Here \(\tilde {x}_{e}\) is the blueprint density at position r e , and w e,i defines the relative weight of a control variable at spatial position r i , using e.g. a linearly decaying distance function: \(w_{e,i} =\max (0, R - ||\textbf {r}_{i}-\textbf {r}_{e}||)\) with filter radius R. Subsequently, the printed density field ξ is obtained by applying the AM filter to \(\tilde {\textbf {x}}\). Consistent sensitivities of response quantities f p follow by the chain rule:

To compute these sensitivities, first \(\partial f_{p} / \partial \tilde {\textbf {x}}\) is evaluated using the procedure discussed in Section 2.2. Next the effect of the density filtering is accounted for by multiplication with \(\partial \tilde {\textbf {x}} / \partial \textbf {x}\), which can be found in the mentioned references. Similarly, one can extend the chain of filters by adding e.g. a thresholding filter to obtain black-white designs. This is demonstrated in Section 3.4.4, where the AM filter is combined with density filtering and Heaviside projection techniques. Note that the AM filter should typically be the last filter in the chain, otherwise subsequent filtering operations could result in unprintable geometries, which would defeat the purpose of the filter.
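The density filtering step of such a chain, and the matching sensitivity transformation, can be sketched as follows. The sketch assumes a regular grid with unit element size, densities stored as a flat row-major list, and the linearly decaying weights mentioned above, normalized by their sum. The names `build_filter`, `apply_filter` and `apply_filter_transpose` are hypothetical.

```python
import math


def build_filter(n_rows, n_cols, R):
    """Normalized weights w_ei / sum_k w_ek, stored as one dict per element e."""
    reach = int(R)
    H = []
    for re in range(n_rows):
        for ce in range(n_cols):
            w = {}
            for ri in range(max(0, re - reach), min(n_rows, re + reach + 1)):
                for ci in range(max(0, ce - reach), min(n_cols, ce + reach + 1)):
                    weight = R - math.hypot(ri - re, ci - ce)  # linear decay
                    if weight > 0.0:
                        w[ri * n_cols + ci] = weight
            total = sum(w.values())
            H.append({i: v / total for i, v in w.items()})
    return H


def apply_filter(H, x):
    """Blueprint densities: weighted average of the control variables."""
    return [sum(wt * x[i] for i, wt in row.items()) for row in H]


def apply_filter_transpose(H, dfdxt):
    """Chain rule: map df/d(blueprint) back to df/d(control variables)."""
    out = [0.0] * len(H)
    for e, row in enumerate(H):
        for i, wt in row.items():
            out[i] += wt * dfdxt[e]
    return out
```

In a full chain, the output of `apply_filter` would be passed to the AM filter. The sensitivities returned by the AM filter's adjoint transformation would then be passed through `apply_filter_transpose`, in the reverse order of the forward chain.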

3 Numerical examples

To illustrate the functionality and characteristics of the AM filter, we discuss several examples. All cases have been evaluated using the provided sample implementation, and additional examples can be created readily by the interested reader. As default parameters for the smooth min/max operators, \(\varepsilon = 10^{-4}\), \(P = 40\) and \(\xi_0 = 0.5\) are used.

3.1 Pattern tests

Before considering optimization results, we apply the proposed AM filter to a test pattern to highlight its characteristics. Figure 3a depicts the considered blueprint test pattern, together with processed results. It features straight and zig-zag lines of different densities (indicated by grayscale value), a solid portal that is connected to the baseline only on one side, closely spaced horizontal solid lines, and a ‘floating’ solid block. The ideal AM-filtered result obtained with exact min/max operators is shown in Fig. 3b. The second set of vertical lines is not connected to the baseline, and thus cannot be printed. The same happens to part of the portal, the horizontal lines, and the floating region. The portal clearly shows the 45° maximum overhang angle that is implicitly enforced by the filter. The other features remain identical to the blueprint.

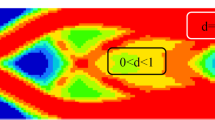

Figure 4 shows the result of applying the proposed AM filter to this pattern. In Fig. 4a the filter is applied with a regular P-norm smax operator ((4), i.e. P = Q, ξ 0 = 0). In Fig. 4b, we use the modified smax version (5), where the overshoot is set to zero at uniform regions of ξ 0 = 0.5. Due to the applied smooth min/max approximations, neither of these filters achieves the ideal performance. However, a clear difference is observed between the two cases. Without additional overshoot suppression, densities can increase relatively quickly in subsequent layers (Fig. 4a). This is undesirable, as it allows features that are not properly supported. The case with ξ 0 = 0.5 shows this tendency to a far lesser extent. However, this comes at the cost of reduced propagation of intermediate density features. Thinner and less dense lines gradually fade in vertical direction, due to the undershoot introduced by Q < P. Solid features are however represented properly. Since in most applications of topology optimization a solid/void end result is desired, it is of primary importance that the filter fully complies with AM overhang restrictions for solid parts. By increasing blueprint densities in printing direction, the optimizer still has the option to create regions of constant intermediate density when this proves favourable. The subsequent optimization tests demonstrate how these characteristics translate to the generated designs.

3.2 Problem definition

Two optimization cases will be considered. As our focus is on the performance of the AM filter, we restrict the discussion to linear elastic compliance minimization under a volume constraint, using the SIMP material interpolation (Bendsøe 1989; Rozvany et al. 1992). Examples use Young’s modulus E = 1 and Poisson ratio ν = 0.3, and an objective scaling factor of 0.01 was used. The first case is a problem designed to challenge the optimizer to create printable structures. It features a square domain loaded in tension at the top edge, as seen in Fig. 5. The second case is the well-known MBB beam problem. For the full problem definition the reader is referred to Sigmund (2001) and Andreassen et al. (2011). The design domain is rectangular with aspect ratio 3:1, as shown in Fig. 5. In both problems, a load of magnitude 1.0 is used, density filtering is applied and the SIMP penalization exponent is fixed at 3.0. Optimizations are initialized by distributing the available material uniformly over the domain.

In the tensile problem, the bottom side of the domain is taken as the baseplate. For the MBB beam, all four sides of the design domain will be individually considered as baseplate for the printing process. Sides are denoted by the cardinal directions N, E, S, W. Instead of redefining the problem in different orientations, this baseplate direction is implemented in the AM filter by transforming the blueprint density field.
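One way to realize such a transformation is to reorder the density field so that the layer on the chosen baseplate comes first, apply the filter, and map the result back. The sketch below assumes that the field is stored as a list of rows from top (N) to bottom (S), as in an image. This storage convention and the function names are assumptions of this example.

```python
def to_build_frame(field, baseplate):
    """Reorder a field (rows listed from N to S) into layers, baseplate first."""
    if baseplate == "S":
        return [row[:] for row in reversed(field)]
    if baseplate == "N":
        return [row[:] for row in field]
    if baseplate == "W":
        return [list(col) for col in zip(*field)]
    if baseplate == "E":
        return [list(col) for col in reversed(list(zip(*field)))]
    raise ValueError("baseplate must be one of N, E, S, W")


def from_build_frame(layers, baseplate):
    """Inverse of to_build_frame: map filtered layers back to the field."""
    if baseplate == "S":
        return [row[:] for row in reversed(layers)]
    if baseplate == "N":
        return [row[:] for row in layers]
    if baseplate == "W":
        return [list(row) for row in zip(*layers)]
    if baseplate == "E":
        return [list(row) for row in zip(*reversed(layers))]
    raise ValueError("baseplate must be one of N, E, S, W")
```

The ordering of elements within a layer may be mirrored by these transformations. This does not affect the filter result, because the support region is symmetric about the element directly below.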

3.3 Tensile test case

The domain of the tensile test case is discretized with 70×70 finite elements, the maximum volume is set to 30 %, and density filtering is applied with a radius of 3.0 element widths. In this test case, as in Section 3.1, both the regular (ξ 0=0) and modified P-norm formulation (ξ 0>0) have been applied, in order to illustrate the importance of the proposed modification. Figure 6 shows designs obtained using OC and MMA optimizers, for two different values of ξ 0. All cases have been run for 250 iterations with default optimizer settings, with exception of the OC ξ 0 = 0.5 case, which required a reduced move limit of 0.01 instead of 0.05 to converge.

All designs in Fig. 6 feature a solid bar connecting the load to the support as expected, but have also formed structures to allow manufacturing of this bar at an elevated height from the baseplate. Overhanging sections at the limit angle of 45° can also be recognized. Note that in reality a bar-shaped design could simply be translated to the baseplate to eliminate the need for support material, but the intention of this test problem is to study the ability of the AM filter to create printable solutions. Note also that there are no prescribed displacements applied at the base to provide mechanical support to the component in its loadcase (see Fig. 5): the creation of the supporting structures is driven by printability requirements.

Regarding objective values, P-norm results ( ξ 0 = 0) outperform the ξ 0 = 0.5 cases. However, both optimizers clearly exploit the possibility of gradually increasing density values in the build direction that was also seen in the pattern tests (Fig. 6a,b). This is not desirable. The modified P-norm, with suppression of overshoot at lower densities ( ξ 0 = 0.5), results in higher compliances as more material must be invested in supports. The MMA design (Fig. 6d) features a connecting bar at the base, which adds some stiffness in horizontal direction by connecting the two support struts. In this way, not all support material is ‘wasted’, as it now serves a dual purpose: printability and improving the objective. In the OC case (Fig. 6c), a different local optimum is found, but compliances are not far apart. As the modified P-norm results show superior printability, we will exclusively use this in the following examples.

The addition of a strongly nonlinear AM filter does not make the optimization problem easier to solve, and numerical tests indicate that the P-norm modification further increases its complexity. As a result, the OC optimizer needed a tightened move limit and an increased number of iterations in the latter case. MMA proved more suited to handle the increased nonlinearity, and moreover it does not require repeated AM filter evaluations within each iteration as the OC method does. The rest of the numerical examples have been optimized using MMA, with default settings.

The designs presented in Fig. 6 all contain a relatively large amount of intermediate density, as also indicated by the measures of nondiscreteness ( M n d , Sigmund (2007)) in the order of 30 %, reported in the caption. For the ξ 0 = 0 cases, the undesired low density support structures contribute to this value, but the main cause is the applied density filter with a relatively large radius of 3 element widths. This enforces a gradual transition between solid and void regions, leading to intermediate density zones near all structural boundaries.
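For reference, the measure of nondiscreteness of Sigmund (2007) averages \(4\rho_e(1-\rho_e)\) over all elements and is expressed as a percentage. A minimal Python sketch is given below. The function name and the list-of-rows input are assumptions of this example.

```python
def nondiscreteness(densities):
    """Measure of nondiscreteness in percent.

    Returns 0 for a pure 0/1 design and 100 when all densities equal 0.5.
    """
    values = [v for row in densities for v in row]
    return 100.0 * sum(4.0 * v * (1.0 - v) for v in values) / len(values)


print(nondiscreteness([[0.0, 1.0, 0.5, 0.5]]))  # half of the elements are grey
```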

To illustrate the influence of filter radius on the design and its nondiscreteness, case d) (MMA, \(\xi_0 = 0.5\)) has also been optimized using a density filter of half the size, and without any density filter. Results are depicted in Fig. 7. The \(M_{nd}\) values (18 % and 1.5 %, respectively) show that nondiscreteness is primarily linked to filter size. The smaller filter allows finer detail, resulting in a lower compliance compared to Fig. 6d. While the designs obtained for different filters differ in their specific layout, the general concept with the dual-purpose support structures can be recognized in all three cases.

The case without density filtering has resulted in a local optimum, as the compliance of the filtered design is better. Checkerboard patterns also appear (Fig. 7b), as these are properly supported structures according to the AM process definition, and therefore not suppressed by the AM filter. However, apart from their misleading artificial stiffness, checkerboard patterns are also undesired for manufacturability reasons. Hence, use of a density filter in combination with the proposed AM filter is recommended, and all following examples use this combination.

3.4 MBB beam

3.4.1 Influence of part orientation

In this study, the MBB beam domain is discretized using 180×60 finite elements, and prior to the AM filter a density filter with a radius of 2.0 element widths is applied to create a filtered blueprint design. Optimization is performed for 4 different part orientations (N, E, S, W) using 300 MMA iterations. In addition, the problem is solved without the AM filter. Figure 8 shows the resulting designs.

The different orientations clearly have resulted in different topologies. All designs fully comply with the stated AM fabrication rules, and the influence of the 45° overhang angle limitation can be recognized by various members that reach exactly this angle. Interestingly, similar patterns to those suggested by Leary et al. (2014) to make existing designs printable by adding auxiliary structures, appear in these optimized designs directly due to the inclusion of the AM filter in the TO process. This is seen in particular in the S and N orientation.

Relative to the reference MBB beam optimized without AM filter, the N/E/S/W designs achieved compliances of 111 %, 101 %, 106 % and 100.0 %, respectively. Clearly the W orientation is preferred in this case, achieving a printable design without loss of performance. The reference design without AM filter cannot be printed in any of the considered orientations. The N case shows the largest compliance increase compared to the reference design, as a relatively large amount of material must be invested to support the long horizontal member at the bottom of the structure. The same holds for the S case, to a lesser extent. The E and W cases only require modest design changes, resulting in marginal compliance increases.

3.4.2 Influence of AM filter parameters

Using the most challenging case of the MBB beam, in the N orientation, we study the influence of parameters P and ε used in the definition of the smooth max and min operators, respectively. The aim is to give an impression of the effect of these parameters, and the sensitivity of designs, for this particular example. Results for various combinations of P and ε are shown in Fig. 9. As these parameters affect the accuracy of the smooth min and max operators, they also affect the overshoot of densities in uniform regions. This can result in printed density values ξ > 1, which affects the obtained compliance. To make an unbiased comparison in this parameter study, all densities have been capped exactly at 1 in a post-processing step, after which the compliance of the design was evaluated. This compliance, normalized by that of the reference design without AM filtering, is reported in Fig. 9 together with the maximum ξ-value found in the optimized design.

While the designs in Fig. 9 show a wide variety of topologies, a first thing that can be noticed is that the normalized compliances are not that far apart. The best and worst values differ by only 7.5 %. The obtained performances are not very sensitive to the values of P and ε for this example, and selection of these values is therefore not very critical.

Considering ε, which controls the accuracy of the smooth min operator, as expected the overshoot in density increases for larger ε values. At the same time, the general trend is that better compliances are obtained for larger ε. We suspect that reduced ε, while giving improved accuracy, increases the nonlinearity and nonconvexity of the problem, making it harder for the optimizer to converge to superior (local) optima. The value of \(\varepsilon = 10^{-4}\) seems a good compromise, with maximum density overshoot remaining below 0.5 %.

The P-norm parameter P has a more subtle effect on the results. Density overshoot increases slightly with decreasing P, as the P-norm increasingly overestimates printed densities in fully supported regions. This effect is most clearly seen for high ε, e.g. \(\varepsilon = 10^{-3}\). Although the trend is not universal, the majority of cases indicate a slight improvement of compliance for increasing P. The main conclusion of this parameter study is however, that there are no clear ‘best’ values and that performances are fairly insensitive to the AM filter parameters.

3.4.3 Influence of mesh refinement

The aim of this section is to illustrate the effect of mesh refinement. The MBB beam in S orientation is considered as a test problem. As the discretization is refined, the filter radius (defined in element widths) is increased by the same factor, such that the absolute size of the filter remains unchanged. The obtained designs are depicted in Fig. 10a–d.

Fig. 10 Results obtained for various discretizations of the MBB beam problem with the baseplate in S orientation (indicated in blue). The filter radius R is given in element units, and compliances C are normalized with respect to the unrestricted 180×60 reference design from Fig. 8e

Different topologies are obtained for each resolution, so in spite of using a density filter mesh-independence is not achieved. This is caused by the mesh-dependent nature of the AM filter, which defines relations between densities in adjacent elements. When refining the mesh, the AM fabrication conditions are enforced on a finer lengthscale than on coarser meshes. In terms of performance, no significant changes are observed in this case in final compliance and nondiscreteness. The \(M_{nd}\) values increase slightly with mesh refinement; presumably this is linked to the number of members and boundaries present in the designs.

A limitation of the proposed AM filter can be observed in the 360×120 case (Fig. 10d): at the location indicated by the red arrow a member is present that is not fully dense, but shows increasing density in the build direction. Mesh refinement has led to an increased number of layers in the build direction, and the numerical errors due to the smooth min/max operators can accumulate to noticeable positive density gradients in the build direction. As this member has primarily a support function for manufacturability, rather than a structural function, achieving full density to maximize its stiffness apparently is not optimal. Instead, the optimizer exploits the fact that the AM filter allows a slight gradual build-up of density at finite P and ε values, and renders this member with a density gradient such that it can contribute with minimal material usage to the support of the structurally important horizontal top beam.

This issue could be dealt with in post-processing, as a clear design interpretation is still possible. However, it is preferable to address it within the optimization itself. One solution is to reduce the approximation errors by using stricter settings for P and ε. Another effective approach is to apply continuation to the \(\xi_0\) parameter, which controls the density level at which the approximations result in zero error for regions of uniform density. Starting the optimization with the default value of \(\xi_0 = 0.5\), and increasing it by a factor of 1.15 at iterations 150, 225 and 300, resulted after 500 iterations in the design depicted in Fig. 10e. The problematic member has disappeared, as the stricter AM filter after continuation increasingly suppresses the option for the optimizer to build structures with gradually increasing density. However, the AM filter still allows intermediate density members with constant or decreasing density, as long as they are properly supported. This design indeed features a layer of intermediate density (indicated by the arrow in Fig. 10e), which is properly supported. In addition, all boundaries not affected by the AM filter show intermediate densities due to density filtering. An approach to enforce fully dense results is discussed in the next section.
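The continuation scheme described above amounts to a simple step schedule, sketched here in Python for illustration. The function name is hypothetical, and it is an assumption that each increase takes effect from the listed iteration onward.

```python
def xi0_schedule(iteration, xi0_start=0.5, factor=1.15, steps=(150, 225, 300)):
    """Value of xi0 in effect at the given iteration.

    Assumption: each update applies from the listed iteration onward.
    """
    n_updates = sum(1 for step in steps if iteration >= step)
    return xi0_start * factor ** n_updates


for it in (1, 150, 225, 300, 500):
    print(it, xi0_schedule(it))
```

With these settings \(\xi_0\) rises from 0.5 to approximately 0.76 after the third update and then remains constant until iteration 500.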

3.4.4 Combination with Heaviside projection

As seen in the previous examples, areas with intermediate densities may occur in obtained designs, as long as they are supported according to the assumed AM fabrication rules. However, in most cases a crisp black/white result is preferred, ready for printing without additional interpretation. To achieve this, the proposed AM filter can be combined with existing measures for suppression of intermediate densities. To demonstrate this, here we apply a volume-preserving Heaviside projection scheme (Xu et al. 2010) to the MBB problem on a mesh of 360×120 elements, again with a filter radius of 4 element widths. The \(\tanh \)-based formulation proposed by Wang et al. (2011) is applied, defined as:

\[ x_{i} = \frac{\tanh (\beta \eta ) + \tanh \left (\beta (\tilde {x}_{i} - \eta )\right )}{\tanh (\beta \eta ) + \tanh \left (\beta (1 - \eta )\right )} \]

Here \(\tilde {x}_{i}\) denotes a density variable obtained after applying density filtering on the design field, and \(x_{i}\) is the blueprint density. The parameter β controls the steepness of the projection and η sets its threshold.

Continuation is performed by doubling the β parameter every 125 iterations, starting from 2.0. The η parameter, initially 0.5, is simultaneously adjusted to satisfy the volume constraint, making the parameter change less disruptive for the optimizer. In addition, continuation is applied to the AM filter parameter \(\xi_0\) following the scheme introduced in the previous section.
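A minimal Python sketch of this projection step is given below for illustration; it is not the implementation used for the results. It uses the tanh projection of Wang et al. (2011) with steepness β and threshold η, a β schedule that doubles every 125 iterations, and a bisection on η. The function names, the zero-based iteration count, and the choice to match the mean of the projected field to a given target volume fraction are assumptions.

```python
import math


def project(x_tilde, beta, eta):
    """tanh-based Heaviside projection of a single filtered density."""
    num = math.tanh(beta * eta) + math.tanh(beta * (x_tilde - eta))
    den = math.tanh(beta * eta) + math.tanh(beta * (1.0 - eta))
    return num / den


def beta_schedule(iteration, beta0=2.0, period=125):
    """beta doubles every `period` iterations (iteration counted from 0)."""
    return beta0 * 2 ** (iteration // period)


def eta_for_volume(x_tilde, beta, target, tol=1e-10):
    """Bisection on eta so that the mean projected density equals target.

    The projected volume decreases as eta increases, so a projected volume
    above the target means the threshold must be raised.
    """
    lo, hi = 0.0, 1.0
    while hi - lo > tol:
        eta = 0.5 * (lo + hi)
        mean = sum(project(x, beta, eta) for x in x_tilde) / len(x_tilde)
        if mean > target:
            lo = eta
        else:
            hi = eta
    return 0.5 * (lo + hi)


filtered = [0.1, 0.3, 0.5, 0.7, 0.9, 0.2]
target = sum(filtered) / len(filtered)
beta = beta_schedule(300)
eta = eta_for_volume(filtered, beta, target)
print(beta, round(eta, 4))
```

Re-solving for η whenever β is doubled keeps the projected volume at the target value, so the optimizer does not have to recover from a sudden constraint violation.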

The results obtained after 500 iterations are shown in Fig. 11. All designs are crisp and feature low \(M_{nd}\) values, which shows that the AM filter can effectively be combined with Heaviside projection. It can be observed that fine features of the design are smaller than the length scale imposed by the filter radius. Neither the AM filter nor the Heaviside projection preserves length scale, as discussed in Section 3.4.3 and in Wang et al. (2011), respectively.

The N/E/S/W designs achieved compliances of 106 %, 102 %, 102 % and 99.9 %, respectively, relative to the reference MBB beam optimized without AM filter (Fig. 11e). As before, the changes in performance caused by enforcing printability are relatively minor. In fact, the W orientation result performs slightly better than the unrestricted case, while the topologies are quite similar. Either the reference case is a local optimum, or the sharp edges caused by the 45° overhang limit imposed by the AM filter provide an advantage over the smoothed Heaviside projection in this case (\(\beta_{final} = 16\)). The lower \(M_{nd}\) value of the W design could be an indication of this.

The layout of the S orientation result (Fig. 11c) shows conceptual similarities to that obtained without Heaviside projection (Fig. 10e), but the geometries and the level of crispness and detail are clearly different. In terms of performance, Heaviside projection resulted in a 3.75 % lower compliance compared to the case using only density filtering. We speculate that this is because Heaviside projection allows for fully solid designs, while density filtering restricts solutions to structures with intermediate density boundaries that are less efficient in minimizing compliance.

4 Discussion

The examples demonstrate that the proposed AM filter is effective at generating designs that can be printed without additional supports. Not only does this save material, printing time and post-processing costs, it also makes modification of optimized designs for printability unnecessary, such that optimal performance is not compromised. It was also shown that in some cases the optimizer can take advantage of the employed smooth approximations by creating support structures with slight density gradients in the build direction. This phenomenon could be counteracted effectively by a \(\xi_0\)-continuation strategy.

There are, however, some limitations that must be mentioned. Firstly, the filter is not process-specific and only provides an approximation of the fabrication envelope of a particular process. The overhang angle is fixed at 45°, although this can be adjusted by changing the element aspect ratios. Secondly, the presented filter is defined for a regular mesh where the part is oriented in a principal direction. This limitation can be overcome by a mapping or transformation of the domain, at the cost of an increase in complexity. Thirdly, this AM filter primarily targets a particular geometrical limitation of many AM processes: the critical overhang angle. It does not prevent other undesired geometrical features, such as enclosed cavities, from appearing in the solution. Possibly the present AM filter can be combined with approaches to suppress cavities, e.g. Liu et al. (2015). Other important aspects such as part deformation, overheating during processing and residual stresses are not included and require far more sophisticated and computationally demanding process models.
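The relation between element aspect ratio and overhang angle mentioned above can be made explicit with a short Python sketch. It assumes that the support rule permits a lateral offset of one element per layer and that the angle is measured between the downfacing surface and the baseplate plane; the function names are hypothetical.

```python
import math


def overhang_angle_deg(elem_width, elem_height):
    """Critical overhang angle (degrees from the baseplate plane) implied by
    a one-element lateral offset per layer of height elem_height."""
    return math.degrees(math.atan2(elem_height, elem_width))


def aspect_ratio_for_angle(angle_deg):
    """Element height/width ratio that yields the requested critical angle."""
    return math.tan(math.radians(angle_deg))


print(overhang_angle_deg(1.0, 1.0))   # square elements
print(aspect_ratio_for_angle(60.0))   # ratio needed for a 60 degree limit
```

Under this assumption square elements reproduce the 45° limit, and elements taller than they are wide give a steeper, more conservative limit.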

Given these properties, this AM filter is expected to be most useful in an early design stage, where the use of regular meshes is not an important limitation and the chosen process model is sufficient. Nonetheless, decisions made in early stages strongly impact final performance and costs, and therefore including even approximate AM restrictions from the beginning can be crucial.

5 Conclusion

A new filter for density-based topology optimization is proposed that mimics a typical powderbed-based Additive Manufacturing (AM) process. In a layerwise process, it transforms a given blueprint design to an ‘as-printed’ design for performance evaluation. In this way, unprintable designs with e.g. infeasible overhanging sections are rigorously banned from the design space. The computational cost of both the filter and its sensitivity analysis is small compared to the finite element analysis, and the filter can be successfully combined with other techniques commonly applied in density-based topology optimization, e.g. Heaviside projection for black/white designs.

The proposed AM filter only targets fundamental geometrical printability aspects, and does not include more sophisticated criteria related to internal stresses, distortion, enclosed cavities, etc. Also, the critical overhang angle and part orientations are directly linked to the applied discretization in the described formulation. These limitations are to be addressed in future investigations.

With this paper, a sample Matlab™ implementation is provided for use with popular topology optimization scripts. An implementation in 3D has also been developed and will be presented elsewhere. Given its ease of use and low computational burden it is expected that the present virtual fabrication filter can be of considerable practical value as a first-order approximation.

References

Andreassen E, Clausen A, Schevenels M, Lazarov B, Sigmund O (2011) Efficient topology optimization in MATLAB using 88 lines of code. Struct Multidiscip Optim 43(1):1–16

Atzeni E, Salmi A (2012) Economics of additive manufacturing for end-usable metal parts. Int J Adv Manuf Technol 62(9-12):1147–1155

Bendsøe M (1989) Optimal shape design as a material distribution problem. Struct Optim 1(4):193–202

Bourdin B (2001) Filters in topology optimization. Int J Numer Methods Eng 50(9):2143–2158

Brackett D, Ashcroft I, Hague R (2011) Topology optimization for additive manufacturing. In: Proceedings of the solid freeform fabrication symposium, Austin, TX, pp 348–362

Bruns T, Tortorelli D (2001) Topology optimization of non-linear elastic structures and compliant mechanisms. Comput Methods Appl Mech Eng 190(26–27):3443–3459

Calignano F (2014) Design optimization of supports for overhanging structures in aluminum and titanium alloys by selective laser melting. Mater Des 64:203–213

Gao W, Zhang Y, Ramanujan D, Ramani K, Chen Y, Williams C, Wang C, Shin Y, Zhang S, Zavattieri P (2015) The status, challenges, and future of additive manufacturing in engineering. Computer-Aided Design

Gaynor A, Guest J (2014) Topology optimization for additive manufacturing: considering maximum overhang constraint. In: 15th AIAA/ISSMO multidisciplinary analysis and optimization conference, pp 16–20

Gibson I, Rosen D, Stucker B (2015) Additive Manufacturing Technologies. Springer, Berlin

Kranz J, Herzog D, Emmelmann C (2015) Design guidelines for laser additive manufacturing of lightweight structures in TiAl6V4. J Laser Appl 27(S1):S14001

Leary M, Merli L, Torti F, Mazur M, Brandt M (2014) Optimal topology for additive manufacture: a method for enabling additive manufacture of support-free optimal structures. Mater Des 63:678–690

Langelaar M (2016) Topology optimization of 3D self-supporting structures for additive manufacturing. Additive Manufacturing 12(A):60–70

Liu S, Li Q, Chen W, Tong L, Cheng G (2015) An identification method for enclosed voids restriction in manufacturability design for additive manufacturing structures. Front Mech Eng 10(2):126–137

Mertens R, Clijsters S, Kempen K, Kruth JP (2014) Optimization of scan strategies in selective laser melting of aluminum parts with downfacing areas. J Manuf Sci Eng 136(6):061012

Rosen D (2014) Design for additive manufacturing: past, present, and future directions. J Mech Des 136(9):090301

Rozvany G, Zhou M, Birker T (1992) Generalized shape optimization without homogenization. Struct Optim 4(3-4):250–252

Sigmund O (2001) A 99 line topology optimization code written in Matlab. Struct Multidiscip Optim 21(2):120–127

Sigmund O (2007) Morphology-based black and white filters for topology optimization. Struct Multidiscip Optim 33(4-5):401–424

Strano G, Hao L, Everson R, Evans K (2013) A new approach to the design and optimisation of support structures in additive manufacturing. Int J Adv Manuf Technol 66(9-12):1247–1254

Svanberg K (1987) The method of moving asymptotes - a new method for structural optimization. Int J Numer Methods Eng 24(2):359–373

Van Keulen F, Haftka R, Kim N (2005) Review of options for structural design sensitivity analysis. Part 1: Linear systems. Comput Methods Appl Mech Eng 194(30):3213–3243

Vanek J, Galicia J, Benes B (2014) Clever support: efficient support structure generation for digital fabrication. In: Computer graphics forum, Wiley Online Library, vol 33, pp 117–125

Wang F, Lazarov B, Sigmund O (2011) On projection methods, convergence and robust formulations in topology optimization. Struct Multidiscip Optim 43(6):767–784

Wang D, Yang Y, Yi Z, Su X (2013) Research on the fabricating quality optimization of the overhanging surface in SLM process. Int J Adv Manuf Technol 65(9-12):1471–1484

Weeks A (1996) Fundamentals of electronic image processing. SPIE Optical Engineering Press, Bellingham

Xu S, Cai Y, Cheng G (2010) Volume preserving nonlinear density filter based on Heaviside functions. Struct Multidiscip Optim 41(4):495–505

Zhu JH, Zhang WH, Xia L (2015) Topology optimization in aircraft and aerospace structures design. Archives of Computational Methods in Engineering 1–28

Acknowledgments

The author thanks Krister Svanberg for use of the MMA optimizer, and Fred van Keulen for the suggestion to explore an adjoint sensitivity formulation.

Open Access

This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

Appendix: Use of AMfilter.m

The proposed AM filter is provided as a Matlab™ subroutine named AMfilter.m. The data formats used in this routine are compatible with the well-known 99- and 88-line topology optimization codes (Sigmund 2001; Andreassen et al. 2011). It can be called in two ways:

1. To filter a blueprint density field ‘xPhys’:

   xPrint=AMfilter(xPhys,baseplate);

   This command filters blueprint field xPhys using a certain baseplate orientation, given by a character N/E/S/W or X, where the latter simply bypasses the AM filter.

2. To transform sensitivities, as described in Section 2.2:

   [xPrint,df1,df2,..]=...
       AMfilter(xPhys,baseplate,df1,df2,..);

   This call transforms as many sensitivity fields df as specified, and overwrites them with the result. Combining many sensitivities in a single call is more efficient. In the process, the density field is also filtered.
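To make the role of the baseplate argument in the first call concrete, the following Python sketch applies a simplified, non-smooth version of the layerwise rule to a small grid. It is not a port of AMfilter.m: it uses exact min/max operators instead of the smooth approximations, omits the sensitivity transformation of the second call, and assumes that each element is supported by the element directly beneath it and that element's two lateral neighbours. The function name and the grid layout (a list of rows, with row 0 at the top) are hypothetical.

```python
def am_filter_hard(x_phys, baseplate="S"):
    """Simplified AM filter with exact min/max (illustrative assumption).

    x_phys is a list of rows (row 0 on top). baseplate is one of
    N/E/S/W, or X to bypass the filter.
    """
    key = baseplate.upper()
    if key == "X":                       # bypass: return a copy
        return [row[:] for row in x_phys]
    if key in ("E", "W"):                # transpose so that columns become layers
        xt = [list(col) for col in zip(*x_phys)]
        out = am_filter_hard(xt, "S" if key == "E" else "N")
        return [list(col) for col in zip(*out)]
    if key == "N":                       # baseplate on top: flip, filter, flip back
        return am_filter_hard(x_phys[::-1], "S")[::-1]
    if key != "S":
        raise ValueError("baseplate must be one of N, E, S, W, X")

    nrows, ncols = len(x_phys), len(x_phys[0])
    printed = [row[:] for row in x_phys]  # bottom row rests on the baseplate
    for i in range(nrows - 2, -1, -1):    # sweep upward, layer by layer
        below = printed[i + 1]
        for j in range(ncols):
            support = max(below[max(j - 1, 0):min(j + 2, ncols)])
            printed[i][j] = min(x_phys[i][j], support)
    return printed


blueprint = [[1.0, 1.0, 1.0, 1.0, 1.0],
             [0.0, 0.0, 1.0, 0.0, 0.0],
             [0.0, 0.0, 1.0, 0.0, 0.0]]
for row in am_filter_hard(blueprint, "S"):
    print(row)
```

In this T-shaped example the unsupported ends of the top flange are removed when the baseplate is at the bottom (S), whereas the blueprint is returned unchanged for the N orientation, where the flange rests on the baseplate.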

AMfilter can be integrated into the 88 line code as follows (line numbers refer to the original code):

- Compute initial printed densities after line 47, based on blueprint densities xPhys [Call 1].
- Replace xPhys by xPrint in lines 54, 59, 60.
- Transform sensitivities dc and dv before filtering (line 62) [Call 2].
- Reduce the OC move limit to e.g. 0.05 (line 70).
- Compute printed densities inside the OC loop, after filtering (line 78) [Call 1].
- Replace xPhys by xPrint in lines 79, 85, 87.

Density filtering is preferred for its consistency. When using sensitivity filtering, the volume constraint sensitivity field should also be filtered, as it is no longer uniform. Depending on the considered case, further reduction of the default OC move limits may be required to handle the nonlinearity of the AM filter. Use of MMA is recommended, also to avoid repeated AMfilter calls inside the optimizer loop.

About this article

Cite this article

Langelaar, M. An additive manufacturing filter for topology optimization of print-ready designs. Struct Multidisc Optim 55, 871–883 (2017). https://doi.org/10.1007/s00158-016-1522-2

DOI: https://doi.org/10.1007/s00158-016-1522-2