Abstract

This paper traces the empiricist program from early debates between nativism and behaviorism within philosophy, through debates about early connectionist approaches within the cognitive sciences, and up to their recent iterations within the domain of deep learning. We demonstrate how current debates on the nature of cognition via deep network architecture echo some of the core issues from the Chomsky/Quine debate and investigate the strength of support offered by these various lines of research to the empiricist standpoint. Referencing literature from both computer science and philosophy, we conclude that the current state of deep learning does not offer strong encouragement to the empiricist side despite some arguments to the contrary.

1 Introduction

We aim to trace the strength of support for empiricism in several debates about the nature of human cognition from the 1950s to the present. We address early behaviorist approaches to learning, connectionism, and some influential versions of deep learning in turn. Each of these approaches has been criticized for significant limitations, and we will demonstrate that these limitations also undermine the support they are assumed to lend to empiricism. Surprisingly, some of the argumentative strategies used to attack these different research programs are very similar. While all three approaches have been used to defend empiricist theories of cognition, we find that such usage is largely unsupported.

The long-standing empiricist tradition is based on a firm belief that knowledge and the content of the mind arise primarily, if not exclusively, from sensory input. Its paradigmatic slogan, nihil est in intellectu quod non sit prius in sensu ("nothing is in the intellect that was not first in the senses"), was updated in the first decades of the twentieth century in attempts to construct a picture of the world from pure experience (the phenomenalism of Russell 1914 and Carnap 1928/1967). Through a series of transformations, the tradition went from explaining the internal model of the world as a collection of theories consistent with a very basic notion of observation to its various current iterations, which claim that sufficient data and the immense processing power of deep learning networks can by themselves reach, and possibly surpass, human-level cognitive capacities.

Alongside these philosophical developments, science also adopted empiricism early on as its fundamental approach to cognition. With the publications of Skinner and colleagues in the 1930s, empiricism became a standard scientific methodology. We stress that this methodology has strongly influenced connectionism (Walker 1992). Moreover, it still finds adherents in several areas of deep learning. These computer science strategies build on early behaviorism, conceived as a radical version of empiricism. They rely on large data sets and aim to match and surpass the achievements of human cognition simply by adding sufficient computing power. Our goal is to show that none of these strategies provides a justification for empiricism. Instead, we will conclude that, to match the human cognitive level, machine learning needs to embrace hybrid models, broadly inspired by Kantian approaches to cognition.

1.1 Skinner, behaviorism, and language learning

The first step in our intellectual history is the behaviorism of Skinner and his followers. We are aware that there are important predecessors to this school of thought (Walker 1992), but we do not concentrate on them, as they are less firmly grounded in theory and did not have to answer challenges to their theoretical commitments systematically. For us, the debate becomes genuinely about empiricism at the moment a serious contender rejects empiricist assumptions. While the first part of our philosophical story relies heavily on debates about language acquisition, it serves only to illustrate the general foundations of associationist learning strategies and the legitimate criticism they face. We first introduce the basic building blocks of behaviorism. We then take up Chomsky’s critique of behaviorism, beginning with his 1959 review of Skinner’s Verbal Behaviour and continuing through the 1970s, and examine how Skinner and Quine altered their positions in its light.

Let us start with the basic tenets of behaviorism. First, behaviorism holds that mental entities are not explanatory. This is not tantamount to a claim to eliminate the mental domain; rather, it is meant to expel the mental as an explanatory category in psychology. Hence the notorious ‘black box’ argument: whatever may go on inside the black box of our heads, i.e., a subject’s mental processes, is irrelevant to explanation. Second, the radical empiricism of behaviorism is restricted to externally observable inputs (known as stimuli) and outputs (known as behavior). It subscribes to a strictly empiricist understanding of what constitutes a credible scientific entity. If it is to be scientific, psychology must search for correlations between stimuli and behavior. Correlations identified by researchers do not necessarily correspond to traditional psychological notions. Third, behaviorism rejects traditional (or folk) categories of psychological explanation based on thoughts, attitudes, and other psychological states. Explanations of behavior must be based fundamentally on observed data. The work of a behaviorist should thus proceed in a piecemeal fashion, with scientists focusing on particular sets of stimuli and particular behaviors. The correlation of behavior with desired outcomes is at the heart of the behaviorist explanation of learning. Learning consists of links between stimulus and behavior governed by operant conditioning. In strict empiricist fashion, it is the history of encounters with a given phenomenon that shapes an individual’s future performance on the relevant task. Learning is shaped by the tendency to repeat behavior that is rewarded in a particular situation and to refrain from behavior that is punished. This process gives us the principles of positive and negative reinforcement, principles that would become crucial in artificial neural networks several decades later.
The strategy envisioned by the behaviorist theory of the mind aims to create an implicit list of probabilistic correlations between stimuli and responses, and little else (we will return to this point in Sect. 1.4 and later).
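The bare structure of such a stimulus-response list can be sketched in a few lines of Python. This is a toy illustration under our own assumptions, not a reconstruction of Skinner’s formalism: the class name StimulusResponseLearner, the additive update with a fixed learning rate, and the lower bound on association strengths are all hypothetical choices. The learner stores nothing but a table of association strengths, turns them into response probabilities, and adjusts them after reward or punishment.

```python
import random
from collections import defaultdict


class StimulusResponseLearner:
    """Hypothetical toy learner: nothing but a table of stimulus-response strengths."""

    def __init__(self, responses, learning_rate=0.5, seed=0):
        self.responses = list(responses)
        self.learning_rate = learning_rate
        # (stimulus, response) -> association strength; every link starts at 1.0
        self.strength = defaultdict(lambda: 1.0)
        self.rng = random.Random(seed)  # seeded so that runs are reproducible

    def probabilities(self, stimulus):
        """Normalize the strengths for this stimulus into response probabilities."""
        weights = [self.strength[(stimulus, r)] for r in self.responses]
        total = sum(weights)
        return {r: w / total for r, w in zip(self.responses, weights)}

    def respond(self, stimulus):
        """Emit a response sampled in proportion to its current strength."""
        probs = self.probabilities(stimulus)
        return self.rng.choices(self.responses, weights=[probs[r] for r in self.responses])[0]

    def reinforce(self, stimulus, response, reward):
        """Reward (> 0) strengthens the link; punishment (< 0) weakens it, down to a small floor."""
        key = (stimulus, response)
        self.strength[key] = max(0.01, self.strength[key] + self.learning_rate * reward)


# Usage: reward "red" in the presence of a red ball, punish every other response.
learner = StimulusResponseLearner(["red", "ball", "it smells funny in here"])
for _ in range(50):
    response = learner.respond("red ball")
    learner.reinforce("red ball", response, reward=1.0 if response == "red" else -1.0)
probs = learner.probabilities("red ball")
print(probs)
```

Nothing in the table records what a response is about; it only tracks which outputs were rewarded after which inputs.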

The behaviorist project culminates in Skinner’s attempt to construct an account of language learning in his 1957 work Verbal Behaviour. On his account, language is understood as behavior elicited by certain other (often linguistic) behavior. Given its highly speculative account of how we come to learn languages, Skinner’s theory would probably be largely forgotten by now were it not close to the behaviorist views held by Quine. As the criticism of Skinner transfers to Quine, one of the most influential empiricist philosophers of the twentieth century, it is worth focusing on his radical vision of the mind.

1.2 Quine and Chomsky

In philosophy, behaviorism is most famously represented by Quine. In fact, Quine and Skinner were close associates. On their picture, psychological processes are not based on internal representations or models; instead, they take place in the purely physical space of interactions. These processes are therefore not far removed from later connectionist views of categorization and other cognitive tasks, where interactions between neurons and input/output relations are all that matters.

In his work, Quine aimed to build a theory of language learning based on conditioning. When a child is presented with a red ball, for example, the child might be rewarded for uttering the word “red”. The basis for learning language (and hence everything else) is ostension, that is, repeatedly pointing to objects and naming them (Quine 1950). Our primary aim is not an exegesis of Quine. Rather, we concentrate on the argumentative exchange between Quine and his prime opponent, Chomsky, because it is this exchange that forced Quine to abandon some of his early strictly empiricist inclinations and implicitly embrace some nativist presuppositions.

As Chomsky has recently been involved in exchanges with deep learning advocates (Norvig 2017), we want to point out that these exchanges had a predecessor in the 1950s and afterward, namely Chomsky’s debates with Quine. Interestingly, several recent arguments about deep learning (see Sect. 3 below) resemble those employed in that early debate.

Chomsky questions the innocence of the notion of stimulus on which Quine’s account of language learning rests. To borrow his example, suppose we seat a subject in front of a red chair and wait for an utterance. If the subject says “red” (or “chair”, or “red chair”), we praise the subject’s correct utterance. Our cheering is meant to reinforce such utterances following presentation of such stimuli. But suppose the subject says, “It smells funny in here”. Then the stimulus of the utterance must be the smell of the room (and the bombardment of the subject’s olfactory receptors). In that case, what counts as the stimulus depends on what the subject utters. This leads to a bigger worry. By invoking the method of ostension, one tacitly introduces an intentional vocabulary. Such vocabulary needs to specify what a speaker intends to point to through an ostensive gesture. It must also identify the link between the referent object and the co-occurring utterance. This, Chomsky (1967) argues, is nothing other than a retreat to mentalist explanation. The account also becomes circular: to make sense of the response, we must refer to the stimulus, yet locating the stimulus requires knowing what the response is actually about. The intensionality of the utterance presents a further problem. The same stimulus may evoke many responses under various modes of presentation. Without recourse to mentalism, this many-to-one relation cannot be resolved at all.

These arguments first appeared in Chomsky’s review of Skinner’s Verbal Behaviour and apply, mutatis mutandis, to Quine’s position. They are all part of a more general problem in which utterances and stimuli seem to be independent of one another. For instance, we often utter names when their bearers are not present. Broader considerations about context play a crucial role in establishing whether any relation exists between an utterance and its referent. Quine seems to be aware of this complication, and in his later writings he claims that the many-to-one relation can be reduced via a specific mechanism within human subjects: “…learning depends indeed on both the public currency of the observation sentences and on a preestablished harmony of people’s private scales of perceptual similarity.” (1995, 254). It is worth noting that the metaphorical language of ‘preestablished harmony’ and ‘private scales’ neither offers a satisfactory explanation nor expresses a firm empiricist stance.

1.3 Mining for sentences: the probability of utterances

That more is needed to explicate the complex relation between worldly inputs and linguistic outputs is clear to many observers. Nulty describes the situation in no uncertain terms: “The typical empirical perspective on learning the referents of single terms is that of an overwhelming problem space in which the novitiate language learner must find the correct connections for words and objects from a practically infinite number of possible couplings” (2005, 377).

Empiricists have often tried to avoid falling into this abyss of infinite possibilities by invoking dispositional accounts linked to probability theory. Chomsky quotes Quine’s characterization of language as a “complex of present dispositions to verbal behavior, in which speakers of the same language have perforce come to resemble one another” (Chomsky 1968, p. 57, quoting Quine). He then notes that we can treat dispositions as probabilities: “[p]resumably, a complex of dispositions is representable as a set of probabilities for utterances (responses) in certain definable circumstances or situations” (ibid.).

This follows Skinner’s approach, in which “the probability that a verbal response of given form will occur at a given time is the basic datum to be predicted and controlled” (Skinner 1957, p. 27). “The response Quiet! is reinforced through the reduction of an aversive condition, and we can increase the probability of its occurrence by creating such a condition that is, by making a noise” (ibid., p. 35). All of this depends greatly on the notion of resemblanceFootnote 1 of the context of utterance, to which Skinner devotes a great deal of attention (as does Quine 1969a, b).

The aim is ultimately to eliminate intensional idioms and replace them with probabilities determined by frequencies. Skinner coins the word ‘tact’ to describe spontaneous verbal behavior in the presence of non-verbal stimuli (such as the presence of a dog, which could prompt the response, “Oh look, a doggy!”). In his discussion, he observes:

[i]t may be tempting to say that in a tact the response “refers to,” “mentions,” “announces,” “talks about,” “names,” “denotes,” or “describes” its stimulus. But the essential relation between response and controlling stimulus is precisely the same as in echoic, textual, and intraverbal behavior. We are not likely to say that the intraverbal stimulus is “referred to” by all the responses it evokes, or that an echoic or textual response “mentions” or “describes” its controlling variable. The only useful functional relation is expressed in the statement that the presence of a given stimulus raises the probability of occurrence of a given form of response. (ibid., p. 82)

This quote clearly illustrates the attempt to reduce intensional idioms to an extensional notion (the frequency of occurrence). But the ease with which behaviorists move from referential relations to purely statistical ones holding between utterances remains deeply problematic. Quine’s radical metaphysical physicalism makes things even worse. For him, physicalism means that, ultimately, there is nothing in the universe other than atoms moving in the void. Given this underlying approach, behaviorism is a natural fit, as stimuli and responses, unlike mental entities, are observable parts of the natural world. It follows that, fundamentally, the probabilities mentioned above do not link stimuli with sentences, but only one set of physical events with another.Footnote 2 In Sect. 2.4 we will demonstrate how analogous strategies based on statistics are used in machine learning to achieve human-level cognitive capacities.

1.4 The poverty of stimulus (via probabilities of utterances)

Chomsky attacks Quine’s behaviorism by arguing that the frequency of an utterance following some occurrence is effectively zero:

“...assuming ‘circumstances’ and ‘situations’ to be defined in terms of objective criteria, as Quine insists, it is surely the case that almost all entries in the situation-response matrix are null. That is, in any objectively definable situation, the probability of my producing any given sentence of English is zero, if probabilities are assessed on empirical grounds” (Chomsky 1975, pp. 310–311).

Hence, he concludes, the probability of producing a sentence in Japanese is the same as the probability of producing a sentence in English (i.e., zero) (Chomsky 1975, p. 311). This consequence follows from Quinean naturalism, as utterances in various languages are just physical phenomena and as such cannot be distinguished from each other on any non-physical ground. This makes statistical analysis an unsuitable starting point for linking utterances in various languages to their referents or causes. The discussion presented here is a probabilistic restatement of the poverty of stimulus argument.Footnote 3 In its standard form, this argument states that children do not learn language through stimulus, response, and reward alone, because these elements are not sufficiently structured to fix correct utterances (of words and sentences). Put differently, children acquire language far too quickly for acquisition to be a matter of finding the proper probabilistic matrix of stimuli and responses.Footnote 4

Interestingly, Quine does not respond by denying that a disposition toward particular types of linguistic behavior should be qualified probabilistically. He argues instead that Chomsky focuses on the wrong probabilities, because the probability of a disposition toward uttering a particular sentence is conditioned on very specific circumstances and hence is not zero at all:

I am puzzled by how quickly he [Chomsky] turns his back on the crucial phrase “in certain definable ‘circumstances.’” Solubility in water would be a pretty idle disposition if defined in terms of the absolute probability of dissolving, without reference to the circumstance of being in water. … Verbal dispositions would be pretty idle if defined in terms of the absolute probability of utterance out of the blue. I, among others, have talked mainly of verbal dispositions in a very specific circumstance: a questionnaire circumstance, the circumstance of being offered a sentence for assent or dissent or indecision or bizarreness reaction. (Quine 1972, pp. 444–445).

A similar attack is mounted by MacCorquodale:

Chomsky seems not to grasp the difference between the overall probability of occurrence of an item in a speaker's verbal repertoire, which is the frequency with which it occurs in his speech over time without regard to his momentary circumstances, and the momentary probability of a given response in some specified set of circumstances. (See, for example, Chomsky, 1959, p. 34). The two probabilities are very different. The overall probability that any speaker will say, for example, 'mulct', is very low; it occurs rarely in comparison with such responses as 'the' or 'of'. The probability that he will say 'mulct' may become momentarily extremely high, as when he sees the printed word. Of the two, overall probability is a typically linguistic concern, while momentary probability shifts are, in a sense, the very heart of the psychologists' problem, since they reflect the relation between speech and its controlling variables. Under what conditions does an organism speak an item from his repertoire? Simply knowing the repertoire tells us precisely nothing about that (MacCorquodale, 1970, p. 88).

Before we comment on the general issue, let us briefly comment on two problems we see in MacCorquodale’s critique. His notion of momentary probability is utterly idiosyncratic and does not correspond to anything in the standard literature on the topic. More importantly, his claim that under certain circumstances the relevant probability becomes “extremely high” is unwarranted unless we already know how language functions. Yet to know more about the functions of language, one needs to invoke the vocabulary of intentions, reference, ostension, circumstances, and other related phenomena that make a behaviorist reading unlikely. If, as we noted before, the probability ultimately links two physical events, there is no good reason to assume immense fluctuations in the probability distribution.
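The contrast between the overall probability of an utterance and its probability in a specified circumstance can be made concrete with simple frequency counts. The sketch below is illustrative only: the observation log and its circumstance labels are invented, and the helper names overall_probability and conditional_probability are hypothetical. It simply takes circumstances as already individuated, which is precisely what Chomsky’s objection calls into question.

```python
from collections import Counter

# Invented log of (circumstance, utterance) observations for a single speaker.
PRINTED = "printed word 'mulct' in view"
observations = (
    [("conversation", "the")] * 20
    + [("conversation", "of")] * 16
    + [(PRINTED, "mulct")] * 3
    + [(PRINTED, "the")] * 1
)


def overall_probability(utterance, obs):
    """Relative frequency of the utterance across all observations."""
    counts = Counter(u for _, u in obs)
    return counts[utterance] / len(obs)


def conditional_probability(utterance, circumstance, obs):
    """Relative frequency of the utterance within one labelled circumstance."""
    in_circumstance = [u for c, u in obs if c == circumstance]
    if not in_circumstance:
        return None  # circumstance never observed: frequencies give no estimate at all
    return in_circumstance.count(utterance) / len(in_circumstance)


print(overall_probability("mulct", observations))                      # 3/40
print(conditional_probability("mulct", PRINTED, observations))         # 3/4
print(conditional_probability("mulct", "conversation", observations))  # 0/36
print(conditional_probability("mulct", "lecture", observations))       # None
```

Conditioning raises the estimate for ‘mulct’ tenfold, but only because the relevant circumstance was labelled in advance; for a circumstance that was never observed, counting yields no estimate at all.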

Overall, if conditional probabilities are to offer a solution, we need to consider how they are obtained. Obtaining conditional probabilities by counting occurrences of pairs of words, phrases, and sentences would require immense amounts of data.Footnote 5 Given that this requirement is unrealistic in the case of human cognitive functioning, it seems some additional apparatus is needed to provide the relevant conditional probabilities. But reference to any such apparatus would, as Chomsky charged, also seem to reintroduce a priori notions and thereby weaken the empiricist aspirations of the Quinean project. As we will see, this debate on prior conditions for processing closely resembles debates on setting parameters within deep learning networks, which are addressed in the final parts of the paper (Sect. 4.2).
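The data requirement can be illustrated with the simplest count-based estimator, a maximum-likelihood bigram model of the kind used in statistical language modelling. This is our own illustrative choice, not a model proposed by Quine or Chomsky; the toy corpus and the function names are hypothetical. Even in a corpus using only seven distinct words, most possible word pairs are never observed and receive a conditional probability of zero, and the number of possible word sequences grows exponentially with sentence length.

```python
from collections import Counter


def bigram_counts(tokens):
    """Count adjacent word pairs (w1, w2) in a token sequence."""
    return Counter(zip(tokens, tokens[1:]))


def mle_conditional(prev, nxt, counts):
    """Maximum-likelihood estimate of P(nxt | prev) from raw pair counts."""
    total = sum(c for (first, _), c in counts.items() if first == prev)
    if total == 0:
        return None  # prev never observed as a first word: no estimate
    return counts[(prev, nxt)] / total  # unseen pairs get exactly 0.0


tokens = "the child sees the red ball and the child throws the red ball".split()
counts = bigram_counts(tokens)
vocabulary = set(tokens)

# Share of all possible ordered word pairs that actually occur in the corpus.
coverage = len(counts) / len(vocabulary) ** 2

print(len(vocabulary), len(counts), round(coverage, 3))  # 7 9 0.184
print(mle_conditional("the", "red", counts))   # 0.5
print(mle_conditional("the", "ball", counts))  # 0.0
print(mle_conditional("dog", "ball", counts))  # None

# Possible 10-word strings over a (hypothetical) 10,000-word vocabulary.
print(10_000 ** 10 == 10 ** 40)  # True
```

Forty of the 49 possible pairs are unobserved here; scaled to realistic vocabularies and sentence lengths, the gap between what is observed and what would have to be counted becomes astronomical.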

Alongside the above-mentioned issues, additional problems appear within the behaviorist paradigm. One of the most critical involves the notion of abstraction. Humans can abstract from concrete particulars to categories and then apply these categories to other exemplars (we might move from a golf ball, say, to the notion of a ‘sphere’ and then on to a ball ornament for a Christmas tree). Abstraction must employ mechanisms other than a basic form of induction over instances. Indeed, it is hard to see how any form of induction could lead to the formulation of claims about abstract objects. This is yet another restatement of the poverty of stimulus argument: no amount of exposure to stimuli gets us to abstract notions. Quine, of course, is aware of this difficulty. He thus supplements his account of language learning with the additional notion of ‘analogical synthesis’ (for discussion, see Gibson 1987). Chomsky responds that this notion is empirically empty; it could serve as a serious fix to the problem of abstraction only if we were provided with an exact account of what it is and how it is used (1969, p. 56). Section 3.2 will recount how the problematic issue of abstraction has resurfaced, decades later, within the debate on deep learning.

Under this argumentative pressure, Quine later appears to change his position. He denies that the introduction of mechanisms other than the correlation of stimulus and response amounts to an abandonment of behaviorism. For him, “empiricism of this modern sort, or behaviorism broadly so called, comes of the old empiricism by a drastic externalisation. The old empiricist looked inward upon his ideas; the new empiricist looks outward upon the social institution of language…. Externalised empiricism or behaviorism sees nothing uncongenial in the appeal to innate dispositions to overt behavior, innate readiness for language learning” (1969a, p. 58). In another paper from the same year, he is more specific:

Language aptitude is innate; language learning, on the other hand, in which that aptitude is put to work, turns on intersubjectively observable features of human behavior and its environing circumstances, there being no innate language and no telepathy. … Chomsky says 'I postulate a pre-linguistic (and presumably innate) 'quality space' with a built-in distance measure'. But 'postulate' is an odd word for it, since a quality space is so obviously a prerequisite of learning, and since distances in a quality space can be compared experimentally. Quine (1969b, p. 306).

Yet the notion of linguistic aptitude remains unexplained, and unless we learn more about it, the position is likely to collapse into a Chomskian model of the mind. In any case, this is a very different empiricism from the traditional one we started from.Footnote 6 We have moved from the original Humean picture, in which nothing is presupposed about the content of the mind, to a Kantian picture with inner mechanisms and structures that shape incoming stimuli. This marks the beginning of a tendency toward hybridization that we will observe repeatedly. Hybridization is the process of enriching an originally pure empiricist position with nativist or explicitly representational elements in the face of mounting criticism. While hybridization is not a problem in itself, it makes the defense of the original empiricist position significantly less compelling.Footnote 7 This argument will come to the fore in Sect. 3 with the discussion of the resource inefficiency of deep learning.

1.5 Beyond behaviorism

While essential in getting scientific psychology off the ground, behaviorism, with its extreme empiricist tendencies, became unattractive. It did not deliver on its original promise to provide a firm basis for reliable connections between stimuli and responses. Given the computational difficulties of such a task, behaviorists faced ever-increasing problems in constraining the domain over which probabilistic operations link inputs and outputs. More importantly, Chomsky forced empiricists to acknowledge that even if such a domain exists, it has to take into account inner mechanisms of the mind. This is an important sign of the demise of behaviorism’s original aspirations. Instead of a pristine black box, we now have a black box with functions, parameters, and inner structures. Once these are postulated, it is only a matter of time until we learn more about their precise values and mutual dependencies. The result is a model of the mind in which it would be odd not to label its inner workings as mental. Once this is allowed, the behaviorist program is over. A parallel story can be told about empiricism. As soon as Quine moves beyond input-based dependencies and amends his approach with “innate dispositions” (see Sect. 1.4), he significantly weakens its status. One might still insist (as he does) that we are merely altering empiricism. After all, ever since Kant, internal constraints on the mind’s processing of external inputs have been widely acknowledged (Van Cleve 1999). The question to be answered is how such constraints are to be mapped out for empiricism to deserve its name. As the reader will see at the end of this paper, there is growing evidence that the chances of preserving a credible version of empiricism are rather bleak.

1.6 Computationalism as anti-empiricist movement

Partly because of behaviorism’s inability to respond to the Chomskian criticism and partly because of the unavailability of viable alternatives, the two decades following the 1960s saw an almost complete rejection of empiricist approaches. Computationalism ruled supreme, with its emphasis on explicit inner representations that are both installed in early computers and postulated in biological minds.Footnote 8 Computationalism equates the mind with a device that applies algorithms to symbols endowed with meaning. Its starting point is psychological. It takes for granted traditional psychological mental states (beliefs, desires, etc.) and asks which processes allow these states to process worldly inputs and culminate in appropriate actions. Its view of the mind is compositional, and it takes concepts to be the basic building blocks of cognition (Pylyshyn and Demopoulos 1986). Concepts are combined in accordance with algorithmic rules to create novel contents that can be cognitively utilized in various ways (stored in memory, used in arguments, acted upon, etc.). There are several persistent difficulties with computationalism. We have little idea how the tenets of computationalism relate to the brain’s neural underpinnings of cognition, how concepts get their meanings, or how syntactic brain operations preserve semantic mental relations. Yet these are not the topics addressed in our paper. We only want to contrast the explicitly representationalist story of computationalism with both that of its behaviorist predecessor and that of its current successors in machine learning. In stark contrast to both, defenders of computationalism declare a strongly anti-empiricist stance, with concepts as representations being the precondition for processing empirical inputs and delivering relevant outputs. Where concepts come from is a difficult question, yet some version of nativism seems acceptable to most in the field (Fodor 1998; Marcus 2018a).
Given the various successes of computationalism across many domains, for quite some time it looked as if empiricism were a completely untenable position. Yet with the onset of connectionism in the 1980s, nativist assumptions gradually lost the upper hand.

2 Connectionism

Motivated by an alternative model of the mind, and also in response to the problems with computationalism briefly outlined in the previous section, a novel approach emerged in the late 1980s in the form of connectionism. It is important to note that the main terms and ideas that eventually paved the way toward connectionism still play a substantial role in machine learning today. Our task is not to trace developments within connectionism in any detail (for a very detailed exposition, see Schmidhuber 2015). Rather, we concentrate on those strategies within connectionism and its current iterations that tentatively support empiricism. We pay closer attention to the early installments of connectionism because we believe many of the current worries about empiricism in the domain of model-free machine learning and deep networks (discussed in Sect. 3) can be traced to its founding principles in Rumelhart and McClelland (1987).

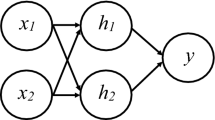

The major appeal of connectionism lies in its offer of a parsimonious model of the mind. Unlike a complex computer with a library of concepts and a set of commands, the connectionist model consists of a simple network made up of three (or more) fundamental layers. At one end of the network, there is an input layer that roughly corresponds to the sensory apparatus. At the other end, there is an output layer that stands for an action. This latter layer often consists of nothing other than a binary node with a Yes or No indicator. The most important part lies between the input and output layers: the so-called hidden layers. This is a complex web of nodes, often structured into several sublayers, where all of the substantive computation takes place. In the basic architecture, each node is usually connected to all nodes of the adjacent layers. Each node computes its output from the weighted sum of its incoming connections. If the weighted sum passes a certain threshold, the node sends a signal further and strengthens its connectivity with successive nodes. Conversely, if the weighted sum does not pass the node’s threshold, connectivity is weakened. This process of gradual change in connection strength between nodes aims at optimizing correct outputs for given inputs for the system as a whole. Given what has been said, training a neural network can be understood as finding a function that maps inputs to outputs. This function is optimized by training the network on considerable quantities of data.
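The mechanism just described can be sketched with threshold units in plain Python. The sketch rests on assumptions of our own: the weights of the small hidden-layer network are set by hand (so that it computes exclusive or, a function no single threshold unit can compute), and the informal idea of strengthening and weakening connections is illustrated with the classic perceptron learning rule for a single unit. Modern deep networks instead use differentiable activation functions trained by backpropagation, so the code is illustrative rather than representative of current practice. All names are hypothetical.

```python
def step(total):
    """Threshold activation: the node fires (1) only if its weighted input exceeds 0."""
    return 1 if total > 0 else 0


def layer_forward(inputs, weights, biases):
    """Each row of `weights` holds one node's incoming connection strengths."""
    return [
        step(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


# Hand-set weights: hidden node 1 computes "x1 OR x2", hidden node 2 "x1 AND x2";
# the output node fires for "OR but not AND", i.e. exclusive or.
HIDDEN_W, HIDDEN_B = [[1.0, 1.0], [1.0, 1.0]], [-0.5, -1.5]
OUTPUT_W, OUTPUT_B = [[1.0, -1.0]], [-0.5]


def xor_network(x1, x2):
    """Input layer -> hidden layer -> single binary output node."""
    hidden = layer_forward([x1, x2], HIDDEN_W, HIDDEN_B)
    return layer_forward(hidden, OUTPUT_W, OUTPUT_B)[0]


def train_perceptron(samples, epochs=20, learning_rate=1.0):
    """Perceptron rule: nudge weights toward the target whenever the output is wrong."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for (x1, x2), target in samples:
            output = step(weights[0] * x1 + weights[1] * x2 + bias)
            error = target - output  # +1 strengthens active connections, -1 weakens them, 0 leaves them
            weights[0] += learning_rate * error * x1
            weights[1] += learning_rate * error * x2
            bias += learning_rate * error
    return weights, bias


OR_SAMPLES = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]
weights, bias = train_perceptron(OR_SAMPLES)

print([xor_network(a, b) for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]])  # [0, 1, 1, 0]
print([step(weights[0] * a + weights[1] * b + bias) for (a, b), _ in OR_SAMPLES])  # [0, 1, 1, 1]
```

The perceptron rule ties weight changes to output errors; it stands in here for the informal description of strengthening and weakening rather than reproducing it exactly.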

2.1 The appeal of connectionism

It is indeed remarkable that these simple networks, often containing just a few dozen nodes and a corresponding number of connections, have achieved significant results across various fields of cognition. Several features of connectionism deserve special attention. Connectionist networks are very simple, with no explicit conceptual structure of their own. They are also modelled on the brain’s wiring, thereby overcoming the objection that computationalism is disconnected from its underlying biological substrate. Their flexibility allows for the experimental testing of various models of cognitive processing. Finally, in their thorough associationism, they are as close to an implementation of empiricist ideas as possible.Footnote 9 In diverse categorization and recognition tasks, artificial neural networks have surpassed expectations and performed very well, often approximating or even outperforming human subjects. Remarkable in their own right, these successes have led their defenders to rethink the basic elements of cognitive mechanisms (for a more detailed exposition, see Rumelhart 1998). As networks achieve their results primarily by being trained on large amounts of input data, one revolutionary consequence of their success is the implicit support it lends to empiricism.

Advocates of connectionism stress that networks are modelled on the actual (albeit extremely simplified) biology of the brain. The functionality of both neurons and network nodes depends on their interconnectivity and their processing of incoming signals. Points of similarity do not end at the level of architecture. They are also manifest at the cognitive level: like human minds, neural networks are well equipped to work with degraded, context-dependent, and multivariate inputs. Unlike classical computational models, where damage to a single component often has devastating consequences for overall task performance, networks are hardly affected by the failure of one or even several nodes. The output might be slightly less accurate, yet it remains reliable. Networks also cope with situations where the damage occurs not within their architecture but in the stimulus. Just as human beings can perceive and recognize objects under visually challenging conditions, connectionist networks produce correct outputs for partially occluded, degraded, noisy, or otherwise altered stimuli.

Despite these operational successes, there is little scope for ascribing individual features or recognizably human categories to a particular node.Footnote 10 Representations of the features relevant to performing a given categorization task are widely distributed. This has been exciting news for all defenders of anti-representationalism, because it seems to indicate that artificial systems have no need for robust, semantically evaluated representations. If networks prove successful across a variety of tasks, they might shed light on human minds as well. Perhaps our minds are also devoid of representations, and the contrary assumption has been held only because of our inability to shake off folk-psychological intuitions. Behind debates on representationalism lies a deeper question about the smallest building blocks of complex mental processes. While computationalism is fully committed to the existence of explicit representations (and has to face the worries their presence introduces), connectionism follows in the footsteps of Skinner and Quine in excluding explicit representational features from the mind.

While the debate on the nature of representations within networks would lead us away from our main target, we want at least to indicate what is at stake and which positions researchers have adopted on this issue. In classical computationalism, all representations are fully explicit and, whether they are taken as innate or implemented, there is no issue about their identity and specific role. Neural networks, by contrast, lack explicit representations, and a debate has emerged over how to account for their absence. Some authors (Rougier 2009; O’Brien and Opie 2009) argue that, given the causal role representations play in any explanation of cognitive processes, it is reasonable to assume that networks contain representations, albeit in an implicit form. Other authors argue that this assumption rests on a confusion. Darwiche (2018) puts the point succinctly:

Architecting the structure of a neural network is ‘function engineering’ not ‘representation learning,’ particularly since the structure is penalized and rewarded by virtue of its conformity with input-output pairs. The outcome of function engineering amounts to restricting the class of functions that can be learned using parameter estimation techniques…. The practice of representation learning is then an exercise of identifying the classes of functions that are suitable for certain tasks.
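Darwiche’s contrast between "function engineering" and parameter estimation can be made concrete with a classic textbook case, sketched here under our own assumptions (the perceptron learner and the XOR task are our illustrative choices, not Darwiche’s). The learning rule is identical in both runs; only the architecture, i.e. the features the model is allowed to combine, differs. With raw inputs alone, no setting of the weights can represent XOR, so parameter estimation is bound to fail; adding a hand-chosen product unit enlarges the class of learnable functions, and the very same rule then succeeds.

```python
# Toy XOR task: the label is 1 exactly when the two inputs differ.
XOR = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

def raw_features(x):
    """Architecture A: the raw inputs plus a bias term (a linear model)."""
    return (x[0], x[1], 1)

def engineered_features(x):
    """Architecture B: adds a designer-chosen product unit x0 * x1."""
    return (x[0], x[1], x[0] * x[1], 1)

def predict(w, f):
    return 1 if sum(wi * fi for wi, fi in zip(w, f)) > 0 else 0

def train_perceptron(featurize, data=XOR, epochs=100):
    """Classic perceptron rule: nudge the weights toward each misclassified example."""
    w = [0.0] * len(featurize(data[0][0]))
    for _ in range(epochs):
        for x, label in data:
            f = featurize(x)
            error = label - predict(w, f)  # -1, 0 or +1
            w = [wi + error * fi for wi, fi in zip(w, f)]
    return w

def accuracy(w, featurize, data=XOR):
    return sum(predict(w, featurize(x)) == label for x, label in data) / len(data)
```

Nothing in the learning rule changes between the two runs; what changes is the function class fixed in advance by the designer, which is the part Darwiche attributes to engineering rather than to learning.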

Whatever the outcome of this debate, it is important to see how the influence of connectionism extends beyond computer science and changes the landscape of cognitive science, psychology, and philosophy. While connectionism does not promote the radical behaviorist ‘black box’ approach, which recognizes only inputs and outputs and ignores the mind’s inner workings, it makes the mind significantly less transparent than its opponents would like. Were the connectionist program successful, Occam’s razor would make postulating genuine representations at the psychological level of explanation unlikely. Psychological terms, and their adoption at the level of scientific mentalism, would have to go, and the doors to empiricism would remain wide open.

2.2 Limitations of connectionist networks

While remarkably successful, connectionist networks are also prone to problems. We mention some of them only briefly, since the real target of our endeavor is the analogous set of problems in the newest incarnation of networks, within the deep learning paradigm. From the outset of their application to cognitive tasks, networks have been attacked for their inability to solve their target tasks properly. Particularly notorious are the exchanges between defenders and opponents of networks on compositionality and systematicity in natural languages (Fodor and Pylyshyn 1988; Aizawa 2003).

Even allowing room for skepticism about the notion of systematicity (cf. Johnson 2004), the difficulties in modelling compositional and systematic linguistic intuitions in connectionist networks seem to stem from the absence of any basic building blocks that could be combined into larger linguistic units.

While we cannot delve into these discussions more deeply, we would like at least to connect them to some other issues identified within the connectionist domain. These include difficulties with generalizing from concrete examples to a highly abstract level, and problems with general rules or output invariance (see Mayor et al. 2014 for an overview).

All these difficulties seem to originate from an overemphasis on empiricism. Any move from the concrete to the abstract level has been difficult for empiricists from early on, and technological advances offer no cheap rescue.

We are not arguing that connectionist networks are a priori unable to deal with the problematic cases that were used as weapons against them early on. On the contrary, many ingenious solutions to these challenges have been offered, and some are still in use. For the thesis of this paper, the problem lies elsewhere. While various solutions may have worked, they achieved satisfactory results only because the novel architectures introduced dedicated modules and specific structures within the networks to deal with the difficulties mentioned. Their introduction meant abandoning the original empiricist credentials. Once auxiliary dedicated structural features were added, the claim that networks operate purely on their inputs and arrive at abstractions on their own lost its credibility. These hybrid networks satisfied the majority of the connectionist community, whose emphasis had shifted to achieving target performance, but their empiricist undertone was lost. No one argues that complex connectionist networks with memory slots and explicit categorization modules offer strong support for empiricism.

2.3 From connectionism to deep learning

While the connectionist program has continued mostly uninterrupted, its philosophical significance temporarily waned. This was largely because the debate over connectionism moved from philosophical quarrels about the building blocks of cognition to practical concerns about the optimal architecture for the various tasks that systems were trained on. Yet very recent developments in the field (since around 2015) have once again galvanized disputes about suitable approaches to cognition based on complex neural networks. The last decade has seen a revolution in artificial intelligence (AI) based on a variety of sophisticated network architectures that are often grouped together under the rubric of ‘deep learning’ (DL). The term usually refers to very large-scale networks consisting of tens of thousands of nodes, with a multitude of interconnected layers and additional dedicated features. While starting from basic connectionist principles, deep learning resulted from a skillful combination of important architectural insights and technological advances that moved the field significantly forward.

Deep networks entered the public imagination by proving remarkably successful compared to all their competitors. They achieved superhuman results in a variety of domains, such as image recognition and highly sophisticated game playing (checkers, chess, Go, and StarCraft), and very good results in a number of other fields (a list can be found at https://deepindex.org/). Experts recognise that without hardware advances, the field could not have achieved these successes. Geffner (2018) admits that “The recent successes have to do with the gains in computational power and the ability to use deeper nets on more data.” (p. 2) In his extensive historical review, Schmidhuber (2015) describes how the techniques essential for the current string of deep network accomplishments are founded on principles and theories that have been known for decades. Similar observations have been made by Darwiche (2018), who credits Oren Etzioni (see ibid., fn. 9) with the thought that the theoretical foundations of deep learning are not particularly novel. Whether hardware bears the main responsibility for the successes of DL is debatable, but brute force alone is unlikely to account for them. Without the specific architectural advances that we comment on in the next section, the field would not have achieved what it did. Darwiche (ibid.) also points to the use of better statistical methods for data fitting in various current approaches. More skeptically, he attributes part of the hype surrounding deep learning to a downgrading of the measures of success. In machine translation, for example, early challenges quantified a system’s success at translating a previously unseen text into a foreign language and back, whereas newer approaches are content to give us a reasonable estimate of what the target text in the foreign language is about. Such so-called gist translation would fail the early criteria of translation success miserably, yet it has become the new standard of achievement.

We are not in a position to adjudicate the debate about the causes of DL’s successes. Instead, our investigation concerns its philosophical significance. Despite the much-publicized triumphs of DL and vocal claims to the contrary, we believe there are good reasons for skepticism about the kind of support deep networks provide for empiricism. Our aim in this part of the paper is twofold. We want to show that current debates on the nature of cognition via deep network architecture echo some of the core issues of the Chomsky/Quine debate, but our purpose goes further: we aim to provide additional arguments for skepticism about the overall tenability of empiricism.

2.4 Empiricism and deep learning

There are many types of deep networks, differing in their architectures and in the presence of specialized submodules. Instead of a simple feed-forward model, where information flows from input nodes through internal nodes towards output nodes, current, more complex nets include backward loops and dedicated memory modules. Because the field of deep networks is multifaceted and optimization tasks remain of prominent interest to the majority of researchers, only a few nets are built with intentionally empiricist principles in mind. Many researchers are aware of the distinction between bottom-up, purely data-driven networks and more complex setups that include internal modules, various gates, or encoder/decoder architectures (see Baroni 2020). All of these additional architectural features have been used to enhance networks’ performance. As we have already indicated, our primary concern lies with the first, seemingly simpler group of networks, devoid of dedicated internal modules. There are various ways to categorize these distinct types of nets. Darwiche (2018) speaks of model-based and function-based approaches to AI, with the latter determined solely by inputs. Geffner (2018) distinguishes between solvers and learners. While solvers compute their outputs in accordance with a model, learners are empiricist in nature, driven by the data. Geffner further divides learners into two subgroups: deep learners and deep reinforcement learners. The latter group is of most interest to us, as it uses “a non-supervised method that learns from experience, where the error function depends on the value of states and their successors” (2018, p. 2), whereas standard deep learning regularly relies on supervision. An analogous distinction between model-free and model-based systems is drawn in more detail by Lake et al.:

The statistical pattern recognition approach treats prediction as primary, usually in the context of a specific classification, regression, or control task. In this view, learning is about discovering features that have high-value states in common—a shared label in a classification setting or a shared value in a reinforcement learning setting—across a large, diverse set of training data. The alternative approach treats models of the world as primary, where learning is the process of model building. The difference between pattern recognition and model building, between prediction and explanation, is central to our view of human intelligence. (Lake et al. 2017, p. 3)

In this text we follow the terminology of Lake et al. and speak of model-free and model-based systems, with the model-free approach being our primary concern.
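Geffner’s characterization of deep reinforcement learners turns on an error signal that "depends on the value of states and their successors". The standard form of such a signal is the temporal-difference (TD) error, r + γV(s′) − V(s). The sketch below is a minimal tabular TD(0) learner on a hypothetical five-state chain; the chain, the parameter values, and the lookup table standing in for the deep network that would approximate V in a real system are all our assumptions. No labels are supplied: the learner only ever compares its estimate for a state with its estimate for the successor state plus the observed reward.

```python
def td0_chain(n_states=5, episodes=500, alpha=0.1, gamma=0.9):
    """Tabular TD(0) on a hypothetical deterministic chain.

    States are 0..n_states-1; the agent always steps one state to the right,
    and entering the last (terminal) state yields reward 1. No labels are
    given: each update uses only V(s), V(s') and the observed reward.
    """
    terminal = n_states - 1
    V = [0.0] * n_states  # V[terminal] is never updated and stays 0
    for _ in range(episodes):
        s = 0
        while s != terminal:
            s_next = s + 1
            reward = 1.0 if s_next == terminal else 0.0
            td_error = reward + gamma * V[s_next] - V[s]  # state vs. successor value
            V[s] += alpha * td_error
            s = s_next
    return V

values = td0_chain()
```

With γ = 0.9 the estimates converge to 0.9^(3 − s) for the non-terminal states s = 0, …, 3, that is, the discounted value of the single reward at the end of the chain.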

The quotes above might lead one to believe that both kinds of system are essential for understanding cognition. However, part of the commotion surrounding recent advances in deep learning stems from the conviction of some researchers that there are good reasons to concentrate exclusively on model-free variants while regarding model-based approaches as secondary. If so, then the combination of ever larger data sets and brute-force computing would bring the final justification of empiricism. When Silver et al. (2017) describe their famous AlphaGo Zero system, they use unequivocal language:

… our results comprehensively demonstrate that a pure reinforcement learning approach is fully feasible, even in the most challenging of domains: it is possible to train to superhuman level, without human examples or guidance, given no knowledge of the domain beyond basic rules. (emphasis ours)

They then continue by contrasting the performance of their system with that of human beings:

… humankind has accumulated Go knowledge from millions of games played over thousands of years, collectively distilled into patterns, proverbs and books. In the space of a few days, starting tabula rasa, AlphaGo Zero was able to rediscover much of this Go knowledge, as well as novel strategies that provide new insights into the oldest of games. (emphasis ours).

The reader should note the straightforward gestures toward empiricism in both quotes. Some researchers even argue that model-free systems can do all the work we expect from any cognitive system. Ng endorses a full (present!) replacement of human cognitive capacities by artificial systems: “If a typical person can do a mental task with less than one second of thought, we can probably automate it using AI either now or in the near future.” (Ng 2016).

It is difficult to take these quotes seriously. While partly justified by the practical successes of their systems, these claims rely on strong philosophical assumptions that have been sufficiently scrutinized neither by the computer science community nor by outside experts. Defending empiricism in the form of model-free approaches requires a thorough philosophical exercise. At least one philosopher has offered methodical support for these optimistic judgments. In his 2018 paper, Buckner argues that the most direct philosophical support for empiricism comes from convolutional deep networks. Beyond the hardware advances already mentioned, three characteristics have combined to make convolutional DNs strikingly powerful: network depth, convolution, and pooling (Buckner 2018, p. 5350). Originally, a network’s depth referred to the number of its layers: the greater the number of layers, the deeper the network. By this measure, early connectionist attempts look fairly shallow and recent developments significantly deep. Yet the current complexity of network architectures may call for a more detailed depth criterion, and several suggestions from the field go beyond simply counting layers. Schmidhuber (2015) highlights the role of credit assignment paths in tracing the causal origins of a given output, while Sun et al. (2016) concentrate on the effectiveness of margin bounds. On all approaches, depth is a measure of the complexity of network processing and reflects the ever-increasing hierarchical complexity of network architecture. From the perspective of empiricism, it is important to note that greater depth permits a more nuanced analysis of the input data. In combination with the other two features (convolution and pooling), layers at different depths can focus on distinct features of the input.

The invention of convolution altered the foundations of neural networks substantially. Convolution applies filters that respond to particular local features of the input, which can then serve as evidence that the input belongs to a particular category. For example, filters may detect the presence of an edge or a colour at a particular location in a visual object recognition task. In this way, convolution extracts from the image the specific features that lead to its ultimate classification. Crucially, the combination of depth and convolution allows for the detection of invariances that are common across various target objects from a given category but might not be straightforwardly detectable in each instance of an object’s presentation. In face detection, for example, the eyes might be fully visible in one image, seen only partially from an angle in another, and completely hidden in a third; yet the network learns that, despite these noticeable differences, one category of object (a face) is always present. Finally, the pooling mechanism aggregates the detected lower-level features and passes a summary of their presence to the next layer. It works either by averaging over the results of convolution or by a particular down-sampling operation such as max-pooling (for details, see the cited piece by Buckner). From the perspective of empiricism, pooling allows categories to be captured across various layers of complexity. Analogously to the processing of images in the brain’s visual areas, networks can work their way up from the detection of the simplest features, like lines and shadows, all the way to high-level categories like faces. Thanks to these three characteristics of depth, convolution, and pooling, networks can learn to classify a set of objects despite a great number of nuisance variations in their presentation.
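The interplay of convolution and pooling described above can be sketched in a few lines of plain Python. This is a toy under explicit assumptions: the 2×2 "vertical edge" filter is set by hand (in a trained network, filter values are learned), the images are tiny binary grids, and the helper names are ours rather than any library’s. As in most deep-learning libraries, "convolution" is implemented as cross-correlation. The sketch shows that the raw convolution maps of two images whose edges sit one column apart differ, while their max-pooled maps coincide, which is the small-scale positional invariance that pooling contributes.

```python
def conv2d(image, kernel):
    """'Valid' 2-D cross-correlation (what deep-learning libraries call convolution)."""
    kh, kw = len(kernel), len(kernel[0])
    return [[sum(kernel[i][j] * image[r + i][c + j]
                 for i in range(kh) for j in range(kw))
             for c in range(len(image[0]) - kw + 1)]
            for r in range(len(image) - kh + 1)]

def relu(fmap):
    """Keep positive filter responses, zero out the rest."""
    return [[max(0.0, v) for v in row] for row in fmap]

def max_pool(fmap, size=2):
    """Non-overlapping max-pooling; a trailing row/column that cannot fill a window is dropped."""
    return [[max(fmap[r + i][c + j] for i in range(size) for j in range(size))
             for c in range(0, len(fmap[0]) - size + 1, size)]
            for r in range(0, len(fmap) - size + 1, size)]

# Hypothetical hand-set filter: fires where dark pixels on the left meet bright ones on the right.
VERTICAL_EDGE = [[-1, 1],
                 [-1, 1]]

def edge_image(col, size=8):
    """Toy image: dark columns left of `col`, bright columns from `col` on."""
    return [[1.0 if c >= col else 0.0 for c in range(size)] for _ in range(size)]

def pooled_features(image):
    """One convolution + ReLU + pooling stage."""
    return max_pool(relu(conv2d(image, VERTICAL_EDGE)))

def detect_edge(image):
    """Global max over the pooled map: how strongly an edge is present anywhere."""
    return max(max(row) for row in pooled_features(image))
```

Stacking further convolution and pooling stages on such maps, each with its own filters, is what lets deeper layers respond to progressively larger and more abstract configurations, roughly as in Buckner’s account.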

Given that convolutional networks arrive at their results solely with the help of the three features just described, they present a prototypical example of a function-based, model-free architecture. As such, they represent the approach closest to the empiricist mind and deserve detailed critical scrutiny. In the remaining part, we demonstrate that model-free networks of this type face serious limitations of their own, significantly weakening support for the thesis that artificial minds can be empiricist.

3 Limitations of deep learning

We now examine the problems facing these model-free networks in more detail. Our starting point draws on Garnelo and Shanahan (2019), who introduced several avenues for criticizing deep learning. They locate three types of deficienciesFootnote 11 in deep learning: (1) data inefficiency, as DL requires vast amounts of data; (2) poor generalization; and (3) lack of interpretability, as deep learning performs its classificatory task along pathways that are difficult for us to interpret. We analyze all three and focus on their role in undermining DL’s empiricism. We also add a fourth problem, that of transferability, because it is closely related to our main concern. It is also worth noting that several authors have identified other important difficulties with the overall performance and promise of deep learning (Marcus 2018b; Pearl 2018). While we appreciate their contribution to the overall discussion on the feasibility of the deep network research program, we do not address these additional worries, because they bear little or no relation to the question of empiricism.

In the following sections, we address each of these concerns and link it to the tenability of empiricism. While resource inefficiency illustrates a mismatch between deep learning and human cognition, it is the other three concerns that display failures to support empiricism. Failures of abstraction indicate a crucial weakness in model-free architecture that we have little idea how to remedy. This weakness prevents deep networks both from grasping some of the most general categories of cognition and from understanding the causal underpinnings of worldly events. Transferability failures show a lack of robust representational powers on the part of the nets. This lack is further demonstrated by a set of opacity concerns. The opacity of deep learning algorithms usually means that “the computations carried out by successive layers rarely correspond to humanly comprehensible reasoning steps, and the intermediate vectors of activations they generate usually lack a humanly comprehensible semantics” (Garnelo and Shanahan 2019, p. 17). Yet this partly epistemic reading of opacity is secondary for us. The real limitation, with regard to empiricism, becomes visible once we see how the opaque nature of deep networks creates its own idiosyncratic categorization structure. The resulting categorization is inherently vulnerable to various kinds of adversarial attacks. Having discussed all these difficulties, we will finally be able to spell out the parallels to the Chomsky/Quine debate and address the current status of empiricism directly.

3.1 Resource inefficiency

The first category of problems arises from the quantitative demands of deep learning. Data dependence is listed among the most perplexing issues in the field (Tan et al. 2018).

Let us compare the learning curves of human beings and networks on identical tasks. The often-cited successes of deep networks in game playing seem less impressive when one considers that humans can achieve similar results with much less input, and much more quickly (see Fig. 3 in Lake et al. 2017). To attain its celebrated victory in the game of Go, the winning algorithm trained on a number of games that would correspond to some 200,000 “human” years of play, relying on extremely large data sets. Networks typically demand training sessions lasting tens (or hundreds) of thousands of trials. Only after this amount of exposure are they able to categorize objects from a given domain. This does not even remotely match the human ability to learn from just one example (Lake et al. 2015).Footnote 12 It is unclear, and in our view improbable, that increasing the number of nodes and layers or tweaking the internal structure of a network can remedy such a huge discrepancy.Footnote 13

While the belief that the brute-force paradigm of ever-increasing computational power will overcome resource inefficiency remains popular, skepticism about this approach is growing. One of the most persistent objections concerns the lack of learning-to-learn strategies within networks (Lake et al. 2017). It is this limited ability to develop new learning strategies that prevents model-free systems from reducing the size of the data sets required for training.

Yet we also want to argue that, while it indicates a significant gap between humans and networks, resource inefficiency is not in itself an argument against generic empiricism. If the crux of empiricist commitments lies in the derivation of categories from pure data, we should not be surprised that immensely large data sets are required. On the other hand, any attempt to explain human minds in traditional empiricist terms loses its attractiveness, given the significant dissimilarity in the efficiency of learning between networks and humans.

3.2 Abstraction

Abstraction is a broad notion, and when researchers report failures of networks to abstract, they often mean different things. The most common usage is that of obtaining invariant categorial information about a given target from its tokens. A related usage refers to the ability to derive general rules from circumstantially distinct instances of certain phenomena. While the first notion, categorization, is crucial for various discrimination tasks, the second is often invoked in language comprehension and production. Finally, there is a third layer of abstraction, that of uncovering causal relations between phenomena. It plays a dominant role in science but is also prominent in folk-theoretical explanations. We will briefly demonstrate that, within the field of model-free deep learning, processes of abstraction face difficulties at all three layers.

3.2.1 Categorial abstraction

While the concept of abstraction is not precisely delineated, several important notions seem to play a role in judging an operation as abstraction. On the most common reading, abstraction amounts to an extrapolation of a relevant category from concrete examples within a particular domain. Taylor et al. (2015) define abstraction as

a process of creating general concepts or representations by emphasizing common features from specific instances, where unified concepts are derived from literal, real, concrete, or tangible concepts, observations, or first principles, often with the goal of compressing the information content of a concept or an observable event and retaining only information which is relevant for an individualized goal or action.

This process can achieve various levels of generality, as any target input can be subsumed under several distinct categories. The task of a network is to learn to detect, and reliably track, the criteria according to which targets are subsumed under a particular category. However, abstraction tasks pose an inherent difficulty for networks:

In classic abstraction, states that are similar with respect to a property of interest are merged for analysis. In contrast, for NN, it is not immediately clear which neurons to merge and what similarity means. Indeed, neurons are not actually states/configurations of the system; as such, neurons, as opposed to states with values of variables, do not have inner structure. Consequently, identifying and dropping irrelevant information (part of the structure) becomes more challenging. (Ashok et al. 2020).

As the quote indicates, the very architecture of networks makes it inherently difficult to select relevant invariant features and suppress redundant ones. To put it differently, networks have to find a way to compress information about their input while preserving only the information pertinent for generalizing to yet unobserved examples from the same category. This is undoubtedly a daunting task for any empiricist.

In line with empiricist assumptions, it has long been assumed that networks do not search for predefined features, as they only generalize from the input data and nothing else (Ramsey and Stich 1991). However, even before the training process starts, the fundamentals of abstraction are smuggled into the learning process by the pre-classification of the training set. The classificatory labels associated with training sets constitute implicit comparative patterns, providing a springboard for abstraction. Successful image recognition, for instance, is based on images that have been pre-classified by hand. The provision of prior classifications can create the illusion that networks are classifying all by themselves. Yet without supervised pre-labeling, no classification would be possible. This supervised pre-labeling is not to be confused with the more general notion of supervised learning: while pre-labeling delineates the target category, in supervised learning the network receives feedback on the precision of its categorizations. Even in unsupervised learning, feeding the network unlabeled content from within one category is sufficient to convey implicit category membership. Neglecting the crucial role played by pre-classified input generates a misleading picture of the spontaneous emergence of the necessary categorial structure. We also want to point out that the presence of implicit labels resulting from pre-classification is not a problem in itself. Empiricism operates with labels; after all, they are found everywhere and serve empiricists just like any other input. On the empiricist picture, minds learn to label particular items, properties, or events. The real problem is that pre-classified inputs are fed to the network as if they were raw data, when in fact they have been pre-processed by human minds.

Whatever the origins and hidden characteristics of the input data, the question remains whether a network is capable of real abstraction over its inputs. Buckner (2018) argues that, owing to their specific architectural design, deep networks do indeed conduct a genuine process of abstraction. He speaks of a specific transformational abstraction, which employs all three essential building blocks of these networks mentioned in Sect. 2.4. Their depth allows an input to undergo hierarchical processing that, with each step, abstracts away from particularities and fixes on a categorial invariance. During this process, the network learns to ignore a number of nuisance variables of token inputs and to acquire the relevant categories instead. Convolution functions as a filter that detects essential features and leaves all others aside. Concurrently, the pooling operation decides on the presence of categorial features to be passed on to a higher processing layer. It does so across a larger detection area, thereby determining whether a feature is a local aberration or occupies a more significant position and is therefore crucial for categorization. A subsequent layer repeats the process, this time searching for a more abstract input feature. With enough depth, networks can learn to classify vastly divergent stimuli within a single high-level category while neglecting their idiosyncratic individual features.

It is easy to see why Buckner (2018) takes the process of transformational abstraction to justify empiricism. Since networks build up their categories solely from inputs, it looks as if we are back in the tradition of furnishing minds with experiences only. Given that Buckner explicitly ties his analysis of deep learning to the central debate on empiricism, we will address his conclusions shortly. One difficulty, however, should be noted right away. Even with these intricate processes, convolutional networks never arrive at some of the most general concepts, such as negation and the universal or existential quantifiers. As Buckner (2018, p. 5360) notes, “additional components … might need to be added to deep convolutional neural networks [DCNNs] for them discover mathematical or geometric properties themselves”. We claim that such additional components are likely to lead us away from the doctrine of empiricism.

3.2.2 General rules

The second layer of abstraction, that of acquiring general rules, can be illustrated by an intense battle waged since the early days of connectionism and continuing to the present. We refer to the debates (Fodor and Pylyshyn 1988; Fodor 1992) on compositionality (in vision, action, language, goal-setting, etc.). The vast complexity and productivity of cognitive processes lend support to the assumption that such diversity is possible because simpler elements of cognition are combined to create novel units. For the new units not to be random, systematic rules are needed. This overall compositional character of mental and behavioral processes poses serious difficulties for all explanatory strategies built on empiricist foundations. In representationalist architectures, the units of combination are clearly delineated, and as such they can enter as variables into the placeholders of general rules. In neural networks, it is not at all clear which elements could be combined in such a manner. Classical connectionism struggled with this fact, echoing Chomsky’s criticisms of Quine’s associationism. Unless their creators deliberately formulate a more explicit account of simpler building blocks, such as objects in scenes (Eslami et al. 2016) or explicit subgoals in an action (Kulkarni et al. 2016), novel deep learning approaches also have difficulty accounting for this phenomenon.Footnote 14 Yet moves to enrich networks with additional dedicated structures resemble Quine’s eventual abandonment of his purely empiricist strategy for a hybrid view that does not sit well with his original intentions.

Problems with abstracting toward general rules follow directly from the assumption that networks learn by association only. That assumption makes learning general rules especially troubling. But are general rules not a primary resource of our cognitive make-up? We need not think of complex moral rules; rules for mathematical operations, or learning by induction, are problematic enough. Just as one can learn to categorize images on the basis of countless examples, one should be able to obtain general rules from observing their individual instances. Yet this level of abstraction has not been observed in deep learning networks. In fact, even defenders of abstraction processes within networks acknowledge failures in this domain. For example, when Baroni (2020) analyzes the ability of networks to capture the hierarchical tree structure of language, he discovers that “when … models are not provided with explicit information about conventional compositional derivations, they come up with tree structures that do not resemble those posited by linguists at all.” Once again, this is not a welcome development for a defender of model-free approaches.
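The worry can be made concrete with a toy example of our own (it is not an example discussed by Baroni or by the other authors cited here). A purely associative learner, assumed for illustration to be a single-layer linear network trained by gradient descent, sees instances of the rule "copy the input" on 4-bit patterns, but in every training instance the last bit happens to be 0. A learner that had abstracted the rule would copy a 1 in that position; the associative learner cannot, because nothing in its training data ever connected that input to that output. The bit width, learning rate, iteration count and zero initialization are all illustrative assumptions.

```python
import numpy as np

# Training instances of the rule "copy the input" over 4-bit patterns.
# By assumption, the last bit is 0 in every training instance.
train_x = np.array([[a, b, c, 0] for a in (0, 1) for b in (0, 1) for c in (0, 1)],
                   dtype=float)
train_y = train_x.copy()

# Single-layer linear associator y_hat = x @ W + bias, zero-initialized and
# trained by full-batch gradient descent on squared error.
W = np.zeros((4, 4))
bias = np.zeros(4)
learning_rate = 0.1
for _ in range(2000):
    err = train_x @ W + bias - train_y
    W -= learning_rate * train_x.T @ err / len(train_x)
    bias -= learning_rate * err.mean(axis=0)

def predict(x):
    """Apply the trained associator to a single 4-bit pattern."""
    return np.asarray(x, dtype=float) @ W + bias

print(np.round(predict([1, 0, 1, 0]), 2))  # familiar pattern: copied (up to rounding)
print(np.round(predict([1, 0, 1, 1]), 2))  # novel bit: the last output stays at 0
```

Because the weights start at zero, the connections involving the last position never receive any gradient, so in this sketch the failure to extend the rule is exact rather than approximate.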

3.2.3 Causality and correlation

The third level of abstraction concerns capturing causal relations between events. It is the very nature of association processes that they merely uncover correlations. Philosophy of science taught us long ago that correlation and causation differ radically, and unless networks are able to capture the latter, they will not be of much help in explicating the events they observe. Throughout his career, Judea Pearl has offered many important insights into the difference between simple correlation and causality. In a recent paper (Pearl 2018), he points out inherent limitations of neural networks in tackling causal relations. Causality necessarily involves counterfactual reasoning: in causal investigation, one asks whether a specified event would bring about another under different circumstances. Associations, however, hold only between observed events. Because networks are built as association machines, they have no means of handling the domain of counterfactuals. In the same paper, Pearl (ibid.) points out that this does not rule out the possibility of capturing causal relations by artificial intelligence. Artificial systems enriched with various models of the world are capable of detecting and explicating its underlying causal structure. Lake et al. (2017) make a similar point when they advocate the use of explicit models in cognition: “Cognition is about using … models to understand the world, to explain what we see, to imagine what could have happened that didn’t, or what could be true that isn’t, and then planning actions to make it so.” (ibid., 2). In this quote, we want to stress the role of counterfactual situations. The only way a network can assess such situations is with the help of an explicit model. Yet such a move cannot satisfy a defender of empiricism. Counterfactual relations can be discovered, but not by purely empiricist methods.
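Pearl's contrast between observing and intervening can be illustrated with a small simulation. The structural model below is our own illustrative assumption: a hidden common cause Z drives both X and Y, while X has no effect on Y at all. The observational samples show a strong association between X and Y, which is all an association machine has access to; forcing X to a value (Pearl's do-operator, simulated here simply by overriding X) reveals that the association carries no causal information. The probabilities and sample size are arbitrary choices for the sketch.

```python
import numpy as np

rng = np.random.default_rng(0)
N = 100_000

def simulate(do_x=None):
    """Sample (X, Y) from a toy structural model with a hidden common cause Z.
    Z -> X and Z -> Y, but X has no effect on Y. If do_x is given, X is set by
    intervention, which cuts the Z -> X link."""
    z = rng.random(N) < 0.5
    if do_x is None:
        x = np.where(rng.random(N) < 0.9, z, ~z)  # X copies Z 90% of the time
    else:
        x = np.full(N, bool(do_x))                # X forced from outside
    y = np.where(rng.random(N) < 0.9, z, ~z)      # Y depends on Z only
    return x, y

x, y = simulate()
print("P(Y=1 | X=1)      ~", y[x].mean())   # about 0.82: strong association
print("P(Y=1 | X=0)      ~", y[~x].mean())  # about 0.18
for v in (0, 1):
    _, y_do = simulate(do_x=v)
    print(f"P(Y=1 | do(X={v})) ~", y_do.mean())  # about 0.5 either way: no effect
```

A learner fitted only to the observational pairs would predict Y from X quite well, yet that prediction says nothing about what happens when X is changed; recovering the latter requires representing Z and the structure linking it to X and Y.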

3.3 Transferability

The general project of artificial intelligence was originally conceived as that of building artificial systems that attain general intelligence or at least solve generic problems (for a very early formulation, see Newell, Shaw and Simon 1959). While it is hard to specify what the criterion of attaining general intelligence or generic problem-solving amounts to, one requirement seems obvious: the system’s transferability. A system can be credited with general intelligence, or said to be capable of solving generic problems, when its success in solving tasks in one domain can be transferred to another domain. The scope of generality is difficult to set beforehand (should a single system be able to solve quadratic equations, design reclining chairs and shoot baskets to count as a generic problem solver?), so some reasonable restriction of the target domain is legitimate. Even with such restrictions in place, no system has demonstrated the ability to solve generic problems. Given the input-driven architecture of model-free networks, however, their attainment of the general problem-solver’s goal seems particularly hopeless. Their training, focused on a particular task, makes transferability of the resulting function especially troublesome. Geffner (2018, p. 4) describes “transferring useful knowledge from instances of one domain to instances of another” as particularly challenging for model-free networks (“learners”, in his vocabulary). He also explains why: “Learners can infer the heuristic function h over all the states s of a single problem P in a straightforward way, but they cannot infer an heuristic function h that is valid for all problems. This is natural: learners can deal with new problems only if they have acquired experience on related problems.” (p. 3). Admittedly, some transferability is achievable in deep learning networks. However, as Marcus observes, the applicability of a learnt function is surprisingly restricted: situations “in which the deep reinforcement learning system is confronted with scenarios that differ in minor ways from the ones on which the system was trained show that deep reinforcement learning’s solutions are often extremely superficial.” (2018, 8). He illustrates the point with various games at which networks have excelled. Networks that mastered Atari games were very different from those successful in Go. Differences within the domain of board games (board size and its symmetry, differences between games with perfect and imperfect information) are sufficient to block an algorithm’s efficacy.

Lake and Baroni (2018) make a similar point in the domain of language comprehension when they claim that recurrent networks “generalize well when the differences between training and test … are small [but] when generalization requires systematic compositional skills, RNNs fail spectacularly” (2018, 1). This is a very unwelcome consequence of the model-free deep learning approach, and it illustrates the pitfalls of empiricism. If a system is fitted to a particular task by being endlessly trained on one kind of input data, there is little chance that it will perform well on dissimilar kinds. If empiricist models do bring problem-solving success, that success will necessarily be restricted to a small subset of tasks. Transferability, even within a single domain (say, board games), is virtually impossible to ensure, and general problem solving remains an elusive dream.

3.4 Opacity

The property of opacity is hotly debated within the domain of deep learning networks (Burrell 2016; Zednik 2019). Opacity refers to our inability to comprehend the functional dependencies within a network; it also signifies the indeterminate way in which networks represent humanly recognizable features of the input. Due to their immense distributed complexity, deep learning networks are epistemically almost impenetrable. Tinkering with networks is often a matter of trial and error, as we have very little idea why they perform the way they do and how they learn what they do. Consequently, in comparison to systems with explicit instructions, networks suffer from low debuggability: when almost nothing is known about their inner dependencies, it is nearly impossible to fix errors. This observation points us once again to the ‘black box’ problem that we have encountered repeatedly. While networks are not black boxes in the strict behaviorist sense, their inner workings are substantially obscured. Black boxing, it turns out, is not only epistemically significant but also has practical consequences.

Acknowledging the epistemic and practical difficulties of opacity, we try to extend its significance to the debate on empiricism. We illustrate our point with the notorious cases of adversarial attacks on deep learning networks. So far, the problem has been covered almost exclusively by the computer science community; philosophers and theoreticians of cognition have not drawn a strong enough lesson from these effective efforts to undermine networks’ performance. Adversarial attacks are simple methods of derailing networks by targeting their categorization in the very domain in which they are supposed to be expert classifiers. The unusual behavior of networks under such attacks was first noted by Szegedy et al. (2013). Since then, it has been demonstrated that the issue is more widespread than originally conceived. Adversarial interventions either alter non-robustFootnote 15 features of the target inputs (the inputs still remain easily recognizable by humans) or introduce changes at a level completely imperceptible to human observers. During an attack, alterations of the input data substantially weaken or completely paralyze the successful operation of a network.Footnote 16 There are two versions of adversarial attacks, white-box and black-box (Huang et al. 2017). A white-box attack requires knowledge of the network’s architecture, which expedites access to the system’s vulnerabilities. While white-box attacks are interesting, from the perspective of empiricism black-box attacks are more intriguing. These occur with no prior knowledge of a particular network’s architecture: “attackers” know only the training set and the categorization task (e.g., the classification of a picture as a human face). Upon witnessing a black-box attack, an uninitiated observer might be very puzzled by the behavior of a network that fails utterly while all features of its operation remain apparently undisturbed. The fact that consolidated networks can be fooled by imperceptibly small perturbations on the input side should alarm any proponent of empiricism.
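The difference between the two attack settings can be sketched on a deliberately tiny stand-in for a network. Everything here is an illustrative assumption: the "network" is a linear least-squares classifier over two numeric features, one large but noisy and one tiny but almost perfectly predictive of the label, and the attack is the fast gradient sign method (FGSM), a standard technique from the adversarial-examples literature rather than the specific procedures of Szegedy et al. (2013) or Huang et al. (2017). In the white-box case the attacker uses the target's own weights; in the black-box case the attacker fits a different kind of classifier (a nearest-class-mean rule) to the same training set and reuses its perturbation against the target.

```python
import numpy as np

rng = np.random.default_rng(1)

def sample(n):
    """Toy data (an assumption): labels y in {-1, +1}; a large but noisy feature r,
    and a tiny feature s that is almost perfectly predictive of y."""
    y = rng.choice([-1.0, 1.0], size=n)
    r = y + rng.normal(0.0, 1.0, n)
    s = 0.1 * y + rng.normal(0.0, 0.01, n)
    return np.column_stack([r, s]), y

def fit_least_squares(x, y):
    """Stand-in for the target network: linear least-squares classifier with bias."""
    a = np.column_stack([x, np.ones(len(x))])
    coef, *_ = np.linalg.lstsq(a, y, rcond=None)
    return coef[:-1], coef[-1]

def fit_class_means(x, y):
    """A different 'architecture' for the attacker's surrogate: nearest class mean."""
    mu_pos, mu_neg = x[y > 0].mean(axis=0), x[y < 0].mean(axis=0)
    w = mu_pos - mu_neg
    return w, -w @ (mu_pos + mu_neg) / 2

def accuracy(model, x, y):
    w, b = model
    return np.mean(np.sign(x @ w + b) == y)

def fgsm(model, x, y, eps):
    """Fast gradient sign step: for a linear score x @ w + b, the margin y * score
    falls fastest (per unit of max-norm) along -y * sign(w)."""
    w, _ = model
    return x - eps * y[:, None] * np.sign(w)

x_train, y_train = sample(2000)
x_test, y_test = sample(2000)
target = fit_least_squares(x_train, y_train)

# White-box: the attacker differentiates the target itself.
x_white = fgsm(target, x_test, y_test, eps=0.2)

# Black-box: the attacker knows only the training set and the task, fits its own
# surrogate of a different kind, and reuses the surrogate's perturbation.
surrogate = fit_class_means(x_train, y_train)
x_black = fgsm(surrogate, x_test, y_test, eps=0.2)

print("clean accuracy:    ", accuracy(target, x_test, y_test))
print("white-box accuracy:", accuracy(target, x_white, y_test))
print("black-box accuracy:", accuracy(target, x_black, y_test))
```

In this toy, a shift of 0.2 in each feature, small compared with the spread of the noisy feature, reverses nearly every decision of the target, whichever of the two models the perturbation was computed from.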

The scope of these disturbing outcomes has been placed under scrutiny by Ilyas et al. (2019). Their research team reverses the common explanation of successful adversarial attacks. Previously held views explained vulnerability to attacks as resulting from overfitting or particular design flaws. The authors point out that the non-robust features of the dataset that are susceptible to attacks actually perform essential functions within the network: these features, which humans are not capable of detecting, help to optimize the network’s performance. In an insightful experimental setup, Ilyas and his team isolated humanly undetectable features of a given category (e.g., planes) that are vital for the network’s classification and superimposed them onto images of a different category (e.g., animals), preserving the original labels (names of airplanes). The network was then trained on these newly conjoined images with labels from the original category set. Although the exercise was deeply confusing in human terms, the trained network was eventually able to classify a standardly labelled dataset whose images and labels corresponded to the robust human categories. (Trained on pictures of animals that contained undetectable features of planes, together with the plane labels, the network successfully learnt to classify planes.) As the researchers concluded, “this demonstrates that adversarial perturbations can arise from flipping features in the data that are useful for classification of correct inputs (hence not being purely aberrations)” (Ilyas et al. 2019, p. 2).
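The logic of this relabeling experiment can be mimicked in a toy setting. This is our own illustrative assumption, not Ilyas et al.'s code or data: two numeric features stand in for images, and a linear least-squares learner stands in for a deep network. Each training example receives a new, random label; only the tiny feature is perturbed (by at most 0.2) to agree with that label, while the large feature, the one a human would notice, still reflects the original class. A model trained on this apparently mislabeled set nonetheless classifies ordinary, correctly labeled test data almost perfectly.

```python
import numpy as np

rng = np.random.default_rng(2)

def sample(n):
    """Toy data (an assumption): a large but noisy feature r and a tiny, highly
    predictive feature s, with labels y in {-1, +1}."""
    y = rng.choice([-1.0, 1.0], size=n)
    r = y + rng.normal(0.0, 1.0, n)
    s = 0.1 * y + rng.normal(0.0, 0.01, n)
    return np.column_stack([r, s]), y

def fit_least_squares(x, y):
    """Linear least-squares classifier with bias; returns (weights, bias)."""
    a = np.column_stack([x, np.ones(len(x))])
    coef, *_ = np.linalg.lstsq(a, y, rcond=None)
    return coef[:-1], coef[-1]

def accuracy(model, x, y):
    w, b = model
    return np.mean(np.sign(x @ w + b) == y)

x, y = sample(2000)
t = rng.choice([-1.0, 1.0], size=len(y))   # new, random labels
x_relabeled = x.copy()
x_relabeled[:, 1] += 0.1 * (t - y)         # nudge only the tiny feature s toward t

# The visible feature r now agrees with the new labels only at chance level ...
print("sign(r) matches new label:", np.mean(np.sign(x_relabeled[:, 0]) == t))
# ... yet a model trained on the relabeled set classifies clean data correctly.
model = fit_least_squares(x_relabeled, t)
x_test, y_test = sample(2000)
print("clean test accuracy:      ", accuracy(model, x_test, y_test))
```

Judged by the large feature alone, the training labels look like noise; the learner succeeds only because the tiny feature carries the category, which mirrors, in miniature, the claim that such features are useful rather than mere aberrations.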

There is a further worrisome consequence of adversarial attacks. As Ilyas et al. (2019) and other teams have observed, these attacks transfer with unexpected ease across various architectures trained on the same dataset. The authors suggest why: “different classifiers trained on independent samples from [the same] distribution are likely to utilise similar non-robust features” (ibid., p. 8). Networks evidently find humanly indiscernible input features highly instrumental in image categorization.

These recently discovered properties of networks not only make them vulnerable to malicious attackers but also inform us about the principled limitations of model-free networks. Crucially, these observations of adversarial attacks should be very unwelcome to any defender of empiricism. If the thesis of empiricism consists in the utmost reliance on human experience to obtain cognitive content, networks show us that experiences as we know them are actually secondary: other, humanly undetectable features of the input turn out to be cognitively more important than experiences.

4 Analysis of the central problems

We have seen in the previous section that, despite their successes, deep networks are beset by several serious difficulties. Although they have proven their skills across diverse domains, they do not live up to the claims of some of their proponents that human-level general intelligence can be achieved solely through large-scale dataset training. The accomplishments of model-free networks remain limited to specifically defined tasks. Furthermore, as the issues with adversarial attacks demonstrate, even on the tasks they have mastered, networks perform in a highly unorthodox manner. As we have already indicated, the above-mentioned limitations of networks are closely tied to the empiricist nature of their operations. Let us start with a closer look at opacity and its causes. Eventually we will expand our picture to cover all the other problems discussed above.