Abstract

Robotics is currently not only a cutting-edge research area, but is potentially disruptive to all domains of our lives—for better and worse. While legislation is struggling to keep pace with the development of these new artifacts, our intellectual limitations and physical laws seem to present the only hard demarcation lines, when it comes to state-of-the-art R&D. To better understand the possible implications, the paper at hand critically investigates underlying processes and structures of robotics in the context of Heidegger’s and Nishitani’s accounts of science and technology. Furthermore, the analysis draws on Bauman’s theory of modernity in an attempt to assess the potential risk of large-scale robot integration. The paper will highlight undergirding mechanisms and severe challenges imposed upon our socio-cultural lifeworlds by massive robotic integration. Admittedly, presenting a mainly melancholic account, it will, however, also explore the possibility of robotics forcing us to reassess our position and to solve problems, which we seem unable to tackle without facing existential crises.


1 Introduction

It is hard to find domains in our lives that have not been conceived of as potential application contexts for robotic technologies. Although there is still a long way to go, science seems to close in on fiction. Robots are, for instance, already being deployed to assist in precision surgery, as integral tools in modern warfare (e.g., drones), as social companions in the healthcare sector (e.g., ParoFootnote 1), and in outer space (Kibo Robot ProjectFootnote 2). In Japan, robotics is even utilized as an instrument by the government to deliberately engineer culture (cf. Robertson 2017; Wagner 2013). Extending far beyond mechanical engineering, robotics has drawn symbiotic interest from a great variety of disciplines; philosophers, anthropologists, psychologists, and historians, and even roboticists themselves (e.g., Seibt 2017; Robertson 2017; Turkle 2012; Nocks 2008; Nourbakhsh 2013) are exploring the various non-technical facets and implications of robotics. The pervasiveness and impact of this type of technology propels ongoing debates within academia and the media with its prospects ranging from utopian to dystopian magnitudes, e.g., solving demographic challenges (Breazeal 2005), threatening the well-being of our elderly (Sparrow 2015), risking emotional impoverishment (Turkle 2012), and leading to mass unemployment (Ford 2015). Predicting concrete usage of novel technology is notoriously hard (cf. Pearson 1991; Ihde 2008; Postman 1993), but due care dictates that we at least try our best instead of leaving future developments to serendipity or mere business interests. It is in the context of speculative prudence that this paper aims to contribute with a philosophically informed outlook on the disruptive potentials of what will be referred to as robotification (i.e., massive robotic integration). 
Such an inquiry is not new; in similar ways, worst-case scenarios were investigated and taken seriously by the scientific community in the 1970s with respect to genetic engineering, although the research community de facto had no hard evidence for the realization of the envisioned scenarios (cf. Berg 2008). By exploring ‘potentials’ in this sense, as real possibilities, not only researchers in human–robot interaction but also all stakeholders are, qua being humans who care for each other, invited to find responsible ways of participating in the technological evolution.

The critique offered in this paper will build on interpretational concepts and theories of three thinkers—Martin Heidegger, Keiji Nishitani, and Zygmunt Bauman—to present a hermeneutics of disruption pertaining to robotification; or phrased differently, the aim of the paper is to spell out latent structures and mechanisms identified by these intellectuals and point toward their possible implications. In a first step (“Mechanics”), I will introduce robotics in the theoretical embedding of Heidegger’s and Nishitani’s philosophy of science and technology, transposing their findings as underlying mechanisms into the domain of robotics. Consequently, it will be argued that robotification severely affects the meaning and value of whatever it touches upon. In a second step ("Disruptive potential: ethical cleansing"), I will expand the investigation with Bauman’s analysis of modernity to better fathom the magnitude of the potential negative impact of robotification on our society. These two theoretical sections will be followed ("Tearing and stretchmarks") by more concrete exemplifications of present/projectible challenges, which highlight the complexity and dual nature of robotification as well as the dangers outlined in the previous sections. While the nature of the inquiry is dystopian, the final part ("A way out?") discusses whether our human limitations may in the end not only safeguard us but also enable us to improve our ways of living with each other.

After this brief introduction and roadmap, it may be appropriate to outline some of the paper’s limitations. The paper draws exclusively on the thoughts of only a handful of thinkers (mainly Heidegger, Nishitani, and Bauman) and, by a rather coarse-grained and implicit synthesis, applies their theories to the topic of inquiry.Footnote 3 It is not about proving that these thinkers are right or wrong (e.g., with their understanding of science and technology) nor about producing an in-depth analysis of their compatibility. The main ambition is to use their ideas and build on their shoulders a philosophically informed account of the possibly highly challenging impact that robotics may have on our culture and society. It is also not the case that I have no ‘techno optimism’ or suffer from misoneism; on the contrary, there are many fascinating and benign aspects of robotics. Nevertheless, this does not exempt us from investigating its ‘dark side’ seriously. How the future will turn out and whether robotics will transform our world into some sort of global Shangri-La would be the topic of another paper. Given these limitations, there is no claim that what is presented here is the only and correct view on the topic at hand. Hopefully, however, the reader will find the analysis and conclusion robust and informative.

Another point of clarification worth taking up from the beginning is of a conceptual nature. It is notoriously hard to provide a meaningful definition of a robot (e.g., Wagner 2013: 4–5; Nourbakhsh 2013: xiv–xv). Furthermore, with this in mind, it is also important to stress that it is not the concrete robotic artifact that is the central topic of investigation as such, but large-scale robotic integration into our various lifeworldsFootnote 4—including technologies such as IoT, automatization, AI, machine learning, etc.Footnote 5—which will also be referred to as robotification throughout the paper. While providing a more rigorous definition of the term is impossible due to the indeterminateness and inclusiveness of the phenomena it tries to cover, I hope that the meaning and intention will be sufficiently clear in the rest of the paper.

2 Mechanics

To better fathom possible challenges imposed on us and our institutions by robotification, it is not sufficient to only put on our empirical lenses when attempting to evaluate envisaged practical dis-/advantages. While industrial deployment of robotic solutions is nothing new, social robotics opens entirely novel domains of application to mechanization. We would need to brace ourselves with patience until mainstream adoption of social robots to assess their full impact on our lifeworld, i.e., beyond the various microcosms of living labs, experimental setups, or nascent first mover implementations. Hence, given the incipient and wide spanning nature of the robotic phenomenon—or better phenomena—it is important to also examine the inherently underlying metaphysical mechanics and ontological processes that are at play. The attempt to probe into the latter structure to understand its significance, the fiat of robotification, is the aim of this section. It will thereby demonstrate the inherent ‘mechanics’ by which robotification challenges the meaning of the domains it touches upon and provide the more concrete interpretations of possible negative real-world impact in the subsequent sections, with a firmer foundation.

Many factors drive robotic developments, such as political and economic dynamics (cf. Sparrow 2015) or the visions of science fiction which have been and still are amongst the formative sources of inspiration for roboticists (cf. Robertson 2017; Wagner 2013; Gunkel 2018), but science provides the undergirding realm for robotic development—in the form of, for instance, instruments and methodologies. Importantly, it is no longer a mere engineering undertaking; research into robotics has become a truly interdisciplinary field, and it is discussed whether it will even transform into a transdisciplinary endeavor (Seibt et al. 2020). Science and technology have blessed us with ever more knowledge, and their spin-offs have substantially improved our ways of living; however, there is a fundamentally challenging aspect, easily hidden by their triumphal procession, that needs to be accounted for, especially when it comes to robotification. In the following, the paper will examine the notion of science and technology. More concretely, it will build on the work of Martin Heidegger and Keiji NishitaniFootnote 6 and present social robots as crystalized manifestations of the inherent modus operandi of modern science and technology. Based on these thinkers, the case will be made that large-scale robotic integration comes with the imminent risk of depletion of meaning, i.e., an existentialFootnote 7 risk not only to our self-understanding but also to the significance of our relations and institutions in general.Footnote 8 This risk and the practical magnitude of the potential disruption will be further explored in the subsequent sections of the paper. However, first, I will sketch how science and technology are understood in this paper, i.e., as outlined by Heidegger and Nishitani.
Different theories or even interpretations could have been used instead of the ones proposed here, which furthermore may lead to different conclusions (for instance, with respect to Heidegger, see Nørskov 2011, 2016; Gunkel 2012). However, as mentioned in the previous section, this paper constrains itself to a narrow theoretical scope and is not about providing a comparison, contrasting opposing views, nor arguing for the right one. Instead, it is about providing and examining one of the options in the vast space of possible intellectual theories and futures.

On a fundamentally epistemological level, science is always about something. The various phenomena of our world become objectified and categorized—hence, interpretable and subjectable to the methods and everyday business of the various scientific disciplines.Footnote 9 Striving toward objective generalizability and universal validity, science aims for impartiality when it comes to the constituents that comprise its domain of investigation. Heidegger makes explicit that this also comes with a significant limitation and gives the following example in Das Ding (2000a) [The Thing]Footnote 10:

In the scientific view, the wine became a [mere] liquid, and liquidity in turn became one of the states of aggregation of matter, possible everywhere. (Heidegger 2001: 169)Footnote 11, Footnote 12

In the process of understanding the various phenomena in the world—in the quotation above, a wine jug—the scientific account goes beyond an exhaustive understanding of the actual concrete reality of the jug at hand. His contemporary and former student Keiji Nishitani follows the same line of thought:

The progress of modern science has painted the true portrait of the world as a desert uninhabitable by living beings; and since, in this world, all things in their various modes of being are finally reduced to material elements—to the grains of sand in the desert of the physical world—modern science has deprived the universe of its character as a ‘home’. (1982: 109)

Central to Nishitani’s critique is that science sets us on a path toward nihilism, since its inquiry basically reduces everything to impersonal objects. Caricaturized, the issue brought to our attention by these two thinkers is that scientific investigation, as a methodological approach to understanding the essence of a thing, is like using a sledgehammer to crack a nut; the sledgehammer will crack the nutshell, and every component, including the seed, will still be there. Nishitani provides the following example with a much more complex object of investigation, namely human consciousness:

The various activities of human consciousness itself come to be regarded in the same way as the phenomena of the external world; they, too, now become processes governed by mechanical laws of nature (in the broader sense). In this progressive exteriorization, not even the thinking activities of man elude the grasp of the mechanistic view. (ibid.: 110)

This type of inquiry does not warrant that we are able to holistically grasp the full phenomenological meaning of the nut or human consciousness of a concrete animal or a human being. However, there is more to the modus operandi of science. Following Heidegger’s account, the modality of the interplay between us and the world is pivotal. In his writing, modern technology is a central driving force of modern science as for instance indicated in Wissenschaft und Besinnung [Science and Reflection] (2000c), elaborated upon in Die Frage nach der Technik [The Question concerning Technology] (2000b), and summarized by Ihde in the following two quotes:

[T]echnology is the source of science, technology as enframing is the origin of the scientific view of the world as standing-reserve. (2010: 37)

The science Heidegger refers to in, e.g., Die Frage nach der Technik, at least the one he uses to exemplify his position, is modern physics (2000b: 23). However, since mathematics, most parts of contemporary philosophy, psychology, history, etc., and even anthropology, all engage in ordering and categorizing the empirical or abstract phenomena in the process of rational sensemaking, his theory is here taken to be applicable to all disciplines which aspire to the acquisition of objective knowledge. If Heidegger is right, which will not be contested here, modern technology as well as science, qua transitivity, inherently subject us to great danger. More concretely, we become exposed to being caught in a modality where we only interpret the world around us as instrumental goods, or in his technical diction, standing reserves—i.e., in terms of its utility and usability for something else, and furthermore, we may never even become aware that we are part of this process as it unfolds (2000b). Think of how many of us meet animals on a daily basis, vacuum packed or chopped into palatable refrigerated parts, ready for consumption. Amongst Heidegger’s own examples, we find the hydroelectric power station or the wind turbine, which hijack the meaning of the river or wind entirely by reducing them to resources for energy transformation (ibid.). While many aspects of our human lifeworld reflect this modus of interpretation and reasoning—e.g., human resource management is such an instrument—robotics potentially subjects all aspects of the human condition and its socio-cultural habitat predominantly to the technological realm.

As mentioned in the beginning of this section, robotic research has transformed from being more or less exclusively an engineering undertaking to including a broader portfolio of disciplines. This trend is more than welcome as new knowledge is generated; nevertheless, it is important to remember that the attempts to materialize human structures are still made within the restrictions of objective scientific investigations. By mapping an analogue world to digital platforms via the various scientific methodologies, social robotics subjects us to the above-mentioned risks of deflation and estrangement; again, in Heidegger’s terminology, we may transform and view our environment and even ourselves in terms of standing reserves. In this technological mode of being, we establish a world where we are enticed to entrust and outsource, for instance, our dearest and ourselves to mechanical structures in which everything is basically reduced to fungible commodities that obtain their value according to some objective metrics (cf. Sparrow’s critique of the automatization of the elderly care sector, 2015; for an overview of the current automation potential see, e.g., McKinsey 2017).

Given the vital role of social relationships when it comes to our well-being (e.g., Aristotle), the development of robots, which are considered to function as, e.g., interaction partners for our elderly and children, basically epitomizes this aspect of modern technology and science. This dimension is even spelled out by the etymology of the word ‘robot’, which describes the affordance space of the ostensible phenomenon rather nicely. Derived from Czech, it translates into forced laborer, i.e., a slave or servant. The deliberate creation of machines that love us, entertain us, care for us, and work for us (in all the shadings of the ‘as if’, cf. Turkle 2012; Seibt 2017), i.e., satisfy whatever individual or social need we have—like slaves—basically reduces these activities to services. The uniqueness of the service provider in principle becomes irrelevant to the degree of specifiability and emulability of what constitutes the service. From a purely functional point of view, it makes no difference whether robots serve us the wine in a restaurant, drive our cars, and take care of our kids, or whether human beings do it, as long as certain capacity criteria are sufficiently met. That these criteria may be arbitrary, hard, or impossible to meet (cf. Gunkel 2012) does not exclude technological rendition but may rather function as a catalyst and motivate researchers and roboticists to attempt to break these thresholds. In his analysis of Heidegger’s philosophy of technology and alterity, Tomaz highlights the reductionistic convergence linked to the technological impact on society:

In modern societies, that is, in the age of technology, we have a tendency to reduce all our actions to formal behaviors, to machine-like procedures. This tendency becomes a self-fulfilling prophecy—reduced our Being (capacity-to-be) to machine-like aspects, we cannot distinguish ourselves from technological equipment that appears to behave like we behave. Hence the tendency to design “friendliness” (i.e., “a user friendly interface”) into our technological equipment develops not because of the technological advance in itself, but because of its progressive success in reducing our comprehension of the complexity of our existence and social life. (2016: 173–174)

As technology drives us toward maximizing the effect with the least amount of effort (cf. Heidegger 2000b: 16), it only seems natural that also social relations are subjected to the scrutiny of its calculus in progress. We are not isolated otherworldly entities, but are always embedded in the context of our lifeworld (Heidegger 1926); philosophers and psychologists from various epochs have drawn attention to our deeply social nature (e.g., Schopenhauer 1990: 156; Watsuji 1996: 14; Hood 2013: 28–31). Our cognitive make-up, nevertheless, seems limited with respect to how many social relations we can maintain thoroughly (Dunbar 1998; Hood 2013). Consequently, an influx of robotic social agents into our social affordance spaces is a serious intervention as it competes with human–human relations—or put more explicitly by Postman:

Technological change is neither additive nor subtractive. It is ecological. I mean "ecological" in the same sense as the word is used by environmental scientists. One significant change generates total change. If you remove the caterpillars from a given habitat, you are not left with the same environment minus caterpillars: you have a new environment, and you have reconstituted the conditions of survival; the same is true if you add caterpillars to an environment that has had none. (1993: 118)

Turkle’s psychological assessment of the impact of social technological artifacts adds further tension. She termed the break-even point, an “emotional […] and […] philosophical—readiness” where “performance of connection seems connection enough” (2012: 9) or is even preferred over human–human interaction, the “robotic moment” (see, for instance, the quotation below). Her criticism is captured by the very title of her book, namely that we are “alone—together”, while the machines we interact with lead us to think otherwise. Referring to her empirical studies, she writes:

As I listen for what stands behind this moment [referring to the robotic moment], I hear a certain fatigue with the difficulties of life with people. We insert robots into every narrative of human frailty. People make too many demands; robot demands would be of a more manageable sort. People disappoint; robots will not. (ibid.: 10)

The technological mode of engaging with the world, be it humans or other entities, pushes us irresistibly in one direction when it comes to the leveling of whatever dissatisfaction we carry with us or the realization of our hedonistic urges. However, applying Heidegger’s as well as Nishitani’s critique from above, this comes at a price, namely that if we interpret the affordances within this notion of science and technology, epitomized by robotics, we risk deflating the significance and meaning of our relations to the level of services. Nishitani captures this dimension leaning on Nietzsche’s Zarathustra in the aforementioned quotation, where he accuses “modern science [of having] deprived the universe of its character as a ‘home’” (Nishitani 1982: 109).

Currently, robotics seems to be the Wild West where no type of relationship nor their relata are sacrosanct when it comes to robotic integration (robotics for caring—e.g., Paro,Footnote 13 killing—e.g., LAWS,Footnote 14 preaching—e.g., BlessU-2,Footnote 15 etc.). Whether ethics is fit to mitigate and provide the necessary impact to tackle the robotic moment is unclear. For instance, Seibt’s non-replacement maxim, which demands that “social robots may only do what humans should but cannot do” (e.g., Seibt et al. 2018: 37), may be a promising guideline. However, an important point to take from Heidegger is that the way we interpret the world is not entirely up to us (cf. 2000b: 24; Thomson 2011: 203). He writes with respect to technology:

As this destining, the coming to presence of technology gives man entry into That which, of himself, he can neither invent nor in any way make. For there is no such thing as a man who, solely of himself, is only man. (2004b: 49)Footnote 16

And explicitly with respect to science:

The power of science cannot be stopped by an intervention or offensive of whatever kind, because “science” belongs in the gathered setting-in-place [Ge-stell] that continues to obscure the place [verstellt] of the event of appropriation. (1998: 259 f.n. a)Footnote 17

Accordingly, our influence—as individuals and collectives—when it comes to the robotification of our lifeworld is nontrivial. Instead of figuring out how we can deliberately tackle the problem, the paper will try to examine the structures and limitations that may actually force us into a symbiotic relationship with robots and each other. Before doing so, the next section will bring this issue closer by further investigating the disruptive potential on a socio-cultural level.

3 Disruptive potential: ethical cleansing

Taught to respect and admire technical efficiency and good design, we cannot but admit that, in the praise of material progress which our civilization has brought, we have sorely underestimated its true potential. (Bauman 2013: 9)

Various reports indicate a considerable growth in all robotic sectors, ranging from industrial robots to service and entertainment robots (cf. IFR 2019a; b; Bhutani and Bhardwaj 2018, 2019; McKinsey 2017). Under the assumption that they are right and given the limited influence that we have on the plasticity and reversibility of technology, it becomes important to understand the disruptive potential of large-scale robot integration. Culture influences science and technology and vice versa (e.g., McLuhan and Fiore 1967; Postman 1993), or framed slightly differently:

To enter any human-technology relation is already both to “control” and to “be controlled”. (Ihde 1990: 140)

Intellectuals such as Panikkar emphasize that the usurpation of technology is likely to infringe upon cultural diversity, resulting in technocracy becoming the dominant monoculture of our future (2000). If we think about the impact of the agricultural and industrial revolution or the computer and Internet on our human products and relations (e.g., Bostrom 2003), this seems to be a reasonable assessment. Robotics may very well represent a next disruptive step in this evolution—software programs (interconnected or not) become embodied and, hence, the domains of application increase considerably.

The previous section offered a philosophically informed view “under the hood”—i.e., at some of the fundamental structures and processes that are formative to the nature of the affordance spaces realized by robots. Robotics and its products were presented as a materialized convergence point of the modus operandi of science and technology. Drawing on Zygmunt Bauman’s theory of modernity (2013), I will now examine these findings in greater detail with respect to the disruptive potential of robotic integration to our socio-cultural lifeworld. It will be argued that robotification is subjecting us to the risk of what will be termed ethical cleansing, and its existential repercussions will be elaborated upon.

Roughly summarized, Bauman argues that technological tools and bureaucracy empowered Nazi (but also Stalinist) culture and ideology and rendered possible humanitarian atrocities like the Holocaust, which would otherwise not have been feasible. While this concrete historical tragedy is not the topic of investigation here, the abstract structure of social engineering described by Bauman bears remarkable resemblance to the robotic project and will be expanded upon in the rest of this section.

As briefly mentioned previously, there are many reasons why we produce robots, ranging from curiosity (e.g., blue-sky research, leisure time activity) to sheer necessity (e.g., robots for deployment in danger zones, demographics)—for an informed discussion on this topic, see also the special plenary event documented in the proceedings of the Robophilosophy 2018/TRANSOR 2018 conference (Funk et al. 2018). Regardless of the source of funding or the intentions behind it, robotics is potentially affecting our social lifeworld. Social robotsFootnote 18 are by definition meant to engage with us in our social spheres. However, service and industrial robots are non-negligible influencers in this context too. They have already transformed (e.g., in the automobile industry) or are expected to transform (e.g., collaborative robots), amongst other things, our work life, which for most of us not only consumes a large part of our time, but in that capacity also sets the stage for a large part of our social life. As a result, robotics directly or indirectly tinkers with our sociality and is thereby a form of social engineering par excellence. A rather well-analyzed and criticized example is found in Japan, where the government strategically promotes and envisions a certain way of living empowered by robotics, e.g., enticing women to perform their traditional roles in society (cf. Robertson 2017; Wagner 2013). This is an attempt at social engineering writ large. As has been pointed out by, for instance, Rapoport, robotic technologies and embedded normativity come in tandem:

Social directives are already embedded in the very choices intelligent technologies are intended to facilitate: one cannot choose to install an energy-wasting or medicine-forgetting device, since such devices do not exist. (2016: 226)

The quotation above highlights that robotic high-tech products promote a culture where its participants relate “correctly” through technologically materialized filters (Borenstein and Arkin (2016) also discuss well-intended robotic nudging; for a concrete case of culturally embedded narrative, see Nørskov and Yamazaki (2018)). They enforce conventions of social and ethical significance and hence materialize some sort of technologically empowered humanism. This conversion of what constitutes our practical lifeworld toward ethically impeccable, or at least improved, spheres by means of robotification (e.g., package delivering systems,Footnote 19 robotic co-workers, artificial friends, etc.) seems to be the rational thing to do—at least in terms of performance optimization. It is, nevertheless, important to note, with Bauman, that the project of enhancement through social engineering is one of the necessary (importantly: not sufficient) ingredients of a potentially dangerous cocktail:

Modern genocide is an element of social engineering, meant to bring about a social order conforming to design of the perfect society. To the initiators and the managers of modern genocide, society is a subject of planning and conscious design. One can and should do more about the society than change one or several of its many details, improve it here or there, cure some of its troublesome ailments. One can and should set oneself goals more ambitious and radical: one can and should remake the society, force it to conform to an overall, scientifically conceived plan. (Bauman 2013: 91)

Conditioning ourselves as individuals and collectives, in accordance with given cultural rationalities, has since ancient times been considered a viable way to realize some sort of social equilibrium and prosperity (e.g., Aristotle 1991; Confucius 2008). However, as Bauman points out in his analysis (2013), and as endorsed by post-war intellectuals such as Horkheimer and Adorno (2014), rationality does not warrant morality; according to Bauman, rationality is almost neutral to such an extent that it actually becomes governed by a given culture as described in the following:

As far as modernity goes, genocide is neither abnormal nor a case of malfunction. It demonstrates what the rationalizing, engineering tendency of modernity is capable of if not checked and mitigated, if the pluralism of social powers is indeed eroded—as the modern ideal of purposefully designed, fully controlled, conflict-free, orderly and harmonious society would have it. (2013: 114)

The definition in the previous section of robotics as representing the materialized essence of technology and science seems to be consistent with his analysis of technology and bureaucracy. It manifests a framing where the world is sought to be understood as computable and mechanizable—it is about rationalization and optimization—as if computation is conflated with our human capacity of judgment, which has been heavily criticized in the AI literature (cf. Weizenbaum 1976; Searle 1980; Smith 2019). This indifference toward functionally non-reducible unique individuals is what Bauman’s critique reveals as an enabling ground for potential nightmares:

Dehumanization starts at the point when, thanks to the distantiation, the objects at which the bureaucratic operation is aimed can, and are, reduced to a set of quantitative measures. [… Railway managers] do not deal with humans, sheep, or barbed wire; they only deal with the cargo, and this means an entity consisting entirely of measurements and devoid of quality. (Bauman 2013: 102–103)

The ambition to outsource and automate central managerial decisional processes, even in “mission critical” contexts, i.e., where lives are at stake, such as warfare, medical diagnostics, or more mundane areas such as human resource management, with the help of, e.g., AI, represents a transgression of bureaucracy into the realm of technology in a Heideggerian sense.Footnote 20 Technological artifacts are here taken as a functional optimization of the detached structure represented by bureaucratism as laid out by Bauman (cf. 2013: 228). Hence, from this point, the Heideggerian notion of technology will be used interchangeably with Bauman’s, as it adequately covers the intertwining of concrete enabling technologies and bureaucracy that is so central to the latter’s analysis. Providing further traction to this interpretation, the enforced ordering of the various phenomena of the world through the prism of science and technology, as outlined in the previous section, resonates well with Bauman’s theory, as can be seen in the following quote:

What may lift that possibility to the level of reality is, however, the characteristically modern zeal for order-making; the kind of posture which casts the extant human reality as a perpetually unfinished project in need of critical scrutiny, constant revision and improvement. When confronted with that stance, nothing has a right to exist just because of the fact that it happens to be around. To be granted the right of survival, every element of reality must justify itself in terms of its utility for the kind of order envisaged in the project. (ibid.: 229)

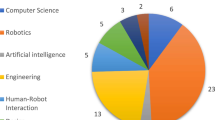

The two necessary conditions that Bauman highlights—i.e., the codependence of technological means/bureaucracy and culture with all its norms and impact—are, hence, understood as the relation between technology and culture. This interdependence is graphically illustrated in Fig. 1 where the shaded area represents the socio-cultural dimension given its technological (enabling/restricting) outlines. The three sides of the triangle symbolize the individual, social, and institutional dimensions addressed by robotics (in the form of, e.g., robotic prosthesis, social robots, and industrial robots).

It is imperative to stress at this point, with Bauman, that technology is not necessarily leading us to dystopia—it is not a sufficient condition—however, it empowers the alteration of our human condition and builds up a framework, which increases the probability of otherwise unrealizable negative outcomes. Technology has greatly advanced and become much more ubiquitous and potent since the original publication of Modernity and the Holocaust.Footnote 21 Given Bauman’s theory, it will be assumed that this increase in technological sophistication and pervasiveness also heightens the scope and risk of disproportionally negative outcomes accordingly.

Whereas Bauman’s analysis focuses on ‘ethnic cleansing’ facilitated and enabled by the technology available during WW2, I would like to draw attention to the potential of what will be referred to as ethical cleansing—a sanitation of culture by the calculus of science and technology—as an inherent risk of large-scale robotic intervention. More concretely: If social and morally significant interactions are reduced to optimization and performance, we face the risk of becoming technologically imbued by social and moral correctness enforced by our robotic environment as outlined in the quote above by Rapoport. Although it may be conceivable that robotification in principle could ‘realize’ (applying Seibt’s taxonomy of simulation, 2017) or even go beyond and improve our moral systems and normative praxes,Footnote 22 such scenarios are beyond the scope of the paper, which is dedicated to exploring the risks associated with the phenomenon. Returning to the latter topic, two existential dimensions of ethical cleansing will be elaborated in more detail below, namely, its possible impact on our (i) personal and (ii) social nexus to morality.

Rapoport goes as far as to argue that persuasive robotics technologies not only impose certain sets of values on us, as in the quotation above, but actually “infringe upon personal autonomy and the constitution of subjectivity” (2016: 219). Assuming that she is right, we stand to lose the power to authenticate and existentially realize our morality as Nietzsche would have us:

Can you give yourself your own evil and your own good and hang your own will over yourself as a law? Can you be your own judge and avenger of your law? Terrible it is to be alone with the judge and avenger of one’s own law. Thus is a star thrown out into the void and into the icy breath of solitude. (Nietzsche 1988b: 175)Footnote 23

Pieper’s (1990) interpretation of the quoted passage addresses the remorselessness of the exhaustive transparency we face when we, from a subjective standpoint, constitute and subscribe to our moral norms—i.e., there is nowhere to hide as we know all our wrongdoings. For Nietzsche this first-person moral constitutional process, as challenging as it may be, is central for us when it comes to unfolding our subjective potential. The mere blind adherence to collective moral standards is not enough (not that there is no interdependence between the two) as this would, extrapolating on Nietzsche’s terminology, turn us into materialized ghosts—i.e., fulfilling higher non-worldly standards, nevertheless dead (cf. ibid.: 57). It will be assumed here that any form of self-development/-understanding has direct impact on our conduct in the world and, importantly, vice versa. In this line of thought, a massive robotic-induced sanitation of our imperfections in moral relations,Footnote 24 e.g., via robotic nudging (Borenstein and Arkin 2016; Rapoport 2016), seems to come with the potential/danger of shaping a culture where we basically turn ourselves into robots; in ethical regards, mere subscribers to the procrustean protocols imposed on us by whatever the machines—representing the calculus of science and technology—make of our instructions. This is in contrast to Simondon’s benevolent framing of human–machine interaction:

Far from being the supervisor of a group of slaves, man is the permanent organizer of a society of technical objects that need him in the same way musicians in an orchestra need the conductor. The conductor can only direct the musicians because he plays the piece the same way they do, as intensely as they all do; he tempers or hurries them, but is also tempered or hurried by them; in fact, it is through the conductor that the members of the orchestra temper or hurry one another, he is the moving and current form of the group as it exists for each of them; he is the mutual interpreter of all of them in relation to one another. Man thus has the function of being the permanent coordinator and inventor of the machines that surround him. He is among the machines that operate with him. (2017: 17–18)

It is not that I disagree with Simondon regarding the interdependence between us and machines; nor that I believe that culture and technology should be viewed as being of essentially different nature. The crux of what I have tried to work out so far can be summarized by erasing the “with” in the last sentence of his quotation. Robotification comes with the risk of ‘improving’ our moral modalities at the expense of existential disenfranchisement. There may be harmonic cases like the picture alluded to by Simondon, where we even experience some sort of artistic realization (see also Nørskov 2016). However, even the most ecstatic unfolding of the artists’ creativity does not seem to last forever. With robotification, our options of subscription, when it comes to being full-fledged members who are able to authenticate ourselves in the normative affordance spaces as captured by the quotation by Nietzsche and elaborated above, may dwindle to the extent of its assiduous pervasiveness.

Moving on to the second item outlined above, the implications of ethical cleansing—i.e., where robotification basically expropriates our individual moral autonomy and diversity—will in the following be further explored with respect to its relational context. Some of the theories covered here have also been the topic of Gunkel’s The Machine Question (2012)—e.g., the enlisting of Heidegger and Levinas. However, there is a great difference with respect to emphasis. Where Gunkel tries to open up our moral space to robots, I am trying to point at the risk at which robotification comes. It is important to stress that this does not mean that I think Gunkel is wrong—but that my aim is to work out the possible price paid for massive robotic integration. Researchers such as Turkle (2012), Darling (2017), Kahn et al. (2012), etc. have all demonstrated how willing we are to engage with robots even on deeply social levels. Hence, it seems sound to assume that an influx of social robots in our various social contexts will result in more social interaction with these machines. Reiterating Postman: “Technological change is neither additive nor subtractive. It is ecological” (1993: 118). In this line of thought, one of the central models for our ethical ecosystem may be fundamentally challenged. More concretely, from a Levinasian point of view, the face of the Other is what undergirds the morality of our relationships as such (cf. Levinas 2017c)—but even a Rodin sculpture may be able to provide us with such a face (2017b: 208). Hence, there seems to be no principled reason why a social robot should not be able to perform in a similar fashion.
If robotics systematically enters into our interpersonal sphere, the symmetric reciprocal meeting between Levinasian faces, where the other is a unique and mortal individual (Levinas 2017a, d) and elicits an ethical imperative, risks being subverted by the reduction to some quantitative computational measures which are digitally representable and match whatever criteria are implemented in or feeding the program running the robot. The exclusivity of the individual is lost for the sake of technological palatability and optimization. This represents an urgent challenge to our understanding of ourselves as individuals and collectives. Hence, robotics assimilated into all parts of our lives comes with no little risk as such a development represents at the same time a technological erosion and an immurement of our sociality. This resonates well with Bauman’s warning:

More thoroughly than any other known form of social organization, the society that surrenders to the no-more challenged or constrained rule of technology has effaced the human face of the Other and thus pushed the adiaphorization of human sociability to a yet-to-be-fathomed depth. (2013: 220)

Rephrased, we subject ourselves to the greatest danger as described by Heidegger, i.e., becoming standing reserves ourselves without even being aware of it, as we are caught up in the technological mode of interpreting and engaging with the world. Relating these findings to the graphical simplification of Bauman’s theory (Fig. 1), the impact of massive robotic integration could be thought of through the heuristic of a Sierpinski-triangle-like fractal structure (Fig. 2), where the surface—representing culture—converges toward zero and the perimeter—representing technology—toward infinityFootnote 25 (i.e., the interior, culture in Fig. 1, is getting dissolved into the edges of technology).
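
The limiting behavior behind this heuristic can be checked with a short calculation. The following is a sketch under the standard construction of the Sierpinski triangle, in which each step replaces every filled triangle by three copies at half the side length; the symbols $A_0$, $P_0$, and $n$ are notation introduced here for illustration and are not taken from the original figure.

$$
A_n = \left(\tfrac{3}{4}\right)^{n} A_0 \;\longrightarrow\; 0,
\qquad
P_n = \left(\tfrac{3}{2}\right)^{n} P_0 \;\longrightarrow\; \infty
\qquad (n \to \infty)
$$

Here $A_n$ is the total filled area and $P_n$ the total edge length after $n$ steps: the number of triangles triples at each step, while the area of each triangle is quartered and its perimeter is halved.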

The previous sections investigated the inherent danger that comes not necessarily with the introduction of robots as such, but with the ubiquitous integration of robots into all domains of our lifeworld. Next, the focus will shift slightly toward a less abstract exploration of the phenomenon of robotification before expanding the analysis to assess a more sanguine potential within the admittedly discomforting prospect outlined so far.

4 Tearing and stretchmarks

Given the more abstract considerations in the previous sections, a more concrete discussion on how robotification basically challenges our central institutions and relations—i.e., as represented in Fig. 2—will be presented. The examples used for this purpose will move from (i) labor/work to (ii) work relations, and finally, to (iii) relations in general, to provide some important indications of the impact of the technology at hand. The complexity of robotification will to some extent be taken into account by discursively elaborating on a selection of pros and cons. Nevertheless, the emphasis will be on exemplifying how it pushes the human factor out of any context where it can be out-performed by technological artifacts.Footnote 26

Robotics comes with a truly double-edged potential and an example from a socio-economic setting will serve as a vantage point for the following elaborations. Ethical constraints, human psychology, and physiology impose limitations when it comes to the optimization of tasks still carried out by a human work force. In modern society, it would, for instance, be considered immoral to go systematically against Kant’s categorical imperative (1999) and basically squander human beings like slaves. One of the central incentives driving mechanical automatization and robotics, empowered by the mechanisms presented in the last two sections, is to bypass such limitations. Cynically speaking, the break-even point when it comes to employment is probably where robots are more cost-efficient or convenient than humans—or in the words of a former McDonald’s USA CEO responding to workers’ demand for minimum wages “[…] it’s cheaper to buy a $35,000 robotic arm than it is to hire an employee who’s inefficient making $15 an hour bagging French fries—it’s nonsense and it’s very destructive and it’s inflationary and it’s going to cause a job loss across this country like you’re not going to believe.” (Limitone 2016). The offset created by robots and AI challenges labor markets across professional sectors (e.g., Ford 2015; McKinsey 2017). In a nutshell, the mollifying interpretation of technological devolvement goes something like this (e.g., Ford 2015 (Robots); Floridi 2014 (Information technologies); Bostrom 2014 (AI)): Historically, jobs rendered into oblivion by technology have been substituted by novel professions and, maybe more importantly, have increased welfare. However, modern technologies are of a different caliber compared to earlier developments. 
We may simply not be able to follow the machines of the future since the limits of our ‘wetware’ may stand no chance against technologically materialized inventiveness; this applies to sheer efficiency of task performance, but we may even be unable to comprehend what and how the machines of the future are doing. The current efforts to create explainable AI can be seen as a struggle to mitigate precisely this problem. However, as pointed out by Rodogno and Nørskov (2019), if these efforts are unsuccessful or insufficient for our moral praxes,

we face the risk of being deprived of the essential moral practice of understanding our own, and the actions of the other. Our practice of justification will suffer as a result and so will our capacity to restore relations. (ibid.: 68).

In general, under the technological and scientific reign, as outlined in the previous sections, it would be naïve to assume that established or emerging companies would insist on employing humans for tasks that could be carried out more efficiently by robotic solutions (resonating well with, e.g., Pham et al. 2018: 126). What makes the situation stand out in contrast to the various milestones of technological evolution hitherto is captured in the following quote by Ford:

The fact is that “routine” may not be the best word to describe the jobs most likely to be threatened by technology. A more accurate term might be “predictable”. (2015: xv)

Accordingly, in Rise of the Robots (2015), Ford brings to our attention that almost all jobs are potentially subjectable to robotification—with the only jobs left for us humans being those of a sufficiently unpredictable nature. Nevertheless, the prospect of outsourcing certain parts of our professional lives to robotic systems seems like a promising relief to many of us.

[…] many of the tasks that robots would be able to solve for us, well, it is work which is boring […], work which characterized by routine. And if you chose to work for a municipality or social security, where we each day get up in the morning in order to help others, that’s not because you are dreaming of getting a task where you need to type in data on three different screens. So, we are all very positive [about the virtual robotic processing system]. (EY 2017: 1'09" to 01'31")Footnote 27

The appraisal is from a short promotional video by Ernst & Young who assisted in the automatization of case handling in Odense Municipality’s social security service in Denmark. Automatization was enhanced by more than 80% in a mere 7-week pilot project (Kjærsdam 2017). The enthusiasm of the just quoted manager probably resonates well with sentiments deeply felt by many of us: drowning in everyday administrative tasks, paperwork, meetings, etc., we find ourselves disenfranchised from conducting research, teaching, selling, buying, caring, or whatever core activities that comprise our imagined professional raison d'être. However, what at face value seems to be a development to be welcomed—bringing us closer to the stage of ‘self-actualization’, the pinnacle of Maslow’s hierarchy (i.e., “[a] musician must make music, an artist must paint [etc.]” (Maslow 1943: 382))—may come with considerable repercussions. Questions that have been underexamined so far are: (i) whether or not the leftovers available to us in the slipstream of robotification will be of ever higher complexity (cf. Ford 2015) and responsibility—ultimately high-stress jobs; (ii) whether, as a corollary, our well-being is the currency of trade when it comes to prolonging our relevance while competing against robotic optimization (cf. Pham et al. 2018: 127); and going even further (iii) how real is the threat of moral and psychological deskilling as outlined by, e.g., Turkle (2012), Vallor (2015), and Darling (2016), i.e., will robots taking over activities and entering relations that hitherto have been considered true manifestations of our humanity, like caregiving (symbolically as well as literally), deprive us of vital opportunities not only to exercise but also to cultivate such capacities?
As pointed out by Seibt: “We urgently need more knowledge on how automation will likely affect those positive factors related to work—will they increase if the 4D work activities—dangerous, dull, dirty, or dignity-threatening—are outsourced to artificial agents?” (2018: 134).

Although having arrived at an ambivalent point, another perspective needs to be addressed in this line of investigation. While the ‘we’ applied so far appears inclusive, it is only befitting to turn the attention explicitly toward the ‘other’, who at such distance, eo ipso, is so easily violated and still paying a considerable price for a convenient way of living that many of us benefit from. Companies often profit by outsourcing their business activities to regions with weak labor protection—and hereby, for instance, intentionally or not, engage in the exploitation of child labor. Substituting humans in sweatshops, robots could be a real game changer as nations unfit to protect their citizens may be forced to change their priorities facing the disruption of mass unemployment. However, here again, we are dealing with robotics as a double-edged sword. As pointed out in an article by The Wall Street Journal, countries such as Bangladesh, focusing on the production of garments, will have a hard time trying to diversify their economy as “most of the alternatives higher up the value chain, like electronics, are automating as well” (Emont 2018).

[… T]he detrimental effect of robots on employment is concentrated in emerging economies, taking place both within countries and through the global supply chain. (Carbonero et al. 2018: 12)

The robotic offset of even ‘substandard’ jobs hence induces collateral damage, and it is important to recognize the possible price paid in the process of robotification; especially if the hope is to level or increase the standard of living globally in the long run. Robotification is a global enterprise and its disruptive impact is not limited to dull, dirty, and demeaning jobs or a particular region (cf. Ford 2015).

Although the degree and timeline of job eliminations caused by automation are still debated, there is a consensus that, in the present global context of stagnant and interdependent economies, automation will inevitably take away a significant number of jobs. This means that, in the next few years and decades, many workers will lose their jobs to robots, while those keeping their jobs will experience increased physical and psychological pressure and still more will face unemployment due to the lack of jobs. (Pham et al. 2018: 127)

Weighing in on the utilitarian assessment scale, there are some more philosophical considerations. Central thinkers such as Hegel and Marx have pointed to the importance of work on our subjective situatedness in nature and with respect to self-realization (Sayers 2005). It should be needless to say that most workplaces are not established for the sake of promoting fun and the various employees’ self-realization projects. Given the underlying structural processes, worked out in the foregoing two sections, a more realistic take is that new companies will strive toward becoming as ‘dark’ factories as possible—i.e., with as limited a staff as possible. On a side note, it becomes hard to blame them, and by the very concept, mass firings should become harder to find as newly established companies have fewer employees to let go; we are facing a potentially truly stealthy development, which is neutral in the sense that nobody is explicitly targeted (besides everybody) and the only criterion for evaluation is efficiency—like in the case of Amazon’s AI assessing and letting employees go based on their performance (cf. Lecher 2019).

We may find ourselves interacting with robotic entities in our work environment to a greater extent, in contexts that would otherwise facilitate and promote human–human interaction. Here it is important to note, with Seibt, that robots strictly speaking cannot work:

The phenomenal experience in working we may describe as a mixture of drudgery, labor, suffering, fulfilling transformative power, enjoyment, etc. in different proportions at different times, but it always includes the awareness of a limitation of our freedom. […] Robots—at least the actual and possible robots we currently discuss—cannot have phenomenal experiences, and thus in particular not the phenomenal experiences of necessitation involved in working. (2018: 136)

Social identity in the workspace is closely tied to our well-being (cf. Steffens et al. 2017). Combined with the introduction of new types of social agents that may decrease the likelihood of human–human encounters in our professional daily lives, it becomes imperative to assess whether robots can provide us with, for instance, the vital recognition which, from a philosophical point of view, is centrally at stake in any human–human interaction (Nørskov 2011; Gertz 2016; Brinck and Balkenius 2018; Nørskov and Nørskov 2020). Work makes up a significant part of most of our lives—bringing many of us together with co-workers, customers, and other stakeholders—and it hereby forces us to participate in the vital game of recognition. If work becomes impoverished when it comes to satisfying central human needs, as it has been subjected to the process of extreme optimization ("Introduction") and ethical cleansing ("Mechanics"), we may turn our hopes and attention toward what has so far been classified as leisure time.

However, it is not that simple. The problem also transposes into our spare time and private spheres of life. Here, robotic entities such as lawnmowers and robotic vacuum cleaners already to a certain extent liberate us from our household chores and, in principle, provide us with the opportunity for quality time with our families and meaningful projects—even new fulfilling practices are conceivable with these machines (Nørskov 2016). However, when social robots enter this domain and relegate our social relations to services provided by machines, we face serious potential challenges, e.g.: (i) as outlined in multiple passages in Turkle’s Alone Together (2012), people may actually prefer robotic companions over the real ones, while (ii) it is uncertain whether or not these robotic agents can be adequate interaction partners (see Bickhard 2017; Gunkel 2012; Rodogno 2016 for examples of the discourse), and (iii) robots taking the place of or outdating what otherwise would be reserved for humans in interpersonal relationships changes our existential self-understanding and culture (e.g., Yamazaki et al. 2012)—for better and worse.

Like in the ongoing debate on the impact of violent video games on gamers (e.g., Schulzke 2010), it has been questioned whether or not interaction with robots affects and conditions the way we treat our fellow human beings (e.g., Darling 2016). Until we have a sound understanding hereof, we are in principle gambling without even knowing the actual stakes. It seems that we are on an expedition searching for ever more convenience, fairness, profit, entertainment, etc. What makes it difficult is that such an enterprise is not necessarily a bad thing—who would not like to be treated fairly using a teleoperated robot to sidestep perceptual biases (for examples of research in that direction, see, e.g., Seibt and Vestergaard 2018; Nørskov et al. 2020; Nørskov and Ulhøi 2020), have more quality time with their family, etc.? However, it is vital to note that the process of robotification may stretch the plasticity of our relational modalities to the limit. While it is certainly relevant to ask whether or not they will tear, a no less relevant question is whether adjustments will leave behind deep stretch marks—distort culture to something utterly optimized, sanitized, and meaningless as elaborated on in the previous sections, i.e., through ethical cleansing.

So far, the disposition at hand has highlighted the rather distressing potential of robotification. However, as we shall see in the next section, both Heidegger and Nishitani point to a positive potential that may emerge within the gloomy modality of technology. Besides offering the possibility of some sort of happy ending, and given that their theories form the foundation for the paper, this dimension needs to be folded into the investigation at hand which will be attempted next.

5 A way out?

Given the pessimistic outlook so far, it is natural to ask whether any hope is to be found within the heuristics of the exposition. As this paper fundamentally builds on the theory, structure and sentiment of Heidegger’s (2000b) and Nishitani’s (1982) analyses of science and technology, the high stakes may actually foster the potential of bringing about some sort of reevaluation and realignment of our lives and ways of thinking as indicated in the following quotation:

[… S]cience for Heidegger and Nishitani is a potentially self-surpassing issue. Both thinkers suggest that the ideological encounter with science as the extreme limit of manipulative and distorted ontology may paradoxically lead, through a radical reversal based on meditative thought, to the recovery of a genuine and regenerating experiential philosophy surpassing the deficiencies of metaphysics. (Heine 1990: 177)

Whereas Heidegger proposes that art may be a ‘saving power’, Nishitani assesses that science and technology will push us toward an existential/religious crisis where we may realize our full potential. In the following, it will be discussed how robotics could steer us toward the verge of an abyss (as Nishitani would phrase it), i.e., on one hand exposing us to the risk of estrangement, as outlined in the previous sections, and, on the other, forcing us to act and to envisage the possibility of a constructive reorientation.

According to Nishitani, the very urgency of the danger induced by science and technology may give us epistemic access of extraordinary depth—he writes:

Nihility [triggered by science and technology] refers to that which renders meaningless the meaning of life. When we become a question to ourselves and when the problem of why we exist arises, this means that nihility has emerged from the ground of our existence and that our very existence has turned into a question mark. The appearance of this nihility signals nothing less than that one’s awareness of self-existence has penetrated to an extraordinary depth. (Nishitani 1983: 4)

In the case of Heidegger, we may become ready to ‘think’, and he hints at one facilitating modality within the realm of art:

Human activity can never directly counter this danger. Human achievement alone can never banish it. But human reflection can ponder the fact that all saving power must be of a higher essence than what is endangered, though at the same time kindred to it. But might there not perhaps be a more primally granted revealing that could bring the saving power into its first shining forth in the midst of the danger, a revealing that in the technological age rather conceals than shows itself? […] Because the essence of technology is nothing technological, essential reflection upon technology and decisive confrontation with it must happen in a realm that is, on the one hand, akin to the essence of technology and, on the other, fundamentally different from it. Such a realm is art. (Heidegger 2004b: 50–51)Footnote 28

Hence, in principle, a possible outcome of robotic endeavor should be conceivable, given that our affordance spaces enable a tuning into the right modality of experiencing and co-existing with our world. However, I do not mean to attempt to venture into the vital question of ‘how this could be done’. This is beyond the scope of the paper. It would be too much to hope for a universally applicable and effective ‘how-to’, ‘step-by-step’ guide, as what Heidegger and Nishitani are offering are non-naturalized, phenomenological insights. Instead, I would like to discuss a more modest, but in the light of the previous pessimistic prospects hopefully somehow comforting, supplementary perspective on what could actually trigger a reorientation as hoped for by the two thinkers.

It has been suggested by, e.g., Kahn et al. (2009: 41) that technological artifacts and renditions of the world condition us evolutionarily to adapt to them although we are missing out on something essential by doing so, or as Turkle states:

They [young people who grow up with and are surrounded by modern technology] come to accept lower expectations for connection and, finally, the idea that robot friendships could be sufficient unto the day. (2012: 17)

It is as if Baudrillard was on to something when he was diagnosing the imperialism of the Simulacra (2017). The sheer growth rate of the robotic sector may, however, turn out to increase so fast that we as individuals and collectives may not be equipped with the adequate faculties and institutions to even follow the pace of robotification (cf. Ford 2015; Bostrom 2014). Lobby organizations such as the Foundation for Responsible Robotics,Footnote 29 the call for ethics boards (Sullins 2016), and The IEEE Global Initiative on Ethics of Autonomous and Intelligent Systems,Footnote 30 amongst many other initiatives, indicate the acuteness of some of the challenges imposed on us by robotics. Counterintuitively, it may be exactly the very speed of progress that could turn out to be of significant importance. More concretely, pervasive robotification may be perceived as happening so fast that what is left of our humanity is being surpassed rather than transformed. This could constitute a vital opportunity, leaving something in us that is capable of being perplexed, something that can resist being lullabied by the various technological pacifiers tempting us to solve human problems by the efficiency and comfort of technological brute force and ingenuity. Whatever residue that is struggling to reconcile with the image or reality of rapid robotic integration, usurping all parts of our lives, may simply be a product of us thinking too slowly. Pushed by alienation, we may find ourselves in an identity crisis and thereby enticed to fundamentally contemplate who we are. To Nishitani, this could pertain to facing the ‘abyss’ (1982), and in Heidegger’s terminology, we may start ‘denken’ (2000b). Robotics could force us into a Zugzwang where we either become torn away by technological currents, i.e., are consumed by this modality of interpreting the world (as presented in the last two sections), or unfold a hopefully somehow more sustainable modality of co-existing.
The problem with the latter is that it is easily conflated and confused with ethical cleansing, as explained above, which treats symptoms exclusively with high-tech fixes.

In an effort to avoid an anticlimactic ending, the more sanguine prospect could nevertheless be as follows. Assuming that the philosophers, coming from various cultures and epochs (such as Mencius 1895: book 6, chapter 2; or Rousseau 1992: 76), who believe in the intrinsic goodness of human beings are right, we could hope that care, compassion, and benevolence for others, oneself, and our environment (robots included) would be revitalized as a counter-reaction to the race for efficacy and optimization as represented by robotification. At the same time, the robotic challenge to our relationships and institutions may provide us with a tabula rasa conducive to such ideals. (i) At the level of such a reset, we could hope that wrongs that have been just wrong from time immemorial could be short-circuited. For instance, the exploitation of workers, gender inequality, etc. may simply become issues of the past to the degree that work is automated. In a nutshell: if “humans need not apply” (to use the catchphrase of Grey’s short documentary, 2014) for a given job any more, then we do not need to worry about the female worker getting paid less for doing the same type of work compared to her male colleague. The problem, in some sense, ceases to exist. Of course, this seems to be a disappointingly impoverished solution to the problem, as many of us would probably hope for some type of collective enlightenment making us come to our senses and empowering us to straighten things out. However, the two are not mutually exclusive: (ii) On the humanistic level, by robotification challenging what it means to have appropriate and genuine relationships, i.e., to be a co-worker, friend, partner, to have a car, pets, etc. (e.g., Turkle 2012; Ess 2016), the dogmas of centuries of culture and tradition may be put to the test (cf. Gunkel 2012) as if we were on a Nietzschean journey. 
We could hope that the outcome of being perplexed and out-performed by technology will make us open to truly reassess the authenticity of our ways of living, and entice us to act accordingly instead of letting everything be turned into services facilitating ever more intense and sophisticated needs and growth. This could be a concrete realization of what Heidegger alluded to when quoting Hölderlin “But where danger is, grows [t]he saving power also” (Heidegger 2004b: 47).Footnote 31

6 Conclusion: honing optimism

In this paper, robots were interpreted as materialized prisms of science and technology, potentially affecting all aspects of our lives. It is not just a question of whether or not something is lost or new meanings emerge in the embodied process of rendering an analogue world into a digital one. Robotics becoming not a way but converging toward the way of understanding and relating to the various phenomena in the world constitutes a fundamental challenge to who we are as individuals and cultures. In this context, the paper addressed the problem of ethical cleansing latently built into robotics qua its undergirding framework. It is as if we are gambling: The acquisition of great wealth and liberty (utopia), empowered by socio-political structures, draws us into the game. While robotics, as discussed, may even stimulate and tease out our finest qualities, we are currently left hoping that our odds of not losing (or what is worse) are significantly higher than in the casino. Maybe, as with the ongoing climate crisis, humankind needs a pushback from the world of a magnitude that disrupts a sufficient number of individuals’ lives. Preventing significant loss seems to be the only incentive to which we are currently responsive enough when it comes to initiating mitigation.

Notes

http://www.parorobots.com, accessed: September 23, 2019.

E.g. https://global.toyota/en/detail/56528, accessed: September 23, 2019.

Some positions and concepts (e.g., pertaining to virtue ethics) are only implicitly addressed and would have deserved to be expanded upon. However, due to limited space, I hope that the reader may use what is adumbrated here as a vantage point for further inquiry.

Lifeworld is here understood in the Husserlian tradition, namely, as the fundamental meaning-bestowing contexts and structures of our everyday lives, such as the socio-cultural spheres that comprise this dimension of our lived reality.

E.g., the Society 5.0 initiative by the Japanese government (https://www.gov-online.go.jp/cam/s5/eng, accessed: October 31, 2019).

For a systematic analysis of the affinity and differences of these two thinkers, see Heine (1990).

As the paper draws on thinkers linked to Existentialism, the usage of the term ‘existential’ needs some clarification as it may insinuate that it should be understood in the narrow meaning of this tradition. However, the word here does not only cover a certain intellectual stance on who we are but also its practical repercussions and significance.

This critique resonates well with Horkheimer and Adorno’s more large-scale critique of the Enlightenment (2014).

The aim here is not to find sufficient requirements for activities to qualify as scientific; the above pertains to basically any conscious relation we have to the world.

References to Heidegger’s works will be to the German versions of the Gesamtausgabe [Complete Edition]; however, quotations are provided in English translations (with references to the corresponding German original supplied in footnotes).

Bracketed, I have added the word ‘mere’ as this was not included in the translation by Hofstadter. However, this little word makes a difference as it underscores the non-exhaustiveness of ‘the scientific view’ and hence must not be omitted given the nature of the enquiry at hand.

For the original German version, see Heidegger (2000a: 173).

http://www.parorobots.com, accessed: October 7, 2019.

Lethal Autonomous Weapon Systems (e.g., Sharkey 2018).

For the original German version, see Heidegger (2000b: 32–33).

For the original German version, see Heidegger (2004a: 341 f.n. a).

Here to be understood as sociable robots in opposition to earlier uses of the term (cf. Breazeal 2003).

Sticking with the narrative also including autonomous cars.

One could object that this may bring us closer to the saving power that Heidegger (2000b) alludes to. This may very well be the case, and it is also brought up to some extent in "Tearing and stretchmarks".

I either did not have or maybe just created an e-mail account around 1989.

Whatever that means in this context.

For the original German version, see Nietzsche (1988a: 67).

For a concrete discussion of the impact of self-driving cars on our moral space, see Rodogno and Nørskov (2019).

This ties nicely in with the way Panikkar predicted technology as the monoculture of the future (cf. 2000).

Assuming that there is something that is not reducible to functionality.

Freely translated by the author from Danish to English.

For the original German version, see Heidegger (2000b: 35–36).

https://responsiblerobotics.org, accessed: September 4, 2018.

https://standards.ieee.org/industry-connections/ec/autonomous-systems.html, accessed: September 4, 2018.

For the original German version, see Heidegger (2000b: 29).

References

Aristotle (1991) Nicomachean ethics (trans: Ross WD). In: Barnes J (ed) The complete works of Aristotle: the revised Oxford translation, 4th edn, Vol 2, Bollingen Series, Vol 71:2. Princeton University Press, pp 1729–1867

Baudrillard J (2017) Simulacra and simulation (trans: Glaser SF). The Body, In Theory: Histories of Cultural Materialism. The University of Michigan Press

Bauman Z (2013) Modernity and the Holocaust. John Wiley & Sons

Berg P (2008) Meetings that changed the world: Asilomar 1975: DNA modification secured. Nature 455(7211):290–291

Bhutani A, Bhardwaj P (2018) Service robotics market size by product (Professional, Personal), By Application (Professional [Defense, Field, Healthcare, Logistics], Personal [Household, Entertainment]), Industry Analysis Report, Regional Outlook (U.S., Canada, UK, Germany, France, Italy, China, Japan, South Korea, India, Brazil, Mexico, Saudi Arabia, UAE, South Africa), Growth Potential, Price Trends, Competitive Market Share & Forecast, 2017–2024: Summary. Global Market Insights

Bhutani A, Bhardwaj P (2019) Robot end-effector market size, by product (Welding Guns, Grippers, Tool Changers, Suction Cups), By Application (Material Handling, Assembly, Welding, Painting), By End-Use (Automotive, Metals & Machinery, Plastics, Food & Beverage, Electrical & Electronics), Industry Analysis Report, Regional Outlook (U.S., Canada, Germany, UK, Italy, France, Spain, China, India, Japan, Taiwan, South Korea, Brazil, Mexico), Growth Potential, Price Trends, Competitive Market Share & Forecast, 2019–2025: Summary. Global Market Insights

Bickhard MH (2017) Robot sociality: genuine or simulation? In: Hakli R, Seibt J (eds) Sociality and normativity for robots: philosophical inquiries into human-robot interactions, Studies in the Philosophy of Sociality, vol 9. Springer, pp 41–66

Borenstein J, Arkin R (2016) Robotic nudges: the ethics of engineering a more socially just human being. Sci Eng Ethics 22(1):31–46

Bostrom N (2003) Ethical issues in advanced artificial intelligence. In Cognitive, emotive and ethical aspects of decision making in humans and in artificial intelligence, vol 2. Int. Institute of Advanced Studies in Systems Research and Cybernetics, pp 12–17

Bostrom N (2014) Superintelligence: Paths, dangers, strategies. Oxford University Press, United Kingdom

Breazeal C (2003) Toward sociable robots. Robot Auton Syst 42(3–4):167–175

Breazeal C (2005) Socially intelligent robots. Interactions 12(2):19–22

Brinck I, Balkenius C (2018) Mutual recognition in human-robot interaction: a deflationary account. Philos Technol 33(1):53–70

Carbonero F, Ernst E, Weber E (2018) Robots worldwide: the impact of automation on employment and trade. Working Paper no. 36, ILO Research Department

Confucius (2008) The Analects of Confucius (trans: Legge J). In: The Analects of Confucius with A Selection of the Sayings of Mencius, The Way and Its Power of Laozi. Signature Press, pp 13–139

Darling K (2016) Extending legal protection to social robots: the effects of anthropomorphism, empathy, and violent behavior towards robotic objects. In: Calo R, Froomkin AM, Kerr I (eds) Robot Law. Edward Elgar Publishing Limited, pp 213–232

Darling K (2017) 'Who's Johnny?' Anthropomorphic framing in human-robot interaction, integration, and policy. In: Lin P, Abney K, Jenkins R (eds) Robot Ethics 2.0: from autonomous cars to artificial intelligence. Oxford University Press

Dunbar RIM (1998) The social brain hypothesis. Evolutionary Anthropology Issues News Rev 6(5):178–190

Emont J (2018, February 16) The robots are coming for garment workers. That's good for the U.S., bad for poor countries. The Wall Street Journal Online. https://www.wsj.com/articles/the-robots-are-coming-for-garment-workers-thats-good-for-the-u-s-bad-for-poor-countries-1518797631. Accessed 12 Apr 2019

Ess CM (2016) What’s love got to do with it?: Robots, sexuality, and the arts of being human. In: Nørskov M (ed) Social robots: boundaries, potential, challenges, 1st edn, Emerging Technologies, Ethics and International Affairs Series, Routledge, pp 57–79

EY (2017) Robotics hos Odense Kommune. https://youtu.be/nOcYylAmVhk. Accessed 1 Apr 2019

Floridi L (2014) The fourth revolution: how the infosphere is reshaping human reality. Oxford University Press

Ford M (2015) Rise of the robots: technology and the threat of a jobless future. Basic Books, New York, NY

Funk M, Seibt J, Coeckelbergh M (2018) Why do/should we build robots?—summary of a plenary discussion session. In: Coeckelbergh M, Loh J, Funk M, Seibt J, Nørskov M (eds) Envisioning robots in society—power, politics, and public space: Proceedings of robophilosophy 2018/TRANSOR 2018, Frontiers in Artificial Intelligence and Applications, vol 311. IOS Press, Amsterdam, pp 369–384

Gertz N (2016) The master/iSlave dialectic: post (Hegelian) phenomenology and the ethics of technology. In: Seibt J, Nørskov M, Andersen SS (eds) What social robots can and should do: Proceedings of Robophilosophy 2016/TRANSOR 2016, Frontiers in Artificial Intelligence and Applications, vol 290. IOS Press Ebooks, Amsterdam, pp 136–144

Grey CGP (2014) Humans Need Not Apply. https://youtu.be/7Pq-S557XQU. Accessed 20 Sept 2019

Gunkel DJ (2012) The machine question: critical perspectives on AI, robots, and ethics. The MIT Press, Cambridge, MA

Gunkel DJ (2018) Robot rights. The MIT Press, Cambridge, MA

Heidegger M (1926) Sein und Zeit, 19th edn. Max Niemeyer Verlag Tübingen, Tübingen

Heidegger M (1998) Letter on “Humanism” (trans: Capuzzi FA). In: McNeill W (ed) Pathmarks, 1st edn. Cambridge University Press, pp 239–276

Heidegger M (2000a) Das Ding. In: von Herrmann F-W (ed) Vorträge und Aufsätze, vol 7, Gesamtausgabe. Vittorio Klostermann, Frankfurt am Main, pp 165–187

Heidegger M (2000b) Die Frage nach der Technik. In: von Herrmann F-W (ed) Vorträge und Aufsätze, vol 7, Gesamtausgabe. Vittorio Klostermann, Frankfurt am Main, pp 5–36

Heidegger M (2000c) Wissenschaft und Besinnung. In: von Herrmann F-W (ed) Vorträge und Aufsätze, vol 7, Gesamtausgabe. Vittorio Klostermann, Frankfurt am Main, pp 37–65

Heidegger M (2001) The thing (trans: Hofstadter A). In: Poetry, language, thought. Perennial, pp 160–184

Heidegger M (2004a) Brief über den »Humanismus«. In: von Herrmann F-W (ed) Gesamtausgabe Bd. 9, 3rd edn. Vittorio Klostermann, Frankfurt am Main, pp 313–364